Breaking the Species Barrier: Advanced Strategies for High-Resolution Microbiome Analysis in Biomedical Research

Achieving species-level resolution in microbiome data is a critical frontier for unlocking the full potential of microbiome research in drug discovery and therapeutic development.

Abstract

Achieving species-level resolution in microbiome data is a critical frontier for unlocking the full potential of microbiome research in drug discovery and therapeutic development. This article synthesizes the latest methodological breakthroughs, from novel bioinformatics pipelines and machine learning calibration to long-read sequencing technologies, that are overcoming the traditional limitations of 16S rRNA amplicon sequencing. We provide a comprehensive framework for researchers and drug development professionals to navigate foundational concepts, implement advanced analytical techniques, troubleshoot common challenges, and validate findings against gold-standard metagenomic approaches, ultimately enabling more precise microbial biomarker discovery and targeted therapeutic interventions.

Why Species-Level Resolution Matters: From Therapeutic Imperatives to Technical Limitations

Technical Support Center

Frequently Asked Questions (FAQs)

FAQ 1: Why is strain-level resolution critical for microbiome research, and what are the consequences of overlooking it?

Overlooking strain resolution can lead to incomplete and misleading conclusions, hindering our understanding of microbial functions, interactions, and their impact on human health outcomes [1]. Strain-level variations are not just phylogenetic details; they have direct clinical consequences. For example, in the genus Bacteroides, different strains show vast differences in their accessory genes, which can comprise a significant portion of their genomes and are influenced by factors like bacteriophage activity [2]. Functionally, only 10% of Finnish infants in one study harbored Bifidobacterium longum subsp. infantis, a subspecies specialized in human milk metabolism, whereas Russian infants commonly maintained a different probiotic Bifidobacterium bifidum strain [2]. In a clinical trial, the specific strain of a probiotic Bifidobacterium can determine its success in engrafting and producing therapeutic effects [3].

FAQ 2: What are the primary methodological approaches for achieving strain-level resolution, and how do I choose?

The choice of method depends on your research goals, required resolution, and resources. The table below compares the key techniques.

| Method | Key Principle | Strengths | Limitations | Best Suited For |
|---|---|---|---|---|
| Shotgun Metagenomics [2] [1] | Sequencing all DNA in a sample; strain tracking via SNPs and gene content. | Untargeted; can discover novel strains and functions; high resolution. | Complex, resource-intensive, and slow; requires advanced bioinformatics [1]. | In-depth exploration of community structure and functional potential. |
| Optical Mapping (e.g., DynaMAP) [1] | Creating taxonomic barcodes based on the physical location of short nucleotide motifs on long DNA molecules. | Rapid results (<30 mins); no amplification or sequencing needed; high strain specificity. | Requires specialized equipment; newer technology with less established databases. | Rapid, high-throughput strain identification without sequencing. |
| PCR Assays [1] | Amplifying strain-specific DNA sequences. | High specificity and sensitivity. | Resource-intensive to design/validate; cost scales poorly for multiple targets [1]. | Detecting or quantifying a pre-defined, small set of target strains. |
| 16S rRNA Gene Sequencing [4] | Sequencing one or a few hypervariable regions of the 16S rRNA gene. | Low cost; high throughput; well-established. | Insufficient for strain-level resolution due to limited genetic information captured [1]. | Genus- or species-level community profiling. |

FAQ 3: How do I troubleshoot a failed experiment aimed at detecting strain-specific effects, such as in probiotic administration?

If your probiotic trial fails to show a strain-specific effect, systematically investigate these common points of failure using the workflow below.

FAQ 4: What are the key endpoints and design considerations for clinical trials involving strain-specific microbiome therapies?

Trials for live biotherapeutic products (LBPs) require a departure from traditional drug development. Key unique considerations include [5]:

- Engraftment as a Primary Endpoint: Success is measured by the therapeutic strain's ability to stably integrate into or replace the existing microbiome. This requires longitudinal sampling and strain-specific tracking.

- Safety Beyond Standard AEs: Monitor for long-term ecological disruption of the native microbiome and potential overgrowth, especially in patients who may already harbor the strain.

- Product-Specific Efficacy: Endpoints should be localized (e.g., metabolite production, reduction in specific pathogens, symptom improvement) rather than relying on systemic pharmacokinetics.

- Simplified Dosing: Microbiome-based products often do not follow traditional dose-response curves. Early trials may focus on single-dose regimens, with higher doses tested primarily for safety.

The Scientist's Toolkit: Research Reagent Solutions

This table details essential reagents and their functions for conducting strain-level research, as featured in the cited experiments.

| Research Reagent / Material | Function in Strain-Level Research | Key Consideration |
|---|---|---|
| Synthetic Bacterial Communities [6] | Defined mixtures of bacterial strains used in high-throughput screens to study drug metabolism and inter-strain interactions in a controlled setting. | Allows for the dissection of community effects from a bottom-up approach. Composition should be relevant to the research question. |
| UV-Killed Bacteria [3] | Used to isolate the immunomodulatory effects of bacterial surface components and MAMPs from effects due to bacterial replication or metabolism. | Crucial for determining if immune activation is contact-dependent and for identifying strain-specific surface properties. |
| Isolated Exopolysaccharide (EPS) [3] | Purified bacterial surface polysaccharides used to probe strain-specific immune responses mediated by this specific MAMP. | As shown with B. pseudolongum, EPS may not recapitulate the effects of whole bacteria, indicating other factors are at play [3]. |
| Fecalase Preparation [6] | A cell-free extract of fecal enzymes used to study microbial biochemical transformations of drugs or metabolites without the complexity of live communities. | Culture-independent; useful for initial metabolism screens but may miss multi-step processes requiring cofactors from live cells. |
| Gnotobiotic Mouse Models [6] | Animals with a completely defined microbiota (often germ-free colonized with specific strains) to isolate the in vivo effect of a single microbe or simple community. | The gold standard for establishing causal relationships between a strain and a host phenotype. Resource-intensive to maintain. |

Experimental Protocols & Data Analysis

Protocol 1: Assessing Strain-Specific Immune Modulation In Vitro

This protocol is adapted from studies demonstrating that different strains of Bifidobacterium pseudolongum elicit unique immune responses in innate immune cells [3].

- Bacterial Preparation: Grow the bacterial strains of interest to mid-log phase. Harvest cells and wash with PBS. Prepare two types of reagents:
  - UV-Killed Bacteria: Irradiate the bacterial suspension with UV light to ensure cell death while preserving surface structures. Confirm killing by plating.
  - Isolated Exopolysaccharide (EPS): Extract and purify EPS from the bacterial cultures using standard phenol-water or ethanol precipitation methods.
- Immune Cell Culture: Isolate and differentiate primary innate immune cells, such as bone marrow-derived dendritic cells (BMDCs) or peritoneal macrophages (MΦ).
- Stimulation: Treat the immune cells with:
  - UV-killed bacteria (at a Multiplicity of Infection, MOI, to be optimized, e.g., 10:1)
  - Isolated EPS (at a concentration to be optimized, e.g., 10-100 µg/mL)
  - Appropriate controls (e.g., media alone, a known stimulant like LPS).
- Analysis (24-48 hours post-stimulation):
  - Flow Cytometry: Analyze the expression of cell surface receptors (e.g., CD40, CD86, MHC class II) to assess cell activation.
  - ELISA: Measure the concentration of cytokines (e.g., IL-6, TNF-α, IL-10) in the cell culture supernatant.
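The MOI in the stimulation step is simple arithmetic, sketched below. The well size, cell counts, and stock concentration are hypothetical placeholders rather than values from the cited study, and the helper names are illustrative only.

```python
def bacteria_needed(n_immune_cells: int, moi: float) -> float:
    """Bacterial cells required for a given MOI (bacteria per immune cell)."""
    if n_immune_cells <= 0 or moi <= 0:
        raise ValueError("cell count and MOI must be positive")
    return n_immune_cells * moi


def stock_volume_ul(n_bacteria: float, stock_cells_per_ml: float) -> float:
    """Volume of bacterial stock (microlitres) containing n_bacteria cells."""
    if stock_cells_per_ml <= 0:
        raise ValueError("stock concentration must be positive")
    return n_bacteria / stock_cells_per_ml * 1000.0


# Hypothetical example: 2e5 BMDCs per well, MOI 10:1, stock at 1e9 cells/mL
cells = bacteria_needed(200_000, 10)
volume = stock_volume_ul(cells, 1e9)
print(f"{cells:.2e} bacteria per well, {volume:.2f} uL of stock")
```

Because UV-killed bacteria cannot be counted by plating after irradiation, the stock concentration would normally be measured before the killing step.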

Quantitative Data from a Representative Experiment [3]

The table below shows how different strains can produce quantitatively and qualitatively different immune responses.

| Treatment (on BMDCs) | CD86 Expression (Mean Fluorescence Intensity) | IL-6 Secretion (pg/mL) | IL-10 Secretion (pg/mL) |
|---|---|---|---|
| Media Control | Baseline | Low | Low |
| B. pseudolongum Strain A | 1,500 | 450 | 180 |
| B. pseudolongum Strain B | 3,200 | 850 | 300 |

Visualizing the Strain-Specific Immune Response Pathway

The following diagram summarizes the key findings from the immune modulation study, illustrating the strain-specific pathways.

Protocol 2: Tracking Strain Engraftment and Ecological Impact In Vivo

This protocol is critical for trials of live biotherapeutic products (LBPs) and probiotics [3] [5].

- Animal Model Preparation: Subject mice to a broad-spectrum antibiotic regimen for 5-7 days to deplete the endogenous microbiota. Include untreated controls.
- Strain Administration: Orally gavage mice with a single dose or multiple doses of the live bacterial strain(s) of interest.
- Longitudinal Sampling: Collect fecal samples at regular intervals pre- and post-gavage (e.g., days 0, 1, 3, 7, 14).
- Sample Analysis:
  - DNA Extraction: Perform microbial DNA extraction from all fecal samples.
  - Strain-Level Profiling: Use a high-resolution method (e.g., shotgun metagenomics or optical mapping) to track the relative abundance of the administered strain over time. This confirms engraftment.
  - Community Profiling: Use 16S rRNA sequencing to assess broader ecological changes in the gut microbiome composition (beta-diversity) in response to the introduced strain.
- Host Response: Terminally, collect intestinal tissues and/or blood serum. Analyze host gene expression via RNA sequencing and measure systemic immune markers.
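The strain-tracking step can be sketched as a small time-course calculation. The read counts are invented for illustration, and the engraftment rule (at least 0.1% relative abundance in the last two post-gavage samples) is an assumption made for this sketch, not a criterion from the cited studies.

```python
def relative_abundance(strain_reads: int, total_reads: int) -> float:
    """Fraction of microbial reads assigned to the administered strain."""
    if total_reads <= 0:
        raise ValueError("total_reads must be positive")
    return strain_reads / total_reads


def is_engrafted(timecourse: dict, min_abundance: float = 1e-3,
                 min_consecutive: int = 2) -> bool:
    """True if the strain stays at or above min_abundance in the last
    min_consecutive post-gavage samples (days > 0). Both cutoffs are
    illustrative assumptions."""
    post = [timecourse[day] for day in sorted(timecourse) if day > 0]
    if len(post) < min_consecutive:
        return False
    return all(a >= min_abundance for a in post[-min_consecutive:])


# Hypothetical counts: day -> (reads assigned to strain, total microbial reads)
counts = {0: (0, 1_000_000), 1: (50_000, 1_000_000), 3: (20_000, 1_000_000),
          7: (8_000, 1_000_000), 14: (6_000, 1_000_000)}
timecourse = {day: relative_abundance(s, t) for day, (s, t) in counts.items()}
print("engrafted:", is_engrafted(timecourse))
```

A strain that is detectable on day 1 but absent by day 7 would be classed as transient passage under this rule, which is why the late time points matter more than the peak.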

Frequently Asked Questions

FAQ 1: Why can't 16S V3-V4 sequencing reliably distinguish between closely related bacterial species?

The V3-V4 regions cover only about 460 base pairs of the roughly 1,500 bp 16S rRNA gene, providing limited genetic information for differentiation [7]. This short region lacks sufficient variable sites to distinguish taxa that share highly similar 16S rRNA gene sequences, such as Escherichia and Shigella [4]. Because of the inherent homology between sequences in these partial regions, some species remain indistinguishable regardless of the bioinformatic method used [8].

FAQ 2: What are the practical consequences of using a fixed similarity threshold for species classification?

Fixed thresholds (typically 97-98.7%) inevitably cause misclassification because actual sequence divergence between species varies substantially [4]. Strains of some species are less than 97% similar to one another, while other, distinct species share identical V3-V4 sequences [4]. This results in both over-splitting (separating sequences from the same species) and over-merging (lumping different species together), distorting true microbial diversity metrics [9].
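The toy example below illustrates how a fixed cutoff can over-merge and over-split. The sequences are synthetic, and the two species-specific thresholds are hypothetical numbers chosen for illustration; they are not taken from ASVtax or any reference database.

```python
def percent_identity(a: str, b: str) -> float:
    """Percent identity between two pre-aligned, equal-length sequences."""
    if len(a) != len(b) or not a:
        raise ValueError("sequences must be non-empty and of equal length")
    return 100.0 * sum(x == y for x, y in zip(a, b)) / len(a)


def mutate(seq: str, positions: list) -> str:
    """Return seq with one substitution at each listed position."""
    swap = {"A": "C", "C": "A", "G": "T", "T": "G"}
    chars = list(seq)
    for i in positions:
        chars[i] = swap[chars[i]]
    return "".join(chars)


def same_species(identity: float, threshold: float) -> bool:
    return identity >= threshold


reference = "ACGT" * 25                                   # 100 bp toy amplicon
sister_species = mutate(reference, [10, 60])              # 98% identical
divergent_strain = mutate(reference, [5, 25, 45, 65, 85]) # 95% identical

FIXED = 97.0
id_sister = percent_identity(reference, sister_species)
id_strain = percent_identity(reference, divergent_strain)

# Fixed cutoff: merges the sister species, splits the divergent strain
print(same_species(id_sister, FIXED), same_species(id_strain, FIXED))
# Hypothetical species-specific cutoffs (99.5% and 94%) reverse both calls
print(same_species(id_sister, 99.5), same_species(id_strain, 94.0))
```

The point of the sketch is only that the same identity value can be correct evidence for one species and misleading for another, which is what a per-species threshold encodes.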

FAQ 3: Are there experimental approaches that can improve species-level resolution?

Yes, full-length 16S rRNA sequencing using Oxford Nanopore or PacBio platforms provides enhanced species-level understanding by capturing all variable regions [10] [8]. Additionally, shotgun metagenomic sequencing enables accurate species-level identification and functional profiling by randomly sequencing all genetic material in a sample, though at higher cost and data storage requirements [8] [11].

Troubleshooting Guides

Issue 1: Low Species-Level Resolution in Taxonomic Profiles

Problem: Your analysis fails to resolve taxonomic classifications beyond genus level, or you suspect misclassification of closely related species.

Solution:

- Wet-Lab Considerations:
- Bioinformatic Improvements:
  - Implement the ASVtax pipeline, which applies flexible, species-specific classification thresholds rather than fixed cutoffs [4]
  - Utilize the Emu bioinformatics pipeline, specifically designed for Oxford Nanopore Technologies FL-16S reads, to enhance classification accuracy [10]
  - Apply machine learning calibration tools like TaxaCal to correct species-level abundance biases in 16S data using a two-tier correction strategy [11]

Validation: Include mock microbial communities with known composition to validate species-level classification performance in your specific experimental setup [7].
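A mock-community check can be reduced to comparing the species a pipeline reports against the species known to be present. The sketch below assumes a simple presence/absence comparison; the species lists are illustrative and do not reproduce the composition of any commercial standard.

```python
def detection_metrics(expected: set, observed: set) -> dict:
    """Precision, recall and F1 for species detection against a mock community."""
    tp = len(expected & observed)   # species correctly reported
    fp = len(observed - expected)   # reported but not in the mock
    fn = len(expected - observed)   # in the mock but missed
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"tp": tp, "fp": fp, "fn": fn,
            "precision": precision, "recall": recall, "f1": f1}


# Illustrative mock composition and pipeline output
expected = {"Escherichia coli", "Staphylococcus aureus",
            "Listeria monocytogenes", "Bacillus subtilis"}
observed = {"Escherichia coli", "Staphylococcus aureus",
            "Bacillus subtilis", "Shigella flexneri"}

metrics = detection_metrics(expected, observed)
print(metrics)
```

Running the same comparison for each candidate pipeline or parameter set gives a like-for-like basis for choosing among them before analysing real samples.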

Issue 2: Inaccurate Diversity Metrics Due to Threshold Artifacts

Problem: Alpha and beta diversity metrics appear distorted, potentially due to over-splitting or over-merging of sequences.

Solution:

- Algorithm Selection:
  - For high precision with Illumina data, consider DADA2 for Amplicon Sequence Variants (ASVs), which differentiates sequences varying by even a single base pair [7]
  - For Operational Taxonomic Units (OTUs), UPARSE provides clusters with lower errors, though with potential over-merging [9]
  - Benchmark multiple algorithms (DADA2, Deblur, UNOISE3, UPARSE) using mock communities to determine optimal performance for your specific samples [9]
- Parameter Optimization:

Experimental Design: Always include appropriate controls including negative (no template) controls and Zymo mock microbial community controls to calibrate experimental analysis parameters [7].

Comparative Performance Data

Table 1: Comparison of 16S rRNA Sequencing Approaches for Species-Level Resolution

| Sequencing Method | Target Region | Read Length | Species-Level Resolution | Primary Limitations |
|---|---|---|---|---|
| Illumina V3-V4 | V3-V4 (~460 bp) | Short (~300 bp) | Limited to genus level [8] | Cannot distinguish closely related species; fixed threshold artifacts [4] |
| ONT FL-16S | Full-length (~1500 bp) | Long | Superior species-level resolution [10] | Higher error rate; requires specialized bioinformatics (Emu) [10] |
| Shotgun Metagenomics | Whole genome | Variable | High resolution with functional insights [8] | High cost; extensive data processing; host DNA contamination [8] [11] |

Table 2: Performance Characteristics of Bioinformatics Algorithms for 16S Data

| Algorithm | Method | Error Rate | Tendency | Best Application |
|---|---|---|---|---|
| DADA2 [9] | ASV (Denoising) | Low | Over-splitting [9] | High-resolution studies requiring single-nucleotide differentiation [7] |
| UPARSE [9] | OTU (Clustering) | Low | Over-merging [9] | Studies where genus-level classification is sufficient |
| Deblur [9] | ASV (Denoising) | Moderate | Balanced | Large-scale studies with consistent sequencing quality |
| Emu [10] | ONT-specific | Low (with correction) | Balanced | Oxford Nanopore long-read 16S data |

Experimental Protocols

Protocol 1: Species-Level Resolution Enhancement Using Flexible Thresholds

Purpose: To implement a dynamic threshold approach for improved species-level classification of V3-V4 16S rRNA data.

Materials:

- ASVtax pipeline and database [4]

- V3-V4 16S rRNA sequencing data

- High-performance computing resources

Procedure:

- Database Construction:
- Threshold Determination:
- Classification:
  - Process your ASVs through the ASVtax pipeline using the established flexible thresholds
  - Validate classification accuracy using mock community controls

Expected Results: Significant improvement in species-level classification accuracy and reduction in misclassification between closely related taxa.

Protocol 2: Machine Learning Calibration of 16S Species Profiles

Purpose: To calibrate species-level taxonomy profiles in 16S amplicon data to more closely resemble metagenomic whole-genome sequencing results.

Materials:

- TaxaCal algorithm [11]

- Paired 16S and WGS data (minimum 20 sample pairs for training) [11]

- R or Python environment

Procedure:

- Data Preparation:
  - Obtain paired 16S and WGS data from the same samples
  - Process both datasets through standardized taxonomic classification pipelines
  - Normalize abundance data for comparative analysis
- Model Training:
- Application:
  - Apply trained TaxaCal model to correct species-level abundances in 16S-only samples
  - Validate calibrated profiles using holdout paired samples or mock communities

Expected Results: Bray-Curtis distances between calibrated 16S and WGS samples decrease significantly (from 0.54 to 0.46 in validation studies), with improved alpha diversity metrics alignment [11].
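For readers who want to reproduce this kind of comparison on their own paired samples, the Bray-Curtis dissimilarity between a 16S profile and its WGS counterpart can be computed as below. This is a minimal, dependency-free sketch; the taxon names and abundances are invented, and it is not part of TaxaCal.

```python
def bray_curtis(p: dict, q: dict) -> float:
    """Bray-Curtis dissimilarity between two abundance profiles.

    Profiles map taxon name -> abundance; missing taxa count as zero.
    Returns 0.0 for identical profiles and 1.0 for profiles sharing no taxa.
    """
    taxa = set(p) | set(q)
    numerator = sum(abs(p.get(t, 0.0) - q.get(t, 0.0)) for t in taxa)
    denominator = sum(p.get(t, 0.0) + q.get(t, 0.0) for t in taxa)
    if denominator == 0:
        raise ValueError("both profiles are empty")
    return numerator / denominator


# Hypothetical relative abundances for one paired sample
profile_16s = {"Species A": 0.5, "Species B": 0.5}
profile_wgs = {"Species A": 0.25, "Species B": 0.25, "Species C": 0.5}

distance = bray_curtis(profile_16s, profile_wgs)
print(f"Bray-Curtis dissimilarity: {distance:.2f}")
```

Computing the distance before and after calibration for each held-out pair gives the kind of before/after comparison reported above.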

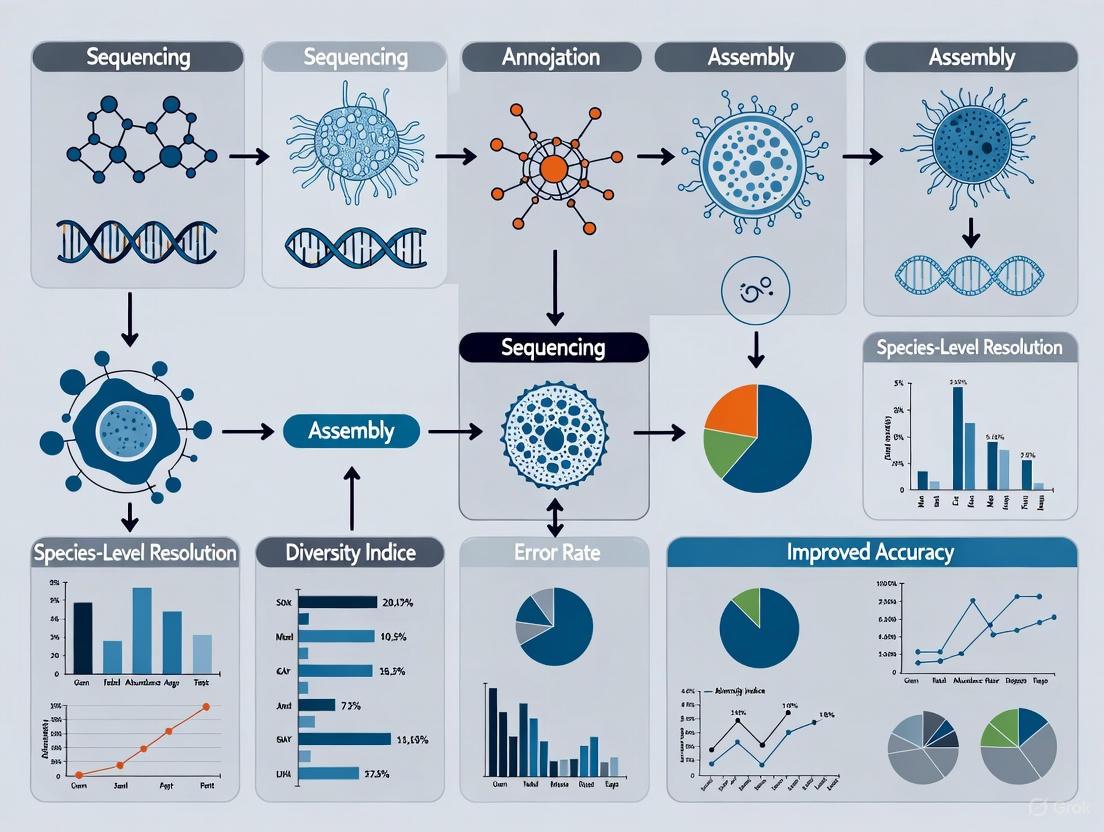

Experimental Workflow Visualization

Research Reagent Solutions

Table 3: Essential Research Materials for Overcoming V3-V4 Limitations

| Reagent/Resource | Function | Application Note |
|---|---|---|
| Zymo Mock Microbial Community [10] | Positive control for DNA extraction, PCR, and sequencing efficiency | Validates species-level classification performance in your specific experimental setup |
| HostZero DNA Extraction Kit [10] | Host DNA depletion for microbiome studies | Increases microbial DNA proportion (50-90%) in host-rich samples like tracheal aspirates |
| GreenGenes2 & SILVA Databases [7] | Taxonomic classification references | Use curated versions with standardized nomenclature for consistent classification |
| ONT R10.4.1 Flow Cells [10] | High-accuracy long-read sequencing | Provides ~99% read accuracy for full-length 16S sequencing |
| Emu Bioinformatics Pipeline [10] | Taxonomic classification of ONT FL-16S data | Specifically designed for long-read, error-prone sequences; uses curated database |

Frequently Asked Questions (FAQs) and Troubleshooting Guides

FAQ 1: What fundamentally limits 16S rRNA gene sequencing from achieving reliable species-level resolution?

The limitation stems from the genetic characteristics of the 16S rRNA gene and the technical constraints of common sequencing approaches.

- Genetic Conservation: The 16S rRNA gene is a highly conserved "housekeeping" gene, meaning its sequence is very similar across different species within the same genus. Short-read sequencing of only one or two hypervariable regions (e.g., V3-V4) lacks sufficient informative sites to distinguish between these closely related species [4] [12].

- Fixed Threshold Pitfalls: Traditional bioinformatics methods often use a fixed sequence similarity threshold (e.g., 97% for Operational Taxonomic Units - OTUs) to classify species. However, the actual 16S rRNA gene sequence divergence among species is not uniform; some species have identical 16S sequences (e.g., Escherichia and Shigella), while others show high intra-species variation, making a single fixed threshold unreliable for accurate species-level classification [4] [13].

Troubleshooting Guide: Overcoming 16S Limitations

| Challenge | Solution | Principle | Key Consideration |
|---|---|---|---|
| Short-Read Resolution | Use full-length 16S rRNA gene sequencing (e.g., PacBio, Nanopore) [4] [12]. | Provides the entire gene sequence, maximizing informative sites for discrimination. | Higher cost and longer sequencing time compared to partial gene sequencing [4]. |
| Primer Bias & Off-target Amplification | Employ micelle PCR (micPCR) for amplification [12]. | Compartmentalizes single DNA molecules to prevent chimera formation and PCR competition, providing more robust and accurate profiles. | Requires optimization of emulsion-based PCR protocols. |
| Fixed Threshold Misclassification | Implement flexible, species-specific classification thresholds [4]. | Uses dynamic similarity cutoffs tailored to the genetic variation of each specific species. | Requires a curated, high-quality reference database to define accurate thresholds. |

FAQ 2: How can I achieve species-level identification in samples dominated by host DNA, such as tissue or saliva?

Samples with high host content (>90% human DNA) present a major challenge because sequencing depth is wasted on host reads, drastically reducing microbial signal [14]. Solutions involve either depleting host DNA or using methods that selectively enrich for microbial sequences.
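The cost of host contamination is easy to quantify with back-of-the-envelope arithmetic, sketched here. The sequencing depths are hypothetical, and the calculation assumes host and microbial DNA are sequenced in proportion to their share of the library.

```python
import math


def microbial_reads(total_reads: int, host_percent: float) -> int:
    """Expected microbial reads when host_percent of the reads are host-derived."""
    if not 0 <= host_percent <= 100:
        raise ValueError("host_percent must be between 0 and 100")
    return round(total_reads * (100 - host_percent) / 100)


def reads_required(target_microbial_reads: int, host_percent: float) -> int:
    """Total reads needed to expect the target number of microbial reads."""
    if not 0 <= host_percent < 100:
        raise ValueError("host_percent must be in [0, 100)")
    return math.ceil(target_microbial_reads * 100 / (100 - host_percent))


# Hypothetical run of 10 million reads
for host in (90, 99):
    print(host, microbial_reads(10_000_000, host),
          reads_required(1_000_000, host))
```

Moving from 90% to 99% host DNA cuts the microbial yield tenfold at a fixed depth, which is why depletion or selective enrichment becomes necessary rather than simply sequencing deeper.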

Troubleshooting Guide: Working with High-Host-Content Samples

| Challenge | Solution | Principle | Key Consideration |
|---|---|---|---|
| Low Microbial Signal | Use a reduced-representation metagenomic method like 2bRAD-M [14]. | Leverages higher restriction enzyme site density in microbial genomes vs. human genome to preferentially generate and sequence microbial tags. | Does not require prior host depletion; allows for concurrent host and microbiome analysis. |
| Host DNA Depletion | Apply pre-extraction methods (e.g., selective lysis) or post-extraction methods (e.g., methylation-based depletion) [14]. | Physically or enzymatically removes host DNA before or after extraction to increase the relative proportion of microbial DNA. | Can cause microbial DNA loss, may not work on frozen samples, and can skew microbial community representation [14]. |
| Low Biomass & Contamination | Include negative and positive controls in every experiment [15]. | Allows for identification and computational subtraction of contaminating DNA sequences introduced from reagents and the environment. | Critical for accurate detection in low-biomass samples where contamination can constitute most of the signal [15]. |

FAQ 3: What advanced analytical approaches can push resolution beyond the species level to strains and subspecies?

Strain and subspecies-level resolution is crucial as these can exhibit distinct functional characteristics and host interactions [16]. This typically requires moving beyond 16S rRNA sequencing to shotgun metagenomics and advanced computational tools.

- The Limitation of 16S: The 16S rRNA gene is generally insufficient for strain-level resolution because strains of the same species have identical or nearly identical 16S sequences [16].

- Shotgun Metagenomics: This method sequences all the DNA in a sample, allowing access to the entire genetic content of the microbial community, including genes beyond the 16S rRNA gene.

- The "Panhashome" Method: This is a sketching-based bioinformatics approach for rapid subspecies and species quantification from metagenomic data. It identifies genes that drive functional differences between subspecies within a species, known as Operational Subspecies Units (OSUs) [16].

Troubleshooting Guide: Achieving Subspecies Resolution

| Challenge | Solution | Principle | Key Consideration |
|---|---|---|---|
| Strain-Level Discrimination | Perform shotgun metagenomic sequencing and analyze with tools like panhashome [16]. | Identifies variations in gene content (the "pan-genome") between closely related strains to define subspecies. | Requires high-quality, deep sequencing data and sophisticated computational resources. |
| Lack of Reference Databases | Utilize comprehensive genomic catalogs like the HuMSub catalog [16]. | Provides a curated reference of human gut microbiota at the subspecies level for accurate annotation. | Existing databases are still being populated for various body sites and environments. |
| Clinical Diagnostic Speed | Adopt a full-length 16S micPCR/Nanopore workflow [12]. | Combines the accuracy of micPCR with the long reads of nanopore sequencing for rapid, species-level results (24hr turnaround). | While excellent for species-level ID, strain-level resolution may still require metagenomics. |

Experimental Protocols for Enhanced Resolution

Protocol 1: Full-Length 16S rRNA Gene Sequencing with micPCR and Nanopore

This protocol is adapted from [12] and is designed for rapid, species-level identification from clinical samples with high accuracy.

Key Research Reagent Solutions

| Item | Function | Specification |
|---|---|---|
| LongAmp Taq 2x MasterMix | Efficient amplification of long, full-length 16S rRNA gene amplicons. | New England Biolabs |
| 16S_V1-V9 Primers | Amplifies the nearly complete 16S rRNA gene. Include universal sequence tails for a two-step PCR [12]. | Forward: 5’-TTT CTG TTG GTG CTG ATA TTG CAG RGT TYG ATY MTG GCT CAG-3’ |
| Nanopore Barcodes | Allows for multiplexed sequencing of samples. | Part of the cDNA-PCR sequencing kit SQK-PCB114.24 (Oxford Nanopore Technologies) |
| Synechococcus DNA | Serves as an Internal Calibrator (IC) for absolute quantification of 16S rRNA gene copies. | ATCC 27264D-5 |
| Flongle Flow Cell | Provides a cost-effective and rapid sequencing platform for individual or small batches of samples. | Oxford Nanopore Technologies |

Detailed Workflow Diagram

The following diagram illustrates the optimized experimental workflow for full-length 16S sequencing.

Methodology Steps:

- DNA Extraction & Quantification: Extract DNA from the sample (e.g., using MagNA Pure 96 system). Quantify the total number of 16S rRNA gene copies using qPCR [12].
- Spike Internal Calibrator: Add a known quantity (e.g., 1,000 copies) of Synechococcus 16S rRNA gene to the DNA extract. This allows for absolute quantification and correction for background contamination [12].
- First micPCR Round:
  - Primers: Use primers 16SV1-V9F and 16SV1-V9R.
  - Reaction: Set up a micelle-based PCR using LongAmp Taq 2x MasterMix.
  - Cycling Conditions: 95°C for 2 min; 25 cycles of (95°C for 15s, 55°C for 30s, 65°C for 75s); final extension at 65°C for 10 min [12].
- Amplicon Purification: Purify the resulting amplicons using AMPure XP beads at a 1:0.6 ratio [12].
- Second micPCR Round:
  - Reaction: Use the purified amplicons as template, nanopore barcodes, and LongAmp Taq 2x MasterMix.
  - Cycling Conditions: 95°C for 2 min; 25 cycles with a touch-down annealing (first 10 cycles: 15s at 95°C, 30s at 50-55°C, 75s at 65°C); final extension at 65°C for 10 min [12].
- Sequencing & Analysis: Pool barcoded libraries and load onto a Flongle Flow Cell for sequencing on a MinION device. Perform basecalling and analyze data using the Genome Detective platform or similar [12].
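The role of the internal calibrator in the workflow above can be sketched as a simple scaling: reads assigned to each taxon are converted to 16S copies using the ratio of spiked copies to calibrator reads. This sketch assumes every template amplifies with equal efficiency and uses invented read counts; it is not the analysis code of the cited workflow.

```python
def absolute_copies(read_counts: dict, calibrator: str,
                    calibrator_copies: float) -> dict:
    """Convert per-taxon read counts to estimated 16S rRNA gene copies.

    read_counts       taxon -> reads, including the spiked calibrator
    calibrator        name of the calibrator taxon in read_counts
    calibrator_copies number of calibrator 16S copies added to the extract
    """
    ic_reads = read_counts.get(calibrator, 0)
    if ic_reads <= 0:
        raise ValueError("no calibrator reads detected; cannot quantify")
    copies_per_read = calibrator_copies / ic_reads
    return {taxon: reads * copies_per_read
            for taxon, reads in read_counts.items() if taxon != calibrator}


# Hypothetical sample spiked with 1,000 Synechococcus 16S copies
reads = {"Synechococcus": 500,
         "Staphylococcus aureus": 5_000,
         "Escherichia coli": 250}
estimates = absolute_copies(reads, "Synechococcus", 1_000)
print(estimates)
```

Running the same calculation on a no-template control gives per-taxon background copy numbers that can be compared against the sample, which is the basis for the contamination correction mentioned in the spike-in step.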

Protocol 2: 2bRAD-M for Host-Rich Microbiome Analysis

This protocol, based on [14], is designed for high-resolution microbiome profiling in samples with high host DNA content without the need for physical host depletion.

Detailed Workflow Diagram

The diagram below outlines the core steps of the 2bRAD-M method, from sample to analysis.

Methodology Explanation:

- Principle: The 2bRAD-M method exploits the fact that microbial genomes have a much higher density of genes, and therefore of restriction enzyme sites, than the human genome. A type IIB restriction enzyme cuts genomic DNA on both sides of each recognition site, generating short, uniform-length tags (e.g., 32 bp for BcgI) that are representative of the entire genome [14].

- Selective Enrichment: Because microbial genomes generate far more of these tags per unit of DNA, sequencing these short tags effectively enriches for microbial sequences even when host DNA makes up as much as 99% of the sample [14].

- Bioinformatic Analysis: The sequenced tags are then mapped to an expanded reference database that includes genomes from GTDB and EnsemblFungi to achieve comprehensive taxonomic profiling at the species level [14].
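The site-density idea behind the method can be explored in silico by counting recognition sites in a sequence. The sketch below assumes BcgI as the type IIB enzyme (recognition motif CGA-N6-TGC, searched on both strands); the enzyme choice and the test sequences are assumptions for illustration, not details confirmed by the protocol above.

```python
import re

# BcgI motif CGA-N6-TGC and its reverse complement GCA-N6-TCG.
# Zero-width lookaheads let overlapping sites be counted.
_BCGI_PATTERNS = (
    re.compile(r"(?=CGA[ACGT]{6}TGC)"),
    re.compile(r"(?=GCA[ACGT]{6}TCG)"),
)


def count_bcgi_sites(seq: str) -> int:
    """Number of BcgI recognition sites on either strand of seq."""
    seq = seq.upper()
    return sum(len(p.findall(seq)) for p in _BCGI_PATTERNS)


def sites_per_kb(seq: str) -> float:
    """Recognition-site density per 1,000 bp."""
    if not seq:
        raise ValueError("empty sequence")
    return count_bcgi_sites(seq) / len(seq) * 1000.0


# Synthetic sequence with one site on each strand
forward_site = "CGA" + "ACGTAC" + "TGC"
reverse_site = "GCA" + "TTTTTT" + "TCG"
toy = "AAAA" + forward_site + "TTTT" + reverse_site + "AA"
print(count_bcgi_sites(toy), round(sites_per_kb(toy), 1))
```

Applying `sites_per_kb` to a bacterial genome and to a human chromosome would give a direct, if rough, view of how many tags each contributes per unit of DNA.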

Quantitative Data Comparison of Methodologies

The following table summarizes key performance metrics of different sequencing methods as benchmarked in recent studies, highlighting their capabilities for species-level resolution.

Table 1: Performance Benchmarking of Microbiome Sequencing Methods in High-Host-Content Conditions [14]

| Method | Target | Host DNA Context | Species-Level AUPR* | Species-Level L2 Similarity* | Key Advantage |
|---|---|---|---|---|---|
| 2bRAD-M | Genomic Tags | 90% | >93% | >93% | No host depletion needed; high resolution in HoC. |
| 2bRAD-M | Genomic Tags | 99% | High | High | Maintains performance in extreme HoC. |
| WMS | Whole Genome | 90% | High | High (but lower than 2bRAD-M at 99% HoC) | Considered gold standard; functional potential. |
| 16S (V4-V5) | Single Gene Region | 90% / 99% | Low | Low | Cost-effective; prone to off-target amplification in HoC. |
| Full-Length 16S (Nanopore) | Full 16S Gene | N/A | Matches WGS profiles [12] | N/A | Rapid turnaround (24h); excellent species discrimination. |

*AUPR (Area Under the Precision-Recall Curve) and L2 Similarity are metrics for identification accuracy and abundance estimation fidelity, respectively. Higher values are better [14]. WMS: whole-metagenome shotgun sequencing.

Table 2: Thresholds for Taxonomic Classification in 16S rRNA Gene Analysis

| Taxonomic Level | Traditional Fixed Threshold | Modern Flexible Approach | Note |
|---|---|---|---|
| Species | 97% or 98.7% similarity | 80% to 100%, species-specific [4] | Flexible thresholds account for variable intra- and inter-species diversity. |
| Genus | 95% similarity | Clear thresholds for 98.38% of genera [4] | More reliable than species-level with fixed thresholds. |
| Subspecies (OSU) | Not applicable | Defined by panhashome gene content analysis [16] | Requires shotgun metagenomic data, not 16S rRNA sequencing. |

FAQs on Microbial Databases and the Tree of Life

Q1: How do newly discovered microorganisms, like Solarion, directly impact existing reference databases?

The discovery of a new organism such as Solarion arienae necessitates a fundamental restructuring of our taxonomic frameworks [17]. This single-celled eukaryote did not fit into any known major lineages (supergroups) of eukaryotic life [18]. Its unique genetic and cellular makeup led researchers to establish both a new phylum (Caelestes) and a new eukaryotic supergroup (Disparia) to accommodate it [17]. For reference databases, this means they must be updated to include this new branch on the tree of life. Furthermore, the unique mitochondrial genes found in Solarion provide new reference points for understanding ancient evolutionary pathways, forcing databases to expand beyond just taxonomic names to include these novel genetic sequences [17] [19].

Q2: What is the specific genetic evidence from Solarion that informs our understanding of early mitochondrial evolution?

Solarion arienae contains a critical piece of genetic evidence: the secA gene within its mitochondrial DNA [18]. This gene is part of a protein translocation system and is a molecular relic from the ancient bacterial ancestor that evolved into mitochondria [19]. In the endosymbiotic event that created eukaryotes, an ancestral cell engulfed a bacterium, which later became the energy-producing mitochondrion [18]. Over billions of years, almost all eukaryotes lost the secA gene from their mitochondrial genomes. Solarion's retention of this gene provides direct genetic insight into the machinery of the proto-mitochondria, offering a "rare window" into the earliest stages of complex cellular evolution [17] [18].

Q3: Why is a fixed similarity threshold (e.g., 97-98.5%) problematic for species-level classification in microbiome studies?

Using a fixed threshold for species-level classification, such as 97-98.5% similarity for the 16S rRNA gene, is a major source of misclassification because genetic divergence is not uniform across all microbial species [4]. This "one-size-fits-all" approach fails to account for the natural biological variation in evolutionary rates. For instance, some distinct species may share identical 16S sequences (e.g., Escherichia and Shigella), while other species exhibit substantial intraspecies diversity where different strains share less than 97% similarity [4]. Relying on a fixed threshold in these cases leads to false positives (lumping different species together) or false negatives (splitting one species into many) [4]. Advanced pipelines now establish flexible, species-specific thresholds that range from 80% to 100% to resolve these issues [4].

Q4: What are the key quality issues affecting microbial genome sequences in public databases? Public databases suffer from significant quality and completeness issues, which undermine the reliability of microbiome research. A survey of sequences derived from authenticated ATCC strains in two major databases (NCBI and Ensembl) revealed that most available genomes are incomplete drafts [20]. The table below summarizes the specific issues:

Table: Quality Issues with ATCC Strain Genomes in Public Databases

| Database | Total ATCC Genomes Surveyed | Incomplete Drafts (Contigs/Scaffolds) | Complete Genomes | Genomes with Plasmids |
|---|---|---|---|---|
| Microbial Genomes (NCBI) | 1,807 | 72.3% | 27.7% | 10.7% |
| Ensembl Bacteria | 715 | 72.9% | 27.1% | Data Not Available |

The primary challenges include a lack of complete, circularized chromosomes and plasmids, the use of non-authenticated or poorly characterized source cultures, and the application of non-standardized sequencing and assembly methods [20]. These factors contribute to inaccuracies in downstream analyses.

Q5: How can full-length 16S rRNA sequencing from PacBio be used to optimize a reference database for Illumina data? Full-length 16S rRNA sequencing data generated by PacBio's HiFi (high-fidelity) reads can be processed with denoising tools like DADA2 to generate highly accurate Amplicon Sequence Variants (ASVs) that provide single-nucleotide resolution [21] [8]. These full-length ASVs can then be assigned a taxonomy using a reference database (e.g., RDP) and used to construct a new, optimized, study-specific reference database [21]. When this custom database is used to classify shorter reads from Illumina (e.g., V3-V4 regions), it significantly increases classification accuracy and enhances the discovery of microbial biomarkers [21]. This method effectively translates the superior resolution of long-read sequencing to improve the analysis of more cost-effective, short-read data.

Troubleshooting Guide: Common Experimental Issues

Issue 1: Inability to Achieve Species-Level Resolution with V3-V4 16S rRNA Data

- Problem: Your 16S rRNA sequencing analysis, targeting the V3-V4 regions, is unable to reliably distinguish between closely related bacterial species, leading to ambiguous taxonomic assignments.

- Solution:

- Use a Flexible Threshold Pipeline: Implement a specialized bioinformatics pipeline, such as the asvtax tool, which uses dynamic, species-specific classification thresholds instead of a single fixed cutoff [4]. This accounts for the variable evolutionary rates of the 16S gene across different taxa.

- Leverage a Custom Database: Construct or use a non-redundant ASV database that is specifically tailored to the V3-V4 regions and enriched with sequences from your target environment (e.g., the human gut) [4]. This improves coverage for hard-to-classify and uncultured organisms.

- Validate with Long-Read Data: If possible, use PacBio full-length 16S sequencing on a subset of samples to create a high-quality, study-specific reference database, which can then be used to train a classifier for your Illumina V3-V4 data [21].

Issue 2: Reference Database is Missing or Misclassifying a Novel Microbial Lineage

- Problem: Your metagenomic or phylogenetic analysis reveals a cluster of sequences that do not confidently map to any known taxonomic group in standard databases, suggesting a novel discovery.

- Solution:

- Conduct Deep Phylogenetic Analysis: Follow the methodology used for Solarion [17]. Combine microscopy, single-cell genomics, and phylogenetic analysis of multiple genes to determine the organism's evolutionary relationship to known lineages.

- Interrogate Environmental Databases: Search public environmental DNA (eDNA) databases to see if similar sequences have been detected elsewhere but not yet classified. Solarion was found to be both rare and widespread in marine sediments upon such a search [19].

- Update Your Reference Metadata: Use tools like the "Set Up Microbial Reference Database" function in bioinformatics suites (e.g., CLC Microbial Genomics Module) to incorporate new taxonomic metadata and custom sequences into your analytical workflow [22].

Issue 3: Low Quality or Incomplete Genomes in Public Databases are Affecting Analysis

- Problem: Your reference-based assembly or taxonomic profiling is yielding poor results due to the fragmented and incomplete nature of genomes in public repositories.

- Solution:

- Source Authenticated Materials: Whenever possible, use genomic data derived from authenticated, traceable biological materials, which ensures the identity and purity of the source culture [20].

- Prioritize Complete Genomes: Filter for complete, circularized genomes and chromosomes in your reference set, as these are of higher quality and allow for more accurate analysis, even though they are less common [20].

- Employ a Hybrid Assembly Workflow: For your own sequencing projects, use a standardized end-to-end workflow that combines long-read and short-read sequencing technologies to generate high-quality, complete genome assemblies, including plasmids [20].
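
Filtering a reference set down to complete genomes can be sketched as below. The table is a made-up stand-in for a genome metadata file; the `assembly_level` column and the `Complete Genome` value are modeled on NCBI assembly summary files but should be treated as assumptions and checked against the file you actually download. The accessions and organism names are placeholders.

```python
import csv
import io

# Simplified, invented stand-in for a tab-separated genome metadata table.
TABLE = (
    "accession\torganism\tassembly_level\n"
    "ACC_0001\tOrganism one\tComplete Genome\n"
    "ACC_0002\tOrganism two\tContig\n"
    "ACC_0003\tOrganism three\tScaffold\n"
    "ACC_0004\tOrganism four\tComplete Genome\n"
)


def select_complete(handle, level_column="assembly_level", wanted="Complete Genome"):
    """Keep only rows whose assembly level matches the wanted value."""
    reader = csv.DictReader(handle, delimiter="\t")
    return [row for row in reader if row[level_column] == wanted]


complete = select_complete(io.StringIO(TABLE))
print([row["accession"] for row in complete])
```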

Experimental Protocols & Workflows

Protocol 1: Methodology for Discovering and Characterizing a Novel Eukaryotic Microbe

This protocol is based on the groundbreaking study that discovered Solarion arienae and established the new supergroup Disparia [17] [18].

- Sample Collection & Cultivation: Collect environmental samples (e.g., marine water and sediment). Establish and maintain long-term laboratory cultures of microorganisms from these samples.

- Microscopy: Regularly observe cultures under a microscope. Note any unusual or previously overlooked morphological characteristics in the microbial community.

- Single-Cell Isolation: Isolate individual cells of interest using techniques such as micropipetting or cell sorting.

- Genome Sequencing & Assembly: Sequence the entire genome of the isolated organism using a combination of sequencing platforms to ensure high coverage and accuracy.

- Phylogenomic Analysis:

- Identify a set of highly conserved, universal marker genes from the sequenced genome.

- Build a phylogenetic tree including these genes from a broad representation of known eukaryotic supergroups.

- Statistically assess (e.g., with bootstrapping) where the new organism places on the tree. If it does not robustly cluster with any known supergroup, it may represent a new lineage.

- Mitochondrial Gene Analysis: Specifically assemble and annotate the mitochondrial genome. Search for and analyze the presence of rare, ancestral genes like secA that are typically lost in other eukaryotes.

- Taxonomic Proposal: Based on the genetic distinctiveness and phylogenetic results, formally propose new taxonomic ranks (e.g., new phylum, new supergroup) as required.

Protocol 2: Workflow for Optimizing a 16S rRNA Reference Database Using PacBio Data

This methodology details how to use long-read sequencing to improve taxonomic classification for short-read studies [21].

- Sample Preparation & Sequencing:

- Extract microbial DNA from your sample set (e.g., oral or gut microbiome).

- Perform full-length 16S rRNA gene amplification and sequence on the PacBio platform to generate HiFi reads.

- On the same DNA samples, perform V3-V4 region amplification and sequence on the Illumina platform.

- PacBio Data Processing:

- Process the PacBio HiFi reads using the DADA2 pipeline to infer exact amplicon sequence variants (ASVs).

- Assign taxonomy to these full-length ASVs using a standard reference database (e.g., RDP or SILVA).

- Database Optimization:

- Use the confidently classified, full-length ASVs to construct a new, study-specific reference database.

- Optionally, trim the phylogenetic tree of this new database at various identity thresholds to reduce redundancy and computational demand.

- Classifier Training & Application:

- Train a taxonomic classifier (e.g., in QIIME2) on this optimized reference database.

- Use this trained classifier to assign taxonomy to the Illumina-derived V3-V4 reads.

- Validation:

- Compare the classification results (taxonomic richness, evenness, and resolution) against those obtained using standard, large databases.

- Use tools like LEfSe to assess the improvement in biomarker discovery efficiency.
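
When a full-length reference is used to classify V3-V4 reads, it is common to cut the reference down to the same amplicon first. The sketch below does this by exact primer matching with IUPAC degenerate bases. The 341F/805R primer sequences are an assumption (the protocol above does not name primers), `extract_amplicon` is a hypothetical helper, and the toy sequence is invented. Dedicated primer-trimming tools also tolerate mismatches, which this sketch does not.

```python
import re

# IUPAC nucleotide codes expanded to regular-expression character classes.
IUPAC = {
    "A": "A", "C": "C", "G": "G", "T": "T",
    "R": "[AG]", "Y": "[CT]", "S": "[CG]", "W": "[AT]",
    "K": "[GT]", "M": "[AC]", "B": "[CGT]", "D": "[AGT]",
    "H": "[ACT]", "V": "[ACG]", "N": "[ACGT]",
}
COMPLEMENT = str.maketrans("ACGTRYSWKMBDHVN", "TGCAYRSWMKVHDBN")

# Assumed V3-V4 primer pair; substitute the primers used in your own study.
FWD_PRIMER = "CCTACGGGNGGCWGCAG"      # 341F
REV_PRIMER = "GACTACHVGGGTATCTAATCC"  # 805R


def reverse_complement(seq):
    """Reverse-complement a sequence, including IUPAC ambiguity codes."""
    return seq.translate(COMPLEMENT)[::-1]


def primer_regex(primer):
    """Translate a degenerate primer into a regular expression."""
    return "".join(IUPAC[base] for base in primer)


def extract_amplicon(seq, fwd=FWD_PRIMER, rev=REV_PRIMER):
    """Return the region between the primer sites, or None if either site is missing."""
    pattern = re.compile(
        primer_regex(fwd) + "(.+?)" + primer_regex(reverse_complement(rev))
    )
    match = pattern.search(seq.upper())
    return match.group(1) if match else None


# Invented toy "full-length" sequence: flank + forward site + insert + reverse site + flank.
toy = "AAAA" + "CCTACGGGAGGCAGCAG" + "TTTTGGGG" + "GGATTAGATACCCTGGTAGTC" + "CCCC"
print(extract_amplicon(toy))
```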

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials and Tools for Advanced Microbiome Research

| Item | Function & Application |
|---|---|
| PacBio HiFi Reads | Provides highly accurate long-read sequencing data, ideal for generating full-length 16S rRNA sequences and resolving complex genomic regions [21] [8]. |
| DADA2 Algorithm | A key bioinformatics tool for processing sequencing data that models and corrects Illumina-sequenced amplicon errors, resolving amplicon sequence variants (ASVs) that differ by as little as one nucleotide [21]. |
| Authenticated Microbial Strains | Certified microbial cultures from repositories like ATCC provide traceable and reliable genomic material, which is crucial for generating high-quality reference genomes and validating findings [20]. |
| Flexible Threshold Pipeline (e.g., ASVtax) | A specialized bioinformatics tool that applies dynamic, species-specific identity thresholds for taxonomic classification, dramatically improving species-level resolution from V3-V4 16S data [4]. |
| Hybrid Assembly Workflow | A methodology that combines the high accuracy of short-read sequencing (Illumina) with the long-range continuity of long-read sequencing (PacBio/Oxford Nanopore) to produce complete, closed microbial genomes [20]. |

Next-Generation Pipelines and Technologies for Precision Microbiome Profiling

Welcome to the technical support center for advanced microbiome bioinformatics. This resource is dedicated to supporting researchers, scientists, and drug development professionals in implementing cutting-edge methods for improving species-level resolution in microbiome data research. The center focuses specifically on the ASVtax pipeline and the development of customized reference databases, which address critical limitations of traditional fixed-threshold taxonomic classification methods.

Traditional 16S rRNA gene sequencing, particularly of the V3-V4 hypervariable regions, has been largely confined to genus-level identification due to the use of fixed similarity thresholds (typically 98.5-98.7%) for species classification [23] [24]. This approach causes significant misclassification because optimal discrimination thresholds actually vary substantially among different bacterial species, ranging from 80% to 100% similarity [23]. The ASVtax pipeline implements flexible classification thresholds that are specific to individual taxonomic groups, significantly improving species-level identification accuracy for complex microbial communities like the human gut microbiome [23] [24].

Troubleshooting Guides

Common Pipeline Implementation Issues

Table 1: Frequent ASVtax Pipeline Errors and Solutions

| Error Description | Potential Causes | Recommended Solutions |
|---|---|---|
| Low classification rate for new ASVs | Insufficient database coverage of target microbiome; Overly stringent default thresholds | Supplement with study-specific sequences; Verify threshold parameters for target taxa |
| Inconsistent taxonomy across samples | Variable sequence quality; Incomplete reference data | Implement rigorous quality control; Standardize taxonomic nomenclature across databases |
| Over-assignment of rare taxa | Database contamination; Inappropriate threshold settings | Apply decontamination protocols; Validate with negative controls |
| Discrepancies between classification tools | Different algorithmic approaches; Inconsistent database versions | Use consensus classification approaches; Maintain consistent database versions |

Database Construction and Curation Problems

Table 2: Custom Database Development Issues

| Problem Area | Technical Challenges | Resolution Strategies |
|---|---|---|
| Database incompleteness | Limited reference sequences for target taxa; Gaps in understudied lineages | Integrate multiple sources (SILVA, NCBI, LPSN); Add study-specific sequences |
| Taxonomic inconsistencies | Conflicting nomenclature across sources; Deprecated classifications | Implement standardized curation pipelines; Use authoritative taxonomy sources |
| Sequence quality issues | Variable lengths; Ambiguity bases; Mislabeled sequences | Apply rigorous filtering (e.g., <2% ambiguity bases); Remove short sequences |
| Region-specific biases | Primer mismatches; Hypervariable region selection | Extract specific regions (e.g., V3-V4 positions 341-806) from full-length references |

Frequently Asked Questions (FAQs)

Q1: What are the specific advantages of ASVtax over traditional OTU-based methods?

ASVtax provides several key advantages: (1) It employs flexible species-level thresholds (80-100%) tailored to specific taxonomic groups rather than a fixed cutoff, resolving misclassification between closely related species; (2) It uses a specialized V3-V4 region database that integrates multiple authoritative sources and study-specific sequences; (3) It achieves single-nucleotide resolution through Amplicon Sequence Variants (ASVs) rather than Operational Taxonomic Units (OTUs) with arbitrary similarity thresholds [23] [24].

Q2: How does database size affect taxonomic resolution, and why are customized databases recommended?

Paradoxically, as database size increases, species-level taxonomic resolution can actually decrease due to rising interspecies sequence collisions [25]. Comprehensive databases contain more sequences from taxa not present in your study environment, potentially leading to false assignments. Customized databases tailored to specific taxonomic groups and geographic regions improve assignment accuracy by reducing irrelevant sequences, though they may initially increase unassigned sequences until enriched with relevant local barcodes [26].
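
The collision effect can be made concrete by counting how many species in a database share an identical region sequence with at least one other species. The sketch below is a toy illustration: the species labels and four-base "sequences" are invented, and `collision_rate` is a hypothetical helper, not a metric defined in [25].

```python
from collections import defaultdict


def collision_rate(records):
    """Fraction of species whose region sequence is identical to another species' sequence.

    records: list of (species, region_sequence) tuples.
    """
    species_by_seq = defaultdict(set)
    for species, seq in records:
        species_by_seq[seq].add(species)
    all_species = {species for species, _ in records}
    ambiguous = set()
    for members in species_by_seq.values():
        if len(members) > 1:
            ambiguous |= members
    return len(ambiguous) / len(all_species)


# Invented toy databases: the larger one adds a species that collides with sp1.
small_db = [("sp1", "AAAC"), ("sp2", "AAGC")]
large_db = small_db + [("sp3", "AAAC"), ("sp4", "TTTT")]

print(collision_rate(small_db), collision_rate(large_db))
```

Here the small database resolves every species, while adding more references makes half of the species indistinguishable in the target region.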

Q3: What methods can improve taxonomic assignment when reference databases are incomplete?

When databases are incomplete: (1) Implement consensus taxonomy approaches like CONSTAX that combine multiple classifiers (RDP, UTAX, SINTAX) to improve assignment power [27]; (2) Add local barcode sequences specifically from your study region/taxa - even small additions (e.g., 116 new barcodes increasing database by 0.04%) can improve resolution for 0.6-1% of ASVs [26]; (3) Apply abundance-based reassignment methods that preserve rare taxa information during ambiguous taxon resolution [28].
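
The idea behind consensus assignment can be sketched as a rank-by-rank majority vote across classifiers. This is a simplified illustration and not CONSTAX's actual rule set: the `consensus` function, the `min_votes` parameter, and the taxon names are assumptions made for the example.

```python
from collections import Counter

RANKS = ("family", "genus", "species")


def consensus(assignments, min_votes=2):
    """Walk down the ranks, keeping a name only while enough classifiers agree on it.

    assignments: one dict per classifier, mapping rank -> name (or None if unassigned).
    """
    result = {}
    for rank in RANKS:
        votes = Counter(a.get(rank) for a in assignments if a.get(rank))
        if not votes:
            break
        name, count = votes.most_common(1)[0]
        if count < min_votes:
            break  # stop at the first rank without sufficient agreement
        result[rank] = name
    return result


# Invented outputs from three classifiers for a single ASV.
agree = [
    {"family": "Fam1", "genus": "Gen1", "species": "Gen1 sp1"},
    {"family": "Fam1", "genus": "Gen1", "species": None},
    {"family": "Fam1", "genus": "Gen1", "species": "Gen1 sp1"},
]
disagree = [
    {"family": "Fam1", "genus": "Gen1", "species": "Gen1 sp1"},
    {"family": "Fam1", "genus": "Gen1", "species": "Gen1 sp2"},
    {"family": "Fam1", "genus": "Gen1", "species": None},
]

print(consensus(agree))
print(consensus(disagree))
```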

Q4: How do we handle ambiguous taxa that are identified to different taxonomic resolutions?

Ambiguous taxa resolution requires careful strategies: (1) For site-level comparisons, retain children and delete parents to preserve richness; (2) For study-area scale analyses, reassign parents to common children to maintain abundance patterns; (3) Avoid methods that simply merge all children with parents, as this significantly reduces apparent richness and distorts ecological patterns [28]. The choice of method significantly impacts estimates of projected taxa richness, particularly for conservation applications.
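
One reading of the first and third strategies is sketched below to show their effect on richness. The taxon names and counts are invented, and both helper functions are hypothetical simplifications rather than the procedures evaluated in [28].

```python
def retain_children_delete_parents(counts, parent_of):
    """Drop any parent-level record that has at least one child present in the sample."""
    parents_with_children = {parent_of[t] for t in counts if t in parent_of}
    return {t: n for t, n in counts.items() if t not in parents_with_children}


def merge_children_with_parents(counts, parent_of):
    """Collapse every child record into its parent, summing the counts."""
    merged = {}
    for taxon, n in counts.items():
        key = parent_of.get(taxon, taxon)
        merged[key] = merged.get(key, 0) + n
    return merged


# Invented sample: "Genus1" was recorded at genus level and for two of its species.
counts = {"Genus1": 5, "Genus1 sp1": 3, "Genus1 sp2": 2, "Genus2": 4}
parent_of = {"Genus1 sp1": "Genus1", "Genus1 sp2": "Genus1"}

kept = retain_children_delete_parents(counts, parent_of)
merged = merge_children_with_parents(counts, parent_of)
print(len(kept), len(merged))  # richness under each strategy
```

In this toy sample, retaining children keeps three taxa but discards the genus-level counts, whereas merging keeps all counts but reduces richness to two taxa.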

Q5: What are the key considerations when selecting hypervariable regions for species-level identification?

For human gut microbiome studies targeting Firmicutes and Bacteroidetes, the V3-V4 regions have been recognized as the optimal compromise between resolution, cost, and throughput [23] [24]. While full-length 16S sequencing provides superior species-level identification, V3-V4 regions offer practical advantages including reduced costs, higher throughput, smaller sample requirements, and shorter sequencing times (approximately 2-3 times faster than full-length) [23].

Experimental Protocols & Methodologies

ASVtax Database Construction Protocol

The ASVtax pipeline employs a robust methodology for constructing specialized databases:

Primary Database Construction: Collect seed sequences from authoritative sources including:

- LPSN (List of Prokaryotic names with Standing in Nomenclature) - using "validly published" species with "correct name" status

- NCBI RefSeq database - curated 16S sequences from bacterial and archaeal type materials

- This yields approximately 38,815 trusted reference sequences representing 18,287 bacterial and archaeal species/subspecies [23] [24]

Database Expansion and Curation:

- Incorporate quality-filtered sequences from SILVA SSU database

- Remove short sequences (<1,200 bp for bacteria, <900 bp for archaea)

- Exclude low-quality sequences (>2% ambiguity bases)

- Extract V3-V4 region sequences (positions 341-806) consistently

- Supplement with 1,082 human gut samples to improve coverage of uncultured organisms [23]
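
The length and ambiguity filters listed above can be sketched as a single predicate. The thresholds come from the list; the function name `passes_filters`, and the choice to count any character other than A, C, G, T, or U as ambiguous, are assumptions made for this example.

```python
# Thresholds taken from the curation steps above.
MIN_LENGTH = {"bacteria": 1200, "archaea": 900}
MAX_AMBIGUOUS_FRACTION = 0.02

# Assumption: anything outside these characters counts as an ambiguity base.
UNAMBIGUOUS = set("ACGTU")


def passes_filters(seq, domain):
    """Return True if the sequence is long enough and has at most 2% ambiguous bases."""
    seq = seq.upper()
    if len(seq) < MIN_LENGTH[domain]:
        return False
    ambiguous = sum(base not in UNAMBIGUOUS for base in seq)
    return ambiguous / len(seq) <= MAX_AMBIGUOUS_FRACTION


# Invented toy sequences.
print(passes_filters("A" * 1200, "bacteria"))             # long enough, no ambiguity
print(passes_filters("A" * 1199, "bacteria"))             # one base too short
print(passes_filters("A" * 1170 + "N" * 30, "bacteria"))  # 2.5% ambiguous
```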

Threshold Determination:

Custom Database Development for Specific Environments

Table 3: Research Reagent Solutions for Database Development

| Reagent/Resource | Function | Implementation Considerations |
|---|---|---|
| SILVA SSU Database | Comprehensive 16S rRNA reference | Filter for quality (length, ambiguity); Extract target regions |
| NCBI RefSeq | Curated type material sequences | Use for seed sequences; Ensure taxonomic validity |
| LPSN Database | Nomenclatural standardization | Resolve taxonomic conflicts; Apply standing nomenclature |
| UNITE Database (fungal ITS) | Fungal-specific reference | Essential for ITS-based fungal studies; Requires different formatting |
| Local Barcode Sequences | Gap-filling for under-represented taxa | Even small additions significantly improve resolution |

Workflow Visualization

ASVtax Database Construction and Analysis Workflow

Advanced Technical Reference

Taxonomic Classification Algorithms Comparison

Table 4: Classification Algorithm Performance Characteristics

| Classifier | Algorithm Type | Key Features | Optimal Use Cases |
|---|---|---|---|
| RDP Classifier | Naïve Bayesian | Identifies 8-mers with higher probability of belonging to specific taxa; Provides confidence estimates | General-purpose classification with probability thresholds |
| UTAX | k-mer similarity | Calculates word count scores; Estimates error rates through reference training | Large-scale analyses requiring speed and efficiency |
| SINTAX | k-mer similarity | Identifies top hit in reference; Provides bootstrap confidence for all ranks | Situations requiring confidence values at all taxonomic levels |
| CONSTAX (Consensus) | Hybrid approach | Combines multiple classifiers; Improves assignment power through consensus | Maximizing classification accuracy and coverage |

Impact of Database Customization on Assignment Metrics

Research demonstrates that database customization significantly affects taxonomic assignment outcomes:

General vs. Specialized Databases: Reducing a comprehensive COI database to taxon-specific subsets (e.g., removing irrelevant insect sequences for marine studies) initially increases unassigned sequences but correctly reclassifies previously misassigned sequences [26].

Local Barcode Enrichment: Adding a small number of locally sourced barcodes (116 sequences, +0.04% database size) improved resolution for 0.6-1% of ASVs in marine benthic invertebrate studies [26].

Threshold Optimization: Establishing flexible thresholds for 896 common human gut species significantly improved identification of new ASVs and revealed 23 new genera within Lachnospiraceae that were previously missed with fixed thresholds [23] [24].

This technical support resource will be continuously updated as new bioinformatics approaches and reference materials become available. Researchers are encouraged to implement these methodologies to advance species-level resolution in microbiome studies, particularly for drug development and clinical applications where precise taxonomic identification is critical.

Technical Support Center

Frequently Asked Questions (FAQs)

Q1: What is the primary function of TaxaCal? TaxaCal is a machine learning algorithm designed to calibrate species-level taxonomy profiles in 16S rRNA amplicon sequencing data. Its main purpose is to reduce profiling biases inherent in 16S data, making the results more comparable to the higher-resolution profiles obtained from whole-genome sequencing (WGS). This significantly improves cross-platform comparisons and enhances disease detection capabilities in 16S-based microbiome studies [29] [30].

Q2: Why is there a significant discrepancy between 16S and WGS data at the species level? The discrepancy arises from the inherent limitations of 16S sequencing. The technique has limited resolution at the species level and often struggles to distinguish between closely related species within the same genus due to the conservation of the 16S rRNA gene. Furthermore, biases can be introduced during PCR amplification due to primer design targeting specific variable regions [29] [31]. While overall community patterns are consistent at higher taxonomic levels (e.g., family, genus), the number and abundance of species detected exclusively by one method increase dramatically at the species level [29].

Q3: How much training data is needed for TaxaCal to be effective? Validation studies indicate that TaxaCal's performance stabilizes with a training set of as few as 20 paired 16S-WGS samples. While performance improves with more training pairs, this number provides an effective and practical benchmark for researchers to achieve significant calibration [29].

Q4: What are the specific output improvements I can expect after using TaxaCal? After calibration with TaxaCal, your 16S data will show much closer alignment with WGS data in several key metrics, as demonstrated in the table below [29].

Table 1: Improvements in 16S Data After TaxaCal Calibration

| Metric | Before Calibration | After Calibration |
|---|---|---|
| Beta Diversity (PCoA) | Significant distinction from WGS (PERMANOVA F = 34.33) | Much closer alignment with WGS (PERMANOVA F = 11.19) |
| Bray-Curtis Distance | Falls outside the intra-group range of WGS samples | Shrinks to within the intra-group range of WGS samples |
| Alpha Diversity (Shannon Index) | Significant deviation from WGS | Significant improvement, closely aligned with WGS |
| Species Abundance | Significant deviations (e.g., under-represented Bacteroides stercoris) | Abundances become more aligned with WGS profiles |
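
For readers who want to reproduce two of the metrics in the table on their own profiles, the standard definitions of the Shannon index and Bray-Curtis dissimilarity are sketched below. The abundance vectors are invented, and the function names are illustrative; this is not the evaluation code used in [29].

```python
import math


def shannon_index(abundances):
    """Shannon diversity H = -sum(p * ln p) over taxa with non-zero abundance."""
    total = sum(abundances)
    return -sum((x / total) * math.log(x / total) for x in abundances if x > 0)


def bray_curtis(a, b):
    """Bray-Curtis dissimilarity between two abundance vectors over the same taxa."""
    numerator = sum(abs(x - y) for x, y in zip(a, b))
    denominator = sum(a) + sum(b)
    return numerator / denominator


# Invented relative-abundance profiles over the same three species.
profile_16s = [0.5, 0.5, 0.0]
profile_wgs = [0.5, 0.0, 0.5]

print(shannon_index(profile_16s))
print(bray_curtis(profile_16s, profile_wgs))
```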

Q5: My microbiome samples have very high host DNA content (e.g., saliva, tissue). Are there other methods I should consider? For host-rich samples, a method called 2bRAD-M may be highly effective. It is a reduced-representation sequencing technique that preferentially generates microbial-derived tags without requiring prior host DNA depletion. In mock samples with >90% human DNA, 2bRAD-M achieved over 93% in performance metrics (AUPR and L2 similarity), outperforming 16S sequencing, especially in high-host-context conditions [14].

Troubleshooting Guides

Issue 1: Poor Species-Level Resolution in 16S Data

Problem: Your 16S amplicon sequencing data lacks the resolution to distinguish between closely related species, limiting your biological insights.

Solution: Implement a machine learning calibration tool like TaxaCal.

Step-by-Step Protocol:

- Obtain Paired Training Data: Secure a set of samples (recommended minimum n=20) from your study that have been sequenced using both 16S and WGS methods [29].

- Input Data Preparation: Format your 16S-derived species-level abundance profiles and the corresponding WGS-derived species-level profiles (the "ground truth") for the training samples.

- Execute the Two-Tier Calibration:

- Genus-Level Rough Correction: TaxaCal first constructs a linear regression model (using the least squares method) on the training pairs to perform a rough adjustment of microbial relative abundance at the genus level. This leverages the stronger consistency between 16S and WGS at this higher taxonomic level [29].

- Species-Level Refined Correction: After the genus-level adjustment, the profiles are further refined at the species level. This step uses a K-nearest neighbor (KNN) algorithm to select highly similar WGS samples from the training set to make the final, detailed species-level corrections [29].

- Apply the Model: Use the trained TaxaCal model to calibrate the species-level abundances of all other 16S samples in your dataset.
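
The two-tier idea described above can be sketched in plain Python. This is not the TaxaCal implementation or its API: the function names, the toy profiles, and in particular the way the nearest WGS samples are used (averaging their within-genus species shares) are assumptions made to illustrate a per-genus least-squares correction followed by a KNN-based species refinement.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        return 0.0, mean_y
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sxx
    return slope, mean_y - slope * mean_x


def calibrate_genera(profile, train_16s, train_wgs):
    """Tier 1: per-genus linear correction, clipped at zero and renormalised."""
    corrected = {}
    for g in profile:
        slope, intercept = fit_line([s[g] for s in train_16s], [s[g] for s in train_wgs])
        corrected[g] = max(0.0, slope * profile[g] + intercept)
    total = sum(corrected.values()) or 1.0
    return {g: v / total for g, v in corrected.items()}


def refine_species(genus_profile, train_wgs_genus, train_wgs_species, genus_of, k=2):
    """Tier 2 (assumed form): split each genus using the k most similar WGS samples."""
    def distance(i):
        return sum((genus_profile[g] - train_wgs_genus[i][g]) ** 2 for g in genus_profile)

    nearest = sorted(range(len(train_wgs_genus)), key=distance)[:k]
    species_profile = {}
    for g, abundance in genus_profile.items():
        members = [s for s, genus in genus_of.items() if genus == g]
        weights = {s: sum(train_wgs_species[i].get(s, 0.0) for i in nearest) for s in members}
        total = sum(weights.values())
        for s in members:
            share = weights[s] / total if total else 1.0 / len(members)
            species_profile[s] = abundance * share
    return species_profile


# Invented paired training data (three samples, two genera, three species).
genus_of = {"G1_a": "G1", "G1_b": "G1", "G2_a": "G2"}
train_16s = [{"G1": 0.6, "G2": 0.4}, {"G1": 0.4, "G2": 0.6}, {"G1": 0.8, "G2": 0.2}]
train_wgs_genus = [{"G1": 0.5, "G2": 0.5}, {"G1": 0.3, "G2": 0.7}, {"G1": 0.7, "G2": 0.3}]
train_wgs_species = [
    {"G1_a": 0.25, "G1_b": 0.25, "G2_a": 0.5},
    {"G1_a": 0.10, "G1_b": 0.20, "G2_a": 0.7},
    {"G1_a": 0.50, "G1_b": 0.20, "G2_a": 0.3},
]

new_sample = {"G1": 0.5, "G2": 0.5}
genus_calibrated = calibrate_genera(new_sample, train_16s, train_wgs_genus)
species_calibrated = refine_species(genus_calibrated, train_wgs_genus, train_wgs_species, genus_of)
print(genus_calibrated)
print(species_calibrated)
```

With real data the training set would be the paired 16S-WGS samples from step 1, and `k` would need tuning; the toy values here only demonstrate the data flow.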

Visualization of Workflow: The following diagram illustrates the logical workflow and data flow of the TaxaCal calibration process.

Issue 2: Choosing the Right Machine Learning Model for Microbiome Data

Problem: You are unsure which machine learning model to use for analysis of your microbiome data, which is typically high-dimensional, sparse, and compositional [32] [31].

Solution: Select models based on proven performance and the specific task. The table below summarizes recommended models based on a multi-cohort CRC study and other microbiome research [32] [31].

Table 2: Machine Learning Model Selection Guide for Microbiome Data

| Task | Recommended Model(s) | Key Strengths & Notes |
|---|---|---|
| Disease Diagnosis / Classification | Random Forest (RF) | Often provides the most accurate performance estimates; robust with high-dimensional data [31]. |
| Identifying Predictive Biomarkers | Random Forest + Multivariate Feature Selection (e.g., Statistically Equivalent Signatures) | Effective in reducing classification error and identifying key microbial features [31]. |
| Model Interpretability & Biological Insight | Logistic Regression | Offers straightforward interpretation; coupled with visualization (e.g., ICE plots) for biological insights [31]. |
| Host Phenotype Prediction from Raw Data | Fully-Connected Neural Networks (FCNN) | Can achieve better classification accuracy over traditional methods [32]. |
| Phenotype Prediction using Phylogenetic Data | Convolutional Neural Networks (CNN) | Excel at summarizing local structure; use when data can be enriched with spatial/phylogenetic information [32]. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for Microbiome Calibration Experiments

| Item | Function / Application |
|---|---|
| Paired 16S-WGS Samples | A set of samples processed with both sequencing methods. Serves as the ground truth for training the TaxaCal machine learning model [29]. |
| TaxaCal Algorithm | The core machine learning tool that executes the two-tier (genus and species-level) calibration of 16S amplicon data [29] [30]. |
| 2bRAD-M Protocol | A reduced-representation sequencing method for analyzing microbiomes in host-dominated samples (e.g., saliva, tissue) without prior host DNA depletion [14]. |
| Reference Databases (e.g., GTDB, Greengenes2) | Standardized taxonomic databases crucial for consistent and accurate profiling and cross-method comparisons [14] [33]. |
| QIIME2 Platform | A powerful, user-friendly bioinformatic platform for processing and analyzing 16S rRNA sequencing data [29] [33]. |
| MetaPhlAn4 & Bracken | Widely recognized bioinformatic tools for deriving taxonomic profiles from shotgun metagenomic (WMS) sequencing data [14]. |

Frequently Asked Questions (FAQs)

Q1: My full-length 16S sequencing results show unexpected low species diversity. What could be the cause? Low diversity can often stem from primer bias during library preparation. Ensure you are using validated, universal primers that cover a broad taxonomic range. The choice of primer pairs significantly influences the resulting microbial composition, and some specific taxa may not be amplified by certain primers [34]. Additionally, confirm that your DNA extraction method is appropriate for your sample type (e.g., soil, stool, water) to ensure efficient lysis of all microbial cells [35].

Q2: What is the recommended sequencing coverage for reliable species-level identification using full-length 16S amplicons? For targeted full-length 16S sequencing on Oxford Nanopore platforms, it is recommended to sequence your amplified library to 20x coverage per microbe [35]. For a 24-plex library, this typically involves sequencing on a MinION flow cell for approximately 24–72 hours using the high-accuracy (HAC) basecaller [35].

Q3: I am getting a high proportion of chimeric sequences in my data. How can I reduce this? Chimeras often form during PCR amplification. To minimize them, use a high-fidelity polymerase and optimize your PCR cycle numbers to avoid over-amplification. During bioinformatic processing, use an established denoising tool such as DADA2, run either standalone or within QIIME2, which includes a rigorous chimera removal step [7] [36].

Q4: Should I use OTUs or ASVs for analyzing my full-length 16S data? Amplicon Sequence Variants (ASVs) are generally recommended for full-length 16S data. ASVs differentiate sequences that vary by only a single nucleotide, providing higher resolution than Operational Taxonomic Units (OTUs), which cluster sequences at a fixed identity threshold (e.g., 97%) [7]. This single-nucleotide resolution is ideal for leveraging the power of long reads to distinguish between closely related species [36].
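
The resolution difference can be seen on a toy pair of sequences that differ at one position. The sketch below is only an illustration: the sequences are invented, identity is computed position by position on pre-aligned sequences, the greedy clustering is a simplified stand-in for real OTU pickers, and counting unique sequences stands in for ASV inference (real denoisers such as DADA2 use an error model rather than simple uniqueness).

```python
def identity(a, b):
    """Fraction of matching positions between two pre-aligned, equal-length sequences."""
    if len(a) != len(b):
        raise ValueError("sequences must be aligned to equal length")
    return sum(x == y for x, y in zip(a, b)) / len(a)


def greedy_otus(sequences, threshold=0.97):
    """Return centroids: a sequence founds a new OTU only if no centroid matches it."""
    centroids = []
    for seq in sequences:
        if not any(identity(seq, c) >= threshold for c in centroids):
            centroids.append(seq)
    return centroids


# Invented 100-nt amplicon and a variant with a single substitution at position 50.
reference = "ACGT" * 25
variant = reference[:50] + "A" + reference[51:]

sequences = [reference, variant]
print(identity(reference, variant))   # 99% identical
print(len(set(sequences)))            # distinct exact sequences (ASV-like units)
print(len(greedy_otus(sequences)))    # clusters at the 97% threshold
```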

Q5: My analysis pipeline struggles with the higher error rate of long-read data. What is the best way to handle this? Modern workflows address this in several ways. During sequencing, use the high-accuracy (HAC) basecaller in MinKNOW software [35]. For data analysis, use pipelines specifically designed for long-read data that incorporate sophisticated denoising algorithms. The wf-16s pipeline in EPI2ME, for example, is optimized for Nanopore 16S data and offers both rapid real-time and high-accuracy post-run analysis modes [35]. Furthermore, ensure you perform appropriate quality filtering and truncation of your reads based on quality scores [34].

Troubleshooting Guides

Issue: Poor Sequencing Yield on Nanopore Flow Cells

| Potential Cause | Recommended Action | Preventive Measures |
|---|---|---|
| Insufficient or degraded DNA library | Check library concentration and quality using a fluorometric method. | Use a recommended extraction kit for your sample type (e.g., ZymoBIOMICS for water, QIAGEN PowerMax for soil) [35]. |
| Flow cell pore blockage | Perform a flow cell wash using the Flow Cell Wash Kit to recover pores. | Properly purify and clean up your PCR amplicons before library preparation to remove contaminants. |
| Old or expired flow cell | Check the flow cell's quality control report and usage history. | Plan your sequencing runs to use flow cells within their recommended shelf life. |

Issue: Low Taxonomic Resolution Despite Full-Length Sequencing

| Potential Cause | Recommended Action | Preventive Measures |
|---|---|---|
| Using an outdated or limited reference database | Re-analyze your data with a comprehensive and updated database like SILVA or Greengenes2 [7]. | Regularly update your bioinformatic pipelines and reference databases to the latest versions. |
| Incorrect bioinformatic parameters | Test different truncation length parameters during quality filtering, as this is critical for optimal results [34]. | Use standardized, well-documented pipelines like QIIME2 with DADA2 for reproducible analysis [7]. |
| High microdiversity in the sample | Increase sequencing depth to better capture rare species and strain-level variants. | For highly complex samples like soil, consider deeper sequencing or complementary metagenomic approaches [37]. |

Issue: Inconsistent Results Between Replicates or Mock Communities

| Potential Cause | Recommended Action | Preventive Measures |

|---|---|---|

| Contamination during library prep | Include and analyze negative controls (no-template controls) to identify contaminant sequences. | Use a dedicated clean lab area for pre-PCR steps and employ decontamination tools like the decontam R package [38]. |

| PCR amplification bias | Use a mock microbial community of known composition to assess bias and error rates in your workflow [34]. | Standardize PCR conditions and use a high-fidelity polymerase with minimal bias. |

| Over-splitting (ASVs) or over-merging (OTUs) | Benchmark your chosen algorithm (e.g., DADA2 or UPARSE) against a complex mock community to understand its behavior [36]. | Select a clustering/denoising method based on your accuracy needs; DADA2 is precise but can over-split, while UPARSE is robust but may over-merge [36]. |
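The mock-community check recommended above can be summarized with two simple numbers: an overall dissimilarity between the observed and expected profiles, and a per-taxon log2 ratio that shows which members are over- or under-represented. The following is a minimal sketch under stated assumptions: the taxon names, counts, and the even expected composition are hypothetical, and Bray-Curtis is used here as one common choice of dissimilarity, not as a metric prescribed by the cited work.

```python
import math


def normalize(counts):
    """Convert raw counts to relative abundances that sum to 1."""
    total = sum(counts.values())
    return {taxon: n / total for taxon, n in counts.items()}


def bray_curtis(p, q):
    """Bray-Curtis dissimilarity between two relative-abundance profiles."""
    taxa = set(p) | set(q)
    shared = sum(min(p.get(t, 0.0), q.get(t, 0.0)) for t in taxa)
    return 1.0 - 2.0 * shared / (sum(p.values()) + sum(q.values()))


def log2_bias(observed, expected):
    """Per-taxon log2(observed / expected); None when a taxon was not observed."""
    out = {}
    for taxon, exp in expected.items():
        obs = observed.get(taxon, 0.0)
        out[taxon] = math.log2(obs / exp) if obs > 0 else None
    return out


# Hypothetical mock community with four equally abundant members.
expected = {"taxon_A": 0.25, "taxon_B": 0.25, "taxon_C": 0.25, "taxon_D": 0.25}
observed = normalize({"taxon_A": 400, "taxon_B": 200, "taxon_C": 300, "taxon_D": 100})

print("Bray-Curtis dissimilarity:", round(bray_curtis(observed, expected), 3))
for taxon, bias in log2_bias(observed, expected).items():
    print(taxon, "not detected" if bias is None else f"log2 bias {bias:+.2f}")
```

Comparing these values across replicates gives a quick indication of whether inconsistency is driven by a few taxa or spread across the whole community.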

Experimental Protocols & Workflows

Detailed Protocol: Full-Length 16S rRNA Gene Sequencing with Oxford Nanopore Technology

This protocol is adapted from the ONT workflow for polymicrobial samples [35].

1. DNA Extraction:

- Sample Type-Specific Kits: Use a method appropriate for your sample to obtain high-quality, high-molecular-weight DNA.

- Environmental Water: ZymoBIOMICS DNA Miniprep Kit

- Soil: QIAGEN DNeasy PowerMax Soil Kit

- Stool: QIAamp PowerFecal DNA Kit or QIAGEN Genomic-tip 20/G

- Quality Control: Verify DNA integrity and quantity using gel electrophoresis and a fluorometric assay.

2. Library Preparation:

- Amplification and Barcoding: Use the 16S Barcoding Kit 24 to amplify the full ~1.5 kb 16S rRNA gene from extracted gDNA and attach sample-specific barcodes via PCR.

- Multiplexing: Pool up to 24 uniquely barcoded libraries into a single sequencing run.

- Adapter Ligation: Add the provided sequencing adapters to the pooled, barcoded amplicons.

3. Sequencing:

- Platform: Load the library onto a MinION or GridION sequencer using a MinION Flow Cell.

- Basecalling: Run the sequencer using the MinKNOW software with the high-accuracy (HAC) basecaller enabled.

- Run Time: Sequence for 24–72 hours to achieve the recommended ~20x coverage per microbe for a 24-plex library.

4. Analysis:

- Real-Time/Post-Run Analysis: Utilize the EPI2ME platform and its wf-16s workflow for taxonomic classification and abundance profiling [35].

- Alternative Pipelines: For more customized analysis, process raw FASTQ files through QIIME2 using the DADA2 plugin for denoising, chimera removal, and ASV table construction [7].

Quantitative Data for Experimental Planning

Table 1: Key Performance Metrics for Full-Length 16S Sequencing on Nanopore [35]

| Parameter | Recommended Value | Notes |

|---|---|---|

| Target Gene Length | ~1,500 bp | Full-length 16S rRNA gene (V1-V9). |

| Coverage per Microbe | 20x | Ensures high taxonomic resolution. |

| Sequencing Run Time | 24 - 72 hours | Duration depends on sample complexity and multiplex level. |

| Barcodes per Run | Up to 24 | Using the 16S Barcoding Kit 24. |
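For planning purposes, the 24-barcode limit in Table 1 translates directly into the number of runs a study needs. The sketch below splits a sample list into runs of at most 24 and assigns each sample a barcode label. The sample IDs and the "barcodeNN" label format are illustrative assumptions.

```python
def plan_runs(sample_ids, barcodes_per_run=24):
    """Split samples into runs of at most barcodes_per_run and label each sample."""
    runs = []
    for start in range(0, len(sample_ids), barcodes_per_run):
        batch = sample_ids[start:start + barcodes_per_run]
        # Barcode numbering restarts at 1 in every run.
        runs.append({f"barcode{i:02d}": s for i, s in enumerate(batch, start=1)})
    return runs


# Hypothetical study with 50 samples.
samples = [f"S{i}" for i in range(50)]
runs = plan_runs(samples)
print(f"{len(samples)} samples need {len(runs)} runs")
for run_index, run in enumerate(runs, start=1):
    print(f"run {run_index}: {len(run)} barcoded libraries")
```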

Table 2: Comparison of Common Clustering and Denoising Algorithms [36]

| Algorithm | Method | Key Characteristics | Best for |

|---|---|---|---|

| DADA2 | ASV (Denoising) | Consistent output, high resolution, but may over-split rRNA copies. | Studies requiring single-nucleotide resolution. |

| Deblur | ASV (Denoising) | Uses error profiles to correct sequences. | Rapid processing of large datasets. |

| UPARSE | OTU (Clustering) | Lower error rates, but may over-merge distinct species. | Robust, general-purpose analysis. |

| VSEARCH/DGC | OTU (Clustering) | Open-source alternative to UPARSE. | Users requiring a free clustering solution. |
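The over-merging and over-splitting behavior summarized in Table 2 can be illustrated with a toy example. The sketch below is not an implementation of UPARSE or DADA2: it uses a simplified greedy centroid clustering with position-wise identity on equal-length sequences, and it treats every distinct sequence as its own unit in place of a real error model. All sequences are synthetic.

```python
def identity(a, b):
    """Fraction of matching positions between two equal-length sequences."""
    if len(a) != len(b):
        raise ValueError("toy example expects equal-length sequences")
    return sum(x == y for x, y in zip(a, b)) / len(a)


def greedy_otus(seqs, threshold=0.97):
    """Greedy centroid clustering: each sequence joins the first centroid it matches."""
    centroids, clusters = [], []
    for s in seqs:
        for i, c in enumerate(centroids):
            if identity(s, c) >= threshold:
                clusters[i].append(s)
                break
        else:
            # No centroid was similar enough, so this sequence founds a new cluster.
            centroids.append(s)
            clusters.append([s])
    return clusters


def exact_variants(seqs):
    """ASV-like view: every distinct sequence is its own unit (no error model here)."""
    return sorted(set(seqs))


base = "ACGT" * 25                                            # 100 bp synthetic sequence
variant = base[:10] + "T" + base[11:50] + "A" + base[51:]     # 2 substitutions, 98% identical
distant = "T" * 100                                           # clearly different sequence
seqs = [base, variant, distant]

print("identity(base, variant):", identity(base, variant))
print("OTU-like clusters at 97%:", len(greedy_otus(seqs)))
print("exact sequence variants:", len(exact_variants(seqs)))
```

Here the two sequences that differ at only two positions collapse into one cluster at a 97% threshold but remain separate as exact variants, which is the trade-off the table describes.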

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Full-Length 16S rRNA Gene Sequencing

| Item | Function | Example Products |

|---|---|---|

| Sample-specific DNA Extraction Kits | To obtain high-quality, inhibitor-free genomic DNA from complex samples. | ZymoBIOMICS DNA Miniprep Kit (water), QIAGEN DNeasy PowerMax Soil Kit (soil), QIAamp PowerFecal DNA Kit (stool) [35]. |

| Targeted PCR & Barcoding Kit | To amplify the full-length 16S gene and attach unique barcodes for sample multiplexing. | Oxford Nanopore 16S Barcoding Kit 24 [35]. |

| Long-Read Sequencing Kit | Prepares the amplicon library for loading onto the flow cell. | Ligation Sequencing Kit (SQK-LSK114). |

| Flow Cell | The consumable containing nanopores for sequencing. | MinION Flow Cell (R10.4.1). |

| Flow Cell Wash Kit | Allows washing and reusing flow cells, reducing cost per sample. | Flow Cell Wash Kit (EXP-WSH004) [35]. |

| Positive Control DNA | Validates the entire workflow from extraction to sequencing. | ZymoBIOMICS Microbial Community Standard. |

| Bioinformatic Tools | For processing raw data, denoising, chimera removal, and taxonomic assignment. | EPI2ME wf-16s, QIIME2, DADA2, phyloseq R package [35] [7] [38]. |

Frequently Asked Questions (FAQs)

Q1: What is strain-level deconvolution and why is it important for microbiome research?

Strain-level deconvolution refers to the computational process of determining the identities and relative proportions of different bacterial strains within a metagenomic sample. Bacterial strains under the same species can exhibit different biological properties due to genomic variations, making this level of analysis crucial for understanding the true dynamics of microbial communities. For example, some E. coli strains are pathogens causing severe diarrhea, while others are described as probiotics used in treating diarrhea. Pinpointing specific strains is therefore essential for both composition and functional analysis of microbiomes, as strain-level variations can determine pathogenicity, antibiotic resistance, impacts on drug metabolism, and the ability to utilize dietary components [39] [40].

Q2: How does StrainScan differ from other strain-level analysis tools?

StrainScan employs a novel hierarchical k-mer indexing structure that balances strain identification accuracy with computational complexity. Unlike tools that only report representative strains from clusters (e.g., StrainGE, StrainEst) or those that struggle with highly similar strains, StrainScan uses a two-step approach: first, it clusters highly similar strains and uses a Cluster Search Tree (CST) for fast cluster identification; second, it uses strain-specific k-mers to distinguish different strains within identified clusters. This allows for higher resolution, enabling StrainScan to differentiate between strains that other tools would group together. Benchmarks show StrainScan improves the F1 score by 20% in identifying multiple strains at the strain level compared to state-of-the-art tools [39].
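The idea of strain-specific k-mers can be illustrated with a toy example. The sketch below is not StrainScan's implementation and omits the Cluster Search Tree entirely: it only shows how a single nucleotide difference between two very similar sequences yields a small set of k-mers unique to each. The strain names, sequences, and k=5 are illustrative assumptions (StrainScan's default is k=31).

```python
def kmers(seq, k):
    """Set of all k-length substrings of seq."""
    return {seq[i:i + k] for i in range(len(seq) - k + 1)}


def strain_specific_kmers(genomes, k):
    """For each strain, the k-mers found in that strain and in no other strain."""
    sets = {name: kmers(seq, k) for name, seq in genomes.items()}
    specific = {}
    for name, own in sets.items():
        others = set().union(*(s for n, s in sets.items() if n != name))
        specific[name] = own - others
    return specific


# Two hypothetical strains that differ by one SNV (T vs A at the eighth base).
genomes = {
    "strain_A": "ACGTACGTTAGC",
    "strain_B": "ACGTACGATAGC",
}
specific = strain_specific_kmers(genomes, k=5)
for name, ks in specific.items():
    print(name, sorted(ks))
```

With k=5, the single SNV produces five k-mers unique to each strain, while the shared k-mers carry no discriminating signal.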

Q3: My metagenomic samples have low sequencing depth (<5X). Can StrainScan still detect strains effectively?

Yes, but it requires parameter adjustment. For samples with a sequencing depth of 1-5X, use the parameter -l 1. For very low depth samples (<1X), use -l 2. At such very low depths, you can also add -b 1 to output the probability of detecting a strain rather than a definitive presence/absence call; the higher the probability, the more likely the strain is present [41].
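The depth thresholds above can be encoded in a small helper so that the same rule is applied to every sample. This is a sketch under stated assumptions: the depth estimate (bases attributed to the target species divided by genome length) is a rough approximation, the function names are hypothetical, and only the -l and -b values described in this answer are used.

```python
def estimate_depth(total_bases, genome_length):
    """Rough mean depth: bases attributed to the species divided by genome length."""
    return total_bases / genome_length


def low_depth_flags(depth, want_probability=False):
    """Return extra StrainScan.py arguments for a given estimated depth (X)."""
    flags = []
    if depth < 1:
        flags += ["-l", "2"]            # very low depth (<1X)
        if want_probability:
            flags += ["-b", "1"]        # report detection probability instead of a call
    elif depth <= 5:
        flags += ["-l", "1"]            # low depth (1-5X)
    return flags                        # empty list: default settings


# Hypothetical sample: 10 Mb of reads against a 5 Mb genome, i.e. about 2X.
depth = estimate_depth(10_000_000, 5_000_000)
print(f"estimated depth {depth:.1f}X -> extra flags: {low_depth_flags(depth)}")
```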

Q4: What are the common reasons for StrainScan returning "No clusters can be detected!" and how can I troubleshoot this?

This warning typically appears when the sequencing depth of targeted strains is very low (e.g., <1X). To address this:

- Use the -b parameter to output detection probabilities instead of definitive calls

- Verify the quality and quantity of your input sequencing data

- Ensure your reference database is appropriate for your sample type

- Check that the k-mer size (default k=31) is suitable for your data—smaller k-mers may be more sensitive for low-depth samples but offer less specificity

- Consider increasing sequencing depth if consistently working with low-abundance strains [41]

Q5: How does StrainScan handle the presence of multiple highly similar strains in one sample?

StrainScan is specifically designed to address the challenge of multiple highly similar strains coexisting in a sample. Its hierarchical approach first identifies clusters of similar strains, then uses carefully chosen strain-specific k-mers and k-mers representing SNVs and structural variations to distinguish between strains within these clusters. This allows it to untangle strain mixtures even when strains share high sequence similarity, as with C. acnes strains that have a Mash distance of approximately 0.0004 [39].

Troubleshooting Guides

Database Construction Issues

Problem: Database construction is too slow or requires excessive memory.

Solution: Use the memory-efficient mode during database construction with the -e 1 parameter. Additionally, you can use multiple threads with the -t parameter to speed up the process. For large strain collections with high redundancy, pre-process your strains using the StrainScan_subsample.py script to reduce redundancy through hierarchical clustering [41].

Problem: Want to use a custom clustering method instead of the default.

Solution: StrainScan allows the use of custom clustering files generated by external methods such as PopPUNK. Use the -c parameter followed by your custom clustering file during database construction. The file format should have the first column as cluster ID, the second column as cluster size, and the last column as the prefix of reference genomes in the cluster [41].
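A custom clustering file with the three columns described above can be generated from any external clustering result. The sketch below is a hedged illustration: the tab delimiter between columns and the comma delimiter between genome prefixes are assumptions that should be checked against the StrainScan documentation, and the cluster IDs and genome prefixes are hypothetical.

```python
def format_cluster_lines(clusters):
    """Render {cluster_id: [genome_prefix, ...]} as one text line per cluster."""
    lines = []
    for cluster_id, prefixes in clusters.items():
        # Columns: cluster ID, cluster size, genome prefixes.
        # The tab and comma delimiters are assumptions, not confirmed by the source.
        lines.append(f"{cluster_id}\t{len(prefixes)}\t{','.join(prefixes)}")
    return lines


# Hypothetical clustering result from an external tool.
clusters = {1: ["GCF_A", "GCF_B"], 2: ["GCF_C"]}
for line in format_cluster_lines(clusters):
    print(line)
```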

Strain Identification Problems

Problem: StrainScan fails to identify known plasmids in my samples.

Solution: Use StrainScan's plasmid mode. For option 1 (identifying plasmids using contigs <100000 bp): use -p 1 -r <Ref_genome_Dir>. For option 2 (identifying plasmids or strains using provided reference genomes): use -p 2 -r <Ref_genome_Dir>. The reference genome directory should contain genomes of identified clusters or all strains used to build the database [41].

Problem: Suspecting novel strains not in my reference database.

Solution: Use the extraRegion mode with -e 1. This mode will search for possible strains and return strains with "extra regions" (different genes, SNVs, or structural variations) covered. If there's a novel strain not in the database, this mode can identify its closest relative and highlight regions similar to other strains for downstream analysis [41].

Performance and Accuracy Optimization

Problem: Poor accuracy when dealing with highly similar strains.

Solution: Adjust the -s parameter (minimum_snv_num), which controls the minimum number of SNVs during iterative matrix multiplication at Layer-2 identification. The default is 40, but increasing this value may improve specificity at the cost of potential false negatives. Additionally, consider using a larger k-mer size (via -k) for better specificity with highly similar strains [41].

Problem: Tool comparison shows inconsistent results for low-abundance strains.

Solution: Recent benchmarking indicates that StrainScan may demonstrate low accuracy for low-abundance strains and scale poorly to large synthetic communities. For quantitative analysis of strain abundances in complex communities, consider complementary tools like StrainR2, which has shown higher accuracy for low-abundance strains in synthetic communities. The choice of tool should depend on your specific application: StrainScan for high-resolution identification of known strains, and tools like StrainR2 for quantitative abundance analysis in complex mixtures [40].

Experimental Protocols & Workflows

Standard StrainScan Workflow for Strain-Level Identification

Protocol Details:

1. Database Construction:

- Input: Reference genomes in FASTA format

- Command: python StrainScan_build.py -i <Input_genomes> -o <Database_Dir>

- Optional parameters: Custom clustering file (-c), k-mer size (-k, default=31), threads (-t)

2. Strain Identification:

- Input: Short reads (FASTQ, can be gzipped), paired-end supported

- Command: python StrainScan.py -i <input_fastq> -j <input_fastq_2> -d <Database_Dir> -o <Output_Dir>

- For low-depth samples: Use -l 1 (1-5X) or -l 2 (<1X)

- For probability output in low-depth samples: Use -b 1

3. Output Interpretation:

- Primary output: final_report.txt with columns: StrainID, StrainName, ClusterID, RelativeAbundanceInsideCluster, Predicted_Depth (two methods), Coverage [41]
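When the workflow is run over many samples, it can help to assemble the two commands programmatically so that every run uses the same arguments. The sketch below only builds and prints the command lines and does not execute anything. The helper names and file paths are hypothetical, and only the flags listed in this protocol (-i, -j, -d, -o, -c, -t, plus optional extras such as -l or -b) are used.

```python
import shlex


def build_db_command(genomes_dir, db_dir, cluster_file=None, threads=None):
    """Argument list for database construction with StrainScan_build.py."""
    cmd = ["python", "StrainScan_build.py", "-i", genomes_dir, "-o", db_dir]
    if cluster_file:
        cmd += ["-c", cluster_file]
    if threads:
        cmd += ["-t", str(threads)]
    return cmd


def identify_command(reads_1, db_dir, out_dir, reads_2=None, extra=()):
    """Argument list for strain identification with StrainScan.py."""
    cmd = ["python", "StrainScan.py", "-i", reads_1]
    if reads_2:
        cmd += ["-j", reads_2]          # second file of a paired-end sample
    cmd += ["-d", db_dir, "-o", out_dir, *extra]
    return cmd


# Hypothetical paths; print the commands for review rather than running them.
print(shlex.join(build_db_command("ref_genomes", "strain_db", threads=8)))
print(shlex.join(identify_command("s1_R1.fq.gz", "strain_db", "s1_out",
                                  reads_2="s1_R2.fq.gz", extra=["-l", "1"])))
```

The returned lists can be passed to subprocess.run once the paths and flags have been checked against the installed StrainScan version.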

Comparative Framework for Tool Selection

Table 1: Strain-Level Deconvolution Tool Characteristics

| Tool | Methodology | Strengths | Limitations | Best Use Cases |

|---|---|---|---|---|

| StrainScan | Hierarchical k-mer indexing with Cluster Search Tree | High resolution for distinguishing highly similar strains; 20% higher F1 score than alternatives [39] | Can scale poorly with large communities; lower accuracy for very low-abundance strains [40] | Targeted analysis of specific bacteria with known references; distinguishing highly similar strains |