Beyond Trial and Error: Ensuring Accuracy in High-Throughput Screening for Catalyst Discovery

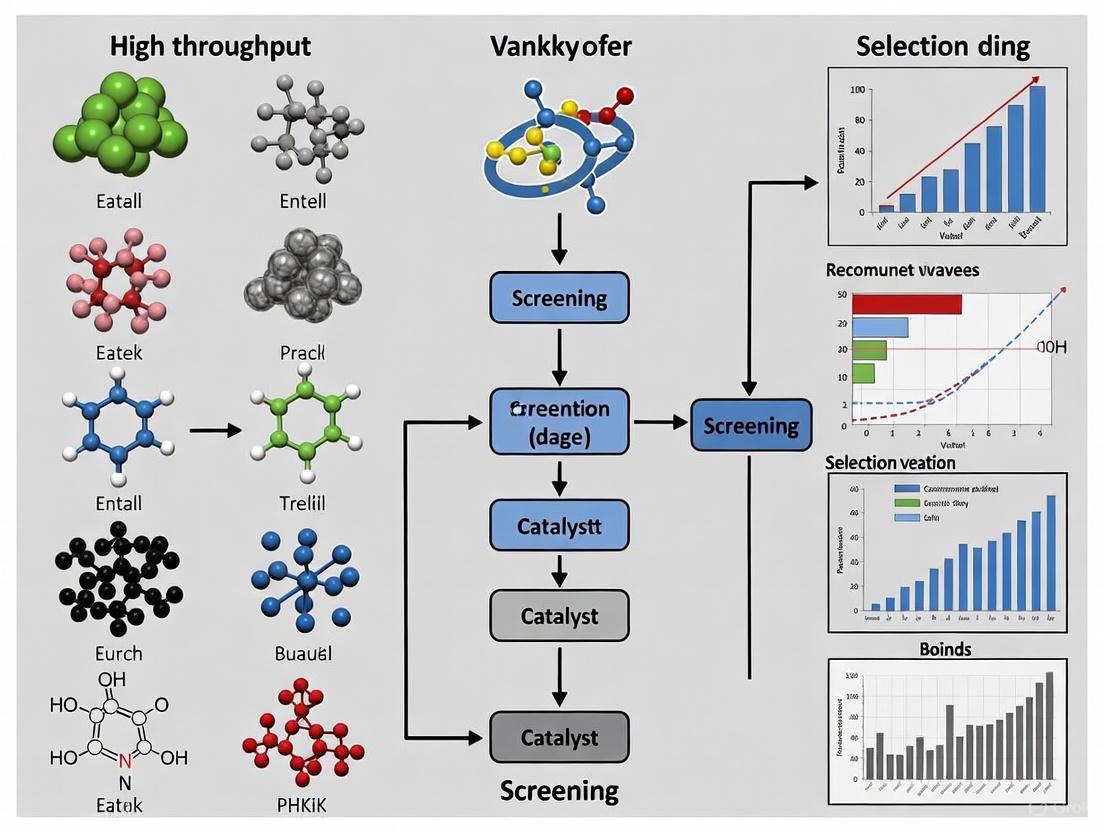

This article explores the critical role of accuracy in high-throughput screening (HTS) for modern catalyst discovery.

Beyond Trial and Error: Ensuring Accuracy in High-Throughput Screening for Catalyst Discovery

Abstract

This article explores the critical role of accuracy in high-throughput screening (HTS) for modern catalyst discovery. It addresses the foundational shift from empirical methods to data-driven paradigms, detailing the advanced methodologies and technologies that enhance screening precision. For researchers and drug development professionals, the article provides a comprehensive guide on optimizing assays, integrating machine learning, and validating hits to mitigate false positives. It further compares performance metrics across different screening platforms, offering a strategic framework for selecting and implementing HTS approaches that deliver reliable, physiologically relevant data to accelerate the development of efficient catalytic systems.

The New Paradigm: From Empirical Intuition to Data-Driven Catalyst Discovery

Catalyst research is undergoing a profound transformation, shifting from intuition-driven approaches to a new era characterized by data-driven methodologies and artificial intelligence. This evolution represents a fundamental change in how scientists discover and optimize catalysts, materials that are indispensable for chemical processes in industries ranging from pharmaceuticals to clean energy. Catalyst development has long been hampered by complex, high-dimensional search spaces spanning composition, structure, and synthesis conditions, which leave trial-and-error approaches increasingly ill-suited to modern challenges [1]. Within this context, high-throughput screening (HTS) has emerged as a critical methodology for accelerating catalyst discovery, yet at each evolutionary stage of catalyst research its accuracy and effectiveness have been constrained by the tools and models available at the time.

This whitepaper delineates the three definitive stages of catalyst research, tracing the trajectory from empirical intuition to AI-driven discovery, with particular emphasis on how each stage has shaped the capabilities and accuracy of high-throughput screening. By examining the experimental protocols, data handling methodologies, and technological integrations characteristic of each stage, we provide researchers with a comprehensive framework for understanding the current state and future trajectory of catalytic science. The integration of artificial intelligence and machine learning is not merely an incremental improvement but represents a paradigm shift that is reshaping research methodologies, validation processes, and ultimately, the accuracy of high-throughput screening in catalyst discovery [2].

The Three Evolutionary Stages of Catalyst Research

Catalysis research has evolved through three distinct developmental phases, each characterized by different approaches to catalyst design, experimentation, and data analysis. The transition between these stages has been gradual, with elements of earlier stages persisting even as new methodologies emerge.

Stage 1: The Intuition-Driven Era

The initial stage of catalyst research was predominantly driven by empirical observation and chemical intuition. During this period, catalyst discovery relied heavily on researcher experience, analogical reasoning based on known catalytic systems, and manual trial-and-error experimentation.

Table 1: Characteristics of Intuition-Driven Catalyst Research

| Aspect | Methodology | Limitations | Impact on HTS Accuracy |
|---|---|---|---|
| Knowledge Foundation | Chemical intuition, empirical observations | Limited theoretical understanding | N/A - HTS not yet developed |
| Experimental Approach | Manual, sequential trial-and-error | Low throughput, time-consuming | N/A - HTS not yet developed |
| Data Collection | Laboratory notebooks, isolated measurements | Susceptible to cognitive biases | N/A - HTS not yet developed |
| Design Strategy | Analogical reasoning from known systems | Limited exploration of chemical space | N/A - HTS not yet developed |
| Success Factors | Researcher expertise, serendipity | Difficult to replicate or systematize | N/A - HTS not yet developed |

The intuition-driven stage was characterized by limited theoretical frameworks for understanding catalytic mechanisms. Researchers relied on qualitative concepts rather than quantitative models, and catalyst optimization was an artisanal process that required deep experiential knowledge of chemical behavior. The absence of computational tools and standardized testing protocols meant that catalyst development was slow, expensive, and difficult to replicate systematically [2]. During this period, high-throughput screening methodologies had not yet been developed, as the conceptual and technological foundations for systematic catalyst testing across multiple variables were not in place.

Stage 2: The Theory-Driven Computational Era

The second stage emerged with advances in computational chemistry, particularly density functional theory (DFT), which provided a theoretical foundation for understanding catalyst behavior at the atomic level. This stage introduced quantitative modeling of catalytic properties and reaction mechanisms, enabling more rational catalyst design.

Table 2: Characteristics of Theory-Driven Catalyst Research

| Aspect | Methodology | Advancements | Impact on HTS Accuracy |
|---|---|---|---|
| Knowledge Foundation | First-principles calculations, DFT | Atomic-level understanding of mechanisms | Enabled targeted screening based on electronic properties |
| Experimental Approach | Computational screening followed by validation | Reduced purely experimental workload | Improved hit rates through computational pre-screening |
| Data Collection | Structured computational datasets | Standardized descriptors (e.g., d-band center) | Provided quantitative parameters for screening assays |
| Design Strategy | Descriptor-based optimization (e.g., Sabatier principle) | More systematic exploration of materials space | Established structure-activity relationships for HTS |
| Success Factors | Computational accuracy, experimental validation | Transferable principles across catalyst families | Enhanced reproducibility of screening results |

The theory-driven stage introduced descriptor-based approaches to catalyst design, with concepts like the Sabatier principle helping researchers identify optimal adsorption energies for catalytic intermediates. Computational tools enabled the prediction of catalytic activity through descriptors such as d-band center for transition metal catalysts and scaling relations that connected adsorption energies across different reaction intermediates [3]. These developments created the foundational framework for high-throughput screening by identifying key parameters that could be systematically tested. However, this stage faced significant challenges in accurately predicting complex catalytic behavior across diverse materials spaces, and computational methods like DFT remained resource-intensive, limiting the breadth of materials that could be practically screened [2].
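The Sabatier principle behind descriptor-based screening can be made concrete with a simplified two-branch volcano model. The sketch below assumes, purely for illustration, that the log-rate is limited by one of two linear branches of a single adsorption-energy descriptor: binding that is too strong limits product desorption, and binding that is too weak limits reactant activation. The optimum, slopes, peak value, and candidate energies are hypothetical placeholders, not values from [3].

```python
import numpy as np


def volcano_log_rate(delta_e, optimum=-0.5, peak=0.0, slope_strong=1.0, slope_weak=1.0):
    """Two-branch Sabatier volcano with hypothetical parameters.

    delta_e: adsorption-energy descriptor in eV (more negative = stronger binding).
    Strong-binding side (delta_e < optimum): desorption limits the rate.
    Weak-binding side (delta_e > optimum): activation limits the rate.
    The predicted log10(rate) is the lower, i.e. rate-limiting, branch.
    """
    x = np.asarray(delta_e, dtype=float) - optimum
    strong_branch = peak + slope_strong * x   # falls as binding gets stronger
    weak_branch = peak - slope_weak * x       # falls as binding gets weaker
    return np.minimum(strong_branch, weak_branch)


# Hypothetical candidates and their computed adsorption energies (eV).
candidates = {"A": -1.2, "B": -0.6, "C": -0.1, "D": 0.3}
scores = volcano_log_rate(list(candidates.values()))
ranking = sorted(zip(candidates, scores), key=lambda kv: kv[1], reverse=True)
for name, score in ranking:
    print(f"{name}: predicted log10(rate) = {score:+.2f}")
```

Candidate B, whose descriptor lies closest to the optimum, ranks first; this is the sense in which a single descriptor lets a screen prioritize materials before any experiment is run.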

High-throughput screening methodologies developed during this stage leveraged computational predictions to focus experimental efforts on promising regions of chemical space. The integration of automation technologies enabled parallel testing of multiple catalyst formulations, significantly increasing experimental throughput compared to manual approaches. Companies like AstraZeneca implemented high-throughput experimentation (HTE) systems that could screen dozens of catalytic reactions per week, dramatically accelerating catalyst optimization for pharmaceutical applications [4]. However, the accuracy of these screening approaches remained constrained by the limitations of theoretical models, particularly for complex catalytic systems where multiple facets, binding sites, and reaction pathways contributed to overall performance.

Stage 3: The Data-Driven AI Era

The current stage of catalyst research is characterized by the integration of artificial intelligence and machine learning with high-throughput experimentation, creating a powerful paradigm for catalyst discovery and optimization. This stage leverages large datasets, advanced algorithms, and automated workflows to navigate complex catalyst spaces with unprecedented efficiency.

Table 3: Characteristics of Data-Driven AI Catalyst Research

| Aspect | Methodology | Advancements | Impact on HTS Accuracy |
|---|---|---|---|
| Knowledge Foundation | Machine learning, pattern recognition | Ability to model complex, non-linear relationships | Dramatically improved prediction accuracy for screening prioritization |
| Experimental Approach | Automated high-throughput systems with AI guidance | Orders of magnitude increase in testing capability | Closed-loop systems with continuous improvement of screening models |
| Data Collection | Multi-modal databases, standardized descriptors | Large, high-quality datasets for ML training | Enhanced data quality and standardization improves screening reliability |
| Design Strategy | AI-generated candidate screening, active learning | Exploration of vast chemical spaces impossible manually | Optimized screening strategies that balance exploration and exploitation |
| Success Factors | Data quality, algorithm selection, integration | Autonomous discovery systems | Higher success rates with reduced experimental burden |

The data-driven stage leverages diverse machine learning approaches, including regression models for predicting catalyst performance, neural networks for capturing complex non-linear relationships, and generative algorithms for proposing novel catalyst structures [5]. These methods excel at identifying patterns in high-dimensional data that are difficult for humans to discern, enabling more accurate predictions of catalytic behavior. Modern ML applications in catalysis have evolved through a three-stage process: initial high-throughput screening based on experimental and computational data, performance modeling using physically meaningful descriptors, and advanced symbolic regression aimed at uncovering general catalytic principles [2].

The integration of AI with high-throughput screening has dramatically improved the accuracy and efficiency of catalyst discovery. Machine learning models can process data from characterization techniques such as microscopy and spectroscopy, providing critical feedback for refining synthesis parameters and catalyst design [1]. AI-driven platforms enable autonomous robotic synthesis systems that can plan and execute catalyst synthesis with minimal human intervention. These closed-loop systems integrate ML algorithms with automated synthesis and characterization technologies, creating self-optimizing workflows that continuously improve based on experimental feedback [1].

Advanced AI methodologies are addressing fundamental challenges in high-throughput screening accuracy. For complex catalytic systems with multiple facets and binding sites, new descriptor designs like Adsorption Energy Distributions (AEDs) provide comprehensive representations of catalyst behavior across diverse structural environments [3]. Unsupervised machine learning techniques applied to these complex descriptors enable more accurate clustering of catalysts with similar properties, improving the predictive power of screening workflows. The integration of large language models for data mining and knowledge extraction further enhances the ability to identify meaningful patterns in complex catalytic data [2].

Experimental Protocols in Modern AI-Driven Catalyst Research

Workflow for ML-Accelerated Catalyst Discovery

The integration of machine learning into catalyst discovery has established new experimental protocols that significantly enhance the accuracy of high-throughput screening. A representative example is the workflow for discovering CO₂ hydrogenation catalysts, which demonstrates how AI methods are systematically applied to identify promising candidate materials [3].

Protocol 1: Computational Screening with Machine-Learned Force Fields

Step 1: Search Space Definition - Select metallic elements based on prior experimental evidence and computational feasibility, including coverage by the Open Catalyst Project database. The reference study used a curated set of 18 metals (K, V, Mn, Fe, Co, Ni, Cu, Zn, Ga, Y, Ru, Rh, Pd, Ag, In, Ir, Pt, Au) and their bimetallic alloys as its starting point [3].

Step 2: Stable Phase Identification - Query materials databases (e.g., Materials Project) for stable crystal structures. Optimize the bulk structures with density functional theory (DFT) using the RPBE functional, so that the level of theory matches the data on which the machine-learned force field was trained.

Step 3: Key Adsorbate Selection - Identify critical reaction intermediates through literature mining. For CO₂ to methanol conversion, essential intermediates include *H (hydrogen atom), *OH (hydroxy group), *OCHO (formate), and *OCH₃ (methoxy) [3].

Step 4: Surface Generation - Create surfaces with Miller indices ∈ {-2, -1, 0, 1, 2} using computational tools like the fairchem repository from the Open Catalyst Project. Select the most stable surface terminations for further analysis [3].

Step 5: Adsorption Energy Calculation - Enumerate surface-adsorbate configurations and relax them with a machine-learned force field (e.g., OCP equiformer_V2). This approach provides a computational speed-up of 10⁴ or more over conventional DFT while approaching DFT-level accuracy [3].

Step 6: Validation and Data Cleaning - Implement robust validation protocols to ensure prediction reliability. For each material-adsorbate pair, sample the configurations with the minimum, maximum, and median predicted adsorption energies and compare them with explicit DFT calculations for these benchmark systems. For the predictions to be treated as reliable, the benchmark mean absolute error (MAE) for adsorption energies should not exceed 0.16 eV [3].
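The benchmark sampling in Step 6 can be sketched in a few lines. The data layout (dictionaries keyed by material, adsorbate, and configuration index), the helper names, and all energies below are hypothetical; in practice the MLFF energies would come from the relaxations in Step 5 and the reference values from DFT at the same level of theory. The 0.16 eV threshold is the one quoted in Step 6.

```python
MAE_THRESHOLD_EV = 0.16  # reliability criterion quoted in Step 6


def select_benchmark_configs(mlff_energies):
    """Indices of the min, median, and max MLFF adsorption energies.

    For an even number of configurations the lower-middle one stands in for
    the median so that it maps to a real configuration. Duplicates are dropped.
    """
    order = sorted(range(len(mlff_energies)), key=lambda i: mlff_energies[i])
    picks = [order[0], order[(len(order) - 1) // 2], order[-1]]
    return list(dict.fromkeys(picks))


def benchmark_mae(mlff_by_pair, dft_reference):
    """MAE (eV) between MLFF and DFT over the sampled benchmark configurations.

    mlff_by_pair:  {(material, adsorbate): [E_mlff for each configuration]}
    dft_reference: {(material, adsorbate, config_index): E_dft}
    """
    errors = []
    for (material, adsorbate), energies in mlff_by_pair.items():
        for idx in select_benchmark_configs(energies):
            errors.append(abs(energies[idx] - dft_reference[(material, adsorbate, idx)]))
    return sum(errors) / len(errors)


# Hypothetical MLFF energies (eV) for two material-adsorbate pairs.
mlff = {
    ("CuZn", "*OCHO"): [-0.42, -0.10, -0.75, -0.31],
    ("PdIn", "*H"): [-0.20, -0.05, -0.33],
}
# Hypothetical DFT recalculations of the sampled configurations only.
dft = {
    ("CuZn", "*OCHO", 2): -0.65, ("CuZn", "*OCHO", 0): -0.50, ("CuZn", "*OCHO", 1): -0.12,
    ("PdIn", "*H", 2): -0.45, ("PdIn", "*H", 0): -0.26, ("PdIn", "*H", 1): -0.07,
}

mae = benchmark_mae(mlff, dft)
print(f"benchmark MAE = {mae:.3f} eV; reliable: {mae <= MAE_THRESHOLD_EV}")
```

Sampling only the extremes and the median keeps the number of expensive DFT calculations to at most three per pair while still probing both tails of each predicted energy distribution.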

Protocol 2: High-Throughput Experimental Validation

Step 1: Automated Catalyst Synthesis - Utilize robotic systems like the CHRONECT XPR for precise powder dosing of catalyst precursors. These systems can handle mass ranges from 1 mg to several grams with deviations below 10% at low masses and below 1% for masses above 50 mg [4].

Step 2: Parallel Reaction Screening - Conduct reactions in 96-well array manifolds inside inert-atmosphere gloveboxes. Systematically vary parameters including catalyst composition, solvent environment, and reaction conditions across the array.

Step 3: Performance Characterization - Implement automated product analysis using techniques such as gas chromatography (GC) or high-performance liquid chromatography (HPLC). Couple with in-situ spectroscopic methods where possible.

Step 4: Data Integration - Feed experimental results back into machine learning models to refine predictions and guide subsequent screening iterations. This closed-loop approach continuously improves model accuracy and screening efficiency [1].
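The closed-loop feedback in Protocol 2 can be sketched as a small active-learning loop. Every component here is a stand-in chosen for illustration and is not the specific algorithm used in [1]: a hypothetical one-dimensional composition variable, a simulated `hypothetical_experiment` in place of robotic synthesis plus GC/HPLC readout, a bootstrap ensemble of quadratic least-squares fits as the surrogate model, and an upper-confidence-bound (UCB) rule that picks batches of eight to mimic plate-by-plate iterations.

```python
import numpy as np

rng = np.random.default_rng(0)


def features(x):
    """Quadratic polynomial features for a 1-D composition variable."""
    x = np.asarray(x, dtype=float)
    return np.column_stack([np.ones_like(x), x, x**2])


def hypothetical_experiment(x):
    """Stand-in for an automated HTS measurement (e.g., yield vs. dopant fraction)."""
    return 1.0 - 4.0 * (x - 0.62) ** 2 + rng.normal(0.0, 0.02, size=np.shape(x))


def ensemble_predict(x_train, y_train, x_query, n_models=20):
    """Bootstrap ensemble of quadratic least-squares fits: mean and std of predictions."""
    preds = []
    for _ in range(n_models):
        idx = rng.integers(0, len(x_train), size=len(x_train))
        coef, *_ = np.linalg.lstsq(features(x_train[idx]), y_train[idx], rcond=None)
        preds.append(features(x_query) @ coef)
    preds = np.array(preds)
    return preds.mean(axis=0), preds.std(axis=0)


candidates = np.linspace(0.0, 1.0, 101)        # hypothetical library of 101 compositions
measured = list(rng.choice(len(candidates), size=5, replace=False))
results = list(hypothetical_experiment(candidates[measured]))

for round_ in range(4):                        # four closed-loop iterations
    pool = np.setdiff1d(np.arange(len(candidates)), measured)
    mean, std = ensemble_predict(candidates[measured], np.array(results), candidates[pool])
    ucb = mean + 2.0 * std                     # exploit (mean) plus explore (uncertainty)
    batch = pool[np.argsort(ucb)[-8:]]         # next "plate": top 8 untested by UCB
    measured.extend(batch.tolist())
    results.extend(hypothetical_experiment(candidates[batch]).tolist())

best = int(np.argmax(results))
print(f"tested {len(measured)} of {len(candidates)}; best x = {candidates[measured[best]]:.2f}")
```

The weight on `std` (2.0 here) sets the exploration-exploitation balance mentioned in Table 3: larger values spend more of each plate on uncertain regions, smaller values concentrate on the current predicted optimum.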

Advanced Descriptor Design for Improved Screening Accuracy

A significant innovation in AI-driven catalyst research is the development of sophisticated descriptors that more accurately capture catalyst behavior. The Adsorption Energy Distribution (AED) descriptor represents a notable advancement beyond traditional single-value descriptors [3].

The AED approach aggregates binding energies across different catalyst facets, binding sites, and adsorbates, creating a comprehensive representation of the catalyst's energetic landscape. This methodology specifically addresses the limitations of conventional descriptors that were often restricted to specific surface facets or limited material families. By treating adsorption energies as probability distributions and analyzing their similarity using metrics like the Wasserstein distance, researchers can more accurately identify catalysts with similar performance characteristics [3].

This advanced descriptor design enables more accurate high-throughput screening by providing a multidimensional representation of catalyst properties that better correlates with experimental performance. The application of unsupervised machine learning to these complex descriptors facilitates the identification of promising catalyst candidates that might be overlooked by conventional screening methods.
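The AED comparison and the unsupervised grouping described above can be sketched with SciPy. The energies below are synthetic, the four adsorbates follow Step 3 of Protocol 1, and two choices are assumptions rather than details taken from [3]: the distance between two materials is the sum of their per-adsorbate one-dimensional Wasserstein (earth mover's) distances, and materials are grouped with average-linkage hierarchical clustering.

```python
import numpy as np
from scipy.cluster.hierarchy import fcluster, linkage
from scipy.spatial.distance import squareform
from scipy.stats import wasserstein_distance

rng = np.random.default_rng(1)
ADSORBATES = ["*H", "*OH", "*OCHO", "*OCH3"]


def make_aed(center, n_sites):
    """Synthetic AED: adsorption energies (eV) over facets/sites, one array per adsorbate."""
    return {ads: rng.normal(center + shift, 0.15, size=n_sites)
            for ads, shift in zip(ADSORBATES, [0.0, -0.3, -0.6, -0.2])}


# Hypothetical materials; site counts differ, which the Wasserstein distance handles.
aeds = {
    "Mat1": make_aed(-0.40, 60),
    "Mat2": make_aed(-0.42, 45),
    "Mat3": make_aed(0.10, 80),
    "Mat4": make_aed(0.12, 50),
}


def aed_distance(a, b):
    """Assumed combination rule: sum of per-adsorbate 1-D Wasserstein distances."""
    return sum(wasserstein_distance(a[ads], b[ads]) for ads in ADSORBATES)


names = list(aeds)
n = len(names)
dist = np.zeros((n, n))
for i in range(n):
    for j in range(i + 1, n):
        dist[i, j] = dist[j, i] = aed_distance(aeds[names[i]], aeds[names[j]])

# Average-linkage clustering on the condensed (upper-triangle) distance matrix.
Z = linkage(squareform(dist), method="average")
labels = fcluster(Z, t=2, criterion="maxclust")
for name, label in zip(names, labels):
    print(name, "-> cluster", label)
```

Treating each AED as a distribution rather than a single number means two materials are judged similar only if their whole spread of binding energies across facets and sites overlaps, not just their averages.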

The Scientist's Toolkit: Essential Research Reagents and Solutions

Modern AI-driven catalyst research requires specialized materials, computational tools, and experimental systems. The following table catalogues essential resources referenced in recent literature.

Table 4: Essential Research Reagents and Solutions for AI-Driven Catalyst Research

| Category | Item/System | Function/Purpose | Technical Specifications |
|---|---|---|---|
| Computational Tools | OCP equiformer_V2 MLFF | Rapid prediction of adsorption energies | MAE: 0.23 eV for small molecular fragments; 10⁴ speed-up vs. DFT [3] |
| Computational Tools | Density Functional Theory (DFT) | Quantum mechanical calculations for validation | RPBE level for bulk optimization; serves as accuracy benchmark [3] |
| Computational Tools | SISSO algorithm | Identification of optimal catalytic descriptors | Compressed-sensing method for descriptor design from many candidates [2] |
| Experimental Systems | CHRONECT XPR | Automated solid weighing and dosing | Range: 1 mg - several grams; Deviation: <10% (low mass), <1% (>50 mg) [4] |
| Experimental Systems | High-throughput reactor arrays | Parallel catalyst testing | 96-well format; heated/cooled manifolds; inert atmosphere capability [4] |
| Data Resources | Open Catalyst Project (OC20) | Training data for ML force fields | Comprehensive dataset of catalyst calculations [3] |
| Data Resources | Materials Project | Crystal structure information | Database of stable and experimentally observed structures [3] |
| Catalyst Components | Metals and bimetallic alloys | Active sites for heterogeneous catalysis | Elements: K, V, Mn, Fe, Co, Ni, Cu, Zn, Ga, Y, Ru, Rh, Pd, Ag, In, Ir, Pt, Au [3] |
| Catalyst Components | Oxide supports (e.g., ZnO, Al₂O₃) | High-surface-area catalyst supports | Structural promoters; impact dispersion and stability [1] |

The evolution of catalyst research from intuition to AI represents a fundamental transformation in scientific methodology. The integration of artificial intelligence with high-throughput screening has addressed significant limitations in both the intuition-driven and theory-driven approaches, enabling more accurate and efficient catalyst discovery. Current research focuses on enhancing this integration through improved descriptor design, more sophisticated machine learning algorithms, and fully autonomous experimental systems.

Future developments in AI-driven catalyst research will likely focus on several key areas. Small-data algorithms will address the challenge of limited experimental data for novel catalyst systems. Standardized catalyst databases will improve data quality and model transferability. Physically informed interpretable models will enhance researcher trust and provide deeper mechanistic insights [2]. The emerging application of large language models for data mining and knowledge extraction represents another promising direction for enhancing the accuracy of high-throughput screening [2].

The trajectory from intuition to AI has progressively enhanced the accuracy of high-throughput screening in catalyst discovery. Each evolutionary stage has built upon the previous one, addressing limitations and incorporating new capabilities. The current AI-driven paradigm represents the most powerful approach yet developed, enabling researchers to navigate complex catalyst spaces with unprecedented efficiency and accuracy. As these methodologies continue to mature, they promise to accelerate the development of advanced catalysts for addressing critical challenges in energy, sustainability, and chemical production.

In the high-stakes realm of high-throughput screening (HTS) for catalyst discovery, the accuracy of experimental outcomes directly dictates the efficiency and economic viability of the entire research pipeline. False positives—erroneously identifying inactive compounds as hits—and false negatives—failing to identify truly active compounds—incur profound costs, wasting valuable resources and potentially causing researchers to overlook groundbreaking catalytic systems. This whitepaper delves into the quantitative economic and scientific consequences of these errors, framed within the context of catalyst discovery research. Furthermore, it provides a rigorous technical guide detailing established and emerging experimental protocols, statistical frameworks, and computational tools designed to minimize these errors, thereby empowering researchers to enhance the predictive power and reliability of their screening campaigns.

High-Throughput Screening (HTS) utilizes automated equipment to rapidly test thousands to millions of samples—from small molecules to natural product extracts—for specific biological or catalytic activity [6]. In catalyst discovery, this paradigm allows for the rapid evaluation of vast libraries of potential catalytic compounds or materials against a desired transformation. The global HTS market, valued at USD 32.0 billion in 2025 and projected to reach USD 82.9 billion by 2035, is a testament to its critical role in industrial and academic research [7]. The primary goal of any HTS campaign is to reliably distinguish true "hits" from the vast background of inactive entities. The efficacy of this triage process hinges on assay sensitivity (the ability to correctly identify true hits, minimizing false negatives) and specificity (the ability to correctly reject inactive compounds, minimizing false positives) [8]. A failure in either dimension carries significant, multi-faceted costs that can derail a discovery program.
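Sensitivity, specificity, and positive predictive value (PPV) follow directly from the confusion matrix of a screen. The sketch below uses a hypothetical campaign (100,000 compounds, 0.5% true actives, 90% sensitivity, 97% specificity) to show why PPV can be low even when specificity looks high: true actives are rare in HTS, so false positives drawn from the large inactive population dominate the hit list.

```python
def screening_metrics(tp, fp, tn, fn):
    """Confusion-matrix metrics for an HTS triage step."""
    return {
        "sensitivity": tp / (tp + fn),   # fraction of true actives flagged as hits
        "specificity": tn / (tn + fp),   # fraction of inactives correctly rejected
        "ppv": tp / (tp + fp),           # fraction of flagged hits that are real
    }


def ppv_from_rates(sensitivity, specificity, prevalence):
    """Positive predictive value via Bayes' rule for a given true-active rate."""
    true_hits = sensitivity * prevalence
    false_hits = (1.0 - specificity) * (1.0 - prevalence)
    return true_hits / (true_hits + false_hits)


# Hypothetical campaign: 500 actives and 99,500 inactives.
metrics = screening_metrics(tp=450, fp=2985, tn=96515, fn=50)
print(metrics)
print(f"PPV from rates = {ppv_from_rates(0.90, 0.97, 0.005):.3f}")
```

Here only about 13% of flagged hits are real (PPV of roughly 0.131), so close to 87% of confirmatory effort would be spent on false positives.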

The Tangible and Intangible Costs of Inaccuracy

The Economic Burden of False Results

The financial impact of false positives and negatives is staggering, stemming from wasted reagents, squandered personnel time, and misguided research directions.

Table 1: Quantitative Impact of False Positives and Negatives in HTS

| Impact Category | False Positives (Inactive compounds mistaken for hits) | False Negatives (Active compounds mistakenly discarded) |
|---|---|---|
| Direct Economic Cost | A single HTS campaign can require USD 25,000 in enzymes alone; false positives amplify this cost in downstream validation [8]. A screening system with multiple tests can have a false positive burden 150 times higher than a more accurate alternative [9]. | Wastes the entire initial investment in library synthesis, screening reagents, and instrumentation time without return. |
| Downstream Resource Drain | Consumes resources on futile confirmatory assays, hit optimization, and medicinal chemistry efforts [10]. | Obscures promising research avenues, potentially terminating a project based on inaccurate data. |
| Operational Efficiency | Leads to lower screening efficiency (e.g., Positive Predictive Value of 0.44% vs. 38% in accurate systems) [9]. | Requires re-screening or larger library sizes to compensate for missed opportunities, increasing time and cost. |
| Long-Term Project Impact | Can lead to the pursuit of "dead-end" leads, delaying project timelines by months or years. | Loss of potentially superior catalysts or drugs, impacting competitive advantage and scientific progress. |

As illustrated in Table 1, a comparative framework evaluating screening systems showed that a system prone to false positives (multiple single-cancer tests) detected only 1.4 times more true positives but generated 188 times more diagnostic investigations in cancer-free individuals and had 3.4 times the total cost compared to a more accurate single test [9]. While this example is from diagnostics, the underlying principle directly translates to catalyst discovery, where investigating a single false-positive "hit" through subsequent optimization cycles is a massive resource sink.
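The false-positive burden of multi-test systems reflects a general effect: if each of n independent tests has specificity s, a truly negative sample is flagged by at least one of them with probability 1 − s^n. The sketch below illustrates this with hypothetical values (s = 0.99, n = 10); it is not a reproduction of the figures reported in [9].

```python
def any_false_positive_rate(specificity, n_tests):
    """Probability that a truly inactive sample is flagged by at least one of
    n_tests independent tests, each with the given specificity."""
    return 1.0 - specificity ** n_tests


single = any_false_positive_rate(0.99, 1)
panel = any_false_positive_rate(0.99, 10)
print(f"1 test: {single:.3f}, 10 tests: {panel:.3f}, ratio: {panel / single:.1f}x")
```

Ten tests at 99% specificity flag about 9.6% of negatives versus 1% for a single test. In a catalyst campaign the same arithmetic applies when each library member is run through several independent activity or property assays and any single positive triggers follow-up.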

The Scientific and Opportunity Costs

Beyond direct financial costs, inaccuracy inflicts deep scientific wounds:

- Erosion of Scientific Rigor: A high rate of false discoveries undermines the reproducibility and integrity of research findings, a cornerstone of the scientific method.

- Loss of Breakthrough Catalysts: A false negative represents a missed opportunity of potentially monumental significance. The most active or unique catalyst in a library could be erroneously discarded, potentially setting back a field by years. In drug discovery, for instance, HTS has been instrumental in developing targeted therapies like Adcetris, an outcome of stringent screening initiatives [7]. A single false negative could have scuttled such a breakthrough.

- Compromised Data-Driven Decisions: Modern discovery relies on data to guide the optimization of compound properties. Inaccurate primary screening data, such as skewed IC₅₀ values resulting from low-sensitivity assays, misdirects medicinal chemistry efforts, leading to the optimization of compounds based on flawed potency rankings [8].
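The link between assay sensitivity and skewed IC₅₀ values can be made explicit with the standard tight-binding approximation, IC₅₀ ≈ Ki,app + [E]_T/2. When a weak signal forces a higher enzyme (or catalyst) concentration [E]_T, measured IC₅₀ values are floored near [E]_T/2 and potent compounds become hard to tell apart. The compound names and Ki values below are hypothetical.

```python
def apparent_ic50(ki_app_nm, enzyme_nm):
    """Tight-binding approximation: IC50 ~= Ki_app + [E]_total / 2 (all in nM)."""
    return ki_app_nm + enzyme_nm / 2.0


inhibitors = {"cmpd_1": 0.1, "cmpd_2": 1.0}   # hypothetical Ki_app values (nM), 10-fold apart
for enzyme_nm in (10.0, 0.5):                 # low- vs. high-sensitivity assay conditions
    ic50s = {name: apparent_ic50(ki, enzyme_nm) for name, ki in inhibitors.items()}
    fold = ic50s["cmpd_2"] / ic50s["cmpd_1"]
    print(f"[E] = {enzyme_nm} nM: {ic50s}, apparent potency ratio {fold:.1f}x")
```

At 10 nM enzyme, a true 10-fold difference in Ki,app appears as only about a 1.2-fold difference in IC₅₀; at 0.5 nM it recovers to about 3.6-fold, which is why more sensitive detection preserves potency rankings.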

Mitigating False Positives and Negatives: A Technical Guide

Minimizing error rates requires a multi-faceted approach spanning assay design, statistical quality control, and computational pre-screening.

Foundational Assay Design and Optimization

The first line of defense against inaccuracy is a robust, well-characterized assay.

1. Maximize Assay Sensitivity and Signal Quality: Assay sensitivity (the ability to detect minimal biochemical change) is paramount. A highly sensitive assay allows for the use of lower enzyme/catalyst concentrations, which not only reduces costs but also enables accurate measurement of potent inhibitors/catalysts [8].

   - Key Metrics:

     - Z′-factor: A statistical measure of assay quality. Values >0.5 are acceptable for HTS, but values >0.7 indicate excellent separation between positive and negative controls and are a target for robust screening [8].

     - Signal-to-Background (S/B) Ratio: A high S/B ratio (>6:1) ensures small changes in product concentration are easily detectable, reducing the chance of false negatives due to signal ambiguity [8].

     - Strictly Standardized Mean Difference (SSMD): A robust metric for quality control and hit selection that quantifies the standardized mean difference between control groups, accounting for variability. It provides intuitive, probabilistic interpretations of assay quality [11].

2. Implement Redundant and Orthogonal Assays: A compound identified as a hit in a primary screen must be confirmed in a secondary, orthogonal assay that operates on a different detection principle. This step is critical for triaging false positives arising from the specific artifacts or interference mechanisms of the primary assay.

3. Utilize Quantitative HTS (qHTS): Screening compounds at multiple concentrations, rather than a single dose, generates concentration-response data upfront. qHTS more fully characterizes biological effects and is recognized for decreasing the number of false negatives and false positives [6].
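The control-based metrics above can be computed per plate from the positive- and negative-control wells. The formulas are the standard ones: Z′ = 1 − 3(σ_P + σ_N)/|μ_P − μ_N|, S/B = μ_P/μ_N, and SSMD = (μ_P − μ_N)/√(σ_P² + σ_N²). The simulated control readings are hypothetical, and the pass rule uses the Z′ > 0.7 and S/B > 6 targets quoted above. For assays in which activity lowers the signal, swap the roles of the two control groups when computing S/B.

```python
import numpy as np


def plate_qc(pos, neg):
    """Control-well QC metrics for one plate.

    pos: readings from positive-control wells (full signal)
    neg: readings from negative-control wells (background)
    """
    pos, neg = np.asarray(pos, float), np.asarray(neg, float)
    mu_p, mu_n = pos.mean(), neg.mean()
    sd_p, sd_n = pos.std(ddof=1), neg.std(ddof=1)   # sample standard deviations
    return {
        "z_prime": 1.0 - 3.0 * (sd_p + sd_n) / abs(mu_p - mu_n),
        "s_over_b": mu_p / mu_n,
        "ssmd": (mu_p - mu_n) / np.sqrt(sd_p**2 + sd_n**2),
    }


rng = np.random.default_rng(7)
pos = rng.normal(12000, 500, size=16)   # hypothetical 16 positive-control wells
neg = rng.normal(1500, 150, size=16)    # hypothetical 16 negative-control wells
qc = plate_qc(pos, neg)
passes = qc["z_prime"] > 0.7 and qc["s_over_b"] > 6
print({k: round(float(v), 2) for k, v in qc.items()}, "pass" if passes else "flag plate")
```

Running this on every plate, rather than once during assay development, is what allows drift in reagents or instruments to be caught before it turns into false hits or missed actives.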

The following workflow diagram illustrates a robust HTS campaign designed to minimize false results through iterative quality control and orthogonal confirmation.

Statistical and Computational Quality Control

Sophisticated statistical analysis is non-negotiable for distinguishing signal from noise in HTS data.

1. Integrated Metrics for Quality Control: The integration of SSMD and the Area Under the Receiver Operating Characteristic Curve (AUROC) provides a powerful framework for QC. SSMD offers a standardized, interpretable measure of effect size, while AUROC provides a threshold-independent assessment of an assay's inherent power to discriminate between positive and negative controls [11].

   - Mathematical Relationship: For independent, normally distributed positive (P) and negative (N) controls, SSMD is β = (μ_P − μ_N)/√(σ_P² + σ_N²), and AUROC is the probability that a randomly chosen positive control scores higher than a randomly chosen negative control. AUROC is therefore directly related to SSMD through the standard normal cumulative distribution function Φ [11]:

     $$\mathrm{AUROC} = \Phi(\beta) = \Phi\left(\frac{\mu_P - \mu_N}{\sqrt{\sigma_P^2 + \sigma_N^2}}\right)$$

     The form Φ(d/√2) applies when the effect size is instead expressed as Cohen's d with a common (pooled) standard deviation. This relationship allows researchers to set quality thresholds based on both effect size and classification power.

2. Advanced Computational Pre-screening: Machine learning and deep learning models are increasingly deployed to virtually screen compound libraries, prioritizing those with a high predicted probability of activity.

   - Deep Learning Models: As demonstrated by tools like PBScreen (a server for screening placental barrier–permeable contaminants), multifusion deep learning models can achieve state-of-the-art performance with high accuracy (0.927) and a low false negative rate (0.074) [12]. Applying similar models to catalyst discovery can pre-filter virtual libraries, reducing the experimental burden and the risk of false negatives in the primary screen by ensuring a higher proportion of promising candidates are tested.
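The SSMD-AUROC relationship can be checked numerically. The sketch below simulates hypothetical positive and negative control readings (normal, independent, unequal variances), computes SSMD as β = (μ_P − μ_N)/√(σ_P² + σ_N²), estimates AUROC with the Mann-Whitney statistic, and compares the result with Φ(β), printing Φ(β/√2) alongside for contrast. The control means, spreads, and sample sizes are illustrative assumptions.

```python
import math

import numpy as np


def ssmd(pos, neg):
    """SSMD for independent groups: (mean_p - mean_n) / sqrt(var_p + var_n)."""
    return (pos.mean() - neg.mean()) / math.sqrt(pos.var(ddof=1) + neg.var(ddof=1))


def empirical_auroc(pos, neg):
    """AUROC as P(positive score > negative score), via the Mann-Whitney U statistic.

    Assumes continuous data (no ties), so simple 1-based ranks suffice.
    """
    ranks = np.concatenate([pos, neg]).argsort().argsort() + 1
    u = ranks[: len(pos)].sum() - len(pos) * (len(pos) + 1) / 2
    return u / (len(pos) * len(neg))


def normal_cdf(x):
    """Standard normal CDF, Phi(x)."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


rng = np.random.default_rng(42)
pos = rng.normal(1.0, 1.0, size=20000)   # hypothetical positive-control readings
neg = rng.normal(0.0, 0.8, size=20000)   # hypothetical negative-control readings
beta = ssmd(pos, neg)
print(f"SSMD            = {beta:.3f}")
print(f"empirical AUROC = {empirical_auroc(pos, neg):.4f}")
print(f"Phi(SSMD)       = {normal_cdf(beta):.4f}")
print(f"Phi(SSMD/sqrt2) = {normal_cdf(beta / math.sqrt(2)):.4f}  (for contrast)")
```

With this SSMD definition the empirical AUROC tracks Φ(β) closely; the √2 factor only enters when the effect size is normalized by a single pooled standard deviation rather than by √(σ_P² + σ_N²).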

The diagram below outlines the statistical and computational relationships key to a robust HTS QC process.

The Scientist's Toolkit: Essential Reagents and Materials

Table 2: Key Research Reagent Solutions for Robust HTS

| Reagent/Material | Function in HTS | Impact on Accuracy |
|---|---|---|
| High-Sensitivity Detection Kits (e.g., Transcreener) | Homogeneous, antibody-based detection of nucleotide products (e.g., ADP, GDP). Enables use of low enzyme/catalyst concentrations. | Reduces false negatives by providing a strong signal window; improves IC₅₀ accuracy for ranking hits, reducing false positives from poorly characterized compounds [8]. |
| qHTS-Compatible Compound Libraries | Libraries formatted for screening across multiple concentration titrations. | Directly mitigates false positives/negatives by providing concentration-response data upfront, identifying promiscuous or weak inhibitors that single-dose screens miss [6]. |
| Validated Positive/Negative Controls | Well-characterized compounds that define the assay's dynamic range and baseline in every plate. | Essential for calculating Z'-factor and SSMD, allowing for per-plate QC and identification of assay drift that could cause false results [11] [8]. |
| Robotic Liquid Handling Systems | Automated dispensers and pipettors for precise, reproducible reagent and compound transfer. | Minimizes volumetric errors and cross-contamination, major technical sources of both false positives and false negatives. |

In the accelerated world of catalyst discovery, the tolerance for inaccurate data is vanishingly low. The costs of false positives and negatives are not merely line items in a budget but represent significant impediments to scientific progress and innovation. By adopting a rigorous, multi-pronged strategy—featuring sensitively optimized assays, redundant orthogonal confirmation, robust statistical quality control with integrated SSMD and AUROC metrics, and leveraging advanced computational pre-screening—research teams can significantly enhance the accuracy of their HTS campaigns. This commitment to precision ensures that resource investment is channeled toward the most promising catalytic leads, ultimately accelerating the journey from discovery to application.

Within catalyst discovery research, the accuracy of a High-Throughput Screening (HTS) workflow is the primary determinant between successful lead identification and costly, misguided research paths. An accurate HTS system transcends mere rapid testing; it is an integrated framework where high-fidelity data generation, predictive molecular descriptors, and profound physical insights from real-time kinetics converge. This synergy is especially critical in catalysis, where catalyst performance is a multidimensional property influenced by composition, dynamic surface changes, and reaction conditions [13]. The complexity of this design space means that traditional one-variable-at-a-time approaches are insufficient. This guide details the core components of an accurate HTS workflow, framing them within the essential context of catalyst informatics—the practice of using data to guide catalyst design [13]. By implementing the robust methodologies and quality controls outlined herein, researchers can transform their HTS processes from simple sorting tools into powerful, predictive engines for catalyst discovery.

Core Component I: High-Fidelity Data Generation and Management

The foundation of any accurate HTS workflow is the generation of reliable, high-quality data. This involves a meticulously designed experimental apparatus, rigorous assay validation, and a sophisticated data management infrastructure.

Experimental Protocol: Real-Time Kinetic Data Collection

The following protocol, adapted from a catalyst informatics study on nitro-to-amine reduction, exemplifies the acquisition of high-fidelity, time-resolved data [13].

- Objective: To screen a library of catalysts for a reduction reaction while collecting real-time kinetic and spectral data to assess activity, selectivity, and mechanism.

- Materials:

- Probe Molecule: A nitronaphthalimide (NN) compound. Its reduction from a non-fluorescent nitro-moiety to a fluorescent amine form (AN) provides a direct optical readout of reaction progress [13].

- Catalysts: A library of catalysts (e.g., 114 heterogeneous and homogeneous catalysts).

- Reagents: Aqueous hydrazine (N₂H₄) as a reductant, and acetic acid.

- Hardware: A multi-mode microplate reader (e.g., Biotek Synergy HTX) capable of measuring absorbance and fluorescence, paired with 24-well plates [13].

- Methodology:

- Well Plate Setup: Each 24-well plate is populated with 12 reaction wells (S) and 12 corresponding reference wells (R). Each reaction well contains a mixture of catalyst (0.01 mg/mL), NN probe (30 µM), N₂H₄ (1.0 M), acetic acid (0.1 mM), and H₂O for a total volume of 1.0 mL. Each reference well contains the same mixture but with the AN product instead of the NN probe to serve as a standard for signal conversion and stability monitoring [13].

- Real-Time Data Acquisition:

- The plate reader is programmed for a cyclic routine.

- Orbital Shaking: 5 seconds to ensure mixing.

- Fluorescence Detection: Excitation at 485 nm, emission at 590 nm.

- Absorbance Scanning: A full spectrum scan from 300 nm to 650 nm.

- This cycle is repeated every 5 minutes for a total duration of 80 minutes, generating kinetic profiles for each well [13].

- Data Outputs:

- Kinetic Graphs: Absorbance decay at 350 nm (NN), growth at 430 nm (AN), fluorescence growth at 590 nm (AN), and stability at the isosbestic point (385 nm) [13].

- Mechanistic Insight: The evolution of the isosbestic point and the appearance of intermediates (e.g., absorbance at 550 nm for azo/azoxy species) provide critical information on reaction selectivity and pathway [13].
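Each reference well holds the AN product at the probe concentration, so the paired wells suggest a simple way to turn raw fluorescence into fractional conversion. The sketch below makes two assumptions: the 590 nm signal is linear in AN concentration, and each reference read represents 100% conversion. The function name, the optional background term, and the example counts are illustrative and are not taken from the study [13].

```python
from __future__ import annotations

from typing import Sequence


def fractional_conversion(
    sample: Sequence[float],
    reference: Sequence[float],
    background: float = 0.0,
) -> list[float]:
    """Estimate NN -> AN conversion at each read from a paired S/R well.

    Assumes the 590 nm fluorescence is linear in [AN] and that the
    reference well (AN at the full probe concentration) represents
    100% conversion under identical conditions.
    """
    if len(sample) != len(reference):
        raise ValueError("sample and reference need the same number of reads")
    conversions = []
    for s, r in zip(sample, reference):
        full_signal = r - background
        if full_signal <= 0:
            raise ValueError("reference signal must exceed the background")
        # Clamp to [0, 1] so read noise cannot yield impossible values.
        conversions.append(min(max((s - background) / full_signal, 0.0), 1.0))
    return conversions


# One read every 5 min over 80 min gives 17 time points (0, 5, ..., 80).
TIMES_MIN = list(range(0, 81, 5))

# Made-up fluorescence counts for three reads of one S/R pair.
conv = fractional_conversion([100, 500, 900], [1000, 1000, 1000], background=100)
```

The function divides read by read instead of by a single calibration value. This also absorbs slow drift in the AN signal, which is one reason to monitor the reference wells throughout the run.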

Data Processing, Normalization, and Quality Control

The large volume of raw data generated must be processed and rigorously quality-controlled before it can support meaningful conclusions about catalyst performance. Key steps include normalization and the application of statistical metrics to ensure assay robustness.

Table 1: Essential Data Quality Control (QC) Metrics for HTS Assays [14]

| QC Metric | Calculation | Interpretation and Acceptable Range |
|---|---|---|
| Z'-Factor | `1 - 3*(σ_p + σ_n) / abs(μ_p - μ_n)` | A measure of assay robustness and signal window. An assay with Z' > 0.5 is considered excellent for HTS [14]. |
| Signal-to-Background (S/B) | μ_p / μ_n | The ratio of the positive control signal to the negative control signal. A higher ratio is desirable [14]. |
| Signal-to-Noise (S/N) | (μ_p - μ_n) / √(σ_p² + σ_n²) | Measures the assay signal relative to variability. A higher value indicates a more reliable assay [14]. |
| Coefficient of Variation (CV) | (σ / μ) × 100% | The standard deviation expressed as a percentage of the mean, typically calculated for control wells. CVs < 10% are ideal [14]. |

Here μ_p, σ_p and μ_n, σ_n are the means and standard deviations of the positive- and negative-control wells. In the CV row, σ and μ refer to whichever set of control wells is being assessed.
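The metrics in Table 1 can be computed directly from control-well readings. The minimal sketch below assumes sample standard deviations and one set of positive- and negative-control readings per plate. The control values are made up.

```python
from __future__ import annotations

import math
import statistics
from typing import Sequence


def qc_metrics(pos: Sequence[float], neg: Sequence[float]) -> dict[str, float]:
    """Compute Table 1 QC metrics from positive- and negative-control wells.

    Uses sample standard deviations. Assumes mu_n != 0 (for S/B and the
    negative-control CV) and mu_p != mu_n (for Z').
    """
    mu_p, mu_n = statistics.mean(pos), statistics.mean(neg)
    sd_p, sd_n = statistics.stdev(pos), statistics.stdev(neg)
    return {
        "z_prime": 1 - 3 * (sd_p + sd_n) / abs(mu_p - mu_n),
        "signal_to_background": mu_p / mu_n,
        "signal_to_noise": (mu_p - mu_n) / math.sqrt(sd_p**2 + sd_n**2),
        "cv_pos_pct": sd_p / mu_p * 100,
        "cv_neg_pct": sd_n / mu_n * 100,
    }


# Made-up control readings for a single plate.
metrics = qc_metrics(pos=[100, 102, 98, 100], neg=[10, 11, 9, 10])
```

In this example the negative controls have the higher CV because their mean is small. Checking each control set against the CV criterion separately makes that visible.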

- Data Normalization: Raw data from plate readers must be normalized to account for plate-to-plate variation. Common techniques include [14]:

- Z-Score Normalization: Expressing each well's signal in terms of standard deviations from the plate mean.

- Percent Inhibition/Activation: Calculating the signal relative to positive (100% inhibition) and negative (0% inhibition) controls.

- Informatics Infrastructure: Robust data management is non-negotiable. Platforms like Genedata Screener are often used to process, manage, and analyze complex HTS datasets, ensuring data fidelity and facilitating detailed interrogation by plate, batch, and screen [15].
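The two normalization techniques listed above reduce to one-line formulas. This sketch assumes the plate statistics are computed over whichever wells are passed in (in practice, often the sample wells only). It writes percent inhibition/activation as a single function that maps the negative-control mean to 0% and the positive-control mean to 100%. The function names are illustrative.

```python
from __future__ import annotations

import statistics
from typing import Sequence


def plate_z_scores(plate: Sequence[float]) -> list[float]:
    """Express each well as standard deviations from the plate mean."""
    mu = statistics.mean(plate)
    sd = statistics.stdev(plate)
    return [(x - mu) / sd for x in plate]


def percent_effect(signal: float, pos_mean: float, neg_mean: float) -> float:
    """Scale a signal so the negative-control mean is 0% and the
    positive-control mean is 100% (percent inhibition or activation)."""
    return 100 * (signal - neg_mean) / (pos_mean - neg_mean)


scores = plate_z_scores([1, 2, 3])
halfway = percent_effect(55, pos_mean=100, neg_mean=10)
```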

The following workflow diagram synthesizes the key stages of data generation and management, from experimental setup to the final scoring of catalyst performance.

Core Component II: Molecular Descriptors and Hit Rate Analysis

Beyond the immediate experimental data, the chemical nature of the compounds being screened plays a critical role in the outcome and interpretability of an HTS campaign. Statistical analysis of historical HTS data reveals clear correlations between molecular descriptors and hit rates.

Key Molecular Descriptors and Their Influence

Hit rates in HTS are not random; they are influenced by the physicochemical properties of the screening library. A beta-binomial statistical analysis of HTS campaigns quantified the relative influence of several key descriptors [16]:

- Lipophilicity (ClogP): This descriptor, the calculated logarithm of a compound's octanol-water partition coefficient, was found to have the largest influence on molecular hit rate. Excessively high lipophilicity is often linked to promiscuous, non-specific binding, leading to false positives [16].

- Fraction of sp³-Hybridized Carbons (Fsp3): Calculated as the number of sp³-hybridized carbons divided by the total carbon count, Fsp3 is a measure of molecular saturation and three-dimensionality. A higher Fsp3 indicates a more saturated, three-dimensional structure rather than a flat, aromatic one. It is correlated with better hit quality and is a positive predictor in hit rate models, second only to ClogP in influence [16].

- Molecular Size (Heavy Atom Count): The number of non-hydrogen atoms in a molecule also significantly impacts hit rates. Larger, more complex molecules may have different binding propensities compared to smaller, simpler fragments [16].

- Fraction of Molecular Framework (fMF): This descriptor had only a minor influence on hit rates after accounting for its correlation with the other, more dominant descriptors [16].
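Fsp3 is simple to compute from carbon counts. The sketch below implements it alongside a generic beta-binomial illustration: the posterior-mean hit rate of a compound under a Beta(α, β) prior. The second function is not the fitted model from the cited analysis [16], and the prior parameters are hypothetical.

```python
def fsp3(n_sp3_carbons: int, n_carbons: int) -> float:
    """Fraction of sp3-hybridized carbons (sp3 carbons / total carbons)."""
    if n_carbons <= 0 or not 0 <= n_sp3_carbons <= n_carbons:
        raise ValueError("need n_carbons > 0 and 0 <= n_sp3_carbons <= n_carbons")
    return n_sp3_carbons / n_carbons


def shrunk_hit_rate(hits: int, assays: int, alpha: float, beta: float) -> float:
    """Posterior-mean hit rate under a Beta(alpha, beta) prior.

    With a binomial count of `hits` out of `assays`, the posterior is
    Beta(alpha + hits, beta + assays - hits). Its mean pulls compounds
    tested only a few times toward the prior mean alpha / (alpha + beta).
    """
    if not 0 <= hits <= assays:
        raise ValueError("need 0 <= hits <= assays")
    return (hits + alpha) / (assays + alpha + beta)


# Ibuprofen: 13 carbons, 6 of them sp3, so Fsp3 = 6/13 (about 0.46).
ibuprofen_fsp3 = fsp3(6, 13)

# Two compounds with the same raw hit rate (50%) but different evidence,
# under a hypothetical prior Beta(1, 19) whose mean is 5%.
sparse = shrunk_hit_rate(hits=1, assays=2, alpha=1, beta=19)
dense = shrunk_hit_rate(hits=20, assays=40, alpha=1, beta=19)
```

The compound tested only twice is pulled much closer to the 5% prior mean than the one tested 40 times. This is the kind of evidence-weighting a beta-binomial treatment of hit rates provides.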

Table 2: Influence of Molecular Descriptors on HTS Hit Rates [16]

| Molecular Descriptor | Description | Relative Influence on Hit Rate | Implication for Library Design |
|---|---|---|---|
| ClogP | Calculated lipophilicity | Largest | Prioritize compounds with optimal logP ranges to minimize promiscuous, non-specific binding and false positives. |
| Fsp3 | Fraction of sp³-hybridized carbons | Second Highest | Favor complex, three-dimensional structures over flat, aromatic ones to improve hit quality and developability. |
| Heavy Atom Count | Number of non-hydrogen atoms | Significant | Balance molecular size to maintain desirable drug-like properties while ensuring sufficient interaction potential. |
| Fraction of Molecular Framework (fMF) | A measure of molecular skeleton complexity | Minor | Considered less critical for predicting hit rates compared to the other descriptors. |

Application in Catalyst-Focused Libraries

The principles of descriptor-informed library design, developed for small-molecule screening, can also inform catalyst discovery. For instance, the "LeadFinder Prism" library (48k compounds) is a general screening collection rather than a dedicated catalyst library. It explicitly incorporates high chirality and Fsp3, focusing on novel, natural-product-inspired scaffolds to yield high-quality, exclusive hits with enhanced intellectual property potential [15]. Screening a similarly deliberate, descriptor-informed library increases the probability that identified "hits" are genuine, specific, and possess desirable properties for downstream development.

Core Component III: Physical Insights from Real-Time Profiling

Endpoint analysis alone provides an incomplete picture of catalyst performance. The third core component of an accurate HTS workflow is the extraction of physical insights from real-time, time-resolved data, which offers a window into kinetic behavior and reaction mechanisms.

Extracting Kinetic Profiles and Mechanistic Indicators

The real-time fluorogenic assay described in the experimental protocol above generates rich data beyond a simple endpoint readout. Analyzing these data yields critical physical insights [13]:

- Time to Threshold Conversion: The time taken to reach 50% conversion (or another chosen threshold) provides a direct measure of catalyst activity. It also allows a simple, quantitative comparison across a large catalyst library.

- Isosbestic Point Stability: The presence and stability of an isosbestic point in the absorbance spectra (e.g., at 385 nm) indicate a clean, direct conversion from starting material to product. A shifting or unstable isosbestic point, as observed with the Zeolite NaY catalyst, signals that more than a simple NN-to-AN conversion is occurring. Possible causes include a change in pH, the formation of stable intermediates, or side reactions. This insight can lead to the exclusion of catalysts that are active but non-selective [13].

- Detection of Intermediates: The appearance and decay of spectral signatures for reactive intermediates (e.g., an absorbance peak at 550 nm attributed to azo/azoxy species) provide direct evidence of the reaction pathway. Catalysts that lead to a significant buildup of such intermediates can be assigned a low "selectivity" score, as these byproducts complicate synthesis and product isolation [13].
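The three indicators above can be extracted from per-well time series in a few lines. The sketch below is illustrative and assumes conversion has already been computed for each read. It interpolates linearly between reads to estimate the time to 50% conversion, and it measures isosbestic stability and intermediate buildup relative to the first read. The 5% drift tolerance is a hypothetical value, not a criterion reported in [13].

```python
from __future__ import annotations

from typing import Sequence


def time_to_conversion(
    times: Sequence[float], conversion: Sequence[float], threshold: float = 0.5
) -> float | None:
    """First time conversion reaches `threshold`, interpolating linearly
    between reads; None if it never does within the run."""
    if conversion[0] >= threshold:
        return times[0]
    for i in range(1, len(times)):
        c0, c1 = conversion[i - 1], conversion[i]
        if c1 >= threshold:
            # Here c0 < threshold <= c1, so c1 > c0 and the slope is nonzero.
            t0, t1 = times[i - 1], times[i]
            return t0 + (threshold - c0) * (t1 - t0) / (c1 - c0)
    return None


def isosbestic_drift(a385: Sequence[float]) -> float:
    """Largest relative deviation of the 385 nm absorbance from its first read."""
    a0 = a385[0]
    return max(abs(a - a0) for a in a385) / abs(a0)


def intermediate_buildup(a550: Sequence[float]) -> float:
    """Peak rise of the 550 nm (azo/azoxy) absorbance above its first read."""
    return max(a550) - a550[0]


MAX_ISOSBESTIC_DRIFT = 0.05  # hypothetical tolerance, not from [13]

# Made-up traces for one well.
t50 = time_to_conversion([0, 5, 10, 15, 20], [0.0, 0.2, 0.4, 0.6, 0.8])  # about 12.5 min
drift = isosbestic_drift([0.50, 0.51, 0.49, 0.52])  # about 0.04
stable = drift < MAX_ISOSBESTIC_DRIFT
buildup = intermediate_buildup([0.01, 0.05, 0.12, 0.08])  # about 0.11
```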

A Multi-Parameter Scoring Model for Catalyst Selection

The ultimate goal of an accurate HTS workflow is to identify the best overall catalysts, not just the most active ones. This requires a multi-parameter scoring system that integrates the physical insights with practical considerations [13].

Table 3: Multi-Parameter Scoring System for Catalyst Evaluation [13]

| Evaluation Parameter | Data Source | Explanation and Role in Scoring |
|---|---|---|
| Activity | Kinetic profile (e.g., time to 50% conversion) | A primary driver; catalysts that achieve faster conversion are scored higher. |
| Selectivity | Spectral data (isosbestic point stability, intermediate levels) | Penalizes catalysts that produce long-lived reactive intermediates or byproducts, ensuring cleaner reactions. |
| Material Abundance & Cost | External data (catalyst price, elemental abundance) | Promotes the selection of sustainable, scalable, and economically viable catalysts. |
| Recoverability | Assay design (heterogeneous vs. homogeneous) | Favors catalysts (often heterogeneous) that can be easily separated and reused. |
| Safety | External data (handling requirements, toxicity) | Integrates green chemistry principles and operational safety into the selection process. |

By plotting catalysts based on their cumulative scores across these dimensions, researchers can make informed, balanced decisions, potentially applying intentional biases, such as a preference for catalysts aligned with green chemistry principles [13].
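Table 3 lists the parameters but not how they are combined. One simple option, sketched here as an assumption rather than the published scoring scheme, is to map each parameter to a sub-score between 0 and 1 (higher is better) and take a weighted mean. An intentional bias, such as a green-chemistry preference, then becomes a change of weights. The catalyst names, sub-scores, and weights are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, fields


@dataclass
class CatalystScores:
    """Sub-scores in [0, 1], higher is better, one per Table 3 parameter."""

    name: str
    activity: float
    selectivity: float
    abundance_cost: float
    recoverability: float
    safety: float

    def __post_init__(self) -> None:
        for f in fields(self):
            if f.name != "name" and not 0.0 <= getattr(self, f.name) <= 1.0:
                raise ValueError(f"{f.name} must be in [0, 1]")


EQUAL_WEIGHTS = {
    "activity": 1.0,
    "selectivity": 1.0,
    "abundance_cost": 1.0,
    "recoverability": 1.0,
    "safety": 1.0,
}
# Hypothetical green-chemistry bias: double weight on abundance/cost and safety.
GREEN_WEIGHTS = {**EQUAL_WEIGHTS, "abundance_cost": 2.0, "safety": 2.0}


def overall_score(c: CatalystScores, weights: dict[str, float]) -> float:
    """Weighted mean of the sub-scores."""
    total = sum(weights.values())
    return sum(w * getattr(c, key) for key, w in weights.items()) / total


def rank(catalysts: list[CatalystScores], weights: dict[str, float]) -> list[CatalystScores]:
    """Sort catalysts from best to worst overall score."""
    return sorted(catalysts, key=lambda c: overall_score(c, weights), reverse=True)


# Hypothetical catalysts and sub-scores.
fast_but_costly = CatalystScores("cat-A", 0.9, 0.5, 0.2, 1.0, 0.6)
balanced = CatalystScores("cat-B", 0.6, 0.9, 0.9, 1.0, 0.9)
```

Keeping the weights separate from the data makes any bias explicit and easy to audit. Re-ranking under a different weighting changes one dictionary, not the scoring logic.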

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key materials and reagents essential for implementing the high-accuracy HTS workflow for catalyst discovery described in this guide.

Table 4: Essential Research Reagent Solutions for HTS in Catalyst Discovery

| Item | Function in the HTS Workflow |
|---|---|
| Fluorogenic Probe (e.g., Nitronaphthalimide - NN) | Acts as a reaction reporter; its non-fluorescent nitro form is reduced to a highly fluorescent amine, providing a real-time optical readout of catalytic activity [13]. |
| Microplates (e.g., 24, 96, 384-well) | The standardized platform for miniaturized, parallel reactions. Format choice balances reagent consumption, evaporation control, and compatibility with automation [14]. |
| Automated Liquid Handling System | Provides high-precision, non-contact dispensing of reagents and compounds at micro- to nanoliter volumes, which is critical for assay reproducibility and miniaturization [15]. |
| Multi-Mode Microplate Reader | The core detection instrument, capable of performing various measurements (e.g., fluorescence, absorbance) on the microplates to quantify reaction progress [13]. |
| Diverse Catalyst Library | A curated collection of catalysts, potentially designed with specific molecular descriptors (e.g., high Fsp³), which serves as the source for discovery [15]. |
| Informatics & Data Analysis Software (e.g., Genedata Screener) | A robust data management platform essential for processing, normalizing, storing, and analyzing the vast, complex datasets generated by HTS campaigns [15]. |

An accurate HTS workflow for catalyst discovery is a sophisticated, multi-component system. It is built upon the seamless integration of high-fidelity data generated from robust, real-time assays, interpreted through the lens of predictive molecular descriptors, and elevated by the deep physical insights gleaned from kinetic and mechanistic analysis. Individually, each component enhances the screening process; together, they form a cohesive and powerful informatics-driven strategy that moves beyond mere screening to true catalyst understanding and optimization. By adopting this comprehensive approach, researchers can significantly improve the accuracy, efficiency, and predictive power of their discovery pipelines, ultimately accelerating the development of novel, high-performance catalytic systems.

Bridging Data-Driven Discovery with Physical Catalytic Principles

The field of catalysis is undergoing a profound paradigm shift, moving from traditional trial-and-error approaches and theory-driven models toward a new era characterized by the deep integration of data-driven methods and fundamental physical insights [2]. This transformation is particularly crucial in high-throughput virtual screening (HTVS) for catalyst discovery, where the reconciliation of computational predictions with physical catalytic principles determines the ultimate accuracy and practical utility of research outcomes. The emergence of machine learning (ML) as a powerful tool in chemical sciences has accelerated this transformation, enabling researchers to navigate complex, multidimensional catalytic systems with unprecedented efficiency [17]. However, the central challenge remains: how to effectively bridge the gap between black-box data-driven predictions and physically meaningful catalytic mechanisms to ensure both discovery speed and fundamental understanding.

This technical guide examines the current state of data-driven catalyst discovery within the context of high-throughput screening accuracy, focusing specifically on the integration of physical principles into ML workflows. By exploring innovative representation methods, selectivity mapping techniques, and experimental validation paradigms, we provide researchers with a framework for developing more reliable, interpretable, and physically-grounded catalytic discovery pipelines. The insights presented here aim to enhance the predictive accuracy of HTVS approaches while ensuring that data-driven discoveries remain connected to fundamental catalytic science.

The Evolution of Machine Learning in Catalysis

A Three-Stage Developmental Framework

The integration of machine learning into catalysis research has followed a distinct evolutionary path that reflects the field's growing sophistication. According to recent analyses, this development can be categorized into three sequential stages [2]:

- Data-Driven Screening Phase: The initial stage focused primarily on high-throughput screening of catalyst candidates based on experimental and computational data, with emphasis on prediction accuracy rather than mechanistic understanding.

- Descriptor-Based Modeling Phase: The intermediate stage incorporated physically meaningful descriptors into ML models, enabling the establishment of structure-property relationships and more interpretable predictions.

- Symbolic Regression and Theory-Oriented Phase: The most advanced stage, which is currently emerging, focuses on uncovering general catalytic principles through symbolic regression and other interpretable ML approaches, explicitly bridging data-driven discovery with physical insight.

Table 1: Evolutionary Stages of Machine Learning in Catalysis

| Stage | Primary Focus | Key Methods | Physical Integration Level |
|---|---|---|---|
| Data-Driven Screening | High-throughput candidate identification | Regression ML, neural networks | Low: Emphasis on predictive accuracy |
| Descriptor-Based Modeling | Structure-property relationships | Feature engineering, physically-informed descriptors | Medium: Incorporation of catalytic descriptors |
| Symbolic Regression | General principle discovery | Symbolic regression, interpretable ML | High: Explicit physical principle extraction |

Critical Challenges in Current ML Approaches

Despite considerable progress, ML applications in catalysis face several significant challenges that impact the accuracy of high-throughput screening approaches. The performance of ML models is highly dependent on data quality and volume, with issues of data acquisition and standardization remaining major bottlenecks [2]. Additionally, constructing meaningful descriptors that effectively represent catalysts and reaction systems poses a substantial challenge, as descriptors must balance physical relevance with computational feasibility [2].

Perhaps most critically, there exists a fundamental trade-off between model complexity and interpretability. While deep learning models often achieve high predictive accuracy, their black-box nature limits physical insights and mechanistic understanding [2]. This limitation is particularly problematic in high-throughput screening contexts, where researchers require not just predictions but actionable insights for catalyst design and optimization.

Integrating Physical Principles into Data-Driven Workflows

Active Motif Representations for Physical Meaning

The DSTAR (structure-free active motif-based representation) methodology exemplifies the successful integration of physical principles into data-driven catalyst discovery. This approach encodes catalytic sites through their local atomic environments, dividing active motifs into three distinct sites [18]:

- First Nearest Neighbor (FNN) atoms: Atoms directly bonded to the adsorption site

- Second Nearest Neighbor in Same Layer (SNN~same~): Atoms in the same surface layer as the binding site

- Sublayer Atoms (SNN~sub~): Atoms in the layer beneath the binding site

This physical representation captures essential chemical information while remaining sufficiently general to explore vast chemical spaces without requiring explicit surface structure modeling [18]. The methodology enables the enumeration of all possible active motifs for any elemental combination, constructing histograms of predicted binding energies that facilitate high-throughput screening across expanded chemical spaces.
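The enumeration step is essentially combinatorial. As a rough sketch, assume a motif is identified by the multiset of elements on each of the three sites (FNN, SNN~same~, SNN~sub~) and that each site holds a fixed number of atoms. Every motif for an element set is then a product of per-site multisets. The site sizes and the `toy_binding_energy` function are hypothetical placeholders. In the DSTAR workflow [18], binding energies come from trained ML models, and site sizes depend on the adsorption geometry.

```python
import math
from collections import Counter
from itertools import combinations_with_replacement, product

# Hypothetical number of atoms on each site, chosen only for illustration.
SITE_SIZES = (("fnn", 3), ("snn_same", 6), ("snn_sub", 3))


def enumerate_motifs(elements):
    """Every combination of element multisets on the three sites."""
    per_site = [
        list(combinations_with_replacement(sorted(elements), size))
        for _, size in SITE_SIZES
    ]
    return list(product(*per_site))


# Made-up per-element contributions (eV) and site weights: a toy stand-in
# for a trained binding-energy model, NOT physical values.
TOY_CONTRIB = {"Cu": -0.10, "Ga": 0.05, "Pd": -0.30}
SITE_WEIGHTS = (1.0, 0.3, 0.1)


def toy_binding_energy(motif):
    """Site-weighted average of per-element contributions (hypothetical)."""
    return sum(
        weight * sum(TOY_CONTRIB[el] for el in atoms) / len(atoms)
        for atoms, weight in zip(motif, SITE_WEIGHTS)
    )


def energy_histogram(energies, bin_width=0.05):
    """Count energies per bin, keyed by each bin's lower edge (eV)."""
    counts = Counter(math.floor(e / bin_width) for e in energies)
    return {round(i * bin_width, 6): n for i, n in sorted(counts.items())}


motifs = enumerate_motifs(["Cu", "Ga"])  # 4 * 7 * 4 = 112 motifs
hist = energy_histogram(toy_binding_energy(m) for m in motifs)
```

The motif count grows quickly with the number of elements and site sizes. A cheap learned predictor is therefore what makes exhaustive enumeration plus histogramming tractable.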

Diagram 1: Physical motif integration workflow for catalyst screening.

Selectivity Maps Based on Physical Descriptors

The development of three-dimensional selectivity maps represents another significant advancement in bridging physical principles with data-driven discovery. By employing multiple binding energy descriptors (ΔE~CO~, ΔE~H~, and ΔE~OH~), researchers can establish thermodynamic boundary conditions that predict CO~2~ reduction reaction (CO~2~RR) products with greater accuracy [18]. This approach moves beyond single-descriptor predictions to capture the multidimensional nature of catalytic selectivity.

The selectivity map incorporates six thermodynamic boundary conditions that determine product distributions for formate, CO, C1+ products, and H~2~ [18]. These boundary conditions are derived from fundamental physical principles, including:

- BC1: Comparison between initial protonation steps leading to formate vs. CO/C1+ pathways

- BC2: Favorability of the Volmer step

- BC3: Possibility of surface poisoning by OH*

- BC4: Binding strength of CO* for further reduction to C1+ products

- BC5: Competition between HER and CO~2~RR

- BC6: Favorability of the Heyrovsky reaction

This physically-grounded framework enables more accurate predictions of catalytic selectivity while maintaining connections to fundamental reaction mechanisms.

High-Throughput Virtual Screening: A Case Study in CO2RR

Workflow Implementation and Validation

The integration of physical principles with data-driven discovery is exemplified by a recent high-throughput virtual screening study for CO~2~ reduction reaction (CO~2~RR) catalysts. This workflow combined binding energy prediction ML models based on DSTAR with the CO~2~RR selectivity map to discover active and selective catalysts [18]. The implementation proceeded through several distinct phases:

Table 2: HTVS Workflow for CO2RR Catalyst Discovery

| Phase | Methodology | Scale | Physical Principle Integration |
|---|---|---|---|
| Active Motif Enumeration | DSTAR representation of catalytic sites | 2,463,030 active motifs | Local atomic environment descriptors |
| Binding Energy Prediction | Machine learning models (ΔE~CO~, ΔE~OH~, ΔE~H~) | 465 metallic catalysts | Structure-property relationships based on DFT |
| Selectivity Mapping | 3D thermodynamic boundary conditions | 4 main products | Scaling relations between intermediates |
| Experimental Validation | Synthesis and electrochemical testing | 2 promising alloy systems | Experimental verification of predictions |

The screening process identified 465 metallic catalysts with predicted activity and selectivity toward four reaction products (formate, CO, C1+, and H~2~) [18]. During this process, researchers discovered previously unreported promising behavior of Cu-Ga and Cu-Pd alloys, which were subsequently validated through experimental methods. This end-to-end workflow demonstrates how physical principles can guide and validate data-driven discovery in a high-throughput screening context.

Quantitative Performance Metrics

The accuracy of the integrated physics- and data-driven approach was quantitatively assessed through multiple validation metrics. The ML models achieved test mean absolute errors (MAEs) of 0.118 eV, 0.227 eV, and 0.107 eV for ΔE~CO~, ΔE~OH~, and ΔE~H~, respectively, based on five-fold cross-validation [18]. These accuracies were slightly lower than those of state-of-the-art ML models based on crystal graphs. In exchange, the approach offered significant advantages in exploring chemical space.
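A test MAE is the mean absolute error on held-out data. In the sketch below it is pooled over all five cross-validation folds; averaging per-fold MAEs gives nearly the same value when folds are equal in size. To illustrate the evaluation protocol only, the sketch uses a one-feature least-squares line as a stand-in model and synthetic data. It is not the DSTAR model or dataset from [18].

```python
import random
import statistics


def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept (stand-in model)."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, my - slope * mx


def kfold_mae(xs, ys, k=5, seed=0):
    """Mean absolute error pooled over k held-out folds."""
    indices = list(range(len(xs)))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    abs_errors = []
    for test in folds:
        held_out = set(test)
        train = [i for i in indices if i not in held_out]
        slope, intercept = fit_line([xs[i] for i in train], [ys[i] for i in train])
        abs_errors.extend(abs(slope * xs[i] + intercept - ys[i]) for i in test)
    return statistics.fmean(abs_errors)


# Synthetic descriptor/energy data with Gaussian noise (sigma = 0.1 eV).
rng = random.Random(1)
descriptor = [rng.uniform(-2.0, 1.0) for _ in range(100)]
energy = [0.8 * x - 0.2 + rng.gauss(0.0, 0.1) for x in descriptor]
mae = kfold_mae(descriptor, energy, k=5)
```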

The DSTAR methodology enabled the expansion from 1,089 bulk structures in the original GASpy database to 279,690 structures through numerical substitution of elemental fingerprints [18]. This roughly 257-fold expansion (279,690 / 1,089 ≈ 257) of accessible chemical space demonstrates the power of combining physical representations with data-driven approaches for comprehensive catalyst screening.

Diagram 2: Experimental validation pathway for predicted catalysts.

Experimental Protocols for Validation

Catalyst Synthesis and Characterization

The validation of data-driven predictions requires rigorous experimental protocols to ensure accuracy and reproducibility. For the CO~2~RR catalyst case study, the experimental validation followed a multi-stage process:

Catalyst Synthesis Protocol:

- Alloy Preparation: Cu-Ga and Cu-Pd alloys were synthesized through arc-melting of pure metal constituents under inert atmosphere

- Electrode Preparation: Catalysts were processed into electrode configurations through mechanical polishing and electrochemical pretreatment

- Surface Characterization: Pre- and post-reaction surface analysis was conducted using scanning electron microscopy (SEM) and X-ray diffraction (XRD) to verify composition and structure

Electrochemical Testing Protocol:

- Cell Configuration: H-type electrochemical cells were employed with appropriate reference and counter electrodes

- Reaction Conditions: CO~2~-saturated electrolytes were used with controlled mass transport conditions

- Product Analysis: Liquid products were quantified using nuclear magnetic resonance (NMR) spectroscopy, while gaseous products were analyzed by gas chromatography (GC)

- Performance Metrics: Faradaic efficiency, partial current densities, and stability metrics were calculated from experimental data
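The performance metrics in the last step follow from standard electrochemical bookkeeping. Faradaic efficiency is FE = zFn/Q. Here z is the number of electrons transferred per product molecule, F is the Faraday constant, n is the moles of product, and Q is the total charge passed. A product's partial current density is its FE times the total current, divided by the electrode area. The sketch assumes batch quantification, meaning product accumulated over the same electrolysis that passed Q. The run values are hypothetical.

```python
FARADAY_C_PER_MOL = 96485.33212  # Faraday constant (C/mol)

# Electrons transferred per product molecule for common CO2RR products.
ELECTRONS = {"H2": 2, "CO": 2, "HCOO-": 2, "CH4": 8, "C2H4": 12}


def faradaic_efficiency(product: str, moles: float, charge_c: float) -> float:
    """Fraction of the passed charge that went into `product`."""
    return ELECTRONS[product] * FARADAY_C_PER_MOL * moles / charge_c


def partial_current_density(fe: float, total_current_ma: float, area_cm2: float) -> float:
    """Partial current density (mA/cm^2) attributable to one product."""
    return fe * total_current_ma / area_cm2


# Hypothetical run: 10 C passed, 40 µmol CO (GC) and 5 µmol formate (NMR).
fe_co = faradaic_efficiency("CO", 40e-6, 10.0)
fe_formate = faradaic_efficiency("HCOO-", 5e-6, 10.0)
j_co = partial_current_density(fe_co, total_current_ma=10.0, area_cm2=1.0)
```

Summing FE over all quantified products and checking that the total is close to 100% is a useful consistency check on the GC and NMR quantification.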

This comprehensive validation protocol ensured that the predicted catalytic performance was accurately assessed and confirmed through multiple complementary techniques.

Research Reagent Solutions and Materials

Table 3: Essential Research Materials for Catalytic Validation Experiments

| Material/Reagent | Specification | Function in Protocol | Physical Principle Addressed |
|---|---|---|---|
| High-Purity Metal Precursors | 99.99% pure Cu, Ga, Pd metals | Catalyst synthesis and alloy formation | Composition-structure relationships |
| CO2-Saturated Electrolyte | 0.1 M KHCO3 saturated with high-purity CO2 | Electrochemical reaction medium | Mass transport and reaction environment |
| Nafion Membrane | Proton exchange membrane | Cell separation while allowing ion transport | Product separation and purity |
| Reference Electrode | Reversible Hydrogen Electrode (RHE) | Potential calibration and control | Thermodynamic accuracy |
| Deuterated Solvents | D2O for NMR analysis | Product quantification medium | Reaction mechanism verification |

Enhancing HTVS Accuracy through Physical Insights

Addressing Data Limitations through Physical Principles

The accuracy of high-throughput virtual screening is fundamentally constrained by data limitations, including sparse datasets, data inhomogeneity, and the scarcity of standardized catalyst databases [2]. Physical principles offer powerful approaches to address these limitations:

Small-Data Algorithms: Incorporating physical constraints through techniques like symbolic regression and physically-informed neural networks enables effective modeling even with limited data. The SISSO (Sure Independence Screening and Sparsifying Operator) method, for example, can identify optimal descriptors from millions of candidates while maintaining physical interpretability [2].
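The two steps in SISSO's name can be shown on a toy problem. The sketch below is a heavily simplified illustration, not the SISSO code. It builds candidate features from three primary features with a few operators. It then applies sure independence screening, keeping the k candidates with the largest absolute Pearson correlation to the target. Finally, it performs the sparsifying step as an exhaustive search over all pairs of screened features for the lowest-RMSE two-term linear model. The data are synthetic.

```python
import itertools
import math
import random
import statistics


def build_candidates(primary):
    """Expand primary features (name -> values) with a few toy operators."""
    cands = dict(primary)
    for name, v in primary.items():
        cands[f"{name}^2"] = [x * x for x in v]
        if all(x > 0 for x in v):
            cands[f"sqrt({name})"] = [math.sqrt(x) for x in v]
    for (a, va), (b, vb) in itertools.combinations(primary.items(), 2):
        cands[f"{a}*{b}"] = [x * y for x, y in zip(va, vb)]
        if all(y != 0 for y in vb):
            cands[f"{a}/{b}"] = [x / y for x, y in zip(va, vb)]
    return cands


def abs_pearson(a, b):
    ma, mb = statistics.fmean(a), statistics.fmean(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    return abs(cov) / math.sqrt(va * vb) if va > 0 and vb > 0 else 0.0


def sure_independence_screening(cands, target, k):
    """SIS step: keep the k candidates most correlated (|r|) with the target."""
    ranked = sorted(cands, key=lambda n: abs_pearson(cands[n], target), reverse=True)
    return ranked[:k]


def fit_pair(u, v, y):
    """Least squares y ~ a*u + b*v + c; returns (rmse, (a, b, c)) or None."""
    mu, mv, my = (statistics.fmean(s) for s in (u, v, y))
    du, dv, dy = [x - mu for x in u], [x - mv for x in v], [x - my for x in y]
    suu, svv = sum(p * p for p in du), sum(q * q for q in dv)
    suv = sum(p * q for p, q in zip(du, dv))
    suy = sum(p * t for p, t in zip(du, dy))
    svy = sum(q * t for q, t in zip(dv, dy))
    det = suu * svv - suv * suv
    if det <= 1e-12 * suu * svv:  # (nearly) collinear pair
        return None
    a = (suy * svv - svy * suv) / det
    b = (svy * suu - suy * suv) / det
    c = my - a * mu - b * mv
    rmse = math.sqrt(statistics.fmean((a * p + b * q + c - t) ** 2 for p, q, t in zip(u, v, y)))
    return rmse, (a, b, c)


def sisso_2d(primary, target, k):
    """SIS down to k features, then exhaustive pair search (the l0 step)."""
    cands = build_candidates(primary)
    best = None
    for n1, n2 in itertools.combinations(sure_independence_screening(cands, target, k), 2):
        fit = fit_pair(cands[n1], cands[n2], target)
        if fit is not None and (best is None or fit[0] < best[0]):
            best = (fit[0], (n1, n2), fit[1])
    return best


# Synthetic data whose true law is y = 3*x1*x2 + 0.5*x3^2.
rng = random.Random(0)
primary = {f"x{i}": [rng.uniform(0.5, 2.0) for _ in range(40)] for i in (1, 2, 3)}
y = [3 * a * b + 0.5 * c * c for a, b, c in zip(primary["x1"], primary["x2"], primary["x3"])]
rmse, names, coefs = sisso_2d(primary, y, k=6)
```

With a small k, screening can discard a feature the true model needs. Full SISSO mitigates this for higher-dimensional descriptors by repeating SIS on the residuals of the lower-dimensional model, and it searches far larger feature spaces.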

Transfer Learning Across Systems: Physical similarities between catalytic systems can be leveraged to transfer knowledge from data-rich to data-poor systems, enhancing prediction accuracy where experimental or computational data is limited.

Multi-fidelity Modeling: Integrating data from different sources and levels of accuracy (from high-accuracy DFT calculations to experimental observations) within physically-consistent frameworks improves overall predictive capability while respecting fundamental constraints.

Future Directions: Physically Informed ML Frameworks

The future of accurate high-throughput screening lies in the development of increasingly sophisticated physically-informed ML frameworks. Several promising directions are emerging:

Large Language Model-Augmented Mechanistic Modeling: LLMs show significant potential for automated data mining from the catalytic literature, extracting hidden relationships and mechanistic insights that can inform and validate screening approaches [2].

Multi-scale Modeling Integration: Connecting electronic structure calculations with microkinetic modeling and reactor-scale simulations through ML surrogates enables comprehensive catalyst evaluation across relevant length and time scales.

Active Learning with Physical Constraints: Incorporating physical knowledge into active learning loops guides data acquisition toward regions of chemical space that are both promising and physically plausible, optimizing experimental and computational resources.

The integration of data-driven discovery with physical catalytic principles represents the frontier of high-throughput screening accuracy in catalyst research. By moving beyond black-box predictions to embrace physically meaningful representations, descriptor selection, and validation methodologies, researchers can significantly enhance the reliability and utility of computational screening approaches. The case study in CO~2~RR catalysis demonstrates how this integrated approach can successfully identify novel catalyst materials while maintaining connections to fundamental catalytic mechanisms. As the field advances, further development of physically-informed ML frameworks, small-data algorithms, and standardized validation protocols will continue to bridge the gap between data-driven discovery and physical catalytic principles, ultimately accelerating the development of advanced catalytic materials for energy, environmental, and industrial applications.

Precision in Practice: Advanced HTS Technologies and Assay Designs for Catalysis

The pursuit of novel catalysts for sustainable energy technologies and pharmaceuticals demands exploration of vast compositional and synthetic parameter spaces. This high-throughput screening (HTS) process, whether for electrocatalyst discovery or drug development, faces a fundamental challenge: maintaining experimental reproducibility and accuracy at miniaturized scales and accelerated paces. Manual experimentation introduces significant variability, particularly in repetitive tasks like reagent dispensing and solid weighing, compromising data reliability and hindering the identification of true performance trends.

Automation through liquid handlers and solid dosing systems has emerged as a critical solution to this reproducibility challenge. These technologies standardize experimental workflows by minimizing human intervention, thereby reducing errors and generating consistently high-quality data. In catalyst discovery, where subtle performance differences distinguish promising candidates, the precision offered by automated platforms enables researchers to explore complex multi-element systems with confidence in their results. This technical guide examines the core technologies, validation methodologies, and implementation frameworks that establish automation as the foundation for reproducible high-throughput research.

Core Technologies for Automated Experimentation

Advanced Liquid Handling Systems

Modern liquid handling technologies have evolved significantly from basic manual pipetting to sophisticated automated systems capable of handling nanoliter volumes with precision. These systems form the backbone of HTS workflows for both catalyst discovery and pharmaceutical applications.

Key Technology Specifications: Contemporary liquid handlers employ multiple dispensing technologies suited to different applications. Acoustic dispensing and pressure-driven methods provide nanoliter precision for reagent addition and compound management in miniaturized assays [19]. Systems like Tecan's Veya platform offer walk-up automation for routine tasks, while integrated workflows such as FlowPilot software coordinate complex multi-instrument operations involving liquid handlers, robots, and analytical instruments [20]. The SPT Labtech firefly+ platform exemplifies integration by combining pipetting, dispensing, mixing, and thermocycling within a single compact unit, significantly enhancing reproducibility in genomic and catalytic workflows [20].

Performance Metrics: Liquid handling quality control is essential for maintaining reproducibility. Standard operating procedures for assessing liquid handler performance in HTS include routine daily testing on existing instrumentation and rigorous testing of new dispensing technologies [21]. These procedures help identify both method programming and instrumentation performance shortcomings, harmonizing instrumentation usage across research groups and facilitating data exchange across the industry.

Table 1: Liquid Handler Performance Validation Metrics

| Parameter | Assessment Method | Acceptance Criteria |
|---|---|---|
| Dispensing Accuracy | Gravimetric analysis or dye-based absorbance measurements | <5% deviation from target volume |
| Precision | Coefficient of variation (CV) across replicate dispenses | CV <10% for nanoliter volumes |
| Carryover Contamination | Cross-contamination tests between reagent wells | <1% signal transfer between wells |
| DMSO Compatibility | Signal stability across DMSO concentrations | Minimal signal variation at 0-1% DMSO |
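The accuracy and precision rows of Table 1 can be checked directly from gravimetric data. The sketch below is a minimal illustration, assuming replicate dispenses of a water-like test liquid weighed on a microbalance; the function name `gravimetric_check` and the default density of 0.998 mg/µL (water near 20 °C) are assumptions, and the 5% and 10% limits are simply the Table 1 criteria passed in as parameters.

```python
from statistics import mean, stdev

# Assumption: water-like test liquid near 20 °C; substitute the real density of the dispensed liquid.
DEFAULT_DENSITY_MG_PER_UL = 0.998


def gravimetric_check(masses_mg, target_ul, density_mg_per_ul=DEFAULT_DENSITY_MG_PER_UL,
                      max_dev_pct=5.0, max_cv_pct=10.0):
    """Convert replicate dispense masses to volumes and test them against
    Table 1-style limits: |mean deviation| < max_dev_pct and CV < max_cv_pct."""
    volumes_ul = [m / density_mg_per_ul for m in masses_mg]  # mg / (mg/uL) -> uL
    mean_ul = mean(volumes_ul)
    deviation_pct = 100.0 * (mean_ul - target_ul) / target_ul  # accuracy (systematic bias)
    cv_pct = 100.0 * stdev(volumes_ul) / mean_ul                # precision (random scatter)
    return {
        "mean_ul": mean_ul,
        "deviation_pct": deviation_pct,
        "cv_pct": cv_pct,
        "passes": abs(deviation_pct) < max_dev_pct and cv_pct < max_cv_pct,
    }


# Hypothetical: eight replicate 0.5 uL dispenses weighed on a microbalance (masses in mg).
result = gravimetric_check([0.495, 0.502, 0.498, 0.505, 0.491, 0.500, 0.497, 0.503], target_ul=0.5)
print(result)
```

Because accuracy is judged on the mean and precision on the spread, a dispenser can pass one criterion and fail the other; reporting both, as Table 1 does, separates systematic bias from random scatter.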

Automated Solid Dosing Systems

The accurate dispensing of solid materials—including catalyst precursors, inorganic additives, and organic starting materials—presents unique challenges in high-throughput experimentation. Automated powder dosing systems have been developed specifically to address the limitations of manual weighing, especially at milligram and sub-milligram scales.

Technology Evolution: Early automated weighing systems, such as the Flexiweigh robot (Mettler Toledo), had limitations but provided a starting point for current technologies [4]. Subsequent collaborations between pharmaceutical companies and equipment manufacturers led to next-generation powder and liquid dosing technologies. Systems such as the CHRONECT XPR workstation exemplify modern solutions, combining robotics with high-precision weighing technology in a compact footprint that can handle powder samples in safe, inert-gas environments, which are critical for HTS workflows involving air-sensitive materials [4].

Performance Capabilities: Modern solid dosing systems achieve high accuracy across a broad mass range. Case studies from AstraZeneca's HTE labs in Boston documented that the CHRONECT XPR system achieved <10% deviation from target mass at low masses (sub-mg to low single-mg) and <1% deviation at higher masses (>50 mg) [4]. This accuracy was maintained across diverse solid types, including transition metal complexes, organic starting materials, and inorganic additives, all of which are crucial for catalyst discovery research.

Table 2: Solid Dosing System Specifications and Performance

| Parameter | CHRONECT XPR Specifications | Performance Outcomes |
|---|---|---|
| Dispensing Range | 1 mg to several grams | <10% deviation (sub-mg to low mg); <1% deviation (>50 mg) |
| Dosing Heads | Up to 32 Mettler Toledo standard dosing heads | Wide compound compatibility |
| Suitable Powders | Free-flowing, fluffy, granular, electrostatically charged | Successful dosing of diverse catalyst precursors |
| Dispensing Time | 10-60 seconds per component | 5-10x faster than manual weighing |
| Target Vials | Sealed and unsealed vials (2 mL, 10 mL, 20 mL) | Compatibility with standard HTS formats |
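The dispensing times in Table 2 translate into run times through simple arithmetic. The sketch below assumes, hypothetically, that every component is dosed serially into every vial and ignores head changes, vial transfers, and taring, so it gives only a lower-bound estimate; the function name and the 96-vial, two-solid example are illustrative.

```python
def dosing_run_hours(n_vials: int, components_per_vial: int, seconds_per_component: float) -> float:
    """Serial dosing time in hours, ignoring head changes and vial handling overhead."""
    return n_vials * components_per_vial * seconds_per_component / 3600.0


# Hypothetical 96-vial screen with two solids per vial, at both ends of the 10-60 s range.
for seconds in (10, 60):
    print(f"{seconds} s per component: {dosing_run_hours(96, 2, seconds):.1f} h")
```

At 10 s per component this run takes about half an hour; at 60 s it takes about 3.2 hours, which is why per-component dosing time matters as much as accuracy when planning a screen.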

Experimental Validation and Quality Control

Robust validation protocols are essential to ensure that automated systems consistently produce reliable data. These methodologies establish performance baselines and monitor system stability over time.

Liquid Handler Performance Validation

A standardized approach to liquid handler validation involves testing across multiple parameters, including the reagents and assay conditions the instrument works with, to ensure dispensing accuracy and reproducibility:

Reagent Stability Assessment: Determining reagent stability under storage and assay conditions is fundamental. This includes validating manufacturer specifications for commercial reagents, identifying optimal storage conditions to prevent activity loss, testing stability after multiple freeze-thaw cycles, and examining storage stability of reagent mixtures [22].

DMSO Compatibility Testing: Since test compounds are typically delivered in 100% DMSO, solvent compatibility with assay reagents must be rigorously evaluated. Validation protocols recommend testing DMSO concentrations from 0% to 10%, though cell-based assays typically maintain final DMSO concentrations under 1% unless specifically validated for higher tolerance [22].
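One way to turn a DMSO titration into a working limit is to compare each concentration with the 0% DMSO control and keep the highest concentration that stays within a chosen tolerance. The sketch below assumes mean signals have already been computed for each DMSO level; the function name and the 10% tolerance are hypothetical choices, not values from the cited protocol.

```python
def max_tolerated_dmso(signal_by_dmso, max_change_pct=10.0):
    """Return the highest DMSO concentration (% v/v) whose mean signal stays within
    max_change_pct of the 0% DMSO control, scanning upward and stopping at the first failure."""
    baseline = signal_by_dmso[0.0]
    tolerated = 0.0
    for conc in sorted(signal_by_dmso):
        change_pct = 100.0 * abs(signal_by_dmso[conc] - baseline) / baseline
        if change_pct > max_change_pct:
            break
        tolerated = conc
    return tolerated


# Hypothetical titration spanning the 0-10% range: DMSO % -> mean signal.
titration = {0.0: 1000, 0.5: 985, 1.0: 960, 2.0: 910, 5.0: 700, 10.0: 420}
print(max_tolerated_dmso(titration))
print(max_tolerated_dmso(titration, max_change_pct=5.0))
```

Stopping at the first failure keeps the limit conservative if a noisy higher concentration happens to fall back within tolerance. For cell-based assays, the result would still be capped by the under-1% guideline unless higher tolerance has been validated.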

Plate Uniformity Assessment: A three-day plate uniformity study assesses signal variability across plates using three control signals: "Max" signal (maximum assay response), "Min" signal (background measurement), and "Mid" signal (intermediate response point) [22]. In the Interleaved-Signal format, all three signals are distributed across each plate in a defined pattern, which enables comprehensive variability assessment while accounting for plate-to-plate and day-to-day variation.

Solid Dosing System Validation

Validation of automated powder dispensing systems follows similarly rigorous principles but addresses unique challenges associated with solid materials:

Mass Accuracy Protocols: Systematic testing across the operational mass range using standard reference materials establishes accuracy profiles. This involves repeated dispensing at target masses from sub-milligram to gram quantities, with gravimetric analysis comparing actual versus target masses [4].
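The mass-accuracy protocol amounts to grouping repeated dispenses by target mass and comparing each group's deviations with a tolerance for that mass range. The sketch below assumes the data arrive as (target, actual) pairs in milligrams; the function names are hypothetical, and the tolerance tiers echo the AstraZeneca figures reported above (10% at low masses, 1% above 50 mg), with a 5 mg boundary and an intermediate 5% tier that are assumptions, since no figure is given for that range.

```python
from collections import defaultdict
from statistics import mean


def example_tolerance_pct(target_mg):
    """Hypothetical tiers: 10% at low masses, 1% above 50 mg (as reported above);
    the 5 mg boundary and the 5% middle tier are assumptions."""
    if target_mg > 50:
        return 1.0
    if target_mg <= 5:
        return 10.0
    return 5.0


def accuracy_profile(dispenses, tolerance_pct=example_tolerance_pct):
    """dispenses: iterable of (target_mg, actual_mg) pairs from repeated dosing runs.
    Returns {target_mg: (mean |deviation| %, worst deviation %, passes)}."""
    deviations = defaultdict(list)
    for target, actual in dispenses:
        deviations[target].append(100.0 * (actual - target) / target)
    profile = {}
    for target in sorted(deviations):
        devs = deviations[target]
        worst = max(devs, key=abs)  # signed deviation with the largest magnitude
        profile[target] = (mean(abs(d) for d in devs), worst, abs(worst) <= tolerance_pct(target))
    return profile


# Hypothetical gravimetric results (mg) at three target masses.
runs = [(0.5, 0.54), (0.5, 0.47), (20.0, 20.5), (20.0, 19.7), (100.0, 100.6), (100.0, 101.5)]
for target, (mean_dev, worst, ok) in accuracy_profile(runs).items():
    print(f"{target:>6} mg: mean |dev| {mean_dev:.1f}%, worst {worst:+.1f}%, {'pass' if ok else 'fail'}")
```

Judging each mass on its worst single dispense, rather than only its mean, reflects that one badly dosed vial can invalidate a well in a screen.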

Material Compatibility Testing: Given the diverse physical properties of solid materials used in catalyst research (free-flowing, fluffy, granular, electrostatically charged), validation must demonstrate consistent performance across this spectrum. This includes testing with representative materials from each category and optimizing dispensing parameters accordingly.

Cross-Contamination Assessment: Carryover is particularly important to quantify when screening diverse catalyst libraries. Validation protocols measure carryover between different compounds using sensitive analytical techniques such as HPLC or ICP-MS, to ensure that trace residues do not compromise subsequent experiments.
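Carryover is usually expressed as the fraction of a concentrated sample's signal that reappears in a blank processed immediately afterwards. The minimal sketch below assumes paired measurements (for example, ICP-MS counts for a metal or HPLC peak areas) and a clean-blank background; the function name is hypothetical, and the 1% limit is the Table 1 liquid-handling criterion, reused here only for illustration.

```python
def worst_carryover_pct(pairs, background=0.0, limit_pct=1.0):
    """pairs: (high_sample_signal, following_blank_signal) tuples.
    Returns the largest background-corrected carryover in % and whether it is below limit_pct."""
    worst = max(100.0 * (blank - background) / (high - background) for high, blank in pairs)
    return worst, worst < limit_pct


# Hypothetical ICP-MS counts for three high/blank pairs and a clean-blank background of 200 counts.
worst, ok = worst_carryover_pct([(1_000_000, 3_200), (980_000, 2_500), (1_020_000, 6_100)], background=200)
print(f"worst carryover {worst:.2f}% -> {'pass' if ok else 'fail'}")
```

Reporting the worst pair rather than the average keeps a single contaminated transfer from being hidden by clean ones.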

Integrated Workflows in Practice

Catalyst Discovery Applications

Automated platforms have demonstrated transformative potential in electrocatalyst discovery, where multidimensional parameter spaces are too large to explore manually.

CatBot System: The CatBot platform exemplifies integrated automation for catalyst synthesis and testing, featuring a roll-to-roll transfer mechanism that automates substrate cleaning, catalyst loading via electrodeposition, and electrochemical testing [23]. This system operates under harsh conditions (highly acidic to highly alkaline media, temperatures up to 100°C) while maintaining reproducibility, achieving overpotential uncertainties of just 4-13 mV at -100 mA cm⁻² for the hydrogen evolution reaction—a critical metric for catalyst performance assessment [23].
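Reporting an overpotential at a fixed current density, as CatBot does at -100 mA cm⁻², requires reading the potential off each polarization curve at that current. The sketch below assumes iR-corrected potentials already referenced to the reversible hydrogen electrode (RHE), where the HER equilibrium potential is 0 V, and uses linear interpolation between measured points. The function names, the example curves, and the use of the sample standard deviation across replicate electrodes as the uncertainty are assumptions, since the exact definition used in [23] is not given here.

```python
from statistics import mean, stdev

E_EQ_HER_V_RHE = 0.0  # HER equilibrium potential on the RHE scale


def potential_at(currents_ma_cm2, potentials_v_rhe, target=-100.0):
    """Linearly interpolate the potential (V vs RHE) at the target current density."""
    points = sorted(zip(currents_ma_cm2, potentials_v_rhe))
    for (j0, e0), (j1, e1) in zip(points, points[1:]):
        if j0 <= target <= j1:
            if j1 == j0:
                return e0
            return e0 + (e1 - e0) * (target - j0) / (j1 - j0)
    raise ValueError("target current density lies outside the measured range")


def her_overpotential_mv(currents_ma_cm2, potentials_v_rhe, target=-100.0):
    """HER overpotential magnitude in mV at the target current density."""
    return abs(potential_at(currents_ma_cm2, potentials_v_rhe, target) - E_EQ_HER_V_RHE) * 1000.0


# Hypothetical replicate curves: (current densities in mA cm^-2, potentials in V vs RHE).
replicates = [
    ([0, -20, -80, -160], [0.0, -0.10, -0.20, -0.30]),
    ([0, -25, -90, -170], [0.0, -0.10, -0.20, -0.30]),
    ([0, -18, -75, -150], [0.0, -0.10, -0.20, -0.30]),
]
etas = [her_overpotential_mv(j, e) for j, e in replicates]
print(f"eta at -100 mA cm^-2 = {mean(etas):.0f} +/- {stdev(etas):.0f} mV")
```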

CRESt AI System: The Copilot for Real-world Experimental Scientists (CRESt) platform integrates multimodal large vision-language models with robotic automation for high-throughput synthesis, characterization, and electrochemistry [24]. In one application, CRESt synthesized over 900 chemistries and performed approximately 3,500 electrochemical tests in three months, identifying an optimized octonary high-entropy alloy catalyst with 9.3-fold improvement in cost-specific performance compared to conventional Pd catalysts [24].

Pharmaceutical Screening Applications

In drug discovery, automation has dramatically enhanced reproducibility while increasing throughput. AstraZeneca's implementation of HTE across multiple global sites demonstrates the scalability of these approaches. At their Boston facility, installation of CHRONECT XPR systems and complementary liquid handlers increased average quarterly screen size from 20-30 to 50-85, while the number of conditions evaluated surged from under 500 to approximately 2000 over a comparable period [4].

The integration of 3D cell models with automated screening represents another advancement, providing more physiologically relevant data while maintaining reproducibility. As noted by researchers, "The beauty of 3D models is that they behave more like real tissues. You get gradients of oxygen, nutrients and drug penetration that you just don't see in 2D culture" [19]. Automated platforms like mo:re's MO:BOT system standardize 3D cell culture processes, enabling reproducible production of organoids for high-throughput screening with human-relevant results [20].

Essential Research Reagent Solutions

Successful implementation of automated workflows requires careful selection of reagents and materials compatible with robotic systems while maintaining assay integrity.

Table 3: Essential Research Reagent Solutions for Automated HTS

| Reagent Category | Specific Examples | Function in HTS Workflows | Automation Compatibility Requirements |
|---|---|---|---|
| Catalyst Precursors | Transition metal salts (Ni, Pd, Pt), metal complexes | Source of catalytic elements in material synthesis | Soluble in automated dispensing solvents; stable under storage conditions |
| Electrolytes | KOH, HCl, buffer solutions | Provide a conductive medium for electrochemical testing | Stable viscosity for reproducible liquid handling; dispensing components chemically resistant to strong acids and bases |
| Biological Reagents | Enzymes, cell cultures, assay kits | Enable functional screening in pharmaceutical applications | Stability through freeze-thaw cycles; compatibility with DMSO |
| Solid Additives | Inorganic bases, ligands, supports | Modify catalyst properties and reaction conditions | Free-flowing characteristics for reliable powder dispensing; controlled particle size distribution |
| Detection Reagents | Fluorogenic substrates, chromogenic probes, luminescent compounds | Enable quantitative measurement of catalytic activity or biological effect | Signal stability over assay duration; minimal interference with catalytic processes |

Implementation Framework and Best Practices

Successful integration of automation technologies into high-throughput screening workflows requires strategic planning and attention to both technical and human factors.

Laboratory Integration Strategy

A phased implementation approach minimizes disruption while maximizing technology adoption. Initial focus should address the most significant reproducibility bottlenecks—often solid dosing for catalyst research or compound management for pharmaceutical screening. The colocation of HTE specialists with general researchers, as practiced at AstraZeneca, fosters cooperative rather than service-led approaches, enhancing technology utilization and problem-solving [4].

Modular automation design allows laboratories to scale capabilities according to evolving research needs. As noted by industry experts, "There are still tasks best done by hand. If you only run an experiment once every few years, it is probably not worth automating it. Our job is to help customers find that balance—when automation adds real value and when it does not" [20]. This principle ensures appropriate resource allocation while maintaining flexibility.

Data Management and Analysis

The increased throughput enabled by automation generates massive datasets requiring sophisticated management and analysis solutions. Experimental data must capture not only results but complete contextual metadata, including all experimental conditions and system states. As emphasized by Tecan's Mike Bimson, "If AI is to mean anything, we need to capture more than results. Every condition and state must be recorded, so models have quality data to learn from" [20].
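Capturing conditions and system state alongside results can be as simple as storing them in one structured record per experiment. The sketch below is a hypothetical schema, not a Tecan, SiLA, or AnIML format; the class name, field names, and example values are illustrative assumptions.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class ExperimentRecord:
    """Hypothetical record: one result stored with its full experimental context."""
    experiment_id: str
    results: dict           # measured outcomes, e.g. yield or overpotential
    conditions: dict        # controlled variables: reagents, amounts, temperature, time
    instrument_state: dict  # system state at run time: instrument IDs, calibration, atmosphere
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())

    def to_json(self) -> str:
        """Serialize the whole record, context included, as a key-sorted JSON string."""
        return json.dumps(asdict(self), sort_keys=True)


record = ExperimentRecord(
    experiment_id="HTE-0001",
    results={"yield_pct": 72.4},
    conditions={"catalyst": "Pd(OAc)2", "catalyst_mol_pct": 5, "temperature_c": 80, "time_h": 16},
    instrument_state={"dosing_head": "head-07", "glovebox_o2_ppm": 0.8, "balance_calibrated": True},
)
print(record.to_json())
```

Keeping conditions and instrument state in the same record as the result means a failed or anomalous run remains interpretable later, which is the property a model trained on the data depends on.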

Standardized data formats and protocols facilitate data exchange and reproducibility across research groups. Initiatives such as the SiLA (Standardization in Lab Automation) and AnIML (Analytical Information Markup Language) standards provide frameworks for instrument communication and data representation, respectively, supporting interoperable automation ecosystems [20].

Automation through liquid handlers and solid dosing systems has fundamentally transformed the reproducibility landscape in high-throughput screening for catalyst discovery and beyond. By standardizing experimental workflows, these technologies minimize human-introduced variability while enabling exploration of vastly larger parameter spaces. The integration of advanced robotics with artificial intelligence and machine learning creates powerful feedback loops that accelerate discovery while maintaining data integrity.

As research challenges grow increasingly complex—from multi-element catalyst optimization to personalized therapeutic development—the role of automation in ensuring reproducible, statistically significant results will only expand. Future advancements will likely focus on even greater integration, with self-driving laboratories orchestrating complete experimental workflows from hypothesis to results with minimal human intervention. Through continued refinement of these technologies and methodologies, the scientific community can address pressing global challenges with unprecedented speed and confidence.

In the field of high-throughput screening for catalyst discovery, the transition from endpoint analysis to real-time kinetic profiling represents a paradigm shift with profound implications for research accuracy. Traditional endpoint methods, which capture data only at a reaction's conclusion, overlook the rich, time-resolved information essential for understanding catalyst behavior and reaction mechanisms [13]. This limitation is particularly critical in catalyst informatics, where multidimensional performance criteria—including activity, selectivity, and stability—must be balanced alongside sustainability considerations [13]. Fluorogenic and optical assays now provide a powerful methodological foundation for acquiring this kinetic data, enabling researchers to move beyond simple conversion metrics toward a more comprehensive understanding of catalytic function. This technical guide examines the experimental frameworks, analytical approaches, and practical implementations of real-time kinetic profiling, positioning these methodologies within the broader thesis that temporal resolution significantly enhances the accuracy and predictive power of high-throughput screening in catalyst discovery research.
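The difference between endpoint and kinetic readouts is easy to see numerically: two wells can finish at the same signal while turning over at very different initial rates. The sketch below estimates the initial rate as an ordinary least-squares slope over the first few readings; the function name, the choice of four points, and the example traces are hypothetical, and in practice the linear window should be checked for each trace.

```python
def initial_rate(times_s, signal, n_points=4):
    """Ordinary least-squares slope (signal units per second) of the first n_points
    readings, assumed to lie in the linear initial-rate regime."""
    t, y = times_s[:n_points], signal[:n_points]
    t_mean, y_mean = sum(t) / len(t), sum(y) / len(y)
    sxy = sum((ti - t_mean) * (yi - y_mean) for ti, yi in zip(t, y))
    sxx = sum((ti - t_mean) ** 2 for ti in t)
    return sxy / sxx


# Hypothetical fluorescence traces (arbitrary units) read every 60 s, then at 600 s and 900 s.
times = [0, 60, 120, 180, 240, 300, 600, 900]
fast = [0, 100, 200, 300, 390, 450, 500, 500]  # fast catalyst that reaches a plateau
slow = [0, 33, 66, 100, 133, 166, 333, 500]    # slow catalyst still reacting at the end

print("endpoint:", fast[-1], slow[-1])
print("initial rate:", round(initial_rate(times, fast), 3), round(initial_rate(times, slow), 3))
```

An endpoint reading at 900 s would rank these two catalysts as equal, while their initial rates differ by roughly a factor of three.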

The Experimental Platform: Real-Time Monitoring in Well-Plate Formats

Core System Components and Workflow