Beyond Trial and Error: Advanced Strategies for Handling Missing Data in High-Throughput Materials Growth

Abstract

High-throughput materials growth, crucial for accelerating discovery in pharmaceuticals and clean energy, is frequently hampered by experimental failures that result in missing data. This creates a significant bottleneck, as traditional data analysis methods often discard these incomplete results, wasting valuable resources. This article provides a comprehensive guide for researchers and scientists on modern, data-centric strategies to overcome this challenge. We explore the fundamental causes and impacts of missing data, detail cutting-edge computational methods like Bayesian optimization and multi-omics integration for handling incomplete datasets, offer practical troubleshooting for autonomous labs, and present a rigorous validation framework for comparing strategy performance. By synthesizing insights from recent breakthroughs and benchmark studies, this article equips professionals with the knowledge to transform missing data from a roadblock into a source of information, thereby maximizing the efficiency and success of their materials discovery pipelines.

The Missing Data Problem: Understanding the Root Causes and Impact on Materials Discovery

FAQ: Understanding Experimental Failure

What constitutes an "experimental failure" in high-throughput research? In high-throughput research, an experimental failure occurs when a planned experiment does not yield a usable or interpretable data point for its intended purpose. This is not just a failed synthesis but any outcome that results in missing data, such as a grown thin film that cannot be characterized due to poor quality, a mechanical test specimen that breaks prematurely due to a fabrication flaw, or a sequencing reaction that provides no readable output [1] [2] [3]. In the context of data analysis, these failures create missing data points that can bias results and reduce statistical power if not handled correctly [4] [5].

Why is it critical to systematically handle failures in an automated workflow? High-throughput systems are designed for rapid, sequential experimentation. A single unhandled failure can disrupt the entire automated process, causing halts or generating garbage data. More importantly, failure data contains valuable information. Systematically logging failures allows machine learning algorithms to learn from them, avoiding unproductive regions of the parameter space and accelerating the convergence towards optimal conditions [1]. Proper handling prevents the bias that missing data can introduce into your final analysis [4] [5].

What are the common categories of experimental failures? Failures can be broadly classified into several categories, which are summarized in the table below.

Table 1: Common Categories of Experimental Failure

| Failure Category | Description | Examples in High-Throughput Contexts |

|---|---|---|

| Process-Related | Failures caused by equipment malfunction, sample handling errors, or protocol deviations [2]. | Clogged printer nozzles in additive manufacturing; robotic pipetting errors; blocked capillaries in sequencing [2] [6]. |

| Synthesis-Related | The target material is not formed or is of insufficient quality for characterization [1]. | Incorrect phase formation in thin-film growth; powder contamination in alloy synthesis. |

| Template-Related | Failures inherent to the sample itself or its properties [2]. | DNA sequences with homopolymer stretches causing sequencing dropouts; material microstructures prone to cracking [2] [7]. |

| Characterization-Related | The synthesized material exists, but its properties cannot be measured reliably [3]. | A thin film too rough for electrical measurement; a microscale specimen breaking at a grip during mechanical testing. |
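One way to make the categories in Table 1 usable by downstream algorithms is to log every run, including failed ones, in a uniform record. The sketch below is illustrative only: the class names, field names, and example parameters (`temp_C`, `flux`) are assumptions and do not come from the cited work.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class FailureCategory(Enum):
    """The four failure categories listed in Table 1."""
    PROCESS = "process-related"
    SYNTHESIS = "synthesis-related"
    TEMPLATE = "template-related"
    CHARACTERIZATION = "characterization-related"


@dataclass
class RunRecord:
    """One experiment: its parameters plus either a measured value or a failure label."""
    params: dict
    value: Optional[float] = None               # measured property, if any
    failure: Optional[FailureCategory] = None   # set only when the run failed
    note: str = ""

    @property
    def failed(self) -> bool:
        return self.failure is not None


def failure_counts(log):
    """Count failed runs per category so recurring problems stand out."""
    counts = {}
    for run in log:
        if run.failed:
            counts[run.failure] = counts.get(run.failure, 0) + 1
    return counts


# Hypothetical campaign log with one success and two failures.
log = [
    RunRecord({"temp_C": 650, "flux": 1.0}, value=42.0),
    RunRecord({"temp_C": 900, "flux": 1.0}, failure=FailureCategory.SYNTHESIS,
              note="target phase not formed"),
    RunRecord({"temp_C": 700, "flux": 0.2}, failure=FailureCategory.CHARACTERIZATION,
              note="film too rough to measure"),
]
print(failure_counts(log))
```

Keeping failed runs in the same log as successful ones, rather than dropping them, is what allows the imputation and optimization methods discussed later to use them.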

The following diagram illustrates a logical workflow for classifying and responding to an experimental failure.

FAQ: Data and Analysis

How should I handle the missing data from failed experiments in my analysis? The appropriate method depends on the mechanism of missingness. It is crucial to avoid simply ignoring failed runs (complete-case analysis), as this can introduce severe bias unless the data is Missing Completely at Random (MCAR), which is rare [4] [5] [8]. The following table compares common methods.

Table 2: Methods for Handling Missing Data from Experimental Failures

| Method | Description | Best Use Case in High-Throughput Research |

|---|---|---|

| Complete-Case Analysis | Discards all data points with any missing values. | Only if the failure is verified to be MCAR (e.g., due to random equipment fault) and the sample size is large [4] [8]. |

| Floor/Ceiling Imputation | Replaces the missing value with the worst/best observed value. | Optimizing a property with Bayesian optimization; provides a conservative estimate that guides the algorithm away from failures [1]. |

| Multiple Imputation (MI) | Creates multiple plausible versions of the dataset by filling in missing values with predictions, then combines the results. | The gold standard for statistical analysis when data is Missing at Random (MAR); suitable for final data analysis before publication [5] [8]. |

| Informed Missingness Models | The machine learning model directly incorporates the probability of failure. | When using a binary classifier alongside a regression model to predict both failure and performance [1]. |

What is the "floor padding trick" and when should I use it? The floor padding trick is an adaptive imputation method used specifically in Bayesian optimization (BO). When an experiment fails, instead of leaving a gap, the failure is assigned the worst evaluation value observed so far in the campaign. For example, if you are maximizing a material's conductivity and the worst successful sample has a value of 10, a failed run would also be recorded as 10 [1]. This simple method tells the BO algorithm that this set of parameters produced a "bad" outcome, guiding it to explore more promising regions without requiring pre-set penalty values. It has been shown to enable efficient optimization in a wide parameter space for processes like molecular beam epitaxy [1].
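As a minimal sketch of the idea, the function below pads failed runs in a list of evaluation values. It assumes a maximization problem (so the "worst" value is the running minimum) and marks failures with `None`. The function name and the choice to leave early failures unpadded are assumptions made for illustration.

```python
def floor_pad(values):
    """Replace failed runs (None) with the worst successful value seen so far.

    Assumes a maximization problem, so "worst" means the running minimum.
    Failures that occur before any success stay None, because no floor
    exists yet; how to treat them is left to the caller.
    """
    padded = []
    worst = None
    for v in values:
        if v is None:
            padded.append(worst)
        else:
            worst = v if worst is None else min(worst, v)
            padded.append(v)
    return padded


# Conductivity-style campaign: None marks a failed run.
history = [25.0, None, 10.0, None, 40.0]
print(floor_pad(history))  # [25.0, 25.0, 10.0, 10.0, 40.0]
```

Here each failure is padded once, at the time it occurs. Re-padding earlier failures whenever a new worst value appears is an alternative design choice that this sketch does not make.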

Troubleshooting Guide: Common Failure Scenarios

Problem: Inconsistent or Failed Synthesis in a High-Throughput Alloy Campaign

- Symptoms: Missing data points for certain compositional spreads; inability to characterize some samples due to lack of formation, porosity, or contamination.

- Potential Causes:

  - Process-Related: Calibration drift in deposition sources (e.g., in sputtering or MBE); inhomogeneous powder mixing in composite libraries; oxidation during synthesis [6].

  - Template-Related: Exploring a region of parameter space (e.g., temperature-composition) where the target phase is not stable [1].

- Solutions:

  - Replicate the Run: Immediately re-run the specific failed synthesis to distinguish a random process error from a systematic parameter-space issue.

  - Review Sensor Data: Check logs from in-situ monitors (e.g., temperature, pressure, deposition rate) for the failed run to identify equipment anomalies.

  - Implement Bayesian Optimization with Failure Handling: Use an algorithm that incorporates failed runs via the floor padding trick. This allows you to start with a wide, exploratory parameter space without fear of breaking the optimization loop, as the algorithm will learn to avoid failure regions [1].

  - Characterize the "Failure": Perform microscopy or spectroscopy on the failed sample. It might be a different, but interesting, phase, providing valuable data for your model.

Problem: Failed or Unreliable Small-Scale Mechanical Testing

- Symptoms: Specimens break at the grips; large scatter in measured properties (e.g., Young's modulus, strength); no valid data for a subset of samples.

- Potential Causes:

  - Process-Related (Fabrication): Improper specimen fabrication (e.g., FIB-induced damage, non-uniform gauge sections), misalignment during loading, or damaged gripper surfaces [3].

  - Template-Related (Material): Intrinsic material issues like pre-existing voids, brittle phases, or surface cracks that act as stress concentrators [3] [7].

- Solutions:

  - Post-Mortem Analysis: Use SEM to image the fracture surface of failed test specimens. Determine if the failure initiated at the grip (suggesting a stress concentration issue) or in the gauge length (suggesting a material issue) [7].

  - Standardize Fabrication Protocol: Develop and rigorously adhere to a site-specific specimen fabrication procedure that is agnostic to the synthesis route to ensure consistency [3].

  - Design of Experiments: Include control samples with known properties in each testing batch to validate the equipment and methodology.

  - Implement Real-Time Decision Making: Use a workflow where initial test results (e.g., stiffness) inform whether a more time-consuming test (e.g., fatigue) should be performed on the same specimen, optimizing the speed-fidelity tradeoff [3].

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for High-Throughput Experimentation

| Item / Solution | Function in High-Throughput Workflows |

|---|---|

| Automated Synthesis Platforms | Enables rapid, sequential fabrication of sample libraries with minimal human intervention (e.g., combinatorial sputtering, automated pipetting) [6]. |

| High-Throughput Characterization Tools | Allows for rapid property mapping across many samples. Examples include automated XRD, nanoindentation arrays, and high-speed SEM/EBSD [6] [3]. |

| Small-Scale Mechanical Testers | Devices like micromanipulators and nanoindenters designed to test the mechanical properties of tiny specimens fabricated from individual library members [3]. |

| Bayesian Optimization Software | AI agent that decides the next best experiment to run based on all previous data (both successes and failures), dramatically accelerating the optimization process [1] [6]. |

| In-Situ Monitoring Sensors | Provides real-time data on synthesis conditions (e.g., pyrometers for temperature, RHEED for surface structure), crucial for diagnosing process-related failures [1]. |

The following diagram outlines a closed-loop, failure-resistant high-throughput methodology that integrates the solutions discussed.

Technical Support Center

Troubleshooting Guides

Guide 1: Resolving High Rates of Experimental Failure in High-Throughput Screening

Problem: A high proportion of materials synthesis experiments fail, yielding no usable data and creating missing data points that halt optimization pipelines.

Symptoms:

- Evaluation value (e.g., residual resistivity ratio, RRR) is not returned for specified growth parameters.

- Target material phase is not formed under certain synthesis conditions.

- Sequential optimization algorithms stall or suggest parameters in known failure regions.

Solution:

- Implement the Floor Padding Trick: When an experiment fails, assign the worst observed evaluation value from successful experiments to the failed parameters. This informs the search algorithm to avoid similar regions.

  - Method: After a failure at parameter x_n, set y_n = min(y_1, ..., y_{n-1}), taking the minimum over all successful observations i < n [1].

  - Rationale: This adaptive method avoids careful tuning of a fixed penalty value and provides the optimization algorithm with a negative signal, encouraging exploration of other parameter spaces [1].

- Incorporate a Binary Failure Classifier: Use a Gaussian process-based binary classifier to predict the probability that a given parameter set will lead to failure.

  - Method: Train the classifier on historical data of successful and failed growth runs. Use its predictions to guide the Bayesian optimization procedure away from high-risk parameter regions [1].

- Widen the Search Space: Do not restrict the initial search to a small, empirically "safe" parameter space. Use the above methods to enable a safe and flexible search across a wide multi-dimensional space to locate optimal conditions that may exist outside expected ranges [1].

Verification: After implementation, the optimization algorithm should begin to suggest parameters away from failure-dense regions, leading to a higher proportion of successful synthesis runs and a more efficient path to the optimal material.

Guide 2: Handling Missing Data in Patient-Reported Outcomes (PROs) from Clinical Trials

Problem: Missing questionnaire data from patients introduces bias and reduces the statistical power of clinical trials, potentially invalidating conclusions about a drug's efficacy.

Symptoms:

- Incomplete multiple-item PRO instruments (e.g., HRQL scales).

- Diminished statistical power and biased estimates of treatment effects.

- Challenges in analyzing longitudinal data with monotonic (drop-out) or non-monotonic missing patterns.

Solution:

- Select the Appropriate Imputation Method Based on Mechanism:

  - For MAR/MCAR Data: Use Mixed Model for Repeated Measures (MMRM) or Multiple Imputation by Chained Equations (MICE) at the item level, not the composite score level. Item-level imputation leads to smaller bias and less reduction in statistical power [9].

  - For MNAR Data: Use control-based Pattern Mixture Models (PMMs), such as Jump-to-Reference (J2R), Copy Reference (CR), or Copy Increments in Reference (CIR). These methods are superior under MNAR mechanisms as they impute missing data in the treatment group using models from the control group [9].

- Avoid Simple Methods: Do not rely on Last Observation Carried Forward (LOCF) or simple mean substitution as a primary approach. These methods are well-documented to underestimate variability and produce biased estimates [9] [4].

Verification: A sensitivity analysis comparing results from the chosen method (e.g., MMRM) against other methods (e.g., PMM) can help verify the robustness of your trial's conclusions.

Frequently Asked Questions (FAQs)

Q1: What are the fundamental types of missing data I need to know? A: There are three primary mechanisms, detailed in the table below [4] [10] [11].

| Mechanism | Full Name | Description | Example in Materials Science |

|---|---|---|---|

| MCAR | Missing Completely at Random | The missingness is unrelated to any data values. | A sample is lost due to equipment failure or a random power outage [4]. |

| MAR | Missing at Random | The missingness is related to other observed variables, but not the missing value itself. | The probability of a failed synthesis may depend on the observed substrate temperature, but not on the unmeasured film quality [9]. |

| MNAR | Missing Not at Random | The missingness is related to the unobserved missing value itself. | A thin film is too discontinuous to measure its resistivity, which is the very property being studied. The failure is directly linked to the missing value [1]. |
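The practical difference between the three mechanisms in the table above can be seen in a small simulation. The sketch below uses entirely synthetic data and hypothetical keep-probabilities (0.7, 0.9, 0.3). It compares the naive mean of the surviving runs with a mean adjusted by stratifying on the always-observed temperature.

```python
import random
from statistics import mean

random.seed(0)
n = 20000
# Hypothetical campaign: an always-recorded substrate temperature (scaled to 0-1)
# drives the film quality we want to estimate.
temp = [random.random() for _ in range(n)]
quality = [t + random.gauss(0.0, 0.2) for t in temp]
frac_hot = sum(t > 0.5 for t in temp) / n


def complete_case_means(keep_prob):
    """Return (naive mean, temperature-adjusted mean) of the runs that survive."""
    kept = [i for i in range(n) if random.random() < keep_prob(i)]
    naive = mean(quality[i] for i in kept)
    hot = mean(quality[i] for i in kept if temp[i] > 0.5)
    cold = mean(quality[i] for i in kept if temp[i] <= 0.5)
    # Reweight each stratum by its share of ALL runs, known because temp is never missing.
    return naive, frac_hot * hot + (1 - frac_hot) * cold


true_mean = mean(quality)
mcar = complete_case_means(lambda i: 0.7)                               # unrelated to data
mar = complete_case_means(lambda i: 0.9 if temp[i] > 0.5 else 0.3)      # depends on observed temp
mnar = complete_case_means(lambda i: 0.9 if quality[i] > 0.5 else 0.3)  # depends on quality itself

for name, (naive, adjusted) in [("MCAR", mcar), ("MAR", mar), ("MNAR", mnar)]:
    print(f"{name}: naive bias {naive - true_mean:+.3f}, adjusted bias {adjusted - true_mean:+.3f}")
```

In this toy setting the naive mean is roughly unbiased only under MCAR. Adjusting for the observed temperature removes the bias under MAR, while under MNAR some bias remains even after adjustment, which is why MNAR failures call for methods that model the failure itself.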

Q2: Which machine learning algorithms can handle missing data automatically? A: Some tree-based algorithms, like XGBoost and scikit-learn's Decision Trees, have built-in methods. However, their strategies vary and must be reviewed to avoid bias.

- scikit-learn (splitter='best'): Evaluates whether sending all missing values to the left or right node during a split gives better performance [11].

- XGBoost (default): Learns default directions for missing values during training [11].

- Caution: In some modes (e.g., XGBoost with 'gblinear' booster), missing values are treated as zero, which can introduce significant bias if the data is not MCAR [11].
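To make the "send missing values left or right" strategy concrete, here is a toy one-feature regression stump written from scratch. It is a simplified illustration of the idea described above, not the actual scikit-learn or XGBoost implementation. The function names and the squared-error loss are assumptions of this sketch.

```python
import math


def sse(ys):
    """Sum of squared errors around the mean (0.0 for an empty group)."""
    if not ys:
        return 0.0
    m = sum(ys) / len(ys)
    return sum((y - m) ** 2 for y in ys)


def best_split_with_missing(xs, ys):
    """Return (threshold, missing_goes_left, loss) for a one-feature stump.

    For every candidate threshold, rows with a missing x (NaN) are tried on
    the left and then on the right; the lower-loss direction is kept.
    """
    present = [(x, y) for x, y in zip(xs, ys) if not math.isnan(x)]
    missing_y = [y for x, y in zip(xs, ys) if math.isnan(x)]
    values = sorted({x for x, _ in present})
    best = None
    for lo, hi in zip(values, values[1:]):
        thr = (lo + hi) / 2
        left = [y for x, y in present if x <= thr]
        right = [y for x, y in present if x > thr]
        for goes_left in (True, False):
            if goes_left:
                loss = sse(left + missing_y) + sse(right)
            else:
                loss = sse(left) + sse(right + missing_y)
            if best is None or loss < best[2]:
                best = (thr, goes_left, loss)
    return best


nan = float("nan")
xs = [1.0, 2.0, 3.0, 8.0, 9.0, nan, nan]
ys = [1.0, 1.2, 0.9, 5.0, 5.2, 5.1, 4.9]   # the missing rows resemble the high-x group
thr, goes_left, loss = best_split_with_missing(xs, ys)
print(thr, goes_left)  # 5.5 False
```

Because the direction is chosen purely to minimize training loss, it encodes whatever pattern links missingness to the target in the training data, which is the reason the strategies above should be reviewed for bias.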

Q3: What is the single most important step in dealing with missing data? A: Prevention. Carefully planning your study and data collection process is always superior to treating missing data after the fact [4]. This includes:

- Minimizing follow-up visits and collecting only essential information.

- Developing user-friendly data collection forms.

- Training all personnel thoroughly.

- Conducting a pilot study to identify potential problems.

- Aggressively engaging participants at risk of dropping out [4].

Experimental Protocols

Protocol 1: Multiple Imputation by Chained Equations (MICE) for Materials Data

Purpose: To create multiple complete versions of a dataset with missing values, capturing the uncertainty of the imputation process.

Materials: A dataset with missing values; statistical software (e.g., R with 'mice' package, Python with 'scikit-learn').

Procedure:

1. Setup: Specify the imputation model for each variable with missing data. Typically, a regression model is used, predicting a variable from all other variables in the dataset.

2. Cycle: For each variable, impute the missing values by running a regression on the other variables, fitted only on the cases where that variable is observed.

3. Iterate: Repeat step 2 for all variables, cycling through them multiple times (e.g., 10-20 cycles). This completes one imputed dataset.

4. Repeat: Repeat the entire process to generate m separate imputed datasets (common choices are m=5 to m=20).

5. Analyze: Perform your intended statistical analysis (e.g., linear regression) separately on each of the m datasets.

6. Pool: Combine the results from the m analyses using Rubin's rules, which average the parameter estimates and adjust the standard errors to account for the between-imputation and within-imputation variability [9] [11].
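The pooling step can be sketched in a few lines. The function below applies Rubin's rules to a list of point estimates and their squared standard errors: the pooled estimate is the average, and the total variance is the mean within-imputation variance plus (1 + 1/m) times the between-imputation variance. The slopes and standard errors are made-up numbers used only to exercise the function.

```python
from statistics import mean, variance


def pool_rubin(estimates, variances):
    """Pool m point estimates and their squared standard errors with Rubin's rules."""
    m = len(estimates)
    q_bar = mean(estimates)          # pooled point estimate
    within = mean(variances)         # average within-imputation variance
    between = variance(estimates)    # sample variance across imputations
    total = within + (1 + 1 / m) * between
    return q_bar, total ** 0.5


# Hypothetical regression slopes and standard errors from m = 5 imputed datasets.
slopes = [1.9, 2.1, 2.0, 2.2, 1.8]
std_errs = [0.30, 0.32, 0.31, 0.29, 0.33]
estimate, pooled_se = pool_rubin(slopes, [se ** 2 for se in std_errs])
print(f"pooled slope = {estimate:.3f}, pooled SE = {pooled_se:.3f}")
```

The pooled standard error is larger than any single-dataset standard error in this example, which reflects the extra uncertainty introduced by not knowing the missing values.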

Protocol 2: Control-Based Pattern Mixture Models (PMMs) for Clinical Trials

Purpose: To handle missing data assumed to be Missing Not at Random (MNAR) in clinical trials, providing a conservative estimate of treatment effects.

Materials: Longitudinal clinical trial data with patient dropouts; statistical software capable of multiple imputation and pattern mixture models.

Procedure:

- Identify Patterns: Identify groups of patients with similar missing data patterns (e.g., those who dropped out after week 4).

- Specify Imputation Method: Choose a control-based imputation strategy for the missing data in the treatment group. Common methods include:

  - Jump-to-Reference (J2R): After dropout, a patient's future outcomes are imputed based on the model from the control/reference group, "jumping" to the control group's trajectory [9].

  - Copy Reference (CR): The entire post-dropout profile is copied from a patient in the control group [9].

  - Copy Increments in Reference (CIR): The change from baseline in the treatment group is set to match the change from baseline seen in the control group after dropout [9].

- Impute and Analyze: Use multiple imputation to create several datasets based on the chosen PMM rule.

- Pool Results: Analyze each dataset and pool the results to get a final, conservative estimate of the treatment effect that accounts for the worst-case scenario of patients discontinuing treatment [9].

Quantitative Data on Imputation Methods

The table below benchmarks the performance of various imputation methods in materials science, evaluated using different error metrics [12].

| Imputation Method | Description | Root Mean Square Error (RMSE) | Data Set Correlation Convergence (DCC) | Suitability for Small Data |

|---|---|---|---|---|

| MatImpute | Newly proposed method using nearest neighbors and iterative predictions | Lowest | Highest | High [12] |

| MissForest | Random forest-based imputation | Medium | Medium | Medium [12] |

| GAIN | Generative Adversarial Imputation Networks | Medium | Medium | Low [12] |

| Mean Imputation | Replaces missing values with the feature's mean | Highest | Lowest | Low [13] [11] |

Research Reagent Solutions: The Scientist's Toolkit

| Item | Function in Handling Missing Data |

|---|---|

| Bayesian Optimization (BO) Algorithm | A sample-efficient global optimization method that can sequentially suggest the next most promising experiment, even when previous runs have failed, thereby reducing wasted resources [1]. |

| Multiple Imputation by Chained Equations (MICE) | A robust statistical method for handling MAR data by creating multiple plausible datasets with imputed values, allowing for proper uncertainty estimation [9] [11]. |

| Control-Based Pattern Mixture Models (PMMs) | A family of statistical models used for sensitivity analysis in clinical trials when data is suspected to be MNAR, providing a conservative estimate of treatment effect [9]. |

| MatImpute Software | A specialized imputation tool designed for materials science data, reportedly outperforming other methods in recovering data fidelity [12]. |

| Binary Classifier (Gaussian Process) | A machine learning model that can predict the probability of experimental failure for a given set of parameters, helping to avoid missing data proactively [1]. |

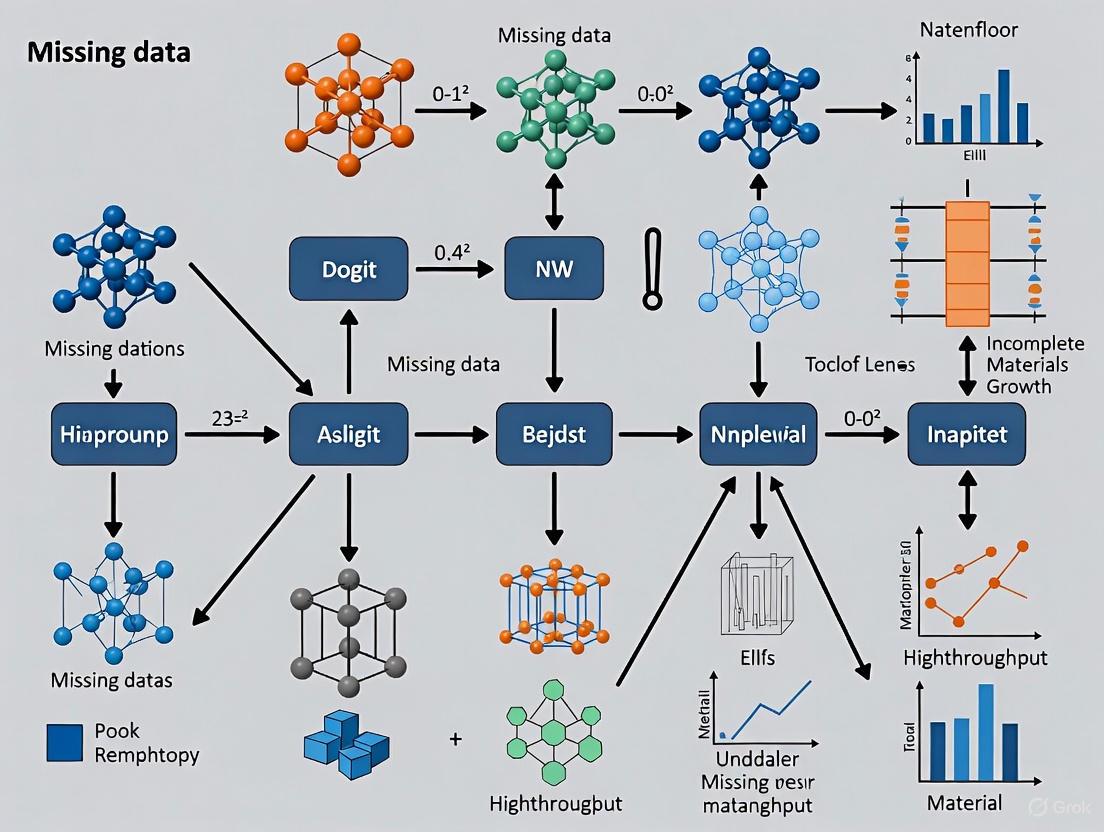

Workflow and Process Diagrams

Diagram 1: High-Throughput Materials Growth with Failure Handling

This diagram illustrates the integration of the floor padding trick into a high-throughput materials growth pipeline, enabling continuous optimization despite experimental failures [1].

Diagram 2: Decision Framework for Missing Data Methods

This decision flowchart helps researchers select an appropriate statistical method for handling missing data based on the suspected missingness mechanism [9] [4] [10].

Common Scenarios Leading to Block-Wise Missing Data in Multi-Omics and Growth Experiments

Frequently Asked Questions (FAQs)

1. What is block-wise missing data and how does it differ from randomly missing values? Block-wise missing data, also known as missing views, occurs when entire blocks of data from specific omics sources or experimental conditions are absent for a subset of samples [14]. Unlike randomly scattered missing values, block-wise missingness involves the systematic absence of all features from one or more data modalities. For example, in multi-omics studies, you might have complete transcriptomics data but completely missing proteomics data for a group of patients [15]. In materials growth experiments, this manifests as complete experimental failures where no usable evaluation data is obtained for certain parameter combinations [1].

2. What are the primary experimental scenarios that cause block-wise missing data? The most common scenarios stem from technical, logistical, and biological constraints. In high-throughput materials growth, unsuccessful synthesis conditions where target materials fail to form create blocks of missing evaluation data [1]. In longitudinal multi-omics studies, missing views arise from dropouts in measurements, experimental errors, platform unavailability at certain timepoints, or cost limitations that prevent comprehensive profiling across all omics types for all samples [16]. In clinical multi-omics research, tissue quality or sample volume limitations may make certain assays impossible to perform for specific patient subsets [17].

3. How does block-wise missing data impact analytical outcomes? Block-wise missing data reduces statistical power and can introduce bias if the missingness mechanism isn't properly addressed [17]. It complicates integrated analysis because standard machine learning algorithms typically require complete datasets. Excluding samples with missing blocks leads to substantial data loss - in some multi-omics datasets, this can eliminate over 50% of samples [14]. In materials optimization, failing to account for experimental failures can prevent effective exploration of parameter spaces and lead to suboptimal synthesis conditions [1].

4. What methodological approaches effectively handle block-wise missingness? Several specialized approaches have been developed. The profile-based method groups samples by their missingness pattern and learns models using all available complete data blocks [14] [15]. The floor padding trick replaces missing experimental outcomes with the worst observed value, enabling Bayesian optimization to continue while avoiding failed regions [1]. Advanced neural networks like LEOPARD disentangle content and temporal representations to complete missing views in longitudinal omics data [16]. Each approach has strengths depending on your data structure and analysis goals.

Troubleshooting Guides

Problem: Experimental Failures in High-Throughput Materials Growth

Symptoms

- No usable material forms under certain synthesis conditions

- Missing evaluation metrics for specific parameter combinations

- Inability to optimize growth parameters across wide search spaces

Solution Protocol

- Implement Bayesian Optimization with Floor Padding [1]

  - For each experimental iteration:

    - Propose next parameters using Gaussian process regression

    - Execute growth experiment with proposed parameters

    - If experiment succeeds, record evaluation metric

    - If experiment fails, assign worst observed value (floor padding)

  - Continue iterations until convergence or resource exhaustion

  - Use binary classifier alongside evaluation predictor to avoid failure regions

Validation Metrics

- Success rate in achieving target material properties

- Number of experiments required to reach optimization target

- Comparison to optimization with restricted parameter spaces

Problem: Missing Omics Views in Multi-Timepoint Studies

Symptoms

- Complete absence of specific omics types at certain timepoints

- Inconsistent omics coverage across longitudinal samples

- Reduced sample size for integrated temporal analysis

Solution Protocol

- Apply LEOPARD Framework for View Completion [16]

  - Preprocess omics data using view-specific pre-layers to equal dimensions

  - Factorize data into omics-specific content and timepoint-specific knowledge via contrastive learning

  - Transfer temporal knowledge to omics content using Adaptive Instance Normalization

  - Train model using combined contrastive, representation, reconstruction, and adversarial losses

  - Complete missing views by generating data from content and temporal representations

Validation Metrics

- Mean squared error between imputed and held-out observed data

- Preservation of biological signals in regression/classification tasks

- Robustness across different missingness patterns and ratios

Table 1: Performance Metrics for Block-Wise Missing Data Handling Methods

| Method | Application Context | Performance Metrics | Key Advantages |

|---|---|---|---|

| Profile-based Integration [14] [15] | Multi-omics classification & regression | Binary classification: 86-92% accuracy, F1: 68-79% [14]; Multi-class: 73-81% accuracy [15]; Regression: 72-76% correlation [14] | No imputation required; Utilizes all available data blocks |

| Bayesian Optimization with Floor Padding [1] | Materials growth optimization | Achieved high RRR (80.1) in SrRuO3 films in 35 runs [1] | Enables wide parameter space search; Automatically avoids failure regions |

| LEOPARD [16] | Longitudinal multi-omics | Superior to PMM, missForest, GLMM, cGAN across benchmarks [16] | Captures temporal patterns; Preserves biological variation |

| MMRM with Item-Level Imputation [9] | Clinical trials with PROs | Lowest bias and highest power for MAR mechanisms [9] | Handles monotonic and non-monotonic missing patterns |

Table 2: Common Scenarios and Characteristics of Block-Wise Missing Data

| Scenario | Missingness Mechanism | Typical Missing Data Ratio | Field Prevalence |

|---|---|---|---|

| Materials Growth Failures [1] | MNAR (missing not at random) | Varies by parameter space | Common in autonomous materials synthesis |

| Multi-omics Platform Limitations [17] | MAR/MNAR | 20-50% of possible peptide values [18] | Widespread in proteomics and metabolomics |

| Longitudinal Dropouts [16] | MAR/MNAR | Varies by study duration and design | Increasingly common in cohort studies |

| Clinical Sample Limitations [17] | MCAR/MAR | 10-30% in typical clinical trials [9] | Universal in clinical research |

Experimental Protocols

Protocol 1: Profile-Based Multi-Omics Integration

- Profile Identification: For S data sources, identify all 2^S - 1 possible missing block patterns in your dataset

- Binary Encoding: Create indicator vector I = [I(1),...,I(S)] where I(i) = 1 if i-th data source is available, 0 otherwise

- Profile Assignment: Convert binary vectors to decimal profile numbers for each sample

- Data Partitioning: Group samples by profile and form complete data blocks for source-compatible profiles

- Model Formulation: Implement the regression model: y = Σ_{i=1}^{S} α_i X_i β_i + ε, where each β_i remains consistent across profiles while the α_i components vary

- Two-Step Optimization: Learn parameters (β_1, ..., β_S) and weights (α_1, ..., α_S) using available complete data blocks

Implementation Notes:

- Available as R package "bwm" [14]

- Supports continuous, binary, and multi-class response variables [15]

- Particularly effective when missingness affects multiple omics sources [14]
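The binary encoding, profile assignment, and partitioning steps of this protocol can be sketched as follows. This is an illustrative stand-alone example, not the interface of the bwm package. The function names, the sample layout, and the choice to treat the first source as the most significant bit are all assumptions.

```python
from collections import defaultdict


def profile_number(available):
    """Encode an availability vector such as [1, 0, 1] as a decimal profile (here 5)."""
    number = 0
    for flag in available:
        number = number * 2 + int(flag)
    return number


def group_by_profile(samples):
    """Map profile number -> list of sample ids sharing that missing-block pattern."""
    groups = defaultdict(list)
    for sample_id, blocks in samples.items():
        groups[profile_number([b is not None for b in blocks])].append(sample_id)
    return dict(groups)


# Hypothetical samples with S = 3 data sources; None marks a missing block.
samples = {
    "s1": ([0.1, 0.2], [1.0], [3.0, 4.0]),
    "s2": ([0.3, 0.1], None, [2.0, 5.0]),
    "s3": ([0.5, 0.9], None, [1.0, 1.0]),
    "s4": (None, [2.0], [0.0, 7.0]),
}
print(group_by_profile(samples))  # {7: ['s1'], 5: ['s2', 's3'], 3: ['s4']}
```

With S = 3 sources there are 2^3 - 1 = 7 possible non-empty profiles; only three of them occur in this toy dataset.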

Protocol 2: Bayesian Optimization with Experimental Failure Handling

Methodology [1]:

1. Initialization: Start with 5-10 randomly selected growth parameters

2. Gaussian Process Modeling: Build surrogate model of evaluation landscape using observed data

3. Acquisition Function: Select next parameters using expected improvement criterion

4. Experimental Execution: Run growth experiment with selected parameters

5. Failure Handling:

   - If successful: Record evaluation metric

   - If failed: Apply floor padding (assign worst observed value)

6. Model Update: Incorporate new data (success or failure) into Gaussian process

7. Iteration: Repeat steps 3-6 until convergence (typically 30-100 iterations)

Implementation Notes:

- Combine with binary classifier to predict failure probability

- Enables exploration of wide parameter spaces without manual restriction

- Demonstrated effectiveness for molecular beam epitaxy optimization [1]
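The loop in this protocol can be sketched end to end with a small, dependency-free Gaussian process on a one-dimensional grid. Everything specific here is an assumption made for illustration: the synthetic `run_growth` function with a failure region above x = 0.8, the squared-exponential kernel and its length scale, the noise level, the 0.0 fallback used when a failure precedes any success, and the budget of 25 runs. It is not the implementation used in [1], and it omits the binary failure classifier.

```python
import math
import random


def kernel(a, b, length=0.15):
    """Squared-exponential kernel with unit variance."""
    return math.exp(-0.5 * ((a - b) / length) ** 2)


def cholesky(K):
    """Lower-triangular L with L L^T = K."""
    n = len(K)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = K[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(s) if i == j else s / L[j][j]
    return L


def forward_sub(L, b):
    """Solve L z = b for lower-triangular L."""
    z = []
    for i in range(len(b)):
        z.append((b[i] - sum(L[i][k] * z[k] for k in range(i))) / L[i][i])
    return z


def back_sub(L, z):
    """Solve L^T a = z for lower-triangular L."""
    n = len(z)
    a = [0.0] * n
    for i in reversed(range(n)):
        a[i] = (z[i] - sum(L[k][i] * a[k] for k in range(i + 1, n))) / L[i][i]
    return a


def expected_improvement(mu, sigma, best):
    z = (mu - best) / sigma
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2 * math.pi)
    cdf = 0.5 * (1 + math.erf(z / math.sqrt(2)))
    return (mu - best) * cdf + sigma * pdf


def suggest(X, y, candidates, noise=1e-2):
    """Fit a zero-mean GP to standardized y; return the candidate with the highest EI."""
    m = sum(y) / len(y)
    s = (sum((v - m) ** 2 for v in y) / len(y)) ** 0.5 or 1.0
    t = [(v - m) / s for v in y]
    K = [[kernel(a, b) + (noise if i == j else 0.0) for j, b in enumerate(X)]
         for i, a in enumerate(X)]
    L = cholesky(K)
    alpha = back_sub(L, forward_sub(L, t))
    best, scored = max(t), []
    for c in candidates:
        k = [kernel(a, c) for a in X]
        v = forward_sub(L, k)
        mu = sum(ki * ai for ki, ai in zip(k, alpha))
        sigma = math.sqrt(max(1.0 - sum(vi * vi for vi in v), 1e-12))
        scored.append((expected_improvement(mu, sigma, best), c))
    return max(scored)[1]


def run_growth(x):
    """Hypothetical experiment: no film forms for x > 0.8; quality peaks near x = 0.6."""
    if x > 0.8:
        return None
    return 100.0 * math.exp(-((x - 0.6) / 0.15) ** 2)


def record(x, X, y, raw):
    """Run one experiment and store it, floor-padding a failure."""
    result = run_growth(x)
    raw.append(result)
    successes = [r for r in raw if r is not None]
    # Fallback 0.0 when nothing has succeeded yet is an assumption of this sketch.
    y.append(result if result is not None else (min(successes) if successes else 0.0))
    X.append(x)


random.seed(0)
grid = [i / 100 for i in range(101)]
X, y, raw = [], [], []
for x in random.sample(grid, 5):                       # random initialization
    record(x, X, y, raw)
for _ in range(20):                                    # model, acquire, run, update
    record(suggest(X, y, [c for c in grid if c not in X]), X, y, raw)

best_value, best_x = max((v, x) for v, x in zip(y, X))
print(f"best x = {best_x:.2f}, value = {best_value:.1f}, failures = {raw.count(None)}")
```

Because failed runs enter the surrogate with the worst value seen so far, the expected-improvement criterion assigns little value to their neighborhood, so the loop keeps running over the full grid without a manually restricted search range.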

Research Reagent Solutions

Table 3: Essential Computational Tools for Handling Block-Wise Missing Data

| Tool/Resource | Function | Application Context |

|---|---|---|

| bwm R Package [14] [15] | Profile-based integration | Multi-omics regression and classification |

| LEOPARD Framework [16] | Missing view completion | Longitudinal multi-omics data |

| Bayesian Optimization with Floor Padding [1] | Experimental optimization | Materials growth parameter search |

| MICE (Multiple Imputation by Chained Equations) [9] [5] | Multiple imputation | Clinical trials with PRO endpoints |

| MMRM (Mixed Model for Repeated Measures) [9] | Direct analysis with missing data | Longitudinal clinical trials |

| Control-Based PMMs (Pattern Mixture Models) [9] | Sensitivity analysis | Clinical trials with potential MNAR data |

Methodological Workflows

Method Selection Workflow for Block-Wise Missing Data

Profile-Based Multi-Omics Integration Workflow

FAQ 1: What is listwise deletion and why is it a default in many statistical software?

Listwise deletion, also known as complete-case analysis, is an approach where any case (e.g., a sample or experimental run) with a missing value in any variable is entirely omitted from the analysis [4] [19]. This method has become the default option in most statistical software packages, leading to its widespread, often uncritical, adoption [4]. While simple to implement, this approach simply discards incomplete data, which can have severe consequences for the integrity of your research findings.

FAQ 2: Under what conditions is listwise deletion an acceptable method?

Listwise deletion is considered acceptable only under the highly restrictive and often unrealistic condition that data are Missing Completely at Random (MCAR) [20] [4]. The MCAR assumption holds when the probability of a value being missing is unrelated to any observed or unobserved data [21] [19]. In this specific scenario, listwise deletion produces unbiased estimates, though with a loss of statistical power due to the reduced sample size [20] [4].

However, in reality, the MCAR assumption is unlikely to be met in high-throughput research. A more plausible mechanism is Missing at Random (MAR), where missingness may be related to some other observed variable (e.g., low-yielding samples may be less likely to have certain measurements recorded) [20] [22]. If the data are not MCAR, listwise deletion may cause biased estimates [4] [19].
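
The difference between MCAR and MAR can be made concrete with a small simulation. The sketch below is illustrative only: the variable names, the 40% MCAR rate, and the MAR missingness probabilities are assumptions chosen for the demonstration, not values from the cited studies. It compares the complete-case mean of `y` with the mean of the full data when `y` is dropped completely at random and when the chance of dropping `y` depends on the observed covariate `x`.

```python
import random
import statistics

def simulate_listwise_deletion(n=20000, seed=0):
    """Return (full mean, complete-case mean under MCAR, complete-case mean under MAR)."""
    rng = random.Random(seed)
    full, mcar_cases, mar_cases = [], [], []
    for _ in range(n):
        x = rng.gauss(0, 1)              # always observed (e.g., a process setting)
        y = 2 * x + rng.gauss(0, 1)      # outcome that may go missing
        full.append(y)
        # MCAR: y is dropped with probability 0.4, regardless of any data
        if rng.random() > 0.4:
            mcar_cases.append(y)
        # MAR: y is dropped more often when the observed x is low
        p_missing = 0.8 if x < 0 else 0.1
        if rng.random() > p_missing:
            mar_cases.append(y)
    return (statistics.fmean(full),
            statistics.fmean(mcar_cases),
            statistics.fmean(mar_cases))

full_mean, mcar_mean, mar_mean = simulate_listwise_deletion()
print(f"full data:       {full_mean:+.3f}")
print(f"MCAR, listwise:  {mcar_mean:+.3f}")
print(f"MAR, listwise:   {mar_mean:+.3f}")
```

In this toy setup the MCAR complete-case mean should stay close to the full-data mean, while the MAR complete-case mean is pulled upward because low-`x` runs (which also have low `y`) are under-represented among the complete cases.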

FAQ 3: What are the primary risks of using listwise deletion with my high-throughput data?

Relying on listwise deletion for your experimental data carries several critical risks that can compromise your results:

- Biased Parameter Estimates: When data are not MCAR, the complete cases may no longer be representative of your entire sample, leading to skewed and biased estimates of relationships between variables [4] [19].

- Reduced Statistical Power: Discarding data inherently reduces your sample size. This diminishes the power of your statistical tests, increasing the chance of false negatives (Type II errors) [4].

- Loss of Information and Costly Data: In high-throughput experiments where each data point is resource-intensive to produce, discarding entire cases due to a single missing value is an inefficient waste of valuable information and experimental effort.

- Invalid Conclusions: The combination of bias and reduced power can ultimately lead to invalid scientific conclusions, undermining the reliability of your research [4].

Decades of methodological research have indicated that listwise deletion can be a suboptimal strategy, and it has been referred to as "among the worst methods available for practical applications" [20].

Advanced Troubleshooting: Implementing Superior Methods

FAQ 4: What are the main advanced alternatives to listwise deletion?

The two most powerful and recommended categories of modern missing data handling are Multiple Imputation (MI) and Maximum Likelihood (ML) methods [20] [4] [5]. These methods are designed to produce unbiased and efficient estimates under the more realistic MAR assumption.

Multiple Imputation (MI) involves creating multiple (m) plausible copies of the dataset, with the missing values filled in by imputation. The analytic model is then run separately on each dataset, and the results are pooled into a final set of estimates that account for the uncertainty of the imputation [20] [5].

Maximum Likelihood (ML) methods use all the available observed data to estimate parameters that would maximize the likelihood of observing that data. Unlike MI, ML does not impute data points but uses the raw incomplete data directly for model fitting [4].
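
The pooling step of MI is conventionally carried out with Rubin's rules: the pooled estimate is the average of the per-dataset estimates, and the total variance adds the average within-imputation variance to the between-imputation variance inflated by (1 + 1/m). The following is a minimal sketch of that arithmetic; the function name `pool_rubin` and the example numbers are hypothetical.

```python
import statistics

def pool_rubin(estimates, variances):
    """Pool m per-imputation estimates and their variances with Rubin's rules.

    estimates: point estimates of one parameter, one per imputed dataset
    variances: squared standard errors of those estimates
    Returns (pooled estimate, total variance). Requires m >= 2.
    """
    m = len(estimates)
    pooled = statistics.fmean(estimates)
    within = statistics.fmean(variances)        # average sampling variance
    between = statistics.variance(estimates)    # variance across imputations (m - 1 denominator)
    total = within + (1 + 1 / m) * between
    return pooled, total

# Hypothetical regression slope estimated on m = 3 imputed datasets
pooled, total_var = pool_rubin([1.0, 2.0, 3.0], [0.5, 0.5, 0.5])
print(pooled, total_var ** 0.5)   # pooled estimate and its standard error
```

The between-imputation term is what carries the extra uncertainty due to the missing values; analysing a single imputed dataset omits it and understates the standard error.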

FAQ 5: How do I choose the right imputation method for mixed data types?

High-throughput phenomic data often contain a mix of continuous, ordinal, and categorical variables, which rules out many methods designed only for continuous data [23]. The table below summarizes several robust methods capable of handling mixed data types.

| Method | Brief Description | Key Features / Best For |

|---|---|---|

| Multiple Imputation by Chained Equations (MICE) [23] | A multiple imputation method that models each variable conditionally on the others in an iterative cycle. | Flexible; can specify different models for different variable types (e.g., logistic regression for binary, linear for continuous). |

| missForest [23] | A non-parametric method that uses a random forest model to impute missing values. | Powerful for complex interactions and non-linear relationships; makes no assumptions about data distribution. |

| K-Nearest Neighbors Imputation (KNN) [22] [23] | Imputes missing values based on the values from the 'k' most similar complete cases. | Simple and effective; similarity can be calculated on mixed data types with appropriate distance metrics. |

| Precision Adaptive Imputation Network (PAIN) [24] | A novel hybrid algorithm integrating statistical methods, random forests, and autoencoders. | Designed to dynamically adapt to varying data types, distributions, and missingness patterns (MAR, MNAR). |

FAQ 6: How many imputations are needed for Multiple Imputation?

The old rule of thumb of 3-10 imputations is now considered insufficient. Modern recommendations emphasize that more imputations are better for the efficiency and replicability of standard errors [20].

- A rough guideline is to set the number of imputations (m) based on the percentage of incomplete cases. For example, if 20% of your cases have any missing data, you should consider generating at least 20 imputed datasets [20].

- For more elaborate hypotheses or to ensure stability, generating 100 or more imputations can be beneficial [20].

FAQ 7: My dataset has a complex, multi-level structure (e.g., reactions within batches). How should I impute?

The clustered nature of many experimental designs (e.g., samples within plates, measurements within growth cycles) adds a layer of complexity. The imputation model must account for this hierarchy to be valid.

- The Principle of Congeniality: Your imputation model must match your intended analytic model [20]. If your final analysis will use a multilevel (mixed) model, your imputation procedure must also be multilevel.

- Solution: Use imputation software specifically designed for multilevel data. Freely available tools like Blimp can handle this complexity [20]. Ensure that the imputation model includes the same cluster variables and level-1/level-2 predictors that you plan to use in your substantive analysis.

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Resource | Function in the Context of Missing Data Analysis |

|---|---|

| R Statistical Software | An open-source environment with extensive packages for advanced imputation (e.g., mice, missForest) [20] [23]. Blimp, listed below, is a standalone program rather than an R package. |

| Blimp Software | A dedicated, freely available program for multilevel multiple imputation, ideal for complex, hierarchical experimental data [20]. |

| Python with Scikit-learn | Provides simple imputation methods (e.g., SimpleImputer, KNNImputer) and the framework for building more complex, custom imputation pipelines [25]. |

| SAS / Stata | Commercial statistical software with robust procedures for multiple imputation (e.g., PROC MI in SAS) and maximum likelihood estimation [5]. |

| 'phenomeImpute' R Package | A specialized package developed for high-dimensional phenomic data with mixed variable types, as cited in research literature [23]. |

Quantitative Comparison of Missing Data Methods

The table below summarizes the properties of different missing data handling techniques to guide your selection [4] [19] [25].

| Method | Handles MAR? | Preserves Sample Size? | Preserves Variable Distribution? | Key Limitation(s) |

|---|---|---|---|---|

| Listwise Deletion | No | No | Yes (on reduced sample) | Severe loss of power; high bias if not MCAR [20] [4] |

| Mean/Median Imputation | No | Yes | No (reduces variance, distorts shape) [25] | Biases correlations and standard errors downwards [4] |

| k-Nearest Neighbors (KNN) | Yes | Yes | Moderate | Computationally expensive for large datasets; choosing 'k' can be challenging [22] |

| Multiple Imputation (MI) | Yes | Yes | Yes (when model is correct) | Requires careful specification of the imputation model [20] [5] |

| Maximum Likelihood (ML) | Yes | Yes (uses all info) | Yes | Can be computationally intensive for complex models [4] |

| Random Forest (e.g., missForest) | Yes | Yes | Yes (highly accurate) | Computationally intensive for very large datasets [23] |

From Theory to Practice: Computational Frameworks and Algorithms for Incomplete Datasets

Frequently Asked Questions (FAQs)

Q1: What is the core challenge that the 'floor padding' and binary classifier tricks address? These methods address a critical problem in high-throughput materials growth and other expensive experimental domains: missing data due to experimental failures. When an experiment fails (e.g., a target material doesn't form), no useful evaluation data is obtained. Standard Bayesian Optimization (BO) doesn't know how to handle these missing values. The proposed tricks allow the BO algorithm to learn from these failures and continue searching the parameter space effectively without getting stuck [1] [26].

Q2: How does the 'Floor Padding' trick work?

The Floor Padding trick handles a failed experiment at a parameter x_n by assigning it the worst evaluation value observed so far, min(y_1, ..., y_{n-1}), assuming the objective is being maximized [1]. This simple but effective method automatically informs the BO algorithm that the parameter led to an undesirable outcome, encouraging it to avoid similar regions in the future. It is adaptive, as the "worst value" is updated as more experiments are completed.
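
As a minimal sketch of the padding rule itself (separate from any optimizer), the function below walks through a run history in order and replaces each failure with the worst value recorded so far. Representing a failure as `None`, the name `floor_pad`, and raising an error when the very first run fails are assumptions made for this illustration.

```python
def floor_pad(history):
    """Replace failed evaluations (None) with the worst value seen so far.

    Assumes a maximization problem, so "worst" means the minimum.
    """
    padded = []
    for y in history:
        if y is None:
            if not padded:
                # Assumption: there is nothing to pad with before any result exists
                raise ValueError("no earlier evaluation to pad with")
            y = min(padded)
        padded.append(y)
    return padded

# Runs 2 and 4 failed; each is padded with the minimum seen up to that point
print(floor_pad([0.5, None, 0.2, None, 0.9]))   # [0.5, 0.5, 0.2, 0.2, 0.9]
```

Because the pad value tracks the running minimum, no failure constant has to be chosen in advance.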

Q3: What is the role of the Binary Classifier in this framework? A separate binary classifier is trained to predict whether a given set of parameters will lead to a success or a failure [1]. This model learns the regions in the parameter space that are likely to cause experimental failures. Its predictions can be used to steer the BO algorithm away from these high-risk areas, preventing wasted resources on experiments that are likely to fail.

Q4: When should I use the Floor Padding trick versus the Binary Classifier? Based on simulation studies [1]:

- The Floor Padding (F) method alone often leads to quick improvements in the early stages of optimization.

- The combination of Floor Padding and a Binary Classifier (FB) can be more robust but may show slower initial improvement.

- Using only a Binary Classifier (B) without floor padding still requires a constant failure value, and results can be sensitive to the choice of that constant.

For many scenarios, starting with the Floor Padding trick is a good default choice due to its simplicity and effectiveness.

Q5: Can I combine both techniques? Yes, the methods can be combined (FB). The floor padding handles the data imputation for the surrogate model, while the binary classifier explicitly models and helps avoid failure-prone regions [1].

Q6: In which experimental domains are these methods particularly useful? These methods are highly valuable in any domain where experiments are expensive and failures are common. The original research demonstrated their success in high-throughput materials growth using machine-learning-assisted molecular beam epitaxy (ML-MBE) to optimize the growth of SrRuO3 films [1] [27]. They are equally applicable to fields like drug development and hyperparameter tuning for machine learning models.

Troubleshooting Guides

Issue 1: Bayesian Optimization is Not Avoiding Failed Experiment Regions

Problem: Your BO algorithm continues to suggest parameters in regions where previous experiments have failed.

Solution:

- Verify Failure Imputation: Ensure that failed experiments are correctly flagged and imputed with the worst observed value (Floor Padding) or a predetermined low constant. Check that this data is correctly incorporated into the dataset for the Gaussian Process surrogate model [1].

- Check Binary Classifier Performance: If using a binary classifier, evaluate its accuracy on a validation set. If it performs poorly:

- Ensure your training data has a balanced number of success and failure examples.

- Tune the classifier's hyperparameters. Consider using diverse classifiers (e.g., Random Forest, XGBoost) and ensembling them for better performance [28] [29].

- Use a solid cross-validation strategy to assess its real-world performance [28].

- Adjust Acquisition Function: Consider using an acquisition function that weights exploration more heavily, such as Expected Improvement (EI) or Upper Confidence Bound (UCB). You can increase the `xi` parameter in the EI function to encourage more exploration of uncertain regions [30] [31].
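
To show how the `xi` parameter shifts the balance, here is a small sketch of the standard closed-form Expected Improvement for a maximization problem, given a Gaussian posterior mean `mu` and standard deviation `sigma` at a candidate point. The function name and the two example candidates are hypothetical.

```python
import math

def expected_improvement(mu, sigma, best, xi=0.0):
    """Closed-form EI for maximization under a Gaussian posterior.

    mu, sigma: posterior mean and standard deviation at the candidate
    best:      best evaluation observed so far
    xi:        margin the candidate must beat; larger values favor exploration
    """
    improvement = mu - best - xi
    if sigma <= 0.0:
        return max(improvement, 0.0)
    z = improvement / sigma
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    return improvement * cdf + sigma * pdf

best = 1.0
safe = dict(mu=1.2, sigma=0.05)       # slightly better than best, low uncertainty
uncertain = dict(mu=0.9, sigma=0.5)   # worse mean, high uncertainty

for xi in (0.0, 0.3):
    ei_safe = expected_improvement(best=best, xi=xi, **safe)
    ei_unc = expected_improvement(best=best, xi=xi, **uncertain)
    print(f"xi={xi}: safe={ei_safe:.4f}, uncertain={ei_unc:.4f}")
```

With `xi = 0` the low-uncertainty candidate scores higher; with `xi = 0.3` the ranking flips toward the uncertain candidate, which is the exploratory behavior the troubleshooting step relies on.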

Issue 2: Optimization Process is Converging Too Slowly or to a Poor Optimum

Problem: The optimization is not finding good parameters efficiently, even though few experiments are failing.

Solution:

- Review Initial Samples: The BO process is sensitive to the initial set of random samples. Ensure that your initial design (e.g., Latin Hypercube Sampling) covers the parameter space adequately to build a reasonable initial surrogate model [31] [32].

- Inspect Surrogate Model Fit: Plot the Gaussian Process posterior mean and uncertainty. If the model fit is poor, consider:

- Kernel Selection: Choose a kernel that matches your expectations of the objective function (e.g., Matérn kernel for less smooth functions) [31].

- Hyperparameter Tuning: Optimize the GP hyperparameters (length scale, variance) by maximizing the marginal likelihood, rather than using default values [33].

- Balance Exploration and Exploitation: The trade-off between exploration and exploitation is controlled by the acquisition function. If the search is converging too quickly, increase exploration (raise `xi` in EI). If it is converging too slowly, decrease it [30] [31].

Issue 3: The Binary Classifier Has High Error Rates

Problem: The classifier predicting success/failure is inaccurate, leading to the avoidance of good parameters or acceptance of bad ones.

Solution:

- Feature Engineering: Re-examine your input features. Domain knowledge is critical for creating informative features. Consider techniques like target encoding for categorical variables or creating interaction terms [29].

- Address Class Imbalance: Experimental failures might be rare or common, leading to imbalanced data. Use techniques like SMOTE (Synthetic Minority Over-sampling Technique) or adjust class weights in your classifier to mitigate this [29].

- Model Diversity: Don't rely on a single classifier. Implement an ensemble of diverse models (e.g., RandomForest, XGBoost, and a non-tree model) and combine their predictions using stacking or averaging for improved robustness and accuracy [28] [29].
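
For the class-imbalance point above, one common way to set class weights is the "balanced" heuristic, `n_samples / (n_classes * class_count)`, which is the same formula scikit-learn applies for `class_weight="balanced"`. The sketch below computes it in plain Python; the function name and the example labels are hypothetical.

```python
from collections import Counter

def balanced_class_weights(labels):
    """Weight each class inversely to its frequency.

    weight(c) = n_samples / (n_classes * count(c))
    """
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {c: n_samples / (n_classes * k) for c, k in counts.items()}

# Hypothetical history: 8 successful growth runs and 2 failures
labels = ["success"] * 8 + ["failure"] * 2
print(balanced_class_weights(labels))   # {'success': 0.625, 'failure': 2.5}
```

The rare class receives the larger weight, so misclassifying a failure costs the classifier more than misclassifying a success during training.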

Experimental Protocols & Data

The table below summarizes the quantitative findings from testing the methods on simulated functions, as reported in the foundational research [1].

| Method | Description | Key Performance Findings |

|---|---|---|

| Baseline (@-1) | Failure assigned a constant value of -1. | Slow initial improvement, but can achieve high final evaluation. |

| Baseline (@0) | Failure assigned a constant value of 0. | Fast initial improvement, but sensitive to constant choice; may lead to suboptimal final performance. |

| F (Floor Padding) | Failure assigned the worst value observed so far. | Fast initial improvement without need for constant tuning; robust performance. |

| B (Binary Classifier) | A classifier predicts failure regions. | Suppresses sensitivity to constant value choice; can have slower initial improvement. |

| FB (Floor Padding + Binary Classifier) | Combination of both techniques. | Robustness of both methods; may show slower initial improvement. |

Detailed Methodology: Implementing BO with Floor Padding

This protocol outlines the steps for implementing a Bayesian Optimization algorithm with the Floor Padding trick, based on the approach used in materials growth optimization [1].

Initialization:

- Define the parameter space to be searched.

- Select an initial set of points (e.g., via random sampling or Latin Hypercube) and run experiments to obtain evaluations.

- Initialize the Gaussian Process surrogate model with this initial data.

Main Optimization Loop (repeat until the budget is exhausted):

- a. Update Surrogate Model: Condition the Gaussian Process on all available data (successes and padded failures) to obtain the posterior mean `μ(x)` and uncertainty `σ(x)` for the entire search space.

- b. Optimize Acquisition Function: Find the next parameter `x_t` that maximizes an acquisition function (e.g., Expected Improvement) based on the GP posterior.

- c. Run Experiment & Evaluate: Conduct the experiment at `x_t`.

- d. Handle Result: If successful, record the evaluation `y_t`. If failed, impute the value for `y_t` as the minimum of all previous successful evaluations.

- e. Augment Data: Add the new data point `(x_t, y_t)` to the dataset.
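
The loop above can be sketched end to end on a toy problem. Everything in this example is an assumption made for illustration and not the implementation from [1]: the one-dimensional objective `run_experiment` (which "fails" for x > 0.8), the squared-exponential kernel with fixed hyperparameters, the grid search over candidates, and the fallback pad value of 0.0 if the very first run fails.

```python
import math
import numpy as np

def rbf(a, b, length_scale=0.15):
    """Squared-exponential kernel between two 1-D arrays of points."""
    return np.exp(-0.5 * ((a[:, None] - b[None, :]) / length_scale) ** 2)

def gp_posterior(x_obs, y_obs, x_query, jitter=1e-4):
    """Posterior mean and std of a constant-mean GP with unit prior variance."""
    y_mean = y_obs.mean()
    K = rbf(x_obs, x_obs) + jitter * np.eye(len(x_obs))
    K_s = rbf(x_obs, x_query)
    mu = y_mean + K_s.T @ np.linalg.solve(K, y_obs - y_mean)
    var = 1.0 - np.sum(K_s * np.linalg.solve(K, K_s), axis=0)
    return mu, np.sqrt(np.clip(var, 1e-12, None))

def expected_improvement(mu, sigma, best, xi=0.01):
    """EI for maximization, evaluated on arrays of posterior means and stds."""
    imp = mu - best - xi
    z = imp / sigma
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z ** 2) / math.sqrt(2.0 * math.pi)
    return imp * cdf + sigma * pdf

def run_experiment(x):
    """Hypothetical toy experiment: returns None (failure) for x > 0.8."""
    if x > 0.8:
        return None
    return math.exp(-((x - 0.6) ** 2) / 0.02)

def bo_with_floor_padding(n_init=4, n_iter=15, seed=0):
    rng = np.random.default_rng(seed)
    grid = np.linspace(0.0, 1.0, 201)      # candidate parameters
    xs, ys, failed = [], [], []

    def record(x):
        y = run_experiment(x)
        is_failure = y is None
        if is_failure:
            # Floor padding: worst value so far (0.0 is an assumed fallback)
            y = min(ys) if ys else 0.0
        xs.append(x)
        ys.append(y)
        failed.append(is_failure)

    for x in rng.uniform(0.0, 1.0, n_init):   # initialization
        record(float(x))
    for _ in range(n_iter):                   # main optimization loop
        mu, sigma = gp_posterior(np.array(xs), np.array(ys), grid)
        ei = expected_improvement(mu, sigma, best=max(ys))
        record(float(grid[int(np.argmax(ei))]))
    return xs, ys, failed

xs, ys, failed = bo_with_floor_padding()
print(f"runs: {len(xs)}, failures: {sum(failed)}, best y: {max(ys):.3f}")
```

In practice the kernel hyperparameters would be fitted by maximizing the marginal likelihood and the acquisition function optimized with a proper optimizer; the fixed values and grid search here only keep the sketch short.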

Detailed Methodology: Incorporating a Binary Classifier

This protocol adds a binary classifier to the BO workflow to proactively avoid failures [1] [28].

- Data Collection: Run initial experiments to build a dataset labeled with "success" or "failure".

- Classifier Training & Tuning:

- Integrated Optimization Loop:

- a. Update GP and Classifier: Train both models on the current data.

- b. Constrained Acquisition: When maximizing the acquisition function, reject points for which the classifier predicts a failure with a probability above a set threshold (e.g., >50%).

- c. Experiment and Augment Data: Proceed as in the standard loop, labeling the new experiment's outcome as success or failure for the classifier.
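
The constrained-acquisition step can be sketched as a simple filter over candidate points. The function below assumes the acquisition values and the classifier's failure probabilities have already been computed for each candidate; the name `select_next` and the fallback to the least risky candidate when every point exceeds the threshold are assumptions for this illustration.

```python
def select_next(candidates, acquisition, p_fail, threshold=0.5):
    """Pick the candidate with the highest acquisition value among those
    whose predicted failure probability does not exceed the threshold."""
    allowed = [i for i, p in enumerate(p_fail) if p <= threshold]
    if not allowed:
        # Assumed fallback: if everything looks risky, take the least risky point
        safest = min(range(len(candidates)), key=lambda i: p_fail[i])
        return candidates[safest]
    best = max(allowed, key=lambda i: acquisition[i])
    return candidates[best]

candidates = [0.1, 0.5, 0.9]
acquisition = [0.2, 0.4, 0.9]    # the last point looks best to the GP...
p_fail = [0.1, 0.3, 0.8]         # ...but the classifier expects it to fail
print(select_next(candidates, acquisition, p_fail))   # 0.5
```

A hard threshold is only one option; weighting the acquisition value by the predicted success probability is a softer alternative.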

Workflow Visualization

Bayesian Optimization with Failure Handling

Figure: Integrated workflow for Bayesian Optimization that incorporates both the Floor Padding trick and a Binary Classifier to handle experimental failures.

The Scientist's Toolkit: Research Reagent Solutions

The following table lists key components used in the seminal study that demonstrated these BO tricks for optimizing the growth of SrRuO3 films via molecular beam epitaxy (ML-MBE) [1].

| Item / Component | Function / Role in the Experiment |

|---|---|

| Molecular Beam Epitaxy (MBE) System | A high-vacuum deposition system used to grow high-purity thin films with precise atomic-layer control. The core experimental platform. |

| SrRuO3 Target Material | The perovskite oxide material being grown. It is widely used as a metallic electrode in oxide electronics. |

| Substrate (e.g., SrTiO3) | The base crystal on which the thin film is epitaxially grown. The substrate choice imposes strain, affecting the film's properties. |

| Residual Resistivity Ratio (RRR) | The key evaluation metric (y). Defined as the ratio of electrical resistivity at room temperature to resistivity at low temperature. A higher RRR indicates better crystalline quality and purity. |

| Bayesian Optimization Algorithm | The machine learning driver that autonomously decides the growth parameters for each subsequent experiment based on past results. |

| Gaussian Process Model | The surrogate model that approximates the unknown relationship between growth parameters and the RRR, providing predictions and uncertainty estimates. |

| Floor Padding & Binary Classifier | The software components that handle missing data from failed growth runs, enabling efficient optimization over a wide parameter space. |

In high-throughput materials growth research, the integration of multi-modal data—from scientific literature and microstructural images to chemical compositions—is key to accelerating discovery. Platforms like the Copilot for Real-world Experimental Scientists (CRESt) exemplify this approach, using robotic equipment and AI to optimize materials recipes [34]. However, a major challenge that arises during this data fusion is the prevalence of missing data, which can stem from experimental failures, sensor malfunctions, or data processing errors [1] [35]. Effectively handling this missing data is not merely a preprocessing step; it is fundamental to ensuring the reliability of downstream AI models and the validity of scientific conclusions. This technical support guide provides targeted troubleshooting and methodologies to address this critical issue.

Frequently Asked Questions (FAQs)

Q1: What are the common types of missing data I might encounter in materials experiments? Missing data is typically categorized by its underlying mechanism, which dictates the appropriate handling method [35] [36]:

- Missing Completely at Random (MCAR): The missingness is unrelated to any observed or unobserved data. This often occurs due to random instrument error or sample loss [36].

- Missing at Random (MAR): The probability of a value being missing depends on other observed variables in the dataset. For example, a specific sensor might fail only under certain observed temperature ranges [35] [36].

- Missing Not at Random (MNAR): The probability of missingness is related to the unobserved missing value itself. A classic example in materials science is when a signal is below the instrument's detection limit [1] [36]. This is common in failed growth experiments where the target material phase does not form [1].

Q2: My autonomous experiments sometimes fail, resulting in missing data points. How can my optimization algorithm handle this? Bayesian Optimization (BO) can be adapted to handle experimental failures. The "floor padding trick" is a simple yet effective strategy where a failed experiment's output is imputed with the worst observed value in the dataset so far [1]. This informs the model that the parameters led to a failure, guiding it to explore more promising regions of the parameter space in subsequent runs [1].

Q3: My dataset has a mix of missing data types. Is there a one-size-fits-all imputation method? No. Using a single imputation method for a dataset containing a mixture of MAR, MCAR, and MNAR mechanisms can introduce bias [36]. A two-step, mechanism-aware approach is recommended:

- Classify the likely missingness mechanism for each missing value using a classifier (e.g., Random Forest) [36].

- Impute each value using a method specifically suited to its predicted mechanism. For instance, Random Forest imputation often works well for MAR/MCAR data, while methods like Quantile Regression Imputation of Left-Censored Data (QRILC) are better for MNAR [36].

Q4: How does the missing data pattern affect my choice of imputation method? The pattern (sporadic, block, etc.) and the rate of missingness significantly impact imputation performance. As the missing rate increases, the accuracy of any imputation method decreases [37]. Research in other fields with large-scale sensor data, like Tunnel Boring Machine monitoring, shows that sporadic missingness is the easiest to impute accurately, while block missingness (consecutive missing values) is the most challenging [37].

Troubleshooting Guides

Issue 1: Bayesian Optimization Failing Due to Experimental Errors

Problem: Your autonomous materials growth platform (e.g., a system like CRESt) cannot proceed with Bayesian Optimization because some experiments fail to yield a measurable output, creating missing data.

Solution: Implement the Floor Padding Trick within your BO routine [1].

Step-by-Step Protocol:

- Define Failure: Clearly define what constitutes an experimental "failure" (e.g., no film formation, resistance outside measurable range).

- Initialize: Begin the BO process with a small set of initial, randomly selected growth parameters.

- Run and Evaluate: Execute the experiment and attempt to measure the target property (e.g., Residual Resistivity Ratio - RRR).

- Impute on Failure: If the experiment is a failure and no measurement is possible, assign it the value `y_failed = min(Y_observed)`, where `Y_observed` is the list of all successfully measured outputs from previous runs [1].

- Update Model: Proceed by updating your Gaussian Process model with the parameter set and the value (`y_failed` if failed, or the actual measurement if successful).

- Iterate: Allow the BO algorithm to suggest the next parameter set based on the updated model, which now actively avoids regions associated with failure.

Figure: Workflow of the Bayesian Optimization process with integrated failure handling.

Issue 2: Poor Imputation Accuracy in Multi-Modal Datasets

Problem: After imputing missing values in your dataset, the quality of downstream analyses (e.g., property prediction) remains poor, likely because a single imputation method was applied to a dataset with mixed missing mechanisms.

Solution: Adopt a Mechanism-Aware Imputation (MAI) pipeline [36].

Step-by-Step Protocol:

- Data Preparation: Start with your incomplete dataset. Extract a complete subset of data (with no missing values) to train the classifier.

- Generate Training Labels: On this complete subset, algorithmically impose missing values with known mechanisms (e.g., using a Mixed-Missingness algorithm) to create a ground-truth training set [36].

- Train a Classifier: Train a Random Forest classifier on the artificially generated data from Step 2 to predict whether a missing value is `MAR/MCAR` or `MNAR` based on observed patterns in the data [36].

- Classify Real Missingness: Apply the trained classifier to your original, incomplete dataset to predict the missing mechanism for each true missing value.

- Targeted Imputation: Impute the values based on their classified mechanism.

- Proceed with Analysis: Use the fully imputed dataset for your subsequent materials informatics tasks.
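
The label-generation idea in Step 2 can be illustrated with a simplified stand-in. The sketch below is not the Mixed-Missingness algorithm from [36]: it masks a single complete feature by left-censoring values below a quantile (labeled MNAR) and then dropping some of the remaining values at random (labeled MCAR). The function name, rates, and label strings are assumptions.

```python
import random

def impose_missingness(values, mcar_rate=0.1, mnar_quantile=0.2, seed=0):
    """Mask a complete feature with known mechanisms and return the labels.

    Values below the chosen quantile are censored (MNAR, as with a detection
    limit); the remaining values are dropped at random with mcar_rate (MCAR).
    """
    rng = random.Random(seed)
    cutoff = sorted(values)[int(mnar_quantile * len(values))]
    masked, labels = [], []
    for v in values:
        if v < cutoff:
            masked.append(None)
            labels.append("MNAR")
        elif rng.random() < mcar_rate:
            masked.append(None)
            labels.append("MCAR")
        else:
            masked.append(v)
            labels.append("observed")
    return masked, labels

# Hypothetical complete feature, e.g. signal intensities from 100 samples
values = [float(v) for v in range(100)]
masked, labels = impose_missingness(values, mnar_quantile=0.25)
print(labels.count("MNAR"), labels.count("MCAR"), labels.count("observed"))
```

The resulting `(masked, labels)` pairs give a ground truth on which a mechanism classifier can be trained, since the true mechanism behind every masked entry is known by construction.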

Figure: Two-step mechanism-aware imputation workflow.

Comparative Data Tables

Table 1: Comparison of Common Imputation Methods

| Imputation Method | Best Suited For | Key Advantages | Key Limitations |

|---|---|---|---|

| K-Nearest Neighbors (KNN) | MAR, MCAR, Sporadic Patterns [37] [36] | Simple, model-free, can capture local data structure [37]. | Computationally heavy for large datasets, sensitive to distance metric. |

| Random Forest | MAR, MCAR [36] | Robust to outliers and non-linear relationships, requires no data scaling [36]. | Can be computationally intensive, may overfit without proper tuning. |

| Bayesian Optimization with Floor Padding | MNAR (Experimental Failures) [1] | Actively guides parameter search away from failures, integrated into optimization loop [1]. | Specific to optimization contexts, not for general data analysis. |

| Quantile Regression (QRILC) | MNAR (e.g., Left-censored data) [36] | Specifically designed for data below a detection limit, imputes realistic low values [36]. | Assumes a specific (log-normal) distribution for the data. |

| Mechanism-Aware Imputation (MAI) | Mixed MAR/MCAR/MNAR [36] | Tailors the method to the mechanism, can combine advantages of multiple methods [36]. | More complex two-step process, requires a complete subset for training. |

Table 2: Impact of Missing Data Patterns on Imputation

| Missing Pattern | Description | Imputation Challenge Level | Recommended Strategy |

|---|---|---|---|

| Sporadic | Isolated, random missing values. | Low [37] | Most standard methods (KNN, mean/mode) work well [37]. |

| Block | Consecutive missing values in a sequence. | High [37] | Time-series specific methods (e.g., last observation carried forward, splines) or advanced ML models [37]. |

| Mixed | A combination of sporadic and block patterns. | Medium [37] | A robust method like Random Forest or a mechanism-aware approach is often necessary [37] [36]. |

The Scientist's Toolkit: Research Reagent Solutions

- Autonomous Robotic Platform: A system for high-throughput synthesis (e.g., ML-MBE). Function: Executes materials growth experiments based on AI-suggested parameters, generating consistent data while operating in failure-prone regions [1].

- Bayesian Optimization Software: A library (e.g., in Python) for sequential model-based optimization. Function: Guides the experimental search for optimal materials by suggesting the next best parameters to test, even when handling failed runs [1].

- Multi-Modal Data Fusion Platform: A system like MIT's CRESt. Function: Integrates diverse data sources (literature, images, compositions) into a unified model, making the problem of missing data across modalities a central concern [34].

- Mechanism-Aware Imputation Pipeline: Custom code implementing a two-step classification-and-imputation process. Function: Systematically addresses the mixed missing data problem, leading to less biased and higher-quality datasets for analysis [36].

Frequently Asked Questions (FAQs)

1. What is block-wise missing data and how does it differ from randomly missing values? Block-wise missing data occurs when entire blocks of data from specific omics sources are absent for certain samples [15] [38]. For example, in a multi-omics study, some patients might have transcriptomics data but completely lack proteomics or metabolomics measurements [15]. This differs from randomly missing values, which are scattered sporadically throughout the dataset. The key distinction is structural pattern: block-wise missingness creates systematic, sample-wide absences of entire data modalities rather than random, individual value omissions [38].

2. When should I use the profile-based approach versus traditional imputation methods? The profile-based approach is particularly advantageous when:

- Missing data affects entire omics sources for subsets of samples [15] [38]

- The missingness mechanism is unknown or cannot be reliably modeled [15]

- You want to avoid potential biases introduced by imputation [15] [10]

Traditional imputation methods (like MICE, kNN, or missForest) work better for randomly missing values but risk introducing bias when applied to block-wise missing patterns, as they assume missingness occurs randomly rather than in structured blocks [39] [40].

3. How does the two-step optimization maintain model performance with incomplete data? The two-step optimization procedure maintains performance by first learning distinct models for each available data source independently, then effectively merging these learned models through a second optimization stage [15] [38]. This approach leverages all available complete data blocks without requiring imputation, and uses regularization and constraint techniques at each stage to prevent overfitting and incorporate prior knowledge [38]. The method preserves statistical power by utilizing all available information from different sample subgroups [15].

4. What are the computational requirements for implementing this approach? While specific computational requirements aren't detailed in the literature, the method involves solving multiple optimization problems across data profiles. The complexity scales with the number of profiles (up to 2^S - 1 for S data sources) and the dimensionality of each omics dataset [15] [38]. For large multi-omics studies, adequate memory for handling multiple high-dimensional datasets and efficient optimization algorithms are essential. The associated R package 'bwm' provides an implemented framework for practical application [15].

Troubleshooting Guides

Problem: Poor Model Performance with High Percentage of Missing Blocks

Symptoms:

- Accuracy metrics declining as more data sources contain missing blocks

- Inconsistent feature selection across different missing data scenarios

- High variance in performance metrics across cross-validation folds

Solutions:

- Profile Compatibility Analysis: Ensure you're correctly identifying all possible profiles in your dataset. For S data sources, you should have up to 2^S - 1 profiles [15] [38].

- Regularization Tuning: Increase regularization parameters to prevent overfitting to specific profiles with small sample sizes [38].

- Source Weight Examination: Check the learned α vectors across profiles. Sources with consistently low weights across profiles may need to be excluded [15].

Verification: After implementation, performance decline should be minimal even with 30-50% of samples having block-wise missingness. Studies show the method maintains 73-81% accuracy in multi-class cancer classification and 75% correlation in regression tasks under various missing data scenarios [15].

Problem: Implementation Errors in Profile Assignment

Symptoms:

- Incorrect number of profiles generated

- Samples assigned to wrong profiles

- Complete data blocks not properly formed

Solutions:

- Binary Indicator Validation: Verify the binary indicator vector I[1,...,S] for each sample, where I(i)=1 if the i-th data source is available, 0 otherwise [15].

- Profile Decimal Conversion Check: Confirm correct conversion from binary to decimal for profile assignment, reading source 1 as the most significant bit. For example, with 3 data sources:

  - Sources 1 and 2 available: [1,1,0] = binary 110 = 6 (profile 6)

  - All sources available: [1,1,1] = binary 111 = 7 (profile 7) [15]

- Compatible Profile Grouping: Ensure samples are grouped with source-compatible profiles as shown in the table below [15]:

Table: Complete Data Blocks for S=3 Data Sources

| Complete Data Block | Compatible Profiles | Available Sources |

|---|---|---|

| Profile 7 | 7 | 1, 2, 3 |

| Profile 6 | 6, 7 | 1, 2 |

| Profile 5 | 5, 7 | 1, 3 |

| Profile 3 | 3, 7 | 2, 3 |
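
The checks above are easy to automate. The sketch below is one possible way to do it in plain Python; the function names are hypothetical and it does not use the bwm package. It converts an indicator vector to its profile number, then uses a bitwise test to list which profiles are compatible with a given profile, which reproduces the rows of the table.

```python
def profile_id(indicator):
    """Read a 0/1 availability vector as a binary number, e.g. [1, 1, 0] -> 6."""
    value = 0
    for bit in indicator:
        if bit not in (0, 1):
            raise ValueError(f"indicator entries must be 0 or 1, got {bit!r}")
        value = value * 2 + bit
    return value


def available_sources(profile, n_sources):
    """List the 1-based source numbers that are present in a profile."""
    return [s for s in range(1, n_sources + 1) if profile & (1 << (n_sources - s))]


def compatible_profiles(profile, n_sources):
    """Profiles that contain at least every source present in `profile`."""
    return [p for p in range(1, 2 ** n_sources) if p & profile == profile]


for m in (7, 6, 5, 3):
    print(m, compatible_profiles(m, 3), available_sources(m, 3))
```

If a sample ends up in a profile that this check does not list as compatible with its block, the indicator vector or the bit order is the first place to look.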

Problem: Convergence Issues in Two-Step Optimization

Symptoms:

- Optimization algorithm failing to converge

- Oscillating objective function values

- Inconsistent results across different initializations

Solutions:

- Gradient Expression Verification: Check the gradient expressions of the loss functions, particularly for multi-class classification scenarios [15].

- Constraint Implementation: Ensure the constraints on parameters are properly implemented, particularly the zero constraints for missing sources in each profile [15].

- Regularization Parameters: Adjust regularization parameters to improve convergence. Start with stronger regularization and gradually decrease if underfitting occurs [38].

Verification: The optimization should converge to a solution where the β coefficients for each data source remain consistent across profiles, while the α weights vary appropriately by profile [15].

Experimental Protocols

Protocol 1: Implementing Profile-Based Data Organization

Purpose: To correctly organize multi-omics data with block-wise missingness into profiles for analysis [15] [38].

Materials:

- Multi-omics datasets (e.g., transcriptomics, proteomics, metabolomics)

- Computational environment (R recommended with bwm package)

- Data matrix integration framework

Procedure:

- Data Integration: Combine all omics datasets, maintaining sample identifiers across sources.

- Availability Assessment: For each sample, create a binary indicator vector I[1,...,S] where I(i)=1 if the i-th data source is available, 0 otherwise [15].

- Profile Assignment: Convert each sample's binary vector to a decimal profile value.

- Profile Vector Creation: Compile all unique profile values into the vector pf = (m_1, ..., m_r), where r is the number of profiles [15].

- Complete Block Formation: For each profile m, group samples with profile m together with samples that have complete data for all sources contained in profile m [15].

Validation: Verify that for profile m, all samples in the complete data block have values for at least the sources specified in profile m.
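
As a rough illustration of Protocol 1, the sketch below walks through the assignment, profile-vector, block-formation, and validation steps in plain Python. It is an assumption-laden example rather than the bwm implementation: the input format (a dictionary of sample identifiers mapped to 0/1 availability vectors) and the function names are invented for this illustration, and samples with no available source are simply skipped.

```python
def build_complete_blocks(availability):
    """Return (pf, blocks): the sorted profile vector and, for each profile m,
    the samples that have data for every source contained in m."""
    profile_of = {}
    for sample_id, indicator in availability.items():
        # Profile assignment: read the 0/1 vector as a binary number.
        profile = int("".join(str(bit) for bit in indicator), 2)
        if profile:  # a sample with no sources cannot join any block
            profile_of[sample_id] = profile
    pf = sorted(set(profile_of.values()))
    blocks = {
        m: sorted(s for s, p in profile_of.items() if p & m == m)
        for m in pf
    }
    return pf, blocks


def validate_blocks(availability, blocks):
    """Check that every sample in block m has all sources specified by m."""
    n_sources = len(next(iter(availability.values())))
    for m, members in blocks.items():
        required = [i for i in range(n_sources) if m & (1 << (n_sources - 1 - i))]
        for sample_id in members:
            if not all(availability[sample_id][i] == 1 for i in required):
                return False
    return True


availability = {
    "s1": [1, 1, 1],
    "s2": [1, 1, 0],
    "s3": [1, 0, 1],
    "s4": [0, 1, 1],
    "s5": [1, 1, 0],
}
pf, blocks = build_complete_blocks(availability)
print(pf)         # [3, 5, 6, 7]
print(blocks[6])  # ['s1', 's2', 's5']
```

Note that the fully observed sample `s1` appears in every block, which is what lets small profiles borrow strength from complete samples.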

Protocol 2: Two-Step Optimization Implementation

Purpose: To implement the two-step optimization procedure for learning models from data with block-wise missingness [15] [38].

Materials:

- Profile-organized data from Protocol 1

- R with bwm package installed

- Computational resources adequate for optimization problems

Procedure:

- First Stage - Source-Specific Models: Learn a distinct model β_i for each data source i using only samples with complete data for that source [38].

- Second Stage - Model Integration: Learn the combining vectors α_m that integrate the source-specific models for each profile m [15].

- Apply Constraints: Set α_{m,i} = 0 for every source i that is missing in profile m [15].

- Regularization: Apply appropriate regularization at each stage to prevent overfitting and handle high dimensionality [38].

Validation: Check that the final model achieves performance metrics comparable to published results: 73-81% accuracy for classification tasks and 75% correlation for regression tasks under block-wise missingness [15].
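
To make the two stages concrete, here is a deliberately simplified sketch in plain Python. It is not the bwm algorithm: each source is reduced to a single numeric feature, both stages use closed-form ridge regression, and the data layout, the function names, and the `lam` penalty are assumptions chosen for the example. It does follow the structure described above: one coefficient per source, one combining vector per profile, and weights fixed at zero for missing sources.

```python
def solve(a, b):
    """Solve a small dense linear system a x = b by Gauss-Jordan elimination."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                f = m[r][col] / m[col][col]
                m[r] = [v - f * p for v, p in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def profile_id(available, n_sources):
    """Decimal profile number; source 1 is the most significant bit."""
    return sum(1 << (n_sources - s) for s in available)


def two_step_fit(samples, n_sources, lam=0.0):
    sources = range(1, n_sources + 1)
    # Stage 1: one model per source, using every sample that has that source.
    beta = {}
    for s in sources:
        rows = [r for r in samples if s in r["x"]]
        sxy = sum(r["x"][s] * r["y"] for r in rows)
        sxx = sum(r["x"][s] ** 2 for r in rows)
        beta[s] = sxy / (sxx + lam)
    # Stage 2: one combining vector per profile, fitted on its complete block.
    alpha = {}
    for avail in {frozenset(r["x"]) for r in samples}:
        cols = sorted(avail)
        block = [r for r in samples if avail <= set(r["x"])]
        z = [[beta[s] * r["x"][s] for s in cols] for r in block]
        y = [r["y"] for r in block]
        k = len(cols)
        gram = [[sum(row[i] * row[j] for row in z) + (lam if i == j else 0.0)
                 for j in range(k)] for i in range(k)]
        rhs = [sum(row[i] * t for row, t in zip(z, y)) for i in range(k)]
        weights = dict(zip(cols, solve(gram, rhs)))
        # Constraint: the weight of a source missing from the profile is zero.
        alpha[profile_id(avail, n_sources)] = [weights.get(s, 0.0) for s in sources]
    return beta, alpha


def predict(sample, beta, alpha, n_sources):
    a = alpha[profile_id(sample["x"], n_sources)]
    return sum(a[s - 1] * beta[s] * sample["x"][s] for s in sample["x"])


# Toy data generated from y = 2*x1 + 3*x2; the last two samples lack source 2.
samples = [
    {"x": {1: 1.0, 2: 1.0}, "y": 5.0},
    {"x": {1: -1.0, 2: 1.0}, "y": 1.0},
    {"x": {1: 1.0, 2: -1.0}, "y": -1.0},
    {"x": {1: -1.0, 2: -1.0}, "y": -5.0},
    {"x": {1: 2.0}, "y": 4.0},
    {"x": {1: -2.0}, "y": -4.0},
]
beta, alpha = two_step_fit(samples, n_sources=2)
print(beta, alpha)
```

On this toy data the β values are shared by both profiles while each profile gets its own α vector, which mirrors the verification criterion given in the troubleshooting guide. With real, correlated, high-dimensional sources a nonzero penalty would be needed to keep both stages well conditioned.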

Quantitative Performance Data

Table: Performance of Two-Step Method Under Different Missing Data Scenarios

| Application Domain | Task Type | Performance Metric | Performance Range | Missing Data Conditions |

|---|---|---|---|---|

| Breast Cancer Subtyping | Multi-class Classification | Accuracy | 73% - 81% | Various block-wise missing scenarios [15] |

| Exposome Data Analysis | Regression | Correlation (true vs predicted) | ~75% | Multiple missing data patterns [15] |

| Binary Classification | Binary Classification | Accuracy | 86% - 92% | Block-wise missing across omics [38] |

| Binary Classification | Binary Classification | F1 Score | 68% - 79% | Block-wise missing across omics [38] |

Workflow Visualization

Profile-Based Two-Step Optimization Workflow

Research Reagent Solutions

Table: Essential Computational Tools for Handling Block-Wise Missing Data

| Tool/Resource | Type | Function | Implementation Notes |

|---|---|---|---|

| bwm R Package | Software Package | Implements two-step optimization for block-wise missing data | Supports binary, continuous, and multi-class response types [15] |

| Profile Assignment Algorithm | Computational Method | Organizes samples into profiles based on data availability | Core component for handling block-wise missing structure [15] [38] |

| Regularization Framework | Mathematical Method | Prevents overfitting in high-dimensional settings | Applied at both stages of optimization [38] |

| Constraint-Based Optimization | Mathematical Method | Ensures proper handling of missing sources in profiles | Sets α_{m,i} = 0 for every source i that is missing in profile m [15] |

Active Learning and AutoML for Data-Efficient Experimentation in Small-Sample Regimes

Frequently Asked Questions (FAQs)

Q1: What are the main advantages of combining Active Learning with AutoML for materials research?

Combining these approaches creates a powerful, automated pipeline for data-efficient research. AutoML automates the process of model selection and hyperparameter tuning, which is crucial when you lack extensive machine learning expertise. Active Learning strategically selects the most informative data points to label next, minimizing experimental costs. Used together, they significantly reduce the volume of labeled data required to build robust predictive models for material properties, which is ideal when synthesis and characterization are expensive and time-consuming [41] [42].

Q2: My dataset has fewer than 1,000 samples. Is AutoML still a viable option?

Yes, recent benchmarks demonstrate that AutoML is highly competitive with manual model optimization, even on small datasets with little training time. Studies focusing on small-sample tabular data common in materials engineering have shown that AutoML can match or even surpass the performance of manually tuned models from scientific publications on the same datasets [43]. The key is to ensure proper data sampling techniques, like nested cross-validation, to achieve reliable and trustworthy results.

Q3: Which Active Learning query strategies are most effective early in the experimentation cycle?

In the early, data-scarce stages of an experiment, uncertainty-based and diversity-based query strategies tend to perform best. A 2025 benchmark study found that uncertainty-driven strategies (like LCMD and Tree-based-R) and diversity-hybrid strategies (like RD-GS) clearly outperformed geometry-only heuristics and random sampling. These methods are more effective at selecting informative samples that rapidly improve model accuracy when the initial labeled set is very small [41].

Q4: I'm encountering library dependency errors with my AutoML framework. How can I resolve this?

Version conflicts are a common issue in AutoML, and the fix depends on your SDK version. For instance, if you are using an AzureML SDK version greater than 1.13.0, you may need to pin specific versions of pandas and scikit-learn.

If your version is less than or equal to 1.12.0, a different pair of pinned versions is required. Always check your framework's documentation for the exact dependency requirements [44].

Troubleshooting Guides

Issue 1: Poor AutoML Performance on Small Datasets

Problem: Your AutoML model is underperforming or is unreliable when trained on a small dataset.

| Solution Step | Description | Key Considerations |

|---|---|---|

| Implement Nested Cross-Validation (NCV) | Tune hyperparameters in inner folds and score the tuned model on outer folds it never saw, which gives a less optimistic performance estimate than a single split. | Crucial for small datasets to ensure reliability and model robustness [43]. |

| Verify Data Splits | Ensure your train/test split is representative. Consider repeated cross-validation. | Data sampling is of crucial importance for reliable results with limited data [43]. |

| Leverage Multiple AutoML Frameworks | Combine results from different AutoML tools to potentially enhance performance. | Different frameworks may find better solutions for different datasets [43]. |
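
For readers unfamiliar with nested cross-validation, the sketch below shows the mechanics in plain Python. It is an illustration under stated assumptions, not an AutoML framework: a one-feature ridge regression stands in for the model search, the candidate penalties in `lams` stand in for the hyperparameter space, and all function names are invented for the example. The point is the structure: the inner loop chooses the hyperparameter using training data only, and the outer loop scores that choice on data it never touched.

```python
import random


def k_folds(n, k, seed):
    """Shuffle indices 0..n-1 and deal them into k roughly equal folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]


def fit_ridge(xs, ys, lam):
    """Closed-form one-feature ridge fit for y = beta * x."""
    return sum(x * y for x, y in zip(xs, ys)) / (sum(x * x for x in xs) + lam)


def mse(beta, xs, ys):
    return sum((y - beta * x) ** 2 for x, y in zip(xs, ys)) / len(xs)


def split(xs, ys, held_out):
    """Return train x, train y, held-out x, held-out y."""
    held = set(held_out)
    train = [i for i in range(len(xs)) if i not in held]
    return ([xs[i] for i in train], [ys[i] for i in train],
            [xs[i] for i in held_out], [ys[i] for i in held_out])


def inner_cv_error(xs, ys, lam, k, seed):
    errors = []
    for fold in k_folds(len(xs), k, seed):
        tx, ty, vx, vy = split(xs, ys, fold)
        errors.append(mse(fit_ridge(tx, ty, lam), vx, vy))
    return sum(errors) / len(errors)


def nested_cv(xs, ys, lams, outer_k=5, inner_k=3, seed=0):
    """Return one held-out error per outer fold."""
    outer_errors = []
    for fold in k_folds(len(xs), outer_k, seed):
        tx, ty, hx, hy = split(xs, ys, fold)
        # Inner loop: pick the penalty using the outer-training data only.
        best = min(lams, key=lambda lam: inner_cv_error(tx, ty, lam, inner_k, seed + 1))
        # Outer loop: refit with the chosen penalty and score on unseen data.
        outer_errors.append(mse(fit_ridge(tx, ty, best), hx, hy))
    return outer_errors


xs = [float(i) for i in range(1, 21)]
ys = [2.0 * x for x in xs]
print(nested_cv(xs, ys, lams=[0.0, 10.0, 100.0]))
```

The spread of the outer-fold errors is as informative as their mean on small datasets: a wide spread suggests the performance estimate depends heavily on which samples were held out.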

Issue 2: Active Learning Yields Diminishing Returns

Problem: Initial rounds of Active Learning improve the model, but subsequent samples provide less and less benefit.

| Solution Step | Description | Key Considerations |

|---|---|---|

| Understand Convergence | Recognize that this is expected behavior. As the labeled set grows, the performance gap between AL strategies and random sampling narrows. | The benchmark shows all methods converge as the labeled set grows [41]. |

| Re-evaluate Strategy | The optimal query strategy may change as your dataset evolves. An uncertainty-based strategy might be best early on, while a diversity-based method could help later. | Early leaders (e.g., LCMD, RD-GS) may not maintain dominance in later acquisition stages [41]. |

| Set a Stopping Criterion | Define a performance threshold or budget limit to stop the AL process once significant improvements are no longer observed. | Prevents wasting resources on labeling samples that offer minimal performance gains [41]. |
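
One simple way to encode a stopping criterion is a plateau test on the validation error recorded after each acquisition round. The sketch below is a hypothetical helper, not taken from the benchmark: the function name, the `patience` window, the `min_gain` threshold, and the optional `budget` are assumptions to tune for your own campaign.

```python
def should_stop(errors, patience=3, min_gain=0.01, budget=None):
    """Decide whether to end the Active Learning loop.

    errors   -- validation error after each round (lower is better)
    patience -- number of most recent rounds to judge
    min_gain -- smallest improvement over that window that counts as progress
    budget   -- optional hard cap on the number of rounds
    """
    if budget is not None and len(errors) >= budget:
        return True
    if len(errors) <= patience:
        return False  # not enough history to judge a plateau
    best_before = min(errors[:-patience])
    best_recent = min(errors[-patience:])
    return best_before - best_recent < min_gain


print(should_stop([1.0, 0.6, 0.4, 0.395, 0.394, 0.393]))  # True
print(should_stop([1.0, 0.8, 0.6, 0.4]))                  # False
```

Comparing the best recent error against the best earlier error, rather than consecutive rounds, keeps a single noisy round from ending the campaign early.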

Issue 3: Dependency and Installation Failures

Problem: Errors such as ImportError or ModuleNotFoundError occur when setting up or running your AutoML environment.

| Solution Step | Description | Key Considerations |

|---|---|---|

| Uninstall Previous Versions | When upgrading an AutoML SDK, completely uninstall the previous version before installing the new one. | For example, run pip uninstall azureml-train-automl before installing a new version to avoid conflicts [44]. |

| Check Version Compatibility | Confirm that all package versions are compatible with your AutoML SDK version. | This is a common source of ImportError and AttributeError issues [44]. |

| Use a Clean Conda Environment | Create a fresh conda environment to isolate your AutoML dependencies from other projects. | Helps avoid conflicts with pre-existing packages on your system [44]. |
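
Before changing anything, it helps to record which versions are actually installed in the active environment. The short sketch below uses only the Python standard library (`importlib.metadata`, available from Python 3.8); the helper name and the package list are illustrative assumptions, so substitute the packages your framework's documentation names.

```python
from importlib.metadata import PackageNotFoundError, version


def installed_versions(packages):
    """Map each distribution name to its installed version, or None if absent."""
    found = {}
    for name in packages:
        try:
            found[name] = version(name)
        except PackageNotFoundError:
            found[name] = None
    return found


for name, ver in installed_versions(
    ["pandas", "scikit-learn", "azureml-train-automl"]
).items():
    print(f"{name}: {ver if ver is not None else 'not installed'}")
```

Running this inside the environment that raises the error, rather than in a base environment, avoids chasing a conflict that exists somewhere else.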

Experimental Protocols & Workflows

Protocol: Iterative Pool-Based Active Learning with AutoML

This protocol details the core methodology for data-efficient experimentation, as benchmarked in recent literature [41].

1. Initialization: Start with a small initial labeled dataset \(L = \{(x_i, y_i)\}_{i=1}^{l}\) and a large pool of unlabeled data \(U = \{x_i\}_{i=l+1}^{n}\).

2. Model Training: Train an AutoML model on the labeled set \(L\). The AutoML system automatically handles model selection, hyperparameter tuning, and feature engineering.

3. Querying: Use an Active Learning query strategy (see the Core Active Learning Query Strategies table below) to select the most informative sample(s) \(x^*\) from the unlabeled pool \(U\).

4. Annotation: Obtain the target value \(y^*\) for the selected sample(s) through human annotation (e.g., experimental synthesis and characterization).

5. Update Sets: Expand the labeled set, \(L = L \cup \{(x^*, y^*)\}\), and remove the sampled instance(s) from the unlabeled pool, \(U = U \setminus \{x^*\}\).

6. Iteration: Repeat steps 2-5 until a predefined stopping criterion is met (e.g., performance plateau, budget exhaustion).
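
The protocol above maps onto a short loop. The following sketch is a toy version in plain Python, with several stand-ins that are assumptions rather than the benchmarked setup: a least-squares line replaces the AutoML model, the spread of predictions from bootstrap-resampled fits serves as a generic uncertainty score (it is not LCMD or Tree-based-R), `oracle` is a hypothetical function that plays the role of the experiment, and a fixed budget is the stopping criterion.

```python
import random


def fit_line(points):
    """Least-squares line through (x, y) pairs; stands in for the AutoML model."""
    n = len(points)
    mx = sum(x for x, _ in points) / n
    my = sum(y for _, y in points) / n
    sxx = sum((x - mx) ** 2 for x, _ in points)
    slope = sum((x - mx) * (y - my) for x, y in points) / sxx if sxx else 0.0
    return lambda x: my + slope * (x - mx)


def committee_variance(labeled, x, rng, n_models=10):
    """Uncertainty proxy: variance of predictions from bootstrap-resampled fits."""
    preds = []
    for _ in range(n_models):
        resample = [rng.choice(labeled) for _ in labeled]
        preds.append(fit_line(resample)(x))
    mean = sum(preds) / n_models
    return sum((p - mean) ** 2 for p in preds) / n_models


def active_learning(labeled, pool, oracle, budget, seed=0):
    rng = random.Random(seed)
    labeled, pool = list(labeled), list(pool)      # step 1: initial L and U
    for _ in range(budget):                        # step 6: budget-based stop
        if not pool:
            break
        # Step 2 (model training) happens inside the committee and at the end.
        x_star = max(pool, key=lambda x: committee_variance(labeled, x, rng))  # step 3
        y_star = oracle(x_star)                    # step 4: run the experiment
        labeled.append((x_star, y_star))           # step 5: L gains (x*, y*)
        pool.remove(x_star)                        #         U loses x*
    return fit_line(labeled), labeled, pool


model, labeled, pool = active_learning(
    labeled=[(0.0, 1.0), (1.0, 4.0)],
    pool=[2.0, 3.0, 4.0, 5.0],
    oracle=lambda x: 3.0 * x + 1.0,  # hypothetical ground truth
    budget=3,
)
print(len(labeled), len(pool))
```

In a real campaign the oracle call is the expensive step, so the query function is where most of the design effort belongs; the loop itself rarely needs to be more complicated than this.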

Core Active Learning Query Strategies

The table below summarizes key Active Learning strategies evaluated for regression tasks within AutoML pipelines [41] [45].

| Strategy Category | Example Methods | Core Principle | Best Use-Case |

|---|---|---|---|

| Uncertainty Sampling | LCMD, Tree-based-R | Selects data points where the model's prediction is most uncertain. | Early-stage learning when the model is most unsure about the data distribution. |

| Diversity Sampling | GSx, EGAL | Selects a set of data points that are most diverse or representative of the overall unlabeled pool. | Ensuring the model sees a broad range of examples, preventing cluster bias. |

| Hybrid Methods | RD-GS | Combines uncertainty and diversity principles to select points that are both informative and representative. | Often outperforms single-principle methods, balancing exploration and exploitation. |
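
As a concrete example of a diversity criterion, the sketch below implements the basic idea behind greedy sampling on the inputs (GSx): choose the unlabeled candidate whose nearest labeled neighbor is farthest away in feature space. It is a minimal illustration under the assumptions that features are already scaled comparably and that Euclidean distance is appropriate; the function name is hypothetical and the benchmarked implementations may differ in detail.

```python
import math


def gsx_select(labeled_x, pool_x):
    """Return the pool point with the largest distance to its nearest labeled point."""
    return max(pool_x, key=lambda x: min(math.dist(x, seen) for seen in labeled_x))


labeled_x = [(0.0, 0.0), (1.0, 0.0)]
pool_x = [(0.5, 0.0), (3.0, 0.0), (1.0, 1.0)]
print(gsx_select(labeled_x, pool_x))  # (3.0, 0.0)
```

Because this rule never looks at model predictions, it can be applied before any model has been trained, which is one reason diversity-based selection is attractive when the initial labeled set is very small.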

Workflow: Active Learning with AutoML for Materials Discovery

The following diagram illustrates the iterative cycle of integrating Active Learning with an AutoML framework.

Active Learning and AutoML Integration

The Scientist's Toolkit: Key Research Reagents & Solutions

This table details essential computational "reagents" for setting up a data-efficient materials discovery pipeline.

| Item | Function / Description | Relevance to Small-Sample Regimes |

|---|---|---|