Beyond the Molecule: How BERT is Revolutionizing Materials and Drug Property Prediction

This article explores the transformative application of BERT-based architectures in predicting materials and molecular properties, a critical task in drug development and materials science.


Abstract

This article explores the transformative application of BERT-based architectures in predicting materials and molecular properties, a critical task in drug development and materials science. We first establish the foundational principles of adapting transformer models from natural language to chemical representations like SMILES. The discussion then progresses to methodological implementations, including multitask learning and cross-modal knowledge transfer, which address the pervasive challenge of data scarcity. Further, we delve into optimization strategies such as advanced positional embeddings and active learning integration to enhance model robustness and data efficiency. Finally, the article provides a comprehensive validation of BERT's performance against state-of-the-art graph neural networks and other traditional methods across diverse benchmarks, highlighting its superior accuracy and interpretability. This guide is tailored for researchers and professionals seeking to leverage cutting-edge deep learning for accelerated discovery.

From Text to Toxicity: The Foundational Shift to BERT in Materials Informatics

The Data Scarcity Problem in Drug and Materials Discovery

In both drug and materials discovery, researchers face a fundamental constraint: the scarcity of high-quality, labeled experimental data. This data scarcity problem creates a significant bottleneck in the development cycle, traditionally requiring years of laboratory experimentation and enormous financial investment to generate sufficient data for reliable predictive modeling. In drug discovery, the issue manifests in limited toxicology labels and clinical trial outcomes, while materials science grapples with sparse measurements of complex properties across vast chemical spaces. The high cost and extended timelines associated with experimental data generation—often requiring $10-100 million and 10-20 years to bring a new material to market—make this scarcity a critical barrier to innovation [1] [2].

Within this challenging landscape, the BERT (Bidirectional Encoder Representations from Transformers) architecture and its derivatives have emerged as powerful frameworks for addressing data scarcity through representation learning and transfer learning. These models are first pretrained on large unlabeled datasets, where they learn fundamental chemical and structural patterns, and are then fine-tuned for specific prediction tasks with limited labeled examples. This approach has been applied successfully in both domains, decoupling representation learning from downstream task-specific fine-tuning to overcome data limitations [3] [4] [5]. This article compares BERT-based approaches to the data scarcity problem, examining their experimental methodologies, performance benchmarks, and practical implementations.

Comparative Analysis of BERT-Based Solutions

Table 1: Overview of BERT-Based Architectures for Property Prediction

| Model Name | Architectural Features | Pretraining Data | Target Applications | Key Innovations |
|---|---|---|---|---|
| Molecular BERT [3] | Transformer-based BERT | 1.26 million compounds | Drug toxicity prediction | Disentangles representation learning and uncertainty estimation |
| GEO-BERT [4] | Geometry-enhanced BERT | Molecular structures with 3D conformations | Drug discovery (DYRK1A inhibitors) | Incorporates atom-atom, bond-bond, and atom-bond positional relationships |
| Cross-modal BERT [5] | Multimodal BERT with knowledge transfer | Multimodal materials data | Composition-based materials property prediction | Aligns compositional and structural embeddings through implicit/explicit transfer |
| CrystalTransformer [6] | Transformer-generated atomic embeddings | Crystal structures from materials databases | Crystal property prediction | Generates universal atomic embeddings (ct-UAEs) transferable across properties |

Table 2: Experimental Performance Benchmarks of BERT Models

| Model | Dataset | Key Metrics | Performance Improvement | Data Efficiency Advantage |
|---|---|---|---|---|
| Molecular BERT [3] | Tox21, ClinTox | Toxic compound identification | Achieved equivalent performance with 50% fewer iterations vs conventional AL | Reliable uncertainty estimation with limited labeled data |
| GEO-BERT [4] | DYRK1A inhibitor screening | IC50 values (<1 μM) | Identified two potent novel inhibitors in prospective validation | Enhanced molecular characterization from 3D structural information |
| Cross-modal BERT [5] | LLM4Mat-Bench (32 tasks) | Mean Absolute Error (MAE) | State-of-the-art in 25 out of 32 cases, MAE reduced by 15.7% on average | Effective knowledge transfer from compositional to structural domains |
| CrystalTransformer [6] | Materials Project database | Formation energy prediction | 14% improvement in CGCNN, 18% in ALIGNN with ct-UAEs | Addresses data scarcity through transferable atomic fingerprints |

Experimental Protocols and Methodologies

Molecular BERT for Active Learning in Drug Discovery

The Molecular BERT framework combines Bayesian experimental design with active learning to address data scarcity in toxicity prediction [3]. The methodology begins with pretraining a transformer-based BERT model on 1.26 million unlabeled compounds, enabling the model to learn fundamental chemical representations without labeled data. For downstream tasks, the implementation starts from a small initial labeled set (100 molecules with balanced positive/negative instances) drawn from the Tox21 and ClinTox datasets, with the remaining training data forming an unlabeled pool set.

The experimental workflow applies scaffold splitting with an 80:20 ratio to create distinct training and testing sets, ensuring that molecules with similar core structures are segregated between sets to test generalization capability. The active learning cycle employs Bayesian acquisition functions to strategically select the most informative samples from the unlabeled pool:

- BALD (Bayesian Active Learning by Disagreement): Selects samples that maximize information gain about model parameters, calculated as

  $$\mathrm{BALD}(x) = H[y \mid x, D] - \mathbb{E}_{\phi \sim p(\phi \mid D)}\, H[y \mid x, \phi]$$

  where the first term represents total uncertainty and the second term captures aleatoric uncertainty [3].

- EPIG (Expected Predictive Information Gain): Prioritizes samples expected to most improve predictive performance on target distributions by explicitly reducing model output uncertainty.
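As a rough illustration of the BALD score, the sketch below estimates both entropy terms from a handful of sampled predictions for a single input. It assumes the posterior over parameters is approximated by a list of class-probability vectors (for example, one per stochastic forward pass); the function names `entropy` and `bald_score` are illustrative and not taken from [3].

```python
import math

def entropy(probs):
    """Shannon entropy (in nats) of a discrete distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)

def bald_score(sampled_probs):
    """Monte Carlo estimate of BALD for one input x.

    sampled_probs: list of class-probability vectors, one per sampled
    parameter setting (assumption: samples approximate p(phi | D)).
    """
    n_samples = len(sampled_probs)
    n_classes = len(sampled_probs[0])
    # Predictive distribution: average the sampled predictions.
    mean_probs = [
        sum(sample[c] for sample in sampled_probs) / n_samples
        for c in range(n_classes)
    ]
    total_uncertainty = entropy(mean_probs)  # H[y | x, D]
    expected_entropy = sum(entropy(s) for s in sampled_probs) / n_samples
    return total_uncertainty - expected_entropy

# Samples that agree carry almost no information about the parameters;
# samples that disagree give a clearly positive score.
agree = [[0.9, 0.1], [0.9, 0.1], [0.9, 0.1]]
disagree = [[0.9, 0.1], [0.1, 0.9], [0.5, 0.5]]
print(bald_score(agree), bald_score(disagree))
```

The score is high only when individual parameter samples are confident but contradict each other, which is why it targets uncertainty that more labels could reduce rather than noise inherent to the data.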

Through iterative cycles of sample selection, labeling, and model retraining, this approach achieves progressive improvement in predictive accuracy while minimizing labeling efforts. The disentanglement of representation learning (handled during pretraining) from uncertainty estimation (managed during active learning) enables reliable molecule selection despite limited initial labeled data [3].
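The select, label, retrain cycle can be sketched as a generic pool-based loop. This is a minimal skeleton under stated assumptions, not the pipeline from [3]: `acquire` stands in for an acquisition function such as BALD or EPIG, `oracle` stands in for the wet-lab experiment, and the retraining step is left as a comment.

```python
def active_learning_loop(pool, labeled, acquire, oracle, batch_size, rounds):
    """Pool-based active learning skeleton (hypothetical helper).

    pool:    iterable of unlabeled items
    labeled: dict mapping item -> label (the small initial labeled set)
    acquire: function(item, labeled) -> score, higher means more informative
    oracle:  function(item) -> label, a stand-in for the experiment
    """
    pool = list(pool)
    labeled = dict(labeled)
    for _ in range(rounds):
        if not pool:
            break
        # Rank the remaining pool by acquisition score and take the top batch.
        ranked = sorted(pool, key=lambda item: acquire(item, labeled), reverse=True)
        for item in ranked[:batch_size]:
            labeled[item] = oracle(item)
            pool.remove(item)
        # A real pipeline would fine-tune the pretrained model on `labeled` here.
    return labeled, pool

# Toy usage: items are numbers and "informativeness" is the distance to the
# nearest labeled point, a crude stand-in for model uncertainty.
def distance_to_labeled(item, labeled):
    return min(abs(item - seen) for seen in labeled)

final_labeled, remaining = active_learning_loop(
    pool=[1, 2, 3, 8, 9],
    labeled={0: False},
    acquire=distance_to_labeled,
    oracle=lambda x: x > 5,
    batch_size=1,
    rounds=2,
)
print(final_labeled, remaining)
```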

GEO-BERT Geometry-Enhanced Molecular Representation

GEO-BERT addresses data scarcity by incorporating three-dimensional structural information through a self-supervised learning framework [4]. The model enhances its ability to characterize molecular structures by introducing three distinct positional relationships derived from 3D conformations:

- Atom-atom relationships: Spatial proximities and interactions between atoms

- Bond-bond relationships: Geometric arrangements between chemical bonds

- Atom-bond relationships: Relative orientations between atoms and bonds

The experimental validation involved prospective studies for DYRK1A inhibitor discovery, where the model was tasked with identifying novel inhibitors from chemical libraries. The methodology included transfer learning from the pretrained GEO-BERT to specific property prediction tasks with limited labeled examples, demonstrating that geometric pretraining provides robust molecular representations that transfer effectively to low-data scenarios. The model's open-source implementation (https://github.com/drug-designer/GEO-BERT) has demonstrated practical utility in early-stage drug discovery, with experimental confirmation of two potent inhibitors (IC50 < 1 μM) identified through this approach [4].

Cross-Modal Knowledge Transfer for Materials Property Prediction

For materials discovery where crystal structure data is often scarce, cross-modal BERT approaches address data scarcity through knowledge transfer between different representations of materials [5]. The methodology implements two distinct transfer learning strategies:

- Implicit Transfer (imKT): Involves pretraining chemical language models on multimodal embeddings aligned with a foundation model trained on multiple materials modalities (crystal structure, density of electronic states, charge density, and textual descriptions).

- Explicit Transfer (exKT): Generates crystal structures from composition using a large language model (CrystaLLM) as a crystal structure predictor, followed by structure-aware predictors (graph neural networks) fine-tuned on the generated crystals.

The experimental protocol evaluated these approaches on the LLM4Mat-Bench and MatBench datasets, encompassing 32 different prediction tasks. For composition-based property prediction, the models were trained using masked language modeling objectives on stoichiometric formulas, then fine-tuned for specific property prediction tasks. This approach demonstrated particularly strong performance on band gap-related predictions, with MAE reductions of 15.2% on average compared to previous state-of-the-art models [5].

CrystalTransformer for Universal Atomic Embeddings

CrystalTransformer addresses data scarcity in crystal property prediction through transferable atomic embeddings called universal atomic embeddings (ct-UAEs) [6]. The methodology involves:

- Pretraining: The CrystalTransformer model learns atomic embeddings directly from chemical information in crystal databases without relying on predefined atomic attributes.

- Transfer Learning: The generated ct-UAEs are transferred to various graph neural network backends (CGCNN, MEGNET, ALIGNN) for specific property prediction tasks.

- Multi-task Learning: Embeddings are trained across multiple properties to enhance transferability across different prediction tasks.

The experimental validation used two Materials Project datasets (MP and MP\*) with standard splits (60,000 training, 5,000 validation, 4,239 testing for MP; 80%/10%/10% split for MP\*). Results demonstrated that ct-UAEs achieve significant accuracy improvements across multiple back-end models and properties, with the largest improvement (18% MAE reduction) observed in ALIGNN for formation energy prediction. The embeddings also showed excellent transferability across databases, with a 34% accuracy boost in MEGNET when applied to a hybrid perovskites database [6].

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Research Reagents and Computational Tools

| Reagent/Solution | Function | Application Context |
|---|---|---|
| Tox21 Dataset [3] | Provides ~8,000 compounds with 12 toxicity pathway measurements | Benchmark for computational toxicology models |
| ClinTox Dataset [3] | Contains 1,484 FDA-approved and failed clinical trial drugs | Drug safety profiling and toxicity prediction |
| Materials Project Database [6] | Computational repository with 134,243+ material structures and properties | Training and validation for materials property prediction |
| OMol25 Dataset [2] | Contains over 100 million DFT evaluations across ~83 million molecular systems | Training machine-learned interatomic potentials with near-DFT accuracy |
| Bayesian Active Learning Framework [3] | Strategically selects informative samples for labeling to minimize experimental costs | Active learning cycles for iterative model improvement |
| Universal Atomic Embeddings (ct-UAEs) [6] | Transferable atomic fingerprints capturing complex atomic features | Enhancing prediction accuracy across multiple GNN architectures |
| Cross-modal Alignment [5] | Bridges compositional and structural representations of materials | Property prediction for compounds without known crystal structures |

Technical Implementation and Workflow Integration

The technical implementation of BERT-based solutions for data scarcity follows a consistent pattern across drug and materials discovery domains. The fundamental approach involves decoupling representation learning from task-specific fine-tuning, which proves particularly valuable in low-data regimes. Implementation typically begins with self-supervised pretraining on large unlabeled datasets—1.26 million compounds for Molecular BERT or extensive crystal structure databases for CrystalTransformer—to learn fundamental chemical and structural patterns without expensive experimental labels [3] [6].

For specific property prediction tasks, the pretrained models undergo fine-tuning with limited labeled examples, leveraging the acquired representations to achieve robust performance with minimal task-specific data. In drug discovery applications, this process is often enhanced through Bayesian active learning frameworks that strategically select the most informative samples for experimental labeling, maximizing information gain while minimizing labeling costs [3]. The BALD and EPIG acquisition functions play crucial roles in this process, quantifying different aspects of uncertainty to guide sample selection.

In materials discovery, cross-modal knowledge transfer enables prediction for compounds without known structures by aligning compositional and structural representations [5]. The implicit transfer approach (imKT) aligns chemical language model embeddings with multimodal foundation models, while explicit transfer (exKT) generates plausible crystal structures from composition alone. This enables structure-aware property prediction even when experimental structure determinations are unavailable, significantly expanding the explorable chemical space.

BERT-based architectures have fundamentally transformed the approach to data scarcity in drug and materials discovery, demonstrating that representation learning and transfer learning can effectively mitigate the challenges of limited experimental data. The comparative analysis reveals that while architectural variants differ in their specific implementations—incorporating geometric information, cross-modal transfer, or universal embeddings—they share a common foundation of pretraining followed by task-specific adaptation.

The most successful approaches effectively disentangle representation learning from uncertainty estimation, enabling robust performance even with limited labeled examples. Molecular BERT's 50% reduction in required iterations for equivalent toxicity identification, GEO-BERT's experimental validation through novel inhibitor discovery, and CrystalTransformer's 14-18% accuracy improvements across multiple graph neural network architectures collectively demonstrate the transformative potential of these approaches [3] [4] [6].

As these technologies evolve, key challenges remain in improving interpretability, enhancing multimodal integration, and developing more sophisticated uncertainty quantification methods. However, the current state of BERT-based property prediction already offers powerful solutions to the data scarcity problem, enabling more efficient exploration of chemical and materials spaces while significantly reducing the experimental burden required for discovery and development.

In the realm of computational chemistry and drug discovery, the Simplified Molecular Input Line Entry System (SMILES) has established itself as a fundamental vocabulary for representing molecular structures. Much like natural language processing (NLP) models operate on sequences of words, chemical language models (CLMs) utilize SMILES strings as their foundational linguistic elements. These strings encode two-dimensional molecular information through a specialized vocabulary of characters ("tokens") that represent atoms, bonds, rings, and branches [7]. The SMILES notation functions as a specialized chemical grammar, with specific syntax rules governing how tokens can be combined to form valid molecular representations. This linguistic analogy extends to how researchers can apply NLP-inspired techniques—including data augmentation, token manipulation, and semantic analysis—to enhance model performance in critical tasks such as materials property prediction and drug-target interaction (DTI) forecasting [7] [8].

Within BERT-based architectures for materials property prediction, understanding SMILES as a vocabulary is not merely an abstract concept but a practical framework that drives methodological innovation. The representation of molecules as sequences enables the application of transformer-based models that can capture complex, long-range dependencies within molecular structures [9]. This approach has demonstrated significant potential in addressing one of the field's most pressing challenges: achieving accurate predictions with limited labeled data. By leveraging pre-trained chemical language models, researchers can transfer knowledge from large unlabeled molecular datasets to specific property prediction tasks, substantially improving data efficiency and model generalization [9].

The SMILES Lexicon: Tokenization and Vocabulary Construction

Fundamental Token Types

The SMILES vocabulary consists of distinct token types that collectively describe molecular structure:

- Element tokens: Represent atomic species (e.g., "C" for carbon, "N" for nitrogen, "O" for oxygen), with aromaticity distinguished by lowercase characters ("c" for aromatic carbon) [10].

- Bond tokens: Describe connection types ("-" for single bonds, "=" for double bonds, "#" for triple bonds) with single bonds often omitted as defaults [7].

- Branching tokens: Parentheses "(" and ")" indicate molecular branching patterns [7].

- Ring tokens: Numeric labels (e.g., "1", "2") mark ring closure points within the structure [7].

This grammatical framework allows SMILES to represent complex molecular graphs as linear strings through depth-first traversal of the molecular structure [7]. A single molecule can generate multiple valid SMILES strings depending on the starting atom and traversal path, creating inherent synonymity within the chemical language [7].
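The token types above can be made concrete with a small regex-based tokenizer. The pattern below is a simplified assumption that covers bracket atoms, the common organic-subset atoms, bonds, branches, and ring closures; it is not the tokenizer used in [7] or [10], and it rejects anything it does not recognize rather than guessing.

```python
import re

# Simplified, assumed token pattern; order matters (Cl/Br before C/B).
SMILES_TOKEN = re.compile(
    r"\[[^\]]+\]"      # bracket atom, e.g. [NH4+] or [C@@H]
    r"|Br|Cl"          # two-letter elements
    r"|[BCNOSPFI]"     # aliphatic organic-subset atoms
    r"|[bcnosp]"       # aromatic atoms
    r"|[-=#:/\\.]"     # bond symbols and the disconnection dot
    r"|[()]"           # branch open / close
    r"|%\d{2}|\d"      # ring-closure labels
)

def tokenize(smiles):
    """Split a SMILES string into tokens, failing loudly on unknown input."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"unrecognized characters in {smiles!r}")
    return tokens

print(tokenize("C(=O)Cl"))
print(tokenize("c1ccccc1"))

# Two valid SMILES strings for ethanol: different token order, same tokens.
print(sorted(tokenize("CCO")) == sorted(tokenize("OCC")))
```

The last line illustrates the synonymity mentioned above: the two strings describe the same molecule but present the model with different token sequences.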

Advanced Tokenization Strategies

Recent research has evolved beyond basic SMILES tokenization to address vocabulary limitations. The Atom-In-SMILES (AIS) approach enhances token informativeness by incorporating local chemical environment context into each token [10]. Unlike standard SMILES tokens that represent atoms in isolation, AIS tokens encapsulate three key aspects: the elemental symbol of the central atom, ring membership information ("R" for ring atoms, "!R" for non-ring atoms), and the neighboring atoms connected to the central atom [10]. This environment-aware tokenization creates a more chemically meaningful vocabulary while maintaining SMILES grammar compatibility.
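To illustrate how an environment-aware token combines these three pieces of information, the sketch below builds a token string from a hand-written molecular graph. The bracketed `[symbol;ring flag;neighbors]` layout and the helper name `ais_style_token` are assumptions chosen for readability; the exact AIS string format, including hydrogen counts and charges, is defined in [10] and is not reproduced here.

```python
def ais_style_token(symbol, in_ring, neighbor_symbols):
    """Compose an environment-aware token (hypothetical layout).

    symbol:           element symbol of the central atom
    in_ring:          True if the atom belongs to a ring
    neighbor_symbols: element symbols of directly bonded heavy atoms
    """
    ring_flag = "R" if in_ring else "!R"
    # Sort neighbors so the token does not depend on traversal order.
    neighbors = "".join(sorted(neighbor_symbols))
    return f"[{symbol};{ring_flag};{neighbors}]"

# Ethanol (heavy atoms only) as a hand-written adjacency list.
atoms = ["C", "C", "O"]
bonds = {0: [1], 1: [0, 2], 2: [1]}

tokens = [
    ais_style_token(atoms[i], False, [atoms[j] for j in bonds[i]])
    for i in range(len(atoms))
]
print(tokens)  # ['[C;!R;C]', '[C;!R;CO]', '[O;!R;C]']
```

In plain SMILES both carbons of ethanol map to the same token `C`; here the methyl carbon and the oxygen-bearing carbon receive different tokens, which is the extra chemical context the approach is meant to provide.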

Hybrid representation methods such as SMI+AIS(N) selectively replace frequently occurring SMILES tokens with their AIS counterparts, balancing chemical expressiveness with vocabulary size [10]. This approach mitigates the significant token frequency imbalance inherent in standard SMILES, where common tokens like "C" (carbon) appear with disproportionately high frequency compared to other elements [10].

Table 1: Comparison of Molecular Representation Methods

| Representation | Token Diversity | Chemical Context | Validity Guarantee | Primary Applications |
|---|---|---|---|---|
| Standard SMILES | Limited | Minimal | No | General molecular representation |
| SELFIES | Limited | Minimal | Yes | Robust molecular generation |
| AIS | High | Extensive | No | Property prediction tasks |
| SMI+AIS | Moderate | Selective | No | Structure generation optimization |

Quantitative Performance Comparison of SMILES Representation Methods

Structure Generation and Optimization Performance

The effectiveness of different SMILES representations has been quantitatively evaluated in molecular structure generation tasks. When applied to latent space optimization with Bayesian optimization for generating structures with improved binding affinity and synthesizability, the SMI+AIS representation demonstrated measurable advantages over established alternatives [10]. Specifically, SMI+AIS achieved a 7% improvement in binding affinity and a 6% increase in synthesizability scores compared to standard SMILES representations [10]. This performance enhancement stems from the richer chemical context encoded within AIS tokens, which allows optimization algorithms to better capture structure-property relationships.

The hybridization approach in SMI+AIS also addresses vocabulary imbalance issues that can impede model training. Analysis of the ZINC database revealed that introducing 100-150 carefully selected AIS tokens effectively redistributes token frequencies, creating a more balanced vocabulary without excessive expansion that could lead to data sparsity issues [10]. This balanced vocabulary composition correlates with improved model performance in downstream tasks.

Data Augmentation Strategies and Their Effects

SMILES enumeration (generating multiple valid representations of the same molecule) has emerged as a powerful data augmentation technique, particularly beneficial in low-data scenarios [7]. Beyond simple enumeration, researchers have developed sophisticated augmentation strategies that further enhance model performance:

Table 2: Performance of SMILES Augmentation Strategies in Low-Data Scenarios

| Augmentation Method | Validity | Uniqueness | Novelty | Optimal Probability (p) |
|---|---|---|---|---|
| Token Deletion | Variable | High | High | 0.05 |
| Atom Masking | High | High | Moderate | 0.05 |
| Bioisosteric Substitution | High | Moderate | Moderate | 0.15 |
| Self-training | Highest | High | High | N/A |

These augmentation strategies exhibit distinct performance characteristics across dataset sizes. Atom masking has proven particularly effective for learning desirable physicochemical properties in very low-data regimes, while token deletion shows promise for creating novel molecular scaffolds [7]. Self-training augmentation, wherein SMILES strings generated by a chemical language model are used as input for subsequent training phases, consistently outperforms basic enumeration across all dataset sizes [7].
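Token deletion and atom masking can both be expressed as per-token random perturbations controlled by the probability p from the table. The sketch below is an assumed, simplified version operating on an already tokenized SMILES string: the set of atom tokens and the use of `*` (the SMILES wildcard atom) as the mask symbol are illustrative choices, not details confirmed by [7].

```python
import random

# Assumed set of atom tokens (organic subset, aliphatic and aromatic).
ATOM_TOKENS = frozenset(
    ["B", "C", "N", "O", "S", "P", "F", "I", "Cl", "Br",
     "b", "c", "n", "o", "s", "p"]
)

def delete_tokens(tokens, p, rng):
    """Drop each token independently with probability p.

    The result is not guaranteed to be a valid SMILES string.
    """
    kept = [t for t in tokens if rng.random() >= p]
    return kept if kept else list(tokens)  # never return an empty sequence

def mask_atoms(tokens, p, rng, mask="*"):
    """Replace each atom token with a placeholder with probability p."""
    return [
        mask if (t in ATOM_TOKENS and rng.random() < p) else t
        for t in tokens
    ]

rng = random.Random(0)  # fixed seed so runs are repeatable
acetic_acid = ["C", "C", "(", "=", "O", ")", "O"]
print("".join(delete_tokens(acetic_acid, 0.05, rng)))
print("".join(mask_atoms(acetic_acid, 0.05, rng)))
```

With p = 0.05 most augmented strings are identical to the input, so in practice the perturbation is applied many times across a dataset; strings produced by deletion would normally be passed through a validity check before training.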

Experimental Protocols for SMILES-Based Molecular Representation

SMILES Alignment Protocol for Molecular Similarity

A key methodology for comparing SMILES-represented molecules adapts the Needleman-Wunsch algorithm for global sequence alignment with a modified scoring function [11]. This approach enables quantitative assessment of molecular transformations in biochemical pathways:

- Input Preparation: Convert molecules to canonical SMILES representations using standard toolkits.

- Scoring Matrix Definition: Implement a substitution matrix based on atomic partial charges rather than simple atomic identities, reflecting electronegativity differences.

- Gap Penalty Configuration: Assign appropriate gap opening and extension penalties to balance alignment flexibility with chemical plausibility.

- Dynamic Programming Execution: Perform global alignment using the modified scoring scheme.

- Pathway Analysis Application: Quantify structural changes between metabolites in pathways such as glycolysis and the Krebs cycle.

The method has been validated by correctly aligning atoms known to be conserved across biochemical transformations, capturing the structural evolution patterns characteristic of linear versus cyclical metabolic pathways [11].
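The alignment steps above can be sketched with a compact Needleman-Wunsch implementation that accepts a custom scoring function. Two simplifications are assumptions of this sketch and differ from the protocol in [11]: a single linear gap penalty replaces separate opening and extension penalties, and a toy score based on Pauling electronegativity differences stands in for the partial-charge substitution matrix.

```python
GAP = "<gap>"  # gap marker; "-" is avoided because it is a SMILES bond token

def needleman_wunsch(a, b, score, gap=-1.0):
    """Global alignment of token lists a and b; returns (score, row_a, row_b)."""
    n, m = len(a), len(b)
    dp = [[0.0] * (m + 1) for _ in range(n + 1)]
    for i in range(1, n + 1):
        dp[i][0] = dp[i - 1][0] + gap
    for j in range(1, m + 1):
        dp[0][j] = dp[0][j - 1] + gap
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            dp[i][j] = max(
                dp[i - 1][j - 1] + score(a[i - 1], b[j - 1]),  # match / mismatch
                dp[i - 1][j] + gap,                            # gap in b
                dp[i][j - 1] + gap,                            # gap in a
            )
    # Trace back from the bottom-right corner to recover one optimal alignment.
    row_a, row_b = [], []
    i, j = n, m
    while i > 0 or j > 0:
        if i > 0 and j > 0 and dp[i][j] == dp[i - 1][j - 1] + score(a[i - 1], b[j - 1]):
            row_a.append(a[i - 1]); row_b.append(b[j - 1]); i -= 1; j -= 1
        elif i > 0 and dp[i][j] == dp[i - 1][j] + gap:
            row_a.append(a[i - 1]); row_b.append(GAP); i -= 1
        else:
            row_a.append(GAP); row_b.append(b[j - 1]); j -= 1
    return dp[n][m], row_a[::-1], row_b[::-1]

# Toy scoring: similar electronegativity means a milder mismatch.
ELECTRONEGATIVITY = {"C": 2.55, "N": 3.04, "O": 3.44}

def toy_score(x, y):
    if x in ELECTRONEGATIVITY and y in ELECTRONEGATIVITY:
        return 1.0 - abs(ELECTRONEGATIVITY[x] - ELECTRONEGATIVITY[y])
    return 1.0 if x == y else -1.0

best, row_a, row_b = needleman_wunsch(["C", "C", "O"], ["C", "O"], toy_score)
print(best, row_a, row_b)
```

Passing the scoring function as a parameter keeps the dynamic programming core unchanged if the toy score is later swapped for a charge-based matrix.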

Chemical Language Model Pretraining and Fine-Tuning

Effective implementation of BERT-style architectures for molecular property prediction follows a rigorous protocol:

- Large-Scale Pretraining: Train transformer models on extensive unlabeled molecular datasets (e.g., 1.26 million compounds for MolBERT) using masked language modeling objectives [9].

- Embedding Alignment: For materials property prediction, align CLM embeddings with those from multimodal foundation models incorporating crystal structure, electronic states, and textual descriptions [5].

- Task-Specific Fine-Tuning: Adapt pretrained models to specific property prediction tasks using limited labeled data, typically with scaffold-based dataset splits to ensure generalization [9].

- Bayesian Active Learning Integration: Employ acquisition functions like Bayesian Active Learning by Disagreement (BALD) to strategically select informative molecules for labeling, maximizing model improvement per experiment [9].

This protocol has demonstrated remarkable data efficiency, achieving equivalent toxic compound identification with 50% fewer iterations compared to conventional active learning approaches [9].
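The scaffold-based split mentioned in the fine-tuning step can be sketched as a grouping problem: molecules sharing a scaffold must all land on the same side of the split. The sketch assumes scaffold keys have already been computed elsewhere (for example, as scaffold SMILES from a cheminformatics toolkit); the function name and the largest-groups-first ordering are illustrative conventions, not details taken from [9].

```python
from collections import defaultdict

def scaffold_split(scaffold_of, train_fraction=0.8):
    """Split molecule ids so that no scaffold appears in both sets.

    scaffold_of: dict mapping molecule id -> precomputed scaffold key
    """
    groups = defaultdict(list)
    for mol_id, scaffold in scaffold_of.items():
        groups[scaffold].append(mol_id)
    # Largest scaffold groups first; ties broken by key for determinism.
    ordered = sorted(groups.items(), key=lambda kv: (-len(kv[1]), kv[0]))
    cutoff = train_fraction * len(scaffold_of)
    train, test = [], []
    for _, members in ordered:
        if len(train) + len(members) <= cutoff:
            train.extend(members)
        else:
            test.extend(members)
    return train, test

# Ten hypothetical molecules over four hypothetical scaffolds.
example = {
    "m0": "A", "m1": "A", "m2": "A", "m3": "A", "m4": "A",
    "m5": "B", "m6": "B", "m7": "B",
    "m8": "C",
    "m9": "D",
}
train_ids, test_ids = scaffold_split(example, train_fraction=0.8)
print(len(train_ids), len(test_ids))
```

Because whole groups are assigned at once, the realized ratio only approximates the requested fraction when scaffold groups are large relative to the dataset.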

[Figure: SMILES Processing in Chemical Language Models]

SMILES-Enhanced Architectures for Property Prediction

Cross-Modal Knowledge Transfer Frameworks

Advanced SMILES-based prediction systems increasingly employ cross-modal knowledge transfer to enhance performance. Two predominant formulations have emerged:

- Implicit Transfer (imKT): Involves pretraining chemical language models on multimodal embeddings aligned with foundation models incorporating multiple materials modalities (crystal structure, density of electronic states, charge density, textual descriptions) [5].

- Explicit Transfer (exKT): Generates crystal structures using large language models like CrystaLLM, followed by structure-aware predictor fine-tuning on generated crystals [5].

These approaches have demonstrated state-of-the-art performance on benchmark datasets, achieving mean absolute error reductions of 15.7% on JARVIS-DFT tasks and 15.2% on SNUMAT band-gap prediction tasks compared to previous benchmarks [5]. The integration of SMILES representations with multimodal knowledge creates more robust and accurate property prediction systems.

Hybrid Model Architectures for Specialized Applications

Sophisticated hybrid architectures have emerged for specific drug discovery applications:

SVDTI Framework: This drug-target interaction prediction model employs a stacked variational autoencoder (SVAE) with Long Short-Term Memory (LSTM) networks to map high-dimensional SMILES and protein sequence data into compact, informative low-dimensional vectors [12]. The framework subsequently processes these representations through a neural collaborative filtering (NCF) model that combines the linear characteristics of matrix factorization with the nonlinear representation power of multilayer perceptrons [12].

Imagand Model: This SMILES-to-Pharmacokinetic (S2PK) diffusion model generates pharmacokinetic properties conditioned on learned SMILES embeddings, addressing the challenge of sparse PK datasets with limited overlap [13]. The model employs a Discrete Local Gaussian Noise (DLGN) approach that creates a prior distribution closer to the true data distribution, improving generation performance for non-Gaussian distributed molecular properties [13].

[Figure: SVDTI Framework for Drug-Target Interaction Prediction]

Table 3: Key Research Resources for SMILES-Based Molecular Modeling

| Resource | Type | Function | Application Context |
|---|---|---|---|
| RDKit | Software Library | Molecular fingerprint generation & manipulation | Similarity analysis, descriptor calculation [14] |
| Yamanishi Dataset | Curated Dataset | Gold-standard drug-target interactions | Model benchmarking & validation [12] |
| ZINC Database | Molecular Database | Large collection of commercially available compounds | Vocabulary analysis & model pretraining [10] |
| SwissBioisostere | Specialized Database | Bioisosteric replacement patterns | Data augmentation strategy [7] |
| Tox21/ClinTox | Benchmark Datasets | Toxicology & clinical failure data | Model evaluation & validation [9] |
| MolBERT | Pretrained Model | Chemical language model with 1.26M compounds | Transfer learning initialization [9] |

The evolving understanding of SMILES as a specialized vocabulary continues to drive innovation in chemical language modeling. Current research demonstrates that moving beyond basic tokenization toward environment-aware representations like AIS tokens and hybrid SMI+AIS approaches yields measurable performance improvements in critical tasks including molecular generation, property prediction, and drug-target interaction forecasting [10]. The integration of SMILES processing with multimodal knowledge transfer and architectures like stacked variational autoencoders and diffusion models represents the cutting edge of computational molecular design [5] [12] [13].

As the field advances, the SMILES vocabulary is likely to further evolve toward increasingly context-aware representations that capture richer chemical semantics while maintaining compatibility with the extensive existing ecosystem of computational tools. These developments will strengthen the foundation for more accurate, data-efficient, and interpretable molecular property prediction systems, ultimately accelerating the drug and materials discovery pipeline.

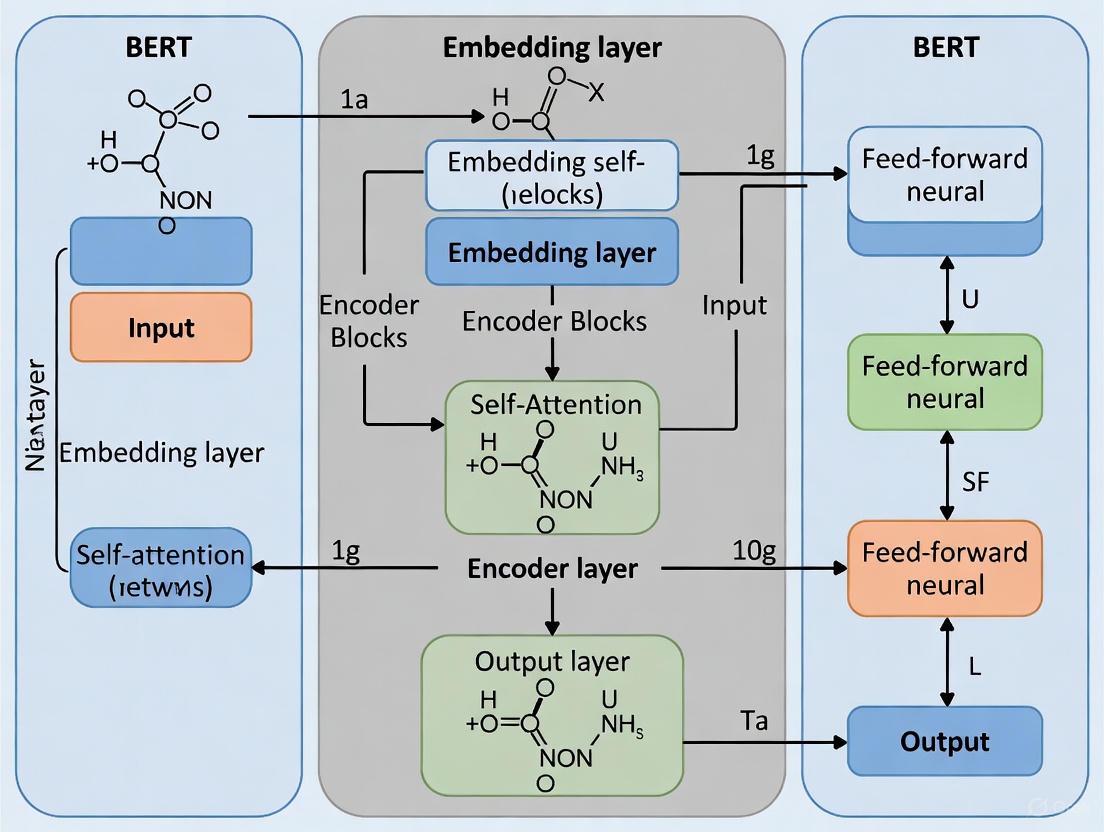

Bidirectional Encoder Representations from Transformers (BERT) represents a fundamental shift in how machines understand human language. Introduced by Google in 2018, it combines bidirectional context processing with a self-attention mechanism [15]. Unlike previous models that processed text sequentially (either left-to-right or right-to-left), BERT reads an entire sequence of words at once [15]. This non-directional approach lets the model learn a deeper context for each word by considering all of its surroundings simultaneously [15].

In the specific context of materials property prediction and drug discovery, this architectural advantage translates into a powerful ability to understand complex molecular representations and clinical text data. Models like GEO-BERT and DrugBERT, built upon the core BERT architecture, leverage these capabilities to predict molecular properties and drug efficacy with remarkable accuracy [4] [16]. This guide will objectively compare BERT's performance against alternative architectures and provide detailed experimental protocols from recent research, focusing specifically on applications in scientific property prediction.

Core Architectural Components

The Self-Attention Mechanism

At the heart of BERT lies the self-attention mechanism, which allows the model to weigh the importance of different words in a sequence when encoding a particular word [17]. In technical terms, self-attention is a mechanism where each token in the input pays attention to all other tokens, including itself, to generate its contextual embedding [17]. Calculating attention is a way for each token to ask, "Which other words should I focus on to understand my meaning?"

The mechanism operates through three learned vectors for each token:

- Query (Q): Represents what the current token is looking for in other tokens

- Key (K): Helps other tokens decide how relevant the current token is to them

- Value (V): Contains the actual information that a token contributes [17]

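These vectors are computed by multiplying each token embedding by weight matrices (W_q, W_k, W_v) that are learned during training. The attention score is calculated by taking the dot product of the query vector of one token with the key vector of another, scaling it by the square root of the key dimension, and then applying a softmax function over all keys to obtain normalized weights. The output for each token is the corresponding weighted sum of the value vectors [18].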
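A single attention head can be written out in a few lines of plain Python to make the query, key, and value roles concrete. This is a minimal single-head sketch without batching, masking, or multiple heads; the scores are divided by the square root of the key dimension, as in the standard Transformer formulation, and the tiny matrices are made-up values for illustration.

```python
import math

def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def matmul(a, b):
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def self_attention(x, w_q, w_k, w_v):
    """x: one embedding row per token; returns (outputs, attention weights)."""
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    d_k = len(k[0])
    outputs, weights = [], []
    for q_i in q:
        # Scaled dot product of this token's query with every token's key.
        scores = [sum(a * b for a, b in zip(q_i, k_j)) / math.sqrt(d_k) for k_j in k]
        w = softmax(scores)
        weights.append(w)
        # Weighted sum of value vectors gives the contextual embedding.
        outputs.append(
            [sum(w_j * v_j[c] for w_j, v_j in zip(w, v)) for c in range(len(v[0]))]
        )
    return outputs, weights

identity = [[1.0, 0.0], [0.0, 1.0]]
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # three tokens, embedding size 2
outputs, weights = self_attention(x, identity, identity, identity)
for row in weights:
    print([round(w, 3) for w in row])
```

Each row of `weights` sums to one and shows how strongly one token attends to every token in the sequence, including itself; nothing in the computation restricts attention to earlier positions, which is what makes the encoder bidirectional.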

Bidirectional Context Processing

BERT's bidirectionality is fundamentally different from the unidirectional approach of models like GPT. While GPT processes text strictly from left to right, BERT's encoder-only architecture processes all words in a sequence simultaneously [15]. This bidirectional training enables BERT to develop a deeper understanding of language context, making it particularly effective for tasks that require comprehensive contextual analysis rather than text generation [15].

The bidirectional capability is achieved through BERT's pre-training tasks:

- Masked Language Modeling (MLM): Approximately 15% of the tokens in each input sequence are randomly selected for masking, and the model must predict the original tokens from their bidirectional context [15]

- Next Sentence Prediction (NSP): The model receives pairs of sentences and predicts whether the second sentence logically follows the first [15]

Comparative Performance Analysis

Benchmark Performance Across Domains

Extensive testing across multiple domains reveals distinct performance patterns for BERT and its alternatives. The following table summarizes key comparative findings:

Table 1: Performance comparison of BERT and alternative models across different domains and tasks

| Model | Architecture Type | Primary Strengths | Notable Performance Metrics | Domain Applications |

|---|---|---|---|---|

| BERT | Encoder-only, Bidirectional | Deep contextual understanding, NLU tasks | Domain-adapted variants outperform general BERT on medical concept recognition [19] | Drug discovery, molecular property prediction [4] |

| GEO-BERT | BERT-based with geometric encoding | Molecular property prediction, 3D structure integration | Identified potent DYRK1A inhibitors (IC50: <1 μM) [4] | Drug discovery, molecular analysis [4] |

| GPT Series | Decoder-only, Unidirectional | Text generation, creative tasks | 87% accuracy in clinical sentiment classification [20] | Content creation, conversational AI [15] |

| LLaMA | Decoder-only, Autoregressive | Computational efficiency, strong performance with fewer parameters | Comparable performance to larger models with fewer parameters [15] | Accessible AI research, resource-constrained environments [15] |

| BioBERT | Domain-specific BERT | Biomedical text processing | F1-score of 0.836 on clinical trial NER [21] | Clinical text analysis, biomedical NER [21] |

| DrugBERT | BERT with LDA topic embedding | Drug efficacy prediction | 3% improvement in AUC over previous methods [16] | Anti-tumor drug efficacy prediction [16] |

Domain-Specific Performance in Scientific Applications

In specialized scientific domains, BERT-based models consistently demonstrate advantages over general-purpose alternatives:

Table 2: Performance of BERT-based models in specialized scientific applications

| Application Domain | Model Variant | Task | Performance Metrics | Comparative Advantage |

|---|---|---|---|---|

| Molecular Property Prediction | GEO-BERT [4] | Molecular property prediction, inhibitor identification | Identified two novel DYRK1A inhibitors with IC50 <1 μM [4] | Incorporates 3D structural information via atom-atom, bond-bond, and atom-bond relationships [4] |

| Drug Efficacy Prediction | DrugBERT [16] | Predicting efficacy of anti-tumor drugs | 3% AUC improvement on independent bowel cancer dataset [16] | Integrates LDA topic embedding and drug efficacy-aware attention mechanism [16] |

| Clinical Text Analysis | BioBERT/ClinicalBERT [19] | Medical concept recognition | Outperformed general BERT; ClinicalBERT achieved mean macro-F1 score of 0.761 [19] | Domain-specific pre-training on biomedical corpora [19] |

| Clinical Trial NER | PubMedBERT [21] | Named Entity Recognition in eligibility criteria | F1-scores of 0.715, 0.836, and 0.622 across three corpora [21] | Superior to both general BERT and other biomedical variants [21] |

Experimental Protocols and Methodologies

GEO-BERT for Molecular Property Prediction

The GEO-BERT framework exemplifies how core BERT architecture can be adapted for molecular property prediction in drug discovery. The experimental protocol involves several sophisticated components:

Molecular Representation: GEO-BERT considers atoms and chemical bonds in chemical structures as input, integrating positional information from three-dimensional molecular conformations [4]. Specifically, it introduces three different positional relationships: atom-atom, bond-bond, and atom-bond [4].

Architecture Enhancements:

- Self-supervised representation learning framework based on BERT

- Incorporation of 3D structural information within molecules

- Pre-training on large-scale small molecule data [4]

Experimental Validation:

- Benchmarking across multiple datasets, on which the authors report optimal performance [4]

- Prospective validation through screening for DYRK1A inhibitors

- Discovery of two potent and novel DYRK1A inhibitors (IC50: <1 μM) confirmed practical utility [4]

DrugBERT for Anti-Tumor Drug Efficacy Prediction

DrugBERT represents another BERT-based adaptation specifically designed for predicting anti-tumor drug efficacy based on clinical text data:

Architecture Modifications:

- Integration of LDA-generated topic embeddings as semantic enhancement modules

- Drug efficacy-aware attention mechanism to prioritize drug efficacy-related semantic features

- LSTM integration to capture long-range dependencies in clinical text data [16]

Experimental Setup:

- Dataset: 958 patients with non-small cell lung cancer treated with anti-tumor drugs

- Independent validation: 266 bowel cancer patients

- Addressing data imbalance using SMOTE algorithm to synthesize minority class samples [16]
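The core idea of SMOTE, as used in the setup above, is to create synthetic minority samples by interpolating between a minority sample and one of its nearest minority neighbours. The sketch below illustrates that idea only. It assumes the samples are already numeric feature vectors (how DrugBERT featurizes patients is not specified here), and the function name and parameters are hypothetical.

```python
import numpy as np

def smote_sample(minority, n_new, k=3, seed=0):
    """Generate n_new synthetic points by interpolating between minority neighbours."""
    rng = np.random.default_rng(seed)
    minority = np.asarray(minority, dtype=float)
    synthetic = []
    for _ in range(n_new):
        i = rng.integers(len(minority))                    # pick a minority sample
        dists = np.linalg.norm(minority - minority[i], axis=1)
        neighbours = np.argsort(dists)[1:k + 1]            # skip position 0 (distance zero)
        j = rng.choice(neighbours)                         # pick one nearest neighbour
        lam = rng.random()                                 # interpolation factor in [0, 1)
        synthetic.append(minority[i] + lam * (minority[j] - minority[i]))
    return np.array(synthetic)

# Toy minority class: 5 points in a 2-dimensional feature space.
minority = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, 0.5]])
new_points = smote_sample(minority, n_new=10, k=3)
```

Because every synthetic point lies on a line segment between two real minority samples, the new points stay inside the region already occupied by the minority class. In practice, an established implementation such as the one in the imbalanced-learn library would normally be preferred over a hand-written version.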

Methodological Innovation: The drug efficacy-aware attention mechanism enhances attention weights between drug efficacy relevant keywords. From K topics, m topics demonstrating significant drug efficacy relevance are selected, with the top w probability-ranked words extracted from chosen topics [16]. After deduplication, a Drug Efficacy-Related Keyword Repository (DEKR) containing n unique keywords is constructed [16].
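The keyword repository construction described above can be sketched in a few lines. This is an illustration under stated assumptions: the topic-word probabilities are toy values, the indices of the m relevant topics are supplied by hand (the criterion for selecting them is not given here), and `build_dekr` is a hypothetical helper name.

```python
def build_dekr(topic_word_probs, relevant_topics, w):
    """Collect the top-w words of each selected topic, dropping duplicates.

    topic_word_probs: list of K dicts mapping word -> probability (one per topic).
    relevant_topics:  indices of the m topics judged relevant to drug efficacy.
    """
    keywords = []
    seen = set()
    for t in relevant_topics:
        ranked = sorted(topic_word_probs[t].items(), key=lambda kv: kv[1], reverse=True)
        for word, _ in ranked[:w]:
            if word not in seen:       # deduplicate across topics, keep first occurrence
                seen.add(word)
                keywords.append(word)
    return keywords

# Toy example with K = 3 topics, m = 2 selected topics, and w = 2 words per topic.
topics = [
    {"response": 0.4, "tumor": 0.3, "scan": 0.1},
    {"appointment": 0.5, "billing": 0.2, "address": 0.1},
    {"tumor": 0.5, "remission": 0.3, "dose": 0.1},
]
dekr = build_dekr(topics, relevant_topics=[0, 2], w=2)
```

Here `dekr` holds n = 3 unique keywords, because "tumor" appears in both selected topics and is kept only once.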

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential research reagents and computational tools for BERT-based molecular property prediction

| Tool/Resource | Type | Primary Function | Application Example |

|---|---|---|---|

| GEO-BERT Framework [4] | Software Framework | Molecular property prediction with 3D structural integration | Predicting molecular properties and identifying DYRK1A inhibitors in early-stage drug discovery [4] |

| DrugBERT Framework [16] | Software Framework | Drug efficacy prediction from clinical text | Predicting efficacy of anti-tumor drugs based on clinical radiomic text data [16] |

| LDA Topic Model [16] | Computational Algorithm | Extracting latent topics from text corpora | Generating topic embeddings for semantic enhancement in DrugBERT [16] |

| SMOTE Algorithm [16] | Data Preprocessing | Addressing class imbalance in datasets | Synthesizing minority class samples in clinical trial data [16] |

| BioBERT/ClinicalBERT [19] [21] | Pre-trained Models | Domain-specific natural language processing | Medical concept recognition and named entity recognition in clinical text [19] [21] |

| SHAP (SHapley Additive exPlanations) [18] | Model Interpretation | Explaining model predictions based on game theory | Providing interpretability for BERT-based model predictions in academic assessment [18] |

The core BERT architecture, with its fundamental components of self-attention and bidirectional context processing, provides a powerful foundation for scientific property prediction research. The experimental evidence demonstrates that BERT-based models consistently outperform general-purpose alternatives in specialized domains such as molecular property prediction and drug efficacy assessment [4] [16].

The success of domain-specific adaptations like GEO-BERT and DrugBERT highlights the importance of architectural customization for scientific applications. By integrating domain knowledge through geometric representations [4] or topic-aware attention mechanisms [16], researchers can leverage BERT's core strengths while addressing specific challenges in materials science and drug development.

For research teams working in molecular property prediction, the evidence suggests that BERT-based architectures provide a robust foundation that can be productively specialized through domain-specific modifications. The bidirectional context understanding that defines BERT appears particularly valuable for analyzing complex molecular structures and clinical text data, making it an enduring architectural paradigm for scientific AI applications.

The Bidirectional Encoder Representations from Transformers (BERT) model, renowned for its revolutionary impact on natural language processing (NLP), is now pioneering a transformative shift in scientific computation, particularly in molecular property prediction for drug discovery. Originally designed for masked language modeling (MLM) tasks, BERT's core architecture possesses a unique capability to learn profound contextual relationships from sequential data. This intrinsic strength has enabled its successful adaptation from textual sequences to the structural "languages" of science—namely, the sequences of atoms and bonds that define chemical compounds. The adaptation of BERT for scientific applications represents a significant paradigm shift, moving beyond traditional quantitative structure-property relationship (QSPR) models that rely on hand-crafted descriptors towards deep learning approaches that learn optimal structure-to-descriptor mappings directly from data [9]. This guide provides a comprehensive comparison of emerging BERT-based frameworks for molecular property prediction, detailing their experimental performance, methodologies, and practical implementations to inform researchers and drug development professionals.

Understanding the Foundation: Masked Language Modeling

Masked language modeling serves as the foundational pre-training objective that enables BERT's sophisticated contextual understanding. In standard NLP applications, MLM involves randomly masking a portion of input tokens (typically 15%) and training the model to predict the original vocabulary identifiers of these masked tokens based on their bidirectional context [22] [23]. This self-supervised approach forces the model to develop a deep, bidirectional understanding of sequential relationships without requiring labeled datasets. The model achieves this by generating probability distributions over the input vocabulary for each masked token and minimizing the prediction error against the original tokens [22]. This pre-training paradigm has proven exceptionally transferable to molecular representations, where atoms or molecular fragments can be treated as "words" and entire molecular structures as "sentences," creating a powerful framework for learning complex chemical relationships from large unannotated molecular datasets [9].
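As a rough illustration of how masking applies to molecules, the sketch below masks about 15% of the tokens in a SMILES string and records the original tokens as prediction targets. It assumes simple character-level tokens and a non-empty input, and it always substitutes a `[MASK]` token; the original BERT recipe additionally replaces some selected tokens with random tokens or leaves them unchanged. The function name is hypothetical.

```python
import random

def mask_tokens(tokens, mask_rate=0.15, mask_token="[MASK]", seed=0):
    """Return a masked copy of tokens plus a dict of position -> original token."""
    rng = random.Random(seed)
    n_mask = max(1, round(len(tokens) * mask_rate))   # mask at least one token
    positions = sorted(rng.sample(range(len(tokens)), n_mask))
    masked = list(tokens)
    labels = {}
    for pos in positions:
        labels[pos] = tokens[pos]     # target the model must recover
        masked[pos] = mask_token
    return masked, labels

# Aspirin as a SMILES string, split into character-level tokens.
tokens = list("CC(=O)Oc1ccccc1C(=O)O")
masked, labels = mask_tokens(tokens)
```

During pre-training, the loss is computed only at the positions stored in `labels`, so the model must infer each hidden atom or bond symbol from the unmasked tokens on both sides.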

Comparative Analysis of Molecular BERT Frameworks

Performance Benchmarking

Recent research has yielded several specialized BERT adaptations for molecular property prediction. The table below summarizes the key performance metrics of these frameworks across established benchmarks.

Table 1: Performance Comparison of BERT-based Molecular Property Prediction Models

| Model Name | Architectural Features | Benchmark Datasets | Key Performance Results | Computational Requirements |

|---|---|---|---|---|

| GEO-BERT [4] | Incorporates 3D molecular conformation data; Atom-atom, bond-bond, and atom-bond positional relationships | Multiple benchmarks (unspecified); DYRK1A inhibitor case study | "Optimal performance across multiple benchmarks"; Identified two novel DYRK1A inhibitors (IC50: <1 μM) | Requires 3D structural information |

| Pretrained BERT + Bayesian AL [9] [24] | BERT pretrained on 1.26M compounds combined with Bayesian active learning | Tox21; ClinTox | Achieved equivalent toxic compound identification with 50% fewer iterations vs. conventional active learning | Pretraining on large dataset; efficient fine-tuning |

| Ensemble Model (BERT, RoBERTa, XLNet) [25] | Ensemble learning with BERT, RoBERTa, and XLNet without extensive pretraining | Molecular property prediction tasks | "Significant effectiveness compared to existing advanced models"; addresses limited computational resources | Resource-efficient; no extensive pretraining needed |

Experimental Workflows and Methodologies

GEO-BERT Experimental Protocol

GEO-BERT introduces a geometry-aware framework that incorporates three-dimensional molecular conformation data into the BERT architecture [4]. The methodology involves:

- Molecular Representation: Atoms and chemical bonds in chemical structures serve as input, with integration of three-dimensional conformational positional information.

- Positional Relationships: Implementation of three novel positional encoding types - atom-atom, bond-bond, and atom-bond relationships - to enhance molecular structure characterization.

- Pre-training Strategy: Self-supervised pre-training on large-scale small molecule datasets using the MLM objective, incorporating 3D structural information.

- Fine-tuning: Supervised fine-tuning on specific property prediction tasks, such as DYRK1A inhibitor identification.

The model's effectiveness was validated through prospective studies identifying novel DYRK1A inhibitors, with two compounds demonstrating potent inhibition (IC50: <1 μM) [4].

Pretrained BERT with Bayesian Active Learning Protocol

This approach integrates transformer-based BERT pretrained on 1.26 million compounds into a Bayesian active learning pipeline [9]:

Data Preparation:

- Utilizes Tox21 (≈8,000 compounds, 12 toxicity pathways) and ClinTox (1,484 compounds) datasets.

- Implements scaffold splitting with 80:20 ratio for training and testing to evaluate generalization.

- Constructs a balanced initial set of 100 molecules with equal positive/negative representation.

Model Architecture:

- Employs MolBERT, a BERT adaptation pretrained on 1.26 million compounds.

- Combines pretrained representations with Bayesian neural networks for uncertainty estimation.

Active Learning Cycle:

- Starts with small initial labeled dataset (≈100 molecules).

- Uses Bayesian acquisition functions (BALD, EPIG) to select informative samples from unlabeled pool.

- Iteratively incorporates newly labeled data and retrains model.

- Evaluates performance using Expected Calibration Error (ECE) measurements.

This framework disentangles representation learning from uncertainty estimation, proving particularly valuable in the low-data scenarios common in early-stage drug discovery [9].
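The Expected Calibration Error used to evaluate the active learning cycle above measures the gap between a model's confidence and its actual accuracy. The following is a minimal sketch for binary classification using equal-width confidence bins. The bin count and function name are assumptions, and the cited study may use a different binning scheme.

```python
def expected_calibration_error(probs, labels, n_bins=10):
    """ECE for binary predictions.

    probs:  predicted probability of the positive class for each sample.
    labels: true labels (0 or 1).
    """
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(probs, labels):
        pred = 1 if p >= 0.5 else 0
        conf = p if pred == 1 else 1.0 - p          # confidence in the predicted class
        idx = min(int(conf * n_bins), n_bins - 1)   # equal-width bin index
        bins[idx].append((conf, float(pred == y)))
    n = len(probs)
    ece = 0.0
    for b in bins:
        if not b:
            continue
        avg_conf = sum(c for c, _ in b) / len(b)
        accuracy = sum(a for _, a in b) / len(b)
        ece += len(b) / n * abs(accuracy - avg_conf)  # weight the gap by bin size
    return ece

# Four predictions made with 90% confidence, of which three are correct.
ece = expected_calibration_error([0.9, 0.9, 0.9, 0.9], [1, 1, 1, 0])
```

In this toy case the model claims 90% confidence but is right 75% of the time, so the ECE is about 0.15. A well-calibrated model would score close to zero.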

Ensemble Model Experimental Protocol

The ensemble approach combines BERT, RoBERTa, and XLNet without extensive pretraining requirements [25]:

- Model Integration: Implements ensemble learning with BERT, RoBERTa, and XLNet architectures.

- Training Strategy: Uses supervised fine-tuning rather than extensive pretraining from scratch.

- Resource Optimization: Specifically designed to address computational resource limitations in experimental settings.

- Performance Validation: Demonstrates significant effectiveness compared to existing advanced models while maintaining resource efficiency.

Visualizing Experimental Workflows

Diagram 1: GEO-BERT 3D Molecular Representation Workflow

Diagram 2: Bayesian Active Learning with Pretrained BERT

Table 2: Key Research Reagent Solutions for BERT-based Molecular Property Prediction

| Resource Category | Specific Tool/Dataset | Function and Application | Access Information |

|---|---|---|---|

| Benchmark Datasets | Tox21 Dataset [9] | Provides ≈8,000 chemical compounds with binary toxicity labels across 12 pathways; used for model validation | Publicly available |

| Benchmark Datasets | ClinTox Dataset [9] | Contains 1,484 FDA-approved and clinically failed drugs; evaluates clinical toxicity prediction | Publicly available |

| Computational Frameworks | GEO-BERT Model [4] | Geometry-aware BERT for molecular property prediction; integrates 3D structural information | Open-source (GitHub: drug-designer/GEO-BERT) |

| Computational Frameworks | HuggingFace Transformers [23] | Provides libraries for training and testing masked language models in Python | Open-source |

| Pretrained Models | MolBERT [9] | BERT model pretrained on 1.26 million compounds; enables transfer learning | Reference implementation available |

| Evaluation Metrics | Expected Calibration Error (ECE) [9] | Measures reliability of uncertainty estimates in Bayesian active learning | Standard implementation |

The adaptation of BERT architectures for molecular property prediction represents a significant advancement in computational drug discovery, offering substantial improvements over traditional QSPR methods. GEO-BERT demonstrates the value of incorporating 3D structural information through its successful identification of novel DYRK1A inhibitors [4]. The integration of pretrained BERT with Bayesian active learning establishes a paradigm for data-efficient screening, reducing experimental iterations by 50% while maintaining predictive accuracy [9]. For resource-constrained environments, ensemble approaches provide a balanced solution that delivers competitive performance without extensive pretraining requirements [25]. These frameworks collectively highlight the transformative potential of adapted BERT architectures in accelerating early-stage drug discovery, enabling more efficient exploration of chemical space, and ultimately reducing the time and cost associated with identifying promising therapeutic candidates.

The application of BERT (Bidirectional Encoder Representations from Transformers) architectures has marked a significant evolution in molecular property prediction, a core task in modern drug discovery and materials science. These models, pre-trained on vast corpora of chemical data, leverage self-supervised learning to generate rich molecular representations that can be fine-tuned for specific predictive tasks with limited labeled data. The transition from traditional machine learning methods to sophisticated deep learning frameworks like BERT has been driven by the need for more accurate, efficient, and generalizable models in chemical research [9] [26]. This shift is particularly relevant in the context of materials property prediction, where the ability to accurately predict molecular behavior can dramatically reduce the time and cost associated with traditional experimental methods [27].

The fundamental advantage of BERT-based models lies in their bidirectional nature, which allows them to process molecular representations in context from both directions, capturing complex chemical patterns that unidirectional models might miss. Inspired by breakthroughs in natural language processing, chemical BERT models treat molecular structures as a "language" with its own syntax and grammar, whether represented as SMILES strings, molecular graphs, or other notation systems [26] [28]. This approach has proven particularly valuable in addressing the pervasive challenge of data scarcity in chemical research, where labeled experimental data is often limited due to the high costs and time requirements of wet lab experiments [9] [27].

Comparative Analysis of Chemical BERT Models

Model Architectures and Methodologies

Chemical BERT models share a common foundation but diverge in their architectural specifics, training methodologies, and molecular representations. The table below summarizes the key characteristics of prominent models in this domain.

Table 1: Architectural Overview of Key Chemical BERT Models

| Model Name | Core Architecture | Molecular Representation | Pre-training Strategy | Key Innovations |

|---|---|---|---|---|

| MolBERT [9] | Transformer-based BERT | SMILES strings | Masked language modeling on 1.26 million compounds | Effective disentanglement of representation learning and uncertainty estimation |

| GEO-BERT [4] | Geometry-enhanced BERT | 3D molecular conformations | Incorporates 3D positional information | Introduces atom-atom, bond-bond, and atom-bond positional relationships |

| MolLLMKD [27] | LLM-enhanced framework | 2D molecular graphs + semantic prompts | Multi-level knowledge distillation with reinforcement learning | Integrates LLM-generated prompts with graph neural networks |

| Graph Transformers [29] | Graph transformer | Molecular graphs | Masked atom prediction and property prediction | Extends self-attention to graphs with distance-aware mechanisms |

Performance Benchmarking

Rigorous evaluation across standardized benchmarks is essential for comparing model capabilities. The following table summarizes quantitative performance metrics for key chemical BERT models across various tasks.

Table 2: Performance Comparison of Chemical BERT Models on Benchmark Tasks

| Model | Tox21 AUC | ClinTox AUC | QM9 MAE | Virtual Screening Efficiency | Data Efficiency |

|---|---|---|---|---|---|

| MolBERT [9] | ~0.85 | ~0.90 | - | 50% fewer iterations for toxic compound identification | High (effective with limited labeled data) |

| GEO-BERT [4] | - | - | - | Identified two potent DYRK1A inhibitors (IC50: <1 μM) | - |

| MolLLMKD [27] | - | - | - | - | State-of-the-art on 12 benchmark datasets |

| Traditional Fingerprints (ECFP) [29] | Comparable to neural models | Comparable to neural models | - | - | - |

Recent benchmarking studies have revealed surprising insights about chemical BERT models. A comprehensive evaluation of 25 pretrained molecular embedding models across 25 datasets found that nearly all neural models showed negligible or no improvement over the traditional ECFP molecular fingerprint baseline [29]. This finding raises important questions about evaluation rigor in the field and suggests that the reported advantages of some complex models may be less pronounced than initially claimed when evaluated under standardized conditions.

Diagram 1: Chemical BERT Model Ecosystem showing the relationship between molecular representations, pre-training objectives, model variants, and downstream prediction tasks.

Experimental Protocols and Benchmarking Methodologies

Standardized Evaluation Frameworks

The assessment of chemical language models requires rigorous, standardized protocols to ensure comparable and reproducible results. Key benchmarking frameworks include:

ChemBench: An automated framework for evaluating chemical knowledge and reasoning abilities of LLMs, containing over 2,700 question-answer pairs across diverse chemistry topics. This benchmark measures reasoning, knowledge, and intuition across undergraduate and graduate chemistry curricula, with human expert performance for comparison [30].

Tox21 and ClinTox Protocols: Standardized datasets and splitting strategies for evaluating toxicology predictions. The Tox21 dataset contains approximately 8,000 compounds with binary labels across 12 toxicity pathways, while ClinTox includes 1,484 FDA-approved and failed drugs. Standard practice employs scaffold splitting with 80:20 ratio to create distinct training and testing sets, ensuring models are evaluated on structurally distinct molecules [9].

MOSES and GuacaMol: Platforms for measuring the quality, diversity, and fidelity of generated molecules, assessing the ability of models to explore chemical space effectively. These benchmarks provide standardized metrics for comparing generative model performance [26].

Data Efficiency and Active Learning Protocols

A critical advantage of BERT-based models is their performance in data-scarce environments, which is common in chemical research. Experimental protocols for evaluating data efficiency typically involve:

Bayesian Active Learning: A principled framework that quantifies the utility of conducting experiments. The Bayesian Active Learning by Disagreement (BALD) acquisition function selects samples that maximize information gain about model parameters, while Expected Predictive Information Gain (EPIG) prioritizes samples expected to most improve predictive performance [9].
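For binary toxicity labels, the BALD score can be estimated from several stochastic forward passes as the entropy of the averaged prediction minus the average entropy of the individual predictions. The sketch below assumes those posterior samples are already available as an array of positive-class probabilities. How they are drawn (for example, from a Bayesian neural network head) is outside the sketch, and the function names are hypothetical.

```python
import numpy as np

def binary_entropy(p, eps=1e-12):
    """Entropy (in nats) of a Bernoulli distribution with parameter p."""
    p = np.clip(p, eps, 1 - eps)
    return -(p * np.log(p) + (1 - p) * np.log(1 - p))

def bald_scores(mc_probs):
    """BALD score per molecule.

    mc_probs has shape (n_posterior_samples, n_molecules).
    """
    mc_probs = np.asarray(mc_probs, dtype=float)
    predictive_entropy = binary_entropy(mc_probs.mean(axis=0))  # total uncertainty
    expected_entropy = binary_entropy(mc_probs).mean(axis=0)    # uncertainty within each sample
    return predictive_entropy - expected_entropy                # disagreement between samples

# Three candidate molecules, four posterior samples each (toy values).
mc_probs = np.array([
    [0.5, 0.9, 0.1],
    [0.5, 0.1, 0.1],
    [0.5, 0.9, 0.1],
    [0.5, 0.1, 0.1],
])
scores = bald_scores(mc_probs)
next_index = int(np.argmax(scores))
```

The first molecule is uncertain in every sample (all predictions are 0.5), so the samples agree and its BALD score is near zero. The second molecule is the one the samples disagree on, so it is selected for labeling.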

Progressive Sampling: Experiments where models are trained with progressively larger subsets of available data to measure learning efficiency. MolBERT demonstrated equivalent toxic compound identification with 50% fewer iterations compared to conventional active learning, highlighting its data efficiency [9].

Diagram 2: Active Learning Workflow for Data-Efficient Molecular Property Prediction showing the iterative process of model training, uncertainty estimation, and selective sample acquisition.

Successful implementation of chemical BERT models requires familiarity with key datasets, software tools, and computational resources. The following table outlines essential components of the molecular property prediction toolkit.

Table 3: Essential Research Reagents and Computational Tools for Chemical BERT Implementation

| Resource | Type | Primary Function | Application Context |

|---|---|---|---|

| Tox21 Dataset [9] | Chemical Dataset | Benchmark for toxicity prediction | Contains ~8,000 compounds with 12 toxicity pathway assays |

| ClinTox Dataset [9] | Chemical Dataset | Distinguishes FDA-approved from failed drugs | 1,484 compounds with clinical trial toxicity outcomes |

| ZINC Database [31] | Compound Library | Source of drug-like molecules for training | Provides commercially available compounds for virtual screening |

| SMILES Notation [26] | Molecular Representation | Text-based molecular encoding | Standard input format for sequence-based models like MolBERT |

| Molecular Graphs [26] | Molecular Representation | Graph-based molecular encoding | Nodes (atoms) and edges (bonds) for graph neural networks |

| ECFP Fingerprints [29] | Molecular Representation | Circular substructure fingerprints | Traditional baseline for molecular machine learning |

| OPSIN Tool [31] | Cheminformatics Software | IUPAC name parsing | Validates chemical name-to-structure conversions |

| Scaffold Splitting [9] | Data Splitting Method | Ensures evaluation on distinct molecular scaffolds | Prevents data leakage and tests generalization capability |

Future Directions and Research Opportunities

The field of chemical BERT models continues to evolve rapidly, with several promising research directions emerging:

Multimodal Integration: Future models will likely combine molecular structure with diverse data types, including scientific literature, experimental protocols, and spectral data. The development of "active" environments where LLMs interact with tools and data, rather than merely responding to prompts, represents a significant frontier [32] [33].

3D Structural Incorporation: While models like GEO-BERT have begun incorporating 3D conformational information, more sophisticated integration of spatial and dynamic molecular properties remains an open challenge. The high computational cost of 3D conformation generation currently limits widespread application [4] [29].

Reasoning Capabilities: Recent "reasoning models" such as OpenAI's o3-mini have demonstrated substantially improved chemical reasoning capabilities, correctly answering 28%-59% of questions on the ChemIQ benchmark compared to just 7% for GPT-4o [31]. This suggests that enhanced reasoning architectures will play a crucial role in future chemical AI systems.

Evaluation Rigor: The surprising performance of traditional fingerprints against sophisticated neural models highlights the need for more rigorous evaluation standards. Future research must address this benchmarking gap to ensure meaningful progress [29].

As chemical BERT models mature, they are poised to transform materials property prediction from a largely empirical process to a more rational, accelerated workflow—ultimately reducing the time and cost associated with traditional experimental approaches while expanding the explorable chemical space for drug discovery and materials design.

Building Predictive Power: Methodologies and Real-World Applications of BERT

The application of BERT architecture to molecular property prediction represents a significant evolution in cheminformatics, transitioning from traditional descriptor-based methods to sophisticated deep-learning models. Inspired by breakthroughs in natural language processing (NLP), researchers have adapted transformer-based models to interpret chemical structures as a specialized language, where sequences like SMILES (Simplified Molecular Input Line Entry System) serve as sentences and atoms or functional groups as words [34] [35]. This approach allows models to learn rich, contextual molecular representations from massive unlabeled datasets, capturing complex structural patterns and chemical rules without costly experimental data. The core premise is that pretraining on diverse chemical corpora enables models to develop fundamental chemical intuition, which can then be efficiently fine-tuned for specific property prediction tasks with limited labeled data [9] [36]. Within the broader thesis of BERT architecture for materials property prediction, these molecular pretraining strategies demonstrate how transfer learning can address data scarcity, improve generalization, and accelerate discovery timelines in pharmaceutical research and development.

Comparative Analysis of Molecular Pretraining Approaches

Molecular pretraining strategies have diversified significantly, each employing distinct architectural choices and learning objectives to capture chemical information. The following table summarizes major approaches and their performance characteristics.

Table 1: Comparison of Molecular Pretraining Strategies and Performance

| Model | Architecture | Pretraining Strategy | Key Innovation | Reported Performance Advantages |

|---|---|---|---|---|

| Standard BERT [9] | Transformer (SMILES) | Masked Language Modeling (MLM) | Basic molecular string representation | 50% fewer iterations needed for equivalent toxic compound identification on Tox21/ClinTox vs. conventional active learning [9] |

| MLM-FG [35] | Transformer (SMILES) | Functional Group-targeted Masking | Selectively masks chemically significant functional groups | Outperformed existing SMILES & graph models in 9/11 benchmark tasks; surpassed some 3D-graph models [35] |

| GEO-BERT [4] | Transformer (3D Graph) | MLM with 3D Geometry | Incorporates atom-atom, bond-bond, and atom-bond positional relationships | Demonstrated optimal performance on multiple benchmarks; successfully identified novel DYRK1A inhibitors (IC50: <1 μM) [4] |

| MoleVers [36] | Branching Encoder | Two-Stage: Self-supervised + Auxiliary Labels | Combines masked atom prediction, dynamic denoising, and inexpensive computational labels | SOTA on 20/22 low-data MPPW benchmark datasets; ranks second on remaining two [36] |

| ECFP (Baseline) [29] | Fixed Fingerprint | Rule-based substructure identification | Traditional circular fingerprint | Extensive benchmarking (25 models, 25 datasets) showed nearly all neural models had negligible or no improvement over ECFP baseline [29] |

The experimental data reveals several key trends. First, specialized masking strategies that incorporate chemical knowledge, such as MLM-FG's functional group masking, consistently outperform standard masked language modeling [35]. Second, the integration of 3D structural information, as demonstrated by GEO-BERT, provides significant performance gains by capturing spatial relationships critical to molecular properties and interactions [4]. Third, hybrid pretraining frameworks that combine multiple objectives—such as MoleVers' integration of self-supervised and supervised pretraining—show remarkable effectiveness in data-scarce scenarios common in real-world drug discovery [36].

However, recent benchmarking studies offer an important caveat. A comprehensive evaluation of 25 pretrained models across 25 datasets found that nearly all neural approaches showed negligible or no improvement over the traditional ECFP fingerprint baseline, with only the CLAMP model (also fingerprint-based) achieving statistically significant superiority [29]. This finding raises concerns about evaluation rigor in the field and suggests that the reported advantages of complex pretraining strategies require careful validation against simpler baselines.

Experimental Protocols and Methodologies

Data Preparation and Pretraining Corpus

Successful molecular pretraining begins with curating large-scale, diverse chemical datasets. Common sources include PubChem (containing over 100 million compounds), ZINC, and ChEMBL [35] [36]. The standard protocol involves extracting SMILES strings or 2D/3D molecular graphs from these databases. For SMILES-based models, data preprocessing includes canonicalization (standardizing the string representation) and tokenization, which can occur at the character level (individual atoms, bonds) or substructure level (using a learned vocabulary or chemically aware fragmentation) [34] [35]. For graph-based approaches, molecules are represented as topological graphs with atoms as nodes and bonds as edges, often with additional features for atom type, charge, hybridization, and bond type [4] [29].
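As a rough illustration of atom-level tokenization, the sketch below splits a SMILES string with a regular expression. The pattern and the function name `tokenize_smiles` are assumptions made for this example, not the tokenizer used by any of the cited models; it covers common organic-subset atoms, bracket atoms, bonds, branches, and ring closures only.

```python
import re
from typing import List

# Assumed pattern: bracket atoms first, then two-letter halogens, two-digit
# ring closures, single-letter atoms, bond/branch symbols, and ring digits.
SMILES_TOKEN_PATTERN = re.compile(
    r"(\[[^\]]+\]|Br|Cl|%\d{2}|[BCNOPSFI]|[bcnops]|[=#\-\+\\/:~@\?\*\$\.\(\)]|\d)"
)


def tokenize_smiles(smiles: str) -> List[str]:
    """Split a SMILES string into atom-level tokens."""
    tokens = SMILES_TOKEN_PATTERN.findall(smiles)
    # findall silently skips unmatched characters, so verify full coverage.
    if "".join(tokens) != smiles:
        raise ValueError(f"Unrecognized characters in SMILES: {smiles!r}")
    return tokens


if __name__ == "__main__":
    print(tokenize_smiles("CC(=O)Cl"))
    print(tokenize_smiles("c1ccccc1[N+](=O)[O-]"))
```

Keeping `Cl` and `Br` ahead of the single-letter atoms in the pattern prevents chlorine from being split into a carbon plus a stray character. A substructure-level tokenizer would replace this pattern with a learned vocabulary.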

Critical to evaluating generalization is the data splitting strategy. While random splitting is common, scaffold splitting—which partitions molecules based on their core Bemis-Murcko scaffolds—provides a more rigorous test by ensuring structurally distinct molecules appear in training and test sets [9] [35]. This method prevents artificially inflated performance from evaluating on molecules structurally similar to training examples and better simulates real-world drug discovery where novel scaffolds are frequently sought.
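The grouping logic behind a scaffold split can be sketched as follows. This minimal example assumes the Bemis-Murcko scaffold of each molecule has already been computed (for instance with a cheminformatics toolkit such as RDKit) and is passed in as a plain string; the function name `scaffold_split` and the largest-groups-first assignment rule are illustrative choices, not a protocol taken from the cited studies.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def scaffold_split(
    scaffold_of: Dict[str, str], test_fraction: float = 0.2
) -> Tuple[List[str], List[str]]:
    """Assign whole scaffold groups to train or test so no scaffold is shared."""
    groups = defaultdict(list)
    for smiles, scaffold in scaffold_of.items():
        groups[scaffold].append(smiles)

    # Largest groups first; ties broken by scaffold string for determinism.
    ordered = sorted(groups.items(), key=lambda kv: (-len(kv[1]), kv[0]))

    train_cutoff = (1.0 - test_fraction) * len(scaffold_of)
    train: List[str] = []
    test: List[str] = []
    for _, members in ordered:
        if len(train) + len(members) <= train_cutoff:
            train.extend(members)
        else:
            test.extend(members)
    return train, test


if __name__ == "__main__":
    # Hypothetical molecules mapped to precomputed scaffold strings.
    example = {
        "Cc1ccccc1": "c1ccccc1",
        "Oc1ccccc1": "c1ccccc1",
        "Nc1ccccc1": "c1ccccc1",
        "CC1CCCCC1": "C1CCCCC1",
        "OC1CCCCC1": "C1CCCCC1",
        "Cc1ccncc1": "c1ccncc1",
    }
    train, test = scaffold_split(example, test_fraction=0.5)
    print(len(train), len(test))
```

Because groups are assigned whole, the realized test fraction can deviate from the requested one when a few scaffolds dominate the dataset.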

Detailed Pretraining Methodologies

Table 2: Core Pretraining Objectives and Their Implementation

| Pretraining Objective | Mechanism | Chemical Knowledge Encoded | Implementation Example |
|---|---|---|---|
| Masked Language Modeling (MLM) | Randomly masks tokens in SMILES string; model predicts masked tokens | Contextual relationships between atoms/substructures in molecular sequences | Standard BERT: 15% masking rate; predicts original vocabulary tokens [9] |
| Functional Group Masking (MLM-FG) | Identifies and masks subsequences corresponding to functional groups | Critical chemical substructures (e.g., carboxylic acids, esters) determining molecular properties | MLM-FG: Parses SMILES, identifies functional groups via RDKit, masks 15% of FG tokens [35] |
| 3D Geometry Integration | Incorporates spatial distance/angle relationships between atoms | Three-dimensional molecular conformation critical for binding and activity | GEO-BERT: Uses atom-atom, bond-bond, atom-bond positional encodings from 3D conformers [4] |
| Dynamic Denoising | Adds noise to atom coordinates; model learns to denoise | Molecular force fields and structural stability principles | MoleVers: Applies Gaussian noise to coordinates; model predicts original equilibrium structure [36] |
| Two-Stage Pretraining | Stage 1: Self-supervised learning; Stage 2: Predicting computational labels | Transfers knowledge from inexpensive computational properties (e.g., DFT) to experimental properties | MoleVers: Stage 1: Masked atom prediction + denoising; Stage 2: Fine-tunes on auxiliary computational labels [36] |
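To make the first row of the table concrete, the following sketch applies random masking at a 15% rate to a tokenized SMILES string. It is a simplified illustration: the `[MASK]` placeholder and the function name `mask_tokens` are assumptions, and every selected position is replaced by the mask token, whereas BERT-style implementations often also substitute random or unchanged tokens at some selected positions.

```python
import random
from typing import List, Optional, Tuple

MASK_TOKEN = "[MASK]"  # assumed placeholder; real vocabularies define their own


def mask_tokens(
    tokens: List[str], mask_rate: float = 0.15, seed: Optional[int] = None
) -> Tuple[List[str], List[Optional[str]]]:
    """Return (masked tokens, labels); labels are None at unmasked positions."""
    if not tokens:
        return [], []
    rng = random.Random(seed)
    # Mask at least one token, never more than the sequence length.
    n_mask = min(len(tokens), max(1, round(mask_rate * len(tokens))))
    positions = rng.sample(range(len(tokens)), n_mask)

    masked = list(tokens)
    labels: List[Optional[str]] = [None] * len(tokens)
    for i in positions:
        labels[i] = tokens[i]   # prediction target: the original token
        masked[i] = MASK_TOKEN  # model input: the mask placeholder
    return masked, labels


if __name__ == "__main__":
    tokens = list("CCOCCNCCOCCNCCOCCNCC")  # 20 single-character tokens
    masked, labels = mask_tokens(tokens, mask_rate=0.15, seed=0)
    print(masked)
```

A functional-group variant would restrict `positions` to token spans identified as functional groups rather than sampling uniformly over the whole sequence.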

The workflow for implementing these pretraining strategies follows a systematic pipeline, visualized below.

Diagram 1: Molecular Pretraining Workflow

Evaluation Metrics and Benchmarking

Standardized evaluation is critical for comparing pretraining approaches. For classification tasks (e.g., toxicity prediction, activity classification), the Area Under the Receiver Operating Characteristic Curve (AUC-ROC) is the primary metric, measuring the model's ability to distinguish between positive and negative classes across threshold settings [9] [35]. For regression tasks (e.g., predicting binding affinity, solubility), Mean Absolute Error (MAE) and Root Mean Squared Error (RMSE) quantify the deviation between predicted and experimental values [35].
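For reference, the three metrics named above can be written in a few lines of plain Python. This is a minimal sketch for small inputs; the function names are chosen for this example, and the AUC-ROC is computed through its pairwise-ranking interpretation (the fraction of positive/negative pairs ranked correctly, counting ties as half).

```python
import math
from typing import Sequence


def roc_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """AUC-ROC as the probability that a positive outranks a negative."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC-ROC needs at least one positive and one negative")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def mae(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Mean Absolute Error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Root Mean Squared Error."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


if __name__ == "__main__":
    print(roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]))
    print(mae([1.0, 2.0, 3.0], [1.0, 2.0, 5.0]))
    print(rmse([1.0, 2.0, 3.0], [1.0, 2.0, 5.0]))
```

The pairwise loop is quadratic in the number of samples, so a rank-based implementation from an established library is preferable for full benchmark datasets. RMSE penalizes the single large error in the example more heavily than MAE does, which is why the two are usually reported together.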

Beyond predictive accuracy, Expected Calibration Error (ECE) measures how well the model's confidence scores align with actual accuracy, which is crucial for active learning applications where uncertainty estimation guides experimental design [9]. Benchmark datasets from MoleculeNet—including Tox21, ClinTox, HIV, BBBP, and others—provide standardized evaluation platforms [9] [35] [29]. Recent benchmarks like the Molecular Property Prediction in the Wild (MPPW) dataset, comprising 22 small datasets from ChEMBL with 50 or fewer training labels, better simulate real-world data scarcity [36].
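A common way to compute ECE is to bin predictions by confidence and average the gap between confidence and accuracy in each bin, weighted by bin size. The sketch below follows that recipe with equal-width bins; the choice of 10 bins and the function name are assumptions for illustration and may differ from the setup in the cited work.

```python
from typing import List, Sequence, Tuple


def expected_calibration_error(
    confidences: Sequence[float], correct: Sequence[bool], n_bins: int = 10
) -> float:
    """Weighted average of |accuracy - mean confidence| over confidence bins."""
    n = len(confidences)
    if n == 0:
        raise ValueError("ECE is undefined for an empty prediction set")

    bins: List[List[Tuple[float, bool]]] = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        # Equal-width bins over [0, 1]; a confidence of exactly 1.0 goes in the last bin.
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, ok))

    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += (len(members) / n) * abs(accuracy - avg_conf)
    return ece


if __name__ == "__main__":
    confidences = [0.9, 0.9, 0.9, 0.9, 0.6, 0.6]
    correct = [True, True, True, False, True, False]
    print(expected_calibration_error(confidences, correct))
```

In the example, the 0.9-confidence predictions are right 75% of the time and the 0.6-confidence predictions 50% of the time, so the model is overconfident in both bins and the ECE is the size-weighted mean of those two gaps.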

The Scientist's Toolkit: Essential Research Reagents

Implementing molecular pretraining strategies requires both computational tools and chemical knowledge resources. The following table details essential "research reagents" for conducting these experiments.

Table 3: Essential Research Reagents for Molecular Pretraining Experiments

| Resource Category | Specific Tools / Databases | Function in Pretraining Research |
|---|---|---|
| Chemical Databases | PubChem, ZINC, ChEMBL | Provide large-scale unlabeled molecular datasets for pretraining; source of experimental labels for fine-tuning [35] [36] |
| Cheminformatics Toolkits | RDKit, OpenBabel | Process molecular representations; convert between formats; identify functional groups; generate descriptors [35] |
| Deep Learning Frameworks | PyTorch, TensorFlow, Deep Graph Library (DGL) | Implement transformer and GNN architectures; manage pretraining and fine-tuning workflows [9] [35] |
| Molecular Representations | SMILES, SELFIES, Molecular Graphs | Standardized formats for representing chemical structures as model inputs [34] [35] |
| Benchmarking Suites | MoleculeNet, MPPW | Standardized datasets and evaluation protocols for comparing model performance [35] [36] [29] |
| Pretrained Models | GEO-BERT, MLM-FG, MoleVers | Available model weights for transfer learning; baselines for comparative studies [35] [36] [4] |

The relationship between these resources in a typical research workflow is illustrated below, showing how data flows from raw chemicals to validated predictions.

Diagram 2: Research Resource Integration

The pretraining landscape for molecular property prediction shows a clear evolution from generic masked language modeling toward chemically aware strategies that explicitly incorporate structural knowledge. Approaches that target functionally important substructures (MLM-FG), integrate 3D geometry (GEO-BERT), or combine multiple pretraining objectives (MoleVers) report performance advantages on standardized benchmarks [35] [36] [4]. Integrating these pretrained models with active learning frameworks further enhances their practical utility, enabling more efficient experimental design and compound prioritization in drug discovery pipelines [9].

However, the field faces critical challenges regarding evaluation rigor and practical utility. The surprising benchmarking result that most neural approaches fail to consistently outperform traditional fingerprints raises important questions about the true extent of progress in this domain [29]. Future research should prioritize (1) more rigorous evaluation against simple baselines, (2) standardization of benchmarking protocols to prevent data leakage, and (3) development of pretraining strategies that more effectively capture the fundamental principles of molecular structure-activity relationships. For researchers and drug development professionals, the current evidence suggests adopting a hybrid approach that leverages the strengths of both modern pretrained models and traditional chemical descriptors, while maintaining realistic expectations about the achievable performance gains in practical applications.

The accurate prediction of materials and molecular properties is a cornerstone of modern drug development and materials science. However, the field consistently grapples with the fundamental challenge of data sparsity; high-quality, annotated experimental data is often scarce and costly to obtain, creating a significant bottleneck for training robust machine learning models [37]. Within the broader context of BERT architecture research for materials property prediction, two innovative strategies have emerged as powerful solutions: multitask learning (MTL) and SMILES enumeration. Multitask learning improves generalization by leveraging information from multiple related tasks, thereby effectively amplifying the learning signal from limited data [38] [39]. Concurrently, SMILES enumeration acts as a powerful data augmentation technique, expanding the effective size of training sets by representing a single molecule with multiple valid text strings [40]. This guide provides an objective comparison of these approaches, detailing their experimental protocols, performance, and practical utility for researchers and scientists.

Multitask learning is a subfield of machine learning where multiple learning tasks are solved simultaneously, exploiting commonalities and differences across tasks to improve generalization and prediction accuracy for each individual task [39]. The central idea is that by learning tasks in parallel using a shared representation, the model can prevent overfitting and perform better on sparse data tasks. As Rich Caruana stated in his seminal 1997 work, MTL "improves generalization by using the domain information contained in the training signals of related tasks as an inductive bias" [39].
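One common way to formalize this shared-representation idea is hard parameter sharing, in which a single encoder feeds several task-specific heads. The objective below is a generic sketch of that setup, not a formula taken from the cited works; all symbols (the shared encoder $f_\theta$, task heads $g_{\phi_t}$, task weights $\lambda_t$, per-task losses $\ell_t$, and per-task dataset sizes $N_t$) are notation assumed for this illustration.

```latex
\min_{\theta,\,\{\phi_t\}_{t=1}^{T}} \;
\sum_{t=1}^{T} \lambda_t \,
\frac{1}{N_t} \sum_{i=1}^{N_t}
\ell_t\!\left( g_{\phi_t}\!\big(f_\theta(x_i^{(t)})\big),\; y_i^{(t)} \right)
```

Because $\theta$ receives gradients from every task, a task with few labels (small $N_t$) still benefits from features shaped by the data-rich tasks, which is the inductive-bias effect Caruana describes.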

Key Methodologies and Optimization Approaches

Several methodological frameworks have been developed to implement MTL effectively:

- Task Grouping and Overlap: Information can be shared selectively across tasks. Tasks may be grouped in a hierarchy or related according to a learned metric, where similarity in an underlying parameter basis indicates relatedness [39].

- Multi-task Optimization: This is inherently a multi-objective optimization problem. Modern approaches include Multi-task Bayesian Optimization, which builds multi-task Gaussian process models to capture inter-task dependencies, and Evolutionary Multi-tasking, which uses population-based search algorithms to progress multiple optimization tasks simultaneously through genetic transfer [39].

- Direct Metric Optimization: Some methods, such as those based on the Alternating Direction Method of Multipliers (ADMM), directly optimize evaluation metrics for a family of MTL problems by combining a regularizer on the weight matrix with a sum of structured hinge losses [41].

Experimental Evidence in Materials Science

The PolyQT (Polymer Quantum-Transformer) model illustrates an MTL approach applied to polymer informatics. This hybrid architecture combines Quantum Neural Networks (QNNs) with a Transformer to address sparse data challenges [37]. In prediction experiments covering six key polymer properties, PolyQT outperformed all benchmarked classical models; the reported R² values include 0.85, 0.77, 0.85, 0.83, and 0.92 for ionization energy, dielectric constant, glass transition temperature, refractive index, and polymer density, respectively [37]. Its performance also remained robust when trained on reduced subsets of the data (40%, 60%, and 80%), supporting MTL's utility in data-limited scenarios [37].

SMILES Enumeration: Augmenting Molecular Representations

SMILES (Simplified Molecular-Input Line-Entry System) is a line notation for representing molecular structures as text strings. A single molecule can be represented by multiple, equally valid SMILES strings due to different possible atom ordering during the traversal of the molecular graph [40]. SMILES enumeration, also known as randomized SMILES, leverages this property as a powerful data augmentation technique.

Implementation and Impact on Model Generalization

In practice, models are trained on a different SMILES representation of each molecule in every epoch. For example, a model trained on one million molecules for 300 epochs would be exposed to approximately 300 million different randomized SMILES, vastly increasing the effective diversity of the training data [40]. Benchmark studies report that models trained on randomized SMILES generalize better than those trained on canonical (unique) SMILES: they generate chemical spaces that are more uniform, complete, and closed, representing the target chemical space more accurately [40].
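The per-epoch resampling described above can be sketched as a small generator that takes the randomizer as a pluggable function. Everything named here is hypothetical: `epoch_stream` is an illustrative helper, and `reverse_linear_chain` is a toy randomizer that is valid only for unbranched chains of single-letter atoms joined by single bonds (where reading the chain backwards gives the same molecule). A real pipeline would plug in a toolkit routine instead, for example RDKit's `Chem.MolToSmiles` with `doRandom=True`.

```python
import random
from typing import Callable, Iterator, List, Sequence


def reverse_linear_chain(smiles: str, rng: random.Random) -> str:
    """Toy randomizer: flips an unbranched single-bond chain such as 'CCO'."""
    if not smiles or any(ch not in "CNOS" for ch in smiles):
        raise ValueError("toy randomizer only supports chains of C, N, O, S")
    return smiles[::-1] if rng.random() < 0.5 else smiles


def epoch_stream(
    molecules: Sequence[str],
    n_epochs: int,
    randomize: Callable[[str, random.Random], str],
    seed: int = 0,
) -> Iterator[List[str]]:
    """Yield one freshly randomized copy of the training set per epoch."""
    rng = random.Random(seed)
    for _ in range(n_epochs):
        yield [randomize(smiles, rng) for smiles in molecules]


if __name__ == "__main__":
    for epoch, batch in enumerate(epoch_stream(["CCO", "CCN", "CCCS"], 3, reverse_linear_chain)):
        print(epoch, batch)
    # Strings presented in the article's example: molecules x epochs.
    print(1_000_000 * 300)
```

The molecules-times-epochs product counts the strings presented to the model; the number of distinct strings can be lower, since small molecules admit only a few valid SMILES.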

A counter-intuitive finding is that the ability of language models to generate invalid SMILES is beneficial rather than detrimental [42]. Invalid SMILES are typically sampled with significantly lower likelihoods than valid SMILES, so filtering them out acts as an intrinsic self-corrective mechanism that removes low-quality samples [42]. Enforcing 100% validity, as done with alternative representations like SELFIES, can introduce structural biases and impair a model's ability to learn the true data distribution and generalize to unseen chemical space [42].

Performance Comparison: Quantitative Benchmarks

The following tables summarize experimental data comparing the performance of these and other related approaches on standardized benchmarks.

Table 1: Performance Comparison of Cross-Modal Knowledge Transfer on LLM4Mat-Bench (Selected Tasks)

| Predictive Task | SOTA Existing Model (MAE) | SOTA Presented Model (MAE) | Performance Boost | Best-Performing Architecture |

|---|---|---|---|---|