Beyond Single-Omics: A Strategic Framework for Validating Microbiome Findings in Biomedical Research

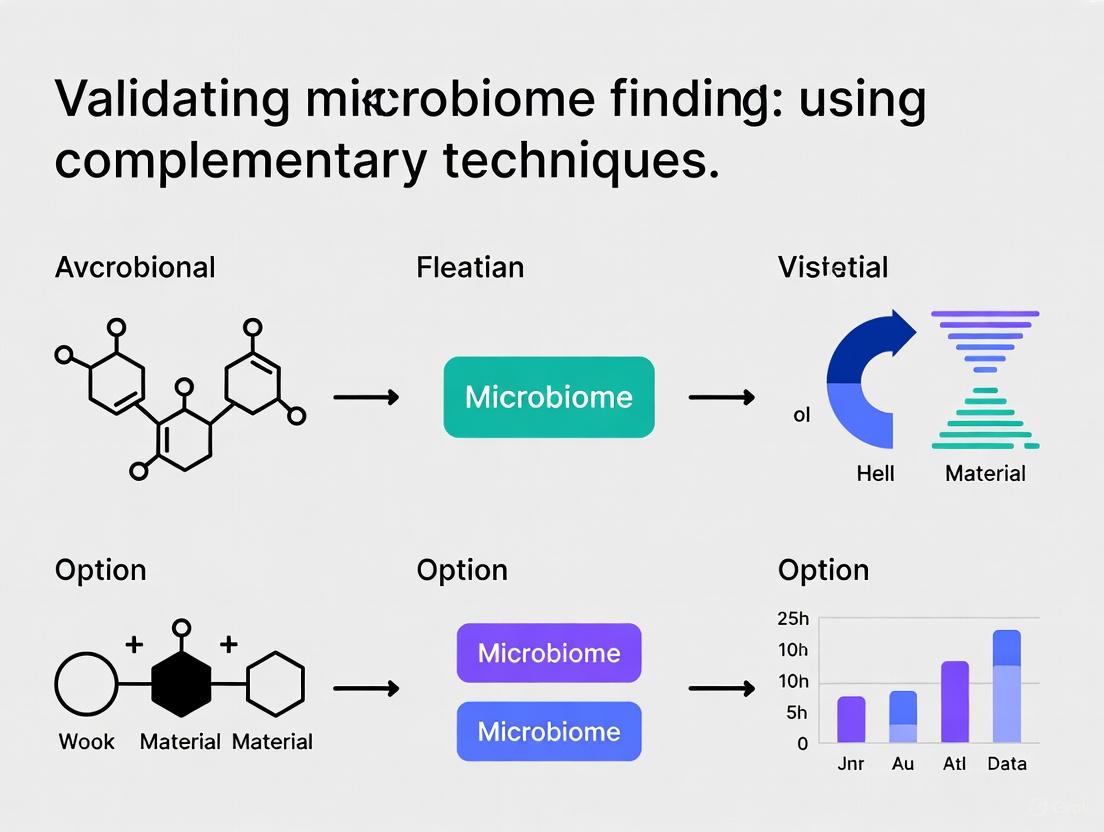

Abstract

This article provides a comprehensive framework for researchers and drug development professionals to validate microbiome findings through complementary techniques. It covers the foundational rationale for multi-method validation, benchmarks current integrative methodologies for microbiome-metabolome data, addresses key troubleshooting and optimization challenges in pipeline reproducibility, and outlines robust comparative and validation strategies. By synthesizing the latest research, including performance benchmarks of 19 integrative methods and new standardization tools like the NIST reference material, this guide aims to enhance the rigor, reproducibility, and translational potential of microbiome science in clinical and pharmaceutical applications.

The Critical Imperative: Why Multi-Technique Validation is Non-Negotiable in Microbiome Science

The human microbiome represents one of the most promising frontiers in modern medicine, with its manipulation offering potential pathways to addressing conditions ranging from gastrointestinal disorders to cancer and antibiotic resistance [1]. The global microbiome market reflects this potential, projected to grow from $0.62 billion in 2024 to $1.52 billion by 2030 [2]. Similarly, the microbiome diagnostics market is expected to reach $391.33 million by 2031, expanding at a CAGR of 13.4% [3]. Yet, beneath this promise lies a fundamental challenge: a reproducibility crisis rooted in the complex, dynamic nature of microbial communities and methodological inconsistencies that undermine the translation of research findings into reliable clinical applications. This crisis carries high stakes for drug development professionals, clinicians, and patients awaiting novel treatments. This guide examines the sources of this crisis and outlines strategies for validating microbiome findings through complementary techniques that enhance reproducibility and foster confidence in microbiome-based science.

The Complex Landscape of Microbiome-Based Products

Microbiome-based therapies exist on a spectrum from minimally manipulated ecosystems to highly characterized single-strain products, each with distinct reproducibility considerations [4].

Table 1: Spectrum of Microbiome-Based Therapies and Reproducibility Challenges

| Therapy Category | Description | Examples | Key Reproducibility Challenges |
|---|---|---|---|
| Microbiota Transplantation (MT) | Transfer of minimally manipulated microbial communities | Faecal Microbiota Transplantation (FMT) | Donor variability, undefined composition, batch consistency |
| Whole-Ecosystem-Based Medicinal Products | Industrially manufactured complex ecosystems from human microbiome samples | Rebyota (for rCDI) | Standardizing complex communities, quality control of diverse taxa |
| Rationally Designed Ecosystem-Based Products | Co-fermentation of multiple selected strains to create controlled ecosystems | Products in development containing dozens of strains | Process validation for co-fermentation, functional consistency |
| Live Biotherapeutic Products (LBPs) | Defined strains grown separately and blended | VOWST (for rCDI), single or multi-strain products | Establishing clonal cell banks, ensuring viability and potency |

The regulatory landscape for these products is evolving, with the European Medicines Agency (EMA) and U.S. Food and Drug Administration (FDA) working to adapt frameworks for evaluating these complex therapies [4]. The first approved microbiome-based medicinal products, Rebyota and VOWST, both for preventing recurrent Clostridioides difficile infection (rCDI), mark a transformative shift but also highlight the challenges in standardizing complex biological products [4].

Technical and Methodological Variability

Substantial technical variability in microbiome analysis protocols introduces significant noise and complicates cross-study comparisons. Sample collection methods (including timing, storage conditions, and collection tools), DNA extraction protocols, sequencing platforms, and bioinformatic processing pipelines all contribute to variability that can obscure true biological signals [5]. Studies have revealed substantial inter-laboratory variation in metagenomic outputs, prompting initiatives like the National Institute of Standards and Technology (NIST) human gut microbiome reference materials to improve consistency [3].

Sources of Microbiome Reproducibility Crisis

Biological Complexity and Dynamic Nature

The microbiome is inherently variable, both between individuals and within the same individual over time. Factors including dietary habits, medication use (particularly antibiotics), circadian rhythms, and environmental exposures can significantly alter microbial composition [5]. This biological dynamism complicates efforts to develop diagnostic tools based on static microbiome profiles. For instance, the ROSCO-CF trial revealed substantial interindividual variability in lung microbiomes among cystic fibrosis patients, despite all participants sharing the same clinical diagnosis and chronic Pseudomonas aeruginosa colonization [6].

Analytical and Interpretive Challenges

Many analytical approaches in microbiome research oversimplify complex microbial ecosystems. The Firmicutes-to-Bacteroidetes ratio, while commonly used, risks overlooking the complexity of microbial ecosystems and can lead to misleading interpretations [5]. Additionally, the compositional nature of microbiome data (where relative abundances sum to 100%) presents statistical challenges that, if not properly addressed through appropriate transformations like centered log-ratio (CLR) or isometric log-ratio (ILR), can generate spurious correlations [7].
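
The centered log-ratio transformation mentioned above can be illustrated with a minimal sketch. The pseudocount of 0.5 and the example counts are assumptions chosen for illustration; the source does not prescribe a zero-handling strategy, and real pipelines may prefer model-based zero replacement.

```python
import math

def clr(counts, pseudocount=0.5):
    """Centered log-ratio transform of one sample's taxon counts.

    The pseudocount (an assumption, not from the source) avoids log(0)
    for taxa that were not observed in the sample.
    """
    logs = [math.log(c + pseudocount) for c in counts]
    mean_log = sum(logs) / len(logs)          # log of the geometric mean
    return [value - mean_log for value in logs]

# Hypothetical read counts for four taxa in a single sample.
sample = [120, 30, 0, 850]
transformed = clr(sample)
print([round(v, 3) for v in transformed])
```

CLR values sum to zero within each sample and express every taxon relative to the sample's geometric mean, which is why correlations computed on them are less distorted by the constant-sum constraint than correlations on raw proportions.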

Validating Findings with Complementary Techniques

Multi-Omics Integration Strategies

Integrating multiple omics layers provides a powerful approach to validating microbiome findings through convergent evidence. A comprehensive benchmark study evaluated nineteen integrative methods for linking microbiome and metabolome data, identifying optimal strategies for different research questions [7].

Table 2: Benchmark Performance of Microbiome-Metabolite Integration Methods

| Research Goal | Best-Performing Methods | Key Strengths | Data Requirements |
|---|---|---|---|
| Global Association Testing | MMiRKAT, Mantel Test | Controls false positives, detects overall correlation | Paired microbiome-metabolome matrices |
| Data Summarization | sPLS, MOFA2 | Captures shared variance, enables visualization | Large sample sizes for stable components |
| Individual Association Detection | Maaslin2, SparCC | Identifies specific microbe-metabolite relationships | Multiple testing correction needed |
| Feature Selection | sCCA, LASSO | Identifies stable, non-redundant feature sets | High-dimensional data with collinearity |

The benchmark analysis determined that multi-omics factor analysis (MOFA2) and sparse Partial Least Squares (sPLS) were particularly effective for data summarization, while Maaslin2 excelled at identifying robust individual associations between specific microorganisms and metabolites [7].

Multi-Omics Integration Workflow

Complementary Experimental Protocols

Protocol 1: 16S rRNA Gene Sequencing with Metabolite Integration

Purpose: To link microbial community structure with metabolic output while addressing compositionality.

Methodology:

- Sample Collection: Collect stool samples using standardized kits with stabilizers, documenting timing relative to food intake and medication [5].

- DNA Extraction: Use mechanical lysis with bead-beating for comprehensive cell disruption, including spores [5].

- Library Preparation: Amplify the V4 region of the 16S rRNA gene using dual-indexed primers with the following cycling conditions: 94°C for 3 minutes; 30 cycles of 94°C for 45 s, 50°C for 60 s, and 72°C for 90 s; and a final extension at 72°C for 10 minutes [6].

- Sequencing: Perform paired-end sequencing (2×250 bp) on an Illumina MiSeq platform with a 10% PhiX spike-in [6].

- Bioinformatic Processing: Process sequences using DADA2 for error correction, ASV inference, and chimera removal. Transform abundance data using centered log-ratio (CLR) transformation for downstream correlation analysis [7].

- Metabolite Profiling: Analyze the same samples using LC-MS metabolomics with internal standards [7].

- Integration: Apply Maaslin2 to identify robust microbe-metabolite associations while controlling for confounders [7].

Protocol 2: Longitudinal Microbial Dynamics Analysis

Purpose: To capture temporal microbial coordination in response to interventions.

Methodology:

- Study Design: Collect multiple samples per participant over time (pre-, during, and post-intervention) [6].

- Sequencing: Use shotgun metagenomics for strain-level resolution and functional profiling.

- Quality Control: Include reference materials (NIST gut microbiome standard) to monitor technical variability [3].

- Analysis: Apply the non-parametric microbial interdependence test (NMIT) to evaluate temporal coordination of microbial taxa within each subject [6].

- Validation: Integrate with metabolomic data using sparse Canonical Correlation Analysis (sCCA) to identify stable associations between microbial features and metabolic pathways [7].
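
The NMIT step in the protocol above can be sketched roughly as follows. This is a simplified illustration, not the reference implementation: it assumes Pearson correlation as the within-subject interdependence measure and the Frobenius norm as the between-subject distance, and the subject IDs and time series are hypothetical. The resulting distances would normally be passed to a permutation-based test such as PERMANOVA, which is omitted here.

```python
import math

def pearson(x, y):
    """Pearson correlation; constant series are treated as uncorrelated."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / math.sqrt(sxx * syy)

def interdependence_matrix(series):
    """Taxon-by-taxon correlation over time for one subject."""
    k = len(series)
    return [[pearson(series[i], series[j]) for j in range(k)] for i in range(k)]

def frobenius_distance(a, b):
    """Frobenius norm of the difference between two square matrices."""
    k = len(a)
    return math.sqrt(sum((a[i][j] - b[i][j]) ** 2
                         for i in range(k) for j in range(k)))

def nmit_distances(subjects):
    """subjects: dict mapping subject ID -> list of per-taxon time series."""
    mats = {s: interdependence_matrix(ts) for s, ts in subjects.items()}
    ids = sorted(mats)
    return {(p, q): frobenius_distance(mats[p], mats[q])
            for i, p in enumerate(ids) for q in ids[i + 1:]}

# Hypothetical data: two taxa measured at four time points per subject.
subjects = {
    "s1": [[1, 2, 3, 4], [2, 4, 6, 8]],   # taxa rise together
    "s2": [[1, 2, 3, 4], [8, 6, 4, 2]],   # taxa move in opposite directions
    "s3": [[1, 2, 3, 4], [1, 3, 5, 7]],   # taxa rise together
}
distances = nmit_distances(subjects)
print(distances)
```

Subjects whose taxa co-vary in the same way over time end up close together, regardless of their absolute abundance levels, which is the property that makes the approach useful under high interindividual variability.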

Essential Research Reagent Solutions

Table 3: Essential Research Reagents for Reproducible Microbiome Research

| Reagent/Category | Specific Examples | Function & Importance | Considerations for Selection |
|---|---|---|---|
| Sample Stabilization Kits | DNA/RNA Shield, RNAlater | Preserves microbial composition at collection, reduces pre-analytical variability | Compatibility with downstream applications, stability during transport |
| Standardized DNA Extraction Kits | QIAamp PowerFecal Pro, DNeasy PowerLyzer | Comprehensive lysis of diverse microbial cells, including difficult-to-lyse species | Inclusion of bead-beating, extraction efficiency for Gram-positive bacteria |
| Reference Materials | NIST Human Gut Microbiome RM, ZymoBIOMICS Microbial Community Standards | Controls for technical variability, enables cross-laboratory comparisons | Representation of relevant microbial taxa, well-characterized composition |
| 16S rRNA Primer Panels | 515F/806R (V4), 27F/338R (V1-V2) | Amplification of target regions for community profiling | Coverage of relevant taxa, compatibility with established bioinformatic pipelines |
| Sequencing Standards | PhiX Control v3, Mock Microbial Communities | Monitoring sequencing performance, error rates | Inclusion in every run, appropriate concentration for platform |
| Bioinformatic Tools | DADA2, QIIME 2, Maaslin2, MOFA2 | Data processing, quality control, and integrative analysis | Reproducibility of workflow, active community support, documentation |

Case Study: ROSCO-CF Trial - Success Through Multi-Method Validation

The ROSCO-CF trial evaluating R-roscovitine in cystic fibrosis provides a compelling case study in implementing complementary approaches. While the trial found no direct impact on Pseudomonas aeruginosa using conventional endpoints, multi-faceted microbiome analysis revealed important biological insights [6].

Methodological Integration:

- Primary Analysis: 16S rDNA sequencing of sputum and fecal samples collected before and after treatment.

- Community-Level Assessment: Alpha and beta diversity metrics showed overall stability but suggested dose-dependent trends in Bray-Curtis dissimilarity (p=0.052) [6].

- Temporal Dynamics: NMIT analysis revealed emerging patterns in microbial coordination at higher doses (F=1.18, R²=0.20, p=0.061), indicating personalized restructuring of microbial communities [6].

- Taxon-Level Analysis: Maaslin2 identified specific taxa with dose-responsive abundance changes: Tannerella (coefficient=0.69, p<0.01) and Granulicatella elegans (coefficient=0.75, p<0.01) increased with dose, while Streptococcus decreased (coefficient=-0.58, p=0.02) [6].

This layered analytical approach detected signals that would have been missed by conventional methods alone, highlighting how complementary techniques can reveal biologically meaningful effects despite high interindividual variability [6].

Emerging Solutions and Standards

The field is moving toward improved standardization through several key developments:

- Reference Materials: NIST's human gut microbiome reference materials aim to reduce inter-laboratory variability [3].

- Reporting Guidelines: The STORMS checklist provides a framework for transparent reporting of microbiome studies [5].

- Advanced Technologies: AI-driven analytics and organ-on-chip systems are emerging as tools for precision interventions [8].

- Regulatory Science: Evolving frameworks specifically address the unique challenges of microbiome-based therapies [4].

The reproducibility crisis in microbiome-based diagnostics and therapeutics stems from interconnected technical, biological, and analytical challenges. However, strategic implementation of complementary techniques—particularly multi-omics integration, standardized protocols, and appropriate statistical methods—provides a pathway toward more robust and translatable findings. The ROSCO-CF trial demonstrates how layered analytical approaches can detect meaningful biological signals despite high variability [6]. As the field matures, commitment to methodological rigor, transparent reporting, and validation through convergent evidence will be essential for realizing the full potential of microbiome-based medicine to address pressing human health challenges.

The field of microbiome research has been built on a foundation of correlative observations, with sequencing studies revealing countless associations between microbial communities and host health. However, a significant challenge persists: correlation does not imply causation. Without establishing causal relationships, microbiome findings cannot be reliably translated into clinical interventions or therapeutic applications. This guide compares the performance of various techniques and methodologies that, when used complementarily, enable researchers to move beyond correlation toward establishing mechanistic causation in microbiome research.

The Methodological Spectrum: From Observation to Causation

No single technique can fully unravel the complex causal relationships between host and microbiome. The most robust findings emerge from an iterative approach that leverages multiple complementary methodologies [9]. The table below summarizes the primary techniques used across this validation spectrum.

Table 1: Methodological Approaches for Establishing Causality in Microbiome Research

| Method Category | Primary Function | Causation Strength | Key Limitations |
|---|---|---|---|
| Observational Studies | Identify microbiome-disease associations | Low | Vulnerable to confounding; reveals correlation only [10] |
| Multi-omics Integration | Generate hypotheses about mechanisms | Low-Medium | Computational challenges; requires validation [11] |
| In Vitro Models | Initial screening under controlled conditions | Medium | Lack host physiology and immune responses [12] |
| Ex Vivo Models | Study host-microbiome interactions at cellular level | Medium | Lack full microenvironment; long-term culture difficulties [12] |
| Animal Models | Establish cause-effect relationships in living systems | High | Limited translational potential to humans [12] |
| Causal ML & Econometric Methods | Control for confounding in high-dimensional data | Medium-High | Complex implementation; requires specialized expertise [10] |
| Human Clinical Trials | Validate efficacy in human populations | Highest | Ethical, regulatory, and economic challenges [12] |

Benchmarking Integrative Analytical Approaches

The computational integration of different data types represents the first step toward identifying potential causal mechanisms. A systematic benchmark of integrative strategies for microbiome-metabolome data has evaluated nineteen different methods for disentangling relationships between microorganisms and metabolites [11]. These methods address distinct research goals including global associations, data summarization, individual associations, and feature selection.

Table 2: Performance Comparison of Select Integrative Methods for Multi-omics Data

| Method Type | Representative Methods | Best Use Cases | Key Performance Findings |
|---|---|---|---|
| Global Association | CCA, PLS | Identifying overall relationships between omic layers | Effective for data summarization; validated on real gut microbiome datasets [11] |
| Feature Selection | Sparse PLS, MINT | Identifying specific microbial-metabolite links | Addresses key research goals; performance varies by data type [11] |
| Causal Machine Learning | Double ML, Causal Forests | Controlling for high-dimensional confounders | Quantifies heterogeneous treatment effects; robust to confounding [10] |
| Experimental Design Integration | GLM-ASCA | Analyzing complex experimental factors | Effectively separates effects of treatment, time, and interactions in multivariate data [13] |

Experimental Models for Causation: A Comparative Analysis

Each experimental model system offers distinct advantages and limitations for establishing causal relationships. The selection of an appropriate model depends on the research question, with the most robust conclusions often drawn from concordant results across multiple systems [12].

Table 3: Performance Comparison of Preclinical Models for Establishing Causality

| Model System | Key Strengths | Principal Limitations | Causality Evidence Level |
|---|---|---|---|
| In Vitro Continuous Culture | High reproducibility; controlled manipulation of microbial communities | No host information or physiology | Low-medium; identifies microbial mechanisms only [12] |
| Organoids | Recapitulates cellular architecture and functionality of native tissues | Simplified structure lacks full organ context; technical limitations | Medium; demonstrates host-cell level interactions [12] |
| Organ-on-a-Chip | Dynamic propagation with physiological relevance; multiple cell types | High costs; specialized equipment requirements | Medium; incorporates some physiological complexity [12] |
| Germ-free Animals | Direct testing of microbial causality via colonization | Limited translational potential to humans | High; establishes cause-effect in living systems [12] |
| Human Microbiota-Associated (HMA) Mice | More human-relevant microbial communities | Does not fully replicate human gut microbiome | Medium-high; improves translational relevance [12] |

Detailed Experimental Protocols

Protocol 1: Cross-Cohort Microbiome-Wide Association Study

A recent hypertension study demonstrates a robust approach for identifying consistent microbial signatures across populations [14]:

Cohort Selection: Recruit 159 hypertensive patients and 101 healthy controls across two distinct geographical regions (Beijing and Dalian), excluding anyone who used antibiotics in the past 3 months.

Sample Processing:

- Extract DNA from fecal samples using standardized kits

- Perform quality control using fastp v0.20.1 with multiple filtering steps:

  - Remove reads shorter than 90 bp

  - Discard reads with an average Phred quality score below 20

  - Eliminate reads with average complexity below 30%

  - Remove unpaired reads
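
The filtering thresholds listed above can be expressed as a short sketch. This is an illustration of the stated rules rather than fastp's own implementation: it assumes Phred+33 quality encoding and defines complexity as the percentage of bases that differ from the following base, and the function names and example reads are hypothetical.

```python
def mean_phred(quality, offset=33):
    """Average Phred score of a quality string (Phred+33 assumed)."""
    return sum(ord(ch) - offset for ch in quality) / len(quality)

def complexity(seq):
    """Percentage of bases that differ from the next base."""
    if len(seq) < 2:
        return 0.0
    changes = sum(1 for a, b in zip(seq, seq[1:]) if a != b)
    return 100.0 * changes / (len(seq) - 1)

def passes_qc(seq, quality, min_len=90, min_qual=20, min_complexity=30.0):
    """Apply the length, quality, and complexity thresholds to one read."""
    if len(seq) < min_len:
        return False
    if mean_phred(quality) < min_qual:
        return False
    return complexity(seq) >= min_complexity

def filter_pairs(pairs):
    """Keep a pair only if both mates pass, so no unpaired reads remain."""
    return [(r1, r2) for r1, r2 in pairs if passes_qc(*r1) and passes_qc(*r2)]

# Hypothetical reads as (sequence, quality string) tuples.
good = ("ACGT" * 25, "I" * 100)           # 100 bp, Q40, fully complex
short = ("ACGT" * 10, "I" * 40)           # fails the 90 bp length filter
low_quality = ("ACGT" * 25, "#" * 100)    # Q2, fails the quality filter
homopolymer = ("A" * 100, "I" * 100)      # 0% complexity

kept = filter_pairs([(good, good), (good, short), (low_quality, good)])
print(len(kept))
```

Dropping the whole pair when either mate fails mirrors the "remove unpaired reads" step, since paired-end aligners generally expect both mates to be present.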

Taxonomic Profiling:

- Align high-quality metagenomic reads to 4,644 reference prokaryotic genomes from the Unified Human Gastrointestinal Genome (UHGG) database using Bowtie2

- Apply a stringent 95% nucleotide similarity threshold for taxonomic precision

- Perform batch effect correction using the MMUPHin pipeline

Statistical Analysis:

- Calculate alpha diversity (Shannon's index, Simpson's index) using the vegan package in R

- Perform multivariate analyses including Principal Coordinate Analysis (PCoA)

- Identify differentially abundant species with combined P < 0.05, q = 0.25 threshold
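
For readers unfamiliar with the alpha diversity indices named above, a minimal sketch of the two formulas follows. It assumes the natural-log Shannon index and the Simpson index reported as one minus the sum of squared proportions, which is how I understand vegan's defaults; the counts are hypothetical.

```python
import math

def shannon(counts):
    """Shannon index H' = -sum(p * ln p) over observed taxa."""
    total = sum(counts)
    props = [c / total for c in counts if c > 0]
    return -sum(p * math.log(p) for p in props)

def simpson(counts):
    """Simpson index reported as 1 - sum(p^2)."""
    total = sum(counts)
    return 1.0 - sum((c / total) ** 2 for c in counts)

# Hypothetical species counts for two samples.
even_sample = [10, 10, 10, 10]     # four equally abundant species
skewed_sample = [37, 1, 1, 1]      # one dominant species

print(round(shannon(even_sample), 3), round(simpson(even_sample), 3))
print(round(shannon(skewed_sample), 3), round(simpson(skewed_sample), 3))
```

Both indices are highest when species are evenly distributed and fall as a single species dominates, so they summarize richness and evenness in one number per sample.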

This protocol successfully identified 61 bacterial species with significantly different abundance between hypertensive patients and controls across both regions, with bacterium-based classification models achieving AUCs >0.70 in cross-cohort validation [14].

Protocol 2: Causal Machine Learning with Double ML

For estimating causal effects from observational data, Double Machine Learning (Double ML) provides a framework that adjusts for high-dimensional measured confounders [10]:

Data Preparation:

- Define treatment variable (e.g., microbial abundance)

- Specify outcome variable (e.g., disease status)

- Identify potential confounders (diet, medication, comorbidities)

Model Specification:

- Partition data into cross-fitting samples to avoid overfitting

- Use flexible ML algorithms (random forests, gradient boosting) to estimate:

  - The conditional expectation of the outcome given confounders

  - The conditional expectation of the treatment given confounders (the propensity score when the treatment is binary)

Causal Effect Estimation:

- Compute residuals of outcome and treatment

- Regress outcome residuals on treatment residuals to obtain the causal estimate

- Calculate confidence intervals via bootstrap or asymptotic approximations

Validation:

- Perform sensitivity analyses to assess robustness to unmeasured confounding

- Test for effect heterogeneity across subpopulations using causal forests

This approach has been successfully applied to quantify microbiome-mediated treatment effects while controlling for numerous potential confounders that plague traditional observational studies [10].
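
The cross-fitting and residual-on-residual steps described above can be sketched on simulated data. To stay dependency-free, this example swaps in ordinary least squares on a single confounder for the random forests or gradient boosting named in the protocol; the data-generating coefficients, the variable names, and the fold assignment are all assumptions made for illustration.

```python
import random

def ols(x, y):
    """Intercept and slope of a simple least-squares line y = a + b*x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    b = (sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
         / sum((xi - mx) ** 2 for xi in x))
    return my - b * mx, b

def double_ml(y, d, x, n_folds=5):
    """Partially linear Double ML with cross-fitting.

    Nuisance models are fit on the training folds and used to residualize
    the held-out fold; linear learners stand in for flexible ML here.
    """
    n = len(y)
    y_res, d_res = [0.0] * n, [0.0] * n
    for k in range(n_folds):
        train = [i for i in range(n) if i % n_folds != k]
        test = [i for i in range(n) if i % n_folds == k]
        ay, by = ols([x[i] for i in train], [y[i] for i in train])
        ad, bd = ols([x[i] for i in train], [d[i] for i in train])
        for i in test:
            y_res[i] = y[i] - (ay + by * x[i])   # outcome residual
            d_res[i] = d[i] - (ad + bd * x[i])   # treatment residual
    # Regress outcome residuals on treatment residuals (no intercept).
    return (sum(dr * yr for dr, yr in zip(d_res, y_res))
            / sum(dr * dr for dr in d_res))

# Simulated cohort: x confounds both treatment d and outcome y.
rng = random.Random(0)
true_effect = 0.5
x = [rng.gauss(0, 1) for _ in range(2000)]                  # e.g. a diet score
d = [0.8 * xi + rng.gauss(0, 1) for xi in x]                # e.g. CLR abundance
y = [true_effect * di + 1.5 * xi + rng.gauss(0, 1) for di, xi in zip(d, x)]

theta_hat = double_ml(y, d, x)
naive_slope = ols(d, y)[1]     # ignores the confounder entirely
print(round(theta_hat, 2), round(naive_slope, 2))
```

In this simulation the naive regression absorbs the confounder's influence and overstates the effect, while the residualized estimate lands near the simulated value. The estimate is only as good as the set of measured confounders, which is why the sensitivity analyses listed under Validation remain necessary.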

Visualizing the Iterative Causation Workflow

The following diagram illustrates the integrated, iterative approach for establishing causal relationships in microbiome research, from initial observations to clinical translation:

Figure 1: Iterative Workflow for Establishing Microbiome Causality

Signaling Pathways in Microbiome-Host Interactions

The mechanistic gap between microbial association and host physiology is bridged by understanding specific signaling pathways. The following diagram illustrates key pathways implicated in microbiome-related diseases, such as hypertension, based on cross-cohort validation studies [14]:

Figure 2: Validated Microbiome-Host Signaling Pathways in Hypertension

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Research Reagents and Platforms for Causal Microbiome Research

| Reagent/Platform | Function | Application Context |
|---|---|---|
| UHGG Database | Reference prokaryotic genomes for taxonomic profiling | Shotgun metagenomic analysis; enables precise taxonomic assignment [14] |
| MetaPhlAn4 Database | Species-level taxonomic profiling | Microbial community analysis; distinguishes closely related species [14] |
| Custom Fungal Genome Catalog | Fungal reference genomes for mycobiome analysis | Cross-kingdom microbiome studies; enables fungal biomarker discovery [14] |
| Double ML Software Packages | Causal inference with high-dimensional controls | Econometric causal analysis; controls for numerous confounders [10] |
| GLM-ASCA Algorithms | Multivariate analysis with experimental design integration | Analyzing treatment, time, and interaction effects in microbiome data [13] |
| Germ-free Animal Models | Testing causal role of specific microbes | In vivo causality establishment; human microbiota-associated studies [12] |
| Organoid Culture Systems | Studying host-microbiome interactions ex vivo | Cellular mechanism elucidation; personalized therapy development [12] |
| MMUPHin Pipeline | Batch effect correction and meta-analysis | Cross-cohort validation; improves reproducibility and generalizability [14] |

Bridging the mechanistic gap from correlation to causation in microbiome research requires a methodical, iterative approach that leverages complementary techniques. Computational methods like causal machine learning and multi-omics integration can identify potential mechanisms, but these must be rigorously tested through experimental models ranging from in vitro systems to animal studies, ultimately culminating in human clinical trials. The most robust conclusions emerge when multiple methods yield concordant results, providing the evidence necessary to move from observational associations to causal mechanisms that can be targeted for therapeutic intervention. As the field advances, standardized methodologies, improved model systems, and sophisticated analytical frameworks will further accelerate our ability to distinguish causal relationships from mere correlations in the complex ecosystem of host-microbiome interactions.

In the era of precision medicine, multi-omics integration has emerged as a powerful paradigm for unraveling complex biological systems. Among the various omics layers, metagenomics and metabolomics offer particularly complementary insights into host-microbiome interactions and their implications for health and disease. Metagenomics provides a comprehensive view of microbial community composition and genetic potential, identifying which microorganisms are present and what functions they could perform [15] [16]. In contrast, metabolomics delivers a functional readout of the physiological state by measuring the complete collection of small-molecule metabolites, revealing what biochemical activities are actually occurring [17] [18]. This powerful combination allows researchers to move beyond correlation toward mechanistic understanding, as metabolites serve as critical mediators linking microbial functions to host physiology, immune responses, and disease progression [17].

The synergy between these approaches is particularly valuable for validating microbiome findings with complementary techniques. While metagenomic analyses can identify microbial signatures associated with disease states, metabolomic profiling provides functional validation of these associations by revealing corresponding alterations in biochemical pathways [15]. For example, in inflammatory bowel disease (IBD), integrated analyses have identified consistent alterations in underreported microbial species alongside significant metabolite shifts, directly linking microbial community disruptions to disease status through perturbed microbial pathways and functions [15]. This review provides a comprehensive comparison of these two omics technologies, their analytical challenges, and integrative strategies, with a special focus on their application in validating microbiome research findings.

Technology Comparison: Metagenomics vs. Metabolomics

Core Principles and Analytical Techniques

Metagenomics encompasses culture-independent techniques for analyzing the genetic material of entire microbial communities. Two primary approaches dominate the field: 16S rRNA amplicon sequencing, which targets a specific region of the 16S ribosomal RNA gene to provide taxonomic identification of bacteria and archaea, and shotgun metagenomics, which sequences all DNA in a sample, enabling simultaneous taxonomic profiling and functional characterization [16]. While 16S sequencing is more cost-effective and suitable for large-scale studies, it offers limited taxonomic resolution (typically to genus level) and provides only predicted functional profiles through bioinformatic tools like PICRUSt2 [16]. Shotgun metagenomics enables species- or strain-level identification and direct assessment of functional potential but generates more complex data requiring advanced computational resources [16].

Metabolomics focuses on the comprehensive analysis of small molecules (<1 kDa) in biological systems, with two main strategic approaches: untargeted metabolomics (global discovery-based analysis) and targeted metabolomics (quantification of predefined metabolite panels) [18]. The field employs complementary analytical platforms: mass spectrometry (MS), often coupled with separation techniques like liquid or gas chromatography (LC/GC), offers high sensitivity and broad coverage, while nuclear magnetic resonance (NMR) spectroscopy provides superior structural elucidation and absolute quantification without extensive sample preparation [18]. Metabolomics captures the functional output of biological systems, reflecting the influence of genetics, environment, diet, and gut microbiota [18].

Table 1: Core Technical Specifications of Metagenomics and Metabolomics

| Feature | Metagenomics | Metabolomics |
|---|---|---|
| Analytical Target | Microbial DNA/RNA | Small-molecule metabolites |
| Primary Platforms | 16S rRNA sequencing, Shotgun sequencing | Mass Spectrometry (MS), Nuclear Magnetic Resonance (NMR) |
| Key Outputs | Taxonomic profile, Functional gene content | Metabolite identification, Concentration levels, Pathway activity |
| Typical Coverage | 16S: ~Genus level; Shotgun: Species/Strain level | Targeted: Dozens to hundreds; Untargeted: Thousands of features |
| Temporal Resolution | Snapshot of microbial potential | Near real-time functional activity |
| Main Challenge | Compositional nature, High dimensionality, Bioinformatics complexity | Extreme chemical diversity, Dynamic range, Annotation limitations |

Data Characteristics and Analytical Challenges

Both technologies generate complex, high-dimensional data with distinctive characteristics that present analytical challenges. Microbiome data is inherently compositional, meaning that measurements represent relative rather than absolute abundances, which can lead to spurious correlations if not properly handled [7]. Additional characteristics include over-dispersion, zero-inflation due to rare taxa, and high collinearity between microbial taxa [7]. Proper handling of compositionality through transformations like centered log-ratio (CLR) or isometric log-ratio (ILR) is crucial for avoiding spurious results [7].
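
A small constructed example shows how closure to relative abundances can create a correlation that is absent in absolute terms. The three taxa and their abundances are hypothetical and were chosen so that taxa A and B are exactly uncorrelated before normalization.

```python
import math

def pearson(x, y):
    """Pearson correlation between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def to_relative(sample):
    """Divide each abundance by the sample total (closure)."""
    total = sum(sample)
    return [v / total for v in sample]

# Hypothetical absolute abundances across four samples.
taxon_a = [10, 20, 10, 20]
taxon_b = [10, 10, 20, 20]
taxon_c = [10, 100, 1000, 10000]   # a bloom that dominates later samples

relative = [to_relative(s) for s in zip(taxon_a, taxon_b, taxon_c)]
rel_a = [r[0] for r in relative]
rel_b = [r[1] for r in relative]

r_absolute = pearson(taxon_a, taxon_b)
r_relative = pearson(rel_a, rel_b)
print(round(r_absolute, 3), round(r_relative, 3))
```

The bloom of taxon C pushes the relative abundances of A and B down together, so they appear strongly correlated even though their absolute abundances are independent. Log-ratio transformations are one way to reduce this kind of artifact.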

Metabolomics data similarly exhibits over-dispersion and complex correlation structures, compounded by the extreme physicochemical diversity of metabolites, which span a wide range of concentrations and chemical properties [7] [18]. This diversity necessitates sophisticated separation and detection technologies, and even with advanced platforms, comprehensive metabolome coverage remains challenging due to limitations in metabolite identification and annotation [18].

Integrative Strategies for Microbiome-Metabolome Data

Analytical Frameworks and Benchmarking Insights

Integrating microbiome and metabolome data requires specialized statistical approaches that account for the unique properties of both data types. A comprehensive benchmark study evaluated nineteen integrative methods across four key research goals: detecting global associations, data summarization, identifying individual associations, and feature selection [7].

For global association analysis, which tests whether an overall relationship exists between microbiome and metabolome datasets, multivariate methods like Procrustes analysis, the Mantel test, and MMiRKAT are commonly employed [7]. These approaches provide an initial screening step before more detailed analyses but lack resolution for identifying specific microbe-metabolite relationships.
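
As an illustration of a global association test, the following is a minimal permutation-based Mantel test. It is a sketch under simplifying assumptions: each sample is reduced to a single hypothetical value per omics layer and absolute differences serve as distances, whereas real analyses would typically use a dissimilarity such as Bray-Curtis on full profiles.

```python
import math
import random

def pearson(x, y):
    """Pearson correlation between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def distance_matrix(values):
    """Pairwise absolute differences between one-dimensional sample values."""
    return [[abs(a - b) for b in values] for a in values]

def upper_triangle(matrix, order):
    """Upper-triangle entries after relabelling samples by `order`."""
    n = len(matrix)
    return [matrix[order[i]][order[j]] for i in range(n) for j in range(i + 1, n)]

def mantel(d1, d2, n_perm=999, seed=0):
    """Correlation between two distance matrices with a permutation p-value."""
    rng = random.Random(seed)
    order = list(range(len(d1)))
    x = upper_triangle(d1, order)
    r_obs = pearson(x, upper_triangle(d2, order))
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(order)                     # relabel samples in d2 only
        if pearson(x, upper_triangle(d2, order)) >= r_obs:
            hits += 1
    return r_obs, (hits + 1) / (n_perm + 1)

# Hypothetical per-sample summaries for six paired samples.
microbiome_axis = [0, 1, 2, 3, 4, 5]
metabolome_axis = [0, 2, 4, 6, 8, 10]

r_obs, p_value = mantel(distance_matrix(microbiome_axis),
                        distance_matrix(metabolome_axis))
print(round(r_obs, 3), p_value)
```

Permuting sample labels in one matrix while holding the other fixed preserves the dependence among distances that share a sample, which is why a permutation p-value is used instead of a standard correlation test.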

Data summarization methods aim to reduce dimensionality while preserving the shared signal between datasets. Techniques include Canonical Correlation Analysis (CCA), Partial Least Squares (PLS), Redundancy Analysis (RDA), and Multi-Omics Factor Analysis (MOFA2) [7]. These methods facilitate visualization and interpretation by identifying latent variables that capture co-variation between omics layers, successfully revealing associations in complex diseases like Type 2 diabetes [7].

For individual association detection, which identifies specific microbe-metabolite pairs, common strategies involve computing association measures (correlation or regression) for each possible pair, though this faces challenges with multiple testing burden [7]. Alternative approaches include sparse CCA (sCCA) and sparse PLS (sPLS), which perform simultaneous dimension reduction and feature selection [7].

Table 2: Performance Comparison of Integrative Analysis Methods

| Method Category | Representative Methods | Primary Research Question | Key Strengths | Key Limitations |
|---|---|---|---|---|
| Global Association | Procrustes, Mantel, MMiRKAT | Is there an overall association between datasets? | Controls false positives, Good for initial screening | No specific feature relationships |
| Data Summarization | CCA, PLS, RDA, MOFA2 | What are the major patterns of co-variation? | Dimensionality reduction, Visualization capabilities | Limited biological interpretability |
| Individual Associations | Pairwise correlation/regression | Which specific microbe-metabolite pairs are linked? | Intuitive results, Simple implementation | Multiple testing burden, False discoveries |
| Feature Selection | sCCA, sPLS, LASSO | Which features are most relevant? | Addresses multicollinearity, Identifies robust features | Complex parameter tuning |

Experimental Workflows for Integrated Analysis

The following diagram illustrates a generalized workflow for conducting an integrated metagenomics and metabolomics study, from experimental design through biological interpretation:

Research Reagent Solutions and Essential Materials

Successful integration of metagenomics and metabolomics requires specialized reagents, platforms, and computational tools. The following table details key solutions essential for conducting robust multi-omics studies:

Table 3: Essential Research Reagents and Solutions for Multi-Omics Studies

| Category | Specific Tool/Reagent | Function & Application |
|---|---|---|
| Sequencing Platforms | Shotgun metagenomic sequencing | Comprehensive taxonomic and functional profiling of microbial communities [17] |
| Metabolomics Panels | Targeted microbiome metabolite panels | Quantification of microbially-related metabolites (e.g., SCFAs, bile acids) [17] |
| Bioinformatics Pipelines | MicrobiomeAnalyst, Metaviz, PUMA | Statistical analysis, visualization, and interpretation of metagenomics data [16] |
| Multi-Omics Integration | MOFA2, sCCA, sPLS | Identification of correlated patterns across omics layers [7] |
| Reference Databases | Curated metabolite libraries (e.g., 5,400+ metabolites) | Metabolite identification and annotation using reference libraries [19] |
| Pathway Analysis Tools | Metabolic pathway mapping software | Contextualizing findings within established biochemical pathways [19] |

Experimental Protocols for Method Validation

Protocol 1: Integrated Microbiome-Metabolome Correlation Analysis

This protocol describes a robust approach for identifying significant associations between microbial taxa and metabolites, validated through realistic simulations [7].

Sample Preparation:

- For metagenomics: Collect samples (stool, tissue, etc.) and preserve immediately at -80°C. Extract DNA using standardized kits with bead-beating for cell lysis. For 16S sequencing, amplify the V4 region of the 16S rRNA gene; for shotgun sequencing, prepare libraries without amplification bias [16].

- For metabolomics: Immediately quench metabolism using cold methanol. Extract metabolites with appropriate solvent systems (e.g., methanol:water:chloroform). For LC-MS analysis, derivatize if necessary [18].

Data Generation:

- Sequence metagenomic libraries on Illumina platforms to sufficient depth (≥10 million reads/sample for shotgun) [16].

- Analyze metabolites using UPLC-MS with both positive and negative electrospray ionization modes. Include quality control pools and blank samples throughout the run [18].

Data Processing:

- Process metagenomic data: Trim adapters, quality filter, and remove host reads. For 16S data, denoise sequences into amplicon sequence variants (ASVs) using DADA2 or Deblur. For shotgun data, perform taxonomic profiling with MetaPhlAn and functional profiling with HUMAnN2 [16].

- Process metabolomic data: Perform peak picking, alignment, and compound identification against reference databases. Apply quality control filters and correct for batch effects [18].

Integration Analysis:

- Apply CLR transformation to microbiome data to address compositionality [7].

- Apply log transformation to metabolomics data to normalize distributions [7].

- Conduct pairwise association testing using Spearman correlation with false discovery rate (FDR) correction or employ sparse Canonical Correlation Analysis (sCCA) to identify robust microbe-metabolite associations [7].
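
The pairwise testing step above can be sketched as follows, using only the Python standard library. Spearman correlation is computed from ranks, p-values come from a simple permutation scheme (an assumption of this sketch; analytic p-values are more common in practice), and Benjamini-Hochberg adjustment controls the FDR. All feature names and values are hypothetical.

```python
import math
import random

def ranks(values):
    """1-based ranks, with tied values sharing their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            result[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return result

def pearson(x, y):
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return sxy / math.sqrt(sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y))

def spearman(x, y):
    return pearson(ranks(x), ranks(y))

def permutation_pvalue(x, y, permutations=999, seed=0):
    """Two-sided permutation p-value for the Spearman correlation."""
    rng = random.Random(seed)
    observed = abs(spearman(x, y))
    shuffled = list(y)
    hits = 0
    for _ in range(permutations):
        rng.shuffle(shuffled)
        if abs(spearman(x, shuffled)) >= observed - 1e-12:
            hits += 1
    return (hits + 1) / (permutations + 1)

def benjamini_hochberg(pvalues):
    """Benjamini-Hochberg adjusted p-values, returned in the input order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, pvalues[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted

# Hypothetical CLR-transformed taxa and log-transformed metabolites (8 samples).
taxa = {
    "taxon_A": [0.1, 0.5, 0.9, 1.4, 2.0, 2.2, 3.1, 3.5],
    "taxon_B": [1.2, -0.3, 0.8, 0.1, -1.0, 0.4, -0.6, 0.9],
}
metabolites = {
    "butyrate": [1.0, 1.2, 1.9, 2.5, 2.6, 3.3, 4.0, 4.8],
    "cholate": [2.1, 1.7, 2.9, 1.1, 2.4, 1.9, 2.2, 1.5],
}

pairs = [(t, m) for t in taxa for m in metabolites]
pvals = [permutation_pvalue(taxa[t], metabolites[m]) for t, m in pairs]
qvals = benjamini_hochberg(pvals)
results = {pair: (p, q) for pair, p, q in zip(pairs, pvals, qvals)}
for pair, (p, q) in results.items():
    print(pair, f"p={p:.3f}", f"q={q:.3f}")
```

With thousands of taxa and metabolites the number of pairs grows multiplicatively, which is why the FDR step is not optional and why sparse multivariate methods such as sCCA are offered as an alternative.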

Protocol 2: Longitudinal Multi-Omics in Dietary Intervention Studies

This protocol captures the dynamic response of gut microbiome and metabolome to dietary changes, as demonstrated in rabbit diet transition studies [20].

Study Design:

- Implement a longitudinal sampling scheme with frequent intervals before, during, and after dietary transition. For rabbit studies, sample during exclusive milk feeding, through mixed feeding, to exclusive solid feed [20].

- Include sufficient biological replicates (n≥6 per time point) to account for inter-individual variation.

Sample Collection:

- Collect fecal samples consistently at the same time of day to control for diurnal variation.

- Immediately flash-freeze samples in liquid nitrogen and store at -80°C until processing.

Multi-Omics Data Integration:

- Generate time-series profiles of microbial taxa and metabolites.

- Apply multivariate longitudinal analysis methods to identify trajectories of change.

- Use network analysis to construct microbe-metabolite interaction networks that shift across dietary phases.

- Validate findings through functional assays such as quantification of carbohydrate-degrading enzymes and bile acid profiling [20].

The strategic integration of metagenomics and metabolomics provides a powerful framework for advancing microbiome research from correlative observations to mechanistic understanding. As methodological standards continue to evolve, researchers must carefully select analytical approaches aligned with their specific biological questions, whether investigating global associations between omics datasets or identifying specific microbe-metabolite interactions. The benchmarking studies and protocols outlined here provide a foundation for designing robust integrative analyses that leverage the complementary strengths of these omics technologies. Future advances will likely come from improved standardization, expanded reference databases, and more sophisticated computational methods that can capture the dynamic, multi-scale nature of host-microbiome-metabolite interactions across diverse physiological and disease contexts.

In the rigorous world of clinical trials, conventional microbiological endpoints have long been the standard for assessing therapeutic impact on microbial communities. However, these methods, often focused on monospecific changes in known pathogens, can fail to capture the full scope of a drug's effect, particularly for agents with non-traditional mechanisms of action. This creates a critical blind spot in therapeutic development. The emerging paradigm of microbiome analysis—using high-throughput sequencing and bioinformatics to characterize microbial communities—is proving to be a powerful tool that reveals these hidden therapeutic effects. This case study examines the ROSCO-CF trial, where microbiome analysis uncovered dose-dependent drug effects on the lung microbiome that were entirely missed by conventional European Medicines Agency (EMA) endpoints [6]. This instance serves as a compelling validation for integrating microbiome findings with complementary analytical techniques in clinical research.

The ROSCO-CF Trial: A Primer on Conventional vs. Microbiome Approaches

Trial Design and Conventional Outcome

The ROSCO-CF trial was a multicenter, randomized, controlled, phase IIA, dose-ranging study investigating oral R-roscovitine (Seliciclib) in 23 people with cystic fibrosis (pwCF) chronically infected with Pseudomonas aeruginosa (PA) [6]. R-roscovitine is a protein kinase inhibitor initially developed for cancer and repurposed for CF due to its potential mechanism of action, which, unlike antibiotics, does not directly target PA [6].

The trial used standard EMA microbiological endpoints, which focus on monospecific absolute changes in PA burden. Based on these conventional measures, the study concluded that R-roscovitine, while safe and well-tolerated, showed no impact on PA infection [6]. This result would typically mark the drug as ineffective against the target pathogen using the standard lens of assessment.

The Microbiome-Driven Investigation

Given the drug's indirect mechanism of action, researchers conducted a complementary investigation to explore its broader effects on the lung and gut microbiomes. They analyzed sputum and fecal samples collected before and after treatment using 16S rDNA sequencing [6]. This approach allowed them to move beyond a single pathogen and assess the entire microbial community's response to the treatment.

Key Findings: How Microbiome Analysis Uncovered Hidden Effects

The application of microbiome analysis revealed a layer of biological activity that was invisible to conventional methods. The key findings are summarized in the table below.

Table 1: Microbiome Findings vs. Conventional Endpoints in the ROSCO-CF Trial

| Analysis Method | Primary Finding | Result | Significance |
|---|---|---|---|
| Conventional EMA Endpoints | Change in P. aeruginosa load | No significant impact detected [6] | Suggested drug was ineffective against the primary pathogen |
| Microbiome Alpha Diversity | Within-sample microbial richness | No significant shifts detected [6] | Indicated overall community richness/stability was maintained |
| Microbiome Beta Diversity | Between-sample microbial community dissimilarity | Dose-dependent increase (Bray-Curtis dissimilarity), most pronounced in the 800 mg group [6] | Revealed a subtle, dose-related restructuring of the microbial community |
| Non-parametric Microbial Interdependence Test (NMIT) | Changes in temporal coordination of microbial taxa | Trend toward distinct microbial trajectories in high-dose group (F=1.18, R²=0.20, p=0.061) [6] | Suggested the drug influenced microbial population dynamics and interactions |
| Differential Abundance (MaAsLin2) | Abundance of individual taxa vs. dose | ↑ Tannerella, ↑ Granulicatella elegans, ↓ Streptococcus with increasing dose [6] | Identified specific, potentially beneficial, taxon-level shifts |

Interpretation of the Hidden Signals

The microbiome data painted a different picture from the conventional results. While alpha diversity remained stable and the load of the dominant pathogen (PA) did not change, the therapy was associated with a subtle, dose-related restructuring of the lung microbiome. The dose-dependent increase in beta diversity indicated that community composition shifted in response to the drug in a way that was modulatory rather than destructive.

Furthermore, the shifts in specific taxa were clinically suggestive. The enrichment of Tannerella and Granulicatella elegans—anaerobic commensals often associated with stable clinical status and better lung function in pwCF—coupled with a reduction in Streptococcus, points toward a potentially beneficial modulatory effect that conventional methods failed to detect [6]. This demonstrates that a drug's efficacy may not solely lie in pathogen eradication but also in fostering a more resilient and health-associated microbial community.

Experimental Protocols: Methodologies for Robust Microbiome Analysis

The insights from the ROSCO-CF trial were contingent on a rigorous methodological workflow. Below is a detailed protocol for implementing such a microbiome analysis in a clinical trial setting, from sample collection to data interpretation.

Sample Collection and DNA Sequencing

- Sample Type: The trial collected sputum (for lung microbiome) and fecal samples (for gut microbiome) from participants before and after treatment [6].

- Preservation: Samples should be immediately frozen at -80°C after collection to preserve microbial DNA integrity until processing.

- DNA Extraction: Use a commercial kit designed for microbial DNA extraction, which includes steps for effective cell lysis of diverse bacterial species and removal of inhibitors.

- Library Preparation and Sequencing: Amplify a hypervariable region of the 16S rRNA gene (e.g., the V4 region) using universal primers. Subsequently, perform high-throughput sequencing on a platform such as Illumina MiSeq or NovaSeq to generate paired-end reads at a depth sufficient for community profiling.

Bioinformatic Processing and Data Analysis

The computational workflow transforms raw sequencing data into biologically interpretable information. The following diagram illustrates the key steps from sample to insight.

Figure: Microbiome Analysis Workflow (key steps from raw data to biological interpretation).

- Quality Filtering and Denoising: Use a pipeline like DADA2 or QIIME 2 to trim low-quality bases, remove chimeric sequences, and infer Amplicon Sequence Variants (ASVs), which provide single-nucleotide resolution.

- Taxonomic Assignment: Classify the ASVs against a curated reference database (e.g., SILVA or Greengenes) to assign taxonomic identities from phylum to species level.

- Diversity Analysis:

  - Alpha Diversity: Calculate metrics like the Shannon index or observed ASVs within individual samples. Statistical comparison between groups (e.g., pre- vs. post-treatment) can be done using the Wilcoxon signed-rank test or linear mixed-effects models [6].

  - Beta Diversity: Calculate pairwise community dissimilarity using metrics like Bray-Curtis or weighted UniFrac. Visualize using Principal Coordinates Analysis (PCoA). Statistical significance of group clustering can be tested with PERMANOVA [6].

- Differential Abundance Testing: Identify specific taxa whose abundances change significantly in response to treatment. Use specialized tools like MaAsLin2 (Microbiome Multivariable Associations with Linear Models), which adjusts for confounders and corrects for multiple testing, as was done in the ROSCO-CF trial [6].
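
The two diversity measures named in the steps above are simple to compute by hand, which can help when sanity-checking pipeline output. The following minimal sketch uses the natural-log Shannon index and Bray-Curtis dissimilarity on relative abundances; the ASV counts are hypothetical and are not data from the ROSCO-CF trial.

```python
import math

def shannon(counts):
    """Shannon index (natural log) of one sample; zero counts contribute nothing."""
    total = sum(counts)
    return -sum((c / total) * math.log(c / total) for c in counts if c > 0)

def bray_curtis(a, b):
    """Bray-Curtis dissimilarity between two samples, computed on relative abundances."""
    pa = [v / sum(a) for v in a]
    pb = [v / sum(b) for v in b]
    return sum(abs(x - y) for x, y in zip(pa, pb)) / (sum(pa) + sum(pb))

# Hypothetical ASV counts for one participant before and after treatment.
pre_treatment = [500, 300, 150, 50, 0]
post_treatment = [350, 300, 200, 100, 50]

print(f"Shannon pre:  {shannon(pre_treatment):.3f}")
print(f"Shannon post: {shannon(post_treatment):.3f}")
print(f"Bray-Curtis pre vs. post: {bray_curtis(pre_treatment, post_treatment):.3f}")
```

Alpha diversity summarizes each sample on its own, while Bray-Curtis compares a pair of samples, so the two can move independently, as they did in the trial.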

Successfully implementing a microbiome study requires a suite of specialized reagents and computational tools. The following table details the key solutions for this field.

Table 2: Essential Research Reagent Solutions for Microbiome Clinical Studies

| Category | Item / Solution | Function / Application | Example / Note |
|---|---|---|---|
| Sample Collection | Stabilization Kits | Preserves microbial DNA/RNA at ambient temperature for transport. | OMNIgene•GUT, DNA Genotek kits [21] |
| DNA Extraction | Microbial DNA Isolation Kits | Efficient lysis of Gram-positive/negative bacteria; removes PCR inhibitors. | QIAamp PowerFecal Pro DNA Kit |
| Library Prep | 16S rRNA PCR Primers | Amplifies target hypervariable regions for sequencing. | 515F/806R for V4 region |
| Sequencing | High-Throughput Sequencer | Generates millions of sequencing reads for community profiling. | Illumina MiSeq, NovaSeq |
| Bioinformatics | Analysis Pipelines | Integrated suites for processing raw data, diversity analysis, and stats. | QIIME 2, mothur, DADA2 [7] |
| Statistical Analysis | Specialized R Packages | Statistical testing and visualization of microbiome data. | MaAsLin2, phyloseq, vegan [6] |
| Data Integration | Multi-omics Tools | Integrates microbiome data with metabolomics, metagenomics, etc. | MOFA+, Sparse PLS, mixMC [7] |

The ROSCO-CF trial provides a powerful case study for the clinical research community. It demonstrates that relying solely on conventional, narrow-spectrum endpoints risks overlooking meaningful biological effects of novel therapeutics, especially those with immunomodulatory or host-mediated mechanisms. Microbiome analysis served as a crucial complementary technique that validated a biological effect of R-roscovitine, transforming the narrative from "no effect" to "dose-dependent microbial modulation."

For researchers, this underscores the importance of incorporating exploratory microbiome profiling into early-phase trial designs, even with small sample sizes [6] [22]. Future studies should aim to integrate microbiome data with other 'omics' layers, such as metabolomics, to move from correlation to mechanism [7] [22]. As the field progresses towards standardization and validated biomarkers, microbiome analysis is poised to transition from an exploratory tool to a core component of clinical trial endpoints, enabling a more holistic and accurate assessment of therapeutic impact [22].

The Integrative Toolkit: Benchmarking Methods for Microbiome-Metabolome Data Fusion

The integration of multi-omics data represents a formidable challenge in computational biology, particularly for exploring the complex interactions between microbiome and metabolome in human health and disease. The rapid advancement of high-throughput sequencing technologies has enabled the generation of these data at an exponential scale, yet no standard currently exists for jointly integrating microbiome and metabolome datasets within statistical models [23]. This methodological gap hinders the establishment of best practices for result interpretability and reproducibility in the growing field of microbiome-metabolome research [23].

This benchmarking study addresses a critical need in the field by systematically evaluating nineteen integrative methods to disentangle the relationships between microorganisms and metabolites. Through extensive simulation studies that mimic real-world data structures and challenges, this work provides valuable insights into the strengths and limitations of methods commonly used in practice [23]. The findings establish a foundation for research standards in metagenomics-metabolomics integration and support future methodological developments, while also providing guidance for designing optimal analytical strategies tailored to specific integration questions.

Methodological Framework

Benchmarking Design Principles

Rigorous benchmarking requires careful design to provide accurate, unbiased, and informative results [24]. This study adopted a comprehensive approach consistent with essential guidelines for computational method benchmarking, focusing on four key analytical questions: global associations, data summarization, individual associations, and feature selection [23]. The benchmarking methodology employed realistic simulations with known ground truth, enabling quantitative performance assessment across multiple scenarios.

The evaluation design addressed the unique analytical challenges presented by microbiome and metabolome data, including over-dispersion, zero inflation, high collinearity between taxa, and compositional nature [23]. Proper handling of compositionality is crucial for avoiding spurious results, and the study evaluated the impact of different normalization approaches, including centered log-ratio (CLR) and isometric log-ratio (ILR) transformations [23].

Data Simulation and Evaluation Metrics

Microbiome and metabolome data were simulated using the Normal to Anything (NORtA) algorithm, which generates data with arbitrary marginal distributions and correlation structures [23]. The simulations were grounded in three real microbiome-metabolome datasets with distinct characteristics:

- Konzo dataset: 171 samples, 1,098 taxa, and 1,340 metabolites, with microbiome data following a negative binomial distribution and metabolome data following a Poisson distribution [23]

- Adenomas dataset: 240 samples, 500 taxa, and 463 metabolites, with zero-inflated negative binomial distributions for microbiome data and log-normal distribution for metabolome data [23]

- Autism spectrum disorder dataset: 44 samples, 322 microbial taxa, and 61 metabolites, with zero-inflated negative binomial structures for microbiome data and Poisson distribution for metabolome data [23]

To assess Type-I error control, null datasets with no associations were generated. For alternative scenarios, the number and strength of associations between microorganisms and metabolites were systematically varied. Methods were tested under three realistic scenarios with varying sample sizes, feature numbers, and data structures, with 1,000 replicates per scenario [23].

Performance was evaluated based on multiple criteria: (i) for global associations, the focus was on detecting significant overall correlations while controlling false positives; (ii) for data summarization, methods were assessed on their ability to capture and explain shared variance; (iii) for individual associations, performance was measured by detecting meaningful pairwise species-metabolite relationships with high sensitivity and specificity; and (iv) for feature selection, the focus was on identifying stable and non-redundant features across datasets [23].

The following workflow illustrates the comprehensive benchmarking process implemented in this study:

Comprehensive Analysis of Integrative Methods

Categorization of Analytical Approaches

The nineteen integrative methods evaluated in this benchmark address complementary biological questions through distinct analytical approaches [23]. Consistent with a recent report, traditional workflows for microbiome-metabolome integration include four primary types of analysis [23]:

- Global Association Methods: Determine the presence of an overall association between the two omic datasets using multivariate methods like Procrustes analysis, Mantel test, and MMiRKAT [23]

- Data Summarization Methods: Summarize information within each dataset to facilitate visualization and interpretation using approaches like canonical correlation analysis (CCA), Partial Least Squares (PLS), redundancy analysis (RDA), and MOFA2 [23]

- Individual Association Methods: Detect specific microorganism-metabolite relationships through association measures (correlation or regression) for each metabolite-species pair [23]

- Feature Selection Methods: Identify the most relevant associated features across datasets using univariate or multivariate approaches like LASSO, sparse CCA (sCCA), and sparse PLS (sPLS) [23]

The following diagram illustrates the methodological categorization and their relationships to different research goals:

Performance Across Method Categories

The benchmarking results revealed that method performance varied substantially across the four analytical goals, with different methods excelling in different tasks. The table below summarizes the top-performing methods for each analytical goal based on the comprehensive evaluation:

Table 1: Top-Performing Methods by Analytical Goal

| Analytical Goal | Best-Performing Methods | Key Strengths | Performance Characteristics |
|---|---|---|---|
| Global Associations | Procrustes analysis, Mantel test, MMiRKAT | Controls false positives, detects overall correlations | High specificity, moderate sensitivity for complex associations |
| Data Summarization | CCA, PLS, RDA, MOFA2 | Captures shared variance, facilitates interpretation | Explains maximum covariance between datasets |
| Individual Associations | Pairwise correlation/regression with multiple testing correction | Identifies specific microbe-metabolite relationships | High sensitivity for strong pairwise associations |
| Feature Selection | LASSO, sCCA, sPLS | Identifies stable, non-redundant feature sets | Handles multicollinearity, selects parsimonious feature sets |

The simulation studies provided insights into how method performance was affected by data characteristics. Methods specifically designed to handle compositional data generally outperformed standard approaches, particularly for microbiome data where proper normalization through CLR or ILR transformations was crucial [23]. The performance advantages were most pronounced in scenarios with high dimensionality, strong collinearity between features, and the presence of zero-inflation [23].

Experimental Protocols and Validation

Simulation Framework Specifications

The benchmarking study employed a rigorous simulation framework based on the Normal to Anything (NORtA) algorithm, which allows for generating data with arbitrary marginal distributions and correlation structures [23]. The key steps in the simulation protocol included:

- Parameter Estimation: Marginal distributions and correlation structures were estimated from the three real microbiome-metabolome datasets (Konzo, Adenomas, and Autism spectrum disorder) by pooling all samples regardless of study group [23]

- Correlation Network Estimation: Correlation networks for species and metabolites were estimated using SpiecEasi [23]

- Data Generation: Normal distributions were converted into correlated distributions matching the original data structures using the NORtA approach [23]

- Transformation Evaluation: The impact of different microbiome transformations (CLR, ILR, and alpha) on method performance was systematically evaluated [23]
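
The data-generation step above can be sketched for a single taxon-metabolite pair. The code below follows the general NORtA recipe: draw correlated standard normals, map them to uniforms with the normal CDF, then apply the inverse CDF of each target marginal. The Poisson and log-normal marginals, their parameters, and the function names are assumptions chosen for brevity; the benchmark [23] fits marginals such as (zero-inflated) negative binomial to real data, and a full NORtA implementation also adjusts the input correlation so that the output correlation matches a target.

```python
import math
import random
from statistics import NormalDist

def poisson_quantile(u, lam):
    """Smallest k with P(X <= k) >= u for X ~ Poisson(lam)."""
    k = 0
    pmf = math.exp(-lam)
    cdf = pmf
    while cdf < u:
        k += 1
        pmf *= lam / k
        cdf += pmf
    return k

def norta_pair(n, rho, lam=5.0, mu=0.0, sigma=1.0, seed=0):
    """Simulate n samples of one count-valued taxon and one positive-valued metabolite."""
    rng = random.Random(seed)
    std_normal = NormalDist()
    log_marginal = NormalDist(mu, sigma)
    taxon, metabolite = [], []
    for _ in range(n):
        # Step 1: correlated standard normals (2x2 Cholesky factor written out).
        z1 = rng.gauss(0, 1)
        z2 = rho * z1 + math.sqrt(1 - rho ** 2) * rng.gauss(0, 1)
        # Step 2: normal CDF maps each draw to a uniform; clamp away from 0 and 1.
        u1 = min(std_normal.cdf(z1), 1 - 1e-12)
        u2 = min(max(std_normal.cdf(z2), 1e-12), 1 - 1e-12)
        # Step 3: inverse CDF of each target marginal.
        taxon.append(poisson_quantile(u1, lam))
        metabolite.append(math.exp(log_marginal.inv_cdf(u2)))
    return taxon, metabolite

taxon_counts, metabolite_levels = norta_pair(n=500, rho=0.8)
print(taxon_counts[:10])
print([round(m, 2) for m in metabolite_levels[:5]])
```

Setting `rho=0` yields a null pair with no association, which is how the same machinery can serve both the Type-I error and the power scenarios.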

The simulation approach allowed for the generation of datasets with known ground truth, enabling quantitative assessment of method performance through metrics including sensitivity, specificity, false discovery rate, and overall accuracy in recovering the true associations [23].
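
Given a known ground truth, the metrics listed above reduce to counting agreements between the true and the detected association sets. The following minimal sketch shows the bookkeeping; the taxon and metabolite labels, and the function name `recovery_metrics`, are hypothetical.

```python
def recovery_metrics(true_pairs, detected_pairs, all_pairs):
    """Sensitivity, specificity, FDR, and accuracy for recovered associations."""
    true_pairs, detected_pairs = set(true_pairs), set(detected_pairs)
    total = len(set(all_pairs))
    tp = len(true_pairs & detected_pairs)   # true associations that were found
    fp = len(detected_pairs - true_pairs)   # reported but not real
    fn = len(true_pairs - detected_pairs)   # real but missed
    tn = total - tp - fp - fn               # correctly left unreported
    return {
        "sensitivity": tp / (tp + fn) if tp + fn else float("nan"),
        "specificity": tn / (tn + fp) if tn + fp else float("nan"),
        "fdr": fp / (tp + fp) if tp + fp else 0.0,
        "accuracy": (tp + tn) / total,
    }

# Hypothetical simulation: 3 taxa x 4 metabolites = 12 candidate pairs.
all_pairs = [(t, m) for t in ("t1", "t2", "t3") for m in ("m1", "m2", "m3", "m4")]
true_pairs = [("t1", "m1"), ("t2", "m3"), ("t3", "m4")]
detected_pairs = [("t1", "m1"), ("t2", "m3"), ("t1", "m2")]

metrics = recovery_metrics(true_pairs, detected_pairs, all_pairs)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

Because true associations are usually a small fraction of all candidate pairs, specificity and accuracy can look high even when the FDR is poor, so the metrics are best read together.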

Real-Data Validation Protocol

After comprehensive simulation studies, the top-performing methods were validated on real gut microbiome and metabolome data from Konzo disease [23]. The validation protocol included:

- Data Preprocessing: Application of appropriate normalization methods to address compositionality and technical variation

- Method Application: Implementation of top-performing methods across the four analytical goals

- Biological Interpretation: Assessment of whether identified associations aligned with known biological processes

- Cross-Validation: Evaluation of result stability through resampling approaches

This validation revealed complementary biological processes across the two omic layers, demonstrating the value of integrative analysis for uncovering mechanistically meaningful relationships in complex biological systems [23].

The Scientist's Toolkit

Successful implementation of integrative microbiome-metabolome analysis requires specialized computational tools and statistical approaches. The table below details key resources identified through the benchmarking study:

Table 2: Essential Resources for Microbiome-Metabolome Integration

| Resource Category | Specific Tools/Methods | Function/Purpose | Key Considerations |
|---|---|---|---|
| Compositional Data Transformations | CLR, ILR, ALR | Normalize microbiome data to address compositionality | CLR most widely applicable; ILR preserves metric properties |
| Global Association Tests | Procrustes analysis, Mantel test, MMiRKAT | Detect overall association between datasets | Control Type I error; appropriate for initial screening |
| Data Summarization Methods | CCA, PLS, RDA, MOFA2 | Identify latent factors explaining shared variance | Balance interpretability with variance explanation |
| Feature Selection Approaches | LASSO, sCCA, sPLS | Select most relevant features across omics | Handle multicollinearity; avoid overfitting |
| Simulation Frameworks | NORtA algorithm, SpiecEasi | Generate realistic benchmark data with ground truth | Capture key data characteristics: zero-inflation, over-dispersion |

Implementation Guidelines

Based on the comprehensive benchmarking results, the following implementation guidelines are recommended for researchers undertaking microbiome-metabolome integration studies:

- Data Preprocessing: Apply appropriate compositional data transformations (CLR or ILR) to microbiome data before analysis to avoid spurious results [23]

- Method Selection: Choose analytical methods based on specific research questions rather than seeking a universal best method

- Validation: Employ complementary approaches across different analytical goals to strengthen biological conclusions

- Result Interpretation: Consider the inherent limitations of each method type when interpreting results, particularly for causal inference

The benchmarking study emphasizes that method performance is context-dependent, influenced by data characteristics including sample size, dimensionality, effect sizes, and data distributions [23]. Researchers should therefore consider their specific data properties and research questions when selecting and implementing integrative methods.

Implications for Research Standards

This systematic benchmark represents a significant step toward establishing research standards for microbiome-metabolome integration. By providing empirically grounded recommendations for method selection based on specific research goals and data types, the study addresses a critical gap in the field [23]. The findings support the development of more reproducible and interpretable analytical workflows for multi-omics integration.

The complementary strengths of different methodological approaches highlighted in this benchmark underscore the importance of method diversity in addressing complex biological questions. Rather than identifying a single best method, the results provide a framework for matching methodological approaches to specific research goals, data characteristics, and analytical priorities [23].

Future methodological development should focus on improving computational efficiency for high-dimensional data, enhancing interpretability of identified associations, and developing approaches that more explicitly account for the compositional nature of microbiome data in integrative frameworks.

A critical challenge in microbiome research is the high dimensionality and sparsity of sequencing data, often containing hundreds or thousands of microbial features and 70–90% zeros [25]. Selecting the right analytical method is paramount for identifying robust, reproducible microbial signatures for diagnosis and therapy. This guide compares four key methodological categories to help you validate findings with complementary techniques.

Quantitative Comparison of Method Performance

Experimental benchmarks across multiple microbiome datasets provide clear evidence for method selection. The following tables summarize key performance metrics from published studies.

Table 1: Performance of Feature Selection Methods Across Multiple Microbiome Datasets [25]

| Method Category | Specific Method | Average Prevalence of Selected Features | Classification Accuracy (AUC) | Feature Set Stability |
|---|---|---|---|---|
| Statistics-Based | LEfSe, edgeR, NBZIMM | Lower | Variable, higher false positives | Lower |
| Machine Learning | LASSO, Random Forest | Medium | High (~0.98 AUC) | Medium |
| Innovative Framework | PreLect (with prevalence penalty) | Higher | High (0.985 AUC) | Higher |

Table 2: Normalization & Feature Selection Interaction with Classifiers [26]

| Normalization Technique | Best-Performing Classifier(s) | Key Feature Selection Partners | Performance Note |
|---|---|---|---|
| Centered Log-Ratio (CLR) | Logistic Regression, Support Vector Machine | mRMR, LASSO | Improves performance with linear models |
| Relative Abundance | Random Forest | mRMR, LASSO | Strong results without transformation |
| Presence-Absence | All tested classifiers | mRMR, LASSO | Achieved similar performance to abundance-based data |

Detailed Experimental Protocols

To ensure reproducibility, here are the core methodologies from the cited benchmarking studies.

Protocol for Benchmarking Feature Selection Methods

This protocol is derived from large-scale comparisons evaluating methods across 42 microbiome datasets [25].

- Data Preparation: Collect multiple 16S rRNA gut microbiome datasets from curated repositories like MicrobiomeHD and MLrepo. Criteria often include a minimum sample size (e.g., 75 samples) and a controlled case-control imbalance ratio [26].

- Method Evaluation: Apply various feature selection methods (e.g., PreLect, LASSO, RF, Mutual Information) to each dataset. To ensure fair comparison, the number of features selected by each method can be fixed to match that of a benchmark method [25].

- Performance Assessment:

  - Prevalence & Abundance: Calculate the mean prevalence (frequency across samples) and mean relative abundance of the selected feature set.

  - Predictive Power: Use a classifier (e.g., a linear model) with nested cross-validation to compute the Area Under the Receiver Operating Characteristic Curve (AUC) based on the selected features.

  - Statistical Comparison: Use effect size measures like Cohen's d to quantify the performance differences between methods across all datasets.
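The three assessment metrics above can be sketched in a few lines of standard-library Python. This is an illustrative sketch, not the benchmark's own code: the function names, the toy count matrix, and the per-dataset AUC values are all hypothetical, and the pooled-standard-deviation form of Cohen's d is an assumption (a paired variant may be more appropriate when the same datasets are scored by both methods).

```python
from statistics import mean, stdev

def mean_prevalence(counts, selected):
    """Mean fraction of samples in which each selected feature is non-zero.

    counts: list of samples, each a list of per-feature counts.
    selected: indices of the selected features.
    """
    n_samples = len(counts)
    return mean(
        sum(1 for row in counts if row[j] > 0) / n_samples for j in selected
    )

def auc(scores, labels):
    """Rank-based AUC: chance that a random case outscores a random control.

    Ties between a case and a control count as half a win.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum((p > q) + 0.5 * (p == q) for p in pos for q in neg)
    return wins / (len(pos) * len(neg))

def cohens_d(a, b):
    """Cohen's d using the pooled sample standard deviation (assumed form)."""
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * stdev(a) ** 2 + (nb - 1) * stdev(b) ** 2) / (na + nb - 2)
    return (mean(a) - mean(b)) / pooled_var ** 0.5

# Toy data (hypothetical): 4 samples x 3 features, features 0 and 2 selected.
toy_counts = [[5, 0, 2], [3, 0, 0], [0, 1, 4], [7, 0, 1]]
prevalence = mean_prevalence(toy_counts, selected=[0, 2])

# Classifier scores for two cases (label 1) and two controls (label 0).
toy_auc = auc([0.9, 0.4, 0.6, 0.2], [1, 1, 0, 0])

# Hypothetical per-dataset AUCs for two feature selection methods.
effect = cohens_d([0.90, 0.92, 0.94], [0.80, 0.82, 0.84])
```

In a real benchmark the AUC would be computed on held-out folds only, so that the feature selection step never sees the test samples.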

Protocol for Evaluating Normalization and Feature Selection

This protocol assesses the interaction between data normalization, feature selection, and classifiers [26].

- Normalization Application: Transform raw microbiome data using different techniques:

  - Relative Abundance: Convert counts to proportions per sample.

  - Centered Log-Ratio (CLR): A compositional data transformation that uses a log-ratio of components to address data closure.

  - Presence-Absence: Convert all non-zero abundances to 1, focusing only on microbial presence.

- Model Training & Validation: Train multiple classifiers (e.g., Random Forest, Logistic Regression, SVM) on the normalized data. Use a nested cross-validation approach, where the inner loop is dedicated to hyperparameter tuning to prevent overfitting and ensure robust performance estimation [26].

- Feature Selection Integration: Incorporate a feature selection step (e.g., mRMR, LASSO) within the cross-validation pipeline and evaluate its impact on model performance and the number of required features.
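As a rough illustration of the protocol above, the sketch below implements the three per-sample normalizations and the index bookkeeping of a nested cross-validation. Several details are assumptions rather than choices documented in the cited study: the pseudocount of 0.5 added before the CLR, the interleaved (unshuffled, unstratified) fold assignment, and all function names.

```python
import math

def relative_abundance(counts):
    """Convert one sample's counts to proportions that sum to 1."""
    total = sum(counts)
    return [c / total for c in counts]

def clr(counts, pseudocount=0.5):
    """Centered log-ratio of one sample; the pseudocount (assumed) avoids log(0)."""
    logs = [math.log(c + pseudocount) for c in counts]
    center = sum(logs) / len(logs)  # log of the geometric mean
    return [v - center for v in logs]

def presence_absence(counts):
    """Map every non-zero count to 1 and every zero to 0."""
    return [1 if c > 0 else 0 for c in counts]

def k_folds(indices, k):
    """Split indices into k interleaved folds (no shuffling or stratification)."""
    return [indices[i::k] for i in range(k)]

def nested_splits(n_samples, outer_k=5, inner_k=3):
    """Yield (outer_train, outer_test, inner_splits).

    Inner (train, validation) pairs are drawn only from outer_train, so
    hyperparameter tuning and feature selection never see outer_test.
    """
    idx = list(range(n_samples))
    for test in k_folds(idx, outer_k):
        held_out = set(test)
        train = [i for i in idx if i not in held_out]
        inner = []
        for val in k_folds(train, inner_k):
            val_set = set(val)
            inner.append(([i for i in train if i not in val_set], val))
        yield train, test, inner

sample = [40, 10, 0, 50]
rel = relative_abundance(sample)
clr_values = clr(sample)
pa = presence_absence(sample)
splits = list(nested_splits(10, outer_k=5, inner_k=2))
```

Because these three transformations operate on one sample at a time, they can be applied before splitting without leaking information. Feature selection, by contrast, uses the labels and therefore belongs inside the inner training folds.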

Visualizing Method Selection and Workflows

Microbiome Analysis Decision Workflow

This diagram outlines the logical process for selecting analytical methods based on research goals and data characteristics.

Class-Specific vs. Global Feature Selection

The DRFS (Dual-Regularized Feature Selection) method illustrates how combining different association types improves feature selection [27].

The Scientist's Toolkit: Essential Research Reagents & Solutions

This table details key computational tools and their functions in a microbiome analysis pipeline.

Table 3: Key Reagent Solutions for Microbiome Analysis

| Tool/Reagent | Function in Analysis | Application Context |
|---|---|---|
| Centered Log-Ratio (CLR) | Normalization technique that addresses compositionality of microbiome data by using log-ratios. | Essential pre-processing step before applying linear models like Logistic Regression or SVM [26]. |
| LASSO (L1-regularization) | An embedded feature selection method that performs automatic variable selection and regularization through L1-penalty. | Effective for creating compact, interpretable feature signatures; works well with various normalizations [26] [25]. |
| mRMR (Minimum Redundancy Maximum Relevance) | A filter feature selection method that finds features maximally relevant to the target while being minimally redundant. | Identifies compact, non-redundant feature sets; performance is comparable to LASSO [26]. |
| PreLect Framework | A feature selection method that incorporates a prevalence penalty to avoid selecting rare, potentially noisy taxa. | Superior for identifying reproducible, high-prevalence microbial signatures across different cohorts [25]. |
| MaAsLin 2 | A statistical tool for identifying multivariable associations between sample metadata and microbial community features. | Useful for covariate adjustment and identifying individual associations in complex study designs [6]. |

In fields ranging from microbiome research to glycomics and geochemistry, scientists are frequently confronted with compositional data—vectors of positive values that carry only relative information because they are parts of a constrained whole [28]. Whether representing microbial abundances that sum to a fixed sequencing depth, hydrochemical parameters in groundwater, or glycan relative abundances, these datasets share a fundamental mathematical constraint: an increase in one component necessarily forces a decrease in others due to the closure property [29]. This inherent characteristic presents substantial statistical challenges, as traditional methods assuming Euclidean geometry can produce spurious correlations and misleading conclusions [30] [28].

The recognition of compositional data challenges has catalyzed the development of specialized analytical frameworks, notably Compositional Data Analysis (CoDA) [30]. Central to CoDA are log-ratio transformation techniques—including Centered Log Ratio (CLR), Additive Log Ratio (ALR), and Isometric Log Ratio (ILR)—which aim to properly handle the relative nature of compositional data by transferring observations from the constrained simplex space to real Euclidean space [31] [28]. Despite their mathematical elegance, practical implementation of these transformations requires careful consideration of their respective strengths, limitations, and appropriate application contexts, particularly given the zero-inflation and high dimensionality common in modern biological datasets [31] [32].

This guide provides a comprehensive comparison of these transformation methods, focusing on their theoretical foundations, practical performance characteristics, and implementation considerations for validating microbiome findings with complementary techniques.

Fundamentals of Compositional Data Analysis

What Makes Data Compositional?

Compositional data are defined as vectors of positive real numbers in which the components carry only relative information, with the absolute sum or total being arbitrary or irrelevant [28]. Such data are pervasive across life science domains:

- Microbiome research: 16S rRNA gene sequencing and shotgun metagenomics produce relative abundance data where microbial taxa proportions sum to 1 or 100% [31] [33]

- Glycomics: Mass spectrometry measures glycan relative abundances as proportions of total ion intensity [29]

- Groundwater geochemistry: Hydrochemical parameters represent proportions of total dissolved solids [28]

- Time-use epidemiology: Daily activity durations sum to 24 hours [30]

The fundamental challenge with compositional data stems from their constraint to a sample space called the simplex, which does not obey the principles of standard Euclidean geometry [28]. This means that applying traditional statistical methods without appropriate transformation can generate spurious correlations and misleading results [30] [29].
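A small numerical example makes the closure effect concrete. The absolute abundances below are hypothetical; the point is only that a change in one taxon alters the proportions of every other taxon, while the ratio between unchanged taxa stays fixed.

```python
def close(abundances):
    """Rescale a vector of positive values so that it sums to 1 (closure)."""
    total = sum(abundances)
    return [a / total for a in abundances]

# Hypothetical absolute abundances of three taxa before and after a bloom.
before = [100.0, 100.0, 100.0]
after = [400.0, 100.0, 100.0]  # only taxon 0 changed in absolute terms

rel_before = close(before)  # each taxon holds one third of the total
rel_after = close(after)    # taxon 0 holds two thirds, the others one sixth

# Taxa 1 and 2 appear to decline although their absolute abundance is unchanged.
apparent_drop = rel_before[1] - rel_after[1]

# The ratio between taxa 1 and 2 is unaffected by the closure.
ratio_before = rel_before[1] / rel_before[2]
ratio_after = rel_after[1] / rel_after[2]
```

This is why log-ratio methods work with ratios between parts rather than with the proportions themselves.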

Foundational Principles of CoDA

Compositional Data Analysis rests upon several key principles that guide proper analytical approaches:

- Relative information: Compositional data carry information only about relative, not absolute, magnitudes between components [28]

- Subcompositional coherence: Analysis should yield consistent results regardless of whether a full composition or a subcomposition is analyzed [34]

- Scale invariance: The meaningful information is unchanged when every component is multiplied by the same positive constant, so the total sum carries no information [34]

These principles necessitate specialized transformation approaches that convert constrained compositional data into coordinates in unconstrained real space for valid statistical analysis [28].
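The principles of scale invariance and subcompositional coherence can be checked numerically. In the hypothetical three-part example below, the log-ratio between the first two parts is the same whether the data are rescaled or the third part is dropped, whereas the raw proportion of the first part changes once the subcomposition is re-closed.

```python
import math

def close(parts):
    """Rescale positive parts so that they sum to 1."""
    total = sum(parts)
    return [p / total for p in parts]

def log_ratio(parts, i, j):
    """Natural log of the ratio between parts i and j."""
    return math.log(parts[i] / parts[j])

x = [10.0, 20.0, 70.0]            # hypothetical composition
scaled = [1000 * p for p in x]    # same composition at a different total
sub = close(x[:2])                # subcomposition: third part removed

lr_full = log_ratio(close(x), 0, 1)
lr_scaled = log_ratio(close(scaled), 0, 1)   # scale invariance
lr_sub = log_ratio(sub, 0, 1)                # subcompositional coherence

share_full = close(x)[0]   # proportion of part 0 in the full composition
share_sub = sub[0]         # proportion of part 0 in the subcomposition
```

The proportion of the first part rises from 0.1 to one third after the third part is removed, which is the kind of inconsistency that analyses on raw proportions inherit.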

Core Transformation Methodologies: Mathematical Foundations and Workflows

The Centered Log Ratio (CLR) Transformation

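The CLR transformation, introduced by John Aitchison, expresses each component relative to the geometric mean of all components in the composition [34]. For a composition with D parts (x₁, x₂, ..., xD), the CLR transformation is defined as:

$$\operatorname{clr}(\mathbf{x}) = \left[\ln\frac{x_1}{g(\mathbf{x})},\ \ln\frac{x_2}{g(\mathbf{x})},\ \ldots,\ \ln\frac{x_D}{g(\mathbf{x})}\right], \qquad g(\mathbf{x}) = \left(\prod_{i=1}^{D} x_i\right)^{1/D}$$

where g(x) is the geometric mean of the D parts.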

This transformation treats all parts symmetrically and preserves the original number of components [32] [34]. However, the resulting CLR-transformed variables are linearly dependent, as they sum to zero, which can cause issues with statistical methods requiring matrix inversion [32].
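The properties described above (symmetric treatment of parts, D output values, and a zero-sum constraint) can be verified with a minimal sketch. The function names and the example composition are illustrative; the inverse shown here is the standard closure of the exponentiated coordinates.

```python
import math

def clr(x):
    """Centered log-ratio of a strictly positive composition."""
    if any(v <= 0 for v in x):
        raise ValueError("CLR requires strictly positive parts; handle zeros first")
    logs = [math.log(v) for v in x]
    mean_log = sum(logs) / len(logs)  # log of the geometric mean
    return [v - mean_log for v in logs]

def clr_inverse(z):
    """Map CLR coordinates back to proportions that sum to 1."""
    exps = [math.exp(v) for v in z]
    total = sum(exps)
    return [e / total for e in exps]

x = [0.1, 0.2, 0.7]        # hypothetical three-part composition
z = clr(x)                 # three values, one per part
z_sum = sum(z)             # zero up to floating-point error
recovered = clr_inverse(z)
z_rescaled = clr([1.0, 2.0, 7.0])  # same composition at a different total
```

Because the CLR values always sum to zero, their covariance matrix is singular, which is the source of the matrix-inversion problems noted above.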

Table 1: CLR Transformation Characteristics

| Aspect | Description |
|---|---|
| Dimensionality | Maintains original D dimensions |
| Reference | Geometric mean of all components |
| Linearity | Produces linearly dependent variables |
| Interpretation | Log-ratio to geometric mean |
| Zero Handling | Problematic (zeros create undefined logarithms) |

The Additive Log Ratio (ALR) Transformation

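The ALR transformation, also known as the "logistic" transformation, selects one component as a reference and calculates log-ratios of all other components to this reference [34]. For a composition with D parts and selecting xD as the reference:

$$\operatorname{alr}(\mathbf{x}) = \left[\ln\frac{x_1}{x_D},\ \ln\frac{x_2}{x_D},\ \ldots,\ \ln\frac{x_{D-1}}{x_D}\right]$$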

This transformation reduces dimensionality from D to D-1 and produces coordinates in unconstrained real space [32]. The choice of reference component is critical and should ideally be informed by domain knowledge, though statistical criteria can also guide selection [32] [29].
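A minimal sketch of the ALR, assuming strictly positive parts, shows both the reduction to D-1 coordinates and the dependence on the chosen reference. The function names, the default of using the last part as reference, and the example composition are illustrative assumptions.

```python
import math

def alr(x, ref=-1):
    """Additive log-ratio: log of every non-reference part over the reference part."""
    if any(v <= 0 for v in x):
        raise ValueError("ALR requires strictly positive parts; handle zeros first")
    ref_idx = ref % len(x)  # allow negative indices such as -1 for the last part
    return [math.log(v / x[ref_idx]) for i, v in enumerate(x) if i != ref_idx]

def alr_inverse(y):
    """Recover proportions from ALR coordinates, assuming the reference was the last part."""
    exps = [math.exp(v) for v in y] + [1.0]  # the reference part maps to exp(0) = 1
    total = sum(exps)
    return [e / total for e in exps]

x = [0.1, 0.2, 0.7]            # hypothetical three-part composition
y_last = alr(x)                # reference = last part, two coordinates
y_first = alr(x, ref=0)        # reference = first part, different coordinates
recovered = alr_inverse(y_last)
```

The two coordinate sets describe the same composition but are not interchangeable in a model, which is why the reference should be fixed and reported.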

The Isometric Log Ratio (ILR) Transformation

The ILR transformation represents a more sophisticated approach that creates an orthonormal coordinate system in the simplex [28]. ILR coordinates, often called "balances," contrast groups of parts through a sequential binary partition (SBP) process [28]. For two non-overlapping groups of parts J₁ and J₂, the balance is:

$$b(J_1, J_2) = \sqrt{\frac{|J_1|\,|J_2|}{|J_1| + |J_2|}}\ \ln\frac{g(\mathbf{x}_{J_1})}{g(\mathbf{x}_{J_2})}$$

where |J₁| and |J₂| denote the number of parts in each group and g(·) is the geometric mean of the parts in a group [34]. The ILR transformation maintains isometry between the simplex and real space, preserving distances and angles [28].

Figure 1: ILR Transformation Workflow. The process involves sequential binary partitioning to define balance coordinates, followed by calculation of geometric means and log-ratios to create an orthonormal basis in reduced dimensionality space.
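The balance calculation and the isometry property can be sketched as follows. The sequential binary partition used here ({part 0} versus {parts 1, 2}, then {part 1} versus {part 2}) and both example compositions are hypothetical. The check at the end compares the Euclidean distance between ILR coordinates with the Euclidean distance between CLR vectors, which equals the Aitchison distance.

```python
import math

def geometric_mean(values):
    """Geometric mean of strictly positive values."""
    return math.exp(sum(math.log(v) for v in values) / len(values))

def balance(x, group1, group2):
    """One ILR balance contrasting two non-overlapping groups of part indices."""
    r, s = len(group1), len(group2)
    g1 = geometric_mean([x[i] for i in group1])
    g2 = geometric_mean([x[i] for i in group2])
    return math.sqrt(r * s / (r + s)) * math.log(g1 / g2)

def ilr(x, sbp):
    """ILR coordinates: one balance per step of the sequential binary partition."""
    return [balance(x, g1, g2) for g1, g2 in sbp]

def clr(x):
    """Centered log-ratio, used here only to compute the Aitchison distance."""
    logs = [math.log(v) for v in x]
    center = sum(logs) / len(logs)
    return [v - center for v in logs]

def euclidean(a, b):
    return math.sqrt(sum((p - q) ** 2 for p, q in zip(a, b)))

# Hypothetical partition for three parts: D - 1 = 2 balances.
sbp = [([0], [1, 2]), ([1], [2])]
x = [0.1, 0.2, 0.7]
y = [0.3, 0.3, 0.4]

d_ilr = euclidean(ilr(x, sbp), ilr(y, sbp))
d_clr = euclidean(clr(x), clr(y))
```

The two distances agree because the balances defined by a valid partition form an orthonormal basis; a different partition changes the coordinates but not the distances.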

Comparative Performance Analysis of Transformation Methods

Theoretical and Practical Comparison

Table 2: Comprehensive Comparison of Log-Ratio Transformation Methods

| Characteristic | CLR | ALR | ILR |
|---|---|---|---|
| Dimensionality | D (linearly dependent) | D-1 | D-1 (orthonormal) |
| Interpretability | Moderate | High (with meaningful reference) | Variable (depends on balance structure) |
| Zero Handling | Problematic | Problematic (if reference has zeros) | Problematic (if groups contain zeros) |
| Subcompositional Coherence | No | Yes | Yes |
| Isometry Preservation | Yes (onto a zero-sum hyperplane, so covariance is singular) | No | Yes |
| Reference/Basis | Geometric mean of all parts | Single reference part | Orthonormal basis (balances) |
| Optimal Use Cases | Exploratory analysis, CLR-PCA, feature selection | Regression with meaningful reference, intuitive interpretation | Distance-based methods, PCA, clustering |

Experimental Performance in Simulation Studies

Recent simulation studies have provided empirical evidence of transformation performance under various conditions. A 2024 systematic review of compositional data transformation in microbiome research demonstrated that CLR and ALR transformations are more effective when zero values are less prevalent, while novel approaches like Centered Arcsine Contrast (CAC) and Additive Arcsine Contrast (AAC) show enhanced performance in high zero-inflation scenarios [31].

A 2025 simulation study comparing methods for analyzing compositional data with fixed and variable totals revealed that the performance of each approach depends critically on how closely its parameterization matches the true data generating process [30]. The consequences of using an incorrect parameterization were shown to be more severe for larger reallocations (e.g., 10-minute time reallocations in activity data) than for 1-unit reallocations [30].

In practical applications, studies have demonstrated that:

- ILR transformations provide optimal performance for distance-based analyses like PCA and clustering due to isometry preservation [32] [28]

- CLR transformation followed by robust PCA effectively identifies key subcompositional parts in groundwater pollution studies [28]

- ALR transformation offers superior interpretability in differential abundance analysis when a biologically meaningful reference component is available [29]

Zero-Handling Capabilities

The challenge of zero values remains significant across all transformation methods, as logarithms of zero are undefined. The 2024 review of compositional data transformation identified three types of zeros in microbiome data: biological zeros (true absence), sampling zeros (due to sequencing depth limitations), and technical zeros (from sample preparation errors) [31]. The study proposed a new framework combining proportion conversion with contrast transformations to better handle zero-inflation [31].

Table 3: Zero-Handling Strategies for Compositional Transformations

| Transformation | Zero Challenges | Common Solutions |
|---|---|---|
| CLR | Any zero makes geometric mean zero | Pseudocounts, multiplicative replacement |
| ALR | Zero in reference component problematic | Careful reference selection, imputation |
| ILR | Zeros in any partition component problematic | Balance-aware zero imputation, model-based approaches |
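Of the solutions listed in the table, multiplicative replacement is simple enough to sketch directly. In this minimal version, zeros are replaced by a small value delta and the non-zero parts are shrunk so that the composition still sums to 1. The value delta = 0.001 and the example composition are illustrative assumptions; in practice delta is usually tied to the detection limit or sequencing depth.

```python
def multiplicative_replacement(composition, delta=1e-3):
    """Replace zeros with delta and rescale non-zero parts to keep the total at 1.

    Ratios between the non-zero parts are preserved, unlike with an
    additive pseudocount.
    """
    total = sum(composition)
    props = [c / total for c in composition]  # close to proportions first
    n_zeros = sum(1 for p in props if p == 0)
    shrink = 1 - n_zeros * delta              # mass left for the non-zero parts
    return [delta if p == 0 else p * shrink for p in props]

x = [0.5, 0.0, 0.3, 0.2, 0.0]   # hypothetical composition with two zeros
replaced = multiplicative_replacement(x)

total_after = sum(replaced)
ratio_before = x[0] / x[2]
ratio_after = replaced[0] / replaced[2]
```

After replacement every part is strictly positive, so any of the log-ratio transformations can be applied. The result still depends on delta, so it is worth checking that conclusions are stable across a few plausible values.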

Advanced Methodological Considerations

Alternative Balance Schemes: Amalgamation Approaches