Benchmarking Success Rates in Autonomous Materials Discovery: AI, Agents, and Real-World Performance

Abstract

This article provides a comprehensive benchmark and analysis of success rates for autonomous materials discovery platforms. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles of AI-driven discovery, from foundation models to self-driving labs. It details the methodologies and real-world applications that demonstrate high success rates, such as the A-Lab's synthesis of 41 novel compounds. The content further investigates troubleshooting, optimization strategies to overcome failure modes, and provides a comparative validation of different autonomous systems and their performance metrics, offering a clear-eyed view of the current state and future trajectory of the field.

The Foundations of AI-Driven Discovery: From Foundation Models to Autonomous Agents

The field of autonomous scientific discovery is rapidly evolving, transitioning from a paradigm where artificial intelligence (AI) acts as a computational oracle to one of Agentic Science, where AI systems operate as full research partners with significant autonomy [1]. This shift is particularly impactful in materials science and drug development, where self-driving labs (SDLs)—which integrate AI-driven experimental selection with robotic execution—promise to accelerate discovery [2] [3].

A critical challenge for researchers and scientists is quantifying the performance and success of these autonomous platforms. Without standardized benchmarks, comparing systems and measuring true progress becomes difficult. This guide provides an objective comparison of the key metrics, experimental protocols, and current performance data essential for benchmarking autonomous discovery platforms within a rigorous research framework.

Core Benchmarking Metrics

Quantifying the acceleration provided by autonomous platforms requires comparing their performance against established reference strategies. Two metrics have emerged as central to this evaluation.

Table 1: Core Metrics for Benchmarking Autonomous Discovery Platforms

| Metric | Definition | Formula | Interpretation |
|---|---|---|---|
| Acceleration Factor (AF) [2] | Ratio of the number of experiments a reference strategy needs to reach a specific performance target to the number an active learning (AL) campaign needs. | $AF = n_{\text{ref}} / n_{\text{AL}}$ | Higher AF indicates a more efficient AL process. An AF of 6 means the SDL reaches the target with one-sixth as many experiments as the reference. |
| Enhancement Factor (EF) [2] | Improvement in performance achieved after a given number of experiments $n$, relative to a reference strategy. | $EF(n) = (y_{\text{AL}}(n) - y_{\text{ref}}(n)) / (y^* - \text{median}(y))$ | Higher EF indicates that the AL process finds better results for the same experimental budget. EF is typically tracked as a function of the number of experiments per dimension of the search space. |

These metrics work in tandem: AF measures efficiency gains in the discovery process, while EF quantifies the improvement in outcome quality [2]. A comprehensive benchmark should report both. A literature survey of experimental benchmarks reveals a median AF of 6, with EF values consistently peaking at 10–20 experiments per dimension of the search space [2].
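The two definitions can be made concrete with a short sketch that computes AF and EF from the measured values of one AL campaign and one reference campaign. It is a minimal illustration under stated assumptions: the traces in the usage lines are invented toy numbers, `all_y` is an assumed stand-in for the measured property values over the search space (used to obtain y* and median(y)), and a real benchmark would average best-so-far traces over many repeated campaigns before taking ratios. Function names are illustrative, not taken from [2].

```python
from statistics import median


def best_so_far(observations):
    """Running maximum of the measured property after each experiment."""
    trace, best = [], float("-inf")
    for y in observations:
        best = max(best, y)
        trace.append(best)
    return trace


def experiments_to_target(observations, target):
    """Smallest n at which the best-so-far value reaches `target`, else None."""
    for n, best in enumerate(best_so_far(observations), start=1):
        if best >= target:
            return n
    return None


def acceleration_factor(al_obs, ref_obs, target):
    """AF = n_ref / n_AL for a fixed performance target."""
    n_al = experiments_to_target(al_obs, target)
    n_ref = experiments_to_target(ref_obs, target)
    if n_al is None or n_ref is None:
        return None  # one campaign never reached the target
    return n_ref / n_al


def enhancement_factor(al_obs, ref_obs, n, y_star, all_y):
    """EF(n) = (y_AL(n) - y_ref(n)) / (y* - median(y))."""
    y_al = best_so_far(al_obs)[n - 1]
    y_ref = best_so_far(ref_obs)[n - 1]
    return (y_al - y_ref) / (y_star - median(all_y))


# Invented toy traces: one AL campaign and one random-sampling campaign.
al = [0.2, 0.5, 0.8, 0.9, 0.95]
ref = [0.1, 0.3, 0.2, 0.4, 0.5, 0.6, 0.85, 0.7, 0.9, 0.92]
print(round(acceleration_factor(al, ref, target=0.8), 2))  # 2.33 (7 vs 3 runs)
print(round(enhancement_factor(al, ref, 3, 1.0, [0.0, 0.2, 0.4, 0.6, 1.0]), 2))  # 0.83
```

Note that AF depends on the chosen target and EF on the chosen budget n, which is why both are usually reported as curves rather than single numbers.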

Benchmarking Experimental Protocols

A robust benchmark requires a carefully controlled experimental campaign where an autonomous learning strategy is compared directly to a reference method.

Campaign Workflow and Design

The following diagram illustrates the standard parallel workflow for benchmarking an autonomous discovery platform.

The canonical task for an SDL is to optimize a measurable property $y$ (e.g., catalyst efficiency, drug potency) that depends on a set of $d$ input parameters $\mathbf{x}$ (e.g., compositions, processing conditions) [2]. The goal of the campaign is to identify the conditions $\mathbf{x}^*$ that maximize $y$. Progress is tracked by the best performance observed after $n$ experiments, denoted $y_{\text{AL}}(n)$ for the active learning campaign and $y_{\text{ref}}(n)$ for the reference campaign [2].

Key Methodological Considerations

- Choice of Reference Strategy: The most common and statistically rigorous reference is uniform random sampling across the parameter space, as its expected convergence can be analytically derived [2]. Other references include Latin hypercube sampling (LHS), grid-based sampling, or human-directed experimentation [2].

- Measuring Progress: Benchmarking should use the maximum experimentally observed value of the target property, not the value predicted by a surrogate model. This ensures results are grounded in experimental reality and do not require doubling the experimental budget for validation [2].

- Defining the Search Space: The dimensionality ($d$) and statistical contrast ($C$) of the parameter space strongly affect results. Studies show that AF tends to increase with dimensionality (a phenomenon termed the "blessing of dimensionality"), while EF peaks at 10–20 experiments per dimension [2].
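When the reference is uniform random sampling over a finite, fully measured dataset (an assumption that fits retrospective benchmarks), its expected best-so-far trace can be computed exactly instead of simulated. The sketch below is one standard order-statistics derivation for sampling without replacement; it is not necessarily the derivation used in [2], and the function name is illustrative.

```python
from math import comb


def expected_best_random(values, n):
    """Expected best-so-far y_ref(n) for uniform random sampling without
    replacement from a finite pool of measured candidates.

    With values sorted ascending v_1 <= ... <= v_N, the maximum of a random
    n-subset is v_k exactly when v_k is drawn and the other n - 1 draws come
    from the k - 1 smaller values: P = C(k-1, n-1) / C(N, n).
    """
    v = sorted(values)
    N = len(v)
    if not 1 <= n <= N:
        raise ValueError("n must be between 1 and the pool size")
    total = comb(N, n)
    return sum(v[k - 1] * comb(k - 1, n - 1) for k in range(n, N + 1)) / total


print(round(expected_best_random([0.1, 0.4, 0.9, 0.3], 2), 3))  # 0.633
```

The same argument shows why random sampling slows down: for a continuous property, the expected quantile of the best of n uniform draws is n/(n+1), so each additional experiment buys less improvement.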

Performance Comparison of Platforms and Algorithms

Performance varies significantly across systems, reflecting differences in algorithmic maturity and domain complexity.

Performance in Scientific Discovery

A comprehensive literature survey reveals quantitative data on the acceleration provided by SDLs in materials science.

Table 2: Reported Performance of Self-Driving Labs in Materials Science

| Application Domain | Reported Acceleration Factor (AF) | Typical Dimensionality ($d$) | Key Insights |
|---|---|---|---|
| Materials Optimization (Broad Survey) [2] | Range: 2× to 1000×; median: 6× | Varies | AF tends to increase with the dimensionality of the search space. |
| Chemical & Materials Discovery (Theoretical Simulation) [2] | N/A | 1 to 10+ | Enhancement Factor (EF) consistently peaks at 10–20 experiments per dimension. |

Performance in Agentic AI Benchmarks

Beyond materials science, general-purpose AI agents are benchmarked on tasks requiring tool use, planning, and execution. Their performance on standardized tests provides insight into the current state of autonomous intelligence.

Table 3: Performance of AI Agents on Standardized Benchmarks (2025)

| Benchmark | Focus | Top Reported Performance | Implications for Discovery |
|---|---|---|---|
| GAIA [4] | General AI assistant tasks requiring multi-step reasoning and tool use. | 52.73% accuracy (Anemoi multi-agent system) | Demonstrates capability for complex, multi-step workflows relevant to experimental procedures. |
| AgentArch [4] | Complex enterprise and workflow tasks (a proxy for research management). | Maximum success rate of 35.3% on complex tasks | Highlights a significant "reality gap": full autonomy in complex, critical tasks remains challenging. |
| WebArena [5] | Realistic web environment for autonomous task completion, comprising 812 distinct web-based tasks. | N/A | Tests the ability to operate digital interfaces, a key skill for querying databases or operating lab software. |

Recent analyses conclude that while architectural advances are rapid, the immediate deployment of unsupervised, fully autonomous agents in critical enterprise workflows is technically premature, with success rates on complex tasks peaking around 35% [4]. This underscores the need for a strategy of "Controlled Autonomy" in scientific settings [4].

The Researcher's Toolkit: Essential Components

Building or evaluating an autonomous discovery platform requires familiarity with its core components, which combine physical robotics with digital intelligence.

Table 4: Essential Components of an Autonomous Discovery Platform

| Component / Solution | Category | Function in the Discovery Process |
|---|---|---|
| Automated Robotic Platform [3] | Hardware & Control | Executes physical experiments (synthesis, characterization) with high precision and reliability, performing the "doing" step of the closed loop. |
| Bayesian Optimization Algorithm [2] | AI & Decision-Making | The core "brain" that selects the most informative next experiment based on a surrogate model, balancing exploration and exploitation. |
| Tool-Using AI Agent [5] [4] | AI & Orchestration | An AI capable of dynamically using software tools (e.g., databases, simulation software) to plan and adjust experimental strategies. |
| Context-Folding Memory [4] | AI & Memory | A memory architecture that compresses interaction history to maintain task coherence over long-horizon research campaigns, mitigating the finite context window of standard LLMs. |
| Multi-Agent Orchestration [4] | System Architecture | A framework for coordinating multiple specialized AI agents (e.g., for planning, analysis, execution) to tackle complex, multi-faceted discovery problems. |
| Data Discovery Platform [6] [7] | Data Infrastructure | Automatically finds, classifies, and manages structured and unstructured data across sources, providing the high-quality, accessible data required for AI-driven discovery. |

The architectural trend is moving towards semi-centralized multi-agent systems that facilitate direct agent-to-agent communication, reducing reliance on a single, brittle central planner and enabling more scalable and adaptive experimentation [4]. Furthermore, training frameworks like GOAT are democratizing the development of robust agents by automating the creation of synthetic training data from API documentation, thus overcoming a major bottleneck for specialized domain applications [4].

Table of Contents

- Introduction to Foundation Models in Materials Science

- Performance Comparison of Materials Science AI Models

- Experimental Protocols for Benchmarking AI in Materials Discovery

- Visualizing the Autonomous Discovery Workflow

- Essential Research Reagent Solutions

Introduction to Foundation Models in Materials Science

Foundation Models (FMs) and Large Language Models (LLMs) are catalyzing a paradigm shift in materials science, moving beyond traditional, task-specific machine learning models towards scalable, general-purpose, and multimodal AI systems for scientific discovery [8] [9]. Unlike their predecessors, these models are trained on broad data using self-supervision and can be adapted to a wide range of downstream tasks, from property prediction and molecular generation to synthesis planning [9]. Their versatility is particularly well-suited to materials science, where research challenges span diverse data types—including atomic structures, textual literature, experimental spectra, and simulation data—and multiple scales, from atomic to macroscopic [8].

The integration of these models into autonomous laboratories is creating closed-loop discovery systems. These systems, often called Self-Driving Labs or Materials Acceleration Platforms (MAPs), combine AI-driven hypothesis generation with robotic experimentation to execute and analyze experiments with minimal human intervention [10] [11]. This convergence of digital and physical experimentation is poised to dramatically compress the two-decade average timeline from materials discovery to commercialization, a critical acceleration for climate tech and other hard-to-abate sectors [10] [12]. However, this promise hinges on the ability to rigorously benchmark and evaluate the performance and robustness of these AI models under realistic, dynamic conditions that mirror the iterative nature of scientific discovery [13] [14].

Performance Comparison of Materials Science AI Models

Benchmarking is essential for objectively comparing the capabilities of different AI models. The following tables summarize quantitative performance data for LLMs on question-answering tasks and for various foundation models on specific materials discovery applications.

Table 1: Performance of LLMs on the MaScQA Benchmark for Materials Science Q&A [15]

| Model Name | Model Type | Overall Accuracy on MaScQA |
|---|---|---|
| Claude-3.5-Sonnet | Closed-source | ~84% |
| GPT-4o | Closed-source | ~84% |
| Llama3-70b | Open-source | ~56% |
| Phi3-14b | Open-source | ~43% |

Table 2: Performance of Foundation Models and Autonomous Systems on Discovery Tasks [8] [10] [11]

| Model/System Name | Primary Task | Reported Performance / Output |
|---|---|---|
| GNoME (Google DeepMind) | Predict stability of new crystal structures | Discovered over 2.2 million stable structures; 736 independently synthesized [10]. |
| A-Lab (Berkeley Lab) | Autonomous synthesis of inorganic compounds | Synthesized 41 of 58 targeted materials in 17 days (71% success rate) [11]. |
| MatterSim | Universal machine-learned interatomic potential | Trained on 17 million DFT-labeled structures for universal simulation [8]. |
| Coscientist | LLM-driven autonomous chemical research | Successfully optimized palladium-catalyzed cross-coupling reactions [11]. |

The data reveals a significant performance gap between closed-source and open-source LLMs on specialized materials science knowledge, highlighting the potential for improvement in open-source models via fine-tuning and prompt engineering [15]. Furthermore, foundation models have demonstrated substantial real-world impact, moving from theoretical prediction to validated experimental synthesis, as evidenced by GNoME and A-Lab [10] [11].
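A success rate from a single campaign, such as A-Lab's 41 of 58 targets, carries sampling uncertainty that matters when platforms are compared. As an illustration that is our addition rather than part of the cited studies, the sketch below computes a Wilson score interval, treating each target as an independent success/failure trial (an assumption: real targets differ in difficulty).

```python
from math import sqrt


def wilson_interval(successes, trials, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 gives ~95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = successes / trials
    denom = 1 + z**2 / trials
    center = (p + z**2 / (2 * trials)) / denom
    half = z / denom * sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2))
    return center - half, center + half


low, high = wilson_interval(41, 58)  # A-Lab: 41 of 58 targets synthesized
print(f"{41 / 58:.0%} success, 95% CI {low:.0%} to {high:.0%}")
# 71% success, 95% CI 58% to 81%
```

Under these assumptions, two platforms whose success rates on similarly sized target sets both fall inside roughly 58% to 81% cannot be clearly ranked on this metric alone.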

Experimental Protocols for Benchmarking AI in Materials Discovery

Evaluating the robustness and real-world applicability of AI models in materials science requires carefully designed experimental protocols. Below are detailed methodologies for key benchmarking approaches cited in recent research.

Robustness Evaluation for LLMs in Materials Science

A comprehensive study assessed the performance and robustness of LLMs for materials science under diverse and adversarial conditions [14].

- Datasets: Three distinct datasets were used:

  - Multiple-choice questions from undergraduate-level materials science courses.

  - Steel composition and yield strength data for property prediction.

  - Textual descriptions of material crystal structures and band gap values.

- Prompting Strategies: Models were tested using various strategies, including zero-shot chain-of-thought, expert prompting, and few-shot in-context learning.

- Noise and Adversarial Testing: The robustness of these models was tested against a range of 'noise', from realistic disturbances to intentionally adversarial manipulations, to evaluate their resilience under real-world conditions. The study also investigated phenomena like mode collapse and performance recovery from train/test mismatches [14].
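The noise-injection step can be sketched as follows. This is a hypothetical harness, not the code of the cited study [14]: `perturb_numbers` applies one kind of realistic disturbance (relative jitter on every number in a prompt, such as the element fractions in a steel-composition query, kept at the original number of decimal places), and `model` is any callable that maps a prompt to an answer, standing in for an LLM call.

```python
import random
import re

# Plain decimal numbers such as "0.45" or "12" inside a prompt string.
NUMBER = re.compile(r"\d+(?:\.\d+)?")


def perturb_numbers(prompt, rel_noise, seed=0):
    """Multiply every number in `prompt` by a random factor drawn uniformly
    from [1 - rel_noise, 1 + rel_noise], keeping its original decimal places.
    Non-numeric text is left unchanged."""
    rng = random.Random(seed)

    def jitter(match):
        text = match.group()
        decimals = len(text.split(".")[1]) if "." in text else 0
        noisy = float(text) * (1 + rng.uniform(-rel_noise, rel_noise))
        return f"{noisy:.{decimals}f}"

    return NUMBER.sub(jitter, prompt)


def accuracy_drop(model, items, rel_noise, seed=0):
    """Clean accuracy minus accuracy on perturbed prompts.

    `model` maps a prompt to an answer; `items` is a list of
    (prompt, expected_answer) pairs."""
    clean = sum(model(p) == a for p, a in items) / len(items)
    noisy = sum(model(perturb_numbers(p, rel_noise, seed + i)) == a
                for i, (p, a) in enumerate(items)) / len(items)
    return clean - noisy


print(perturb_numbers("Steel with 0.45 wt% C and 1.20 wt% Mn", rel_noise=0.05))
```

Sweeping `rel_noise` from small to large values gives a simple degradation curve; adversarial manipulations would need different, targeted perturbations.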

Protocol for Autonomous Synthesis and Validation (A-Lab)

The workflow of the A-Lab provides a benchmark for fully autonomous materials synthesis [11].

- Target Selection: Novel and theoretically stable materials were selected using large-scale ab initio phase-stability databases from the Materials Project and Google DeepMind.

- Synthesis Recipe Generation: Natural-language models trained on literature data were used to propose initial synthesis recipes.

- Robotic Execution: A robotic system automatically carried out solid-state synthesis based on the generated recipes.

- Phase Identification: X-ray diffraction (XRD) patterns of the products were analyzed by machine learning models, specifically convolutional neural networks, for phase identification.

- Active Learning Optimization: The ARROWS3 algorithm was used for iterative route improvement. If a synthesis failed, the system analyzed the result and proposed a modified recipe for a subsequent attempt, all within a closed loop [11].
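The closed loop in the last steps can be sketched as a bounded retry over recipes. The callables (`propose_recipe`, `synthesize`, `identify_phases`, `revise_recipe`) are hypothetical stand-ins for the literature-trained recipe model, the robotic synthesis, the XRD phase-identification models, and ARROWS3; ARROWS3's actual reasoning is not reproduced here. The 0.5 target-yield threshold for success and the attempt limit are also assumptions.

```python
def run_target(target, propose_recipe, synthesize, identify_phases,
               revise_recipe, max_attempts=5, yield_threshold=0.5):
    """Closed-loop synthesis of one target compound.

    Returns (succeeded, history), where history lists (recipe, target_yield)
    for every attempt in order.
    """
    recipe = propose_recipe(target)          # initial, literature-inspired recipe
    history = []
    for attempt in range(1, max_attempts + 1):
        pattern = synthesize(recipe)         # robot runs the recipe, measures XRD
        phases = identify_phases(pattern)    # {phase name: weight fraction}
        target_yield = phases.get(target, 0.0)
        history.append((recipe, target_yield))
        if target_yield >= yield_threshold:
            return True, history
        if attempt < max_attempts:
            recipe = revise_recipe(target, history)  # active-learning step
    return False, history


def success_rate(results):
    """Fraction of targets synthesized, e.g. 41 / 58 for the A-Lab campaign."""
    return sum(ok for ok, _ in results) / len(results)
```

Keeping the full `history` per target makes it possible to report not only the success rate but also how many attempts each success required.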

Towards Dynamic Benchmarks for Autonomous Discovery

Recognizing the limitations of static benchmarks, a new proposal argues for dynamic benchmarks that simulate closed-loop discovery campaigns [13].

- Objective: The benchmark environment is designed to require autonomous agents to iteratively propose, evaluate, and refine material candidates under a constrained evaluation budget.

- Task: The specific goal is the efficient discovery of new thermodynamically stable compounds within chemical systems.

- Fidelity Levels: The benchmark accommodates multiple levels of evaluation, from fast machine-learned interatomic potentials to high-fidelity density functional theory (DFT) and ultimately experimental validation.

- Key Metrics: Success is measured by the efficiency and effectiveness of the agent in navigating the chemical space, handling uncertainty, and refining its approach based on iterative results, thereby emphasizing the realistic, exploratory nature of scientific discovery [13].
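A budget-constrained, multi-fidelity campaign of the kind described above might look like the following sketch. The function signature, costs, and cutoffs are hypothetical and not the API of the proposed benchmark [13]; energies are assumed to be energies above the convex hull in eV/atom, and `propose` stands in for the agent under test.

```python
def run_discovery(candidates, propose, cheap_eval, expensive_eval, budget,
                  cheap_cost=1.0, expensive_cost=20.0,
                  screen_cutoff=0.05, stable_cutoff=0.0):
    """Budget-constrained discovery loop with two fidelity levels.

    Each proposed candidate is first screened with a cheap evaluation (e.g. an
    ML interatomic potential); only those predicted within `screen_cutoff`
    eV/atom of the convex hull are confirmed with an expensive one (e.g. DFT).
    A candidate counts as discovered if the expensive energy above hull is
    <= `stable_cutoff`. Returns (discoveries, budget_spent).
    """
    remaining, seen, discoveries = list(candidates), [], []
    spent = 0.0
    while remaining and spent + cheap_cost <= budget:
        candidate = propose(remaining, seen)   # the agent's decision
        remaining.remove(candidate)
        spent += cheap_cost
        e_cheap = cheap_eval(candidate)
        seen.append((candidate, e_cheap))      # feedback for later proposals
        if e_cheap <= screen_cutoff and spent + expensive_cost <= budget:
            spent += expensive_cost
            if expensive_eval(candidate) <= stable_cutoff:
                discoveries.append(candidate)
    return discoveries, spent
```

Dividing the number of discoveries by the budget spent gives one simple efficiency score for comparing agents run under the same budget.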

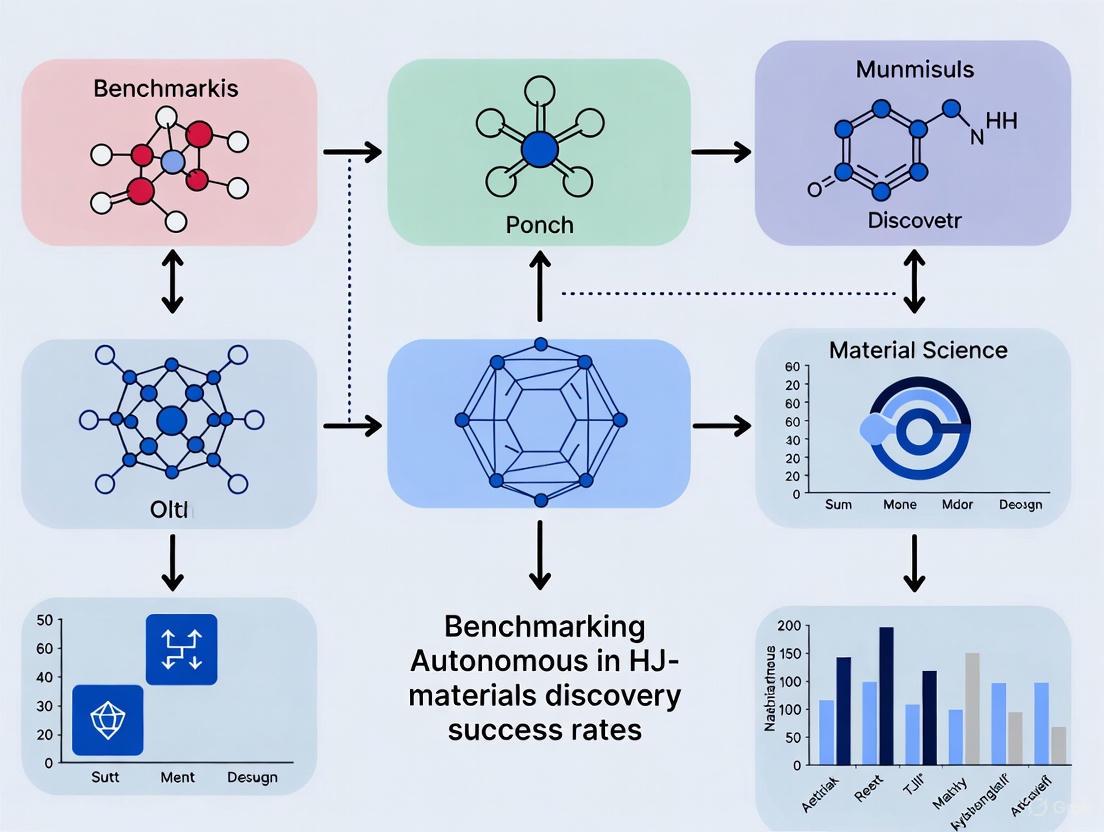

Visualizing the Autonomous Discovery Workflow

The core of an autonomous materials discovery platform is a continuous cycle of AI-driven planning and robotic execution. The diagram below illustrates this integrated workflow.

Autonomous Discovery Workflow: This diagram illustrates the closed-loop cycle of an AI-driven autonomous laboratory, integrating computational planning with physical robotic experimentation to accelerate materials discovery [11] [12].

Essential Research Reagent Solutions

The development and operation of AI models and autonomous labs in materials science rely on a suite of computational and physical "research reagents." The table below details key resources that form the backbone of this field.

Table 3: Key Research Reagent Solutions for AI-Driven Materials Science

| Resource Name / Type | Primary Function | Relevance to AI & Materials Discovery |
|---|---|---|
| The Materials Project [10] | Open-access database of known and hypothetical materials properties. | Provides foundational data for training predictive models (e.g., GNoME, A-Lab target selection) and benchmarking. |
| High-Throughput Experimentation (HTE) [10] | Robotic systems for conducting hundreds of parallel experiments. | Generates large, consistent datasets crucial for training robust machine learning models. |
| Density Functional Theory (DFT) [10] | Computational method for modeling electronic structures at the quantum level. | Generates high-quality, synthetic data for training models like MatterSim; used for high-fidelity validation in benchmarks. |
| Open MatSci ML Toolkit [8] | Open-source toolkit for graph-based materials learning. | Standardizes model development and evaluation, ensuring reproducibility and comparability in research. |
| Vision Transformers & GNNs [9] | AI model architectures for processing images and graph data. | Enables extraction of materials data from non-textual sources like spectroscopy plots and molecular structure images. |
| LLM Agents (ChemCrow, Coscientist) [11] | AI systems that use LLMs as a core reasoner to plan and execute tasks. | Acts as the "brain" of autonomous laboratories, orchestrating tools for synthesis planning and data analysis. |

Self-driving labs (SDLs) represent a paradigm shift in materials science and chemistry, transforming research from a slow, manual process into a rapid, automated discovery engine. These systems are designed to autonomously navigate the complex, high-dimensional design spaces common in modern materials research, where the number of possible experiments far exceeds practical human capacity [16]. By integrating artificial intelligence (AI) with robotic experimentation systems, SDLs create a closed-loop workflow capable of continuous learning and optimization [11]. The fundamental value proposition of SDLs lies in their ability to accelerate the pace of discovery while reducing material usage and human labor requirements. Recent experimental benchmarking studies report a median acceleration factor of 6× for SDLs relative to reference strategies such as random sampling or human-directed experimentation, with the acceleration tending to increase with the dimensionality of the search space [2]. This architectural analysis examines the core components that enable this capability, providing researchers with a framework for evaluating, designing, and benchmarking autonomous experimentation platforms.

The Architectural Blueprint: Deconstructing SDL Components

The architecture of a self-driving lab can be conceptualized as a stack of five specialized layers that work in concert to achieve autonomous operation. This layered architecture enables the complete Design-Make-Test-Analyze (DMTA) cycle that forms the core workflow of autonomous experimentation [16] [17]. Each layer addresses a distinct aspect of the experimental process while maintaining seamless integration with adjacent layers through standardized interfaces and data protocols.

Figure 1: The five-layer architecture of self-driving labs showing information flow between specialized components.

Layer 1: Actuation Layer

The actuation layer comprises the robotic systems and automated hardware that perform physical tasks in the laboratory environment. This includes robotic arms for sample manipulation, fluid handling systems for precise liquid dispensing, automated synthesis reactors for material creation, and environmental control systems for maintaining specific experimental conditions [17]. Unlike industrial automation designed for fixed workflows, SDL actuation systems must demonstrate exceptional flexibility and reconfigurability to handle diverse experimental requirements. For example, Berkeley Lab's A-Lab employs specialized solid-state synthesis equipment capable of handling powder precursors and operating high-temperature furnaces, enabling the autonomous synthesis of inorganic materials [10] [11]. The key challenge at this layer is balancing specialization for specific material classes with the flexibility to adapt to new research questions, often addressed through modular hardware architectures with standardized interfaces.

Layer 2: Sensing Layer

The sensing layer encompasses the sensors and analytical instruments that capture experimental outcomes and process conditions. This includes both inline characterization tools (such as spectrometers and chromatographs integrated directly into fluidic systems) and offline analytical instruments (such as X-ray diffraction systems and electron microscopes) [17]. In SDLs, sensing systems must not only generate high-quality data but do so in formats readily consumable by AI algorithms. For instance, A-Lab utilizes machine learning models for real-time phase identification from X-ray diffraction patterns, transforming raw analytical data into structured information about material properties [11]. The precision and throughput of sensing systems directly impact SDL performance, as high-precision measurements enable more efficient navigation of parameter spaces while high-throughput sensing prevents bottlenecks in the experimental cycle [18].

Layer 3: Control Layer

The control layer consists of the software infrastructure that orchestrates experimental sequences, ensuring synchronization, safety, and precision across multiple hardware components [17]. This layer manages the low-level coordination of instruments, executes experimental protocols, monitors system status, and implements safety interlocks. Specialized software frameworks for SDLs, such as Chemspyd, PyLabRobot, and PerQueue, provide the foundation for instrument control and workflow management [19]. The control layer must handle exceptional situations through fault detection and recovery mechanisms, enabling continuous operation even when individual components fail or produce unexpected results. This capability is essential for the extended operational lifetimes required by autonomous campaigns spanning days or weeks.
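Fault detection and recovery at this layer often reduces to a pattern like the one sketched below: bounded retries with exponential backoff for transient errors, and a safe-state hook before giving up. The `InstrumentError` exception, the `run_step` helper, and its parameters are hypothetical; this is not the API of Chemspyd, PyLabRobot, or PerQueue.

```python
import time


class InstrumentError(Exception):
    """Raised by a (hypothetical) instrument driver when a step fails."""


def run_step(step, retries=3, backoff_s=1.0, on_abort=None, sleep=time.sleep):
    """Execute one protocol step with bounded retries.

    Transient InstrumentErrors are retried with exponential backoff
    (backoff_s, 2 * backoff_s, ...). If every retry fails, the optional
    `on_abort` hook (e.g. park the arm, close valves) runs before the error
    is re-raised, so the scheduler can skip the sample or pause the campaign.
    """
    for attempt in range(retries + 1):
        try:
            return step()
        except InstrumentError:
            if attempt == retries:
                if on_abort is not None:
                    on_abort()
                raise
            sleep(backoff_s * 2 ** attempt)
```

Passing `sleep` as a parameter keeps the helper testable without real delays; in production it would default to `time.sleep`.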

Layer 4: Autonomy Layer

The autonomy layer contains the AI agents and decision-making algorithms that plan experiments, interpret results, and update research strategies [17]. This layer represents the "brain" of the SDL, where optimization algorithms such as Bayesian optimization and reinforcement learning navigate complex parameter spaces by balancing exploration of unknown regions with exploitation of promising areas [2] [16]. Recent advances have incorporated large language models (LLMs) capable of parsing scientific literature and translating research objectives into experimental constraints [11] [17]. Systems like Coscientist and ChemCrow demonstrate how LLM-based agents can autonomously design experiments, plan synthetic routes, and control robotic systems [11]. The autonomy layer increasingly employs multi-objective optimization frameworks that balance competing goals such as performance, cost, and safety while quantifying uncertainty to guide informative experiments.
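To make the exploration/exploitation balance concrete, here is a minimal Bayesian-optimization loop in NumPy: a Gaussian-process surrogate with a fixed squared-exponential kernel and an upper-confidence-bound (UCB) acquisition over a finite candidate set. The length scale, `kappa`, and the choice of UCB rather than another acquisition function (such as expected improvement) are illustrative assumptions; practical SDL stacks usually rely on a maintained library and fit kernel hyperparameters to the data.

```python
import numpy as np


def rbf(a, b, length=0.2):
    """Squared-exponential kernel between point sets a (n x d) and b (m x d)."""
    d2 = ((a[:, None, :] - b[None, :, :]) ** 2).sum(-1)
    return np.exp(-0.5 * d2 / length**2)


def gp_posterior(X, y, Xq, noise=1e-4, length=0.2):
    """Mean and std of a zero-mean, unit-variance GP conditioned on (X, y)."""
    L = np.linalg.cholesky(rbf(X, X, length) + noise * np.eye(len(X)))
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    Ks = rbf(X, Xq, length)
    v = np.linalg.solve(L, Ks)
    var = np.clip(1.0 - (v**2).sum(0), 1e-12, None)
    return Ks.T @ alpha, np.sqrt(var)


def bo_maximize(f, candidates, n_init=3, n_iter=10, kappa=2.0, seed=0):
    """GP-UCB over a finite candidate set: each round runs the untested
    candidate with the largest mean + kappa * std."""
    rng = np.random.default_rng(seed)
    idx = [int(i) for i in rng.choice(len(candidates), n_init, replace=False)]
    ys = [f(candidates[i]) for i in idx]
    for _ in range(min(n_iter, len(candidates) - n_init)):
        y = np.array(ys)
        mu, sd = gp_posterior(candidates[idx], y - y.mean(), candidates)
        ucb = mu + kappa * sd      # mean rewards exploitation, std exploration
        ucb[idx] = -np.inf         # never repeat an experiment
        nxt = int(np.argmax(ucb))
        idx.append(nxt)
        ys.append(f(candidates[nxt]))
    best = int(np.argmax(ys))
    return candidates[idx[best]], ys[best]
```

For example, `bo_maximize(lambda x: -(x[0] - 0.7) ** 2, np.linspace(0, 1, 101).reshape(-1, 1))` searches a one-parameter grid. A larger `kappa` spends more experiments in uncertain regions; a smaller one concentrates them around the current best estimate.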

Layer 5: Data Layer

The data layer provides the infrastructure for storing, managing, and sharing experimental data, metadata, and provenance information [17]. This layer ensures that all experimental actions are captured as machine-readable records, including reagent identities, equipment settings, environmental conditions, and calibration metadata. By implementing standardized data formats and ontologies, the data layer enables the aggregation of results across multiple experiments and different SDL platforms. High-quality, well-structured datasets are essential for training robust AI models, and the data layer addresses the historical challenge of sparse, inconsistent experimental data in materials science [10]. Platforms like the Materials Project and Renewable Energy Materials Properties Database exemplify the role of structured data repositories in accelerating materials discovery [10].
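A machine-readable record of the kind described above could be as simple as the following sketch. The schema (field names, units encoded in keys) is hypothetical and does not correspond to any particular standard or ontology; the checksum over canonical JSON is one simple way to make later edits detectable.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class ExperimentRecord:
    """Hypothetical machine-readable record of one SDL experiment."""
    experiment_id: str
    reagents: dict            # reagent identity -> amount, units in the key
    equipment_settings: dict  # e.g. furnace program, dispense volumes
    environment: dict         # e.g. ambient temperature, humidity
    calibration: dict         # calibration metadata for instruments used
    result: dict              # processed outcome, e.g. phase fractions
    parent_ids: list = field(default_factory=list)  # provenance links
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())

    def to_json(self):
        """Canonical JSON (sorted keys) so identical records serialize identically."""
        return json.dumps(asdict(self), sort_keys=True)

    def checksum(self):
        """SHA-256 of the canonical JSON; any later edit changes the digest."""
        return hashlib.sha256(self.to_json().encode("utf-8")).hexdigest()
```

The `parent_ids` links let a downstream consumer reconstruct which earlier experiments informed each new one.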

Quantifying Performance: Benchmarking SDL Architectures

The performance of SDL architectures can be quantitatively evaluated using standardized metrics that capture efficiency, autonomy, and experimental capability. These metrics enable meaningful comparison across different platforms and guide architectural improvements.

Table 1: Key Performance Metrics for Self-Driving Labs

| Metric Category | Specific Metrics | Measurement Approach | Reported Values |
|---|---|---|---|
| Learning Efficiency | Acceleration Factor (AF) [2] | Ratio of experiments needed vs. reference method to reach target performance | Median: 6× (increasing with dimensionality) [2] |
| | Enhancement Factor (EF) [2] | Improvement in performance after a given number of experiments | Peaks at 10–20 experiments per dimension [2] |
| Autonomy Level | Degree of Autonomy [18] | Classification as piecewise, semi-closed, closed-loop, or self-motivated | Most advanced: closed-loop (self-motivated not yet achieved) [18] |
| | Operational Lifetime [18] | Demonstrated unassisted/assisted runtime | Varies by platform (e.g., A-Lab: 17 days continuous) [11] |
| Experimental Capability | Throughput [18] | Experiments/measurements per unit time | A-Lab: 41 materials in 17 days [10] [11] |
| | Experimental Precision [18] | Standard deviation of replicate measurements | Critical for algorithm performance; varies by technique [18] |
| | Material Usage [18] | Consumption of valuable/hazardous materials | Microgram to milligram scale for high-value compounds [18] |

Benchmarking Methodologies and Experimental Protocols

Rigorous benchmarking of SDL performance requires carefully designed experimental protocols that enable fair comparison between autonomous and conventional approaches. The acceleration factor (AF) is calculated by comparing the number of experiments required by an SDL versus a reference method (typically random sampling or human-directed experimentation) to achieve a specific performance target [2]. For example, in a typical optimization campaign, both the SDL and reference method would be run repeatedly on the same experimental space, tracking the best performance achieved after each experiment. The enhancement factor (EF) quantifies the performance improvement at a fixed experimental budget, normalized by the contrast of the property space [2]. These metrics are particularly valuable because they don't require complete exploration of the parameter space or prior knowledge of the global optimum.

Experimental benchmarking must control for critical variables that influence outcomes. Experimental precision is quantified through unbiased replication of control conditions interspersed throughout the campaign to measure inherent variability [18]. Algorithm performance is often evaluated through surrogate benchmarking using well-characterized analytical functions before implementation on physical systems [18]. The operational lifetime is measured as both theoretical maximum (based on consumable limits) and demonstrated runtime in actual campaigns [18]. These standardized protocols enable meaningful comparison across different SDL architectures and application domains.
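The precision estimate from interspersed controls can be sketched in a few lines. The input format, a run-ordered list of (is_control, value) pairs, is an assumption for illustration; the coefficient of variation assumes a nonzero control mean.

```python
from statistics import mean, stdev


def control_precision(campaign):
    """Estimate experimental precision from control replicates interspersed
    among a campaign's experiments.

    `campaign` is a list of (is_control, measured_value) in run order.
    Returns (mean, sample standard deviation, coefficient of variation)
    of the control measurements.
    """
    controls = [y for is_control, y in campaign if is_control]
    if len(controls) < 2:
        raise ValueError("need at least two control replicates")
    m, s = mean(controls), stdev(controls)
    return m, s, s / m


runs = [(True, 10.0), (False, 3.0), (True, 12.0), (False, 7.0), (True, 11.0)]
print(control_precision(runs))  # mean 11.0, std 1.0, CV about 0.091
```

Because the controls are spread across the run order rather than measured back-to-back, their spread also captures drift over the campaign, not just short-term repeatability.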

Implementation Models: Centralized, Distributed, and Hybrid Architectures

SDL architectures are implemented through different organizational models that balance capability, accessibility, and specialization. Each model offers distinct advantages for specific research contexts and resource environments.

Table 2: Comparison of SDL Deployment Models

| Implementation Model | Key Characteristics | Advantages | Limitations | Example Applications |
|---|---|---|---|---|
| Centralized Facilities | High-cost equipment; shared access; economies of scale [19] | Cost-effective for expensive tools; standardized protocols; high throughput [19] | Limited customization; bureaucratic access; potential inertia [19] | National lab facilities (e.g., A-Lab) [10] |
| Distributed Networks | Modular platforms; specialized capabilities; peer-to-peer collaboration [19] | Flexibility and customization; rapid iteration; domain specialization [19] | Lower individual throughput; coordination challenges [19] | Academic research labs; open-source platforms [19] |
| Hybrid Approaches | Local testing + central execution; shared standards + customization [19] [17] | Balances accessibility with capability; leverages specialized equipment [17] | Complex logistics and data management [19] | Networked university facilities [19] |

The centralized model concentrates advanced capabilities in shared facilities, such as national laboratories or core facilities, providing access to high-end instrumentation that would be prohibitively expensive for individual research groups [19]. These facilities benefit from specialized staffing and standardized protocols but may lack flexibility for highly specialized research needs. In contrast, distributed networks of smaller, modular SDLs enable customization and rapid iteration for specific scientific domains, though with lower individual throughput [19]. Emerging hybrid approaches combine local workflow development on distributed platforms with execution at centralized facilities, mirroring the cloud computing paradigm where local devices handle preliminary work while data-intensive tasks are offloaded to specialized infrastructure [17].

Essential Research Reagents and Materials

The experimental capabilities of SDLs depend on carefully selected research reagents and materials that enable automated synthesis and characterization. The following table details key components used in advanced SDL platforms.

Table 3: Key Research Reagent Solutions for Self-Driving Labs

| Reagent/Material Category | Specific Examples | Function in SDL Workflow | Implementation Considerations |
|---|---|---|---|
| Precursor Materials | Powdered inorganic compounds; metal salts; organic building blocks [11] | Starting materials for synthesis reactions | Stability under storage conditions; compatibility with automated dispensing [11] |
| Solvents & Carriers | Aqueous solutions; organic solvents; ionic liquids [18] | Reaction media and transport fluids | Viscosity for fluid handling; compatibility with tubing and seals [18] |
| Characterization Standards | Reference samples; calibration materials; internal standards [18] | Instrument calibration and data validation | Stability and reproducibility; automated loading capabilities [18] |
| Catalysts & Additives | Metal catalysts; ligands; surfactants [11] | Reaction acceleration and control | Stability in automated environments; compatibility with other components [11] |

The architecture of self-driving labs represents a fundamental reengineering of the materials discovery process, creating integrated systems that combine physical automation with intelligent decision-making. The five-layer model—encompassing actuation, sensing, control, autonomy, and data—provides a robust framework for understanding and improving these complex systems. Quantitative benchmarking demonstrates that well-designed SDLs can achieve significant acceleration factors, particularly in high-dimensional parameter spaces where human intuition struggles [2]. As SDL technology matures, emerging deployment models offer complementary pathways for democratizing access to autonomous experimentation, from centralized facilities to distributed networks [19].
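Acceleration factors in SDL benchmarking are commonly defined by comparing how many experiments a reference strategy (for example, random sampling) and an SDL need to reach the same performance target. The sketch below assumes that definition (AF = n_ref / n_SDL) together with a companion enhancement factor (EF, the ratio of the best values found within a fixed budget). The campaign traces and the target value are hypothetical illustrations, not data from [2].

```python
from typing import Optional, Sequence

def experiments_to_target(trace: Sequence[float], target: float) -> Optional[int]:
    """Return the 1-based index of the first experiment whose running best meets `target`.

    `trace` holds the measured property of each experiment in order (higher is better).
    Returns None if the target is never reached.
    """
    best = float("-inf")
    for i, value in enumerate(trace, start=1):
        best = max(best, value)
        if best >= target:
            return i
    return None

def acceleration_factor(ref_trace, sdl_trace, target):
    """AF = experiments needed by the reference strategy / experiments needed by the SDL."""
    n_ref = experiments_to_target(ref_trace, target)
    n_sdl = experiments_to_target(sdl_trace, target)
    if n_ref is None or n_sdl is None:
        return None  # undefined if either campaign never reaches the target
    return n_ref / n_sdl

def enhancement_factor(ref_trace, sdl_trace, budget):
    """EF = best value found by the SDL / best value found by the reference after `budget` experiments."""
    return max(sdl_trace[:budget]) / max(ref_trace[:budget])

# Hypothetical campaigns (property values in arbitrary units).
random_trace = [0.20, 0.35, 0.30, 0.50, 0.45, 0.60, 0.55, 0.72, 0.65, 0.81]
sdl_trace = [0.25, 0.55, 0.70, 0.83, 0.86, 0.88, 0.90, 0.90, 0.91, 0.92]

print(acceleration_factor(random_trace, sdl_trace, target=0.80))  # 2.5 (10 vs. 4 experiments)
print(enhancement_factor(random_trace, sdl_trace, budget=5))      # 1.72
```

Both metrics depend on the chosen target and budget, so a benchmark report would typically state those choices alongside the factors.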

The future development of SDL architectures will focus on enhancing interoperability, robustness, and generality. Standardized interfaces and data protocols will enable seamless integration of components from different vendors and research groups [17]. Improved fault detection and recovery mechanisms will extend operational lifetimes and reduce human intervention requirements [18]. More sophisticated AI algorithms, particularly those incorporating physical knowledge and uncertainty quantification, will enhance the efficiency of autonomous exploration [16]. By advancing along these architectural dimensions, self-driving labs will increasingly function as trusted partners in the scientific process, accelerating the discovery of materials needed to address critical challenges in energy, healthcare, and sustainability.

The field of artificial intelligence is undergoing a profound transformation in scientific contexts, evolving from single-shot computational tools toward sophisticated systems capable of sustained reasoning, planning, and self-refinement. This progression represents a fundamental shift from what surveys term "AI as a Computational Oracle" – where models function as specialized prediction tools within human-led workflows – to full "Agentic Science," where AI systems operate as autonomous research partners [1]. This transition is particularly evident in materials science and drug development, where autonomous laboratories now demonstrate capabilities in hypothesis generation, experimental design, execution, and iterative refinement – behaviors once regarded as exclusively human domains [1] [20]. The emergence of these scientific agents marks a pivotal stage within the broader AI for Science paradigm, enabled by converging advances in large language models, multimodal systems, and integrated research platforms [1]. Within this context, benchmarking autonomous discovery success rates has become crucial for evaluating the maturity and practical utility of these systems across diverse scientific domains.

Benchmarking Autonomous Discovery: Quantitative Performance Comparisons

Rigorous benchmarking provides critical insights into the current capabilities and limitations of autonomous scientific agents. The following comparative analysis synthesizes performance data across multiple agentic systems and research domains.

Table 1: Comparative Performance of Autonomous Scientific Agents in Materials Discovery

| System/Platform | Domain | Success Rate | Experimental Scale | Key Performance Metrics |
|---|---|---|---|---|
| A-Lab [21] | Inorganic materials synthesis | 71% (41/58 compounds) | 17 days of continuous operation | 35 compounds via literature-inspired recipes; 6 via active-learning optimization |
| Polybot [22] | Electronic polymer films | No single rate reported; optimization across ~1M processing combinations | Fully autonomous optimization | Conductivity comparable to the highest standards; significantly reduced defects |
| HexMachina [23] | Strategic planning (Catan) | 54% win rate against the strongest baseline | Learned from scratch without documentation | Outperformed prompt-driven agents and the human-crafted AlphaBeta bot |
| Multi-Agent Research [24] | Information research | 90.2% improvement over a single agent | Parallel subagent deployment | Superior performance on breadth-first queries requiring parallel investigation |

Table 2: Cross-Domain Performance Analysis of AI Agent Capabilities

| Agent Capability | Materials Science | Biomedical Research | Strategic Planning | Information Research |
|---|---|---|---|---|
| Reasoning & Planning | Active learning integration [21] | Hypothesis generation & workflow planning [20] | Long-horizon strategy refinement [23] | Dynamic search strategy adaptation [24] |
| Tool Integration | Robotic material handling & characterization [21] [22] | Biomedical tool integration & experimental platforms [20] | Game API interaction & code generation [23] | Parallel web search & specialized tool use [24] |
| Optimization & Refinement | Recipe optimization via ARROWS3 [21] | Iterative hypothesis refinement [20] | Continual strategy evolution [23] | Query refinement based on intermediate results [24] |
| Multi-Agent Collaboration | Not prominently featured | Multi-agent collaboration for complex discovery [20] | Multi-role system (Orchestrator, Strategist, Coder) [23] | Orchestrator-worker pattern with parallel subagents [24] |

The quantitative evidence reveals several key patterns. First, reported success rates vary by domain, from a 54% win rate in an adversarial strategic environment to over 70% in controlled materials synthesis [23] [21], although a game win rate and a synthesis success rate measure different things and are not directly comparable. Second, the scale of experimental optimization achievable by these systems far exceeds what manual experimentation can cover, with platforms like Polybot navigating nearly one million processing combinations [22]. Third, architectural decisions strongly affect performance: the multi-agent system in [24] showed a 90.2% improvement over a single-agent baseline on parallelizable research tasks.
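The orchestrator-worker pattern credited in [24] can be illustrated with a minimal sketch: an orchestrator splits a breadth-first query into independent subtopics, dispatches them to parallel workers, and merges the results. The `research_subtopic` worker below is a hypothetical placeholder for an LLM subagent with tool access; only the control flow is meant to be illustrative, not the system described in [24].

```python
from concurrent.futures import ThreadPoolExecutor

def research_subtopic(subtopic: str) -> dict:
    """Hypothetical worker: stands in for an LLM subagent that searches and summarizes one subtopic."""
    return {"subtopic": subtopic, "findings": f"summary of {subtopic}"}

def orchestrate(query: str, subtopics: list[str], max_workers: int = 4) -> dict:
    """Fan out independent subtopics to parallel workers, then merge their results into one report."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # map() returns results in input order, so the merged report is deterministic.
        results = list(pool.map(research_subtopic, subtopics))
    return {"query": query, "sections": results}

report = orchestrate(
    "Autonomous labs for solid-state synthesis",
    ["hardware platforms", "synthesis planning models", "failure modes"],
)
print([s["subtopic"] for s in report["sections"]])
```

The pattern pays off only when subtopics are independent; if each step depends on the previous one, parallel fan-out offers little, which is consistent with the gains in [24] being reported for breadth-first queries.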

Experimental Protocols and Methodologies

Autonomous Materials Synthesis (A-Lab Protocol)

The A-Lab employed an integrated workflow combining computational screening, historical data mining, and robotic experimentation [21]. The methodology followed these key stages:

Target Identification: Compounds were selected from large-scale ab initio phase-stability data from the Materials Project and Google DeepMind, focusing on materials predicted to be stable or near-stable (<10 meV per atom from convex hull) and air-stable [21].

Literature-Inspired Recipe Generation: Initial synthesis recipes were proposed by natural language models trained on historical synthesis data from literature, using target "similarity" metrics to identify effective precursor combinations [21].

Active Learning Optimization: When initial recipes failed to produce >50% target yield, the ARROWS3 (Autonomous Reaction Route Optimization with Solid-State Synthesis) algorithm took over, integrating ab initio computed reaction energies with observed outcomes to propose improved recipes based on pairwise reaction hypotheses and driving force optimization [21].

Robotic Execution and Characterization: Robotic arms handled precursor mixing, furnace loading, and XRD sample preparation. Phase identification used probabilistic machine learning models trained on experimental structures, with automated Rietveld refinement for weight fraction quantification [21].

This protocol synthesized 41 of the 58 target compounds, with literature-inspired recipes succeeding for 35 targets and active learning yielding 6 additional successful syntheses [21].
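The decision logic described above (try literature-inspired recipes first, then hand off to active learning when the target yield does not exceed 50%) can be sketched as a simple control loop. The callables `run_and_measure_yield` and `propose_next_recipe` are hypothetical stand-ins for robotic synthesis with XRD/Rietveld quantification and for ARROWS3, respectively; the recipe names and yields in the example are invented for illustration.

```python
from typing import Callable, Iterable, Optional

YIELD_THRESHOLD = 0.50  # target weight fraction above which a synthesis counts as successful

def synthesize_target(
    literature_recipes: Iterable[str],
    run_and_measure_yield: Callable[[str], float],
    propose_next_recipe: Callable[[list[tuple[str, float]]], Optional[str]],
    max_active_iterations: int = 10,
) -> tuple[Optional[str], str, list[tuple[str, float]]]:
    """Return (successful recipe or None, stage that succeeded, full history of (recipe, yield))."""
    history: list[tuple[str, float]] = []
    # Stage 1: literature-inspired recipes, tried in order.
    for recipe in literature_recipes:
        y = run_and_measure_yield(recipe)
        history.append((recipe, y))
        if y > YIELD_THRESHOLD:
            return recipe, "literature", history
    # Stage 2: the active-learning optimizer proposes new recipes from all outcomes so far.
    for _ in range(max_active_iterations):
        recipe = propose_next_recipe(history)
        if recipe is None:  # optimizer has no untested route left
            break
        y = run_and_measure_yield(recipe)
        history.append((recipe, y))
        if y > YIELD_THRESHOLD:
            return recipe, "active_learning", history
    return None, "failed", history

# Hypothetical measured yields (weight fraction of the target phase).
fake_yields = {"lit-A": 0.12, "lit-B": 0.31, "al-1": 0.44, "al-2": 0.68}
candidates = iter(["al-1", "al-2"])

recipe, stage, history = synthesize_target(
    ["lit-A", "lit-B"],
    run_and_measure_yield=fake_yields.__getitem__,
    propose_next_recipe=lambda hist: next(candidates, None),
)
print(recipe, stage, len(history))  # al-2 active_learning 4
```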

Electronic Polymer Optimization (Polybot Protocol)

The Polybot system implemented a fully autonomous workflow for optimizing electronic polymer thin films [22]:

AI-Guided Exploration: Given the vast parameter space (nearly one million processing combinations), the system used statistical methods and AI guidance to efficiently navigate possible fabrication conditions.

Integrated Formulation and Characterization: The platform automated formulation, coating, and post-processing steps, with computer vision systems automatically capturing and evaluating film quality and defects.

Multi-Objective Optimization: The system simultaneously optimized for both high conductivity and low coating defects, requiring balanced exploration of the complex parameter space.

Knowledge Preservation: All experimental data and recipes were systematically captured in a shared database, enabling knowledge transfer to manufacturing scales [22].
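Multi-objective optimization of this kind is usually summarized as a Pareto front: the set of conditions for which no other tested condition is both more conductive and less defective. The sketch below assumes two objectives (maximize conductivity, minimize defect density) and uses invented values; it is not Polybot's optimizer, only the non-domination test that such optimizers rely on.

```python
def dominates(a, b):
    """True if `a` is at least as good as `b` on both objectives and strictly better on one.

    Each point is (conductivity, defect_density): higher conductivity is better,
    lower defect density is better.
    """
    at_least_as_good = a[0] >= b[0] and a[1] <= b[1]
    strictly_better = a[0] > b[0] or a[1] < b[1]
    return at_least_as_good and strictly_better

def pareto_front(points):
    """Return the non-dominated points, preserving input order (O(n^2), fine for small sets)."""
    return [p for p in points if not any(dominates(q, p) for q in points if q is not p)]

# Hypothetical (conductivity in S/cm, defect density in arbitrary units) for five coating conditions.
conditions = [(450.0, 0.30), (520.0, 0.45), (380.0, 0.10), (500.0, 0.50), (300.0, 0.40)]
print(pareto_front(conditions))  # [(450.0, 0.3), (520.0, 0.45), (380.0, 0.1)]
```

Here (500.0, 0.50) is dropped because (520.0, 0.45) beats it on both objectives; the three survivors represent genuine trade-offs that a human or a downstream criterion must choose between.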

Strategic Planning Agent (HexMachina Protocol)

HexMachina addressed long-horizon planning in the complex game of Settlers of Catan through a distinctive methodology [23]:

Environment Discovery: The system learned the game environment without formal documentation, inducing an adapter layer through exploration.

Separation of Concerns: The architecture cleanly separated environment discovery from strategy improvement, allowing compiled code to execute strategy while the LLM focused on high-level refinement.

Continual Learning Through Code: The system evolved players through code refinement and simulation, preserving executable artifacts rather than relying on prompt-centric reasoning.

Multi-Role Agent System: Different specialized roles (Orchestrator, Analyst, Strategist, Researcher, Coder) collaborated to hypothesize strategies, implement players, review APIs, and evaluate performance [23].

This approach demonstrated that separating environment learning from strategy refinement enables more consistent long-horizon planning, achieving a 54% win rate against strong human-crafted bots [23].

Workflow Architectures for Autonomous Discovery

The operational workflows of advanced scientific agents follow sophisticated architectures that enable autonomous reasoning and experimentation. The following diagrams illustrate key system designs.

The Scientist's Toolkit: Essential Components for Autonomous Discovery

The effective implementation of scientific agents requires specialized tools and resources that enable autonomous operation across the discovery pipeline.

Table 3: Research Reagent Solutions for Autonomous Materials Discovery

| Tool/Category | Function | Implementation Examples |
|---|---|---|
| Computational Databases | Provides stability predictions & reaction energies | Materials Project, Google DeepMind data [21] |
| Literature Mining AI | Extracts synthesis knowledge from text | Natural language models trained on historical data [21] |
| Active Learning Algorithms | Optimizes experimental pathways based on outcomes | ARROWS3 integrating thermodynamics with observations [21] |
| Robotic Handling Systems | Automated powder processing & transfer | Robotic arms for precursor mixing & furnace loading [21] [22] |
| Characterization Tools | Phase identification & property measurement | XRD with automated Rietveld refinement [21] |
| Computer Vision Systems | Automated quality assessment & defect detection | Image processing for film quality evaluation [22] |
| Multi-Agent Frameworks | Parallel investigation & specialized tool use | Orchestrator-worker patterns with subagent delegation [24] |

The benchmarking data presented reveals substantial progress in autonomous scientific discovery, with success rates exceeding 70% for materials synthesis and demonstrating significant advantages over traditional approaches. However, performance gaps remain, particularly in complex, adversarial environments where success rates drop to 35-54% [23] [4]. The evolution from single-shot models to systems that reason, plan, and refine represents a fundamental shift in scientific methodology, enabling exploration of experimental spaces at scales and complexities beyond human capacity. As these systems continue to develop, integrating more sophisticated reasoning, improved multi-agent coordination, and enhanced learning from failure, they promise to accelerate discovery across materials science, biomedicine, and beyond. The benchmarking frameworks established will be crucial for tracking progress and guiding the development of increasingly capable scientific agents.

The paradigm of materials discovery is undergoing a profound shift, moving from traditional trial-and-error approaches to an era of autonomous, AI-driven research. The success of this new paradigm, particularly in benchmarking the performance of autonomous discovery systems, is fundamentally dependent on the quality, scale, and diversity of the underlying data [9] [25]. This guide objectively compares the capabilities and performance of various data-centric approaches, demonstrating how advanced data extraction, curation, and multimodal integration form the bedrock of successful agentic science platforms [1] [26].

Data Extraction and Curation Methodologies

The starting point for any robust materials discovery pipeline is the creation of high-quality, large-scale datasets. This process involves sophisticated data extraction and curation protocols, each with distinct methodologies and performance outcomes as detailed in the table below.

Table 1: Comparison of Data Extraction and Curation Protocols

| Protocol / Model Name | Core Methodology | Input Data Modality | Key Output | Reported Performance / Advantage |
|---|---|---|---|---|
| Traditional Named Entity Recognition (NER) [9] | Text-based entity identification using pre-defined vocabularies and patterns. | Scientific text from documents and literature. | Structured list of material names and properties. | Limited to textual data; struggles with complex chemical nomenclature and data in figures [9]. |
| Multimodal Extraction (e.g., Vision Transformers, GNNs) [9] | Computer vision and deep learning to parse images, tables, and structures within documents. | Text, molecular images, tables, and plots from patents and papers. | Comprehensive datasets associating materials with properties from multiple sources. | Extracts critical information from non-textual elements (e.g., Markush structures in patents), significantly enriching datasets [9]. |
| Specialized Algorithms (e.g., Plot2Spectra, DePlot) [9] | Converts visual data representations (plots, charts) into structured, machine-readable formats. | Spectroscopy plots, charts, and other visual data in literature. | Structured tabular data (e.g., numerical spectra). | Enables large-scale analysis of material properties previously locked in image formats [9]. |
| Robocrystallographer [26] | Machine-generated textual descriptions of crystal structures and their features. | Crystal structure data (CIF files). | Textual description of a material. | Provides a computationally cheap, information-rich text modality for training foundation models [26]. |

Experimental Protocol for Data Extraction and Curation: The benchmarked workflows typically follow a multi-stage process. First, source documents (scientific papers, patents) are gathered. For multimodal extraction, models like Vision Transformers are trained on annotated datasets to identify and classify material-related information across text, tables, and images [9]. Specialized algorithms like Plot2Spectra are specifically designed to extract data points from common visualization types, such as converting an image of a spectroscopy plot into a digital (x,y) data series [9]. Finally, tools like Robocrystallographer automatically generate descriptive text for crystal structures, creating a natural language modality from structured data [26]. The quality of extraction is typically validated by comparing model-extracted data against a manually curated gold-standard dataset, with performance measured by precision and recall.
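The precision/recall validation step can be made concrete with a small sketch that compares extracted (material, property, value) records against a manually curated gold standard. Exact matching of normalized tuples is an assumption made here for simplicity; real pipelines often need fuzzier matching for chemical names and units. The records are hypothetical.

```python
def normalize(record):
    """Lower-case and strip each field so trivial formatting differences do not count as errors."""
    return tuple(str(field).strip().lower() for field in record)

def extraction_scores(extracted, gold):
    """Precision, recall, and F1 of extracted records against a gold-standard set (exact match after normalization)."""
    pred = {normalize(r) for r in extracted}
    truth = {normalize(r) for r in gold}
    tp = len(pred & truth)  # records that were both extracted and present in the gold standard
    precision = tp / len(pred) if pred else 0.0
    recall = tp / len(truth) if truth else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1

gold = [("Material A", "band gap", "3.2 eV"), ("Material B", "band gap", "3.1 eV"), ("Material C", "band gap", "1.1 eV")]
extracted = [("material a", "Band gap ", "3.2 eV"), ("Material B", "band gap", "2.9 eV")]
print(extraction_scores(extracted, gold))
```

In this example one of two extracted records is correct (precision 0.5) and one of three gold records is recovered (recall about 0.33); the wrong value for Material B counts against both metrics.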

Multimodal Foundation Models: Architectures and Performance

Integrating these curated datasets into foundation models, especially those capable of processing multiple data types (multimodal), is the next critical step. The MultiMat framework represents a state-of-the-art approach in this domain [26].

Table 2: Benchmarking Foundation Model Approaches for Materials Discovery

| Model / Framework | Core Architecture | Training Modalities | Primary Downstream Tasks | Reported Performance |
|---|---|---|---|---|
| Encoder-Only Models (e.g., BERT-style) [9] | Transformer-based encoders. | Primarily text (e.g., SMILES, SELFIES) or graph representations. | Property prediction from structure. | Strong predictive performance but limited to the modalities seen during training [9]. |
| MultiMat Framework [26] | Multiple encoders (e.g., PotNet GNN for structure, MLPs for other data) aligned in a shared latent space. | Crystal structure, Density of States (DOS), Charge Density, Textual Descriptions. | Property prediction, novel material discovery, latent space interpretation. | Achieves state-of-the-art performance on challenging property prediction tasks. Enables novel material discovery via latent space similarity search [26]. |

Experimental Protocol for Multimodal Model Training (MultiMat): The MultiMat framework adapts and extends the Contrastive Language-Image Pre-training (CLIP) methodology to an arbitrary number of modalities [26]. For each material, separate neural network encoders are trained for each modality (e.g., a PotNet Graph Neural Network for crystal structures, MLPs for DOS and charge density, a text encoder for descriptions). The core of the training involves a contrastive learning objective that pulls the latent space embeddings of different modalities from the same material closer together, while pushing apart embeddings from different materials [26]. This creates a unified, shared latent space. For downstream tasks like property prediction, the pre-trained encoder (e.g., the crystal structure encoder) can be fine-tuned with a small amount of labeled data, leveraging the rich representations learned during multimodal pre-training [26].
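The contrastive objective described above can be sketched in NumPy as a symmetric InfoNCE (CLIP-style) loss averaged over every pair of modalities. This is a simplified illustration of the idea, not MultiMat's implementation: the random embeddings stand in for encoder outputs (e.g., from PotNet or the DOS and charge-density MLPs), and the temperature value and pairwise averaging scheme are assumptions.

```python
import itertools
import numpy as np

def l2_normalize(z):
    return z / np.linalg.norm(z, axis=1, keepdims=True)

def info_nce(a, b, temperature=0.1):
    """Symmetric InfoNCE: row i of `a` and row i of `b` embed the same material (positive pair);
    every other row in the batch acts as a negative."""
    logits = l2_normalize(a) @ l2_normalize(b).T / temperature
    idx = np.arange(len(a))

    def cross_entropy(lg):
        lg = lg - lg.max(axis=1, keepdims=True)  # subtract row max for numerical stability
        log_probs = lg - np.log(np.exp(lg).sum(axis=1, keepdims=True))
        return -log_probs[idx, idx].mean()  # diagonal entries are the positive pairs

    return 0.5 * (cross_entropy(logits) + cross_entropy(logits.T))

def multimodal_loss(embeddings, temperature=0.1):
    """Average the pairwise loss over all modality pairs (structure-DOS, structure-text, ...)."""
    pairs = list(itertools.combinations(embeddings.values(), 2))
    return sum(info_nce(a, b, temperature) for a, b in pairs) / len(pairs)

rng = np.random.default_rng(0)
shared = rng.normal(size=(8, 16))  # latent "material identity" for a batch of 8 materials
aligned = {m: shared + 0.05 * rng.normal(size=shared.shape)
           for m in ["structure", "dos", "charge_density", "text"]}
shuffled = {m: rng.permutation(v) for m, v in aligned.items()}  # breaks the material-to-material pairing
print(multimodal_loss(aligned) < multimodal_loss(shuffled))  # True
```

Minimizing this loss pulls the embeddings of the same material together across modalities and pushes different materials apart, which is what makes the shared latent space usable for similarity search.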

The logical workflow of such an integrated, data-driven discovery system is visualized in the following diagram.

Data-Driven Materials Discovery Workflow

Essential Research Reagent Solutions

The following table details key computational tools and data resources that function as essential "research reagents" in the field of AI-driven materials discovery.

Table 3: Key Research Reagents for Data-Centric Materials Discovery

| Reagent / Resource Name | Type | Primary Function in the Workflow |
|---|---|---|
| Materials Project [26] | Public Database | Provides a vast repository of computed material properties and crystal structures, serving as a primary data source for training and benchmarking. |
| PubChem, ZINC, ChEMBL [9] | Chemical Databases | Offer extensive structured information on molecules, commonly used for training chemical foundation models. |
| PotNet [26] | Graph Neural Network (GNN) | A state-of-the-art GNN architecture that serves as a powerful encoder for crystal structure data within larger frameworks like MultiMat. |
| Robocrystallographer [26] | Text Generation Tool | Automatically generates textual descriptions of crystal structures, creating a natural language modality for multimodal learning. |
| Vision Transformers [9] | Computer Vision Model | Used within multimodal extraction pipelines to identify and interpret molecular structures and data from images in scientific documents. |
| Plot2Spectra [9] | Specialized Algorithm | Converts visual representations of spectroscopy plots into structured, numerical data, unlocking information from literature images. |

Benchmarking studies consistently show that the autonomy and success rates of AI-driven materials discovery platforms are not merely a function of their algorithms but are critically dependent on their data foundation. Systems leveraging advanced multimodal data extraction and curation protocols demonstrate a superior ability to build comprehensive datasets [9]. Furthermore, frameworks like MultiMat, which employ self-supervised training on these rich, multimodal datasets, achieve state-of-the-art performance in key tasks like property prediction and novel material identification [26]. The evidence confirms that the strategic integration of high-quality, multimodal data is the essential bedrock for training robust AI agents capable of accelerating scientific discovery.

Measuring Success: Methodologies and Real-World Performance of Autonomous Systems

Autonomous laboratories represent a paradigm shift in materials science, accelerating the discovery and synthesis of novel compounds. Central to this transformation is the A-Lab, a groundbreaking platform that has demonstrated the viability of fully autonomous materials research. This case study examines the A-Lab's performance and methodology and places its achievements within the broader context of emerging autonomous discovery platforms.

Performance Benchmarking: A-Lab and Contemporary Platforms

The table below compares the key performance metrics of the A-Lab against other notable autonomous laboratory systems.

| Platform/System | Primary Focus | Reported Success Rate / Key Outcome | Throughput / Scale | Autonomy Level |
|---|---|---|---|---|
| A-Lab [21] [11] | Solid-state synthesis of inorganic powders | 41 of 58 novel compounds synthesized (71%) [21] | 41 novel materials in 17 days [21] | Full Agentic Discovery (Level 3) [1] |
| CRESt [27] | Discovery of fuel cell catalysts | Discovery of a catalyst with 9.3-fold improvement in power density per dollar [27] | 900+ chemistries, 3,500+ tests over 3 months [27] | AI Copilot / Assistant [27] |
| Coscientist [11] | Planning & execution of organic reactions | Successful optimization of palladium-catalyzed cross-coupling reactions [11] | Not Specified | Partial Agentic Discovery (Level 2) [1] |
| ChemCrow [11] | Chemical synthesis planning | Automated synthesis of an insect repellent and an organocatalyst [11] | Not Specified | Partial Agentic Discovery (Level 2) [1] |

The A-Lab's 71% success rate in synthesizing previously unreported inorganic materials from computational predictions sets a significant benchmark for the field [21]. This high success rate not only validates the stability predictions from ab initio databases but also demonstrates the effectiveness of its AI-driven synthesis planning.
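Because the A-Lab figure rests on 58 targets, it helps to attach a sampling uncertainty to it before comparing platforms. The sketch below computes a 95% Wilson score interval for a binomial success rate. Choosing the Wilson interval (rather than, say, an exact Clopper-Pearson interval) is an assumption made for simplicity, and the calculation treats each target as an independent trial.

```python
import math

def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 gives roughly 95% coverage)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p_hat = successes / trials
    denom = 1 + z**2 / trials
    center = (p_hat + z**2 / (2 * trials)) / denom
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / trials + z**2 / (4 * trials**2)) / denom
    return center - half_width, center + half_width

low, high = wilson_interval(41, 58)
print(f"{41/58:.1%} success, 95% CI {low:.1%} to {high:.1%}")  # 70.7% success, 95% CI 58.0% to 80.8%
```

With only 58 trials the interval spans more than 20 percentage points, so small differences between platforms' headline success rates should be read cautiously.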

Deconstructing the A-Lab's Experimental Protocol

The A-Lab's success is underpinned by a tightly closed-loop, autonomous workflow that integrates computational prediction, robotic execution, and AI-powered analysis.

Detailed Workflow and Methodology

The A-Lab's operation can be broken down into five core stages, which form a continuous cycle of hypothesis, testing, and learning [21] [11].

1. Target Identification and Feasibility Assessment

- Target Source: Novel, air-stable inorganic compounds were selected from large-scale ab initio phase-stability databases (Materials Project and Google DeepMind) [21] [11].

- Stability Criterion: Targets were predicted to lie on or near (within 10 meV per atom of) the thermodynamic convex hull, which increases the likelihood that they are stable [21].

2. AI-Driven Synthesis Recipe Generation

- Precursor Selection: A natural language processing (NLP) model, trained on a database of 29,900 solid-state synthesis recipes text-mined from scientific literature, proposed initial precursors based on analogy to known, similar materials [21] [28].

- Temperature Prediction: A second machine learning model, trained on literature heating data, recommended the initial synthesis temperature [21].

3. Robotic Synthesis Execution

- Automated Preparation: A robotic station dispensed and mixed precursor powders in precise proportions and transferred them into alumina crucibles [21] [29].

- High-Temperature Heating: A robotic arm loaded crucibles into one of four box furnaces for heating according to the AI-proposed schedule [21].

4. ML-Powered Characterization and Analysis

- Automated Processing: After cooling, samples were ground into fine powder by a robotic system [21].

- Phase Identification: X-ray diffraction (XRD) patterns were analyzed by probabilistic machine learning models to identify phases and estimate weight fractions. Patterns were compared against simulated spectra from computed structures [21].

- Validation: Results were confirmed using automated Rietveld refinement [21].

5. Active Learning for Route Optimization

- Algorithm: When initial recipes failed (yield <50%), the lab employed the ARROWS3 algorithm [21].

- Mechanism: This active learning system integrated ab initio reaction energies with observed experimental outcomes. It drew on a growing database of observed pairwise solid-state reactions to avoid pathways whose intermediates left only a low driving force to form the target, and to prioritize pathways with more favorable thermodynamics [21].
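The route-selection heuristic described above (avoid pathways whose intermediates leave little driving force for forming the target, and prefer routes that retain more) can be illustrated with a toy ranking function. Everything here is a hypothetical simplification: the placeholder precursors (elements "A" and "B"), the driving-force values, and the use of the 50 meV per atom figure from the failure analysis as a hard cutoff are assumptions. The real ARROWS3 algorithm also updates its pairwise-reaction knowledge after every XRD result.

```python
from dataclasses import dataclass

@dataclass
class CandidateRoute:
    precursors: tuple[str, ...]
    # Intermediates predicted (or previously observed) to form from pairwise precursor reactions.
    intermediates: tuple[str, ...]
    # Remaining driving force (meV/atom) for the final step: intermediates -> target.
    final_step_driving_force: float

MIN_DRIVING_FORCE = 50.0  # meV/atom; assumed cutoff below which kinetics are treated as too sluggish

def rank_routes(routes, failed_intermediates=frozenset()):
    """Drop routes through intermediates already observed to block the target, or whose final step
    has too little driving force; order the remaining routes by decreasing driving force."""
    viable = [
        r for r in routes
        if r.final_step_driving_force >= MIN_DRIVING_FORCE
        and not failed_intermediates.intersection(r.intermediates)
    ]
    return sorted(viable, key=lambda r: r.final_step_driving_force, reverse=True)

# Hypothetical candidate routes for a target in a placeholder A-B-O system.
routes = [
    CandidateRoute(("A2O3", "BO"), ("AB2O4",), 22.0),
    CandidateRoute(("A2CO3", "BO2"), ("A2BO3",), 140.0),
    CandidateRoute(("AOH", "BO2"), ("ABO2",), 85.0),
    CandidateRoute(("ANO3", "BO2"), ("A2BO3",), 60.0),
]
best_first = rank_routes(routes, failed_intermediates={"ABO2"})
print([r.precursors for r in best_first])  # [('A2CO3', 'BO2'), ('ANO3', 'BO2')]
```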

The following table details the essential computational, data, and hardware resources that empowered the A-Lab's autonomous discovery process.

| Resource Name | Type | Function in the A-Lab |
|---|---|---|
| Materials Project/Google DeepMind DB [21] [11] | Computational Database | Provided target materials screened using large-scale ab initio phase-stability calculations. |
| Text-Mined Synthesis Database [21] | Knowledge Base | A database of 29,900 solid-state synthesis recipes used to train NLP models for precursor recommendation. |
| ARROWS3 [21] | Active Learning Algorithm | Integrated computed reaction energies with experimental outcomes to optimize failed synthesis routes. |
| AlabOS [29] | Workflow Management Software | A Python-based framework for orchestrating experiments, managing robotic devices, and tracking samples. |
| Robotic Furnaces [21] | Hardware | Four box furnaces with robotic loading/unloading for high-temperature solid-state reactions. |
| Automated XRD Station [21] | Characterization Hardware | For automated X-ray diffraction analysis of synthesized powders, coupled with ML for phase ID. |
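Workflow managers such as AlabOS coordinate dependent steps (dispensing, heating, grinding, XRD) across shared devices. The sketch below shows only the underlying idea of dependency-ordered execution using Python's standard-library `graphlib`; the task names are hypothetical, and the sketch does not reproduce AlabOS's API or its device-locking and sample-tracking features.

```python
from graphlib import TopologicalSorter

# Hypothetical per-sample workflow: each task maps to the set of tasks it depends on.
workflow = {
    "dispense_and_mix": set(),
    "calibrate_xrd": set(),
    "load_furnace": {"dispense_and_mix"},
    "heat": {"load_furnace"},
    "grind": {"heat"},
    "xrd": {"grind", "calibrate_xrd"},
    "phase_analysis": {"xrd"},
}

def run_workflow(graph):
    """Execute tasks in an order that respects dependencies; return the execution log."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()
    log = []
    while sorter.is_active():
        for task in sorter.get_ready():  # every task whose dependencies are all done
            log.append(task)             # a real system would dispatch this task to a device here
            sorter.done(task)
    return log

print(run_workflow(workflow))
```

Tasks returned together by `get_ready()` have no mutual dependencies, so a scheduler could run them concurrently on different instruments (here, mixing and XRD calibration).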

Comparative Analysis of Autonomous Laboratory Architectures

The A-Lab exemplifies a highly integrated, single-platform approach to autonomy. In contrast, other systems are exploring different architectural paradigms, as shown in the following comparison.

- The A-Lab's Integrated Approach: The A-Lab is a dedicated, fixed system where hardware and AI are co-designed for a specific domain—solid-state synthesis of inorganic powders [21]. Its strength lies in its high throughput and deep domain knowledge embedded via its NLP and active learning models.

- LLM as Central Planner (Coscientist, ChemCrow): These systems use a large language model (LLM) as a central "brain" to plan and execute experiments by leveraging various software and hardware tools [11]. They demonstrate strong generalization for tasks like organic synthesis but may lack the deep, domain-specific physical models of the A-Lab.

- Modular Multi-Agent Systems (ChemAgents): This emerging architecture employs a hierarchical multi-agent system, where a central manager (often an LLM) coordinates specialized sub-agents (e.g., for literature review, experiment design, computation) [11]. This promises greater flexibility and complexity in handling multi-step research tasks on demand.

- Mobile Robotics (Dai et al.): This paradigm uses free-roaming mobile robots to transport samples between standard, stationary laboratory instruments, creating a flexible and reconfigurable laboratory environment [11].

Key Insights and Failure Analysis

A critical component of benchmarking is understanding failure modes. Analysis of the 17 unobtained targets (29% failure rate) in the A-Lab run revealed specific barriers to synthesis [21]:

- Sluggish Reaction Kinetics: The most common cause, affecting 11 targets, often involved reaction steps with low driving forces (<50 meV per atom) [21].

- Other Failure Modes: Precursor volatility, amorphization, and computational inaccuracies were also identified [21].

The researchers noted that minor adjustments to the decision-making algorithm could raise the success rate to 74%, and that improvements in computational techniques could push it to 78% [21], corresponding to roughly 43 and 45 of the 58 targets. This highlights that the 71% figure is not a fixed ceiling but a benchmark for ongoing development.

In the fields of materials science and drug development, the high cost and time-intensive nature of experiments necessitate highly efficient data acquisition strategies. Active Learning (AL), a subfield of machine learning dedicated to optimal experiment design, has emerged as a powerful solution to this challenge. By iteratively selecting the most informative experiments to perform, AL aims to maximize learning outcomes while minimizing resource expenditure [30] [31]. This guide provides an objective comparison of prevalent AL strategies and their experimental protocols, contextualized within the broader mission of benchmarking success rates for autonomous materials discovery. The performance of these strategies varies significantly based on the application domain, data characteristics, and the specific learning goal, whether it is global optimization, model generalization, or rapid identification of high-performance candidates.

Comparing Active Learning Strategies: Performance and Applications

The table below provides a comparative overview of common Active Learning strategies, their underlying principles, and their performance across different scientific domains.

Table 1: Comparison of Active Learning Strategies and Performance

| Strategy Name | Primary Principle | Key Performance Characteristics | Ideal Use Case |
|---|---|---|---|
| Uncertainty Sampling (e.g., LCMD, Tree-based-R) [32] | Uncertainty Estimation | Excels in early stages of data acquisition; outperforms random sampling and geometry-based methods when labeled data is sparse [32]. | Rapidly reducing model error with a very small initial dataset. |
| Diversity-Hybrid (e.g., RD-GS) [32] | Hybrid (Uncertainty + Diversity) | Clearly outperforms geometry-only heuristics early in the acquisition process by selecting more informative samples [32]. | Building a robust general model when the data distribution is unknown. |
| Expected Improvement (EI) [33] | Expected improvement over the best observed value | Demonstrated the best overall performance in benchmarking studies for materials optimization within compositional phase diagrams [33]. | Global optimization tasks, such as finding a material with an optimal property. |
| Upper Confidence Bound (UCB) [34] | Hybrid (Exploration + Exploitation) | Balances property prediction with uncertainty; effective for navigating complex search spaces and preventing workflow stagnation [34]. | Discovering novel candidates in generative AI workflows; balancing exploration and exploitation. |
| Greedy Causal Discovery [35] | Single-Vertex Intervention | Maximizes the number of oriented edges in a causal graph after each intervention; outperforms random intervention targets [35]. | Active learning of causal Bayesian network structures from interventional data. |
| Minimum Set Causal Discovery [35] | Minimum Intervention Set | Guarantees full identifiability of a causal graph with a minimal number of (potentially multi-vertex) interventions [35]. | Applications where full causal identifiability is required and the number of experiments must be minimized. |
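For a Gaussian surrogate with predictive mean μ and standard deviation σ, EI and UCB have standard closed forms. The sketch below implements the maximization versions; the exploration parameters `xi` and `kappa` and the candidate predictions are illustrative assumptions, not settings taken from [33] or [34].

```python
import math

def normal_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2 * math.pi)

def normal_cdf(z):
    return 0.5 * (1 + math.erf(z / math.sqrt(2)))

def expected_improvement(mu, sigma, best, xi=0.0):
    """EI for maximization: E[max(f - best - xi, 0)] with f ~ N(mu, sigma^2)."""
    if sigma <= 0:
        return max(mu - best - xi, 0.0)  # no uncertainty: improvement is deterministic
    improvement = mu - best - xi
    z = improvement / sigma
    return improvement * normal_cdf(z) + sigma * normal_pdf(z)

def upper_confidence_bound(mu, sigma, kappa=2.0):
    """UCB: optimistic estimate; a larger kappa favors exploring uncertain candidates."""
    return mu + kappa * sigma

# Hypothetical surrogate predictions (mean, std) for three candidate compositions.
candidates = {"c1": (0.80, 0.02), "c2": (0.70, 0.20), "c3": (0.60, 0.05)}
best_observed = 0.78
for name, (mu, sd) in candidates.items():
    print(name, round(expected_improvement(mu, sd, best_observed), 4), round(upper_confidence_bound(mu, sd), 3))
```

With these numbers both acquisition functions prefer c2, whose mean is below c1's but whose larger uncertainty gives it a better chance of beating the current best; this is the exploration-exploitation balance referred to in the table.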

Experimental Protocols for Active Learning

A standardized experimental framework is essential for the fair benchmarking of AL strategies. The following protocols are adapted from comprehensive studies and can be applied to new domains.

General Benchmarking Workflow for Regression Tasks

This protocol, as detailed in a comprehensive benchmark, evaluates AL strategies within an Automated Machine Learning (AutoML) framework for regression tasks common in materials informatics [32].

- Initialization: A small labeled dataset $L = \{(x_i, y_i)\}_{i=1}^{l}$ is created by randomly sampling from a larger pool of unlabeled data, $U = \{x_i\}_{i=l+1}^{n}$.
- Iterative Active Learning Cycle: The following steps are repeated until a stopping criterion (e.g., a fixed budget) is met:
  - Model Training: An AutoML system is used to train a surrogate model on the current labeled set $L$. The use of AutoML automates model and hyperparameter selection, ensuring a fair comparison and reducing human bias [32] [36].
  - Querying: The AL strategy (e.g., Uncertainty Sampling, Expected Improvement) selects the most informative sample $x^*$ from the unlabeled pool $U$ based on the surrogate model's predictions.
  - Labeling: The target value $y^*$ for the selected sample is acquired (e.g., via simulation or experiment).
  - Update: The newly labeled sample $(x^*, y^*)$ is added to $L$ and removed from $U$.
- Evaluation: Model performance is tracked throughout the cycles using metrics like Mean Absolute Error (MAE) and the Coefficient of Determination ($R^2$) on a held-out test set. The efficiency of each strategy is measured by the rate of performance improvement relative to the number of acquired samples [32].
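The cycle above can be sketched in standard-library Python. This is a toy, not the cited AutoML benchmark: a bootstrap ensemble of k-nearest-neighbour regressors stands in for the AutoML surrogate, the spread of the ensemble's predictions stands in for model uncertainty, and `oracle` is a hypothetical one-dimensional function standing in for a simulation or experiment. Uncertainty sampling is compared against a random-acquisition baseline by tracking held-out MAE.

```python
import math
import random
import statistics


def oracle(x: float) -> float:
    """Hypothetical ground truth standing in for a simulation or experiment."""
    return math.sin(3.0 * x) + 0.5 * x


def knn_predict(train, x, k=3):
    """Mean target of the k labeled points nearest to x (1-D inputs)."""
    nearest = sorted(train, key=lambda p: abs(p[0] - x))[:k]
    return sum(y for _, y in nearest) / len(nearest)


def ensemble_predict(labeled, x, n_models=8, seed=0):
    """Bootstrap ensemble of k-NN regressors; returns (mean, std) across members."""
    rng = random.Random(seed)  # fixed seed: the same bootstrap resamples for every x
    preds = []
    for _ in range(n_models):
        resample = [rng.choice(labeled) for _ in labeled]
        preds.append(knn_predict(resample, x))
    return statistics.mean(preds), statistics.pstdev(preds)


def holdout_mae(labeled, test_set):
    """Mean absolute error of the ensemble mean on a held-out set."""
    return statistics.mean(abs(ensemble_predict(labeled, x)[0] - y) for x, y in test_set)


def run_campaign(strategy, budget=15, n_init=5, pool_size=100, seed=42):
    rng = random.Random(seed)
    pool = [rng.uniform(0.0, 2.0) for _ in range(pool_size)]
    test_set = [(i / 25, oracle(i / 25)) for i in range(51)]  # held-out grid on [0, 2]
    labeled = [(x, oracle(x)) for x in pool[:n_init]]  # initialization: random L
    unlabeled = pool[n_init:]                           # remaining pool U
    history = [holdout_mae(labeled, test_set)]
    for _ in range(budget):
        # "Training" is implicit: the ensemble is refit on the current L at each call.
        if strategy == "uncertainty":
            x_star = max(unlabeled, key=lambda x: ensemble_predict(labeled, x)[1])
        else:  # random-acquisition baseline
            x_star = rng.choice(unlabeled)
        unlabeled.remove(x_star)                  # remove x* from U
        labeled.append((x_star, oracle(x_star)))  # label y* = oracle(x*) and add to L
        history.append(holdout_mae(labeled, test_set))
    return history


for name in ("uncertainty", "random"):
    curve = run_campaign(name)
    print(name, [round(m, 3) for m in curve[::5]])
```

Plotting each `history` against the number of acquired samples gives the learning curves used to compare strategies; in a real benchmark the surrogate, pool, and oracle would be replaced by the AutoML system, the materials dataset, and the simulation or experiment.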

Protocol for Drug Discovery with Fixed Budgets

This protocol, benchmarked on ligand-binding affinity data, focuses on identifying top-binders with a fixed experimental budget [37].

- Data Preparation: Use a curated affinity data set (e.g., for a specific protein target like TYK2 or D2R) with known binding affinities for all compounds.

- Initial Batch Selection:
  - An initial batch of compounds is selected for "labeling." Studies show that a larger initial batch size, especially on diverse data sets, increases the recall of top binders [37].
  - For diverse chemical spaces, an exploration-focused strategy (e.g., based on molecular diversity) is beneficial for the initial batch.

- Iterative Cycles with Fixed Batch Size:
  - A model (e.g., a Gaussian Process or a fine-tuned Chemprop model) is trained on all currently labeled data.
  - A fixed number of new compounds (e.g., a batch size of 20 or 30) is selected from the remaining pool using an acquisition function. Smaller batch sizes are generally more effective in these subsequent cycles [37].
  - The "oracle" (in benchmarking, the pre-known affinity) provides the labels, and the data set is updated.

- Performance Assessment: Strategies are evaluated based on:
  - Overall Model Performance: $R^2$ and Spearman rank correlation.
  - Exploitative Capability: Recall and F1 score for the top 2% or 5% of binders, measuring how completely the campaign recovers the most potent compounds [37].
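The assessment metrics above can be computed directly once a campaign has finished. The sketch below assumes that higher affinity values mean stronger binders (pKd-like units) and treats the set of acquired compounds as the positive predictions when computing precision and F1; the cited study may define F1 differently (for example, on model predictions over the whole library), and the compound data are purely hypothetical.

```python
import math


def top_fraction(affinities, fraction):
    """IDs of the top `fraction` of compounds (higher value = stronger binder, assumed)."""
    n_top = max(1, round(len(affinities) * fraction))
    ranked = sorted(affinities, key=affinities.get, reverse=True)
    return set(ranked[:n_top])


def recall_and_f1(acquired, affinities, fraction=0.02):
    """Treat the acquired compounds as 'predicted top binders' (one possible convention)."""
    top = top_fraction(affinities, fraction)
    hits = len(top & set(acquired))
    recall = hits / len(top)
    precision = hits / len(acquired) if acquired else 0.0
    f1 = 0.0 if hits == 0 else 2 * precision * recall / (precision + recall)
    return recall, f1


def average_ranks(values):
    """1-based ranks, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def spearman(predicted, measured):
    """Spearman correlation = Pearson correlation of the two rank vectors."""
    ra, rb = average_ranks(predicted), average_ranks(measured)
    ma, mb = sum(ra) / len(ra), sum(rb) / len(rb)
    cov = sum((a - ma) * (b - mb) for a, b in zip(ra, rb))
    var_a = sum((a - ma) ** 2 for a in ra)
    var_b = sum((b - mb) ** 2 for b in rb)
    return cov / math.sqrt(var_a * var_b)


# Hypothetical data: 100 compounds whose affinity equals their index.
affinities = {f"cmpd{i}": float(i) for i in range(100)}
acquired = ["cmpd99", "cmpd98", "cmpd50", "cmpd10"]
print(recall_and_f1(acquired, affinities, 0.02))  # top 2% = 2 compounds, both acquired
print(spearman([1.0, 2.0, 3.0], [10.0, 30.0, 20.0]))
```

Recall rewards finding every top binder regardless of how many compounds were spent, whereas the precision term inside F1 penalizes campaigns that acquire many weak binders along the way.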

Workflow Visualization: The Active Learning Cycle

The following diagram illustrates the standard closed-loop workflow of an Active Learning process, as implemented in autonomous discovery systems [32] [31].

The Standard Active Learning Cycle

The Scientist's Toolkit: Essential Research Reagents and Solutions

This section details key computational tools and methodologies that function as essential "reagents" in an Active Learning experiment.

Table 2: Key Research Reagent Solutions for Active Learning

| Tool / Solution | Function in Active Learning Protocol |
|---|---|
| Automated Machine Learning (AutoML) [32] [36] | Automates the selection and hyperparameter tuning of surrogate models (e.g., tree-based models, neural networks), keeping surrogate quality high and reducing human bias during the iterative AL cycle. |
| Gaussian Process (GP) Regression [37] | A probabilistic model that provides built-in predictive uncertainty estimates, making it a strong choice for uncertainty-based AL strategies, especially when training data is sparse. |
| Graph-Based Phase Mapping [31] | Used in materials discovery to infer structural phase diagrams from diffraction data. In AL, it guides measurements to maximize knowledge of the phase map, which can accelerate property optimization. |
| Molecular Dynamics (MD) Simulators [34] | Acts as a computationally expensive "oracle" to score candidate materials (e.g., on properties like binding affinity). AL is used to prioritize which candidates are sent to this resource-intensive simulation. |
| Pre-trained Generative Model [34] | Expands and explores the chemical or materials design space by generating novel candidate structures. When combined with AL for prioritization, it reduces the resources wasted on nonsensical candidates. |
| Bayesian Optimization [30] [31] | A framework for global optimization of black-box functions. Its acquisition functions (e.g., Expected Improvement, UCB) are central AL strategies for goal-driven experimental design. |

The field of inorganic materials discovery has traditionally been hampered by slow, trial-and-error experimentation, with average development timelines spanning two decades from discovery to commercialization. [10] Conventional machine learning approaches have accelerated materials design through improved property prediction, but they operate as single-shot models limited by the knowledge embedded in their training data. [38] [39] A fundamental challenge lies in creating intelligent systems capable of autonomously executing the full discovery cycle—from ideation and planning to experimentation and iterative refinement. [38]

This challenge has spurred the development of multi-agent AI frameworks like SparksMatter, which aim to automate the entire materials discovery process. [38] [39] However, the emergence of these sophisticated systems has revealed a critical gap: existing benchmarks for computational materials discovery primarily evaluate static predictive tasks or isolated computational sub-tasks, inadequately capturing the iterative, exploratory nature of scientific discovery. [13] This article examines current benchmarking approaches for autonomous materials discovery systems, with a focused analysis on how frameworks like SparksMatter perform against alternatives and the emerging methodologies needed to properly evaluate their capabilities.

Comparative Analysis of Key Autonomous Materials Discovery Systems

Table 1: Performance comparison of major materials discovery systems across standardized metrics.

| System Name | Architecture | Primary Function | Reported Performance | Key Advantages | Limitations |
|---|---|---|---|---|---|
| SparksMatter [38] [39] | Multi-agent AI with LLM integration | End-to-end autonomous materials design | 80% precision in stability prediction; significant improvement in novelty scores vs. frontier models [38] | Integrates ideation, planning, experimentation, and refinement; self-critique capability [38] | Limited experimental validation data available |
| GNoME [40] [41] | Graph Neural Network (GNN) | Stability prediction & materials discovery | Discovered 2.2M new crystals, ~380,000 of which lie on the updated convex hull (stable); 736 independently synthesized by external groups [40] [41] | Unprecedented scale of discovery; emergent out-of-distribution generalization [40] | Focused primarily on stability prediction, not the full discovery cycle |
| Sequential Learning (SL) [42] | Various ML models with active learning | Experiment guidance & optimization | Up to 20x acceleration vs. random acquisition; performance highly goal-dependent [42] | Proven experimental acceleration; adaptable to various research goals [42] | Can substantially decelerate discovery if poorly configured [42] |
| A-Lab [10] | Autonomous robotic lab | Autonomous synthesis & characterization | 71% success rate (41 of 58 target materials synthesized in 17 days) [10] | Physical implementation; integrated synthesis and characterization [10] | Limited to known synthesis pathways; physical throughput constraints |
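A single success rate such as A-Lab's 71% is easier to compare across platforms when it carries an uncertainty estimate. The sketch below computes a Wilson score interval, one standard choice for binomial proportions; it treats each of the 58 targets as an independent trial, which is a simplifying assumption, and the interval is not one reported in the cited work.

```python
import math


def wilson_interval(successes: int, trials: int, z: float = 1.96):
    """Wilson score interval for a binomial success rate (z = 1.96 for ~95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = successes / trials
    denom = 1.0 + z * z / trials
    center = (p + z * z / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    return center - half, center + half


low, high = wilson_interval(41, 58)  # A-Lab: 41 of 58 targets synthesized
print(f"{41 / 58:.1%} (95% CI {low:.1%} to {high:.1%})")
```

For 41 of 58 this gives roughly 58% to 81%, so campaigns with a few dozen targets leave wide margins when systems are ranked by success rate alone.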

Table 2: Benchmarking results across different materials classes and research goals.

| System/Approach | Materials Class | Research Goal | Success Metric | Efficiency Gain |
|---|---|---|---|---|
| SparksMatter [38] [39] | Thermoelectrics, semiconductors, perovskites | Novel stable material discovery | Higher relevance, novelty, and scientific rigor vs. benchmarks [38] | Not explicitly quantified, but end-to-end automation demonstrated |
| GNoME [40] [41] | Inorganic crystals | Stability prediction | Hit rate above 80% with structure; 33% with composition only [40] | Order-of-magnitude improvement in discovery efficiency [40] |
| Sequential Learning [42] | Metal oxide OER catalysts | Discovery of "good" catalysts | Ranges from 20x acceleration to drastic deceleration [42] | Highly sensitive to research goal and algorithm selection [42] |
| FlowSearch [43] | Multidisciplinary QA | Scientific question answering | State-of-the-art on GAIA, HLE, and TRQA; competitive on GPQA [43] | Dynamic knowledge flow enables parallel exploration [43] |
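Acceleration figures such as "up to 20x" compare how many experiments two acquisition policies need to reach the same goal. A minimal sketch, assuming the goal is to find a fixed number of "good" materials and that each campaign is recorded as a cumulative hit count after every experiment; the curves below are hypothetical, and the cited benchmark may define its goals and averaging differently.

```python
def experiments_to_target(cumulative_hits, target):
    """Experiments needed until the cumulative count of 'good' materials reaches target."""
    for n_done, hits in enumerate(cumulative_hits, start=1):
        if hits >= target:
            return n_done
    return None  # target never reached within the campaign


def acceleration_factor(baseline_hits, strategy_hits, target):
    """>1: strategy needs fewer experiments than baseline; <1: deceleration."""
    n_base = experiments_to_target(baseline_hits, target)
    n_strat = experiments_to_target(strategy_hits, target)
    if n_base is None or n_strat is None:
        return None
    return n_base / n_strat


# Hypothetical discovery curves over 100 experiments.
random_curve = [n // 10 for n in range(1, 101)]  # ~1 good catalyst per 10 experiments
sl_curve = [n // 2 for n in range(1, 101)]       # ~1 good catalyst per 2 experiments
print(acceleration_factor(random_curve, sl_curve, target=5))
```

Because the factor depends on `target`, the same pair of campaigns can look strongly accelerated for one research goal and decelerated for another, which mirrors the goal dependence reported for sequential learning.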

Experimental Protocols and Methodologies

SparksMatter's Multi-Agent Workflow Protocol

SparksMatter employs a structured multi-agent framework that automates the complete materials discovery pipeline through four specialized agents working in coordination. [38] [39] The experimental protocol follows these key phases:

Query Clarification & Ideation: The system begins by interpreting user queries and contextualizing key terms. Scientist agents then generate hypotheses by combining domain knowledge with generative modeling, returning structured responses with scientific reasoning, core ideas, justifications, and high-level approaches. [39]

Planning & Workflow Design: A planner agent translates these ideas into detailed, executable plans specifying tasks, tools, and parameters. This includes selecting appropriate computational methods, simulation parameters, and validation steps. [39]

Iterative Execution & Refinement: An assistant agent implements the plan by generating and running Python code to interact with computational tools including the Materials Project database, MatterGen for structure generation, and CGCNN for property prediction. After each step, the system reflects on results and refines the plan adaptively. [39]

Critical Evaluation & Reporting: A critic agent synthesizes all outputs into a comprehensive document containing motivation, methodology, findings, limitations, and future directions, including recommendations for DFT calculations and experimental synthesis. [38] [39]
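The control flow of these four phases can be sketched as plain functions. This is a hypothetical skeleton, not SparksMatter's code: each "agent" is a deterministic stub rather than an LLM, and `generate_candidates` and `predict_e_hull` are toy stand-ins for tools such as MatterGen, CGCNN, or Materials Project queries. What it illustrates is the loop structure: ideation, planning, execution followed by reflection that can widen the plan, and a final critic report.

```python
from dataclasses import dataclass, field


def generate_candidates(n):
    """Hypothetical stand-in for a structure generator."""
    return [f"candidate-{i}" for i in range(n)]


def predict_e_hull(candidate):
    """Hypothetical stand-in for a property model: toy energy above hull (eV/atom)."""
    index = int(candidate.split("-")[1])
    return 0.013 * ((index * 5) % 7)


@dataclass
class Plan:
    idea: str
    n_candidates: int = 4
    e_hull_cutoff: float = 0.05  # eV/atom, assumed screening threshold


@dataclass
class State:
    evaluated: list = field(default_factory=list)
    shortlisted: list = field(default_factory=list)


def scientist_agent(query):
    return f"Search for stable compositions targeting: {query}"


def planner_agent(idea):
    return Plan(idea=idea)


def assistant_agent(plan):
    state = State()
    for cand in generate_candidates(plan.n_candidates):
        e_hull = predict_e_hull(cand)
        state.evaluated.append((cand, e_hull))
        if e_hull <= plan.e_hull_cutoff:
            state.shortlisted.append(cand)
    return state


def reflect(plan, state, min_hits=4):
    """Return a widened plan if too few candidates pass the screen, else None."""
    if len(state.shortlisted) >= min_hits:
        return None
    return Plan(plan.idea, plan.n_candidates * 2, plan.e_hull_cutoff)


def critic_agent(plan, state, rounds):
    return {
        "idea": plan.idea,
        "rounds": rounds,
        "evaluated": len(state.evaluated),
        "shortlisted": state.shortlisted,
        "next_steps": ["DFT validation", "experimental synthesis"],
    }


def run_pipeline(query, max_rounds=3):
    plan = planner_agent(scientist_agent(query))
    for rounds in range(1, max_rounds + 1):
        state = assistant_agent(plan)
        refined = reflect(plan, state)
        if refined is None or rounds == max_rounds:
            break
        plan = refined
    return critic_agent(plan, state, rounds)


print(run_pipeline("p-type thermoelectric"))
```

Here the first round shortlists too few candidates, so the reflection step doubles the search before the critic writes its report; in a real system the stubs would be replaced by model calls and tool invocations.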

The methodology was validated across case studies in thermoelectrics, semiconductors, and perovskite oxides, with performance benchmarking against frontier models conducted by blinded evaluators assessing relevance, novelty, and scientific rigor. [38]

Dynamic Benchmarking Methodology for Autonomous Discovery

Traditional static benchmarks fail to capture the iterative nature of materials discovery. [13] The emerging methodology for proper evaluation involves dynamic benchmarking environments that simulate closed-loop discovery, requiring autonomous agents to iteratively propose, evaluate, and refine candidates under constrained evaluation budgets. [13] Key aspects include:

Multi-Fidelity Evaluation: Benchmarks accommodate multiple fidelity levels, from machine-learned interatomic potentials to density functional theory and experimental validation, reflecting real-world discovery processes. [13]

Open-Ended Exploration: Rather than targeting fixed answers, benchmarks evaluate the system's ability to efficiently explore chemical spaces and discover thermodynamically stable compounds. [13]

Adaptive Decision-Making Assessment: Systems are evaluated on their capacity for iterative refinement, adaptive decision-making, handling uncertainty, and traversing unknown chemical landscapes. [13]

This approach emphasizes the realistic elements of scientific discovery that static benchmarks miss, providing a more meaningful evaluation of autonomous systems' capabilities. [13]
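The budgeted, multi-fidelity loop described above can be prototyped as a small environment class. This is a hypothetical toy, not the benchmark described in [13]: the hidden energies above hull are random numbers, the "cheap" oracle adds Gaussian noise to mimic a machine-learned interatomic potential, the "expensive" oracle returns exact values to mimic DFT, and the 1:10 cost ratio and 300-unit budget are arbitrary. An agent is scored by how many stable compounds it confirms at high fidelity before the budget runs out.

```python
import random


class ClosedLoopBenchmark:
    """Toy budgeted, multi-fidelity discovery environment (all values hypothetical)."""

    COSTS = {"cheap": 1, "expensive": 10}  # e.g., MLIP-like vs DFT-like relative cost

    def __init__(self, n_candidates=200, budget=300, noise=0.03, seed=0):
        rng = random.Random(seed)
        # Hidden "true" energies above hull in eV/atom; <= 0 counts as stable.
        self._e_true = [rng.uniform(-0.05, 0.3) for _ in range(n_candidates)]
        self._noise_rng = random.Random(seed + 1)
        self.noise = noise
        self.budget = budget
        self.spent = 0
        self.confirmed_stable = set()

    def __len__(self):
        return len(self._e_true)

    def evaluate(self, idx, fidelity):
        cost = self.COSTS[fidelity]
        if self.spent + cost > self.budget:
            raise RuntimeError("evaluation budget exhausted")
        self.spent += cost
        e = self._e_true[idx]
        if fidelity == "cheap":
            return e + self._noise_rng.gauss(0.0, self.noise)  # noisy low-fidelity estimate
        if e <= 0.0:
            self.confirmed_stable.add(idx)  # only high-fidelity checks count as discoveries
        return e


def funnel_agent(env):
    """Screen everything cheaply, then verify the most promising at high fidelity."""
    scores = {i: env.evaluate(i, "cheap") for i in range(len(env))}
    for idx in sorted(scores, key=scores.get):
        if env.budget - env.spent < env.COSTS["expensive"]:
            break
        env.evaluate(idx, "expensive")
    return len(env.confirmed_stable)


def random_agent(env, seed=7):
    """Baseline: spend the whole budget on randomly chosen high-fidelity evaluations."""
    order = list(range(len(env)))
    random.Random(seed).shuffle(order)
    for idx in order:
        if env.budget - env.spent < env.COSTS["expensive"]:
            break
        env.evaluate(idx, "expensive")
    return len(env.confirmed_stable)


print("funnel:", funnel_agent(ClosedLoopBenchmark()))
print("random:", random_agent(ClosedLoopBenchmark()))
```

Scoring agents on the same seeded environment, rather than against a fixed answer key, is what makes the evaluation dynamic: the result depends on how each agent allocates its budget across fidelities.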

Workflow Visualization of Autonomous Discovery Systems

SparksMatter Multi-Agent Workflow - This diagram illustrates the dynamic, iterative workflow of the SparksMatter system, showing how specialized agents collaborate throughout the materials discovery process with continuous refinement.

Materials Discovery Benchmarking Types - This visualization compares traditional static benchmarking with emerging dynamic approaches that better capture the iterative nature of autonomous discovery systems.

Table 3: Key computational tools and databases enabling autonomous materials discovery.

| Tool/Resource | Type | Primary Function | Application in Discovery Workflows |
|---|---|---|---|
| Materials Project [10] [40] | Database | Open-access platform for known/hypothetical materials | Provides foundational data for training models and validating predictions; used by SparksMatter for candidate screening [10] |
| Density Functional Theory (DFT) [10] [40] | Computational Method | Quantum-level electronic structure modeling | Gold standard for verifying stability and properties; used for final validation in autonomous workflows [10] |
| Graph Neural Networks (GNNs) [40] [41] | AI Model | Structure-property prediction | Backbone of GNoME system; enables accurate stability predictions from crystal structures [40] |
| MatterGen [38] [39] | Generative Model | Inverse materials design | Conditionally generates novel crystal structures meeting target property requirements; used in SparksMatter pipeline [38] |
| CGCNN [39] | AI Model | Property prediction | Crystal Graph Convolutional Neural Network for predicting material properties from atomic structures [39] |
| Machine-Learned Interatomic Potentials [25] | Simulation Method | Large-scale atomistic simulations | Provides near-DFT accuracy with significantly lower computational cost for screening candidates [25] |

Performance Analysis and Research Implications

The benchmarking data reveals distinct strengths and limitations across autonomous materials discovery systems. SparksMatter demonstrates particular effectiveness in generating chemically valid, physically meaningful hypotheses beyond existing knowledge, with blinded evaluation showing significant improvements in novelty scores across multiple real-world design tasks. [38] Its multi-agent architecture enables comprehensive scientific reasoning that spans from initial ideation to detailed experimental planning.