Benchmarking Materials Synthesis Approaches: From Traditional Methods to AI-Driven Innovation

Abstract

This article provides a comprehensive benchmarking analysis of contemporary materials synthesis approaches, tailored for researchers, scientists, and drug development professionals. It explores the foundational principles of traditional physical, chemical, and biological methods before delving into advanced computational and AI-driven strategies. The scope includes methodological applications for specific biomedical goals, practical troubleshooting and optimization techniques powered by machine learning, and a critical validation of approaches through comparative analysis of efficiency, scalability, and experimental success rates. By synthesizing the latest research and real-world case studies, this review serves as a strategic guide for selecting and optimizing synthesis pathways in modern materials development.

The Synthesis Landscape: Core Principles and Emerging Frontiers

This guide provides an objective comparison of physical, chemical, and biological methods used in materials synthesis and pretreatment, with a specific focus on their application in bioprocessing and lignocellulosic biomass valorization. The data and experimental protocols presented herein are framed within a broader research thesis on benchmarking materials synthesis approaches.

The optimization of material properties and the efficient conversion of raw biomass into valuable products are central to advancements in drug development, biofuel production, and sustainable manufacturing [1] [2]. The efficacy of these processes is often limited by the inherent recalcitrance of raw materials, such as the lignin content in plant biomass or the presence of toxins in agricultural by-products [1] [3]. To overcome these challenges, foundational pretreatment and synthesis routes, categorized as physical, chemical, and biological methods, are employed to modify the structural and chemical composition of materials. The sections below compare these core methodologies by performance, experimental protocol, and application so that researchers can select and benchmark the synthesis pathway best suited to their needs.

Comparative Analysis of Foundational Methods

The following table summarizes the performance outcomes of physical, chemical, and biological treatments as applied to two distinct material systems: lignocellulosic grass clippings and cottonseed for detoxification [1] [3].

Table 1: Comparative Performance of Physical, Chemical, and Biological Treatments

| Method Category | Specific Treatment | Key Experimental Conditions | Primary Outcome | Quantitative Result |
|---|---|---|---|---|
| Chemical | Alkaline (NaOH) | 0.9% NaOH, 37°C, 24 hours [1] | Lignin reduction in grass clippings [1] | 58% reduction [1] |
| Chemical | Alkaline (Ca(OH)₂) | 1-2% Ca(OH)₂ on crushed whole cottonseed [3] | Free gossypol (FG) detoxification [3] | FG reduced to 0.04% [3] |
| Chemical | Acid Thermal Hydrolysis | H₂SO₄, 120°C, 103 kPa, 1 hour [1] | Hemicellulose removal in grass clippings [1] | Significant removal (specific % not provided) [1] |
| Physical | Autoclaving | Crushed whole cottonseed [3] | Free gossypol (FG) detoxification [3] | 96% detoxification [3] |
| Physical | Ultrasonication | 150 W, 20 Hz, 30 min on grass clippings [1] | Lignin reduction [1] | Notable reduction (efficacy below alkaline treatment) [1] |
| Biological | Solid-State Fermentation (SSF) | Pleurotus ostreatus CC389 on autoclaved cottonseed, 6 days [3] | Free gossypol (FG) detoxification [3] | FG reduced to trace levels (>99.66% reduction) [3] |
| Biological | Enzymatic Cocktail | Cellulase & Laccase on grass clippings, 55°C, up to 48 hours [1] | Lignin reduction [1] | Notable reduction (efficacy below alkaline treatment) [1] |
| Combined | Physical & Biological (SSF) | Autoclaving followed by fungal treatment (P. lecomtei CC40) on cottonseed [3] | Free gossypol (FG) detoxification & improved nutrition [3] | FG reduced to trace levels, increased crude protein [3] |

Detailed Experimental Protocols

Protocol 1: Alkaline Treatment of Lignocellulosic Biomass

This protocol describes the process for treating grass clippings with sodium hydroxide (NaOH) to reduce lignin content, based on a study comparing multiple pretreatment methods [1].

- Step 1: Sample Preparation. Collect and homogenize grass clippings (e.g., Digitaria sanguinalis) using a commercial blender to create a uniform mixture. Store the ground material at 4°C to preserve consistency [1].

- Step 2: Treatment Setup. Use a mixing ratio of 1:10 (e.g., 10 g of ground grass with 100 mL of 0.9% w/v NaOH solution). Maintain a constant temperature of 37°C for 24 hours [1].

- Step 3: Post-Treatment Processing. After the incubation period, filter the mixture to separate the solid biomass from the liquid chemical solution. Wash the solid residue three times with distilled water to neutralize pH and remove any residual chemicals that could interfere with subsequent analysis [1].

- Step 4: Drying. Dry the washed solid samples at 60°C for 6 hours to prepare them for compositional analysis [1].

- Step 5: Analysis. Assess treatment efficacy using Van Soest Fiber Analysis to determine lignin, cellulose, and hemicellulose content. Advanced techniques like Scanning Electron Microscopy (SEM) and Fourier Transform Infrared Spectroscopy (FTIR) can be used for structural and chemical characterization [1].
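The reagent quantities implied by Step 2 follow from two ratios: 10 mL of solution per gram of biomass, and 0.9 g of NaOH per 100 mL of solution (the meaning of % w/v). The sketch below works through that arithmetic. The function name and its parameters are illustrative assumptions, not part of the cited protocol.

```python
def alkaline_treatment_quantities(biomass_g, ml_per_g=10.0, naoh_pct_wv=0.9):
    """Return (solution volume in mL, NaOH mass in g) for a given biomass mass.

    Defaults mirror the 1:10 ratio and 0.9% w/v NaOH stated in the protocol;
    the function itself is a hypothetical helper for illustration.
    """
    if biomass_g <= 0:
        raise ValueError("biomass_g must be positive")
    solution_ml = biomass_g * ml_per_g
    # % w/v is grams of solute per 100 mL of solution
    naoh_g = solution_ml * naoh_pct_wv / 100.0
    return solution_ml, naoh_g


volume_ml, naoh_g = alkaline_treatment_quantities(10.0)
print(f"{volume_ml:.0f} mL of solution containing {naoh_g:.2f} g NaOH")
```

Scaling the batch only requires changing `biomass_g`; the two ratios stay fixed unless the treatment conditions themselves are being varied.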

Protocol 2: Solid-State Fermentation for Toxin Detoxification

This protocol outlines the biological detoxification of free gossypol (FG) in crushed whole cottonseed using white-rot fungi, adapted from a comparative study on detoxification methods [3].

- Step 1: Substrate Preparation. The cottonseed substrate is first autoclaved. This physical treatment serves both to pre-detoxify the material and to sterilize it, eliminating competing microorganisms [3].

- Step 2: Inoculation. Inoculate the autoclaved substrate with a pure culture of a selected basidiomycete fungus, such as Pleurotus ostreatus CC389 or Panus lecomtei CC40. Ensure even distribution of the fungal inoculum throughout the substrate [3].

- Step 3: Fermentation. Incubate the inoculated substrate under controlled conditions for a defined period, typically 6 days. Maintain appropriate temperature and humidity to support fungal growth and enzyme production [3].

- Step 4: Monitoring. During fermentation, monitor the secretion of key lignin-modifying enzymes, such as laccase and manganese peroxidase, which are correlated with gossypol degradation [3].

- Step 5: Termination and Analysis. After the incubation period, terminate the fermentation. Analyze the final FG content using sensitive chromatographic methods like Ultra High-Performance Liquid Chromatography (UHPLC). The nutritional quality of the fermented biomass can also be assessed by analyzing changes in crude protein and total lipid content [3].
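Detoxification figures such as those in Table 1 are percentage reductions relative to the untreated material. A minimal sketch of that calculation follows. The free gossypol values are hypothetical placeholders chosen only to show the arithmetic; they are not measurements from the cited study.

```python
def percent_reduction(initial, final):
    """Percentage of the initial amount removed by a treatment."""
    if initial <= 0:
        raise ValueError("initial must be positive")
    if final < 0:
        raise ValueError("final must be non-negative")
    return (initial - final) / initial * 100.0


# Hypothetical FG contents (% of dry matter); NOT values from the cited study.
fg_untreated = 0.50
fg_after_autoclaving = 0.05
fg_after_fermentation = 0.002

after_physical_step = percent_reduction(fg_untreated, fg_after_autoclaving)
overall = percent_reduction(fg_untreated, fg_after_fermentation)
print(f"after autoclaving: {after_physical_step:.1f}%, overall: {overall:.1f}%")
```

Reporting both the stepwise and the overall reduction makes it clear how much of the final result is attributable to the physical pre-treatment and how much to the fermentation.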

Experimental Workflow and Key Reagent Solutions

Workflow for Comparative Treatment Assessment

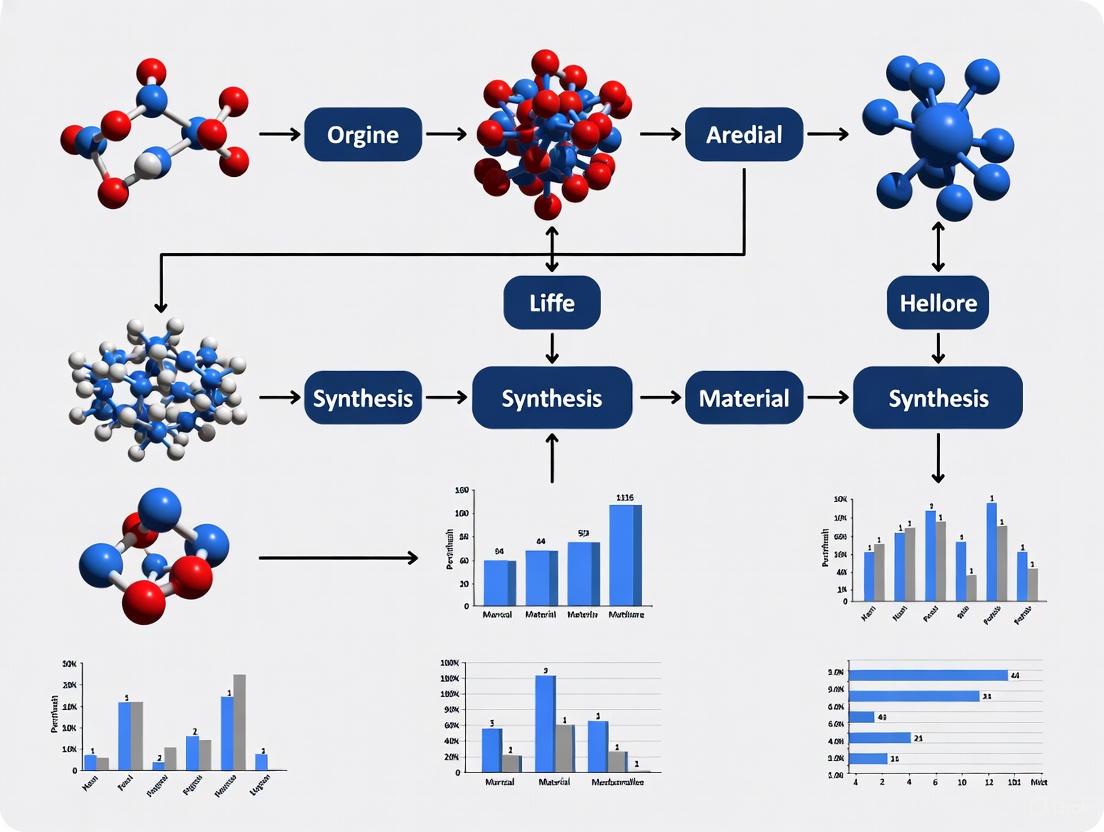

The diagram below illustrates a generalized experimental workflow for the comparative assessment of physical, chemical, and biological treatment methods.

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential reagents, materials, and biological agents used in the experimental protocols for the foundational treatment methods.

Table 2: Key Research Reagent Solutions and Their Functions

| Item Name | Function / Role in Treatment | Category |
|---|---|---|
| Sodium Hydroxide (NaOH) | Alkaline agent that disrupts lignin structure, solubilizing it and enhancing biomass digestibility [1]. | Chemical Reagent |
| Calcium Hydroxide (Ca(OH)₂) | Alternative alkaline agent used for detoxification, effective in binding or degrading toxins like free gossypol [3]. | Chemical Reagent |
| Sulphuric Acid (H₂SO₄) | Acid catalyst that targets and hydrolyzes hemicellulose polymers into soluble sugars under thermal conditions [1]. | Chemical Reagent |
| Cellulase Enzyme | Hydrolyzes cellulose into glucose, reducing the recalcitrance of the cellulose crystalline structure [1]. | Biological Reagent |
| Laccase Enzyme | Oxidizes and breaks down phenolic components of lignin, a key step in biological delignification [1] [3]. | Biological Reagent |
| Pleurotus ostreatus | White-rot fungus that secretes extracellular enzymes (laccase, peroxidase) for lignin and toxin degradation [3]. | Biological Agent |
| Sodium Acetate Buffer | Maintains optimal pH (e.g., 4.8) for the activity of enzymatic cocktails during biological treatment [1]. | Buffer Solution |
| Basal Culture Medium | Provides essential nutrients (C, N, trace elements) to support microbial growth during fermentation [2] [3]. | Growth Medium |

The quantitative data and protocols presented reveal a clear trade-off between the efficiency, cost, and environmental impact of the different foundational methods. Chemical treatments, particularly alkaline methods, offer strong and rapid delignification, making them highly effective for lignocellulosic biomass [1]. Physical methods like autoclaving can achieve high detoxification rates and also serve to sterilize substrates for subsequent biological processing [3]. Biological treatments, while often requiring longer incubation times, provide a highly specific, low-energy, and non-polluting route for detoxification and valorization, often enhancing the nutritional value of the treated material [3].

Combined treatments, such as physical pre-processing followed by biological fermentation, exemplify how the integration of these foundational routes can yield synergistic results, achieving near-complete detoxification while improving the overall quality of the output material [3]. This underscores the importance of a holistic benchmarking approach that considers not only the primary performance metric but also factors like energy consumption, equipment needs, and the potential for generating value-added by-products. The choice of an optimal method is therefore highly context-dependent, dictated by the nature of the source material, the target product, and the economic and sustainability constraints of the overall process.
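One common way to put such a holistic comparison into practice is a weighted multi-criteria score. The sketch below is only an illustration of that idea. The criteria names, weights, and 0-to-1 scores are all hypothetical placeholders, not values derived from the cited studies, and would need to be replaced with measured or expert-assigned figures.

```python
def weighted_score(scores, weights):
    """Weighted average of per-criterion scores (higher is better)."""
    total_weight = sum(weights.values())
    return sum(scores[name] * w for name, w in weights.items()) / total_weight


# Hypothetical weights reflecting one possible set of priorities.
weights = {"efficacy": 0.4, "energy": 0.2, "equipment": 0.2, "by_products": 0.2}

# Hypothetical normalized scores (0 = worst, 1 = best); placeholders only.
candidates = {
    "chemical_alkaline": {"efficacy": 0.80, "energy": 0.6, "equipment": 0.8, "by_products": 0.3},
    "physical_autoclave": {"efficacy": 0.70, "energy": 0.3, "equipment": 0.5, "by_products": 0.4},
    "combined_autoclave_ssf": {"efficacy": 0.95, "energy": 0.4, "equipment": 0.4, "by_products": 0.9},
}

ranking = sorted(
    candidates,
    key=lambda name: weighted_score(candidates[name], weights),
    reverse=True,
)
for name in ranking:
    print(f"{name}: {weighted_score(candidates[name], weights):.2f}")
```

Because the ranking depends entirely on the chosen weights, repeating the calculation under several weightings is a simple way to see how context-dependent the preferred method is.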

Green Nanotechnology and Sustainable Synthesis Principles

Green nanotechnology represents a transformative approach within materials science, focusing on the environmentally friendly production of nanoparticles through biological and sustainable processes. This paradigm shift from conventional chemical and physical synthesis methods offers a safer, eco-friendly, non-toxic, and cost-effective alternative for generating metal nanoparticles with diverse applications across pharmaceuticals, energy, electronics, and bioengineering [4]. The field is characterized by its utilization of biological organisms such as plants, algae, fungi, and bacteria as biofactories for nanoparticle synthesis, capitalizing on their rich repertoire of phytochemicals and enzymes that serve as both reducing and stabilizing agents [4] [5].

The fundamental principles guiding green nanotechnology align with the broader concepts of green chemistry, emphasizing waste reduction, sustainable feedstock, and benign synthesis pathways. Traditional top-down and bottom-up nanoparticle production strategies often require hazardous chemicals and high energy inputs and generate toxic byproducts; by comparison, biologically driven synthesis demonstrates superior environmental compatibility while maintaining precise control over nanoparticle characteristics [4]. This review provides a comprehensive comparison between green synthesis approaches and conventional methods, examining performance metrics through quantitative experimental data and detailed methodological protocols to establish rigorous benchmarking criteria for researchers and drug development professionals engaged in materials synthesis optimization.

Methodological Comparison: Green vs. Conventional Synthesis

Synthesis Approaches and Characteristics

Table 1: Comparison of Nanoparticle Synthesis Methodologies

| Synthesis Aspect | Green/Biological Synthesis | Chemical Synthesis | Physical Synthesis |
|---|---|---|---|
| Reducing Agents | Plant metabolites (phenols, flavonoids, terpenoids), microbial enzymes [4] [5] | Chemical reductants (sodium borohydride, citrate) | High energy (laser ablation, arc discharge) |
| Stabilizing Agents | Natural biomolecules from extracts [5] | Synthetic polymers, surfactants | Requires additional stabilizers |
| Reaction Conditions | Ambient temperature/pressure, aqueous phase [6] | Often extreme pH, high temperature | High energy input, vacuum systems |
| Environmental Impact | Low toxicity, biodegradable waste [4] | Hazardous chemical waste | High energy consumption |
| Cost Considerations | Low-cost, sustainable biomass [5] | Expensive chemical precursors | Capital-intensive equipment |
| Scalability | Promising with optimization needed [5] | Well-established | Limited by energy requirements |
| Nanoparticle Biocompatibility | Enhanced due to bio-capping [5] | Potential cytotoxicity concerns | Variable depending on stabilizers |

Quantitative Performance Metrics

Table 2: Experimental Performance Comparison of Synthesis Methods

| Performance Metric | Plant-Mediated Green Synthesis | Chemical Reduction Method | Laser Ablation Method |
|---|---|---|---|
| Synthesis Duration | 30 minutes - 24 hours [6] | Minutes to hours | Hours to days |
| Temperature Range | 25-100°C [6] | 50-300°C | Room temperature to high |
| Energy Consumption | Low to moderate | Moderate | Very high |
| Particle Size Range | 5-100 nm [4] | 10-150 nm | 5-200 nm |
| Size Dispersity | Moderate to low with optimization | Low to moderate | Often broad |
| Shape Control | Good with parameter optimization | Excellent | Limited |
| Yield | Variable, medium to high | High | Low to medium |

Experimental Data and Performance Benchmarking

Antimicrobial Efficacy of Green-Synthesized Nanoparticles

Experimental data demonstrates the significant biomedical potential of green-synthesized nanoparticles, particularly against clinically relevant pathogens. Research utilizing Canna indica leaf extract for silver and silver/nickel bimetallic nanoparticle synthesis revealed substantial antimicrobial activity across multiple pathogen strains [6].

Table 3: Antimicrobial Activity of Green-Synthesized Silver and Silver/Nickel Nanoparticles

| Nanoparticle Type & Concentration | S. aureus | S. pyogenes | E. coli | P. aeruginosa | C. albicans | T. rubrum |
|---|---|---|---|---|---|---|
| Ag 0.5 mM | 7±0.2 mm | 9±0.4 mm | 7±0.1 mm | No activity | 9±0.2 mm | No activity |
| Ag 3.0 mM | 13±1 mm | 14±0.2 mm | 15±0.3 mm | 8±0.1 mm | 15±0.2 mm | 9±0.1 mm |
| Ag/Ni 0.5 mM | 9±0.2 mm | 11±0.4 mm | 12±0.5 mm | 8±0.3 mm | 8±0.1 mm | 9±0.2 mm |
| Ag/Ni 3.0 mM | 15±0.4 mm | 16±0.6 mm | 17±0.6 mm | 9±0.1 mm | 12±0.1 mm | 16±0.2 mm |
| Control (Ciprofloxacin/Fluconazole) | 21±0.8 mm | 18±0.3 mm | 21±0.2 mm | 20±0.4 mm | 19±0.6 mm | 18±0.3 mm |

Note: Values represent mean inhibition zone diameters (mm) ± standard deviation [6]

Efficacy is concentration-dependent across all tested microorganisms. At 3.0 mM, the silver/nickel bimetallic nanoparticles produced larger inhibition zones than monometallic silver for five of the six organisms; C. albicans was the exception (12 mm versus 15 mm). Statistical analysis revealed significant differences (P<0.05) between the antimicrobial activity of the bimetallic nanoparticles and that of the controls, highlighting their potential as effective antimicrobial agents [6].
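The comparison drawn from Table 3 can be checked directly from the tabulated means. The short sketch below uses the 3.0 mM rows and the control row as given in the table, with standard deviations omitted for brevity. The variable names are illustrative.

```python
organisms = ["S. aureus", "S. pyogenes", "E. coli",
             "P. aeruginosa", "C. albicans", "T. rubrum"]

# Mean inhibition zone diameters (mm) copied from Table 3.
ag_3mM = [13, 14, 15, 8, 15, 9]
agni_3mM = [15, 16, 17, 9, 12, 16]
control = [21, 18, 21, 20, 19, 18]

bimetallic_better = []
for name, ag, agni, ctrl in zip(organisms, ag_3mM, agni_3mM, control):
    relative_to_control = agni / ctrl * 100.0
    print(f"{name}: Ag/Ni - Ag = {agni - ag:+d} mm, "
          f"Ag/Ni reaches {relative_to_control:.0f}% of control zone")
    if agni > ag:
        bimetallic_better.append(name)

print("Ag/Ni larger than Ag for:", ", ".join(bimetallic_better))
```

Expressing each zone as a fraction of the control zone also makes it easy to see that the nanoparticles remain below the reference antimicrobials for every organism in the table.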

Table 4: Minimum Inhibitory Concentration (MIC) and Minimum Bactericidal/Fungicidal Concentration (MBC/MFC) of Green-Synthesized Nanoparticles

| Nanoparticle Type | S. aureus (MIC, MBC) | S. pyogenes (MIC, MBC) | E. coli (MIC, MBC) | P. aeruginosa (MIC, MBC) | C. albicans (MIC, MFC) | T. rubrum (MIC, MFC) |
|---|---|---|---|---|---|---|
| Ag 0.5 mM | 100, 100 mg/mL | 50, 100 mg/mL | 100, 100 mg/mL | 100, 100 mg/mL | 50, 50 mg/mL | 100, 100 mg/mL |
| Ag 3.0 mM | 12.5, 25 mg/mL | 12.5, 25 mg/mL | 12.5, 12.5 mg/mL | 100, 100 mg/mL | 12.5, 12.5 mg/mL | 50, 100 mg/mL |
| Ag/Ni 0.5 mM | 50, 100 mg/mL | 25, 50 mg/mL | 12.5, 25 mg/mL | 100, 100 mg/mL | 50, 100 mg/mL | 50, 100 mg/mL |
| Ag/Ni 3.0 mM | 12.5, 12.5 mg/mL | 6.25, 12.5 mg/mL | 6.25, 12.5 mg/mL | 100, 100 mg/mL | 12.5, 25 mg/mL | 12.5, 25 mg/mL |
| Control | 3.13 mg/mL | 6.25 mg/mL | 6.25 mg/mL | 6.25 mg/mL | 6.25 mg/mL | 6.25 mg/mL |

Control: Ciprofloxacin (Bacteria) and Fluconazole (Fungi) [6]

Notably, MIC values generally decreased as the precursor concentration used in synthesis increased, indicating enhanced efficacy at higher concentrations. P. aeruginosa was the exception, with MIC and MBC remaining at 100 mg/mL for every formulation. The bimetallic Ag/Ni nanoparticles at 3.0 mM exhibited the strongest activity, with MIC values as low as 6.25 mg/mL against S. pyogenes and E. coli [6].

Optical Properties and Material Characteristics

Green-synthesized nanoparticles exhibit tunable optical properties that can be optimized for various applications. Research has demonstrated that nanoparticle structural colors depend on material composition, size, shape, and volume fraction, enabling precise control through synthesis parameter manipulation [7].

Advanced characterization techniques including UV-Vis spectrophotometry, Fourier-transform infrared spectroscopy (FT-IR), scanning electron microscopy (SEM), transmission electron microscopy (TEM), X-ray diffraction (XRD), and atomic force microscopy (AFM) confirm the structural properties of green-synthesized nanoparticles [4]. These analytical methods verify the crystalline nature, size distribution, morphology, and surface functionalization of biologically synthesized nanoparticles, providing critical quality assessment parameters for benchmarking against conventionally synthesized alternatives.

Experimental Protocols and Methodologies

Detailed Workflow for Plant-Mediated Nanoparticle Synthesis

Graph 1: Green Synthesis Workflow. This diagram illustrates the sequential steps in plant-mediated nanoparticle synthesis.

Protocol: Silver Nanoparticle Synthesis Using Canna indica Leaf Extract

Materials and Reagents:

- Fresh leaves of Canna indica (voucher specimen deposited for authentication)

- Silver nitrate (AgNO₃) solution (0.5-3.0 mM concentrations)

- Deionized water (resistivity 18.25 MΩ·cm)

- Laboratory glassware, heating mantle, filtration apparatus

Experimental Procedure:

- Plant Extract Preparation: Collect fresh Canna indica leaves, wash thoroughly with deionized water, and air-dry. Prepare an aqueous extract by heating 10 g of finely cut leaves in 100 mL deionized water at 70°C for 30 minutes on a hot plate. Filter the resulting extract through Whatman No. 1 filter paper to remove particulate matter [6].

- Reaction Mixture Preparation: Add the filtered plant extract to aqueous AgNO₃ solution at varying concentrations (0.5, 1.0, 2.0, and 3.0 mM) in a 1:4 volume ratio (extract:precursor solution). Maintain the reaction mixture at 70°C with continuous stirring for 30 minutes until a color change indicates nanoparticle formation [6].

- Purification and Recovery: Centrifuge the nanoparticle suspension at 15,000 rpm for 20 minutes, discard the supernatant, and resuspend the pellet in deionized water. Repeat this washing process three times to remove unreacted plant metabolites. Lyophilize the purified nanoparticles for long-term storage and further characterization [6].
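Preparing the precursor solutions and the 1:4 reaction mixture involves two small calculations, sketched below. The molar mass of AgNO₃ (about 169.87 g/mol) is a standard value. The function names and the example volumes are assumptions made for illustration and do not come from the cited protocol.

```python
AGNO3_MOLAR_MASS_G_PER_MOL = 169.87  # Ag 107.87 + N 14.01 + 3 x O 16.00


def agno3_mass_mg(conc_mM, volume_mL):
    """Mass of AgNO3 (mg) needed for volume_mL of solution at conc_mM."""
    # mM x mL = micromoles; micromoles x g/mol = micrograms; /1000 -> mg
    return conc_mM * volume_mL * AGNO3_MOLAR_MASS_G_PER_MOL / 1000.0


def split_volumes(total_mL, extract_parts=1, precursor_parts=4):
    """Split a total reaction volume by the extract:precursor ratio."""
    parts = extract_parts + precursor_parts
    return total_mL * extract_parts / parts, total_mL * precursor_parts / parts


for conc in (0.5, 1.0, 2.0, 3.0):
    # 100 mL per stock solution is an assumed, illustrative volume.
    print(f"{conc} mM in 100 mL: {agno3_mass_mg(conc, 100):.2f} mg AgNO3")

extract_mL, precursor_mL = split_volumes(50.0)  # assumed 50 mL reaction volume
print(f"{extract_mL:.0f} mL extract + {precursor_mL:.0f} mL AgNO3 solution")
```

Note that mixing at 1:4 dilutes the precursor to four fifths of its stock concentration, which matters if the reported millimolar values are meant to describe the final reaction mixture rather than the stock.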

Characterization Methods:

- Optical Properties: Analyze using double beam Thermo Scientific GENESYS 10S UV-vis spectrophotometer or UV-vis spectrophotometer model T90+ [6].

- Crystallinity Assessment: Perform X-ray powder diffraction (XRPD) using Bruker D8 XRD model [6].

- Morphological Analysis: Utilize scanning electron microscopy (SEM) and transmission electron microscopy (TEM) for size and shape determination [4].

- Surface Chemistry: Employ Fourier-transform infrared spectroscopy (FT-IR) to identify functional groups involved in reduction and stabilization [4].

Antimicrobial Activity Assessment Protocol

Materials and Microbial Strains:

- Test microorganisms: Staphylococcus aureus, Streptococcus pyogenes, Escherichia coli, Pseudomonas aeruginosa, Candida albicans, Trichophyton rubrum

- Mueller-Hinton agar, Sabouraud dextrose agar

- Ciprofloxacin and fluconazole as positive controls

- Sterile paper disks (6 mm diameter)

Experimental Procedure:

- Agar Well Diffusion Assay: Prepare microbial suspensions equivalent to 0.5 McFarland standard. Swab inoculate the surfaces of Mueller-Hinton agar (bacteria) or Sabouraud dextrose agar (fungi) plates. Create wells (6 mm diameter) and add 50 μL of different nanoparticle concentrations (0.5-3.0 mM). Incubate plates at 37°C for 24 hours (bacteria) or 28°C for 48-72 hours (fungi). Measure inhibition zone diameters in millimeters [6].

- Minimum Inhibitory Concentration (MIC) Determination: Prepare two-fold serial dilutions of nanoparticles in appropriate broth media in 96-well microtiter plates. Inoculate each well with standardized microbial suspension (5×10⁵ CFU/mL). Include growth and sterility controls. Incubate plates at appropriate temperatures for 24-48 hours. The MIC is defined as the lowest concentration showing no visible growth [6].

- Minimum Bactericidal/Fungicidal Concentration (MBC/MFC) Determination: Subculture aliquots from wells showing no growth in MIC determination onto fresh agar plates. The MBC/MFC is defined as the lowest concentration yielding no growth on subculture, indicating ≥99.9% killing of the initial inoculum [6].

- Statistical Analysis: Perform one-way analysis of variance (ANOVA) using SPSS statistical tools with significance at P < 0.05. All experiments should be conducted in triplicate with mean values and standard deviations reported [6].
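The dilution and read-out logic of the MIC and MBC/MFC steps above can be sketched in a few lines. The starting concentration of 100 mg/mL matches the highest value in Table 4, but the growth read-outs below are hypothetical and serve only to show how the definitions are applied.

```python
def twofold_dilutions(start, n_wells):
    """Concentrations of a two-fold serial dilution, highest first."""
    return [start / 2 ** i for i in range(n_wells)]


def lowest_without_growth(growth_by_conc):
    """Lowest concentration whose read-out shows no growth, or None."""
    clear = [conc for conc, grew in growth_by_conc.items() if not grew]
    return min(clear) if clear else None


concentrations = twofold_dilutions(100.0, 6)  # mg/mL

# Hypothetical broth read-out: True means visible growth in that well.
broth_growth = dict(zip(concentrations, [False, False, False, False, True, True]))
mic = lowest_without_growth(broth_growth)

# Only wells without visible growth are subcultured onto fresh agar.
# Hypothetical subculture read-out: True means colonies appeared.
subculture_growth = {100.0: False, 50.0: False, 25.0: False, 12.5: True}
mbc = lowest_without_growth(subculture_growth)

print("dilution series (mg/mL):", concentrations)
print(f"MIC = {mic} mg/mL, MBC = {mbc} mg/mL")
```

An MBC above the MIC, as in this made-up example, corresponds to the pairs in Table 4 where the second value exceeds the first: growth is inhibited at the lower concentration but the organism is only killed at the higher one.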

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 5: Key Research Reagents and Materials for Green Nanoparticle Synthesis

| Reagent/Material | Function/Application | Specifications/Considerations |
|---|---|---|
| Plant Biomass | Source of reducing and stabilizing metabolites | Select species rich in phenols, flavonoids; authenticate and deposit voucher specimens [6] [5] |
| Metal Salt Precursors | Source of metal ions for nanoparticle formation | AgNO₃, HAuCl₄, CuSO₄, FeCl₃; vary concentration (0.5-3.0 mM) to control size [6] |
| Culture Media | Microbial cultivation for antimicrobial assays | Mueller-Hinton agar, Sabouraud dextrose agar; standardize inoculum density [6] |
| Reference Antimicrobials | Positive controls for bioactivity studies | Ciprofloxacin (bacteria), fluconazole (fungi); prepare fresh stock solutions [6] |
| Characterization Reagents | Sample preparation for analytical techniques | Grids for TEM, KBr for FT-IR; ensure high purity to avoid interference [4] [6] |
| Solvents | Extraction and purification | Deionized water (resistivity ≥18 MΩ·cm), ethanol; remove dissolved oxygen when necessary [6] |

Advanced Applications and Performance in Material Science

Nanocomposite Reinforcement Capabilities

Beyond biomedical applications, green-synthesized nanoparticles demonstrate exceptional performance as reinforcement agents in polymer nanocomposites. Experimental studies investigating energy absorption in polymer nanocomposites reinforced with nano-clay and nano-silica reveal significant enhancements in mechanical properties [8].

Table 6: Energy Absorption Performance of Nanoparticle-Reinforced Polymer Composites

| Nanomaterial Type | Weight Percentage | Energy Absorption Performance | Optimal Concentration | Composite Structure |
|---|---|---|---|---|
| Nano-silica | 0-0.4% | Increase up to central point (0.2%), then decreased intensity | 0.2% | Cylindrical and conical |
| Nano-clay | 0-0.4% | Significant rise up to 0.4%, maintained intensity after central point | 0.4% | Cylindrical and conical |
| Diethylenetriamine | 1, 3, 5% | Highest absorption at central point, downward trend thereafter | 3% | Cylindrical and conical |

Research findings indicate that the addition of nano-silica up to 0.2% weight percentage significantly enhances energy absorption in polymer nanocomposites, with cone-shaped structures demonstrating superior performance compared to cylindrical configurations [8]. These results highlight the potential of green-synthesized nanoparticles in advanced material applications requiring specific mechanical properties.

Optical and Sensing Applications

The optical properties of green-synthesized nanoparticles enable advanced applications in sensing and display technologies. Gold nanoparticles synthesized through green methods exhibit tunable structural colors dependent on particle size, volume fraction, and layer thickness [7]. Machine learning approaches utilizing bidirectional neural networks have achieved high accuracy (99.83%) in predicting structural colors and inversely designing geometric parameters for desired color output, demonstrating the precision achievable with green-synthesized nanomaterials [7].

Advanced characterization and design protocols for structural color optimization involve:

- Mie scattering calculations to determine single particle optical properties

- Monte Carlo simulations to model multiple scattering in nanoparticle systems

- Spectrum-to-color conversion based on CIE color spaces for accurate color reproduction

- Bidirectional neural networks (BNN) for predictive modeling and inverse design [7]
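Of the steps listed above, the last stage of spectrum-to-color conversion is simple enough to sketch: mapping CIE XYZ tristimulus values to displayable sRGB. The source mentions only CIE color spaces, so the choice of sRGB as the output space is an assumption made for this example. The matrix and transfer function are the standard sRGB ones for a D65 white point. The earlier stage, integrating a reflectance spectrum against color matching functions, is omitted.

```python
def xyz_to_srgb(x, y, z):
    """Convert CIE XYZ (D65, Y normalized to 1) to gamma-encoded sRGB in [0, 1]."""
    # Linear transform from XYZ to linear sRGB primaries.
    r = 3.2406 * x - 1.5372 * y - 0.4986 * z
    g = -0.9689 * x + 1.8758 * y + 0.0415 * z
    b = 0.0557 * x - 0.2040 * y + 1.0570 * z

    def encode(channel):
        # Clip out-of-gamut values, then apply the sRGB transfer function.
        channel = min(max(channel, 0.0), 1.0)
        if channel <= 0.0031308:
            return 12.92 * channel
        return 1.055 * channel ** (1 / 2.4) - 0.055

    return tuple(encode(c) for c in (r, g, b))


d65_white = (0.95047, 1.0, 1.08883)
print([round(c, 3) for c in xyz_to_srgb(*d65_white)])
print(xyz_to_srgb(0.0, 0.0, 0.0))
```

Clipping is the crudest possible gamut handling. Structural colors that fall outside the sRGB gamut would be distorted by it, so a workflow aiming at accurate color reproduction may need a wider-gamut space or a proper gamut-mapping step.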

The comprehensive comparison of green synthesis approaches against conventional methods demonstrates significant advantages in sustainability, biocompatibility, and environmental impact. Quantitative experimental data reveals that green-synthesized nanoparticles, particularly silver and bimetallic systems, exhibit substantial antimicrobial activity with minimum inhibitory concentrations as low as 6.25 mg/mL against clinically relevant pathogens [6]. The concentration-dependent efficacy and enhanced performance of bimetallic nanoparticles highlight the optimization potential through precursor modulation and reaction parameter control.

While green nanotechnology shows remarkable promise across biomedical, material science, and optical applications, research gaps remain in standardization, scalability, and long-term toxicological assessments [5]. Future research directions should focus on optimizing reaction parameters for enhanced reproducibility, developing hybrid bimetallic systems with superior functionality, and establishing comprehensive toxicity profiles for clinical translation. The integration of machine learning and computational design approaches with experimental validation will further advance the precision and application scope of green-synthesized nanomaterials, solidifying their role in sustainable materials development for pharmaceutical and technological applications.

The Rise of Computational Guidance and Data-Driven Discovery

The field of materials science is undergoing a profound transformation, shifting from reliance on empirical, trial-and-error experimentation to sophisticated computational and data-driven approaches. This paradigm shift is accelerating the discovery and development of novel materials crucial for addressing global challenges in energy, healthcare, and sustainability. Traditional experimental synthesis has long been hampered by its resource-intensive and time-consuming nature, often requiring years of laboratory work to identify promising material candidates [9]. The emergence of computational guidance and data-driven discovery represents a fundamental change in this process, enabling researchers to predict material properties, optimize synthesis parameters, and identify novel compounds with desired characteristics before ever entering the laboratory.

This transformation is being driven by several convergent technological trends. Increased computing power allows for complex simulations that were previously impossible, while big data integration from historical experiments provides a foundation for predictive modeling. Enhanced modeling techniques, particularly in machine learning and artificial intelligence, now offer deep insights into experimental outcomes and structure-property relationships [10]. The integration of these technologies has given rise to a new ecosystem of materials research that combines high-throughput computation, open data platforms, and intelligent algorithms to dramatically compress the discovery timeline. As these approaches mature, they are reshaping not only how materials are discovered but also expanding the very boundaries of what is possible in materials design and optimization.

Benchmarking Computational Approaches: Methodologies and Performance

Key Methodological Frameworks

High-Throughput Computing and Density Functional Theory

High-throughput computing (HTC) has revolutionized materials design by enabling rapid screening of vast material libraries through first-principles calculations. This approach leverages density functional theory (DFT) to accurately predict electronic structures, stability, and reactivity without empirical parameters. The methodology involves systematically varying compositional and structural parameters to construct comprehensive databases that can be mined for materials with optimal characteristics [11]. Platforms like the Materials Project have utilized this approach to compute properties of thousands of inorganic compounds, creating invaluable resources for researchers seeking materials with specific functionalities [12]. The technical workflow typically involves automated structure generation, property calculation through DFT, and systematic data analysis, with robust workflow management systems taking care of error handling, data storage, and resource allocation.
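The shape of such a screening workflow (generate candidates, compute a property, catch failures, filter) can be sketched without any materials library. Everything in the block below is a stand-in: `mock_calculation` returns an arbitrary number rather than a DFT result, the "Xx" element is a hypothetical input used to trigger a failure, and the ABO3 enumeration is only an example of systematic composition variation.

```python
from itertools import product


def generate_candidates(a_sites, b_sites):
    """Enumerate hypothetical ABO3 formulas from two element lists."""
    return [f"{a}{b}O3" for a, b in product(a_sites, b_sites)]


def mock_calculation(formula):
    """Stand-in for a first-principles calculation (NOT real physics)."""
    if formula.startswith("Xx"):  # hypothetical input that fails to converge
        raise RuntimeError(f"calculation for {formula} did not converge")
    # Arbitrary deterministic number playing the role of a computed property.
    return (sum(ord(ch) for ch in formula) % 50) / 10.0


def screen(candidates, lower, upper):
    """Run every calculation, record failures, and keep in-range results."""
    results, failures = {}, []
    for formula in candidates:
        try:
            results[formula] = mock_calculation(formula)
        except RuntimeError as exc:
            failures.append((formula, str(exc)))  # log and continue
    hits = {f: v for f, v in results.items() if lower <= v <= upper}
    return results, hits, failures


candidates = generate_candidates(["Ca", "Sr", "Xx"], ["Ti", "Zr"])
results, hits, failures = screen(candidates, lower=1.0, upper=3.0)
print(f"{len(candidates)} candidates, {len(results)} computed, "
      f"{len(failures)} failed, {len(hits)} within target window")
```

The point of the sketch is the control flow: a failed calculation is recorded and skipped rather than aborting the batch, which is the kind of behavior a workflow manager provides at scale.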

The Materials Project, launched in 2011, exemplifies this approach, driving materials discovery through high-throughput computation and open data sharing. This platform has become an indispensable tool used by more than 600,000 materials researchers worldwide, significantly accelerating materials design through sustainable software and computational methods that are open-source and collaborative in nature [12]. The platform's infrastructure includes sophisticated data architecture, cloud resources, and interactive web applications that make complex materials data accessible to a broad research community. The technical implementation involves Python Materials Genomics (pymatgen), a robust open-source Python library for materials analysis, along with workflow systems like Atomate2 that modularize materials science computations [12].

Machine Learning and Deep Learning Approaches

Machine learning techniques have significantly enhanced the ability to predict material performance by learning complex patterns from existing data. These approaches include supervised learning methods such as support vector machines, decision trees, and Gaussian processes for material property predictions based on training data from experiments and simulations [11]. The methodology typically involves several stages: data acquisition and cleaning, feature engineering using material descriptors, model training, and validation. More recently, deep learning architectures including graph neural networks (GNNs), convolutional neural networks (CNNs), and transformers have revolutionized material informatics by capturing intricate structure-property relationships [11]. These models automatically extract complex hierarchical features from large-scale material datasets, enabling more accurate and scalable predictions.
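The stages named above (cleaning, feature selection, training, validation) can be shown on a deliberately tiny example. The sketch below uses ordinary least squares on a single descriptor as a simple stand-in for the supervised models mentioned in the text. The data are synthetic, generated from a known linear rule, and do not represent any real material property.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Synthetic records: target = 2 * descriptor + 1, plus one incomplete entry.
records = [{"descriptor": float(x), "target": 2.0 * x + 1.0} for x in range(10)]
records.append({"descriptor": 10.0, "target": None})

# 1. Data cleaning: drop records with a missing target.
clean = [r for r in records if r["target"] is not None]

# 2. Train/validation split (first 80% for training).
split = int(0.8 * len(clean))
train, valid = clean[:split], clean[split:]

# 3. Model training on the chosen descriptor.
slope, intercept = fit_line([r["descriptor"] for r in train],
                            [r["target"] for r in train])

# 4. Validation: mean absolute error on held-out records.
mae = sum(abs(slope * r["descriptor"] + intercept - r["target"])
          for r in valid) / len(valid)
print(f"slope={slope:.2f}, intercept={intercept:.2f}, validation MAE={mae:.3f}")
```

With real data the validation error would not be zero, and comparing it across model families is what allows the methods discussed here to be benchmarked against each other.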

A particularly innovative approach combines physics-informed machine learning with generative optimization for material design. This framework consists of three major components: a graph-embedded material property prediction model that integrates multi-modal data for structure-property mapping, a generative model for structure exploration using reinforcement learning, and a physics-guided constraint mechanism that ensures realistic and reliable material designs [11]. By embedding domain-specific priors into the deep learning framework, this method significantly improves prediction accuracy while maintaining physical interpretability. The technical implementation involves specialized architectures that can handle diverse material representations while incorporating physical constraints directly into the learning objective.

Large Language Models and Automated Discovery Systems

The emergence of large language models (LLMs) with advanced reasoning capabilities has opened new possibilities for autonomous discovery systems in materials science. Systems like DataVoyager demonstrate how LLMs can semantically understand datasets, programmatically explore verifiable hypotheses, run statistical tests, and analyze outputs in detail [13]. The methodology employs specialized agents—planner, programmer, data expert, and critic—designed to manage various aspects of the data-driven discovery process, along with structured functions or programs for specific data analyses [13]. The capabilities of the underlying LLM, such as function calls, code generation, and language generation, are critical for success in automating the scientific process.

Benchmarks like DiscoveryBench have been developed to systematically evaluate LLM capabilities in automated data-driven discovery. This benchmark formalizes discovery tasks as searching for relationships between variables within a specific context, where descriptions may not directly correspond to dataset language [14]. The methodology incorporates scientific semantic reasoning, including deciding on appropriate analysis techniques for specific domains, data cleaning and normalization, and mapping goal terms to dataset variables. DiscoveryBench consists of two main components: DB-REAL, with hypotheses and workflows from published scientific papers across six domains, and DB-SYNTH, a synthetically generated benchmark that allows for controlled model evaluations [14].

Experimental Protocols and Benchmarking Standards

MDBench Framework for Model Discovery

The MDBench framework provides a standardized approach for benchmarking model discovery methods on dynamical systems. This open-source benchmarking framework evaluates algorithms on differential equations, assessing 12 algorithms on 14 partial differential equations (PDEs) and 63 ordinary differential equations (ODEs) under varying noise levels [15]. The experimental protocol involves several key steps: dataset preparation with controlled noise introduction, algorithm training with standardized parameters, and comprehensive evaluation using multiple metrics. Evaluation metrics include derivative prediction accuracy, model complexity, and equation fidelity, providing a holistic view of algorithm performance [15]. The framework also introduces seven challenging PDE systems from fluid dynamics and thermodynamics specifically designed to reveal limitations in current methods.

The benchmarking process in MDBench follows rigorous statistical protocols to ensure fair comparison across methods. Each algorithm undergoes multiple runs with different random seeds to account for variability, with performance metrics aggregated across all runs. The framework tests algorithms under varying noise conditions—from clean data to significant noise contamination—to assess robustness. This systematic approach has revealed that linear methods and genetic programming methods achieve the lowest prediction error for PDEs and ODEs, respectively, and that linear models are generally more robust against noise [15].
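The protocol elements described above (controlled noise levels, repeated runs with different random seeds, and aggregated metrics) can be sketched on a toy problem. The example below is not MDBench code: it assumes a single ODE, dx/dt = -k x, a plain least-squares fit on finite-difference derivatives as the "discovery" method, and noise scaled to the standard deviation of the clean signal. All of these are illustrative choices.

```python
import math
import random
import statistics

TRUE_K = 0.5  # assumed ground-truth decay rate for dx/dt = -k * x


def simulate(noise_level, seed, n=200, dt=0.05):
    """Generate x(t) = exp(-k t) with Gaussian noise scaled to the signal's std."""
    rng = random.Random(seed)
    clean = [math.exp(-TRUE_K * i * dt) for i in range(n)]
    sigma = noise_level * statistics.pstdev(clean)
    return [x + rng.gauss(0.0, sigma) for x in clean], dt


def fit_decay_rate(xs, dt):
    """Least-squares fit of dx/dt = -k x using central finite differences."""
    dxdt = [(xs[i + 1] - xs[i - 1]) / (2 * dt) for i in range(1, len(xs) - 1)]
    mid = xs[1:-1]
    return -sum(d * x for d, x in zip(dxdt, mid)) / sum(x * x for x in mid)


def benchmark(noise_levels, seeds):
    """Aggregate the relative error of the recovered coefficient across seeds."""
    results = {}
    for level in noise_levels:
        errors = [abs(fit_decay_rate(*simulate(level, s)) - TRUE_K) / TRUE_K for s in seeds]
        results[level] = (statistics.mean(errors), statistics.pstdev(errors))
    return results


results = benchmark(noise_levels=[0.0, 0.01, 0.05, 0.10], seeds=range(5))
for level, (mean_err, std_err) in results.items():
    print(f"noise={level:.2f}  mean rel. error={mean_err:.4f}  std={std_err:.4f}")
```

Reporting both the mean and the spread across seeds makes it possible to distinguish a method that is accurate on average from one that is reliably accurate.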

Automated Synthesis Prediction Evaluation

Recent advances have focused on benchmarking automated materials synthesis prediction systems. The AlchemyBench benchmark offers an end-to-end framework that supports research in large language models applied to synthesis prediction [16]. The experimental protocol encompasses key tasks including raw materials and equipment prediction, synthesis procedure generation, and characterization outcome forecasting. The methodology employs an LLM-as-a-Judge framework that leverages large language models for automated evaluation, demonstrating strong statistical agreement with expert assessments [16]. This approach is built on a curated dataset of 17K expert-verified synthesis recipes from open-access literature, providing a robust foundation for evaluation.

The evaluation protocol in AlchemyBench involves both quantitative and qualitative assessment across multiple dimensions. For synthesis prediction, systems are evaluated on accuracy of precursor identification, reaction conditions, and procedural steps. The benchmark employs both exact match metrics and semantic similarity measures to account for syntactically different but functionally equivalent procedures. This comprehensive evaluation approach has revealed significant challenges in the field, with even state-of-the-art systems struggling with complex synthesis prediction tasks.
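The difference between exact-match scoring and a similarity-based score can be shown with a small example. This sketch does not reproduce AlchemyBench's metrics: token-set Jaccard overlap is used here only as a crude, assumed stand-in for a semantic similarity measure, and the two procedure strings are hypothetical.

```python
import re


def tokenize(text):
    """Lowercase and split a procedure into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def exact_match(predicted, reference):
    """Strict metric: token sequences must be identical."""
    return tokenize(predicted) == tokenize(reference)


def jaccard_similarity(predicted, reference):
    """Token-set overlap, used here as a rough proxy for semantic similarity."""
    a, b = set(tokenize(predicted)), set(tokenize(reference))
    return len(a & b) / len(a | b) if a | b else 1.0


# Two hypothetical procedures that describe the same steps in different words.
reference = "Mix Li2CO3 and TiO2, then calcine at 800 C for 12 h."
predicted = "Calcine the mixed Li2CO3 and TiO2 at 800 C for 12 h."

print("exact match:", exact_match(predicted, reference))
print("token overlap:", round(jaccard_similarity(predicted, reference), 2))
```

The exact-match metric rejects the prediction even though most of its content agrees with the reference, which is the situation a similarity measure is meant to capture.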

Performance Comparison of Computational Approaches

Table 1: Performance Benchmarking of Major Computational Discovery Approaches

| Method Category | Representative Platforms | Accuracy Metrics | Strengths | Limitations |

|---|---|---|---|---|

| High-Throughput DFT | Materials Project, OQMD, AFLOW | DFT formation energy accuracy: ~0.1-0.2 eV/atom [12] | High physical rigor, excellent interpretability | Computationally expensive, limited to idealized structures |

| Machine Learning Potentials | CHGNet, M3GNet, NequIP | Force prediction accuracy: ~30-50 meV/Å [12] | Near-DFT accuracy at fraction of computational cost | Transferability challenges, training data requirements |

| Symbolic Regression | PySR, SINDy, Operon | Equation recovery rate: 60-80% on clean data [15] | Interpretable models, physical insights | Struggles with high noise, limited complexity |

| LLM-Based Discovery | DataVoyager, DiscoveryBench | Task success rate: ~25% on DiscoveryBench [14] | Natural language interface, reasoning capability | Hallucination, limited mathematical rigor |

Table 2: Performance Under Noisy Conditions in Dynamical System Discovery

| Method Type | Clean Data Accuracy | Low Noise (1%) | Medium Noise (5%) | High Noise (10%) | Robustness Ranking |

|---|---|---|---|---|---|

| Linear Models (SINDy) | 92% | 88% | 75% | 52% | 1 |

| Genetic Programming (PySR) | 95% | 82% | 60% | 35% | 3 |

| Deep Learning (DeepMoD) | 88% | 80% | 65% | 45% | 2 |

| Bayesian Methods | 85% | 83% | 78% | 65% | 4 |

Research Reagent Solutions: Essential Tools for Computational Materials Discovery

Table 3: Key Research Tools and Platforms for Computational Materials Discovery

| Tool/Platform | Type | Primary Function | Domain Application |

|---|---|---|---|

| Materials Project | Database/Platform | High-throughput computed material properties | Inorganic materials, battery materials, catalysts |

| pymatgen | Software Library | Materials analysis and workflow management | Crystal structure analysis, DFT calculations |

| SINDy | Algorithm | Sparse identification of nonlinear dynamics | Dynamical systems, PDE discovery |

| PySR | Software | Symbolic regression for equation discovery | Empirical law discovery, model reduction |

| CHGNet | Pretrained Model | Universal neural network potential | Atomistic simulations, molecular dynamics |

| Atomate2 | Workflow System | Automated materials science computations | High-throughput DFT, materials screening |

| DataVoyager | LLM System | Automated hypothesis generation and testing | Cross-domain discovery, data exploration |

Workflow Visualization of Computational Discovery Approaches

High-Throughput Materials Discovery Workflow

High-Throughput Discovery Pipeline - This diagram illustrates the standardized workflow for high-throughput computational materials discovery, from initial structure generation to experimental validation.

Automated Hypothesis Discovery System

LLM-Driven Discovery Process - This workflow shows the automated hypothesis discovery process used in systems like DataVoyager, from data input to insight generation.

Comparative Analysis and Future Outlook

The benchmarking of various computational approaches reveals distinct trade-offs between accuracy, interpretability, and computational efficiency. High-throughput DFT methods provide the highest physical rigor but at significant computational cost, limiting their application to systems of moderate complexity. Machine learning potentials strike a balance between accuracy and efficiency, enabling molecular dynamics simulations at scales previously impossible with DFT alone. Symbolic regression methods excel in interpretability, producing human-readable models that provide physical insights, though they struggle with high-dimensional problems and noisy data. LLM-based approaches offer unprecedented natural language interaction capabilities but currently face challenges in mathematical rigor and reliability [15] [14] [12].

The integration of these approaches into hybrid frameworks represents the most promising direction for future development. Combining the physical rigor of DFT with the efficiency of machine learning, while leveraging LLMs for interface and reasoning capabilities, could overcome the limitations of individual methods. The Materials Project's evolution toward more accessible and easy-to-understand materials data exemplifies the trend toward democratizing materials knowledge and fostering collaborative communities [12]. As these technologies mature, we can anticipate increasingly automated discovery systems that not only assist researchers but actively drive the scientific process, potentially leading to accelerated innovation across multiple domains of materials science.

Future developments will likely focus on addressing current limitations in generalization, interpretability, and robustness. For machine learning approaches, this means developing more transferable models that can accurately predict properties for novel material classes outside their training distribution. For automated discovery systems, improving mathematical reasoning and reducing hallucination will be critical for scientific applications. The integration of real-time experimental feedback into computational frameworks represents another important frontier, creating closed-loop discovery systems that continuously refine their predictions based on laboratory results. As these advances materialize, the pace of materials discovery is poised to accelerate dramatically, potentially transforming how we develop materials for energy storage, electronics, healthcare, and countless other applications.

Key Performance Indicators for Benchmarking Synthesis Approaches

Benchmarking synthesis approaches is a cornerstone of modern materials science, providing a systematic framework to evaluate and compare the performance of diverse synthesis methodologies. As the pace of materials discovery accelerates, rigorous benchmarking has become indispensable for validating new synthesis protocols, guiding experimental efforts, and ensuring reproducibility across laboratories. This process relies on the precise definition and application of Key Performance Indicators (KPIs)—quantifiable metrics that objectively measure the efficiency, effectiveness, and overall success of synthesis methods. For researchers, scientists, and drug development professionals, selecting appropriate KPIs is crucial for moving beyond qualitative assessments to data-driven decision-making. This guide provides a comparative analysis of contemporary synthesis benchmarking frameworks, detailing their core KPIs, experimental protocols, and underlying methodologies to establish a standardized approach for evaluating synthesis performance across the materials science landscape.

Comparative Analysis of Benchmarking Frameworks

The evaluation of synthesis approaches spans multiple methodologies, from automated machine learning (ML) pipelines to human-in-the-loop systems. The table below summarizes the primary KPIs and the contexts in which they are most effectively applied.

Table 1: Key Performance Indicators for Synthesis Benchmarking

| Benchmarking Framework | Primary Application Context | Key Performance Indicators (KPIs) | Data Modality |

|---|---|---|---|

| Matbench [17] | General-purpose ML for materials property prediction | Mean Absolute Error (MAE); Root Mean Squared Error (RMSE); cross-validation scores; generalization error on hold-out sets | Composition, Crystal Structure |

| JARVIS-Leaderboard [18] | Comprehensive materials design (AI, Electronic Structure, Force-fields, QC, Experiments) | Reproducibility rate; computational cost/time; accuracy vs. experimental validation; property prediction error (e.g., bandgap) | Atomic Structures, Spectra, Images, Text |

| Synthetic Data Integration [19] | ML training with privacy and data scarcity challenges | Accuracy (vs. real data characteristics); diversity of generated scenarios; realism (ability to generalize to real tasks); bias metrics in synthetic datasets | Computer Vision, Text, Tabular Data |

| Language Models (LMs) for Synthesis [20] | Inorganic synthesis planning (precursor & condition prediction) | Top-1/Top-5 precursor-prediction accuracy; Mean Absolute Error (MAE) for temperature prediction (e.g., below 126 °C for calcination and sintering); inference cost per prediction | Text-based scientific literature |

| ML-Guided Experimental Design [21] | Nanomaterial synthesis (e.g., TiO2 nanoparticles) | Predictive accuracy for size, polydispersity, aspect ratio; model performance vs. classical regression; achievement of target morphology (e.g., aspect ratio 1.4 to 6) | Experimental process parameters (concentration, pH, temperature) |

Each framework employs a distinct set of KPIs tailored to its specific objectives. For instance, Matbench and the JARVIS-Leaderboard utilize classical error metrics like MAE to evaluate predictive accuracy across a wide range of material properties [18] [17]. In contrast, frameworks incorporating synthetic data or language models must also assess the quality and diversity of the generated data itself, alongside the final model's predictive power [19] [20]. For direct experimental synthesis, as in nanomaterial design, KPIs directly reflect target product characteristics such as size, shape, and polydispersity [21].

Experimental Protocols for KPI Evaluation

To ensure KPIs are measured consistently and reproducibly, standardized experimental protocols are essential. The following section details the methodologies underpinning the KPIs described in the previous section.

Protocol for Benchmarking Automated ML Pipelines

The Matbench protocol provides a robust method for evaluating ML models on materials property prediction tasks [17].

- Dataset Curation: The test suite comprises 13 supervised ML tasks sourced from 10 DFT-derived and experimental databases. Tasks include predicting optical, thermal, electronic, and mechanical properties. Datasets are pre-cleaned to remove unphysical data and are used as-is to ensure consistent comparisons.

- Nested Cross-Validation (NCV): A nested cross-validation procedure is employed to mitigate model selection bias.

  - Outer Loop: The data is split into training and test sets to estimate the generalization error.

  - Inner Loop: The training set is further split to perform hyperparameter tuning.

- Reference Algorithm (Automatminer): The benchmarking process uses an automated pipeline (Automatminer) as a reference. This pipeline performs autofeaturization using published featurizations, cleans the feature matrix, performs dimensionality reduction, and automatically selects and tunes the best ML model [17].

- KPI Calculation: The final model performance is evaluated on the held-out test set from the outer NCV loop, reporting metrics such as MAE and RMSE.
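The nested cross-validation steps listed above can be sketched in a few lines of standard-library Python. This is an illustration of the general technique, not the Matbench or Automatminer implementation: the one-dimensional k-nearest-neighbour model, the hyperparameter grid, the fold counts, and the synthetic data are all assumptions.

```python
import random
import statistics


def k_folds(indices, n_folds):
    """Split indices into n_folds (train, test) pairs."""
    folds = [indices[i::n_folds] for i in range(n_folds)]
    return [([j for f in folds if f is not fold for j in f], fold) for fold in folds]


def knn_predict(train, x, k):
    """1-D k-nearest-neighbour regression (a stand-in for any tunable model)."""
    nearest = sorted(train, key=lambda p: abs(p[0] - x))[:k]
    return statistics.mean(y for _, y in nearest)


def mae(train, test, k):
    """Mean absolute error of the k-NN model on a test split."""
    return statistics.mean(abs(knn_predict(train, x, k) - y) for x, y in test)


def nested_cv(data, k_grid, outer=5, inner=3):
    """Outer loop estimates generalization error; inner loop tunes k."""
    outer_scores = []
    for train_idx, test_idx in k_folds(list(range(len(data))), outer):
        # Inner loop: choose k using only the outer-training portion.
        def inner_score(k):
            return statistics.mean(
                mae([data[i] for i in tr], [data[i] for i in va], k)
                for tr, va in k_folds(train_idx, inner)
            )
        best_k = min(k_grid, key=inner_score)
        # Outer loop: fit on all outer-training data, score on the held-out fold.
        outer_scores.append(
            mae([data[i] for i in train_idx], [data[i] for i in test_idx], best_k)
        )
    return statistics.mean(outer_scores), outer_scores


rng = random.Random(0)
# Synthetic data (assumption): y = 2x plus small Gaussian noise.
data = [(x, 2 * x + rng.gauss(0, 0.1)) for x in (rng.uniform(0, 1) for _ in range(60))]
score, per_fold = nested_cv(data, k_grid=[1, 3, 5, 9])
print(f"nested-CV MAE: {score:.3f}")
```

Because the held-out fold of the outer loop is never seen during hyperparameter selection, the reported error is not biased by the tuning step.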

Protocol for Evaluating Synthesis Prediction with Language Models

A recent study benchmarked state-of-the-art language models (LMs) on inorganic solid-state synthesis tasks, establishing this protocol [20].

- Test Dataset Curation: A held-out test set of 1,000 synthesis reactions is curated from a literature-mined database (e.g., derived from Kononova et al.).

- Task-Specific Prompting:

  - Precursor Recommendation: Models are prompted to predict precursor sets for a target material without specifying the number of precursors required. Performance is evaluated using Top-1 and Top-5 exact-match accuracy.

  - Condition Prediction: For tasks like predicting calcination and sintering temperatures, the LM's performance is measured using Mean Absolute Error (MAE) in degrees Celsius.

- In-Context Learning: Models are provided with approximately 40 in-context examples from a validation set to guide their predictions without task-specific fine-tuning [20].

- Ensembling: Predictions from multiple LMs (e.g., GPT-4.1, Gemini 2.0 Flash) are ensembled to enhance accuracy and reduce inference costs.
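The two KPIs named in this protocol, Top-K exact-match accuracy for precursor sets and MAE for temperatures, can be computed as follows. The sketch assumes that precursor sets are compared as unordered sets; the ranked predictions, reference sets, and temperatures are hypothetical values, not results from [20].

```python
def top_k_accuracy(ranked_predictions, references, k):
    """Fraction of targets whose reference precursor set appears in the top-k list.

    Precursor sets are compared as unordered sets, so ordering within a set is ignored.
    """
    hits = sum(
        frozenset(ref) in [frozenset(p) for p in preds[:k]]
        for preds, ref in zip(ranked_predictions, references)
    )
    return hits / len(references)


def mean_absolute_error(predicted, actual):
    """Average absolute deviation, in the same units as the inputs."""
    return sum(abs(p - a) for p, a in zip(predicted, actual)) / len(actual)


# Hypothetical ranked model outputs for two targets (illustrative only).
ranked = [
    [["BaCO3", "TiO2"], ["BaO", "TiO2"]],
    [["Li2O", "CoO"], ["Co3O4", "Li2CO3"]],
]
refs = [["TiO2", "BaCO3"], ["Li2CO3", "Co3O4"]]

print("Top-1:", top_k_accuracy(ranked, refs, k=1))
print("Top-5:", top_k_accuracy(ranked, refs, k=5))
print("MAE (°C):", mean_absolute_error([850.0, 1100.0], [900.0, 1000.0]))
```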

Protocol for ML-Guided Nanomaterial Synthesis

This protocol, used for predicting TiO2 nanoparticle morphology, combines experimental design with machine learning [21].

- Experimental Design: A Response Surface Methodology, specifically a Box-Wilson Central Composite Design (CCD), is employed. This design efficiently explores the effect of four independent factors over a wide range:

  - Z1: Precursor concentration (e.g., 30-120 mM [Ti(TeoaH)2])

  - Z2: Shape controller concentration (e.g., 0-70 mM TeoaH3)

  - Z3: Initial pH (e.g., 8.7-12)

  - Z4: Reaction temperature (e.g., 135-220 °C)

- Characterization and Response Measurement: The synthesized nanoparticles are characterized to measure the target responses:

  - Y1: Hydrodynamic radius (RH) via Dynamic Light Scattering (DLS)

  - Y2: Polydispersity (as a percentage, derived from DLS)

  - Y3: Aspect ratio (p), determined by fitting an ellipse to particle boundaries in electron microscopy images.

- Model Training and Validation: An Artificial Neural Network (ANN) is trained on the experimental data to predict the outcomes (Y1, Y2, Y3) from the input parameters (Z1-Z4). The model's accuracy is quantified by its error in predicting size, polydispersity, and aspect ratio on validation experiments. A reverse engineering approach is then used to identify optimal synthesis parameters for a desired nanoparticle characteristic [21].
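To make the experimental design step concrete, the sketch below generates the run list of a central composite design for the four factors listed above. Several details are assumptions rather than facts from [21]: the design is taken to be rotatable (axial distance alpha = (2^k)^(1/4)), a single centre point is used, and the quoted range limits are mapped to the axial points.

```python
from itertools import product

# Factor ranges quoted in the protocol above (low, high).
FACTORS = {
    "Z1_precursor_mM": (30.0, 120.0),
    "Z2_shape_controller_mM": (0.0, 70.0),
    "Z3_initial_pH": (8.7, 12.0),
    "Z4_temperature_C": (135.0, 220.0),
}


def central_composite_design(n_factors, n_center=1):
    """Coded CCD points: 2^k factorial corners, 2k axial points, centre replicates."""
    alpha = (2 ** n_factors) ** 0.25  # rotatable design (assumption)
    corners = [list(p) for p in product((-1.0, 1.0), repeat=n_factors)]
    axial = []
    for i in range(n_factors):
        for level in (-alpha, alpha):
            point = [0.0] * n_factors
            point[i] = level
            axial.append(point)
    centre = [[0.0] * n_factors for _ in range(n_center)]
    return corners + axial + centre, alpha


def decode(point, alpha):
    """Map coded levels to real units so that +/-alpha lands on the range limits."""
    real = {}
    for coded, (name, (low, high)) in zip(point, FACTORS.items()):
        mid, half = (low + high) / 2, (high - low) / 2
        real[name] = mid + coded * half / alpha
    return real


design, alpha = central_composite_design(len(FACTORS))
runs = [decode(p, alpha) for p in design]
print(len(runs), "runs; first run:", runs[0])
```

With four factors this yields 16 factorial, 8 axial, and 1 centre run, far fewer than a full grid at five levels per factor, which is the efficiency argument for using a CCD.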

The logical workflow for this multi-faceted benchmarking is outlined below.

Figure 1: A multi-faceted benchmarking workflow for synthesis approaches, integrating automated ML, language models, and experimental design.

The Scientist's Toolkit: Essential Reagents & Materials

Successful execution of the described experimental protocols requires specific reagents and computational tools. The following table details key solutions and their functions in synthesis benchmarking.

Table 2: Essential Research Reagent Solutions for Synthesis Benchmarking

| Research Reagent / Tool | Function in Benchmarking Protocol | Example Application Context |

|---|---|---|

| Titanatrane Precursor ([Ti(TeoaH)₂]) | Primary titanium source for controlled hydrothermal synthesis of anatase TiO₂ nanoparticles. | ML-guided nanomaterial synthesis [21]. |

| Triethanolamine (TeoaH₃) | Shape-controlling agent; modulates crystal growth and aspect ratio by selective surface binding. | Experimental design for nanoparticle morphology [21]. |

| Matbench Test Suite | A curated set of 13 ML tasks providing standardized datasets for benchmarking predictive models. | General-purpose ML for materials property prediction [17]. |

| Pretrained Language Models (e.g., GPT-4.1, Gemini 2.0 Flash) | Recall and predict synthesis protocols from vast chemical knowledge in their training corpora. | Inorganic synthesis planning (precursor & condition prediction) [20]. |

| Synthetic Data Generators (e.g., GANs, VAEs) | Generate artificial datasets to augment training data, addressing scarcity, privacy, and cost issues. | Training ML models for autonomous vehicles, healthcare [19]. |

| JARVIS-Leaderboard Platform | An open-source, community-driven platform for benchmarking across multiple data modalities and methods. | Comprehensive materials design (AI, FF, ES, QC, EXP) [18]. |

The rigorous benchmarking of synthesis approaches is fundamental to advancing materials science and drug development. As this guide illustrates, a suite of well-defined KPIs—from predictive accuracy metrics like MAE to material-specific outcomes like aspect ratio—provides the objective foundation for comparing diverse methodologies. Frameworks such as Matbench and the JARVIS-Leaderboard offer standardized protocols for fair evaluation, while emerging technologies like language models and synthetic data generation are creating new paradigms for data-driven synthesis planning. For researchers, the critical takeaway is that the choice of KPIs must be directly aligned with the benchmarking objective, whether it is validating a computational model, optimizing an experimental synthesis parameter, or ensuring generated data is both private and useful. By adhering to the detailed protocols and utilizing the essential tools outlined herein, the scientific community can continue to enhance the reproducibility, efficiency, and overall success of materials synthesis.

Methodologies in Action: From Theory to Biomedical Application

Hybrid Synthesis Strategies for Enhanced Control and Purity

The pursuit of novel materials and pharmaceutical compounds with tailored properties represents a cornerstone of modern scientific advancement. However, traditional synthesis methods, often reliant on iterative trial-and-error or purely empirical approaches, face significant challenges in terms of time, cost, and achieving desired purity and performance. In response, hybrid synthesis strategies have emerged as a transformative paradigm, integrating complementary methodologies to overcome the limitations of individual techniques. This guide benchmarks the performance of various hybrid approaches against traditional and standalone alternatives, providing a structured comparison of their efficacy in enhancing control over synthesis outcomes and final product purity. Framed within a broader thesis on benchmarking synthesis approaches, this analysis leverages quantitative data and detailed experimental protocols to offer researchers, scientists, and drug development professionals a clear, evidence-based resource for strategic decision-making.

Comparative Analysis of Hybrid Synthesis Performance

The integration of disparate methodologies into a cohesive hybrid workflow has demonstrated significant advantages across multiple domains, from inorganic materials to pharmaceutical development. The quantitative performance data, summarized in the table below, highlights the measurable benefits of these integrated approaches.

Table 1: Performance Benchmarking of Synthesis Approaches

| Synthesis Strategy | Application Domain | Key Performance Metric | Reported Result | Comparative Advantage |

|---|---|---|---|---|

| LM-Enhanced Planning [20] | Inorganic Solid-State Materials | Precursor Prediction Accuracy (Top-1) | 53.8% | Surpasses heuristic and specialized ML models trained on limited data [20]. |

| LM-Enhanced Planning [20] | Inorganic Solid-State Materials | Calcination Temperature Prediction | MAE: <126 °C | Matches the performance of specialized regression methods [20]. |

| Hybrid MTE Model (SyntMTE) [20] | Inorganic Solid-State Materials | Sintering Temperature Prediction | MAE: 73 °C | Outperforms baseline models by up to 8.7% after training on LM-augmented data [20]. |

| Optimal Experimental Design [22] | Methanol Synthesis (Chemical Engineering) | Kinetic Model Quality | Significant Improvement | Enhanced quality of the kinetic model needed for advanced process control and optimization [22]. |

| Tetracycline Hybrids [23] | Pharmaceutical Antibiotics | Antibacterial Activity (e.g., S. aureus) | More potent than Minocycline | Overcomes bacterial resistance mechanisms; multiple hybrids show enhanced potency [23]. |

| Solvent-Free Curcuminoid Synthesis [24] | Organic/Pharmaceutical Synthesis | Product Yield | Moderate to Excellent | Green protocol with good functional group tolerance and minimal workup [24]. |

The data reveals a consistent theme: hybrid strategies mitigate the core bottlenecks of their respective fields. In materials science, the principal challenge is the scarcity of high-quality synthesis data. By using language models (LMs) to generate synthetic yet plausible reaction recipes, the SyntMTE model was pretrained on a dataset of 28,548 entries, a 616% increase over existing solid-state synthesis datasets, which directly contributed to its superior predictive accuracy [20]. In pharmaceuticals, the challenge is biological efficacy and resistance. Tetracycline hybrids, created by conjugating minocycline with natural aldehydes and ketones, successfully target multiple bacterial pathways, demonstrating potency against resistant strains where the parent antibiotic fails [23].

Experimental Protocols for Key Hybrid Workflows

Data-Augmented Synthesis Planning for Inorganic Materials

This protocol outlines the hybrid workflow combining language models (LMs) and specialized transformer models for predicting synthesis conditions [20].

- Step 1: Benchmarking Off-the-Shelf LMs: A test dataset of 1,000 held-out synthesis recipes was curated. Prompts containing 40 in-context examples were submitted to various LMs (e.g., GPT-4.1, Gemini 2.0 Flash) via OpenRouter. The models were tasked with predicting precursor sets and reaction temperatures without task-specific fine-tuning. Performance was evaluated using Top-K exact-match accuracy for precursors and mean absolute error (MAE) for temperatures [20].

- Step 2: Ensemble LM Predictions: Predictions from multiple LMs were combined into an ensemble. This step was shown to enhance predictive accuracy and reduce inference cost per prediction by up to 70% [20].

- Step 3: Synthetic Data Generation and Model Training: The best-performing LMs were employed to generate 28,548 synthetic solid-state synthesis recipes. These were combined with literature-mined data to pretrain a specialized transformer-based model (SyntMTE). The SyntMTE model was then fine-tuned on the combined dataset [20].

- Step 4: Validation: The model's performance was validated on a separate test set and in a case study on Li₇La₃Zr₂O₁₂ solid-state electrolytes, where it successfully reproduced experimentally observed dopant-dependent sintering trends [20].
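The ensembling idea in Step 2 can be illustrated with a simple aggregation rule. The source does not specify how predictions were combined, so the majority vote over precursor sets and the median over temperatures used below are assumptions, and the model outputs are hypothetical.

```python
from collections import Counter
from statistics import median


def ensemble_precursors(predictions):
    """Majority vote over precursor sets proposed by different models."""
    votes = Counter(frozenset(p) for p in predictions)
    winner, _ = votes.most_common(1)[0]
    return sorted(winner)


def ensemble_temperature(predictions):
    """The median is less sensitive than the mean to a single outlying model."""
    return median(predictions)


# Hypothetical outputs from three models for one target (illustrative only).
precursor_votes = [
    ["La2O3", "Li2CO3", "ZrO2"],
    ["ZrO2", "La2O3", "Li2CO3"],
    ["LiOH", "La2O3", "ZrO2"],
]
temperature_votes = [1150.0, 1200.0, 900.0]

print(ensemble_precursors(precursor_votes))
print(ensemble_temperature(temperature_votes))
```

Treating each precursor list as an unordered set lets two models that name the same precursors in a different order count as agreeing.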

The workflow for this protocol is visualized below.

Development and Evaluation of Antibiotic Hybrids

This protocol details the synthesis, in-silico analysis, and in-vitro testing of novel tetracycline hybrids, a key strategy to combat antibiotic resistance [23].

- Step 1: Synthesis of Hybrids: Minocycline hydrochloride was used as the starting material. The synthesis focused on modifying the 9th position of minocycline. Ten hybrids were created by covalently linking 9-aminominocycline with various natural and synthetic aldehydes/ketones. Purification was performed using preparative HPLC with a C18 column and a mobile phase of water (pH 3.0) and acetonitrile [23].

- Step 2: Molecular Docking: The three-dimensional crystal structures of target proteins from pathogens like S. aureus (PDB ID: 6TTG) and E. coli (PDB ID: 4DUH) were retrieved from the RCSB protein data bank. The synthesized compounds were sketched and energy-minimized using SYBYL-X 2.1 software. The Surflex-Dock module was used to dock these compounds into the binding sites of the enzymes to predict binding affinity and hydrogen-bond interactions [23].

- Step 3: Molecular Dynamics Simulation: Selected protein-ligand complexes were simulated using SYBYL-X 2.1 software for a duration of 0–1000 femtoseconds. The simulation captured conformation snapshots to analyze the stability and behavior of the biomolecular complex [23].

- Step 4: In-vitro Antibacterial Activity: The synthesized hybrids were evaluated in vitro against Gram-positive (Enterococcus faecalis, Staphylococcus aureus) and Gram-negative bacteria (Klebsiella pneumoniae, Pseudomonas aeruginosa, Escherichia coli) using standard protocols. Minimum Inhibitory Concentration (MIC) was determined and compared to the standard drug minocycline [23].
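The comparison in Step 4 rests on reading a Minimum Inhibitory Concentration from a dilution series and relating it to the reference drug. The sketch below shows that bookkeeping only; the dilution series, growth readouts, and resulting values are hypothetical and are not data from [23].

```python
def minimum_inhibitory_concentration(growth_by_concentration):
    """Return the lowest tested concentration with no visible growth, or None."""
    inhibitory = [c for c, grew in growth_by_concentration.items() if not grew]
    return min(inhibitory) if inhibitory else None


def fold_change_vs_reference(mic_compound, mic_reference):
    """Values above 1 mean the compound inhibits at a lower concentration."""
    return mic_reference / mic_compound


# Hypothetical two-fold dilution series in µg/mL (True = visible growth).
hybrid = {0.25: True, 0.5: True, 1.0: False, 2.0: False, 4.0: False}
reference = {0.25: True, 0.5: True, 1.0: True, 2.0: True, 4.0: False}

mic_hybrid = minimum_inhibitory_concentration(hybrid)
mic_reference = minimum_inhibitory_concentration(reference)
print("MIC hybrid:", mic_hybrid, "MIC reference:", mic_reference)
print("fold change:", fold_change_vs_reference(mic_hybrid, mic_reference))
```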

The logical relationship of this multi-stage validation protocol is shown in the following diagram.

The Scientist's Toolkit: Essential Research Reagents & Materials

Successful implementation of hybrid synthesis strategies relies on a suite of specialized reagents, materials, and computational tools. The following table catalogs key solutions referenced in the featured experimental protocols.

Table 2: Key Research Reagent Solutions in Hybrid Synthesis

| Reagent / Material / Tool | Function in Hybrid Synthesis | Example Application |

|---|---|---|

| CuO/ZnO/Al₂O₃ Catalyst [22] | Heterogeneous catalyst for methanol synthesis. | Used in a Berty-type reactor to investigate reaction kinetics under dynamic conditions for hybrid model calibration [22]. |

| HATU (Hexafluorophosphate Azabenzotriazole Tetramethyl Uronium) [25] | Coupling reagent for amide bond formation in peptide synthesis. | Employed in solid-phase peptide synthesis (SPPS) for constructing linear precursors of cyclic peptides like himastatin [25]. |

| Preparative HPLC with C18 Column [23] | High-performance purification technique for complex molecules. | Critical for the purification of synthesized tetracycline hybrids before biological evaluation [23]. |

| Boric Oxide / Borate Esters [24] | Complexation agent to control reactivity and regioselectivity in diketone condensations. | Key reagent in the solvent-free, green synthesis of curcuminoids, enabling high yields and functional group tolerance [24]. |

| SYBYL-X Software Suite [23] | Integrated software for molecular modeling, docking, and simulation. | Used for energy minimization, molecular docking studies, and molecular dynamics simulations of tetracycline hybrids [23]. |

| Language Models (e.g., GPT-4.1) [20] | Knowledge retrieval and synthetic data generation for planning. | Used to recall synthesis conditions and generate synthetic reaction recipes to augment limited experimental datasets [20]. |

The empirical data and methodologies presented in this guide indicate that hybrid synthesis strategies can enhance control and purity in both materials science and pharmaceutical development. The integration of computational intelligence (from LMs and optimal design to molecular docking) with experimental science addresses several limitations of traditional approaches. Whether by dramatically expanding the available data for training predictive models or by rationally designing molecules with multi-target efficacy, these hybrid workflows offer a more efficient, precise, and actionable path from concept to validated product. For researchers benchmarking synthesis approaches, the evidence suggests that the future of discovery and development lies in the continued fusion and refinement of these hybrid techniques.

AI-Driven Synthesis Planning for Drug Analogs and Organic Molecules

The discovery and synthesis of novel organic molecules and drug analogs are foundational to pharmaceutical and materials innovation. Traditional synthesis planning, reliant on manual experimentation and expert intuition, is often a time-consuming, resource-intensive process characterized by low success rates and prolonged development timelines [26] [27]. Artificial intelligence (AI) has emerged as a transformative force, introducing data-driven methodologies that are redefining the landscape of retrosynthetic analysis and reaction optimization [26] [28].

This guide provides a comparative benchmark of modern AI-driven synthesis planning technologies. It objectively evaluates the performance of leading computational frameworks and autonomous platforms against traditional methods and among themselves, focusing on key metrics such as search efficiency, success rate in finding viable pathways, and experimental performance of proposed syntheses. The analysis is structured to equip researchers and drug development professionals with the data needed to select appropriate tools for their specific discovery pipelines.

Comparative Performance Analysis of AI Synthesis Platforms

The performance of AI-driven synthesis tools can be evaluated along two primary dimensions: (1) the computational efficiency and success of in silico pathway planning, and (2) the experimental performance of the proposed routes in a laboratory setting. The following tables summarize quantitative benchmarking data and key characteristics of the leading approaches.

Table 1: Benchmarking of Computational Synthesis Planning Frameworks on Retrosynthesis Tasks

| Framework | Core Approach | Reported Solve Rate | Search Efficiency (vs. Baselines) | Key Metric / Highlight |
|---|---|---|---|---|
| AOT* [29] | LLM + AND-OR Tree Search | State-of-the-art on multiple benchmarks | 3-5x fewer iterations required | Superior on complex molecular targets |
| Retro* [29] | Neural-guided A* AND-OR Search | High (baseline for comparisons) | Baseline (1x) | Foundational AND-OR tree search algorithm |
| MCTS [29] | Monte Carlo Tree Search | High | Lower than AOT* | Pioneering neural-guided search |
| LLM-Syn-Planner [29] | Evolutionary Algorithms + LLMs | Competitive | Lower than AOT* | Uses mutation operators to refine routes |
| DeepRetro [29] | Iterative LLM Reasoning + Validation | High (with human feedback) | Not Specified | Integrates chemical validation and human feedback |

Table 2: Experimental Performance of AI-Proposed and AI-Optimized Syntheses

| System / Platform | Type | Molecule / Reaction | Reported Experimental Outcome |
|---|---|---|---|
| AI Robotic Chemist [30] | Autonomous Lab System | Three Organic Compounds | Conversion rates outperformed existing literature references |
| Chemspeed SWING [27] | High-Throughput Batch Platform | Stereoselective Suzuki–Miyaura Couplings | 192 reactions completed in 4 days (high throughput) |
| Custom Mobile Robot [27] | Automated Experimentation | Hydrogen Evolution Reaction | Achieved H₂ rate of 21.05 µmol·h⁻¹ via 10D parameter search in 8 days |
| Portable Synthesis Platform [27] | Custom Automated System | Small Molecules, Oligopeptides, Oligonucleotides | Synthesized 13 molecules in high purity and yield |

Key Performance Insights

- Search Efficiency is Critical: For computational retrosynthesis, the efficiency of the search algorithm directly impacts the time and cost required to find a viable pathway. The AOT* framework demonstrates a significant advance, achieving state-of-the-art solve rates using 3-5 times fewer iterations than other LLM-based approaches [29]. This advantage is particularly pronounced for complex molecular targets where the search space is vast.

- Closing the Loop with Experimentation: The ultimate validation of a synthesis plan is its success in the lab. Autonomous systems that integrate AI planning with robotic execution have shown that they can match and, in reported cases, exceed human-reported results. The AI robotic chemist, for instance, iteratively refined synthetic recipes based on experimental feedback and achieved conversion rates for three organic compounds that outperformed existing literature references [30].

- The Throughput vs. Flexibility Trade-off: Commercial high-throughput experimentation (HTE) platforms like Chemspeed excel at screening many reaction conditions in parallel (e.g., 192 reactions in 4 days) [27]. In contrast, custom-built robotic systems, while potentially more complex to develop, can be tailored to link disparate experimental stations and tackle multi-dimensional optimization problems (e.g., a 10-dimensional parameter search completed in 8 days) that are infeasible with standard tools [27].
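To make the AND-OR tree structure used by frameworks such as Retro* and AOT* more concrete, the following minimal sketch checks whether a target can be traced back to available building blocks. A molecule is an OR node (any one reaction that produces it is enough) and a reaction is an AND node (all of its reactants must be solved). The molecule names, the `REACTIONS` dictionary, and the `is_solved` helper are hypothetical, and the exhaustive depth-first traversal shown here stands in for the neural- or LLM-guided search used by the cited frameworks.

```python
from typing import Dict, List, Set

# Hypothetical toy data: each molecule maps to alternative reactions (OR),
# and each reaction lists the reactants it requires (AND).
REACTIONS: Dict[str, List[List[str]]] = {
    "target": [["intermediate_A", "block_1"], ["intermediate_B"]],
    "intermediate_A": [["block_2", "block_3"]],
    "intermediate_B": [["unavailable_X"]],
}
BUILDING_BLOCKS: Set[str] = {"block_1", "block_2", "block_3"}


def is_solved(molecule: str, depth: int = 0, max_depth: int = 10) -> bool:
    """Return True if the molecule can be traced back to building blocks."""
    if molecule in BUILDING_BLOCKS:
        return True  # leaf node: available starting material
    if depth >= max_depth:
        return False  # guard against runaway recursion on cyclic data
    # OR over candidate reactions, AND over the reactants of each reaction.
    return any(
        all(is_solved(reactant, depth + 1, max_depth) for reactant in reactants)
        for reactants in REACTIONS.get(molecule, [])
    )
```

In this toy example, `is_solved("target")` returns `True` through the first reaction, while `is_solved("intermediate_B")` returns `False` because its only precursor is neither a building block nor the product of a known reaction.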

Experimental Protocols for Benchmarking AI Synthesis Tools

To ensure fair and reproducible comparisons between different AI-driven synthesis approaches, benchmarking must follow standardized experimental and computational protocols. The methodologies below are derived from the cited literature.

Protocol 1: Computational Retrosynthesis Planning

This protocol is used to evaluate the performance of frameworks like AOT* and Retro* in identifying viable synthetic pathways for target molecules [29].

- Input Definition: The target molecule is specified using a standard representation (e.g., SMILES string). A set of commercially available building blocks (e.g., ZINC database) is defined as the allowed starting materials.

- Pathway Generation: The AI model executes its search algorithm (e.g., AND-OR tree search, evolutionary algorithm) to generate one or more complete retrosynthetic pathways ending at the available building blocks.

- Evaluation Metrics:

  - Solve Rate: The percentage of target molecules for which the framework can find any valid synthetic pathway within a fixed computational budget (e.g., a maximum number of search iterations).

  - Search Efficiency: The average number of search iterations, model inferences, or computational time required to find the first valid pathway.

  - Pathway Quality: Post-hoc analysis of successful pathways for attributes like number of steps, cumulative yield, and cost. This often requires external scoring functions.
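The first two metrics can be computed directly from per-target search logs. The sketch below is one possible implementation, assuming a hypothetical `SearchResult` record that stores the iteration at which the first valid route was found (or `None` if none was found). The example targets and iteration counts are made up for illustration and do not come from the cited benchmarks.

```python
from dataclasses import dataclass
from statistics import mean
from typing import List, Optional


@dataclass
class SearchResult:
    """Outcome of one planning run (hypothetical record format)."""
    target_smiles: str
    iterations_to_first_route: Optional[int]  # None if no route was found


def _solved_iterations(results: List[SearchResult], budget: int) -> List[int]:
    """Iteration counts of the targets solved within the budget."""
    return [
        r.iterations_to_first_route
        for r in results
        if r.iterations_to_first_route is not None
        and r.iterations_to_first_route <= budget
    ]


def solve_rate(results: List[SearchResult], budget: int) -> float:
    """Percentage of targets with a valid route found within the budget."""
    if not results:
        raise ValueError("results must not be empty")
    return 100.0 * len(_solved_iterations(results, budget)) / len(results)


def mean_iterations_to_solve(
    results: List[SearchResult], budget: int
) -> Optional[float]:
    """Average iterations to the first route, over solved targets only."""
    solved = _solved_iterations(results, budget)
    return mean(solved) if solved else None


# Made-up example log: two targets solved within 100 iterations, two not.
EXAMPLE_RESULTS = [
    SearchResult("CCO", 12),
    SearchResult("c1ccccc1", 40),
    SearchResult("CC(=O)O", None),
    SearchResult("CCN", 150),
]
```

With a budget of 100 iterations, `solve_rate(EXAMPLE_RESULTS, 100)` gives 50.0 and `mean_iterations_to_solve(EXAMPLE_RESULTS, 100)` gives 26. Because the efficiency average covers solved targets only, it should be reported alongside the solve rate so that a framework cannot appear efficient simply by solving only easy targets.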

Protocol 2: Closed-Loop Synthesis Optimization

This protocol validates AI-proposed pathways and optimizes reaction conditions through autonomous experimentation, as seen in [30] and [27].

- Initial Proposal: The AI system proposes an initial synthetic pathway and a set of starting reaction conditions (e.g., temperature, catalyst, solvent, concentration).

- Automated Execution: A robotic platform prepares the reaction mixture in an automated reactor, controls the reaction parameters, and allows the reaction to proceed.

- In-line Analysis: An integrated analytical tool (e.g., HPLC, GC-MS, NMR) monitors reaction progress and quantifies output (e.g., conversion, yield, selectivity).

- Iterative Optimization: The AI model uses the experimental outcome as feedback. It employs an optimization algorithm (e.g., Bayesian Optimization) to propose the next, improved set of reaction conditions. This design-make-test-analyze (DMTA) cycle repeats automatically until a performance target is met or the experimental budget is exhausted.
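The control flow of these four steps can be sketched as a simple loop. In the example below, `simulated_experiment` is a hypothetical stand-in for robotic execution plus in-line analysis (it returns a made-up yield), and `propose_next` uses a random perturbation of the best-known conditions as a placeholder for Bayesian optimization. Neither function reflects the systems described in [30] or [27]; the sketch only illustrates the stopping logic (target met or budget exhausted).

```python
import random
from typing import Callable, Dict, Tuple

Conditions = Dict[str, float]


def simulated_experiment(c: Conditions) -> float:
    """Hypothetical stand-in for robot execution and in-line analysis.

    Returns a made-up yield (%) that peaks at 80 degC and 0.5 M.
    """
    penalty = (0.02 * (c["temperature_c"] - 80.0) ** 2
               + 100.0 * (c["concentration_m"] - 0.5) ** 2)
    return max(0.0, 95.0 - penalty)


def propose_next(best: Conditions, rng: random.Random) -> Conditions:
    """Perturb the best-known conditions within fixed bounds.

    Placeholder for a Bayesian optimization step.
    """
    temp = best["temperature_c"] + rng.uniform(-10.0, 10.0)
    conc = best["concentration_m"] + rng.uniform(-0.1, 0.1)
    return {
        "temperature_c": min(120.0, max(20.0, temp)),
        "concentration_m": min(1.0, max(0.1, conc)),
    }


def closed_loop(
    run_experiment: Callable[[Conditions], float],
    initial: Conditions,
    target_yield: float,
    budget: int,
    seed: int = 0,
) -> Tuple[Conditions, float, int]:
    """Repeat propose -> execute -> analyze until target or budget is hit."""
    rng = random.Random(seed)
    best, best_yield = initial, run_experiment(initial)
    used = 1  # the initial proposal counts as one experiment
    while best_yield < target_yield and used < budget:
        candidate = propose_next(best, rng)
        observed = run_experiment(candidate)
        used += 1
        if observed > best_yield:  # keep only improvements
            best, best_yield = candidate, observed
    return best, best_yield, used
```

A call such as `closed_loop(simulated_experiment, {"temperature_c": 40.0, "concentration_m": 0.2}, target_yield=90.0, budget=50)` returns the best conditions found, the corresponding yield, and the number of experiments consumed. In a real platform the perturbation step would be replaced by a model-based optimizer that also uses the unsuccessful experiments.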

[Figure: workflow diagram of the closed-loop optimization process]

Protocol 3: High-Throughput Reaction Screening

This protocol uses parallel reactors to efficiently explore a broad chemical space, as implemented with platforms like Chemspeed [27].

- Design of Experiments (DoE): A set of diverse reaction conditions is generated, varying multiple continuous (e.g., temperature, stoichiometry) and categorical (e.g., solvent, catalyst type) parameters simultaneously.

- Parallel Execution: A liquid-handling robot dispenses reagents into multi-well reaction plates (e.g., 96-well plates). The plate is heated, cooled, and agitated as required in a parallel reactor block.

- High-Throughput Analysis: Automated analytical tools, often coupled directly to the reactor block, analyze the outcomes of all reactions in the plate.

- Data Mapping and Model Training: The collected data (inputs and outputs) is used to build a machine learning model (e.g., a surrogate model) that maps reaction conditions to the outcome. This model can then be used to predict optimal conditions.
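The design and plate-mapping steps can be illustrated with a full-factorial design laid out across 96-well plates. The factor names and levels below are hypothetical and were chosen only so that the design fills exactly two plates; they are not the conditions used in [27]. Surrogate-model fitting is not shown.

```python
from itertools import product
from string import ascii_uppercase
from typing import Dict, List


def full_factorial(factors: Dict[str, list]) -> List[dict]:
    """Enumerate every combination of the given factor levels."""
    names = list(factors)
    return [dict(zip(names, combo)) for combo in product(*factors.values())]


def assign_wells(conditions: List[dict], rows: int = 8, cols: int = 12) -> List[dict]:
    """Attach a plate number and well label (e.g., 'A1') to each condition."""
    per_plate = rows * cols
    layout = []
    for i, cond in enumerate(conditions):
        plate, index_in_plate = divmod(i, per_plate)
        row, col = divmod(index_in_plate, cols)
        well = f"{ascii_uppercase[row]}{col + 1}"
        layout.append({"plate": plate + 1, "well": well, **cond})
    return layout


# Hypothetical factors: 4 x 4 x 3 x 4 = 192 conditions (two 96-well plates).
FACTORS = {
    "temperature_c": [40, 60, 80, 100],
    "solvent": ["THF", "dioxane", "toluene", "DMF"],
    "catalyst": ["cat_1", "cat_2", "cat_3"],
    "base": ["K2CO3", "Cs2CO3", "K3PO4", "NaOH"],
}

LAYOUT = assign_wells(full_factorial(FACTORS))
```

Each entry of `LAYOUT` pairs one reaction condition with a physical well, which is the record that the analysis step later joins with measured outcomes. A full-factorial design grows multiplicatively with the number of levels, so larger studies typically switch to fractional or model-guided designs.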

The Scientist's Toolkit: Key Research Reagent Solutions

Successful implementation of AI-driven synthesis relies on a suite of computational and experimental tools. The following table details essential "reagent solutions" for this field.

Table 3: Essential Research Reagents and Tools for AI-Driven Synthesis

| Tool / Resource Name | Type | Primary Function in AI-Driven Synthesis |
|---|---|---|
| AiZynthFinder [31] | Software Platform | Automates retrosynthetic planning using a trained neural network and readily available starting materials. |
| IBM RXN [31] | Software Platform | Uses transformer-based models to predict chemical reaction outcomes and perform retrosynthetic analysis. |
| ChEMBL [32] | Database | Provides curated bioactivity data for small molecules, used for training predictive ML models. |
| ZINC [32] | Database | A vast database of commercially available compounds, typically used as the set of allowed starting materials for synthesis planning. |
| RDKit [31] | Cheminformatics Toolkit | Provides fundamental functions for molecular visualization, descriptor calculation, and chemical structure standardization. |
| Chemspeed SWING [27] | Automated Robotic Platform | Enables high-throughput screening of reactions in batch mode, accelerating data generation for ML models. |
| Gaussian/ORCA [31] | Computational Chemistry | Quantum chemistry software used to predict activation energies and reaction mechanisms, providing data for AI training. |

The benchmarking data and experimental protocols presented in this guide indicate that AI-driven synthesis planning has matured into a practical paradigm with measurable advantages over traditional methods. The transition from purely computational suggestions to integrated, closed-loop systems represents the most significant step forward. Frameworks like AOT* show that algorithmic innovations can reduce the number of search iterations by a factor of 3-5 [29], while autonomous robotic chemists provide proof-of-concept that AI can lead to experimentally validated, high-performing synthetic protocols that may elude human intuition [30].

For researchers, the choice of tool depends on the specific challenge. For rapid in silico route discovery, efficient search algorithms like AOT* are the priority. For optimizing a known reaction or exploring a complex parameter space, closed-loop systems or HTE platforms are the better fit. As these technologies continue to evolve, their integration will likely become tighter, further accelerating the design and synthesis of next-generation drugs and functional organic molecules.

Inorganic Materials and Metal Halide Perovskites for Optical Devices

The relentless growth in data traffic and the advent of technologies like 5G communication demand optical devices with superior performance, including higher bandwidth, lower power consumption, and greater integration [33]. At the heart of these devices—such as modulators, photodetectors, and light emitters—lies the critical choice of material. This guide provides an objective comparison between two prominent material classes: traditional inorganic electro-optical materials and the emerging metal halide perovskites (MHPs). The benchmarking is framed within a modern research context that increasingly relies on data-driven and predictive synthesis approaches to accelerate materials discovery and optimization [20] [34]. We compare these materials based on quantifiable performance metrics, detail the experimental protocols used to obtain this data, and situate the discussion within the evolving paradigm of computational synthesis planning.

Performance Comparison: Quantitative Data

The following tables summarize key performance parameters for the two material classes, highlighting their respective strengths and weaknesses in optical device applications.

Table 1: Core Material Properties for Optical Applications

| Property | Traditional Inorganic Electro-Optics (e.g., LiNbO₃, BTO, PZT) | Metal Halide Perovskites (e.g., CsPbIₓBr₃₋ₓ, MAPbI₃) |
|---|---|---|
| EO Coefficient (pm/V) | High (e.g., Thin-film LiNbO₃ & BTO are promising) [33] | Not primarily known for linear EO effect; strong focus on emission/absorption [35] |
| Bandgap Tunability | Limited, typically fixed by crystal structure | Highly tunable (1.5 - 3.0 eV) via composition & dimensionality [35] |
| Carrier Mobility (cm²/Vs) | Varies by material | High (tens of cm²/Vs) [35] |
| Defect Tolerance | Generally low; performance sensitive to defects | High; good performance despite low-cost processing [35] |
| Carrier Diffusion Length | Varies by material | Long (>10 μm) [35] |
| Optical Absorption | Strong, utilized in modulators | Exceptionally strong [35] |
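To relate the bandgap range in the table to optical wavelengths, the standard photon-energy relation λ = hc/E can be applied. The short sketch below uses the rounded constant hc ≈ 1239.84 eV·nm; the function name is illustrative. For the 1.5 to 3.0 eV range quoted above, it gives band-edge wavelengths of roughly 827 nm (near-infrared) down to 413 nm (violet).

```python
HC_EV_NM = 1239.84  # Planck constant times speed of light, in eV*nm (rounded)


def bandgap_to_wavelength_nm(bandgap_ev: float) -> float:
    """Convert a bandgap energy (eV) to the band-edge wavelength (nm)."""
    if bandgap_ev <= 0:
        raise ValueError("bandgap must be positive")
    return HC_EV_NM / bandgap_ev


# Band-edge wavelengths for the limits of the tunable range in the table.
EDGE_LOW_GAP_NM = bandgap_to_wavelength_nm(1.5)   # about 826.6 nm
EDGE_HIGH_GAP_NM = bandgap_to_wavelength_nm(3.0)  # about 413.3 nm
```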

Table 2: Experimental Device Performance Metrics

| Metric | Traditional Inorganic Electro-Optics | Metal Halide Perovskites |
|---|---|---|
| Modulator Bandwidth | Under development for thin-film platforms [33] | Not the primary application |
| Photodetector Response Time | N/A | 20 ns (for CsPbIBr₂) [36] |
| Photodetector Detection Limit | N/A | ~21.5 pW cm⁻² (minimum detectable light intensity for CsPbIBr₂) [36] |
| LED External Quantum Efficiency (EQE) | N/A | Up to 21.6% (for near-infrared LEDs) [35] |
| Solar Cell PCE (Single-Junction) | N/A | Certified 25.7% [35] |
| Environmental Stability | High (intrinsically stable) [33] | Improved; CsPbIBr₂ devices stable >2000 hours in ambient [36] |

Experimental Protocols for Performance Benchmarking

To ensure the comparability of data presented in the previous section, researchers adhere to standardized experimental protocols for characterizing key properties.

Protocol for Electro-Optic Coefficient Measurement

The linear electro-optic (Pockels) effect is a critical metric for modulator materials [33].