Bayesian Optimization for Materials Exploration: A Comprehensive Guide from Foundations to Advanced Applications

This article provides a comprehensive examination of Bayesian Optimization (BO) as a powerful, data-efficient methodology for accelerating materials discovery and development.


Abstract

This article provides a comprehensive examination of Bayesian Optimization (BO) as a powerful, data-efficient methodology for accelerating materials discovery and development. It covers fundamental principles, including the exploration-exploitation trade-off quantified through novel measures like observation entropy and traveling salesman distance. The review explores advanced methodological frameworks such as Bayesian Algorithm Execution (BAX) and target-oriented BO for precise property targeting, alongside practical implementations in diverse materials systems from shape memory alloys to battery materials. Critical analysis addresses BO limitations in high-dimensional spaces and strategies for overcoming them through surrogate model selection and acquisition function design. The article benchmarks BO performance against alternative optimization approaches and synthesizes validation studies across experimental materials domains, providing researchers with practical insights for implementing BO in resource-constrained experimental settings.

Bayesian Optimization Fundamentals: Mastering the Exploration-Exploitation Trade-off in Materials Science

Core Principles of Bayesian Optimization for Experimental Materials Design

Bayesian Optimization (BO) is a powerful computational strategy for efficiently optimizing expensive-to-evaluate black-box functions, making it particularly valuable for experimental materials design where physical experiments or complex simulations are resource-intensive. By building a probabilistic model of the objective function and using it to direct subsequent evaluations, BO enables researchers to find optimal material formulations and processing conditions with significantly fewer experimental iterations than traditional approaches. This method has demonstrated substantial impact across diverse materials domains, including the discovery of shape memory alloys, hydrogen evolution reaction catalysts, high-entropy alloys, and organic-inorganic perovskites [1] [2] [3].

The fundamental strength of BO lies in its ability to intelligently balance exploration (sampling regions with high uncertainty) and exploitation (sampling regions likely to yield improvement). This balance is particularly crucial in materials science applications where each data point may require days or weeks of laboratory work and characterization. As a next-generation framework for autonomous experimentation, BO is increasingly integrated with automated laboratory hardware and high-performance computing to create self-driving laboratories that can rapidly navigate complex materials spaces with minimal human intervention [4].

Core Mathematical Framework

Gaussian Process Surrogate Modeling

Bayesian Optimization uses a Gaussian Process (GP) as a surrogate model to approximate the unknown objective function. A GP defines a prior over functions, where any finite collection of function values has a joint Gaussian distribution. This distribution is completely specified by its mean function μ(x) and covariance kernel k(x,x') [5]:

$$ f(\textbf{x}) \sim \mathcal{GP}\left(\mu(\textbf{x}),\, k(\textbf{x}, \textbf{x}')\right) $$

Commonly used covariance kernels include the Gaussian kernel k(x,y) = exp(-∥x-y∥²/h), where h is a length-scale parameter, and more flexible alternatives like the Matérn kernel that can accommodate different smoothness assumptions about the underlying function. The choice of kernel encodes prior beliefs about function properties such as smoothness and periodicity, significantly impacting model performance [5].

After each function evaluation, the GP prior is updated using Bayes' rule to obtain a posterior distribution. This posterior provides not only predictions of the objective function at unobserved points but also quantifies the uncertainty in these predictions through predictive variances. This uncertainty quantification is essential for guiding the adaptive sampling strategy in BO [5].

Acquisition Functions for Experimental Guidance

Acquisition functions leverage the GP posterior to determine the most promising candidate for the next evaluation by balancing exploration and exploitation. The Expected Improvement (EI) acquisition function is among the most widely used in materials applications [1] [5].

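For minimization problems, given the best observed value so far (ymin), the improvement at a point x is defined as I = max(ymin - Y, 0), where Y is the random variable representing the predicted function value at x. The Expected Improvement is then calculated as [1]:

$$ \mathrm{EI}(\textbf{x}) = (y_{\min} - \mu)\,\Phi\left(\frac{y_{\min} - \mu}{s}\right) + s\,\phi\left(\frac{y_{\min} - \mu}{s}\right) $$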

where φ(·) and Φ(·) are the probability density and cumulative distribution functions of the standard normal distribution, μ is the predicted mean, and s is the predicted standard deviation at point x [1].
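The standard closed form of EI for minimization can be sketched in a few lines, using the symbols defined above (μ, s, ymin, φ, Φ). This is a minimal illustration rather than the implementation used in [1]; the function names and the example values are hypothetical, and the zero-uncertainty branch is an added convention for numerical safety.

```python
import math

def normal_pdf(z: float) -> float:
    """Standard normal probability density, phi(z)."""
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def normal_cdf(z: float) -> float:
    """Standard normal cumulative distribution, Phi(z)."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def expected_improvement(mu: float, s: float, y_min: float) -> float:
    """EI for minimization at a point with predictive mean mu and std s."""
    if s <= 0.0:
        # No predictive uncertainty: the improvement is deterministic.
        return max(y_min - mu, 0.0)
    z = (y_min - mu) / s
    return (y_min - mu) * normal_cdf(z) + s * normal_pdf(z)

# Hypothetical candidates: same predicted mean, different uncertainty.
ei_uncertain = expected_improvement(mu=1.0, s=0.5, y_min=1.0)
ei_certain = expected_improvement(mu=1.0, s=0.0, y_min=1.0)
```

With equal predicted means, the uncertain candidate receives a positive EI while the certain one receives zero, which is how EI rewards exploration.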

Table 1: Common Acquisition Functions in Bayesian Optimization

| Acquisition Function | Mathematical Expression | Key Advantages | Typical Applications |
|---|---|---|---|
| Expected Improvement (EI) | EI(x) = E[max(0, fmin - f(x))] | Balanced exploration-exploitation | General materials optimization |
| Upper Confidence Bound (UCB) | UCB(x) = μ(x) + κσ(x) (maximization form; use μ(x) - κσ(x) when minimizing) | Explicit exploration parameter | Rapid exploration |
| Target-Oriented EI (t-EI) | t-EI(x) = E[max(0, \|yt.min - t\| - \|Y - t\|)] | Optimizes for specific target value | Shape memory alloys, catalysts |
| Probability of Improvement (PI) | PI(x) = P(f(x) ≤ fmin - ξ) | Simpler computation | When computational efficiency is critical |

Specialized BO Methodologies for Materials Design

Target-Oriented Bayesian Optimization

Many materials applications require achieving a specific target property value rather than simply maximizing or minimizing a property. For example, catalysts for hydrogen evolution reactions exhibit enhanced activities when adsorption free energies approach zero, and shape memory alloys used in thermostatic valves require specific transformation temperatures [1].

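The target-oriented Bayesian optimization method (t-EGO) introduces an acquisition function called target-specific Expected Improvement (t-EI). For a target property value t and the current closest observed value yt.min, t-EI is defined as [1]:

$$ t\text{-}\mathrm{EI}(\textbf{x}) = \mathbb{E}\left[\max\left(0,\; |y_{t.\min} - t| - |Y - t|\right)\right] $$

where Y is the random variable representing the predicted property value at x.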

This formulation differs fundamentally from standard EI by specifically rewarding candidates whose predicted properties move closer to the target value, rather than simply improving upon the best-observed extremum. This approach has demonstrated remarkable efficiency in real materials discovery, identifying a shape memory alloy Ti₀.₂₀Ni₀.₃₆Cu₀.₁₂Hf₀.₂₄Zr₀.₀₈ with a transformation temperature difference of only 2.66°C from the target in just 3 experimental iterations [1].
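To make the definition listed in Table 1, t-EI(x) = E[max(0, |yt.min - t| - |Y - t|)], concrete, the sketch below evaluates it by simple numerical integration, assuming Y is Gaussian with predictive mean `mu` and standard deviation `s`. The function name, the trapezoidal quadrature, and the example temperatures are illustrative assumptions and not the closed form or the code used in [1].

```python
import math

def target_ei(mu: float, s: float, t: float, y_closest: float,
              n_grid: int = 2001) -> float:
    """Numerically integrate E[max(0, |y_closest - t| - |Y - t|)], Y ~ N(mu, s^2)."""
    d = abs(y_closest - t)  # current best distance to the target
    if d == 0.0:
        return 0.0          # target already hit; nothing left to improve
    if s <= 0.0:
        return max(d - abs(mu - t), 0.0)
    # The integrand is nonzero only where |y - t| < d, i.e. on [t - d, t + d].
    lo, hi = t - d, t + d
    h = (hi - lo) / (n_grid - 1)
    total = 0.0
    for i in range(n_grid):
        y = lo + i * h
        pdf = math.exp(-0.5 * ((y - mu) / s) ** 2) / (s * math.sqrt(2.0 * math.pi))
        weight = 0.5 if i in (0, n_grid - 1) else 1.0  # trapezoidal rule
        total += weight * (d - abs(y - t)) * pdf
    return total * h

# Hypothetical example: target 440, closest observation so far 450.
on_target = target_ei(mu=440.0, s=1.0, t=440.0, y_closest=450.0)
off_target = target_ei(mu=470.0, s=1.0, t=440.0, y_closest=450.0)
```

A candidate predicted to sit on the target scores close to the full remaining gap, while a candidate predicted far from the target scores essentially zero regardless of whether its value is high or low.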

Handling Mixed Variable Types

Real materials design problems typically involve both quantitative variables (e.g., composition ratios, processing temperatures, time parameters) and qualitative variables (e.g., material constituents, crystal structures, processing methods). Standard BO approaches that represent qualitative factors as dummy variables perform poorly because they fail to capture complex correlations between qualitative levels [2].

The Latent Variable Gaussian Process (LVGP) approach provides an elegant solution by mapping each qualitative factor to underlying numerical latent variables in the GP model. This mapping has strong physical justification—the effects of any qualitative factor on quantitative responses must originate from underlying quantitative physical variables [2]. The LVGP approach dramatically outperforms dummy-variable methods in predictive accuracy while providing intuitive visualizations of the relationships between qualitative factor levels [2] [6].

Table 2: Performance Comparison of BO Methods on Mixed-Variable Problems

| Method | Qualitative Variable Handling | Predictive RMSE | Optimization Efficiency | Interpretability |
|---|---|---|---|---|
| LVGP-BO | Latent variable mapping | 0.23 (test case) | 85% success in <20 iterations | High (visualizable latent spaces) |
| Dummy Variable BO | Independent levels | 0.41 (test case) | 45% success in <20 iterations | Low (no inherent structure) |
| Target-Oriented BO | Compatible with LVGP | N/A | ~50% fewer iterations than EI | Medium (target-focused) |

Incorporating Experimental Constraints

Practical materials optimization must accommodate various experimental constraints, which can be interdependent, non-linear, and define non-compact optimization domains. Recent advances extend BO algorithms like PHOENICS and GRYFFIN to handle arbitrary known constraints through intuitive interfaces [4].

Constrained Bayesian optimization typically employs one of two strategies: (1) modeling the probability of constraint satisfaction and multiplying it with the acquisition function, or (2) using a separate GP model for each constraint. These approaches have demonstrated effectiveness in optimizing chemical processes under constrained flow conditions and designing molecules under synthetic accessibility constraints [4].
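The first strategy, weighting the acquisition value by the probability of constraint satisfaction, can be sketched as follows. The sketch assumes each constraint is written as c(x) ≤ 0, that a separate GP supplies a predictive mean and standard deviation for each constraint, and that constraints are treated as independent. These conventions and all names are assumptions for illustration and are not taken from PHOENICS or GRYFFIN.

```python
import math

def normal_cdf(z: float) -> float:
    """Standard normal cumulative distribution."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def probability_of_feasibility(mu_c: float, s_c: float) -> float:
    """P(c(x) <= 0) when the constraint prediction is N(mu_c, s_c^2)."""
    if s_c <= 0.0:
        return 1.0 if mu_c <= 0.0 else 0.0
    return normal_cdf(-mu_c / s_c)

def constrained_acquisition(acq_value: float, constraint_predictions) -> float:
    """Multiply an acquisition value by the feasibility probability of each constraint.

    constraint_predictions: iterable of (mean, std) pairs, one per constraint,
    assumed independent (a simplifying assumption).
    """
    weight = 1.0
    for mu_c, s_c in constraint_predictions:
        weight *= probability_of_feasibility(mu_c, s_c)
    return acq_value * weight

# Hypothetical candidates with the same unconstrained acquisition value.
borderline = constrained_acquisition(2.0, [(0.0, 1.0)])      # 50% feasible
likely_ok = constrained_acquisition(2.0, [(-5.0, 1.0)])      # almost surely feasible
likely_bad = constrained_acquisition(2.0, [(5.0, 1.0)])      # almost surely infeasible
```

Candidates that are probably infeasible are suppressed smoothly rather than excluded outright, so the optimizer can still probe near a constraint boundary when the constraint model is uncertain.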

Experimental Protocols and Workflows

General Bayesian Optimization Protocol

The standard BO workflow for materials design follows these methodical steps [5]:

1. Initial Experimental Design: Select an initial set of candidates using space-filling designs like Latin Hypercube Sampling (LHS) to obtain a representative baseline.

2. Experiment Execution: Conduct physical experiments or high-fidelity simulations for each candidate to measure properties of interest.

3. Surrogate Model Training: Train a Gaussian Process model on all data collected so far, potentially using specialized approaches like LVGP for mixed variable problems.

4. Acquisition Function Optimization: Evaluate the acquisition function across the design space to identify the most promising next candidate.

5. Iterative Refinement: Repeat steps 2-4 until meeting convergence criteria (performance target, budget exhaustion, or minimal expected improvement).
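The steps above can be sketched as a small, dependency-free loop on a one-dimensional toy problem. Everything here is an illustrative assumption: `run_experiment` is a hypothetical stand-in for a real measurement, the RBF length scale and jitter are arbitrary, the stratified initial design is the one-dimensional special case of LHS, and the acquisition function is maximized over a fixed grid instead of with a proper optimizer.

```python
import math
import random

def rbf(a, b, length=0.2):
    """Squared-exponential kernel for scalar inputs (unit prior variance)."""
    return math.exp(-((a - b) ** 2) / (2.0 * length ** 2))

def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]  # augmented copy
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(M[r][c]))
        M[c], M[p] = M[p], M[c]
        for r in range(c + 1, n):
            f = M[r][c] / M[c][c]
            for j in range(c, n + 1):
                M[r][j] -= f * M[c][j]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (M[i][n] - sum(M[i][j] * x[j] for j in range(i + 1, n))) / M[i][i]
    return x

def gp_predict(X, y, x_new, jitter=1e-6):
    """GP posterior mean and std at x_new, with a constant prior mean."""
    mean_y = sum(y) / len(y)
    K = [[rbf(a, b) + (jitter if i == j else 0.0) for j, b in enumerate(X)]
         for i, a in enumerate(X)]
    k = [rbf(a, x_new) for a in X]
    alpha = solve(K, [v - mean_y for v in y])
    beta = solve(K, k)
    mu = mean_y + sum(ki * ai for ki, ai in zip(k, alpha))
    var = rbf(x_new, x_new) - sum(ki * bi for ki, bi in zip(k, beta))
    return mu, math.sqrt(max(var, 0.0))

def expected_improvement(mu, s, y_min):
    """EI for minimization."""
    if s <= 1e-12:
        return max(y_min - mu, 0.0)
    z = (y_min - mu) / s
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    return (y_min - mu) * cdf + s * pdf

def run_experiment(x):
    """Hypothetical stand-in for an expensive measurement (minimum at x = 0.3)."""
    return (x - 0.3) ** 2

def bayes_opt(n_init=4, n_iter=8, seed=0):
    rng = random.Random(seed)
    # Step 1: stratified initial design on [0, 1).
    X = [(i + rng.random()) / n_init for i in range(n_init)]
    # Step 2: run the initial experiments.
    y = [run_experiment(x) for x in X]
    grid = [i / 100 for i in range(101)]
    for _ in range(n_iter):
        y_min = min(y)
        # Steps 3-4: refit the surrogate and score every grid candidate with EI.
        scores = [expected_improvement(*gp_predict(X, y, g), y_min) for g in grid]
        x_next = grid[scores.index(max(scores))]
        # Step 5: evaluate the chosen candidate and repeat.
        X.append(x_next)
        y.append(run_experiment(x_next))
    return X, y

X_hist, y_hist = bayes_opt()
best_x = X_hist[y_hist.index(min(y_hist))]
```

A fixed iteration budget is used as the stopping rule here; a real campaign would typically also stop when the best EI falls below a tolerance or the property target is met.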

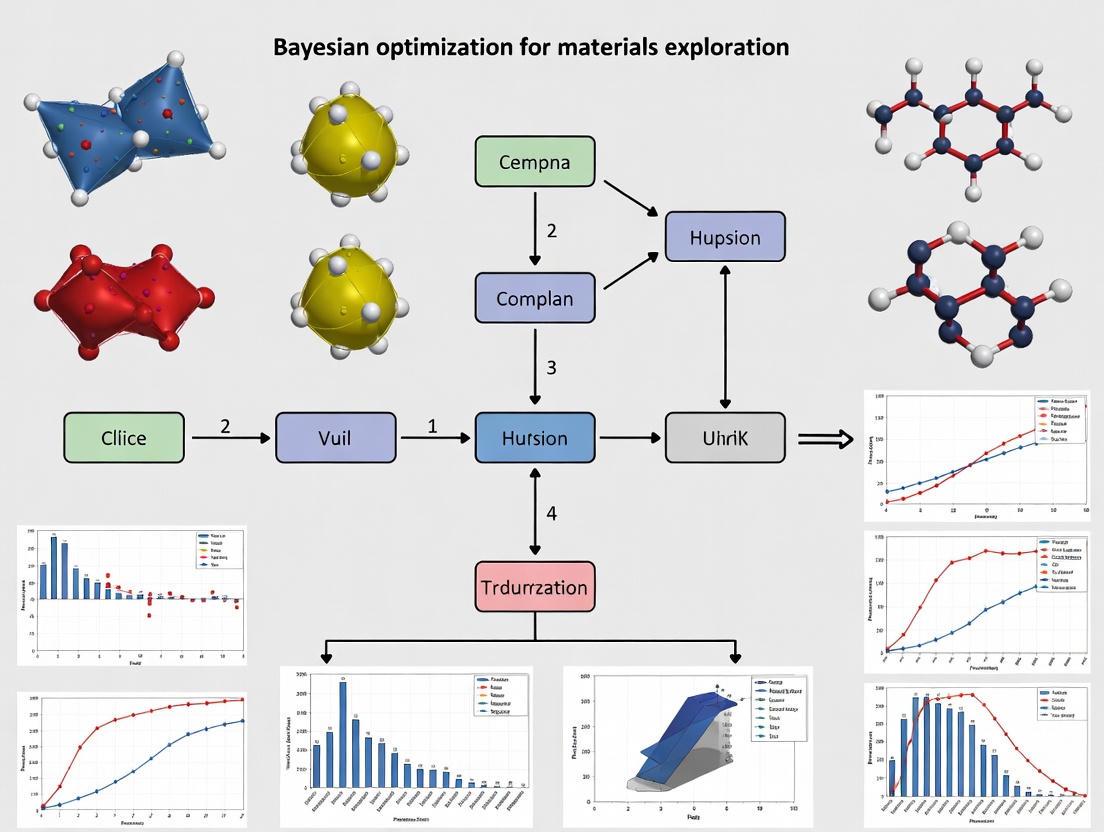

The following diagram illustrates this iterative workflow:

Target-Oriented Materials Design Protocol

For target-specific property optimization using t-EGO, the specialized workflow includes these key adaptations [1]:

- Target Definition: Precisely specify the target property value t based on application requirements.

- Model Training: Train GP models using raw property values y (not absolute deviations from target).

- t-EI Calculation: Compute target-specific Expected Improvement using the t-EI definition given in the Target-Oriented Bayesian Optimization section.

- Candidate Selection: Prioritize candidates that minimize the absolute difference |y-t| while accounting for uncertainty.

- Termination: Converge when |ybest - t| falls below application-specific tolerance.

This protocol discovered a shape memory alloy whose transformation temperature was only 2.66°C from the 440°C target (about 0.6% of the target value) in just 3 experimental iterations, demonstrating remarkable efficiency for target-oriented applications [1].

Density Functional Theory with BO Guidance

For computational materials discovery, BO can guide quantum mechanical calculations such as Density Functional Theory (DFT):

- Search Domain Definition: Establish domains for composition variables and initial molecular coordinates.

- Molecular Alignment Setup: Set initial molecular alignments using maximum angle parameter (Δamax) relative to crystal directions.

- DFT Execution: Perform geometry relaxation calculations using specified k-point meshes.

- Property Prediction: Extract target properties (e.g., formation enthalpy ΔHmix) from relaxed structures.

- BO-Guided Iteration: Use EI acquisition to suggest next composition/alignment combinations [5].

Research Reagent Solutions for Materials Optimization

Table 3: Essential Research Materials and Computational Tools for BO-Guided Materials Design

| Category | Specific Items | Function in Bayesian Optimization |
|---|---|---|
| Computational Framework | Gaussian Process Models, Acquisition Functions | Surrogate modeling and candidate selection |
| High-Throughput Characterization | XRD, SEM, DSC, BET Surface Area Analyzer | Rapid property measurement for experimental feedback |
| Quantum Chemistry Software | VASP, Quantum ESPRESSO, Gaussian | First-principles property prediction (DFT calculations) |
| Material Precursors | Transition metal salts, Organic cations, Inorganic precursors | Synthesis of target materials (alloys, perovskites, catalysts) |
| Automation Equipment | Liquid handling robots, Automated synthesis reactors | High-throughput experimental execution |
| Data Management | Materials databases (OQMD, NanoMine), Citrine Platform | Data storage, retrieval, and model training |

Advanced Considerations and Limitations

Explainable Bayesian Optimization

The black-box nature of traditional BO can hinder adoption in experimental research where interpretability is crucial. Explainable BO methods like TNTRules (Tune-No-Tune Rules) address this by generating both global and local explanations through actionable rules and visual graphs [7].

These explanation methods identify optimal solution bounds and potential alternative solutions, helping researchers understand which parameters should be adjusted or maintained. By encoding uncertainty through variance pruning and hierarchical agglomerative clustering, these approaches make BO recommendations more interpretable and trustworthy for domain experts [7].

Practical Limitations and Alternative Approaches

While powerful, BO faces several limitations in industrial materials development:

- Computational Scaling: Traditional GP-based BO scales poorly with dimensionality, becoming prohibitively expensive beyond approximately 20 dimensions [8].

- Discontinuous Search Spaces: Materials spaces often contain incompatible combinations and abrupt property changes that challenge smooth GP modeling [8].

- Multi-Objective Optimization: Real applications typically require balancing multiple competing objectives (performance, cost, toxicity), substantially increasing complexity [8].

- Time Constraints: Industrial R&D often requires actionable results within hours, which traditional BO may not deliver for high-dimensional problems [8].

Alternative approaches like Citrine's random forest-based sequential learning retain BO's data efficiency while improving scalability, interpretability, and constraint handling. These methods provide feature importance measures and Shapley values to explain predictions, building trust and enabling scientific insight [8].

[Diagram: the LVGP approach for handling mixed variable types, a key advancement for practical materials design.]

Bayesian Optimization represents a paradigm shift in experimental materials design, enabling efficient navigation of complex materials spaces through intelligent adaptive sampling. The core principles of GP surrogate modeling and acquisition function-guided exploration provide a robust framework for minimizing expensive experimental iterations. Specialized advancements including target-oriented BO, latent variable GP for mixed variables, and constrained BO have addressed critical challenges in real-world materials applications. While limitations in scalability and interpretability remain active research areas, BO continues to evolve as an essential component of autonomous materials discovery platforms, accelerating the development of next-generation materials with tailored properties.

Bayesian optimization (BO) stands as a powerful paradigm for the global optimization of expensive, black-box functions, with significant applications in materials exploration and drug development. A well-balanced exploration-exploitation trade-off is crucial for the performance of its acquisition functions, yet a lack of quantitative measures for exploration has long made this trade-off difficult to analyze and compare systematically. This technical guide details two novel, empirically validated metrics—Observation Traveling Salesman Distance (OTSD) and Observation Entropy (OE)—designed to quantify exploration. We frame these measures within the context of materials science research, providing detailed methodologies, experimental protocols, and visualizations to equip researchers with the tools to understand and apply these advancements in their optimization workflows.

In materials science and pharmaceutical development, researchers are frequently confronted with the challenge of optimizing complex, costly processes or formulations with limited experimental data. Bayesian optimization has emerged as a leading method for such tasks, efficiently navigating high-dimensional search spaces to find optimal conditions, such as polymer compound formulations or drug product properties [9]. The core of BO lies in its use of a probabilistic surrogate model, typically a Gaussian Process (GP), to approximate the unknown objective function, and an acquisition function (AF) to guide the sequential selection of experimental samples by balancing the exploration of uncertain regions with the exploitation of known promising areas [10].

However, the exploration-exploitation trade-off (EETO) has historically been a qualitative concept. While it is widely recognized that different AFs, such as Expected Improvement (EI), Upper Confidence Bound (UCB), and Thompson Sampling (TS), exhibit varying explorative behaviors, the field has lacked robust, quantitative measures to characterize this crucial aspect [11] [12]. This gap makes it difficult to objectively compare algorithms, diagnose optimization failures, and select the most appropriate AF for a given problem, such as designing a new shape memory alloy with a specific transformation temperature or optimizing a pharmaceutical tablet's formulation [1] [9]. The recent introduction of OTSD and OE provides a principled foundation for a deeper understanding and more systematic design of acquisition functions, paving the way for more efficient and reliable materials discovery [13].

Novel Metrics for Quantifying Exploration

Observation Traveling Salesman Distance (OTSD)

The Observation Traveling Salesman Distance (OTSD) is a geometric measure that quantifies exploration by calculating the total Euclidean distance required to traverse all observation points selected by an acquisition function in a single, continuous route [12].

- Core Concept: The fundamental idea is that a set of points that is more spread out and explorative will require a longer path to connect. The OTSD metric is formulated as the solution to the Traveling Salesman Problem (TSP) on the set of observation points $\{\mathbf{x}_1, \mathbf{x}_2, \ldots, \mathbf{x}_T\}$, where $T$ is the total number of observations [10].

- Mathematical Definition: The OTSD is defined as the minimum length of a tour that visits each point exactly once and returns to the start: $$ \text{OTSD} = \min_{\pi} \left[ \sum_{i=1}^{T-1} \|\mathbf{x}_{\pi(i+1)} - \mathbf{x}_{\pi(i)}\| + \|\mathbf{x}_{\pi(T)} - \mathbf{x}_{\pi(1)}\| \right] $$ where $\pi$ is a permutation of the indices $\{1, \ldots, T\}$ [12].

- Computational Implementation: Since solving TSP exactly is NP-hard, an efficient insertion heuristic algorithm is used in practice, resulting in a time complexity of $O(dT^2)$, where $d$ is the dimensionality of the input space. To ensure fair comparison across problems of different sizes and dimensions, the OTSD value is often normalized [12].
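A minimal sketch of an insertion heuristic for the tour length is shown below. It inserts points in observation order at the position that adds the least length, which gives the stated $O(dT^2)$ cost. The specific insertion rule is an assumption chosen for illustration and may differ from the heuristic used in [12]. The result is an upper bound on the exact TSP length and is left unnormalized.

```python
import math

def otsd_insertion(points):
    """Approximate closed-tour length through all points (cheapest-position insertion)."""
    pts = [tuple(p) for p in points]
    if len(pts) < 2:
        return 0.0
    tour = [0, 1]                                  # indices into pts, visited cyclically
    length = 2.0 * math.dist(pts[0], pts[1])       # out and back between two points
    for new in range(2, len(pts)):
        best_pos, best_inc = 0, float("inf")
        for pos in range(len(tour)):
            a = pts[tour[pos]]
            b = pts[tour[(pos + 1) % len(tour)]]
            # Extra length if the new point is placed between a and b.
            inc = math.dist(a, pts[new]) + math.dist(pts[new], b) - math.dist(a, b)
            if inc < best_inc:
                best_pos, best_inc = pos, inc
        tour.insert(best_pos + 1, new)
        length += best_inc
    return length

# Hypothetical observation sets: corners of the unit square vs. a tight cluster.
spread = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (0.0, 1.0)]
clustered = [(0.1 * x, 0.1 * y) for x, y in spread]
otsd_spread = otsd_insertion(spread)
otsd_clustered = otsd_insertion(clustered)
```

The spread-out set yields the longer tour, matching the intuition that explorative sampling forces a longer path.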

Observation Entropy (OE)

The Observation Entropy (OE) adopts an information-theoretic approach, measuring the uniformity and spread of the observation points by calculating their empirical differential entropy [12].

- Core Concept: A higher entropy value indicates a more uniform distribution of points across the search space, which is characteristic of explorative behavior. Conversely, a cluster of points in a small region (pure exploitation) would result in lower entropy [10].

- Mathematical Definition: OE uses the Kozachenko-Leonenko estimator to compute the empirical differential entropy without assuming a specific underlying distribution. For a set of points $\{\mathbf{x}_1, \mathbf{x}_2, \ldots, \mathbf{x}_T\}$, the estimator is based on the distances to each point's nearest neighbors [12].

- Computational Implementation: The computational complexity of OE is also $O(dT^2)$. The algorithm can be optimized for sequential BO by updating distance matrices incrementally as new observations are added, making it suitable for high-dimensional spaces (typically for $d < 50$) [12].
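The nearest-neighbor estimator can be sketched as below, using one common form of the Kozachenko-Leonenko estimate: ψ(T) − ψ(k) + log V_d + (d/T) Σ log r_i, where r_i is the distance from point i to its k-th nearest neighbor and V_d is the volume of the d-dimensional unit ball. The exact variant and constants used in [12] may differ. The brute-force neighbor search with sorting is chosen for clarity rather than for the stated complexity, and the small floor on distances is an added guard against duplicate points.

```python
import math

EULER_GAMMA = 0.5772156649015329

def digamma_int(n: int) -> float:
    """Digamma function psi(n) for a positive integer n."""
    return -EULER_GAMMA + sum(1.0 / k for k in range(1, n))

def observation_entropy(points, k: int = 1) -> float:
    """Kozachenko-Leonenko differential entropy estimate of a point set."""
    pts = [tuple(p) for p in points]
    n, d = len(pts), len(pts[0])
    if n <= k:
        raise ValueError("need more points than neighbors")
    # Log-volume of the d-dimensional unit ball.
    log_unit_ball = (d / 2.0) * math.log(math.pi) - math.lgamma(d / 2.0 + 1.0)
    log_r_sum = 0.0
    for i, p in enumerate(pts):
        dists = sorted(math.dist(p, q) for j, q in enumerate(pts) if j != i)
        log_r_sum += math.log(max(dists[k - 1], 1e-12))  # floor avoids log(0)
    return digamma_int(n) - digamma_int(k) + log_unit_ball + d * log_r_sum / n

# Hypothetical observation sets: a 5x5 grid on the unit square vs. the same grid shrunk 10x.
spread = [(i / 4, j / 4) for i in range(5) for j in range(5)]
clustered = [(0.1 * x, 0.1 * y) for x, y in spread]
h_spread = observation_entropy(spread)
h_clustered = observation_entropy(clustered)
```

Shrinking the point set by a factor of 10 in two dimensions lowers the estimate by 2·ln(10), so tightly clustered (exploitative) observations register as lower entropy.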

Metric Comparison and Interpretation

Table 1: Comparison of Exploration Metrics

| Feature | Observation TSD (OTSD) | Observation Entropy (OE) |
|---|---|---|
| Underlying Principle | Geometric, based on total path length | Information-theoretic, based on distribution uniformity |
| Core Idea | Explorative sequences force a longer path | Explorative sequences have higher disorder |
| Computational Complexity | $O(dT^2)$ | $O(dT^2)$ |
| Normalization | Required for cross-problem comparison | Inherently scale-aware |
| Primary Application | Comparing exploration between AFs on a problem | Understanding the distribution shape of queries |

These two metrics provide complementary views. Empirical studies have shown a strong correlation between OTSD and OE across a diverse set of benchmark problems, cross-validating their reliability as measures of exploration [10]. Together, they enable the creation of a quantitative taxonomy of acquisition functions, moving beyond qualitative descriptions.

Experimental Protocols and Validation

The development and validation of OTSD and OE involved extensive experimentation on both synthetic functions and real-world benchmarks. The following section outlines the core methodology and key findings.

Core Experimental Workflow

The general protocol for evaluating an acquisition function's exploration characteristics using OTSD and OE follows a structured workflow.

Key Experimental Findings

Researchers applied this workflow to benchmark a wide range of acquisition functions. The results allowed for the creation of the first empirical taxonomy of AF exploration.

Table 2: Exploration Taxonomy of Common Acquisition Functions

| Acquisition Function | Exploration Rank (High to Low) | Key Characteristic | Control Parameter |
|---|---|---|---|
| UCB | High | Explicit balance via parameter | β (higher β = more exploration) |
| Thompson Sampling (TS) | Medium-High | Stochastic, probabilistic exploration | Implicit in posterior sampling |
| Max-value Entropy Search (MES) | Medium | Information-based, targets uncertainty at optimum | None |
| Expected Improvement (EI) | Medium-Low | Improves upon best-known point | Can be weighted for more exploration |
| Probability of Improvement (PI) | Low | Tends to exploit quickly | None |

- Correlation with Performance: A critical finding is the link between exploration and empirical performance. The study revealed that the best-performing acquisition functions typically exhibit a balanced exploration-exploitation trade-off, rather than being extremely explorative or purely exploitative [12]. This underscores the value of OTSD and OE in diagnosing and selecting AFs.

- Validation on Real-World Problems: These metrics were validated beyond synthetic functions. For instance, in a challenging industrial case study focused on developing a recycled plastic compound, initial BO performance was subpar. Analysis suggested that over-complicating the model with expert knowledge (adding features from data sheets) led to a high-dimensional problem where exploration became inefficient [14]. Using metrics like OTSD and OE could help diagnose such issues by quantifying whether the AF is exploring the space effectively.

Applications in Materials and Pharmaceutical Research

The quantification of exploration has direct and impactful applications in scientific research and development.

Case Study: Target-Oriented Materials Design

A key challenge in materials science is finding formulations with target-specific properties, not just maxima or minima. For example, a shape memory alloy might need a specific phase transformation temperature (e.g., 440°C) for use in a thermostatic valve [1]. A novel target-oriented BO (t-EGO) method uses a modified acquisition function (t-EI) that explicitly maximizes the expected improvement towards a target value. In one application, t-EGO discovered a shape memory alloy, Ti₀.₂₀Ni₀.₃₆Cu₀.₁₂Hf₀.₂₄Zr₀.₀₈, with a transformation temperature of 437.34°C (only 2.66°C from the target) within just 3 experimental iterations [1]. In such scenarios, OE can be invaluable for monitoring whether the algorithm is exploring enough of the space to find the narrow region where the target property is achievable, rather than converging prematurely.

Pharmaceutical Product Development

In pharmaceutical development, BO has been successfully applied to optimize formulation and manufacturing processes for orally disintegrating tablets, integrating multiple objective functions into a single composite score [9]. This approach reduced the number of required experiments from about 25 (using traditional Design of Experiments) to just 10. In this context, the interpretability of the optimization process is critical for gaining the trust of scientists. The use of scalable models like Random Forests, as implemented in the Citrine platform, alongside exploration metrics, can provide both actionable insights and explainable AI, revealing which ingredients or parameters most influence the predicted performance [8].

The Scientist's Toolkit for Bayesian Optimization

Implementing BO and its novel exploration metrics requires a suite of software tools and theoretical components.

Table 3: Essential Research Reagents & Computational Tools

| Tool Category | Example(s) | Function in the Research Process |
|---|---|---|
| BO Software Frameworks | Ax, BoTorch, BayBE, COMBO | Provides robust, tested implementations of Gaussian processes, acquisition functions, and optimization loops. Essential for applied research. |
| Surrogate Models | Gaussian Process (GP), Random Forest | The core predictive model that estimates the objective function and its uncertainty from available data. |
| Acquisition Functions | EI, PI, UCB, KG, MES, t-EI | The decision-making engine that selects the next experiment by balancing exploration and exploitation. |
| Exploration Metrics | OTSD, OE | New tools for quantifying and diagnosing the exploration behavior of any acquisition function. |
| Underlying Algorithms | TSP Heuristic, Kozachenko-Leonenko Estimator | The computational engines for calculating the novel exploration metrics. |

The introduction of Observation Traveling Salesman Distance and Observation Entropy marks a significant step towards a more rigorous and quantitative science of Bayesian optimization. By providing concrete measures to quantify the previously abstract concept of exploration, these metrics enable researchers to analyze, compare, and design acquisition functions with unprecedented precision. For professionals in materials exploration and drug development, this translates to an enhanced ability to navigate complex experimental landscapes, diagnose optimization failures, and ultimately accelerate the discovery of new materials and pharmaceutical products with greater efficiency and confidence. Future work will likely focus on extending these principles to more complex spaces, such as those with non-Euclidean or compositional constraints, further broadening their impact in scientific discovery.

In the realm of materials science and drug discovery, where experiments and simulations are costly and time-consuming, Bayesian optimization (BO) has emerged as a powerful framework for data-efficient optimization. The core of BO lies in its use of a surrogate model—a probabilistic approximation of the expensive, black-box objective function. This model guides the search process by predicting the performance of unexplored configurations and quantifying the associated uncertainty. The choice of surrogate model is not merely a technical detail but a critical determinant of the success of any BO campaign. This technical guide provides an in-depth analysis of two predominant surrogate modeling approaches: Gaussian Processes (GPs) and Random Forests (RFs), framing the discussion within the context of materials exploration research.

Gaussian Process Regression

Mathematical Foundation

Gaussian Process regression is a non-parametric Bayesian approach that places a prior over functions. A GP is fully specified by a mean function, μ(x), and a covariance kernel function, k(x, x'), which encodes assumptions about the function's smoothness and structure [15]. Given a dataset D = {(x₁, y₁), ..., (xₙ, yₙ)} of n observations, the posterior predictive distribution at a new point x is Gaussian with mean and variance given by:

$$ \begin{aligned} \mu_n(\textbf{x}) &= \mu(\textbf{x}) + \textbf{k}_n(\textbf{x})^T (\textbf{K}_n + \boldsymbol{\Lambda}_n)^{-1}(\textbf{y}_n - \textbf{u}_n) \\ \sigma^2_n(\textbf{x}) &= k(\textbf{x}, \textbf{x}) - \textbf{k}_n(\textbf{x})^T (\textbf{K}_n + \boldsymbol{\Lambda}_n)^{-1}\textbf{k}_n(\textbf{x}) \end{aligned} $$

where kₙ(x) is the vector of covariances between x and the training points, Kₙ is the covariance matrix between training points, yₙ is the vector of observed values, uₙ is the vector of mean values at the training points, and Λₙ is a diagonal matrix of measurement noise variances [16].
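The posterior mean and variance can be computed directly from these quantities. The sketch below does so for scalar inputs, with a squared-exponential kernel, a constant prior mean, and a Cholesky factorization of Kₙ + Λₙ. These are illustrative choices and not something prescribed by [16], and the function names are hypothetical.

```python
import math

def kernel(a: float, b: float, length: float = 1.0, sigma0: float = 1.0) -> float:
    """Squared-exponential covariance for scalar inputs."""
    return sigma0 ** 2 * math.exp(-((a - b) ** 2) / (2.0 * length ** 2))

def cholesky(A):
    """Lower-triangular L with L L^T = A, for symmetric positive definite A."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = A[i][j] - sum(L[i][m] * L[j][m] for m in range(j))
            L[i][j] = math.sqrt(s) if i == j else s / L[j][j]
    return L

def chol_solve(L, b):
    """Solve (L L^T) x = b by forward then backward substitution."""
    n = len(b)
    z = [0.0] * n
    for i in range(n):
        z[i] = (b[i] - sum(L[i][m] * z[m] for m in range(i))) / L[i][i]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (z[i] - sum(L[m][i] * x[m] for m in range(i + 1, n))) / L[i][i]
    return x

def gp_posterior(X, y, noise_var, x_new, prior_mean: float = 0.0):
    """Posterior mean and variance at x_new.

    noise_var holds the diagonal of Lambda_n (one noise variance per observation).
    """
    n = len(X)
    A = [[kernel(X[i], X[j]) + (noise_var[i] if i == j else 0.0) for j in range(n)]
         for i in range(n)]                       # K_n + Lambda_n
    L = cholesky(A)
    k_vec = [kernel(xi, x_new) for xi in X]       # k_n(x)
    alpha = chol_solve(L, [yi - prior_mean for yi in y])
    v = chol_solve(L, k_vec)
    mean = prior_mean + sum(ki * ai for ki, ai in zip(k_vec, alpha))
    var = kernel(x_new, x_new) - sum(ki * vi for ki, vi in zip(k_vec, v))
    return mean, max(var, 0.0)

# Hypothetical single observation y = 2 at x = 0, without and with measurement noise.
exact_mean, exact_var = gp_posterior([0.0], [2.0], [0.0], 0.0)
noisy_mean, noisy_var = gp_posterior([0.0], [2.0], [1.0], 0.0)
```

With zero noise the posterior interpolates the observation exactly, while unit noise variance shrinks the mean halfway towards the prior and leaves half of the prior variance in place.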

Kernels and Automatic Relevance Detection

The choice of kernel function is pivotal. Common kernels include the Matérn class, which generalizes the Radial Basis Function (RBF) kernel. For example, the Matérn52 kernel is defined as:

$$ k(\textbf{p}_j, \textbf{q}_j) = \sigma_0^2 \cdot \left(1 + \frac{\sqrt{5}r}{l_j} + \frac{5r^2}{3l_j^2}\right)\exp\left(-\frac{\sqrt{5}r}{l_j}\right) $$

where $r = \sqrt{(p_j - q_j)^2}$, σ₀ is the standard deviation, and lⱼ is the characteristic length scale for dimension j [17].

A crucial advancement is the incorporation of Automatic Relevance Detection (ARD), which allows the kernel to have independent length scales lⱼ for each input dimension. This creates an anisotropic kernel that can automatically identify and down-weight irrelevant features, significantly improving performance on high-dimensional materials datasets [17].
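The effect of per-dimension length scales can be illustrated with a short Matérn 5/2 kernel function. This sketch uses the common multi-dimensional ARD form, in which each coordinate difference is divided by its own length scale before the distance is taken. That form is an assumption for illustration and may not match the exact parameterization in [17].

```python
import math

def matern52_ard(p, q, length_scales, sigma0: float = 1.0) -> float:
    """Matern 5/2 kernel with one length scale per input dimension (ARD)."""
    # Scaled distance: each dimension is measured in units of its own length scale.
    r = math.sqrt(sum(((pj - qj) / lj) ** 2
                      for pj, qj, lj in zip(p, q, length_scales)))
    a = math.sqrt(5.0) * r
    return sigma0 ** 2 * (1.0 + a + a * a / 3.0) * math.exp(-a)

# Hypothetical 2-D inputs; the second dimension has a very large length scale,
# so differences along it barely change the covariance (an "irrelevant" feature).
scales = [1.0, 1e6]
k_same = matern52_ard([0.0, 0.0], [0.0, 0.0], scales)
k_irrelevant_move = matern52_ard([0.0, 0.0], [0.0, 5.0], scales)
k_relevant_move = matern52_ard([0.0, 0.0], [5.0, 0.0], scales)
```

Moving five units along the long-length-scale dimension leaves the covariance near its maximum, while the same move along the relevant dimension drives it close to zero.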

Advanced GP Architectures for Materials Science

- Multi-Task Gaussian Processes (MTGPs): Model correlations between distinct but related material properties (e.g., thermal expansion coefficient and bulk modulus). By sharing information across tasks, MTGPs can enhance prediction and optimization efficiency [15].

- Deep Gaussian Processes (DGPs): Offer a hierarchical extension of GPs, combining the flexibility of deep neural networks with the uncertainty quantification of GPs. They are particularly effective at capturing complex, non-linear relationships in materials data [15].

- Sparse Axis-Aligned Subspace Priors (SAAS): Utilize sparsity-inducing priors to identify low-dimensional, property-relevant subspaces within large descriptor libraries, enabling efficient optimization in high-dimensional molecular spaces [16].

Random Forest Regression

Algorithmic Fundamentals

Random Forest is an ensemble learning method that operates by constructing a multitude of decision trees at training time. For regression tasks, the model prediction for a new point is the average prediction of the individual trees. While RFs are not inherently probabilistic, they can be adapted for Bayesian optimization by estimating uncertainty through the variance of the individual tree predictions [17].

The two key mechanisms that make RFs effective are:

- Bagging (Bootstrap Aggregating): Each tree is trained on a different bootstrap sample of the original dataset.

- Feature Randomization: At each split in a tree, a random subset of features is considered, which decorrelates the trees and improves robustness.

Uncertainty Quantification

The native uncertainty estimate from a Random Forest comes from the empirical variance of the predictions of its T individual trees:

$$ \begin{aligned} \mu(\textbf{x}) &= \frac{1}{T} \sum_{t=1}^{T} f_t(\textbf{x}) \\ \sigma^2(\textbf{x}) &= \frac{1}{T-1} \sum_{t=1}^{T} \left(f_t(\textbf{x}) - \mu(\textbf{x})\right)^2 \end{aligned} $$

where fₜ(x) is the prediction of the t-th tree. This variance can be used directly by acquisition functions in BO, though it is a frequentist rather than a Bayesian measure of uncertainty [17].
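
As a small illustration of the two formulas above, the sketch below computes the ensemble mean and the sample variance (with the $1/(T-1)$ normalization) from a list of per-tree predictions at a single query point. The prediction values are made up for the example; in practice they would come from the fitted trees of whichever Random Forest implementation is in use.

```python
from statistics import mean, variance

def forest_mean_and_variance(tree_predictions):
    """Return (mu, sigma^2) across the T per-tree predictions at one point."""
    mu = mean(tree_predictions)
    # statistics.variance is the sample variance, i.e. it divides by T - 1.
    sigma2 = variance(tree_predictions, xbar=mu)
    return mu, sigma2

# Hypothetical predictions of T = 4 trees for one candidate material.
mu, sigma2 = forest_mean_and_variance([1.0, 2.0, 3.0, 4.0])
print(mu, sigma2)
```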

Performance Benchmarking in Materials Science

Quantitative Performance Comparison

Extensive benchmarking across five diverse experimental materials systems—including carbon nanotube-polymer blends, silver nanoparticles, and lead-halide perovskites—provides critical insights into the relative performance of GP and RF surrogates [17].

Table 1: Benchmarking Results for Surrogate Models in Bayesian Optimization [17]

| Surrogate Model | Performance Summary | Robustness | Time Complexity | Hyperparameter Sensitivity |
|---|---|---|---|---|
| GP (Isotropic Kernel) | Generally outperformed by GP-ARD and RF | Moderate | O(n³) for inference | High sensitivity to kernel choice and length scales |
| GP (ARD Kernel) | Comparable to RF; outperforms isotropic GP | High - most robust overall | O(n³) for inference | Requires careful hyperparameter tuning |
| Random Forest (RF) | Comparable to GP-ARD; outperforms isotropic GP | High - close alternative to GP-ARD | O(n_trees · depth) for inference | Low; minimal tuning required (e.g., ntree=100) |

Practical Considerations for Researchers

- Data Efficiency and Initial Performance: GPs with anisotropic kernels demonstrate strong performance and robustness across diverse materials datasets [17]. The sample efficiency of GPs makes them particularly well-suited for the low-data regimes typical in early-stage materials research.

- Computational and Usability Trade-offs: RFs present a compelling alternative with lower time complexity and less demanding hyperparameter tuning, offering a practical advantage for researchers with limited machine learning expertise [17] [18].

- Handling High-Dimensional Spaces: In very high-dimensional problems, such as molecular optimization with large descriptor libraries, RFs or specialized GPs (like those with SAAS priors) can be more effective than standard GPs [16].

Experimental Protocols and Workflows

Standard Bayesian Optimization Workflow

The following diagram illustrates the standard iterative workflow of a Bayesian optimization campaign, which is universal across surrogate model choices.

Surrogate Model-Specific Methodologies

The internal processes for building the GP and RF surrogate models differ significantly, as detailed below.

Table 2: Essential Computational Tools for Surrogate-Based Materials Optimization

| Tool / Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| BoTorch [14] | Software Library | BO framework built on PyTorch | Implementing advanced BO loops with GP/RF surrogates |
| Ax [14] | Software Platform | Accessible BO platform | Adaptive experimentation, multi-objective optimization |
| SAAS Prior [16] | Bayesian Method | Sparse axis-aligned subspace modeling | High-dimensional molecular optimization |
| MatSci-ML Studio [18] | GUI Toolkit | Automated ML for materials science | Lowering technical barriers for surrogate modeling |
| Optuna [18] | Hyperparameter Opt. | Automated hyperparameter optimization | Tuning surrogate model parameters efficiently |
| ARD Kernel [17] | Algorithmic Feature | Automatic relevance determination kernel | Identifying critical features in GP models |

The choice between Gaussian Processes and Random Forests as surrogate models in Bayesian optimization is not a matter of absolute superiority but rather contextual appropriateness. Gaussian Processes offer principled uncertainty quantification, strong data efficiency, and robustness, particularly when equipped with anisotropic kernels like ARD. Their Bayesian nature aligns perfectly with the philosophical underpinnings of BO. Random Forests provide a powerful, distribution-free alternative with lower computational complexity and easier implementation, making them highly accessible and effective across a broad range of materials science applications.

For researchers and drug development professionals, the practical implications are clear: GP-ARD should be strongly considered for its robustness and performance, especially in lower-dimensional problems or when data is extremely limited. RFs warrant serious consideration as a close-performing alternative that is computationally more scalable and requires less expert tuning. The ongoing development of advanced GP architectures and the integration of these surrogate models into user-friendly platforms promise to further accelerate materials discovery and development in the years to come.

Bayesian Optimization (BO) has emerged as a powerful framework for optimizing expensive black-box functions, a common scenario in fields like materials science and drug development where each experiment can be costly and time-consuming [19] [20]. The core challenge BO addresses is balancing the conflicting goals of exploration (probing uncertain regions to improve the model) and exploitation (concentrating on areas known to yield good results) with a limited experimental budget [10] [21]. A BO algorithm consists of two key components: a surrogate model, typically a Gaussian Process (GP), which approximates the unknown objective function and quantifies uncertainty at unobserved points; and an acquisition function, which guides the search by determining the next most promising point to evaluate based on the surrogate model's predictions [22] [20]. The acquisition function is the decision-making engine of BO, and its choice critically impacts the efficiency and success of the optimization campaign [23]. This guide provides an in-depth examination of three fundamental acquisition functions—Expected Improvement (EI), Upper Confidence Bound (UCB), and Probability of Improvement (PI)—within the context of materials exploration research.

Mathematical Foundations of Key Acquisition Functions

Probability of Improvement (PI)

Probability of Improvement (PI) was one of the earliest acquisition functions developed for Bayesian optimization. It operates on a simple principle: select the next point that has the highest probability of improving upon the current best observed value, denoted $f(x^+)$ [22] [24]. Mathematically, this is expressed as finding the point $x$ that maximizes:

$$ \alpha_{\text{PI}}(x) = P(f(x) \geq f(x^+) + \epsilon) = \Phi\left(\frac{\mu(x) - f(x^+) - \epsilon}{\sigma(x)}\right) $$

where $\mu(x)$ and $\sigma(x)$ are the posterior mean and standard deviation from the GP surrogate model, $\Phi$ is the cumulative distribution function of the standard normal distribution, and $\epsilon$ is a user-defined trade-off parameter [24] [21]. The $\epsilon$ parameter plays a crucial role in controlling the exploration-exploitation balance. A small $\epsilon$ value makes PI highly exploitative, favoring points with a high probability of improvement even if the magnitude of improvement is small. Increasing $\epsilon$ promotes more exploratory behavior by requiring a more substantial improvement before considering a point promising [21]. While PI is conceptually straightforward and computationally simple, a key limitation is that it only considers the likelihood of improvement and ignores the potential magnitude of improvement, which can lead to overly greedy behavior and stagnation in regions of small, certain improvements [22] [21].
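
The effect of $\epsilon$ can be seen in a short numerical sketch. The function below evaluates the PI formula for a maximization problem from a given posterior mean and standard deviation; the two candidates and the incumbent value are invented for illustration and do not come from any cited study.

```python
from statistics import NormalDist

def probability_of_improvement(mu, sigma, f_best, eps=0.0):
    """PI for maximization, given posterior mean mu and std sigma at one point."""
    if sigma <= 0.0:
        # No uncertainty: improvement either happens with certainty or not at all.
        return 1.0 if mu >= f_best + eps else 0.0
    return NormalDist().cdf((mu - f_best - eps) / sigma)

f_best = 1.0                      # hypothetical current best observation
near = (1.05, 0.02)               # slightly better mean, almost no uncertainty
far = (0.90, 0.50)                # worse mean, large uncertainty

for eps in (0.0, 0.2):
    pi_near = probability_of_improvement(*near, f_best, eps)
    pi_far = probability_of_improvement(*far, f_best, eps)
    print(eps, round(pi_near, 4), round(pi_far, 4))
```

With `eps=0.0` the near-incumbent point wins, which is the greedy behavior described above; raising `eps` to 0.2 reverses the ranking in favor of the uncertain point.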

Expected Improvement (EI)

Expected Improvement (EI) addresses the primary limitation of PI by considering both the probability of improvement and the magnitude of potential improvement [22] [20]. Instead of simply calculating the probability that a point will improve upon the current best, EI computes the expected value of the improvement at each point. For a point $x$, the improvement is defined as $I(x) = \max(f(x) - f(x^+), 0)$, and EI is then the expectation of this improvement: $\alpha_{\text{EI}}(x) = \mathbb{E}[I(x)]$ [20]. When the surrogate model is a Gaussian Process, this expression has a closed-form solution:

$$ \alpha_{\text{EI}}(x) = (\mu(x) - f(x^+) - \epsilon)\Phi\left(\frac{\mu(x) - f(x^+) - \epsilon}{\sigma(x)}\right) + \sigma(x) \phi\left(\frac{\mu(x) - f(x^+) - \epsilon}{\sigma(x)}\right) $$

where $\phi$ is the probability density function of the standard normal distribution, and $\epsilon$ can optionally be used to encourage more exploration [22] [24]. The first term in the EI equation favors points with high predicted mean (exploitation), while the second term favors points with high uncertainty (exploration) [22]. This built-in balance between exploration and exploitation has made EI one of the most popular and widely used acquisition functions in practice, known for its robust performance across a variety of optimization problems [20] [24]. Its analytical tractability under Gaussian assumptions further contributes to its popularity, as it can be computed efficiently without resorting to Monte Carlo methods.
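
A minimal sketch of the closed-form EI expression follows, again for maximization. The candidate values are hypothetical; they are chosen so that a point with a nearly certain but tiny gain competes against a point with a lower mean and much larger uncertainty.

```python
from statistics import NormalDist

_STD_NORMAL = NormalDist()

def expected_improvement(mu, sigma, f_best, eps=0.0):
    """Closed-form EI for maximization under a Gaussian posterior."""
    delta = mu - f_best - eps
    if sigma <= 0.0:
        # Degenerate posterior: the improvement is known exactly.
        return max(delta, 0.0)
    z = delta / sigma
    # First term rewards a high mean, second term rewards high uncertainty.
    return delta * _STD_NORMAL.cdf(z) + sigma * _STD_NORMAL.pdf(z)

f_best = 1.0                                          # hypothetical incumbent
ei_near = expected_improvement(1.05, 0.02, f_best)    # small, almost certain gain
ei_far = expected_improvement(0.90, 0.50, f_best)     # uncertain, possibly large gain
print(round(ei_near, 4), round(ei_far, 4))
```

In this example EI ranks the uncertain point higher, because the size of the possible gain enters the score and not only its probability.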

Upper Confidence Bound (UCB)

The Upper Confidence Bound (UCB) acquisition function takes a different approach by combining the surrogate model's predicted mean and uncertainty into a simple additive form [22] [25]. For a maximization problem, UCB is defined as:

$$ \alpha_{\text{UCB}}(x) = \mu(x) + \beta \sigma(x) $$

where $\beta$ is a parameter that explicitly controls the trade-off between exploration and exploitation [22] [10]. The UCB acquisition function has a strong theoretical foundation, with proven regret bounds for certain choices of $\beta$ in finite search spaces [10]. The interpretation of UCB is intuitive: it optimistically estimates the possible function value at each point by taking the upper confidence bound of the surrogate model's prediction [25]. The $\beta$ parameter directly determines how optimistic this estimate is: larger values of $\beta$ place more weight on uncertain regions, promoting exploration, while smaller values focus on points with high predicted performance, favoring exploitation [22] [10]. This explicit control over the exploration-exploitation balance makes UCB particularly appealing in applications where the desired level of exploration is known in advance or needs to be tuned for specific problem characteristics. Unlike EI and PI, UCB does not require knowledge of the current best function value, which can be advantageous in certain implementation scenarios.
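
The influence of $\beta$ is easy to check numerically. The sketch below scores two hypothetical candidates, given as (posterior mean, posterior standard deviation) pairs, and reports which one UCB would select for a small and a large $\beta$; the numbers are illustrative only.

```python
def upper_confidence_bound(mu, sigma, beta):
    """UCB for maximization: optimistic estimate mu + beta * sigma."""
    return mu + beta * sigma

# Hypothetical candidates: (posterior mean, posterior standard deviation).
candidates = {"near_incumbent": (1.05, 0.02), "unexplored": (0.90, 0.50)}

def pick(beta):
    """Name of the candidate with the highest UCB score for this beta."""
    return max(candidates,
               key=lambda name: upper_confidence_bound(*candidates[name], beta))

print(pick(0.1))   # small beta: exploitation
print(pick(2.0))   # large beta: exploration
```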

Quantitative Comparison of Acquisition Functions

Table 1: Mathematical Properties and Characteristics of Acquisition Functions

| Acquisition Function | Mathematical Formulation | Key Parameters | Exploration-Exploitation Balance | Computational Complexity |
|---|---|---|---|---|
| Probability of Improvement (PI) | $\alpha_{\text{PI}}(x) = \Phi\left(\frac{\mu(x) - f(x^+) - \epsilon}{\sigma(x)}\right)$ | $\epsilon$ (margin) | Controlled by $\epsilon$ | Low (closed form) |
| Expected Improvement (EI) | $\alpha_{\text{EI}}(x) = (\mu(x) - f(x^+) - \epsilon)\Phi(Z) + \sigma(x)\phi(Z)$, where $Z = \frac{\mu(x) - f(x^+) - \epsilon}{\sigma(x)}$ | $\epsilon$ (optional) | Built-in balance | Low (closed form) |
| Upper Confidence Bound (UCB) | $\alpha_{\text{UCB}}(x) = \mu(x) + \beta\sigma(x)$ | $\beta$ (explicit weight) | Explicitly controlled by $\beta$ | Low (closed form) |

Table 2: Performance Characteristics and Typical Use Cases in Materials Research

| Acquisition Function | Theoretical Guarantees | Noise Tolerance | Batch Extension | Ideal Application Scenarios in Materials Science |
|---|---|---|---|---|
| Probability of Improvement (PI) | Asymptotic convergence | Moderate | Local Penalization (LP) | Refined search near promising candidates; phase boundary mapping |
| Expected Improvement (EI) | Practical efficiency | Good | q-EI, q-logEI | General-purpose optimization; materials property maximization |
| Upper Confidence Bound (UCB) | Finite-time regret bounds | Good | q-UCB | High-dimensional searches; exploration of unknown synthesis spaces |

Advanced Adaptations for Materials Science Applications

Batch Bayesian Optimization for Parallel Experimentation

In real-world materials research, experimental setups often allow parallel evaluation of multiple samples, making batch Bayesian optimization particularly valuable for reducing total research time [26] [23]. Standard acquisition functions like EI, UCB, and PI were originally designed for sequential selection but have been extended to batch settings through various strategies. Serial approaches like Local Penalization (LP) select points sequentially within a batch by artificially reducing the acquisition function in regions around already-selected points [23]. For example, UCB can be combined with LP to create the UCB/LP algorithm, which has shown good performance in noiseless conditions [23]. Parallel batch approaches like q-EI, q-logEI, and q-UCB generalize their sequential counterparts by integrating over the joint probability distribution of multiple points [23]. These Monte Carlo-based methods select all batch points simultaneously by considering their collective impact on the optimization objective. Recent research has introduced more sophisticated entropy-based batch methods like Batch Energy-Entropy Bayesian Optimization (BEEBO) and its multi-objective extension MOBEEBO, which explicitly model correlations between batch points to reduce redundancy and enhance diversity in batch selection [26].
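
The idea behind serial batch construction, reducing the acquisition value around points already placed in the batch, can be sketched as follows. This is a deliberately simplified stand-in: it uses a Gaussian-shaped distance penalty with a hand-picked `radius` over a finite candidate list, not the Lipschitz-based penalizer of the published Local Penalization method, and it assumes non-negative acquisition scores. All names and values are hypothetical.

```python
import math

def select_batch(candidates, scores, batch_size, radius=0.2):
    """Greedily pick batch_size candidate indices, damping scores near earlier picks."""
    penalized = list(scores)          # assumed non-negative (e.g. EI values)
    chosen = []
    for _ in range(batch_size):
        remaining = [i for i in range(len(candidates)) if i not in chosen]
        best = max(remaining, key=lambda i: penalized[i])
        chosen.append(best)
        for i in remaining:
            d = math.dist(candidates[i], candidates[best])
            # Factor is 0 at the selected point and approaches 1 far away from it.
            penalized[i] *= 1.0 - math.exp(-(d / radius) ** 2)
    return chosen

# Hypothetical 1-D design space: three clustered high-scoring points, two distant ones.
points = [(0.50,), (0.52,), (0.48,), (0.10,), (0.90,)]
acq = [1.00, 0.98, 0.97, 0.60, 0.50]
print(select_batch(points, acq, batch_size=3))
```

Without the penalty, the three highest scores would all come from the cluster around 0.5; with it, the batch spreads across the design space.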

Targeted Materials Discovery with Custom Experimental Goals

Materials research often involves goals beyond simple optimization, such as discovering materials with specific property combinations or mapping particular regions of interest in the design space [19]. The Bayesian Algorithm Execution (BAX) framework addresses these needs by allowing researchers to define custom target subsets of the design space through algorithmic descriptions, which are then automatically translated into acquisition strategies like InfoBAX, MeanBAX, and SwitchBAX [19]. For instance, a researcher might want to find all synthesis conditions that produce nanoparticles within a specific size range for catalytic applications—a goal that goes beyond finding a single optimal point [19]. This approach enables targeting of complex experimental goals without requiring the design of custom acquisition functions from scratch, making advanced Bayesian optimization more accessible to materials scientists [19].

Hybrid and Adaptive Acquisition Strategies

Recent research has explored hybrid acquisition functions that dynamically combine the strengths of different approaches. The Threshold-Driven UCB-EI Bayesian Optimization (TDUE-BO) method begins with exploration-focused UCB and transitions to exploitative EI as model uncertainty decreases, enabling more efficient navigation of high-dimensional material design spaces [27]. Quantitative measures like Observation Traveling Salesman Distance (OTSD) and Observation Entropy (OE) have been developed to quantify the exploration characteristics of acquisition functions, providing researchers with tools to analyze and compare different strategies more systematically [10]. Adaptive strategies like SwitchBAX automatically switch between different acquisition policies (e.g., InfoBAX and MeanBAX) based on dataset size and model confidence, ensuring robust performance across different stages of the experimental campaign [19].

Experimental Protocols and Case Studies in Materials Research

Protocol for Benchmarking Acquisition Functions

A standardized protocol for evaluating acquisition functions in materials research involves several key steps [23]:

- Initialization: Begin with an initial dataset, typically 20-50 points selected via Latin Hypercube Sampling (LHS) to ensure good coverage of the parameter space without clustering.

- Surrogate Modeling: Employ a Gaussian Process with an ARD Matérn 5/2 kernel, which provides a flexible prior for modeling complex material response surfaces. Hyperparameters should be optimized by maximizing the marginal log-likelihood.

- Acquisition Optimization: For sequential methods, use quasi-Newton or other deterministic optimizers to find the point that maximizes the acquisition function. For batch methods, especially Monte Carlo variants, use stochastic gradient descent with multiple restarts.

- Evaluation and Iteration: Evaluate selected points (either physically or through simulation), update the surrogate model, and repeat until the experimental budget is exhausted.

- Performance Assessment: Compare acquisition functions based on convergence efficiency (number of iterations to reach a target performance), final best value discovered, and robustness across different initial conditions.
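
The initialization step of this protocol can be sketched with a small Latin Hypercube sampler. The version below is a plain-Python illustration, not the implementation used in [23]: each parameter range is split into as many equal-width strata as there are samples, one point is drawn per stratum, and the strata are shuffled independently per dimension. The parameter bounds in the example are hypothetical.

```python
import random

def latin_hypercube(n_samples, bounds, seed=None):
    """Return n_samples points with exactly one point per stratum in every dimension."""
    rng = random.Random(seed)
    columns = []
    for low, high in bounds:
        # One uniform draw inside each of the n_samples equal-width strata of [0, 1).
        unit = [(k + rng.random()) / n_samples for k in range(n_samples)]
        rng.shuffle(unit)             # decouple the strata order across dimensions
        columns.append([low + u * (high - low) for u in unit])
    return [tuple(col[i] for col in columns) for i in range(n_samples)]

# Hypothetical 2-D design: annealing temperature (deg C) and precursor concentration (M).
initial_design = latin_hypercube(20, [(20.0, 80.0), (0.1, 1.0)], seed=1)
print(initial_design[:3])
```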

Case Study: Optimization of Flexible Perovskite Solar Cells

A recent study compared acquisition functions for maximizing the power conversion efficiency (PCE) of flexible perovskite solar cells, a complex 4-dimensional optimization problem involving multiple synthesis parameters [23]. Researchers built an empirical regression model from experimental data and compared serial UCB/LP against Monte Carlo batch methods qUCB and q-logEI. The results demonstrated that qUCB achieved the most reliable performance, converging to high-efficiency regions with fewer experimental iterations while maintaining reasonable noise immunity [23]. This finding suggests qUCB as a promising default choice for optimizing materials synthesis processes when prior knowledge of the landscape is limited.

Case Study: Multi-Objective Nanoparticle Synthesis

In TiO₂ nanoparticle synthesis, researchers employed the BAX framework to target specific regions of the design space corresponding to desired size and crystallinity characteristics [19]. By expressing their experimental goal as an algorithm that would identify the target subset if the underlying function were known, they used InfoBAX and MeanBAX to efficiently guide experiments toward synthesis conditions meeting their precise specifications, significantly outperforming standard approaches like EI and UCB for this targeted discovery task [19].

Implementation and Decision Framework

The Scientist's Toolkit: Essential Components for Bayesian Optimization

Table 3: Essential Computational Tools and Their Functions in Bayesian Optimization

| Tool Category | Specific Examples | Function in Bayesian Optimization Workflow |
|---|---|---|
| Surrogate Models | Gaussian Process (GP) with ARD Matérn 5/2 kernel | Provides probabilistic predictions of the objective function and uncertainty quantification at unobserved points |
| Optimization Libraries | BoTorch, Emukit, Scikit-Optimize | Offer implementations of acquisition functions and optimization algorithms for efficient candidate selection |
| Experimental Design Utilities | Latin Hypercube Sampling (LHS) | Generates space-filling initial designs for efficient exploration of the parameter space before Bayesian optimization begins |
| Parallelization Frameworks | q-UCB, q-EI, Local Penalization | Enable simultaneous evaluation of multiple experimental conditions in batch settings |

Workflow Diagram for Acquisition Function Selection

Practical Implementation Guidelines

Based on empirical studies across materials science applications, the following practical guidelines emerge for selecting acquisition functions [23]:

- For general-purpose optimization with unknown landscapes: qUCB demonstrates robust performance across various functional landscapes and reasonable noise immunity, making it a safe default choice, particularly in batch settings [23].

- When computational efficiency is paramount: EI provides a good balance between exploration and exploitation with minimal parameter tuning and closed-form computation [20] [24].

- For targeted discovery of specific regions: Frameworks like BAX that translate algorithmic experimental goals into acquisition strategies outperform standard approaches for subset estimation tasks [19].

- During different optimization phases: Consider adaptive approaches like TDUE-BO that begin with exploratory UCB and transition to exploitative EI as uncertainty decreases [27].

- For high-noise environments: Monte Carlo acquisition functions like qUCB and q-logEI typically show better convergence and less sensitivity to initial conditions compared to serial approaches [23].

The selection of an appropriate acquisition function is a critical decision in designing effective Bayesian optimization campaigns for materials research. Expected Improvement offers a well-balanced default choice for many single-objective optimization problems, while Upper Confidence Bound provides explicit control over exploration and demonstrates strong performance in batch settings and high-dimensional spaces. Probability of Improvement serves specialized needs for focused exploitation in later stages of optimization. Recent advances in hybrid methods like TDUE-BO and framework-based approaches like BAX extend these core acquisition functions to address the complex, targeted discovery goals common in modern materials science. By understanding the mathematical foundations, performance characteristics, and practical implementation considerations of these acquisition functions, researchers can make informed decisions that accelerate materials discovery and development.

Bayesian optimization (BO) has established itself as a powerful paradigm for optimizing expensive-to-evaluate black-box functions, finding significant application in scientific and engineering fields such as materials science [17] [3] and drug discovery [28]. Its sample efficiency makes it particularly valuable when each function evaluation is costly, time-consuming, or requires physical experimentation. However, a persistent challenge restricts its broader application: a pronounced performance degradation in high-dimensional spaces. It is widely recognized that the efficiency of standard BO begins to decline noticeably around 20 dimensions [29] [28], a threshold cited both in the literature and in practitioner folklore. This article delves into the fundamental reasons behind this dimensional limitation, explores advanced methodologies designed to overcome it, and provides a technical guide for researchers aiming to apply BO to high-dimensional problems in domains like materials exploration.

The Core Challenge: The Curse of Dimensionality

The "curse of dimensionality" (COD) refers to a collection of phenomena that arise when analyzing and organizing data in high-dimensional spaces, which do not occur in low-dimensional settings. For Bayesian optimization, this curse manifests in several specific and debilitating ways.

Exponential Growth of Search Space and Data Sparsity

The most intuitive facet of the COD is the exponential growth of the search volume with increasing dimensions. As the number of dimensions $d$ increases, the number of points required to maintain the same sampling density over the search space grows exponentially. This leads to an intrinsic data sparsity in high dimensions; the small number of samples typically affordable for expensive optimization problems becomes insufficient to cover the vast space adequately. Consequently, the average distance between randomly sampled points in a $d$-dimensional hypercube increases, often proportionally to $\sqrt{d}$ [28], making it difficult to build accurate global surrogate models from limited data.
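
The $\sqrt{d}$ growth can be checked with a short Monte Carlo experiment. For two independent uniform coordinates $u, v \in [0, 1]$, $\mathbb{E}[(u - v)^2] = 1/6$, so the root-mean-square distance between two random points in the unit hypercube is exactly $\sqrt{d/6}$, and the mean distance approaches this value as $d$ grows. The sketch below estimates the mean distance empirically; the sample size and seed are arbitrary choices.

```python
import math
import random

def mean_pairwise_distance(d, n_pairs=2000, seed=0):
    """Monte Carlo estimate of the mean distance between two uniform points in [0, 1]^d."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_pairs):
        a = [rng.random() for _ in range(d)]
        b = [rng.random() for _ in range(d)]
        total += math.dist(a, b)
    return total / n_pairs

for d in (2, 10, 100):
    # Compare the empirical mean with the sqrt(d / 6) reference value.
    print(d, round(mean_pairwise_distance(d), 3), round(math.sqrt(d / 6.0), 3))
```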

Failure of Model and Acquisition Function Components

The COD directly impacts the two core components of the BO algorithm:

- Gaussian Process Model Degradation: The accuracy of the Gaussian Process (GP) surrogate model, the workhorse of BO, heavily depends on the distance between data points. In high dimensions, the increased average distance between points weakens the correlation captured by the kernel function, leading to poor model predictions [28]. Furthermore, fitting the GP model involves optimizing its hyperparameters (e.g., length scales). In high dimensions, the likelihood function for these hyperparameters can suffer from vanishing gradients, causing the optimization to fail and resulting in a poorly conditioned model [28].

- Acquisition Function Optimization Becomes Intractable: Even with a reasonably accurate surrogate model, the subsequent step of optimizing the acquisition function to select the next evaluation point becomes exponentially more difficult. The acquisition function is often highly non-convex and multi-modal. Optimizing this function in a high-dimensional space is a challenging global optimization problem in its own right, and inaccurate solutions at this stage severely compromise the efficiency of the overall BO process [30] [31].

Quantitative Performance Benchmarking in Materials Science

Empirical evidence from materials science underscores BO's performance characteristics across dimensions. Benchmarking studies across diverse experimental systems—including carbon nanotube-polymer blends, silver nanoparticles, and perovskites—reveal how the choice of surrogate model impacts robustness in moderate dimensions.

The table below summarizes key findings from a comprehensive benchmarking study performed across five real-world experimental materials datasets [17]:

| Surrogate Model | Key Characteristic | Performance on High-Dimensional Problems |
|---|---|---|
| GP with Isotropic Kernel | Uses a single length scale for all dimensions | Performance decreases significantly as dimensionality increases |
| GP with Anisotropic Kernel (ARD) | Assigns independent length scales to each dimension | Most robust performance across varied materials datasets |
| Random Forest (RF) | Non-parametric, no distributional assumptions | Comparable performance to GP with ARD; a viable alternative |

This study highlights that standard BO components (like an isotropic GP) are indeed inadequate for higher dimensions. In contrast, models that can adapt to variable sensitivity across dimensions (like GP with ARD) show markedly better performance. RF also emerges as a strong candidate due to its different underlying assumptions and lower computational complexity [17].

Methodologies for High-Dimensional Bayesian Optimization

To combat the curse of dimensionality, researchers have developed sophisticated methods that move beyond the standard BO framework. The following table categorizes and describes the predominant strategies.

| Method Category | Core Assumption | Representative Algorithms | Brief Mechanism |
|---|---|---|---|
| Variable Selection | Only a small subset of variables is influential. | MCTS-VS [31], SAASBO [30] | Identifies and optimizes only the most "active" variables. |
| Subspace Embedding | The function varies primarily in a low-dimensional subspace. | REMBO [32] [31], BAxUS [31] | Projects high-D space to a low-D subspace for optimization. |
| Decomposition | The function is additive over low-dimensional subspaces. | Add-GP-UCB [32] [31] | Decomposes the function into lower-dimensional components. |
| Local & Coordinate Search | Local regions or coordinates can be optimized sequentially. | TuRBO [32], ECI-BO [31], TAS-BO [32] | Uses trust regions or coordinate-wise optimization to focus search. |

Detailed Experimental Protocols

To ensure reproducibility and provide a practical guide, we outline the experimental protocols for two key methodological approaches: one based on local search and another on coordinate descent.

TAS-BO enhances the local search capability of standard BO by incorporating a secondary, local modeling step.

- Global Model Fitting: Fit a global GP model $\mathcal{M}_G$ to the entire set of existing observations.

- Candidate Point Selection: Optimize a global acquisition function (e.g., Expected Improvement) based on $\mathcal{M}_G$ to locate a candidate point $\mathbf{x}_c$.

- Local Model Fitting: Define a local region around $\mathbf{x}_c$ (e.g., a trust region). Fit a new, local GP model $\mathcal{M}_L$ using only the data points within this region.

- Infill Point Selection: Optimize a local acquisition function based on $\mathcal{M}_L$ to find a new point $\mathbf{x}_\text{new}$. This step acts as a "fine-tuning" mechanism.

- Evaluation and Update: Evaluate the expensive objective function at $\mathbf{x}_\text{new}$ and add the new observation to the dataset. Repeat the process from the global model fitting step.

This coarse-to-fine search strategy prevents the optimizer from becoming overly reliant on the potentially inaccurate global model, thereby improving performance on high-dimensional problems [32].

ECI-BO tackles high-dimensional acquisition function optimization by breaking it down into a sequence of one-dimensional problems.

- Initialization: Start with an initial dataset and fit a global GP model.

- ECI Calculation: For each coordinate $i$, compute the Expected Coordinate Improvement (ECI). The ECI measures the potential improvement achievable by moving from the current best solution $\mathbf{x}^*$ along only the $i$-th coordinate. It has a closed-form expression similar to the conventional EI.

- Coordinate Selection: Select the coordinate $j$ with the highest maximal ECI value.

- One-Dimensional Optimization: Perform a one-dimensional global optimization of the acquisition function along the selected coordinate $j$, while keeping all other coordinates fixed at their values in $\mathbf{x}^*$. This is a tractable 1D problem.

- Evaluation and Iteration: Evaluate the new point, update the model, and cycle through all coordinates based on their ECI values. This ensures all dimensions are gradually optimized.

The primary advantage of ECI-BO is that it transforms the difficult high-dimensional acquisition function optimization into a series of easy one-dimensional optimizations [31].
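
The coordinate-wise idea can be illustrated with a generic sketch. It is not the ECI-BO implementation from [31]: a user-supplied acquisition callable `acq` stands in for the closed-form ECI, and a uniform grid replaces the one-dimensional global optimizer. The toy acquisition function and starting point are invented for the example.

```python
def best_coordinate_move(acq, x_best, bounds, grid_size=101):
    """Search each coordinate on a 1-D grid with the others fixed at x_best.

    Returns (coordinate index, new point, acquisition value) of the best move.
    """
    best = None
    for i, (low, high) in enumerate(bounds):
        for k in range(grid_size):
            x = list(x_best)
            x[i] = low + (high - low) * k / (grid_size - 1)   # vary coordinate i only
            value = acq(x)
            if best is None or value > best[2]:
                best = (i, x, value)
    return best

# Toy acquisition surface (hypothetical): far more sensitive to the second coordinate.
def toy_acq(x):
    return -(x[0] - 0.3) ** 2 - 10.0 * (x[1] - 0.8) ** 2

coord, x_new, value = best_coordinate_move(toy_acq, [0.5, 0.5], [(0.0, 1.0), (0.0, 1.0)])
print(coord, x_new, value)
```

Each call costs only `len(bounds) * grid_size` acquisition evaluations, which is the source of the tractability argument made above.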

Workflow Visualization: High-Dimensional BO Strategies

The following diagram illustrates the logical relationships and decision pathways between the core strategies for high-dimensional Bayesian optimization.

The Scientist's Toolkit: Essential Research Reagents for BO

Applying Bayesian optimization effectively, especially in a high-dimensional context, requires both computational and domain-specific tools. The table below details key "research reagents" and their functions.

| Tool / Reagent | Function / Purpose | Relevance to High-Dimensional BO |
|---|---|---|
| Gaussian Process (GP) with ARD | A surrogate model that automatically learns the relevance of each input dimension. | Mitigates COD by identifying insensitive dimensions, allowing the model to focus on important variables [17]. |
| Trust Region | A dynamically adjusted search region that focuses on a local area around the current best solution. | Enables effective local search and prevents over-reliance on an inaccurate global model in high dimensions [32] [28]. |
| Random Forest (RF) Surrogate | An alternative, non-probabilistic tree-based model for approximating the objective function. | Provides a robust, less computationally intensive alternative to GP for initial benchmarking [17]. |
| Acquisition Function (e.g., EI, UCB) | A utility function that guides the selection of the next point to evaluate by balancing exploration and exploitation. | Its optimization becomes a key bottleneck in high dimensions, necessitating specialized techniques [30] [31]. |
| Random Embedding Matrix | A linear projection that maps a high-dimensional space to a randomly generated low-dimensional subspace. | Forms the basis for embedding-based methods (e.g., REMBO), enabling BO in a lower-dimensional space [32] [30]. |
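
As a rough illustration of the random embedding entry above, the sketch below draws a Gaussian random matrix and uses it to lift a low-dimensional point into the full design space, clipping to the box bounds. This follows the general idea of embedding-based methods such as REMBO, but the function names, dimensions, and bounds are assumptions made for the example and not a reproduction of any cited implementation.

```python
import random

def make_random_embedding(high_dim, low_dim, seed=None):
    """Random Gaussian matrix A with shape (high_dim, low_dim)."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(low_dim)] for _ in range(high_dim)]

def lift(z, A, low=-1.0, high=1.0):
    """Map a low-dimensional point z to the full space as clip(A z)."""
    return [min(high, max(low, sum(a * zj for a, zj in zip(row, z)))) for row in A]

# Hypothetical setting: 50 descriptors searched through a 3-dimensional subspace.
A = make_random_embedding(high_dim=50, low_dim=3, seed=0)
x_full = lift([0.5, -0.2, 0.1], A)
print(len(x_full), min(x_full), max(x_full))
```

The optimizer then proposes points `z` in the 3-dimensional space only, and each proposal is evaluated at `lift(z, A)` in the original 50-dimensional space.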

The challenge of scaling Bayesian optimization beyond approximately 20 dimensions is a direct consequence of the curse of dimensionality, which fundamentally undermines the accuracy of surrogate models and the tractability of acquisition function optimization. The often-cited 20-dimensional threshold is not a hard limit but a reflection of the point where these issues become critically pronounced for standard BO implementations. However, as evidenced by active research, this barrier is not insurmountable. Methodologies such as variable selection, subspace embedding, additive decomposition, and localized or coordinate-wise search offer powerful pathways forward. For researchers in materials science and drug development, the key to success lies in carefully matching the choice of high-dimensional BO method to the known or suspected structure of the problem at hand, leveraging benchmarking studies and robust, well-understood algorithms like GP with ARD or TuRBO as a starting point for navigating the vast and complex landscapes of high-dimensional optimization.

In the realm of materials science, the exploration of vast parameter spaces—encompassing synthesis conditions, processing parameters, and compositional variations—is a fundamental challenge. Bayesian optimization (BO) has emerged as a powerful, data-efficient framework for navigating these complex, high-dimensional landscapes. A critical component that determines the success of BO is the surrogate model, which uses a kernel function to model the similarity between different data points in the input space. The standard isotropic kernel, which assumes uniform variability across all input dimensions, is often ill-suited for materials research. In real-world scenarios, the impact of different material parameters on a target property can vary significantly; some parameters may have a profound effect, while others are nearly irrelevant. Automatic Relevance Determination (ARD) addresses this by employing anisotropic kernels that learn a distinct length-scale parameter for each input dimension during the model training process. These length-scales act as weights, automatically identifying and quantifying the relative importance of each synthesis variable or material descriptor, thereby making the optimization process in materials discovery not only more efficient but also more interpretable [33] [34].

Mathematical Foundation of ARD and Anisotropic Kernels

From Isotropic to Anisotropic Kernels

The fundamental difference between a standard isotropic kernel and an ARD-enabled anisotropic kernel lies in the structure of their distance metrics.

Isotropic Radial Basis Function (RBF) Kernel: The standard RBF kernel is defined as: ( K_{\text{iso}}(\mathbf{x}, \mathbf{x}') = \sigma_f^2 \exp\left(-\frac{1}{2\ell^2} \|\mathbf{x} - \mathbf{x}'\|^2\right) ) Here, ( \sigma_f^2 ) is the signal variance and ( \ell ) is a single scalar length-scale parameter that governs the sensitivity of the function across all input dimensions. A small change in any dimension impacts the similarity measure equally [33].

Anisotropic ARD Kernel: The anisotropic version generalizes the scalar length-scale into a vector of length-scales, ( \mathbf{\ell} = (\ell_1, \ell_2, \ldots, \ell_d) ), where ( d ) is the dimensionality of the input space. The kernel function becomes: ( K_{\text{aniso}}(\mathbf{x}, \mathbf{x}') = \sigma_f^2 \exp\left(-\frac{1}{2} \sum_{i=1}^{d} \frac{(x_i - x_i')^2}{\ell_i^2}\right) ) This can be equivalently expressed as ( \sigma_f^2 \exp\left(-\frac{1}{2} (\mathbf{x} - \mathbf{x}')^T \Sigma^{-1} (\mathbf{x} - \mathbf{x}')\right) ), where ( \Sigma^{-1} ) is a diagonal matrix with diagonal elements ( 1/\ell_i^2 ) [33] [34]. The inverse of the length-scale, ( 1/\ell_i ), can be interpreted as the relevance of the ( i )-th feature. A small length-scale (( \ell_i \to 0 )) means that the function is highly sensitive to changes in that dimension, indicating a highly relevant parameter. Conversely, a large length-scale (( \ell_i \to \infty )) smooths out the function's variation along that dimension, effectively marking that parameter as irrelevant [33].
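A minimal NumPy sketch of the two kernels makes the difference concrete. The function names `rbf_iso` and `rbf_ard` and the example length-scales are assumptions for illustration and are not taken from any particular library.

```python
import numpy as np

def rbf_iso(X1, X2, lengthscale=1.0, signal_var=1.0):
    """Isotropic RBF kernel: one length-scale shared by all dimensions."""
    diff = X1[:, None, :] - X2[None, :, :]
    return signal_var * np.exp(-0.5 * np.sum(diff**2, axis=-1) / lengthscale**2)

def rbf_ard(X1, X2, lengthscales, signal_var=1.0):
    """Anisotropic (ARD) RBF kernel: one length-scale per input dimension."""
    ls = np.asarray(lengthscales, dtype=float)
    diff = (X1[:, None, :] - X2[None, :, :]) / ls
    return signal_var * np.exp(-0.5 * np.sum(diff**2, axis=-1))

# Same step of 0.5, taken along a "relevant" and an "irrelevant" dimension.
x = np.array([[0.0, 0.0]])
ls = [0.1, 10.0]                                   # short scale: relevant; long: irrelevant
k0 = rbf_ard(x, np.array([[0.5, 0.0]]), ls)[0, 0]  # exp(-12.5), similarity collapses
k1 = rbf_ard(x, np.array([[0.0, 0.5]]), ls)[0, 0]  # exp(-0.00125), nearly unchanged

# With equal length-scales the ARD kernel reduces to the isotropic one.
X = np.random.default_rng(0).uniform(size=(5, 2))
same = np.allclose(rbf_ard(X, X, [2.0, 2.0]), rbf_iso(X, X, 2.0))
```

Moving along the dimension with the short length-scale destroys the similarity between the two points, while the same move along the long length-scale dimension leaves it almost untouched, which is the behavior the relevance interpretation relies on.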

Common ARD Kernel Functions and Their Properties

The ARD framework can be applied to a variety of kernel functions. The table below summarizes the most commonly used ones in materials informatics.

Table 1: Common ARD Kernel Functions and Their Properties

| Kernel Name | Mathematical Formulation (with ARD) | Key Properties and Use-Cases |

|---|---|---|

| ARD-RBF Kernel | ( K(\mathbf{x}, \mathbf{x}') = \sigma_f^2 \exp\left(-\frac{1}{2} \sum_{i=1}^{d} \frac{(x_i - x_i')^2}{\ell_i^2}\right) ) | Universally applicable; assumes smooth, infinitely differentiable functions. Excellent for modeling continuous material properties [33] [35]. |

| ARD Matérn Kernel | ( K(\mathbf{x}, \mathbf{x}') = \sigma_f^2 \frac{2^{1-\nu}}{\Gamma(\nu)} \left( \sqrt{2\nu} \sqrt{ \sum_{i=1}^{d} \frac{(x_i - x_i')^2}{\ell_i^2} } \right)^\nu K_\nu \left( \sqrt{2\nu} \sqrt{ \sum_{i=1}^{d} \frac{(x_i - x_i')^2}{\ell_i^2} } \right) ) | Less smooth than RBF; flexibility controlled by ( \nu ) (e.g., ( \nu=3/2, 5/2 )). Useful for modeling properties with more irregular, rough landscapes [36]. |

| ARD Linear Kernel | ( K(\mathbf{x}, \mathbf{x}') = \sigma_0^2 + \sum_{i=1}^{d} \sigma_i^2 x_i x_i' ) | Models linear relationships. The variance parameters ( \sigma_i^2 ) perform the role of relevance weights [35]. |
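For the half-integer values of ( \nu ) mentioned in Table 1, the Matérn kernel reduces to well-known closed forms that avoid the modified Bessel function ( K_\nu ). The shorthand ( r ) for the ARD-scaled distance is introduced here for readability and is not part of the original table.

```latex
r = \sqrt{\sum_{i=1}^{d} \frac{(x_i - x_i')^2}{\ell_i^2}}

K_{\nu = 3/2}(\mathbf{x}, \mathbf{x}') = \sigma_f^2 \left(1 + \sqrt{3}\, r\right) \exp\left(-\sqrt{3}\, r\right)

K_{\nu = 5/2}(\mathbf{x}, \mathbf{x}') = \sigma_f^2 \left(1 + \sqrt{5}\, r + \tfrac{5}{3} r^2\right) \exp\left(-\sqrt{5}\, r\right)
```

In the limit ( \nu \to \infty ) the Matérn kernel converges to the ARD-RBF kernel in the first row of the table.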

ARD in Practice: Methodologies for Materials Discovery

Integration with Gaussian Process Regression and BO

In a typical Bayesian optimization loop for materials discovery, a Gaussian Process (GP) surrogate model is placed at the core. The integration of ARD into this workflow involves:

- Prior Definition: Place priors over the anisotropic kernel's hyperparameters: the length-scales ( \mathbf{\ell} ) and the signal variance ( \sigma_f^2 ).

- Posterior Inference: After collecting a set of experimental data ( \{\mathbf{X}, \mathbf{y}\} ), compute the posterior distribution of the GP. This involves estimating the hyperparameters by maximizing the log marginal likelihood: ( \log p(\mathbf{y} | \mathbf{X}, \mathbf{\ell}, \sigma_f^2, \sigma_n^2) = -\frac{1}{2} \mathbf{y}^T (K + \sigma_n^2\mathbf{I})^{-1} \mathbf{y} - \frac{1}{2} \log |K + \sigma_n^2\mathbf{I}| - \frac{n}{2} \log 2\pi ) where ( K ) is the covariance matrix built using the anisotropic kernel, ( \sigma_n^2 ) is the observation-noise variance, and ( n ) is the number of observations [34] [15].

- Relevance Extraction: The optimized length-scale values ( \ell_i ) are directly interpreted. Dimensions with the smallest length-scales are identified as the most critical for the target material property.

- Informed Data Acquisition: The BO acquisition function (e.g., Expected Improvement), now informed by the more accurate and structured uncertainty estimate from the ARD-GP model, selects the next most promising experiment to perform [19] [37].
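The steps above can be sketched in plain NumPy. This is a simplified illustration under stated assumptions: the hyperparameters are fixed by hand rather than optimized, the objective is a toy function that depends only on the first input, Expected Improvement is written for maximization, and all function names are hypothetical.

```python
import math
import numpy as np

def ard_rbf(X1, X2, ls, sf2):
    diff = (X1[:, None, :] - X2[None, :, :]) / ls
    return sf2 * np.exp(-0.5 * np.sum(diff**2, axis=-1))

def log_marginal_likelihood(X, y, ls, sf2, sn2):
    """Log marginal likelihood of a zero-mean GP, computed via Cholesky."""
    n = len(y)
    L = np.linalg.cholesky(ard_rbf(X, X, ls, sf2) + sn2 * np.eye(n))
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    # -0.5 * log|K + sn2 I| equals -sum(log(diag(L)))
    return -0.5 * y @ alpha - np.sum(np.log(np.diag(L))) - 0.5 * n * np.log(2 * np.pi)

def posterior(X, y, Xs, ls, sf2, sn2):
    """Posterior mean and standard deviation of the latent function at Xs."""
    L = np.linalg.cholesky(ard_rbf(X, X, ls, sf2) + sn2 * np.eye(len(y)))
    Ks = ard_rbf(X, Xs, ls, sf2)
    mean = Ks.T @ np.linalg.solve(L.T, np.linalg.solve(L, y))
    v = np.linalg.solve(L, Ks)
    var = np.maximum(sf2 - np.sum(v**2, axis=0), 1e-12)
    return mean, np.sqrt(var)

def expected_improvement(mean, std, best):
    """Expected Improvement over `best` for a maximization problem."""
    z = (mean - best) / std
    pdf = np.exp(-0.5 * z**2) / np.sqrt(2 * np.pi)
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / np.sqrt(2)))
    return (mean - best) * cdf + std * pdf

rng = np.random.default_rng(0)
X = rng.uniform(size=(12, 2))
y = np.sin(6.0 * X[:, 0])            # toy property: only the first input matters
ls = np.array([0.3, 5.0])            # assumed length-scales (not fitted here)
Xs = rng.uniform(size=(200, 2))      # random candidate experiments
mean, std = posterior(X, y, Xs, ls, 1.0, 1e-4)
ei = expected_improvement(mean, std, y.max())
x_next = Xs[np.argmax(ei)]           # next experiment suggested by EI
```

In a real campaign the length-scales would come from maximizing `log_marginal_likelihood`, and the candidate set would be replaced by a proper optimizer over the acquisition function.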

Protocol for an ARD-Driven Materials Exploration Campaign

The following diagram illustrates the closed-loop, autonomous experimental workflow powered by ARD.

Diagram 1: ARD-driven materials workflow.

Detailed Experimental Protocol:

- Initial Design of Experiments (DOE): Begin with a space-filling initial design (e.g., Latin Hypercube Sampling) to get a low-resolution baseline of the parameter space. A typical initial size is 5-10 points per dimension [37] [34].

- Model Training and Hyperparameter Optimization: Fit the ARD-GP model to the current dataset. This is a critical step where the length-scales are learned. Optimization is typically done using gradient-based methods (e.g., L-BFGS-B). The protocol should include multiple restarts from different initial points to avoid poor local optima [34].

- Relevance Analysis and Model Diagnostics: Analyze the converged length-scales. Parameters with ( \ell_i ) orders of magnitude larger than others can be considered irrelevant and potentially fixed in subsequent iterations, effectively reducing the dimensionality of the problem.

- Iterative Experimentation: The loop (steps 2-4 in Diagram 1) continues until a stopping criterion is met, such as the discovery of a material satisfying the target property, exhaustion of the experimental budget, or convergence of the acquisition function.
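The first three protocol steps can be sketched as follows, assuming NumPy and SciPy are available. The Latin hypercube sampler, the hyperparameter bounds, the number of restarts, and the toy response are illustrative assumptions rather than values prescribed by the protocol; gradients are approximated by finite differences inside `scipy.optimize.minimize`.

```python
import numpy as np
from scipy.optimize import minimize

def latin_hypercube(n, d, rng):
    """Space-filling design on [0, 1]^d: one point per stratum in each dimension."""
    strata = np.stack([rng.permutation(n) for _ in range(d)], axis=1)
    return (strata + rng.uniform(size=(n, d))) / n

def neg_lml(theta, X, y):
    """Negative log marginal likelihood; theta = log of [l_1..l_d, sf2, sn2]."""
    d = X.shape[1]
    ls, sf2, sn2 = np.exp(theta[:d]), np.exp(theta[d]), np.exp(theta[d + 1])
    diff = (X[:, None, :] - X[None, :, :]) / ls
    K = sf2 * np.exp(-0.5 * np.sum(diff**2, axis=-1)) + (sn2 + 1e-8) * np.eye(len(y))
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    return 0.5 * y @ alpha + np.sum(np.log(np.diag(L))) + 0.5 * len(y) * np.log(2 * np.pi)

def fit_ard(X, y, rng, n_restarts=8):
    """Multi-restart L-BFGS-B over log-hyperparameters; returns fitted length-scales."""
    d = X.shape[1]
    bounds = [(np.log(0.05), np.log(20.0))] * d          # length-scales (assumed range)
    bounds += [(np.log(1e-2), np.log(1e2))]              # signal variance
    bounds += [(np.log(1e-4), np.log(1.0))]              # noise variance
    best = None
    for _ in range(n_restarts):
        theta0 = np.array([rng.uniform(lo, hi) for lo, hi in bounds])
        res = minimize(neg_lml, theta0, args=(X, y), method="L-BFGS-B", bounds=bounds)
        if best is None or res.fun < best.fun:
            best = res                                   # keep the best local optimum
    return np.exp(best.x[:d])

rng = np.random.default_rng(0)
d = 2
X_init = latin_hypercube(10 * d, d, rng)   # 10 points per dimension
y = np.sin(6.0 * X_init[:, 0])             # toy response: only x_0 is relevant
ls = fit_ard(X_init, y, rng)
relevance = 1.0 / ls                       # larger value means more relevant input
```

Inputs whose fitted length-scale sits at or near the upper bound are candidates for being fixed in later iterations, as described in the relevance analysis step.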

Advanced ARD Methodologies and Research Frontiers

Beyond Standard ARD-GP: Multi-Output and Hierarchical Models

Materials discovery often involves optimizing multiple properties simultaneously. Standard ARD-GP models are single-task. Recent advances focus on capturing correlations between distinct material properties:

- Multi-Task Gaussian Processes (MTGPs): MTGPs use a coregionalization matrix to model linear correlations between different property outputs. When combined with ARD kernels for the input space, they can learn feature relevance while sharing information across correlated tasks (e.g., optimizing for both high bulk modulus and low thermal expansion coefficient in high-entropy alloys), significantly accelerating the discovery process [15].

- Deep Gaussian Processes (DGPs): DGPs stack multiple GP layers, creating a hierarchical, more expressive model. This allows for learning highly complex, non-linear relationships between inputs and outputs. DGP-based BO has been shown to outperform conventional GP-BO in complex multi-objective optimization tasks within high-entropy alloy spaces [15].
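One common way to combine a coregionalization matrix with an ARD input kernel is a Kronecker-structured covariance, sketched below. This form (often called the intrinsic coregionalization model) is an assumption for illustration and is not necessarily the formulation used in [15]; the rank-1 weights `W`, the diagonal term `kappa`, and the length-scales are arbitrary example values.

```python
import numpy as np

def ard_rbf(X1, X2, ls):
    diff = (X1[:, None, :] - X2[None, :, :]) / np.asarray(ls, dtype=float)
    return np.exp(-0.5 * np.sum(diff**2, axis=-1))

rng = np.random.default_rng(0)
n, d, T = 6, 3, 2                       # samples, input dimensions, tasks (properties)
X = rng.uniform(size=(n, d))

W = rng.standard_normal((T, 1))         # rank-1 mixing weights shared across tasks
kappa = np.array([0.1, 0.1])            # task-specific variance
B = W @ W.T + np.diag(kappa)            # coregionalization matrix (positive definite)

K_x = ard_rbf(X, X, [0.2, 1.0, 5.0])    # ARD kernel over compositions / conditions
K_multi = np.kron(B, K_x)               # joint covariance over all (task, sample) pairs
```

The off-diagonal entry of `B` controls how strongly an observation of one property informs predictions of the other, while the ARD length-scales inside `K_x` are shared by both tasks.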

Sparse Modeling and High-Dimensional Challenges

In very high-dimensional spaces (e.g., >20 parameters), standard BO can struggle—a phenomenon known as the "curse of dimensionality." Sparse modeling techniques are being developed to enhance ARD in these settings. One recent approach is Bayesian optimization with the maximum partial dependence effect (MPDE). This method allows researchers to set an intuitive threshold (e.g., ignore parameters affecting the target by less than 10%), leading to effective optimization with fewer experimental trials [38].

The Scientist's Toolkit: Key Reagents & Computational Tools

Table 2: Essential "Research Reagents" for ARD-Driven Materials Discovery

| Category | Item / Tool | Function / Purpose |

|---|---|---|

| Computational Core | Gaussian Process Library (e.g., GPyTorch, GPflow, scikit-learn) | Provides the core infrastructure for building and training GP models with ARD kernels. |

| Computational Core | Bayesian Optimization Framework (e.g., BoTorch, Ax, Dragonfly) | Implements the full BO loop, including various acquisition functions and handling of asynchronous parallel experiments. |

| Computational Core | Differentiable Programming Platform (e.g., PyTorch, JAX) | Enables efficient gradient-based optimization of kernel hyperparameters through automatic differentiation. |

| Experimental Infrastructure | Autonomous Robotic Platform | Executes synthesis and characterization protocols without human intervention, enabling rapid closed-loop experimentation. |

| Experimental Infrastructure | High-Throughput Characterization Tools (e.g., Automated SEM/XRD) | Provides fast, quantitative property measurements essential for feeding data back into the BO loop in near real-time. |

| Data & Kernels | ARD-RBF / Matérn Kernel | The foundational model component for learning parameter relevance and building the surrogate model. |

| Data & Kernels | Synthetic Test Functions (e.g., Ackley, Hartmann) | Used for in-silico testing and benchmarking of the BO-ARD pipeline before committing to costly real-world experiments [37]. |

Automatic Relevance Determination, implemented through anisotropic kernels, transforms Bayesian optimization from a black-box search algorithm into an insightful and efficient partner in materials exploration. By learning the relative importance of each synthesis and processing parameter, ARD not only accelerates the search for optimal materials but also provides valuable scientific insights into the underlying physical and chemical relationships governing material behavior. As the field progresses, the integration of ARD with multi-task, hierarchical, and sparse models will further enhance our ability to navigate the ever more complex design spaces of next-generation materials, from high-entropy alloys to organic photovoltaics and bespoke pharmaceutical compounds.

Advanced BO Frameworks and Real-World Materials Applications: From Theory to Synthesis

Bayesian optimization (BO) has emerged as a powerful machine learning framework for navigating complex design spaces with limited experimental budgets, making it particularly valuable for materials science applications where individual experiments can be costly and time-consuming [39]. Traditional BO approaches predominantly focus on optimizing materials properties by estimating the maxima or minima of unknown functions [1]. However, many practical materials applications require finding specific property values rather than mere optima, as materials often exhibit exceptional performance at precise values or under certain conditions that don't necessarily correspond to functional extremes [1]. For instance, catalysts for hydrogen evolution reactions demonstrate enhanced activities when free energies approach zero, photovoltaic materials achieve high energy absorption within targeted band gap ranges, and shape memory alloys require specific transformation temperatures for applications like thermostatic valves [1].

This technical guide examines the emerging paradigm of target-oriented Bayesian optimization, which represents a significant shift from conventional optimization-focused approaches. Where traditional methods seek to find the "best" possible value, target-oriented methods efficiently identify materials with predefined specific properties, often requiring substantially fewer experimental iterations [1]. This approach is particularly valuable for real-world materials engineering constraints where specific property thresholds must be met for practical applications. The following sections provide a comprehensive technical overview of target-oriented BO methodologies, experimental validation, implementation protocols, and research tools that collectively enable accelerated discovery of materials with precisely tailored properties.