Autonomous XRD Pattern Analysis: How Machine Learning is Revolutionizing Materials Characterization and Drug Development

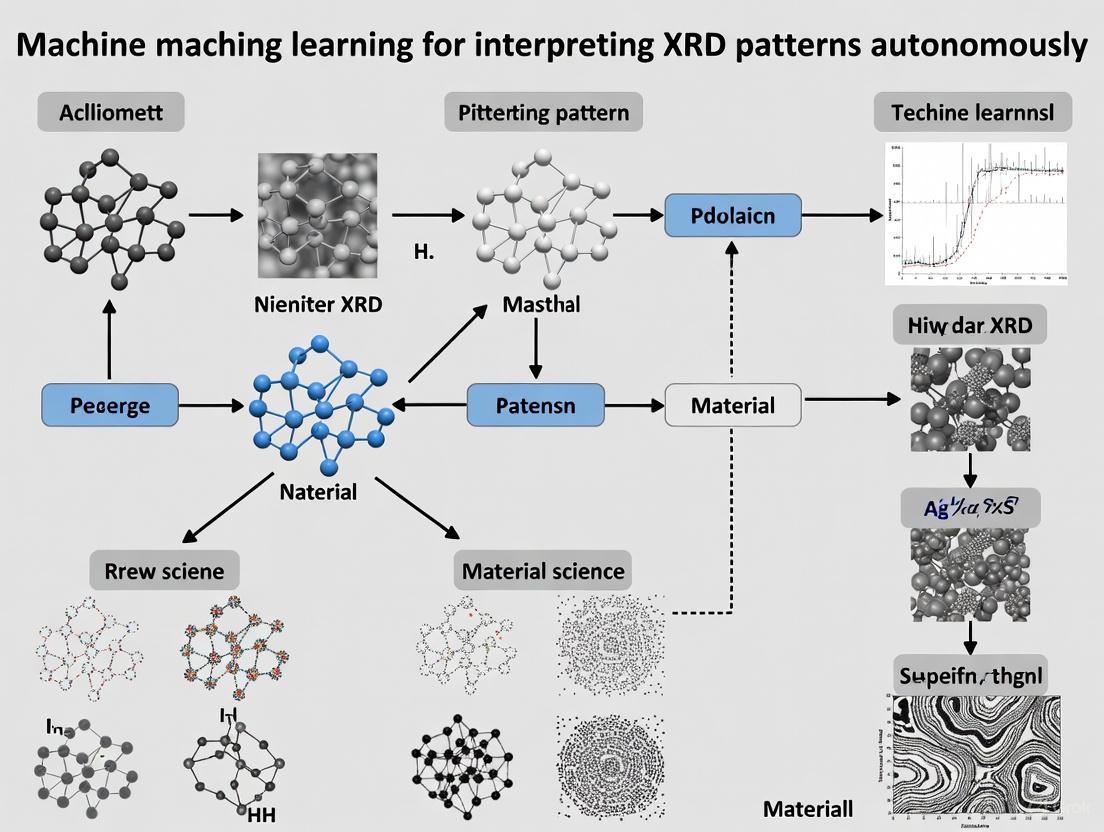



Abstract

This article explores the transformative role of machine learning (ML) in automating the interpretation of X-ray diffraction (XRD) patterns. Aimed at researchers, scientists, and drug development professionals, it covers the foundational shift from traditional, labor-intensive analysis to data-driven automation. We delve into core methodologies like convolutional neural networks for phase identification and adaptive XRD, address critical challenges including data scarcity and model interpretability, and validate these approaches through performance benchmarks and real-world applications. The synthesis provides a roadmap for integrating autonomous XRD analysis to accelerate discovery in materials science and pharmaceutical development.

The New Paradigm: From Manual Analysis to Autonomous XRD Interpretation

X-ray diffraction (XRD) has defined our understanding of material structures for over a century, providing atomic-resolution insights into the long-range order and defects in crystalline materials [1]. However, the practice of XRD analysis, long built on Bragg's law and Rietveld refinement, is being transformed by two modern forces: the explosion of available diffraction data and the rapid advancement of machine learning (ML) techniques [1] [2]. The advent of high-throughput materials synthesis, automated robotic laboratories, online crystal structure databases, and advanced beamline facilities has generated terabytes of XRD data, creating both an unprecedented opportunity and an acute analysis bottleneck [1]. This data deluge has catalyzed an ML revolution in XRD interpretation, enabling autonomous phase identification, real-time adaptive experiments, and the extraction of subtle microstructural features that challenge conventional analysis methods [3] [2] [4]. This application note examines how ML is reshaping XRD data analysis, providing researchers with structured protocols, validated tools, and strategic frameworks to leverage these technologies in materials discovery and characterization.

The XRD Data Landscape: Volume and Variety

The scale of available XRD data has expanded dramatically due to multiple technological drivers. High-throughput methodologies have revolutionized both synthesis and characterization, with automated robotic laboratories enabling the rapid screening of bulk oxides, phosphates, metal nanomaterials, quantum dots, and polymers [1]. Specialized facilities now generate terabytes of data from single experiments, particularly through in situ and operando methodologies that track material dynamics in real time [1]. This data explosion is complemented by the growth of massive public crystallographic databases, which provide the foundational training datasets for ML models.

Table 1: Major Crystallographic Databases for ML Training

| Database Name | Size and Scope | Primary Content | ML Application |
|---|---|---|---|
| Powder Diffraction File (PDF) | >1,126,200 material datasets [5] | Comprehensive collection of minerals, metals, alloys, polymers, pharmaceuticals | Phase identification, pattern matching |
| Crystallography Open Database (COD) | 467,861+ structures [6] | Open-access collection of organic, metal-organic, inorganic structures | Generalizable model training, benchmark creation |
| Inorganic Crystal Structure Database (ICSD) | Hundreds of thousands of structures [1] | Curated inorganic crystal structures | Specialized model training for inorganic systems |
| SIMPOD | 467,861 simulated patterns from COD [6] | Simulated 1D diffractograms and 2D radial images | Computer vision approaches to XRD analysis |

The SIMPOD (Simulated Powder X-ray Diffraction Open Database) benchmark exemplifies how databases are being specifically engineered for ML applications. By providing 467,861 simulated powder patterns with corresponding 2D radial images, SIMPOD enables computer vision approaches that have demonstrated superior performance in space group prediction compared to traditional ML methods using 1D diffractograms [6].
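To make the idea of a 2D radial image concrete, the following is a minimal sketch of one way a 1D diffractogram could be mapped onto an image, with each pixel taking the intensity of the 2θ bin that corresponds to its distance from the image centre. The function name `radial_image`, the image size, and the linear radius-to-bin mapping are assumptions made for illustration; this is not claimed to be the transform used to build SIMPOD.

```python
import numpy as np

def radial_image(intensity, size=64):
    """Map a 1D diffractogram onto a square image by distance from the centre.

    Assumption: the image edge (not the corner) corresponds to the last 2-theta bin.
    Pixels farther out than that (the corners) are set to zero.
    """
    intensity = np.asarray(intensity, dtype=float)
    half = (size - 1) / 2.0
    y, x = np.indices((size, size))
    r = np.hypot(x - half, y - half)              # pixel distance from the centre
    idx = r / half * (len(intensity) - 1)         # fractional bin index per pixel
    bins = np.arange(len(intensity))
    img = np.interp(idx.ravel(), bins, intensity, right=0.0)
    return img.reshape(size, size)
```

Each diffraction peak becomes a ring, which is what lets standard image architectures be applied to powder data.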

Machine Learning Applications in XRD Analysis

ML approaches are being deployed across the XRD analysis pipeline, from rapid phase identification to advanced microstructural characterization. These applications can be categorized into supervised learning for classification and regression tasks, and unsupervised methods for pattern discovery in high-dimensional data [1] [2].

Phase Identification and Classification

Phase identification represents the most mature application of ML in XRD analysis. Convolutional neural networks (CNNs) now demonstrate exceptional accuracy in classifying crystalline phases from both 1D diffraction patterns and 2D radial images [6] [3]. The transformation of 1D patterns to 2D representations has proven particularly valuable, with models like Swin Transformers and ResNets achieving top-5 accuracies exceeding 90% on the SIMPOD benchmark [6]. These models leverage computer vision architectures to detect subtle peak relationships and relative intensity patterns that distinguish similar crystal structures.
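Since the benchmark figures above are quoted as top-5 accuracies, a short sketch of how that metric is usually computed may help. The function name and the example scores are illustrative assumptions, not taken from the SIMPOD evaluation code.

```python
import numpy as np

def top_k_accuracy(scores, labels, k=5):
    """Fraction of samples whose true class is among the k highest-scoring classes."""
    scores = np.asarray(scores, dtype=float)      # shape (n_samples, n_classes)
    labels = np.asarray(labels)                   # shape (n_samples,)
    top_k = np.argsort(scores, axis=1)[:, -k:]    # indices of the k largest scores
    hits = (top_k == labels[:, None]).any(axis=1)
    return hits.mean()
```

A top-5 figure is therefore always at least as high as the corresponding top-1 accuracy, which matters when comparing results across papers.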

Microstructural Descriptor Extraction

Beyond phase identification, ML models can extract quantitative microstructural descriptors directly from XRD profiles, including properties such as dislocation density, phase fractions, pressure, and temperature states in dynamically loaded materials [4]. Supervised learning models trained on paired XRD profiles and microstructural data from molecular dynamics simulations can establish complex mappings between diffraction pattern features and material states, enabling rapid characterization of defect populations and phase distributions that would require extensive manual analysis with traditional methods [4].

Adaptive and Autonomous XRD

The integration of ML with physical diffractometers has enabled a paradigm shift from static characterization to adaptive experimentation. Autonomous XRD systems employ real-time decision algorithms that guide data collection toward maximally informative measurements [3]. This approach uses class activation maps (CAMs) to identify diffraction regions that distinguish between candidate phases, then strategically allocates measurement time to resolve ambiguities [3]. Such systems have demonstrated particular value in capturing transient intermediate phases during in situ reactions, where measurement speed is essential to observe short-lived states [3].
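The CAM-guided step can be sketched as a comparison of two activation maps over the 2θ axis. The code below is an illustration under stated assumptions: both CAMs are taken to be arrays normalized to [0, 1] on the same 2θ grid, the function name `discriminative_regions` is hypothetical, and the default cutoff of 0.25 mirrors the 25% threshold quoted in Protocol 1.

```python
import numpy as np

def discriminative_regions(two_theta, cam_a, cam_b, threshold=0.25):
    """Return (start, end) 2-theta intervals where two CAMs differ by more than threshold."""
    two_theta = np.asarray(two_theta, dtype=float)
    diff = np.abs(np.asarray(cam_a, dtype=float) - np.asarray(cam_b, dtype=float))
    mask = diff > threshold
    # Pad with False so every run of True has both a rising and a falling edge.
    edges = np.diff(np.concatenate(([False], mask, [False])).astype(int))
    starts = np.flatnonzero(edges == 1)
    ends = np.flatnonzero(edges == -1) - 1
    return [(two_theta[s], two_theta[e]) for s, e in zip(starts, ends)]
```

The returned intervals are the candidates for slower, higher-resolution rescans.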

Experimental Protocols for ML-Driven XRD Analysis

Protocol 1: Adaptive XRD for Phase Identification in Multi-Phase Mixtures

This protocol enables autonomous identification of crystalline phases with optimized measurement efficiency, particularly valuable for detecting trace phases or characterizing dynamic processes [3].

Table 2: Research Reagent Solutions for Adaptive XRD

| Item | Function | Implementation Example |
|---|---|---|
| ML Model (XRD-AutoAnalyzer) | Phase prediction and confidence assessment | Convolutional neural network trained on relevant chemical space (e.g., Li-La-Zr-O) |
| Class Activation Map (CAM) Analysis | Identifies discriminative 2θ regions | Highlights angles that distinguish top candidate phases |
| Confidence Threshold | Decision metric for additional data collection | 50% confidence cutoff balances speed and accuracy |
| 2θ Expansion Algorithm | Progressive range extension | Increases maximum angle by +10° increments up to 140° |

Procedure:

Initial Rapid Scan: Perform a quick measurement over 2θ = 10°-60° to establish baseline pattern. This range captures sufficient peaks for preliminary analysis while minimizing initial time investment.

Preliminary Phase Prediction: Input the initial pattern to the ML model (XRD-AutoAnalyzer) to obtain phase predictions with confidence estimates for each suspected phase.

Confidence Evaluation: Compare all phase confidence values against the 50% threshold. If every value exceeds the threshold, proceed to final reporting. If any value falls below it, initiate adaptive resampling.

Selective Resampling: Calculate CAMs for the two most probable phases. Identify 2θ regions where CAM difference exceeds 25% threshold. Rescan these regions with higher resolution (slower scan rate) to clarify distinguishing features.

Iterative Expansion: If confidence remains below threshold after resampling, expand angular range by +10° and repeat rapid scanning. Continue until confidence thresholds are met or maximum angle (140°) is reached.

Ensemble Prediction: Aggregate predictions from multiple 2θ ranges using confidence-weighted averaging according to the equation: $$P_{ens} = \frac{\sum_{i=0}^{n} c_i P_i}{n + 1}$$ where $P_i$ is the prediction from the $i$-th 2θ range, $c_i$ is its confidence, and $n+1$ is the total number of 2θ ranges [3].
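The confidence-weighted average in the ensemble step can be written in a few lines. This is a sketch under assumptions: each prediction is represented as a vector of per-phase scores, and the function name `ensemble_prediction` is hypothetical rather than part of XRD-AutoAnalyzer.

```python
import numpy as np

def ensemble_prediction(predictions, confidences):
    """Confidence-weighted average over the n+1 scanned 2-theta ranges."""
    P = np.asarray(predictions, dtype=float)      # shape (n+1, n_phases)
    c = np.asarray(confidences, dtype=float)      # shape (n+1,)
    # Sum of c_i * P_i over all ranges, divided by the number of ranges (n+1).
    return (c[:, None] * P).sum(axis=0) / len(c)
```

Because the divisor is the number of ranges rather than the sum of confidences, low-confidence ranges pull the ensemble score down instead of being renormalized away.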

Validation: This approach has demonstrated accurate detection of impurity phases at 1-2 wt% levels in the Li-La-Zr-O and Li-Ti-P-O chemical spaces, with significantly reduced measurement times compared to conventional high-resolution scans [3].
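The control flow of Protocol 1 can be summarized as a loop that widens the scan until every phase clears the confidence threshold or the angular limit is reached. The sketch below is deliberately simplified: `measure` and `predict` are hypothetical callables standing in for the diffractometer and the ML model, and the CAM-guided selective resampling step is omitted.

```python
def adaptive_scan(measure, predict, start=10.0, first_max=60.0,
                  step=10.0, limit=140.0, threshold=0.5):
    """Extend the 2-theta range until all phase confidences reach the threshold."""
    max_angle = first_max
    history = []
    while True:
        pattern = measure(start, max_angle)       # hypothetical instrument call
        phases = predict(pattern)                 # hypothetical: {phase_name: confidence}
        history.append((max_angle, phases))
        confident = all(c >= threshold for c in phases.values())
        if confident or max_angle >= limit:
            return phases, history
        max_angle = min(max_angle + step, limit)  # widen the scan by one increment
```

The defaults (10° start, 60° initial maximum, +10° steps, 140° limit, 50% threshold) are the values stated in the protocol above.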

Protocol 2: Transfer Learning for Microstructural Prediction

This protocol addresses the challenge of model transferability across different material states and crystallographic orientations, particularly relevant for shocked materials or textured polycrystals [4].

Procedure:

Diverse Training Data Generation:

- Perform molecular dynamics simulations of shock loading for multiple single-crystal orientations (〈111〉, 〈110〉, 〈100〉, 〈112〉)

- Generate paired XRD profiles and microstructural descriptors (dislocation density, phase fractions, pressure, temperature)

- Use Cu Kα radiation (λ = 1.54 Å), 2θ range 30°-60° to capture key peaks ({111} at 43.15°, {200} at 50.35°)

Model Training:

- Train supervised ML models (random forest, neural networks) on XRD profiles from multiple orientations

- Use 5-fold cross-validation to assess performance on dislocation density, phase fraction, and pressure prediction

Transferability Assessment:

- Evaluate model performance on held-out crystal orientations

- Test generalizability to polycrystalline systems with random grain orientations

- Quantify accuracy degradation for specific microstructural descriptors

Key Findings: Models trained on multiple crystal orientations show significantly improved transferability to polycrystalline systems. Prediction accuracy varies substantially across microstructural descriptors, with phase fractions generally more transferable than dislocation density [4].
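The peak positions quoted for the training data ({111} at 43.15° and {200} at 50.35° with λ = 1.54 Å) can be sanity-checked with Bragg's law, λ = 2d sin θ. The sketch below assumes a cubic lattice, for which a = d·√(h² + k² + l²); the function names are illustrative.

```python
import math

def d_spacing(two_theta_deg, wavelength=1.54):
    """Interplanar spacing from Bragg's law (first order), in the units of wavelength."""
    theta = math.radians(two_theta_deg / 2.0)
    return wavelength / (2.0 * math.sin(theta))

def cubic_lattice_parameter(two_theta_deg, hkl, wavelength=1.54):
    """Lattice parameter of a cubic cell implied by one indexed reflection."""
    h, k, l = hkl
    return d_spacing(two_theta_deg, wavelength) * math.sqrt(h * h + k * k + l * l)

a_111 = cubic_lattice_parameter(43.15, (1, 1, 1))
a_200 = cubic_lattice_parameter(50.35, (2, 0, 0))
```

Both reflections imply a lattice parameter of roughly 3.62 to 3.63 Å, so the two quoted peak positions are mutually consistent with a single cubic cell.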

Implementation Considerations and Best Practices

Data Quality and Model Selection

Successful implementation of ML for XRD analysis requires careful consideration of data quality and model architecture. Simulated training data should incorporate realistic experimental artifacts including peak broadening, background noise, and preferred orientation effects to enhance model transferability to experimental data [1] [4]. For phase identification, 2D computer vision models (ResNet, Swin Transformer) trained on radial images generally outperform 1D CNN models on raw diffractograms, with pre-training on large image datasets providing additional accuracy improvements of 2.5-3% [6]. However, this performance advantage must be balanced against the computational cost of image transformation and model complexity.

Addressing Model Transferability Limitations

A significant challenge in ML-driven XRD analysis is model transferability—the ability to maintain accuracy on crystal orientations, microstructures, or material systems not represented in training data [4]. Strategies to enhance transferability include:

- Diverse Training Data: Incorporate multiple crystallographic orientations, polycrystalline systems, and defect structures during training [4]

- Data Augmentation: Apply synthetic peak broadening, noise injection, and intensity variations to expand effective training dataset diversity

- Transfer Learning: Fine-tune models pre-trained on large diverse datasets (SIMPOD, PDF) for specific material systems with limited data

- Hybrid Approaches: Combine ML predictions with physics-based constraints from Rietveld refinement or structure factor calculations [1]
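The data augmentation strategy listed above can be sketched with NumPy alone. The specific choices here are assumptions for illustration: Gaussian broadening with a width given in bins, a random linear intensity envelope, and Gaussian noise scaled to the strongest peak. Real pipelines may use different peak shapes and noise models.

```python
import numpy as np

def augment_pattern(intensity, rng, broaden_sigma=2.0, noise_level=0.02, scale_range=0.2):
    """Return a broadened, rescaled, noisy copy of a 1D pattern, normalised to max 1.

    Assumes the input contains at least one non-zero intensity.
    """
    y = np.asarray(intensity, dtype=float)
    # Peak broadening: convolve with a normalised Gaussian kernel (sigma in bins).
    half = int(4 * broaden_sigma) + 1
    x = np.arange(-half, half + 1)
    kernel = np.exp(-0.5 * (x / broaden_sigma) ** 2)
    kernel /= kernel.sum()
    y = np.convolve(y, kernel, mode="same")
    # Intensity variation: multiply by a random linear envelope across the pattern.
    g0, g1 = rng.uniform(1 - scale_range, 1 + scale_range, size=2)
    y = y * np.linspace(g0, g1, y.size)
    # Noise injection: additive Gaussian noise relative to the strongest peak.
    y = y + rng.normal(0.0, noise_level * y.max(), size=y.size)
    y = np.clip(y, 0.0, None)
    return y / y.max()
```

Passing a seeded generator such as `np.random.default_rng(0)` keeps the augmented training set reproducible.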

Software and Computational Tools

Table 3: Essential Software Tools for ML-Enhanced XRD Analysis

| Tool Category | Examples | Primary Function | ML Integration |
|---|---|---|---|
| Commercial XRD Software | HighScore Plus, JADE, DIFFRAC.SUITE [7] [5] [8] | Traditional phase analysis, Rietveld refinement | Limited native ML, primarily pattern matching |
| Specialized ML Tools | XRD-AutoAnalyzer, SIMPOD benchmark [6] [3] | Phase identification, space group prediction | Dedicated ML models for classification |
| Simulation Packages | LAMMPS diffraction package, Dans Diffraction [6] [4] | Synthetic XRD pattern generation | Training data generation for ML models |
| General ML Frameworks | PyTorch, H2O AutoML [6] | Custom model development | Flexible implementation of novel architectures |

The integration of machine learning with X-ray diffraction is transforming materials characterization from a static, human-guided process to a dynamic, autonomous discovery engine. The field is advancing toward fully closed-loop systems where ML algorithms not only interpret XRD data but actively design and steer experiments toward optimal characterization outcomes [3]. Future developments will likely focus on improving model interpretability through attention mechanisms and saliency maps, enabling researchers to understand which diffraction features drive specific predictions [1] [2]. Additionally, the integration of ML with multi-modal characterization—correlating XRD with spectroscopy, microscopy, and computational modeling—will provide more comprehensive materials understanding [9] [2].

The data explosion in XRD has indeed catalyzed an ML revolution, creating unprecedented opportunities for accelerated materials discovery and characterization. By implementing the protocols and best practices outlined in this application note, researchers can leverage these transformative technologies to extract deeper insights from diffraction data, characterize dynamic materials processes with unprecedented temporal resolution, and accelerate the development of novel materials with tailored properties and performance.

X-ray diffraction (XRD) stands as one of the most powerful non-destructive techniques for determining the atomic and molecular structure of crystalline materials, with applications spanning pharmaceuticals, materials science, and metallurgy [10]. The technique provides a unique "fingerprint" for material identification, enabling researchers to determine crystal structure, identify phases, measure lattice parameters, and analyze microstructural features [2] [10]. Despite its proven capabilities, traditional XRD analysis faces significant challenges that create bottlenecks in research and development pipelines, particularly in an era of high-throughput experimentation. This application note details three core challenges—time-intensive processes, high expertise requirements, and limited throughput capabilities—within the broader context of developing machine learning solutions for autonomous XRD pattern interpretation.

Core Challenges of Traditional XRD Analysis

Time-Intensive Analysis Processes

Traditional XRD data analysis, particularly for unknown crystal structures, is notoriously labor-intensive and time-consuming. The conventional workflow involves multiple specialized steps that collectively require substantial human effort and processing time.

Table 1: Time Requirements for Traditional XRD Analysis Steps

| Analysis Step | Description | Time Requirement | Key Challenges |
|---|---|---|---|
| Data Collection | Measurement of diffraction intensity versus angle (2θ) | Minutes to hours per sample | Instrument-dependent; varies with sample quality and required resolution |
| Phase Identification | Matching diffraction patterns to known crystal structures | Hours to days | Requires expert knowledge of crystallographic databases |
| Structure Solution | Determining atomic positions from diffraction data | Days to weeks for new structures | Labor-intensive trial-and-error process |
| Rietveld Refinement | Full-pattern fitting to optimize structural parameters | Hours to days, requiring human intervention | Demands substantial expertise and manual tuning |

Solving and refining unknown crystal structures from powder X-ray diffraction (PXRD) data is one of the most time-intensive aspects, with traditional methods requiring "significant expertise" and often taking days to weeks for new structures [11]. The Rietveld refinement process, considered the gold standard for quantitative phase analysis, demands "manual tuning and adjustments such as peak indexing and parameter initialization for trial-and-error iterations" that substantially prolong analysis time [12]. Furthermore, over 476,000 entries in the Powder Diffraction File (PDF) database have unresolved atomic coordinates, highlighting the persistent challenges in timely structure determination [11].

High Expertise Requirements

Traditional XRD analysis demands specialized knowledge across multiple domains, which creates a significant barrier to widespread adoption and a dependency on limited expert resources.

Table 2: Expertise Domains Required for Traditional XRD Analysis

| Expertise Domain | Application in XRD Analysis | Consequence of Expertise Gap |
|---|---|---|
| Crystallography | Understanding crystal systems, space groups, symmetry | Incorrect phase identification or structure solution |
| Diffraction Physics | Interpreting peak positions, intensities, and shapes | Misinterpretation of structural features or defects |
| Software Proficiency | Operating specialized analysis programs (e.g., Rietveld refinement software) | Inefficient analysis or incorrect parameter optimization |
| Materials Science | Contextualizing results within material properties and processing | Failure to connect structural features to material behavior |

The expertise barrier manifests particularly in interpreting complex XRD patterns, which "are notoriously difficult to interpret, especially if they exhibit complex peak shifting, broadening, and varying peak ratios" [13]. The presence of multiple phases in a single sample further complicates analysis, creating "overlapping peaks and potentially ambiguous phase assignments" that require sophisticated interpretation skills [13]. Current indexing techniques "require human intervention and contextual insights from verified materials," making fully automated analysis impossible without expert input [12]. This dependency creates critical bottlenecks, especially with the emergence of "big datasets from millions of measurements; far over what human experts can manually analyze" [12].

Limited Throughput Capabilities

The manual nature of traditional XRD analysis creates significant throughput limitations that impede research progress, particularly in high-throughput experimentation environments.

Table 3: Throughput Limitations in Traditional XRD Analysis

| Throughput Factor | Limitation | Impact on Research Pace |
|---|---|---|
| Sample Processing | Sequential rather than parallel analysis | Limits number of samples characterized per unit time |
| Data Interpretation | Manual peak identification and phase matching | Creates backlog between data collection and analysis |
| Structure Refinement | Iterative manual optimization of parameters | Dramatically slows structure-property relationship mapping |
| Expert Availability | Dependency on limited specialized personnel | Creates bottlenecks in analysis pipeline |

The fundamental mismatch between data generation and analysis capabilities has become particularly pronounced with "recent advances in ultrafast synchronous X-ray diffraction and spectroscopy measurements [that] generate big datasets from millions of measurements; far over what human experts can manually analyze" [12]. This challenge is further exacerbated by "the lack of rapid and reliable XRD data analysis methods for conclusive structural determination" that forces most algorithms to "operate on reduced quantities such as scalar performance metrics or gradients in spectroscopic signals, limiting the reasoning ability of AI agents" [13]. The throughput limitations are particularly problematic in high-throughput experimentation where "rapid, automated, and reliable analysis of XRD data at rates that match the pace of experimental measurements at a synchrotron source remains a major challenge" [13].

Experimental Protocols for Traditional XRD Analysis

Protocol 1: Multi-Phase Sample Analysis Using CrystalShift

CrystalShift provides a probabilistic approach for multiphase labeling that employs symmetry-constrained optimization and Bayesian model comparison, offering advantages over traditional methods for complex multi-phase samples [13].

Materials:

- X-ray diffractometer with Cu Kα radiation source (λ = 1.5418 Å)

- Powder sample of interest (<10 μm particle size)

- Crystallographic database (e.g., ICSD, COD) for candidate phases

- CrystalShift software platform

Procedure:

Sample Preparation:

- Grind sample to uniform particle size (<10 μm) to minimize preferred orientation effects

- Mount powder in sample holder using back-loading technique to ensure random orientation

- Level sample surface to minimize displacement errors

Data Collection:

- Set up Bragg-Brentano geometry with divergence slits

- Scan range: 5° to 80° 2θ with 0.02° step size

- Counting time: 2 seconds per step to ensure adequate signal-to-noise ratio

- Record data as intensity versus 2θ values

Candidate Phase Selection:

- Compile list of potential phases based on sample composition and synthesis conditions

- Retrieve crystal structure files (CIF) for candidate phases from databases

- Generate theoretical diffraction patterns for each candidate phase

Tree Search Execution:

- Input experimental XRD pattern and candidate phase list into CrystalShift

- Run best-first tree search algorithm with maximum phase combination depth (typically 3-5 phases)

- Allow lattice parameter optimization while preserving space group symmetry

- Set convergence criteria for residual minimization

Bayesian Model Comparison:

- Calculate evidence for each optimized phase combination using Laplace approximation

- Apply softmax function to generate probability distribution over phase combinations

- Select most probable phase combination based on posterior probability

Validation:

- Compare refined lattice parameters with known values for candidate phases

- Verify physical plausibility of refined microstructural parameters

- Cross-reference with complementary characterization data (e.g., elemental analysis)

Expected Outcomes: The protocol should yield probabilistic phase identification with quantitative lattice strain measurements and phase fractions, typically within 1-2 hours per sample, significantly faster than traditional iterative methods [13].
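The Bayesian model comparison step turns the evidence for each phase combination into a probability via a softmax. A minimal sketch follows, assuming the inputs are log-evidence values (for example from a Laplace approximation) and that all combinations share an equal prior. The function name is hypothetical and is not part of the CrystalShift API.

```python
import numpy as np

def phase_combination_probabilities(log_evidence):
    """Softmax over log-evidence values, one per candidate phase combination."""
    z = np.asarray(log_evidence, dtype=float)
    z = z - z.max()              # shift for numerical stability; the result is unchanged
    w = np.exp(z)
    return w / w.sum()
```

Subtracting the maximum matters in practice because log-evidence values for full diffraction patterns are often large negative numbers that would underflow if exponentiated directly.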

Protocol 2: Crystal Structure Determination from PXRD

This protocol outlines the traditional approach for determining crystal structures from powder XRD data, a process that new machine learning methods aim to accelerate [11].

Materials:

- High-quality powder diffraction data (preferably synchrotron source for superior resolution)

- Structure solution software (e.g., EXPO, FOX, or GSAS-II)

- Rietveld refinement program

- Access to crystallographic databases (ICSD, Materials Project)

Procedure:

Data Quality Assessment:

- Ensure adequate signal-to-noise ratio (>10:1 for weakest peaks)

- Verify minimal preferred orientation through sample preparation optimization

- Check for appropriate angular range to access sufficient diffraction peaks

Unit Cell Determination:

- Perform peak indexing using auto-indexing algorithms (e.g., ITO, DICVOL)

- Evaluate figures of merit ($M_{20}$, $F_N$) to assess indexing quality

- Refine unit cell parameters using whole-pattern fitting

Space Group Determination:

- Analyze systematic absences to determine possible space groups

- Consider chemical constraints and known structural families

- Use statistical assessment of possible extinction symbols

Structure Solution:

- Employ direct-space (global optimization) methods (e.g., Monte Carlo, simulated annealing, genetic algorithms)

- Alternatively, use charge flipping or maximum entropy methods

- Generate trial structure models compatible with electron density maps

Rietveld Refinement:

- Initialize refinement with trial structure model

- Sequentially refine scale factor, background, lattice parameters, peak shape

- Progress to atomic coordinates and displacement parameters

- Include microstructural parameters (crystallite size, strain) if necessary

- Monitor agreement factors ($R_{wp}$, $R_p$, χ²) for convergence

Validation:

- Check for chemical reasonableness of bond lengths and angles

- Verify displacement parameters are physically plausible

- Assess residual electron density for missing features

Expected Outcomes: Successful application yields a refined crystal structure with atomic coordinates, but requires "significant expertise" and may take "days to weeks for new structures" [11].
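The agreement factors monitored during Rietveld refinement follow standard definitions, sketched below. The default weights of 1/y_obs (counting statistics) and the function name are assumptions for illustration. Refinement programs differ in details such as background handling, so values from this sketch will not necessarily match a given package.

```python
import numpy as np

def agreement_factors(y_obs, y_calc, n_params, weights=None):
    """Profile R-factors and reduced chi-squared for a whole-pattern fit."""
    y_obs = np.asarray(y_obs, dtype=float)
    y_calc = np.asarray(y_calc, dtype=float)
    # Assumption: counting statistics, so the variance of each point equals its count.
    w = 1.0 / np.maximum(y_obs, 1.0) if weights is None else np.asarray(weights, dtype=float)
    delta = y_obs - y_calc
    r_p = np.abs(delta).sum() / y_obs.sum()
    r_wp = np.sqrt((w * delta ** 2).sum() / (w * y_obs ** 2).sum())
    r_exp = np.sqrt((len(y_obs) - n_params) / (w * y_obs ** 2).sum())
    chi2 = (r_wp / r_exp) ** 2
    return {"R_p": r_p, "R_wp": r_wp, "R_exp": r_exp, "chi2": chi2}
```

A χ² close to 1 indicates that the residuals are about as large as the counting noise predicts, while values well above 1 point to an incomplete model.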

Workflow Visualization

Figure 1: Traditional XRD Analysis Workflow. The diagram illustrates the iterative, time-intensive process of traditional crystal structure determination from XRD data, highlighting potential refinement loops that contribute to analysis delays.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Essential Materials for Traditional XRD Analysis

| Item | Function | Application Notes |
|---|---|---|
| Standard Reference Materials (e.g., Si, Al₂O₃) | Instrument calibration and peak position verification | NIST-traceable standards ensure measurement accuracy |
| Zero-Background Holders | Sample mounting with minimal background signal | Single crystal silicon or quartz substrates preferred |
| Microtiter Plates (96-well) | High-throughput sample presentation for automated systems | Enables batch analysis of multiple samples |
| Crystallographic Databases (ICSD, COD, PDF) | Reference patterns for phase identification | ICSD and PDF are subscription-based; COD is open access |
| Rietveld Refinement Software (e.g., GSAS, TOPAS) | Whole-pattern fitting for quantitative analysis | Requires significant expertise for effective utilization |
| Monochromated X-ray Source (Cu Kα, λ = 1.5418 Å) | Production of characteristic X-rays for diffraction | Copper most common; molybdenum for heavy elements |
| High-Resolution Detector (e.g., PSD, area detector) | Measurement of diffracted X-ray intensity | Modern detectors significantly reduce acquisition time |

The scientist's toolkit for traditional XRD analysis encompasses both physical materials and computational resources, with the choice of specific items heavily influenced by the particular application domain. For instance, pharmaceutical researchers require "polymorph identification" capabilities [14], while materials scientists need tools for "residual stress measurement in manufactured components" [10]. The integration of "high-resolution detectors" has been a key advancement, providing "sharper diffraction patterns, enabling precise identification of complex crystalline structures" [14]. Similarly, computational resources like the "Inorganic Crystal Structure Database (ICSD)" serve as essential references for phase identification [12]. The emergence of "compact and portable XRD systems" has further expanded applications to "on-site analysis across diverse industries such as mining, pharmaceuticals, and environmental science" [14].

The core challenges of traditional XRD analysis—time-intensive processes, high expertise requirements, and limited throughput capabilities—represent significant bottlenecks in modern materials research and drug development. These limitations are particularly problematic in the context of high-throughput experimentation and autonomous materials discovery, where rapid, reliable structural analysis is essential for establishing composition-structure-property relationships. The protocols and methodologies outlined in this application note highlight both the sophistication of traditional XRD analysis and its inherent limitations in contemporary research environments. These challenges provide a compelling rationale for the development of machine learning approaches for autonomous XRD pattern interpretation, which aim to overcome these bottlenecks while maintaining the precision and accuracy of conventional methods.

The integration of machine learning (ML) into X-ray diffraction (XRD) analysis represents a paradigm shift in materials science and related fields, enabling the autonomous and rapid interpretation of crystalline structures. Traditional XRD analysis often requires extensive expert knowledge and can be time-consuming, especially for complex multi-phase mixtures or defective structures. ML techniques, particularly deep learning, are now being deployed to overcome these limitations, automating critical tasks such as phase identification, crystal symmetry classification, and microstructural analysis. This document outlines the fundamental protocols, data requirements, and performance benchmarks for implementing these ML-driven tasks, providing a practical guide for researchers and development professionals.

Crystal Symmetry Classification

Crystal symmetry classification is a crucial first step in materials characterization, as symmetry directly influences physical properties. Machine learning models, especially Convolutional Neural Networks (CNNs), have demonstrated high accuracy in classifying crystal systems, extinction groups, and space groups from diffraction data.

Core Methodologies and Performance

Three data representations are used for symmetry classification: one-dimensional powder XRD patterns, two-dimensional diffraction images, and three-dimensional electron density data.

Table 1: Performance of ML Models for Crystal Symmetry Classification

| Data Representation | Model Architecture | Dataset | Classification Task | Reported Accuracy | Key Advantage |
|---|---|---|---|---|---|
| 1D Powder XRD Pattern [15] | Fully Convolutional Network (FCN) | ICSD (197,131 inorganic compounds) | Crystal System | 93.06% | Considered upper limit for 1D XRD |
| 2D Diffraction Image [16] | Convolutional Neural Network (CNN) | >100,000 simulated structures (perfect & defective) | Crystal Symmetry | 100% on defective structures (see Table 2) | Robustness to high defect concentrations |
| 3D Electron Density (ICSD) [15] | Sparse 3D CNN | ICSD (experimental data) | Crystal System | 97.28% | Superior accuracy, direct real-space interpretation |
| 3D Electron Density (ICSD) [15] | Sparse 3D CNN | ICSD (experimental data) | Space Group | 90.10% | High performance for complex task |

A landmark study demonstrated that a CNN trained on 2D diffraction images could correctly classify over 100,000 simulated crystal structures, including those with heavy defects, achieving 100% accuracy even at high defect concentrations. This showcases exceptional robustness compared to conventional algorithms like Spglib, which require user-defined thresholds and fail with significant defects [16].

Table 2: ML Model Robustness to Defects (Accuracy %) [16]

| Method / Defect Level | Random Displacements (σ = 0.02 Å) | Vacancies (η = 25%) |

|---|---|---|

| Spglib (loose threshold) | 0.00 | 0.00 |

| ML-based approach [16] | 100.00 | 100.00 |

Experimental Protocol: 2D Diffraction Image Classification

Detailed Protocol:

- Input Data Preparation: Begin with a set of atomic coordinates and lattice vectors representing the crystal structure [16].

- Descriptor Generation:

  - Construct the conventional cell of the crystal structure according to standardized crystallographic definitions [16].

  - Simulate diffraction patterns: For each of the three principal crystal axes (x, y, z), rotate the structure by 45° clockwise and counterclockwise. Calculate the diffraction pattern for each rotation using the Fourier transform of the atomic coordinates to simulate the scattering amplitude [16]. The intensity is computed as $I(q) = A \cdot \Omega(\theta) \cdot |\Psi(q)|^2$, where $\Psi(q)$ is the scattering amplitude [16].

  - Create a 2D RGB image: Superimpose the two diffraction patterns for each axis and assign each axis to a color channel (Red, Green, Blue) to form the final image descriptor [16].

- Model Training: Construct a deep Convolutional Neural Network (ConvNet) model designed for image classification. Train the model using a large dataset of simulated crystal structures (e.g., >100,000 samples) with known space group labels [16].

- Validation: Use attentive response maps to interpret the model's internal operations and validate that it uses physically meaningful landmarks for classification [16].
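The intensity expression in the descriptor-generation step can be illustrated with a minimal sketch. It assumes unit scattering factors for every atom and sets A = Ω(θ) = 1; the function names `scattering_amplitude` and `intensity` and the toy lattice are illustrative choices, not taken from [16].

```python
import cmath
import math

def scattering_amplitude(positions, q):
    """Psi(q) = sum_j exp(-i q . r_j), assuming unit form factors (illustrative)."""
    return sum(cmath.exp(-1j * sum(qc * rc for qc, rc in zip(q, r))) for r in positions)

def intensity(positions, q, A=1.0, omega=1.0):
    """I(q) = A * Omega * |Psi(q)|^2, with A and Omega treated as constants here."""
    return A * omega * abs(scattering_amplitude(positions, q)) ** 2

# Toy structure: 4 x 4 x 4 simple-cubic lattice with spacing a (angstrom).
a = 2.0
atoms = [(a * i, a * j, a * k) for i in range(4) for j in range(4) for k in range(4)]

bragg_q = (2 * math.pi / a, 0.0, 0.0)  # on a reciprocal-lattice point
off_q = (math.pi / a, 0.0, 0.0)        # halfway between reciprocal-lattice points

I_bragg = intensity(atoms, bragg_q)    # all 64 atoms scatter in phase
I_off = intensity(atoms, off_q)        # neighbouring planes cancel
```

Evaluating `intensity` on a 2D grid of q-vectors for each rotated copy of the structure would give one diffraction pattern per rotation, which is the raw material for the RGB descriptor described above.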

Research Reagent Solutions

| Item | Function / Description |

|---|---|

| Crystallography Open Database (COD) | Source of crystal structures for generating training data [6]. |

| Inorganic Crystal Structure Database (ICSD) | Source of experimentally validated inorganic crystal structures for training and benchmarking [15]. |

| Simulated Powder XRD Open Database (SIMPOD) | Public dataset with 467,861 crystal structures and simulated 1D/2D diffraction data for model development [6]. |

| 2D Diffraction Image Descriptor | Image-based representation of crystal structure that encapsulates global symmetry information for robust classification [16]. |

| Sparse 3D CNN | Deep learning architecture optimized for processing sparse 3D electron density data, achieving state-of-the-art classification accuracy [15]. |

Phase Identification

ML-driven phase identification focuses on detecting and quantifying crystalline phases in a sample, often from powder XRD patterns. This is particularly valuable for analyzing complex mixtures and for in-situ monitoring of reactions where phases may be transient.

Core Methodologies and Performance

Advanced ML frameworks for phase identification often move beyond simple pattern matching to incorporate adaptive data collection strategies.

Table 3: Performance of ML Models for Phase Identification

| Method / Model | Application Context | Key Performance Metric | Result |

|---|---|---|---|

| Adaptive XRD [3] | Trace phase detection in multi-phase mixtures (Li-La-Zr-O, Li-Ti-P-O) | Detection confidence with short measurement times | Accurate identification of trace phases and short-lived intermediates |

| Machine Learning Framework [17] | Phase identification of transition metals and their oxides | General performance | Competitive performance, demonstrating potential for high-impact application |

| XRD-AutoAnalyzer (CNN) [3] | General phase identification | Prediction confidence | Used as a decision metric for adaptive data collection |

Experimental Protocol: Adaptive XRD for Autonomous Phase Identification

This protocol couples an ML algorithm with a physical diffractometer to steer measurements toward features that improve identification confidence.

Detailed Protocol:

- Initial Rapid Scan: Perform a fast XRD scan over a limited angular range (e.g., 2θ = 10° to 60°) to quickly gather preliminary data [3].

- In-line ML Analysis: Feed the initial pattern to a pre-trained deep learning model (e.g., XRD-AutoAnalyzer). The model predicts the present phases and assigns a confidence score (0-100%) to its prediction [3].

- Confidence-Based Decision:

  - High Confidence (e.g., >50%): The measurement is concluded, and the phase identification is reported [3].

  - Low Confidence (e.g., <50%): The system autonomously decides to collect more data.

- Adaptive Data Collection:

  - Resampling: The algorithm calculates Class Activation Maps (CAMs) to identify regions in the pattern that are most critical for distinguishing between the top candidate phases. It then performs a slower, higher-resolution scan over these specific angular regions to clarify ambiguous peaks [3].

  - Range Expansion: If confidence remains low, the scan range is iteratively expanded (e.g., in +10° steps up to 140°) to capture additional distinguishing peaks [3].

  - Ensemble Prediction: At each iteration, predictions from all scanned ranges are aggregated into a confidence-weighted ensemble prediction, $P_{\mathrm{ens}} = \frac{\sum_i c_i P_i}{n + 1}$, to improve robustness [3].
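The ensemble formula can be sketched in a few lines. This is one possible reading, not the implementation from [3]: it assumes each scanned range i contributes a scalar confidence c_i and a per-phase probability map P_i, and that n range expansions give n + 1 scans in total. The phase names and numbers are invented for illustration.

```python
def ensemble_prediction(scans):
    """Confidence-weighted ensemble: P_ens = sum_i(c_i * P_i) / (n + 1).

    `scans` is a list of (c_i, P_i) pairs, one per scanned 2-theta range.
    c_i is a scalar confidence in [0, 1]; P_i maps phase name -> probability.
    Assumption: n expansions produce n + 1 scans, so we divide by len(scans).
    """
    phases = {p for _, probs in scans for p in probs}
    n_plus_1 = len(scans)
    return {p: sum(c * probs.get(p, 0.0) for c, probs in scans) / n_plus_1
            for p in phases}

# Illustrative values only.
scans = [
    (0.40, {"LLZO": 0.6, "La2Zr2O7": 0.4}),  # initial 10-60 degree scan
    (0.80, {"LLZO": 0.9, "La2Zr2O7": 0.1}),  # after expanding to 70 degrees
]
p_ens = ensemble_prediction(scans)
best_phase = max(p_ens, key=p_ens.get)
```

Weighting by confidence means a later, more decisive scan dominates the aggregate without discarding what the earlier scans contributed.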

Research Reagent Solutions

| Item | Function / Description |

|---|---|

| XRD-AutoAnalyzer | A pre-trained deep learning algorithm for phase identification and confidence assessment [3]. |

| Class Activation Maps (CAMs) | A visualization tool that highlights regions in an XRD pattern most important for the ML model's classification, guiding adaptive resampling [3]. |

| Ensemble Prediction (P_ens) | A weighted average of predictions from multiple 2θ-ranges, improving the reliability of the final phase identification [3]. |

| XRD-Learn Python Package | A software toolkit for processing, visualizing, and analyzing XRD data, supporting workflows for ML analysis [18]. |

Microstructural Analysis

ML for microstructural analysis extracts quantitative descriptors (e.g., dislocation density, phase fractions, microstrain) from XRD profiles, going beyond simple phase identification to assess the material's defect state and mechanical history.

Core Methodologies and Performance

Supervised ML models can be trained on paired datasets of XRD profiles and microstructural descriptors, often generated from atomistic simulations.

Table 4: ML for Microstructural Descriptor Extraction from XRD

| Microstructural Descriptor | Material System | ML Model | Key Insight / Challenge |

|---|---|---|---|

| Pressure, Temperature, Phase Fractions, Dislocation Density [4] | Shock-loaded Cu (single crystal & polycrystal) | Supervised ML | Accuracy depends on target descriptor and training data diversity. |

| Crystallite Size & Microstrain [2] | General crystalline materials | Various ML models | Extracted from peak broadening analysis, surpassing traditional methods like Williamson-Hall. |
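For context, the Williamson-Hall baseline named in Table 4 separates size and strain broadening with a linear fit of β·cos θ = Kλ/D + 4ε·sin θ. The sketch below assumes that instrumental broadening has already been removed, that β is the peak breadth in radians, and that the Scherrer constant is K = 0.9; the synthetic peak list is illustrative.

```python
import math

def williamson_hall(two_theta_deg, beta_rad, wavelength=1.5406, K=0.9):
    """Least-squares fit of beta*cos(theta) = K*lambda/D + 4*eps*sin(theta).

    Returns (crystallite size D in the units of `wavelength`, microstrain eps).
    """
    thetas = [math.radians(t / 2) for t in two_theta_deg]
    x = [4 * math.sin(t) for t in thetas]
    y = [b * math.cos(t) for b, t in zip(beta_rad, thetas)]
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    slope = (sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
             / sum((xi - mx) ** 2 for xi in x))
    intercept = my - slope * mx
    return K * wavelength / intercept, slope

# Synthetic check: generate breadths from known D = 300 angstrom, eps = 0.002.
true_D, true_eps, lam, K = 300.0, 0.002, 1.5406, 0.9
peaks = [43.3, 50.4, 74.1, 89.9]
betas = []
for tt in peaks:
    th = math.radians(tt / 2)
    betas.append((K * lam / true_D + 4 * true_eps * math.sin(th)) / math.cos(th))

D_fit, eps_fit = williamson_hall(peaks, betas)
```

The fit recovers the inputs exactly on noise-free data. On real patterns the linear model is only approximate, which is part of the motivation for learned regressors.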

Experimental Protocol: Extracting Microstructural Descriptors from Simulated XRD

This protocol uses atomistic simulations to generate a labeled dataset for training models to predict microstructural states from XRD profiles.

Detailed Protocol:

- Generate Microstructural States:

  - Perform atomistic simulations (e.g., Molecular Dynamics with LAMMPS) to subject a material (e.g., single-crystal or polycrystalline Copper) to various thermodynamic and mechanical conditions (e.g., shock loading). Save multiple snapshots of the atomic structure throughout the simulation [4].

- Create Paired Dataset:

  - Microstructural Descriptors: For each saved atomic snapshot, calculate target descriptors ($s_i$), such as pressure, temperature, phase fractions (FCC, HCP, disordered), and dislocation density using analysis tools (e.g., OVITO with Common Neighbor Analysis and the Dislocation Extraction Algorithm) [4].

  - XRD Profiles: For the same snapshots, simulate 1D XRD profiles $I(2\theta)$ using a diffraction package (e.g., the LAMMPS diffraction package). Use a Cu Kα wavelength (1.54 Å) and a relevant angular range (e.g., 30°-60°). Normalize the intensities to a maximum of 1 [4].

- Model Training and Validation:

  - Train supervised ML models (e.g., Random Forest, Neural Networks) to regress the microstructural descriptors from the XRD profiles.

  - Critically assess model transferability: the ability of a model trained on data from one crystallographic orientation or microstructure (e.g., single crystal) to accurately predict descriptors for another (e.g., a different orientation or polycrystal). Training on multiple orientations significantly improves transferability [4].
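As a quick check on the angular range suggested in the XRD-profile step, Bragg's law gives the expected peak positions for undeformed FCC copper. The sketch assumes the room-temperature lattice parameter a = 3.615 Å and ignores the peak shifts that shock compression would introduce. The helper name `two_theta_deg` is illustrative.

```python
import math

def two_theta_deg(a, hkl, wavelength):
    """Bragg angle 2-theta in degrees for a cubic lattice; None if unreachable."""
    h, k, l = hkl
    d = a / math.sqrt(h * h + k * k + l * l)  # interplanar spacing
    s = wavelength / (2 * d)                  # sin(theta) from Bragg's law
    return 2 * math.degrees(math.asin(s)) if s <= 1 else None

A_CU = 3.615       # FCC Cu lattice parameter, angstrom (assumed ambient value)
WAVELENGTH = 1.54  # Cu K-alpha, angstrom, as in the protocol
fcc_reflections = [(1, 1, 1), (2, 0, 0), (2, 2, 0), (3, 1, 1)]

peaks = {hkl: two_theta_deg(A_CU, hkl, WAVELENGTH) for hkl in fcc_reflections}
in_window = {hkl: tt for hkl, tt in peaks.items()
             if tt is not None and 30 <= tt <= 60}
```

Under these assumptions only the (111) and (200) reflections fall inside 30°-60°, so a model trained on this window sees two FCC peaks plus whatever new features the defects and phase changes add.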

Research Reagent Solutions

| Item | Function / Description |

|---|---|

| LAMMPS (MD Simulator) | A classical molecular dynamics code used to simulate material behavior under various conditions and generate atomic configurations for XRD simulation [4]. |

| OVITO | A scientific visualization and analysis software for atomistic simulation data. Used with analysis modifiers such as CNA and DXA to compute microstructural descriptors [4]. |

| Dislocation Extraction Algorithm (DXA) | An analysis tool (e.g., in OVITO) used to identify and quantify dislocation types and densities in an atomic structure [4]. |

| Common Neighbor Analysis (CNA) | An analysis method used to identify the local crystal structure (FCC, BCC, HCP) of each atom in a simulation [4]. |

The integration of machine learning (ML) with X-ray diffraction (XRD) is transforming materials characterization, enabling the rapid and autonomous interpretation of crystallographic data. A core distinction in these ML-driven workflows lies in the choice between supervised and unsupervised learning. This article delineates the fundamental principles, applications, and protocols for these two approaches within the context of autonomously interpreting XRD patterns. Supervised learning relies on labeled datasets to train models for phase identification and classification, whereas unsupervised learning identifies hidden patterns and structures within data without pre-existing labels, making it suitable for discovering new phases or analyzing complex mixtures where reference data is limited [1] [19]. The selection between these paradigms is crucial for the efficiency and success of materials discovery and drug development research.

Core Conceptual Comparison

The following table summarizes the key characteristics of supervised and unsupervised learning in the context of XRD analysis.

Table 1: Comparison of Supervised and Unsupervised Learning for XRD Workflows

| Aspect | Supervised Learning | Unsupervised Learning |

|---|---|---|

| Primary Objective | Classification, regression, and quantitative phase identification [3] [20]. | Dimensionality reduction, clustering, and discovery of hidden patterns without labeled data [19] [21]. |

| Training Data | Labeled XRD patterns (e.g., patterns linked to specific crystal phases, space groups, or cell parameters) [1] [6]. | Raw, unlabeled XRD patterns (e.g., from composition-spread libraries or mapping experiments) [19] [21]. |

| Model Output | Predicted phase, crystal system, space group, or confidence score [3] [20]. | Identified clusters, basis patterns, or a low-dimensional representation of the data [19] [22]. |

| Key Advantage | High accuracy and speed for identifying known phases; enables autonomous, adaptive data collection [1] [3]. | No need for labeled data; capable of identifying unknown phases, solid solutions, and peak-shifting effects [19] [21]. |

| Main Challenge | Dependency on large, high-quality labeled datasets; models can be physics-agnostic and may not generalize well to experimental data [1] [20]. | Results can be more difficult to interpret; requires post-analysis to connect clusters to physical meaning [19] [22]. |

| Typical Algorithms | Convolutional Neural Networks (CNNs), Multi-Layer Perceptrons (MLPs), Random Forests [3] [6] [20]. | Non-negative Matrix Factorization (NMF), Uniform Manifold Approximation and Projection (UMAP), clustering algorithms (e.g., k-means) [19] [21] [22]. |

Supervised Learning: Protocols and Applications

Workflow for Autonomous Phase Identification

Supervised learning models, particularly deep learning networks, are trained on vast databases of simulated or experimental XRD patterns to achieve expert-level accuracy in phase identification [1] [3]. An advanced application is adaptive XRD, which closes the loop between measurement and analysis.

[Figure: Supervised Adaptive XRD Workflow]

Protocol: Adaptive XRD for Phase Identification [3]

1. Initial Rapid Scan: Begin with a fast XRD scan over a limited angular range (e.g., 2θ = 10°–60°).

2. ML Prediction & Confidence Assessment: Input the diffraction pattern into a trained convolutional neural network (e.g., XRD-AutoAnalyzer). The model outputs a predicted phase and an associated confidence score (0–100%).

3. Confidence Check: If the confidence for all suspected phases exceeds a predefined threshold (e.g., 50%), the process concludes. If not, the system autonomously decides on the next measurement step.

4. Guided Resampling: Using Class Activation Maps (CAMs), the algorithm identifies angular regions where the diffraction patterns of the top candidate phases differ most. It then performs a higher-resolution (slower) scan over these specific regions to collect more decisive data.

5. Range Expansion: If ambiguity persists, the angular range is iteratively expanded (e.g., in +10° steps up to 140°) to capture additional distinguishing peaks.

6. Iteration: Steps 2–5 are repeated until the confidence threshold is met or the maximum angle is reached.
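The control flow of this protocol can be sketched as a simple loop. The callables `measure` and `predict` are hypothetical stand-ins for the diffractometer interface and the trained classifier; neither is a real API. CAM-guided resampling is left out so that only the confidence check and range expansion are shown.

```python
def adaptive_scan(measure, predict, start=(10, 60), step=10,
                  max_angle=140, threshold=0.5):
    """Expand the 2-theta range until the confidence exceeds the threshold.

    measure(lo, hi) -> pattern and predict(pattern) -> (phase, confidence in
    [0, 1]) are hypothetical callables supplied by the user.
    """
    lo, hi = start
    history = []
    while True:
        pattern = measure(lo, hi)
        phase, conf = predict(pattern)
        history.append((hi, phase, conf))
        if conf > threshold or hi >= max_angle:
            return phase, conf, history
        hi = min(hi + step, max_angle)  # range expansion step

# Stand-ins for hardware and model: confidence grows with the scanned range.
def fake_measure(lo, hi):
    return {"range": (lo, hi)}

def fake_predict(pattern):
    lo, hi = pattern["range"]
    return "phase_A", min(1.0, (hi - lo) / 200)

phase, conf, history = adaptive_scan(fake_measure, fake_predict)
```

With these stand-ins the loop stops once the scan reaches 120°, the first range at which the toy confidence exceeds 50%. A real deployment would replace both callables and add the resampling branch before each expansion.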

This protocol has been validated for detecting trace impurity phases and identifying short-lived intermediate phases during in situ solid-state reactions, such as the synthesis of LLZO, with a higher success rate than conventional methods [3].

Protocol for Crystal System and Space Group Classification

Objective: To train a supervised model for predicting crystal symmetry information (crystal system, extinction group, space group) from a single-phase powder XRD pattern [20].

Data Preparation:

- Source: Obtain Crystallographic Information Files (CIFs) from databases like the Inorganic Crystal Structure Database (ICSD) or Crystallography Open Database (COD).

- Simulation: Use software (e.g., Dans Diffraction package) to simulate powder XRD patterns from the CIFs. Use a fixed wavelength (e.g., Cu Kα, λ = 1.5406 Å) and a defined 2θ range (e.g., 5°–90°).

- Augmentation (Optional): Introduce variability by randomizing parameters in the peak profile function (e.g., pseudo-Voigt) and background polynomial to improve model robustness.

- Labeling: Each simulated pattern is labeled with its crystal system, extinction group, and space group.
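The augmentation step can be illustrated with a small generator that randomizes the pseudo-Voigt profile and a linear background. The parameter ranges, the peak list, and the function names are illustrative assumptions, not values from [20].

```python
import math
import random

def pseudo_voigt(x, center, fwhm, eta):
    """Unit-height pseudo-Voigt: eta * Lorentzian + (1 - eta) * Gaussian."""
    t = (x - center) / (fwhm / 2)
    lorentz = 1.0 / (1.0 + t * t)
    gauss = math.exp(-math.log(2) * t * t)
    return eta * lorentz + (1 - eta) * gauss

def simulate_pattern(peaks, grid, rng):
    """Sum of peaks with a randomized profile and a random linear background.

    `peaks` is a list of (two_theta, relative_intensity) pairs.
    """
    fwhm = rng.uniform(0.05, 0.5)  # peak width in degrees (assumed range)
    eta = rng.uniform(0.0, 1.0)    # Lorentzian fraction
    b0, b1 = rng.uniform(0.0, 0.05), rng.uniform(0.0, 2e-4)
    return [b0 + b1 * x + sum(i * pseudo_voigt(x, c, fwhm, eta) for c, i in peaks)
            for x in grid]

grid = [5 + 0.05 * k for k in range(1701)]  # 5 to 90 degrees
rng = random.Random(0)
peak_list = [(30.0, 1.0), (45.0, 0.5)]      # arbitrary illustrative peaks
variants = [simulate_pattern(peak_list, grid, rng) for _ in range(3)]
```

Each call produces a different rendering of the same peak list, so one CIF can contribute many training patterns that share a label.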

Model Training:

- Architecture: Employ a Convolutional Neural Network (CNN) or a Vision Transformer designed for 1D signal or image processing.

- Input: Use the entire diffraction pattern (intensity vs. 2θ) or a transformed 2D radial image of the pattern [6].

- Training: Train the model in a supervised manner using the labeled dataset to map the input pattern to the correct symmetry labels.

Validation:

- Test the model on a hold-out set of simulated patterns. Reported accuracies can reach ~94% for crystal system and ~81% for space group classification [20].

- For ultimate validation, the model should be tested on experimental XRD data, though this often presents a challenge due to the disparity with idealized simulations [20].

Unsupervised Learning: Protocols and Applications

Workflow for Phase Mapping with NMF

Unsupervised learning excels at analyzing high-throughput XRD datasets from combinatorial libraries, where the phase composition is unknown a priori. Non-negative Matrix Factorization (NMF) is a powerful method for this task.

[Figure: Unsupervised Phase Mapping with NMF]

Protocol: Phase Mapping with NMF Integrated with Custom Clustering (NMFk) [19]

Data Matrix Construction: From a combinatorial library with N measurement points, compile all XRD patterns into a non-negative data matrix X of size M × N, where M is the number of diffraction angles (2θ) and each column is a single XRD pattern.

Determine the Number of Phases (K):

- Run the NMF algorithm multiple times for a range of potential phase numbers, K̃.

- For each K̃, cluster the solutions from the repeated runs and use silhouette statistics to evaluate the robustness and reproducibility of the solution.

- The optimal number of end-members K is identified as the one that produces the most stable and well-separated clusters of solutions.

Matrix Factorization: Decompose the data matrix X into two non-negative matrices: W of size M × K (the basis patterns or end-members) and H of size K × N (the mixing coefficients or abundances), such that X ≈ WH.

Handle Peak Shifting: A critical challenge in combinatorial datasets is continuous peak shifting due to changing lattice parameters across compositions.

- Analyze the derived basis patterns in W using cross-correlation.

- Identify and combine patterns that represent the same crystal structure but are shifted due to solid solution effects.
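One simple way to test whether two basis patterns are shifted copies of each other is to scan integer lags and take the maximum normalized cross-correlation. This is a sketch under that assumption; the 0.95 merge threshold and the function name `best_shift` are hypothetical, and [19] may use a different criterion.

```python
import math

def best_shift(ref, pattern, max_shift):
    """Return the integer lag (in grid steps) that maximizes the normalized
    cross-correlation between `ref` and `pattern`, and the value at that lag."""
    def corr(lag):
        pairs = [(ref[i], pattern[i + lag]) for i in range(len(ref))
                 if 0 <= i + lag < len(pattern)]
        num = sum(a * b for a, b in pairs)
        den = (sum(a * a for a, _ in pairs) * sum(b * b for _, b in pairs)) ** 0.5
        return num / den if den else 0.0
    lag = max(range(-max_shift, max_shift + 1), key=corr)
    return lag, corr(lag)

# Two toy basis patterns: the same peak, displaced by three grid steps.
ref = [math.exp(-0.5 * ((i - 50) / 2) ** 2) for i in range(120)]
shifted = [math.exp(-0.5 * ((i - 53) / 2) ** 2) for i in range(120)]

lag, score = best_shift(ref, shifted, max_shift=10)
same_phase = score > 0.95  # hypothetical merge threshold
```

A pure translation is only an approximation: lattice expansion shifts high-angle peaks more than low-angle ones, so a production version might correlate on a d-spacing or log-scaled axis instead.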

Interpret Results: The final matrix W contains the XRD patterns of the unique phases in the system, and H describes their abundance across the compositional spread, allowing for the construction of a compositional phase diagram.
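The factorization step can be sketched with the classic multiplicative-update rules for NMF. This is plain NMF on a toy two-phase composition spread, written without external libraries; it does not include the repeated-run clustering that distinguishes NMFk, and all names and data are illustrative.

```python
import math
import random

def matmul(A, B):
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def transpose(A):
    return [list(col) for col in zip(*A)]

def frob_error(X, W, H):
    """Frobenius norm of X - W H."""
    R = matmul(W, H)
    return math.sqrt(sum((x - r) ** 2 for xr, rr in zip(X, R) for x, r in zip(xr, rr)))

def nmf(X, k, n_iter=300, seed=0, eps=1e-12):
    """Multiplicative updates for X (M x N) ~ W (M x k) H (k x N), all >= 0."""
    rng = random.Random(seed)
    m, n = len(X), len(X[0])
    W = [[rng.random() + 0.1 for _ in range(k)] for _ in range(m)]
    H = [[rng.random() + 0.1 for _ in range(n)] for _ in range(k)]
    for _ in range(n_iter):
        Wt = transpose(W)
        num, den = matmul(Wt, X), matmul(matmul(Wt, W), H)
        H = [[H[i][j] * num[i][j] / (den[i][j] + eps) for j in range(n)] for i in range(k)]
        Ht = transpose(H)
        num, den = matmul(X, Ht), matmul(W, matmul(H, Ht))
        W = [[W[i][j] * num[i][j] / (den[i][j] + eps) for j in range(k)] for i in range(m)]
    return W, H

# Toy library: two single-peak phases mixed linearly across six compositions.
grid = [20 + 0.5 * m for m in range(40)]
phase_a = [math.exp(-0.5 * (x - 25) ** 2) for x in grid]
phase_b = [math.exp(-0.5 * (x - 33) ** 2) for x in grid]
fractions = [1.0, 0.8, 0.6, 0.4, 0.2, 0.0]
X = [[f * a + (1 - f) * b for f in fractions] for a, b in zip(phase_a, phase_b)]

W_early, H_early = nmf(X, k=2, n_iter=5)
W, H = nmf(X, k=2, n_iter=300)
```

The columns of `W` play the role of end-member patterns and the rows of `H` their abundances across the six compositions. Because the updates only multiply by non-negative ratios, both factors stay non-negative throughout.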

Protocol for Dimensionality Reduction and Clustering of NanoXRD Data

Objective: To analyze raw, high-dimensional nanoXRD data without prior knowledge for defect recognition and structural feature mapping [21].

Data Acquisition: Perform a nanoXRD scan, collecting a 2D diffraction pattern at each probe position on a 2D grid, resulting in a 4D dataset (2 real space + 2 reciprocal space dimensions).

Pre-processing: Correct for simple global artifacts like beam shift by aligning the central beam of all diffraction patterns. Avoid subjective manipulation of the raw data.

Dimensionality Reduction:

- Method: Apply the Uniform Manifold Approximation and Projection (UMAP) algorithm.

- Process: UMAP embeds the high-dimensional diffraction patterns (each considered as a single data point in a high-dimensional space) into a lower-dimensional space (e.g., 2D or 3D), preserving as much of the geometric structure of the data as possible.

Clustering and Analysis:

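- In the low-dimensional UMAP representation, similar diffraction patterns group together into clusters, which can be labeled by inspection or with a clustering algorithm (e.g., k-means).

- Each cluster is then interpreted as a distinct local crystal structure or defect type (e.g., regions with different strain states or crystal orientations).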

- By coloring the real-space map according to UMAP cluster assignment, one can visualize the spatial distribution of these microstructural features, guiding further investigation.

This method has been successfully applied to identify structural defects in HVPE-GaN wafers, providing a more precise categorization than conventional analysis and minimizing information loss from data integration [21].

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Resources for ML-Driven XRD Experiments

| Item / Solution | Function in ML-XRD Workflow |

|---|---|

| Crystallography Open Database (COD) | A primary source of open-access crystal structures used to generate large, labeled datasets for supervised training or benchmarking [6] [20]. |

| Inorganic Crystal Structure Database (ICSD) | A comprehensive database of inorganic crystal structures, often used for curating high-quality training data for supervised learning models [1] [20]. |

| SIMPOD Benchmark | A public dataset of simulated powder XRD patterns from the COD, designed for training and testing ML models for tasks like space group and parameter prediction [6]. |

| Non-negative Matrix Factorization (NMF) | A core unsupervised algorithm for blind source separation, decomposing a set of XRD patterns into constituent phase patterns and their abundances [19]. |

| Class Activation Maps (CAMs) | A visualization technique in deep learning that highlights the diffraction angle regions most important for a model's classification, enabling adaptive steering of experiments [3]. |

| Uniform Manifold Approximation and Projection (UMAP) | A powerful manifold learning technique for dimensionality reduction and clustering of complex, high-dimensional diffraction data (e.g., nanoXRD) [21]. |

The application of machine learning (ML) to X-ray diffraction (XRD) analysis represents a paradigm shift in materials science and drug development, enabling the autonomous and high-throughput interpretation of crystalline structures [1]. The efficacy of such data-driven models is intrinsically tied to the quality, volume, and diversity of the training data. This establishes curated, well-documented datasets and benchmarks not merely as useful resources but as foundational pillars for the entire research domain [6] [2]. Within this ecosystem, three resources are particularly critical: the SIMPOD benchmark, the Crystallography Open Database (COD), and the Inorganic Crystal Structure Database (ICSD). This application note details these key resources, providing a quantitative comparison, experimental protocols for their use in ML model development, and visualizations of the associated workflows to accelerate research in autonomous XRD pattern interpretation.

Table 1 summarizes the core characteristics of the SIMPOD, COD, and ICSD databases, providing a clear comparison for researchers selecting a data source.

Table 1: Core Characteristics of Key Crystallographic Databases for ML

| Database | Primary Content & Scope | Data Volume | Access Model | Key Features for ML |

|---|---|---|---|---|

| SIMPOD [6] | Simulated 1D XRD patterns & 2D radial images from diverse COD structures. | 467,861 crystal structures and patterns [6]. | Open Access [6]. | A ready-made ML benchmark; includes derived 2D images for computer vision models; standardized simulation parameters [6]. |

| Crystallography Open Database (COD) [23] | Experimental crystal structures of organic, metal-organic, inorganic compounds, and minerals [23]. | >376,000 structures (as of 2017) [23]. | Open Access [23]. | Community-driven; diverse chemical space; uses standard CIF format; ideal for sourcing new structures for simulation [23]. |

| Inorganic Crystal Structure Database (ICSD) [24] [25] | Curated experimental crystal structures of inorganic compounds [24]. | >210,000 entries [25]. | Licensed / Subscription [25]. | High-quality, critically evaluated data; extensive historical coverage (from 1913); essential for inorganic materials research [24] [25]. |

Experimental Protocols for ML Model Development

The following protocols outline methodologies for leveraging these datasets, from training a model on a static benchmark to implementing an adaptive, autonomous XRD system.

Protocol 1: Supervised Learning for Space Group Classification using SIMPOD

This protocol describes the process of training and evaluating a computer vision model to predict the space group from a powder XRD pattern, using the SIMPOD benchmark.

- Data Acquisition and Partitioning: Download the SIMPOD dataset, which provides structures from the COD and their corresponding simulated 1D diffractograms and 2D radial images [6]. For a standard classification task, partition the data into training, validation, and test sets (e.g., 2-fold cross-validation with 50,000 structures each and a separate test set of 25,000 structures) [6].

- Model Selection and Training:

- For 1D Diffractograms: Utilize traditional ML models like Distributed Random Forest (DRF) or Multi-Layer Perceptrons (MLP), which can be efficiently implemented and optimized using AutoML frameworks like H2O AutoML [6].

- For 2D Radial Images: Employ deep learning models such as AlexNet, ResNet, DenseNet, or Swin Transformers [6]. Transfer learning from models pre-trained on large image datasets (e.g., ImageNet) can be applied and has been shown to improve accuracy by ~2.6% [6].

- Model Optimization and Evaluation: Optimize hyperparameters using the validation set. Evaluate the final model on the held-out test set and report standard metrics including top-1 accuracy and top-5 accuracy to assess classification performance [6].
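The top-k metrics in the evaluation step are straightforward to compute from the model's class scores. The sketch below uses a toy six-class problem for readability, so it reports top-1 and top-3; top-5 over 230 space groups is computed the same way with `k=5`. The scores and labels are invented.

```python
def top_k_accuracy(scores, labels, k):
    """Fraction of samples whose true label is among the k highest-scoring classes."""
    hits = 0
    for row, label in zip(scores, labels):
        ranked = sorted(range(len(row)), key=lambda c: row[c], reverse=True)
        hits += label in ranked[:k]
    return hits / len(labels)

# Toy class scores for three patterns (illustrative values).
scores = [
    [0.50, 0.20, 0.10, 0.10, 0.05, 0.05],
    [0.10, 0.15, 0.40, 0.20, 0.10, 0.05],
    [0.05, 0.05, 0.12, 0.08, 0.30, 0.40],
]
labels = [0, 1, 0]

top1 = top_k_accuracy(scores, labels, 1)
top3 = top_k_accuracy(scores, labels, 3)
```

Reporting both metrics is useful for space groups because many misclassifications land on closely related groups, which top-k captures and top-1 does not.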

Protocol 2: Autonomous and Adaptive XRD for Phase Identification

This protocol, adapted from Mian et al. (2023), describes a closed-loop system that integrates ML-driven analysis with a physical diffractometer to autonomously steer measurements for rapid phase identification, which is particularly useful for detecting trace impurities or transient phases in in situ reactions [3].

1. Initial Rapid Scan: Perform a fast XRD scan over a narrow angular range (e.g., 2θ = 10° to 60°) to quickly gather preliminary data [3].

2. In-line Phase Prediction and Confidence Assessment: Feed the acquired pattern to a pre-trained deep learning model (e.g., an XRD-AutoAnalyzer) to predict the present crystalline phases and, crucially, the model's confidence (from 0-100%) for each prediction [3].

3. Decision Point: Proceed or Refine: If the confidence for all suspected phases exceeds a predefined threshold (e.g., 50%), the analysis is complete. If confidence is low, initiate an adaptive refinement loop [3].

4. Adaptive Refinement Loop:

   - Targeted Rescanning: Calculate Class Activation Maps (CAMs) to identify the 2θ regions that are most discriminative for separating the two most probable phases. Rescan these specific regions with a slower rate for higher resolution [3].

   - Angular Range Expansion: If rescanning is insufficient, iteratively expand the scan range (e.g., in +10° increments up to 140°) to capture additional distinguishing peaks. After each expansion, return to Step 2 for a new prediction with updated confidence [3].

5. Ensemble Prediction Aggregation: Aggregate predictions from all scanned 2θ-ranges into a final, confidence-weighted ensemble prediction to yield the most robust phase identification result [3].
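The targeted-rescanning step amounts to comparing two class activation maps and extracting the angular intervals where they disagree. The sketch below assumes the CAMs are already available as arrays on a shared 2θ grid and normalized to [0, 1]; the 0.25 difference threshold and the function name are illustrative assumptions.

```python
def discriminative_regions(two_theta, cam_a, cam_b, threshold=0.25):
    """Return (start, end) 2-theta intervals where two class activation maps
    differ by more than `threshold` (an assumed, tunable value)."""
    regions, start, end = [], None, None
    for x, a, b in zip(two_theta, cam_a, cam_b):
        if abs(a - b) > threshold:
            if start is None:
                start = x
            end = x
        elif start is not None:
            regions.append((start, end))
            start = None
    if start is not None:
        regions.append((start, end))
    return regions

# Toy CAMs for the two most probable phases (illustrative values).
two_theta = [10, 15, 20, 25, 30, 35, 40, 45, 50, 55, 60]
cam_a = [0.1, 0.1, 0.9, 0.8, 0.2, 0.1, 0.1, 0.1, 0.7, 0.1, 0.1]
cam_b = [0.1, 0.2, 0.1, 0.2, 0.2, 0.1, 0.1, 0.1, 0.1, 0.1, 0.1]

regions = discriminative_regions(two_theta, cam_a, cam_b)
```

Each returned interval is a candidate for a slower rescan. In practice one would likely pad the intervals by a peak width so that the full peak profile is captured.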

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2 lists key computational and experimental "reagents" essential for working with these datasets and implementing the described protocols.

Table 2: Essential Research Reagents and Resources

| Item / Resource | Function / Purpose | Example / Source |

|---|---|---|

| CIF (Crystallographic Information File) | Standard text file format for storing crystallographic information, the fundamental data unit for COD and ICSD [23]. | IUCr-standard CIF format [23]. |

| Powder Diffraction Simulation Software | Generates theoretical 1D powder XRD patterns from crystal structures for creating datasets like SIMPOD or validating results. | Dans Diffraction package, Gemmi [6]. |

| Deep Learning Frameworks | Provides the programming environment for building, training, and deploying ML models for phase identification and space group classification. | PyTorch [6]. |

| AutoML Libraries | Automates the process of applying standard machine learning models to structured data, such as 1D diffractograms. | H2O AutoML [6]. |

| Class Activation Map (CAM) Algorithm | A critical interpretability tool that highlights regions of an XRD pattern (2θ angles) most influential to an ML model's decision, guiding adaptive rescans [3]. | Integrated within CNN-based phase classifiers [3]. |

Workflow for Sourcing and Simulating Data from Primary Databases

For researchers who need to go beyond a pre-packaged benchmark like SIMPOD and create custom datasets, the general workflow is to source structures (CIFs) from primary databases such as the COD or ICSD and convert them into XRD data with a powder diffraction simulation package. This is a common practice for targeting specific material classes not fully represented in existing benchmarks [6].

ML in Action: Core Algorithms and Real-World Applications for XRD

Convolutional Neural Networks (CNNs) for 1D Pattern and 2D Image Analysis

X-ray diffraction (XRD) stands as a fundamental technique for determining the atomic-scale structure and properties of crystalline materials. The analysis of XRD data, whether in the form of one-dimensional (1D) powder diffraction patterns or two-dimensional (2D) diffraction images, has traditionally required significant expert interpretation, creating bottlenecks in high-throughput experimental workflows. Convolutional Neural Networks (CNNs) have emerged as powerful tools for automating and enhancing the analysis of both 1D and 2D XRD data, enabling rapid phase identification, quantitative parameter extraction, and anomaly detection. These capabilities are particularly valuable for autonomous interpretation systems in materials discovery and characterization, where they can process vast datasets orders of magnitude faster than conventional methods like Rietveld refinement [1] [26].

The application of CNNs to XRD analysis represents a paradigm shift from physics-based refinement to data-driven pattern recognition. While traditional methods require precise modeling of diffraction physics, CNNs can learn complex relationships between diffraction features and material properties directly from data. This supports systems capable of real-time analysis during in situ and operando experiments, providing immediate feedback for experimental decision-making [26]. Furthermore, the integration of interpretability mechanisms like attention and Bayesian uncertainty quantification is addressing the "black box" nature of deep learning models, increasing their reliability for scientific applications [27] [28].

Analysis of 1D XRD Patterns Using CNNs

Network Architectures and Approaches

CNNs applied to 1D XRD patterns typically utilize architectural patterns that maintain the sequential nature of the data while extracting hierarchical features. The Parameter Quantification Network (PQ-Net) exemplifies this approach, comprising three main components: a pattern-block with convolutional and max-pooling layers to extract local features and reduce pattern dimensionality; phase-blocks that extract phase-specific features; and parameter-blocks with fully connected layers that output quantitative parameters [26]. This architecture has demonstrated remarkable capability in predicting scale factors, lattice parameters, and crystallite sizes from multi-phase systems, achieving errors below 10⁻³ Å for lattice parameters and less than 1 nm for crystallite sizes in synthetic Ni catalyst systems [26].

For phase identification and classification, Bayesian-VGGNet architectures have shown strong performance, achieving 84% accuracy on simulated spectra and 75% on external experimental data for crystal symmetry classification [28]. These networks incorporate Bayesian methods to estimate prediction uncertainty, a critical feature for autonomous systems that must recognize when model predictions are unreliable. The integration of attention mechanisms with CNNs has further enhanced model interpretability by enabling intuitive visualization of key diffraction peak contributions to model predictions [27]. In lithium-ion battery research, this approach has successfully identified correlations between specific diffraction features and electrochemical properties like voltage and rate capability [27].

Experimental Protocol for 1D Pattern Analysis

Data Preparation and Preprocessing

- Source Selection: Obtain 1D XRD patterns from experimental measurements or synthetic generation using crystallographic information files (CIF) from databases like COD, ICSD, or Materials Project [29] [6]. For synthetic data, use diffraction simulation packages (e.g., Dans Diffraction) with parameters matching experimental conditions (Cu Kα radiation, λ = 1.5406 Å) [6].

- Intensity Normalization: Apply min-max scaling to normalize intensity values between 0 and 1, preserving relative peak intensities crucial for material identification [30] [6].

- Angular Range Definition: Use a consistent 2θ range (typically 5°-90°) with a uniform step size (approximately 0.008°) across all patterns [6].

- Data Augmentation: Introduce variability through simulated changes in lattice parameters, crystallite size, preferred orientation, and synthetic noise to improve model robustness [29].
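The normalization and grid-definition steps can be sketched as follows. The grid parameters match the values quoted above; the function names and the sample intensities are illustrative.

```python
def uniform_grid(start=5.0, stop=90.0, step=0.008):
    """Uniform 2-theta grid including both endpoints."""
    n = round((stop - start) / step) + 1
    return [start + i * step for i in range(n)]

def min_max_normalize(intensities):
    """Scale intensities to [0, 1]; a flat pattern maps to all zeros."""
    lo, hi = min(intensities), max(intensities)
    if hi == lo:
        return [0.0] * len(intensities)
    return [(v - lo) / (hi - lo) for v in intensities]

grid = uniform_grid()
raw = [120.0, 150.0, 980.0, 300.0, 120.0]  # toy detector counts
normalized = min_max_normalize(raw)
```

With a 0.008° step the 5°-90° range yields 10,626 points per pattern, which fixes the input length of any 1D model trained on this grid.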

Model Training and Validation

- Architecture Selection: Implement a CNN architecture appropriate for the task (e.g., PQ-Net for parameter quantification, Bayesian-VGGNet for classification) [26] [28].

- Loss Function: For quantification tasks, use mean absolute error (MAE) instead of mean squared error (MSE); MAE penalizes errors linearly rather than quadratically, so outliers in the training data dominate the loss less [26]. For phase quantification, consider specialized loss functions such as Dirichlet modeling for proportion inference [29].

- Validation Strategy: Employ k-fold cross-validation (e.g., 2-fold with 50,000 structures per fold) and holdout test sets to evaluate generalization performance [6].

- Performance Metrics: Track loss convergence, mean absolute error for regression tasks, and accuracy/uncertainty calibration for classification tasks [26] [28].
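The loss-function and validation choices above can be illustrated with a short sketch. The numbers are hypothetical lattice-parameter predictions, and `k_fold_indices` is an assumed helper written for this example rather than a library function; in practice a framework utility would normally be used.

```python
import random


def mae(pred, target):
    """Mean absolute error: each error contributes linearly."""
    return sum(abs(p - t) for p, t in zip(pred, target)) / len(target)


def mse(pred, target):
    """Mean squared error: each error contributes quadratically."""
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(target)


def k_fold_indices(n_samples, k, seed=0):
    """Yield (train, validation) index lists for shuffled k-fold splitting."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    for i in range(k):
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train, folds[i]


# Hypothetical lattice parameters in angstroms; the last prediction is an outlier.
target = [3.520, 3.520, 3.520, 3.520]
pred = [3.521, 3.519, 3.520, 3.620]

errors = [abs(p - t) for p, t in zip(pred, target)]
outlier_share_mae = errors[-1] / sum(errors)
outlier_share_mse = errors[-1] ** 2 / sum(e ** 2 for e in errors)

print("MAE:", mae(pred, target), "outlier share:", outlier_share_mae)
print("MSE:", mse(pred, target), "outlier share:", outlier_share_mse)

for fold, (train, val) in enumerate(k_fold_indices(10, k=2)):
    print("fold", fold, "train size", len(train), "validation size", len(val))
```

The outlier accounts for a larger share of the MSE than of the MAE, which is the reason the protocol prefers MAE when the training labels contain a few poor values.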

Table 1: Performance Comparison of CNN Models for 1D XRD Analysis

| Model | Application | Accuracy/Performance | Key Advantages |
|---|---|---|---|
| PQ-Net [26] | Parameter quantification | Lattice parameter error < 10⁻³ Å; Crystallite size error < 1 nm | Real-time analysis; handles multi-phase systems |
| CNN with Attention [27] | Property prediction from battery XRD | Voltage prediction MAPE < 0.5%; R² > 0.98 | Interpretable predictions; identifies relevant peaks |
| Bayesian-VGGNet [28] | Crystal symmetry classification | 84% accuracy (simulated); 75% (experimental) | Uncertainty quantification; improved reliability |
| Phase Quantification CNN [29] | Mineral identification & quantification | 0.5% error (synthetic); 6% error (experimental) | Handles complex mineral assemblages |

Implementation Workflow for 1D Analysis

The following workflow diagram illustrates the complete process for analyzing 1D XRD patterns using CNNs:

Analysis of 2D XRD Images Using CNNs

Technical Approaches and Network Architectures

The analysis of 2D XRD images presents distinct challenges and opportunities compared to 1D pattern analysis. CNNs for 2D data leverage spatial relationships across the detector surface, enabling detection of anomalies, crystal orientation effects, and texture information that may be lost in 1D integrations. The RefleX system exemplifies this approach, utilizing a multi-path architecture that processes diffraction images in both Cartesian and polar coordinate systems to detect seven common anomaly types including ice rings, diffuse scattering, non-uniform detector response, and artifacts [31]. This system achieved between 87% and 99% accuracy in anomaly detection depending on the anomaly type, demonstrating the strong capability of CNNs for automated image quality assessment [31].

For crystal structure analysis from 2D images, approaches include direct analysis of the 2D images or transformation to alternative representations. The SIMPOD database facilitates this research by providing both simulated 1D diffractograms and derived 2D radial images from 467,861 crystal structures in the Crystallography Open Database [6]. These radial images enable the application of sophisticated computer vision models like ResNet, DenseNet, and Swin Transformer, which have shown superior performance compared to models using 1D data, particularly for space group prediction tasks [6]. In nanobeam XRD analysis, unsupervised learning approaches like Uniform Manifold Approximation and Projection (UMAP) have been combined with CNN features to categorize crystal structures from raw three-dimensional ω-2θ-φ diffraction patterns, providing more precise categorization than conventional fitting methods [32].

Experimental Protocol for 2D Image Analysis

Image Preprocessing and Enhancement

- Format Conversion: Convert proprietary detector formats to standardized 2D arrays (e.g., numpy arrays), handling missing data and detector-specific artifacts [31].

- Beam Center Detection: Implement robust algorithms to identify the X-ray beam center position, which serves as the reference point for subsequent transformations [31].

- Coordinate Transformation: Generate multiple image representations including Cartesian coordinates, polar coordinates (centered on beam position), and radial projections to enhance feature visibility [6] [31].

- Intensity Normalization: Apply scaling to manage varying dynamic ranges across different detectors and experimental conditions.
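As an illustration of the coordinate-transformation step, the sketch below reduces a 2D detector image to a radial projection by azimuthal averaging around the beam center and converts a pixel radius to a 2θ angle. It assumes a flat detector perpendicular to the beam; the function names, the synthetic ring image, and the masking convention (`None` for missing pixels) are choices made for this example and are not taken from RefleX [31] or SIMPOD [6].

```python
import math


def radial_profile(image, center, n_bins, r_max):
    """Azimuthally average `image` around center=(row, col).

    Pixels set to None are treated as masked (e.g. detector gaps)."""
    sums = [0.0] * n_bins
    counts = [0] * n_bins
    cy, cx = center
    for y, row in enumerate(image):
        for x, value in enumerate(row):
            if value is None:
                continue
            r = math.hypot(y - cy, x - cx)
            b = int(r * n_bins / r_max)
            if b < n_bins:
                sums[b] += value
                counts[b] += 1
    return [s / c if c else 0.0 for s, c in zip(sums, counts)]


def radius_to_two_theta(r_pixels, pixel_size_mm, distance_mm):
    """Scattering angle in degrees for a flat detector normal to the beam."""
    return math.degrees(math.atan(r_pixels * pixel_size_mm / distance_mm))


def ring_image(size, center, radius, width=0.5):
    """Synthetic test image containing one diffraction ring."""
    cy, cx = center
    return [
        [1.0 if abs(math.hypot(y - cy, x - cx) - radius) <= width else 0.0
         for x in range(size)]
        for y in range(size)
    ]


center = (10, 10)
image = ring_image(21, center, radius=5.0)
profile = radial_profile(image, center, n_bins=10, r_max=10.0)
peak_bin = profile.index(max(profile))
print("ring found in radial bin", peak_bin)
```

The ring collapses into a single peak in the radial profile, which is why radial and polar representations make ring-like features such as ice rings easier for a network to pick up than the raw Cartesian image.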

Model Architecture and Training

- Backbone Selection: Utilize established CNN architectures (ResNet, DenseNet) or transformers (Swin) as feature extraction backbones, leveraging transfer learning from natural image datasets where beneficial [6].

- Multi-label Framework: For anomaly detection, implement multi-label classification heads to handle co-occurring anomalies within single images [31].

- Data Augmentation: Apply spatial transformations, noise injection, and synthetic artifact generation to improve model robustness to experimental variations.

- Uncertainty Quantification: Incorporate Bayesian layers or Monte Carlo dropout to estimate prediction confidence, particularly important for autonomous operation.
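Two of the items above, multi-label heads and Monte Carlo dropout, come down to simple post-processing of network outputs. The sketch below assumes the network has already been run: the logits and the per-pass probability vectors are hypothetical numbers, and the label names and the 0.5 threshold are illustrative choices, not values from [31].

```python
import math


def sigmoid(z):
    """Logistic function mapping a logit to a probability."""
    return 1.0 / (1.0 + math.exp(-z))


def multilabel_predict(logits, labels, threshold=0.5):
    """Independent sigmoid per label, so several anomalies can co-occur."""
    return [name for name, z in zip(labels, logits) if sigmoid(z) >= threshold]


def mc_dropout_summary(passes):
    """Combine T stochastic forward passes (dropout left on at inference).

    Returns the mean class probabilities and the predictive entropy in nats;
    higher entropy means a less certain prediction."""
    n_classes = len(passes[0])
    mean = [sum(p[c] for p in passes) / len(passes) for c in range(n_classes)]
    entropy = -sum(m * math.log(m) for m in mean if m > 0.0)
    return mean, entropy


# Hypothetical anomaly logits for one diffraction image.
labels = ["ice_ring", "diffuse_scattering", "detector_artifact"]
detected = multilabel_predict([2.0, -1.5, 0.3], labels)

# Hypothetical class probabilities from three dropout passes each.
consistent = [[0.90, 0.05, 0.05], [0.92, 0.04, 0.04], [0.88, 0.06, 0.06]]
conflicting = [[0.80, 0.10, 0.10], [0.10, 0.80, 0.10], [0.30, 0.30, 0.40]]

_, entropy_low = mc_dropout_summary(consistent)
_, entropy_high = mc_dropout_summary(conflicting)
print(detected, entropy_low, entropy_high)
```

In an autonomous workflow, an entropy threshold chosen on validation data could route the high-entropy case to a human or trigger a repeat measurement instead of accepting the label.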

Table 2: CNN Applications for 2D XRD Image Analysis

| Application | Model Architecture | Performance | Key Detections/Outputs |
|---|---|---|---|
| Anomaly Detection [31] | Multi-path CNN (RefleX) | 87-99% accuracy by anomaly type | Ice rings, diffuse scattering, detector artifacts, background issues |
| Space Group Prediction [6] | ResNet, DenseNet, Swin Transformer | Superior to 1D models | Crystal symmetry classification from radial images |
| nanoXRD Analysis [32] | UMAP + CNN features | Enhanced categorization vs. conventional fitting | Crystal structure features from nanobeam patterns |
| 5D Tomographic Imaging [26] | PQ-Net adapted for 2D | Real-time processing of 20,000+ patterns | Lattice parameter, crystallite size maps across samples |

Implementation Workflow for 2D Analysis

The following workflow illustrates the process for analyzing 2D XRD images using CNNs:

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Computational Tools for CNN-XRD Research

| Resource Category | Specific Tools/Databases | Primary Function | Application Context |
|---|---|---|---|
| Crystallographic Databases | Crystallography Open Database (COD), Inorganic Crystal Structure Database (ICSD), Materials Project (MP) | Source of crystal structures for synthetic training data and reference patterns | Phase identification, model training, validation [28] [6] |
| Diffraction Simulation | Dans Diffraction, Profex/BGMN, TOPAS | Generate synthetic XRD patterns from CIF files; Rietveld refinement comparison | Training data generation, model validation [29] [6] |
| ML Frameworks & Libraries | PyTorch, TensorFlow, H2O AutoML | Implementation of CNN architectures and training pipelines | Model development, experimentation [6] |
| Specialized Datasets | SIMPOD, Proteindiffraction.org | Benchmark datasets for training and validation | Model comparison, performance evaluation [6] [31] |
| Preprocessing Tools | scikit-image, Gemmi, NumPy | Data cleaning, normalization, transformation | Data preparation, feature engineering [6] [31] |

Challenges and Future Perspectives

Despite significant advances, several challenges remain in the application of CNNs to XRD analysis. The scarcity of diverse, high-quality experimental training data continues to limit model generalizability, particularly for uncommon crystal structures or complex multi-phase systems [28] [1]. The physics-agnostic nature of standard CNN approaches can lead to predictions that violate fundamental crystallographic principles, potentially limiting their adoption in rigorous materials characterization [1]. Additionally, issues of model interpretability, uncertainty quantification, and seamless integration with existing experimental workflows require further development [28].

Future research directions likely include the development of physics-informed neural networks that incorporate known diffraction constraints directly into model architectures, improving both accuracy and reliability. Generative models show promise for creating more realistic training data and addressing data scarcity issues [6]. The creation of larger, more diverse benchmark datasets like SIMPOD will enable more comprehensive model evaluation and development [6]. Furthermore, the integration of CNN-based XRD analysis with robotic synthesis and characterization systems points toward fully autonomous materials discovery pipelines, where ML models not only interpret data but actively guide experimental decisions [1]. As these technologies mature, they will increasingly enable researchers to extract deeper insights from XRD measurements while dramatically reducing analysis time from days to seconds.

Autonomous interpretation of XRD patterns accelerates the journey from synthesis to structural understanding. ML has made significant strides in classifying crystalline phases from XRD data, and the next step is to move beyond qualitative identification to quantitative prediction. Regression models are now being developed to predict precise lattice parameters and microstructural descriptors directly from diffraction patterns. This evolution is crucial for high-throughput experimentation (HTE) and autonomous materials research, where quantitative insights into strain, defect density, and phase fractions are necessary to establish robust composition-structure-property relationships [13] [4].

State-of-the-Art Regression Approaches

Physics-Informed Probabilistic Labeling and Lattice Refinement

The CrystalShift algorithm exemplifies a sophisticated approach that integrates symmetry-constrained optimization for lattice parameter prediction. Unlike neural networks that require extensive training datasets, CrystalShift employs a best-first tree search and Bayesian model comparison to provide probabilistic phase labels and refined lattice constants without prior training. Its workflow involves:

- Input: An XRD spectrum and a list of candidate phases.

- Pseudo-Refinement Optimization: A non-linear least-squares optimization refines the lattice parameters of candidate phases (without breaking space group symmetry) to minimize the difference between simulated and experimental XRD patterns.

- Tree Search: The algorithm explores potential phase combinations, iteratively refining lattice parameters for each combination.

- Bayesian Model Comparison: The evidence for each optimized phase combination is calculated, naturally incorporating Occam's razor to prefer simpler models that adequately explain the data. This yields a posterior probability distribution over phase combinations and their associated, refined lattice parameters [13].
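The workflow above can be illustrated with a deliberately simplified toy. This is not the CrystalShift implementation from [13]. It assumes cubic phases (one free lattice parameter, so symmetry is preserved trivially) and matches peak positions only. It also swaps in a grid search for the non-linear least-squares step, exhaustive enumeration for the best-first tree search, and a BIC-style penalty with an assumed peak-position uncertainty `sigma` for the full Bayesian evidence. All names and numbers are hypothetical.

```python
import math
from itertools import combinations

WAVELENGTH = 1.5406  # Cu K-alpha, angstroms
FCC_HKLS = [(1, 1, 1), (2, 0, 0), (2, 2, 0), (3, 1, 1)]


def cubic_peaks(a, hkls):
    """2-theta positions (degrees) of a cubic phase with lattice parameter a."""
    peaks = []
    for h, k, l in hkls:
        d = a / math.sqrt(h * h + k * k + l * l)
        s = WAVELENGTH / (2.0 * d)
        if s < 1.0:  # reflection reachable at this wavelength
            peaks.append(2.0 * math.degrees(math.asin(s)))
    return peaks


def misfit(observed, predicted):
    """Sum of squared distances from each observed peak to the nearest
    predicted peak."""
    return sum(min((o - p) ** 2 for p in predicted) for o in observed)


def refine_cubic(a0, hkls, observed, window=0.03, steps=601):
    """Grid search for the lattice parameter within +/- window of a0."""
    best_a, best_rss = a0, float("inf")
    for i in range(steps):
        a = a0 * (1.0 - window + 2.0 * window * i / (steps - 1))
        predicted = cubic_peaks(a, hkls)
        if predicted:
            rss = misfit(observed, predicted)
            if rss < best_rss:
                best_a, best_rss = a, rss
    return best_a


def rank_combinations(candidates, observed, sigma=0.05):
    """Refine each phase, then score every phase combination.

    score = Gaussian log-likelihood - (k / 2) ln(n): a BIC-style stand-in
    for the evidence, with k refined parameters and n observed peaks."""
    refined = {name: refine_cubic(a0, hkls, observed)
               for name, (a0, hkls) in candidates.items()}
    scores = {}
    for size in range(1, len(candidates) + 1):
        for combo in combinations(sorted(candidates), size):
            predicted = [p for name in combo
                         for p in cubic_peaks(refined[name], candidates[name][1])]
            rss = misfit(observed, predicted)
            scores[combo] = (-rss / (2.0 * sigma ** 2)
                             - 0.5 * len(combo) * math.log(len(observed)))
    top = max(scores.values())
    weights = {c: math.exp(s - top) for c, s in scores.items()}
    total = sum(weights.values())
    return refined, {c: w / total for c, w in weights.items()}


# Synthetic "measurement": phase A with a shifted lattice parameter.
observed = cubic_peaks(4.05, FCC_HKLS)
candidates = {"A": (4.00, FCC_HKLS), "B": (3.00, FCC_HKLS)}
refined, posterior = rank_combinations(candidates, observed)
best = max(posterior, key=posterior.get)
print(best, round(refined["A"], 3))
```

In this toy case the single-phase model A and the two-phase model A+B fit the peaks equally well, so the parameter penalty tips the posterior toward the simpler model. That is the Occam's razor behavior described in the last step.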

This method has demonstrated robust performance on complex systems, such as resolving the intricate peak shifting in Cr~x~Fe~0.5-x~VO~4~ monoclinic phases, providing quantitative lattice strain information critical for HTE workflows [13].

Supervised Machine Learning for Microstructural Descriptors

Supervised ML models are increasingly used to decode complex microstructural information from XRD profiles. These models are trained on paired datasets of XRD patterns and corresponding microstructural descriptors obtained from simulations or experimental measurements.

A key application is the analysis of shock-loaded materials, where models have been trained to predict descriptors such as pressure, temperature, phase fractions, and dislocation density from XRD profiles. The general workflow involves:

- Data Generation: Using atomistic simulations (e.g., Molecular Dynamics) to generate microstructural states and their corresponding simulated XRD patterns.

- Model Training: Training supervised ML models (e.g., neural networks) to map the XRD profile to the target microstructural descriptors.

- Transferability Assessment: Evaluating the model's ability to predict descriptors for new crystal orientations or polycrystalline systems not included in the training data [4].

Studies on copper have shown that while models trained on single-crystal data can transfer to polycrystalline systems, their accuracy is highly dependent on the diversity of the training data and the specific descriptor being targeted [4].

Empirical and ML-Generated Correlative Models

For specific material families, empirical and data-driven correlative models remain powerful tools. For instance, in perovskite materials, revised empirical equations based on ionic-radius data have been developed to predict cubic/pseudocubic lattice constants with high accuracy [33]. Furthermore, evolutionary algorithms can now generate optimized elemental numerical descriptions that enhance the performance of regression models. These generated descriptors, which are vectors of values assigned to each element, have been shown to significantly reduce error in predicting properties like the hardness of high-entropy alloys, improving R² values from 0.79 to 0.88 compared to models using traditional elemental features [34].

Table 1: Comparison of Regression Approaches for XRD Data

| Approach | Key Methodology | Primary Outputs | Advantages | Limitations |

|---|---|---|---|---|