Autonomous Laboratory Robotics: A Scientist's Guide to Self-Driving Labs and Accelerated Discovery

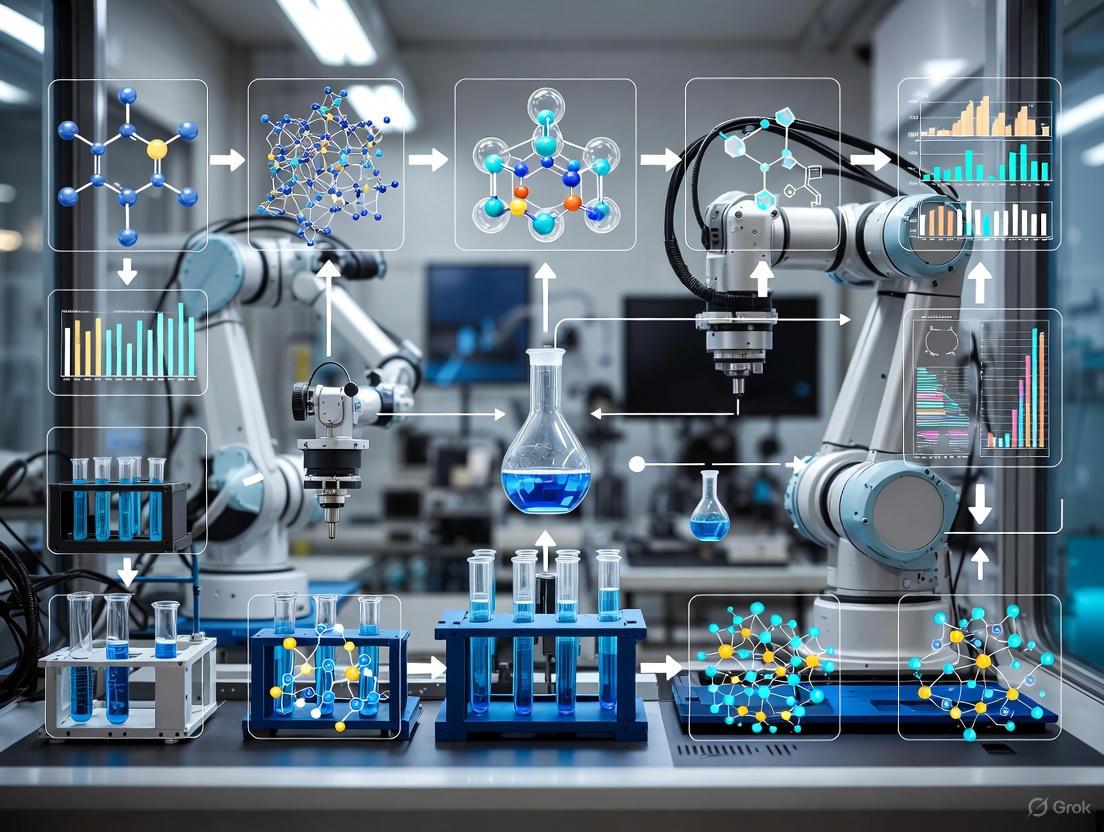

Abstract

This overview explores the transformative field of autonomous laboratory robotics, a paradigm shift integrating AI, robotics, and data science to accelerate scientific discovery. Tailored for researchers and drug development professionals, it covers the foundational principles of self-driving labs, from their core objectives of enhancing reproducibility and throughput to the practical implementation of the Design-Make-Test-Analyze (DMTA) cycle. The article delves into real-world applications in materials science and drug discovery, provides a strategic framework for troubleshooting common automation challenges, and offers a comparative analysis of performance validation. By synthesizing the latest research and case studies, this guide serves as a critical resource for scientists looking to navigate, implement, and optimize autonomous systems in their research workflows.

The Foundations of Self-Driving Labs: From Automation to Autonomous Discovery

The contemporary scientific landscape is undergoing a profound transformation, moving from static, human-directed laboratory processes toward dynamic, intelligent, and self-directed systems. Autonomous laboratories represent the pinnacle of this evolution, integrating advanced robotics, artificial intelligence, and data science to create research environments where systems can not only execute predefined tasks but also independently propose hypotheses, design experiments, and iteratively refine scientific understanding. This shift is fundamentally redefining the roles of researchers and technicians, transitioning them from manual executors to strategic overseers of complex scientific workflows. The core distinction lies in the capability for independent decision-making: whereas simple automation follows rigid, programmed instructions, autonomous laboratories use AI to adapt, learn, and optimize in response to experimental data in real time [1].

This evolution is critical for addressing the increasing complexity of modern scientific challenges, particularly in fields like genomics and drug discovery, where the volume and multidimensionality of data exceed human analytical capacity. The integration of AI enables a more holistic approach to research, facilitating the identification of subtle, cross-disciplinary patterns that might otherwise remain obscured within isolated datasets. This technical guide examines the architectural components, operational workflows, and practical implementations of autonomous laboratories, providing a foundational overview for scientists and drug development professionals engaged in the digital transformation of research [1].

The Architectural Framework of an Autonomous Laboratory

An autonomous laboratory is built upon a tightly integrated stack of hardware and software components that work in concert to enable closed-loop, goal-directed research. The architecture can be deconstructed into three foundational layers: the physical automation infrastructure, the data management and visualization platform, and the AI-driven intelligence core.

Physical Automation and Robotic Infrastructure

The physical layer consists of the robotic systems and laboratory hardware that carry out the physical tasks a technician would otherwise perform by hand. This includes collaborative robotic arms, automated base platforms for mobility, and specialized laboratory tables designed for stability and modularity. For instance, modern laboratory tables offer configurable work surface tiers, integrated utility connections, and substantial weight capacity (e.g., up to 226 kg per shelf) to support a diverse array of instruments and consumables within a hands-free automated infrastructure [2]. This hardware is centrally managed by a scheduling and control system, such as Cellario software, which keeps all networked devices configured and synchronized so that complex workflows run without manual intervention [2]. The principle of modularity is paramount, allowing the laboratory to be reconfigured and expanded to meet evolving scientific needs without requiring a complete infrastructure overhaul.

Data Management and Visualization Platforms

The middle layer handles the immense streams of multimodal data generated by laboratory instruments. Effective data management is the central nervous system of an autonomous lab. Platforms like Foxglove provide a unified environment for visualizing, debugging, and managing this data, offering over 20 customizable panels for interactive 2D/3D visualizations of live and recorded data [3]. These systems support a range of data formats (e.g., ROS 1, ROS 2, MCAP, Protobuf) and enable efficient data storage, indexing, and retrieval via cloud or on-premises solutions. This capability allows researchers to triage issues, debug robotic behavior, and optimize prototypes by providing a comprehensive, real-time view of all experimental operations, thus closing the loop between data acquisition and analysis [3].

AI and Machine Intelligence Core

At the highest layer resides the AI core, which provides the cognitive functions for the laboratory. This encompasses machine learning models for tasks such as predicting therapeutic targets, simulating molecular interactions, and classifying genetic variants. This layer is evolving from performing static data analysis to enabling Generative Lab Intelligence (GLI), where AI systems actively participate in the scientific method by proposing novel hypotheses and designing experimental pathways [1]. Techniques like reinforcement learning are crucial here, allowing systems to learn optimal policies for achieving research goals through continuous interaction with the experimental environment. This represents the ultimate leap from automation to autonomy.

Table 1: Core Architectural Layers of an Autonomous Laboratory

| Layer | Key Components | Primary Function | Example Technologies |
|---|---|---|---|
| Physical Infrastructure | Robotic arms, mobile base platforms, modular laboratory tables, integrated devices | Execute physical tasks: liquid handling, sample movement, instrument operation | Nucleus automation infrastructure [2], Kinova robotic arms [4] |
| Data & Visualization | Data streaming platforms, visualization software, data lakes, analysis tools | Ingest, manage, visualize, and analyze multimodal data for debugging and insight | Foxglove [3], CellarioOS [2] |
| AI & Intelligence | Machine learning models, generative AI, reinforcement learning, simulation environments | Generate hypotheses, design experiments, interpret results, optimize workflows | Generative Lab Intelligence (GLI) [1], AlphaFold, multi-omics AI platforms [1] |

Operational Workflow: The Lifecycle of an Autonomous Experiment

The operational logic of an autonomous laboratory can be conceptualized as a recursive, closed-loop cycle. This workflow enables the system to function as an active research collaborator rather than a passive tool.

Hypothesis Generation and Experimental Planning

The cycle begins with a human researcher providing a high-level goal. The AI system, often leveraging generative models, then proposes one or more testable hypotheses and designs a detailed, executable experimental plan. For example, in drug discovery, an AI could propose a novel therapeutic target and design a series of compound screening assays to validate its hypothesis [1]. The planning algorithm must account for resource constraints, instrument availability, and the potential for parallelization to maximize throughput.

Robotic Execution and Data Acquisition

The structured experimental plan is dispatched to a scheduling engine, such as CellarioScheduler, which dynamically coordinates the fleet of laboratory robots and instruments [2]. This stage is the physical execution of the experiment, whether PCR setup, cell staining, or high-throughput sequencing. Algorithms similar to those developed for manufacturing organize multiple robots in time and space so that they can work alone or in teams without collisions, which shortens the overall workflow [5]. The robotic systems then carry out each physical task according to the established protocol.
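The coordination problem described above can be illustrated with a minimal sketch of greedy list scheduling. This is not how CellarioScheduler or any other commercial product works internally; the `Task` type, the instrument names, and the durations are all hypothetical. The sketch only shows the two constraints a scheduler must respect: a task cannot start before its prerequisites finish, and an instrument runs one task at a time.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Task:
    """One step of a workflow (hypothetical structure for illustration)."""
    name: str
    instrument: str
    duration_min: int
    depends_on: Tuple[str, ...] = ()


def schedule(tasks: List[Task]) -> Dict[str, Tuple[int, int]]:
    """Assign (start, end) times in minutes using greedy list scheduling."""
    instrument_free_at: Dict[str, int] = {}
    times: Dict[str, Tuple[int, int]] = {}
    pending = list(tasks)
    while pending:
        progressed = False
        for task in list(pending):  # iterate over a copy; we remove as we go
            if not all(dep in times for dep in task.depends_on):
                continue  # prerequisites not scheduled yet
            ready = max((times[dep][1] for dep in task.depends_on), default=0)
            start = max(ready, instrument_free_at.get(task.instrument, 0))
            end = start + task.duration_min
            times[task.name] = (start, end)
            instrument_free_at[task.instrument] = end
            pending.remove(task)
            progressed = True
        if not progressed:
            raise ValueError("cyclic or missing dependency")
    return times


# Two plates share one thermocycler and one liquid handler (assumed setup).
plan = [
    Task("fragment_A", "thermocycler", 30),
    Task("fragment_B", "thermocycler", 30),
    Task("purify_A", "liquid_handler", 15, ("fragment_A",)),
    Task("purify_B", "liquid_handler", 15, ("fragment_B",)),
]
timeline = schedule(plan)
makespan = max(end for _, end in timeline.values())
```

In this toy plan, plate A is purified while plate B is still being fragmented, which is the kind of overlap a real scheduler exploits. A production system would also handle robot travel time, deck capacity, and error recovery, none of which are modeled here.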

Data Analysis, Learning, and Iteration

Upon completion of the physical experiment, instruments generate raw data, which is immediately ingested by the data management platform. The AI analysis layer then processes this data, interpreting the results to validate or refute the initial hypothesis. Crucially, the system learns from this outcome, updating its internal models and using this new knowledge to inform the next cycle. The AI decides whether the goal has been met or if the experiment needs to be refined and repeated, thus closing the loop and beginning a new iteration. This creates a continuous, adaptive learning process that progressively converges on a solution.
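A minimal sketch of this closed loop, under stated assumptions, may help make the control flow concrete. The function names (`propose`, `execute`, `goal_met`), the doubling policy, and the simulated signal model are all hypothetical placeholders; a real system would replace `execute` with robotic execution and `propose` with a learned model.

```python
import math
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

History = List[Tuple[float, float]]  # (setting, measured outcome) pairs


@dataclass
class LoopResult:
    history: History = field(default_factory=list)
    converged: bool = False


def run_closed_loop(
    propose: Callable[[History], float],
    execute: Callable[[float], float],
    goal_met: Callable[[float], bool],
    max_cycles: int = 20,
) -> LoopResult:
    """Plan, execute, analyze, and repeat until the goal or the budget is reached."""
    result = LoopResult()
    for _ in range(max_cycles):
        setting = propose(result.history)          # plan the next experiment
        outcome = execute(setting)                 # run it (simulated here)
        result.history.append((setting, outcome))  # record what was learned
        if goal_met(outcome):                      # decide: stop or iterate
            result.converged = True
            break
    return result


def propose_incubation(history: History) -> float:
    """Placeholder policy: start at 5 minutes, then double the last setting."""
    return 5.0 if not history else history[-1][0] * 2.0


def simulated_signal(minutes: float) -> float:
    """Stand-in for a real assay: the signal saturates with incubation time."""
    return 1.0 - math.exp(-minutes / 10.0)


result = run_closed_loop(propose_incubation, simulated_signal, lambda s: s >= 0.95)
```

The loop itself is independent of the decision policy, which is why the same skeleton can host Bayesian optimization, heuristic search, or reinforcement learning in place of the simple doubling rule used here.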

Practical Implementation and Experimental Protocols

Case Study: Automated High-Throughput Genome Sequencing

A representative example of an autonomous laboratory in action is an end-to-end automated system for Whole Genome Sequencing (WGS) library preparation. The goal is to convert a large number of DNA samples into sequenced libraries with minimal human intervention.

- High-Level Goal: "Prepare and sequence 384 WGS libraries from raw DNA samples with maximum efficiency and consistency."

- AI-Generated Plan: The AI designs a workflow that parallelizes sub-processes (e.g., fragmentation, amplification, purification) across multiple work cells, optimizing the schedule to minimize idle time for robots and instruments.

- Robotic Execution: A modular system, potentially using Nucleus automated infrastructure, performs all liquid handling and plate transfers. A central collaborative robot arm shuttles samples between stations for DNA extraction, QC, PCR setup, and final library normalization [2].

- Data and Iteration: After sequencing, the AI analyzes the quality metrics (e.g., read depth, coverage uniformity). If any samples fail QC, the AI can trace the failure to a specific process and adjust the protocol for future runs, such as modifying incubation times or reagent volumes.

Table 2: Key Research Reagent Solutions in an Automated Genomics Lab

| Reagent / Material | Function in Automated Workflow | Consideration for Autonomy |
|---|---|---|
| DNA Extraction Beads | Magnetic bead-based purification of nucleic acids | Must be compatible with robotic liquid handlers and stable at room temperature for deck storage. |
| Fragmentation Enzymes | Enzymatically shears DNA to desired size for sequencing | Requires precise, robotic-controlled incubation times and temperatures. |
| PCR Master Mix | Amplifies adapter-ligated DNA fragments | Pre-aliquoted into microplates for stability and to reduce robotic pipetting steps. |
| Indexing Adapters | Adds unique barcodes to each sample for multiplexing | Critical for sample tracking; barcode information must be digitally linked to the robot's scheduling software. |
| QC Assay Kits | (e.g., Fluorometric) Assesses library quality and quantity | Results must be digitally parsed by the AI to automatically pass/fail samples before proceeding. |
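The automatic pass/fail step mentioned for QC assays can be sketched as follows. The acceptance limits, field names, and sample barcodes are illustrative assumptions and do not come from any specific kit or from the cited sources; a real pipeline would load limits from the assay's validated specification.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class QcResult:
    """Parsed QC readout for one library (hypothetical fields)."""
    sample_barcode: str
    concentration_ng_per_ul: float
    mean_fragment_bp: int


# Assumed acceptance limits, chosen only for illustration.
MIN_CONCENTRATION_NG_PER_UL = 2.0
FRAGMENT_RANGE_BP = (300, 600)


def qc_failure_reasons(result: QcResult) -> List[str]:
    """Return the reasons a sample fails QC; an empty list means it passes."""
    reasons = []
    if result.concentration_ng_per_ul < MIN_CONCENTRATION_NG_PER_UL:
        reasons.append("low concentration")
    low, high = FRAGMENT_RANGE_BP
    if not low <= result.mean_fragment_bp <= high:
        reasons.append("fragment size out of range")
    return reasons


def triage(results: List[QcResult]) -> Tuple[List[str], Dict[str, List[str]]]:
    """Split samples into passed barcodes and failed barcodes with reasons."""
    passed: List[str] = []
    failed: Dict[str, List[str]] = {}
    for result in results:
        reasons = qc_failure_reasons(result)
        if reasons:
            failed[result.sample_barcode] = reasons
        else:
            passed.append(result.sample_barcode)
    return passed, failed


readouts = [
    QcResult("S001", 5.4, 450),
    QcResult("S002", 1.1, 430),
    QcResult("S003", 6.0, 900),
]
passed, failed = triage(readouts)
```

Recording the failure reason per barcode, rather than a bare pass/fail flag, is what lets a later analysis step trace failures back to a specific process such as fragmentation or amplification.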

Enabling Technologies and Algorithmic Foundations

The functionality of autonomous labs relies on specialized algorithms. For complex tasks requiring multiple robots, assembly algorithms compute how to break down a product into subassemblies that can be built in parallel and then combined, directing robots to work collaboratively and mapping efficient paths to avoid interference [5]. Furthermore, robust simulation environments are essential for testing and training AI models without consuming physical resources. These simulators allow researchers to prototype new algorithms, optimize workflows, and even create educational tools, providing a safe sandbox for innovation before deployment on physical hardware [5].

Future Trajectory and Challenges

The future development of autonomous laboratories will be guided by several key trends and challenges. A major focus is robust multi-omics integration, in which AI systems correlate data from genomics, transcriptomics, proteomics, and epigenomics to build comprehensive models of biological systems [1]. In addition, In-Space Servicing, Assembly, and Manufacturing (ISAM), a concept pioneered in space robotics in which systems must operate with complete autonomy in unpredictable environments, underscores the need for terrestrial labs to become more resilient and self-sufficient [6].

However, significant hurdles remain. Regulatory frameworks from bodies like the FDA and EMA are still adapting to approve AI-driven diagnostics and drug discovery tools, particularly those that learn and evolve over time [1]. Data quality and standardization are another critical challenge; AI models are only as good as their training data, necessitating strict data governance and initiatives like the Global Alliance for Genomics and Health (GA4GH) to develop universal data-sharing standards [1]. Finally, the human factor is paramount. Successful implementation requires training laboratory professionals in new skills like bioinformatics and data science, fostering a culture of continuous learning and interdisciplinary collaboration between computer scientists, biologists, and clinicians [1].

The integration of artificial intelligence (AI) and robotics is fundamentally transforming the scientific research landscape, giving rise to autonomous laboratories [7] [8]. These self-driving labs (SDLs) represent a paradigm shift from traditional manual experimentation to a highly automated, data-driven approach [9]. This transformation is guided by three core objectives: radically accelerating the pace of discovery, enhancing experimental reproducibility, and democratizing access to advanced research capabilities [7] [9] [8]. By leveraging robotic systems that operate with minimal human intervention, autonomous laboratories can conduct high-throughput, data-driven experimentation, freeing researchers from repetitive tasks and enabling more sophisticated scientific inquiry [8]. This technical guide explores the implementation frameworks, experimental protocols, and resource requirements for realizing these core objectives within chemical, materials, and life sciences research.

Core Objectives and Technical Implementation

Accelerating the Pace of Discovery

The acceleration of research cycles is achieved through closed-loop operation, where AI-driven systems autonomously design, execute, and analyze experiments. In favorable cases, this approach can compress discovery timelines from years to days.

- AI-Driven Closed-Loop Optimization: A prime example is an autonomous platform for nanomaterial synthesis, which integrates a Generative Pre-trained Transformer (GPT) model for method retrieval and an A* algorithm for closed-loop optimization. This system optimized multi-target Au nanorods across 735 experiments and Au nanospheres/Ag nanocubes in just 50 experiments, demonstrating a significant reduction in optimization cycles compared to manual approaches [10].

- High-Throughput Experimentation: Robotic platforms enable parallel experimentation at unprecedented scales. For instance, the BEAR (Bayesian experimental autonomous researcher) DEN system conducts thousands of experiments to identify materials with optimal properties, such as the most efficient material for absorbing energy, a process reminiscent of Edisonian methods but exponentially faster [9].

- Efficient Search Algorithms: The choice of optimization algorithm critically impacts search efficiency. In nanoparticle synthesis, the A* algorithm required significantly fewer iterations to reach optimization targets than the optimization frameworks Optuna and Olympus [10].

Table 1: Performance Metrics of an Autonomous Nanomaterial Synthesis Platform [10]

| Nanomaterial Target | Number of Experiments | Key Result | Comparative Algorithm Efficiency |
|---|---|---|---|
| Au Nanorods (LSPR 600-900 nm) | 735 | Comprehensive parameter optimization | A* algorithm outperformed Optuna and Olympus |
| Au Nanospheres / Ag Nanocubes | 50 | Successful parameter optimization | A* algorithm required significantly fewer iterations |

| Reproducibility Metric (Au NRs) | Deviation (Identical Parameters) |
|---|---|
| Characteristic LSPR Peak | ≤ 1.1 nm |
| FWHM | ≤ 2.9 nm |

Enhancing Experimental Reproducibility

Reproducibility is a fundamental challenge in scientific research, which autonomous laboratories address through standardization, automation, and precise data tracking.

- Standardization of Processes: Robotic systems execute protocols with unwavering consistency, eliminating variability introduced by human researchers. As emphasized by researchers, the primary metric for success is reproducibility: "It’s that if we were able to get this result, you could get the same result using a similarly configured lab" [9]. This is analogous to standardized processes in franchise models like Taco Bell, where operations continue seamlessly despite changes in personnel [9].

- Precision and Data Integrity: Automated systems deliver exceptional precision in repetitive tasks. In the synthesis of Au nanorods, reproducibility tests with identical parameters showed deviations of at most 1.1 nm in the characteristic longitudinal surface plasmon resonance (LSPR) peak and at most 2.9 nm in the full width at half maximum (FWHM) [10]. This level of precision is difficult to achieve consistently through manual manipulation.

- Integrated Data Logging: Autonomous platforms inherently create a complete digital record of all experimental parameters, environmental conditions, and outcomes for every experiment conducted. This comprehensive data trail ensures full traceability and facilitates the debugging of failed experiments or the replication of successful ones [10] [8].
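A reproducibility check of the kind reported for the Au nanorod runs can be sketched in a few lines. Two things here are assumptions: the source does not state how "deviation" was computed, so the sketch uses the maximum absolute deviation from the replicate mean, and the replicate values are invented for illustration. Only the 1.1 nm and 2.9 nm limits come from the text above.

```python
from statistics import fmean
from typing import Sequence


def max_deviation(values: Sequence[float]) -> float:
    """Largest absolute deviation of any replicate from the replicate mean."""
    center = fmean(values)
    return max(abs(v - center) for v in values)


def is_reproducible(values: Sequence[float], tolerance_nm: float) -> bool:
    """True if every replicate lies within the tolerance of the mean."""
    return max_deviation(values) <= tolerance_nm


# Illustrative replicate measurements (not data from the cited study).
lspr_peaks_nm = [800.2, 799.6, 800.9, 800.1]
fwhm_nm = [50.0, 52.0, 51.0, 49.0]

lspr_ok = is_reproducible(lspr_peaks_nm, tolerance_nm=1.1)
fwhm_ok = is_reproducible(fwhm_nm, tolerance_nm=2.9)
```

Because an autonomous platform logs every run, a check like this can be applied automatically to each batch of replicates instead of being computed by hand after the fact.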

Democratizing Research Access

Democratizing automation involves making advanced research tools accessible to a broader community of scientists, beyond only well-funded institutions and corporations.

- Open-Source Hardware and Modular Systems: The development of open-source hardware, such as the FLUID robot for material synthesis, and modular, commercially accessible automation platforms lowers the financial and technical barriers to entry [7] [10] [8]. These systems are designed to be reconfigurable and adaptable to different research needs and budgets.

- Lowering Financial Barriers: Traditional high-end automation requires investments in the millions of dollars [8]. Democratization efforts focus on creating lower-cost alternatives through open-source designs, digital fabrication (e.g., 3D printing), and modular systems that smaller research groups can acquire and operate [7] [9] [8].

- Collaborative Research Networks: Large-scale initiatives, such as a $2 million NSF-funded project to build a collaborative ecosystem for discovering semiconductor nanomaterials, are establishing blueprints for SDL networks. These networks connect researchers across institutions, allowing for shared access to autonomous platforms and distributed expertise [11].

Detailed Experimental Protocol: Autonomous Nanomaterial Synthesis

The following protocol details the operation of an autonomous platform for nanomaterial synthesis and optimization, which embodies the three core objectives [10].

The platform integrates three main modules: a literature mining module (GPT and Ada embedding models), an automated experimental module (commercial PAL DHR system), and an A* algorithm optimization module. The workflow is a closed loop.

Step-by-Step Methodology

Literature Mining and Initial Method Generation:

- Input: A research goal (e.g., "synthesize Au nanorods with LSPR ~800 nm").

- Process: The literature mining module, powered by GPT and Ada embedding models, processes a database of scientific papers (e.g., crawled from Web of Science) to retrieve and summarize relevant synthesis methods and parameters [10].

- Output: A proposed experimental procedure.

Script Editing and Experimental Setup:

- Process: The researcher reviews the generated procedure and either edits an existing automation script (mth or pzm files) or creates a new one to define the robotic operations (liquid handling, mixing, heating, etc.) [10].

- Hardware Preparation: The PAL DHR robotic platform is prepared, which includes Z-axis robotic arms, agitators, a centrifuge module, a UV-vis spectrometer, and solution modules [10].

Automated Execution and Characterization:

- Synthesis: The robotic system executes the script, performing all liquid handling, mixing, reaction, and purification steps autonomously.

- Characterization: The system transfers the synthesized nanoparticle sample to an integrated UV-vis spectrometer for immediate optical characterization [10].

- Data Output: The system generates a file containing the detailed synthesis parameters and the corresponding UV-vis spectral data.

AI-Driven Analysis and Parameter Update:

- Process: The output file is automatically uploaded to a specified location where the A* optimization module reads the data.

- Algorithm Execution: The A* algorithm, a heuristic search method effective in discrete parameter spaces, evaluates the result against the target. It then calculates and proposes a new set of synthesis parameters to better meet the goal [10].

- Loop: These updated parameters are fed back into the automated experimental module, and the cycle repeats.

Termination:

- The closed-loop cycle continues until the synthesized material meets the predefined target criteria (e.g., LSPR peak position, narrow FWHM). The researcher is only required for initial setup and script editing, with all subsequent iterations running autonomously [10].
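The parameter-update step can be illustrated with a generic A* search over a discrete parameter grid. This is a textbook A* under stated assumptions, not the implementation from [10]: the source does not describe its cost function or heuristic, so the unit cost per parameter change, the heuristic, the two-parameter grid, and the linear `simulated_lspr` response are all hypothetical. In a real platform, each evaluation of the response would be a synthesis run followed by a UV-vis measurement.

```python
import heapq
from typing import Dict, List, Optional, Sequence, Tuple

State = Tuple[int, ...]  # indices into discrete parameter levels


def simulated_lspr(state: State) -> float:
    """Toy stand-in for synthesis plus UV-vis readout (assumed linear response)."""
    a, b = state
    return 600.0 + 20.0 * a + 5.0 * b


def a_star(
    start: State,
    target_nm: float,
    tol_nm: float,
    bounds: Sequence[Tuple[int, int]],
    max_shift_per_move_nm: float = 20.0,
) -> Optional[List[State]]:
    """Find the fewest single-level parameter changes that reach the target."""

    def heuristic(state: State) -> float:
        # Lower bound on remaining moves: one move shifts the peak by at most
        # max_shift_per_move_nm, so this never overestimates (admissible).
        gap = abs(simulated_lspr(state) - target_nm) - tol_nm
        return max(0.0, gap) / max_shift_per_move_nm

    frontier = [(heuristic(start), 0, start)]
    best_cost: Dict[State, int] = {start: 0}
    parent: Dict[State, Optional[State]] = {start: None}
    while frontier:
        _, cost, state = heapq.heappop(frontier)
        if cost > best_cost[state]:
            continue  # stale queue entry
        if abs(simulated_lspr(state) - target_nm) <= tol_nm:
            path: List[State] = []
            node: Optional[State] = state
            while node is not None:
                path.append(node)
                node = parent[node]
            return path[::-1]
        for i in range(len(state)):
            for delta in (-1, 1):
                level = state[i] + delta
                if not bounds[i][0] <= level <= bounds[i][1]:
                    continue
                neighbor = state[:i] + (level,) + state[i + 1:]
                new_cost = cost + 1
                if new_cost < best_cost.get(neighbor, float("inf")):
                    best_cost[neighbor] = new_cost
                    parent[neighbor] = state
                    heapq.heappush(
                        frontier, (new_cost + heuristic(neighbor), new_cost, neighbor)
                    )
    return None


path = a_star(start=(0, 0), target_nm=800.0, tol_nm=2.0, bounds=[(0, 15), (0, 10)])
```

With this toy response, the search reaches the target by raising the first parameter ten times and leaving the second untouched. The heuristic is what keeps the search from exploring the second parameter needlessly, which is the general reason a good heuristic reduces the number of experiments.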

Research Reagent Solutions

Table 2: Key Reagents and Hardware for Autonomous Nanomaterial Synthesis [10]

| Item | Function / Description | Role in Autonomous Workflow |
|---|---|---|
| Metal Salt Precursors | (e.g., HAuCl₄ for Au NPs). Source of metal ions for nanoparticle formation. | Stored in the solution module; robotically dispensed with high precision. |
| Reducing Agents | (e.g., NaBH₄, Ascorbic Acid). Initiates reduction of metal ions to form nanoparticles. | Stored in the solution module; added at specific times and volumes by the robotic arm. |
| Shape-Directing Agents | (e.g., CTAB for Au nanorods). Directs crystal growth to achieve specific morphologies. | Critical parameter optimized by the A* algorithm. |
| PAL DHR Robotic System | Commercial automated synthesis platform with robotic arms, agitators, and a centrifuge. | Core hardware for executing all physical experimental steps. |
| Integrated UV-vis Spectrometer | In-line optical characterization instrument. | Provides immediate feedback on synthesis success (LSPR peak); data for the AI loop. |

Implementation Framework for Autonomous Laboratories

Architectural Components

Building a functional autonomous laboratory requires the tight integration of several technological components.

Hardware Layer (Robotics and Instruments): This includes robotic arms for liquid handling and material transport, automated instruments for synthesis and analysis (e.g., reactors, UV-vis spectrometers, plate readers), and modular systems that can be reconfigured for different tasks [10] [8]. Examples include the Chemputer for synthetic chemistry and mobile robots like Kuka for instrument operation [8].

AI and Intelligence Layer (Decision Making): This is the "brain" of the autonomous lab. It includes:

- Optimization Algorithms: Such as the A* algorithm [10], Bayesian optimization (e.g., BEAR) [9], and others for efficiently navigating parameter spaces.

- Large Language Models (LLMs): Such as GPT, used for mining scientific literature, generating initial methods, and interacting with researchers in natural language [10] [8].

Data and Integration Layer (Connectivity): A robust software infrastructure is required to manage the flow of information. This layer connects the AI brain to the robotic body, ensuring that experimental data is seamlessly passed to the AI for analysis and that the AI's decisions are translated into actionable commands for the robots [10] [8].

The Evolving Role of the Scientist

In the autonomous laboratory, the role of the human researcher evolves from manual executor to strategic director. Scientists focus on higher-level tasks such as formulating research problems, designing the overall experimental strategy, interpreting complex results that may require deep domain knowledge, and forming novel hypotheses [7] [12] [8]. This model is best described as collaborative intelligence, where humans and machines co-create knowledge, each leveraging their distinct strengths [7]. This shift also necessitates new training and education paradigms, emphasizing multidisciplinary skills in data science, robotics, and AI, alongside deep scientific expertise [12].

Autonomous laboratory robotics is poised to redefine scientific research by concretely addressing the triple objectives of acceleration, reproducibility, and democratization. The technical frameworks and protocols outlined in this guide, from AI-driven closed-loop optimization to modular, open-source hardware, provide a roadmap for implementation. As these technologies mature and become more accessible, they promise to usher in an era of collaborative intelligence, amplifying human insight and enabling a more efficient, reproducible, and inclusive scientific enterprise.

The Design-Make-Test-Analyze (DMTA) cycle represents the fundamental iterative process driving modern scientific discovery, particularly in drug development and materials science. This closed-loop workflow has evolved from a human-directed process to a fully autonomous operation through the integration of artificial intelligence (AI), robotics, and data science. Self-driving laboratories (SDLs) embody this transformation, where AI serves as the lab's "brain" – planning experimental conditions, predicting outcomes, and deciding subsequent experiments – while robotic hardware acts as the "hands," physically executing reactions, measurements, and data collection [13]. This creates a continuous feedback loop where data from each experiment immediately informs the next investigative step, enabling 24/7 operation and dramatically accelerating the pace of discovery compared to traditional manual methods [13].

The significance of autonomous DMTA cycles extends beyond mere acceleration; it represents a paradigm shift in how scientific research is conducted. In pharmaceutical research, this approach promises to substantially lower costs while exponentially increasing throughput and data quality [13]. For researchers and drug development professionals, understanding the components, workflows, and implementation strategies of autonomous DMTA systems has become essential for maintaining competitive advantage in an increasingly digital and automated research landscape. This technical guide deconstructs the core components, AI methodologies, and implementation frameworks that enable fully autonomous experimentation within modern scientific research environments.

Core Components of the Autonomous DMTA Cycle

The Four-Phase Workflow

The autonomous DMTA cycle consists of four tightly integrated phases that form a continuous, closed-loop system:

Design: In this initial phase, AI systems generate novel molecular structures or experimental conditions based on predefined objectives and historical data. This involves generative models that propose candidates optimized for multiple properties simultaneously, such as potency, selectivity, and developability [13] [14]. The design phase has evolved from manual literature searches and chemical intuition to computer-assisted synthesis planning (CASP) and AI-powered platforms that generate innovative ideas for synthetic route design [15].

Make: The designed molecules are synthesized using automated laboratory equipment. This phase encompasses synthesis planning, sourcing materials, reaction setup, monitoring, purification, characterization, and documentation [15]. Automated synthesis platforms including robotic liquid handlers, automated reactors, and purification systems execute the physical construction of target compounds with minimal human intervention [13]. The transition from manual, labor-intensive synthesis to automated workflows has significantly reduced what was traditionally the most costly and lengthy part of the DMTA cycle [15].

Test: Newly synthesized compounds undergo biological or physicochemical testing through automated assay systems. This involves high-throughput screening platforms that evaluate designed molecules for target properties such as binding affinity, physiological activity, or other key performance indicators [13]. Modern testing platforms generate complex datasets that require advanced analytical methods, including computer vision algorithms for interpreting microscope images or spectrometer outputs [13].

Analyze: Experimental results are processed by machine learning algorithms to extract meaningful patterns and relationships. This phase employs statistical analysis and predictive modeling to inform the next design iteration [13] [16]. The analysis must handle complex, multi-dimensional datasets and translate them into actionable insights for the subsequent design phase. At organizations like AstraZeneca, cloud-native modeling platforms such as the Predictive Insight Platform (PIP) provide the computational infrastructure for these analytical workloads [16].

The following diagram illustrates the continuous, closed-loop workflow of an autonomous DMTA cycle and the key technologies enabling each phase:

Enabling Technologies and Infrastructure

The implementation of autonomous DMTA cycles requires sophisticated integration of hardware and software components. The laboratory automation infrastructure forms the physical foundation, comprising robotic liquid handlers, automated reactors and synthesizers, high-throughput screening instrumentation, and automated purification and characterization systems [13]. These components must be seamlessly connected through an orchestration layer that transforms discrete instruments into a coherent autonomous scientist [13].

The data architecture represents another critical component, with FAIR (Findable, Accessible, Interoperable, Reusable) data principles being essential for building robust predictive models and enabling interconnected workflows [15]. Centralized platforms like Torx provide comprehensive information delivery mechanisms that enhance visibility throughout projects, enabling all team members to contribute to design prioritization in real time [17]. For large organizations with legacy systems, web-based platforms can be fully integrated to provide a single, streamlined solution for information delivery while maintaining data integrity and preserving internal and external connections [17].

Cloud computing infrastructure has become fundamental to modern autonomous DMTA implementation, enabling the scalable computational resources required for AI-driven experimentation. Cloud-native modeling platforms, such as AstraZeneca's Predictive Insight Platform (PIP), provide the necessary architecture for molecular predictive modeling, supporting the entire DMTA cycle through specialized services and infrastructure [16]. This technical foundation allows organizations to manage the vast amounts of data generated by autonomous laboratories and apply sophisticated AI algorithms to accelerate discovery timelines.

AI and Machine Learning: The Cognitive Core

Decision Algorithms for Experimental Optimization

The autonomous functionality of self-driving laboratories is powered by sophisticated AI algorithms that make real-time decisions about experimental directions. The most prominent approach is Bayesian optimization (BO), which uses surrogate models (often Gaussian Processes or neural networks) trained on existing experimental data to predict target outcomes such as reaction yield, biological activity, or solubility [13]. The BO algorithm selects new experimental conditions to test through an acquisition function that balances exploration of uncertain regions with exploitation of known promising areas [13].
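To make the exploration/exploitation trade-off concrete, the sketch below computes expected improvement (EI), one commonly used acquisition function, from a surrogate model's predicted mean and standard deviation. The candidate names, predicted values, and the `xi` margin are illustrative assumptions, not values from the cited work, and the surrogate itself is not implemented here.

```python
import math


def normal_pdf(z: float) -> float:
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def normal_cdf(z: float) -> float:
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def expected_improvement(mean: float, std: float, best_so_far: float, xi: float = 0.01) -> float:
    """Expected improvement for a maximization problem.

    mean/std are the surrogate's prediction for one candidate condition;
    xi is a small margin that nudges the search toward exploration.
    """
    improvement = mean - best_so_far - xi
    if std <= 0.0:
        return max(improvement, 0.0)
    z = improvement / std
    return improvement * normal_cdf(z) + std * normal_pdf(z)


# Hypothetical surrogate predictions (mean yield, standard deviation).
candidates = {
    "cond_A": (0.70, 0.01),  # matches the best result, already well explored
    "cond_B": (0.65, 0.15),  # slightly lower mean, but highly uncertain
    "cond_C": (0.40, 0.02),  # confidently poor
}
best_observed = 0.70

scores = {name: expected_improvement(m, s, best_observed) for name, (m, s) in candidates.items()}
next_experiment = max(scores, key=scores.get)
print(next_experiment, scores)
```

In this toy case the uncertain candidate wins because its upside outweighs its lower predicted mean, which is the behavior the acquisition function is meant to encode.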

Recent advances have led to the development of specialized Bayesian experiment planners like Bayesian Back-End (BayBE), which can integrate custom experimental parameters, handle multiple objectives, and apply transfer learning to leverage past data [13]. For multi-objective optimization challenges – common in drug discovery where researchers must balance potency, efficacy, and developability – multi-objective Bayesian optimization (MOBO) approaches have demonstrated significant utility. For instance, LabGenius' EVA platform uses MOBO to autonomously design therapeutic antibodies optimized for multiple properties simultaneously; the platform can design, produce, and test up to 2,300 antibody variants in just six weeks [13].
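Multi-objective methods generally rank candidates by Pareto dominance rather than by a single score. The following minimal sketch extracts the non-dominated set from a handful of variants scored on two objectives. The variant names and scores are invented for illustration, and this is not a description of how EVA or BayBE work internally.

```python
from typing import Dict, List, Tuple


def dominates(a: Tuple[float, ...], b: Tuple[float, ...]) -> bool:
    """True if a is at least as good as b on every objective and better on one."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def pareto_front(variants: Dict[str, Tuple[float, ...]]) -> List[str]:
    """Return the names of all variants that no other variant dominates."""
    front = []
    for name, score in variants.items():
        others = (s for other, s in variants.items() if other != name)
        if not any(dominates(s, score) for s in others):
            front.append(name)
    return sorted(front)


# Hypothetical (potency, developability) scores, both to be maximized.
variants = {
    "Ab-1": (0.90, 0.40),
    "Ab-2": (0.70, 0.80),
    "Ab-3": (0.60, 0.70),  # worse than Ab-2 on both objectives
    "Ab-4": (0.95, 0.20),
    "Ab-5": (0.50, 0.85),
}

front = pareto_front(variants)
print(front)
```

Every variant on the front represents a different trade-off, so the final choice among them remains a scientific or project-level decision.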

Alternative AI strategies include evolutionary algorithms, reinforcement learning, and active learning frameworks, each with particular strengths depending on the experimental context [13] [14]. Evolutionary algorithms mimic natural selection to evolve promising candidates over generations, while reinforcement learning uses reward-based systems to guide exploration of chemical space. The Variational AI team has demonstrated how active learning using generative foundation models can find extremely potent compounds for novel targets with data on only 500 molecules, dramatically accelerating the hit-to-lead and lead optimization process [14].
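As an illustration of the evolutionary approach, the sketch below evolves a population of four-parameter candidates toward a hidden optimum using elitism, crossover, and Gaussian mutation. The fitness function, parameter ranges, and hyperparameters are placeholders chosen for the example; a real campaign would replace `fitness` with a measured or predicted property.

```python
import random
from typing import List, Tuple

Candidate = Tuple[float, ...]


def fitness(candidate: Candidate) -> float:
    # Toy objective: negative squared distance to a hypothetical optimum.
    target = (0.2, 0.8, 0.5, 0.1)
    return -sum((c - t) ** 2 for c, t in zip(candidate, target))


def evolve(pop_size: int = 20, generations: int = 15, n_elite: int = 4,
           mutation_scale: float = 0.1, seed: int = 0) -> Tuple[Candidate, List[float]]:
    rng = random.Random(seed)
    population = [tuple(rng.random() for _ in range(4)) for _ in range(pop_size)]
    best_per_generation = []
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        best_per_generation.append(fitness(population[0]))
        elites = population[:n_elite]  # selection: survivors carried over unchanged
        children = []
        while len(children) < pop_size - n_elite:
            mother, father = rng.sample(elites, 2)
            # Crossover picks each parameter from one parent, then mutates it.
            child = tuple(
                min(1.0, max(0.0, rng.choice(pair) + rng.gauss(0.0, mutation_scale)))
                for pair in zip(mother, father)
            )
            children.append(child)
        population = elites + children
    population.sort(key=fitness, reverse=True)
    best_per_generation.append(fitness(population[0]))
    return population[0], best_per_generation


best_candidate, history = evolve()
print(best_candidate, history[0], history[-1])
```

Because the elites are copied unchanged into each new generation, the best fitness can never decrease from one generation to the next.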

Machine Learning Model Architectures

The machine learning backbone of autonomous DMTA systems employs diverse model architectures tailored to specific tasks:

Predictive Models: Graph neural networks (GNNs) have shown remarkable performance for molecular property prediction by directly learning from molecular structures [15]. For instance, researchers at Roche have successfully established GNNs capable of predicting C–H functionalisation reactions, a valuable capability for synthetic planning [15]. Random forest regressors operating on extended connectivity fingerprints continue to achieve competitive performance for small molecule potency prediction, serving as robust baselines for QSAR modeling [14].

Generative Models: Deep generative models such as variational autoencoders (VAEs), generative adversarial networks (GANs), and transformer-based architectures can propose novel molecular structures with desired properties [13]. These models learn the underlying distribution of chemical space and can generate new candidates optimized for multiple objectives simultaneously. Variational AI's Enki represents a generative foundation model pretrained on millions of potency data points across hundreds of targets, enabling effective optimization for novel targets with limited initial data [14].

Planning Models: Monte Carlo Tree Search (MCTS) and A* Search algorithms enable multi-step synthesis planning by chaining individual reactions into complete routes [15]. These approaches address the combinatorial challenge of retrosynthetic analysis that often overwhelms human comprehension, systematically exploring possible synthetic pathways to identify feasible routes to target molecules.
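A toy version of search-based route planning is sketched below using A* over a small, invented set of retrosynthetic rules. The molecule names, rules, and purchasable set are hypothetical; production CASP tools work with learned reaction templates and far larger search spaces.

```python
import heapq
from itertools import count

# Hypothetical retro-reaction rules: product -> alternative precursor sets.
RETRO_RULES = {
    "target": [("amide_acid", "amine"), ("intermediate_X",)],
    "amide_acid": [("ester",)],
    "ester": [("acid_chloride",)],
    "intermediate_X": [("aryl_halide", "boronic_acid")],
}
PURCHASABLE = {"amine", "aryl_halide", "boronic_acid", "acid_chloride"}


def heuristic(open_molecules: frozenset) -> int:
    # Each unsolved molecule needs at least one more reaction (admissible).
    return len(open_molecules)


def plan_route(target: str):
    """Return the shortest list of (product, precursors) steps, or None."""
    start = frozenset([target]) - PURCHASABLE
    tie = count()  # tie-breaker so the heap never compares sets or lists
    frontier = [(heuristic(start), next(tie), 0, start, [])]
    seen = set()
    while frontier:
        _, _, steps, open_mols, route = heapq.heappop(frontier)
        if not open_mols:
            return route  # every remaining molecule is purchasable
        if open_mols in seen:
            continue
        seen.add(open_mols)
        molecule = min(open_mols)  # all open molecules must be solved eventually
        for precursors in RETRO_RULES.get(molecule, []):
            remaining = (open_mols - {molecule}) | (frozenset(precursors) - PURCHASABLE)
            priority = steps + 1 + heuristic(remaining)
            heapq.heappush(
                frontier,
                (priority, next(tie), steps + 1, remaining, route + [(molecule, precursors)]),
            )
    return None


route = plan_route("target")
print(route)
```

Here the two-step route through `intermediate_X` is returned in preference to the three-step route through the ester, because A* expands states in order of steps taken plus estimated steps remaining.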

The following diagram illustrates the AI decision engine that forms the cognitive core of a self-driving laboratory:

Implementation and Integration Frameworks

Successful implementation of AI-driven DMTA cycles requires sophisticated computational infrastructure. AstraZeneca's Predictive Insight Platform (PIP) exemplifies a cloud-native modeling platform specifically designed for molecular predictive modeling throughout the DMTA cycle [16]. Such platforms provide the necessary architecture, integration patterns, and services to support the entire drug discovery workflow, from initial design to final candidate selection.

A critical advancement in AI integration is the development of more natural user interfaces that lower barriers for scientific researchers. The advent of agentic Large Language Models (LLMs) is reducing the complexity of interacting with sophisticated models, potentially enabling chemists to work through synthesis steps via conversational interfaces ("ChatGPT for Chemists") [15]. These approaches could be directly incorporated into design processes, as demonstrated by Roche's workflow that highlights the impact of synthetic accessibility assessment in the design process [15].

Laboratory Automation: The Physical Infrastructure

Hardware Components for Autonomous Experimentation

The physical implementation of self-driving laboratories requires specialized automation equipment that can execute experiments with minimal human intervention:

Table: Core Hardware Components for Autonomous Experimentation

| Component Category | Specific Technologies | Function | Application Examples |
|---|---|---|---|
| Robotic Liquid Handlers | Automated pipetting systems, microplate handlers | Precise transfer of liquid volumes; high-density plate replication and reformatting | Dispensing reagents for high-throughput screening; setting up reaction mixtures [13] |
| Automated Reactors & Synthesizers | Flow chemistry systems, automated parallel reactors | Conduct chemical reactions under controlled conditions; enable continuous processing | Multi-step organic synthesis; reaction condition screening [13] |
| High-Throughput Screening Instrumentation | Plate readers, automated microscopes, HPLC systems | Rapid biological or physicochemical testing of compounds | Measuring binding affinity; evaluating cellular responses; analyzing compound purity [13] |
| Automated Purification Systems | Flash chromatography systems, prep-HPLC, CPC | Purify synthesized compounds without manual intervention | Isolation of target molecules from reaction mixtures [13] [15] |
| Characterization Equipment | NMR, MS, LC-MS systems with automated sampling | Determine structural identity and purity of compounds | Confirm structure of synthesized molecules; assess sample quality [13] [15] |

Integration and Control Systems

The individual hardware components must be seamlessly integrated through a central control system that serves as the orchestration layer for the entire autonomous laboratory [13]. This automation stack transforms a collection of robots and instruments into a coherent autonomous scientist by enabling AI algorithms to interface with physical equipment – issuing commands such as "dispense 10 µL of reagent A to well 5" or "heat reactor 3 to 100°C for 10 minutes" and reading back data like "absorbance at 450 nm" or "product yield %" [13].
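The command-and-readback pattern described above can be sketched as a small dispatcher that routes structured commands to device drivers and logs each result. The class names, device identifiers, and simulated drivers are assumptions made for illustration; real instruments expose vendor-specific interfaces that a driver would wrap.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Command:
    device: str               # logical instrument name
    action: str               # verb understood by that instrument's driver
    params: Dict[str, float]  # numeric arguments, with units encoded in the key


class Orchestrator:
    """Routes commands to registered drivers and keeps an execution log."""

    def __init__(self) -> None:
        self._drivers: Dict[str, Callable[[Command], Dict[str, object]]] = {}
        self.log: List[Dict[str, object]] = []

    def register(self, device: str, driver: Callable[[Command], Dict[str, object]]) -> None:
        self._drivers[device] = driver

    def execute(self, command: Command) -> Dict[str, object]:
        if command.device not in self._drivers:
            raise KeyError(f"no driver registered for {command.device!r}")
        result = self._drivers[command.device](command)
        self.log.append({"command": command, "result": result})
        return result


# Simulated drivers standing in for real hardware.
def simulated_liquid_handler(cmd: Command) -> Dict[str, object]:
    return {"status": "ok", "dispensed_ul": cmd.params["volume_ul"], "well": cmd.params["well"]}


def simulated_reactor(cmd: Command) -> Dict[str, object]:
    return {"status": "ok", "temperature_c": cmd.params["temperature_c"]}


def simulated_plate_reader(cmd: Command) -> Dict[str, object]:
    return {"status": "ok", "absorbance": 0.42}  # placeholder reading


lab = Orchestrator()
lab.register("liquid_handler", simulated_liquid_handler)
lab.register("reactor_3", simulated_reactor)
lab.register("plate_reader", simulated_plate_reader)

protocol = [
    Command("liquid_handler", "dispense", {"volume_ul": 10.0, "well": 5}),
    Command("reactor_3", "heat", {"temperature_c": 100.0, "minutes": 10.0}),
    Command("plate_reader", "read_absorbance", {"wavelength_nm": 450.0}),
]
readings = [lab.execute(command) for command in protocol]
print(readings)
```

Keeping the planner's view limited to `Command` objects means an instrument can be swapped by registering a different driver, without changing the decision logic.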

Leading research groups have demonstrated such integrated setups across various domains, proving the generalizability and power of the approach. Applications range from synthesizing nanoparticles and polymers to optimizing drug formulations and enzyme designs – essentially, any measurable and automatable process can be accelerated through self-driving laboratory principles [13]. The integration extends beyond physical execution to encompass data flow, with automated documentation systems capturing experimental parameters and outcomes in standardized formats to ensure FAIR data principles and enable model retraining [15].

Experimental Protocols and Case Studies

Quantitative Performance Benchmarks

Autonomous DMTA systems have demonstrated remarkable performance improvements across multiple domains. The following table summarizes quantitative results from documented case studies:

Table: Performance Metrics of Autonomous DMTA Implementation

| Application Domain | Traditional Approach | Autonomous DMTA Results | Key Metrics |
|---|---|---|---|
| Therapeutic Antibody Optimization | Manual design and testing | AI-driven platform designed, produced, and tested 2,300 variants in 6 weeks [13] | Time reduction: ~5-10x; Throughput: ~2,300 variants [13] |
| Small Molecule Lead Optimization | Multiple years, millions of dollars | Identified extremely potent compounds with data on only 500 molecules [14] | Cost reduction: Significant; Cycle acceleration: ~5 rounds to identify candidates [14] |
| Materials Discovery | Sequential manual experimentation | Identified optimal material candidates 10x faster; often found best solution on first try after training [13] | Speed improvement: 10x; Data utilization: 10x more data feeding AI [13] |
| Enzyme Engineering | Iterative manual optimization | Improved enzyme activity by 26x through autonomous optimization [13] | Performance gain: 26x activity improvement [13] |

Detailed Experimental Protocol: AI-Driven Molecule Optimization

The following protocol outlines a typical autonomous DMTA workflow for small molecule optimization, based on documented case studies [14]:

Initialization Phase

- Objective Definition: Clearly define optimization objectives, typically as weighted combinations of target properties (e.g., pIC50 - 3 × (1 - QED), which rewards potency and penalizes poor drug-likeness) [14].

- Baseline Data Collection: Assemble existing relevant data or generate initial dataset (e.g., 100 randomly selected molecules) for model priming [14].

- Model Configuration: Select and configure appropriate AI models – Bayesian optimization for single-objective tasks, multi-objective Bayesian optimization (MOBO) for competing objectives, or generative models for novel chemical space exploration [13] [14].
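The example objective from the initialization steps above can be written as a short scoring function. The molecule identifiers and property values below are made up for illustration, and the QED range check is an added assumption (QED is defined on the interval from 0 to 1).

```python
def objective(pic50: float, qed: float, qed_weight: float = 3.0) -> float:
    """Score = pIC50 - qed_weight * (1 - QED); higher is better."""
    if not 0.0 <= qed <= 1.0:
        raise ValueError("QED must lie between 0 and 1")
    return pic50 - qed_weight * (1.0 - qed)


# Hypothetical candidates: (predicted pIC50, QED).
candidates = {
    "mol_1": (8.5, 0.40),  # very potent but poorly drug-like
    "mol_2": (7.9, 0.85),  # balanced profile
    "mol_3": (6.0, 0.95),  # drug-like but weak
}

scores = {name: objective(pic50, qed) for name, (pic50, qed) in candidates.items()}
ranked = sorted(scores, key=scores.get, reverse=True)
print(ranked, scores)
```

With a weight of 3, a molecule gives up as much score by dropping QED from 1.0 to 0.0 as it would by losing three log units of potency, which is why the balanced candidate ranks first here.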

Active Learning Cycle

Design

- Fine-tune predictive models on available experimental data

- Generate candidate molecules maximizing expected improvement or other acquisition functions

- Apply synthesizability filters using retrosynthetic analysis tools

- Select final candidate set (typically 100 molecules per cycle) for synthesis [14]

Make

- Execute synthesis planning using Computer-Assisted Synthesis Planning (CASP) tools

- Source required building blocks from physical or virtual catalogs (e.g., Enamine MADE collection) [15]

- Perform automated synthesis using robotic liquid handlers and reactors

- Conduct purification via automated flash chromatography or prep-HPLC systems

- Verify compound identity and purity through automated LC-MS and NMR characterization [13] [15]

Test

- Configure high-throughput screening assays for target properties (e.g., binding affinity, cellular activity)

- Execute automated biological testing using plate readers and liquid handling systems

- Collect raw data and process into structured formats for analysis [13]

Analyze

- Process experimental results to calculate key performance metrics

- Update machine learning models with new data

- Evaluate cycle performance and adjust strategy if needed

- Prepare for next iteration by selecting most informative experiments [14]

Termination Criteria

- Achievement of target compound profile

- Diminishing returns from successive cycles

- Exhaustion of resource allocation

- Successful identification of clinical candidate [14]
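The cycle and its stopping rules can be summarized as a control loop. In the sketch below, the "Make" and "Test" phases are replaced by a simulated response function and the "Design" phase by random proposals around the current best, so it shows only the loop structure and termination logic, not a real design model. All thresholds are illustrative assumptions.

```python
import random


def run_dmta(target_score: float = 0.9999, max_cycles: int = 10, batch_size: int = 100,
             min_gain: float = 1e-4, patience: int = 2, seed: int = 0) -> dict:
    rng = random.Random(seed)

    def make_and_test(x: float) -> float:
        # Stand-in for synthesis plus assay: hidden response peaked at x = 0.7.
        return 1.0 - (x - 0.7) ** 2

    # Initialization: random baseline set used to prime the loop.
    data = [(x, make_and_test(x)) for x in (rng.random() for _ in range(batch_size))]
    best_x, best_score = max(data, key=lambda row: row[1])
    history = [best_score]
    stalled = 0
    cycles_run = 0
    reason = "resource budget exhausted"

    for cycle in range(1, max_cycles + 1):
        cycles_run = cycle
        # Design: placeholder for model-driven candidate generation.
        proposals = [min(1.0, max(0.0, best_x + rng.gauss(0.0, 0.05))) for _ in range(batch_size)]
        # Make + Test
        results = [(x, make_and_test(x)) for x in proposals]
        # Analyze: merge the new data and measure progress.
        data.extend(results)
        top_x, top_score = max(results, key=lambda row: row[1])
        gain = top_score - best_score
        if gain > 0:
            best_x, best_score = top_x, top_score
        history.append(best_score)

        if best_score >= target_score:
            reason = "target profile reached"
            break
        stalled = stalled + 1 if gain < min_gain else 0
        if stalled >= patience:
            reason = "diminishing returns"
            break

    return {"reason": reason, "cycles": cycles_run, "best_x": best_x,
            "best_score": best_score, "history": history, "n_measurements": len(data)}


result = run_dmta()
print(result["reason"], result["cycles"], round(result["best_score"], 6))
```

The fourth criterion in the list, identification of a clinical candidate, is a human judgment and is deliberately left outside the loop.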

Case Study: Kinase Inhibitor Optimization

A comprehensive benchmark study demonstrated the effectiveness of autonomous DMTA cycles for kinase inhibitor optimization [14]. The study compared Variational AI's Enki generative model against state-of-the-art baselines (REINVENT and Graph GA) across three kinase targets (FGFR1, AURKA, EGFR). The autonomous system was initialized with just 100 randomly selected molecules, then proceeded through five rounds of active learning, with 100 molecules designed and evaluated in each cycle.

The results showed that Enki-produced molecules significantly outperformed other methods, with large or very large effect sizes (Cohen's d > 0.8) in most comparisons [14]. Importantly, the best Enki-optimized molecules surpassed any compounds found in a ~2 million molecule high-throughput screening library, demonstrating the ability of autonomous DMTA to explore chemical spaces beyond conventional screening collections. Additionally, retrosynthetic analysis confirmed that 90% of the Enki-optimized molecules were predicted to be synthesizable in fewer than ten steps, addressing practical implementation concerns [14].
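For readers unfamiliar with the effect-size measure quoted above, Cohen's d is the difference between two group means divided by their pooled standard deviation. The sketch below computes it for two small sets of invented scores; the numbers are not taken from the benchmark study.

```python
import math
import statistics
from typing import Sequence


def cohens_d(group_a: Sequence[float], group_b: Sequence[float]) -> float:
    """Cohen's d using the pooled sample standard deviation."""
    n_a, n_b = len(group_a), len(group_b)
    var_a = statistics.variance(group_a)  # sample variance (n - 1 denominator)
    var_b = statistics.variance(group_b)
    pooled_sd = math.sqrt(((n_a - 1) * var_a + (n_b - 1) * var_b) / (n_a + n_b - 2))
    return (statistics.mean(group_a) - statistics.mean(group_b)) / pooled_sd


# Hypothetical objective scores for molecules from two design methods.
method_a = [8.1, 8.4, 7.9, 8.6, 8.0]
method_b = [7.2, 7.5, 7.0, 7.8, 7.4]

d = cohens_d(method_a, method_b)
print(round(d, 3))
```

By the conventional thresholds, values of d above 0.8 are described as large effects.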

Implementation Guide: The Scientist's Toolkit

Essential Research Reagents and Materials

Successful implementation of autonomous DMTA cycles requires careful selection of research reagents and materials that enable automated workflows:

Table: Essential Research Reagents and Materials for Autonomous DMTA

| Category | Specific Materials | Function in Autonomous Workflow |
|---|---|---|
| Chemical Building Blocks | Enamine MADE collection, eMolecules, Chemspace, Sigma-Aldrich | Provide diverse starting materials for automated synthesis; virtual catalogs expand accessible chemical space [15] |
| Pre-weighed Building Blocks | Custom library services from commercial vendors | Eliminate labor-intensive weighing, dissolution, and reformatting; enable cherry-picking for specific projects [15] |
| Specialized Reagents | Unnatural amino acids, fluorinated building blocks, diverse boronic acids and halides | Enable specific chemical transformations and access to underrepresented chemical space [15] |
| Catalyst Systems | Pre-formulated catalyst kits for high-throughput experimentation | Facilitate rapid reaction screening and optimization without manual preparation [15] |
| Assay Reagents | Cell lines, protein targets, fluorescence markers, buffer components | Enable high-throughput biological testing with minimal manual intervention [13] |

Implementation Considerations

Implementing autonomous DMTA workflows presents several technical and organizational challenges that must be addressed for success:

Data Management and Quality

- Establish FAIR data principles across all experimental workflows [15]

- Implement automated data capture systems to ensure comprehensive experimental documentation

- Develop standardized data models to enable interoperability between different instruments and software platforms

- Curate high-quality datasets for model training, including both positive and negative results [15]
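One way to approach the data-capture points above is a standardized, machine-readable record for every experiment, including failed ones. The schema below is a hypothetical example rather than an established standard; the field names, the unit check, and the sample values are assumptions for illustration.

```python
import json
from dataclasses import asdict, dataclass
from typing import Dict, List


@dataclass
class ExperimentRecord:
    experiment_id: str
    instrument: str
    parameters: Dict[str, float]
    units: Dict[str, str]        # one unit string per parameter key
    outcome: Dict[str, float]
    succeeded: bool              # negative results are recorded, not discarded
    timestamp_utc: str
    schema_version: str = "0.1"


def validate(record: ExperimentRecord) -> List[str]:
    """Return a list of problems; an empty list means the record is usable."""
    problems = []
    for key in record.parameters:
        if key not in record.units:
            problems.append(f"missing unit for parameter {key!r}")
    if not record.experiment_id:
        problems.append("experiment_id is empty")
    return problems


def to_json(record: ExperimentRecord) -> str:
    return json.dumps(asdict(record), sort_keys=True)


failed_run = ExperimentRecord(
    experiment_id="EXP-0001",
    instrument="reactor_3",
    parameters={"temperature": 100.0, "time": 10.0},
    units={"temperature": "degC", "time": "min"},
    outcome={"yield_percent": 0.0},
    succeeded=False,
    timestamp_utc="2024-01-01T12:00:00Z",
)
incomplete = ExperimentRecord(
    experiment_id="EXP-0002",
    instrument="reactor_3",
    parameters={"temperature": 80.0, "time": 30.0},
    units={"temperature": "degC"},
    outcome={"yield_percent": 41.5},
    succeeded=True,
    timestamp_utc="2024-01-01T13:00:00Z",
)

print(to_json(failed_run))
print(validate(incomplete))
```

Validating at capture time, before a record reaches the training set, keeps unit ambiguities from silently degrading downstream models.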

Integration Challenges

- Address interoperability between legacy systems and new automation platforms

- Develop robust API frameworks for connecting AI planning systems with laboratory execution systems

- Implement middleware solutions that can translate high-level experimental designs into equipment-specific commands [13]

- Establish data pipelines that can process heterogeneous data types from different instruments

Team Structure and Skills

- Foster multidisciplinary teams combining domain expertise with data science and engineering capabilities

- Develop training programs to upskill experimental scientists in data science and AI fundamentals

- Create collaborative workflows that maintain human oversight while leveraging autonomous capabilities [17]

- Establish clear protocols for human-in-the-loop decision points in otherwise autonomous cycles

Infrastructure Requirements

- Ensure reliable laboratory infrastructure with minimal downtime for automated systems

- Implement robust scheduling systems for shared instrumentation in core facilities

- Develop maintenance protocols to ensure consistent performance of automated equipment

- Establish computational infrastructure capable of handling data-intensive AI workloads, potentially through cloud-native solutions [16]

For organizations of different scales, implementation strategies will vary significantly. Small biotech companies may opt for completely outsourced IT solutions that host both the DMTA platform and back-end systems [17]. Large multinational pharmaceutical companies will likely focus on integrating autonomous systems into legacy infrastructure, providing streamlined solutions for information delivery while maintaining data integrity [17]. Mid-sized organizations can exploit flexible platforms that align with specific corporate requirements while maintaining an easy end-user experience [17].

The autonomous DMTA cycle represents a fundamental transformation in scientific research methodology, enabled by the convergence of artificial intelligence, robotics, and data science. This closed-loop workflow accelerates discovery timelines, reduces costs, and enhances exploration of complex experimental spaces that would be intractable through manual approaches. The integration of AI decision engines with automated laboratory infrastructure creates self-improving systems that effectively learn how to experiment more efficiently over time.

The future of autonomous experimentation will likely see increased integration of generative AI models capable of proposing novel research directions beyond human intuition. Advances in computer-assisted synthesis planning will continue to close the "evaluation gap" between theoretical proposals and executable protocols [15]. The development of more natural human-machine interfaces, potentially through agentic LLMs as "chemical ChatBots," will further lower barriers for scientific researchers [15]. As these technologies mature, we can anticipate the emergence of fully integrated, data-driven research environments where autonomous DMTA cycles become the standard approach for exploratory science across multiple domains.

For research organizations, successful adoption of autonomous DMTA methodologies will require both technological investment and cultural adaptation. The scientists of the future will need to combine deep domain expertise with data literacy and computational thinking. Organizations that effectively navigate this transition will be positioned to leverage autonomous experimentation for accelerated discovery, potentially transforming research and development from a cost center into a strategic advantage in the competitive landscape of scientific innovation.

The modern scientific laboratory is undergoing a profound transformation, evolving from an environment characterized by manual processes into an intricate, interconnected data factory [18]. This revolution is powered by the convergence of robotics, artificial intelligence (AI), and sophisticated data management systems, creating a new paradigm for autonomous research. For researchers, scientists, and drug development professionals, this integration is not merely about efficiency; it is becoming essential for maintaining competitive advantage, accelerating the pace of discovery, and tackling problems of unprecedented complexity [18] [19]. This technical guide explores the core technologies driving this change, their practical implementations, and the measurable impacts they are having on scientific research, particularly within the life sciences.

The traditional model of drug discovery—notorious for its lengthy timelines, high costs, and high failure rates—is being fundamentally disrupted. The integration of AI with laboratory automation is creating closed-loop, "self-driving" labs that can generate and test hypotheses with minimal human intervention [20]. This shift is underpinned by a reimagining of data as the central, fluid asset in the research lifecycle, necessitating robust strategies for its generation, capture, and analysis [18]. The following sections provide a detailed examination of the key enabling technologies, their synergies, and the practical frameworks for their implementation.

Core Technologies and Their Synergistic Integration

The autonomous laboratory is built upon a foundation of three interdependent technological pillars: robotics and automation, artificial intelligence and machine learning, and unified data management. Their convergence creates a system where intelligent decision-making directly controls physical experimentation, generating high-quality data that, in turn, refines the AI models.

Robotics and Automation: The Physical Engine

Robotics provides the physical means to execute experiments with superhuman precision, endurance, and throughput. The role of robotics has moved far beyond simple sample conveyance to the complex, autonomous execution of entire workflows [18].

- Robotic Arms and Liquid Handlers: Highly dexterous robotic arms, equipped with advanced sensors and end-effectors, can mimic the subtle manipulation skills of a human scientist, performing complex tasks like micro-pipetting, plating, and operating manual instruments. These systems eliminate human variability and are central to high-throughput screening (HTS) automation, enabling the execution of tens of thousands of assays per day [18] [20].

- Autonomous Mobile Robots (AMRs): These robots are responsible for logistics within the lab, transporting plates, reagents, and samples between different analytical stations or storage units. This ensures seamless continuity and workflow integration across the lab floor, connecting islands of automation into a cohesive whole [18].

- Modular and Flexible Systems: A key advancement is the design of modular systems, such as the Autonomous Lab (ANL), where devices for culturing, preprocessing, and analysis are installed on movable carts. This allows the laboratory configuration to be easily modified or expanded to suit specific experimental needs, providing the versatility required for diverse research applications [21].

The primary benefits of laboratory robotics are increased reproducibility, 24/7 operational capability, and the liberation of highly skilled scientists from repetitive and error-prone manual tasks [18] [19].

Artificial Intelligence and Machine Learning: The Cognitive Center

AI and machine learning (ML) serve as the cognitive core of the autonomous lab, transforming vast datasets into actionable insights and decisions.

- AI in Drug Discovery: AI algorithms are revolutionizing early-stage drug discovery. They are used for target identification by mining omics datasets and scientific literature, for virtual screening to predict compound-target interactions, and for generative design to create novel molecules with desired properties [22] [20]. Tools like AlphaFold have dramatically accelerated structural biology by predicting protein structures with near-experimental accuracy [22].

- Closed-Loop Experimentation: AI-powered systems can analyze experimental results in real-time and use optimization algorithms, such as Bayesian optimization, to propose the next set of experimental conditions. This creates a closed-loop "design-make-test-analyze" (DMTA) cycle where the system autonomously navigates a complex experimental space to find an optimal solution, such as ideal medium conditions for maximizing metabolite production in a microbial strain [21] [20].

- The Critical Role of Edge AI: Relying solely on cloud computing for data analysis from automated instruments introduces latency and a dependency on internet connectivity. Edge AI involves deploying high-performance computing resources locally within the lab. This enables immediate AI-driven feedback to robotic systems, allowing for real-time protocol adjustments and ensuring operational resilience even during network outages. It also enhances security for sensitive research data by allowing local processing before any data is sent to the cloud [18].

Data Management and IoT: The Central Nervous System

The value of robotics and AI is contingent on the quality, accessibility, and structure of the data that fuels them. A unified data strategy is the nervous system that connects all components of the autonomous lab.

- The Centrality of Data: In the laboratory of the future, data is the primary asset. A significant problem in traditional labs is the fragmentation of data across disparate systems (e.g., proprietary instrument files, spreadsheets, electronic lab notebooks). The modern solution involves treating data as a central, fluid component, where every instrument, robot, and sensor automatically feeds standardized, metadata-rich data into a consolidated cloud or hybrid repository [18].

- Laboratory Information Management Systems (LIMS) and IoT: A robust LIMS is critical for standardizing data formats and managing the flow of information. The Internet of Things (IoT) complements this by embedding sensors in everything from incubators and refrigerators to air quality monitors. These sensors provide a continuous stream of contextual data on environmental conditions, asset location (via RFID/BLE tags), and equipment health, enabling predictive maintenance and rigorous experimental validation [18] [19].

- The Shift to Data-Centric Ecosystems: The evolution is moving beyond simply digitizing paper processes with an Electronic Lab Notebook (ELN) toward a fundamental rethinking of workflows. This involves embracing standardization to enable end-to-end digitalization across the R&D lifecycle, fostering a "right-first-time" approach that improves speed, agility, and quality [19].

The synergy of these technologies is visualized in the following autonomous research loop, which illustrates the continuous cycle of data generation, analysis, and action.

Quantitative Frameworks for Assessing Autonomous Performance

As laboratories become more autonomous, quantifying the level and performance of this autonomy is crucial for system design, benchmarking, and regulatory acceptance. A proposed framework based on task requirements moves beyond simple high-level classifications to provide a quantitative assessment [23].

Key Metrics for Autonomy

This framework distinguishes between the level of autonomy (the existence of a requisite capability) and the degree of autonomy (how well that capability performs). It is founded on three core metrics derived from robot task characteristics [23]:

- Requisite Capability Set: The set of core functions the system must perform to accomplish a task.

- Reliability: The probability that the system will perform its required functions under stated conditions for a specified period. This is a measure of the system's integrity and availability.

- Responsiveness: The system's ability to complete tasks within required time constraints, reflecting its operational efficiency.

Application to an Autonomous Lab Experiment

The following table applies this framework to a generalized autonomous laboratory experiment, breaking down the task and quantifying performance.

Table: Quantitative Autonomy Assessment for an Autonomous Laboratory Experiment

| Task Characteristic | Description & Metric | Quantitative Measure |
|---|---|---|
| Overall Task | Optimize culture conditions for a metabolite in E. coli using a closed-loop system. | N/A |
| Requisite Capability | 1. Liquid Handling: Precise dispensing of media components. 2. Environmental Control: Maintain culture temperature and agitation. 3. Analytical Sampling: Automated sampling and quenching. 4. Data Analysis: LC-MS/MS analysis of metabolite concentration. 5. Decision-Making: Bayesian optimization to propose next experiment. | Binary (Yes/No) for capability existence. |
| Reliability | Integrity: Probability of a full cycle (culture to analysis) completing without critical error. Availability: System uptime for continuous operation. | e.g., Integrity = 98%, Availability = 95% |
| Responsiveness | Cycle Time: Mean time to complete one full "design-make-test-analyze" cycle. Decision Latency: Time from data acquisition to new experiment proposal. | e.g., Cycle Time = 6 hours, Decision Latency < 1 min |

This framework provides a more nuanced and measurable understanding of autonomous performance than a simple label of "fully autonomous," supporting system improvement and trustworthy deployment [23].
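The reliability and responsiveness measures in the table can be estimated from an execution log. The sketch below assumes a simple log format (one dictionary per cycle) and made-up uptime figures chosen to reproduce the example values in the table; neither the format nor the numbers come from the cited framework.

```python
from statistics import mean
from typing import Dict, List


def integrity(cycle_log: List[Dict]) -> float:
    """Fraction of cycles that completed without a critical error."""
    return sum(1 for cycle in cycle_log if cycle["completed"]) / len(cycle_log)


def availability(uptime_hours: float, scheduled_hours: float) -> float:
    """Fraction of scheduled operating time the system was actually up."""
    return uptime_hours / scheduled_hours


def mean_cycle_hours(cycle_log: List[Dict]) -> float:
    return mean(cycle["cycle_hours"] for cycle in cycle_log if cycle["completed"])


def worst_decision_latency(cycle_log: List[Dict]) -> float:
    return max(cycle["decision_seconds"] for cycle in cycle_log if cycle["completed"])


# Hypothetical log: 49 clean cycles and one aborted cycle.
cycle_log = [{"completed": True, "cycle_hours": 6.0, "decision_seconds": 45.0} for _ in range(49)]
cycle_log.append({"completed": False, "cycle_hours": None, "decision_seconds": None})

report = {
    "integrity": integrity(cycle_log),
    "availability": availability(uptime_hours=159.6, scheduled_hours=168.0),
    "mean_cycle_hours": mean_cycle_hours(cycle_log),
    "worst_decision_latency_s": worst_decision_latency(cycle_log),
}
print(report)
```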

Experimental Protocols and Methodologies

To illustrate the practical application of these converging technologies, this section details a real-world case study of an autonomous system used for bioproduction optimization.

Case Study: Autonomous Optimization of Microbial Metabolite Production

This protocol is based on a study that developed an Autonomous Lab (ANL) to optimize medium conditions for a recombinant E. coli strain overproducing glutamic acid [21].

1. Hypothesis: The growth and productivity of a genetically modified E. coli strain are suboptimal under standard medium conditions and can be significantly improved by systematically varying the concentrations of key nutrients and cofactors.

2. Autonomous System Configuration (The ANL): The hardware is configured as a modular system integrating the following key components, orchestrated by a central software controller [21]:

- Transfer Robot: For moving plates between modules.

- Liquid Handler: For preparing and dispensing medium variants.

- Incubator: For culturing E. coli strains.

- Centrifuge: For cell harvesting.

- Microplate Reader: For measuring optical density (cell growth).

- LC-MS/MS System: For quantifying glutamic acid concentration.

3. Experimental Workflow: The entire process forms a closed loop, as depicted in the workflow diagram below.

4. Detailed Methodology:

- Step 1: Initialization. An initial dataset of medium compositions and corresponding cell growth and glutamic acid yield is input into the Bayesian optimization algorithm [21].

- Step 2: Proposal. The algorithm analyzes the current data and proposes a new set of medium conditions (e.g., concentrations of CaCl₂, MgSO₄, CoCl₂, ZnSO₄) predicted to maximize the objective (growth or production) [21].

- Step 3: Execution.

- The liquid handler automatically prepares the proposed medium variant in a culture plate.

- The strain is inoculated, and the plate is moved to the incubator.

- After a defined period, the culture is sampled.

- Cells are pelleted via centrifugation, and supernatant is analyzed for glutamic acid concentration via LC-MS/MS. Cell density is measured via a microplate reader [21].

- Step 4: Analysis & Iteration. The new results are automatically fed back into the data management system. The Bayesian optimization algorithm updates its model and proposes the next best experiment, repeating the cycle until convergence on an optimum is achieved [21].

5. Key Outcome: The ANL successfully identified optimized medium conditions that improved both the cell growth rate and maximum cell density, demonstrating the power of closed-loop autonomous experimentation to navigate complex experimental spaces more efficiently than traditional manual approaches [21].
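The propose-execute-update loop in the methodology above can be sketched end to end in a few dozen lines. The version below is a deliberately simplified, one-dimensional stand-in: it tunes a single normalized concentration, uses a small hand-written Gaussian process with an RBF kernel, and selects experiments with an upper-confidence-bound rule. The response curve, kernel length scale, and acquisition rule are all assumptions for illustration; the cited study optimized several components at once, and its algorithmic settings are not reproduced here.

```python
import math


def rbf(a: float, b: float, length_scale: float = 0.2) -> float:
    return math.exp(-0.5 * ((a - b) / length_scale) ** 2)


def cholesky(matrix):
    n = len(matrix)
    lower = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(lower[i][k] * lower[j][k] for k in range(j))
            if i == j:
                lower[i][j] = math.sqrt(max(matrix[i][i] - s, 1e-12))
            else:
                lower[i][j] = (matrix[i][j] - s) / lower[j][j]
    return lower


def solve_lower(lower, rhs):
    """Forward substitution for L y = rhs."""
    y = []
    for i in range(len(rhs)):
        y.append((rhs[i] - sum(lower[i][k] * y[k] for k in range(i))) / lower[i][i])
    return y


def solve_upper(lower, rhs):
    """Back substitution for L^T x = rhs."""
    n = len(rhs)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (rhs[i] - sum(lower[k][i] * x[k] for k in range(i + 1, n))) / lower[i][i]
    return x


def gp_posterior(xs, ys, x_new, noise=1e-3):
    """Posterior mean and standard deviation of a zero-centered GP at x_new."""
    mean_y = sum(ys) / len(ys)
    kernel = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(xs)]
              for i, a in enumerate(xs)]
    lower = cholesky(kernel)
    alpha = solve_upper(lower, solve_lower(lower, [y - mean_y for y in ys]))
    k_star = [rbf(a, x_new) for a in xs]
    mu = mean_y + sum(k * a for k, a in zip(k_star, alpha))
    v = solve_lower(lower, k_star)
    variance = max(rbf(x_new, x_new) - sum(t * t for t in v), 0.0)
    return mu, math.sqrt(variance)


def simulated_glutamate_titer(concentration: float) -> float:
    # Stand-in for culture + LC-MS/MS; hypothetical optimum at 0.6.
    return math.exp(-((concentration - 0.6) / 0.25) ** 2)


def optimize_medium(n_iterations: int = 8, kappa: float = 2.0):
    grid = [i / 50 for i in range(51)]          # normalized concentration levels
    xs = [0.0, 0.5, 1.0]                        # Step 1: initial dataset
    ys = [simulated_glutamate_titer(x) for x in xs]
    for _ in range(n_iterations):
        untested = [x for x in grid if x not in xs]

        def ucb(x):                             # Step 2: acquisition score
            mu, sd = gp_posterior(xs, ys, x)
            return mu + kappa * sd

        x_next = max(untested, key=ucb)
        xs.append(x_next)                       # Step 3: run the experiment
        ys.append(simulated_glutamate_titer(x_next))  # Step 4: feed back result
    return xs, ys


xs, ys = optimize_medium()
best_y = max(ys)
best_x = xs[ys.index(best_y)]
print(best_x, round(best_y, 4))
```

In a real deployment, `simulated_glutamate_titer` would be replaced by the robotic execution and analysis steps, and a maintained optimization library would normally take the place of the hand-written Gaussian process.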

The Scientist's Toolkit: Essential Reagents and Materials

The following table details key reagents used in the featured autonomous bioproduction experiment and their critical functions [21].

Table: Essential Research Reagents for Microbial Bioproduction Optimization

| Reagent/Material | Function in the Experiment |
|---|---|
| M9 Minimal Medium | A defined base medium containing only essential nutrients and metal ions. It allows for precise control over components and avoids background interference when measuring microbial metabolite production. |
| Carbon Source (e.g., Glucose) | Provides the essential energy and carbon backbone for microbial growth and the synthesis of the target metabolite, glutamic acid. |
| Trace Elements (CoCl₂, ZnSO₄, etc.) | Act as cofactors for enzymes in central metabolism and the biosynthetic pathway for glutamic acid. Their optimization is critical for maximizing enzymatic activity and flux. |
| Salts (CaCl₂, MgSO₄) | Mg²⁺ is a critical cofactor for many enzymatic reactions. Ca²⁺ can play roles in cell signaling and membrane stability. Concentrations also directly impact osmotic pressure. |
| Vitamin Precursors (e.g., Thiamine) | Essential for the synthesis of coenzymes required for metabolic function. |
| Recombinant E. coli Strain | The engineered production host, containing enhanced metabolic pathways for the overproduction of glutamic acid. |

Measurable Impacts and Future Outlook

The integration of robotics, AI, and data management is delivering tangible, transformative outcomes across the research and development landscape.

Quantitative Benefits in Drug Discovery and Biotechnology

The adoption of these technologies is directly addressing the core inefficiencies of traditional research pipelines.

Table: Measurable Impacts of AI and Automation in Research

| Impact Area | Traditional Timeline/Cost | With AI/Automation | Example |
|---|---|---|---|
| Hit Identification | Months to years, high cost per compound screened. | Achieved in days or less; AI virtual screening drastically reduces the number of compounds needing physical testing. | Atomwise identified two drug candidates for Ebola in less than a day [22]. |
| Preclinical Drug Development | ~3-6 years, costing hundreds of millions [22]. | Timeline compressed to 12-18 months for preclinical candidate selection. | Insilico Medicine designed a novel drug candidate for idiopathic pulmonary fibrosis and reached Phase II trials in under 3 years [22] [20]. |
| Laboratory Efficiency | Manual, error-prone processes; significant time spent on repetitive tasks. | 60% reduction in human errors; over 50% increase in sample processing speed reported by a biotech startup [19]. | Automated sample intake with QR code logging eliminated manual data entry errors [19]. |

Challenges and Strategic Considerations

Despite the clear benefits, widespread adoption faces several significant hurdles that organizations must strategically navigate [19] [24]:

- High Implementation Costs: The initial investment in automation, robotics, and advanced computing is substantial, creating a barrier for smaller labs [19].

- Data Integration and Quality: AI models are only as good as the data they are trained on. Incomplete, biased, or non-standardized data can lead to flawed predictions. Integrating new systems with legacy instruments remains a complex challenge [22] [20] [24].

- Workforce and Cultural Readiness: Successful deployment requires a workforce skilled in both domain science and data science. Resistance to change and a lack of adequate training can hinder adoption [19] [24].

- Cybersecurity and Regulatory Compliance: As labs become more digitized and connected, they face increased vulnerability to cyber threats. Furthermore, regulatory frameworks for AI-driven discoveries are still evolving, requiring careful navigation [22] [19] [24].

The Future: The Age of Autonomous Discovery

The trajectory points toward fully autonomous discovery laboratories. These "self-driving labs" will feature AI systems that not only propose hypotheses but also physically execute and analyze complex experiments through integrated robotics in a continuous, closed-loop manner [21] [20]. This paradigm shift—from automated to autonomous—promises 24/7 innovation, systematically exploring chemical and biological spaces with an efficiency and scale unattainable by humans alone. The laboratory of the future is not just automated; it is anticipatory, data-centric, and relentlessly driven by intelligent, self-improving systems [18] [19]. For the scientific community, embracing this convergence is no longer a choice but a necessity to unlock the next frontier of discovery.

Implementing Autonomous Workflows: Methods and Real-World Applications

The evolution of fully autonomous laboratories represents a paradigm shift in scientific research, particularly in drug discovery and materials science. Self-driving laboratories (SDLs) combine automated experimental hardware with computational experiment planning to create systems capable of designing, executing, and adapting experiments with minimal human intervention [25]. The intrinsic complexity created by their multitude of components demands an effective orchestration platform to ensure correct operation of diverse experimental setups [25]. Existing orchestration frameworks have historically been limited by being either tailored to specific setups or not implemented for real-world synthesis applications [25].

Orchestration software serves as the central nervous system of autonomous laboratories, coordinating communication, data exchange, and instruction management among modular laboratory components. By treating the entire laboratory as an integrated system, platforms like ChemOS 2.0 enable researchers to move beyond simple automation toward truly intelligent experimentation [25]. This transformation is accelerating research timelines while improving reproducibility—two critical challenges where traditional drug development has consistently struggled [12]. The implementation of these systems marks a practical shift in drug discovery from theoretical promises to tangible progress, where automation saves time, data systems connect seamlessly, and biology better reflects human complexity [26].

Core Architecture of ChemOS 2.0

System Design Principles

ChemOS 2.0 was specifically designed to address the limitations of previous orchestration frameworks through a modular architecture that efficiently coordinates laboratory operations. The system combines ab-initio calculations, experimental orchestration, and statistical algorithms to guide closed-loop operations in chemical and materials research [25]. This architecture treats the laboratory as an "operating system" where various components—both physical and computational—interoperate seamlessly [25].

A key innovation in ChemOS 2.0 is its approach to modularity, which allows diverse laboratory components to communicate through standardized interfaces. This design enables researchers to integrate new instruments and analytical tools without requiring extensive reconfiguration of existing workflows. The platform manages the entire experiment lifecycle, from initial design through execution and data analysis, creating a continuous loop where results inform subsequent experimental choices [25].
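
The idea of a standardized interface can be sketched in a few lines. The example below is purely illustrative and does not reproduce the ChemOS 2.0 API: the `Instrument` protocol, the `Registry` class, and both mock devices are hypothetical names introduced here. It shows how a new instrument can be added by registering one more object, without changing the dispatch code.

```python
from typing import Any, Dict, Protocol


class Instrument(Protocol):
    """Hypothetical contract every device driver is assumed to satisfy."""
    name: str

    def execute(self, action: str, **params: Any) -> Dict[str, Any]:
        ...


class MockPipettor:
    name = "pipettor"

    def execute(self, action: str, **params: Any) -> Dict[str, Any]:
        # A real driver would talk to hardware; this one echoes the request.
        return {"device": self.name, "action": action, "status": "ok", **params}


class MockSpectrometer:
    name = "spectrometer"

    def execute(self, action: str, **params: Any) -> Dict[str, Any]:
        # Fixed placeholder reading, not real data.
        return {"device": self.name, "action": action, "status": "ok",
                "peak_nm": 520.0}


class Registry:
    """Routes commands to whichever registered device matches the name."""

    def __init__(self) -> None:
        self._devices: Dict[str, Instrument] = {}

    def register(self, instrument: Instrument) -> None:
        self._devices[instrument.name] = instrument

    def dispatch(self, device: str, action: str, **params: Any) -> Dict[str, Any]:
        return self._devices[device].execute(action, **params)


registry = Registry()
registry.register(MockPipettor())
registry.register(MockSpectrometer())   # adding a device needs no other changes

print(registry.dispatch("pipettor", "dispense", volume_ul=50))
print(registry.dispatch("spectrometer", "acquire_emission"))
```

Because the workflow code depends only on the shared `execute` signature, swapping a mock for a vendor-specific driver does not alter the calling code.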

Technical Components and Data Flow

The technical implementation of ChemOS 2.0 encompasses several interconnected layers that handle different aspects of laboratory operations:

- Communication Layer: Manages standardized messaging between instruments, robots, and computational resources, ensuring that experimental parameters and results are transmitted accurately across the system.

- Data Exchange Framework: Handles the transformation and routing of experimental data between instruments, databases, and analysis tools, maintaining data integrity throughout the process.

- Instruction Management System: Translates high-level experimental designs into specific commands for individual laboratory components, coordinating timing and dependencies between different steps.

- Experiment Planning Engine: Incorporates AI and statistical algorithms to design new experiments based on previous results, optimizing the research trajectory toward desired outcomes.

This architectural approach enables ChemOS 2.0 to function as a unified control system for SDLs, coordinating the activities of robotic platforms, analytical instruments, and computational resources through a single interface [25].
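
As a rough illustration of what an instruction management layer does, the sketch below orders device commands so that each step runs only after its prerequisites. It is an assumption-laden toy, not ChemOS 2.0 code: the `Command` class, the step names, and the device names are all hypothetical, and the ordering uses the `graphlib.TopologicalSorter` class from the Python standard library (Python 3.9 or later).

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter
from typing import Any, Dict, List, Set, Tuple


@dataclass
class Command:
    """One low-level instruction for one device (hypothetical structure)."""
    device: str
    action: str
    params: Dict[str, Any] = field(default_factory=dict)


def plan_commands(steps: Dict[str, Tuple[Command, Set[str]]]) -> List[Command]:
    """Return commands in an order that respects declared prerequisites.

    `steps` maps a step name to (command, names of steps that must finish first).
    """
    graph = {name: deps for name, (_, deps) in steps.items()}
    order = TopologicalSorter(graph).static_order()   # prerequisites come first
    return [steps[name][0] for name in order]


# A high-level design ("make the sample, then measure it") expressed as steps.
steps = {
    "measure": (Command("spectrometer", "acquire_emission"), {"transfer"}),
    "transfer": (Command("robot_arm", "move_to_spectrometer"), {"heat"}),
    "heat": (Command("reactor", "heat", {"temp_c": 80}), {"dispense"}),
    "dispense": (Command("liquid_handler", "dispense", {"volume_ul": 50}), set()),
}

for command in plan_commands(steps):
    print(command.device, command.action, command.params)
# prints the commands in the order: dispense, heat, move_to_spectrometer, acquire_emission
```

A production scheduler would also have to handle timing, parallel branches, and device availability; the dependency graph is only the first piece.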

ChemOS 2.0 in Practice: Experimental Protocol

Case Study: Organic Laser Molecule Discovery

To demonstrate its capabilities, ChemOS 2.0 was implemented in a case study focused on discovering novel organic laser molecules [25]. This application showcases the platform's ability to accelerate materials research through integrated planning, execution, and analysis. The experimental workflow followed a structured, iterative process that exemplifies the power of autonomous laboratory systems.

Table: Experimental Stages in Organic Laser Molecule Discovery

| Stage | Key Activities | ChemOS 2.0 Functionality |
|---|---|---|
| Molecular Design | Virtual screening of candidate structures | Ab-initio calculations and molecular modeling |
| Experiment Planning | Selection of synthesis priorities | Statistical algorithms for experiment selection |
| Automated Synthesis | Robotic execution of chemical reactions | Orchestration of robotic fluid handling systems |
| Characterization | Optical and spectroscopic analysis | Coordination of analytical instruments |
| Data Analysis | Performance evaluation of candidates | AI-driven analysis of structure-property relationships |
| Iteration | Design of subsequent experiments | Closed-loop experimental optimization |

Detailed Methodological Workflow

The specific methodology for the organic laser molecule discovery followed a precise sequence, with ChemOS 2.0 managing transitions between phases:

Initialization Phase: Researchers defined the target parameters for organic laser molecules, including optical properties, stability requirements, and synthesis constraints. ChemOS 2.0 translated these requirements into computational screening criteria.

Computational Screening: The platform executed ab-initio calculations to predict molecular properties of candidate structures, prioritizing the most promising candidates for experimental synthesis [25].

Experimental Orchestration: For each selected candidate, ChemOS 2.0:

- Generated detailed synthetic protocols

- Scheduled time on appropriate robotic synthesis platforms

- Prepared instructions for liquid handling systems

- Coordinated the delivery of necessary reagents

Automated Synthesis: Robotic systems executed the synthetic procedures under ChemOS 2.0's supervision, with the platform monitoring progress and handling exceptions.

Integrated Characterization: Upon synthesis completion, ChemOS 2.0 directed the transfer of samples to analytical instruments for optical characterization, including absorption/emission spectra and quantum yield measurements.

Data Integration and Analysis: Characterization data was automatically incorporated into the platform's database, where AI algorithms analyzed structure-property relationships.

Closed-Loop Optimization: Based on the analysis results, ChemOS 2.0's statistical algorithms designed the next set of experiments, refining molecular structures to improve performance [25].

This workflow continued iteratively until molecules meeting the target criteria were identified and validated, demonstrating significantly accelerated discovery compared to traditional approaches.
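
The phase transitions described above can be pictured as a small state machine. The sketch below is a simplified illustration under stated assumptions, not the platform's actual control logic: the `Phase` names mirror the workflow headings, the one-time initialization phase is left out, and `target_met` is a hypothetical callback standing in for the check that a molecule meets the target criteria.

```python
from enum import Enum, auto
from typing import Callable, List, Tuple


class Phase(Enum):
    SCREENING = auto()
    ORCHESTRATION = auto()
    SYNTHESIS = auto()
    CHARACTERIZATION = auto()
    ANALYSIS = auto()
    OPTIMIZATION = auto()
    DONE = auto()


# Linear hand-offs inside a single iteration.
NEXT_PHASE = {
    Phase.SCREENING: Phase.ORCHESTRATION,
    Phase.ORCHESTRATION: Phase.SYNTHESIS,
    Phase.SYNTHESIS: Phase.CHARACTERIZATION,
    Phase.CHARACTERIZATION: Phase.ANALYSIS,
    Phase.ANALYSIS: Phase.OPTIMIZATION,
}


def run_campaign(target_met: Callable[[int], bool],
                 max_iterations: int = 10) -> List[Tuple[int, Phase]]:
    """Walk through the phases, looping back until the target is met."""
    trace: List[Tuple[int, Phase]] = []
    phase, iteration = Phase.SCREENING, 1
    while phase is not Phase.DONE:
        trace.append((iteration, phase))
        if phase is Phase.OPTIMIZATION:
            if target_met(iteration) or iteration >= max_iterations:
                phase = Phase.DONE          # stop: success or budget exhausted
            else:
                iteration += 1
                phase = Phase.SCREENING     # close the loop with new candidates
        else:
            phase = NEXT_PHASE[phase]
    return trace


# Example: pretend the target criteria are met in the second iteration.
trace = run_campaign(lambda iteration: iteration >= 2)
print(len(trace), trace[-1])
```

Keeping the transition table separate from the loop makes it straightforward to add further states, such as a retry state for failed syntheses, without rewriting the control flow.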

Diagram: ChemOS 2.0 Closed-Loop Workflow for Organic Laser Molecule Discovery

The Laboratory Robotics Ecosystem

Market Context and Growth Drivers

The development of orchestration platforms like ChemOS 2.0 occurs within an expanding global laboratory robotics market, which provides the hardware infrastructure necessary for autonomous laboratories. Understanding this ecosystem is essential for contextualizing the adoption and impact of orchestration software.

Table: Laboratory Robotics Market Size and Projections

| Year | Market Size (USD Billion) | Compound Annual Growth Rate (CAGR) |
|---|---|---|
| 2024 | 2.67 | - |
| 2025 | 2.93 | 10.0% |
| 2029 | 4.24 | 9.7% |

Source: Laboratory Robotics Market Report 2025 [27]
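
For readers who want to check the growth rates, the short calculation below applies the standard CAGR formula, (end / start)^(1 / years) - 1, to the rounded market sizes in the table. It assumes the 2029 rate is measured from the 2025 figure, which the source does not state explicitly.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values `years` apart."""
    return (end_value / start_value) ** (1 / years) - 1


market_usd_bn = {2024: 2.67, 2025: 2.93, 2029: 4.24}   # values from the table

growth_2024_2025 = cagr(market_usd_bn[2024], market_usd_bn[2025], years=1)
growth_2025_2029 = cagr(market_usd_bn[2025], market_usd_bn[2029], years=4)

print(f"2024-2025: {growth_2024_2025:.1%}")
print(f"2025-2029: {growth_2025_2029:.1%}")
```

With these rounded inputs, both periods work out to roughly 9.7% per year. The small gap from the reported 10.0% for 2025 is presumably a rounding effect in the source figures.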

This market growth is driven by several key factors:

- Increasing research and clinical trials: The total number of industry-initiated clinical trials reached 411 in 2022, up 4.3% from 394 trials in 2021 [27]. This growth creates demand for automated solutions that can enhance efficiency and reduce human error.

- Demand for personalized medicine: The shift toward targeted therapies requires more complex experimental approaches that benefit from automated, high-throughput systems [27].

- Shortage of skilled laboratory personnel: Automation helps address workforce limitations while maintaining research productivity [27].

- Focus on data integrity and reproducibility: Robotic systems provide greater consistency in experimental execution, enhancing the reliability of research results [26].

Key Robotic Platforms and Integration Capabilities

Orchestration software interacts with diverse robotic platforms to create integrated experimental environments. Major companies in the laboratory robotics market include Tecan, Thermo Fisher Scientific, Hamilton Robotics, Chemspeed Technologies, and Biosero [27] [28]. These platforms provide the physical automation capabilities that ChemOS and similar systems orchestrate.

Recent advancements in robotic systems focus on flexibility and interoperability. For example, SPT Labtech's firefly+ platform combines pipetting, dispensing, mixing, and thermocycling within a single compact unit, while its collaboration with Agilent Technologies enables automated target enrichment protocols for genomic sequencing [26]. Similarly, mo:re's MO:BOT platform automates 3D cell culture processes to improve reproducibility and reduce the need for animal models [26]. These specialized robotic systems represent the execution layer that orchestration platforms like ChemOS control and coordinate.

Essential Research Reagents and Materials

The implementation of autonomous laboratories requires carefully standardized reagents and materials to ensure experimental consistency and reproducibility. The following table details key research solutions used in platforms like ChemOS and their specific functions within automated workflows.

Table: Research Reagent Solutions for Autonomous Laboratories

| Reagent/Material | Function in Autonomous Workflows | Application Examples |
|---|---|---|
| Agilent SureSelect Max DNA Library Prep Kits | Automated target enrichment protocols for genomic sequencing | Oncology research, precision medicine [26] |
| 3D Cell Culture Matrices | Standardized scaffolds for organoid and tissue model development | Human-relevant disease modeling, toxicity testing [26] |