Autonomous Experimentation Workflows: The Complete Guide to Self-Driving Labs in Drug Discovery

Abstract

This article provides a comprehensive overview of autonomous experimentation workflows, a transformative approach that integrates robotics, artificial intelligence, and data science to create self-driving laboratories. Tailored for researchers, scientists, and drug development professionals, it explores the foundational concepts, methodological applications, and optimization strategies that are revolutionizing biomedical research. By examining real-world case studies in oncology and peptide discovery, alongside comparative analyses of human and AI agent performance, this guide offers practical insights for implementing these systems to drastically accelerate discovery timelines, enhance reproducibility, and reduce the high costs associated with traditional drug development.

What Are Autonomous Experimentation Workflows? The Foundation of Self-Driving Science

Autonomous experimentation represents a paradigm shift in scientific research, moving from traditional manual processes to intelligent, self-driving systems. This approach combines artificial intelligence (AI), robotics, and advanced computing to design and execute scientific experiments with minimal human intervention. Unlike simple automation that merely assists with repetitive tasks, autonomous systems can make intelligent decisions, learn from outcomes, and adapt their strategies in a closed-loop manner [1] [2]. The core of this transformation lies in the ability to create systems that not only perform experiments but also manage the entire scientific method—from hypothesis generation and experimental design to execution, analysis, and iterative learning [3].

The significance of autonomous experimentation extends across multiple domains, including materials science, chemistry, and drug development. These systems are poised to dramatically accelerate the pace of discovery, potentially reducing the time from laboratory discovery to viable products from decades to much shorter timeframes [1]. For researchers and drug development professionals, this technology offers the potential to overcome long-standing bottlenecks in the research-to-industry pipeline, particularly in bridging the "valley of death" where promising laboratory discoveries fail to become viable products due to scale-up challenges and real-world deployment complexities [1].

Classification and Levels of Autonomy

Defining Levels of Scientific Autonomy

The autonomy of experimental systems exists on a spectrum, from basic tools that assist researchers to fully autonomous systems that require no human intervention. A widely adopted framework, adapted from the Society of Automotive Engineers' levels of driving automation, provides a standardized way to classify these systems [3]. This classification helps researchers understand the capabilities of different experimental platforms and set appropriate expectations for what these systems can accomplish independently.

The table below outlines the five primary levels of autonomy in scientific research, from basic assistance to fully autonomous operation:

Table 1: Levels of Autonomy in Scientific Experimentation

| Autonomy Level | Name | Description | Examples |
|---|---|---|---|
| Level 1 | Assisted Operation | Machine assistance with defined laboratory tasks | Robotic liquid handlers, data analysis software |
| Level 2 | Partial Autonomy | Proactive scientific assistance (e.g., protocol generation) | Aquarium dynamic workflow planner |
| Level 3 | Conditional Autonomy | Autonomous performance of at least one cycle of the scientific method; requires human intervention for anomalies | iBioFab, Mobile Robot Chemist |
| Level 4 | High Autonomy | Capable of automating protocol generation, execution, data analysis, and hypothesis adjustment | Adam, Eve, MicroCycle platforms |
| Level 5 | Full Autonomy | Full automation of the entire scientific method; not yet achieved | N/A |

Most current autonomous systems operate at Level 3 or Level 4, representing a significant advancement beyond basic automation. Level 3 systems can autonomously perform multiple cycles of the scientific method, interpreting and learning from previous results to inform subsequent experimental designs [3]. Level 4 systems function as highly skilled lab assistants, capable of modifying and updating hypotheses as they proceed through cycles of experimentation after initial human guidance [3].

A Two-Dimensional Classification Framework

An alternative classification system evaluates autonomy along two separate dimensions: hardware autonomy (physical automation) and software autonomy (decision-making capabilities) [3]. This framework provides a more nuanced understanding of a system's capabilities.

Table 2: Two-Dimensional Framework for SDL Autonomy

| Hardware Autonomy Level (rows) / Software Autonomy Level (columns) | Manual (Level 0) | Single Cycle (Level 1) | Multiple 'Closed-Loop' Cycles (Level 2) | Generative (Level 3) |
|---|---|---|---|---|
| Automated Laboratory (Level 3) | Level 3 | Level 4 | Level 5 | |
| Automated Workflow (Level 2) | Level 2 | Level 3 | Level 4 | |
| Automated Single Task/Experiment (Level 1) | Level 1 | Level 2 | Level 3 | |
| Manual (Level 0) | Level 0 | Level 1 | Level 2 | |

In this two-dimensional framework, hardware autonomy ranges from no automation (Level 0) to fully automated laboratories in which only restocking and maintenance remain manual (Level 3). Software autonomy ranges from human ideation (Level 0) to generative systems in which computers handle both search space definition and experiment selection (Level 3). A full Level 5 SDL would need to achieve Level 3 in both dimensions, a milestone not yet demonstrated [3].

Core Components of Autonomous Experimentation Systems

Foundational Technologies

Autonomous experimentation systems integrate several advanced technologies that work in concert to enable self-driving capabilities. The first critical component is artificial intelligence and machine learning, which serves as the intellectual core of these systems. AI algorithms, including Bayesian optimization and large language models (LLMs) like ChatGPT and Llama, are employed to design experiments, analyze results, and determine subsequent steps in the research process [4] [5]. For instance, at the National Renewable Energy Laboratory (NREL), researchers use LLMs to swiftly establish control modules and graphical user interfaces for scientific instruments, significantly accelerating the development of autonomous capabilities [4].

The second crucial component is robotics and laboratory automation, which provides the physical means to execute experiments. This includes robotic arms, automated liquid handlers, diffractometers for analyzing material crystal structures, and other instruments that can be controlled algorithmically [5] [2]. Companies like Opentrons have developed systems such as the Opentrons Flex and OT-2 that automate common lab protocols including pipetting and plate transfers, making automation more accessible to researchers and startups [2].

The third component encompasses data infrastructure and computational frameworks that enable the seamless flow and analysis of experimental data. This includes high-performance computing resources, cloud platforms for data processing, and specialized software for data analysis and visualization [1] [6]. The integration of these technologies creates a continuous learning cycle where data from each experiment informs subsequent iterations, progressively refining the experimental approach and accelerating discovery.

Research Reagents and Materials Solutions

Autonomous experimentation requires not only advanced instrumentation but also specialized materials and reagents that enable high-throughput, reproducible research. The table below details key research reagent solutions and their functions in autonomous materials science and drug discovery platforms:

Table 3: Key Research Reagent Solutions in Autonomous Experimentation

| Reagent/Material | Function | Application Example |
|---|---|---|
| Thin-film Combinatorial Libraries | Houses large numbers of compositionally varying samples for high-throughput screening | Mapping phase diagrams in materials discovery [5] |
| Zn-Ti-N Sputtering Targets | Source materials for deposition of thin-film nitrides via Bayesian optimization | Autonomous synthesis of functional coatings [4] |
| Molecular Beam Epitaxy Precursors | Provide source fluxes for growing monoclinic (In,Ga)₂O₃ alloys | Rapid screening of growth conditions for semiconductor materials [4] |
| Electrochemical Impedance Spectroscopy Cells | Enable temperature- and pressure-dependent measurements of material properties | Characterization of energy storage and conversion materials [4] |
| Oxide Semiconductor Gas Sensors | Detect gases through changes in electrical properties | Temperature- and time-dependent measurements of sensor performance [4] |

These specialized materials and reagents are essential for enabling the high-throughput experimentation that characterizes autonomous research systems. For example, thin-film combinatorial libraries allow researchers to explore vast compositional spaces efficiently by housing numerous samples with systematic variations in composition on a single substrate [5]. Similarly, precise precursor materials for techniques like molecular beam epitaxy enable the autonomous exploration of processing conditions for advanced semiconductor materials [4].

The Autonomous Experimentation Workflow

The Closed-Loop Experimentation Process

The core of autonomous experimentation lies in its implementation of a continuous, closed-loop workflow that mirrors the scientific method. This process enables systems to not only execute experiments but also to learn from results and adapt their strategies accordingly. The workflow typically follows these key stages, creating an iterative cycle of knowledge generation and refinement.

The workflow begins with researchers establishing initial hypotheses and research goals, providing the foundational direction for the autonomous system. The AI then designs specific experiments to test these hypotheses, selecting parameters and conditions that maximize information gain. Robotic systems execute these designed experiments, collecting data with precision and consistency that often exceeds manual operations. Automated data analysis follows, where machine learning algorithms process results to extract meaningful patterns and insights. Based on this analysis, the system updates its understanding and selects the next most informative experiments to perform, creating a continuous learning cycle until research objectives are achieved [5] [3].
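
The stages described above can be sketched as a short loop. This is a minimal illustration under stated assumptions, not the control code of any platform mentioned in this article: `simulated_yield` stands in for a robotic experiment with a made-up response curve, and `design_next` uses a deliberately simple rule (probe the widest untested gap next to the best result so far) in place of a real information-gain criterion.

```python
from typing import Callable, List, Tuple

Observation = Tuple[float, float]  # (tested condition, measured response)


def simulated_yield(temperature_c: float) -> float:
    """Hypothetical instrument: a made-up yield curve that peaks at 62 degrees C."""
    return 100.0 - (temperature_c - 62.0) ** 2 / 10.0


def design_next(history: List[Observation], low: float, high: float) -> float:
    """Design step: test both bounds first, then halve the widest gap beside the best point."""
    tested = sorted(condition for condition, _ in history)
    if low not in tested:
        return low
    if high not in tested:
        return high
    best, _ = max(history, key=lambda obs: obs[1])
    i = tested.index(best)
    left_gap = best - tested[i - 1] if i > 0 else 0.0
    right_gap = tested[i + 1] - best if i < len(tested) - 1 else 0.0
    if left_gap >= right_gap:
        return best - left_gap / 2
    return best + right_gap / 2


def run_closed_loop(
    execute: Callable[[float], float],
    low: float,
    high: float,
    target: float,
    max_cycles: int = 50,
) -> List[Observation]:
    """Repeat design -> execute -> analyze -> decide until the goal is met."""
    history: List[Observation] = []
    for _ in range(max_cycles):
        condition = design_next(history, low, high)  # design the next experiment
        response = execute(condition)                # execute it (simulated here)
        history.append((condition, response))        # record the result for analysis
        if response >= target:                       # decide: research objective met?
            break
    return history


history = run_closed_loop(simulated_yield, low=20.0, high=100.0, target=99.9)
best_condition, best_response = max(history, key=lambda obs: obs[1])
print(f"{len(history)} experiments, best condition {best_condition}, response {best_response:.3f}")
```

In a real system the `execute` callable would wrap instrument control and the design step would usually be a statistical model, but the loop structure stays the same.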

Case Study: The AMASE Platform

A concrete example of this workflow in action is the Autonomous MAterials Search Engine (AMASE) developed by researchers at the University of Maryland. This platform demonstrates how autonomous systems can efficiently navigate complex scientific landscapes through integrated theory-experiment cycles [5].

The AMASE workflow operates as follows:

- Experimental Phase Identification: The AI algorithm directs a diffractometer to analyze a combinatorial library at a specific temperature. Machine learning code then determines the crystal phase distribution landscape from the acquired data [5].

- Theoretical Integration: This experimental phase information is automatically fed into CALculation of PHAse Diagrams (CALPHAD), a computational platform based on Gibbs' theory of thermodynamics, to predict the entire phase diagram in the composition-temperature space [5].

- Iterative Refinement: The computationally predicted phase diagram then determines which region the diffractometer should investigate next. This cycle continues autonomously, with each iteration producing a more accurate phase diagram [5].

This approach has demonstrated a six-fold reduction in overall experimentation time compared to traditional methods, highlighting the efficiency gains possible through autonomous experimentation [5]. The key innovation lies in the tight coupling of theoretical prediction with experimental validation, creating a virtuous cycle where each informs and refines the other.

Applications and Impact

Transformative Applications Across Domains

Autonomous experimentation systems are demonstrating transformative potential across multiple scientific domains. In materials science, these systems are accelerating the discovery and optimization of novel materials with specific properties. Researchers at NREL have implemented autonomous sputter deposition of Zn-Ti-N thin-film nitrides, where targeted material compositions are achieved through Bayesian optimization with in-situ feedback from optical plasma emission measurements [4]. Similarly, autonomous characterization techniques are accelerating temperature- and pressure-dependent electrochemical impedance spectroscopy measurements that would traditionally require extensive manual effort [4].
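
To make the idea of Bayesian optimization toward a target composition concrete, the sketch below pairs a small Gaussian-process surrogate with an upper-confidence-bound acquisition rule, written in plain Python. Everything specific in it is an assumption for illustration: `simulated_composition` is a made-up process response, `AMPLITUDE`, `LENGTH_SCALE`, and `kappa` are arbitrary settings, and none of it reproduces the NREL implementation or its in-situ feedback signal.

```python
import math

AMPLITUDE = 0.2      # assumed prior standard deviation of the score
LENGTH_SCALE = 0.2   # assumed smoothness of the score over the control setting


def kernel(a: float, b: float) -> float:
    """Squared-exponential covariance between two control settings."""
    return AMPLITUDE ** 2 * math.exp(-0.5 * ((a - b) / LENGTH_SCALE) ** 2)


def solve(matrix, rhs):
    """Solve matrix @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [row[:] + [rhs[i]] for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(aug[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (aug[r][n] - tail) / aug[r][r]
    return x


def posterior(xs, ys, x_new, jitter=1e-6):
    """Zero-mean Gaussian-process posterior mean and standard deviation at x_new."""
    gram = [[kernel(a, b) + (jitter if i == j else 0.0)
             for j, b in enumerate(xs)] for i, a in enumerate(xs)]
    k_new = [kernel(a, x_new) for a in xs]
    mean = sum(k * w for k, w in zip(k_new, solve(gram, ys)))
    explained = sum(k * w for k, w in zip(k_new, solve(gram, k_new)))
    return mean, math.sqrt(max(kernel(x_new, x_new) - explained, 0.0))


def simulated_composition(power_ratio: float) -> float:
    """Hypothetical process response; a real system would measure this in situ."""
    return 0.1 + 0.8 * power_ratio ** 2


def optimize(target=0.5, n_cycles=8, kappa=2.0):
    """Pick settings that maximize mean + kappa * std of the score -|composition - target|."""
    grid = [i / 100 for i in range(101)]
    xs = [0.0, 1.0]  # two initial experiments at the ends of the range
    ys = [-abs(simulated_composition(x) - target) for x in xs]
    for _ in range(n_cycles):
        candidates = [x for x in grid if x not in xs]

        def ucb(x):
            mean, std = posterior(xs, ys, x)
            return mean + kappa * std

        x_next = max(candidates, key=ucb)
        xs.append(x_next)
        ys.append(-abs(simulated_composition(x_next) - target))
    best = max(range(len(xs)), key=lambda i: ys[i])
    return xs[best], -ys[best]


best_setting, best_error = optimize()
print(f"best setting {best_setting:.2f}, composition error {best_error:.3f}")
```

The acquisition rule trades off settings the surrogate expects to score well against settings it is still uncertain about, which is what lets the loop reach the target in a handful of depositions rather than a full sweep.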

In pharmaceutical research and drug development, autonomous systems are streamlining the drug discovery process. The Eve platform, a Level-4 autonomous system, has demonstrated the ability to design and perform experiments to identify hit compounds for treating malaria [3]. By automating the screening of potential drug candidates and optimizing synthesis pathways, these systems can dramatically reduce the time and cost associated with early-stage drug development.

The emergence of cloud laboratories represents another significant application, democratizing access to advanced experimental capabilities. Platforms like Emerald Cloud Lab offer subscription-based remote control of experimental instrumentation, allowing researchers to execute experiments without physical access to specialized facilities [3] [2]. Carnegie Mellon University, for example, is collaborating with Emerald Cloud Lab to create the first fully remote, AI-integrated lab accessible to students and researchers [2].

Quantitative Impact and Efficiency Gains

The implementation of autonomous experimentation systems has demonstrated substantial quantitative benefits across multiple metrics of research efficiency and effectiveness:

Table 4: Measured Impact of Autonomous Experimentation Systems

| Metric | Impact | Context |
|---|---|---|
| Experiment Duration | 6-fold reduction | AMASE platform for phase diagram mapping [5] |
| Discovery Timeline | 20 years to weeks/months | Traditional lab to deployment vs. SDL compression [7] |
| Modeling Accuracy | 20% increase | Firms leveraging AI tools in financial modeling [6] |
| Data Validation Speed | 50% faster | Blockchain-enabled real-time auditing [6] |
| Forecast Precision | 15% growth rate | Businesses utilizing alternative datasets [6] |

Beyond these quantitative metrics, autonomous experimentation systems address fundamental challenges in scientific research, including the reproducibility crisis. Studies indicate that nearly 70% of scientists struggle to reproduce others' findings [2]. By automating every step of an experiment, self-driving labs increase consistency and transparency, which is vital for scientific credibility [2].

Future Directions and Challenges

Emerging Trends and National Initiatives

The field of autonomous experimentation is evolving rapidly, with several emerging trends shaping its future trajectory. There is a growing emphasis on developing modular, interoperable infrastructure to overcome barriers posed by legacy equipment and proprietary data formats [1]. Standardized platforms for data sharing and instrument control are crucial for maximizing the potential of autonomous systems across different laboratory environments.

At a policy level, major national initiatives are recognizing the strategic importance of autonomous experimentation. The Genesis Mission, established by executive order in November 2025, aims to accelerate scientific discovery by leveraging various forms of artificial intelligence [8]. The mission is framed as a national effort "comparable in urgency and ambition to the Manhattan Project," with priority domains that include advanced manufacturing, biotechnology, critical materials, and nuclear energy [8].

The Genesis Mission envisions the creation of an American Science and Security Platform that would provide high-performance computing, AI modeling frameworks, secure data access, and tools for autonomous experimentation [8]. This reflects a growing recognition at the highest levels of government that autonomous experimentation capabilities are crucial for maintaining scientific and technological leadership.

Critical Challenges and Considerations

Despite the promising potential of autonomous experimentation, several significant challenges must be addressed for these systems to achieve widespread adoption. A primary technical challenge is the development of intelligent tools for causal understanding that shift from correlation-focused machine learning toward causal models providing deep, physics-based insights [1]. Current AI systems often excel at identifying patterns but struggle with understanding underlying causal mechanisms, which is essential for robust scientific discovery.

The regulatory and intellectual property landscape presents another complex challenge. As noted in recent analyses, "inventions emerging from AI-driven science pose a grand challenge, as patent laws across the world recognize only human inventors. If the inventions they generate remain unpatentable, funding for SDLs may be constrained" [3]. This legal ambiguity requires resolution to ensure appropriate incentives for investment in autonomous research systems.

Workforce adaptation represents a third critical challenge. While concerns about AI replacing scientists are common, most experts anticipate a hybrid model where "AI and robotic automation assist in experimentation, while human scientists remain essential" [2]. The nature of scientific work is likely to evolve, with researchers focusing more on hypothesis generation, experimental design, and interpreting results, while autonomous systems handle routine experimentation and data collection. This shift will require new training approaches and skill development for the next generation of scientists.

Finally, security and safety concerns must be proactively addressed, particularly as autonomous systems gain capabilities in domains with potential dual-use applications such as biology and chemistry. Robust cybersecurity measures and clear frameworks for human accountability will be essential for the responsible development and deployment of these powerful technologies [3].

Autonomous experimentation represents a fundamental transformation in how scientific research is conducted, moving from manual, sequential processes to intelligent, self-driving systems that integrate AI, robotics, and advanced data analytics. These systems operate across a spectrum of autonomy levels, with current platforms typically achieving conditional or high autonomy (Levels 3-4) where they can perform multiple cycles of the scientific method with minimal human intervention.

The core value of autonomous experimentation lies in its ability to implement closed-loop workflows that continuously integrate experimental results with theoretical models, dramatically accelerating the pace of discovery. As demonstrated by platforms like AMASE, this approach can reduce experimentation time by factors of six or more while improving the quality and reproducibility of results. For researchers and drug development professionals, these capabilities offer the potential to overcome traditional bottlenecks in the research pipeline and bridge the "valley of death" between laboratory discoveries and viable products.

While significant challenges remain in developing causal understanding, adapting regulatory frameworks, and addressing security concerns, the strategic importance of autonomous experimentation is increasingly recognized at national levels. Initiatives like the Genesis Mission highlight the urgent ambition to leverage these technologies for scientific and competitive advantage. As the field continues to evolve, autonomous experimentation systems are poised to become indispensable tools in the scientific arsenal, augmenting human intelligence and enabling discoveries at unprecedented speed and scale.

The integration of artificial intelligence (AI) into laboratory sciences represents a paradigm shift from human-directed experimentation to self-driving autonomous research systems. This evolution, spanning from the expert systems of the 1980s to today's agentic AI, has fundamentally redefined the methodology of scientific discovery. Framed within the broader study of autonomous experimentation workflows, this transformation is characterized by the creation of closed-loop systems that seamlessly integrate hypothesis formulation, experimental execution, and data analysis without human intervention. The journey began with rule-based systems that encoded human expertise and has progressed to modern platforms capable of navigating complex experimental spaces such as materials science and drug discovery. This whitepaper traces the technical milestones in this evolution, provides detailed protocols for seminal experiments, and outlines the core components that constitute the modern autonomous research laboratory. By understanding this historical trajectory and the underlying mechanisms of autonomous workflows, researchers can better leverage these technologies to accelerate discovery in fields from biotechnology to advanced materials.

Historical Timeline: Key Milestones in Laboratory AI

The following table summarizes the pivotal developments in laboratory AI from the 1980s to the present, highlighting the transition from knowledge-based systems to fully autonomous discovery platforms.

Table 1: Evolution of AI in the Laboratory from the 1980s to Present

| Decade | Key Systems & Concepts | Core Capabilities | Domain Impact |
|---|---|---|---|
| 1980s | Expert Systems (e.g., DENDRAL) [9], First Driverless Car (1986) [10] | Rule-based reasoning, encoding expert knowledge, symbolic AI [9] [11] | Hypothesis formation in organic chemistry [9]; early robotics [10] |
| 1990s | Deep Blue (1997) [10], NASA Rovers (Spirit & Opportunity, 2004) [10] | High-speed processing of possibilities, autonomous navigation, real-time decision-making in harsh environments [10] | Demonstrated machine superiority in constrained tasks; autonomous data collection on Mars [10] |
| 2000s | Social Robots (Kismet, 2000) [10], IBM Watson (2011) [10], Siri/Alexa (2011/2014) [10] | Social/emotional interaction, natural language processing (NLP), question-answering, command-and-control systems [10] | Human-machine interaction; information retrieval from large datasets; voice-activated controls [10] |
| 2010s | Neural Networks & Deep Learning [10], AlphaGo (2016) [10], Generative AI (GPT-3, 2020) [11] | Pattern recognition, image/speech recognition, reinforcement learning in complex spaces, generative content creation [10] [11] | Revolutionized data analysis; demonstrated strategic problem-solving; enabled generative design of molecules/materials [10] [11] |
| 2020s | Autonomous Experimentation (AMASE, 2025) [5], Agentic AI [12], National Initiatives (Genesis Mission, 2025) [8] [13] | Fully closed-loop research, AI-guided decision-making, autonomous hypothesis testing, large-scale parallel experimentation [5] [12] | Self-driving laboratories for materials [5] and drug discovery; AI as a collaborative scientist [12] |

Detailed Experimental Protocols for Seminal AI Systems

Protocol 1: The DENDRAL Expert System (Late 1960s-1980s)

DENDRAL, developed at Stanford University, was a pioneering expert system that automated the decision-making process of organic chemists to identify molecular structures [9].

1. Objective: To infer the topological structure of an organic molecule from its mass spectral data and prior knowledge [9].

2. Materials & Workflow:

   - Input: Empirical formula of the compound and its mass spectrum [9].

   - Heuristic Generation: The system applied a set of constraints and heuristics (rules of thumb) derived from expert chemists to generate all possible acyclic and cyclic isomers that were consistent with the input data [9].

   - Structure Prediction: For each candidate structure, DENDRAL predicted a mass spectrum [9].

   - Matching & Ranking: The predicted spectra were compared against the empirical data. The candidate structures were ranked based on the closeness of the match, and the top-ranking structures were presented as the solution [9].
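
The generate, predict, and rank steps above follow a generate-and-test pattern, which can be sketched in a few lines. This is a toy illustration only: the candidate labels (`isomer_A` and so on), their peak lists, the `CANDIDATE_TABLE` lookup, and the Jaccard overlap score are all hypothetical stand-ins chosen for brevity. DENDRAL's actual isomer generator, spectrum predictor, and scoring were far more elaborate and are not reproduced here.

```python
from typing import Callable, Dict, FrozenSet, List, Tuple

Spectrum = FrozenSet[int]  # set of fragment masses (m/z) treated as peaks

# Hypothetical lookup: formula -> candidate structures with made-up predicted peaks.
CANDIDATE_TABLE: Dict[str, Dict[str, Spectrum]] = {
    "C3H8O": {
        "isomer_A": frozenset({29, 31, 42, 59, 60}),
        "isomer_B": frozenset({43, 45, 59}),
        "isomer_C": frozenset({29, 45, 59, 60}),
    }
}


def generate_candidates(
    formula: str, constraints: List[Callable[[Spectrum], bool]]
) -> Dict[str, Spectrum]:
    """Generate step: list candidates for the formula, keeping those that pass every constraint."""
    candidates = CANDIDATE_TABLE.get(formula, {})
    return {
        name: peaks
        for name, peaks in candidates.items()
        if all(rule(peaks) for rule in constraints)
    }


def match_score(predicted: Spectrum, observed: Spectrum) -> float:
    """Test step: Jaccard overlap between predicted and observed peak sets."""
    return len(predicted & observed) / len(predicted | observed)


def rank_candidates(
    candidates: Dict[str, Spectrum], observed: Spectrum
) -> List[Tuple[float, str]]:
    """Rank step: best-matching candidates first."""
    scored = [(match_score(peaks, observed), name) for name, peaks in candidates.items()]
    return sorted(scored, reverse=True)


observed_spectrum: Spectrum = frozenset({31, 42, 59, 60})  # made-up measurement
# Example heuristic: a plausible candidate must explain the peak at m/z 59.
must_explain_59 = lambda peaks: 59 in peaks

candidates = generate_candidates("C3H8O", [must_explain_59])
ranking = rank_candidates(candidates, observed_spectrum)
for score, name in ranking:
    print(f"{name}: {score:.2f}")
```

The constraint functions play the role of the expert heuristics: they prune the candidate set before any spectrum comparison is attempted.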

Protocol 2: The AMASE Platform for Autonomous Materials Discovery (2025)

The Autonomous MAterials Search Engine (AMASE) is a contemporary example of a closed-loop system for mapping materials phase diagrams [5].

1. Objective: To autonomously and efficiently map a materials phase diagram (composition vs. temperature) with minimal human intervention [5].

2. Materials & Workflow:

   - Initialization: A thin-film combinatorial library, containing a large range of material compositions, is loaded into a diffractometer [5].

   - AI-Directed Data Acquisition: The AI algorithm instructs the diffractometer to analyze the crystal structure at a specific composition and temperature [5].

   - Machine Learning Analysis: A machine learning model processes the acquired X-ray diffraction data to determine the crystal phase distribution at that specific condition [5].

   - Theory Integration: The experimental phase information is fed into a CALPHAD (CALculation of PHAse Diagrams) system, a computational platform based on thermodynamics, to predict the entire phase diagram [5].

   - Autonomous Decision Point: The updated CALPHAD model identifies the most uncertain or interesting region of the phase diagram. This prediction becomes the input for the next cycle, directing the diffractometer to a new composition/temperature coordinate [5].

   - Iteration: This closed loop of experiment → ML analysis → theory update → new experiment continues autonomously, refining the phase diagram with each iteration. This method has been shown to reduce overall experimentation time by a factor of six [5].
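
The decision point in this protocol, measuring next wherever the current model is least certain, can be illustrated with a toy phase-boundary search. The sketch below is not AMASE: `measure_phase` replaces the diffractometer and phase-identification model with a made-up two-phase system, and a simple per-temperature bracket (`BoundaryEstimate`) replaces the CALPHAD thermodynamic model. All names and numbers are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


def measure_phase(composition: float, temperature: float) -> str:
    """Stand-in for a diffraction measurement plus phase identification (made-up system)."""
    boundary = 0.3 + 0.4 * temperature  # hypothetical true phase boundary
    return "alpha" if composition < boundary else "beta"


@dataclass
class BoundaryEstimate:
    """Composition interval known to contain the phase boundary at one temperature."""
    low: float = 0.0
    high: float = 1.0

    @property
    def uncertainty(self) -> float:
        return self.high - self.low


def map_phase_boundary(
    temperatures: List[float], tolerance: float = 0.01
) -> Tuple[Dict[float, BoundaryEstimate], int]:
    """Repeatedly measure at the midpoint of the least certain bracket."""
    model = {t: BoundaryEstimate() for t in temperatures}
    n_measurements = 0
    while True:
        # Decision point: find the temperature whose boundary is least well known.
        temperature, estimate = max(model.items(), key=lambda item: item[1].uncertainty)
        if estimate.uncertainty <= tolerance:
            break  # every boundary is located to within the tolerance
        composition = (estimate.low + estimate.high) / 2
        phase = measure_phase(composition, temperature)  # experiment + analysis
        n_measurements += 1
        # Model update: shrink the bracket on the side ruled out by the result.
        if phase == "alpha":
            estimate.low = composition
        else:
            estimate.high = composition
    return model, n_measurements


temperatures = [i / 4 for i in range(5)]  # normalized temperatures 0, 0.25, ..., 1
model, n_measurements = map_phase_boundary(temperatures)
for temperature, estimate in model.items():
    print(f"T={temperature:.2f}: boundary in [{estimate.low:.4f}, {estimate.high:.4f}]")
print(f"{n_measurements} measurements")
```

In this toy setting, an exhaustive scan at the same 0.01 resolution would need 5 x 100 = 500 measurements, which shows why model-guided selection reduces experiment count. The size of that saving is specific to this made-up example and says nothing about the figures reported for AMASE.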

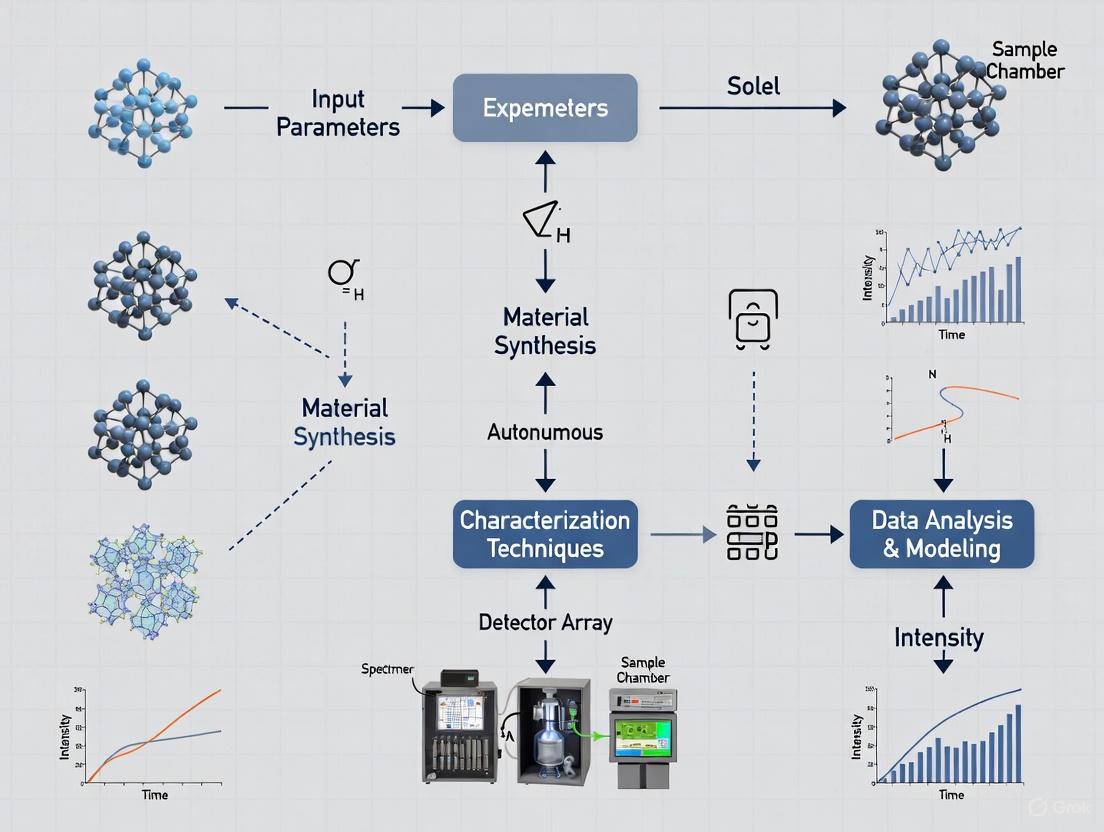

The workflow of a modern autonomous discovery system like AMASE can be visualized as a continuous, iterative cycle.

Autonomous Materials Discovery Workflow

The Scientist's Toolkit: Core Components for Autonomous Experimentation

The implementation of autonomous research requires a suite of integrated hardware and software components. The following table details the key "research reagents" – the essential solutions and tools – that constitute a modern autonomous experimentation platform.

Table 2: Key Research Reagent Solutions for Autonomous Experimentation

| Component | Function | Example Implementation |
|---|---|---|
| Combinatorial Library | A substrate containing a large number of systematically varying samples (e.g., in composition, structure). Serves as the physical search space for the AI. | Thin-film library with a gradient of material compositions [5]. |
| Robotic Instrumentation | Automated hardware capable of executing physical tasks (synthesis, measurement) without human intervention. | Robotic arm for sample handling; automated diffractometer for structural analysis [8] [5]. |
| AI Modeling & Analysis Framework | The core intelligence of the system. Includes machine learning models for real-time data analysis and prediction. | Machine learning code for crystal phase identification from diffraction data [5]. |
| Domain-Specific Foundation Models | Large-scale AI models pre-trained on vast amounts of scientific data for a specific field (e.g., chemistry, biology). | A foundation model trained on protein sequences and structures for predicting molecular function [8] [13]. |
| Theoretical Simulation Engine | A computational model that provides a physics-based or empirical framework to interpret results and guide exploration. | CALPHAD (CALculation of PHAse Diagrams) for thermodynamic modeling [5]. |
| Decision-Making AI Agent | The software component that processes data from all sources, evaluates the state of the experiment, and decides the next optimal step. | An agent using Bayesian optimization to select the most informative experiment to perform next [12]. |

The Principles of Agentic AI and Autonomous Experimentation

Modern autonomous experimentation is powered by agentic AI: systems that can reason, retrieve information, execute tasks, and adapt. The principles defining these systems, as outlined in strategic intelligence research, are summarized below [12].

Table 3: Core Principles of Agentic AI for Autonomous Experimentation

| Principle | Capability Description | Impact on Research |
|---|---|---|
| Continuous Hypothesis Generation | Agents constantly monitor live data to formulate new testable ideas without human input. | Ensures the experiment pipeline is never empty, dramatically compressing the innovation cycle [12]. |
| Parallelized Experimentation | Running dozens or hundreds of experimental variations concurrently across different segments. | Accelerates the rate of discovery and reduces time-to-insight by exploring multiple directions at once [12]. |
| Adaptive Experiment Design | Adjusting experimental parameters (variables, sample sizes) on the fly based on interim results. | Prevents wasted cycles and reallocates resources to the most promising avenues of inquiry [12]. |
| Multi-Metric Optimization | Balancing multiple Key Performance Indicators (KPIs) at once (e.g., yield, purity, cost). | Leads to more robust and practical solutions by avoiding the trap of optimizing for a single, potentially misleading metric [12]. |
| Continuous Learning Integration | Feeding experimental results directly back into the AI's reasoning and decision models in near real-time. | Enables fast pivots and creates a compounding effect of improvements, as the system learns from every outcome [12]. |
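
One common way to balance several metrics at once is to keep only the Pareto-optimal outcomes, meaning those that no other outcome beats on every metric. The sketch below applies that idea to the yield, purity, and cost example from the table. The `Outcome` records and their numbers are invented for illustration, and Pareto filtering is one of several possible approaches (weighted scoring is another), not a method attributed to the cited source.

```python
from typing import List, NamedTuple


class Outcome(NamedTuple):
    name: str
    yield_pct: float   # higher is better
    purity_pct: float  # higher is better
    cost: float        # lower is better


def dominates(a: Outcome, b: Outcome) -> bool:
    """True if a is at least as good as b on every metric and strictly better on one."""
    no_worse = (
        a.yield_pct >= b.yield_pct
        and a.purity_pct >= b.purity_pct
        and a.cost <= b.cost
    )
    strictly_better = (
        a.yield_pct > b.yield_pct
        or a.purity_pct > b.purity_pct
        or a.cost < b.cost
    )
    return no_worse and strictly_better


def pareto_front(outcomes: List[Outcome]) -> List[Outcome]:
    """Keep the outcomes that no other outcome dominates."""
    return [o for o in outcomes if not any(dominates(p, o) for p in outcomes)]


# Made-up results from four hypothetical experimental conditions.
results = [
    Outcome("A", yield_pct=80.0, purity_pct=95.0, cost=10.0),
    Outcome("B", yield_pct=85.0, purity_pct=90.0, cost=12.0),
    Outcome("C", yield_pct=78.0, purity_pct=94.0, cost=11.0),
    Outcome("D", yield_pct=85.0, purity_pct=90.0, cost=15.0),
]

front = pareto_front(results)
print([o.name for o in front])
```

Here condition C loses to A on all three metrics and D matches B at a higher cost, so only A and B remain as genuine trade-offs for a scientist or agent to choose between.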

The logical relationships and data flow between the scientist, the AI agent, and the experimental hardware in an agentic system can be complex. The following diagram illustrates this integrated architecture.

[Figure: Agentic AI System Architecture]

Future Outlook: National Initiatives and Strategic Direction

The future of AI in the laboratory is being shaped by large-scale, coordinated national efforts. The recent launch of the Genesis Mission in the United States exemplifies this trend. Framed as a national effort "comparable in urgency and ambition to the Manhattan Project," its goal is to create an integrated AI platform that harnesses federal scientific datasets and supercomputing resources [8] [13].

- Platform Integration: The mission will establish the American Science and Security Platform, providing integrated high-performance computing, AI modeling frameworks, domain-specific foundation models, and tools for autonomous experimentation [13].

- Priority Domains: The initiative will focus on addressing national challenges in key areas including advanced manufacturing, biotechnology, critical materials, nuclear energy, quantum information science, and semiconductors [8] [13].

- Operational Tempo: The executive order mandates an aggressive timeline, requiring the identification of computational resources within 90 days, initial datasets within 120 days, and a demonstration of initial operating capability within 270 days [13].

This initiative signals a strategic shift towards leveraging AI not just as a tool within individual labs, but as a foundational component of a national science and technology ecosystem, aiming to dramatically accelerate the pace of discovery across multiple critical fields [8] [13].

The convergence of robotics, artificial intelligence (AI), and machine learning (ML) creates an integrated system capable of performing complex tasks with perception, adaptability, and autonomy. In autonomous experimentation workflows for drug development, this trifecta transforms traditional research from a linear, manual process into a dynamic, self-optimizing loop. While these technologies are distinct, their integration produces systems greater than the sum of their parts.

- Robotics provides the physical embodiment to execute tasks in the laboratory environment, from pipetting liquids to handling microplates.

- Artificial Intelligence (AI) provides the overarching cognitive framework for decision-making, enabling robots to reason through complex situations and make informed decisions without human intervention [14].

- Machine Learning (ML) is a subset of AI that gives robots the ability to learn from data and improve their performance over time without being explicitly reprogrammed for every new situation [15].

This technical guide examines the core components of this synergistic relationship, its implementation in autonomous research workflows, and the detailed experimental protocols that are reshaping the future of life sciences.

Core Technologies and Their Synergistic Relationships

The Role of Robotics

Robotics provides the hardware and control systems that automate physical laboratory procedures. Modern robotic systems for life sciences include:

- Automated Liquid Handlers: For precise, high-throughput reagent dispensing.

- Autonomous Mobile Robots (AMRs): For transporting materials between workstations [14] [16].

- Collaborative Robots (Cobots): Designed to work safely alongside human technicians, featuring enhanced safety sensors and intuitive programming interfaces [16].

- Robotic Arms: For complex manipulation tasks such as instrument tending and sample preparation.

The Role of Artificial Intelligence

AI technologies enable robotic systems to move beyond simple pre-programmed motions and respond intelligently to complex, unstructured laboratory environments. Key AI capabilities in robotics include:

- Perception: Using sensor data and computer vision to understand the laboratory environment [14].

- Reasoning: Making informed decisions about experimental steps and workflow adjustments.

- Adaptation: Adjusting procedures in response to unexpected outcomes or changing conditions [14].

- Autonomy: Executing multi-step experimental protocols with minimal human supervision [14].

The Role of Machine Learning

ML provides the specific algorithms and techniques that enable robots to learn from experimental data and improve their performance iteratively. ML in robotics typically follows a three-step learning loop [15]:

- Data Collection: The robot gathers data from sensors and experimental outcomes.

- Model Training: Algorithms process this data to identify patterns and create predictive models.

- Action and Correction: The robot implements actions based on its models, then refines them based on results.
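The three steps above can be sketched with a toy calibration task: a liquid handler that systematically under-dispenses learns a correction from its own measurements. The `simulated_dispense` function is a hypothetical stand-in for real hardware, and the 5% shortfall and 0.5 µL offset are assumptions made for the example.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def simulated_dispense(commanded_ul):
    """Hypothetical instrument: delivers 5% short, minus a fixed 0.5 uL offset."""
    return 0.95 * commanded_ul - 0.5


# 1. Data collection: command several volumes and record what was delivered.
commanded = [10.0, 20.0, 50.0, 100.0]
measured = [simulated_dispense(v) for v in commanded]

# 2. Model training: learn the commanded -> delivered relationship.
slope, intercept = fit_line(commanded, measured)

# 3. Action and correction: invert the model to hit a target volume.
target_ul = 40.0
corrected_command = (target_ul - intercept) / slope
delivered = simulated_dispense(corrected_command)
print(f"command {corrected_command:.2f} uL -> delivered {delivered:.2f} uL")
```

In a real system the measurements would be noisy and the loop would repeat, refitting the model as new data arrives.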

Table 1: Primary Machine Learning Methods in Robotics

| ML Method | Core Function | Application in Experimental Workflows |

|---|---|---|

| Supervised Learning | Learns from labeled training data | Classifying cell types in microscopy images; identifying compound structures [15]. |

| Unsupervised Learning | Finds hidden patterns in unlabeled data | Detecting anomalous experimental results; clustering similar drug response profiles [15]. |

| Reinforcement Learning | Learns optimal actions through trial-and-error rewarded by a feedback system | Optimizing experimental parameters like temperature or concentration; improving robotic motion paths [14] [15]. |

| Deep Learning | Uses neural networks with multiple layers to process complex data | Predicting protein-ligand binding affinity; analyzing high-content screening data [17]. |
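As an illustration of the reinforcement-learning row, the sketch below uses an epsilon-greedy multi-armed bandit, one of the simplest trial-and-error methods, to choose among three incubation temperatures. The temperatures, the "true" yields, and the noise level are all assumptions invented for the example; a real assay would replace the simulated reward.

```python
import random


def run_bandit(true_yield, n_rounds=300, epsilon=0.1, seed=0):
    """Epsilon-greedy selection among discrete settings with noisy rewards."""
    rng = random.Random(seed)
    arms = list(true_yield)
    counts = {arm: 0 for arm in arms}
    estimates = {arm: 0.0 for arm in arms}
    for t in range(n_rounds):
        if t < len(arms):
            arm = arms[t]                       # try every setting once
        elif rng.random() < epsilon:
            arm = rng.choice(arms)              # explore a random setting
        else:
            arm = max(arms, key=estimates.get)  # exploit the best estimate so far
        # Simulated assay readout: hidden true yield plus measurement noise.
        reward = true_yield[arm] + rng.gauss(0.0, 0.03)
        counts[arm] += 1
        # Incremental update of the running mean reward for this setting.
        estimates[arm] += (reward - estimates[arm]) / counts[arm]
    return estimates, counts


# Hypothetical mean yields at three temperatures (degrees C).
true_yield = {25: 0.40, 37: 0.75, 50: 0.55}
estimates, counts = run_bandit(true_yield)
best = max(estimates, key=estimates.get)
print(best, counts)
```

The `epsilon` parameter controls how often the system spends an experiment on exploration instead of repeating the current best setting.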

Synergistic Integration in Autonomous Systems

The true power of the trifecta emerges when these technologies are tightly integrated. The AI component provides high-level reasoning and experimental design, the ML component continuously improves specific task performance based on data, and the robotics component executes physical actions in the real world. This creates a closed-loop design-make-test-analyze cycle that can operate autonomously [18].

Figure 1: The synergistic relationship between AI, ML, and Robotics creates an autonomous system capable of intelligent action in physical environments.

The Scientist's Toolkit: Research Reagent Solutions

Implementing autonomous experimentation requires both physical reagents and specialized software tools. The following table details essential components of an AI-driven robotics platform for drug discovery.

Table 2: Essential Research Reagents and Platform Components for Autonomous Experimentation

| Component | Function | Specific Examples |

|---|---|---|

| AI-Driven Design Platforms | Generate novel molecular structures and predict properties | Exscientia's DesignStudio [18]; Insilico Medicine's generative chemistry platform [18] |

| Automated Synthesis Systems | Physically produce predicted compounds with minimal human intervention | Robotics-mediated "AutomationStudio" [18]; Nuclera's eProtein Discovery System [19] |

| High-Content Screening Assays | Generate rich, multidimensional biological data for ML training | Recursion's phenomic screening platform [18]; 3D cell culture systems like mo:re's MO:BOT [19] |

| Integrated Data Management | Unify experimental data with metadata for ML model training | Cenevo's Mosaic and Labguru platforms [19]; Sonrai's Discovery platform [19] |

| Specialized ML Models | Analyze complex biological data and predict experimental outcomes | Convolutional Neural Networks for image analysis [15]; Transformers for multi-modal data integration [15] |

Quantitative Analysis of Performance and Capabilities

The integration of robotics, AI, and ML delivers measurable improvements in drug discovery efficiency and effectiveness. The following data summarizes key performance metrics from implemented systems.

Table 3: Performance Metrics of AI and Robotics in Drug Discovery

| Metric | Traditional Approach | AI/Robotics-Enhanced Approach | Improvement |

|---|---|---|---|

| Discovery Timeline | ~5 years to clinical candidate [17] | As little as 18 months to Phase I [18] [17] | ~70% reduction [18] |

| Compound Synthesis Efficiency | 10-100+ compounds synthesized and tested [18] | ~70% faster design cycles with 10x fewer compounds [18] | Significant reduction in resource utilization |

| Market Growth | Traditional pharmaceutical R&D growth | AI in robotics market growing at 29.4% CAGR to $50.2B by 2028 [14] | Rapid, compounding market expansion |

| Automation Potential | Manual laboratory work | AI agents could automate ~44% of work hours; robots ~13% [20] | Transformative workforce impact |
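The ~70% figure in the Discovery Timeline row can be checked with simple arithmetic on the two durations in the table. The sketch below assumes the two figures are directly comparable, although the table lists slightly different milestones for each (clinical candidate versus Phase I).

```python
def percent_reduction(baseline, improved):
    """Relative reduction of `improved` versus `baseline`, in percent."""
    return 100.0 * (baseline - improved) / baseline


traditional_months = 5 * 12  # ~5 years, from the table
ai_months = 18               # as little as 18 months, from the table
reduction = percent_reduction(traditional_months, ai_months)
print(f"{reduction:.0f}% shorter")  # 70% shorter
```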

Experimental Protocols for Autonomous Experimentation

Protocol 1: Automated Compound Screening and Optimization

This protocol details a closed-loop workflow for autonomous drug candidate screening and optimization, integrating AI-driven design with robotic validation.

Objective: To iteratively design, synthesize, and test novel compounds for a specific therapeutic target with minimal human intervention.

Workflow:

Target Identification Phase:

Compound Design Phase:

- Generative AI models propose novel molecular structures satisfying target product profiles (potency, selectivity, ADME properties) [18].

- ML Method: Reinforcement learning optimizes for multiple parameters simultaneously [15].

- Physics-based simulations (e.g., Schrödinger's platform) predict binding affinities [18].

Robotic Synthesis Phase:

- Automated systems synthesize prioritized compounds.

- Robotic System: Liquid handlers and robotic arms prepare reaction mixtures in 96- or 384-well plates [19].

- Purification and quality control are performed inline using automated chromatography and mass spectrometry systems.

Biological Testing Phase:

- Robotic systems conduct high-throughput screening against target proteins and cellular models.

- Assay Technology: Use 3D organoid models (e.g., mo:re's MO:BOT platform) for human-relevant data [19].

- High-content imaging captures multidimensional response data.

Data Analysis and Learning Phase:

- ML models analyze screening results to identify structure-activity relationships.

- Algorithm: Deep learning networks process high-content imaging data to extract subtle phenotypic features [17].

- Results feed back into the generative AI to design the next compound series.

Figure 2: The autonomous Design-Make-Test-Analyze cycle for closed-loop drug discovery.

Protocol 2: Autonomous Protein Expression and Characterization

This protocol outlines an integrated workflow for high-throughput protein production, particularly valuable for structural biology and assay development.

Objective: To rapidly screen multiple construct designs and expression conditions to produce soluble, active protein.

Workflow:

DNA Template Design:

- AI algorithms optimize codon usage and predict optimal construct boundaries based on structural data.

- Data Source: AlphaFold-predicted structures inform construct design [17].

Parallelized Expression Screening:

Automated Purification and Quality Control:

- Robotic arms perform affinity purification, buffer exchange, and concentration.

- Integrated analytics (UV-Vis, dynamic light scattering) assess protein quality and quantity.

Activity and Characterization Assays:

- Automated systems conduct functional assays (e.g., enzyme activity, binding measurements).

- ML Application: Computer vision algorithms analyze gel electrophoresis images to assess purity [15].

Data Integration and Model Refinement:

- Experimental results train ML models to improve future construct design predictions.

- Output: High-quality protein for downstream applications within 48 hours, versus weeks with traditional methods [19].

Implementation Challenges and Future Directions

Despite significant progress, several technical challenges remain in fully realizing autonomous experimentation systems:

- Sim-to-Real Transfer: Models trained in simulation often underperform when deployed on physical robots due to differences in lighting, friction, or sensor noise [15].

- Data Quality and Quantity: ML models require large, diverse, and accurately labeled datasets, which can be expensive and difficult to gather in biological contexts [15] [21].

- Hardware Constraints: ML processing requires significant compute power and energy, creating challenges for compact or mobile laboratory robots [15].

- Interpretability and Trust: The "black box" nature of many complex ML models raises concerns in regulated environments like drug development [17].

Future directions focus on addressing these limitations through:

- Greater Autonomy: Robots will rely less on human supervision as ML models mature [15].

- On-Device Learning: Edge computing will allow robots to process and learn directly on their hardware, reducing latency [15].

- Multi-Modal Models: Combining vision, audio, and tactile data will enable robots to interpret complex environments more like humans [15].

- Generative AI Integration: Foundation models will enhance planning, reasoning, and natural language interaction [15].

As these technologies continue to mature and integrate, the autonomous experimentation laboratory represents not just an incremental improvement but a fundamental transformation of how scientific discovery is conducted.

The field of scientific research, particularly in drug development and materials science, is undergoing a fundamental transformation driven by the emergence of autonomous agents. This shift from traditional automation to agentic systems represents more than a simple technological upgrade; it constitutes a paradigm change in how experimentation is conceived, executed, and optimized. Traditional automation has served research well for decades, providing reliability in repetitive, rule-based tasks. However, the complex, multi-variable challenges of modern science—from optimizing synthetic pathways to characterizing novel therapeutic compounds—demand systems capable of intelligent adaptation, dynamic decision-making, and proactive experimentation. Autonomous agents, powered by advances in artificial intelligence, machine learning, and robotics, are poised to meet these demands, ushering in an era of accelerated discovery and enhanced research efficacy.

Framed within the broader thesis on autonomous experimentation workflows, this evolution marks the transition from tools that execute predefined procedures to collaborative partners that design and learn from experiments. This whitepaper details the core architectural, functional, and operational differences between traditional automation and autonomous agents, providing researchers and drug development professionals with a technical framework for evaluating and implementing these transformative technologies.

Definitions and Core Concepts

What is Traditional Automation?

Traditional automation in a research context consists of rule-based systems designed to execute specific, predefined laboratory procedures without human intervention. These systems operate on static logic and structured workflows, following a deterministic path from input to output. In practice, this encompasses robotic liquid handlers programmed for specific plate layouts, automated high-throughput screening (HTS) systems executing identical assays across thousands of wells, and automated analyzers following fixed measurement protocols.

The core characteristic of traditional automation is its reactive nature; it performs reliably only in controlled environments where inputs and processes are predictable and well-defined [22]. For instance, an automated polymerase chain reaction (PCR) setup system excels at repetitively mixing samples and reagents in a predefined ratio and volume but cannot dynamically adjust its protocol if an unexpected result is detected mid-process. Its intelligence is confined to the initial programming, and any deviation or failure typically requires manual intervention and system reconfiguration, thereby limiting its scope to repetitive, high-volume tasks where variability is minimal.

What is an Autonomous Agent?

An autonomous agent is an intelligent software system that perceives its environment (e.g., experimental data, instrument status), makes decisions to achieve specified research goals, and acts upon those decisions by orchestrating laboratory instruments and workflows [23] [24]. Unlike traditional automation, autonomous agents are proactive and goal-driven. They are not programmed with fixed steps but are equipped with high-level objectives, such as "maximize the yield of compound X" or "identify the crystal structure of this material."

These agents leverage a suite of technologies, including large language models for interpreting scientific literature, machine learning for data analysis and model building, and application programming interfaces for seamless integration with laboratory hardware and software [22] [24]. A key differentiator is their incorporation of a persistent, evolving memory, allowing them to learn from past experimental outcomes—both successes and failures—to continuously refine their strategy and improve performance over time [24]. This capacity for self-directed learning and adaptation makes them uniquely suited for navigating the complex, often unpredictable, landscape of scientific research.

Architectural and Functional Comparison

The divergence between these two paradigms is rooted in their underlying architecture, which dictates their capabilities and applications in a research setting.

Technical Architecture

Traditional Automation relies on a linear, procedural architecture. Its workflow is a fixed sequence: receive a trigger (e.g., a sample is loaded), execute a predefined series of actions (e.g., aspirate, dispense, mix, measure), and output a result [22]. This architecture depends on if-then-else rules and is typically integrated at the user interface level or via static APIs, mimicking human manual actions but with greater speed and precision.

Autonomous Agents are built on a cyclic, cognitive architecture known as the perceive-decide-act loop [23]. This loop is supported by a layered technical stack that includes a reasoning engine (often an LLM), a planning module that decomposes goals into actionable steps, a memory layer for retaining context and results, and an orchestration layer that communicates with instruments via dynamic API calls [22] [24]. This allows the agent to function not as a mere executor, but as an integrated project manager for the experiment.
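A minimal sketch of the perceive-decide-act loop is shown below. It is illustrative only: `SimulatedReactor` is a hypothetical stand-in for an instrument API, the `decide` method uses a simple hill-climbing rule where a production agent would call a reasoning engine or planner, and all names and numbers are assumptions.

```python
from dataclasses import dataclass, field


class SimulatedReactor:
    """Hypothetical instrument; simulated yield peaks at 60 C."""

    def __init__(self):
        self.temperature_c = 40.0

    def read_yield(self):
        return max(0.0, 1.0 - abs(self.temperature_c - 60.0) / 50.0)

    def set_temperature(self, value_c):
        self.temperature_c = value_c


@dataclass
class Agent:
    instrument: SimulatedReactor
    step_c: float = 5.0
    memory: list = field(default_factory=list)  # memory layer: past observations

    def perceive(self):
        return {"temperature_c": self.instrument.temperature_c,
                "yield": self.instrument.read_yield()}

    def decide(self, observation):
        # If the last move made things worse, reverse direction and halve the step.
        if self.memory and observation["yield"] < self.memory[-1]["yield"]:
            self.step_c = -self.step_c / 2
        return observation["temperature_c"] + self.step_c

    def act(self, new_temperature_c):
        # Orchestration layer: here a single simulated instrument call.
        self.instrument.set_temperature(new_temperature_c)

    def run(self, goal_yield, max_cycles=20):
        for _ in range(max_cycles):
            observation = self.perceive()
            if observation["yield"] >= goal_yield:
                return observation
            next_temperature = self.decide(observation)
            self.memory.append(observation)
            self.act(next_temperature)
        return self.perceive()


agent = Agent(SimulatedReactor())
result = agent.run(goal_yield=0.95)
print(result, len(agent.memory))
```

The agent is given only a goal (`goal_yield`) and works out the sequence of actions itself, whereas a traditional automation script would hard-code the temperature schedule in advance.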

The diagram below visualizes the fundamental workflow difference between a traditional automated system and an autonomous agent.

Key Capabilities and Differences

The architectural divide translates into distinct functional capabilities, as summarized in the table below.

Table 1: Functional Comparison of Traditional Automation vs. Autonomous Agents

| Dimension | Traditional Automation | Autonomous Agent |

|---|---|---|

| Autonomy & Initiative | Reactive; acts only when triggered by a predefined event or command [24]. | Proactive & goal-driven; can initiate actions and experiments to achieve an objective [24]. |

| Learning & Adaptability | None; cannot learn or improve from experience. Rules must be manually updated [23] [22]. | High; learns from data, feedback, and past interactions to adapt strategies and improve outcomes [23] [22]. |

| Decision-Making | Follows fixed, pre-programmed rules and logic paths [22]. | Makes dynamic, context-aware decisions using real-time data and historical memory [23] [22]. |

| Data Handling | Works exclusively with structured data in expected formats [22]. | Processes structured, semi-structured, and unstructured data (e.g., journal articles, raw spectra) [22]. |

| Task Complexity | Suited for simple, repetitive, and predictable tasks (e.g., sample aliquoting) [22]. | Excels at complex, multi-step tasks with uncertain outcomes (e.g., reaction optimization) [22]. |

| Scalability & Maintenance | Scaling requires adding more hardware/scripts. High maintenance for process changes [23] [22]. | Modular and reusable. Lower maintenance due to self-optimization and cloud-native design [23] [22]. |

| Human Role | Human-in-the-loop for setup, monitoring, and exception handling [24]. | Human-on-the-loop; provides high-level oversight and strategic guidance [24]. |

The Scientist's Toolkit: Research Reagent Solutions for Autonomous Experimentation

Transitioning to an agent-driven workflow requires not only new software but also a reconsideration of laboratory materials. The following table lists key reagents and materials, chosen for reliability and compatibility with automated platforms, together with their functions in autonomous experimentation.

Table 2: Essential Research Reagents for Automated Workflows

| Research Reagent / Material | Primary Function in Experimental Workflows |

|---|---|

| Lyophilized Assay Kits | Pre-mixed, stable reagents for consistent, high-throughput biochemical assays (e.g., cell viability, enzyme activity). Minimizes manual pipetting error. |

| Barcoded Microtiter Plates | Standardized sample containers that enable automated plate readers and liquid handlers to track and process hundreds of samples simultaneously. |

| Stable Cell Line Libraries | Genetically uniform cells ensuring experimental reproducibility across long-duration, iterative experiments run by autonomous systems. |

| Broad-Spectrum Catalyst Libraries | Diverse sets of catalysts for autonomous platforms to rapidly screen and discover optimal conditions for chemical synthesis. |

| API-Accessible Chemical Databases | Digital repositories (e.g., PubChem, Reaxys) that agents query to inform experiment design and predict compound properties. |

Experimental Protocols for Autonomous Workflows

To illustrate the practical application of autonomous agents, below are detailed methodologies for two key experiment types relevant to drug development and materials science.

Protocol 1: Multi-Parameter Reaction Optimization

This protocol is designed for an autonomous agent to optimize a chemical synthesis, such as the yield of a pharmaceutical intermediate.

- Goal Definition: The researcher provides the high-level goal: "Maximize yield of compound P from starting materials A and B."

- Hypothesis Generation & DoE: The agent queries relevant literature and historical data from its memory to identify key reaction parameters (e.g., temperature, catalyst concentration, pH, solvent ratio). It then uses this information to generate an initial Design of Experiments (DoE), often a space-filling design such as a Sobol sequence, to explore the parameter space efficiently.

- Workflow Execution: The agent orchestrates the laboratory instruments via their APIs:

- Instructs the liquid handling robot to prepare reaction vials according to the DoE conditions.

- Commands the automated reactor to run the reactions at specified temperatures and durations.

- Directs the HPLC-MS system to analyze the composition and yield of each reaction product.

- Analysis and Decision: The agent analyzes the yield data. Using a machine learning model (e.g., a Bayesian optimizer), it identifies the most promising regions of the parameter space and generates a new set of experimental conditions predicted to improve yield.

- Iteration: The Workflow Execution and Analysis and Decision steps are repeated in a closed loop until the yield converges or the resource budget is exhausted. The final optimized protocol and all data are stored in the agent's memory for future use.
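The closed loop described in this protocol can be sketched with the standard library alone. Two substitutions are made to keep the example dependency-free and should be read as such: a Halton sequence stands in for the Sobol sequence (both are low-discrepancy, space-filling designs), and a simple "sample near the current best, then narrow the search" rule stands in for a Bayesian optimizer. The response surface, parameter names, and bounds are hypothetical.

```python
import random


def halton(index, base):
    """Element `index` (1-based) of the van der Corput sequence in `base`."""
    result, fraction = 0.0, 1.0 / base
    while index > 0:
        result += (index % base) * fraction
        index //= base
        fraction /= base
    return result


def initial_design(n_points, bounds):
    """Space-filling design: one Halton dimension per parameter, scaled to bounds."""
    primes = [2, 3, 5, 7]  # supports up to four parameters in this sketch
    design = []
    for i in range(1, n_points + 1):
        point = {}
        for (name, (lo, hi)), base in zip(bounds.items(), primes):
            point[name] = lo + halton(i, base) * (hi - lo)
        design.append(point)
    return design


def simulated_yield(cond):
    """Hypothetical response surface peaking at 85 C and 1.2 mol% catalyst."""
    return (100.0
            - 0.05 * (cond["temp_c"] - 85.0) ** 2
            - 20.0 * (cond["cat_molpct"] - 1.2) ** 2)


def propose_near(best, bounds, width, rng):
    """Sample a new condition in a box around the best one, clipped to bounds."""
    point = {}
    for name, (lo, hi) in bounds.items():
        span = (hi - lo) * width
        point[name] = min(hi, max(lo, best[name] + rng.uniform(-span, span)))
    return point


def optimize(bounds, n_initial=8, n_rounds=10, batch=4, seed=0):
    rng = random.Random(seed)
    # Initial DoE, "executed" here against the simulated response surface.
    history = [(c, simulated_yield(c)) for c in initial_design(n_initial, bounds)]
    width = 0.25
    for _ in range(n_rounds):
        best_cond, _ = max(history, key=lambda item: item[1])
        batch_conds = [propose_near(best_cond, bounds, width, rng) for _ in range(batch)]
        history += [(c, simulated_yield(c)) for c in batch_conds]
        width *= 0.7  # narrow the search as the loop converges
    return max(history, key=lambda item: item[1]), history


bounds = {"temp_c": (20.0, 120.0), "cat_molpct": (0.5, 5.0)}
(best_cond, best_yield), history = optimize(bounds)
print(best_cond, round(best_yield, 2), len(history))
```

In a real deployment `simulated_yield` would be replaced by the instrument calls and HPLC-MS readout, and the proposal rule by a surrogate-model optimizer.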

Protocol 2: High-Throughput Protein Characterization

This protocol enables the autonomous characterization of engineered proteins for therapeutic candidate screening.

- Goal Definition: The researcher specifies the goal: "For this library of 1000 engineered protein variants, determine expression level and binding affinity to target antigen T."

- Workflow Planning: The agent decomposes the goal into parallelized sub-tasks: expression culture, purification, quantification, and affinity measurement.

- Orchestrated Execution:

- The agent instructs a bioreactor to express the proteins in small-scale cultures.

- It then coordinates a protein purification system (e.g., an automated chromatography system) to purify each variant.

- A plate reader is commanded to measure the concentration of each purified protein.

- Finally, the agent runs a high-throughput surface plasmon resonance (SPR) or bio-layer interferometry (BLI) assay to measure binding kinetics.

- Data Integration and Reporting: The agent correlates data from all stages (expression, yield, affinity), ranks the variants based on predefined multi-parameter criteria, and generates a summary report highlighting the top candidates for further development.
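The final ranking step might look like the sketch below, which filters variants against thresholds and then sorts by binding affinity. The `Variant` fields, threshold values, and data are illustrative assumptions; actual criteria would be defined by the researcher in the goal definition.

```python
from dataclasses import dataclass


@dataclass
class Variant:
    name: str
    expression_mg_per_l: float  # higher is better
    kd_nm: float                # dissociation constant; lower means tighter binding


def rank_variants(variants, min_expression=5.0, max_kd_nm=100.0):
    """Drop variants that miss either threshold, then rank by affinity.

    Ties in affinity are broken by higher expression level.
    """
    passing = [
        v for v in variants
        if v.expression_mg_per_l >= min_expression and v.kd_nm <= max_kd_nm
    ]
    return sorted(passing, key=lambda v: (v.kd_nm, -v.expression_mg_per_l))


# Hypothetical measurements for four variants.
library = [
    Variant("V001", 12.0, 8.0),
    Variant("V002", 3.0, 2.0),     # binds tightly but expresses too poorly
    Variant("V003", 20.0, 8.0),
    Variant("V004", 15.0, 250.0),  # expresses well but binds too weakly
]
ranked = rank_variants(library)
print([v.name for v in ranked])
```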

The following diagram maps the logical flow of this complex, multi-instrument experiment.

Implications for Autonomous Experimentation Research

The integration of autonomous agents into research workflows signifies a move toward Programmable Cloud Laboratories (PCLs). As highlighted by the U.S. National Science Foundation's "PCL Test Bed" initiative, the future lies in distributed, remotely accessible laboratory facilities that combine AI-enabled experiment design, automated preparations, and data analysis [25]. This vision is built upon the core capabilities of autonomous agents.

For researchers, this paradigm shift promises a significant acceleration of the discovery cycle. It reduces human-intensive labor, minimizes cognitive biases in experimental design, and enables the exploration of vast experimental spaces that were previously intractable. This is particularly critical in fields like drug development, where optimizing lead compounds or understanding complex biological pathways requires testing thousands of hypotheses. By framing automation as an intelligent, collaborative partner, the scientific community can unlock new levels of productivity and innovation, ultimately accelerating the path from fundamental research to tangible societal benefits.

The contemporary landscape of scientific research, particularly in fields like drug development, is on the cusp of a paradigm shift, moving from traditional linear experimentation to AI-driven autonomous workflows. The recently launched Genesis Mission, a U.S. national initiative, epitomizes this shift, framing the effort as comparable in urgency and ambition to the Manhattan Project [8]. Its core objective is to leverage artificial intelligence (AI) to achieve a dramatic acceleration in scientific discovery, thereby strengthening national security, securing energy dominance, and enhancing workforce productivity [13]. This mission, and the broader field it represents, seeks to overcome the inherent fragmentation in current research and development (R&D) by integrating the world's largest collection of federal scientific datasets with supercomputing resources into a unified AI platform [8]. This platform is designed to train foundational scientific models and create AI agents capable of testing new hypotheses and automating entire research workflows, promising to multiply the return on investment in R&D [13].

The potential for 100x to 1000x faster discovery rates is not merely aspirational but is grounded in concurrent breakthroughs in computational hardware. For instance, researchers at Peking University have developed an analog chip that uses resistive random-access memory (RRAM) to process data as continuous electrical signals directly within the chip. This design reportedly outperforms top-tier digital processors like NVIDIA's H100 GPU by as much as 1,000 times in throughput while using 100 times less energy [26]. Similarly, advances in plasmonic resonators—nanometer-sized light antennas—suggest the potential for computer chips that are up to 1,000 times faster by using photons instead of electrons [27]. When such revolutionary hardware is coupled with the AI-driven software frameworks of initiatives like the Genesis Mission, the foundation for radically accelerated discovery rates becomes technologically plausible.

Foundational Technologies for Acceleration

The pursuit of exponentially faster discovery rests on two interconnected pillars: a coordinated, software-defined research infrastructure and transformative hardware capabilities.

The American Science and Security Platform

The Genesis Mission is operationalized through the American Science and Security Platform, an integrated infrastructure designed to provide the following capabilities in a unified manner [13]:

- High-performance computing resources, including national laboratory supercomputers and secure cloud-based AI environments for large-scale model training and simulation.

- AI modeling frameworks, including AI agents to explore design spaces, evaluate experimental outcomes, and automate workflows.

- Domain-specific foundation models across key scientific domains.

- Secure data access to proprietary, federally curated, and open scientific datasets, including synthetic data.

- Experimental tools to enable autonomous and AI-augmented experimentation and manufacturing.

The implementation of this platform follows an aggressive timeline: the Secretary of Energy is required to identify computing assets within 90 days and initial datasets within 120 days, and to demonstrate an initial operating capability for at least one national challenge within 270 days of the executive order [8] [13].

Enabling Hardware Breakthroughs

The software platform's demands are met by groundbreaking hardware advances that redefine the limits of processing speed and energy efficiency.

Table 1: Hardware Platforms Enabling Accelerated Discovery

| Technology | Reported Performance Gain | Key Mechanism | Primary Application |

|---|---|---|---|

| Peking University Analog Chip [26] | ~1000x higher throughput; 100x less energy vs. NVIDIA H100 | Uses RRAM to process data as analog signals in-memory, avoiding data movement. | AI and 6G communication systems. |

| Plasmonic Resonators [27] | Potentially 1000x faster than conventional chips | Uses light (photons) instead of electricity (electrons) in nanometer-sized metal structures. | Ultra-fast active plasmonics and light-based switches. |

A detailed analysis of the Peking University chip reveals that it tackles long-standing precision issues in analog computing by using RRAM to process data as continuous electrical signals directly within the chip itself. This sidesteps the massive energy and latency costs associated with moving data to and from separate memory units in traditional von Neumann architectures [26]. The chip's design, which leverages commercial fabrication methods, indicates a viable path to widespread adoption and scalability.

Concurrently, research in plasmonic resonators has achieved a critical breakthrough in modulation. A German-Danish team successfully electrically modulated a single gold nanorod resonator by altering its surface properties [27]. Dr. Thorsten Feichtner explains that the principle is comparable to a Faraday cage, where "additional electrons on the surface influence the optical properties of the resonators" [27]. Their experiments revealed quantum-mechanical effects, a "smearing" of electrons across the metal-air boundary, that required a new semi-classical model to describe. This foundational work paves the way for high-efficiency optical modulators, which are critical components for future optical computing systems [27].

Quantitative Analysis of Accelerated Workflows

To validate and guide the implementation of accelerated discovery platforms, a robust quantitative data analysis framework is essential. This transforms raw computational and experimental data into actionable insights.

Core Analytical Methods

Quantitative analysis in this context relies on several statistical and machine learning methods to systematically make sense of numerical data [28] [29].

- Descriptive Analysis: This is the foundational step, summarizing what happened in a dataset. It involves calculating measures of central tendency (mean, median, mode) and dispersion (range, variance, standard deviation) to understand basic characteristics and identify outliers [29].

- Diagnostic Analysis: This method investigates why something happened by looking for relationships between variables. Regression analysis is a key technique here, helping to model the relationship between a dependent variable (e.g., reaction yield) and one or more independent variables (e.g., temperature, catalyst concentration) [28] [29].

- Predictive Analysis: Using historical data and statistical modeling, predictive analysis forecasts future outcomes. Techniques range from traditional regression models to advanced machine learning algorithms like decision trees, random forests, and neural networks, which can capture complex, non-linear relationships in high-dimensional data [29].

- Prescriptive Analysis: As the most advanced type, prescriptive analysis combines insights from all other methods to recommend specific actions. It helps answer "What should we do about it?" using data-driven evidence, potentially guiding an AI agent on the next best experiment to run [28].
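The four analysis types can be walked through on a tiny dataset. The sketch below uses made-up temperature and yield values and a simple linear model; it is meant only to show how each stage builds on the previous one, not to suggest that a straight line is an adequate model for real reaction data.

```python
import statistics

# Hypothetical experimental data: reaction temperature (C) and yield (%).
temps = [40.0, 50.0, 60.0, 70.0, 80.0]
yields = [52.0, 61.0, 69.0, 80.0, 88.0]

# Descriptive: what happened?
mean_yield = statistics.mean(yields)
sd_yield = statistics.stdev(yields)


# Diagnostic: how does yield relate to temperature? (ordinary least squares)
def fit_line(xs, ys):
    mean_x, mean_y = statistics.mean(xs), statistics.mean(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


slope, intercept = fit_line(temps, yields)


# Predictive: what yield does the model forecast at an untested temperature?
def predict(temp_c):
    return slope * temp_c + intercept


predicted_90 = predict(90.0)

# Prescriptive: which of the allowed next settings should be run?
# With a linear model this simply picks the extreme; a richer model would not.
allowed_next = [45.0, 65.0, 85.0]
recommended = max(allowed_next, key=predict)

print(mean_yield, round(sd_yield, 2), round(slope, 2), round(predicted_90, 1), recommended)
```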

Performance Data and Benchmarks

The performance claims of new technologies must be rigorously quantified against established benchmarks.

Table 2: Quantitative Performance Benchmarks for Discovery Technologies

| Metric | Conventional Benchmark (e.g., NVIDIA H100) | Next-Gen Technology (e.g., Analog Chip) | Gain Factor |
|---|---|---|---|
| Computational Throughput | 1x (Baseline) | ~1000x higher [26] | 1000x |
| Energy Efficiency | 1x (Baseline) | ~100x less energy [26] | 100x |
| Operational Capability | N/A | Platform establishment in 270 days [13] | N/A (Accelerated Setup) |

Experimental Protocols for Autonomous Research

The realization of autonomous experimentation requires standardized, detailed methodologies that can be executed by AI agents. The following protocols outline the core workflows.

Protocol 1: AI-Driven Hypothesis Generation and In-Silico Screening

Objective: To autonomously generate novel research hypotheses and pre-screen candidates computationally using foundation models and simulation.

- Data Integration: The AI agent is granted secure access to relevant, federated datasets from the American Science and Security Platform, including molecular structures, genomic data, and material properties [13].

- Model Querying: The agent queries a domain-specific foundation model (e.g., a protein folding model or a quantum chemistry model) to identify promising candidates or conditions that meet a target profile.

- Simulation & Down-selection: The agent uses high-performance computing resources to run large-scale simulations (e.g., molecular dynamics, finite element analysis) on the shortlisted candidates. Candidates are ranked based on simulated performance metrics.

- Hypothesis Formulation: The agent formulates a testable hypothesis, such as "Compound X will inhibit protein Y with an IC50 of less than 10 nM," and proposes an experimental workflow for validation.
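The down-selection and hypothesis-formulation steps of Protocol 1 might look like the following sketch. It assumes the simulations have already produced a predicted potency for each candidate. The `Candidate` class, the function names, and all numeric values are hypothetical placeholders rather than part of any platform described in the sources.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    predicted_ic50_nm: float  # hypothetical simulated potency; lower is better


def down_select(candidates, threshold_nm, top_k):
    """Keep candidates predicted to beat the threshold, ranked best first."""
    passing = [c for c in candidates if c.predicted_ic50_nm < threshold_nm]
    return sorted(passing, key=lambda c: c.predicted_ic50_nm)[:top_k]


def formulate_hypothesis(candidate, target, threshold_nm):
    """Turn the top-ranked candidate into a testable statement."""
    return (
        f"{candidate.name} will inhibit {target} "
        f"with an IC50 of less than {threshold_nm:g} nM"
    )


pool = [
    Candidate("Compound A", 25.0),
    Candidate("Compound B", 4.0),
    Candidate("Compound C", 8.5),
    Candidate("Compound D", 120.0),
]
shortlist = down_select(pool, threshold_nm=10.0, top_k=2)
hypothesis = formulate_hypothesis(shortlist[0], "protein Y", 10.0)
```

Ranking on a single simulated metric is a simplification. A production agent would more plausibly combine several metrics and carry the prediction uncertainty forward into the experimental plan.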

Protocol 2: Autonomous Robotic Experimentation Loop

Objective: To physically test AI-generated hypotheses using robotic laboratories in a closed-loop, iterative manner.

- Workflow Translation: The AI agent translates the proposed experimental workflow into a machine-readable instruction set for a robotic laboratory system.

- Automated Execution: The robotic system (e.g., automated pipetting, synthesis reactors, characterizers) executes the experiment. Sensors collect real-time data on outcomes.

- Data Analysis and Learning: The AI agent analyzes the experimental results using quantitative data analysis methods [29]. It compares the outcome with the prediction from the in-silico model.

- Hypothesis Refinement: Based on the analysis, the AI agent refines its model and generates a new, optimized set of experimental conditions or candidates.

- Iteration: The loop (Automated Execution through Hypothesis Refinement) repeats autonomously until a stopping criterion is met (e.g., a performance target is achieved, or a set number of cycles is completed).
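The closed loop in Protocol 2 can be illustrated with a small simulation. In this sketch, `run_experiment` is a stand-in for the robotic system and returns values from a made-up response surface. The refinement rule is a simple step search chosen for readability, not the method used by any platform cited here, and all names and numbers are assumptions.

```python
def run_experiment(temperature_c):
    """Stand-in for robotic execution: a made-up yield curve peaking at 72 deg C."""
    return 90.0 - 0.05 * (temperature_c - 72.0) ** 2


def autonomous_loop(start_c, step_c, target_yield, max_cycles):
    """Execute, analyze, and refine until the target is met or cycles run out."""
    best_t = start_c
    best_y = run_experiment(best_t)
    history = [(best_t, best_y)]
    for _ in range(max_cycles):
        if best_y >= target_yield:  # stopping criterion: performance target reached
            break
        improved = False
        # Refinement: probe one step above, then one step below, the current best.
        for t in (best_t + step_c, best_t - step_c):
            y = run_experiment(t)
            history.append((t, y))
            if y > best_y:
                best_t, best_y = t, y
                improved = True
                break
        if not improved:
            step_c /= 2  # no gain in either direction: search more finely
    return best_t, best_y, history


best_t, best_y, history = autonomous_loop(
    start_c=50.0, step_c=8.0, target_yield=89.5, max_cycles=20
)
```

Both stopping criteria from the protocol appear here: the `target_yield` check and the `max_cycles` bound. In practice the refinement step would typically be replaced by a model-based strategy such as Bayesian optimization.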

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Autonomous Experimentation Workflows

| Item / Reagent | Function in Autonomous Workflow |
|---|---|
| Domain-Specific Foundation Models | Pre-trained AI models that provide deep knowledge of a specific scientific domain (e.g., bio-catalysis, polymer science), enabling accurate in-silico predictions and hypothesis generation [13]. |
| AI Agents | Software entities that perform specific tasks such as exploring design spaces, evaluating experimental outcomes, and automating the sequencing of research steps without human intervention [13]. |
| Robotic Laboratory Modules | Automated physical systems for sample handling, synthesis, purification, and characterization that execute the instructions from the AI agent [8] [13]. |
| Synthetic Data Generators | Computational tools that generate realistic, labeled data to augment training datasets for AI models, improving their robustness and performance when real experimental data is scarce [13]. |
| Standardized Partnership Frameworks | Legal and technical agreements that govern data sharing, intellectual property, and collaboration between different entities (national labs, academia, industry), ensuring secure and efficient cooperation [8] [13]. |

Workflow Visualization of an Autonomous Discovery Cycle

The following diagram maps the logical flow of a fully autonomous experimentation cycle, integrating the protocols and technologies described above.

Autonomous Discovery Workflow Logic

This diagram visualizes the self-reinforcing cycle of AI-accelerated discovery. The process begins with the In-Silico Discovery & Planning phase, where AI agents leverage federated data and foundation models to generate hypotheses and down-select candidates through high-performance simulation [13]. The most promising candidate is then passed to the Physical Experimentation & Learning phase, where robotic systems execute the experiment and collect data [8] [13]. The results are quantitatively analyzed, and the AI model updates its understanding, creating a Learning Feedback Loop that directly informs the next round of hypothesis generation. This closed-loop automation, powered by integrated AI and advanced hardware, is the core engine behind the projected 100x to 1000x acceleration in discovery rates.

Building and Implementing Self-Driving Labs: From Virtual Screening to Robotic Execution

The paradigm of scientific discovery is undergoing a profound transformation through the adoption of autonomous experimentation workflows. These AI-driven pipelines represent a fundamental shift from traditional hypothesis-testing models to self-optimizing systems that can navigate complex experimental landscapes with minimal human intervention. For researchers in fields such as drug development, where the experimental space is vast and the costs of exploration are high, these workflows offer the potential to dramatically accelerate the pace of discovery. An effective AI workflow integrates data, computational power, and experimental infrastructure into a cohesive system that can prioritize experiments, execute protocols, analyze results, and refine hypotheses in a continuous cycle of learning [8].

Framed within broader research on autonomous experimentation, this technical guide provides a comprehensive breakdown of the core stages that constitute a robust AI workflow. From the initial gathering of raw data to the final deployment of trained models that drive robotic experimentation systems, each component must be carefully designed and integrated. The following sections detail these critical stages—data ingestion, preprocessing, model training, evaluation, and deployment—providing researchers with the methodologies and frameworks needed to implement these transformative systems in their own scientific domains [30] [31].

Stage 1: Data Ingestion and Management

The foundation of any effective AI workflow is robust data management. AI data management uses artificial intelligence technologies to automate, optimize, and improve data management processes. Its core objective is to handle both structured and unstructured data more effectively, boosting efficiency, security, and compliance while minimizing human error [30].

Data Ingestion Methods and Pipeline Architecture

Data ingestion serves as the critical entry point to the AI workflow, involving the process of collecting, manipulating, and storing information from multiple sources for use in analysis and decision-making. This fundamental stage enables the flow of data from diverse experimental instruments, databases, and sensors into a unified system where it can be processed and analyzed [32].

The data ingestion pipeline follows a sequential process with distinct stages:

- Discovery: Establishing connections to trusted data sources including experimental instruments, laboratory information management systems (LIMS), scientific databases, IoT devices, and APIs.

- Extraction: Pulling data using appropriate protocols for each source or establishing persistent connections to real-time feeds, supporting a wide range of data formats and frameworks.

- Validation: Algorithmically inspecting and validating raw data to confirm it meets expected standards for accuracy, consistency, and experimental relevance.

- Transformation: Converting validated data into consistent formats suitable for AI model consumption, including error correction, duplicate removal, and metadata addition.

- Loading: Moving the transformed data to target systems such as data warehouses, data lakes, or specialized scientific repositories where it becomes ready for analysis and model training [32].
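The stages above can be sketched as a chain of small functions. This minimal example covers extraction through loading and leaves out discovery, since connecting to real instruments or a LIMS is system-specific. The record fields (`sample_id`, `absorbance`), the instrument label, and the in-memory `warehouse` list are hypothetical stand-ins.

```python
def extract(source):
    """Extraction: pull raw records from a source (here, an in-memory list)."""
    return list(source)


def validate(records):
    """Validation: keep records with the required fields and a non-negative numeric reading."""
    valid = []
    for record in records:
        if not {"sample_id", "absorbance"} <= record.keys():
            continue  # missing a required field
        reading = record["absorbance"]
        if not isinstance(reading, (int, float)) or reading < 0:
            continue  # non-numeric or physically implausible value
        valid.append(record)
    return valid


def transform(records, instrument):
    """Transformation: drop duplicate sample IDs and attach metadata."""
    seen, out = set(), []
    for record in records:
        if record["sample_id"] in seen:
            continue
        seen.add(record["sample_id"])
        out.append({**record, "instrument": instrument})
    return out


def load(records, store):
    """Loading: append the records to the target store and report how many were added."""
    store.extend(records)
    return len(records)


raw = [
    {"sample_id": "S1", "absorbance": 0.42},
    {"sample_id": "S1", "absorbance": 0.42},  # duplicate
    {"sample_id": "S2", "absorbance": -1.0},  # fails validation
    {"sample_id": "S3"},                      # missing reading
    {"sample_id": "S4", "absorbance": 0.77},
]
warehouse = []
loaded = load(transform(validate(extract(raw)), "plate_reader_1"), warehouse)
```

Keeping each stage as a separate function makes it straightforward to log what was rejected at each step, which matters for reproducibility.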

Table 1: Data Ingestion Methods for Scientific Workflows

| Method | Characteristics | Scientific Use Cases |
|---|---|---|
| Batch Processing | Collects data at scheduled intervals (hourly, daily, weekly); processes in bulk; simple and reliable with minimal performance impact during off-peak hours | Laboratory instrument data aggregation; overnight processing of high-throughput screening results; weekly genomic sequence compilation |
| Real-time Ingestion | Processes data continuously from sources to destinations; enables immediate decision-making; requires substantial infrastructure investment | Live sensor monitoring in bioreactors; real-time equipment failure detection; continuous experimental condition adjustment |
| Micro-batch Ingestion | Hybrid approach collecting data continuously but processing in small batches at frequent intervals; balances timeliness with resource constraints | Experimental condition optimization; near-real-time quality control in automated synthesis; dynamic parameter adjustment in extended experiments [32] |
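To make the micro-batch row concrete, the sketch below groups a continuous stream into small fixed-size batches. Micro-batching is often driven by time windows. Batching by record count is a simplification made here to keep the example deterministic, and the function name and sensor values are hypothetical.

```python
from itertools import islice


def micro_batches(stream, batch_size):
    """Yield successive lists of up to batch_size items from a data stream."""
    iterator = iter(stream)
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            return  # stream exhausted
        yield batch


# Hypothetical bioreactor pH readings arriving one at a time.
readings = [7.01, 7.03, 6.99, 7.02, 7.00, 7.04, 6.98]
batches = list(micro_batches(readings, batch_size=3))
batch_means = [sum(batch) / len(batch) for batch in batches]
```

Each batch can be processed as soon as it fills, which gives near-real-time results without the cost of handling every record individually.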

AI-Enhanced Data Management Components

Beyond initial ingestion, effective data management for autonomous experimentation leverages several AI-enhanced components that ensure data quality and accessibility throughout the workflow:

Data Discovery & Metadata Generation: AI systems automatically scan datasets to identify meaningful characteristics such as data type, business relevance, usage frequency, and relationships to other data points. This automated metadata generation eliminates time-consuming manual work and improves the comprehensiveness of data inventories, making it easier for research teams to quickly access and understand the data they need [30].

Data Quality, Cleaning, & Anomaly Detection: Machine learning models continuously clean and monitor data in real-time, identifying and correcting common data quality issues such as duplicate entries, missing values, and formatting inconsistencies. AI-powered anomaly detection proactively monitors data flows to identify unusual patterns or shifts that may indicate experimental errors, instrumental drift, or novel phenomena worthy of further investigation [30].

Data Classification, Lineage, & Governance: Natural language processing and machine learning algorithms automatically assess the context and sensitivity of data, identifying personally identifiable information, intellectual property, and other protected categories. AI creates visual lineage graphs that track the flow and transformations of data as it moves through systems, providing essential visibility for ensuring data integrity, reproducibility, and compliance with regulatory standards [30].
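As an illustration of the anomaly detection component described above, the following sketch flags outliers with a modified z-score based on the median and the median absolute deviation (MAD). This is a simple statistical stand-in for the machine learning models the text refers to. The 3.5 threshold is a common rule of thumb, and the pH readings are hypothetical.

```python
import statistics


def flag_anomalies(values, threshold=3.5):
    """Return indices of values whose modified z-score exceeds the threshold."""
    median = statistics.median(values)
    mad = statistics.median([abs(v - median) for v in values])
    if mad == 0:
        return []  # no spread in the data, so the score is undefined
    return [
        i
        for i, v in enumerate(values)
        if abs(0.6745 * (v - median) / mad) > threshold
    ]


# Hypothetical pH trace with one reading that suggests a sensor fault or drift.
ph_readings = [7.01, 7.03, 6.99, 7.02, 7.00, 9.50, 7.01]
anomalous_indices = flag_anomalies(ph_readings)
```

Median-based statistics are used because a single extreme reading would inflate the mean and standard deviation and could mask itself. A flagged point still needs review, since it may be an error or a genuinely novel result.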

Stage 2: Data Preprocessing and Feature Engineering

Methodologies for Data Preprocessing

Once data is ingested, it must be transformed into a format suitable for AI model training through systematic preprocessing. This stage is critical for ensuring that experimental data from diverse sources and formats can be effectively utilized by machine learning algorithms. The preprocessing phase addresses issues of data inconsistency, noise, and incompleteness that are particularly prevalent in scientific datasets [31].

Data preprocessing employs several key techniques:

Noise Reduction and Filtering: Implementation of algorithmic filters to remove instrumentation artifacts, background signals, and other sources of experimental noise that could obscure meaningful patterns in the data.

Data Validation and Accuracy Checking: Application of domain-specific validation rules to identify physiologically or physically impossible values, measurement outliers, and potential instrument calibration errors that may skew model training.

Format Standardization: Conversion of diverse data formats into consistent structures compatible with AI training pipelines, including normalization of units, timestamp alignment, and categorical variable encoding.

Handling Missing Data: Application of sophisticated imputation techniques to address gaps in experimental measurements, using methods ranging from simple interpolation to advanced generative models that preserve statistical properties of the dataset [31].
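Three of the techniques above (format standardization, validation against physical limits, and missing-data handling) can be sketched together. The example assumes temperature readings logged in mixed units and uses linear interpolation as the simplest imputation method mentioned. The function names, the 0 to 100 degree plausibility window, and the readings are all hypothetical.

```python
def to_celsius(value, unit):
    """Format standardization: convert a temperature reading to degrees Celsius."""
    if unit == "C":
        return value
    if unit == "K":
        return value - 273.15
    if unit == "F":
        return (value - 32.0) * 5.0 / 9.0
    raise ValueError(f"unknown unit: {unit}")


def mask_implausible(values, low, high):
    """Validation: replace readings outside the plausible range with None."""
    return [v if v is not None and low <= v <= high else None for v in values]


def interpolate_missing(values):
    """Missing data: fill interior gaps linearly; leading and trailing gaps stay None."""
    filled = list(values)
    known = [i for i, v in enumerate(values) if v is not None]
    for left, right in zip(known, known[1:]):
        for i in range(left + 1, right):
            fraction = (i - left) / (right - left)
            filled[i] = values[left] + fraction * (values[right] - values[left])
    return filled


# Hypothetical readings: one missing value and one impossible spike.
raw = [(20.0, "C"), (295.15, "K"), (None, "C"), (500.0, "C"), (77.0, "F")]
celsius = [None if v is None else to_celsius(v, u) for v, u in raw]
cleaned = interpolate_missing(mask_implausible(celsius, low=0.0, high=100.0))
```

Interpolated values are estimates, so it is usually worth recording which points were imputed so that downstream models can weight or exclude them.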

The significance of rigorous preprocessing is underscored by industry findings that over 25% of global data and analytics professionals identify poor data quality as a significant barrier, with organizations estimating losses exceeding $5 million annually as a result [30].

Experimental Protocol: Data Preprocessing Workflow

For drug development researchers implementing autonomous experimentation workflows, the following detailed protocol ensures data quality before model training:

Materials and Equipment:

- Raw experimental datasets (e.g., high-throughput screening results, spectroscopic measurements, genomic sequences)

- Computational environment with sufficient processing capacity (minimum 64GB RAM recommended for large datasets)

- Data preprocessing toolkit (Python Pandas, Scikit-learn, or domain-specific libraries like Bioconductor for biological data)

Procedure:

Data Auditing and Assessment (4-6 hours)

- Profile datasets to identify missing values, data types, and value distributions