AI-Powered Reaction Pathway Prediction: Accelerating Autonomous Materials Synthesis for Biomedical Innovation


Abstract

This article explores the transformative integration of artificial intelligence and robotics for predicting reaction pathways in autonomous materials synthesis. Aimed at researchers, scientists, and drug development professionals, it provides a comprehensive overview of the foundational principles, key methodologies, and practical applications of self-driving laboratories. The content covers the latest advances in AI-driven platforms, from LLM-guided chemical logic and multi-robot systems to troubleshooting common challenges and validating predictive models. By synthesizing insights from recent case studies and comparative analyses, this article serves as a strategic guide for leveraging autonomous experimentation to accelerate the discovery and optimization of advanced materials, with significant implications for pharmaceutical development and clinical research.

The New Paradigm: Foundations of Autonomous Reaction Pathway Exploration

The discovery and development of advanced materials are fundamental to addressing global challenges in clean energy, healthcare, and sustainable manufacturing. Traditionally, this process has been slow and labor-intensive, taking an average of 20 years and $100 million to bring a new material to market [1]. Self-Driving Labs (SDLs) and Materials Acceleration Platforms (MAPs) represent a paradigm shift, leveraging artificial intelligence (AI), robotics, and advanced computing to autonomously design, execute, and analyze experiments. This transition from manual to autonomous research compresses discovery timelines from years to days and drastically reduces associated costs and environmental impact [1] [2].

A Self-Driving Lab (SDL) is a robotic platform that combines AI with automated experimentation to autonomously and rapidly design and test new materials or molecules [1] [3]. The core of an SDL is a closed-loop system where AI proposes experiments, robots perform synthesis and testing, and the resulting data is fed back to the AI to refine its future predictions [1].

A Materials Acceleration Platform (MAP) can be conceived as a self-driving laboratory specifically engineered for the discovery of advanced materials, often for applications in clean energy [4]. MAPs integrate five key elements: AI models, robotic platforms, orchestration software, storage databases, and human intuition [4]. They are envisioned as a cornerstone for a low-carbon future, accelerating the development of high-performance materials for clean energy technologies [4].

Key Components and Quantitative Comparisons

The Architectural Framework of a MAP

The operation of a MAP is governed by a tightly integrated ecosystem of components that function in a closed-loop manner [4]:

- AI Models: Machine learning and deep learning algorithms that predict promising material candidates and propose subsequent experiments based on data.

- Robotic Platforms: Hardware responsible for the automated synthesis, processing, and characterization of materials.

- Orchestration Software: The central nervous system that manages the entire workflow, coordinates the other components, and executes the decision-making process.

- Storage Databases: Repositories for all generated experimental data, which is essential for the AI models to learn and improve.

- Human Intuition: Researchers define the problem constraints, incorporate prior knowledge, and interact with the AI to guide the overall discovery campaign [4].
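To make the closed loop concrete, the following is a minimal sketch of how the five elements might interact. Everything in it is an illustrative assumption rather than any platform's actual software: `run_experiment` stands in for the robotic platform with a made-up response curve, `propose` stands in for the AI model with a naive perturbation search, the `history` list stands in for the storage database, and the `low`/`high` bounds play the role of researcher-defined constraints.

```python
import random


def run_experiment(temperature_c: float) -> float:
    """Hypothetical robotic platform: a synthetic response peaking at 240 C."""
    return -((temperature_c - 240.0) ** 2)


def propose(history, low, high, rng):
    """Hypothetical AI model: sample near the best condition seen so far."""
    if not history:
        return rng.uniform(low, high)
    best_t, _ = max(history, key=lambda rec: rec[1])
    # Perturb the incumbent and clip to the researcher-defined safe range.
    return min(high, max(low, best_t + rng.gauss(0.0, 10.0)))


def closed_loop(n_cycles=50, low=150.0, high=300.0, seed=0):
    """Orchestration: propose -> execute -> store -> repeat."""
    rng = random.Random(seed)
    history = []  # stand-in for the storage database
    for _ in range(n_cycles):
        t = propose(history, low, high, rng)
        score = run_experiment(t)
        history.append((t, score))
    best = max(history, key=lambda rec: rec[1])
    return best, history
```

A real MAP would replace the perturbation search with a learned model and the synthetic response with instrument data, but the control flow would likely keep this shape.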

Comparative Analysis: Traditional vs. Autonomous Discovery

The table below summarizes the profound differences between traditional materials discovery and the approach enabled by SDLs/MAPs.

Table 1: A comparison of traditional and autonomous materials discovery paradigms.

| Aspect | Traditional Discovery | SDL/MAP Approach | Source |
|---|---|---|---|
| Timeline | ~20 years | As little as 1 year | [1] |
| Cost | ~$100 million | As little as $1 million | [1] |
| Experimental Throughput | Low, limited by human labor | High, hundreds of experiments per day | [5] |
| Primary Driver | Human intuition & trial-and-error | AI-guided, data-driven hypothesis generation | [1] [4] |
| Data Utilization | Sparse; often only successful results reported | Comprehensive; uses all data for continuous learning | [6] |
| Environmental Impact | High chemical waste per successful material | Drastically reduced waste through miniaturization & efficiency | [2] |

Recent advancements continue to push these boundaries. For instance, a new technique using dynamic flow experiments has been shown to collect at least 10 times more data than previous SDL techniques while simultaneously slashing chemical consumption and waste [2].

Experimental Protocols for Autonomous Discovery

This section details a specific, advanced protocol for an autonomous discovery loop, focusing on the synthesis and optimization of inorganic materials in a flow-based SDL.

Protocol: Dynamic Flow Experimentation for Colloidal Nanocrystal Synthesis

This protocol is adapted from a recent study that demonstrated record-breaking data acquisition efficiency for synthesizing CdSe colloidal quantum dots [2].

1. Objective: To autonomously discover and optimize synthesis parameters (e.g., precursor ratios, temperature, reaction time) for CdSe colloidal quantum dots with target optical properties.

2. Experimental Setup and Reagents:

Table 2: Key research reagents and hardware solutions for a fluidic SDL.

| Item | Function/Description |
|---|---|
| Cadmium Precursor | e.g., Cadmium oleate, provides the Cd²⁺ source for quantum dot formation. |
| Selenium Precursor | e.g., Selenium-Trioctylphosphine (Se-TOP), provides the Se²⁻ source. |
| Solvents & Ligands | e.g., 1-Octadecene (ODE), Oleic Acid; control growth and stabilize nanoparticles. |
| Continuous Flow Reactor | A microfluidic chip or capillary system where reactions occur under continuous flow. |
| Precise Syringe Pumps | Deliver precursors and solvents at programmed, dynamically varying flow rates. |
| In-line Spectrophotometer | Provides real-time, in-situ characterization of optical properties (absorbance, photoluminescence). |

3. Workflow Diagram:

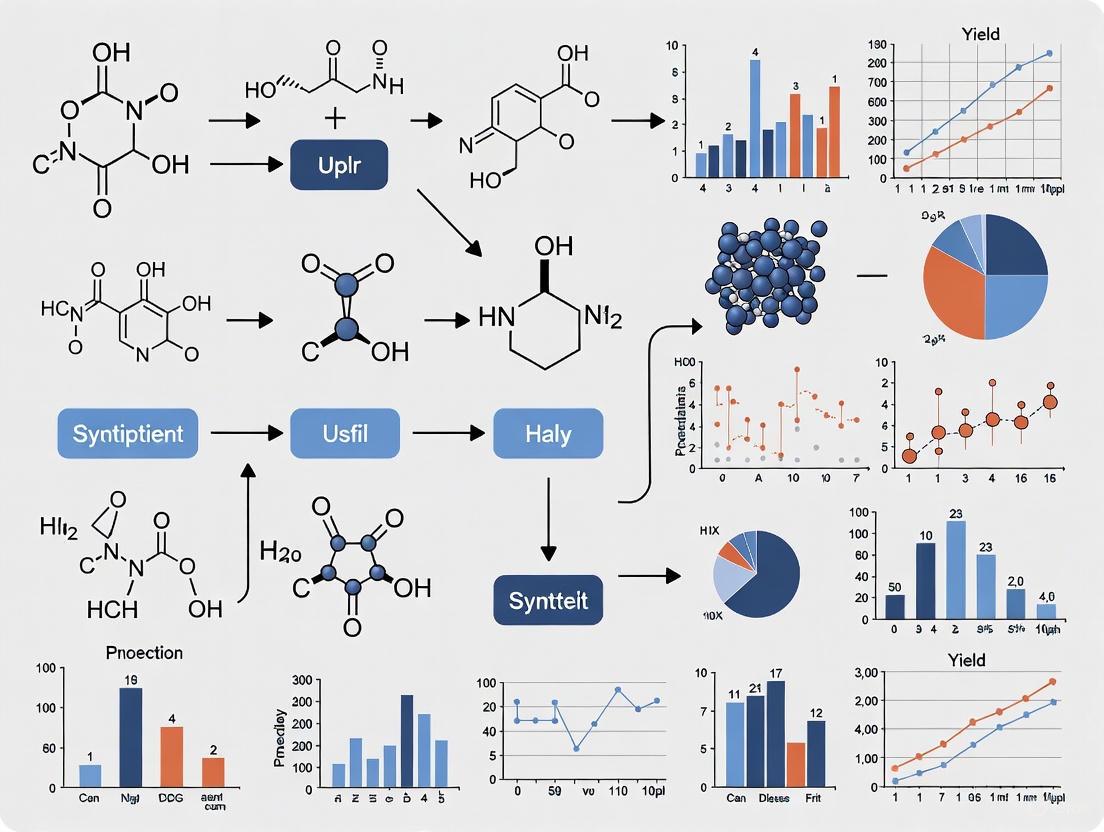

The following diagram illustrates the closed-loop, autonomous workflow that integrates both the physical robotic platform and the AI decision-making core.

4. Step-by-Step Procedure:

- Initialization: The researcher defines the goal (e.g., "maximize photoluminescence quantum yield at 620 nm") and sets system constraints (precursor types, safe operating ranges for temperature and pressure).

- AI-Driven Experimental Design: The machine learning algorithm (e.g., a Bayesian optimizer) proposes an initial set of dynamic flow parameters. Unlike steady-state methods, this involves creating a time-varying profile for precursor flow rates, effectively encoding multiple reaction conditions into a single, continuous experiment [2].

- Robotic Execution: The orchestrator software commands the robotic fluidic platform. Syringe pumps precisely mix and inject precursors according to the dynamic profile into the continuous flow reactor, which is maintained at a set temperature.

- Real-Time, In-Situ Characterization: As the reaction proceeds, the resulting stream of nanocrystals passes through an in-line spectrophotometer. This instrument collects optical data (e.g., absorbance and emission spectra) at a high frequency (e.g., every 0.5 seconds), creating a rich "movie" of the reaction instead of a single "snapshot" [2].

- Data Management: All experimental parameters and corresponding characterization data are automatically stored in a structured database.

- AI Learning and Decision: The AI model processes the new, high-density data stream to update its internal model of the synthesis landscape. It then calculates the most informative experiment to perform next to rapidly converge on the target material.

- Loop Closure: Steps 2-6 are repeated autonomously until a convergence criterion is met (e.g., a material with the desired properties is identified, or a set number of cycles is completed). This dynamic approach allows the SDL to identify optimal candidates on the very first try after its initial training, dramatically accelerating the search [2].
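The idea of encoding many reaction conditions into one continuous run can be sketched as follows. This is a simplified illustration, not the cited study's control code: the linear ramps, the flow-rate values, the 60 s duration, and the function names are all assumptions, and the sketch ignores the transit delay and dispersion between the mixing point and the detector. The two ramps are chosen so that the total flow rate, and hence the residence time, stays constant while the Cd:Se ratio sweeps.

```python
def linear_ramp(start, end, duration_s, t):
    """Value of a linear ramp from start to end at time t (clamped)."""
    frac = min(max(t / duration_s, 0.0), 1.0)
    return start + (end - start) * frac


def dynamic_profile(duration_s=60.0, sample_period_s=0.5):
    """Tabulate the condition probed at each in-line measurement.

    Flow rates are hypothetical values in uL/min.
    """
    n_samples = int(duration_s / sample_period_s) + 1
    rows = []
    for i in range(n_samples):
        t = i * sample_period_s
        cd = linear_ramp(10.0, 50.0, duration_s, t)  # Cd precursor ramps up
        se = linear_ramp(50.0, 10.0, duration_s, t)  # Se precursor ramps down
        rows.append({
            "t_s": t,
            "cd_ul_min": cd,
            "se_ul_min": se,
            "cd_se_ratio": cd / se,
        })
    return rows
```

Under these assumptions a single 60 s run sampled every 0.5 s yields 121 distinct Cd:Se ratios, where a steady-state experiment would yield one.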

The Scientist's Toolkit: Essential Reagents and Hardware

Implementing an SDL requires a combination of advanced chemical reagents and specialized hardware. The following table details key components for a fluidic platform focused on inorganic nanomaterials, as featured in the protocol above.

Table 3: Essential research reagents and hardware for a fluidic self-driving lab.

| Category | Item | Function / Relevance to Autonomous Discovery |
|---|---|---|
| Chemical Reagents | Metal-containing Precursors (e.g., metal acetates, oleates) | Source of inorganic material; varied to explore different elemental compositions. |
| | Chalcogenide Sources (e.g., Se-TOP, S-ODE) | React with metal precursors to form semiconductor nanocrystals. |
| | Surfactants & Ligands (e.g., Oleic Acid, Oleylamine) | Control nucleation and growth kinetics; critical for achieving size and shape control. |
| Robotic Hardware | Continuous Flow Reactor (Microfluidic Chip) | Enables rapid, controlled reactions with efficient heat/mass transfer. |
| | Precision Syringe Pumps | Allow for dynamic, computer-controlled variation of reactant flow rates. |
| | In-line Spectrophotometer / Analyzer | Provides real-time feedback on material properties without human intervention. |
| | Automated Sample Collector | Physically collects candidate materials for later off-line validation. |

The maturation of Self-Driving Labs and Materials Acceleration Platforms marks a transformative moment in materials science and synthetic biology. By closing the loop between AI-led hypothesis generation and robotic validation, they invert the traditional discovery process, allowing scientists to define desired properties and work backward with unprecedented speed [1]. This capability is critical for developing materials for clean energy, sustainable chemicals, and next-generation electronics [4] [5].

The future of this field lies in achieving full autonomy. Current challenges include improving the generalizability of AI models, developing standardized data formats, and creating more robust and flexible robotic systems [4] [6]. The integration of explainable AI (XAI) will be crucial for building trust and providing deeper scientific insights, moving beyond black-box predictions [6]. Furthermore, the concept of "data intensification"—gaining orders of magnitude more information from each experiment, as demonstrated by dynamic flow methods—will be a key driver for making autonomous discovery even faster and more sustainable [2]. As these technologies converge, SDLs and MAPs are poised to become a powerful, foundational engine for scientific advancement, turning autonomous experimentation from a proof-of-concept into a core pillar of national research infrastructure [6] [5].

Autonomous synthesis systems represent a paradigm shift in materials and chemical research, integrating artificial intelligence (AI), robotics, and closed-loop optimization to accelerate discovery and development. These systems close the gap between computational screening and experimental realization by creating a continuous workflow where AI plans experiments, robotics executes them, and analytical data informs subsequent AI decisions [7]. This autonomous cycle minimizes human intervention and significantly reduces the time from conceptual design to validated synthesis.

The core value of these systems lies in their ability to navigate complex experimental spaces more efficiently than human researchers. For instance, the A-Lab, an autonomous laboratory for solid-state synthesis of inorganic powders, successfully realized 41 novel compounds from 58 targets over 17 days of continuous operation by leveraging computations, historical data, machine learning, and active learning [7]. This demonstrates the transformative potential of autonomous systems for accelerating materials discovery and development pipelines in both academic and industrial settings.

Core System Components

Artificial Intelligence and Planning Modules

The intelligence layer of autonomous synthesis systems encompasses multiple AI subsystems working in concert to plan and interpret experiments. Retrosynthesis planning algorithms form the foundation, with tools like ASKCOS and Synthia using data-driven approaches to propose viable synthetic routes [8]. These systems have reached a level of sophistication where graduate-level organic chemists express no statistically significant preference between literature-reported routes and program-generated ones [8].

Recent advances include generative AI approaches that incorporate physical constraints. The FlowER (Flow matching for Electron Redistribution) system developed at MIT uses a bond-electron matrix to represent electrons in a reaction, ensuring conservation of mass and electrons while predicting outcomes [9]. For more complex reaction pathway exploration, tools like ARplorer integrate quantum mechanics with rule-based methodologies guided by large language models (LLMs) to explore potential energy surfaces and identify transition states [10].
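To illustrate why a bond-electron (BE) matrix makes electron conservation easy to enforce, here is a small sketch using the classic BE-matrix convention: diagonal entries hold an atom's non-bonding valence electrons and off-diagonal entries hold bond orders. It is not FlowER's code; the example reaction (hydroxide plus a proton giving water) and the function names are chosen purely for illustration.

```python
def total_electrons(be):
    """Valence electrons in a BE matrix.

    Each bond order appears twice (entries i,j and j,i), so a single bond
    contributes two electrons to the total, as it should.
    """
    return sum(sum(row) for row in be)


def reaction_matrix(reactant, product):
    """Element-wise difference product - reactant (electron redistribution)."""
    return [[p - r for r, p in zip(r_row, p_row)]
            for r_row, p_row in zip(reactant, product)]


# Atom order: O, H(a), H(b).
# Reactants: hydroxide (O with 6 non-bonding electrons, one O-H bond) and a bare proton.
reactants = [
    [6, 1, 0],
    [1, 0, 0],
    [0, 0, 0],
]
# Product: water (O with 4 non-bonding electrons, two O-H bonds).
products = [
    [4, 1, 1],
    [1, 0, 0],
    [1, 0, 0],
]

delta = reaction_matrix(reactants, products)
```

Because a valid redistribution only moves electrons, the entries of `delta` sum to zero; a model that predicts `delta` under that constraint cannot create or destroy electrons.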

Natural language processing models trained on extensive synthesis literature provide another critical capability, assessing target similarity to propose initial synthesis recipes based on analogy to known materials [7]. These models enable the system to leverage historical knowledge much like an experienced human chemist would when approaching a new synthetic challenge.

Robotic Hardware and Automation

The physical execution of synthesis plans requires sophisticated robotic systems capable of handling diverse chemical operations. Two predominant paradigms exist: flow chemistry platforms and batch processing systems. Flow platforms use computer-controlled pumps and reconfigurable flowpaths to perform reactions in continuous streams [11] [8], while batch systems like the Chemputer automate traditional round-bottom flask operations [8].

More recently, modular systems using mobile robots have emerged as a flexible alternative. These platforms employ free-roaming robotic agents that transport samples between standardized stations for synthesis, analysis, and processing [12]. This approach allows robots to share existing laboratory equipment with human researchers without requiring extensive redesign or monopolizing instruments [12].

Essential hardware modules include automated liquid handling systems for precise reagent dispensing, robotic grippers for vial and plate transfer, computer-controlled heater/shaker blocks for reaction management, and automated purification systems. The A-Lab exemplifies integration of these components with three specialized stations for powder handling, furnace heating, and X-ray diffraction characterization, coordinated by robotic arms for sample transfer [7].

Closed-Loop Optimization and Active Learning

Closed-loop optimization transforms automated systems into truly autonomous laboratories by enabling continuous improvement based on experimental outcomes. Active learning algorithms like ARROWS3 (Autonomous Reaction Route Optimization with Solid-State Synthesis) integrate ab initio computed reaction energies with observed synthesis outcomes to predict optimal solid-state reaction pathways [7].

These systems typically employ Bayesian optimization strategies to navigate complex parameter spaces efficiently. The A-Lab demonstrated this capability by successfully optimizing synthesis routes for nine targets, six of which had zero yield from initial literature-inspired recipes [7]. By building databases of observed pairwise reactions and prioritizing intermediates with large driving forces to form targets, the system could reduce search spaces by up to 80% [7].
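The pruning idea described above can be sketched in a few lines. This is a toy illustration under stated assumptions, not the ARROWS3 implementation: the precursor labels, the `OBSERVED_PAIRS` and `DRIVING_FORCE` tables, the sign convention (larger means more driving force left to form the target), and the 50 meV/atom cut-off are all hypothetical.

```python
from itertools import combinations

# Hypothetical database of pairwise reactions already observed experimentally:
# a pair of precursors -> the intermediate they were seen to form.
OBSERVED_PAIRS = {
    frozenset({"A", "B"}): "AB_inert",
    frozenset({"A", "C"}): "AC_reactive",
}

# Hypothetical remaining driving force (meV/atom) for intermediate -> target.
DRIVING_FORCE = {"AB_inert": 8.0, "AC_reactive": 75.0}


def prune_and_rank(precursor_sets, min_force=50.0):
    """Drop precursor sets containing a pair known to form a low-driving-force
    intermediate, then rank the rest (known-good first, unexplored last)."""
    ranked = []
    for pset in precursor_sets:
        forces = [
            DRIVING_FORCE[OBSERVED_PAIRS[frozenset(pair)]]
            for pair in combinations(sorted(pset), 2)
            if frozenset(pair) in OBSERVED_PAIRS
        ]
        if any(f < min_force for f in forces):
            continue  # a known pair would consume the driving force
        score = min(forces) if forces else 0.0  # 0.0 marks "no data yet"
        ranked.append((score, tuple(sorted(pset))))
    ranked.sort(key=lambda item: item[0], reverse=True)
    return [pset for _, pset in ranked]
```

For example, `prune_and_rank([("A", "B", "D"), ("A", "C", "D"), ("C", "D", "E")])` discards the first set because the A + B pair is already known to give an unreactive intermediate, so one observation removes every recipe containing that pair from the search space.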

Table 1: Key Performance Metrics of Autonomous Synthesis Systems

| System/Platform | Synthesis Type | Success Rate | Throughput | Optimization Capability |
|---|---|---|---|---|
| A-Lab [7] | Solid-state inorganic powders | 71% (41/58 targets) | Continuous 17-day operation | Active learning with ARROWS3 |
| Mobile Robot Platform [12] | Organic and supramolecular | Varies by chemistry | Parallel synthesis capabilities | Heuristic decision-making |
| Flow Chemistry Systems [11] [8] | Organic compounds | Dependent on reaction scope | Continuous flow | Bayesian optimization |

Experimental Protocols and Workflows

Protocol: Autonomous Multi-Step Organic Synthesis

This protocol outlines the procedure for automated multi-step synthesis using a mobile robotic platform integrated with a Chemspeed ISynth synthesizer, UPLC-MS, and benchtop NMR, as demonstrated in ref. [12].

Materials and Equipment:

- Chemspeed ISynth automated synthesizer or equivalent

- Mobile robotic agents with multipurpose grippers

- UPLC-MS system

- Benchtop NMR spectrometer (80 MHz)

- Standard laboratory consumables (vials, plates, etc.)

- Chemical inventory of building blocks and reagents

Procedure:

- Synthesis Planning: Input target molecules to the planning software. The system generates synthetic routes using retrosynthesis algorithms [8].

- Reagent Preparation: The automated platform dispenses prescribed amounts of starting materials to reaction vials using liquid handling robots.

- Reaction Execution: Transfer vials to heating/stirring stations under inert atmosphere if required. Perform reactions at specified temperatures and durations.

- Reaction Monitoring: At completion, the synthesizer takes aliquots of each reaction mixture and reformats them separately for MS and NMR analysis.

- Sample Transport: Mobile robots transport samples to appropriate analytical instruments [12].

- Orthogonal Analysis: Perform parallel UPLC-MS and ¹H NMR characterization using automated scripts.

- Data Integration: Analytical results are saved to a central database for processing by the decision-making algorithm.

- Decision Cycle: The heuristic decision-maker applies pass/fail criteria to both analytical results to determine which reactions proceed to subsequent steps [12].

- Scale-up and Diversification: Successful reactions are automatically scaled up or diversified in subsequent synthetic steps without human intervention.
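The decision cycle in this procedure can be sketched as a simple rule that requires both orthogonal analyses to pass. The sketch below is an assumption-laden illustration rather than the published decision-maker: the `Analysis` fields, the m/z tolerance, and the boolean `nmr_changed` flag (standing in for whatever NMR criterion a given chemistry calls for) are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Analysis:
    reaction_id: str
    ms_peaks: list       # observed m/z values from UPLC-MS
    expected_mz: float   # m/z expected for the target product
    nmr_changed: bool    # hypothetical flag: spectrum differs from starting materials


def ms_pass(analysis, tol=0.5):
    """Pass if any observed peak lies within tol of the expected m/z."""
    return any(abs(mz - analysis.expected_mz) <= tol for mz in analysis.ms_peaks)


def select_for_next_step(analyses):
    """Return IDs of reactions that pass BOTH the MS and the NMR criterion."""
    return [a.reaction_id for a in analyses if ms_pass(a) and a.nmr_changed]
```

Requiring agreement between two independent measurements is a conservative design choice: it lowers the chance that an artefact in one technique sends a failed reaction forward to scale-up.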

Troubleshooting:

- If analytical results are ambiguous, the system can be programmed to perform additional characterization or repeat reactions.

- Clogged lines in flow systems require automated detection and bypass protocols [8].

- Failed reactions trigger alternative route generation using active learning algorithms [7].

Protocol: Solid-State Materials Synthesis and Optimization

This protocol describes the procedure for autonomous synthesis of novel inorganic powders using the A-Lab system [7].

Materials and Equipment:

- Powder dispensing and mixing station

- Robotic arms for crucible transfer

- Box furnaces (multiple units for parallel processing)

- Automated grinding station

- X-ray diffractometer with autosampler

- Alumina crucibles

- Precursor powders

Procedure:

- Target Identification: Select target materials predicted to be stable using ab initio phase-stability data from sources like the Materials Project.

- Recipe Generation: Generate initial synthesis recipes using natural language models trained on literature data [7].

- Precursor Preparation: Automatically dispense and mix precursor powders in appropriate stoichiometries using the powder handling station.

- Reaction Execution: Transfer mixtures to alumina crucibles and load into furnaces using robotic arms. Heat to temperatures predicted by ML models trained on heating data [7].

- Product Characterization: After cooling, grind samples to fine powders and analyze by XRD.

- Phase Identification: Use probabilistic ML models to identify phases and weight fractions from XRD patterns, confirmed with automated Rietveld refinement.

- Active Learning Cycle: If target yield is <50%, employ ARROWS3 algorithm to propose improved synthesis routes based on observed reaction pathways and thermodynamic driving forces [7].

- Iterative Optimization: Repeat synthesis with modified conditions until target is obtained as majority phase or all recipe options are exhausted.
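The active learning cycle and the stopping rule above can be sketched as a short control loop. This is a schematic under stated assumptions, not the A-Lab's software: `synthesize` and `propose_next` are hypothetical callables standing in for the robotic synthesis plus XRD analysis and for the route-proposal algorithm, respectively.

```python
def optimize_target(initial_recipes, synthesize, propose_next, yield_threshold=0.5):
    """Try recipes until the target is the majority phase or options run out.

    synthesize(recipe) -> target weight fraction in [0, 1]   (hypothetical)
    propose_next(tried) -> a new recipe, or None if no ideas remain (hypothetical)
    """
    queue = list(initial_recipes)
    tried = []  # every attempt is recorded, including failures
    while queue:
        recipe = queue.pop(0)
        target_yield = synthesize(recipe)
        tried.append((recipe, target_yield))
        if target_yield >= yield_threshold:
            return recipe, tried  # target obtained as majority phase
        # Below threshold: ask the active-learning step for an improved route.
        candidate = propose_next(tried)
        already_tried = any(candidate == r for r, _ in tried)
        if candidate is not None and candidate not in queue and not already_tried:
            queue.append(candidate)
    return None, tried  # all recipe options exhausted
```

Returning the full `tried` list, not just the winner, mirrors the validation step of documenting all attempted recipes and outcomes.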

Validation:

- Compare predicted and experimental XRD patterns to verify successful synthesis.

- Cross-reference identified phases with computational databases.

- Document all attempted recipes and outcomes to improve future predictions.

System Workflow Visualization

Autonomous Synthesis Closed Loop

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Research Reagent Solutions for Autonomous Synthesis

| Reagent/Material | Function | Application Examples | Considerations |
|---|---|---|---|
| MIDA-boronates [8] | Iterative cross-coupling building blocks | Automated synthesis of polycyclic structures | Catch-and-release purification compatibility |
| Diverse precursor powders [7] | Starting materials for solid-state reactions | Synthesis of novel inorganic oxides and phosphates | Purity, particle size, and reactivity |
| Functionalized building blocks [12] | Modular components for diversity-oriented synthesis | Library generation for drug discovery | Stability, compatibility with automated handling |
| Specialized catalysts [10] | Enable challenging transformations | Organometallic and asymmetric reactions | Stability under automated conditions |
| Deuterated solvents [12] | NMR spectroscopy for structural validation | Reaction monitoring and product characterization | Compatibility with automated liquid handling |

Implementation Challenges and Future Directions

Despite significant advances, autonomous synthesis systems face several implementation challenges. Purification remains a particular hurdle, as universally applicable automated purification strategies do not yet exist [8]. Analytical limitations also persist, with most platforms equipped primarily with LC-MS while structural elucidation often requires additional techniques like NMR or specialized detectors [8] [12].

Kinetic limitations pose another challenge, particularly for solid-state synthesis where sluggish reaction kinetics hindered 11 of 17 failed targets in the A-Lab study [7]. Future developments will likely focus on expanding reaction scope, particularly for metallic and catalytic systems where current models have limited experience [9]. Improved integration of multimodal data and development of platforms that can better handle unforeseen outcomes will also be critical for advancing from automation to true autonomy [8].

The ongoing integration of large language models with quantum mechanical calculations shows promise for enhancing reaction pathway exploration [10]. As these technologies mature and databases of experimental results grow, autonomous synthesis systems will become increasingly sophisticated, potentially capable of discovering entirely new reactions and mechanisms beyond human intuition.

The paradigm of materials discovery is undergoing a profound transformation, shifting from traditional trial-and-error approaches toward autonomous, data-driven workflows [13]. This evolution is enabled by the integration of artificial intelligence (AI), automated robotic platforms, and high-throughput computation, creating closed-loop systems that dramatically accelerate research cycles [14] [13]. The core of this modern approach is a seamless workflow that begins with computational target selection, proceeds through automated synthesis, and concludes with comprehensive characterization, with data flowing continuously back to inform subsequent cycles [13]. This article details the application notes and protocols for implementing such a workflow within the context of reaction pathway prediction for autonomous materials synthesis, providing researchers with practical methodologies to advance their discovery pipelines.

Target Selection and Pathway Prediction

The initial phase of the autonomous workflow involves identifying promising candidate materials and predicting their viable synthesis pathways before any experimental resources are committed.

Data-Driven Target Selection

Target selection leverages large-scale intelligent models to navigate the vast chemical space efficiently. Stable crystal structures can be predicted using models like GNoME (Graph Networks for Materials Exploration), which has expanded the number of known stable materials nearly tenfold [13]. For molecular targets, tools such as Prompt-MolOpt leverage Large Language Models (LLMs) for multi-property molecular optimization, enabling the design of molecules tailored to specific property requirements [10]. The quantitative metrics for target selection are summarized in Table 1.

Table 1: Quantitative Metrics for Data-Driven Target Selection

| Method/Model | Primary Function | Reported Output/Scale | Key Performance Metric |
|---|---|---|---|
| GNoME Intelligent Model [13] | Crystal structure prediction | 421,000+ stable materials discovered | ~10x increase in known stable structures |
| Prompt-MolOpt [10] | Multi-property molecular optimization | Optimized molecular structures | Remarkable performance in preserving pharmacophores |
| Bayesian Optimization [13] | Search space optimization | Minimized trials to convergence | Efficient global optimum identification |

Reaction Pathway Exploration with ARplorer

Once a target is identified, the next critical step is to explore its potential energy surface (PES) to identify feasible reaction pathways. The ARplorer program exemplifies a modern approach to this challenge, integrating quantum mechanics (QM) with rule-based methodologies underpinned by LLM-guided chemical logic [10].

Protocol: Automated Reaction Pathway Exploration with ARplorer

- Objective: To automatically identify viable reaction pathways and transition states for a given molecular system.

- Software Requirements: ARplorer (Python and Fortran-based), compatible QM software (e.g., Gaussian 09), GFN2-xTB for semi-empirical calculations.

- Procedure:

  - Input Preparation: Convert the reaction system into Simplified Molecular Input Line Entry System (SMILES) format.

  - Chemical Logic Curation:

    - Access a pre-generated general chemical logic library from literature.

    - Generate system-specific chemical logic and SMARTS patterns using specialized LLMs via prompt engineering [10].

  - Active Site Identification: Utilize the Pybel Python module to compile a list of active atom pairs and potential bond-breaking locations [10].

  - Recursive Pathway Search: Execute the ARplorer algorithm, which operates iteratively:

    - Step 1: Set up multiple input molecular structures based on active site analysis.

    - Step 2: Perform iterative transition state (TS) searches, employing a blend of active-learning sampling and potential energy assessments.

    - Step 3: Conduct Intrinsic Reaction Coordinate (IRC) analysis to derive new reaction pathways, eliminate duplicates, and finalize structures for the next cycle [10].

  - Pathway Validation: Confirm the identified pathways and TS using higher-fidelity computational methods like Density Functional Theory (DFT).
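The recursive search in the procedure above has the shape of a cycle-by-cycle graph exploration with duplicate elimination. The sketch below shows only that control flow and is not ARplorer's algorithm: `TOY_STEPS` is a hypothetical lookup table standing in for the TS search and IRC analysis, the species labels and barrier values are invented, and the barrier cut-off is an assumed filter.

```python
from collections import deque

# Hypothetical stand-in for TS search + IRC analysis:
# structure -> list of (product, barrier) pairs, barriers in arbitrary energy units.
TOY_STEPS = {
    "R": [("I1", 15.2), ("I2", 31.0)],
    "I1": [("P", 12.4), ("R", 9.8)],
    "I2": [("P", 8.1)],
}


def explore(start, find_steps, max_barrier=25.0, max_cycles=5):
    """Cycle-wise exploration: expand each new structure once, skip duplicates."""
    seen = {start}
    pathways = []
    frontier = deque([start])
    for _ in range(max_cycles):
        next_frontier = deque()
        while frontier:
            structure = frontier.popleft()
            for product, barrier in find_steps(structure):
                if barrier > max_barrier:
                    continue  # assumed energy filter: discard high-barrier steps
                pathways.append((structure, product, barrier))
                if product not in seen:  # duplicate elimination
                    seen.add(product)
                    next_frontier.append(product)
        if not next_frontier:
            break  # nothing new to expand in the next cycle
        frontier = next_frontier
    return pathways
```

Calling `explore("R", lambda s: TOY_STEPS.get(s, []))` records three elementary steps and never expands the high-barrier branch through `I2`; in a real workflow each surviving pathway would then go to higher-fidelity validation.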

The following diagram illustrates the logical workflow of the ARplorer program:

Diagram 1: The ARplorer program integrates LLM-guided chemical logic with recursive QM calculations to automate the exploration of reaction pathways [10].

Autonomous Synthesis and Experimental Execution

Following the computational prediction of targets and pathways, the workflow moves to the physical realm of synthesis within an autonomous laboratory.

The Autonomous Laboratory Framework

An autonomous laboratory is an embodied intelligence-driven platform that integrates several fundamental elements to close the "predict-make-measure" discovery loop [13]. These elements include:

- Chemical Science Databases: Serve as the knowledge backbone, integrating structured and unstructured data from sources like Reaxys, PubChem, and scientific literature, often organized via Knowledge Graphs (KGs) constructed with LLMs [13].

- Large-Scale Intelligent Models: Act as the decision-making core, using algorithms like Bayesian Optimization and Genetic Algorithms (GAs) to plan experiments based on prior data [13].

- Automated Experimental Platforms: Robotic systems that execute synthesis and handling tasks.

- Integrated Management & Decision Systems: Software that orchestrates the entire closed-loop operation [13].

Protocol: Closed-Loop Operation for Thin-Film Materials Discovery

- Objective: To autonomously synthesize and optimize a thin-film material library based on iterative computational guidance.

- Platform Configuration: This protocol aligns with platforms like the Ada self-driving laboratory for thin-film materials, which utilizes the Phoenics algorithm (a Bayesian optimization method) [13].

- Hardware Requirements: Physical vapor deposition (PVD) chambers with automated X-Y motion stages for combinatorial deposition [14].

- Procedure:

- Initialization: The management system receives a target material property. An intelligent model proposes an initial set of synthesis parameters (e.g., chemical composition gradients, substrate temperature gradients).

- Combinatorial Deposition: Robotic systems prepare a material library via PVD, creating well-controlled gradients across a substrate [14].

- Synthesis Data Logging: All synthesis parameters (composition, temperature, thickness) are automatically recorded and mapped to their specific locations on the substrate library [14].

- Analysis and Decision: After characterization, the data is fed back to the Bayesian optimization model. The model analyzes the results, updates its internal surrogate model, and proposes a new, more optimal set of synthesis parameters for the next iteration [13].

- Iteration: The loop (Steps 2-4) continues autonomously until a convergence criterion (e.g., target property achieved) is met.
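The analysis-and-decision step can be illustrated with a generic Bayesian optimization loop. Note the substitution: the sketch uses a Gaussian-process surrogate with an upper-confidence-bound acquisition, which is a common textbook choice and not the Phoenics algorithm. The `measure` function is a hypothetical stand-in for deposition plus characterization, the single parameter `x` is a normalized synthesis variable, and all hyperparameters are assumptions.

```python
import math


def rbf(a, b, length=0.2):
    """Squared-exponential kernel between two scalar inputs."""
    return math.exp(-0.5 * ((a - b) / length) ** 2)


def cholesky(m):
    """Lower-triangular Cholesky factor of a symmetric positive-definite matrix."""
    n = len(m)
    low = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(low[i][k] * low[j][k] for k in range(j))
            low[i][j] = math.sqrt(m[i][i] - s) if i == j else (m[i][j] - s) / low[j][j]
    return low


def solve_lower(low, b):
    """Solve low @ y = b by forward substitution."""
    y = []
    for i in range(len(b)):
        y.append((b[i] - sum(low[i][k] * y[k] for k in range(i))) / low[i][i])
    return y


def solve_upper_t(low, y):
    """Solve low.T @ x = y by back substitution."""
    n = len(y)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(low[k][i] * x[k] for k in range(i + 1, n))) / low[i][i]
    return x


def gp_posterior(xs, ys, x_new, noise=1e-4):
    """Posterior mean and standard deviation of a zero-mean GP at x_new."""
    k = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(xs)]
         for i, a in enumerate(xs)]
    low = cholesky(k)
    alpha = solve_upper_t(low, solve_lower(low, ys))
    k_star = [rbf(a, x_new) for a in xs]
    mean = sum(ks * al for ks, al in zip(k_star, alpha))
    v = solve_lower(low, k_star)
    var = max(rbf(x_new, x_new) - sum(vi * vi for vi in v), 0.0)
    return mean, math.sqrt(var)


def next_experiment(xs, ys, grid, kappa=2.0):
    """Pick the untested grid point with the highest upper confidence bound."""
    def ucb(x):
        mean, std = gp_posterior(xs, ys, x)
        return mean + kappa * std
    return max((x for x in grid if x not in xs), key=ucb)


def measure(x):
    """Hypothetical property measurement with an optimum at x = 0.7."""
    return math.exp(-((x - 0.7) / 0.15) ** 2)


def run_campaign(n_iter=10):
    grid = [i / 50 for i in range(51)]
    xs = [0.0, 0.5, 1.0]  # initial library
    ys = [measure(x) for x in xs]
    for _ in range(n_iter):
        x = next_experiment(xs, ys, grid)
        xs.append(x)
        ys.append(measure(x))
    return xs, ys
```

The `kappa` parameter sets the balance between exploring uncertain regions and exploiting the current best estimate; a production system would typically use a maintained optimization library rather than hand-written linear algebra.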

Diagram 2: The closed-loop predict-make-measure-analyze cycle of an autonomous laboratory, enabling self-driving experimentation [13].

High-Throughput Characterization and Data Analysis

Rapid, automated characterization is essential for providing feedback within the autonomous loop.

Spatially Resolved Characterization

The material libraries generated by combinatorial deposition are analyzed using characterization instruments equipped with automatically controlled X-Y motion stages. This enables precise mapping of properties (e.g., optical, electronic, structural) as a function of position, and consequently, as a function of the synthesis parameters like composition and temperature [14].
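The mapping from stage position to synthesis parameters can be sketched as follows. The sketch assumes perfectly linear gradients, with composition varying along X and substrate temperature along Y; the substrate dimensions, grid size, and parameter ranges are hypothetical, and real libraries would usually need a measured calibration instead.

```python
def library_map(nx=5, ny=4, width_mm=50.0, height_mm=40.0,
                comp_range=(0.0, 1.0), temp_range_c=(100.0, 400.0)):
    """List measurement points with the synthesis parameters assumed at each.

    Assumes linear gradients: composition along X, temperature along Y.
    """
    points = []
    for iy in range(ny):
        for ix in range(nx):
            fx = ix / (nx - 1)  # fractional position along X
            fy = iy / (ny - 1)  # fractional position along Y
            points.append({
                "x_mm": fx * width_mm,
                "y_mm": fy * height_mm,
                "composition": comp_range[0] + fx * (comp_range[1] - comp_range[0]),
                "temperature_c": temp_range_c[0] + fy * (temp_range_c[1] - temp_range_c[0]),
            })
    return points
```

Each dictionary can then be joined with the property measured at that stage position, which turns a map of property versus position into a map of property versus composition and temperature.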

Automated Data Analysis

The combinatorial synthesis and spatially resolved characterization of material libraries generate enormous datasets. To manage this, robust data analysis capabilities are required. These can include both local and network-based analysis pipelines designed to process the raw data and transform it into actionable knowledge and insights for the next experimental cycle [14].

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational and Experimental Resources

| Item/Resource | Function/Description | Application Note |
|---|---|---|
| ARplorer Software [10] | Automated exploration of reaction pathways and transition states. | Integrates QM with LLM-guided chemical logic for efficient PES searching. |
| GFN2-xTB [10] | Semi-empirical quantum mechanical method for fast PES generation. | Used for quick, large-scale screening of reaction pathways. |
| Gaussian 09 [10] | Software for electronic structure modeling. | Provides algorithms for searching PES; can be used for high-fidelity validation. |
| Bayesian Optimization [13] | An efficient algorithm for global optimization of black-box functions. | Core decision-making algorithm in autonomous labs for minimizing experiments to convergence. |
| Combinatorial PVD Chamber [14] | Instrument for creating material libraries with gradients in composition, temperature, etc. | Enables high-throughput synthesis of sample arrays for autonomous screening. |
| X-Y Motion Stage [14] | Automated stage for positioning samples in characterization instruments. | Allows for spatially resolved mapping of properties across a material library. |

Key Challenges in Traditional Materials Development and the SDL Solution

The development of novel materials has historically been a time-intensive and resource-heavy process, often characterized by sequential experimentation and a significant degree of intuition. This traditional paradigm struggles to keep pace with the demand for sustainable, high-performance materials. This application note outlines the principal bottlenecks in conventional materials development and presents the Sustainable Development Lifecycle (SDL) as an integrated solution, with a specific focus on its application in reaction pathway prediction for autonomous materials synthesis. In this application note, SDL abbreviates Sustainable Development Lifecycle rather than the Self-Driving Lab referred to elsewhere in this article. This framework is particularly pertinent for researchers and scientists engaged in the design of next-generation materials for pharmaceuticals, energy storage, and sustainable construction.

Key Challenges in Traditional Materials Development

Traditional materials development is hampered by several interconnected challenges that limit its efficiency, sustainability, and scope. The table below summarizes these core bottlenecks.

Table 1: Core Challenges in Traditional Materials Development

| Challenge Category | Specific Limitations | Impact on Development |

|---|---|---|

| Environmental Impact | High emissions from concrete production; use of non-renewable, resource-intensive materials [15] [16]. | Contributes significantly to global CO₂ levels and conflicts with decarbonization goals. |

| Material Performance & Durability | Susceptibility to cracking (concrete); degradation from water, sunlight, and fungi (wood); limited load-bearing strength (earthen materials) [16]. | Shortens service life, increases maintenance, and restricts application in demanding environments. |

| Process Inefficiency | Reliance on sequential, trial-and-error experimentation; lengthy development cycles for new chemistries and composites [15]. | Slows time-to-market and limits the exploration of a wide material design space. |

| Safety & Toxicity | Traditional toxicity testing is time-consuming, costly, and ethically complex; challenges in assessing mixture exposures [17]. | Hinders the rapid implementation of "Safe and Sustainable by Design" (SSbD) principles for new materials. |

| Data Management & Integration | Lack of integrated data streams from synthesis, characterization, and lifecycle analysis [18]. | Prevents a holistic view of material properties and sustainability, impeding informed decision-making. |

The SDL Solution: A Paradigm for Sustainable and Efficient Materials Development

The Sustainable Development Lifecycle (SDL) is a holistic framework that integrates data-driven design, advanced processing, and circular economy principles to overcome traditional limitations. It leverages reaction pathway prediction as a core enabling technology for autonomous materials development, creating a closed-loop system that continuously learns and optimizes.

The following diagram illustrates the integrated workflow of the SDL, highlighting how it connects data, prediction, and sustainable action.

Core Pillars of the SDL Framework

- Data-Driven Materials Design: The SDL utilizes a centralized knowledge base that aggregates data from synthesis parameters, advanced characterization (e.g., in-situ monitoring), and lifecycle assessments [18] [17]. This data foundation is essential for training machine learning models for accurate reaction pathway prediction.

- Safe and Sustainable by Design (SSbD): The framework embeds sustainability and safety at the molecular design phase. This involves using predictive toxicology and New Approach Methodologies (NAMs) for early hazard identification, and selecting feedstocks and pathways that minimize environmental impact [17].

- Advanced Processing and Automation: The SDL integrates robotic synthesis and autonomous laboratories to execute predicted reaction pathways rapidly and reproducibly. This enables high-throughput experimentation and closes the loop between design and validation [16].

- Circularity and Regenerative Lifecycle: The framework prioritizes the use of abundant, bio-based, or waste-derived feedstocks (e.g., excavated earth, bamboo, CO₂) [16] [17]. It also designs materials for disassembly, reuse, or biodegradable end-of-life scenarios, transforming the traditional linear model into a circular one.

Experimental Protocols for SDL Implementation

This section provides detailed methodologies for key experiments that operationalize the SDL framework, with a focus on generating data for reaction pathway prediction.

Protocol: High-Throughput Screening of Sustainable Composite Materials

Objective: To rapidly synthesize and characterize a library of bio-based composite materials for mechanical properties and sustainability metrics.

- Material Preparation:

- Feedstock Selection: Prepare a matrix of biopolymers (e.g., Polylactic Acid - PLA) and reinforcement materials (e.g., Bamboo fiber powder, silica aerogel) [15].

- Mixing and Processing: Use an automated twin-screw compounder to prepare composite blends with varying reinforcement ratios (e.g., 1%, 5%, 10% by weight). Processing parameters (temperature, screw speed) should be logged for each run.

- Automated Specimen Fabrication: Employ a robotic press or injection molding system to fabricate standardized tensile and impact test specimens (e.g., ASTM D638 Type I) from each composite blend.

- High-Throughput Characterization:

- Mechanical Testing: Use an automated universal testing machine equipped with a load cell and extensometer to perform tensile tests. Record Young's Modulus, Tensile Strength, and Elongation at Break.

- Barrier Properties: Measure water vapor transmission rate (WVTR) and oxygen transmission rate (OTR) using calibrated sensors.

- Data Logging and Integration: Automatically log all synthesis parameters, processing conditions, and characterization results into the centralized SDL Knowledge Base. This dataset is critical for training predictive models that link composition to performance.
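The formulation matrix and logging step of this protocol might be sketched as follows. The record fields, processing values, and measurement numbers are placeholders chosen for illustration, and the "knowledge base" here is simply a list of JSON strings rather than any specific database.

```python
import itertools
import json
from dataclasses import dataclass, asdict, field

@dataclass
class CompositeRun:
    matrix: str
    reinforcement: str
    loading_wt_pct: float
    barrel_temp_c: float
    screw_speed_rpm: float
    results: dict = field(default_factory=dict)  # filled after characterization

def build_design_matrix(matrices, reinforcements, loadings,
                        barrel_temp_c=180.0, screw_speed_rpm=200.0):
    """Enumerate every matrix / reinforcement / loading combination.
    The default processing values are placeholders."""
    return [
        CompositeRun(m, r, w, barrel_temp_c, screw_speed_rpm)
        for m, r, w in itertools.product(matrices, reinforcements, loadings)
    ]

runs = build_design_matrix(
    matrices=["PLA"],
    reinforcements=["bamboo fiber powder", "silica aerogel"],
    loadings=[1.0, 5.0, 10.0],
)

# Hypothetical characterization results for the first blend.
runs[0].results = {
    "youngs_modulus_gpa": 3.6,
    "tensile_strength_mpa": 58.0,
    "elongation_at_break_pct": 4.1,
}

# Serialize each run so synthesis and characterization data stay together.
knowledge_base = [json.dumps(asdict(run), sort_keys=True) for run in runs]
print(len(knowledge_base), knowledge_base[0])
```

Keeping processing conditions and results in one record per blend is what allows a later model to learn composition-to-performance relationships directly from the log.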

Protocol: Predictive Hazard Assessment for Novel Material Chemistries

Objective: To implement a Safe-and-Sustainable-by-Design (SSbD) workflow using in silico and high-throughput in vitro methods for early-stage hazard assessment of new material building blocks [17].

- In Silico Screening (Tier 1):

- Utilize quantitative structure-activity relationship (QSAR) models and software tools to predict physicochemical properties and toxicity endpoints (e.g., mutagenicity, aquatic toxicity) for proposed molecular structures.

- Perform a preliminary lifecycle screening using simplified LCA tools to flag potential high-impact feedstocks or processes.

- High-Content Screening (Tier 2):

- For candidates passing Tier 1, proceed to in vitro testing using cell-based assays (e.g., human hepatocyte models for cytotoxicity).

- Employ high-content imaging and analysis to evaluate multiple adverse outcome pathways (AOPs) simultaneously, such as mitochondrial membrane potential and reactive oxygen species generation.

- Data for SSbD: Integrate the predicted and experimental hazard data with the performance metrics from the high-throughput screening protocol above. This multi-parameter dataset allows the SDL Decision Engine to identify material candidates that optimally balance performance, safety, and sustainability.
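The tiered gating described above can be expressed as a pair of filters followed by a performance ranking. This is a simplified sketch: the field names, the 0.8 viability cutoff, and the candidate data are assumptions, and a real decision engine would weigh many more endpoints.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Candidate:
    name: str
    qsar_mutagenic: bool                     # Tier 1 in silico flag
    lca_high_impact: bool                    # Tier 1 lifecycle screening flag
    cell_viability: Optional[float] = None   # Tier 2 result, fraction of control
    tensile_strength_mpa: float = 0.0        # performance metric

def tier1_pass(c):
    """In silico gate: reject flagged hazards or high-impact feedstocks."""
    return not (c.qsar_mutagenic or c.lca_high_impact)

def tier2_pass(c, min_viability=0.8):
    """In vitro gate: require a measured viability above the cutoff."""
    return c.cell_viability is not None and c.cell_viability >= min_viability

def shortlist(candidates):
    """Apply both tiers, then rank survivors by performance."""
    survivors = [c for c in candidates if tier1_pass(c) and tier2_pass(c)]
    return sorted(survivors, key=lambda c: c.tensile_strength_mpa, reverse=True)

pool = [
    Candidate("A", False, False, 0.92, 55.0),
    Candidate("B", True, False, None, 70.0),    # fails Tier 1, never tested
    Candidate("C", False, False, 0.65, 80.0),   # fails Tier 2
    Candidate("D", False, False, 0.85, 61.0),
]
ranked = [c.name for c in shortlist(pool)]
print(ranked)
```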

The Scientist's Toolkit: Research Reagent Solutions

The following table details key materials and reagents essential for experiments within the SDL framework, particularly those focused on developing sustainable materials.

Table 2: Essential Research Reagents for Sustainable Materials Development

| Reagent/Material | Function & Application | Sustainable & Safety Considerations |

|---|---|---|

| Polylactic Acid (PLA) | A biodegradable thermoplastic polymer used as a matrix for bio-composites in sustainable packaging and consumer products [15]. | Derived from renewable resources like corn starch; requires industrial composting for degradation. |

| Bamboo Fiber Powder | A natural fiber used as a reinforcement in polymer composites to improve tensile strength and modulus, replacing synthetic fibers [15]. | Fast-growing, high-carbon-sequestration biomass; requires consideration of binding resins and processing. |

| Silica Aerogel | A nanoporous solid used as an additive to enhance the mechanical and barrier properties (e.g., WVTR) of composites, or as a highly efficient insulation material [15]. | Offers superior thermal performance reducing operational energy; synthesis can be energy-intensive. |

| Phase-Change Materials (PCMs) | Substances (e.g., paraffin wax, salt hydrates) used in thermal energy storage systems for buildings, storing/releasing heat during phase transitions [15]. | Enable energy efficiency in heating and cooling; material sourcing and long-term stability are key factors. |

| Liquid Earth Formulations | Clay-rich soil mixed with natural additives for use in rammed-earth construction, providing a low-carbon alternative to concrete walls [16]. | Abundant, low-emission material; research focuses on additives to enhance water resistance and strength. |

| Trass Lime | A natural pozzolanic material (volcanic rock) used as an additive in earthen constructions to increase durability and compressive strength [16]. | A natural material that can reduce the carbon footprint of binders compared to Portland cement. |

The transition from traditional materials development to the data-centric, autonomous SDL framework represents a fundamental shift in materials science. By directly addressing the challenges of environmental impact, process inefficiency, and safety through integrated pillars of data-driven design, SSbD, and advanced processing, the SDL offers a viable pathway to accelerate the discovery and deployment of sustainable materials. The integration of reaction pathway prediction acts as the central nervous system of this framework, enabling a proactive and intelligent design process. The experimental protocols and research tools detailed herein provide a tangible starting point for research teams to implement this paradigm, ultimately contributing to a more sustainable and efficient materials future.

AI in Action: Methodologies and Real-World Applications in Pathway Prediction

The integration of Large Language Models (LLMs) into chemical research represents a paradigm shift from their role as direct structure generators to sophisticated reasoning engines that guide traditional search algorithms. This approach leverages the strategic understanding of LLMs while maintaining the precision of established computational tools, creating a powerful synergy for autonomous materials synthesis [19]. By framing LLMs as intelligent guides, researchers can now tackle two of the most intellectually demanding tasks in chemistry: strategy-aware retrosynthetic planning and reaction mechanism elucidation, with unprecedented efficiency and strategic depth [19]. This Application Note provides detailed protocols and frameworks for implementing LLM-guided systems to enhance reaction pathway prediction within autonomous discovery workflows.

Quantitative Performance of LLMs in Chemical Tasks

Recent systematic evaluations demonstrate that current LLMs exhibit robust capabilities in analyzing chemical entities and strategic patterns, with performance strongly correlating with model scale [19].

Table 1: Performance of LLM Models in Strategy-Aware Retrosynthetic Planning

| Model | Short Route Performance | Complex Route Performance | Strategy Alignment Capability |

|---|---|---|---|

| Claude-3.7-Sonnet | High | Moderate to High | Advanced strategic understanding |

| Claude-3.5 | Moderate to High | Moderate | Good strategic tracking |

| GPT-4o | Moderate | Limited | Basic strategy evaluation |

| DeepSeek-V3 | Moderate | Limited | Basic strategy evaluation |

| GPT-4o-mini | Poor (indistinguishable from random) | Poor | Minimal strategic reasoning |

Table 2: LLM-Guided Synthesis Success Rates in Autonomous Systems

| Application Domain | Success Rate | Key Performance Metrics | Limitations |

|---|---|---|---|

| Solid-state inorganic synthesis (A-Lab) | 71-78% | 41/58 novel compounds synthesized | Slow kinetics, precursor volatility [7] |

| Organic molecule synthesis (ChemCrow) | High (validated cases) | Successful synthesis of insect repellent, organocatalysts | Procedure validation required [20] |

| Reaction pathway exploration (ARplorer) | Enhanced efficiency | Accelerated PES searching with LLM-guided logic | System-specific adaptations needed [10] |

Experimental Protocols

Protocol: LLM-Guided Retrosynthetic Planning with Strategic Constraints

Purpose: To implement strategy-aware retrosynthetic planning using LLMs as reasoning engines to guide search algorithms toward routes satisfying natural language constraints.

Materials and Reagents:

- LLM API access (Claude-3.7-Sonnet, GPT-4o, or equivalent)

- Traditional retrosynthetic planning software (ASKCOS, AiZynthFinder, or IBM RXN)

- Molecular structure input (SMILES, SDF, or other standard format)

- Natural language strategy specification

Procedure:

- Input Preparation:

- Define target molecule using standardized representation (SMILES preferred)

- Formulate strategic constraints in natural language (e.g., "construct pyrimidine ring in early stages," "avoid sensitive functional groups until final steps")

- System Configuration:

- Integrate LLM as evaluation module within existing retrosynthetic search framework

- Configure LLM prompt structure with explicit instructions for chemical reasoning

- Set scoring parameters for route-to-prompt alignment assessment

- Search Execution:

- Traditional search algorithm generates candidate synthetic routes

- LLM evaluates each route against strategic constraints

- LLM provides chemical rationale for each evaluation

- System iteratively refines routes based on LLM feedback

- Output Analysis:

Troubleshooting:

- For long synthetic sequences (>20 steps), implement chunked analysis to maintain evaluation accuracy

- If strategic alignment is poor, refine natural language constraints with more specific chemical terminology

- For invalid chemical suggestions, augment LLM with structure validation tools
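The division of labor in this protocol, where a conventional search proposes routes and the LLM only scores them against a strategy, can be sketched as a reranking step. The `early_stage_scorer` below is a toy keyword heuristic standing in for the LLM call; in a real system the route and the natural-language constraint would be sent to the model and a score and rationale parsed from its reply. Route contents and function names are hypothetical.

```python
def early_stage_scorer(route, keyword):
    """Toy stand-in for the LLM evaluator: rewards routes in which a step
    mentioning `keyword` appears early. Returns a score in [0, 1]."""
    for index, step in enumerate(route):
        if keyword in step.lower():
            return 1.0 - index / max(len(route) - 1, 1)
    return 0.0

def rank_routes(routes, scorer, top_k=2):
    """Score every candidate route and keep the best-aligned ones."""
    scored = [(scorer(route), route) for route in routes]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:top_k]

# Candidate routes as produced by a traditional search algorithm (made up).
routes = [
    ["Suzuki coupling", "Boc deprotection", "pyrimidine ring formation"],
    ["pyrimidine ring formation", "Suzuki coupling", "Boc deprotection"],
    ["amide coupling", "pyrimidine ring formation", "ester hydrolysis"],
]

# Constraint: "construct pyrimidine ring in early stages"
best = rank_routes(routes, lambda r: early_stage_scorer(r, "pyrimidine ring"))
for score, route in best:
    print(score, route)
```

Because the scorer is passed in as a callable, the heuristic can be swapped for an actual LLM client without changing the search loop.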

Protocol: LLM-Guided Reaction Mechanism Elucidation

Purpose: To elucidate plausible reaction mechanisms by combining LLM understanding of chemical principles with systematic exploration of electron-pushing steps.

Materials and Reagents:

- LLM with chemical reasoning capabilities

- Quantum mechanics calculation software (Gaussian, GFN2-xTB)

- Reaction representation system (bond-electron matrix, SMILES)

- Experimental data (reactants, products, potential intermediates)

Procedure:

- Reaction Setup:

- Input reactant and product structures

- Define known experimental constraints or observations

- Select calculation level for energy evaluations

- Mechanism Exploration:

- LLM identifies potential reactive sites and elementary steps

- System enumerates possible electron-pushing steps

- LLM evaluates chemical plausibility of each step

- QM calculations validate energy feasibility of LLM-selected steps

- Pathway Assembly:

- LLM guides assembly of elementary steps into complete mechanisms

- Active learning approach identifies key transition states

- LLM prioritizes mechanisms consistent with chemical principles

- Validation:

Troubleshooting:

- For complex systems, implement hierarchical exploration focusing on highest-probability steps first

- If LLM suggests chemically implausible steps, augment with explicit rule-based constraints

- For catalytic systems, ensure proper handling of metal centers and coordination chemistry
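One way to picture the interplay between LLM plausibility screening and QM validation is a two-stage filter: only the most plausible elementary steps are sent for energy evaluation, and only those with acceptable barriers are kept. The plausibility scores, barrier values, and the 25 kcal/mol cutoff below are placeholders, not outputs of any real model or calculation.

```python
from dataclasses import dataclass

@dataclass
class ElementaryStep:
    label: str
    plausibility: float  # 0-1 score; in practice parsed from the LLM's evaluation

def select_for_qm(steps, min_plausibility=0.5, budget=3):
    """Keep plausible steps and send only the top `budget` to QM."""
    plausible = [s for s in steps if s.plausibility >= min_plausibility]
    plausible.sort(key=lambda s: s.plausibility, reverse=True)
    return plausible[:budget]

def energetically_feasible(steps, barriers_kcal_mol, max_barrier=25.0):
    """Keep steps whose computed barrier is at or below the cutoff."""
    return [s for s in steps if barriers_kcal_mol[s.label] <= max_barrier]

candidates = [
    ElementaryStep("proton transfer", 0.9),
    ElementaryStep("nucleophilic addition", 0.8),
    ElementaryStep("1,2-hydride shift", 0.6),
    ElementaryStep("four-membered ring closure", 0.2),
]
shortlisted = select_for_qm(candidates)

# Placeholder barriers; a real workflow would compute these with GFN2-xTB or DFT.
barriers = {
    "proton transfer": 8.0,
    "nucleophilic addition": 17.5,
    "1,2-hydride shift": 31.0,
}
accepted = energetically_feasible(shortlisted, barriers)
print([s.label for s in accepted])
```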

Protocol: Autonomous Synthesis Execution with LLM Guidance

Purpose: To implement end-to-end autonomous synthesis from planning to physical execution using LLM-guided systems.

Materials and Reagents:

- LLM chemistry agent (ChemCrow or equivalent)

- Robotic synthesis platform (RoboRXN or equivalent)

- Chemical precursor databases

- Synthesis validation tools

Procedure:

- Target Identification:

- Define desired compound or material properties

- LLM searches for candidate molecules meeting criteria

- Select target based on synthetic accessibility

- Route Planning:

- LLM plans synthetic route using retrosynthetic analysis

- Validate route with reaction prediction tools

- Check precursor availability in database

- Procedure Optimization:

- LLM generates detailed experimental procedure

- Validate procedure for robotic platform compatibility

- Iteratively adjust parameters (solvent volumes, purification) until fully valid

- Execution:

- Deploy validated procedure to robotic platform

- Monitor reaction progress autonomously

- Characterize products and assess yield [20]

Troubleshooting:

- For procedure validation failures, implement iterative adjustment with platform-specific constraints

- If yields are low, employ active learning to optimize reaction conditions

- For novel compounds, validate identity through multiple characterization techniques
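The "adjust until fully valid" step of this protocol is essentially a bounded validate-and-repair loop. The sketch below uses a dictionary as a hypothetical procedure format and hard-coded repair rules; in an LLM-driven system the `adjust` step would instead be the model revising the procedure in response to the validator's error messages. None of the field names correspond to a real platform API.

```python
def validate(procedure, limits):
    """Return the list of fields that violate the platform limits."""
    issues = []
    if procedure["solvent_ml"] > limits["max_volume_ml"]:
        issues.append("solvent_ml")
    if procedure["purification"] not in limits["purifications"]:
        issues.append("purification")
    return issues

def adjust(procedure, issues, limits):
    """Rule-based repair standing in for an LLM revision step."""
    fixed = dict(procedure)
    if "solvent_ml" in issues:
        fixed["solvent_ml"] = limits["max_volume_ml"]
    if "purification" in issues:
        fixed["purification"] = limits["purifications"][0]
    return fixed

def make_valid(procedure, limits, max_rounds=5):
    """Iterate validation and adjustment; fail loudly if it never converges."""
    for round_number in range(max_rounds):
        issues = validate(procedure, limits)
        if not issues:
            return procedure, round_number
        procedure = adjust(procedure, issues, limits)
    raise RuntimeError("procedure still invalid after max_rounds")

platform_limits = {
    "max_volume_ml": 25.0,
    "purifications": ["filtration", "liquid-liquid extraction"],
}
draft = {"solvent_ml": 40.0, "purification": "column chromatography"}
final, rounds = make_valid(draft, platform_limits)
print(final, rounds)
```

Capping the number of rounds prevents the loop from cycling indefinitely when a procedure cannot be made compatible with the platform.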

Workflow Visualization

LLM-Guided Retrosynthetic Planning Workflow

LLM-Guided Reaction Mechanism Exploration

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 3: Key Research Reagents and Platforms for LLM-Guided Chemistry

| Tool/Platform | Function | Application Context | Access |

|---|---|---|---|

| ChemCrow | LLM chemistry agent with 18 expert-designed tools | Organic synthesis, drug discovery, materials design | Open source [20] |

| ARplorer | Automated reaction pathway exploration | Potential energy surface studies, mechanism elucidation | Research code [10] |

| IBM RXN | Reaction prediction and synthesis planning | Retrosynthetic analysis, reaction outcome prediction | Web platform [21] |

| AiZynthFinder | Retrosynthetic planning | Synthetic route discovery | Open source [21] |

| RoboRXN | Cloud-connected robotic synthesis | Autonomous reaction execution | Platform access required [20] |

| FlowER | Reaction prediction with physical constraints | Electron-conserving reaction prediction | Open source [9] |

| ASKCOS | Computer-aided synthesis planning | Retrosynthetic analysis and reaction condition recommendation | Open source [21] |

| AlchemyBench | Materials synthesis benchmark | Evaluation of synthesis prediction models | Research dataset [22] |

Implementation Considerations

Model Selection and Scaling

The effectiveness of LLM-guided chemical reasoning demonstrates strong scaling with model size, with smaller models showing performance indistinguishable from random selection [19]. For research implementation:

- Prioritize larger models (Claude-3.7-Sonnet, GPT-4) for complex strategic reasoning

- Fine-tune smaller models for specific chemical subdomains when computational resources are limited

- Consider ensemble approaches combining multiple LLMs for critical evaluations
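A simple form of the ensemble idea is to collect one score per model and aggregate with the median, while flagging routes on which the models disagree strongly. The scorers below are fixed-value placeholders for separate LLM evaluators, and the 0.4 disagreement threshold is an arbitrary assumption.

```python
import statistics

def ensemble_score(route, scorers, max_spread=0.4):
    """Aggregate per-model scores with the median and flag disagreement."""
    scores = [scorer(route) for scorer in scorers]
    spread = max(scores) - min(scores)
    return {
        "median": statistics.median(scores),
        "spread": spread,
        "needs_review": spread > max_spread,
    }

# Placeholder scorers; each would wrap a different LLM in practice.
scorers = [lambda route: 0.8, lambda route: 0.7, lambda route: 0.2]
summary = ensemble_score(["step 1", "step 2"], scorers)
print(summary)
```

The median is less sensitive than the mean to a single outlying model, which matters when one evaluator misreads the route.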

Data Quality and Curation

LLM performance in chemical tasks depends heavily on training data quality and diversity:

- Curate diverse reaction datasets encompassing various reaction classes and conditions

- Include failed reactions to avoid bias toward only successful transformations

- Incorporate mechanistic data to enhance reasoning capabilities [21]

- Validate generated suggestions with physical constraints and expert knowledge

Integration with Physical Systems

For autonomous materials discovery, seamless integration between LLM reasoning and robotic execution is essential:

- Implement iterative validation between proposed procedures and platform constraints

- Develop feedback mechanisms to incorporate experimental results into reasoning cycles

- Establish safety protocols for autonomous execution of chemical reactions [7] [20]

The integration of Large Language Models as chemical guides represents a transformative approach to reaction planning and logic in autonomous materials synthesis. By leveraging LLMs as reasoning engines rather than direct structure generators, researchers can maintain chemical validity while incorporating sophisticated strategic thinking. The protocols and frameworks presented in this Application Note provide practical implementation guidelines for deploying these systems across various chemical domains, from organic synthesis to materials discovery. As these technologies continue to mature, the collaboration between human expertise and LLM-guided reasoning promises to accelerate the pace of chemical discovery while maintaining the rigorous standards of the field.

The acceleration of data-driven reaction development and catalyst design is fundamentally linked to our ability to rapidly and accurately explore chemical reaction pathways. ARplorer is an automated computational program that addresses this challenge by integrating quantum mechanics and rule-based methodologies, underpinned by a Large Language Model (LLM)-assisted chemical logic [10]. This application note details ARplorer's architecture, showcases its performance through quantitative case studies, and provides detailed protocols for its application in autonomous materials synthesis research. By employing active-learning methods and parallel multi-step reaction searches, ARplorer significantly enhances the efficiency of Potential Energy Surface (PES) exploration, positioning it as a powerful tool for accelerating discovery in pharmaceutical and materials chemistry [10].

ARplorer operates on a recursive algorithm designed to automate the exploration of complex reaction pathways. It is implemented in Python and Fortran, which supports robust numerical computation and flexible integration with electronic structure software [10]. The program's core aim is to overcome the limitations of conventional unfiltered PES searches, which are often impractical because they require extensive computing time and generate many unlikely pathways [10].

The architectural workflow can be visualized as a recursive cycle, consisting of three primary phases executed for each new intermediate identified during the exploration.

- Phase 1: Active Site and Input Setup. The program first identifies active sites and potential bond-breaking locations on the input molecular structure(s). This step sets up multiple input configurations for subsequent quantum mechanical analysis [10].

- Phase 2: Transition State Search and Optimization. An iterative TS search is performed, employing a blend of active-learning sampling and potential energy assessments. This approach refines the search for potential intermediates and transition states, enhancing computational efficiency [10].

- Phase 3: Pathway Validation and Deduplication. Intrinsic Reaction Coordinate (IRC) analyses are performed to derive new reaction pathways from the optimized transition states. Duplicate pathways are eliminated, and the resulting structures are finalized for use as inputs in the next recursive cycle [10].
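The recursive structure of these three phases can be illustrated with a queue-based traversal over a toy reaction network. This is not ARplorer's code: `find_connected_minima` is a hypothetical placeholder for the combined site setup, transition state search, and IRC analysis, and the network itself is invented for the example.

```python
from collections import deque

# Toy reaction network; keys are intermediates, values are the minima
# that the three phases would connect them to.
TOY_NETWORK = {
    "R": ["I1", "I2"],
    "I1": ["I2", "P"],
    "I2": ["P"],
    "P": [],
}

def find_connected_minima(intermediate):
    """Placeholder for Phases 1-3 applied to a single intermediate."""
    return TOY_NETWORK.get(intermediate, [])

def explore(start, max_depth=5):
    """Expand each new intermediate once, recording every elementary step."""
    seen = {start}
    pathways = []                      # (from, to) elementary steps
    queue = deque([(start, 0)])
    while queue:
        current, depth = queue.popleft()
        if depth >= max_depth:
            continue
        for product in find_connected_minima(current):
            pathways.append((current, product))
            if product not in seen:    # deduplicate before the next cycle
                seen.add(product)
                queue.append((product, depth + 1))
    return pathways, seen

pathways, intermediates = explore("R")
print(pathways)
print(sorted(intermediates))
```

Tracking `seen` structures is what keeps the search from re-expanding an intermediate reached by more than one route.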

A key feature of ARplorer is its flexibility in selecting computational methods. It can utilize the fast semi-empirical method GFN2-xTB for initial large-scale PES generation and screening, while allowing for more precise Density Functional Theory (DFT) calculations when necessary [10]. The program's workflow is designed to be largely independent of the quantum chemistry software package, requiring only minor adjustments for compatibility [10].

LLM-Guided Chemical Logic Framework

A defining innovation of ARplorer is its incorporation of a structured, LLM-guided chemical logic to bias the PES search towards chemically plausible pathways, moving beyond purely mathematical exploration.

The framework for building this chemical logic is twofold, synthesizing general knowledge and system-specific intelligence, as illustrated below.

- General Chemical Logic: This component is pre-generated by processing and indexing prescreened data sources, including textbooks, databases, and research articles, to form a broad chemical knowledge base. This knowledge is refined into general reaction patterns (encoded as SMARTS patterns) using specialized LLMs guided by prompt engineering to reduce output variance [10].

- System-Specific Chemical Logic: For a given reaction system, the reactants and potential intermediates are converted into the SMILES format. A specialized LLM, leveraging the general knowledge base and targeted prompts, then generates chemical logic and SMARTS patterns tailored to the specific functional groups and reaction types present in the system [10].

It is critical to note that in the current ARplorer workflow, the LLM serves exclusively as a literature mining tool during this initial knowledge curation phase. The program conducts fully deterministic reaction space exploration, and all energy evaluations, pathway rankings, and kinetic assessments are performed exclusively via first-principles quantum mechanical computations, ensuring rigorous adherence to physical laws [10].

Application Notes & Performance Data

ARplorer's effectiveness and versatility have been demonstrated through case studies on diverse multi-step reactions, including organic cycloadditions, asymmetric Mannich-type reactions, and organometallic Pt-catalyzed reactions [10]. The program's performance metrics are summarized in the table below.

Table 1: Key Performance Metrics of ARplorer's Automated Pathway Exploration

| Metric | Description | Value / Outcome |

|---|---|---|

| Computational Efficiency | Enhanced via active-learning TS sampling and parallel multi-step searches with efficient filtering [10]. | Significant improvement over conventional unfiltered PES search methods. |

| Program Versatility | Successfully applied to multi-step reaction types [10]. | Organic cycloaddition, asymmetric Mannich-type, organometallic Pt-catalyzed reaction. |

| Software Integration | Compatible with popular quantum chemistry packages [10]. | Combined with GFN2-xTB and Gaussian 09; adaptable to other specified software. |

| High-Throughput Capability | Scalability for parallel screening [10]. | Capable of scaling up for high-throughput screening. |

The program's capability to scale up for high-throughput screening significantly enhances its utility in data-driven reaction development and catalyst design, allowing researchers to rapidly survey vast chemical spaces that would be intractable manually [10].

Experimental Protocols

This section provides a detailed methodology for employing ARplorer in a computational research workflow, from initial setup to result analysis.

Protocol: Setting up an ARplorer Project

Goal: To initialize a computational project for automated reaction pathway exploration using ARplorer.

Reagents & Computational Tools:

- Hardware: A high-performance computing (HPC) cluster or a powerful workstation with multiple CPU cores.

- Software: ARplorer program (Python and Fortran), a compatible quantum chemistry package (e.g., Gaussian 09), and GFN2-xTB for semi-empirical calculations [10].

- Input Files: 3D molecular structure files (e.g., .xyz, .mol) of the reactant(s) in an optimized geometry.

Procedure:

- Input Preparation: Prepare and optimize the 3D molecular structure of your reactant(s) using a quantum chemical method (e.g., DFT). Convert this structure into a format recognized by ARplorer.

- System Specification: Provide the SMILES strings of the reaction system to ARplorer. This allows the integrated LLM to generate system-specific chemical logic and SMARTS patterns [10].

- Configuration: Set the computational parameters in the ARplorer configuration file. Key choices include:

- Level of Theory: Specify whether to use GFN2-xTB for fast scanning or a higher-level DFT method for more accurate results [10].

- Active Learning Parameters: Define thresholds for the active-learning algorithm used in transition state sampling.

- Parallelization: Set the number of CPU cores for parallel reaction searches.

Protocol: Executing an Automated Pathway Search

Goal: To run ARplorer and identify all kinetically relevant reaction pathways and transition states.

Procedure:

- Job Launch: Execute the ARplorer program from the command line on your HPC system.

- Recursive Workflow: The program will automatically run its three-phase recursive workflow:

- Phase 1: The program analyzes the input structure to identify active atom pairs and potential bond-breaking locations, setting up multiple input configurations [10].

- Phase 2: It performs iterative transition state searches, using active learning to home in on valid transition states and intermediates.

- Phase 3: For every located transition state, an IRC calculation is performed to confirm the connected minima. New intermediates are added to the queue, and duplicates are removed [10].

- Monitoring: Monitor the job output for progress and completion. The program will log discovered pathways, intermediates, and transition states.

Protocol: Analysis of Results

Goal: To extract meaningful chemical and kinetic insights from the completed ARplorer calculation.

Procedure:

- Pathway Enumeration: Review the final list of discovered reaction pathways. Each pathway is a sequence of intermediates connected by transition states.

- Energetic Profiling: Extract the relative energies of all intermediates and transition states along each pathway from the output files, and derive the reaction energy and activation barrier of each elementary step.

- Kinetic Analysis: Construct the potential energy profile for each candidate pathway. As a first approximation, the pathway whose highest activation barrier is lowest is typically the most kinetically favorable.

- Validation: Manually inspect the key transition state structures and IRC paths to ensure chemical reasonability, even though they are located via first-principles methods.
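The energetic profiling and kinetic comparison steps can be sketched as follows, assuming each pathway is stored as a list of relative free energies alternating minimum, transition state, minimum. The profiles are invented, and ranking by the highest single-step barrier is a simplification; the Eyring expression is used only to show how a barrier difference translates into a rate difference.

```python
import math

K_B = 1.380649e-23        # Boltzmann constant, J/K
H = 6.62607015e-34        # Planck constant, J*s
R_KCAL = 1.987204259e-3   # gas constant, kcal/(mol*K)

def step_barriers(profile):
    """profile alternates minimum, TS, minimum, ...; each barrier is the
    TS energy minus the energy of the minimum immediately before it."""
    return [profile[i + 1] - profile[i] for i in range(0, len(profile) - 1, 2)]

def eyring_rate(barrier_kcal_mol, temperature_k=298.15):
    """Eyring rate constant (1/s) for a unimolecular step."""
    exponent = -barrier_kcal_mol / (R_KCAL * temperature_k)
    return (K_B * temperature_k / H) * math.exp(exponent)

def rank_pathways(profiles):
    """Order pathways by their highest single-step barrier, lowest first."""
    limiting = {name: max(step_barriers(p)) for name, p in profiles.items()}
    return sorted(limiting.items(), key=lambda item: item[1])

# Hypothetical relative free energies in kcal/mol.
profiles = {
    "path_A": [0.0, 18.5, -3.2, 12.1, -10.4],
    "path_B": [0.0, 24.0, -10.4],
}
ranking = rank_pathways(profiles)
for name, barrier in ranking:
    print(name, barrier, eyring_rate(barrier))
```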

The Scientist's Toolkit

The following table details the key research reagents and computational solutions integral to operating ARplorer and similar platforms in autonomous materials synthesis.

Table 2: Essential Research Reagent Solutions for Automated Pathway Exploration

| Item Name | Function / Role | Specification / Notes |

|---|---|---|

| GFN2-xTB | Semi-empirical quantum chemical method for fast generation of Potential Energy Surfaces [10]. | Used for quick, large-scale screening; balances speed and accuracy. |

| DFT (e.g., via Gaussian) | Higher-level quantum mechanical method for precise energy and geometry calculations [10]. | Used for final, accurate characterization of promising pathways located by GFN2-xTB. |

| SMILES Strings | Simplified Molecular-Input Line-Entry System; a string representation of a molecular structure. | Serves as input for generating system-specific chemical logic via the LLM [10]. |

| SMARTS Patterns | A language for specifying molecular substructures and reaction transforms. | Encodes the chemical logic and reaction rules that guide the automated PES search [10]. |

| Active Learning Algorithm | A machine learning approach that selects the most informative data points to compute next. | Enhances efficiency by minimizing unnecessary quantum calculations during transition state sampling [10]. |

ARplorer represents a significant advancement in the field of automated reaction discovery. By strategically integrating the pattern-recognition capabilities of LLMs for chemical logic curation with the rigorous, first-principles evaluation of quantum mechanics, it achieves a new level of efficiency and practicality in exploring Potential Energy Surfaces. Its demonstrated success across a range of complex organic and organometallic reactions underscores its potential as a cornerstone tool for accelerating data-driven reaction development, catalyst design, and autonomous materials synthesis.

The "Rainbow" platform represents a transformative approach in autonomous materials science, specifically engineered to address the complex challenge of optimizing metal halide perovskite (MHP) nanocrystals (NCs). These NCs offer extraordinary tunability in optical properties, but fully exploiting this potential is challenged by a vast and complex synthesis parameter space involving both continuous and discrete variables [23]. Traditional materials development pipelines typically require 10-20 years, but self-driving laboratories (SDLs) like Rainbow aim to reduce this timeline to just 1-2 years through integrated closed-loop systems [24]. Rainbow distinguishes itself through its multi-robot architecture that autonomously navigates the 6-dimensional input/3-dimensional output parameter space of MHP NCs, systematically exploring critical structure-property relationships and identifying scalable Pareto-optimal formulations for targeted spectral outputs [23]. By operating continuously without human intervention, Rainbow achieves unprecedented experimental throughput, performing in days what would traditionally take human researchers years [25], thereby accelerating both fundamental synthesis science and the development of next-generation photonic materials.

Detailed Experimental Protocols

Rainbow Hardware Configuration and Integration

The Rainbow platform employs a sophisticated multi-robot architecture designed for parallelized experimentation and continuous operation. The hardware integration follows a systematic protocol:

Liquid Handling Robot System: Configured for NC precursor preparation and multi-step NC synthesis operations. The system manages precise liquid handling tasks including NC sampling for characterization and waste collection/management. Calibration protocols require daily verification of dispensing accuracy across the viscosity range of precursor solutions [23].

Characterization Robot Integration: A dedicated benchtop instrument equipped with UV-Vis absorption and emission spectroscopy capabilities for real-time optical characterization. The system performs automated measurements of photoluminescence quantum yield (PLQY), emission linewidth (FWHM), and peak emission energy (EP) after each synthesis iteration [23].

Robotic Plate Feeder: Programmed for automated labware replenishment to maintain continuous operation. The feeding mechanism accommodates standard microplate formats and requires loading according to a predefined laboratory layout map [23].

Robotic Transfer Arm: Serves as the critical interconnection system, facilitating sample and labware transfer between the other three robotic systems. Path optimization algorithms ensure collision-free operation and minimal transfer times between workstations [23].

Reactor System Configuration: The platform utilizes parallelized, miniaturized batch reactors specifically designed for handling discrete parameters in SDLs. Reactor vessels are compatible with room temperature reactions and designed for direct scalability to production volumes [23].

Autonomous Synthesis Workflow Protocol

The closed-loop optimization follows a meticulously defined experimental sequence:

Precursor Preparation: The liquid handling robot prepares precursor solutions according to AI-generated formulations. For CsPbX3 NC synthesis, this involves precise combination of cesium precursors, lead precursors (Pb(OA)2), and halide sources (Cl-, Br-, I-) in organic solvents [23]. Ligand solutions are prepared from organic acids with varying alkyl chain lengths to systematically investigate ligand structure-property relationships [23].

Multi-step NC Synthesis: The robotic system executes NC synthesis in parallelized batch reactors. The protocol encompasses both one-pot synthesis and post-synthesis halide exchange reactions, enabling precise bandgap tuning across the UV-vis spectral region [23]. Temperature control is maintained at 25°C ± 0.5°C throughout the synthesis process.

Real-time Sample Transfer: Upon reaction completion, the robotic arm transfers samples from synthesis reactors to the characterization instrument. Transfer timing is critical to ensure consistent characterization timepoints post-synthesis.

Automated Optical Characterization: The characterization robot acquires UV-Vis absorption and emission spectra for each synthesized NC sample. The system automatically calculates three key performance parameters: PLQY (%), FWHM (nm), and peak emission energy (eV) [23].

Data Processing and AI Decision-making: Characterization data is processed and fed to the machine learning algorithm. The AI agent, typically using Bayesian optimization methods, analyzes the results against target objectives and proposes new experimental conditions for the next iteration [23].

Closed-loop Iteration: The system automatically implements the AI-generated experimental proposals, beginning the next cycle of synthesis and characterization without human intervention. This loop continues until predefined optimization targets are achieved or the experimental budget is exhausted [23].
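The loop described above (propose, synthesize, characterize, update, repeat) can be illustrated with a compact, self-contained sketch. Several assumptions are made here and none of them come from the Rainbow publication: the search space is reduced to a single normalized input, `simulated_plqy` is a made-up stand-in for the robotic synthesis and measurement, and the surrogate is a zero-mean Gaussian process with an RBF kernel paired with an upper-confidence-bound rule. The source states only that Bayesian optimization is typically used, so this should be read as one possible instance, not the platform's implementation.

```python
import math


def simulated_plqy(x):
    """Hypothetical stand-in for synthesis plus characterization (not real data)."""
    return math.exp(-((x - 0.62) ** 2) / 0.02)


def rbf(a, b, length=0.15):
    """Squared-exponential kernel between two scalar inputs."""
    return math.exp(-0.5 * ((a - b) / length) ** 2)


def cholesky(A):
    """Lower-triangular Cholesky factor of a symmetric positive-definite matrix."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                L[i][j] = math.sqrt(A[i][i] - s)
            else:
                L[i][j] = (A[i][j] - s) / L[j][j]
    return L


def chol_solve(L, b):
    """Solve (L L^T) x = b by forward then backward substitution."""
    n = len(b)
    y = [0.0] * n
    for i in range(n):
        y[i] = (b[i] - sum(L[i][k] * y[k] for k in range(i))) / L[i][i]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x


def gp_posterior(xs, ys, x_new, noise=1e-4):
    """Posterior mean and standard deviation of a zero-mean GP at x_new."""
    K = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(xs)]
         for i, a in enumerate(xs)]
    L = cholesky(K)
    k_star = [rbf(a, x_new) for a in xs]
    mean = sum(k * w for k, w in zip(k_star, chol_solve(L, ys)))
    var = rbf(x_new, x_new) - sum(k * v for k, v in zip(k_star, chol_solve(L, k_star)))
    return mean, math.sqrt(max(var, 0.0))


def run_closed_loop(candidates, initial, budget, kappa=2.0):
    """Iterate propose -> measure -> update until the experimental budget is spent."""
    xs = list(initial)
    ys = [simulated_plqy(x) for x in xs]
    for _ in range(budget):
        untried = [c for c in candidates if c not in xs]
        if not untried:
            break

        def ucb(c):
            mean, std = gp_posterior(xs, ys, c)
            return mean + kappa * std  # exploitation term plus exploration bonus

        x_next = max(untried, key=ucb)        # AI proposal for the next experiment
        xs.append(x_next)
        ys.append(simulated_plqy(x_next))     # "synthesis" and "characterization"
    best_index = max(range(len(xs)), key=lambda i: ys[i])
    return xs, ys, xs[best_index]


candidates = [i / 20 for i in range(21)]
xs, ys, best_x = run_closed_loop(candidates, initial=[0.0, 1.0], budget=8)
```

The `kappa` parameter controls how strongly the loop favors untested regions over conditions already predicted to perform well; a real platform would also need to handle discrete inputs and multiple objectives, which this one-dimensional sketch omits.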

Machine Learning and Optimization Parameters

The AI-driven optimization protocol employs specific parameters and algorithms:

Objective Function Definition: The optimization target is defined as a multi-objective function seeking to maximize PLQY, minimize FWHM, and achieve a target peak emission energy (EP) simultaneously [23].

Search Space Configuration: The algorithm navigates a 6-dimensional input space comprising continuous parameters (precursor concentrations, reaction times) and discrete parameters (ligand structures, halide compositions) [23].

Bayesian Optimization Implementation: The AI uses Bayesian optimization to balance exploration of unknown parameter regions with exploitation of promising areas. The algorithm maintains and updates a probabilistic model of the synthesis landscape with each iteration [23].

Pareto-front Identification: For multi-objective optimization, the system maps Pareto-optimal fronts representing the trade-off relationships between PLQY and FWHM at target emission energies [23].
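To make the Pareto-front idea concrete, the following minimal sketch filters a set of measured samples down to the non-dominated ones for a given emission target. The tuple layout `(plqy, fwhm_nm, ep_ev)`, the tolerance on the emission energy, and the sample values are assumptions chosen for illustration; they are not measurements from the Rainbow study.

```python
def near_target(samples, target_ev, tol_ev=0.02):
    """Keep samples whose peak emission energy lies within tol_ev of the target."""
    return [s for s in samples if abs(s[2] - target_ev) <= tol_ev]


def dominates(a, b):
    """True if a is at least as good as b on both objectives and better on one.

    Samples are (plqy, fwhm_nm, ep_ev): higher PLQY and lower FWHM are better.
    """
    no_worse = a[0] >= b[0] and a[1] <= b[1]
    strictly_better = a[0] > b[0] or a[1] < b[1]
    return no_worse and strictly_better


def pareto_front(samples):
    """Return the samples that no other sample dominates."""
    return [s for s in samples if not any(dominates(q, s) for q in samples)]


# Illustrative values only.
samples = [
    (0.90, 25.0, 2.40),
    (0.80, 20.0, 2.41),
    (0.70, 30.0, 2.39),   # dominated by the first sample
    (0.95, 35.0, 2.40),
    (0.60, 18.0, 2.40),
    (0.99, 15.0, 2.70),   # excellent, but off-target emission energy
]
front = pareto_front(near_target(samples, target_ev=2.40))
```

Each sample left on the front represents a different trade-off: none of them can gain PLQY without giving up linewidth, or the reverse.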

Quantitative Performance Data

Table 1: Key Quantitative Performance Metrics of the Rainbow System

| Performance Parameter | Specification | Measurement Method |
|---|---|---|
| Experimental Throughput | Up to 1,000 experiments per day [25] | System operation logging |
| Parameter Space Dimensions | 6 input dimensions, 3 output dimensions [23] | Experimental design documentation |
| Optimization Acceleration | 10×-100× vs. traditional methods [23] | Comparative timeline analysis |
| PLQY Optimization Range | Maximum achievable (reported near-unity values) [23] | UV-Vis absorption and emission spectroscopy |
| Emission Energy Targeting | Tunable across UV-vis spectral region [23] | Photoluminescence spectroscopy |
| Emission Linewidth (FWHM) | Minimized to narrowest achievable values [23] | Spectral linewidth analysis |

Table 2: Representative Perovskite Nanocrystal Optimization Results

| Target Emission Energy | Optimal Ligand Structure | Achieved PLQY | Achieved FWHM | Scalability Rating |
|---|---|---|---|---|
| Blue Spectrum | Short-chain organic acid [23] | High (%) | Narrow (nm) | Directly scalable [23] |
| Green Spectrum | Intermediate-chain organic acid [23] | High (%) | Narrow (nm) | Directly scalable [23] |
| Red Spectrum | Long-chain organic acid [23] | High (%) | Narrow (nm) | Directly scalable [23] |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents for Autonomous Perovskite Nanocrystal Synthesis

| Reagent Category | Specific Examples | Function in Synthesis |
|---|---|---|
| Metal Precursors | Cesium precursors, Lead(II) oleate (Pb(OA)2) [23] | Provides metal cations for perovskite crystal structure formation |
| Halide Sources | Chloride, Bromide, Iodide precursors [23] | Controls bandgap engineering and emission energy tuning |
| Organic Ligands | Organic acids with varying alkyl chain lengths [23] | Stabilizes NCs, controls growth, and tunes optical properties |
| Solvents | 1-butanol (1-BuOH), octadecene (ODE) [26] | Reaction medium with controlled polarity and boiling point |
| Surface Ligands | Oleic acid (OA), Oleylamine (OLA) [26] | Modifies surface chemistry and affects charge transport properties |

Workflow and System Architecture Visualization

Diagram 1: Closed-loop Autonomous Optimization Workflow. This diagram illustrates Rainbow's iterative process for perovskite nanocrystal optimization, showing the complete cycle from objective definition to optimized formulation.

Diagram 2: Multi-Robot System Architecture. This diagram shows the integrated hardware configuration of the Rainbow platform, highlighting the coordination between multiple robotic systems and the central AI control.

The application of artificial intelligence (AI) to retrosynthesis planning is transforming the field of organic synthesis, with profound implications for drug discovery and materials science. However, the development of robust AI models necessitates large, diverse datasets of chemical reactions, which are often proprietary and reside in isolated "data islands" across competing organizations [27]. This creates a significant barrier to collaborative discovery, as sharing sensitive reaction data risks exposing confidential intellectual property or compromising competitive advantages [27]. The challenge, therefore, is to enable collaborative AI model training that leverages distributed chemical data without centralizing it or compromising its confidentiality.

This application note explores the emerging paradigm of privacy-preserving AI frameworks for retrosynthesis, with a specific focus on the Chemical Knowledge-Informed Framework (CKIF). We detail its protocol for collaborative learning and provide a comparative analysis of its performance against established benchmarks. The content is framed within the broader objective of achieving autonomous materials synthesis, where secure, multi-institutional collaboration is essential for accelerating the discovery of novel molecules and synthetic pathways.

Privacy-Aware Retrosynthesis Frameworks

The Need for Data Privacy in Chemical AI

Chemical reaction data is a pivotal asset in competitive fields like pharmaceuticals. It often contains confidential insights and trade secrets, leading organizations to protect it rigorously [27]. Centralizing this data to train a single, global AI model, which is the current standard paradigm, poses considerable privacy risks [27]. These risks include potential unauthorized access during data transmission and storage, which can deter organizations from participating in collaborative research initiatives. A privacy-preserving approach that facilitates learning from distributed data without sharing the raw data itself is critical for advancing the field.

The CKIF Framework: A Federated Learning Approach

The Chemical Knowledge-Informed Framework (CKIF) is a privacy-preserving approach that enables collaborative training of retrosynthesis models across multiple chemical entities without transferring raw reaction data [27] [28]. Instead of gathering data in a central location, CKIF operates through iterative communication rounds where participants train local models on their proprietary data and share only the model parameters [27].

The core innovation of CKIF is its Chemical Knowledge-Informed Weighting (CKIW) strategy. This strategy moves beyond simple averaging of model parameters (as in traditional Federated Averaging, or FedAvg) by leveraging chemical knowledge to personalize the aggregated model for each participant [27] [28]. The CKIW algorithm quantitatively assesses the usefulness of other clients' models by comparing the molecular fingerprints (e.g., ECFP, MACCS keys) of their predicted reactants against local ground-truth data [27]. The resulting similarity scores are used as adaptive weights during model aggregation, ensuring each client's final model is tailored to its specific data distribution and chemical preferences [27].
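The weighting idea can be illustrated with a simplified sketch. It is not the published CKIW algorithm: fingerprints are represented as plain sets of on-bit indices (a real deployment would compute ECFP or MACCS fingerprints with a cheminformatics toolkit), similarity is measured with the Tanimoto coefficient, and the weights are obtained by simple normalization of the scores. The function names, the normalization rule, and the example numbers are all assumptions made for illustration.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto similarity between two fingerprints given as sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 1.0
    return len(fp_a & fp_b) / len(fp_a | fp_b)


def client_score(predicted_fps, truth_fps):
    """Mean similarity of one model's predicted reactants to local ground truth."""
    sims = [tanimoto(p, t) for p, t in zip(predicted_fps, truth_fps)]
    return sum(sims) / len(sims)


def personalized_aggregate(params_by_client, scores):
    """Weighted average of parameter vectors using normalized similarity scores.

    With equal scores this reduces to plain parameter averaging.
    """
    total = sum(scores.values())
    weights = {client: s / total for client, s in scores.items()}
    n_params = len(next(iter(params_by_client.values())))
    merged = [
        sum(weights[c] * params_by_client[c][i] for c in params_by_client)
        for i in range(n_params)
    ]
    return merged, weights


# Toy example: the local client evaluates models received from clients A and B
# against its own ground-truth reactants (fingerprints are made-up bit sets).
truth = [{1, 2, 3, 4}, {5, 6, 7, 8}]
score_a = client_score([{1, 2, 3, 4}, {5, 6, 7, 9}], truth)
score_b = client_score([{1, 2, 10, 11}, {5, 12, 13, 14}], truth)

merged, weights = personalized_aggregate(
    {"A": [1.0, 2.0], "B": [3.0, 6.0]},
    {"A": 0.75, "B": 0.25},
)
```

Because each participant computes the scores against its own data, every client ends up with a differently weighted aggregate, which is what allows the shared training to remain personalized without any raw reactions leaving the client.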

Experimental Protocols & Performance Analysis

CKIF Implementation Protocol

The following protocol outlines the steps for deploying the CKIF framework in a collaborative retrosynthesis project.

Phase 1: System Initialization

- Step 1.1: Client Registration. A central server registers K participating clients (e.g., pharmaceutical companies, research labs). Each client C_i possesses a proprietary reaction dataset D_i.

- Step 1.2: Model and Metric Definition. A base retrosynthesis model architecture (e.g., a Graph Neural Network) is defined. Molecular fingerprinting methods (ECFP4, MACCS) are selected for the CKIW strategy.