AI-Driven Discovery of Novel Functional Materials: Accelerating Breakthroughs for Biomedical Applications

Abstract

The discovery of novel functional materials is undergoing a radical transformation, moving from traditional trial-and-error approaches to a data-driven paradigm powered by artificial intelligence and automated experimentation. This article provides a comprehensive overview for researchers and drug development professionals, exploring the foundational principles of this shift, the cutting-edge methodologies from machine learning to self-driving labs, and the critical challenges of data quality and model interpretability. It examines the validation frameworks ensuring the real-world applicability of AI-predicted materials and highlights transformative applications, particularly in targeted drug delivery systems and smart biomaterials. By synthesizing insights from current research and investment trends, this article serves as a strategic guide for navigating the future of accelerated materials innovation in the biomedical field.

The New Paradigm: From Trial-and-Error to AI-Driven Materials Discovery

Defining Functional Materials for Biomedical Applications

Functional materials are engineered substances designed with specific properties to perform targeted tasks in biomedical applications. In the context of novel materials research, these advanced materials include distinct classes of lipids, polymers, proteins, peptides, and inorganic substances that form the foundation of innovative nanomedicinal products, drug delivery systems, and medical devices [1]. The primary function of these materials extends beyond inert structural support to active participation in therapeutic and diagnostic processes, enabling groundbreaking applications in disease therapy and diagnosis.

The discovery and development of novel functional materials represent a paradigm shift in biomedical engineering, facilitating the creation of sophisticated systems that interact with biological entities at molecular and cellular levels. These materials are characterized by their tailored physical, chemical, and biological properties, which allow them to respond to specific physiological stimuli, navigate biological barriers, and execute precise therapeutic functions. The strategic integration of these materials into biomedical technologies has opened new frontiers in personalized medicine, regenerative therapies, and diagnostic methodologies.

Classes of Functional Materials and Their Properties

Material Classification and Characteristics

For organ-on-a-chip (OOC) technology, a rapidly evolving field, and related drug delivery systems, the materials in use can be broadly grouped into three classes: inorganic materials, elastomers, and hydrogels. Each class has distinct properties that suit it to particular biomedical applications [2].

Table 1: Major Classes of Functional Materials for Biomedical Applications

| Material Class | Specific Examples | Major Properties | Key Limitations | Typical Applications |
|---|---|---|---|---|
| Inorganic Materials | Glass, Silicon | Surface stability, optically transparent, electrically insulating | Not gas permeable, high fabrication cost | OOC device substrate, transformation studies, real-time imaging [2] |
| Elastomers | PDMS, POMaC, SEBS copolymer | High elasticity, gas permeability, biocompatibility, rapid prototyping | Hydrophobicity, absorbs biomolecules, incompatible with organic solvents | Biomimetic cell culture scaffolds, microvascular models, lung-on-a-chip [2] |
| Hydrogels | Collagen, Gelatin, Alginate, PEG | Biocompatible, enzymatically degradable, tunable mechanical properties | Weak mechanical strength, rapid degradation, poor cell adhesion | Microvascular networks, 3D tissue models, spheroid-based organ models [2] |

Advanced Composite and Hybrid Materials

Beyond these primary categories, hybrid materials that combine organic and inorganic components represent a cutting-edge frontier in functional materials research. These sophisticated composites leverage the advantages of multiple material classes while mitigating their individual limitations. For instance, PDMS-methacrylate blends have been developed for 3D stereolithography, maintaining the beneficial properties of conventional PDMS while enabling advanced fabrication capabilities [2]. Similarly, liquid glass—a photocurable amorphous silica nanocomposite—has emerged as a promising material for low-cost prototyping of glass microfluidics, combining the optical advantages of glass with easier processing [2].

The continuous evolution of material systems, including the development of biodegradable elastomers with tailored mechanical properties and degradation profiles, addresses the need for implantable devices and temporary tissue scaffolds. These advanced materials demonstrate precisely tunable mechanical characteristics and biodegradation rates optimized for specific applications such as human myocardium or liver tissue engineering [2].

Quantitative Analysis of Material Properties

Performance Metrics and Evaluation Parameters

The development and selection of functional materials for biomedical applications requires rigorous quantitative assessment across multiple performance parameters. These metrics provide critical data for comparing material alternatives and optimizing their composition for specific biomedical applications.

Table 2: Quantitative Analysis of Hydrogel Materials for Tissue Engineering

| Material Type | Mechanical Strength (Young's Modulus) | Degradation Time | Cell Adhesion Efficiency | Porosity | Optical Clarity |
|---|---|---|---|---|---|
| Collagen | Low (0.1-1 kPa) | Enzyme-dependent (days-weeks) | High (>80%) | High (>95%) | Moderate |
| Gelatin (GelMA) | Tunable (1-100 kPa) | Days to weeks | High (>75%) | Adjustable | High |
| Alginate | Low-Medium (5-50 kPa) | Ion-dependent | Low (<20%) without modification | High (>90%) | Moderate |
| PEG-based | Highly tunable (1-500 kPa) | Controlled (weeks-months) | Low (requires functionalization) | Adjustable | High |

The quantitative profiling of material properties enables researchers to make evidence-based selections for specific applications. For instance, materials intended for vascular network engineering require specific mechanical properties to withstand physiological flow conditions, while those designed for drug delivery applications must demonstrate controlled degradation profiles to regulate therapeutic release kinetics. The systematic evaluation of these parameters accelerates the discovery of novel functional materials by establishing clear structure-function relationships.
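As a minimal sketch of this kind of evidence-based selection, the Young's modulus ranges from Table 2 can be encoded and screened against a target stiffness window. The function name `candidates_for_stiffness` and the example window are illustrative assumptions, and a real selection would weigh every column of the table (degradation, adhesion, clarity), not stiffness alone.

```python
from typing import Dict, List, Tuple

# Young's modulus ranges (kPa) transcribed from Table 2.
MODULUS_KPA: Dict[str, Tuple[float, float]] = {
    "Collagen": (0.1, 1.0),
    "Gelatin (GelMA)": (1.0, 100.0),
    "Alginate": (5.0, 50.0),
    "PEG-based": (1.0, 500.0),
}


def candidates_for_stiffness(low_kpa: float, high_kpa: float) -> List[str]:
    """Return hydrogels whose reported modulus range overlaps [low_kpa, high_kpa]."""
    if low_kpa > high_kpa:
        raise ValueError("low_kpa must not exceed high_kpa")
    return [
        name
        for name, (lo, hi) in MODULUS_KPA.items()
        # Two closed intervals overlap when each one starts before the other ends.
        if lo <= high_kpa and low_kpa <= hi
    ]


if __name__ == "__main__":
    # Hypothetical target: a stiffer matrix in the 60-120 kPa range.
    print(candidates_for_stiffness(60, 120))
```

For the 60-120 kPa window this returns GelMA and the PEG-based gels; alginate's upper bound (50 kPa) falls short of the window.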

Experimental Protocols for Material Evaluation

Guideline for Reporting Experimental Protocols

Comprehensive reporting of experimental protocols is fundamental to advancing functional materials research. Based on analysis of over 500 published and unpublished experimental protocols, a guideline comprising 17 essential data elements has been established to ensure reproducibility and sufficient technical detail [3]. These key elements include:

- Protocol Title and Identifier: Unique identification and versioning

- Authorship and Affiliation: Contributor information and organizational context

- Abstract and Summary: Concise protocol overview

- Introduction and Rationale: Scientific context and purpose

- Objectives and Goals: Specific aims and success criteria

- Safety Considerations: Hazard identification and protective measures

- Reagents and Materials: Comprehensive listing with specifications

- Equipment and Instruments: Detailed device information with models and settings

- Sample Preparation: Source, handling, and preparation procedures

- Step-by-Step Procedures: Chronological, detailed instructions

- Timing Requirements: Duration and critical timepoints

- Troubleshooting Guidance: Problem anticipation and solutions

- Expected Results: Outcome predictions and benchmarks

- Analysis Methods: Data processing and interpretation procedures

- Validation Approaches: Verification methods and controls

- References and Resources: Source materials and influential works

- Acknowledgments and Credits: Contributions and support recognition

This structured approach to protocol documentation addresses the critical issue of insufficient methodological reporting that has been identified as a significant barrier to reproducibility in biomedical research [3].
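The checklist lends itself to a simple completeness check. The sketch below assumes a protocol is stored as a dictionary keyed by the element names listed above; that storage format and the function `missing_elements` are hypothetical and are not part of the published guideline [3].

```python
from typing import Dict, List

# The 17 data elements listed above, in the same order.
REQUIRED_ELEMENTS: List[str] = [
    "Protocol Title and Identifier",
    "Authorship and Affiliation",
    "Abstract and Summary",
    "Introduction and Rationale",
    "Objectives and Goals",
    "Safety Considerations",
    "Reagents and Materials",
    "Equipment and Instruments",
    "Sample Preparation",
    "Step-by-Step Procedures",
    "Timing Requirements",
    "Troubleshooting Guidance",
    "Expected Results",
    "Analysis Methods",
    "Validation Approaches",
    "References and Resources",
    "Acknowledgments and Credits",
]


def missing_elements(protocol: Dict[str, str]) -> List[str]:
    """List required elements that are absent or contain only whitespace."""
    return [
        element
        for element in REQUIRED_ELEMENTS
        if not protocol.get(element, "").strip()
    ]


if __name__ == "__main__":
    draft = {name: "TODO: fill in" for name in REQUIRED_ELEMENTS}
    draft["Safety Considerations"] = "   "  # present but empty
    del draft["Timing Requirements"]       # omitted entirely
    print(missing_elements(draft))
```

Reporting gaps in checklist order makes it easy to see which sections of a draft still need attention before submission.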

Protocol for Biomaterial Cytocompatibility Assessment

The following detailed protocol provides a standardized methodology for evaluating the cytocompatibility of novel functional materials, a critical assessment for any material intended for biomedical application:

Purpose: To evaluate the biocompatibility of functional materials through direct cell contact studies, assessing cell viability, proliferation, and morphological changes.

Materials and Reagents:

- Test material samples (sterilized)

- Appropriate cell line (e.g., L929 or Balb/3T3 clone 31 mouse fibroblasts, among the cell lines recommended in ISO 10993-5)

- Cell culture medium with serum

- Phosphate buffered saline (PBS), pH 7.4

- Trypsin-EDTA solution for cell detachment

- Live/dead viability/cytotoxicity kit (e.g., Calcein AM/EthD-1)

- MTT or AlamarBlue cell viability reagents

- 4% paraformaldehyde solution in PBS

- Triton X-100 solution (0.1% in PBS)

Equipment:

- Biological safety cabinet (Class II)

- CO2 incubator (37°C, 5% CO2)

- Inverted phase contrast microscope with fluorescence capability

- Microplate reader (for absorbance/fluorescence measurements)

- Cell culture vessels (multi-well plates)

- Sterile forceps and implements for material handling

Procedure:

Material Preparation:

- If materials are not sterile, sterilize by autoclaving, ethylene oxide treatment, or gamma irradiation based on material compatibility.

- For leachable testing, incubate materials in complete culture medium at 37°C for 24 hours at a surface area-to-volume ratio of 3-6 cm²/mL.

- Rinse materials three times with sterile PBS before cell seeding.
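The extraction ratio fixes how much medium to use for a given sample. The sketch below shows that arithmetic for a flat disc, assuming its total surface area (both faces plus the rim) is the relevant area; the disc dimensions are hypothetical, and which ratio within 3-6 cm²/mL applies should be taken from the governing standard (e.g., ISO 10993-12) for the material in question.

```python
import math


def disc_surface_area_cm2(diameter_cm: float, thickness_cm: float) -> float:
    """Total surface area of a flat disc sample: both faces plus the rim."""
    radius = diameter_cm / 2
    return 2 * math.pi * radius ** 2 + math.pi * diameter_cm * thickness_cm


def extraction_volume_ml(surface_area_cm2: float, ratio_cm2_per_ml: float) -> float:
    """Medium volume (mL) that gives the requested surface area-to-volume ratio."""
    if not 3.0 <= ratio_cm2_per_ml <= 6.0:
        raise ValueError("ratio outside the 3-6 cm^2/mL range used in this protocol")
    return surface_area_cm2 / ratio_cm2_per_ml


if __name__ == "__main__":
    # Hypothetical sample: a disc 15 mm in diameter and 1 mm thick.
    area = disc_surface_area_cm2(1.5, 0.1)
    for ratio in (3.0, 6.0):
        print(f"{area:.2f} cm^2 at {ratio} cm^2/mL -> {extraction_volume_ml(area, ratio):.2f} mL")
```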

Cell Seeding:

- Harvest cells at 80-90% confluence using standard trypsinization procedures.

- Prepare cell suspension at appropriate density (typically 1-5×10⁴ cells/cm² depending on cell type).

- Seed cells directly onto material surfaces or in wells containing material extracts.

- Include a negative control (cells on tissue culture plastic) and a positive control (cells exposed to a known cytotoxic agent); in cytotoxicity testing, "positive" denotes the expected cytotoxic response.
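Converting a seeding density into cell numbers and pipetting volumes is simple arithmetic. The sketch below assumes the per-well growth area is supplied by the user; the 1.9 cm² value in the example is an illustrative figure for a 24-well plate and should be replaced with the plate manufacturer's specification, and `plan_seeding` is a hypothetical helper.

```python
from dataclasses import dataclass


@dataclass
class SeedingPlan:
    cells_per_well: float
    suspension_ml_per_well: float


def plan_seeding(density_cells_per_cm2: float,
                 well_area_cm2: float,
                 suspension_cells_per_ml: float) -> SeedingPlan:
    """Cells and suspension volume per well for a target seeding density."""
    if min(density_cells_per_cm2, well_area_cm2, suspension_cells_per_ml) <= 0:
        raise ValueError("all inputs must be positive")
    cells = density_cells_per_cm2 * well_area_cm2
    return SeedingPlan(cells, cells / suspension_cells_per_ml)


if __name__ == "__main__":
    # 2e4 cells/cm^2 (within the 1-5e4 range above) in a well assumed to be 1.9 cm^2,
    # from a suspension counted at 1e5 cells/mL.
    plan = plan_seeding(2e4, 1.9, 1e5)
    print(f"{plan.cells_per_well:.0f} cells, {plan.suspension_ml_per_well:.2f} mL per well")
```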

Incubation and Monitoring:

- Incubate cells with test materials for predetermined intervals (typically 1, 3, and 7 days).

- Monitor cell behavior daily using phase contrast microscopy.

- Document morphological changes, adhesion characteristics, and confluency.

Viability Assessment:

- At each timepoint, assess cell viability using live/dead staining:

  - Prepare a working solution containing 2 µM Calcein AM and 4 µM ethidium homodimer-1 in PBS.

  - Incubate cells with staining solution for 30 minutes at 37°C.

  - Visualize using fluorescence microscopy (green: live cells; red: dead cells).

- Quantify metabolic activity using MTT assay:

  - Add MTT solution to achieve a final concentration of 0.5 mg/mL.

  - Incubate for 2-4 hours at 37°C.

  - Solubilize formed formazan crystals with DMSO or acidified isopropanol.

  - Measure absorbance at 570 nm with a reference wavelength of 630-690 nm.

Cell Morphology Analysis:

- Fix samples with 4% paraformaldehyde for 15 minutes at room temperature.

- Permeabilize with 0.1% Triton X-100 for 5-10 minutes.

- Stain actin cytoskeleton with phalloidin conjugate (e.g., Alexa Fluor 488).

- Counterstain nuclei with DAPI or Hoechst stains.

- Image using fluorescence microscopy.

Troubleshooting:

- Poor cell adhesion: Consider surface modification, such as plasma treatment to increase hydrophilicity or protein coating to provide cell-adhesive ligands.

- High cytotoxicity: Evaluate potential leachables and consider additional purification or processing steps.

- Inconsistent results: Ensure standardized material preparation and cell culture conditions across replicates.

Validation:

- Compare results with established reference materials where available.

- Include appropriate controls in each experiment.

- Perform statistical analysis with sufficient replicates (n≥3).
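MTT results are usually reported as viability relative to the cells grown on tissue culture plastic. A minimal sketch, assuming triplicate wells, subtraction of the reference-wavelength reading, and a cell-free blank; the 70% default threshold reflects the ISO 10993-5 convention that a viability reduction of more than 30% is considered a cytotoxic effect, while the function names and example absorbances are hypothetical.

```python
from statistics import mean, stdev
from typing import List, Sequence


def corrected(a570: Sequence[float], a_ref: Sequence[float], blank: float) -> List[float]:
    """Per-well signal: 570 nm reading minus reference-wavelength reading minus blank.

    `blank` is the mean reference-corrected signal of cell-free wells.
    """
    if len(a570) != len(a_ref):
        raise ValueError("each well needs both a 570 nm and a reference reading")
    return [s - r - blank for s, r in zip(a570, a_ref)]


def relative_viability(sample: Sequence[float], control: Sequence[float]) -> List[float]:
    """Each sample well as a percentage of the mean control signal (n >= 3 per group)."""
    if len(sample) < 3 or len(control) < 3:
        raise ValueError("use at least three replicates per group")
    reference = mean(control)
    return [100.0 * s / reference for s in sample]


def is_cytotoxic(viability_pct: Sequence[float], threshold_pct: float = 70.0) -> bool:
    """True if mean viability falls below the threshold (i.e., a >30% reduction)."""
    return mean(viability_pct) < threshold_pct


if __name__ == "__main__":
    # Hypothetical triplicate absorbances: control wells and material-extract wells.
    control = corrected([0.95, 1.00, 1.05], [0.05, 0.05, 0.05], blank=0.10)
    sample = corrected([0.60, 0.65, 0.70], [0.05, 0.05, 0.05], blank=0.10)
    viability = relative_viability(sample, control)
    print(f"viability {mean(viability):.1f} +/- {stdev(viability):.1f} %, "
          f"cytotoxic: {is_cytotoxic(viability)}")
```

A statistical test between groups would normally accompany this summary; the threshold check alone only flags the mean.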

This protocol provides a standardized framework for the critical assessment of novel functional materials, enabling reliable comparison between different material systems and ensuring safety for biomedical applications.

Research Workflows and Signaling Pathways

Methodology for Functional Material Development

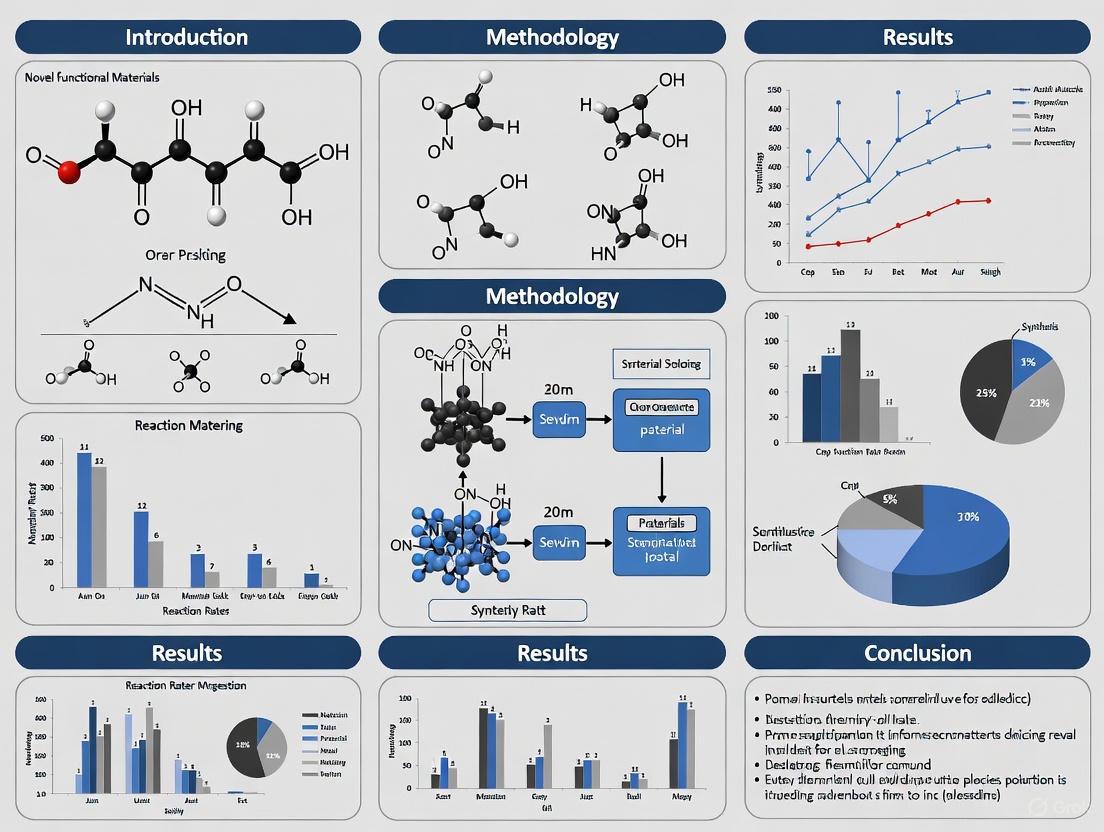

The discovery and development of novel functional materials follows a systematic workflow that integrates computational design, synthesis, characterization, and validation. The diagram below illustrates this comprehensive research pathway.

Diagram Title: Functional Materials Development Workflow

This workflow emphasizes the iterative nature of materials development, where data from characterization and validation stages inform subsequent design modifications. The integration of computational approaches at the initial design phase enables predictive modeling of material properties and biological interactions, potentially accelerating the discovery timeline for novel functional materials.

Material-Cell Interaction Pathways

Cells respond to functional materials through defined signaling pathways, and these responses largely determine a material's biomedical efficacy. The following diagram illustrates the key molecular interactions between material surfaces and cellular components.

Diagram Title: Material-Cell Interaction Signaling Pathway

This signaling cascade begins with protein adsorption onto the material surface, which is influenced by material properties such as hydrophobicity, charge, and topography. The adsorbed protein layer then mediates specific receptor interactions that trigger intracellular signaling pathways, ultimately leading to defined cellular responses. Understanding these molecular mechanisms enables the rational design of materials that direct specific cellular behaviors for therapeutic applications.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful research in functional materials for biomedical applications requires access to specialized reagents, materials, and instrumentation. The following table details essential components of the research toolkit for scientists working in this field.

Table 3: Essential Research Reagent Solutions for Functional Materials Research

| Category | Specific Items | Function/Purpose | Key Considerations |
|---|---|---|---|
| Base Materials | PDMS (Polydimethylsiloxane), PEGDA (Polyethylene glycol diacrylate), GelMA (Gelatin methacryloyl), Collagen type I | Primary material components for device fabrication and tissue engineering | Biocompatibility, mechanical properties, processing requirements [2] |
| Fabrication Reagents | Photoinitiators (Irgacure 2959, LAP), Crosslinking agents, Sacrificial materials | Enable material processing and structure formation | Cytotoxicity, reaction efficiency, byproducts |
| Characterization Tools | Live/dead viability assays, Antibodies for specific markers, Extracellular matrix proteins | Assessment of material performance and biological interactions | Specificity, sensitivity, quantification capability |
| Cell Culture Components | Primary cells, Cell lines, Culture media, Serum supplements, Differentiation factors | Biological assessment of material functionality | Source, passage number, validation requirements |
| Analytical Instruments | Scanning electron microscope, Atomic force microscope, FTIR spectrometer, Rheometer | Material characterization and quality assessment | Resolution, detection limits, quantitative accuracy |

This toolkit represents the fundamental resources required to conduct rigorous research in functional materials. The selection of specific reagents and materials should be guided by the intended application, with particular attention to regulatory considerations for materials destined for clinical translation. Additionally, researchers should prioritize establishing robust quality control procedures for all critical reagents to ensure experimental reproducibility.

Advanced functional materials represent a transformative approach to biomedical challenges, enabling unprecedented capabilities in drug delivery, diagnostic systems, and tissue engineering. The systematic characterization, standardized protocols, and structured research workflows outlined in this technical guide provide a foundation for the continued discovery and development of novel materials that will shape the future of biomedical technology.

The discovery of novel functional materials is a cornerstone of technological advancement, enabling breakthroughs in fields ranging from clean energy and electronics to drug development. For decades, this discovery process has been dominated by traditional methods reliant on trial-and-error experimentation, manual laboratory work, and intuition-driven research. While these approaches have yielded successes, they are fundamentally constrained by significant limitations in cost, time, and scalability. This article examines these core constraints, details the emerging methodologies that are overcoming them, and provides a quantitative and technical guide for researchers and scientists navigating the modern materials discovery landscape.

The Triad of Traditional Constraints

Traditional materials discovery is inherently slow and resource-intensive. The journey from a theoretical compound to a material that is synthesized, characterized, and put into use is often measured in decades.

The Time Barrier

The typical timeline for a new material to move from initial discovery to commercial application is about two decades [4]. This protracted timeline delays the deployment of technologies critical for addressing global challenges, such as advanced batteries for energy storage or novel catalysts for carbon capture.

The Cost of Discovery

The resource-intensive nature of traditional methods makes discovery expensive. To contextualize these costs, electronic discovery (eDiscovery) for legal processes, a different "discovery" domain in which task-level expenditures are tracked, offers a useful analogy. There, the "review" task, the most resource-intensive phase, accounted for 73% of total expenditures in 2012; its share fell to 64% in 2024 and is projected to reach 52% by 2029 as automation and AI take over [5]. This redistribution shows how manual, labor-intensive tasks dominate costs and how technological integration can reshape spending, and a similar shift can plausibly be expected in materials science.

The Scalability Ceiling

Conventional discovery struggles with the vastness of chemical space. The space of candidate inorganic crystals is astronomically large, yet until recently only about 48,000 computationally stable structures had been identified by prior work [6]. Relying on sequential experiments, or on computationally expensive first-principles calculations such as Density Functional Theory (DFT), for every candidate makes exhaustive exploration impractical and creates a severe scalability bottleneck.
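A quick count shows why exhaustive search breaks down. The sketch below counts only which elements appear together in a system, assuming a pool of 89 usable elements (a hypothetical choice) and ignoring stoichiometry and crystal structure, both of which multiply the count further.

```python
import math


def element_systems(pool_size: int, n_elements: int) -> int:
    """Number of distinct n-element combinations drawn from a pool of elements."""
    return math.comb(pool_size, n_elements)


if __name__ == "__main__":
    pool = 89  # hypothetical pool of usable elements
    for n in range(2, 6):
        print(f"{n}-element systems: {element_systems(pool, n):,}")
```

With a pool of 89 elements this prints 3,916 binary, 113,564 ternary, 2,441,626 quaternary, and 41,507,642 quinary element systems, each of which still admits many stoichiometries and candidate structures (`math.comb` requires Python 3.8 or later).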

Table 1: Quantitative Benchmarks of Discovery Processes

| Metric | Traditional/Baseline Performance | Modern/AI-Driven Performance |
|---|---|---|
| Materials Discovery Timeline | ~20 years from discovery to market [4] | Dramatically compressed via self-driving labs [7] |
| Stable Crystal Predictions | ~48,000 known computationally stable structures [6] | 2.2 million new structures predicted by GNoME AI [6] |
| Experimental Data Throughput | Steady-state flow experiments: Low data points per hour [7] | Dynamic flow experiments: Data point every 0.5 seconds [7] |
| Hit Rate for Stable Materials | Composition-only search: ~1% [6] | GNoME model: >80% with structure, ~33% with composition only [6] |
| Prediction Accuracy (Energy) | Previous ML models: ~28 meV/atom MAE [6] | Scaled GNoME models: ~11 meV/atom MAE [6] |
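The hit rates in this table translate directly into verification cost: if each candidate is treated as an independent trial (a simplifying assumption), the expected number of candidates evaluated per stable material is 1/(hit rate), the mean of a geometric distribution. Note that a "candidate" is a single structure on the structural path but a whole composition on the composition-only rows, and each composition may itself require many trial structures.

```python
from typing import Dict

# Hit rates quoted in the table above (">80%" is taken at its lower bound).
HIT_RATES: Dict[str, float] = {
    "composition-only search (prior work)": 0.01,
    "GNoME, composition only": 0.33,
    "GNoME, with structure": 0.80,
}


def candidates_per_hit(hit_rate: float) -> float:
    """Expected candidates evaluated per stable material (geometric-distribution mean)."""
    if not 0.0 < hit_rate <= 1.0:
        raise ValueError("hit_rate must lie in (0, 1]")
    return 1.0 / hit_rate


if __name__ == "__main__":
    for label, rate in HIT_RATES.items():
        print(f"{label}: ~{candidates_per_hit(rate):.1f} candidates per stable material")
```

At a 1% hit rate, roughly 100 candidates must be verified per stable material; at 80%, about 1.25.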

The Modern Toolkit: Accelerating Discovery with AI and Automation

The limitations of traditional discovery are being surmounted by a new paradigm that integrates artificial intelligence (AI), high-throughput computing, and robotic automation.

Machine Learning and Deep Learning

Machine learning (ML), particularly deep learning, uses historical data to predict material properties and stability, bypassing the need for costly simulations or experiments for every candidate. Key methodologies include:

- Graph Neural Networks (GNNs): Models like the Graph Networks for Materials Exploration (GNoME) treat crystal structures as graphs, enabling highly accurate predictions of formation energy and stability. GNoME has discovered over 2.2 million new crystal structures stable with respect to previously known materials [6] [8].

- Generative Models: Generative Adversarial Networks (GANs) and Variational Autoencoders (VAEs) can generate novel chemical compositions that meet specific target properties, enabling inverse design [9] [8].

- Automated Machine Learning (AutoML): Frameworks like AutoGluon and TPOT automate the process of model selection and hyperparameter tuning, making powerful ML more accessible to materials scientists [9] [8].
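To make the graph view concrete, the sketch below turns a handful of atoms into a graph (an edge wherever two atoms lie closer than a cutoff distance), runs one round of mean-aggregation message passing, and pools the node features into a single vector. It is a toy illustration of the idea, not GNoME's architecture: the coordinates (in arbitrary length units), the cutoff of 3.0, the one-hot species features, and the absence of periodic boundary conditions and learned weights are all simplifying assumptions.

```python
import math
from typing import Dict, List, Sequence, Tuple

Vec = Tuple[float, float, float]


def build_edges(positions: Sequence[Vec], cutoff: float) -> Dict[int, List[int]]:
    """Adjacency list linking every pair of atoms closer than `cutoff` (no periodic images)."""
    neighbors: Dict[int, List[int]] = {i: [] for i in range(len(positions))}
    for i in range(len(positions)):
        for j in range(i + 1, len(positions)):
            if math.dist(positions[i], positions[j]) < cutoff:
                neighbors[i].append(j)
                neighbors[j].append(i)
    return neighbors


def message_pass(features: List[List[float]], neighbors: Dict[int, List[int]]) -> List[List[float]]:
    """One update: each node's new feature is the mean of itself and its neighbors."""
    updated = []
    for i, own in enumerate(features):
        group = [own] + [features[j] for j in neighbors[i]]
        updated.append([sum(col) / len(group) for col in zip(*group)])
    return updated


def readout(features: List[List[float]]) -> List[float]:
    """Graph-level vector: mean over nodes (a trained model would map this to an energy)."""
    return [sum(col) / len(features) for col in zip(*features)]


if __name__ == "__main__":
    # Hypothetical 4-atom fragment with one-hot features for two species [A, B].
    species = ["A", "B", "A", "B"]
    positions = [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (4.0, 0.0, 0.0), (9.0, 0.0, 0.0)]
    features = [[1.0, 0.0] if s == "A" else [0.0, 1.0] for s in species]
    edges = build_edges(positions, cutoff=3.0)
    print(edges)
    print(readout(message_pass(features, edges)))
```

In a real GNN, the averaging step is replaced by learned functions of node and edge features and repeated over several layers.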

Self-Driving Laboratories

Self-driving labs, or Materials Acceleration Platforms (MAPs), represent the physical manifestation of this new paradigm. These robotic systems combine AI-driven decision-making with automated synthesis and characterization to create a closed-loop discovery system [4] [7].

A recent breakthrough involves replacing traditional steady-state flow experiments with dynamic flow experiments. In this method, chemical mixtures are continuously varied and monitored in real-time, generating a data point every half-second. This "streaming-data" approach collects at least 10 times more data than previous methods in the same timeframe, dramatically accelerating the AI's learning and optimization process while reducing chemical consumption and waste [7].

High-Throughput Experimentation (HTE) and Screening

HTE uses robotic systems to conduct hundreds or thousands of parallel experiments, rapidly exploring vast combinatorial libraries of elements or compounds [4]. In drug discovery, this is complemented by High-Content Screening (HCS), which uses automated microscopy and multiparametric image analysis to understand complex phenotypic changes in cells, a market projected to grow from USD 1.52 billion in 2024 to USD 3.12 billion by 2034 [10]. The integration of AI is crucial for analyzing the complex, high-dimensional data produced by these techniques [11] [10].

Experimental Protocols for Modern Discovery

Protocol 1: Autonomous Materials Discovery with a Self-Driving Fluidic Lab

This protocol details the dynamic flow experiment methodology for inorganic materials synthesis [7].

- System Setup: Configure a continuous flow microreactor system integrated with real-time, in situ spectroscopic characterization (e.g., UV-Vis, photoluminescence) and an AI control unit.

- Precursor Introduction: Continuously pump precursor solutions into the microreactor system at dynamically controlled flow rates.

- Dynamic Flow Experimentation: Instead of waiting for steady-state, continuously vary the flow rates of precursors and other reaction conditions (e.g., temperature) according to a program designed to map transient states.

- Real-Time Characterization: Monitor the reaction and the formation of the target material (e.g., CdSe quantum dots) continuously, collecting a spectral data point as often as every 0.5 seconds.

- AI-Driven Decision Making: The machine learning model analyzes the streaming data in real-time to predict the next set of optimal reaction parameters to approach the target material property.

- Closed-Loop Operation: The system automatically adjusts the flow rates and conditions based on the AI's decision, creating a non-stop, goal-oriented discovery loop.

- Validation: Promising material candidates identified by the autonomous system are synthesized at a larger scale for ex-situ characterization and validation of properties.
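The AI-driven decision-making and closed-loop steps above can be sketched in a few lines. Here the reactor is replaced by a hypothetical simulated response (emission peak wavelength as a function of a single precursor flow ratio), and the decision-making model is replaced by golden-section search, a simple derivative-free optimizer chosen for brevity. The self-driving lab described in [7] uses a machine learning model instead, varies more than one condition, and need not assume the unimodal response that golden-section search requires; all names and numbers below are illustrative assumptions.

```python
import math
from typing import Callable, List, Tuple


def simulated_reactor(flow_ratio: float) -> float:
    """Hypothetical stand-in for in situ spectroscopy: emission peak (nm) vs. flow ratio."""
    return 480.0 + 160.0 * flow_ratio / (flow_ratio + 0.5)


def closed_loop(measure: Callable[[float], float], target_nm: float, low: float,
                high: float, n_experiments: int) -> Tuple[float, List[Tuple[float, float]]]:
    """Golden-section search on |reading - target|; each evaluation is one experiment."""
    if n_experiments < 2:
        raise ValueError("need at least two experiments")
    inv_phi = (math.sqrt(5) - 1) / 2  # ~0.618, the golden-section shrink factor
    log: List[Tuple[float, float]] = []

    def run(x: float) -> float:
        reading = measure(x)             # set conditions, record the measured property
        log.append((x, reading))
        return abs(reading - target_nm)  # distance from the target property

    a, b = low, high
    c, d = b - inv_phi * (b - a), a + inv_phi * (b - a)
    fc, fd = run(c), run(d)
    while len(log) < n_experiments:
        if fc < fd:                      # best condition lies in [a, d]
            b, d, fd = d, c, fc
            c = b - inv_phi * (b - a)
            fc = run(c)
        else:                            # best condition lies in [c, b]
            a, c, fc = c, d, fd
            d = a + inv_phi * (b - a)
            fd = run(d)
    return (a + b) / 2, log


if __name__ == "__main__":
    best, history = closed_loop(simulated_reactor, target_nm=560.0,
                                low=0.0, high=2.0, n_experiments=25)
    print(f"best flow ratio ~ {best:.3f} after {len(history)} experiments")
```

Each pass through the loop mirrors one cycle of measure, decide, and adjust; the log of (condition, reading) pairs is the kind of streaming record a learning-based controller would train on.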

Protocol 2: Discovering Stable Crystals with Graph Neural Networks

This computational protocol outlines the process used by projects like GNoME to discover new stable crystals [6].

- Candidate Generation:

  - Structural Path: Generate candidate crystal structures by applying symmetry-aware partial substitutions (SAPS) to known crystals.

  - Compositional Path: Generate reduced chemical formulas by oxidation-state balancing with relaxed constraints.

- Model Filtration:

  - Structural Filtration: Pass the generated structures through a pre-trained GNN ensemble. The model predicts the formation energy and calculates the decomposition energy to the convex hull. Structures predicted to be stable are clustered, and polymorphs are ranked for DFT evaluation.

  - Compositional Filtration: For new compositions predicted to be stable by a compositional model, initialize 100 random structures for evaluation using Ab Initio Random Structure Searching (AIRSS).

- Energetic Validation: Evaluate the filtered candidate structures using Density Functional Theory (DFT) calculations with standardized settings (e.g., in VASP).

- Active Learning: Incorporate the DFT-verified structures and their energies back into the training dataset.

- Iterative Retraining: Retrain the GNN models on the expanded dataset, improving their predictive accuracy for the next round of discovery. This iterative active learning process is key to the model's improving performance.
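The stability criterion used in the filtration step, a candidate's energy relative to the convex hull, can be illustrated for a binary A-B system. The sketch below builds the lower convex hull of formation energy versus composition from known phases and reports a candidate's energy above it: positive means unstable, zero or negative means on or below the current hull. The formation energies are hypothetical, and production workflows use multicomponent hull tools (for example, pymatgen's `PhaseDiagram` class) rather than this two-element version.

```python
from typing import List, Tuple

Point = Tuple[float, float]  # (fraction of B, formation energy in eV/atom)


def cross(o: Point, a: Point, b: Point) -> float:
    """z-component of (a - o) x (b - o); positive for a counter-clockwise turn."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def lower_hull(points: List[Point]) -> List[Point]:
    """Lower convex hull via Andrew's monotone chain, sorted by composition."""
    hull: List[Point] = []
    for p in sorted(points):
        # Drop the last hull point while it fails to make a counter-clockwise turn.
        while len(hull) >= 2 and cross(hull[-2], hull[-1], p) <= 0:
            hull.pop()
        hull.append(p)
    return hull


def hull_energy(hull: List[Point], x: float) -> float:
    """Linear interpolation of the hull at composition x."""
    for (x0, e0), (x1, e1) in zip(hull, hull[1:]):
        if x0 <= x <= x1:
            return e0 + (e1 - e0) * (x - x0) / (x1 - x0)
    raise ValueError("composition outside the hull's range")


def energy_above_hull(known: List[Point], candidate: Point) -> float:
    """Candidate energy minus hull energy at its composition (eV/atom)."""
    x, e = candidate
    return e - hull_energy(lower_hull(known), x)


if __name__ == "__main__":
    # Hypothetical known phases; the pure elements at x = 0 and x = 1 anchor the hull.
    known = [(0.0, 0.0), (1.0, 0.0), (0.5, -0.4), (0.75, -0.1)]
    print(lower_hull(known))
    for cand in [(0.25, -0.30), (0.75, -0.15)]:
        print(cand, f"{energy_above_hull(known, cand):+.3f} eV/atom")
```

Here the known phase at x = 0.75 sits above the hull and drops out of it; the candidate at x = 0.25 lies below the hull and would be predicted stable, which is the kind of structure passed on to DFT verification.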

The workflow for this AI-driven discovery process is illustrated below.

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential resources, platforms, and technologies that form the backbone of modern, accelerated materials discovery.

Table 2: Essential Research Reagent Solutions for Accelerated Discovery

| Tool Name/Platform | Type | Primary Function in Discovery |
|---|---|---|
| GNoME | AI Model | A deep learning model that predicts the stability of new inorganic crystals, enabling the discovery of millions of new materials [6]. |
| Materials Project | Database | An open-access database providing computed properties of known and hypothetical materials, serving as a foundational dataset for training ML models [4] [9] [6]. |
| Self-Driving Lab | Robotic System | An automated platform that uses AI and robotics to autonomously synthesize and characterize materials, drastically speeding up experimentation [7]. |
| High-Content Screening | Instrumentation | Automated microscopy and image analysis systems used to analyze complex cellular phenotypes in drug discovery, generating rich, multiparametric data [11] [10]. |
| MERCURIUS DRUG-seq | Assay Technology | A high-throughput transcriptomic screening technology that provides deep, target-agnostic molecular insights into the effects of compounds or genetic perturbations [11]. |
| AutoGluon, TPOT | Software Framework | AutoML frameworks that automate the process of model selection and hyperparameter tuning, making ML more efficient and accessible [9] [8]. |
| A-Lab | Robotic System | An autonomous laboratory designed to synthesize inorganic powders from solid powder precursors, demonstrating high success rates in creating target materials [4]. |

The paradigm for discovering novel functional materials is undergoing a profound transformation. The traditional constraints of cost, time, and scalability are no longer immovable barriers. Through the strategic integration of machine learning, autonomous robotics, and high-throughput methodologies, the process is becoming faster, cheaper, and more scalable. The ability to predict millions of stable crystals computationally and validate them in self-driving labs represents an order-of-magnitude leap in capability. For researchers and drug development professionals, embracing this new toolkit is no longer optional but essential for leading the next wave of innovation in energy, electronics, and medicine.

The field of materials science is undergoing a profound transformation, shifting from traditional trial-and-error experimentation to a sophisticated data-driven paradigm. This revolution is fundamentally altering how researchers discover and develop novel functional materials for applications ranging from renewable energy and electronics to drug development. Traditional approaches to designing custom materials have relied heavily on researcher intuition and expertise, resulting in an iterative process that is both time-consuming and expensive, often requiring numerous rounds of experimentation to achieve target material characteristics [12]. These limitations prompted strategic efforts to transform materials design and development, most notably the Materials Genome Initiative launched in 2011 [12].

At the core of this transformation lies the integration of materials databases and high-throughput computing, which together enable rapid screening of large numbers of materials to identify candidates with specific desired properties. Materials informatics, the interdisciplinary field that uses data analytics to make materials development faster and more efficient, embodies this new paradigm [13]. By analyzing large bodies of historical experimental data and combining simulations with high-throughput screening, researchers can identify promising material candidates more quickly than before. The convergence of accumulated experimental data with modern computational infrastructure, including high-performance computing and emerging quantum platforms, has made data-driven materials discovery feasible and, in some cases, has shortened development cycles from decades to months [14] [13].

The Foundation: Materials Databases and Standardization

The Evolution of Materials Databases

The emergence of comprehensive materials databases represents a cornerstone of the data-driven revolution in materials science. Significant advancements in materials design have been driven by increased computational power and the development of sophisticated electronic structure codes, enabling researchers to conduct complex calculations with unprecedented speed and accuracy [12]. The automation of these calculations has paved the way for high-throughput ab initio computations to become a powerful tool in materials research, allowing for the systematic exploration of material spaces that were previously inaccessible. The outcomes of these high-throughput calculations are meticulously curated in extensive databases that serve as repositories containing properties of both existing and hypothetical materials [12].

Recent years have seen a proliferation of open databases accessible to the scientific community, fundamentally changing how researchers approach materials design. By leveraging these databases, researchers can efficiently search for materials that exhibit specific characteristics, streamlining the materials design process and reducing their reliance on traditional trial-and-error methods [12]. Moreover, the data stored in these databases can be used to develop predictive machine learning models that improve the efficiency and accuracy of materials design. The integration of computational tools and materials databases has not only accelerated the pace of materials discovery but has also facilitated collaboration and knowledge sharing within the research community [12].

The OPTIMADE Initiative: Standardizing Data Access

Despite these advances, the landscape of materials databases long remained fragmented. Some databases offered a Representational State Transfer (REST) Application Programming Interface (API), but each used its own conventions. The lack of a common protocol made it difficult to access curated materials data at scale [12]. To address this, the OPTIMADE consortium was established to develop a common API specification that participating databases can implement. The consortium brings together a growing number of developers and maintainers of leading databases [12].

The OPTIMADE community drives development of the API and plans to broaden both its membership and the API's scope. Through workshops, monthly virtual meetings, and community mailing lists, the consortium has released multiple stable versions of the OPTIMADE specification [12]. In 2021, the specification was published as a peer-reviewed paper in Scientific Data, which spurred wider adoption of the API [12]. A second paper followed more recently in Digital Discovery, further establishing the standard in the field [12].
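The sketch below makes the idea of a common API concrete. It builds a query against the versioned `/v1/structures` endpoint that OPTIMADE providers expose. It uses the specification's `filter`, `page_limit`, and `response_fields` query parameters, with a filter written in the OPTIMADE filter grammar.

This is a minimal illustration only. It assumes a hypothetical provider at `https://example.org/optimade`. Instead of making a live HTTP request, it parses a hand-written mock response shaped like the JSON:API documents the specification defines. The helper function names are illustrative and are not taken from any OPTIMADE client library.

```python
from urllib.parse import parse_qs, urlencode, urlsplit


def build_structures_url(base_url, filter_expr, page_limit=10, response_fields=()):
    """Return a GET URL for the versioned /structures endpoint of an OPTIMADE provider."""
    params = {"filter": filter_expr, "page_limit": page_limit}
    if response_fields:
        # response_fields is a comma-separated list of properties to return.
        params["response_fields"] = ",".join(response_fields)
    return f"{base_url.rstrip('/')}/v1/structures?{urlencode(params)}"


def reduced_formulas(payload):
    """Collect chemical_formula_reduced from the JSON:API 'data' array of a response."""
    return [entry["attributes"]["chemical_formula_reduced"] for entry in payload.get("data", [])]


# Hypothetical base URL: substitute a provider listed by the OPTIMADE consortium.
url = build_structures_url(
    "https://example.org/optimade",
    'elements HAS ALL "Zn","O" AND nelements=2',
    page_limit=5,
    response_fields=("chemical_formula_reduced", "nelements"),
)
query = parse_qs(urlsplit(url).query)

# Hand-written mock response used in place of a live HTTP call.
# Reduced formulas list elements alphabetically, hence "OZn".
mock_response = {
    "data": [
        {"id": "demo-1", "type": "structures",
         "attributes": {"chemical_formula_reduced": "OZn", "nelements": 2}},
    ],
    "meta": {"more_data_available": False},
}
formulas = reduced_formulas(mock_response)
```

Every compliant provider is meant to accept the same filter string. In principle, only `base_url` needs to change to query a different database.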

Table 1: Major Open Materials Databases Accessible via OPTIMADE API

| Database Name | Primary Focus | URL | Institution |
|---|---|---|---|
| AFLOW | Distributed materials property repository | http://aflow.org | Duke University |
| Materials Project | Computational materials data | http://materialsproject.org | LBNL |
| Open Quantum Materials Database (OQMD) | DFT-computed thermodynamic and structural properties | http://oqmd.org | Northwestern University |
| Crystallography Open Database (COD) | Crystal structures | http://www.crystallography.net/cod | Vilnius University |
| Materials Cloud | Materials science data platform | http://materialscloud.org | Paul Scherrer Institute |
| NOMAD Repository | Materials science data | https://nomad-lab.eu | European Consortium |

Experimental Databases and Integration

The experimental community has also built databases of material properties. Their accessibility varies: some, such as those offered by the National Institute of Standards and Technology (NIST), are openly available, while others are commercial [12]. Because there are so many materials databases, a comprehensive list is not feasible, but several initiatives maintain curated links to a wide range of them. Integrating computational and experimental databases creates a powerful ecosystem for materials discovery. Researchers can validate computational predictions against experimental data and refine models based on empirical results.

High-Throughput Computing and AI-Driven Discovery

Computational Frameworks for Materials Screening

High-throughput computing represents the engine that powers modern data-driven materials discovery, enabling the systematic exploration of material spaces through rapid computational screening. Traditional empirical experiments and classical theoretical modeling are time-consuming and costly, creating significant bottlenecks in the materials development pipeline [9]. With the rapid growth of data from experiments, simulations, and databases, conventional methods struggle to meet current research demands. Machine learning overcomes these challenges by analyzing large datasets and revealing complex relationships between chemical composition, microstructural features, and material properties [9].

A major limitation of traditional computational methods like density functional theory (DFT) and molecular dynamics (MD) simulations is their computational intensity, which makes them slow, especially for complex multicomponent systems [9]. Moreover, the vast chemical space makes experimental testing of every candidate impractical, severely hindering innovation. High-throughput computing addresses these issues by deploying automated computational workflows that systematically calculate properties for thousands of materials in parallel, creating the foundational data necessary for training machine learning models and identifying promising candidates for further experimental investigation [9].
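The parallel-workflow pattern described above can be sketched with Python's standard `concurrent.futures` module. Each structure becomes one submitted job. Results are gathered as jobs finish, and failed jobs are recorded instead of aborting the batch.

In this sketch, `toy_calculation` is a hypothetical placeholder for a DFT or MD calculation. Threads are used on the assumption that a real workflow mostly waits on external simulation codes or cluster jobs. Workflow managers such as AiiDA or FireWorks add provenance tracking, restarts, and scheduler integration, which this sketch omits, and none of their APIs are shown here.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed


def run_batch(structures, calculate, max_workers=4):
    """Submit one property calculation per structure; collect results and failures."""
    results, failures = {}, {}
    with ThreadPoolExecutor(max_workers=max_workers) as executor:
        futures = {executor.submit(calculate, s): s for s in structures}
        for future in as_completed(futures):
            structure = futures[future]
            try:
                results[structure] = future.result()
            except Exception as exc:  # a failed job is recorded, not fatal to the batch
                failures[structure] = repr(exc)
    return results, failures


def toy_calculation(formula):
    """Hypothetical stand-in for an external DFT/MD job; fails on an empty input."""
    if not formula:
        raise ValueError("empty structure")
    return len(formula) * 0.1  # placeholder "property", not a physical quantity


results, failures = run_batch(["NaCl", "MgO", "", "TiO2"], toy_calculation)
```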

Diagram 1: High-Throughput Materials Discovery Workflow

Machine Learning and AI Integration

Machine learning (ML) has become a transformative tool in modern materials science, offering new ways to predict material properties, design novel compounds, and optimize performance [9]. Its re-emergence is driven by greater data availability, algorithmic advances, and increased computing power [9]. ML is rooted in statistical learning and now permeates physics, chemistry, and materials science. It uses historical data to generate predictions through a variety of algorithms, and its performance depends on dataset size and computational efficiency [9].

Key methodologies in this field include deep learning, graph neural networks, Bayesian optimization, and deep generative models such as generative adversarial networks (GANs) and variational autoencoders (VAEs) [9]. These approaches support the autonomous design of materials with tailored functionalities. AutoML frameworks (AutoGluon, TPOT, and H2O.ai) automate model selection, hyperparameter tuning, and feature engineering, improving the efficiency of materials informatics [9]. Combining AI-driven robotic laboratories with high-throughput computing enables largely automated pipelines for rapid synthesis and experimental validation, reducing the time and cost of materials discovery [9].
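Hyperparameter tuning, one of the steps AutoML frameworks automate, can be illustrated without those libraries. The sketch below uses NumPy only. It selects the regularization strength of a closed-form ridge regression by k-fold cross-validation.

The synthetic descriptor matrix and target property are illustrative assumptions. AutoGluon, TPOT, and H2O.ai also search over many model families and preprocessing steps. This is a single-model, single-parameter stand-in and does not reproduce any of their APIs.

```python
import numpy as np


def ridge_fit(X, y, alpha):
    """Closed-form ridge regression: w = (X^T X + alpha I)^-1 X^T y (no intercept)."""
    return np.linalg.solve(X.T @ X + alpha * np.eye(X.shape[1]), X.T @ y)


def kfold_mse(X, y, alpha, k=5, seed=0):
    """Held-out mean squared error averaged over k folds (same folds for every alpha)."""
    folds = np.array_split(np.random.default_rng(seed).permutation(len(y)), k)
    errors = []
    for i, test_idx in enumerate(folds):
        train_idx = np.concatenate([f for j, f in enumerate(folds) if j != i])
        w = ridge_fit(X[train_idx], y[train_idx], alpha)
        errors.append(np.mean((X[test_idx] @ w - y[test_idx]) ** 2))
    return float(np.mean(errors))


def select_alpha(X, y, alphas, k=5):
    """Grid search: return the regularization strength with the lowest CV error."""
    scores = {alpha: kfold_mse(X, y, alpha, k) for alpha in alphas}
    return min(scores, key=scores.get), scores


# Synthetic descriptor matrix and target property (illustrative only).
rng = np.random.default_rng(42)
X = rng.normal(size=(60, 5))
y = X @ np.array([1.5, -2.0, 0.0, 0.7, 0.0]) + rng.normal(scale=0.3, size=60)
best_alpha, cv_scores = select_alpha(X, y, alphas=[0.01, 0.1, 1.0, 10.0, 100.0])
```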

Table 2: Key Machine Learning Algorithms in Materials Informatics

| Algorithm Category | Specific Methods | Applications in Materials Science | Advantages |
|---|---|---|---|
| Deep Learning | Convolutional Neural Networks (CNNs), Graph Neural Networks (GNNs) | Crystal structure prediction, Property forecasting | Handles complex patterns in high-dimensional data |
| Generative Models | Generative Adversarial Networks (GANs), Variational Autoencoders (VAEs) | Novel material design, Inverse design | Generates new structures with desired properties |
| Bayesian Optimization | Gaussian Processes, Acquisition Functions | Experimental design, Parameter optimization | Efficient global optimization with uncertainty quantification |
| Automated Machine Learning (AutoML) | AutoGluon, TPOT, H2O.ai | Automated workflow development, Model selection | Reduces need for ML expertise, accelerates model deployment |

Autonomous Experimentation and Closed-Loop Systems

The integration of AI with high-throughput experimentation is creating fully automated research environments that dramatically accelerate the discovery process. Automated laboratories equipped with artificial intelligence (AI) and robotic systems are transforming modern chemistry and materials science by conducting experiments, analyzing data, and optimizing processes with minimal human intervention [9]. These intelligent "robot scientists" accelerate the discovery of novel materials, optimize synthesis conditions, and enhance high-throughput screening capabilities [9].

Studies have demonstrated ML-driven robotic platforms that optimize chemical reactions and material synthesis parameters through iterative experimentation. These platforms substantially reduce the number of trials needed to reach optimal results compared with traditional approaches [9]. Coupling computational prediction with automated experimental validation creates a closed-loop system that continuously refines its models and accelerates discovery. The synergy between AI, automated experimentation, and computational modeling is transforming how materials are discovered and optimized, paving the way for advances in energy, electronics, and nanotechnology [9].
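The closed loop described above can be sketched as Bayesian optimization. After each experiment, a Gaussian-process surrogate is refit, and an expected-improvement acquisition function picks the next condition. The new result is then fed back into the model.

In this minimal NumPy sketch, `simulated_experiment` is a hypothetical response surface. It stands in for a robotic synthesis run over one normalized process parameter. The kernel length scale, noise level, and candidate grid are fixed assumptions, not fitted values.

```python
from math import erf, sqrt

import numpy as np


def rbf_kernel(a, b, length_scale=0.2):
    """Squared-exponential kernel with unit signal variance for 1-D inputs."""
    diff = a[:, None] - b[None, :]
    return np.exp(-0.5 * (diff / length_scale) ** 2)


def gp_posterior(x_train, y_train, x_query, noise=1e-6, length_scale=0.2):
    """Posterior mean and standard deviation of a zero-mean Gaussian process."""
    K = rbf_kernel(x_train, x_train, length_scale) + noise * np.eye(len(x_train))
    K_s = rbf_kernel(x_train, x_query, length_scale)
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))
    mean = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = np.clip(1.0 - np.sum(v**2, axis=0), 1e-12, None)
    return mean, np.sqrt(var)


_norm_cdf = np.vectorize(lambda z: 0.5 * (1.0 + erf(z / sqrt(2.0))))


def expected_improvement(mean, std, best, xi=0.01):
    """Expected improvement over the best observation (maximization)."""
    improvement = mean - best - xi
    z = improvement / std
    pdf = np.exp(-0.5 * z**2) / sqrt(2.0 * np.pi)
    return np.maximum(improvement * _norm_cdf(z) + std * pdf, 0.0)


def simulated_experiment(x):
    """Hypothetical response surface standing in for one robotic synthesis run."""
    return float(np.exp(-((x - 0.63) ** 2) / 0.02))


def closed_loop(experiment, n_init=3, n_iter=12, seed=0):
    rng = np.random.default_rng(seed)
    grid = np.linspace(0.0, 1.0, 201)  # normalized process parameter (assumption)
    x_obs = rng.uniform(0.0, 1.0, n_init)
    y_obs = np.array([experiment(x) for x in x_obs])
    for _ in range(n_iter):
        mean, std = gp_posterior(x_obs, y_obs, grid)       # 1. refit the surrogate
        ei = expected_improvement(mean, std, y_obs.max())   # 2. score candidate conditions
        x_next = grid[np.argmax(ei)]                        # 3. choose the next run
        x_obs = np.append(x_obs, x_next)
        y_obs = np.append(y_obs, experiment(x_next))        # 4. run it and record the result
    best = int(np.argmax(y_obs))
    return x_obs[best], y_obs[best]


best_x, best_y = closed_loop(simulated_experiment)
```

On a real platform, `experiment` would dispatch a job to the robot and wait for the measurement to return. Kernel hyperparameters would normally be fitted to the data rather than fixed.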

Experimental Protocols and Methodologies

Protocol: High-Throughput Screening of Porous Materials for CO₂ Capture

Objective: Systematically identify and validate metal-organic frameworks (MOFs) and porous materials for efficient CO₂ capture and conversion using integrated computational and experimental approaches.

Background: The urgent need to mitigate climate change has intensified research into carbon capture and utilization technologies. The field's importance was underscored by the 2025 Nobel Prize in Chemistry, awarded for the development of metal-organic frameworks. This class of porous materials includes frameworks capable of efficiently capturing CO₂ [13].

Computational Screening Phase:

- Database Query: Execute queries across multiple materials databases (Materials Project, CSD, COD) to identify candidate porous materials with appropriate pore sizes (0.5-2.0 nm) and chemical functionality.

- High-Throughput Property Calculation: Deploy automated DFT calculations, combined with grand canonical Monte Carlo (GCMC) simulations for adsorption isotherms, to determine:
  - CO₂ adsorption isotherms at various pressures and temperatures
  - Heat of adsorption (Qst) for CO₂
  - CO₂/N₂ selectivity based on binding energy differences
  - Diffusion barriers for CO₂ within framework structures

- Machine Learning Optimization: Train graph neural networks on calculated properties to predict performance of unscreened materials and generate novel structures with enhanced properties using variational autoencoders.
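The first screening step can be sketched as follows: filter database hits to the 0.5-2.0 nm pore window, then rank the survivors by a model prediction.

The candidate records, pore diameters, and surrogate uptake values are all hypothetical. In practice, pore metrics would come from a geometry-analysis tool, and predictions would come from a trained model such as the graph neural network mentioned above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PorousCandidate:
    name: str
    pore_diameter_nm: float   # pore size from a geometry analysis (hypothetical values)
    predicted_uptake: float   # surrogate-model CO2 uptake in mmol/g (hypothetical)


def shortlist(candidates, d_min=0.5, d_max=2.0, top_k=2):
    """Keep candidates inside the pore-size window, then rank by predicted uptake."""
    in_window = [c for c in candidates if d_min <= c.pore_diameter_nm <= d_max]
    return sorted(in_window, key=lambda c: c.predicted_uptake, reverse=True)[:top_k]


library = [
    PorousCandidate("MOF-A", 0.8, 3.1),
    PorousCandidate("MOF-B", 2.6, 5.0),  # pore too large: filtered out
    PorousCandidate("MOF-C", 1.2, 4.4),
    PorousCandidate("MOF-D", 0.4, 6.0),  # pore too small: filtered out
    PorousCandidate("MOF-E", 1.9, 2.2),
]
top = [c.name for c in shortlist(library)]
```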

Experimental Validation Phase:

- Synthesis: Execute robotic synthesis of top-ranked candidates using solvothermal, microwave-assisted, and mechanochemical approaches.

- Characterization: Perform automated structural characterization (PXRD, BET surface area analysis, FTIR spectroscopy) to validate computational predictions.

- Performance Testing: Conduct gas adsorption measurements using volumetric and gravimetric methods. Cover both the low CO₂ partial pressures relevant to flue gas and higher pressures, to determine CO₂ uptake capacity and selectivity.

Success Metrics: Materials exhibiting CO₂ uptake >4 mmol/g at 0.15 bar and 298 K, CO₂/N₂ selectivity >150, and recyclability >100 cycles proceed to pilot-scale testing.
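The success metrics above can be checked with a short calculation. The sketch assumes single-site Langmuir fits for CO₂ and N₂ at 298 K, with hypothetical parameter values. It also assumes a 15:85 CO₂/N₂ flue-gas feed at 1 bar total pressure, so the CO₂ partial pressure is 0.15 bar.

Selectivity is computed as S = (q_CO₂/q_N₂)/(y_CO₂/y_N₂), using single-component uptakes at the respective partial pressures. This is a common simplification; mixture isotherms or ideal adsorbed solution theory (IAST) would be more rigorous.

```python
def langmuir_uptake(q_max, k, pressure):
    """Single-site Langmuir loading (mmol/g) at pressure in bar; k in 1/bar."""
    return q_max * k * pressure / (1.0 + k * pressure)


def adsorption_selectivity(q_co2, q_n2, y_co2=0.15, y_n2=0.85):
    """S = (q_CO2 / q_N2) / (y_CO2 / y_N2) for a feed with gas-phase mole fractions y."""
    return (q_co2 / q_n2) / (y_co2 / y_n2)


def passes_gate(uptake, selectivity, cycles):
    """Protocol criteria: uptake > 4 mmol/g at 0.15 bar, S > 150, > 100 cycles."""
    return uptake > 4.0 and selectivity > 150.0 and cycles > 100


# Hypothetical fitted Langmuir parameters for one candidate at 298 K.
q_co2 = langmuir_uptake(q_max=6.0, k=20.0, pressure=0.15)  # CO2 partial pressure, bar
q_n2 = langmuir_uptake(q_max=6.0, k=0.01, pressure=0.85)   # N2 partial pressure, bar
selectivity = adsorption_selectivity(q_co2, q_n2)
accepted = passes_gate(q_co2, selectivity, cycles=120)
```

With these illustrative parameters, the candidate reaches 4.5 mmol/g and a selectivity of roughly 500, so it passes the gate.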

Protocol: ML-Driven Discovery of Molecular Catalysts for CO₂ Conversion

Objective: Accelerate the discovery and design of novel molecules that efficiently capture CO₂ and catalyze its transformation into valuable chemicals, using high-performance computing (HPC) and machine learning (ML) models.

Background: This protocol was implemented by NTT DATA in collaboration with the University of Palermo and the University of Catanzaro, funded by the Italian National Recovery and Resilience Plan within the ICSC center [13].

Methodology:

- Data Curation: Compile comprehensive dataset of known CO₂ capture molecules and catalysts from literature and experimental sources, including structural descriptors, electronic properties, and performance metrics.

- Feature Engineering: Calculate molecular descriptors (topological, electronic, geometric) and use them as input features for machine learning models.

- Generative AI Implementation: Employ generative artificial intelligence (GenAI) to propose new molecular structures with optimized properties, broadening the search space beyond traditional design paradigms.

- Quantum Computing Integration: Investigate quantum computing frameworks to assess their potential in accelerating and improving performance of GenAI techniques.

- Validation: Synthesize and experimentally test top-performing candidate molecules identified through the informatics workflow.

Outcomes: The project successfully identified promising molecules for CO₂ catalysis currently under evaluation by chemistry experts, with the protocol being transferable to different chemical systems beyond CO₂ capture and conversion [13].
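The feature-engineering step in the methodology above can be illustrated with simple compositional descriptors computed from a molecular formula. This is a deliberately reduced stand-in. The topological, electronic, and geometric descriptors named in the protocol usually require a cheminformatics toolkit such as RDKit, quantum-chemistry output, or 3-D structures.

The parser here handles only flat formulas without parentheses, and the small atomic-weight table is an assumption to extend as needed. The example molecules (CO₂, ethanolamine, and triethylamine) are illustrative choices, not the project's dataset.

```python
import re

# Standard atomic weights (g/mol), rounded; extend the table as needed.
ATOMIC_MASS = {"H": 1.008, "C": 12.011, "N": 14.007, "O": 15.999, "S": 32.06}


def parse_formula(formula):
    """Parse a flat formula such as 'C2H7NO' into element counts (no parentheses)."""
    if not re.fullmatch(r"(?:[A-Z][a-z]?\d*)+", formula):
        raise ValueError(f"unsupported formula: {formula!r}")
    counts = {}
    for symbol, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[symbol] = counts.get(symbol, 0) + (int(number) if number else 1)
    return counts


def composition_descriptors(formula):
    """A few composition-only features usable as ML model inputs."""
    counts = parse_formula(formula)
    n_atoms = sum(counts.values())
    return {
        "molecular_weight": sum(ATOMIC_MASS[el] * n for el, n in counts.items()),
        "heavy_atoms": sum(n for el, n in counts.items() if el != "H"),
        "heteroatom_fraction": sum(n for el, n in counts.items() if el not in ("C", "H")) / n_atoms,
    }


features = {f: composition_descriptors(f) for f in ["CO2", "C2H7NO", "C6H15N"]}
```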

Table 3: Essential Computational and Experimental Resources for Data-Driven Materials Research

| Resource Category | Specific Tools/Platforms | Function/Purpose | Access Type |
|---|---|---|---|
| Materials Databases | Materials Project, AFLOW, OQMD, COD | Provide curated computational and experimental data for known and hypothetical materials | Open access via OPTIMADE API [12] |
| High-Throughput Computing | MedeA Software, AiiDA, FireWorks | Automated workflow management for high-throughput computational screening | Commercial (MedeA) and open source (AiiDA, FireWorks) |
| Machine Learning Frameworks | AutoGluon, TPOT, H2O.ai | Automated machine learning for model selection and hyperparameter tuning | Open source [9] |
| Quantum Computing Platforms | Quantum processors, simulators | Explore complex optimization and simulation problems in materials design | Emerging access [13] |
| Robotic Synthesis Systems | Automated liquid handlers, reactor arrays | High-throughput synthesis of candidate materials | Institutional core facilities |
| Characterization Suites | High-throughput XRD, automated SEM/TEM, robotic gas sorption | Rapid structural and property characterization | Institutional core facilities |

Applications and Case Studies

Accelerated Discovery of Functional Materials

The data-driven approach has shown notable success across multiple domains of functional materials research. Machine learning-driven techniques are transforming materials discovery, property prediction, and materials design by minimizing human intervention and accelerating scientific progress [9]. A recent review [9] surveys these approaches, emphasizing their role in predicting material properties, discovering novel compounds, and optimizing material structures. It reports successes in areas including superconductors, catalysts, photovoltaics, and energy storage systems [9].

Real-world applications of automated ML-driven approaches include predicting mechanical, thermal, electrical, and optical properties of materials, demonstrating successful cases across multiple technology domains [9]. For example, deep learning combined with DFT data has improved solar cell efficiency, advancing renewable energy technologies [9]. Modern computational resources like GPUs and TPUs accelerate neural network training, enabling the development of complex models and paving the way for "smart" laboratories where ML-driven systems conduct real-time material synthesis and optimization [9].

Industrial Applications and Technology Transfer

The transformative potential of data-driven materials discovery extends significantly to industrial applications, where reduced development timelines and costs provide substantial competitive advantages. A compelling case study comes from NTT DATA's collaboration with Komi Hakko, a startup originating from Osaka University, to digitalize scent reproduction [13]. Komi Hakko developed technology that quantifies scents, enabling the reproduction of specific odors by blending multiple fragrance ingredients to match a target numerical profile [13].

However, with thousands of potential fragrance components, identifying the optimal combination posed a significant computational challenge. NTT DATA combined its proprietary optimization technology with Komi Hakko's scent quantification technology. This enabled efficient exploration of composition patterns that would be difficult for humans to find manually [13]. The collaboration produced a new formulation process for deodorant products that reduces production time by approximately 95% compared with conventional methods [13].
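Under simplifying assumptions, matching a target scent profile by blending ingredients can be framed as nonnegative least squares. Each ingredient contributes a numerical profile vector, and the goal is to find nonnegative amounts whose weighted sum best reproduces the target.

NTT DATA's optimization technology is proprietary and is not reproduced here. The sketch below uses plain projected gradient descent in NumPy instead. It assumes that ingredient profiles combine linearly, and it uses a hypothetical example with 5 descriptors and 3 ingredients.

```python
import numpy as np


def blend_weights(profiles, target, n_iter=5000):
    """Nonnegative least squares, min ||profiles @ w - target||^2 s.t. w >= 0.

    profiles: (n_descriptors, n_ingredients) matrix, one column per ingredient.
    target:   (n_descriptors,) numerical profile to reproduce.
    """
    step = 1.0 / np.linalg.norm(profiles, 2) ** 2  # 1 / Lipschitz constant of the gradient
    w = np.zeros(profiles.shape[1])
    for _ in range(n_iter):
        grad = profiles.T @ (profiles @ w - target)
        w = np.maximum(w - step * grad, 0.0)        # gradient step, then project onto w >= 0
    return w


# Hypothetical descriptor profiles for 3 ingredients; target built from a known blend.
A = np.array([[1.0, 0.0, 0.5],
              [0.0, 1.0, 0.2],
              [0.3, 0.1, 1.0],
              [0.2, 0.7, 0.0],
              [0.5, 0.5, 0.5]])
target = A @ np.array([0.5, 0.2, 0.3])
weights = blend_weights(A, target)
```

Linear mixing is a strong assumption for perceived odor. A practical formulation would also add constraints such as per-ingredient limits and a fixed total amount.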

The data-driven revolution in materials science, powered by integrated databases and high-throughput computing, represents a fundamental shift in how researchers approach the discovery and development of novel functional materials. Machine learning has emerged as a transformative paradigm in modern materials science, dramatically accelerating the prediction, design, and discovery of next-generation materials [9]. Over the past decade, remarkable progress has been achieved through the application of ML algorithms to analyze large and diverse datasets, enabling faster and more accurate modeling of complex material behaviors [9].

Despite these advances, challenges remain in data quality, interpretability, and the integration of automated workflows with quantum computing [9]. Future developments will likely focus on better data standardization, more interpretable models, and more sophisticated autonomous research systems. Combining data-driven methods with domain expertise shortens discovery timelines and substantially reduces experimental time and costs [13]. It can also identify materials that conventional approaches would likely miss, driving innovation and competitiveness in the next generation of materials research [13]. As these technologies mature, they promise to accelerate the development of advanced materials that address critical global challenges in energy, healthcare, and sustainability.

The frontier of materials science is being reshaped by the development of advanced functional materials whose properties can be precisely engineered for specific applications. This field moves beyond traditional materials by designing matter with tailored, responsive, and often intelligent behaviors. Framed within the broader thesis of discovering novel functional materials, research is increasingly focused on creating highly adaptable platforms that bridge multiple disciplines. These materials are defined by their functionality—optical, electronic, biomedical, or mechanical—which is programmed in at the molecular or nanoscale level. The ability to systematically control properties like luminescence, selectivity, and responsiveness to external stimuli is unlocking new possibilities in sensing, healthcare, energy, and environmental protection [15] [16]. This guide provides an in-depth technical examination of the key materials classes at the heart of this revolution, with a specific focus on luminescent sensors and smart biomaterials, and details the experimental methodologies driving their development.

Core Advanced Materials Classes

Advanced materials can be categorized by their composition, structure, and primary function. The table below summarizes the key classes central to current research, particularly highlighting the journey from luminescent sensors to smart biomaterials.

Table 1: Key Classes of Advanced Functional Materials

| Material Class | Core Composition & Structure | Key Functional Properties | Primary Applications & Target Systems |
|---|---|---|---|
| Fluorescent Nanoclays [15] | Clay-based nanosheets functionalized with fluorophores (e.g., Piyuni Ishtaweera's polyionic nanoclays). | High functionality for precise tuning; Extreme brightness (e.g., 7,000 normalized brightness units [15]); Adaptable optical & physicochemical properties. | Medical imaging & contrast agents; Chemical sensors & biosensors; Environmental monitoring (e.g., water quality). |
| Stimuli-Responsive "Smart" Materials [16] | Polymers, hydrogels, alloys (e.g., shape memory), composites that react to environmental cues. | Properties change in response to stimuli (e.g., pH, temperature, light, magnetic field, specific molecules). | Intelligent drug delivery & release systems [16]; Actuators & sensors; Smart textiles. |
| Biomimetic & Bioinspired Materials [16] [17] | Synthetic or natural macromolecules designed to mimic biological structures (e.g., polymer blends, soft gels). | Biocompatibility; Specific bio-recognition; Self-assembly; Often combined with stimuli-responsiveness. | Implantable medical devices [16]; Tissue engineering scaffolds; Targeted therapeutic delivery. |
| Advanced Composite & Hybrid Materials [16] [17] | Combinations of organic/inorganic components (e.g., polymer-ceramic composites, thin films, 2D/3D structures). | Multifunctionality; Enhanced mechanical strength, conductivity, or catalytic activity; Synergistic effects. | Energy harvesting; Photocatalysis; Protective coatings; Structural components. |
| Inorganic Luminescent Materials [16] | Ceramics, semiconductors, and quantum dots with precise optical properties. | High photostability; Tunable emission wavelengths; Long luminescence lifetimes. | Sensing & detection [16]; Displays; Anti-counterfeiting. |

Detailed Experimental Protocols

The development and characterization of these advanced materials require rigorous and reproducible methodologies. The following protocols outline key processes for creating and testing two central material classes discussed in this guide.

Protocol 1: Synthesis and Characterization of Fluorescent Polyionic Nanoclays

This protocol is adapted from the work of Ishtaweera, Baker, et al., which resulted in a "brilliantly luminous" nanoscale tool with a patent pending [15].

1. Synthesis of Polyionic Nanoclay Base:
   - Begin with a purified, natural or synthetic smectite clay (e.g., montmorillonite). The clay's inherent negative surface charge is foundational.
   - Perform a cation-exchange process in an aqueous solution to intercalate the clay layers with specific polymeric cations. This step forms the "polyionic" base, enhancing the clay's stability and functionality.
   - Use techniques like centrifugation and dialysis to purify the resulting polyionic nanoclay suspension, removing excess ions and polymers. The final product is a stable aqueous dispersion of exfoliated, single-layer nanoclay sheets.

2. Functionalization with Fluorophores:
   - Select from thousands of commercially available, cationic fluorophores based on the desired excitation/emission profiles and application needs (e.g., for medical imaging or biosensing) [15].
   - Incubate the polyionic nanoclay dispersion with the selected fluorophore. The positively charged fluorophores electrostatically "hook" onto the negatively charged surfaces of the nanoclay sheets.
   - Precisely control the loading ratio (fluorophore to nanoclay) and reaction conditions (pH, temperature, time) to dictate the density of fluorophore attachment, which directly tunes the optical properties of the final material.

3. Purification and Recovery:
   - Separate the fluorescently tagged nanoclays from unbound fluorophore molecules using repeated cycles of centrifugation and re-dispersion in a clean buffer or solvent.
   - The final product can be recovered as a concentrated colloidal suspension or as a solid powder via lyophilization (freeze-drying).

4. Characterization and Validation:
   - Brightness & Optical Properties: Use fluorometry to measure fluorescence intensity and quantum yield. The reported brightness should be normalized for volume, with high-performing materials achieving ~7,000 brightness units [15].
   - Structural Analysis: Employ techniques like X-ray diffraction (XRD) to confirm the intercalation/exfoliation structure and dynamic light scattering (DLS) for particle size and zeta-potential analysis.
   - Morphology: Use atomic force microscopy (AFM) or transmission electron microscopy (TEM) to visualize the sheet-like morphology and confirm nanoscale dimensions.
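Quantum yield is often measured by the comparative method, which relates the sample to a reference dye of known yield measured under the same conditions. The sketch below implements that standard relation:

Φ = Φ_ref × (F/F_ref) × (A_ref/A) × (n/n_ref)²

Here F is the integrated emission intensity, A is the absorbance at the excitation wavelength, and n is the solvent refractive index. The example numbers are hypothetical. The source [15] does not state whether this method was used, and the volume-normalized brightness it reports is a different metric.

```python
def relative_quantum_yield(f_sample, a_sample, f_ref, a_ref, qy_ref,
                           n_sample=1.333, n_ref=1.333):
    """Comparative quantum yield: qy_ref * (F/F_ref) * (A_ref/A) * (n/n_ref)**2.

    f_*: integrated emission intensity (same instrument settings for both samples).
    a_*: absorbance at the excitation wavelength; keep it low (commonly below ~0.1)
         to limit inner-filter effects.
    n_*: refractive index of each solvent (water is about 1.333).
    """
    if a_sample <= 0 or a_ref <= 0 or f_ref <= 0:
        raise ValueError("absorbances and reference intensity must be positive")
    return qy_ref * (f_sample / f_ref) * (a_ref / a_sample) * (n_sample / n_ref) ** 2


# Hypothetical readings: both solutions aqueous, with matched absorbance of 0.05.
qy = relative_quantum_yield(f_sample=2.0e6, a_sample=0.05, f_ref=1.0e6, a_ref=0.05, qy_ref=0.30)
```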

Protocol 2: In Vitro Evaluation of a Smart Biomaterial for pH-Responsive Drug Release

This protocol describes a general methodology for testing a smart, stimuli-responsive polymer-based drug delivery system.

1. Material Preparation and Drug Loading:
   - Synthesize or acquire a pH-responsive polymer, such as a copolymer containing ionizable groups (e.g., carboxylic acids) that swell or degrade at a specific pH.
   - Using a solvent evaporation or dialysis method, load a model active pharmaceutical ingredient (API) into the polymer matrix to form drug-loaded nanoparticles or a hydrogel.
   - Purify the drug-loaded material and determine the drug loading capacity and encapsulation efficiency using UV-Vis spectroscopy or HPLC against a standard calibration curve.

2. Experimental Setup for Release Kinetics:
   - Prepare simulated physiological buffers at pH levels relevant to the target pathway (e.g., pH 7.4 for blood, pH ~6.5 for the tumor microenvironment, pH ~5.0 for late endosomes/lysosomes, pH 1.2 for simulated gastric fluid).
   - Place a precise amount of the drug-loaded material into dialysis bags or a membrane-less chamber within a vessel containing the release medium. Maintain the system at a constant temperature (e.g., 37°C) with continuous agitation.
   - At predetermined time intervals, withdraw a small sample of the release medium and replace it with an equal volume of fresh buffer, keeping the volume constant and maintaining sink conditions.

3. Quantification and Data Analysis:
   - Analyze the collected samples for drug concentration using a pre-validated analytical method (HPLC or UV-Vis).
   - Calculate the cumulative percentage of drug released over time, accounting for drug removed in earlier samples.
   - Plot the release profile (Cumulative % Release vs. Time) for each pH condition. A successful smart material will show significantly different release kinetics at the trigger pH compared with physiological pH.

4. Cytocompatibility Assessment (MTT Assay):
   - Culture relevant cell lines (e.g., HeLa, HEK293) under standard conditions.
   - Expose the cells to a range of concentrations of the blank (unloaded) smart biomaterial and incubate for 24-72 hours.
   - Add MTT reagent to the wells; the tetrazolium salt is reduced to purple formazan by metabolically active cells.
   - Solubilize the formazan crystals and measure the absorbance. Calculate the percentage of cell viability relative to untreated control cells to assess the material's cytocompatibility.
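The quantities in steps 1, 3, and 4 reduce to a few standard formulas, sketched below:

- Encapsulation efficiency.
- Drug loading capacity.
- Cumulative release, corrected for drug removed with each withdrawn sample. The amount released at sample n is C_n·V + v·ΣC_i over all earlier samples i, where V is the medium volume and v is the sample volume.
- Blank-corrected MTT viability.

All numbers in the example are hypothetical. These formulas are common conventions, but some laboratories define loading capacity relative to carrier mass rather than total particle mass.

```python
def encapsulation_efficiency(drug_added_mg, free_drug_mg):
    """EE (%) = encapsulated drug / total drug added x 100."""
    return (drug_added_mg - free_drug_mg) / drug_added_mg * 100.0


def loading_capacity(drug_loaded_mg, particles_mg):
    """DLC (%) = drug in the carrier / total mass of drug-loaded particles x 100."""
    return drug_loaded_mg / particles_mg * 100.0


def cumulative_release(concs_mg_per_ml, medium_ml, sample_ml, drug_loaded_mg):
    """Cumulative % released, correcting for drug removed in earlier withdrawn samples.

    Released at sample n = C_n * V + v * (C_1 + ... + C_{n-1}).
    """
    released, removed_mg = [], 0.0
    for c in concs_mg_per_ml:
        released.append((c * medium_ml + removed_mg) / drug_loaded_mg * 100.0)
        removed_mg += c * sample_ml  # drug taken out with this withdrawn sample
    return released


def viability(a_treated, a_control, a_blank):
    """MTT viability (%) relative to untreated cells, after blank subtraction."""
    return (a_treated - a_blank) / (a_control - a_blank) * 100.0


# Hypothetical pH 7.4 run: 10 mL medium, 1 mL samples, 5 mg drug loaded.
release_ph74 = cumulative_release([0.10, 0.20, 0.30], medium_ml=10.0, sample_ml=1.0, drug_loaded_mg=5.0)
```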

Quantitative Data and Comparison Tables

The performance of advanced materials is quantified through key metrics, which allow for direct comparison and selection for specific applications.

Table 2: Quantitative Performance Metrics of Luminescent Sensor Materials

| Material Type | Reported Brightness (Normalized for Volume) | Key Functionalized Elements | Detection Limits / Sensitivity | Stability & Environmental Factors |
|---|---|---|---|---|
| Fluorescent Polyionic Nanoclays [15] | ~7,000 units (matches highest reported) | Customizable with fluorophores, antibodies, DNA aptamers, metal-binding ligands [15]. | "Highly useful for sensitive optical detection methods"; "Improved detection" [15]. | Clay base provides structural robustness; functionality adaptable for different media (aqueous, biological). |
| Advanced Inorganic Luminescent Materials [16] | Typically very high (data specific to material required) | Dopant ions (rare earth, transition metals), surface coatings. | Varies by material; generally high photostability enables low-level detection. | High thermal and photostability; suitable for harsh environments. |
| Carbon-Based Dots / Quantum Dots [17] | Ranges from moderate to very high | Surface passivation molecules, functional groups (-COOH, -NH2). | Can be highly sensitive to specific ions/molecules; tunable. | Can be susceptible to photobleaching (varies by composition); pH-dependent. |

Table 3: "The Scientist's Toolkit": Essential Research Reagents and Materials

| Reagent/Material Solution | Function in Research and Development | Specific Application Example |
|---|---|---|
| Cationic Fluorophore Library [15] | Provides the optical signaling (fluorescence) capability for sensory materials. | Attaching to nanoclays to create a bright, customizable fluorescent probe [15]. |
| Polyionic Clay Precursors [15] | Forms the structural nano-platform with high surface area and negative charge for functionalization. | Serving as the base for building fluorescent nanoclays or hybrid materials [15]. |
| pH-Responsive Polymer Monomers | The building blocks for synthesizing "smart" drug delivery systems that release cargo in specific bodily environments. | Creating micro- or nano-carriers for targeted cancer therapy in the acidic tumor microenvironment. |
| DNA Aptamers / Antibodies [15] | Imparts high biological specificity for targeting and recognition. | Functionalizing a material surface to selectively bind to cancer cell biomarkers or pathogens [15]. |
| Shape Memory Alloys/Polymers [16] | Provides the ability to change shape in response to temperature or other stimuli. | Developing stents, actuators, or self-fitting implants in biomedical devices [16]. |

Visualizing Workflows and Material Behavior

The diagrams below summarize the logical relationships in the nanoclay synthesis workflow and in the drug-release mechanism of a smart biomaterial.

Synthesis of Fluorescent Nanoclays

Smart Biomaterial Drug Release Mechanism

Investment and Grant Trends Fueling Foundational Research in 2025

The discovery of novel functional materials represents a critical frontier in addressing global challenges in clean energy, healthcare, and sustainable technology. As the climate emergency deepens, demand for minerals essential to renewable energy technologies is accelerating dramatically, creating supply shortages that could undermine progress toward global climate targets [18]. Current investment in mining projects falls short by an estimated $225 billion, leaving production levels well below what is needed to meet the Paris Agreement's 1.5°C target [18]. This resource constraint has created an urgent need for innovation within materials discovery, requiring significant capital directed toward technologies such as high-quality materials databases, advanced computational modeling, and self-driving labs [18].

Foundational research in functional materials sits at a critical juncture, driven by mounting sustainability imperatives, rapid technological advancements, and shifting economic priorities [19]. The global advanced functional materials market, valued at approximately $250 billion in 2023, reflects this strategic importance and is projected to grow at a compound annual growth rate (CAGR) exceeding 7%, reaching over $450 billion by 2033 [20]. This growth is fueled by escalating demand from automotive, electronics, and aerospace sectors constantly striving for enhanced performance, lightweighting, and miniaturization [20]. Within this expanding market, 2025 represents a pivotal year where investment patterns, grant allocation strategies, and technological breakthroughs are converging to create unprecedented opportunities for researchers and drug development professionals working at the frontiers of materials science.
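The market figures above can be checked with the compound-annual-growth-rate relation:

CAGR = (V_end / V_start)^(1/years) - 1

Growth from $250 billion in 2023 to $450 billion in 2033 implies about 6.05% per year. Compounding 7% for ten years from $250 billion gives roughly $492 billion. The two quoted bounds ("exceeding 7%" and "over $450 billion") are therefore compatible, but they are not equivalent.

A minimal sketch of the check:

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values separated by `years`."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


def project(start_value, rate, years):
    """Value after compounding `rate` annually for `years`."""
    return start_value * (1.0 + rate) ** years


implied_rate = cagr(250.0, 450.0, 10)          # USD billions, 2023 -> 2033
at_seven_percent = project(250.0, 0.07, 10)    # USD billions in 2033 at exactly 7%
```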

Current Funding Landscape and Investment Trends

Capital Flows and Funding Mechanisms

Materials discovery research is primarily driven by two complementary sources of capital: equity financing and grant funding. Each plays a distinct yet interconnected role in advancing the field from basic research to commercial application.

Table 1: Materials Discovery Funding Trends (2020-2025)

| Year | Equity Investment (Million USD) | Grant Funding (Million USD) | Key Developments |
|---|---|---|---|
| 2020 | $56 | Not specified | Baseline funding level |
| 2023 | Not specified | $59.47 | Significant grant growth began |
| 2024 | Not specified | $149.87 | About 2.5-fold increase in grants |
| Mid-2025 | $206 | Not yet available | Steady growth trajectory |

Equity investment in the sector grew from $56 million in 2020 to $206 million by mid-2025, indicating a sustained flow of private capital and growing confidence in the sector's long-term potential [18]. This upward trajectory is notable given global economic uncertainties, underscoring the strategic importance investors place on advanced materials. Grant funding grew even faster. After a significant surge in 2023, it rose roughly 2.5-fold in 2024, from $59.47 million to $149.87 million [18]. This growth in public funding reflects governmental recognition of materials science as a strategic priority for addressing climate change and maintaining technological competitiveness.

Notable recent grants exemplifying these trends include:

- Mitra Chem (USA) secured a $100 million grant from the U.S. Department of Energy to advance lithium iron phosphate cathode material production [18]

- Infleqtion (USA) received $56.8 million in 2023 from UK Research and Innovation (UKRI) for quantum technology work, with additional awards of $1.15 million in September and $11 million in December 2024 [18]

- Sepion Technologies (USA) and Giatec (Canada) each received $17.5 million in funding [18]

Stage and Sector Focus

Investment patterns reveal distinct concentrations across development stages and technology subsectors. Early-stage companies have attracted the bulk of risk capital, with momentum particularly strong at the pre-seed and seed stages where startups are developing early prototypes or validating novel approaches such as computational modeling and new materials platforms [18]. This early-stage momentum carried through into 2024 but has since moderated, pointing to more selective scaling decisions as investors become increasingly discerning. Late-stage deals remain limited, reflecting the sector's early maturity and the inherently long timelines required for commercialization of materials technologies [18].

Table 2: Investment Distribution by Materials Sub-Sector

| Sub-Sector | Cumulative Funding | Key Developments | Growth Potential |
|---|---|---|---|
| Materials Discovery Applications | $1.3 billion | Driven by $1.2B acquisition of Chryso by Saint-Gobain (2021) | High, direct decarbonization impact |
| Computational Materials Science | $168 million (by mid-2025) | Steady growth from $20M in 2020 | Very high, enables acceleration |
| Materials Databases | $31 million (notable 2025 uptick) | Rising recognition of AI-enablement value | High, foundation for AI/ML |
| Robotics for Materials Discovery | Minimal funding | Niche focus, early adoption stage | Medium-long term |

Within the broader materials discovery landscape, specific application areas have attracted varying levels of investor attention. Materials discovery applications have attracted the largest share of capital, with a cumulative $1.3 billion in funding. Most of this comes from the $1.2 billion acquisition of Chryso by Saint-Gobain in 2021, a landmark deal in construction chemicals aligned with advanced materials integration [18]. Meanwhile, computational materials science and modeling has grown steadily, rising from $20 million in 2020 to $168 million by mid-2025, which reflects growing confidence in simulation-based platforms that accelerate R&D and reduce time-to-market for novel materials [18]. Materials databases recorded a notable uptick in 2025 with $31 million in funding, indicating rising investor recognition of data infrastructure and AI enablement as critical components of materials discovery workflows [18].

Regional Distribution and Global Hotspots

The geographic distribution of materials research funding reveals clear global leaders and emerging hubs. North America continues to lead global investment in materials discovery, with the United States commanding the majority share of both funding and deal volume over the past five years [18]. Investment activity in the U.S. peaked between 2022 and 2024, establishing it as the dominant force in the sector. Europe ranks second in both funding and transaction count, with the United Kingdom standing out with consistent year-on-year deal flow, underlining its strategic commitment to advanced materials innovation [18]. Other key European markets such as Germany, the Netherlands, and France exhibit more sporadic activity, suggesting that funding is still concentrated around specific companies or projects rather than broad-based sectoral support [18].

While global participation is increasing, capital remains heavily concentrated in the U.S. and Europe, underscoring their leadership in the emerging materials innovation landscape [18]. This concentration reflects the presence of established research institutions, venture capital ecosystems, and governmental support mechanisms that create fertile environments for materials innovation. However, Asia-Pacific regions, particularly China and Japan, are poised for substantial growth, fueled by rapid industrialization and increasing investments in advanced technologies [20].

Emerging Technologies Reshaping Research Methodologies

Self-Driving Labs and Autonomous Discovery

A transformative advance in materials discovery is the development of self-driving laboratories: robotic platforms that combine machine learning and automation with chemical and materials sciences to discover materials more quickly [7]. These systems represent a paradigm shift from traditional sequential experimentation to autonomous, data-rich approaches. Automation lets machine-learning algorithms use the data from each experiment to predict which experiment to conduct next in pursuit of predefined research goals [7].
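The closed loop described above (run an experiment, update a model, choose the next experiment) can be sketched in a few lines of Python. This is a minimal, self-contained illustration built on several assumptions. `run_experiment` is a hypothetical stand-in for the robotic platform, with a made-up optimum. The surrogate is a simple kernel-weighted average with a heuristic uncertainty term, and the next experiment is picked by an upper-confidence-bound rule. The text does not specify the algorithm used in [7], so this sketch should not be read as that system's method.

```python
import math
import random


def run_experiment(x: float, rng: random.Random) -> float:
    """Hypothetical stand-in for one automated experiment.

    Returns a noisy figure of merit for a process setting x in [0, 1].
    The true optimum (unknown to the loop) is placed at x = 0.7 for illustration.
    """
    return math.exp(-((x - 0.7) ** 2) / 0.02) + rng.gauss(0.0, 0.02)


def surrogate(x, observations, length=0.08):
    """Kernel-weighted (Nadaraya-Watson) mean plus a heuristic uncertainty term."""
    weights = [math.exp(-((x - xi) ** 2) / (2 * length**2)) for xi, _ in observations]
    total = sum(weights)
    if total < 1e-12:
        mean = 0.0  # no nearby data at all
    else:
        mean = sum(w * yi for w, (_, yi) in zip(weights, observations)) / total
    uncertainty = 1.0 / (1.0 + total)  # close to 1 where few experiments are nearby
    return mean, uncertainty


def ucb(x, observations, kappa):
    """Upper confidence bound: predicted value plus an exploration bonus."""
    mean, uncertainty = surrogate(x, observations)
    return mean + kappa * uncertainty


def closed_loop(n_iterations=20, kappa=1.0, seed=0):
    rng = random.Random(seed)
    candidates = [i / 100 for i in range(101)]  # discretized process settings
    # Seed the model with a few spread-out experiments.
    observations = [(x, run_experiment(x, rng)) for x in (0.1, 0.5, 0.9)]
    for _ in range(n_iterations):
        # Use everything measured so far to choose the next experiment.
        x_next = max(candidates, key=lambda x: ucb(x, observations, kappa))
        observations.append((x_next, run_experiment(x_next, rng)))
    best_x, best_y = max(observations, key=lambda obs: obs[1])
    return best_x, best_y, observations


best_x, best_y, history = closed_loop()
print(f"best setting {best_x:.2f}, figure of merit {best_y:.3f}, {len(history)} experiments")
```

The `kappa` parameter balances exploration (sampling where few experiments exist) against exploitation (sampling where results look best). Practical systems typically use a Gaussian-process or ensemble surrogate instead of this heuristic.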

Recent work has demonstrated techniques that allow self-driving laboratories to collect at least 10 times more data than previous techniques at record speed, while cutting costs and environmental impact [7]. The key innovation is the transition from steady-state flow experiments to dynamic flow experiments. In traditional steady-state approaches, different precursors are mixed and react while flowing continuously through a microchannel, and characterization occurs only after the reaction is complete. Each experiment can take up to an hour, and the system sits idle while the reaction runs [7]. In contrast, dynamic flow systems continuously vary the chemical mixtures moving through the system and monitor them in real time. They capture data every half second, creating a continuous "movie" of the reaction rather than separate snapshots [7].

This streaming-data approach allows the self-driving lab's machine-learning algorithm to make smarter, faster decisions, homing in on optimal materials and processes in a fraction of the time. The system also sharply reduces chemical use and waste, supporting more sustainable research practices while accelerating discovery [7]. As Milad Abolhasani, corresponding author of the study, explains: "The future of materials discovery is not just about how fast we can go, it's also about how responsibly we get there. Our approach means fewer chemicals, less waste, and faster solutions for society's toughest challenges" [7].
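To make the contrast between snapshots and a continuous "movie" concrete, the sketch below counts the records produced in one hour of operation. The one-hour experiment and the half-second sampling interval come from the text. The linear ramp of a precursor ratio from 0.2 to 0.8 and the three steady-state compositions are illustrative assumptions. Raw readings are not the same as independent experiments, because consecutive readings along a ramp are strongly correlated. The raw sampling ratio computed here should therefore not be confused with the "at least 10 times more data" reported in [7].

```python
def dynamic_flow_samples(duration_s, interval_s=0.5, ratio_start=0.2, ratio_end=0.8):
    """Readings logged every interval_s while the precursor ratio ramps linearly."""
    n = int(duration_s / interval_s) + 1
    samples = []
    for k in range(n):
        t = k * interval_s
        ratio = ratio_start + (ratio_end - ratio_start) * t / duration_s
        samples.append((t, round(ratio, 6)))  # (time in s, precursor ratio)
    return samples


def steady_state_samples(duration_s, experiment_s=3600.0, ratios=(0.2, 0.5, 0.8)):
    """One reading per experiment, taken only after the reaction has completed."""
    n = min(int(duration_s // experiment_s), len(ratios))
    return [((k + 1) * experiment_s, ratios[k]) for k in range(n)]


one_hour = 3600.0
dynamic = dynamic_flow_samples(one_hour)
steady = steady_state_samples(one_hour)
print(f"dynamic flow: {len(dynamic)} readings, steady state: {len(steady)} reading(s)")
```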

AI-Guided Materials Design

Beyond automated experimentation, artificial intelligence is revolutionizing the theoretical design of novel materials. Researchers have developed techniques that enable popular generative materials models to create promising quantum materials by following specific design rules [21]. This addresses a critical limitation in conventional AI models, which tend to generate materials optimized for stability rather than exotic quantum properties needed for advanced applications.

The SCIGEN (Structural Constraint Integration in GENerative model) tool represents a breakthrough in this domain [21]. This computer code ensures diffusion models adhere to user-defined constraints at each iterative generation step, steering models to create materials with unique structures that give rise to quantum properties. As MIT's Mingda Li explains: "The models from these large companies generate materials optimized for stability. Our perspective is that's not usually how materials science advances. We don't need 10 million new materials to change the world. We just need one really good material" [21].
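The idea of enforcing a constraint "at each iterative generation step" can be illustrated with a toy loop. In the sketch below, `toy_denoise_step` is a hypothetical placeholder for one reverse-diffusion update of a trained model; it merely shrinks positions and adds decreasing noise. After every update, the atoms designated as constrained are overwritten with a fixed Kagome triangle. This mask-and-replace pattern resembles inpainting in diffusion models. It is an assumption made for illustration and does not describe SCIGEN's actual code.

```python
import math
import random

# Target motif: one Kagome triangle (three sites of a triangular-lattice cell, a = 1).
KAGOME_SITES = [(0.0, 0.0), (0.5, 0.0), (0.25, math.sqrt(3) / 4)]


def toy_denoise_step(positions, step, n_steps, rng):
    """Hypothetical placeholder for one reverse-diffusion update of a trained model.

    It only contracts positions slightly and adds noise that shrinks over time.
    """
    noise = 0.1 * (1 - step / n_steps)
    return [(0.9 * x + rng.gauss(0.0, noise), 0.9 * y + rng.gauss(0.0, noise))
            for x, y in positions]


def constrained_generation(n_atoms=6, n_steps=50, seed=0):
    """Toy generation loop that re-imposes the structural constraint at every step."""
    rng = random.Random(seed)
    # Start from pure noise, as a diffusion model would.
    positions = [(rng.uniform(-1.0, 1.0), rng.uniform(-1.0, 1.0)) for _ in range(n_atoms)]
    constrained = range(len(KAGOME_SITES))  # atoms 0-2 must form the motif
    for step in range(n_steps):
        positions = toy_denoise_step(positions, step, n_steps, rng)
        # Constraint integration: overwrite the constrained atoms after each update.
        for i in constrained:
            positions[i] = KAGOME_SITES[i]
    return positions


structure = constrained_generation()
for i, (x, y) in enumerate(structure):
    print(f"atom {i}: ({x:+.3f}, {y:+.3f})")
```

In this toy, the free atoms ignore the motif. In a real model, however, the denoiser sees the whole structure. The usual motivation for reapplying a constraint at every step, rather than once at the end, is that it lets the unconstrained part of the structure adapt to the imposed geometry.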

This approach has proven particularly valuable for designing materials with specific geometric patterns, such as Kagome and Lieb lattices, that can support materials useful for quantum computing [21]. When applied to generate materials with Archimedean lattices (collections of 2D lattice tilings of different polygons known to give rise to quantum phenomena), the SCIGEN-equipped model generated over 10 million material candidates, of which one million survived initial stability screening [21]. Subsequent simulations found magnetism in 41 percent of the structures simulated, and two previously undiscovered compounds, TiPdBi and TiPbSb, were then successfully synthesized [21]. This demonstrates the powerful synergy between AI-guided design and experimental validation.
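Lattice targets such as these are conveniently described as a Bravais lattice plus a basis of sites. The sketch below is a generic crystallographic construction, not SCIGEN's input format. It builds small patches of the Kagome lattice (one of the Archimedean tilings, made of corner-sharing triangles) and of the Lieb lattice, then counts nearest neighbors to confirm their characteristic geometry. Every Kagome site has four neighbors, while the Lieb lattice mixes four-fold corner sites with two-fold edge sites. Lattice constants are set to 1, and the function names are illustrative.

```python
import math


def build_lattice(a1, a2, basis, n):
    """Cartesian sites of an n x n patch: every basis site in every unit cell."""
    sites = []
    for i in range(n):
        for j in range(n):
            for fx, fy in basis:
                u, v = i + fx, j + fy  # fractional coordinates along a1 and a2
                sites.append((u * a1[0] + v * a2[0], u * a1[1] + v * a2[1]))
    return sites


def coordination(sites, index, bond, tol=1e-6):
    """Number of sites exactly one bond length away from sites[index]."""
    x0, y0 = sites[index]
    return sum(
        1
        for k, (x, y) in enumerate(sites)
        if k != index and abs(math.hypot(x - x0, y - y0) - bond) < tol
    )


# Kagome: triangular Bravais lattice with three sites per cell (corner-sharing triangles).
KAGOME = ((1.0, 0.0), (0.5, math.sqrt(3) / 2), [(0.0, 0.0), (0.5, 0.0), (0.0, 0.5)])
# Lieb: square Bravais lattice with one corner site and two edge-centre sites per cell.
LIEB = ((1.0, 0.0), (0.0, 1.0), [(0.0, 0.0), (0.5, 0.0), (0.0, 0.5)])

kagome_sites = build_lattice(*KAGOME, n=5)
lieb_sites = build_lattice(*LIEB, n=5)
centre = (2 * 5 + 2) * 3  # index of basis site 0 in the interior cell (2, 2)
print([coordination(kagome_sites, centre + b, 0.5) for b in range(3)])  # [4, 4, 4]
print([coordination(lieb_sites, centre + b, 0.5) for b in range(3)])    # [4, 2, 2]
```

Sites in an interior cell are checked because sites on the edge of a finite patch are missing some of their neighbors.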

Research Reagent Solutions and Experimental Infrastructure

Table 3: Essential Research Reagents and Platforms for Advanced Materials Discovery

| Reagent/Platform | Function | Application Examples |
|---|---|---|
| Continuous Flow Reactor Systems | Enables dynamic flow experiments with real-time monitoring | High-throughput synthesis of colloidal quantum dots [7] |
| Microfluidic Characterization Chips | Integrated sensors for in-situ material property measurement | Real-time optical, structural characterization during synthesis [7] |
| Archimedean Lattice Precursors | Chemical building blocks for targeted quantum material structures | Synthesis of Kagome lattice materials for quantum spin liquids [21] |
| Multi-Ferroic Composite Materials | Polymer matrices with magnetic/ferroelectric nanoparticles | Biomedical applications (drug delivery, retinal transplantation) [22] |
| Biocompatible Magnetic Elastomers | Magnetic nanoparticle-polymer composites for biomedical use | Theranostics, stem cell differentiation, universal sensors [22] |
| Advanced Photocatalytic Materials | Semiconductors for UV/visible light-driven reactions | Photocatalytic hydrogen production, CO2 reduction [22] |

The experimental infrastructure supporting modern materials discovery has evolved significantly beyond traditional laboratory equipment. Continuous flow reactor systems form the backbone of self-driving laboratories, enabling dynamic flow experiments that generate at least an order of magnitude more data than earlier steady-state flow approaches [7]. These systems are typically integrated with microfluidic characterization chips containing various sensors for real-time, in situ measurement of material properties during synthesis [7]. For quantum materials research, specialized Archimedean lattice precursors serve as chemical building blocks for creating specific geometric patterns known to host exotic quantum properties [21].

In biomedical applications, multiferroic composite materials combining polymer matrices with magnetic and ferroelectric nanoparticles enable advanced applications in drug delivery, diagnostics, and even retinal transplantation using magnetic seals [22]. Similarly, biocompatible magnetic elastomers are being developed for theranostics (combined therapy and diagnostics), stem cell differentiation, and as the basis for universal sensors and energy converters [22]. The field also benefits from advanced photocatalytic materials, semiconductors engineered for specific UV- or visible-light-driven reactions, which show promise for photocatalytic hydrogen production and carbon dioxide reduction [22].

Grant Funding Priorities and Strategic Directions

Major Funding Initiatives and Thematic Priorities

Grant funding in 2025 reflects strategic priorities aligned with global challenges, particularly climate change, energy security, and technological sovereignty. Current calls for proposals demonstrate a clear focus on specific high-impact areas:

Climate and Energy Resilience Initiatives include calls to develop deep tech solutions that address key climate risks and improve Europe's climate resilience, with awards ranging from EUR 0.5 million to EUR 10 million [23]. These focus on combating extreme heat in urban environments, climate-smart agriculture, water scarcity, and flood and coastal protection. Parallel initiatives support entrepreneurship programs empowering start-ups developing breakthrough technologies for a net-zero and climate-resilient future, particularly those working at the intersection of Artificial Intelligence (AI), Deep Technologies, and Climate Innovation [23].

Advanced Energy Materials Development is supported through calls targeting start-ups and SMEs developing advanced materials for renewable energy and energy storage systems, with awards ranging from EUR 0.5 million to EUR 10 million [23]. These initiatives specifically address the urgent need to boost all stages of advanced materials development, from design and synthesis to up-scaling and production, in order to enhance strategic autonomy in the energy sector. Applicants must focus on developing materials and associated processes that minimize the use of Critical Raw Materials (CRMs) and reduce their environmental footprint, measured using a comprehensive Life Cycle Analysis (LCA) [23].

Strategic Technology Sovereignty is addressed through programs like the EIC Accelerator, which targets start-ups and SMEs possessing the ambition to scale up operations based on scientific discovery or technological breakthroughs ('deep tech') [23]. Funding is provided through a combination of grant and investment funding, along with Business Acceleration Services, focusing on later-stage technology development and scale-up where innovation has reached at least Technology Readiness Level 5 [23]. For more mature companies, the EIC STEP Scale Up program addresses market gaps in financing deep tech scale-up companies with equity-only investments ranging from EUR 10 million to EUR 30 million per company [23].

Evolving Evaluation Criteria and Application Strategies

Success in securing grant funding increasingly depends on alignment with evolving evaluation criteria that reflect broader shifts in research priorities. According to insights from leading investors, proposals must demonstrate:

- Technology Readiness for Industrialization: As emphasized by Frank Lehmann, VP Corporate Venturing & Open Innovation at AMCOR, "Start-ups must ensure their technologies are ready for industrialisation. That's the largest hurdle for securing new capital" [19].