AI and Robotics in Solid-State Materials Synthesis: Accelerating Discovery for Biomedical Applications

Abstract

This article explores the transformative integration of artificial intelligence and robotic automation in solid-state materials science. Tailored for researchers, scientists, and drug development professionals, it provides a comprehensive analysis of how these technologies are reshaping the discovery pipeline—from AI-driven predictive modeling and inverse design to the realities of autonomous synthesis labs. We cover foundational concepts, practical methodologies, critical challenges in optimization and reproducibility, and a comparative validation of AI predictions against experimental results. The synthesis offers a roadmap for leveraging these advanced tools to accelerate the development of novel materials for drug delivery, medical implants, and diagnostic technologies.

The New Frontier: How AI and Robotics are Redefining Materials Discovery

The field of solid-state materials synthesis is undergoing a fundamental transformation, moving from traditional trial-and-error approaches to a new paradigm of AI-guided design. This shift represents the emergence of a fourth paradigm of scientific discovery, where research is driven by big data and artificial intelligence rather than by empirical experiments or theoretical models alone [1]. For decades, materials scientists faced persistent challenges in discovering new materials, including recruitment delays affecting 80% of studies, escalating costs, and success rates below 12% [2]. The traditional process of materials discovery has been slow and labor-intensive, relying heavily on manual methods and serendipity [3].

The integration of AI and robotics is now addressing these inefficiencies across the entire materials development lifecycle. As Kristin Persson, a professor of materials science at the University of California, Berkeley, notes: "In the fourth paradigm, you now have enough data that you can train machine-learning algorithms. That brings a whole new level of speed in terms of innovation" [1]. This transformation is particularly evident in the development of hydrogen-based electrochemical systems, such as fuel cells and electrolyzers, which are clean energy solutions known for their high energy density and zero-emission operation [4]. The relationship is also reciprocal: AI accelerates materials discovery, and new materials in turn advance the computational hardware that AI runs on [1].

Quantitative Impact: Measuring AI's Transformative Effect

The implementation of AI-guided design principles has yielded measurable improvements across key metrics in materials research and development. The table below summarizes substantial AI benefits documented across recent studies and implementations:

Table 1: Quantitative Impact of AI on Materials Research and Development

| Performance Metric | Traditional Approach | AI-Guided Approach | Improvement | Source |
|---|---|---|---|---|
| Patient recruitment rates | Manual screening processes | AI-powered recruitment tools | 65% improvement in enrollment rates | [2] |
| Trial outcome prediction | Statistical models | Predictive analytics models | 85% accuracy in forecasting outcomes | [2] |
| Development timeline | Linear, sequential processes | AI-accelerated workflows | 30-50% acceleration | [2] |
| Cost reduction | High operational expenses | Optimized resource allocation | Up to 40% reduction | [2] |
| Adverse event detection | Periodic manual review | Digital biomarker monitoring | 90% sensitivity | [2] |
| Patient screening time | Manual review of records | Automated EHR analysis | 42.6% reduction while maintaining 87.3% accuracy | [5] |
| Document processing | Manual documentation | AI-powered automation | 50% reduction in process costs | [5] |
| Drug discovery timeline | 5+ years for target identification | AI-accelerated platforms | Reduction to 12-18 months | [6] |

The financial markets have recognized this transformative potential, with the global AI-based clinical trials market growing from $7.73 billion in 2024 to $9.17 billion in 2025, with projections showing it will reach $21.79 billion by 2030 [7]. Similarly, the AI in pharmaceutical market is forecasted to reach around $16.49 billion by 2034, accelerating at a CAGR of 27% from 2025 to 2034 [6]. Beyond clinical applications, the fundamental shift is perhaps most evident in materials science, where AI has catalyzed the discovery of new materials, enhanced design simulations, influenced process controls, and facilitated operational analysis and predictions of material properties and behaviors [4].

Core AI Technologies Powering the Transformation

Machine Learning and Deep Learning Foundations

The AI revolution in materials science is built on machine learning (ML) and deep learning (DL) algorithms that enable computers to learn from data without being explicitly programmed. These technologies form the backbone of modern materials discovery systems, allowing researchers to identify complex patterns and relationships within multidimensional datasets that would be impractical for humans to discern manually. As demonstrated in studies of hydrogen-based electrochemical systems, AI algorithms can process intricate relationships between material composition, synthesis parameters, and resulting properties, and can deliver more accurate and reliable solutions to critical challenges than conventional approaches [4].

Deep learning techniques, particularly graph neural networks, have proven exceptionally valuable for modeling crystalline and molecular structures, as they naturally represent the graph-based topology of atomic arrangements [8]. These models can accurately forecast the physicochemical properties as well as biological activities of new chemical entities, dramatically accelerating the initial screening phase of materials development [3]. Reinforcement learning techniques further enhance this capability by enabling the AI systems to optimize their search strategies based on continuous feedback from experimental results.
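The graph-based representation described above can be made concrete with a small sketch. The example below treats atoms as nodes and neighbor pairs as edges, then runs one round of neighbor averaging followed by mean pooling. This is an illustration only: real graph neural networks use learned weight matrices and nonlinearities, and the `CrystalGraph` class, the feature values, and the 50/50 mixing rule are all assumptions made for this example.

```python
from dataclasses import dataclass, field


@dataclass
class CrystalGraph:
    """Toy structure graph: atoms are nodes, neighbor pairs are undirected edges."""
    features: dict[int, list[float]]  # node id -> feature vector (hypothetical values)
    edges: list[tuple[int, int]] = field(default_factory=list)

    def neighbours(self, node: int) -> list[int]:
        out = []
        for a, b in self.edges:
            if a == node:
                out.append(b)
            elif b == node:
                out.append(a)
        return out


def message_pass(graph: CrystalGraph) -> dict[int, list[float]]:
    """One round: mix each node's feature 50/50 with the mean of its neighbors."""
    updated = {}
    for node, feat in graph.features.items():
        nbrs = graph.neighbours(node)
        if not nbrs:
            updated[node] = list(feat)
            continue
        mean_nbr = [
            sum(graph.features[n][i] for n in nbrs) / len(nbrs)
            for i in range(len(feat))
        ]
        updated[node] = [0.5 * f + 0.5 * m for f, m in zip(feat, mean_nbr)]
    return updated


def readout(features: dict[int, list[float]]) -> list[float]:
    """Order-independent pooling: average the node features into one graph vector."""
    n = len(features)
    dim = len(next(iter(features.values())))
    return [sum(f[i] for f in features.values()) / n for i in range(dim)]


# Hypothetical three-atom fragment: one metal atom bonded to two oxygen atoms.
# Feature layout (assumed): [scaled electronegativity, is_oxygen flag].
graph = CrystalGraph(
    features={0: [1.0, 0.0], 1: [3.0, 1.0], 2: [3.0, 1.0]},
    edges=[(0, 1), (0, 2)],
)
updated = message_pass(graph)
graph_vector = readout(updated)
print(updated[0], graph_vector)  # [2.0, 0.5] [2.0, 0.5]
```

The pooled vector does not depend on how the atoms are numbered, which is the property that makes graph representations a natural fit for atomic structures.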

Natural Language Processing for Knowledge Extraction

Natural language processing (NLP) technologies enable the extraction of valuable insights from the vast scientific literature in materials science. Approximately 80% of critical materials information exists as unstructured text in research papers, patents, and clinical notes rather than organized data fields [5]. Advanced NLP algorithms can process this textual information to identify previously overlooked relationships between material properties, synthesis conditions, and performance characteristics.

Specialized AI models like TrialGPT demonstrate how NLP capabilities can be tailored for scientific applications, processing complex materials information to provide individual criterion assessments and consolidated predictions for experimental suitability [5]. These systems extend beyond basic information retrieval to identify subtle correlations and generate novel hypotheses that can guide future research directions, effectively amplifying the collective knowledge of the entire materials science community.

Generative AI for Molecular and Materials Design

Generative AI represents one of the most transformative technologies in the modern materials development pipeline. Models like generative adversarial networks (GANs) and variational autoencoders can create novel molecular structures that meet specific biological and physicochemical properties, significantly accelerating the materials design process [3]. These systems learn the underlying probability distributions of known successful materials and can then generate new candidates with optimized characteristics.

The impact of generative AI is particularly evident in molecular design, where models like AlphaFold and newer systems can predict protein structures with remarkable accuracy from amino acid sequences [6]. This breakthrough has profound implications for drug design and biomaterials development, as predicting how drugs interact with their targets improves the design of new therapeutic materials [3]. Companies like Insilico Medicine have leveraged these capabilities to develop AI-designed drug candidates, such as a novel treatment for idiopathic pulmonary fibrosis, in just 18 months—a fraction of the time required through traditional methods [3].

Digital Twins and Simulation Technologies

Digital twins create computer simulations that replicate real-world materials behavior using mathematical models and experimental data. Researchers can test hypotheses and optimize synthesis protocols using virtual materials before conducting physical experiments, significantly reducing the number of iterative trials required [5]. These in silico models mimic human organs and disease states, making realistic predictions of material effectiveness and side effects before clinical trials [3].

The Materials Project, a multi-institution, international effort led by researchers at Lawrence Berkeley National Laboratory, exemplifies the power of this approach. By harnessing the power of supercomputing and state-of-the-art simulation methods, the project is calculating the properties of all known inorganic materials and beyond (currently 160,000 materials) and making this data freely available [1]. This massive dataset provides the foundation for training increasingly accurate AI models that can predict material properties without resource-intensive experimental characterization.

Experimental Protocols for AI-Guided Materials Discovery

The Automated Discovery Loop Protocol

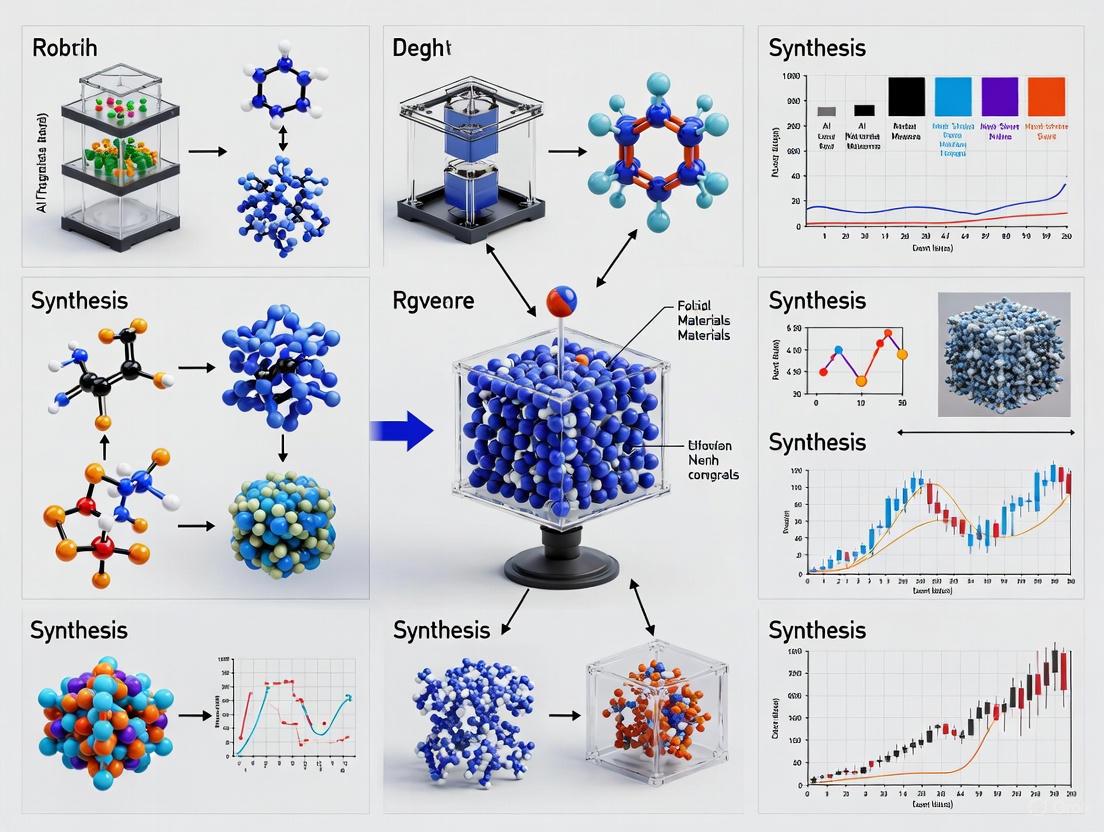

The most significant protocol emerging in AI-guided materials design is the automated discovery loop, which integrates AI-generated hypotheses with robotic experimentation. This protocol establishes a continuous feedback cycle between computational prediction and experimental validation, dramatically accelerating the exploration of materials space. The following workflow visualization captures the key stages of this transformative approach:

Figure 1: AI-Guided Materials Discovery Workflow

Step 1: AI Hypothesis Generation - The process begins with AI systems, particularly large language models and reasoning engines like OpenAI's o1, proposing novel materials compositions or synthesis recipes. These systems leverage existing experimental data, computational databases like the Materials Project, and underlying physical principles to generate promising candidates [8] [1]. As Ekin Dogus Cubuk of Periodic Labs explains: "The LLM can propose, for example, synthesis recipes or it can propose simulations to run and because the LLMs are pretty good at tool use, it can actually do it itself" [8].

Step 2: Robotic Synthesis - AI-generated hypotheses are executed by automated laboratory systems that handle powder processing, mixing, and synthesis operations. These robotic platforms can operate continuously with minimal human intervention, significantly increasing experimental throughput. As Andrew Cooper, a chemistry professor at the University of Liverpool, notes: "We're trying to bring those two things together and build AI directly into robots so that they can discover new materials by thinking about the data and making decisions" [1].

Step 3: Automated Characterization - Newly synthesized materials undergo immediate characterization using integrated analytical instruments, which may include X-ray diffraction, electron microscopy, spectroscopy, and functional property measurements. This automation generates consistent, high-quality data for the subsequent analysis phase.

Step 4: Data Integration - Results from materials characterization are structured and added to the central database, creating an expanding knowledge base that captures both successful and unsuccessful synthesis attempts. This comprehensive data collection is critical for improving AI model accuracy.

Step 5: Model Refinement - The AI algorithms update their predictive models based on the new experimental results, learning from both successes and failures. This continuous learning process allows the system to progressively refine its understanding of composition-structure-property relationships.

The entire cycle operates as a closed-loop system, with each iteration enhancing the AI's predictive capabilities. This protocol represents a fundamental departure from traditional linear research approaches, embracing instead an adaptive, data-driven methodology that continuously improves through experimental feedback.
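The five steps above can be sketched as a simple loop. In this minimal example the "robot" is a simulated furnace with a made-up yield curve, and the "AI" is a naive rule that explores near the best temperature seen so far. The names `Experiment`, `propose`, and `synthesize_and_characterize`, the temperature range, and the yield function are all hypothetical stand-ins, not part of any platform cited here.

```python
import random
from dataclasses import dataclass


@dataclass
class Experiment:
    temperature_c: float
    yield_fraction: float
    succeeded: bool


def propose(history: list[Experiment], rng: random.Random) -> float:
    """Step 1 stand-in: random at first, then explore near the best result so far."""
    if not history:
        return rng.uniform(600.0, 1200.0)
    best = max(history, key=lambda e: e.yield_fraction)
    candidate = best.temperature_c + rng.gauss(0.0, 50.0)
    return min(1200.0, max(600.0, candidate))  # stay inside the assumed furnace range


def synthesize_and_characterize(temperature_c: float) -> Experiment:
    """Steps 2-3 stand-in: simulated synthesis with an assumed optimum at 900 C."""
    y = max(0.0, 1.0 - ((temperature_c - 900.0) / 300.0) ** 2)
    return Experiment(temperature_c, y, succeeded=y > 0.5)


def run_loop(iterations: int, seed: int = 0) -> list[Experiment]:
    rng = random.Random(seed)
    history: list[Experiment] = []
    for _ in range(iterations):
        temperature = propose(history, rng)
        result = synthesize_and_characterize(temperature)
        history.append(result)  # Step 4: failed runs are stored as well
        # Step 5 is implicit here: propose() re-reads the full history each time.
    return history


history = run_loop(30)
best = max(history, key=lambda e: e.yield_fraction)
print(f"best temperature {best.temperature_c:.0f} C, yield {best.yield_fraction:.2f}")
```

In a real system the proposal step would be a trained model and the synthesis step would drive hardware, but the control flow (propose, execute, record, update) would keep the same shape.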

Predictive Performance Validation Protocol

Validating AI model predictions against known materials systems represents a critical protocol for establishing confidence in AI-guided approaches before exploring novel chemical spaces. This validation follows a structured methodology:

Phase 1: Training Dataset Curation - Researchers compile a comprehensive dataset of known materials, their synthesis parameters, and measured properties. For example, the Materials Project provides calculated properties for over 160,000 inorganic materials, serving as an extensive training resource [1].

Phase 2: Model Training - AI models are trained on a subset of the available data, using techniques such as graph neural networks for structure-property prediction and reinforcement learning for synthesis optimization.

Phase 3: Hold-Out Validation - The trained models predict properties for known materials not included in the training set, with predictions compared against experimental measurements to quantify accuracy.

Phase 4: Prospective Experimental Testing - Models are used to predict new materials or optimized synthesis conditions, which are then verified through targeted experiments to confirm predictive capabilities.

This protocol has demonstrated impressive results, with predictive analytics models achieving 85% accuracy in forecasting trial outcomes and digital biomarkers enabling 90% sensitivity for adverse event detection in related clinical applications [2].
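Phases 1 to 3 can be illustrated with a deliberately small example: fit a model on part of a dataset, then measure its error on records it never saw. The sketch below uses a one-variable least-squares line and synthetic data generated from an assumed relationship (property = 2 x descriptor + 1 plus noise); a real study would use measured properties and a richer model. Phase 4 (prospective experiments) cannot be simulated in code and is not covered.

```python
import random


def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def mean_abs_error(model, xs, ys):
    slope, intercept = model
    return sum(abs(slope * x + intercept - y) for x, y in zip(xs, ys)) / len(xs)


rng = random.Random(42)

# Phase 1 (assumed data): 50 synthetic (descriptor, property) pairs.
data = [(x, 2.0 * x + 1.0 + rng.gauss(0.0, 0.1)) for x in (i / 10 for i in range(50))]

# Phase 2: train on 40 records chosen at random.
rng.shuffle(data)
train, held_out = data[:40], data[40:]
model = fit_line(*zip(*train))

# Phase 3: score only on the 10 records the model never saw.
mae = mean_abs_error(model, *zip(*held_out))
print(f"slope={model[0]:.2f} intercept={model[1]:.2f} held-out MAE={mae:.3f}")
```

A random split like this one only tests interpolation. Splitting by material family or chemical system would give a harsher and often more honest estimate of performance on new chemistries.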

The Scientist's Toolkit: Essential Research Reagents and Solutions

The implementation of AI-guided materials design requires specialized computational tools, experimental infrastructure, and data resources. The following table details key components of the modern materials discovery toolkit:

Table 2: Essential Research Reagents and Solutions for AI-Guided Materials Discovery

| Tool Category | Specific Tools/Platforms | Function | Application Example |
|---|---|---|---|
| AI/ML Platforms | TensorFlow, PyTorch, scikit-learn | Development and training of machine learning models for property prediction | Building neural networks for structure-property mapping [4] |
| Materials Databases | Materials Project, Cambridge Structural Database | Providing curated datasets of known materials and properties | Training AI models for inorganic materials discovery [1] |
| Automation Hardware | Liquid handling robots, automated synthesis systems | High-throughput synthesis and characterization of material samples | Accelerating experimental iteration in closed-loop discovery [1] |
| Simulation Software | VASP, Gaussian, LAMMPS | First-principles calculation of material properties | Generating training data for AI models [1] |
| Laboratory IoT Sensors | In-line spectroscopy, automated particle sizing | Real-time monitoring of synthesis processes and material characteristics | Continuous data collection for AI model refinement [6] |
| Reasoning Models | OpenAI o1, specialized scientific LLMs | Generating and evaluating scientific hypotheses | Proposing novel synthesis pathways beyond training data [8] |

This toolkit enables the implementation of what pioneers in the field have termed the "Lab in a Loop" approach, where "data from laboratory experiments and clinical settings are used to train AI models and algorithms... These models then make predictions about drug targets, therapeutic molecules, and more" [7]. The predicted outcomes are tested back in the lab, generating new data that further refines and retrains the AI models in a continuous cycle of improvement that moves beyond traditional trial-and-error approaches.

Implementation Challenges and Ethical Considerations

Technical and Data Infrastructure Barriers

The paradigm shift to AI-guided design faces significant technical barriers that must be addressed for successful deployment. Data interoperability challenges frequently arise when legacy laboratory systems lack the standardized data formats and modern APIs required for AI connectivity [2] [5]. This heterogeneity makes it difficult to assemble the unified, structured datasets necessary for effective AI training.

The data scarcity problem presents another critical challenge for materials science applications. As noted in the research, "In materials science, we have a data shortage. Out of all the near endless possible materials, only a very tiny fraction have been made, and of them, few have been well characterized" [1]. This limitation is particularly significant given that AI systems are notoriously data-hungry—the more data they receive, the better their performance. To address this constraint, researchers employ techniques such as transfer learning (where models pre-trained on large chemical databases are fine-tuned with smaller materials-specific datasets) and synthetic data generation through computational simulation.

Performance degradation represents an additional concern, as AI models frequently experience accuracy decline when applied to populations or material classes different from their training data [5]. This problem of out-of-distribution generalization is particularly relevant for materials science, where discovery often involves exploring completely novel chemical spaces. As one researcher notes, "A big problem with using artificial intelligence to discover new materials? It struggles to predict beyond its training data" [8].

Algorithmic Bias and Fairness Considerations

Algorithmic bias presents significant ethical challenges in AI-guided materials design, as AI systems can perpetuate and amplify existing biases present in training data [2]. Historical research has disproportionately focused on certain classes of materials while neglecting others, creating imbalanced datasets that can lead to biased predictive models with reduced performance for underrepresented material categories.

Addressing these fairness concerns requires comprehensive data audit processes that examine training datasets for demographic representation and coverage of diverse material classes [5]. Technical approaches such as fairness testing methods evaluate AI performance across different population subgroups to identify performance gaps before deployment. Additionally, Explainable AI (XAI) techniques, including SHAP analysis and attention mechanisms, provide interpretable insights into model decision-making processes, helping researchers identify and mitigate potential sources of bias [9].
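One simple form of the subgroup testing described above is to break prediction error down by material class and flag the classes that lag. The sketch below is an assumption-laden illustration: the class labels, the predicted and measured values, and the "more than twice the best class" threshold are invented for the example and are not drawn from the cited sources.

```python
from collections import defaultdict


def error_by_class(records):
    """records: iterable of (material_class, predicted, measured) tuples."""
    totals = defaultdict(lambda: [0.0, 0])  # class -> [sum of abs errors, count]
    for cls, predicted, measured in records:
        totals[cls][0] += abs(predicted - measured)
        totals[cls][1] += 1
    return {cls: err_sum / count for cls, (err_sum, count) in totals.items()}


def flag_gaps(mae_by_class, tolerance=2.0):
    """Return classes whose MAE exceeds `tolerance` times the best class's MAE."""
    best = min(mae_by_class.values())
    return sorted(c for c, m in mae_by_class.items() if m > tolerance * best)


# Hypothetical audit data: oxides are well represented, sulfides are not.
records = [
    ("oxide", 1.0, 1.5),
    ("oxide", 2.0, 2.5),
    ("oxide", 3.0, 2.5),
    ("sulfide", 1.0, 3.0),
    ("sulfide", 2.0, 4.0),
]
mae = error_by_class(records)
flagged = flag_gaps(mae)
print(mae)      # {'oxide': 0.5, 'sulfide': 2.0}
print(flagged)  # ['sulfide']
```

An audit like this only shows where the model underperforms. Whether the cause is sparse training data or genuinely harder chemistry still has to be investigated separately.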

Regulatory and Validation Frameworks

The rapid advancement of AI-guided materials design has outpaced the development of corresponding regulatory frameworks, creating uncertainty around validation requirements and compliance standards [2]. In early 2025, the FDA released comprehensive draft guidance titled "Considerations for the Use of Artificial Intelligence to Support Regulatory Decision-Making for Drug and Biological Products," establishing initial pathways for AI validation while maintaining safety standards [5].

A risk-based assessment framework categorizes AI models into three risk levels based on their potential impact on patient safety and trial outcomes [5]. For materials science applications, similar frameworks are emerging that consider the potential consequences of AI errors in various contexts, from early-stage discovery to safety-critical applications.

Validation requirements for AI systems in scientific applications extend beyond traditional software validation protocols [5]. Organizations must document training dataset characteristics including size, diversity, representativeness, and bias assessment results. Model architecture documentation includes algorithm selection rationale, parameter optimization procedures, and performance benchmarking against established baselines.

Future Directions and Emerging Trends

The future of AI-guided materials design points toward increasingly autonomous and integrated discovery systems. Emerging trends suggest several transformative developments on the horizon:

Autonomous Discovery Laboratories represent the natural evolution of current AI-guided approaches, with systems capable of end-to-end materials discovery with minimal human intervention. As one analysis predicts: "Some experts foresee a future where clinical trials become fully autonomous. In this vision, AI systems will handle everything—from designing the trial and recruiting participants to monitoring progress, analyzing data, and generating reports—with minimal human involvement" [7]. While such fully AI-driven materials discovery laboratories may still be a decade away, the foundational technologies are actively being developed and integrated.

Quantum Computing Integration promises to address currently intractable materials simulation challenges. As Michelle Simmons, founder and CEO of Silicon Quantum Computing, notes: "Combining AI and quantum computing will open up mind-boggling possibilities" [1]. Five material platforms are mainly being pursued to develop quantum computers: superconductors, semiconductors, trapped ions, photons and neutral atoms [1]. The intersection of these fields creates a virtuous cycle where AI accelerates the development of quantum computing hardware, which in turn enables more powerful AI applications for materials discovery.

Neuromorphic Computing approaches, inspired by the exceptional energy efficiency of biological neural systems, offer potential solutions to the escalating computational demands of AI-guided materials design. "Improvements in energy efficiency are plateauing for silicon chips, but they are still 10,000 [times] less efficient than the human brain," noted Huaqiang Wu, deputy director of the Institute of Microelectronics at Tsinghua University [1]. Research in memristors and other neuromorphic devices aims to replicate the brain's efficiency in artificial neural networks, potentially enabling more sophisticated AI systems with reduced environmental impact.

The continued maturation of reasoning models represents another critical frontier, addressing the current limitation of AI systems in extrapolating beyond their training data. As researchers have observed: "What O1 showed is if you spend test time compute, you can get better results. That was very exciting to me because there was one way of investing resources beyond the training set" [8]. This development, coupled with improved capabilities in mathematical and physical reasoning, suggests a path toward AI systems that can genuinely reason about materials design rather than simply interpolating from existing examples.

The paradigm shift from trial-and-error to AI-guided design represents more than a simple technological upgrade—it constitutes a fundamental transformation of the scientific method itself. As AI and robotics continue to mature and integrate more deeply into materials research, they promise to unlock unprecedented opportunities for innovation across energy storage, electronics, healthcare, and sustainability applications. The organizations and researchers who successfully navigate this transition will find themselves at the forefront of a new era in materials science, defined by accelerated discovery, reduced costs, and breakthrough innovations that address some of humanity's most pressing challenges.

The field of solid-state materials synthesis is undergoing a profound transformation, driven by the integration of artificial intelligence (AI) and robotics. This transition moves materials discovery from a traditional, often artisanal process reliant on trial-and-error to an industrial-scale, data-driven enterprise [10]. AI technologies are revolutionizing the entire discovery pipeline, enabling the rapid design and synthesis of novel materials with tailored properties for applications in energy, electronics, and healthcare [11]. Core to this transformation are three interconnected AI domains: machine learning (ML) for optimizing synthesis parameters and predicting properties, deep learning for processing complex, high-dimensional data such as spectral and microstructural images, and generative models for the inverse design of new, stable solid-state materials [12] [13]. This whitepaper provides an in-depth technical guide to these core AI technologies, framing them within the context of autonomous robotics and their application to accelerating solid-state materials research.

Core AI Technologies: Definitions and Applications

Foundational Concepts

- Machine Learning (ML): A subset of AI that provides systems the ability to automatically learn and improve from experience without being explicitly programmed. In materials science, ML algorithms excel at finding patterns in high-dimensional data to predict material properties and optimize synthesis parameters [11] [12].

- Deep Learning (DL): A specialized subset of machine learning based on artificial neural networks with multiple layers (deep architectures). These models are particularly powerful for processing unstructured data such as microscopy images, spectral data, and scientific text [13].

- Generative Models: A class of AI models that learn the underlying probability distribution of training data and can generate novel data instances. Unlike discriminative models used for prediction, generative models enable inverse design—creating new material structures based on desired properties [12].

Comparative Analysis of AI Technologies

Table 1: Core AI Technologies in Solid-State Materials Research

| Technology | Primary Function | Key Architectures | Applications in Materials Synthesis |
|---|---|---|---|
| Machine Learning (ML) | Pattern recognition, prediction, and optimization | Bayesian Optimization, Random Forests, Support Vector Machines [14] | Synthesis parameter optimization, property prediction from composition [15] [16] |
| Deep Learning (DL) | Processing complex, high-dimensional data | Convolutional Neural Networks (CNNs), Recurrent Neural Networks (RNNs), Transformers [13] | Microstructural image analysis, spectral interpretation, literature mining [14] |
| Generative Models | Inverse design of novel materials | Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), Diffusion Models, Normalizing Flows [12] | De novo crystal structure generation, proposing novel synthesis pathways [12] [13] |

The AI-Robotics Interface in Materials Synthesis

The integration of AI with robotic laboratories creates a closed-loop discovery system. AI models propose promising material candidates and synthesis conditions, which robotic systems then execute through high-throughput synthesis and characterization. The resulting experimental data is fed back to improve the AI models, creating a continuous cycle of optimization [11] [14]. This synergy is encapsulated in the following experimental workflow:

Figure: AI-Robotics Workflow

Machine Learning for Synthesis Optimization and Property Prediction

Statistical Optimization of Synthesis Parameters

Machine learning, particularly when integrated with established design of experiments (DOE) methods, significantly enhances the optimization of complex synthesis processes. For instance, the Taguchi robust design method has been successfully employed to optimize precipitation reaction conditions for synthesizing manganese carbonate (MnCO₃) nanoparticles, a precursor for manganese oxide (Mn₂O₃) nanoparticles [15]. The analysis of variance (ANOVA) from such studies quantitatively identifies the critical parameters (in this case, manganese concentration, carbonate concentration, and flow rate), enabling the synthesis of nanoparticles with precise size control (54 ± 12 nm for MnCO₃) [15].
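To show the kind of calculation a Taguchi analysis involves, the sketch below computes signal-to-noise (S/N) ratios and per-factor level means for a two-level L4 orthogonal array. Everything numeric here is assumed: the actual array, factor levels, and measurements of the cited study are not given in this text, the particle sizes are invented, and "smaller is better" is chosen only as an example criterion. The sketch covers the main-effects step, not the full ANOVA.

```python
import math

# Standard L4 orthogonal array: 4 runs, 3 two-level factors (levels coded 0 and 1).
L4 = [(0, 0, 0), (0, 1, 1), (1, 0, 1), (1, 1, 0)]
FACTORS = ["mn_conc", "co3_conc", "flow_rate"]

# Hypothetical particle sizes in nm, two replicates per run.
sizes = [[80.0, 84.0], [50.0, 52.0], [60.0, 64.0], [58.0, 56.0]]


def sn_smaller_is_better(values):
    """Taguchi S/N ratio for a smaller-is-better response: -10 log10(mean(y^2))."""
    return -10.0 * math.log10(sum(v * v for v in values) / len(values))


def main_effects(design, responses):
    """Average S/N ratio at each level of each factor."""
    sn = [sn_smaller_is_better(r) for r in responses]
    effects = {}
    for j, name in enumerate(FACTORS):
        level_means = []
        for level in (0, 1):
            vals = [s for run, s in zip(design, sn) if run[j] == level]
            level_means.append(sum(vals) / len(vals))
        effects[name] = level_means
    return effects


effects = main_effects(L4, sizes)

# The preferred level of each factor is the one with the higher mean S/N ratio.
best_levels = {
    name: max((0, 1), key=lambda lvl: means[lvl])
    for name, means in effects.items()
}

for name, means in effects.items():
    print(f"{name}: level0={means[0]:.2f} dB, level1={means[1]:.2f} dB")
print(best_levels)
```

The spread between level means for a factor gives a rough ranking of its influence. ANOVA then tests whether that spread is large relative to the run-to-run noise.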

Bayesian Optimization (BO) represents a more advanced ML approach for experimental planning. As described by MIT researchers, "Bayesian optimization is like Netflix recommending the next movie to watch based on your viewing history, except instead it recommends the next experiment to do" [14]. When enhanced with multimodal data (literature knowledge, experimental results, human feedback), BO becomes a powerful tool for navigating complex parameter spaces efficiently.
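The "recommend the next experiment" idea can be sketched with a small Gaussian-process surrogate and an upper-confidence-bound (UCB) rule. The example is single-objective and one-dimensional, written with the standard library only. It is not the multimodal variant described in [14], and the `objective` function, kernel length scale, and `kappa` value are assumptions chosen for illustration.

```python
import math


def rbf(a, b, length=0.2):
    """Squared-exponential kernel with unit prior variance."""
    return math.exp(-0.5 * ((a - b) / length) ** 2)


def cholesky(m):
    """Lower-triangular factor L with L L^T = m (m symmetric positive definite)."""
    n = len(m)
    low = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(low[i][k] * low[j][k] for k in range(j))
            low[i][j] = math.sqrt(m[i][i] - s) if i == j else (m[i][j] - s) / low[j][j]
    return low


def solve_lower(low, b):
    """Forward substitution: solve L y = b."""
    y = []
    for i in range(len(b)):
        y.append((b[i] - sum(low[i][k] * y[k] for k in range(i))) / low[i][i])
    return y


def solve_upper_t(low, y):
    """Back substitution: solve L^T x = y."""
    n = len(y)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(low[k][i] * x[k] for k in range(i + 1, n))) / low[i][i]
    return x


def fit_gp(xs, ys, jitter=1e-6):
    k = [[rbf(a, b) + (jitter if i == j else 0.0) for j, b in enumerate(xs)]
         for i, a in enumerate(xs)]
    low = cholesky(k)
    return low, solve_upper_t(low, solve_lower(low, ys))


def predict(xs, low, alpha, x_new):
    """Posterior mean and standard deviation at x_new."""
    k_star = [rbf(x_new, a) for a in xs]
    mean = sum(ks * al for ks, al in zip(k_star, alpha))
    v = solve_lower(low, k_star)
    return mean, math.sqrt(max(0.0, 1.0 - sum(vi * vi for vi in v)))


def suggest(xs, ys, grid, kappa=2.0):
    """Pick the untested grid point with the highest mean + kappa * std."""
    low, alpha = fit_gp(xs, ys)
    candidates = [g for g in grid if g not in xs]

    def ucb(x):
        mean, std = predict(xs, low, alpha, x)
        return mean + kappa * std

    return max(candidates, key=ucb)


def objective(x):
    """Hypothetical 'experiment': performance peaks at composition x = 0.7."""
    return math.exp(-((x - 0.7) ** 2) / 0.02)


grid = [i / 50 for i in range(51)]
xs = [0.0, 0.5, 1.0]                 # three initial experiments
ys = [objective(x) for x in xs]
for _ in range(10):                  # ten recommended experiments
    x_next = suggest(xs, ys, grid)
    xs.append(x_next)
    ys.append(objective(x_next))
best_x = xs[ys.index(max(ys))]
print(f"best composition found: {best_x:.2f}")
```

The `kappa` parameter sets the balance between exploiting regions the surrogate already rates highly and exploring regions where it is uncertain. Production code would normally rely on a maintained optimization library and not a hand-written Gaussian process.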

ML-Based Force Fields and Property Prediction

A significant advancement in computational materials science is the development of machine-learning-based force fields (MLFFs), also called machine-learned potentials (MLPs). These models combine the accuracy of quantum mechanical ab initio methods (such as density functional theory) with the computational efficiency of classical molecular dynamics (MD) simulations [11] [12]. MLFFs are trained on a pool of ab initio simulation data or experimental data, enabling accurate simulations of larger systems over longer timescales, which is crucial for understanding solid-state synthesis and material behavior [12].

Table 2: Machine Learning Applications in Experimental Optimization

| Synthesis System | AI/Method Used | Key Parameters Optimized | Result |
|---|---|---|---|
| Manganese Carbonate Nanoparticles [15] | Taguchi Robust Design | Mn²⁺ concentration, CO₃²⁻ concentration, flow rate, temperature | Nanoparticles sized 54 ± 12 nm |
| Fuel Cell Catalysts [14] | Multimodal Bayesian Optimization | Chemical composition of multi-element catalysts | 9.3-fold improvement in power density per dollar over pure Pd |
| Fe₃O₄ Nanoparticles [16] | Systematic Parameter Optimization | pH, aging time, washing solvents | Phase-pure superparamagnetic nanoparticles (15-25 nm) |

Deep Learning for Multimodal Data Processing

Processing Complex Materials Data

Deep learning architectures excel at interpreting the complex, multimodal data inherent to materials synthesis and characterization. In solid-state research, these models process diverse data types:

- Microstructural Images: Convolutional Neural Networks (CNNs) analyze scanning electron microscopy (SEM) and transmission electron microscopy (TEM) images to identify phases, defects, and morphological features [14].

- Spectral Data: Recurrent Neural Networks (RNNs) and Transformers process X-ray diffraction (XRD) patterns, X-ray photoelectron spectroscopy (XPS) data, and other spectral information for phase identification and property extraction [13].

- Scientific Literature: Transformer-based models perform named entity recognition (NER) and relationship extraction from text, patents, and research papers to build knowledge bases that inform synthesis planning [13].
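As a rough picture of what literature extraction produces, the sketch below pulls synthesis temperatures and durations out of a sentence. It uses regular expressions as a stand-in for the transformer-based named entity recognition described in the list above, so it only handles the narrow phrasings the patterns anticipate. The pattern names, the output layout, and the example sentence are assumptions for illustration.

```python
import re

# Matches values such as "500 °C" or "82.5°C".
TEMP_PATTERN = re.compile(r"(\d+(?:\.\d+)?)\s*°\s*C")
# Matches values such as "2 h", "12 hours", "30 min".
TIME_PATTERN = re.compile(r"(\d+(?:\.\d+)?)\s*(h|hours?|min|minutes?)\b")


def extract_conditions(sentence: str) -> dict:
    """Return temperatures (in Celsius) and durations found in one sentence."""
    temperatures = [float(t) for t in TEMP_PATTERN.findall(sentence)]
    durations = [(float(value), unit) for value, unit in TIME_PATTERN.findall(sentence)]
    return {"temperatures_c": temperatures, "durations": durations}


text = "The precursor was calcined at 500 °C for 2 h in air."
conditions = extract_conditions(text)
print(conditions)  # {'temperatures_c': [500.0], 'durations': [(2.0, 'h')]}
```

Learned models earn their place on the cases rules miss, such as linking each temperature to the correct processing step or resolving "the same conditions as above".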

Foundation Models for Materials Science

Foundation models, pre-trained on broad data and adaptable to various downstream tasks, represent the cutting edge of deep learning in materials science [13]. These models include encoder-only architectures (e.g., BERT-like models) for property prediction and decoder-only architectures (e.g., GPT-like models) for generating new material structures [13]. When fine-tuned on materials-specific data, these models demonstrate remarkable capability in predicting properties from structure and planning synthesis routes.

Generative Models for Inverse Materials Design

Principles of Generative AI for Materials

Generative models represent a paradigm shift from prediction to creation in materials science. Unlike discriminative models that learn a mapping function from inputs to outputs (e.g., structure to property), generative models learn the underlying probability distribution of the data, enabling them to create novel material structures by sampling from this distribution [12]. A critical feature is the latent space—a lower-dimensional representation that captures the essential structure-property relationships, facilitating inverse design strategies where desired properties are specified as inputs to generate corresponding structures [12].

Key Generative Model Architectures

Table 3: Generative Models for Solid-State Materials Discovery

| Model Type | Key Principle | Example in Materials Science | Application |

|---|---|---|---|

| Variational Autoencoders (VAEs) [12] | Learn probabilistic latent space for data generation | CrystalVAE, MatVAE | Generation of novel crystal structures, molecular designs |

| Generative Adversarial Networks (GANs) [12] | Generator and discriminator networks trained adversarially | MaterialGAN | Synthesis of realistic material microstructures |

| Diffusion Models [12] | Generate data by iteratively denoising random noise | DiffCSP, SymmCD [12] | Crystal structure prediction (CSP) |

| Normalizing Flows [12] | Learn invertible transformation between simple and complex distributions | CrystalFlow, FlowLLM [12] | Inverse design of crystals and molecules |

| Generative Flow Networks (GFlowNets) [12] | Generate diverse candidates through a series of actions | Crystal-GFN [12] | Exploration of chemical space for stable crystals |

The Generative Design Workflow

The process of generative materials design involves multiple steps, from data representation to final experimental validation, with generative models acting as the engine for proposing novel candidates.

Generative Design Process

Integrated Experimental Protocols

Protocol: AI-Driven Optimization of Magnetic Nanoparticles

The following detailed protocol outlines the synthesis and optimization of superparamagnetic Fe₃O₄ nanoparticles, exemplifying the integration of systematic parameter optimization with robotic characterization [16].

Objective: Synthesize phase-pure, well-dispersed magnetite (Fe₃O₄) nanoparticles exhibiting superparamagnetic behavior for magnetic hyperthermia applications.

Materials and Equipment:

- Precursors: Ferrous sulfate heptahydrate (FeSO₄·7H₂O), Ferric chloride hexahydrate (FeCl₃·6H₂O)

- Precipitating Agent: Ammonium hydroxide (NH₄OH) solution

- Inert Atmosphere: Argon (Ar) gas supply

- Washing Solvents: Methanol, Ethanol

- Characterization Equipment: Synchrotron X-ray diffraction, Field emission scanning electron microscopy, X-ray photoelectron spectroscopy, Vibrating sample magnetometer

Synthesis Procedure:

- Dissolve FeSO₄·7H₂O and FeCl₃·6H₂O separately in deionized water with molar ratio Fe²⁺:Fe³⁺ = 1:2.

- Mix the solutions with vigorous stirring under continuous Ar gas flow, which maintains an inert atmosphere, while holding the temperature at 80°C.

- Add NH₄OH solution (30% by volume) dropwise to the mixture until the solution color changes to black, indicating magnetite formation.

- Continuously stir the reaction mixture for varying aging times (parameter under optimization).

- Wash the black precipitate with different solvents (water, ethanol, methanol) to remove excess ions and impurities.

- Dry the product at 80°C for 12 hours and grind into fine powder.
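The 1:2 Fe²⁺:Fe³⁺ ratio in the first step fixes the relative masses of the two salts. The sketch below works through that arithmetic, assuming the standard co-precipitation stoichiometry (Fe²⁺ + 2 Fe³⁺ + 8 OH⁻ → Fe₃O₄ + 4 H₂O) and complete conversion. The function name and the 1 g batch size are illustrative and do not come from the cited protocol.

```python
# Molar masses in g/mol, from standard atomic weights (rounded).
M_FESO4_7H2O = 278.01   # ferrous sulfate heptahydrate
M_FECL3_6H2O = 270.30   # ferric chloride hexahydrate
M_FE3O4 = 231.53        # magnetite


def precursor_masses(target_fe3o4_g: float) -> dict:
    """Salt masses (g) for a theoretical Fe3O4 yield, assuming full conversion."""
    n_fe3o4 = target_fe3o4_g / M_FE3O4   # mol of magnetite wanted
    n_fe2 = 1 * n_fe3o4                  # one Fe2+ per formula unit
    n_fe3 = 2 * n_fe3o4                  # two Fe3+ per formula unit
    return {
        "FeSO4.7H2O_g": n_fe2 * M_FESO4_7H2O,
        "FeCl3.6H2O_g": n_fe3 * M_FECL3_6H2O,
    }


# Illustrative batch: 1 g of Fe3O4 (hypothetical size).
masses = precursor_masses(1.0)
for salt, grams in masses.items():
    print(f"{salt}: {grams:.3f}")
```

Real batches usually return less than the theoretical yield, so the masses are a starting point rather than a guarantee.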

AI-Optimized Parameters:

- Systematically vary pH (8-11), aging time, and washing solvents.

- Use high-resolution synchrotron XRD to detect minute impurity phases.

- Employ statistical analysis to identify optimal conditions for phase purity and desired magnetic properties.
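One simple way to vary the three parameters systematically is a full factorial grid. The sketch below enumerates such a grid. The pH range and the wash solvents come from the protocol above, while the pH step of 1 and the aging times are assumptions made only for illustration.

```python
from itertools import product

ph_values = [8, 9, 10, 11]                        # range from the protocol; step of 1 is assumed
aging_times_min = [30, 60, 120]                   # hypothetical values, not from the source
wash_solvents = ["water", "ethanol", "methanol"]  # solvents named in the washing step

# Every combination of the three factors becomes one planned experiment.
design = [
    {"pH": ph, "aging_min": t, "solvent": s}
    for ph, t, s in product(ph_values, aging_times_min, wash_solvents)
]

print(len(design), "planned experiments")
print(design[0])
```

A grid like this grows multiplicatively with each added factor, which is one reason the optimization methods discussed elsewhere in this article are attractive for larger parameter spaces.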

Key Outcomes: Successful synthesis of spherical Fe₃O₄ nanoparticles (15-25 nm) with high saturation magnetization (57.26 emu/g at 298 K) and superparamagnetism, demonstrating significant temperature increase (13°C) in hyperthermia studies [16].

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for AI-Driven Solid-State Synthesis

| Reagent/Equipment | Function | Application Example |

|---|---|---|

| Metal Salt Precursors (e.g., FeSO₄·7H₂O, FeCl₃·6H₂O) [16] | Source of metal cations for oxide formation | Co-precipitation synthesis of magnetite (Fe₃O₄) nanoparticles |

| Precipitating Agents (e.g., NH₄OH, NaOH) [16] | Adjust pH to induce precipitation of metal hydroxides/oxides | Formation of Fe₃O₄ from Fe²⁺/Fe³⁺ solution |

| Inert Gas Supply (Ar, N₂) [16] | Create oxygen-free environment to prevent oxidation | Maintaining Fe²⁺ state during magnetite synthesis |

| Liquid-Handling Robots [14] | Automated precise dispensing of reagents | High-throughput exploration of chemical compositions (900+ chemistries) |

| Carbothermal Shock System [14] | Rapid synthesis of materials via rapid heating/cooling | Synthesis of multielement catalysts for fuel cells |

| Automated Electrochemical Workstation [14] | High-throughput testing of electrochemical properties | Screening catalyst performance for fuel cells |

The integration of machine learning, deep learning, and generative models with robotic laboratories is fundamentally reshaping the landscape of solid-state materials synthesis. These core AI technologies enable a shift from serendipitous discovery to rational, accelerated design of materials with tailored properties. As foundation models become more sophisticated and multimodal, and as robotic platforms achieve greater autonomy, the pace of materials discovery will continue to accelerate. This AI-driven paradigm promises to solve long-standing challenges in energy storage, electronics, and healthcare by providing a scalable, efficient pathway to novel materials that meet specific technological needs. The future of materials science lies in the continued refinement of these AI technologies and their seamless integration with experimental workflows, ultimately creating self-driving laboratories that can navigate the vast chemical space with unprecedented efficiency.

The field of solid-state materials synthesis, particularly within pharmaceutical development, is undergoing a fundamental transformation. For decades, research and development has been characterized by traditional trial-and-error approaches that are time-consuming, resource-intensive, and prone to high failure rates. In pharmaceutical development, expenditures have skyrocketed 51-fold over recent decades, yet clinical success rates have stagnated at approximately 10%, predominantly due to efficacy and safety concerns [17]. This persistent problem underscores the urgent need for innovation across the entire development process.

The convergence of artificial intelligence (AI) and robotic platforms is now revolutionizing this landscape, creating a new paradigm of "material intelligence" that mimics and extends the capabilities of human researchers [18]. This evolution is progressing from isolated automated workstations to fully integrated autonomous laboratories capable of planning, executing, and analyzing experiments with minimal human intervention. In materials science, this shift enables researchers to close the gap between computational screening and experimental realization of novel compounds [19], while in pharmaceuticals, it promises to streamline drug formulation, tailor treatments based on patient-specific data, and ultimately increase the success rates of new therapies [20]. This technical guide examines the core components, experimental methodologies, and future trajectory of this technological revolution.

The Evolution: From Automated Workstations to Autonomous Systems

The progression toward autonomous laboratories follows a logical evolution in experimental capabilities, with each stage building upon the previous to deliver greater intelligence and independence.

Automated Workstations

Automated workstations represent the foundational layer of laboratory automation. These systems typically automate singular, repetitive tasks such as:

- Liquid handling for high-throughput screening assays

- Sample preparation and dispensing

- Compound synthesis and purification

- Robotic cultivation for biotechnology applications [21]

These systems excel at executing predefined protocols with precision and consistency, significantly reducing human error and increasing throughput. In drug discovery, automated screening robotics enables efficient and large-scale compound testing with higher precision and consistency [20]. However, these systems lack decision-making capabilities and operate primarily within isolated experimental silos.

Integrated Robotic Platforms

Integrated robotic platforms connect multiple automated workstations into a coordinated experimental workflow. These systems typically feature:

- Mobile robotic arms for transferring samples and labware between stations

- Centralized workflow management systems for orchestrating experiments

- Automated data capture and integration across multiple instruments

A prime example is the digital infrastructure implemented using Workflow Management Systems based on Directed Acyclic Graphs (DAGs), which increase traceability and automated execution of experiments in environments with heterogeneous devices [21]. These platforms create a seamless flow from sample preparation through analysis but still rely heavily on human direction for experimental planning and interpretation.
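To make the DAG idea concrete, the following minimal sketch orders a small workflow so that every step runs only after its prerequisites. The step names are hypothetical, and Python's standard `graphlib` module stands in for a real workflow management system.

```python
from graphlib import TopologicalSorter

# Each key is a step; its set lists the steps that must finish first.
# Step names are illustrative placeholders, not taken from the cited system.
workflow = {
    "dispense_reagents": set(),
    "mix": {"dispense_reagents"},
    "incubate": {"mix"},
    "measure": {"incubate"},
    "log_conditions": {"incubate"},
    "analyze": {"measure", "log_conditions"},
}

# static_order() yields one valid execution order and raises
# graphlib.CycleError if the dependencies contain a cycle.
order = list(TopologicalSorter(workflow).static_order())
print(order)
```

Because "measure" and "log_conditions" depend only on "incubate", a scheduler is free to run them in either order or in parallel, which is the kind of flexibility a DAG representation exposes.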

Autonomous Laboratories

Autonomous laboratories represent the pinnacle of this evolution, integrating robotics with AI-driven decision-making to create self-directed research environments. These systems:

- Automatically generate hypotheses and design experiments

- Execute complex experimental protocols without human intervention

- Analyze results and iteratively refine subsequent experiments based on outcomes

The A-Lab for solid-state synthesis exemplifies this paradigm, using computations, historical data, machine learning, and active learning to plan and interpret the outcomes of experiments performed using robotics [19]. Such labs close the "design-make-test-analyze" (DMTA) loop, enabling continuous, adaptive experimentation that dramatically accelerates the research cycle.

Table 1: Comparison of Laboratory Automation Levels

| Feature | Automated Workstations | Integrated Robotic Platforms | Autonomous Laboratories |

|---|---|---|---|

| Decision-Making | None (pre-programmed) | Limited (human-directed) | Advanced (AI-driven) |

| Workflow Integration | Isolated tasks | Multiple connected systems | End-to-end automation |

| Data Handling | Manual or limited capture | Automated capture & storage | Real-time analysis & learning |

| Experimental Flexibility | Fixed protocols | Predefined workflows | Adaptive, self-optimizing |

| Typical Applications | HTS, sample preparation | Multi-step synthesis & testing | Fully autonomous discovery |

Core Architectural Framework of an Autonomous Lab

The operational framework of an autonomous laboratory for solid-state materials synthesis can be conceptualized through three interconnected cycles that mirror the cognitive processes of human researchers [18].

The "Reading" Cycle: Data-Guided Rational Design

This initial phase involves comprehensive data analysis and planning:

- Computational screening of target materials using ab initio phase-stability data from resources like the Materials Project [19]

- Natural-language processing of historical synthesis data from scientific literature to propose initial synthesis recipes [19]

- Precursor selection based on thermodynamic calculations and similarity to known successful syntheses

The "Doing" Cycle: Automation-Enabled Controllable Synthesis

This phase encompasses the physical execution of experiments:

- Robotic powder dispensing and mixing using automated preparation stations

- Precise heating protocols executed in automated furnaces with controlled temperature profiles

- Sample transfer between stations using robotic arms [19]

The "Thinking" Cycle: Autonomy-Facilitated Inverse Design

This analytical and adaptive phase completes the autonomous loop:

- X-ray diffraction (XRD) characterization of synthesis products

- Machine learning-powered phase analysis to identify and quantify reaction products

- Active learning algorithms that propose improved synthesis routes based on outcomes [19]

The following workflow diagram illustrates how these cycles interconnect in a fully autonomous materials discovery pipeline:

Autonomous Laboratory Workflow: This diagram illustrates the closed-loop operation of an autonomous laboratory, integrating the "reading-doing-thinking" cycles with active learning for continuous optimization [19] [18].
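The control flow of the three cycles can be sketched as a loop that proposes a condition, runs it, and updates the plan from the result. Everything in the example below is a stand-in: the yield function is a made-up response curve rather than real synthesis data, and the fixed-step temperature update is a deliberately naive placeholder for the active-learning step.

```python
def simulated_yield(temp_c: float) -> float:
    """Stand-in for robotic synthesis plus XRD analysis (hypothetical response)."""
    return max(0.0, 1.0 - ((temp_c - 900.0) / 400.0) ** 2)


def run_closed_loop(start_temp_c=600.0, step_c=100.0, target_yield=0.5, max_iter=10):
    """Repeat plan -> execute -> analyze until the yield target is met."""
    history = []
    temp = start_temp_c                # "reading": initial proposal
    for _ in range(max_iter):
        y = simulated_yield(temp)      # "doing": run and characterize
        history.append((temp, y))      # "thinking": record the outcome
        if y >= target_yield:
            break
        temp += step_c                 # naive update; a real lab would use active learning
    return history


history = run_closed_loop()
for temp, y in history:
    print(f"{temp:.0f} C -> yield {y:.4f}")
```

The point of the sketch is the loop structure: the stopping criterion and the update rule are the places where a real system plugs in its analysis models and optimization algorithm.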

Experimental Protocols in Autonomous Materials Synthesis

Case Study: The A-Lab for Novel Inorganic Materials

The A-Lab demonstrated the effectiveness of this approach by successfully synthesizing 41 of 58 target novel inorganic compounds over 17 days of continuous operation—a 71% success rate [19]. The detailed experimental methodology is as follows:

Target Identification and Validation

- Computational Screening: Targets were identified from the Materials Project database as compounds predicted to be on or near (<10 meV per atom) the convex hull of stable phases [19]

- Air Stability Check: All targets were verified computationally not to react with O₂, CO₂, and H₂O to ensure compatibility with open-air handling
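The two screening criteria above amount to a simple filter over candidate entries. The sketch below applies them to a few made-up records. The formulas, energies, and field names are placeholders, and a real workflow would pull these values from the Materials Project rather than from a hard-coded list.

```python
HULL_TOLERANCE_MEV = 10.0   # "<10 meV per atom" criterion from the text

# Hypothetical candidate records; none of these are real database entries.
candidates = [
    {"formula": "X1", "e_above_hull_mev": 0.0, "air_stable": True},
    {"formula": "X2", "e_above_hull_mev": 6.5, "air_stable": True},
    {"formula": "X3", "e_above_hull_mev": 4.0, "air_stable": False},
    {"formula": "X4", "e_above_hull_mev": 23.0, "air_stable": True},
]


def select_targets(cands, tol_mev=HULL_TOLERANCE_MEV):
    """Keep candidates on or near the hull that are also predicted air-stable."""
    return [
        c["formula"]
        for c in cands
        if c["e_above_hull_mev"] < tol_mev and c["air_stable"]
    ]


targets = select_targets(candidates)
print(targets)
```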

Recipe Generation and Precursor Selection

- Literature-Inspired Recipes: Initial synthesis recipes were generated using natural-language processing models trained on 30,000 solid-state synthesis procedures from literature [19]

- Similarity Assessment: Precursor selection was based on chemical similarity to known reactions, with higher similarity correlating with greater success rates

- Temperature Prediction: A separate machine learning model trained on heating data from literature proposed initial synthesis temperatures

Robotic Synthesis Execution

- Powder Dispensing: Precursor powders were automatically dispensed and mixed in stoichiometric ratios

- Milling Process: Powders were milled to ensure good reactivity between precursors with varying physical properties

- Heating Protocol: Samples were transferred to alumina crucibles and heated in one of four box furnaces with temperatures ranging from 450°C to 1150°C based on the target material [19]

- Cooling Phase: Samples were allowed to cool naturally after heating cycles

Material Characterization and Analysis

- XRD Measurement: Automated X-ray diffraction was performed on all samples after synthesis

- Phase Identification: Two machine learning approaches worked together to analyze diffraction patterns: probabilistic phase identification trained on experimental structures from the Inorganic Crystal Structure Database (ICSD), and pattern matching with computed structures from the Materials Project (DFT-corrected)

- Yield Quantification: Automated Rietveld refinement quantified phase fractions and target yield

Active Learning Optimization

For failed syntheses (<50% target yield), the A-Lab implemented Autonomous Reaction Route Optimization with Solid-State Synthesis (ARROWS3):

- Pairwise Reaction Database: The system built a database of observed pairwise reactions between precursors and intermediates

- Driving Force Calculation: Used formation energies from the Materials Project to identify reactions with sufficient thermodynamic driving force (>50 meV per atom)

- Intermediate Avoidance: Prioritized synthesis pathways that avoided intermediates with small driving forces to form the target

- Iterative Refinement: Proposed and tested new precursor combinations and heating profiles based on accumulated experimental data
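Two pieces of the bookkeeping described above are easy to illustrate: deciding whether a synthesis counts as failed, and storing observed pairwise reactions so that they can be looked up regardless of reactant order. The sketch below uses the 50% yield threshold from the text. The phase names and data structures are hypothetical and are not the ARROWS3 implementation.

```python
YIELD_THRESHOLD = 0.50   # below this target fraction, optimization is triggered


def needs_optimization(phase_fractions: dict, target: str) -> bool:
    """True when the refined target fraction falls below the threshold."""
    return phase_fractions.get(target, 0.0) < YIELD_THRESHOLD


def record_reaction(db: dict, reactant_a: str, reactant_b: str, products) -> None:
    """Store an observed pairwise reaction under an order-independent key."""
    db[frozenset((reactant_a, reactant_b))] = tuple(products)


# Hypothetical outcome of one experiment (placeholder phase names).
result = {"Target": 0.30, "IntermediateX": 0.70}
pairwise_db = {}
record_reaction(pairwise_db, "PrecursorA", "PrecursorB", ["IntermediateX"])

print(needs_optimization(result, "Target"))
print(pairwise_db[frozenset(("PrecursorB", "PrecursorA"))])
```

Keying the database on an unordered pair reflects the idea that a reaction between two phases is the same observation whichever phase is listed first.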

Table 2: A-Lab Performance Data on Synthesis Outcomes [19]

| Metric | Value | Context |

|---|---|---|

| Operation Period | 17 days | Continuous operation |

| Target Compounds | 58 | Novel inorganic powders |

| Successful Syntheses | 41 | 71% success rate |

| Literature-Inspired Successes | 35 | 85% of successes |

| Active Learning Optimizations | 9 | 6 had zero initial yield |

| Unique Pairwise Reactions Observed | 88 | Added to reaction database |
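The percentages in Table 2 follow directly from the counts. The short check below recomputes them. Reading the six zero-initial-yield optimizations as the successes not covered by literature-inspired recipes is an interpretation that happens to be consistent with the totals, not a statement taken from the table.

```python
targets = 58
successes = 41
literature_successes = 35
zero_yield_rescued = 6    # active-learning cases that started from zero yield

success_rate = successes / targets
literature_share = literature_successes / successes
unobtained = targets - successes

print(f"success rate: {success_rate:.1%}")
print(f"literature-inspired share of successes: {literature_share:.1%}")
print(f"unobtained targets: {unobtained}")
```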

Pharmaceutical Formulation Development Protocol

In pharmaceutical applications, a similar autonomous approach is being applied to drug formulation development:

Formulation Optimization Workflow

- API Characterization: Analysis of active pharmaceutical ingredient properties, particularly focusing on solubility challenges (affecting 70-90% of new chemical entities) [17]

- Excipient Selection: AI-driven selection of stabilizers, solubilizing agents, and delivery matrices based on molecular compatibility

- Prototype Formulation: Robotic preparation of multiple formulation variants using liquid handlers and powder dispensers

- Performance Testing: Automated testing of dissolution profiles, stability, and bioavailability predictors

- Iterative Refinement: Machine learning analysis of performance data to identify optimal formulation parameters

The Scientist's Toolkit: Essential Research Reagents and Solutions

The effective operation of autonomous laboratories requires carefully selected precursors, reagents, and materials that are compatible with robotic systems while enabling diverse synthesis pathways.

Table 3: Essential Research Reagents for Autonomous Solid-State Synthesis

| Reagent Category | Specific Examples | Function in Synthesis | Compatibility Notes |

|---|---|---|---|

| Oxide Precursors | TiO₂, SiO₂, Fe₂O₃, MgO | Primary cation sources for oxide materials | High-purity powders with controlled particle size |

| Phosphate Precursors | NH₄H₂PO₄, (NH₄)₂HPO₄ | Phosphorus source for phosphate materials | May require decomposition control during heating |

| Carbonate Precursors | Li₂CO₃, Na₂CO₃, CaCO₃ | Alkali metal sources with decomposition pathways | Gas evolution during heating must be accommodated |

| Metal Precursors | Metal powders (Fe, Cu, Ni) | Zero-valent metal sources for reduced phases | Oxidation sensitivity requires environmental control |

| Crucible Materials | Alumina (Al₂O₃), Zirconia | Inert containers for high-temperature reactions | Chemically inert at operating temperatures (up to 1150°C) |

| Milling Media | Zirconia balls, Alumina beads | Homogenization of precursor mixtures | Hardness and chemical inertness critical |

Active Learning and Optimization Mechanisms

The autonomous functionality of advanced laboratories hinges on sophisticated active learning algorithms that enable continuous improvement of experimental outcomes.

The ARROWS3 Algorithm

The Autonomous Reaction Route Optimization with Solid-State Synthesis (ARROWS3) algorithm exemplifies this approach with two core hypotheses:

- Pairwise Reaction Preference: Solid-state reactions tend to occur between two phases at a time [19]

- Driving Force Optimization: Intermediate phases with small driving forces to form the target should be avoided [19]

The algorithm's decision-making process is visualized below:

Active Learning Optimization Cycle: This diagram outlines the iterative process through which autonomous laboratories learn from failed syntheses to propose and test improved reaction pathways [19].

Application Example: CaFe₂P₂O₉ Synthesis Optimization

The synthesis of CaFe₂P₂O₉ exemplifies this optimization process:

- Initial Failure: Original precursor combination yielded minimal target compound

- Intermediate Analysis: Identified formation of FePO₄ and Ca₃(PO₄)₂ intermediates with small driving force (8 meV per atom) to form target

- Pathway Optimization: Algorithm identified alternative route forming CaFe₃P₃O₁₃ intermediate with larger driving force (77 meV per atom)

- Result: Approximately 70% increase in target yield through optimized pathway [19]
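The choice between the two pathways can be expressed as a ranking by the driving force remaining for the final step. The sketch below uses the two values quoted above and, as an assumption, reuses the 50 meV per atom figure mentioned earlier as the cutoff. The route labels and data layout are illustrative.

```python
MIN_DRIVING_FORCE_MEV = 50.0   # assumed cutoff, borrowed from the threshold quoted earlier

# Driving force (meV per atom) left to form the target from each set of intermediates.
routes = {
    "via FePO4 + Ca3(PO4)2": 8.0,
    "via CaFe3P3O13": 77.0,
}

# Rank routes from largest to smallest remaining driving force.
ranked = sorted(routes.items(), key=lambda item: item[1], reverse=True)
viable = [name for name, force in ranked if force > MIN_DRIVING_FORCE_MEV]

for name, force in ranked:
    print(f"{name}: {force:.0f} meV per atom")
print("preferred:", viable)
```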

Failure Analysis and Systematic Improvement

Even with advanced autonomous systems, synthesis failures occur and provide valuable learning opportunities. Analysis of the 17 unobtained targets in the A-Lab study revealed specific failure modes:

Table 4: Analysis of Synthesis Failure Modes in Autonomous Experimentation [19]

| Failure Mode | Frequency | Characteristics | Potential Solutions |

|---|---|---|---|

| Slow Kinetics | 11 targets | Reaction steps with low driving forces (<50 meV/atom) | Extended heating times, flux agents, mechanical activation |

| Precursor Volatility | 3 targets | Loss of volatile components during heating | Sealed containers, alternative precursors with higher decomposition temperatures |

| Amorphization | 2 targets | Formation of amorphous phases instead of crystalline targets | Alternative heating profiles, nucleation agents |

| Computational Inaccuracy | 1 target | Errors in predicted stability | Improved DFT functionals, experimental validation of computational data |

Future Directions and Emerging Capabilities

The trajectory of autonomous laboratories points toward increasingly sophisticated capabilities with profound implications for materials and pharmaceutical research.

Next-Generation Autonomous Labs

Future developments are expected to focus on:

- Multi-modal Characterization: Integration of complementary techniques beyond XRD (electron microscopy, spectroscopy)

- Cross-Domain Knowledge Transfer: Applying insights from inorganic synthesis to organic and pharmaceutical systems

- Federated Learning: Collaborative model improvement across multiple autonomous laboratories while preserving data privacy

- Embodied Intelligence: More sophisticated human-robot interaction enabling natural language control and collaborative problem-solving [22]

"Material Intelligence" Vision

The ultimate vision is the development of a universal "material intelligence" that can encode material formulas and parameters into a "material code" transferable across time and space—potentially enabling autonomous materials discovery on Earth and even on distant planets [18]. This represents the full realization of the material intelligence paradigm, where AI and robotics seamlessly integrate to accelerate discovery across the materials universe.

The transition from automated workstations to fully autonomous laboratories represents a paradigm shift in materials and pharmaceutical research. By integrating robotic experimentation with AI-driven decision-making, these systems address fundamental limitations of traditional research approaches, dramatically accelerating the discovery and optimization of novel materials and drug formulations. The demonstrated success of platforms like the A-Lab in synthesizing previously unknown compounds validates this approach and points toward a future where human researchers are amplified by intelligent laboratory partners. As these technologies mature, they promise to not only enhance research efficiency but also expand the boundaries of explorable chemical space, opening new frontiers in materials science and pharmaceutical development.

Key Solid-State Material Classes for Biomedical Applications (e.g., Metal-Organic Frameworks, Perovskites)

Solid-state materials have ushered in a new era for biomedical engineering, offering unique physicochemical properties that are not found in their molecular counterparts. Among the diverse classes of solid-state materials, Metal-Organic Frameworks (MOFs) and Perovskites have emerged as particularly promising due to their structural tunability, multifunctionality, and excellent compatibility with biological systems. The development of these materials is being radically accelerated by the integration of artificial intelligence (AI) and robotic automation, shifting traditional research from slow, iterative processes toward intelligent, high-throughput discovery pipelines [11] [23]. This whitepaper provides an in-depth technical examination of these key material classes, their synthesis, functional properties, and biomedical applications, framed within the context of modern AI-driven materials research.

Metal-Organic Frameworks (MOFs) for Biomedical Applications

Fundamental Properties and Synthesis

Metal-Organic Frameworks (MOFs) are a class of crystalline, porous materials formed via the self-assembly of metal ions or clusters (nodes) and organic linkers. Their defining characteristics include an extraordinarily high surface area, tunable pore size and volume, well-defined active sites, and hybrid organic-inorganic structures [24]. This inherent tunability allows for precise control over their chemical and biological interactions, making them ideal platforms for biomedical applications.

Synthesis Methodologies: MOFs can be synthesized using various methods, each yielding materials with distinct morphologies and properties suitable for different biomedical uses. Key synthesis protocols include:

- Solvothermal/Hydrothermal Synthesis: Involves reactions in a sealed vessel at elevated temperature and pressure. This is a widely used method for producing high-quality MOF crystals [24].

- Electrochemical Synthesis: An efficient method that allows for the direct growth of MOF films on electrode surfaces. This is particularly useful for creating biosensor platforms, as it enables precise control over film thickness and morphology [24].

- Sonochemical Synthesis: Utilizes ultrasonic radiation to accelerate chemical reactions and nucleation. This method is known for its rapid reaction times and energy efficiency, often resulting in materials with unique properties [24].

- Mechanochemical Synthesis: A solvent-free approach that relies on mechanical grinding to initiate reactions between solid precursors. This green chemistry method is gaining popularity for its environmental friendliness and simplicity [24].

Biomedical Applications of MOFs

The adaptable nature of MOFs has led to their exploration in a wide array of biomedical applications, summarized in Table 1 below.

Table 1: Biomedical Applications of Metal-Organic Frameworks (MOFs)

| Application Area | Key Functionality | Specific Examples / Mechanisms |

|---|---|---|

| Drug Delivery | Controlled and sustained release of therapeutic agents. | Particularly effective for anticancer drugs; high surface area allows for high drug loading capacity; pore structure enables controlled release kinetics to maintain therapeutic blood levels [24]. |

| Biosensing & Diagnostics | Recognition and electrochemical sensing of biomolecules. | MOF-based electrodes utilize metal centers as catalytic sites for enzymatic and non-enzymatic reactions; enable ultrasensitive detection of trace biomarkers in biological fluids for real-time monitoring and point-of-care diagnostics [24]. |

| Theranostics | Combined therapeutic and diagnostic capabilities. | Incorporation of imaging agents (e.g., contrast agents) within the MOF structure; allows for simultaneous drug delivery and imaging, enabling tracking of drug distribution and release [24]. |

| Antimicrobial Applications | Targeting and destroying pathogenic microorganisms. | Engineered to exhibit enzyme-mimicking (nanozyme) activities that can generate reactive oxygen species (ROS) or disrupt bacterial cell membranes [24]. |

Perovskite Materials for Biomedical Applications

Fundamental Properties and Synthesis

Perovskites are a class of materials with the general formula ABX₃, where A is an organic or inorganic cation, B is a metal cation, and X is an anion (typically oxygen or a halide) [25] [26]. The ideal crystal structure is cubic, built from corner-sharing BX₆ octahedra with the A cation occupying the cavities between them, and the properties can be finely tuned by altering the elements at the A, B, and X sites. This flexibility allows for the engineering of specific optoelectronic, magnetic, and ferroelectric properties [26]. Notably, some perovskites are multiferroic, exhibiting both ferroelectric and magnetic orderings that are coupled, enabling sophisticated multifunctionality [26].

Synthesis Considerations: The chosen synthetic route profoundly impacts the properties and performance of perovskites in biomedical devices. Key advancements and challenges include:

- Nanocrystal Synthesis for Imaging: For applications like biological imaging, stable, high-quality nanocrystals are essential. Computational guidance is used to manage complex defect chemistry, such as using tin-rich conditions to suppress bulk defects in lead-free alternatives [27].

- Thin-Film Fabrication for Detectors: Low-temperature processed perovskite thin films are being developed for digital X-ray detectors. These offer advantages of low cost, large radiation area, and critically, a low radiation dose for patients [25] [27].

- Stability and Reproducibility: A major focus for commercial biomedical application is improving the stability and reproducibility of perovskites, which can be more critical than achieving peak performance metrics in a lab setting. The purity of precursors and the synthesis route itself are key factors [27] [28].

Biomedical Applications of Perovskites

Perovskites are being actively researched for several high-impact biomedical applications, as detailed in Table 2.

Table 2: Biomedical Applications of Perovskite Materials

| Application Area | Key Functionality | Specific Examples / Mechanisms |

|---|---|---|

| Medical Imaging | Detection of X-rays and upconversion imaging. | Halide perovskites used as solution-deposited absorption layers in digital X-ray detectors [25]. Certain perovskites can be encapsulated for water stability and used for upconversion imaging in living cells [25]. |

| Biosensing | Electrochemical and physical sensing of biological signals. | Fabrication of electrochemical sensors for detecting specific biomarkers [29] [26]. The piezoelectric properties of perovskites also allow their use in flexible sensors and energy harvesters for powering implantable and wearable IoT devices [26]. |

| Tissue Engineering & Implants | Promotion of bone growth and tissue integration. | Used as scaffolds for bone repair due to good biocompatibility and piezoelectric properties (e.g., CaTiO₃) [25] [26]. Coatings like CaTiO₃/TiO₂ composites on titanium alloys improve the performance of biomedical implants [26]. |

| Antimicrobial Therapy | Inactivation of bacteria and other pathogens. | Explored for antibacterial and antimicrobial performance, often through catalytic generation of reactive oxygen species or other mechanisms that damage microbial cells [26]. |

The AI and Robotics Paradigm in Materials Research

The discovery and optimization of MOFs and perovskites are being transformed by artificial intelligence and autonomous robotics. This represents a paradigm shift from intuition-driven research to a data-driven, closed-loop process.

The Autonomous Workflow

The following diagram illustrates the integrated, closed-loop workflow of an AI-driven autonomous laboratory for materials discovery.

Diagram Title: AI-Driven Materials Discovery Loop

This workflow is enabled by several key technological elements:

- Chemical Science Databases: Serve as the foundation, containing structured and unstructured data on known materials, properties, and synthesis routes. These databases are built using natural language processing (NLP) to extract information from literature and patents, and are often organized into Knowledge Graphs (KGs) for efficient retrieval [23].

- Large-Scale Intelligent Models: AI algorithms are used to plan experiments and predict outcomes.

- Automated Robotic Platforms: These "A-Labs" physically execute the synthesis and characterization steps. For example, the A-Lab at Lawrence Berkeley National Laboratory uses robotics to handle and characterize solid inorganic powders, planning and interpreting experiments autonomously [23].

Case Studies in AI-Driven Discovery

- MOF Optimization: A study by Moosavi et al. used a genetic algorithm-guided robotic platform to optimize the crystallinity and phase purity of MOFs. The AI explored a nine-parameter space over 90 experiments, with a random forest model trained on prior data to predict outcomes and exclude suboptimal paths [23].

- Perovskite Crystallization: Ahmadi and co-workers reported a high-throughput, autonomous investigation of the crystallization of 2D halide perovskites. This AI-driven approach allows for the rapid mapping of synthesis conditions to final material properties, which is crucial for manipulating photophysical properties for imaging and sensing [27].
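To make the genetic-algorithm idea in the MOF case study concrete, the following is a minimal sketch, not the method used in the cited study. It assumes nine synthesis parameters scaled to [0, 1] and a budget of 90 experiments split into nine generations of ten. The function `run_experiment` is a hypothetical stand-in for the robotic synthesis and crystallinity scoring, and the random forest pre-screening step mentioned above is omitted.

```python
import random

N_PARAMS = 9        # nine synthesis parameters, each scaled to [0, 1]
POP_SIZE = 10       # experiments per generation
N_GENERATIONS = 9   # 10 x 9 = 90 experiments in total


def run_experiment(params):
    """Hypothetical stand-in for a robotic synthesis plus a crystallinity score.

    The toy score peaks at 1.0 when every parameter equals 0.5 (arbitrary optimum).
    """
    return 1.0 - sum((p - 0.5) ** 2 for p in params) / N_PARAMS


def crossover(a, b, rng):
    """Uniform crossover: each parameter is taken from one of the two parents."""
    return [x if rng.random() < 0.5 else y for x, y in zip(a, b)]


def mutate(params, rng, rate=0.2, scale=0.1):
    """Perturb some parameters with Gaussian noise and clip back to [0, 1]."""
    return [
        min(1.0, max(0.0, p + rng.gauss(0.0, scale))) if rng.random() < rate else p
        for p in params
    ]


def optimize(seed=0):
    rng = random.Random(seed)
    population = [[rng.random() for _ in range(N_PARAMS)] for _ in range(POP_SIZE)]
    history = []              # (score, params) for every experiment that was run
    best_per_generation = []
    for _ in range(N_GENERATIONS):
        scored = sorted(((run_experiment(p), p) for p in population), reverse=True)
        history.extend(scored)
        best_per_generation.append(scored[0][0])
        parents = [p for _, p in scored[: POP_SIZE // 2]]   # keep the top half
        children = [parents[0]]   # elitism: the best recipe is carried forward and re-run
        while len(children) < POP_SIZE:
            a, b = rng.sample(parents, 2)
            children.append(mutate(crossover(a, b, rng), rng))
        population = children
    return history, best_per_generation


history, best_per_generation = optimize(seed=0)
print(f"experiments run: {len(history)}")
print("best score per generation:", [round(s, 3) for s in best_per_generation])
```

Because the best recipe is carried into the next generation unchanged, the best score per generation cannot decrease in this noise-free toy. In a real laboratory the repeated measurement would double as a reproducibility check.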

The Scientist's Toolkit: Essential Research Reagents and Materials

The experimental research and development of MOFs and perovskites rely on a core set of chemical reagents and materials. The table below details key components and their functions.

Table 3: Essential Research Reagents and Materials for MOF and Perovskite Synthesis

| Reagent / Material | Function in Synthesis | Example Applications / Notes |
|---|---|---|
| Metal Salts (e.g., Zn, Cu, Fe, Zr salts) | Serve as the metal ion nodes (secondary building units) in the MOF structure. | The choice of metal ion influences stability, catalytic activity, and biocompatibility. Zirconium-based MOFs are often favored for their high chemical stability [24]. |
| Organic Linkers (e.g., carboxylates, imidazolates) | Multifunctional organic molecules that coordinate with metal nodes to form the framework. | Ditopic or polytopic linkers like terephthalic acid are common. The linker length and functionality dictate pore size and surface chemistry [24]. |
| A-site Cations (e.g., MA⁺, FA⁺, Cs⁺) | Organic or inorganic cations that occupy the A-site in the ABX₃ perovskite structure. | Common choices are methylammonium (MA⁺), formamidinium (FA⁺), and cesium (Cs⁺). They influence crystal stability and optoelectronic properties [25] [27]. |
| B-site Cations (e.g., Pb²⁺, Sn²⁺) | Metal cations that occupy the B-site in the ABX₃ perovskite structure. | Lead (Pb²⁺) is common but raises toxicity concerns. Tin (Sn²⁺) is a widely studied less-toxic alternative, though it presents challenges with stability and defect chemistry [27] [28]. |
| X-site Anions (e.g., I⁻, Br⁻, Cl⁻) | Halide anions that occupy the X-site in halide perovskites. | The choice of halide directly tunes the bandgap and, consequently, the absorption and emission properties of the material, which is critical for imaging applications [25]. |
| Solvents (e.g., DMF, DMSO, water) | Medium for dissolution and reaction of precursors. | Solvent polarity and boiling point can direct crystal growth and morphology. Solvent-free mechanochemical synthesis is also an emerging option [24]. |
| Modulators/Additives | Chemicals used to control crystal growth kinetics and defect formation. | Agents like acetic acid or alkylamines can slow down reaction kinetics for larger crystals. Additives are also used to passivate surface defects in perovskites, improving performance and stability [27]. |

Metal-Organic Frameworks and Perovskites represent two of the most dynamic and promising classes of solid-state materials for advanced biomedical applications. Their tunable structures and multifunctional properties make them ideal for drug delivery, biosensing, medical imaging, and tissue engineering. The future of this field is inextricably linked to the continued development of AI and autonomous laboratory platforms. The transition from isolated, manual discovery to a networked, intelligent, and data-rich research paradigm promises to overcome longstanding challenges in the stability, reproducibility, and targeted design of these materials. As these technologies mature, we can anticipate the accelerated development of sophisticated MOF and perovskite-based solutions that will profoundly impact diagnostics, therapeutics, and regenerative medicine.

From Code to Lab Bench: AI-Driven Prediction and Robotic Synthesis in Action

The discovery and development of new solid-state materials have long been hindered by the prohibitive cost and time requirements of traditional methods. Computational simulations, while invaluable, often demand immense resources. Density Functional Theory (DFT) calculations, for instance, can require hours to days of supercomputing time for a single material, creating a critical bottleneck in research pipelines [30] [31]. Similarly, experimental synthesis and characterization are inherently slow, resource-intensive processes. This is where Artificial Intelligence (AI) emerges as a transformative tool. By serving as fast, accurate, and data-efficient surrogate models, AI systems can emulate the behavior of expensive physics-based simulations, accelerating the entire materials discovery cycle from years to weeks [11] [32].

This section details the implementation of AI-driven surrogate modeling, framing it within the context of a modern, robotics-integrated solid-state materials synthesis laboratory. This approach is foundational to the broader thesis that the integration of AI and robotics is not merely an incremental improvement but a fundamental paradigm shift towards autonomous, data-driven materials research. These surrogate models act as the central "brain" of an automated discovery loop, guiding robotic systems in synthesis and characterization by predicting which material compositions and structures are most promising to pursue experimentally, thereby minimizing costly trial-and-error [11] [33].

Fundamental Principles of AI Surrogate Modeling

Core Concepts and Definitions

A surrogate model, in the context of materials science, is a machine-learned approximation of a complex, computationally expensive simulation or physical process. The core premise is to leverage patterns in existing data to build a model that can predict the outcomes of new, unseen scenarios with high fidelity but at a fraction of the computational cost [32]. The workflow typically involves generating a limited set of high-fidelity data using the original, expensive simulator (e.g., DFT). This data then fuels the training of a surrogate model—such as a Gaussian Process or a Neural Network—which learns the underlying input-output relationships. Once trained, this lightweight model can be queried thousands of times per second to explore vast design spaces, perform sensitivity analyses, or guide optimization routines [32].
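The workflow described above (a few expensive evaluations, a fitted approximation, then many cheap queries) can be sketched in a few lines. This is an illustrative toy under stated assumptions: `expensive_simulation` is a hypothetical analytic stand-in for a DFT calculation, and the surrogate is a simple Nadaraya-Watson kernel regression rather than the Gaussian Process or neural network mentioned in the text, chosen only because it needs no external libraries.

```python
import math


def expensive_simulation(x):
    """Hypothetical stand-in for a costly calculation such as DFT (toy analytic function)."""
    return math.sin(3.0 * x) + 0.5 * x


class KernelSurrogate:
    """Nadaraya-Watson kernel regression: a Gaussian-weighted average of training targets."""

    def __init__(self, bandwidth=0.1):
        self.bandwidth = bandwidth
        self.xs, self.ys = [], []

    def fit(self, xs, ys):
        self.xs, self.ys = list(xs), list(ys)
        return self

    def predict(self, x):
        weights = [math.exp(-0.5 * ((x - xi) / self.bandwidth) ** 2) for xi in self.xs]
        return sum(w * y for w, y in zip(weights, self.ys)) / sum(weights)


# Step 1: a limited set of "expensive" evaluations (21 points on [0, 2]).
train_x = [0.1 * i for i in range(21)]
train_y = [expensive_simulation(x) for x in train_x]

# Step 2: fit the lightweight surrogate to those samples.
surrogate = KernelSurrogate(bandwidth=0.1).fit(train_x, train_y)

# Step 3: query the surrogate densely and compare against the simulator.
# The comparison is only possible here because the "simulator" is a cheap toy.
query_x = [0.001 * i for i in range(2001)]
errors = [abs(surrogate.predict(x) - expensive_simulation(x)) for x in query_x]
mean_abs_error = sum(errors) / len(errors)
print(f"mean absolute error over {len(query_x)} queries: {mean_abs_error:.3f}")
```

The largest errors in this sketch occur near the edges of the sampled range, which illustrates why a surrogate should be trusted mainly inside the region covered by its training data.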

The efficacy of this approach hinges on several key advantages over traditional simulations. Primarily, it offers a dramatic reduction in computational cost and latency. What once took days can now be accomplished in milliseconds, enabling the exploration of material spaces of a previously unimaginable scale [32] [31]. Furthermore, these models are particularly well-suited for inverse design problems. Instead of predicting a property from a structure (a forward problem), they can be used to identify the material structure that will yield a desired property, a task that is exceptionally challenging for traditional simulations [11].
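The difference between the forward and inverse problems can be illustrated with a short sketch. Here `forward_model` is a hypothetical, hand-written surrogate that maps a two-component design vector to a property value; its coefficients are arbitrary and carry no physical meaning. Inverse design is approximated by the simplest possible strategy, an exhaustive grid search for the designs whose predicted property is closest to a target.

```python
import itertools


def forward_model(design):
    """Hypothetical surrogate: maps a two-component design vector to a property value."""
    a, b = design
    return 1.5 + 0.8 * a - 0.3 * b + 0.4 * a * b


def inverse_design(target, steps=51, top_k=3):
    """Grid-search the design space and return the candidates closest to the target."""
    grid = [i / (steps - 1) for i in range(steps)]
    candidates = itertools.product(grid, repeat=2)
    ranked = sorted(candidates, key=lambda c: abs(forward_model(c) - target))
    return ranked[:top_k]


best = inverse_design(target=2.0)
for design in best:
    print(design, round(forward_model(design), 3))
```

Several different designs can reach the same target value, which is why the function returns a short ranked list rather than a single answer. Exhaustive search is only feasible because each surrogate query is cheap; larger design spaces would call for an optimizer or a generative model instead.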

Integration with Automated Laboratories

The true power of surrogate modeling is fully realized when it is embedded within a closed-loop autonomous research system. In this framework, the surrogate model acts as the decision-making engine. It continuously proposes the most promising candidate materials from a vast search space to a robotic synthesis and characterization platform. The results from these automated experiments are then fed back to refine and retrain the surrogate model, enhancing its predictive accuracy with each iteration [11]. This creates a self-improving cycle of discovery. As noted in a review on AI-driven materials discovery, this synergy is pushing the frontier towards "autonomous laboratories capable of real-time feedback and adaptive experimentation" [11]. This seamless integration of computational prediction and physical validation is the cornerstone of next-generation materials research.
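A closed loop of this kind can be sketched as follows. The example is a deliberately small illustration, not a description of any particular autonomous lab: `robot_experiment` is a hypothetical stand-in for synthesis and characterization with a toy response curve, the surrogate is a kernel-weighted average, and the acquisition rule (prediction plus a distance-based exploration bonus) is an assumed heuristic standing in for methods such as Bayesian optimization.

```python
import math


def robot_experiment(x):
    """Hypothetical stand-in for robotic synthesis and characterization (toy response)."""
    return math.exp(-((x - 0.7) / 0.15) ** 2)


def surrogate_predict(x, data, bandwidth=0.1):
    """Kernel-weighted average of measured outcomes (a deliberately simple surrogate)."""
    weights = [math.exp(-0.5 * ((x - xi) / bandwidth) ** 2) for xi, _ in data]
    return sum(w * yi for w, (_, yi) in zip(weights, data)) / sum(weights)


def acquisition(x, data, kappa=1.0):
    """Predicted outcome plus a bonus for being far from anything already measured."""
    nearest = min(abs(x - xi) for xi, _ in data)
    return surrogate_predict(x, data) + kappa * nearest


def closed_loop(budget=12):
    candidates = [i / 100 for i in range(101)]   # normalized synthesis condition
    data = [(x, robot_experiment(x)) for x in (0.0, 1.0)]   # two seed experiments
    while len(data) < budget:
        measured = {xi for xi, _ in data}
        pool = [x for x in candidates if x not in measured]
        # The surrogate proposes the next experiment...
        x_next = max(pool, key=lambda x: acquisition(x, data))
        # ...the robot executes it, and the result is fed back. The surrogate is
        # non-parametric, so appending to `data` is the "retraining" step.
        data.append((x_next, robot_experiment(x_next)))
    return data


data = closed_loop(budget=12)
best_x, best_y = max(data, key=lambda d: d[1])
print(f"best condition found: x = {best_x:.2f}, response = {best_y:.3f}")
```

The `kappa` parameter controls the balance between exploiting regions the surrogate already rates highly and exploring unmeasured conditions; its value here is arbitrary.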

Implementation Workflow for Surrogate Models

The development of a robust AI surrogate model follows a structured pipeline, from data acquisition to deployment. The diagram below illustrates this integrated workflow, connecting computational and experimental components.

Data Acquisition and Preprocessing

The foundation of any effective surrogate model is high-quality, relevant data. The model's predictive capability is directly influenced by the amount and reliability of the training data [31].

- Data Sources: Data can be sourced from public computational databases like the Materials Project, AFLOW, or the Open Quantum Materials Database (OQMD), which contain millions of pre-calculated material properties [30] [31]. For specific applications, data from High-Throughput Computation (HTC) or targeted historical experimental records may be used.

- Feature Engineering: This critical step involves transforming raw material representations (e.g., chemical formulas, crystal structures) into numerical descriptors that capture chemically meaningful patterns. For crystalline materials, common descriptors include compositional features (e.g., elemental properties, electronegativities) and structural features (e.g., radial distribution functions, Voronoi tessellations) [31]. Automated feature extraction using graph neural networks (GNNs), which can directly learn from atomic connections and bonds, is also becoming increasingly popular [30].

- Data Cleaning and Redundancy Control: Raw data often contains noise, missing values, and redundancies. Techniques like clustering and regression are used for noise smoothing and filling missing values [31]. Crucially, materials datasets are often characterized by many highly similar structures, which can lead to overly optimistic performance estimates if not properly managed. Algorithms like MD-HIT have been developed to control this redundancy, ensuring that model performance is evaluated on truly distinct material families and better reflects its extrapolation capability [30].
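As an illustration of the feature engineering step, the sketch below turns a chemical formula into a few compositional descriptors. It is a minimal example under stated assumptions: the parser handles only simple formulas without parentheses, the function names are hypothetical, and the small lookup table holds standard Pauling electronegativities for just the elements used here. Production workflows would typically rely on a dedicated featurization library instead.

```python
import re

# Pauling electronegativities for the few elements used in this sketch.
ELECTRONEGATIVITY = {"Ca": 1.00, "Sr": 0.95, "Ba": 0.89, "Ti": 1.54, "O": 3.44}


def parse_formula(formula):
    """Parse a simple formula such as 'CaTiO3' into {'Ca': 1.0, 'Ti': 1.0, 'O': 3.0}.

    Parentheses, hydrates, and charges are not handled in this sketch.
    """
    counts = {}
    for symbol, amount in re.findall(r"([A-Z][a-z]?)(\d*\.?\d*)", formula):
        counts[symbol] = counts.get(symbol, 0.0) + (float(amount) if amount else 1.0)
    return counts


def composition_features(formula):
    """Compute fraction-weighted descriptors from elemental electronegativities."""
    counts = parse_formula(formula)
    total = sum(counts.values())
    fractions = {el: n / total for el, n in counts.items()}
    chi = [ELECTRONEGATIVITY[el] for el in fractions]
    return {
        "n_elements": len(fractions),
        "mean_electronegativity": sum(fractions[el] * ELECTRONEGATIVITY[el] for el in fractions),
        "electronegativity_range": max(chi) - min(chi),
    }


features = composition_features("CaTiO3")
print(features)
```

For CaTiO₃ the weighted mean is (1.00 + 1.54 + 3 × 3.44) / 5 = 2.572 and the range is 3.44 − 1.00 = 2.44. Descriptors of this kind form one row of the tabular input used by models such as random forests.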

Model Selection and Training

Selecting the right algorithm depends on the data type, size, and the specific prediction task. The table below summarizes commonly used algorithms and their applications in material property prediction.

Table 1: Machine Learning Algorithms for Material Property Prediction

| Algorithm | Best For | Key Advantages | Example Properties |
|---|---|---|---|
| Gaussian Process (GP) | Small datasets, uncertainty quantification | Provides inherent uncertainty estimates, high interpretability | Formation energy, band gap [32] |
| Graph Neural Networks (GNNs) | Structure-based property prediction | Directly learns from crystal structure, high accuracy | Formation energies, elastic moduli [30] |
| Support Vector Machines (SVM) | Classification, regression with clear margins | Effective in high-dimensional spaces | Bulk modulus, shear modulus [34] |
| Random Forests / Gradient Boosting | Tabular data with mixed features | Handles non-linearity, robust to outliers | Thermal conductivity, phase classification [34] |

The training process involves splitting the preprocessed dataset into training, validation, and test sets. It is critical to use redundancy-controlled splits or cluster-based cross-validation to avoid over-optimistic performance assessments [30]. The model is then trained on the training set, and its hyperparameters are tuned using the validation set. The final model is evaluated on the held-out test set, which contains materials distinct from those in the training set, to gauge its true predictive power for novel compounds.
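A cluster-based split of the kind described above can be sketched as follows. This is not the MD-HIT algorithm; it is a simplified stand-in that groups feature vectors with a greedy "leader" clustering and then assigns whole clusters to either the training or the test set. The function names, the Euclidean distance metric, and the threshold are all assumptions made for illustration.

```python
import math
import random


def leader_clusters(vectors, max_distance):
    """Greedy 'leader' clustering: each item joins the first cluster whose leader is close enough."""
    leaders, clusters = [], []
    for idx, v in enumerate(vectors):
        for c, leader in enumerate(leaders):
            if math.dist(v, leader) <= max_distance:
                clusters[c].append(idx)
                break
        else:
            # No existing leader is close enough, so this item starts a new cluster.
            leaders.append(v)
            clusters.append([idx])
    return clusters


def cluster_split(vectors, max_distance, test_fraction=0.2, seed=0):
    """Assign whole clusters to the test set until it holds roughly test_fraction of the data."""
    clusters = leader_clusters(vectors, max_distance)
    random.Random(seed).shuffle(clusters)
    target = test_fraction * len(vectors)
    train, test = [], []
    for members in clusters:
        (test if len(test) < target else train).extend(members)
    return train, test


# Toy dataset: five well-separated "material families" with four near-duplicates each.
rng = random.Random(1)
vectors, family = [], []
for k in range(5):
    for _ in range(4):
        vectors.append((10.0 * k + rng.uniform(-0.2, 0.2), rng.uniform(-0.2, 0.2)))
        family.append(k)

train_idx, test_idx = cluster_split(vectors, max_distance=1.0)
print("families in train:", sorted({family[i] for i in train_idx}))
print("families in test: ", sorted({family[i] for i in test_idx}))
```

In this toy the families are far apart, so no family ends up on both sides of the split. With real data, leader clustering only reduces near-duplicate leakage; members of different clusters can still lie closer together than the threshold, which is one reason dedicated redundancy-control tools exist.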

Explainable AI (XAI) and Model Interpretation

For surrogate models to be trusted and provide scientific insight, they must be interpretable. Explainable AI (XAI) techniques are essential for moving beyond "black box" predictions [11] [32].

- Global Explanations: Techniques like Partial Dependence Plots (PDP) and global sensitivity analysis reveal how a material property, on average, changes with a specific input feature (e.g., the effect of atomic radius on thermodynamic stability) [32].

- Local Explanations: Methods like SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations) explain individual predictions, highlighting which features were most influential for a specific material candidate [32].

This coupling of surrogate modeling with XAI creates a powerful tool for knowledge discovery, helping researchers uncover hidden structure-property relationships and guiding the formulation of new scientific hypotheses.
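The idea behind Shapley-value explanations can be shown with a brute-force calculation on a tiny model. This sketch does not use the SHAP library and is not how it computes attributions at scale; it enumerates every feature ordering, which is only practical for a handful of features. The `model` function is a hypothetical surrogate with an interaction term, and "absent" features are assumed to take a fixed baseline value.

```python
import itertools
import math


def model(x):
    """Hypothetical surrogate with an interaction term between features 0 and 2."""
    return 2.0 * x[0] + 3.0 * x[1] + x[0] * x[2]


def shapley_values(f, x, baseline):
    """Exact Shapley values: average each feature's marginal contribution over all orderings.

    Features that have not yet been 'switched on' are held at their baseline value.
    """
    n = len(x)
    phi = [0.0] * n
    for order in itertools.permutations(range(n)):
        current = list(baseline)
        previous = f(current)
        for i in order:
            current[i] = x[i]          # switch feature i from baseline to its actual value
            value = f(current)
            phi[i] += value - previous
            previous = value
    n_orders = math.factorial(n)
    return [p / n_orders for p in phi]


x = [1.0, 2.0, 3.0]
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(model, x, baseline)
print("attributions:", phi)
print("sum of attributions:", sum(phi), "| f(x) - f(baseline):", model(x) - model(baseline))
```

For this toy model the attributions are 3.5, 6.0, and 1.5. They sum to the difference between the prediction at `x` (11.0) and at the baseline (0.0), and the interaction term is shared equally between features 0 and 2.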

Experimental Protocols and Validation

Protocol: Benchmarking Surrogate Model Against DFT

Objective: To validate the accuracy and computational efficiency of an AI surrogate model for predicting the formation energy of crystalline solids by comparing its performance to standard Density Functional Theory (DFT) calculations.

Materials and Data:

- Dataset: A curated set of 5,000 crystalline structures from the Materials Project database [31].

- Software: Python with libraries like Scikit-learn, PyTorch, or TensorFlow for model development; and a DFT code such as VASP or Quantum ESPRESSO for reference calculations.

Methodology:

- Data Preprocessing and Splitting: Apply the MD-HIT algorithm to the dataset to ensure that no structure in the test set has a similarity above a 90% threshold to any structure in the training set. This prevents data leakage and provides a realistic evaluation of extrapolation performance [30].

- Feature Engineering: Convert the crystal structures into a suitable representation. For a GNN, this involves creating crystal graphs where nodes are atoms and edges represent bonds. For other models, compute features like Coulomb matrices or smooth overlap of atomic positions (SOAP) descriptors.

- Model Training: Train a surrogate model (e.g., a Crystal Graph Convolutional Neural Network) on the training set. Use the mean absolute error (MAE) between the model's predictions and the DFT-calculated formation energies as the loss function.

- Validation and Benchmarking: