AI and Robotics: Accelerating Automated Synthesis for Next-Generation Materials Discovery

Abstract

This article explores the transformative integration of artificial intelligence (AI) and robotics in materials discovery and drug development. It covers the foundational shift from manual, trial-and-error experimentation to autonomous, data-driven laboratories. The content details core methodologies, including active learning, multimodal AI, and robotic automation, highlighting their application in optimizing synthesis and predicting material properties. It addresses critical challenges such as experimental irreproducibility and data limitations, offering insights into troubleshooting and optimization strategies. Furthermore, the article examines the validation of AI-driven discoveries through real-world case studies and discusses the growing impact of these technologies on accelerating the development of novel therapeutics and advanced materials, providing a comprehensive overview for researchers and professionals in the field.

The New Paradigm: From Manual Labs to Self-Driving Discovery Platforms

Defining Autonomous Laboratories and AI-Driven Synthesis

Autonomous Laboratories (ALs), often termed "self-driving labs," represent a transformative operational paradigm in scientific research where advanced algorithms, typically based on artificial intelligence (AI), autonomously select which samples are synthesized and how they are characterized [1]. This process operates within a closed-loop feedback system designed to maximize knowledge gain with each experimental iteration, significantly accelerating the pace of discovery in fields such as materials science, chemistry, and drug development [1] [2].

In a fully realized autonomous laboratory, the core functions of sample generation, handling, and characterization are executed with high levels of automation, requiring minimal human intervention [1]. This automation empowers scientists to redirect their efforts from repetitive tasks toward more substantive intellectual endeavors, such as experimental design, complex problem-solving, and creative hypothesis generation [1] [2]. The technology emerges at a critical time, as modern research confronts multi-scale complexity challenges that traditional methods struggle to address effectively [3].

The Architecture of AI-Driven Synthesis

The integration of AI and robotics facilitates a complete re-engineering of the traditional research workflow into an automated, data-driven discovery engine.

Core Workflow and Closed-Loop Automation

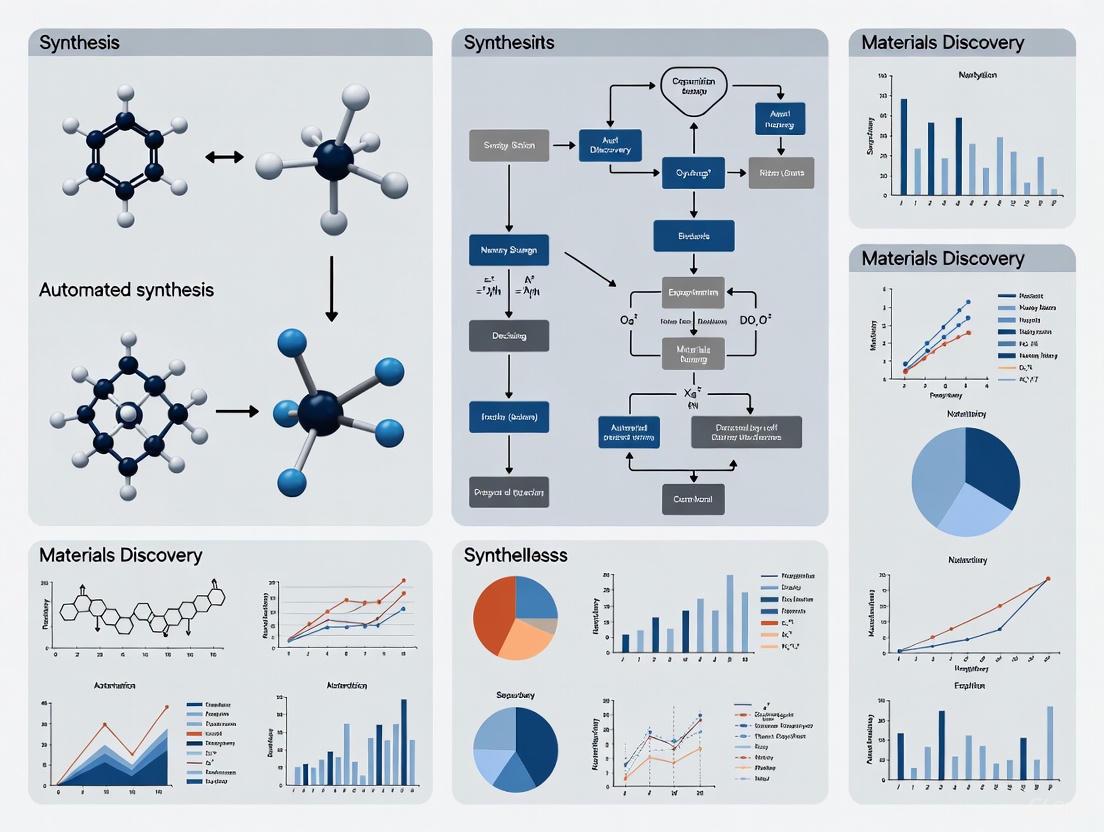

The following diagram illustrates the foundational closed-loop process that enables autonomous experimentation. This continuous cycle of planning, execution, and learning forms the backbone of a self-driving lab.

Figure 1: The autonomous R&D loop enables continuous discovery.

This workflow creates a self-optimizing system where the AI learns from experimental outcomes to propose increasingly optimal subsequent experiments [2]. For instance, the AI system Coscientist demonstrates this capability by planning and executing complex chemistry experiments, such as the optimization of palladium-catalyzed cross-couplings, entirely without human intervention [2]. The system translates a simple natural language prompt into a complete experimental process.

Technical Framework for Synthesis Prediction

Beneath the automated workflow lies a sophisticated technical framework for predicting viable synthesis pathways. Advances in Large Language Models (LLMs) and dedicated benchmarks are critical to this capability.

Figure 2: AI framework for end-to-end synthesis prediction.

Recent research has established benchmarks like AlchemyBench, which provides an end-to-end framework for evaluating LLMs applied to synthesis prediction [4]. This framework encompasses key tasks including raw materials prediction, equipment recommendation, synthesis procedure generation, and characterization outcome forecasting [4]. The development of large-scale, expert-verified datasets, such as the Open Materials Guide (OMG) with 17,000 synthesis recipes, is crucial for training and validating these predictive models [4]. Furthermore, the LLM-as-a-Judge framework demonstrates strong statistical agreement with expert assessments, enabling the scalable, automated evaluation of synthesis predictions without constant reliance on costly human experts [4].

Quantitative Analysis of Autonomous Laboratory Performance

The impact of automation on research efficiency and drug discovery timelines is significant, as shown in the following performance data compiled from industry reports and research findings.

Table 1: Performance Metrics of Autonomous Laboratory Systems

| Metric | Traditional Lab Performance | Autonomous Lab Performance | Source |
|---|---|---|---|
| Experiment Throughput | Limited by human workday | Can run >100 experiments simultaneously and continuously [2] | Industry Report [2] |
| Operation Schedule | ~40 hours/week (human-limited) | 24/7 operation without interruption [2] | Industry Report [2] |
| Drug Discovery Timeline | Multiple years | 30 days for target-to-hit phase (semi-autonomous) [2] | Research Study [2] |
| Development Cost Reduction | Baseline | Up to 25% reduction in pharmaceutical development [2] | McKinsey Analysis [2] |
| Time Savings per Task | 5-day work week (human) | Equivalent work completed in under 2 days (SDL) [2] | Industry Report [2] |
| Research Paper Cost | Thousands of dollars | Approximately $15 per paper (AI-generated, with errors) [2] | Sakana AI [2] |

Experimental Protocols in Autonomous Research

Protocol: AI-Driven Synthesis Recipe Extraction and Validation

The foundation of reliable AI-driven synthesis is high-quality, structured data. This protocol details the process of creating a verified dataset from scientific literature.

Table 2: Research Reagent Solutions for Synthesis Data Extraction

| Reagent/Tool | Function in Protocol | Technical Specification |
|---|---|---|
| Semantic Scholar API | Literature retrieval | Queries 400K+ articles using 60 domain-specific search terms [4] |
| PyMuPDF4LLM | PDF-to-structure conversion | Converts PDF articles to structured Markdown format [4] |
| GPT-4o | Multi-stage annotation | Categorizes articles and segments text into 5 key components [4] |
| Expert Validation Panel | Quality verification | 8 domain experts from 3 institutions performing manual review [4] |
| ICC Statistical Model | Inter-rater reliability | Two-way mixed-effects model quantifying expert agreement [4] |

Methodology:

- Data Collection: The pipeline begins with retrieving 28,685 open-access articles from the Semantic Scholar API using expert-recommended search terms (e.g., "solid state sintering process," "metal organic CVD") [4].

- Text Extraction and Structuring: PDF articles are converted to structured Markdown using PyMuPDF4LLM. A multi-stage annotation process driven by an LLM (GPT-4o) then parses the text [4].

- Component Segmentation: For articles containing synthesis protocols, the text is systematically segmented into five key components:

  - X: A summary of the target material, synthesis method, and application.

  - YM: Raw materials, including precise quantitative details.

  - YE: Equipment specifications.

  - YP: Step-by-step procedural instructions.

  - YC: Characterization methods and results [4].

- Quality Verification: A panel of domain experts manually reviews a representative sample of the extracted recipes. They evaluate based on Completeness (capturing all components), Correctness (accurate extraction of critical details like temperature and amounts), and Coherence (logical narrative without contradictions) using a five-point Likert scale. The Intraclass Correlation Coefficient (ICC) is computed to ensure inter-rater reliability [4].
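
The inter-rater reliability check in the last step can be illustrated with a short calculation. The sketch below computes a single-rater, consistency-type coefficient, commonly written ICC(3,1), from a table of Likert scores. The source states only that a two-way mixed-effects model is used, so the choice of the ICC(3,1) form, the function name `icc_3_1`, and the example scores are assumptions made for illustration.

```python
from statistics import mean

def icc_3_1(ratings):
    """ICC(3,1): two-way mixed effects, consistency, single rater.

    ratings: list of rows, one row per rated recipe, one column per expert.
    """
    n = len(ratings)       # number of rated recipes
    k = len(ratings[0])    # number of experts
    grand = mean(v for row in ratings for v in row)
    row_means = [mean(row) for row in ratings]
    col_means = [mean(row[j] for row in ratings) for j in range(k)]

    # Two-way ANOVA sums of squares (one rating per recipe/expert cell).
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((v - grand) ** 2 for row in ratings for v in row)
    ss_error = ss_total - ss_rows - ss_cols

    ms_rows = ss_rows / (n - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))
    return (ms_rows - ms_error) / (ms_rows + (k - 1) * ms_error)

# Hypothetical five-point Likert scores: 5 recipes rated by 3 experts.
scores = [
    [5, 4, 5],
    [3, 3, 4],
    [4, 4, 4],
    [2, 1, 2],
    [5, 5, 4],
]
print(f"ICC(3,1) = {icc_3_1(scores):.3f}")
```

Values near 1 indicate that experts rank the recipes consistently, while values near 0 indicate that most of the variance comes from disagreement between raters rather than from differences between recipes.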

Protocol: Closed-Loop Material Formulation Optimization

This protocol exemplifies the application of autonomous labs in a critical industrial context: optimizing drug formulations or consumer products.

Methodology:

- AI Experimental Planning: An AI experiment planner, such as the open-source Bayesian Back End (BayBE), recommends optimal experiments based on predefined objectives (e.g., reducing viscosity, optimizing a chemical reaction) [2].

- Robotic Synthesis and Testing: The AI planner directs robotic equipment to execute the suggested experiments. For example, in developing Dove Intensive Repair hair care products, robots prepared consistent hair fiber samples in seconds and washed 120 samples every 24 hours, ensuring treatment consistency and controlled variables [2].

- Data Integration and Model Retraining: Resulting data from synthesis and testing are automatically fed back into the machine learning model. The model is retrained on this new data, closing the loop and informing the next, more optimal round of experimental candidates [2]. This approach has been successfully used by Intrepid's Valiant lab to develop more effective options for oral drug delivery [2].
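
The three steps above can be sketched as a plan, execute, learn loop. The example below is a toy stand-in and does not use BayBE or its API: the surrogate is a simple kernel-weighted mean rather than a Bayesian model, `run_experiment` is a hypothetical simulated viscosity measurement that replaces the robot, and the single "additive fraction" parameter is invented for illustration.

```python
import math

def run_experiment(x):
    """Hypothetical stand-in for robotic synthesis and testing.

    Returns a simulated viscosity for additive fraction x (lower is better).
    """
    return (x - 0.62) ** 2 + 0.1

def predict(x, data, width=0.1):
    """Toy surrogate: kernel-weighted mean of the results observed so far."""
    weights = [math.exp(-((x - xi) / width) ** 2) for xi, _ in data]
    total = sum(weights)
    if total < 1e-12:  # far from every observation: fall back to the plain mean
        return sum(y for _, y in data) / len(data)
    return sum(w * y for w, (_, y) in zip(weights, data)) / total

def propose(candidates, data, explore=0.5):
    """Pick the untested candidate with the best prediction/novelty trade-off."""
    tested = {xi for xi, _ in data}
    untested = [c for c in candidates if c not in tested]

    def score(c):
        novelty = min(abs(c - xi) for xi, _ in data)
        return predict(c, data) - explore * novelty  # lower score is better

    return min(untested, key=score)

def closed_loop(n_rounds=10):
    candidates = [i / 50 for i in range(51)]             # fractions 0.00 to 1.00
    data = [(x, run_experiment(x)) for x in (0.0, 1.0)]  # two seed experiments
    for _ in range(n_rounds):
        x = propose(candidates, data)   # 1. plan the next experiment
        y = run_experiment(x)           # 2. execute it (simulated here)
        data.append((x, y))             # 3. learn: the surrogate reads `data` directly
    return data

history = closed_loop()
best_x, best_y = min(history, key=lambda pair: pair[1])
print(f"best additive fraction {best_x:.2f}, simulated viscosity {best_y:.3f}")
```

The `explore` weight controls how strongly the planner favors untested regions over regions that already look good. In a real deployment the surrogate and acquisition rule would come from the chosen planner, and `run_experiment` would dispatch work to the robotic equipment.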

Case Studies in Materials and Drug Discovery

Real-world implementations demonstrate the transformative potential of autonomous laboratories across diverse sectors.

Table 3: Autonomous Laboratory Implementation Case Studies

| Organization/Initiative | Field | Key Achievement | Technology Used |
|---|---|---|---|
| Carnegie Mellon University | Chemistry/Biology | First university autonomous lab; runs >100 experiments simultaneously [2] | Emerald Cloud Lab software [2] |
| Insilico Medicine/AC | Drug Discovery | Identified new treatment pathway for liver cancer (HCC) in 30 days [2] | PandaOmics, Chemistry42, AlphaFold [2] |
| Merck KGaA | Material Science | Accelerated selection of viscosity-reducing experiments [2] | Bayesian Back End (BayBE) [2] |
| Unilever | Consumer Goods | Shortened product testing from weeks to days for Dirt is Good's Wonder Wash [2] | Robotics at Materials Innovation Factory [2] |
| AI Scientist (Sakana AI) | AI Research | Automated generation of ML research papers at minimal cost [2] | Proprietary AI discovery process [2] |

Future Directions and Challenges

The trajectory of Autonomous Laboratories points toward increasingly intelligent and generalized systems, but several challenges must be overcome.

A primary challenge is data scarcity in specialized scientific domains, which limits the generalizability of AI models [4] [3]. Future progress hinges on creating large-scale, high-quality, and legally distributable datasets, such as the Open Materials Guide [4]. Furthermore, while the LLM-as-a-Judge framework shows promise for scalable evaluation, its alignment with expert judgment requires continuous refinement, particularly for complex or novel synthesis scenarios [4].

Future breakthroughs are anticipated from the development of interdisciplinary knowledge graphs, reinforcement learning-driven closed-loop systems, and interactive AI interfaces that can refine scientific theories collaboratively with human researchers [3]. A key evolution will be the shift of AI's role from a specialized tool to a "meta-technology" that redefines the very paradigm of scientific discovery, enabling the exploration of frontiers beyond the reach of traditional methods [3].

The Pressing Need for Acceleration in Materials and Drug Discovery

The processes of discovering new materials and drugs are traditionally time-consuming and resource-intensive, often spanning decades from initial concept to practical application. This extended timeline is increasingly untenable in the face of urgent global challenges, including the need for sustainable energy solutions, advanced electronics, and rapid responses to emerging diseases. The pressing need for acceleration in these fields has catalyzed a paradigm shift toward automated synthesis and AI-driven research methodologies that can dramatically compress innovation cycles.

This transformation is enabled by the convergence of robotic equipment, large-scale data analysis, and artificial intelligence. These technologies form the core of a new research infrastructure capable of autonomously hypothesizing, synthesizing, and testing new compounds. This technical guide examines the core principles, experimental protocols, and implementation frameworks underpinning this accelerated discovery paradigm, providing researchers with actionable methodologies for integrating automation into their scientific workflows.

The Case for Acceleration: Quantitative Insights

The traditional materials discovery pipeline faces significant bottlenecks. The following table quantifies the performance improvements achieved by an automated AI-driven platform (CRESt) compared to conventional methodologies, demonstrating the profound impact of acceleration technologies [5].

Table 1: Performance Metrics of AI-Driven vs. Conventional Discovery

| Metric | Traditional Discovery | AI-Driven Discovery (CRESt) | Improvement Factor |
|---|---|---|---|
| Catalyst Discovery Timeline | Multiple years | ~3 months | ~4x faster |
| Chemistry Exploration Scale | Dozens of chemistries | 900+ chemistries | ~10-100x greater |
| Experimental Throughput | Manual, sequential testing | 3,500+ automated tests | ~100-1000x higher |
| Catalyst Cost-Performance | Baseline (Pure Pd) | 9.3-fold improvement per dollar | 9.3x better value |
| Precious Metal Loading in Fuel Cells | 100% baseline | 25% (with superior performance) | 4x reduction |

The CRESt platform achieves these gains by integrating multimodal feedback—including data from scientific literature, chemical compositions, microstructural images, and human expert input—to guide a highly efficient exploration of the materials space [5]. This system moves beyond simplistic Bayesian optimization by creating a knowledge-informed search space, dramatically increasing the efficiency of active learning.

Core Methodologies and Experimental Protocols

The AI-Driven Experimentation Loop

Automated discovery relies on a continuous, iterative cycle of planning, synthesis, and analysis. The workflow below details the core operational protocol of an integrated AI-driven research platform.

Detailed Experimental Protocol for Automated Materials Discovery

The following protocol is adapted from the CRESt platform, which successfully discovered a record-breaking multielement fuel cell catalyst [5].

Phase 1: System Setup and Initialization

- Objective Definition: Conversationally define the research goal using natural language (e.g., "Discover a low-cost, high-activity catalyst for direct formate fuel cells").

- Precursor Selection: Specify up to 20 potential precursor molecules and substrate materials for the AI to incorporate into its recipe designs.

- Knowledge Base Integration: The system ingests and creates vector representations of relevant scientific papers, existing experimental data, and domain knowledge to build a contextual understanding of the problem space.

Phase 2: Autonomous Experimentation Cycle

- Recipe Design: The AI performs principal component analysis on the knowledge embedding space to define a reduced, high-potential search space. It then uses Bayesian optimization within this space to propose specific material compositions and synthesis parameters [5].

- Robotic Synthesis:

  - A liquid-handling robot precisely prepares precursor solutions according to the AI's recipe.

  - A carbothermal shock system or other automated synthesis equipment rapidly processes the samples to create the target material.

- Automated Characterization and Testing:

  - Structural Analysis: Automated scanning electron microscopy (SEM) and X-ray diffraction (XRD) collect microstructural and crystallographic data.

  - Performance Testing: An automated electrochemical workstation evaluates functional properties (e.g., catalytic activity, stability).

  - Data Logging: All experimental parameters and results are automatically recorded in a structured database.

- Model Update and Learning:

  - Newly acquired multimodal data (text, images, numerical results) are fed back into the AI models.

  - A large language model (LLM) processes these data alongside human feedback to refine the knowledge base and redefine the search space for the next iteration.
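
The search-space reduction in the Recipe Design step can be illustrated with a small principal component analysis. The sketch below finds the first principal axis of a handful of embedding vectors by power iteration and projects each candidate onto it, giving a one-dimensional coordinate that an optimizer could then search. The three-dimensional embeddings, the recipe names, and the reduction to a single component are assumptions for illustration; the source does not state how many components the platform retains.

```python
import math

def first_principal_axis(vectors, n_iter=100):
    """Return (mean, unit axis) of the direction of largest variance."""
    dim = len(vectors[0])
    mean = [sum(v[d] for v in vectors) / len(vectors) for d in range(dim)]
    centered = [[v[d] - mean[d] for d in range(dim)] for v in vectors]
    axis = [1.0 / (d + 1) for d in range(dim)]  # arbitrary starting direction
    for _ in range(n_iter):
        # Multiply by the (unnormalized) covariance: C a = sum_i (x_i . a) x_i
        scores = [sum(x[d] * axis[d] for d in range(dim)) for x in centered]
        new = [sum(s * x[d] for s, x in zip(scores, centered)) for d in range(dim)]
        norm = math.sqrt(sum(c * c for c in new))
        axis = [c / norm for c in new]
    return mean, axis

def project(vector, mean, axis):
    """Coordinate of a vector along the principal axis."""
    return sum((v - m) * a for v, m, a in zip(vector, mean, axis))

# Hypothetical knowledge embeddings for five candidate recipes.
embeddings = {
    "recipe_a": [0.9, 0.1, 0.3],
    "recipe_b": [0.8, 0.2, 0.4],
    "recipe_c": [0.1, 0.9, 0.5],
    "recipe_d": [0.2, 0.8, 0.4],
    "recipe_e": [0.5, 0.5, 0.6],
}
mean, axis = first_principal_axis(list(embeddings.values()))
reduced = {name: project(vec, mean, axis) for name, vec in embeddings.items()}
bounds = (min(reduced.values()), max(reduced.values()))
print("reduced coordinates:", {k: round(v, 3) for k, v in reduced.items()})
print("search interval along first component:", tuple(round(b, 3) for b in bounds))
```

The sign of the principal axis is arbitrary, so only relative positions along it are meaningful. A production system would typically keep several components and use a linear algebra library rather than hand-written power iteration.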

Phase 3: Validation and Debugging

- Computer Vision Monitoring: Cameras monitor experiments in real-time. Vision language models analyze the footage to detect issues (e.g., sample misplacement, deviant sample morphology) and suggest corrective actions [5].

- Human-in-the-Loop Review: Researchers review the system's observations, hypotheses, and proposed corrections via the natural language interface, providing final validation and overriding if necessary.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Implementing an automated discovery pipeline requires a suite of integrated hardware and software solutions. The following table details the key components of a modern, self-driving laboratory.

Table 2: Essential Toolkit for Automated Discovery Research

| Tool / Solution | Function | Specific Example / Vendor |
|---|---|---|
| Liquid-Handling Robot | Precise, high-throughput dispensing of precursor solutions for synthesis. | Eppendorf epMotion, Hamilton Microlab STAR |
| Automated Synthesis Reactor | Rapid, programmable synthesis of material samples under controlled conditions. | Carbothermal shock systems, automated hydrothermal reactors |
| Automated Electrochemical Workstation | High-throughput functional testing of material performance (e.g., catalytic activity). | BioLogic VMP-300, PalmSens4 with autosampler |
| Automated Electron Microscope | Unattended collection of microstructural and compositional data from multiple samples. | Thermo Scientific Autoscope SEM |
| Multimodal AI Platform | Integrates diverse data streams (text, images, numbers) to plan and learn from experiments. | CRESt-like platform, custom implementations [5] |
| Computer Vision System | Monitors experiments, detects operational anomalies, and ensures reproducibility. | Cameras coupled with vision language models (e.g., OpenAI CLIP, custom VLMs) [5] |

Visualization and Data Presentation Standards

Effective data communication is critical in high-throughput science. Adhering to visual accessibility standards ensures that complex information is perceivable by all researchers.

Color Contrast and Accessibility

All graphical elements, including charts, diagrams, and user interface components, must meet minimum color contrast ratios as defined by the Web Content Accessibility Guidelines (WCAG) [6] [7].

Table 3: WCAG Color Contrast Requirements for Data Visualization

| Content Type | Minimum Ratio (AA Rating) | Enhanced Ratio (AAA Rating) |
|---|---|---|
| Standard Body Text | 4.5 : 1 | 7 : 1 |
| Large-Scale Text (≥18pt or 14pt bold) | 3 : 1 | 4.5 : 1 |
| Graphical Objects & UI Components (data points, icons, graph lines) | 3 : 1 | Not defined |

These thresholds are crucial for researchers with low vision or color vision deficiencies, ensuring that insights are not lost due to poor visual design [6] [7]. Tools like the WebAIM Color Contrast Checker should be used to validate all color choices in data presentations and user interfaces [8].
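
For batch-checking a plotting palette, the contrast ratio can also be computed directly. The sketch below follows the WCAG definitions of relative luminance and contrast ratio and compares the result with the thresholds in the table above. The function names and the `THRESHOLDS` dictionary are illustrative choices, not part of any standard library.

```python
def _linear(channel):
    """Convert an 8-bit sRGB channel to its linear-light value."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

def relative_luminance(hex_color):
    """WCAG relative luminance of a color given as '#RRGGBB'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linear(r) + 0.7152 * _linear(g) + 0.0722 * _linear(b)

def contrast_ratio(color_a, color_b):
    """WCAG contrast ratio, from 1 (identical) to 21 (black on white)."""
    lighter, darker = sorted(
        (relative_luminance(color_a), relative_luminance(color_b)), reverse=True
    )
    return (lighter + 0.05) / (darker + 0.05)

# Minimum ratios from the table above; graphics have no AAA threshold.
THRESHOLDS = {
    ("text", "AA"): 4.5, ("text", "AAA"): 7.0,
    ("large_text", "AA"): 3.0, ("large_text", "AAA"): 4.5,
    ("graphics", "AA"): 3.0,
}

def meets_wcag(foreground, background, kind="text", level="AA"):
    return contrast_ratio(foreground, background) >= THRESHOLDS[(kind, level)]

# Example: a mid-gray series color on a white plot background.
ratio = contrast_ratio("#777777", "#FFFFFF")
print(f"{ratio:.2f}:1, body text AA: {meets_wcag('#777777', '#FFFFFF')}, "
      f"graph line AA: {meets_wcag('#777777', '#FFFFFF', kind='graphics')}")
```

The same gray can be acceptable for a graph line yet too faint for body text, which is why the content type has to be passed explicitly.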

The integration of AI, robotics, and multimodal data analysis is fundamentally reshaping the landscape of materials and drug discovery. The methodologies and protocols outlined in this guide provide a concrete framework for research institutions and industrial R&D departments to build and operate accelerated discovery pipelines. By implementing these automated systems, scientists can transcend the limitations of traditional trial-and-error approaches, systematically exploring vast chemical spaces with unprecedented speed and intelligence. This paradigm shift promises not only to accelerate the pace of innovation but also to unlock novel solutions to some of the world's most pressing technological and health-related challenges.

The discovery and development of novel materials are critical for advancing technologies in fields ranging from energy storage to pharmaceuticals. Traditional materials discovery is often slow and sequential, creating a significant bottleneck between theoretical prediction and practical application. This guide details the core components required to bridge the gap between high-throughput computational screening and experimental realization, forming a cohesive pipeline for accelerated materials discovery. By integrating artificial intelligence, robotics, and data science, researchers can transform this traditionally linear process into a dynamic, iterative cycle that dramatically reduces development timelines from years to months or even weeks.

The fundamental challenge in materials science lies in the vastness of chemical space. For organic materials alone, the number of possible molecules consisting of 30 or fewer light atoms reaches approximately 10^60 possibilities, creating a combinatorial explosion that defies traditional experimental approaches [9]. Computational methods can rapidly screen these possibilities, but their true value emerges only when seamlessly connected to experimental validation through automated workflows. This integration enables researchers to navigate complex multi-objective optimization problems where materials must simultaneously satisfy multiple property requirements for specific applications.

Core Workflow Components

Computational Screening and AI-Driven Design

Computational screening serves as the foundational stage in modern materials discovery pipelines, leveraging physics-based simulations and machine learning to identify promising candidate materials from vast chemical spaces before any laboratory work begins.

First-Principles Calculations and Machine Learning Force Fields

Density Functional Theory (DFT) and other ab initio methods provide the theoretical foundation for computational materials screening by enabling accurate prediction of material properties from quantum mechanical principles. These approaches allow researchers to calculate formation energies, electronic structures, phase stability, and other essential properties purely from computational models [10]. Machine-learning-based force fields have emerged that offer comparable accuracy to ab initio methods at a fraction of the computational cost, enabling large-scale simulations of complex systems including nanomaterials and solid-state materials [11]. For pharmaceutical and organic materials, computational programmes focus on exploring the energy landscape to find thermodynamically stable materials, then screening them for desired properties to identify viable candidates [9].
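
A common way to turn computed formation energies into a phase stability estimate is a convex hull construction: a phase is predicted stable when its energy lies on the lower hull of all known phases, and its "energy above hull" measures how far it sits from stability. The sketch below does this for a binary A-B system. The compositions and energies are hypothetical values in eV per atom, not computed data, and the function names are illustrative.

```python
def lower_hull(phases):
    """Lower convex hull of (composition, formation energy) points."""
    lowest = {}
    for x, e in phases:                    # keep the lowest energy per composition
        lowest[x] = min(e, lowest.get(x, e))
    hull = []
    for p in sorted(lowest.items()):
        while len(hull) >= 2:
            (x1, e1), (x2, e2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - e1) - (e2 - e1) * (p[0] - x1)
            if cross <= 0:                 # hull[-1] lies on or above the chord
                hull.pop()
            else:
                break
        hull.append(p)
    return hull

def energy_above_hull(x, energy, phases):
    """Distance of a candidate's energy from the hull at composition x."""
    hull = lower_hull(phases)
    for (x1, e1), (x2, e2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            hull_energy = e1 + (e2 - e1) * (x - x1) / (x2 - x1)
            return energy - hull_energy
    raise ValueError("composition lies outside the range of known phases")

# Hypothetical A-B system: x is the fraction of B, energies in eV/atom.
known = [(0.0, 0.0), (0.5, -0.40), (0.75, -0.10), (1.0, 0.0)]
candidate = (0.25, -0.15)
print(f"hull vertices: {lower_hull(known)}")
print(f"candidate energy above hull: {energy_above_hull(*candidate, known):.3f} eV/atom")
```

A value of zero means the candidate sits on the hull, and positive values indicate a thermodynamic driving force to decompose into the neighboring hull phases. Real screening workflows handle multi-component systems, where the same idea applies in higher dimensions.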

Generative Models and Inverse Design

Advanced AI techniques now enable inverse design approaches, where models generate novel molecular structures with targeted properties rather than simply screening existing databases. Generative models can propose new materials and synthesis routes by learning from known chemical spaces while exploring new regions [11]. These models have demonstrated the ability to rediscover experimentally known design rules while also proposing novel molecular features not previously considered in conservative experimental programmes [9]. The integration of explainable AI (XAI) techniques improves model transparency and physical interpretability, increasing researcher trust in these computational suggestions [11].

Experimental Automation and Robotic Platforms

The transition from digital predictions to physical materials requires sophisticated automated systems capable of executing complex synthesis and characterization protocols with minimal human intervention.

Autonomous Synthesis Robotics

The A-Lab, an autonomous laboratory for solid-state synthesis of inorganic powders, exemplifies the advanced robotic capabilities now available for materials synthesis [12]. This platform integrates robotic arms for sample handling, automated powder milling and mixing stations, and computer-controlled box furnaces for heating operations. The system handles multigram sample quantities suitable for subsequent device-level testing and technological scale-up. For organic materials and pharmaceutical compounds, liquid-handling robots enable high-throughput synthesis of molecular precursors, though challenges remain in keeping the supply of precursor feedstocks in pace with automated synthesis capabilities [9].

Integrated Characterization and Analysis

Automated characterization forms the critical feedback loop in autonomous discovery pipelines. The A-Lab incorporates automated X-ray diffraction (XRD) stations with robotic sample transfer systems that grind synthesized products into fine powders and perform structural analysis without human intervention [12]. Probabilistic machine learning models then analyze the resulting diffraction patterns to identify phases and quantify weight fractions of synthesis products. These models are trained on experimental structures from databases like the Inorganic Crystal Structure Database (ICSD) and supplemented with simulated patterns from computational sources like the Materials Project, with corrections applied to reduce density functional theory errors [12].

Table 1: Key Computational Methods in Materials Discovery

| Method Category | Specific Techniques | Primary Applications | Accuracy/Throughput |
|---|---|---|---|
| First-Principles Calculations | Density Functional Theory (DFT), Ab Initio Molecular Dynamics | Phase stability prediction, electronic structure calculation, reaction energy calculation | High accuracy, lower throughput |
| Machine Learning Force Fields | Neural Network Potentials, Gaussian Approximation Potentials | Large-scale molecular dynamics, nanomaterial simulation, complex system modeling | Near-ab initio accuracy, 10-1000× speedup |
| Generative Models | Recurrent Neural Networks (RNN), Variational Autoencoders, Generative Adversarial Networks | Inverse molecular design, novel precursor suggestion, multi-property optimization | High novelty, emerging reliability |
| Stability Prediction | Convex Hull Analysis, Phase Diagram Construction | Thermodynamic stability assessment, decomposition energy calculation | >70% success rate in experimental validation |

Data Infrastructure and Knowledge Integration

Effective bridging of computational and experimental domains requires sophisticated data management systems that capture, standardize, and leverage information across multiple discovery cycles.

Literature Mining and Historical Knowledge

Natural language processing models trained on vast synthesis databases extract heuristic knowledge from scientific literature, enabling algorithms to propose initial synthesis recipes based on analogy to known materials [12]. These models assess target "similarity" and recommend precursor selections and heating protocols derived from historical experimental data. This encoded domain knowledge mimics the approach of human researchers who base initial synthesis attempts on related materials while leveraging the scale of computational processing to identify non-obvious analogies.

Active Learning and Continuous Optimization

Active learning algorithms close the loop between computational prediction and experimental validation by using failed synthesis attempts to propose improved follow-up recipes. The ARROWS3 (Autonomous Reaction Route Optimization with Solid-State Synthesis) algorithm integrates ab initio computed reaction energies with observed synthesis outcomes to predict optimal solid-state reaction pathways [12]. This approach prioritizes reaction intermediates with large driving forces to form target materials while avoiding kinetic traps that lead to metastable byproducts. Through continuous experimentation, the system builds a growing database of observed pairwise reactions that progressively constrains the synthesis search space.

Experimental Protocols and Methodologies

Precursor Selection and Recipe Generation

The initial stage of experimental realization involves selecting appropriate starting materials and defining synthesis protocols that maximize the probability of obtaining target materials.

Literature-Inspired Recipe Generation

For each target compound, up to five initial synthesis recipes are generated by machine learning models that have learned to assess target similarity through natural-language processing of a large database of syntheses extracted from the literature [12]. A second ML model trained on heating data from the literature then proposes synthesis temperatures [12]. Overall, about 37% of the individual recipes tested produced their targets, and literature-inspired recipes were more likely to succeed when the reference materials were highly similar to the targets, which suggests that computational similarity metrics provide useful guidance for precursor selection.
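
The idea of proposing recipes by analogy can be sketched with a deliberately simple similarity measure. The example below ranks known syntheses by the Jaccard overlap between the element sets of their products and the target, then keeps at most five. This is a swapped-in stand-in, not the learned natural-language similarity model the A-Lab uses, and the small `known_syntheses` list is illustrative rather than drawn from any real database.

```python
import re

def elements(formula):
    """Set of element symbols in a simple formula such as 'LiFePO4'."""
    return set(re.findall(r"[A-Z][a-z]?", formula))

def jaccard(a, b):
    """Overlap between two sets, from 0 (disjoint) to 1 (identical)."""
    return len(a & b) / len(a | b)

def propose_recipes(target, known_syntheses, max_recipes=5):
    """Return the known syntheses whose products most resemble the target."""
    target_elements = elements(target)
    ranked = sorted(
        known_syntheses,
        key=lambda rec: jaccard(target_elements, elements(rec["product"])),
        reverse=True,
    )
    return ranked[:max_recipes]

# Illustrative reference entries (hypothetical, for demonstration only).
known_syntheses = [
    {"product": "LiFePO4", "precursors": ["Li2CO3", "FeC2O4", "NH4H2PO4"]},
    {"product": "NaCl", "precursors": ["Na2CO3", "NH4Cl"]},
    {"product": "Li3PO4", "precursors": ["Li2CO3", "NH4H2PO4"]},
]

for recipe in propose_recipes("LiCoPO4", known_syntheses, max_recipes=2):
    print(recipe["product"], "->", recipe["precursors"])
```

An element-overlap score ignores structure and oxidation state, so it only illustrates the ranking step. A learned similarity model would replace `jaccard` while the top-five selection stays the same.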

Thermodynamics-Guided Optimization

When literature-inspired recipes fail to produce >50% yield, active learning algorithms propose improved synthesis routes based on thermodynamic principles. The ARROWS3 framework operates on two key hypotheses: (1) solid-state reactions tend to occur between two phases at a time (pairwise), and (2) intermediate phases that leave only a small driving force to form the target material should be avoided [12]. This approach continuously builds a database of pairwise reactions observed in experiments (88 unique pairwise reactions were identified in initial operations), which allows the products of some recipes to be inferred without testing, potentially reducing the search space by up to 80%.
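
The way a pairwise-reaction database prunes the search space can be shown with a small lookup. The sketch below is not the ARROWS3 implementation: it records which intermediate each observed precursor pair forms, then sets aside any untested recipe containing a pair already known to yield an intermediate with little driving force left toward the target. The phase labels, the energies, and the 50 meV per atom cutoff used as the default are all hypothetical choices for illustration.

```python
from itertools import combinations

def known_intermediates(precursors, observed):
    """Intermediates expected from precursor pairs already seen in experiments."""
    found = []
    for pair in combinations(sorted(precursors), 2):
        product = observed.get(frozenset(pair))
        if product is not None:
            found.append(product)
    return found

def triage(recipes, observed, driving_force, min_force=50.0):
    """Split recipes into those worth testing and those inferred to stall.

    driving_force maps an intermediate to its remaining driving force
    toward the target, in meV per atom.
    """
    to_test, skipped = [], []
    for recipe in recipes:
        weak = [p for p in known_intermediates(recipe, observed)
                if driving_force[p] < min_force]
        (skipped if weak else to_test).append(recipe)
    return to_test, skipped

# Hypothetical data: precursors P1..P4, intermediates I1 and I2.
observed = {
    frozenset({"P1", "P2"}): "I1",   # seen in an earlier failed experiment
    frozenset({"P3", "P4"}): "I2",
}
driving_force = {"I1": 12.0, "I2": 140.0}
recipes = [("P1", "P2", "P3"), ("P1", "P3"), ("P3", "P4")]

to_test, skipped = triage(recipes, observed, driving_force)
print("test next:", to_test)
print("inferred without testing:", skipped)
```

Each new experiment adds entries to `observed`, so the set of recipes that can be ruled out without testing grows as the campaign proceeds.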

Synthesis Execution and Characterization

Standardized protocols for automated synthesis and characterization ensure consistent, reproducible results across discovery campaigns.

Solid-State Synthesis Protocol

- Precursor Preparation: Robotic systems dispense and mix precursor powders in stoichiometric ratios determined by synthesis recipes. The A-Lab uses three integrated stations for sample preparation, heating, and characterization, with robotic arms transferring samples and labware between them [12].

- Milling and Homogenization: Powder mixtures are transferred to alumina crucibles and subjected to mechanical milling to ensure good reactivity between precursors with diverse physical properties including density, flow behavior, particle size, hardness, and compressibility.

- Thermal Treatment: Robotic arms load crucibles into box furnaces for heating according to temperature profiles suggested by ML models. The system includes four box furnaces to enable parallel processing of multiple samples.

- Cooling and Recovery: After programmed heating cycles, samples are allowed to cool before robotic transfer to characterization stations.

Structural Characterization and Phase Analysis

- Sample Preparation: Automated systems grind synthesized products into fine powders using robotic mortar and pestle systems to ensure consistent particle size for diffraction analysis.

- XRD Measurement: Powder X-ray diffraction patterns are collected with automated instruments capable of high-throughput sample processing.

- Phase Identification: Probabilistic ML models analyze diffraction patterns to identify crystalline phases present in synthesis products. These models are trained on experimental structures from crystal structure databases.

- Quantification: Automated Rietveld refinement quantifies weight fractions of identified phases, with results reported to the laboratory management system to inform subsequent experimental iterations.
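
The hand-off from quantification to the next experimental iteration can be sketched as a simple decision rule. The example below normalizes refined weight fractions, reads off the target yield, and flags the sample for follow-up when the yield does not exceed 50%, the threshold quoted earlier for triggering active learning. The function names, the returned labels, and the phase names are assumptions for illustration, not the A-Lab's software interface.

```python
def target_yield(weight_fractions, target):
    """Fraction of the product, by weight, that is the target phase."""
    total = sum(weight_fractions.values())
    if total <= 0:
        raise ValueError("weight fractions must sum to a positive value")
    return weight_fractions.get(target, 0.0) / total

def next_action(weight_fractions, target, threshold=0.5):
    """Decide what the lab should do after phase quantification."""
    if target_yield(weight_fractions, target) > threshold:
        return "success"
    return "propose_follow_up_recipe"

# Hypothetical refinement output: phase name -> weight percent.
refinement = {"target_phase": 38.0, "impurity_1": 45.0, "impurity_2": 17.0}
print(f"yield = {target_yield(refinement, 'target_phase'):.0%}, "
      f"action = {next_action(refinement, 'target_phase')}")
```

Normalizing first lets the same function accept weight fractions reported either as percentages or as values that sum to one.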

Table 2: Experimental Techniques in Autonomous Materials Discovery

| Technique Category | Specific Methods | Key Measurements | Automation Compatibility |
|---|---|---|---|
| Synthesis Methods | Solid-State Reaction, Hydrothermal Synthesis, Solution Processing | Phase purity, yield, reaction efficiency | High for solid-state, medium for solution |
| Structural Characterization | X-Ray Diffraction (XRD), Pair Distribution Function (PDF) Analysis | Crystal structure, phase identification, weight fractions | High with robotic sample handling |
| Spectroscopic Analysis | Raman Spectroscopy, XPS, NMR | Chemical bonding, electronic structure, functional groups | Medium (evolving automation) |
| Microscopic Analysis | SEM, TEM, AFM | Morphology, particle size, elemental distribution | Low to medium (requires development) |

Failure Analysis and Iterative Optimization

Systematic analysis of failed syntheses provides crucial insights for improving both computational predictions and experimental protocols.

Kinetic Limitations: Sluggish reaction kinetics are the most common failure mode, particularly for reactions with low driving forces (<50 meV per atom) [12]. These limitations can be addressed through modified thermal profiles (extended heating times, higher temperatures) or alternative precursors that provide more favorable reaction pathways.

Precursor Compatibility: Precursor volatility and amorphization are additional failure modes that require specialized detection algorithms. Computational inaccuracies in predicted formation energies, though relatively rare, can lead the system to target genuinely unstable compounds [12]. Together, these failure modes point to where both experimental protocols and computational methods can improve.

Visualization of Integrated Workflows

Figure 1: Integrated computational-experimental workflow for autonomous materials discovery, showing the cyclic process from target identification through experimental validation and iterative optimization.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Research Reagent Solutions for Autonomous Materials Discovery

| Reagent/Material | Function | Application Examples | Considerations |

|---|---|---|---|

| Precursor Powders | Starting materials for solid-state synthesis | Metal oxides, phosphates, custom organic precursors | Purity, particle size, reactivity, commercial availability |

| Alumina Crucibles | Containment for high-temperature reactions | Solid-state synthesis up to 1600°C | Chemical inertness, thermal stability, reusability |

| Solvents for Extraction/Purification | Media for solution-based synthesis | Organic solvents, water, ionic liquids | Purity, boiling point, environmental impact |

| Structural Characterization Standards | Reference materials for instrument calibration | Silicon standard for XRD, NMR reference compounds | Certification, stability, compatibility |

| Machine-Learned Force Fields | Accelerated molecular dynamics simulations | Nanomaterial modeling, reaction pathway prediction | Transferability, accuracy across chemical space |

| Ab Initio Reference Data | Training data for machine learning models | Materials Project formation energies, ICSD structures | Data quality, computational methodology |

| Automated Synthesis Robots | High-throughput experimental execution | Liquid handling, powder dispensing, reactor control | Precision, compatibility with materials, maintenance |

The integration of computational screening with experimental realization represents a paradigm shift in materials discovery, transforming traditionally sequential processes into dynamic, iterative cycles. The core components outlined in this guide (advanced computational methods, robotic automation, active learning algorithms, and standardized data protocols) together create a powerful framework for accelerating the development of novel materials. As these technologies mature, we can anticipate further improvements in success rates, which already reach roughly 71% for autonomous synthesis of computationally predicted materials [12].

Future developments will likely focus on increasing the modularity of AI systems, enhancing human-AI collaboration interfaces, and integrating techno-economic analysis directly into the discovery pipeline [11]. The ongoing challenges of model generalizability, standardized data formats, and energy-efficient computation will drive research in explainable AI and hybrid approaches that combine physical knowledge with data-driven models [11]. By aligning computational innovation with practical experimental implementation, the materials science community is poised to make autonomous experimentation a powerful engine for scientific advancement and technological innovation.

The field of materials science and chemistry is undergoing a profound transformation driven by the emergence of autonomous laboratories. These platforms, often termed "self-driving labs," represent the full integration of artificial intelligence (AI), robotic experimentation, and high-performance computing into a continuous, closed-loop cycle [13]. By automating the entire research workflow—from initial hypothesis and experimental design to execution and data analysis—these systems accelerate the discovery and development of novel materials and molecules at an unprecedented pace, fundamentally changing the research paradigm from human-in-the-loop to "human on the loop" [14]. This whitepaper provides an in-depth technical examination of three exemplary platforms—A-Lab, CRESt, and Polybot—that are at the forefront of this revolution, highlighting their unique architectures, methodologies, and contributions to accelerating automated synthesis and materials discovery.

The following section details the core design, capabilities, and demonstrated achievements of the A-Lab, CRESt, and Polybot platforms. A comparative summary is provided in Table 1.

Table 1: Comparative Analysis of Autonomous Research Platforms

| Feature | A-Lab | CRESt (MIT) | Polybot |

|---|---|---|---|

| Primary Focus | Solid-state synthesis of inorganic powders [12] | Materials discovery, particularly for energy solutions [5] | Solution processing of electronic polymers [15] |

| Core AI Methodology | Natural language models for recipe generation; Active learning (ARROWS3) for optimization [12] | Multimodal models incorporating diverse data sources; Bayesian optimization [5] | Importance-guided multi-objective Bayesian optimization [15] |

| Robotic Capabilities | Powder handling, milling, furnace heating, X-ray diffraction (XRD) [12] | Liquid-handling robot, carbothermal shock synthesis, automated electrochemical workstation [5] | Robotic solution processing, blade coating, automated electrical/optical characterization [15] [16] |

| Key Achievement | Synthesized 41 of 58 novel, computationally predicted compounds in 17 days [12] | Discovered a multielement fuel cell catalyst with a 9.3-fold improvement in power density per dollar over palladium [5] | Achieved transparent conductive films with averaged conductivity exceeding 4500 S/cm [15] |

| Data Handling | XRD analysis via machine learning models; Uses historical literature data [12] | Uses literature, experimental data, and human feedback; Computer vision for monitoring [5] | Statistical analysis for repeatability; Automated data extraction and storage [15] |

A-Lab: Autonomous Solid-State Synthesis

The A-Lab, as presented in Nature, is an autonomous laboratory specifically engineered for the solid-state synthesis of inorganic powders. Its primary goal is to close the gap between the high rate at which materials are screened computationally and the slow pace at which they are realized experimentally [12].

Experimental Protocol:

- Target Identification: The process begins with targets identified from large-scale ab initio phase-stability data, such as the databases of the Materials Project and Google DeepMind. The A-Lab focused on air-stable compounds predicted to be on or near the thermodynamic convex hull [12].

- Recipe Generation: For each target, the system generates initial synthesis recipes using natural-language models trained on a massive database of historical syntheses mined from the scientific literature. This mimics a human researcher's approach of using analogy to known materials. A second ML model proposes a synthesis temperature [12].

- Robotic Execution: A robotic arm transfers the mixed precursor powders into an alumina crucible. The crucible is then loaded into one of four box furnaces for heating. After heating and cooling, the sample is ground into a fine powder and automatically characterized by X-ray Diffraction (XRD) [12].

- Phase Analysis & Active Learning: The XRD patterns are analyzed by machine learning models to determine the phases and weight fractions of the synthesis products. If the target yield is below 50%, the lab's active learning algorithm, ARROWS3, takes over. This algorithm uses observed reaction pathways and thermodynamic data from the Materials Project to propose new, optimized synthesis recipes with different precursors or conditions, and the cycle repeats [12].
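
The control flow of the protocol above can be sketched as follows. This is a minimal sketch under stated assumptions: `run_target`, `measure_yield`, and `propose_next` are hypothetical names, the yield numbers in the demo are invented for illustration, and `propose_next` is only a placeholder for an active-learning step such as ARROWS3, whose internals are not reproduced here. The only detail taken from the text is the 50% target-yield threshold.

```python
def run_target(initial_recipes, measure_yield, propose_next,
               max_experiments=10, threshold=0.5):
    """Try literature-inspired recipes first, then active-learning proposals.

    Returns (successful_recipe_or_None, history), where history is a list of
    (recipe, target_weight_fraction) pairs in the order they were run.
    """
    history = []
    pending = list(initial_recipes)
    while len(history) < max_experiments:
        if not pending:
            # Initial recipes exhausted: ask the optimizer for a new one.
            suggestion = propose_next(history)
            if suggestion is None:
                break
            pending.append(suggestion)
        recipe = pending.pop(0)
        target_fraction = measure_yield(recipe)  # synthesis + XRD + refinement
        history.append((recipe, target_fraction))
        if target_fraction >= threshold:
            return recipe, history
    return None, history


# Hypothetical demo with made-up yields for three recipes.
FAKE_YIELDS = {"recipe_A": 0.10, "recipe_B": 0.30, "recipe_C": 0.80}


def fake_proposer(history):
    tried = {recipe for recipe, _ in history}
    return "recipe_C" if "recipe_C" not in tried else None


winner, log = run_target(["recipe_A", "recipe_B"], FAKE_YIELDS.get, fake_proposer)
print(winner, log)
```

Capping the number of experiments per target keeps a single difficult compound from monopolizing furnace time; the cap of 10 here is arbitrary.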

CRESt: A Copilot for Experimental Scientists

Developed by MIT researchers, the Copilot for Real-world Experimental Scientists (CRESt) is a platform designed to incorporate diverse sources of information, much like a human scientist. It leverages large multimodal models to navigate complex experimental spaces [5].

Experimental Protocol:

- Multimodal Objective Setting: Researchers converse with CRESt in natural language to define a goal, such as finding a promising catalyst material. CRESt integrates information from previous literature, chemical compositions, microstructural images, and more to inform its strategy [5].

- High-Throughput Exploration: The robotic system, which includes a liquid-handling robot and a carbothermal shock system for rapid synthesis, executes the experiments. An automated electrochemical workstation performs high-throughput testing [5].

- Real-Time Observation & Optimization: Cameras and visual language models allow CRESt to monitor experiments, detect issues (like a pipette being out of place), and suggest corrections. The results of each experiment are fed back into the models, which use a form of Bayesian optimization to plan the subsequent experiments, creating a tight feedback loop [5]. In one demonstration, CRESt explored over 900 chemistries and conducted 3,500 electrochemical tests to discover a superior fuel cell catalyst [5].

Polybot: Autonomous Discovery for Electronic Polymers

Polybot is an AI-integrated robotic platform designed to address the formidable challenge of efficiently processing electronic polymer solutions into thin films with specific properties. Its architecture is modular, facilitating both synthesis and characterization [15] [16].

Experimental Protocol:

- Parameter Space Definition: The experiment begins by defining a high-dimensional processing space. In a study on PEDOT:PSS thin films, this included seven parameters such as additive types, blade-coating speeds, and post-processing temperatures [15].

- Automated Workflow Execution: The robotic platform automatically handles solution formulation, thin-film coating on a substrate (via a blade-coating station), and post-processing (e.g., annealing). The entire loop—formulation, processing, and conductivity measurement—takes approximately 15 minutes per sample [15].

- Quality-Centric Characterization: The system uses an automated probe station for electrical characterization, taking multiple current-voltage (IV) curves across different sample regions. A key feature is its emphasis on data repeatability: it performs multiple trials per sample and uses statistical tests (Shapiro-Wilk and t-test) to filter out invalid data, ensuring only high-quality data is used for learning [15].

- Importance-Guided Optimization: Polybot uses a tailored "importance-guided Bayesian optimization" algorithm to navigate the complex parameter space. This algorithm efficiently balances the exploration of new regions with the exploitation of known high-performing areas to achieve multiple objectives, such as maximizing conductivity while minimizing coating defects [15].
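
To make the multi-objective aspect concrete, the sketch below extracts the Pareto-optimal samples when conductivity is maximized and a defect score is minimized. It is not Polybot's importance-guided algorithm; it only illustrates the trade-off that any such optimizer has to navigate. The sample names and values are hypothetical.

```python
def pareto_front(samples):
    """Return samples not dominated by any other sample.

    Each sample is (name, conductivity, defect_score); higher conductivity
    and lower defect_score are better.
    """
    front = []
    for s in samples:
        dominated = any(
            o[1] >= s[1] and o[2] <= s[2] and (o[1] > s[1] or o[2] < s[2])
            for o in samples
        )
        if not dominated:
            front.append(s)
    return front


# Hypothetical films: (name, conductivity in S/cm, defect score).
films = [
    ("film_a", 1000, 5),
    ("film_b", 3000, 2),
    ("film_c", 4500, 4),
    ("film_d", 2500, 3),
]
print(pareto_front(films))
```

Here `film_b` and `film_c` survive: neither beats the other on both objectives, so choosing between them requires a stated preference between conductivity and film quality.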

The Scientist's Toolkit: Essential Research Reagents and Materials

The successful operation of these platforms relies on a suite of specialized reagents, materials, and hardware. The table below details key components referenced in the experimental campaigns of A-Lab, CRESt, and Polybot.

Table 2: Key Research Reagents and Materials in Autonomous Experimentation

| Item | Function | Exemplary Use Case |

|---|---|---|

| PEDOT:PSS | A commercially available conductive polymer dispersion used to create transparent conductive films. | Used as the exemplary material in Polybot's autonomous processing campaign [15]. |

| Formate Salt | A fuel source for a type of high-density fuel cell. | CRESt discovered a catalyst that efficiently uses formate salt to produce electricity [5]. |

| Inorganic Precursor Powders | Powdered elements or compounds that serve as starting materials for solid-state reactions. | A-Lab handled and mixed various precursors to synthesize novel inorganic compounds [12]. |

| Palladium / Platinum | Precious metals that serve as benchmarks or components in catalyst materials. | CRESt's discovered catalyst reduced the need for expensive palladium [5]. |

| Solvent Additives (e.g., DMSO, EG) | Chemical additives mixed into polymer solutions to improve their electrical conductivity and film quality. | Polybot's search space included varying additive types and ratios to optimize PEDOT:PSS film performance [15]. |

| Catalyst Nanoparticles | Metal nanoparticles (e.g., Fe, Co) used to catalyze the growth of carbon nanostructures. | Discussed in the context of autonomous CVD systems for CNT synthesis, a related application [14]. |

Visualizing the Autonomous Workflow

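The power of platforms like A-Lab, CRESt, and Polybot lies in their implementation of a closed-loop, iterative workflow. In generalized form, the AI planner proposes an experiment, the robotic hardware executes it, automated instruments characterize the product, and the analyzed results feed back into the planner for the next iteration.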

A-Lab, CRESt, and Polybot exemplify the current state of the art in autonomous materials discovery. Their technical implementations differ, targeting solid-state synthesis, energy materials, and solution-processed electronic polymers, respectively, but they share a common core architecture that integrates artificial intelligence, robotics, and data science into a closed-loop system. Their demonstrated successes in discovering and optimizing new materials, often far more efficiently than traditional approaches, provide a compelling proof of concept for the future of scientific research. As these platforms evolve, addressing challenges such as data scarcity, model generalizability, and hardware interoperability will be key to unlocking their full potential and democratizing their impact across chemistry, materials science, and drug development [13].

Inside the Engine: AI Methodologies and Real-World Applications

Harnessing Active Learning and Bayesian Optimization for Experiment Planning

The pursuit of novel materials and molecules is fundamental to technological advancement, yet traditional research and development (R&D) methods often involve time-consuming and costly trial-and-error processes. The convergence of large-scale experimentation, automation, and artificial intelligence is transforming this landscape. This whitepaper details how the strategic integration of Active Learning (AL) and Bayesian Optimization (BO) creates a powerful, efficient framework for experiment planning, accelerating discovery in automated synthesis and materials science while significantly reducing resource expenditure [17].

Active Learning, a subfield of machine learning dedicated to optimal experiment design, allows computational models to identify the most informative subsequent experiments [18]. When paired with Bayesian Optimization—a probabilistic strategy for navigating complex search spaces—these systems can autonomously guide research campaigns. This approach is particularly potent in the "low-to-no-data regime" common in industrial R&D, where it enables "make-test-learn" cycles that are both smarter and faster [19]. By implementing closed-loop systems, where AI plans experiments and robotic platforms execute them, researchers can achieve orders-of-magnitude acceleration in discovering new functional materials, such as high-performance catalysts and energy storage materials [5] [18].

Theoretical Foundations

Core Principles of Bayesian Optimization

Bayesian Optimization is a sequential design strategy for optimizing black-box functions that are expensive to evaluate. It is exceptionally suited for experimental planning where the relationship between input parameters (e.g., chemical composition, processing temperature) and the output objective (e.g., catalytic activity, battery capacity) is unknown, complex, and costly to measure.

The BO framework consists of two primary components [19]:

- A probabilistic surrogate model is used to approximate the unknown objective function, ( f(\mathbf{x}) ). The most common choice is a Gaussian Process (GP), which provides a non-parametric, Bayesian approach to modeling functions. A GP is fully specified by a mean function, ( \mu(\mathbf{x}) ), and a covariance kernel, ( k(\mathbf{x}, \mathbf{x'}) ), which encodes prior assumptions about the function's behavior (e.g., smoothness, periodicity).

- An acquisition function, ( \alpha(\mathbf{x}) ), guides the selection of the next experiment by quantifying the utility of evaluating a candidate point ( \mathbf{x} ). It uses the surrogate model's prediction (mean) and associated uncertainty (variance) to balance exploration (probing regions of high uncertainty) and exploitation (probing regions with high predicted performance).
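
For reference, the two components can be written out explicitly. The expressions below are the standard Gaussian Process posterior under two assumptions not stated in the text: a zero prior mean and Gaussian observation noise with variance ( \sigma_\varepsilon^2 ). Here ( K ) is the kernel matrix over the observed inputs, ( \mathbf{k}_* ) is the vector of kernel values between the observed inputs and the candidate ( \mathbf{x} ), and ( \mathbf{y} ) collects the observed outputs. The upper confidence bound (UCB) is shown as one common acquisition function; the source does not specify which acquisition function any given platform uses.

```latex
\hat{\mu}(\mathbf{x}) = \mathbf{k}_*^{\top} \left( K + \sigma_\varepsilon^2 I \right)^{-1} \mathbf{y}

\hat{\sigma}^2(\mathbf{x}) = k(\mathbf{x}, \mathbf{x}) - \mathbf{k}_*^{\top} \left( K + \sigma_\varepsilon^2 I \right)^{-1} \mathbf{k}_*

\alpha_{\text{UCB}}(\mathbf{x}) = \hat{\mu}(\mathbf{x}) + \beta \, \hat{\sigma}(\mathbf{x})
```

The weight ( \beta \ge 0 ) sets the balance: larger values favor exploration of uncertain regions, smaller values favor exploitation of the current best prediction.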

The standard BO loop iterates as follows [19]:

- Update the surrogate model using all available data ( D ).

- Maximize the acquisition function to identify the most promising next experiment, ( \mathbf{x}_{\text{next}} = \text{argmax}_{\mathbf{x}} \alpha(\mathbf{x}) ).

- Evaluate the true objective function ( f ) at ( \mathbf{x}_{\text{next}} ) (i.e., run the experiment).

- Augment the dataset ( D ) with the new result and repeat.
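
The four steps above can be sketched in dependency-free Python. This is a toy under stated assumptions, not any platform's implementation: a one-dimensional input, a zero-mean Gaussian Process with a squared-exponential kernel of fixed length scale, a UCB acquisition function maximized over a grid, and `run_experiment` as a hypothetical stand-in for a real measurement.

```python
import math


def rbf(a, b, length=0.2):
    """Squared-exponential kernel on scalars (prior variance 1)."""
    return math.exp(-0.5 * ((a - b) / length) ** 2)


def cholesky(A):
    """Lower-triangular L with L L^T = A, for symmetric positive definite A."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                L[i][j] = math.sqrt(A[i][i] - s)
            else:
                L[i][j] = (A[i][j] - s) / L[j][j]
    return L


def forward_sub(L, b):
    """Solve L y = b."""
    y = []
    for i in range(len(b)):
        y.append((b[i] - sum(L[i][k] * y[k] for k in range(i))) / L[i][i])
    return y


def backward_sub(L, y):
    """Solve L^T x = y."""
    n = len(y)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x


def fit_gp(xs, ys, jitter=1e-6):
    """Factor the kernel matrix once and precompute K^{-1} y."""
    K = [[rbf(a, b) + (jitter if i == j else 0.0) for j, b in enumerate(xs)]
         for i, a in enumerate(xs)]
    L = cholesky(K)
    return L, backward_sub(L, forward_sub(L, ys))


def predict(xs, L, alpha, x):
    """Posterior mean and variance at a single candidate x."""
    k_star = [rbf(a, x) for a in xs]
    mean = sum(k * a for k, a in zip(k_star, alpha))
    v = forward_sub(L, k_star)
    return mean, max(1.0 - sum(vi * vi for vi in v), 0.0)


def run_experiment(x):
    """Hypothetical objective standing in for a measurement; peak at x = 0.7."""
    return -(x - 0.7) ** 2


def bayes_opt(n_iter=10, beta=2.0):
    grid = [i / 100 for i in range(101)]
    xs = [0.0, 1.0]                                   # two seed experiments
    ys = [run_experiment(x) for x in xs]
    for _ in range(n_iter):
        L, alpha = fit_gp(xs, ys)                     # 1. update surrogate

        def ucb(x):
            mean, var = predict(xs, L, alpha, x)
            return mean + beta * math.sqrt(var)

        x_next = max((x for x in grid if x not in xs), key=ucb)  # 2. maximize
        xs.append(x_next)
        ys.append(run_experiment(x_next))             # 3. evaluate, 4. augment
    best = max(range(len(xs)), key=ys.__getitem__)
    return xs[best], ys[best], xs


best_x, best_y, visited = bayes_opt()
print(best_x, best_y)
```

In practice one would use a maintained library rather than hand-rolled linear algebra, and would fit kernel hyperparameters to the data instead of fixing them.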

The Role of Active Learning

While BO is powerful for optimization, Active Learning provides a broader framework for intelligently selecting data points to achieve various goals, such as global exploration, model improvement, or, as in BO, optimization. In the context of experiment planning, AL prioritizes experiments that are expected to provide the maximum information gain. This is crucial when each experiment consumes significant time, money, or resources. By focusing on the most informative experiments, AL minimizes the total number of trials required to achieve a research objective, whether that is mapping a phase diagram or finding a material with a target property [17].
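
When the goal is exploration rather than optimization, the selection rule changes accordingly. The sketch below uses distance to the nearest measured point as a crude proxy for model uncertainty, a deliberate simplification of information-gain criteria. The function name and the one-dimensional composition axis are assumptions for illustration.

```python
def next_experiment(measured, candidates):
    """Pick the candidate farthest from every composition measured so far."""
    def nearest_distance(c):
        return min(abs(c - m) for m in measured)
    return max(candidates, key=nearest_distance)


# Hypothetical composition fractions along a binary phase diagram.
measured = [0.0, 1.0]
first = next_experiment(measured, [0.1, 0.5, 0.9])
measured.append(first)
second = next_experiment(measured, [0.1, 0.25, 0.9])
print(first, second)
```

A model-based active learner would replace `nearest_distance` with a predictive variance or expected information gain, but the loop structure stays the same.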

Implementation and Workflows

Implementing a closed-loop system for materials discovery involves integrating computational intelligence with physical automation. The following workflow and diagram illustrate this process.

Diagram 1: Closed-loop autonomous discovery workflow.

Detailed Methodologies

The workflow in Diagram 1 is realized through specific methodologies, as demonstrated by leading research platforms:

- The CRESt Platform (MIT): This system uses a multimodal knowledge base that incorporates scientific literature, chemical compositions, microstructural images, and experimental results. Its BO implementation is augmented by creating a "knowledge embedding space" from prior literature before experiments begin. Principal component analysis reduces this space, and BO operates within this reduced, knowledge-informed region. After each experiment, newly acquired data and human feedback are fed into a large language model to augment the knowledge base and redefine the search space, significantly boosting AL efficiency [5].

- The CAMEO Algorithm: CAMEO uniquely combines the objectives of phase mapping and property optimization. It uses a materials-specific active-learning campaign governed by the function ( \mathbf{x}^* = \text{argmax}_{\mathbf{x}} \left[ g(F(\mathbf{x}), P(\mathbf{x})) \right] ), where ( F(\mathbf{x}) ) is the functional property and ( P(\mathbf{x}) ) is the knowledge of the phase map. This allows the algorithm to exploit the known dependence of materials properties on crystal structure and phase boundaries, leading to a more efficient search than standard BO [18] [20].

- The BayBE Framework: Designed for industrial applications, BayBE emphasizes practical features like chemical encodings for categorical variables (e.g., representing solvents in a semantically meaningful way rather than using one-hot encoding), transfer learning to leverage data from similar past experiments, and multi-target optimization. These features address common real-world challenges and can reduce the number of required experiments by at least 50% compared to default implementations [19].
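
The contrast between one-hot and chemically meaningful encodings can be illustrated without any particular library. The sketch below is not BayBE's API; the descriptor choice (boiling point and relative permittivity), the approximate textbook values, and all function names are assumptions for illustration.

```python
import math

# Approximate textbook values: (boiling point in deg C, relative permittivity).
SOLVENT_DESCRIPTORS = {
    "water": (100.0, 80.1),
    "methanol": (64.7, 32.7),
    "ethanol": (78.4, 24.5),
    "DMSO": (189.0, 46.7),
}
NAMES = sorted(SOLVENT_DESCRIPTORS)


def one_hot(name):
    """Categorical encoding: every solvent is equally far from every other."""
    return [1.0 if n == name else 0.0 for n in NAMES]


def descriptor_encoding(name):
    """Min-max scale each descriptor to [0, 1] across the solvent set."""
    columns = list(zip(*SOLVENT_DESCRIPTORS.values()))
    return [
        (value - min(col)) / (max(col) - min(col))
        for value, col in zip(SOLVENT_DESCRIPTORS[name], columns)
    ]


def distance(a, b):
    """Euclidean distance between two encoded vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


for encode in (one_hot, descriptor_encoding):
    d_alcohols = distance(encode("methanol"), encode("ethanol"))
    d_water = distance(encode("water"), encode("ethanol"))
    print(encode.__name__, round(d_alcohols, 3), round(d_water, 3))
```

Under one-hot encoding the two distances are identical, so a surrogate model cannot transfer what it learns about methanol to ethanol. In descriptor space the two alcohols sit close together, which is the property that lets a kernel-based model generalize across similar solvents.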

Performance and Quantitative Outcomes

The effectiveness of AL- and BO-driven experiment planning is demonstrated by concrete outcomes across multiple domains. The following table summarizes key performance metrics from documented case studies.

Table 1: Quantitative Performance of AL/BO in Experimental Campaigns

| Platform / Study | Field / Application | Key Achievement | Experimental Efficiency |

|---|---|---|---|

| CRESt (MIT) [5] | Materials Science: Fuel Cell Catalysts | Discovered an 8-element catalyst with a 9.3-fold improvement in power density per dollar over pure palladium. | Explored 900+ chemistries and conducted 3,500 electrochemical tests over 3 months. |

| CAMEO [18] [20] | Materials Science: Phase-Change Memory | Discovered a novel epitaxial nanocomposite with optical contrast up to 3x larger than the well-known Ge₂Sb₂Te₅. | Achieved a 10-fold reduction in the number of experiments required. |

| BayBE Framework [19] | Chemical Reactions & Formulations | Optimized reaction conditions and formulations in the low-data regime. | Reduced the average number of experiments, costs, and time by ≥50%. |

| Industrial BO Adoption [21] | Drug Development: Yeast Optimization | Applied BO for continuous, closed-loop optimization of growth parameters (e.g., N-C ratio) using automated bioreactors. | Enables 24/7 experiment suggestion and execution, drastically accelerating bioprocess development. |

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful implementation of these strategies requires a combination of software and hardware. The table below details key components of an automated discovery lab.

Table 2: Key Research Reagent Solutions for Automated Discovery

| Tool / Solution | Type | Function / Description | Example Platforms / Libraries |

|---|---|---|---|

| Bayesian Back End (BayBE) [19] | Software Library | An open-source Python framework for BO in industrial contexts. Features chemical encodings, transfer learning, and multi-target optimization. | BayBE |

| CRESt [5] | Integrated AI Platform | A "Copilot for Real-world Experimental Scientists" that uses multimodal models and robotic equipment for closed-loop materials discovery. | CRESt |

| Liquid-Handling Robot [5] | Hardware | Automates the precise dispensing of liquid precursors for high-throughput synthesis of material libraries. | Custom/integrated systems |

| Automated Electrochemical Workstation [5] | Hardware | Performs high-throughput testing of functional properties, such as the performance of fuel cell catalysts. | Custom/integrated systems |

| Automated Characterization [5] [18] | Hardware | Provides rapid, automated structural and chemical analysis of synthesized samples. | Scanning Electron Microscopy (SEM), X-ray Diffraction (XRD) at synchrotron beamlines |

| Summit [21] | Software Library | A Python package designed to make it easy to apply BO to scientific problems across discovery, process optimization, and system tuning. | Summit |

The integration of Active Learning and Bayesian Optimization represents a paradigm shift in experimental science. Moving beyond traditional, intuition-driven approaches, this methodology enables a targeted, data-efficient, and accelerated path to discovery. As these tools become more accessible through frameworks like BayBE and Summit, and as integrated platforms like CRESt and CAMEO demonstrate groundbreaking successes, their adoption will become imperative for industrial and academic researchers alike. By harnessing these technologies, scientists can navigate the exponentially vast design spaces of modern materials and drug development with unprecedented speed and precision, ushering in a new era of automated discovery.

The discovery and synthesis of new materials have traditionally been slow, artisanal processes, often plagued by low success rates and lengthy timelines between discovery and practical application. The field now stands at a transformative juncture, where artificial intelligence is poised to accelerate discovery from artisanal to industrial scale [22]. Central to this transformation is multimodal AI, which integrates diverse data types—from scientific literature and experimental results to human intuition and robotic feedback—into a cohesive discovery framework. Unlike traditional AI models that operate on single data streams, multimodal AI systems emulate the collaborative, holistic approach of human scientists, considering experimental results, broader scientific literature, imaging, structural analysis, and colleague input [5]. This technical guide explores the core architectures, methodologies, and implementations of multimodal AI within automated synthesis and materials discovery research, providing researchers and drug development professionals with the foundational knowledge to leverage these systems in their own work.

Core Architecture of Multimodal AI Systems

At its essence, multimodal AI for scientific discovery combines multiple data modalities to form a more complete understanding of materials and their potential applications. These systems leverage cross-modal representation learning to create shared representations across different data types, allowing the AI to map relationships between seemingly disparate information sources [23].

Key Components and Data Flow

The following diagram illustrates the core architecture and data flow of a typical multimodal AI system for materials discovery:

Core Enabling Technologies

Multimodal AI systems rely on several interconnected technologies to process and interpret diverse data types [23]:

- Natural Language Processing (NLP): Enables the system to parse and understand scientific literature, patents, and experimental notes, extracting relevant chemical relationships and synthesis parameters.

- Computer Vision: Analyzes microstructural images, spectroscopy data, and X-ray diffraction patterns to characterize material properties and identify structural features.

- Machine Learning & Deep Learning: Develops sophisticated algorithms that fuse data from multiple sources to support specific discovery tasks.

- Sensor Fusion Techniques: Integrates data from various laboratory sensors and instruments into a unified environmental context.

Implementation in Automated Materials Discovery

The CRESt Platform: A Case Study in Fuel Cell Catalyst Discovery

The Copilot for Real-world Experimental Scientists (CRESt) platform developed by MIT researchers exemplifies the practical implementation of multimodal AI for materials discovery [5]. This system was deployed to discover advanced electrode materials for direct formate fuel cells, achieving a 9.3-fold improvement in power density per dollar over pure palladium through the exploration of more than 900 chemistries and 3,500 electrochemical tests over three months.

Core Experimental Methodology

The CRESt platform operates through an integrated workflow that combines computational planning with robotic execution:

Key Research Reagent Solutions

Table 1: Essential research reagents and equipment for multimodal AI-driven materials discovery

| Item | Function | Example Implementation |

|---|---|---|

| Liquid-Handling Robot | Precise dispensing of precursor chemicals for reproducible synthesis | CRESt system for exploring 900+ chemistries [5] |

| Carbothermal Shock System | Rapid synthesis of materials through extreme temperature jumps | CRESt's high-throughput materials synthesis [5] |

| Automated Electrochemical Workstation | High-throughput testing of material performance under various conditions | CRESt's 3,500 electrochemical tests [5] |

| Automated Electron Microscopy | Microstructural characterization and image analysis without human intervention | CRESt's automated SEM analysis [5] |

| Powder X-ray Diffraction (PXRD) | Crystal structure determination immediately after synthesis | U of T's AI tool for MOF characterization [24] |

| Precursor Chemical Library | Diverse starting materials for exploring combinatorial chemistry spaces | CRESt's use of up to 20 precursor molecules [5] |

Quantitative Performance of Multimodal AI Systems

The implementation of multimodal AI systems has demonstrated significant improvements in discovery efficiency and success rates across multiple domains.

Table 2: Performance metrics of multimodal AI systems in scientific discovery

| System / Domain | Key Performance Metrics | Comparative Advantage |

|---|---|---|

| CRESt Platform (Materials Discovery) | 9.3x improvement in power density/$, 3,500 tests in 3 months, 900+ chemistries explored [5] | Outperforms traditional Bayesian optimization, which "often gets lost" in high-dimensional spaces [5] |

| MADRIGAL (Drug Combinations) | Predicts effects across 95,342 clinical outcomes and 21,842 compounds; handles missing multimodal data [25] | Outperforms single-modality methods in predicting adverse drug interactions [25] |

| AI in Drug Discovery (Pharmaceuticals) | Market projected to grow from $1.8B (2023) to $13.1B (2034) at 18.8% CAGR; >50% of new drugs to involve AI by 2030 [26] | Identified novel liver cancer drug candidate in 30 days vs. traditional timelines [26] |

| U of T MOF AI Tool (Metal-Organic Frameworks) | Predicts optimal applications for newly synthesized MOFs using only precursor and PXRD data [24] | Reduces 7-year typical application discovery lag through "time-travel" validation [24] |

Technical Framework for Experimental Design

Multimodal Data Integration Methodology

Effective multimodal AI systems employ sophisticated techniques for integrating diverse data types:

Data Integration and Feature Extraction: The system merges and harmonizes data from distinct sources or modalities, combining text, images, audio, and numerical data into unified representations [23]. For materials science applications, this involves processing precursor chemical information, PXRD patterns, microscopy images, and performance metrics into aligned feature spaces [24].

Cross-Modal Representation Learning: The AI learns shared representations across multiple modalities, mapping features learned from different data types based on their interrelationships [23]. For instance, the system might learn to associate specific PXRD patterns with performance characteristics and literature descriptions, enabling it to predict material behavior from minimal initial data [24].

Fusion Techniques: Data from multiple modalities is combined to produce integrated outputs using various fusion strategies, including early fusion (combining raw data), intermediate fusion (merging extracted features), and late fusion (combining model predictions) [23]. The CRESt system employs knowledge embedding spaces where it creates representations of material recipes based on previous knowledge before experimentation [5].
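
The three fusion strategies differ only in where the modalities are combined, which the toy sketch below makes explicit. All function names, feature vectors, and the simple extractors are hypothetical; real systems would use learned encoders and models in their place.

```python
def early_fusion(*modalities):
    """Concatenate raw feature vectors from each modality."""
    fused = []
    for vec in modalities:
        fused.extend(vec)
    return fused


def intermediate_fusion(modalities, extractors):
    """Apply a per-modality feature extractor, then concatenate the features."""
    return early_fusion(*(extract(vec) for extract, vec in zip(extractors, modalities)))


def late_fusion(predictions, weights=None):
    """Weighted average of predictions made separately per modality."""
    if weights is None:
        weights = [1.0] * len(predictions)
    return sum(p * w for p, w in zip(predictions, weights)) / sum(weights)


# Hypothetical inputs: a text embedding and three XRD peak intensities.
text_vec = [0.2, 0.9]
xrd_vec = [10.0, 3.0, 1.0]


def identity(vec):
    return list(vec)


def scale_by_max(vec):
    return [v / max(vec) for v in vec]


print(early_fusion(text_vec, xrd_vec))
print(intermediate_fusion([text_vec, xrd_vec], [identity, scale_by_max]))
print(late_fusion([0.2, 0.6]))
```

Early fusion keeps all raw information but mixes incompatible scales, while late fusion tolerates a missing modality more easily because each model can be run independently.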

Active Learning and Experiment Planning

Multimodal AI systems implement sophisticated active learning strategies to guide experimental design:

Knowledge-Enhanced Bayesian Optimization: Traditional Bayesian optimization is augmented with literature knowledge and human feedback. As described by MIT researchers, "For each recipe we use previous literature text or databases, and it creates these huge representations of every recipe based on the previous knowledge base before even doing the experiment" [5]. The system performs principal component analysis in this knowledge embedding space to obtain a reduced search space that captures most performance variability, then uses Bayesian optimization in this reduced space to design new experiments [5].

Human-in-the-Loop Feedback: The system incorporates natural language interfaces that allow researchers to converse with the system with no coding required [5]. The system explains its reasoning, presents observations and hypotheses, and incorporates human domain expertise to refine its search strategies.

Computer Vision for Quality Control: Cameras and visual language models monitor experiments, detecting issues such as millimeter-sized deviations in sample shapes or pipette misplacements, and suggesting corrections to maintain experimental integrity [5].
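Returning to the knowledge-enhanced Bayesian optimization strategy described above, the two-stage idea (reduce the embedding space, then optimize in the reduced space) can be sketched as follows. This is an illustrative sketch, not the system from [5]: the embeddings are random stand-ins for literature-derived recipe representations, `run_experiment` is a hypothetical simulated measurement, plain PCA captures embedding variance rather than performance variability, and the surrogate is a small hand-written Gaussian process with an expected-improvement acquisition.

```python
import math
import numpy as np

rng = np.random.default_rng(1)

# Hypothetical stand-in for knowledge embeddings: 200 candidate recipes, 32 dimensions each.
embeddings = rng.normal(size=(200, 32))


def pca_reduce(x, n_components):
    """Project rows of x onto their top principal components."""
    centered = x - x.mean(axis=0)
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return centered @ vt[:n_components].T


def rbf_kernel(a, b, length_scale=1.0):
    sq_dist = ((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-0.5 * sq_dist / length_scale**2)


def gp_posterior(x_train, y_train, x_query, noise=1e-4):
    """Zero-mean GP posterior mean and standard deviation at x_query."""
    k_tt = rbf_kernel(x_train, x_train) + noise * np.eye(len(x_train))
    k_tq = rbf_kernel(x_train, x_query)
    solve = np.linalg.solve(k_tt, k_tq)
    mean = solve.T @ y_train
    var = 1.0 - np.einsum("ij,ij->j", k_tq, solve)
    return mean, np.sqrt(np.clip(var, 1e-12, None))


def expected_improvement(mean, std, best):
    """Expected improvement for a maximization problem."""
    z = (mean - best) / std
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return (mean - best) * cdf + std * pdf


def run_experiment(z):
    """Hypothetical simulated measurement; a real system would run the robot here."""
    return float(-np.sum((z - 1.0) ** 2))


# Stage 1: reduce the embedding space to a low-dimensional search space.
reduced = pca_reduce(embeddings, n_components=3)

# Stage 2: Bayesian optimization over the candidate recipes in the reduced space.
tested = [int(i) for i in rng.choice(len(reduced), size=5, replace=False)]
observed = [run_experiment(reduced[i]) for i in tested]

for _ in range(15):
    untested = np.array([i for i in range(len(reduced)) if i not in tested])
    y = np.array(observed)
    y_scaled = (y - y.mean()) / (y.std() + 1e-9)  # standardize for the unit-variance prior
    mean, std = gp_posterior(reduced[tested], y_scaled, reduced[untested])
    pick = int(untested[np.argmax(expected_improvement(mean, std, y_scaled.max()))])
    tested.append(pick)
    observed.append(run_experiment(reduced[pick]))

best_recipe = tested[int(np.argmax(observed))]
```

Working in three dimensions instead of 32 keeps the surrogate model tractable with only a handful of observations, which is the practical motivation for the reduction step.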

Applications Beyond Materials Science

The power of multimodal AI extends beyond materials discovery into adjacent fields, particularly drug development, where similar challenges of data integration and experimental design prevail.

Drug Discovery and Development

In pharmaceutical research, multimodal AI addresses critical bottlenecks in the drug development pipeline:

Target Identification and Validation: AI systems analyze vast datasets from genomics, proteomics, and metabolomics to identify promising biological targets, significantly accelerating the initial stages of drug discovery [26].

Compound Design and Optimization: Multimodal language models can simultaneously explore genetic sequences, protein structures, and clinical data to suggest molecular candidates that satisfy multiple criteria, including efficacy, safety, and bioavailability [27]. The MADRIGAL system, for instance, integrates structural, pathway, cell viability, and transcriptomic data to predict clinical outcomes of drug combinations [25].

Clinical Trial Optimization: By integrating multi-omics data with electronic health records, multimodal AI can identify biomarkers and patient subpopulations most likely to respond to treatments, thus increasing the precision and success rates of clinical trials [26].

Multimodal AI represents a paradigm shift in automated synthesis and materials discovery, transforming these fields from artisanal crafts to industrialized processes. By integrating diverse data streams—from scientific literature and experimental results to human expertise and robotic feedback—these systems achieve a more holistic understanding of material behavior and dramatically accelerate the discovery process. The technical frameworks and methodologies outlined in this guide provide researchers with the foundation to implement and advance these systems, potentially unlocking breakthroughs in energy storage, drug development, and beyond. As these technologies continue to mature, with improvements in explainable AI, robust data integration, and human-AI collaboration, they promise to turn autonomous experimentation into a powerful engine for scientific advancement that complements and extends human capabilities.

Robotic Automation in Synthesis and Characterization

The integration of robotic automation into synthesis and characterization represents a paradigm shift in materials discovery research. This transition from manual, sequential experimentation to automated, high-throughput workflows is fundamentally accelerating the pace of scientific discovery. Self-driving laboratories (SDLs), which combine robotic hardware with artificial intelligence (AI) for planning and decision-making, are now capable of navigating vast experimental parameter spaces with minimal human intervention [28]. This technical guide examines the core principles, technologies, and methodologies underpinning this transformation, with a specific focus on the autonomous multi-robot synthesis and optimization of advanced materials, as exemplified by metal halide perovskite nanocrystals (MHP NCs) [29].

The Autonomous Research Framework

The core of modern automated materials research is the closed-loop feedback system. This framework integrates automated synthesis, real-time characterization, and data-driven decision-making into a cyclical, autonomous process. This approach is designed to efficiently explore high-dimensional parameter spaces that are intractable for traditional manual methods [29].

Core Components of a Self-Driving Laboratory

A fully functional SDL consists of several interconnected subsystems:

- Automated Synthesis Platform: Robotic systems for precise handling and combination of reagents. This often involves liquid handling robots and parallelized, miniaturized batch reactors that allow for the investigation of numerous conditions simultaneously [29].

- Real-Time Characterization Module: Integrated analytical instruments, such as spectrophotometers, that provide immediate feedback on material properties. This enables the system to conduct property measurements like UV-Vis absorption and emission spectroscopy immediately after synthesis [29].

- AI-Driven Decision Engine: Machine learning (ML) algorithms that analyze characterization data and propose subsequent experiments. This AI agent uses the experimental data to iteratively suggest new experimental conditions to optimize for a user-defined objective, creating a continuous loop of hypothesis, experiment, and learning [29].

- Robotic Material Handling: Systems that physically connect the synthesis and characterization modules, transferring samples between stations without human intervention. This is often accomplished with a robotic arm, ensuring seamless workflow integration [29].

Case Study: The "Rainbow" System for Perovskite Nanocrystal Synthesis

The "Rainbow" platform provides a concrete example of a multi-robot SDL for the synthesis and optimization of metal halide perovskite nanocrystals (MHP NCs). MHP NCs are a model system for this approach due to their complex, multi-variable synthesis and high commercial potential in photonics and optoelectronics [29].

Hardware Architecture

Rainbow's hardware consists of four coordinated robotic components [29]:

- Liquid Handling Robot: Manages precursor preparation, multi-step NC synthesis, and sample aliquoting for characterization.

- Characterization Robot: Equipped with a benchtop spectrometer to acquire UV-Vis absorption and photoluminescence emission spectra.

- Robotic Plate Feeder: Automatically replenishes consumables and labware to ensure continuous, uninterrupted operation.

- Robotic Arm: Serves as the system's material handling backbone, transferring samples and labware between the other stations to connect their functionalities.

This multi-robot integration enables Rainbow to operate as a unified system, moving from chemical precursors to characterized materials without manual intervention.

Experimental Objectives and Workflow

The primary goal for Rainbow in the cited study was the autonomous optimization of MHP NC optical properties, specifically targeting maximum photoluminescence quantum yield (PLQY) and minimum emission linewidth (FWHM) at a predefined peak emission energy (EP) [29]. The system navigated a challenging 6-dimensional input parameter space to control a 3-dimensional output space of optical properties.

Table 1: Key Performance Metrics for MHP NC Optimization

| Optical Property | Definition | Optimization Goal |
|---|---|---|
| Photoluminescence Quantum Yield (PLQY) | Efficiency of converting absorbed light to emitted light | Maximize (approach 100%) |
| Emission Linewidth (FWHM) | Spectral purity of the emitted light | Minimize |
| Peak Emission Energy (EP) | Photon energy at the maximum of the emission spectrum | Achieve user-defined target |
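Two of these metrics can be read directly from an emission spectrum. The sketch below, assuming a single-peaked, background-free spectrum sampled on an energy axis, estimates EP as the position of the maximum and FWHM by linear interpolation at half maximum. The function name `peak_and_fwhm` and the synthetic Gaussian spectrum are illustrative assumptions, not part of the Rainbow software; PLQY is omitted because it depends on instrument calibration details the text does not specify.

```python
import numpy as np


def peak_and_fwhm(energy_ev, intensity):
    """Return (peak energy, FWHM) of a single-peaked spectrum.

    Assumes the spectrum falls below half maximum on both sides of the peak
    within the sampled range and that any background is already subtracted.
    """
    i_peak = int(np.argmax(intensity))
    half = intensity[i_peak] / 2.0

    # Walk outward from the peak to the first sample at or below half maximum.
    left = i_peak
    while left > 0 and intensity[left] > half:
        left -= 1
    right = i_peak
    while right < len(intensity) - 1 and intensity[right] > half:
        right += 1

    def crossing(i_lo, i_hi):
        # Linearly interpolate the energy at which intensity equals half maximum.
        x0, x1 = energy_ev[i_lo], energy_ev[i_hi]
        y0, y1 = intensity[i_lo], intensity[i_hi]
        return x0 + (half - y0) * (x1 - x0) / (y1 - y0)

    e_left = crossing(left, left + 1)
    e_right = crossing(right - 1, right)
    return float(energy_ev[i_peak]), float(e_right - e_left)


# Synthetic Gaussian emission line centered at 2.48 eV with sigma = 0.04 eV.
energy = np.linspace(2.2, 2.8, 601)
spectrum = np.exp(-0.5 * ((energy - 2.48) / 0.04) ** 2)

peak_ev, fwhm_ev = peak_and_fwhm(energy, spectrum)
```

For a Gaussian line the FWHM equals 2·sqrt(2·ln 2)·sigma, so the synthetic example should give a linewidth close to 0.094 eV.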

The experimental workflow can be visualized as a continuous, automated cycle, as illustrated in Diagram 1.

Diagram 1: Autonomous Research Workflow

The Scientist's Toolkit: Research Reagent Solutions

The effectiveness of an SDL depends on the careful selection of reagents and materials. The following table details key components used in the autonomous synthesis of MHP NCs, based on the Rainbow use case [29].

Table 2: Essential Research Reagents for Autonomous MHP NC Synthesis

| Reagent/Material | Function in the Experiment |
|---|---|
| Cesium Lead Halide Precursors (e.g., CsPbBr₃) | Base starting materials for the formation of perovskite nanocrystal structures. |
| Organic Acid/Base Ligands (Varying alkyl chain lengths) | Surface-active agents that control nanocrystal growth, stability, and final optical properties. The ligand structure is a critical discrete variable. |
| Halide Exchange Salts (e.g., containing Cl⁻ or I⁻) | Used in post-synthesis anion exchange reactions to fine-tune the bandgap and emission energy of the NCs. |
| Organic Solvents | The reaction medium for room-temperature, solution-phase synthesis and processing. |

Detailed Experimental Protocol for Autonomous Nanocrystal Optimization

This section provides a detailed, step-by-step methodology for a closed-loop optimization campaign, as implemented in the Rainbow system [29].

Pre-Experiment Configuration

- Objective Definition: The human operator defines the primary optimization target. For example: "Maximize PLQY while achieving a peak emission energy of 2.48 eV (500 nm) and minimizing FWHM."

- Algorithm Selection: An appropriate optimization algorithm (e.g., Bayesian Optimization) is selected for the AI agent. This algorithm is designed to balance the exploration of unknown parameter regions with the exploitation of known high-performing areas.

- Hardware Priming: All robotic systems are initialized. The liquid handler is loaded with stock precursor solutions, the plate feeder is stocked with clean reaction vials, and the spectrophotometer is calibrated.
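The objective definition step can be captured as a small configuration object. The sketch below is an assumption-laden illustration rather than Rainbow's actual configuration format: `CampaignConfig`, its default tolerance and weight values, and the `score` scalarization are all hypothetical. It also checks the stated correspondence between 2.48 eV and roughly 500 nm using the relation wavelength = hc / E, with hc ≈ 1239.84 eV·nm.

```python
from dataclasses import dataclass

HC_EV_NM = 1239.84  # Planck constant times speed of light, in eV·nm


def ev_to_nm(energy_ev):
    """Convert photon energy in eV to wavelength in nm."""
    return HC_EV_NM / energy_ev


@dataclass(frozen=True)
class CampaignConfig:
    """Hypothetical container for the operator-defined objective."""
    target_energy_ev: float = 2.48
    energy_tolerance_ev: float = 0.02  # assumed acceptable deviation from target
    fwhm_weight: float = 1.0           # assumed penalty weight on linewidth
    max_iterations: int = 50           # assumed experiment budget


def score(config, plqy, fwhm_ev, peak_energy_ev):
    """Hypothetical scalar objective: reward PLQY, penalize linewidth and off-target emission."""
    off_target = abs(peak_energy_ev - config.target_energy_ev) / config.energy_tolerance_ev
    return plqy - config.fwhm_weight * fwhm_ev - off_target


config = CampaignConfig()
target_wavelength_nm = ev_to_nm(config.target_energy_ev)
on_target = score(config, plqy=0.9, fwhm_ev=0.09, peak_energy_ev=2.48)
off_target = score(config, plqy=0.9, fwhm_ev=0.09, peak_energy_ev=2.60)
```

Collapsing three objectives into one score is only one possible design; a multi-objective optimizer that keeps the objectives separate avoids having to choose weights up front.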

Iterative Closed-Loop Procedure

1. Experiment Proposal: The AI agent analyzes all existing data and proposes a set of new experimental conditions (e.g., specific ligand types, precursor concentrations, reaction times).

2. Robotic Synthesis Execution:

   - The liquid handling robot prepares precursor mixtures in parallel batch reactors according to the AI's specified recipe.

   - For halide exchange reactions, the robot may perform a multi-step synthesis process.

   - The system incubates the reactions at room temperature for the prescribed duration.

3. Automated Sample Handling and Characterization:

   - Upon synthesis completion, the robotic arm transfers the reaction vials to the characterization station.

   - The liquid handler extracts a precise aliquot from each vial and prepares it for spectroscopic analysis.

   - The characterization robot acquires the UV-Vis absorption and photoluminescence emission spectra for each sample.

4. Data Processing and Model Update:

   - The software automatically extracts the key performance metrics (PLQY, FWHM, EP) from the acquired spectra.

   - This new data, comprising both the input parameters and output properties, is added to the central dataset.

   - The AI agent's internal model is updated with this new information to refine its understanding of the synthesis landscape.

5. Loop Termination: The cycle (steps 1-4) repeats automatically. The campaign continues until a predefined performance threshold is met, a maximum number of iterations is completed, or model convergence indicates that an optimum has been found.
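The control flow of this procedure, including its three termination conditions, can be sketched in a few lines. Everything here is a simplified stand-in: the search space is one-dimensional rather than six-dimensional, `synthesize_and_characterize` is a simulated measurement instead of a robot run, an epsilon-greedy random search replaces Bayesian optimization, and "convergence" is approximated by a hypothetical `patience` counter of iterations without improvement.

```python
import random


def propose(history, rng, explore_prob=0.3):
    """Step 1: choose the next condition (epsilon-greedy stand-in for the AI agent)."""
    if not history or rng.random() < explore_prob:
        return rng.uniform(0.0, 1.0)  # explore an arbitrary condition
    best_x, _ = max(history, key=lambda pair: pair[1])
    # Exploit: perturb the best known condition, clipped to the valid range.
    return min(1.0, max(0.0, best_x + rng.gauss(0.0, 0.05)))


def synthesize_and_characterize(x, rng):
    """Steps 2-3: simulated synthesis and measurement returning a noisy figure of merit."""
    return 1.0 - (x - 0.7) ** 2 + rng.gauss(0.0, 0.01)


def run_campaign(threshold=0.99, max_iterations=200, patience=30, seed=0):
    rng = random.Random(seed)
    history = []              # central dataset of (condition, result) pairs
    best = float("-inf")
    stale = 0                 # iterations since the last improvement
    reason = "max_iterations"
    for _ in range(max_iterations):
        x = propose(history, rng)
        y = synthesize_and_characterize(x, rng)
        history.append((x, y))  # step 4: add the new data to the dataset
        if y > best:
            best, stale = y, 0
        else:
            stale += 1
        # Step 5: check the termination conditions.
        if best >= threshold:
            reason = "threshold"
            break
        if stale >= patience:
            reason = "converged"
            break
    return history, best, reason


history, best, reason = run_campaign()
```

Returning the termination reason alongside the data makes it easy to tell a campaign that met its target from one that merely ran out of budget.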

The hardware architecture that enables this protocol is complex. Diagram 2 maps the physical components and their interactions within the robotic platform.

Diagram 2: Multi-Robot Hardware Architecture

Quantitative Outcomes and Performance Metrics

The implementation of robotic automation in synthesis and characterization leads to quantifiable improvements in research efficiency and outcomes.

Acceleration of Discovery

SDL platforms like Rainbow demonstrate a dramatic acceleration in the materials discovery process. Studies report 10× to 100× acceleration in the discovery of novel materials and synthesis strategies compared to traditional manual laboratories [29]. This is achieved through 24/7 operation, massive parallelization of experiments, and the elimination of time gaps between synthesis, characterization, and analysis.

Optimization Results and Data Fidelity

In the specific case of MHP NC optimization, the autonomous system successfully [29]:

- Elucidated complex structure-property relationships, identifying the pivotal role of specific ligand structures in controlling PLQY and FWHM.

- Mapped Pareto-optimal fronts, providing a comprehensive representation of the best-achievable trade-offs between multiple competing objectives (e.g., high PLQY vs. low FWHM) at a target emission energy.

- Generated high-fidelity data and metadata, creating a robust, reproducible dataset that includes both successful and failed experiments, which is crucial for training accurate ML models.
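The Pareto-optimal front mentioned above is the set of samples that no other sample beats on every objective at once. A minimal sketch of that filter for two objectives follows, assuming higher PLQY and lower FWHM are better; the function name `pareto_mask` and the five measurements are hypothetical illustrations, not data from [29].

```python
import numpy as np


def pareto_mask(plqy, fwhm):
    """Return a boolean mask that is True for non-dominated samples.

    A sample is dominated if another sample is at least as good on both
    objectives (PLQY no lower, FWHM no higher) and strictly better on one.
    """
    plqy = np.asarray(plqy, dtype=float)
    fwhm = np.asarray(fwhm, dtype=float)
    mask = np.ones(len(plqy), dtype=bool)
    for i in range(len(plqy)):
        no_worse = (plqy >= plqy[i]) & (fwhm <= fwhm[i])
        strictly_better = (plqy > plqy[i]) | (fwhm < fwhm[i])
        mask[i] = not np.any(no_worse & strictly_better)
    return mask


# Hypothetical measurements at a fixed target emission energy.
plqy = [0.90, 0.80, 0.95, 0.60, 0.85]
fwhm = [0.10, 0.08, 0.12, 0.09, 0.11]  # eV

front = pareto_mask(plqy, fwhm)
```

In this example the first three samples form the front: each trades some PLQY for a narrower linewidth or vice versa, while the last two are each beaten on both objectives by another sample.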

Table 3: Performance Advantages of Autonomous Research Platforms

| Metric | Traditional Manual Lab | Autonomous Self-Driving Lab |
|---|---|---|
| Experimental Throughput | Low (sequential experiments) | High (parallelized experiments) |
| Operational Hours | Limited by human workday | Continuous (24/7) |
| Data Consistency | Prone to batch-to-batch variation | High reproducibility |
| Parameter Space Exploration | Inefficient (e.g., one-parameter-at-a-time) | Efficient (AI-guided navigation of high-dimensional space) |
| Human Role | Perform all manual tasks | Focus on high-level strategy and analysis |