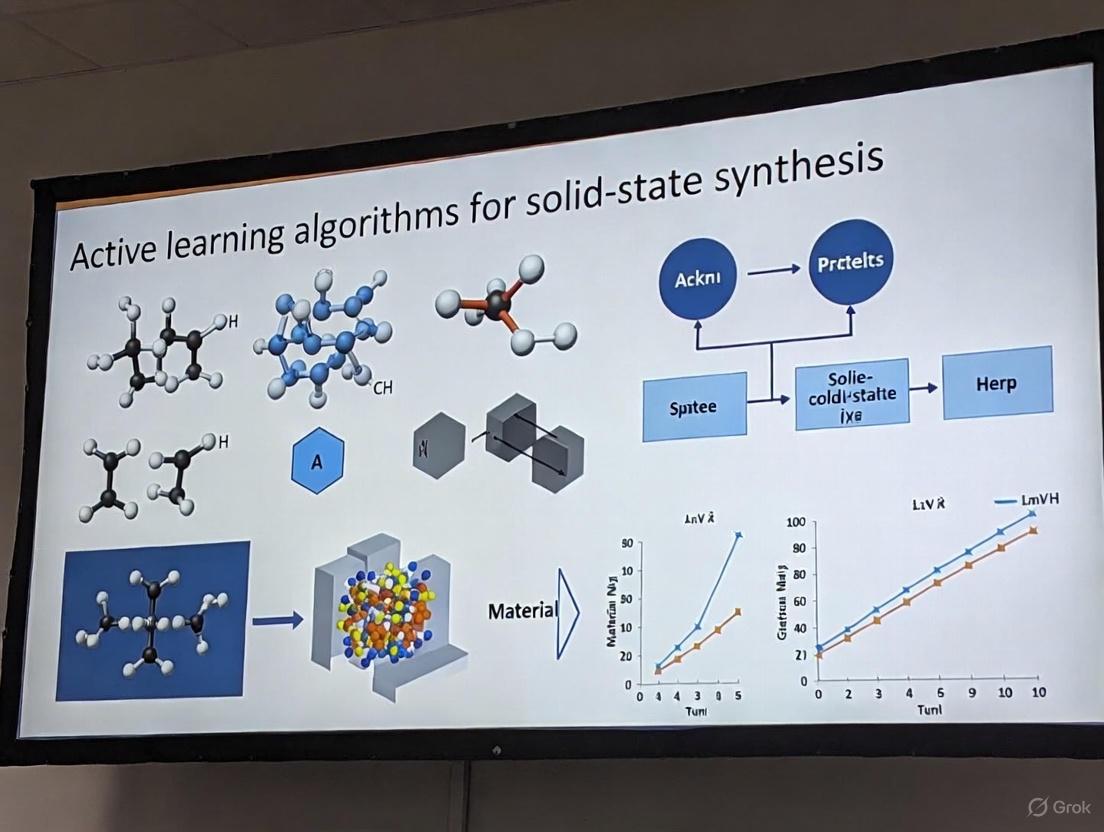

Active Learning for Solid-State Synthesis: Accelerating Materials Discovery and Optimization


Abstract

This article provides a comprehensive overview of active learning (AL) algorithms and their transformative impact on solid-state synthesis. Aimed at researchers and drug development professionals, it explores the foundational principles of AL as a data-efficient machine learning strategy that iteratively guides experiments to minimize resource-intensive trials. The scope covers core methodologies like Bayesian optimization and uncertainty sampling, their direct application in synthesizing complex materials such as multi-principal element alloys, and their integration within autonomous laboratories. It further details strategies for troubleshooting optimization challenges and presents rigorous benchmarking studies that validate AL's performance against traditional methods, highlighting its significant potential to accelerate the discovery and development of advanced materials for biomedical applications.

What is Active Learning and Why is it a Game-Changer for Solid-State Chemistry?

The High-Cost Challenge of Traditional Solid-State Synthesis

Solid-state synthesis is a cornerstone technology for developing advanced materials, from novel inorganic compounds for energy storage to peptide-based therapeutics. However, traditional Edisonian approaches to materials discovery face significant economic challenges due to their resource-intensive nature. The process requires extensive experimentation with complex parameter spaces involving precursor selection, temperature profiles, reaction times, and atmospheric conditions. Each experiment consumes substantial materials, energy, and researcher time, creating a pressing need for more efficient methodologies.

Table 1: Economic Landscape of Solid-State and Peptide Synthesis Markets

| Market Segment | 2024 Market Size | Projected 2032/2033 Market Size | CAGR | Key Cost Drivers |
|---|---|---|---|---|
| Global Solid Phase Synthesis Carrier for Peptide Drug Market [1] | USD 123 million | USD 221 million by 2032 | 10.4% | Specialized resins, automated synthesizers, purification systems |
| Global Peptide Synthesis Market [2] | USD 860.99 million | USD 2,268.16 million by 2033 | 11.4% | Complex synthesis protocols, HPLC purification, quality testing |
| Solid Phase Peptide Synthesis (SPPS) Segment [2] | 39.7% market share | Dominant position maintained | - | High-purity resins, reagent excess, solvent consumption |
| Solution Phase Peptide Synthesis Segment [2] | 29.8% market share | Fastest-growing segment | - | Batch reactors, continuous flow systems, specialized reagents |

The financial implications extend beyond research to manufacturing scales. For peptide therapeutics, the high costs of manufacturing and purification present considerable commercial challenges. Peptides, particularly long-chain or modified sequences, require complex synthesis protocols with multiple protection and deprotection steps, highly controlled reaction conditions, and stringent quality testing. Purification processes such as high-performance liquid chromatography (HPLC) add substantial cost and time, making large-scale production expensive [2]. These economic barriers highlight the critical need for innovative approaches that can reduce the resource burden while accelerating discovery.

Active Learning as a Strategic Solution

Active learning represents a paradigm shift in materials research methodology. This machine learning approach strategically selects the most informative experiments to perform, efficiently navigating complex design spaces with minimal experimental overhead [3]. Unlike traditional trial-and-error experimentation, active learning often employs Bayesian optimization to balance exploration of unknown parameter regions with exploitation of promising areas, dramatically reducing the number of experiments required to identify optimal materials.

The fundamental advantage of active learning lies in its data-efficient optimization capability. By prioritizing experiments that maximize information gain, these algorithms can identify optimal material compositions and synthesis conditions while evaluating only a fraction of the possible parameter space. Research demonstrates that hypervolume-based active learning methods can identify optimal Pareto fronts by sampling just 16-23% of the entire search space, achieving up to 36% greater efficiency compared to random selection in data-deficient scenarios [4]. This efficiency translates directly into cost savings through reduced reagent consumption, instrument time, and researcher hours.

Active learning particularly excels at multi-objective optimization, which is essential for real-world materials development where researchers must balance competing properties. For example, battery materials may need to be optimized for both ionic conductivity and stability, and catalyst materials for both activity and durability. The expected hypervolume improvement (EHVI) algorithm has demonstrated remarkable efficiency in these scenarios, successfully navigating trade-offs between conflicting objectives [4]. This capability addresses a fundamental challenge in materials science: target properties often trade off against one another, so improving one tends to degrade the other, yet both must be optimized for practical applications.
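To make the hypervolume idea concrete, the following is a minimal sketch, assuming two objectives that are both maximized and a user-chosen reference point. The function names and the sample values are illustrative and not taken from [4]. It computes the deterministic hypervolume improvement of a candidate; EHVI is the expectation of this quantity under the surrogate model's predictive distribution, which this sketch does not attempt.

```python
from typing import List, Tuple

Point = Tuple[float, float]  # (objective 1, objective 2), both maximized


def pareto_front(points: List[Point]) -> List[Point]:
    """Return the non-dominated points (no other point is >= in both objectives)."""
    front = []
    for p in points:
        dominated = any(q[0] >= p[0] and q[1] >= p[1] and q != p for q in points)
        if not dominated:
            front.append(p)
    return sorted(set(front))


def hypervolume_2d(points: List[Point], ref: Point) -> float:
    """Area dominated by the Pareto front and bounded by the reference point."""
    # Sweep from the largest objective-1 value; objective 2 then increases.
    front = sorted(pareto_front(points), key=lambda p: p[0], reverse=True)
    area = 0.0
    prev_y = ref[1]
    for x, y in front:
        if x <= ref[0] or y <= prev_y:
            continue  # contributes no area beyond what is already counted
        area += (x - ref[0]) * (y - prev_y)
        prev_y = y
    return area


def hypervolume_improvement(candidate: Point, points: List[Point], ref: Point) -> float:
    """Gain in dominated area if the candidate were added to the observed set."""
    return hypervolume_2d(points + [candidate], ref) - hypervolume_2d(points, ref)


# Illustrative (made-up) observations, e.g. (conductivity, stability) scores.
observed = [(1.0, 3.0), (2.0, 2.0), (3.0, 1.0), (1.0, 1.0)]
reference = (0.0, 0.0)
gain = hypervolume_improvement((2.5, 2.5), observed, reference)
```

A candidate that lies inside the already dominated region yields zero improvement, which is why hypervolume-based selection naturally steers sampling toward the unexplored part of the trade-off curve.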

Case Study: The A-Lab for Inorganic Materials

The practical implementation of active learning principles is exemplified by the A-Lab, an autonomous laboratory dedicated to the solid-state synthesis of inorganic powders. This platform integrates computational screening, historical data from scientific literature, machine learning, and robotics to plan and execute synthesis experiments with minimal human intervention [5].

Over 17 days of continuous operation, the A-Lab successfully synthesized 41 of 58 novel target compounds identified through computational screening, a 71% overall success rate [5]. Of these, 35 were obtained from the initial literature-inspired recipes and the remainder only after active-learning optimization. This performance demonstrates how autonomous laboratories can significantly accelerate materials discovery while managing resource utilization. The system's ability to learn from failed syntheses and adjust subsequent experiments represents a fundamental advancement over traditional approaches, where failed experiments are a pure cost with no cumulative knowledge gain.

Table 2: A-Lab Performance Metrics and Outcomes

| Metric | Performance | Implication for Cost Reduction |
|---|---|---|
| Operation Duration | 17 days continuous | Reduced researcher time requirements |
| Targets Attempted | 58 novel compounds | High-throughput capability |
| Successfully Synthesized | 41 compounds (71% success rate) | Reduced failed experiment costs |
| Recipes Tested | 355 total attempts | Automated optimization |
| Synthesis Routes Optimized | 9 targets via active learning | Continuous improvement |
| Initial Literature Recipe Success | 35 materials | Integration of historical knowledge |

The A-Lab's active learning cycle, known as Autonomous Reaction Route Optimization with Solid-State Synthesis (ARROWS3), identified improved synthesis routes for nine targets, six of which had zero yield from initial literature-inspired recipes [5]. By building a database of observed pairwise reactions and prioritizing intermediates with large driving forces to form target materials, the system continuously refined its synthetic strategies. This adaptive approach mimics the learning process of experienced researchers but operates at scale and speed unattainable through manual experimentation.

Experimental Protocols for Active Learning Implementation

Protocol: Multi-Objective Bayesian Optimization for Material Screening

This protocol applies active learning for discovering materials that satisfy multiple target properties simultaneously, such as electronic and mechanical properties in two-dimensional materials [4].

Materials and Software Requirements:

- Material database (e.g., Computational 2D Materials Database - C2DB, Materials Project)

- Machine learning library (scikit-learn, or GPyTorch for Gaussian processes)

- Bayesian optimization framework (BoTorch, Ax)

- Computational resources for feature generation (density functional theory calculations optional)

Procedure:

- Database Curation: Compile a database of candidate materials with existing property data. For novel materials, compute feature descriptors including compositional, structural, and electronic properties.

- Feature Selection: Identify critical features governing target properties using mutual information analysis or Shapley values. Common descriptors include elemental properties, structural symmetry, and bonding characteristics.

- Surrogate Model Training: Train machine learning models (random forest, neural networks, Gaussian processes) to predict target properties from selected features. Validate model performance through cross-validation.

- Acquisition Function Setup: Implement Expected Hypervolume Improvement (EHVI) to balance exploration of uncertain regions with exploitation of promising candidates. EHVI is particularly effective for multi-objective optimization.

- Active Learning Loop:

  - Select batch of candidate materials using acquisition function

  - Obtain ground truth data through simulation or experiment

  - Update surrogate model with new data

  - Check convergence criteria (hypervolume stabilization or target property achievement)

- Validation: Experimentally verify predicted optimal materials to confirm performance.

Troubleshooting Tips:

- For data-deficient scenarios, begin with exploration-weighted acquisition before shifting to exploitation

- If surrogate model performance plateaus, consider feature engineering or model ensemble approaches

- Address imbalanced data distributions through sampling techniques or loss function weighting

Protocol: Autonomous Synthesis Optimization for Solid-State Reactions

This protocol outlines steps for implementing autonomous synthesis optimization inspired by the A-Lab workflow [5], applicable to inorganic powder synthesis.

Materials and Equipment:

- Precursor powders (high purity, characterized particle size distribution)

- Automated powder dispensing and mixing system

- Robotic furnace system with temperature and atmosphere control

- X-ray diffractometer with automated sample handling

- Computing infrastructure for data analysis and machine learning

Procedure:

- Target Identification: Select target compounds through computational screening (e.g., Materials Project) with stability and synthesizability filters. Prioritize materials with negative decomposition energies.

- Literature Analysis: Apply natural language processing to extract synthesis recipes for analogous materials. Use similarity metrics to identify promising precursor sets and temperature profiles.

- Initial Synthesis Trials: Execute literature-inspired recipes using automated systems:

  - Dispense and mix precursor powders in appropriate stoichiometries

  - Transfer to crucibles using robotic arms

  - Heat in box furnaces with optimized temperature programs

  - Cool samples and prepare for characterization

- Automated Characterization and Analysis:

  - Grind samples to fine powders using automated mortar

  - Perform X-ray diffraction measurements

  - Analyze patterns using probabilistic machine learning models

  - Quantify phase fractions through Rietveld refinement

- Active Learning Optimization:

  - For failed syntheses (yield <50%), apply ARROWS3 algorithm

  - Identify observed intermediate phases and their formation energies

  - Propose alternative precursor sets that avoid kinetic traps

  - Prioritize reaction pathways with large driving forces to target

- Iterative Refinement: Continue active learning cycle until target yield is achieved or search space is exhausted. Update reaction database with each experiment.

Troubleshooting Tips:

- For sluggish kinetics, extend reaction times or introduce intermediate grinding steps

- If precursor volatility issues occur, consider sealed ampoules or alternative precursors

- Address amorphous phase formation through nucleation agents or modified thermal profiles

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Solid-State Synthesis

| Reagent/Material | Function | Application Notes |
|---|---|---|
| Solid Phase Synthesis Resins (Hydroxyl, Chloromethyl, Amino) [1] | Matrices for peptide chain elongation | Enable stepwise synthesis with simplified purification; critical for peptide drug development |
| Rice Husk Ash (RHA) [6] | Silica source for wollastonite synthesis | Eco-friendly waste-derived material; reduces synthesis costs while maintaining purity |
| Natural Limestone [6] | Calcium source for ceramic synthesis | Abundant and economical precursor for calcium silicate formation |
| Precursor Powders (Oxides, Carbonates, Phosphates) [5] | Starting materials for inorganic synthesis | Require careful characterization of particle size and purity for reproducible reactions |
| Automated Synthesis Reactors [2] | High-throughput peptide production | SPPS reactors (up to 5,000L capacity) enable scalable production with reduced manual operation |

The integration of active learning methodologies with solid-state synthesis represents a transformative approach to addressing the high-cost challenges inherent in traditional materials discovery. By strategically guiding experimentation through intelligent algorithms, researchers can dramatically reduce the number of experiments required while accelerating the development timeline. The demonstrated success of autonomous laboratories like the A-Lab and multi-objective Bayesian optimization platforms provides a compelling roadmap for the future of materials research.

Looking forward, the continued evolution of active learning platforms will likely focus on increasing autonomy through improved decision-making algorithms and enhanced integration of computational and experimental workflows. As these technologies mature, they promise to reshape the economic landscape of materials development, making the discovery of advanced materials more accessible and sustainable. For researchers embracing these methodologies, the potential exists to not only reduce costs but to unlock novel materials with optimized properties that might otherwise remain undiscovered through conventional approaches.

The discovery and synthesis of novel inorganic materials are fundamental to advancements in energy storage, catalysis, and electronics. Traditional solid-state synthesis methods have long relied on trial-and-error approaches and researcher intuition, making the process slow and costly and often yielding impure products [7]. The Materials Genome Initiative aimed to halve the time and cost of discovering new materials, yet the number of successfully discovered materials with enhanced properties remains limited [8]. This challenge has catalyzed the adoption of a more systematic paradigm: the active learning loop. This framework integrates computational prediction, robotic experimentation, and data analysis in an iterative cycle to guide synthesis decisions efficiently, moving beyond conventional methods toward a predictive science of materials creation.

Core Principles of the Active Learning Loop

Active learning is a decision-theoretic approach from the information sciences that enables efficient navigation of vast materials search spaces by iteratively guiding experiments and computations toward promising candidates [8]. Its power lies in prioritizing which experiments to perform next based on the expected value of the information they will provide.

The loop operates on a foundational two-stage process:

- Surrogate Modeling: A machine learning model (the surrogate) is trained on available data—whether from historical literature, high-throughput computations, or previous experiments—to predict material properties or synthesis outcomes.

- Utility Maximization: An acquisition function (utility function) uses the predictions and, crucially, the uncertainties from the surrogate model to decide the most informative experiment to perform next [8].

This process closes the gap between computational screening and experimental realization, allowing researchers to minimize the number of costly and time-consuming experiments required to find a material with desired properties.

Key Utility Functions for Experimental Design

The choice of utility function dictates the strategy for exploring the materials search space. The table below summarizes the primary utility functions used in active learning for materials science.

Table 1: Common Utility Functions in Active Learning for Materials Synthesis

| Utility Function | Mathematical Principle | Primary Goal | Use Case in Synthesis |
|---|---|---|---|
| Expected Improvement | Maximizes the expected amount by which a candidate improves upon the current best outcome [8] | Exploitation | Optimizing a synthesis parameter (e.g., temperature) to maximize the yield of a known material |
| Maximum Variance | Selects the data point where the model's prediction uncertainty is highest [8] | Exploration | Probing uncharted regions of the chemical space to discover entirely new materials or reactions |
| G-Optimality | Minimizes the maximum prediction variance across the design space [8] | Global Model Accuracy | Building a robust general model of a synthesis process, such as understanding the phase formation landscape |
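As an illustration of how two of these utility functions turn surrogate predictions into a choice of next experiment, here is a minimal sketch. It assumes the surrogate returns a Gaussian predictive mean and standard deviation for each candidate and that the objective (for example, yield) is maximized; the function names and numbers are illustrative and not drawn from [8].

```python
import math
from typing import List, Tuple


def normal_pdf(z: float) -> float:
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def normal_cdf(z: float) -> float:
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def expected_improvement(mean: float, std: float, best: float) -> float:
    """Closed-form E[max(f - best, 0)] for a Gaussian prediction N(mean, std^2)."""
    if std <= 0.0:
        return max(mean - best, 0.0)  # no uncertainty: improvement is deterministic
    z = (mean - best) / std
    return (mean - best) * normal_cdf(z) + std * normal_pdf(z)


def pick_by_expected_improvement(preds: List[Tuple[float, float]], best: float) -> int:
    """Index of the candidate with the largest expected improvement."""
    return max(range(len(preds)), key=lambda i: expected_improvement(*preds[i], best))


def pick_by_max_variance(preds: List[Tuple[float, float]]) -> int:
    """Index of the candidate whose prediction is most uncertain."""
    return max(range(len(preds)), key=lambda i: preds[i][1])


# Made-up (mean, std) predictions of target yield for three candidate recipes.
predictions = [(0.80, 0.05), (0.60, 0.20), (0.20, 0.50)]
best_so_far = 0.75

ei_choice = pick_by_expected_improvement(predictions, best_so_far)
var_choice = pick_by_max_variance(predictions)
```

With these illustrative numbers the two criteria disagree: expected improvement favors the candidate predicted to be slightly better than the incumbent, while maximum variance favors the poorly understood candidate regardless of its predicted value.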

Experimental Protocol: Implementing an Active Learning Loop for Solid-State Synthesis

The following protocol details the steps for implementing an active learning loop, drawing from the methodology of the A-Lab described by [9].

Protocol: Autonomous Synthesis of Novel Inorganic Powders

Objective: To autonomously synthesize a target inorganic compound predicted to be stable by ab initio calculations, optimizing the synthesis pathway through iterative active learning.

Materials and Equipment

- Precursors: High-purity solid powder precursors (e.g., carbonates, oxides).

- Robotics System: Integrated stations for powder dispensing, mixing, and milling.

- Heating System: Box furnaces capable of operating at temperatures of 1000 °C and above.

- Characterization Tool: X-ray Diffractometer (XRD) with an automated sample handler.

- Computational Resources: Access to ab initio databases (e.g., Materials Project) and machine learning models for recipe prediction and data analysis.

Procedure

Target Identification

- Identify a target compound screened from a database like the Materials Project, ensuring it is predicted to be stable (on the convex hull) and air-stable [9].

Initial Recipe Generation

- Input the target into a natural language processing (NLP) model trained on text-mined synthesis literature. The model proposes up to five initial precursor sets based on analogy to historically successful recipes for similar materials [9].

- Determine an initial heating temperature using a separate ML model trained on text-mined heating data [9].

Robotic Synthesis Execution

- Dispensing and Mixing: Automatically dispense and mix precursor powders in the calculated stoichiometric ratios.

- Transfer and Heating: Transfer the mixture to an alumina crucible and load it into a furnace for heating according to the proposed temperature profile.

- Cooling and Grinding: After heating, allow the sample to cool, then grind it into a fine powder to prepare for characterization.

Phase Analysis via XRD and Machine Learning

- Perform XRD on the synthesized powder.

- Analyze the diffraction pattern using a probabilistic ML model to identify phases and determine the weight fraction of the target product.

- Validate the ML analysis with automated Rietveld refinement. A synthesis is considered successful if the target yield exceeds 50% [9].

Active Learning and Recipe Optimization

- If the target yield is >50%: The synthesis is successful. The recipe and outcome are added to the database.

- If the target yield is ≤50%: Initiate the active learning loop:

  a. Update Database: Add the failed recipe and the identified reaction intermediates (e.g., FePO₄, Ca₃(PO₄)₂) to the reaction database.

  b. Propose New Recipe: The active learning algorithm (e.g., ARROWS³) uses the expanded database and computed reaction energies to propose a new, optimized synthesis route. This optimization is based on two key hypotheses [9]:

    i. Reactions proceed via pairwise intermediates.

    ii. Intermediates with a small driving force (<50 meV per atom) to form the target should be avoided, as they lead to kinetic traps.

  c. Iterate: Return to Step 3 (Robotic Synthesis Execution) with the new recipe. The loop continues until the target is synthesized or all plausible recipes are exhausted.
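The recipe-proposal step can be pictured with a short sketch. This is not the ARROWS³ implementation; it only illustrates the two stated hypotheses under simplifying assumptions: each candidate route is summarized by the intermediates it is expected to form and by the remaining driving force from those intermediates to the target. The `Route` class, the placeholder labels, and all numeric values are hypothetical.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Set

MIN_DRIVING_FORCE = 50.0  # meV per atom; threshold quoted in the protocol above


@dataclass(frozen=True)
class Route:
    precursors: FrozenSet[str]     # precursor set for the recipe
    intermediates: FrozenSet[str]  # pairwise intermediates expected or observed
    driving_force: float           # meV/atom remaining to form the target


def propose_next(routes: List[Route], tried: Set[FrozenSet[str]]) -> List[Route]:
    """Drop already-tested precursor sets and likely kinetic traps, then rank the rest."""
    viable = [
        r for r in routes
        if r.precursors not in tried and r.driving_force >= MIN_DRIVING_FORCE
    ]
    # Largest remaining driving force first.
    return sorted(viable, key=lambda r: r.driving_force, reverse=True)


# Hypothetical candidates; labels and energies are placeholders, not measured data.
candidates = [
    Route(frozenset({"P1", "P2"}), frozenset({"I1"}), 10.0),   # likely kinetic trap
    Route(frozenset({"P1", "P3"}), frozenset({"I2"}), 80.0),
    Route(frozenset({"P2", "P4"}), frozenset({"I3"}), 120.0),  # already tested
]
already_tried = {frozenset({"P2", "P4"})}

ranked = propose_next(candidates, already_tried)
```

In a real loop, the driving forces would be recomputed from the reaction database after every experiment, so a route's ranking can change as new intermediates are observed.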

Troubleshooting

- Slow Kinetics: If a target fails due to low driving force (<50 meV/atom) in a reaction step, the active learning algorithm should prioritize precursor sets that form intermediates with a larger driving force to the target [9].

- Amorphization: If the XRD pattern indicates an amorphous product, the algorithm may propose alternative thermal profiles (e.g., different heating rates or cooling procedures).

Workflow Visualization

The following diagram illustrates the integrated, iterative process of the active learning loop for autonomous materials synthesis.

Figure: Autonomous Materials Synthesis Workflow

Computational Workflow: From Data to Thermodynamic Metrics

The computational backbone of the active learning loop involves data-driven synthesis planning and the application of thermodynamic selectivity metrics to predict reaction success.

Data-Driven Synthesis Planning

A robust synthesis planning workflow leverages large-scale thermodynamic data to evaluate numerous potential reactions, as demonstrated in the synthesis of barium titanate (BaTiO₃) [7].

- Input: Define the target material (e.g., BaTiO₃) and a set of potential precursor elements.

- Reaction Enumeration: Generate all possible balanced chemical reactions between precursors to form the target. For BaTiO₃, this resulted in 82,985 possible reactions [7].

- Metric Calculation: For each reaction, calculate the Primary Competition and Secondary Competition metrics using formation energies from sources like the Materials Project [7].

- Reaction Ranking: Rank all possible synthesis reactions based on their selectivity metrics. A more negative Primary Competition value indicates a higher likelihood of the target forming.

- Experimental Validation: Select the top-ranked reactions for laboratory testing.
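The ranking step above can be sketched as a simple sort. This is a minimal illustration, assuming each enumerated reaction already carries precomputed Primary Competition and Secondary Competition values and that lower values are preferred for both, as described in this section. The `Reaction` type, the labels, and the numbers are hypothetical placeholders, not results from [7].

```python
from typing import List, NamedTuple


class Reaction(NamedTuple):
    label: str
    primary_competition: float    # more negative = target favored over competitors
    secondary_competition: float  # lower = target less prone to form side products


def rank_reactions(reactions: List[Reaction]) -> List[Reaction]:
    """Sort by Primary Competition, breaking ties with Secondary Competition."""
    return sorted(
        reactions,
        key=lambda r: (r.primary_competition, r.secondary_competition),
    )


def top_candidates(reactions: List[Reaction], n: int) -> List[str]:
    """Labels of the n best-ranked reactions, i.e. those to test in the lab first."""
    return [r.label for r in rank_reactions(reactions)[:n]]


# Made-up metric values purely for illustration.
enumerated = [
    Reaction("route A", primary_competition=0.05, secondary_competition=0.10),
    Reaction("route B", primary_competition=-0.12, secondary_competition=0.02),
    Reaction("route C", primary_competition=-0.12, secondary_competition=0.08),
    Reaction("route D", primary_competition=-0.03, secondary_competition=0.01),
]

shortlist = top_candidates(enumerated, 2)
```

A lexicographic sort is only one possible choice; weighting the two metrics or filtering on thresholds would be equally reasonable ways to build the shortlist.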

Thermodynamic Selectivity Metrics

The predictive power of the workflow hinges on two key thermodynamic metrics designed to assess the favorability of a solid-state reaction.

Table 2: Thermodynamic Selectivity Metrics for Predictive Synthesis

| Metric | Definition | Interpretation | Correlation with Experiment |
|---|---|---|---|
| Primary Competition | Measures the favorability of the target reaction versus competing reactions from the pristine precursors [7]. | A more negative value indicates a higher likelihood of the target product forming over unwanted side products. | Correlates strongly with the amount of target material formed [7]. |
| Secondary Competition | Measures the stability of the target product relative to potential side products that can form after the target is made [7]. | A lower value indicates the target is more stable and less likely to decompose into impurities. | Correlates with the amount of impurities observed in the final product [7]. |

Success in active learning-driven synthesis relies on a suite of computational and experimental resources.

Table 3: Key Resources for Data-Driven Synthesis Science

| Tool / Resource | Type | Function in Active Learning Loop | Example |
|---|---|---|---|
| Ab Initio Database | Computational | Provides thermodynamic data (formation energies) for calculating reaction driving forces and selectivity metrics [7]. | The Materials Project [9] |
| Text-Mined Synthesis Database | Data | Serves as a knowledge base for training ML models to propose initial, literature-inspired synthesis recipes [9]. | Databases mined from scientific literature [10] |
| Natural Language Processing (NLP) Model | Computational | Analyzes text-mined recipes to assess "target similarity" and suggest initial precursor sets [9]. | BiLSTM-CRF models [10] |
| Autonomous Laboratory (A-Lab) | Experimental | A robotic platform that executes the physical synthesis, characterization, and iterative optimization without human intervention [9]. | The A-Lab integrating robotics with AI [9] |
| Active Learning Algorithm | Computational | The core "brain" that uses experiment outcomes and thermodynamics to propose optimized synthesis routes after initial failures [9]. | ARROWS³ [9] |

The active learning loop represents a paradigm shift in solid-state synthesis, moving the field from a reliance on intuition and iterative trial-and-error toward a closed-loop, data-driven science. By integrating computational guidance with robotic experimentation, this approach enables the systematic and accelerated discovery of novel inorganic materials. The successful demonstration of autonomous labs synthesizing a high proportion of novel compounds underscores the maturity of this approach [9]. As thermodynamic and kinetic models continue to improve, and as text-mined datasets grow in volume and veracity, the active learning loop is poised to become the standard methodology for predictive synthesis, ultimately accelerating the realization of next-generation materials for technology and society.

Active learning (AL) has emerged as a transformative methodology for accelerating research in data-intensive fields like solid-state synthesis and drug discovery. By strategically selecting the most informative data points for experimental labeling, AL optimizes the use of costly resources and reduces the number of experiments required to achieve research objectives [3]. This protocol focuses on three core principles underpinning effective AL strategies: Uncertainty Sampling, which targets samples where the model's prediction is least confident; Diversity, which ensures a representative exploration of the chemical or materials space; and Expected Model Change, which selects samples that would most significantly alter the current model [11]. The high cost and time investment associated with solid-state synthesis and experimental validation in drug development make the integration of these AL principles particularly valuable for maximizing research efficiency [12] [3]. This document provides a detailed guide to their implementation, complete with quantitative benchmarks and experimental protocols.

Theoretical Foundations and Key Metrics

Uncertainty Sampling

Uncertainty sampling selects data points for which the current model's predictions are most uncertain. The core assumption is that labeling these instances will provide the maximum information to resolve model ambiguity and improve decision boundaries [13].

Key Uncertainty Measures:

- Least Confidence: Prefers instances with the lowest confidence for the most likely label. For a prediction \( p_{\theta}(y \mid \boldsymbol{x}) \), the score is \( 1 - \max_{y} p_{\theta}(y \mid \boldsymbol{x}) \) [13].

- Margin of Confidence: Focuses on the difference between the two most confident predictions, \( p_{\theta}(y_m \mid \boldsymbol{x}) - p_{\theta}(y_n \mid \boldsymbol{x}) \), where \( y_m \) and \( y_n \) are the first and second most probable classes. A smaller margin indicates higher uncertainty [13].

- Entropy: Measures the average information content, favoring predictions where the probability distribution across classes is most uniform: \( - \sum_{y} p_{\theta}(y \mid \boldsymbol{x}) \log p_{\theta}(y \mid \boldsymbol{x}) \) [13].

- Epistemic vs. Aleatoric Uncertainty: Advanced frameworks distinguish between uncertainty arising from the model's lack of knowledge (epistemic, reducible) and inherent data noise (aleatoric, irreducible). For active learning, targeting points with high epistemic uncertainty is often more effective for model improvement [13].
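The first three measures above can be written in a few lines. The sketch below assumes a classifier that outputs a probability vector per candidate; the helper names and the example probabilities are illustrative. The margin is converted to `1 - margin` so that, for all three scores, a larger value means a more uncertain prediction.

```python
import math
from typing import Callable, List, Sequence


def least_confidence(probs: Sequence[float]) -> float:
    """1 - max_y p(y | x): high when even the top class is unconvincing."""
    return 1.0 - max(probs)


def margin_score(probs: Sequence[float]) -> float:
    """1 - (p_top1 - p_top2): high when the two best classes are close."""
    top = sorted(probs, reverse=True)
    return 1.0 - (top[0] - top[1])


def entropy(probs: Sequence[float]) -> float:
    """-sum_y p log p, skipping zero-probability classes."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def most_uncertain(
    pool_probs: List[Sequence[float]],
    score: Callable[[Sequence[float]], float],
    k: int = 1,
) -> List[int]:
    """Indices of the k pool items with the highest uncertainty score."""
    ranked = sorted(range(len(pool_probs)), key=lambda i: score(pool_probs[i]), reverse=True)
    return ranked[:k]


# Made-up class probabilities for three unlabeled candidates.
pool = [
    [0.90, 0.05, 0.05],
    [0.40, 0.35, 0.25],
    [0.60, 0.30, 0.10],
]
query = most_uncertain(pool, entropy, k=1)
```

For regression tasks such as yield prediction, the analogous quantity is usually the predictive variance of the surrogate rather than a class-probability score.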

Diversity

Diversity-based selection aims to construct a batch of data points that collectively provide broad coverage of the input space. This prevents the model from over-exploiting local regions and helps in building a robust, generalizable model.

Common Techniques:

- K-Means Clustering: Uses clustering in the feature space to select a diverse set of instances from different clusters [14].

- Core-Set Approaches: Selects a subset of points such that the model trained on this subset performs similarly to one trained on the entire dataset.

- Determinant of Covariance (COVDROP/COVLAP): Novel batch active learning methods select batches that maximize the joint entropy, i.e., the log-determinant of the epistemic covariance of the batch predictions. This inherently enforces batch diversity by rejecting highly correlated samples [14].
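As a simple illustration of diversity-driven batch selection, the sketch below uses greedy k-center (farthest-point) selection, a common heuristic for core-set style coverage. It is a swapped-in stand-in chosen for brevity, not the COVDROP/COVLAP method of [14], and the feature vectors are made up.

```python
import math
from typing import List, Sequence


def k_center_greedy(
    pool: List[Sequence[float]],
    labeled: List[Sequence[float]],
    k: int,
) -> List[int]:
    """Pick k pool indices, each time taking the point farthest from everything chosen so far."""
    if not labeled:
        raise ValueError("need at least one labeled point to measure distances from")
    k = min(k, len(pool))
    # Distance from each pool point to its nearest already-covered point.
    min_dist = [min(math.dist(p, c) for c in labeled) for p in pool]
    chosen: List[int] = []
    for _ in range(k):
        idx = max(range(len(pool)), key=lambda i: min_dist[i])
        chosen.append(idx)
        # The new pick now covers its neighbourhood; shrink distances accordingly.
        for i, p in enumerate(pool):
            min_dist[i] = min(min_dist[i], math.dist(p, pool[idx]))
    return chosen


# Made-up 2D descriptors (e.g. two composition features) for illustration.
labeled_points = [(0.0, 0.0)]
candidate_pool = [(0.1, 0.0), (1.0, 0.0), (0.9, 0.1), (0.0, 1.0)]

batch = k_center_greedy(candidate_pool, labeled_points, k=2)
```

Note how the second pick avoids the candidate sitting next to the first pick: near-duplicates are skipped because they add little coverage, which is the same intuition behind rejecting highly correlated samples in determinant-based methods.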

Expected Model Change

Expected Model Change Maximization (EMCM) queries the instances that are expected to induce the most significant change in the current model parameters, typically measured by the gradient of the loss function.

Implementation:

- The utility score for an unlabeled instance \( \boldsymbol{x} \) is often the magnitude of the gradient vector that would be induced by its true label: \( \| \nabla l(\boldsymbol{x}, y; \theta) \| \) [11].

- This approach can be computationally intensive, as it requires estimating the gradient for all possible labels for each candidate point.
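For a regression model the true label is continuous, so the gradient cannot be enumerated over all labels. One common workaround, sketched here under stated assumptions, is to approximate the unknown label with the predictions of a small bootstrap committee. The sketch assumes a linear model \( f(\boldsymbol{x}) = \boldsymbol{w}^\top \boldsymbol{x} \) with squared loss \( \tfrac{1}{2}(f(\boldsymbol{x}) - y)^2 \), whose gradient with respect to \( \boldsymbol{w} \) is \( (f(\boldsymbol{x}) - y)\,\boldsymbol{x} \). All weights and inputs are illustrative.

```python
import math
from typing import List, Sequence


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(ai * bi for ai, bi in zip(a, b))


def expected_model_change(
    x: Sequence[float],
    weights: Sequence[float],
    committee: List[Sequence[float]],
) -> float:
    """Average gradient norm |f(x) - y_k| * ||x||, with y_k supplied by committee members."""
    prediction = dot(weights, x)
    x_norm = math.sqrt(dot(x, x))
    residuals = [abs(prediction - dot(w_k, x)) for w_k in committee]
    return x_norm * sum(residuals) / len(residuals)


def select_query(
    pool: List[Sequence[float]],
    weights: Sequence[float],
    committee: List[Sequence[float]],
) -> int:
    """Index of the pool point expected to change the model the most."""
    return max(
        range(len(pool)),
        key=lambda i: expected_model_change(pool[i], weights, committee),
    )


# Hypothetical current model and two committee members (e.g. bootstrap refits).
current_weights = [1.0, 0.0]
committee_weights = [[1.0, 0.0], [0.0, 1.0]]
unlabeled = [[1.0, 1.0], [2.0, 0.0], [0.5, 0.0]]

best_index = select_query(unlabeled, current_weights, committee_weights)
```

Points on which the committee agrees with the current model score zero, while points that are both far from the origin and contested by the committee score highest.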

Quantitative Benchmarking of AL Strategies

The following table summarizes performance data from a comprehensive benchmark study evaluating various AL strategies within an Automated Machine Learning (AutoML) framework on materials science regression tasks [11].

Table 1: Benchmark Performance of Active Learning Strategies in Materials Science

| Strategy Category | Example Methods | Key Characteristics | Early-Stage Performance (Data-Scarce) | Late-Stage Performance |
|---|---|---|---|---|
| Uncertainty-Driven | LCMD, Tree-based-R | Selects points with highest predictive uncertainty | Clearly outperforms random sampling baseline | Performance gap narrows; converges with other methods |
| Diversity-Hybrid | RD-GS | Combines representativeness and diversity (e.g., via determinantal point processes) | Clearly outperforms baseline | Performance gap narrows; converges with other methods |
| Geometry-Only | GSx, EGAL | Relies on feature space geometry, ignores model uncertainty | Underperforms uncertainty and hybrid methods | Converges with other methods |
| Baseline | Random-Sampling | Random selection of data points | Lower accuracy and data efficiency | Serves as convergence reference |

The benchmark concluded that in the early, data-scarce phase of an AL cycle, uncertainty-driven and diversity-hybrid strategies provide the most significant performance gains, substantially improving model accuracy with fewer labeled samples. As the labeled set grows, the relative advantage of specific AL strategies diminishes [11].

Experimental Protocols

This section provides detailed protocols for implementing active learning in a research pipeline, such as solid-state synthesis or molecular optimization.

Protocol 1: General Pool-Based Active Learning Workflow

Objective: To iteratively improve a predictive model by selectively labeling the most informative samples from a large unlabeled pool.

Background: This is the standard setup in materials science and drug discovery where a large database of uncharacterized compounds or materials exists [12] [11].

Materials:

- Initial Labeled Set (( L )): A small set of ( \{(x_i, y_i)\}_{i=1}^{l} ) where ( y_i ) is the property of interest (e.g., mutagenicity, band gap, synthesis success).

- Unlabeled Pool (( U )): A large collection of instances ( \{x_j\} ) without labels.

- Oracle: The experimental setup (e.g., automated synthesis lab, Ames test) or expert that provides the true label ( y_j ) for a given ( x_j ).

- Model: A trainable machine learning model (e.g., Graph Neural Network, Random Forest).

Procedure:

- Initialization: Begin with a small, randomly selected initial labeled dataset ( L ) and a large unlabeled pool ( U ).

- Model Training: Train the predictive model on the current labeled set ( L ).

- Candidate Scoring: Use the trained model to score all instances in ( U ) based on a chosen AL strategy (e.g., uncertainty, diversity, expected model change).

- Batch Selection: Select the top-( k ) instances with the highest utility scores to form a batch ( B ). For pure uncertainty sampling, this is the top-( k ) most uncertain. For diversity, use a method like COVDROP [14].

- Oracle Querying & Labeling: Present the batch ( B ) to the oracle for labeling. In a research context, this involves synthesizing and characterizing the selected materials or compounds [12] [15].

- Dataset Update: Remove the newly labeled instances ( \{(x^*, y^*)\} ) from ( U ) and add them to ( L ).

- Iteration: Repeat the cycle from Model Training through Dataset Update (steps 2-6) until a predefined stopping criterion is met (e.g., performance target achieved, experimental budget exhausted).
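The procedure above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the setup used in the cited studies: the `oracle` function is a synthetic stand-in for an experiment, the model is a bootstrap ensemble of ridge-regularized cubic polynomials, and the spread of the ensemble predictions serves as the uncertainty score. All function names and parameter values are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

def oracle(x):
    """Hypothetical stand-in for an experiment: a noisy 1-D response."""
    return np.sin(3.0 * x) + 0.05 * rng.standard_normal(x.shape)

def fit_ensemble(x, y, n_models=20, degree=3, ridge=1e-3):
    """Fit ridge-regularized polynomials to bootstrap resamples of the labeled set."""
    weights = []
    for _ in range(n_models):
        idx = rng.integers(0, len(x), size=len(x))       # bootstrap resample
        X = np.vander(x[idx], degree + 1)
        A = X.T @ X + ridge * np.eye(degree + 1)
        weights.append(np.linalg.solve(A, X.T @ y[idx]))
    return np.array(weights)                              # (n_models, degree + 1)

def predict(weights, x):
    """Return ensemble mean and standard deviation at the points x."""
    preds = np.vander(x, weights.shape[1]) @ weights.T    # (n_points, n_models)
    return preds.mean(axis=1), preds.std(axis=1)

def run_active_learning(pool_x, n_init=6, batch_size=3, n_rounds=5):
    order = rng.permutation(len(pool_x))
    labeled, unlabeled = order[:n_init], order[n_init:]   # initialization
    y_labeled = oracle(pool_x[labeled])
    for _ in range(n_rounds):
        weights = fit_ensemble(pool_x[labeled], y_labeled)    # model training
        _, std = predict(weights, pool_x[unlabeled])          # candidate scoring
        top = np.argsort(std)[-batch_size:]                   # top-k most uncertain
        batch = unlabeled[top]
        y_new = oracle(pool_x[batch])                         # oracle query
        labeled = np.concatenate([labeled, batch])            # dataset update
        y_labeled = np.concatenate([y_labeled, y_new])
        unlabeled = np.delete(unlabeled, top)
    return labeled, unlabeled

pool = np.linspace(-1.0, 1.0, 60)
labeled, unlabeled = run_active_learning(pool)
```

In a real campaign the stopping criterion would be a performance target or budget rather than a fixed number of rounds, and the scoring function would be whichever AL strategy is under study.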

Protocol 2: Implementing Uncertainty Sampling for Molecular Mutagenicity Prediction

Objective: To reduce the number of training molecules required for accurate mutagenicity prediction by actively selecting uncertain samples.

Background: Experimental mutagenicity testing (e.g., Ames test) is time-consuming and costly. The muTOX-AL framework demonstrates the efficacy of this approach [12].

Materials:

- Dataset: A large chemical database (e.g., TOXRIC with 7495 compounds) [12].

- Feature Extraction: Molecular fingerprints and descriptors.

- Model Architecture: A deep learning framework with a backbone prediction module and an uncertainty estimation module.

Procedure:

- Data Preparation: Split the dataset into an initial labeled pool (e.g., 200 random samples) and an unlabeled pool (the remainder). Perform five-fold cross-validation.

- Model and Uncertainty Setup: Implement a model that can provide predictive probabilities. The entropy of this distribution, ( -\sum_{y} p_\theta(y|\boldsymbol{x}) \log p_\theta(y|\boldsymbol{x}) ), can be used as the uncertainty measure [12] [13].

- Active Learning Cycle:

  - Train the model on the current labeled pool.

  - Use the trained model to predict and calculate the uncertainty score for every molecule in the unlabeled pool.

  - Rank the unlabeled molecules by their uncertainty score (highest to lowest).

  - Select the top-( k ) most uncertain molecules, query their "oracle" labels (simulated from the held-out data), and add them to the training set.

- Evaluation: Monitor the model's prediction accuracy on a fixed test set after each AL cycle.

- Outcome: The muTOX-AL study achieved a 57% reduction in the number of training molecules needed compared to random sampling, demonstrating high data efficiency [12].
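The ranking step of this protocol can be illustrated with a short, dependency-free sketch. It is not the muTOX-AL implementation: the probability pairs below are made-up model outputs, and the function names are hypothetical. It only shows how predictive entropy turns class probabilities into a query order.

```python
import math

def predictive_entropy(probs):
    """Shannon entropy (in nats) of one predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)

def select_most_uncertain(pool_probs, k):
    """Return indices of the k pool items with the highest predictive entropy."""
    scores = [predictive_entropy(p) for p in pool_probs]
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return ranked[:k]

# Hypothetical (P(non-mutagenic), P(mutagenic)) outputs for five unlabeled molecules.
pool_probs = [(0.98, 0.02), (0.55, 0.45), (0.10, 0.90), (0.50, 0.50), (0.70, 0.30)]
query = select_most_uncertain(pool_probs, k=2)   # indices 3 and 1: closest to 50/50
```

Molecules whose predicted probabilities sit near 0.5 carry the highest entropy, so they are queried first; confidently classified molecules are left in the pool.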

Protocol 3: Batch Active Learning for Drug Discovery with Diversity

Objective: To select optimal batches of molecules for testing in ADMET or affinity prediction tasks, balancing uncertainty and diversity.

Background: In industrial drug discovery, testing is performed in batches. This protocol is based on the COVDROP/COVLAP methods [14].

Materials:

- Datasets: ADMET/affinity datasets (e.g., cell permeability, aqueous solubility, lipophilicity).

- Model: A deep learning model (e.g., Graph Neural Network) capable of providing predictive uncertainty, for instance via Monte Carlo Dropout (COVDROP) or Laplace Approximation (COVLAP).

Procedure:

- Uncertainty Quantification: For each molecule in the unlabeled pool, compute the predictive uncertainty. With MC Dropout, this involves multiple stochastic forward passes to estimate the variance of the predictions [14].

- Covariance Matrix Computation: Compute an epistemic covariance matrix ( C ) between the predictions for all unlabeled samples. This matrix captures both the individual uncertainties (variances) and the correlations (covariances) between samples.

- Greedy Batch Selection: Select a batch ( B \subset U ) whose covariance submatrix ( C_B ), of size ( |B| \times |B| ) (where ( |B| ) is the batch size), has the maximal log-determinant: ( \operatorname{argmax}_{B \subset U} \log \det(C_B) ). This step simultaneously maximizes the joint information (uncertainty) and diversity (by penalizing high correlations within the batch) [14].

- Experimental Testing: Synthesize and test the selected batch of molecules to obtain their experimental property values.

- Iteration: Retrain the model with the new data and repeat.

- Outcome: This method has been shown to significantly outperform random selection and other batch selection methods such as BAIT and k-means, potentially yielding substantial savings in the number of experiments required [14].
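The covariance and greedy selection steps can be sketched with NumPy as follows. This is an illustration of the log-determinant idea only, not the published COVDROP code: the "stochastic forward passes" are simulated with random numbers rather than a dropout network, and the function names and the `jitter` term are assumptions introduced here.

```python
import numpy as np

def prediction_covariance(mc_predictions):
    """Covariance between pool items estimated from stochastic forward passes.

    mc_predictions has shape (n_passes, n_pool); each row is one pass
    (e.g., one dropout mask) over the whole unlabeled pool.
    """
    return np.cov(mc_predictions, rowvar=False)

def greedy_logdet_batch(cov, batch_size, jitter=1e-9):
    """Greedily add the item that most increases log det of the selected submatrix."""
    selected = []
    for _ in range(batch_size):
        best_item, best_value = None, -np.inf
        for i in range(cov.shape[0]):
            if i in selected:
                continue
            idx = selected + [i]
            sub = cov[np.ix_(idx, idx)] + jitter * np.eye(len(idx))
            sign, logdet = np.linalg.slogdet(sub)
            if sign > 0 and logdet > best_value:
                best_item, best_value = i, logdet
        selected.append(best_item)
    return selected

# Simulated passes: items 0 and 1 are near-duplicates, item 2 is independent.
rng = np.random.default_rng(0)
shared = rng.standard_normal(200)
mc = np.column_stack([
    2.0 * shared,                                    # item 0: high uncertainty
    2.0 * shared + 0.1 * rng.standard_normal(200),   # item 1: almost the same as item 0
    rng.standard_normal(200),                        # item 2: lower variance, uncorrelated
])
batch = greedy_logdet_batch(prediction_covariance(mc), batch_size=2)
```

A pure top-k uncertainty rule would pick the two near-duplicates here. The log-determinant criterion instead pairs one of them with the uncorrelated item, because a highly correlated pair makes the submatrix nearly singular.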

Table 2: Essential Tools for Implementing Active Learning in Experimental Research

| Item / Resource | Type | Function in Active Learning Workflow | Example Use Case |

|---|---|---|---|

| AutoML Platforms | Software | Automates model selection and hyperparameter tuning, ensuring the underlying surrogate model in AL is always optimized [11]. | General materials property prediction regression/classification tasks. |

| DeepChem Library | Software | Provides an open-source toolkit for deep learning in drug discovery, chemistry, and materials science, offering implementations of various models [14]. | Building graph neural network models for molecular property prediction. |

| Monte Carlo Dropout | Algorithm | A practical technique for estimating model (epistemic) uncertainty with neural networks without changing the model architecture [14] [11]. | Uncertainty estimation in COVDROP batch active learning method. |

| Determinantal Point Processes (DPPs) | Algorithm | A probabilistic model that provides a mathematically elegant way to select a diverse subset of items from a larger set [14]. | Promoting diversity in batch selection. |

| TOXRIC Dataset | Data | A balanced, public dataset of compounds with mutagenicity labels, useful for benchmarking AL strategies in toxicology prediction [12]. | Training and evaluating models for molecular mutagenicity prediction. |

| Autonomous Laboratory (A-Lab) | Hardware/Software | A fully integrated platform that uses AI and robotics to execute solid-state synthesis and characterization, closing the AL loop physically [15]. | Autonomous synthesis and testing of target inorganic materials. |

Workflow and Decision Diagrams

Core Active Learning Workflow for Solid-State Synthesis

Algorithm Selection Logic for Active Learning Strategies

The Synergy Between AI, Robotics, and Active Learning in Autonomous Labs

Application Notes: Core Principles and System Architectures

The integration of artificial intelligence (AI), robotics, and active learning is forging a new paradigm in materials research through autonomous laboratories, or "self-driving labs". These systems function as a continuous, closed-loop cycle that minimizes human intervention and dramatically accelerates experimental throughput [15]. This paradigm shift is particularly impactful for solid-state synthesis, where traditional trial-and-error approaches are notoriously time-consuming and resource-intensive [16].

The Autonomous Workflow Cycle

At its core, an autonomous laboratory operates on a "reading-doing-thinking" framework [16]. The cycle begins with AI-driven experimental planning, where models trained on vast literature databases and theoretical calculations propose initial synthesis targets and recipes. Robotic systems then execute the hands-off synthesis, handling tasks from precursor dispensing and mixing to high-temperature reactions. Finally, automated data analysis and interpretation, such as phase identification from X-ray diffraction (XRD) patterns, feeds results back to the AI, which uses active learning to plan the next, more informed experiment [15] [5]. This loop compresses work that once took months into campaigns that run continuously for days or weeks: the A-Lab synthesized 41 novel inorganic compounds over 17 days of uninterrupted operation [5].

The Role of Active Learning and AI

Active learning (AL) is the intellectual engine of this process. Under constrained resources, AL algorithms identify and prioritize experiments that are most informative for improving the model, thereby reducing redundant tests and maximizing the knowledge gained from each experiment [3]. In practice, this often involves Bayesian optimization to navigate complex parameter spaces [3]. For solid-state synthesis, AI's role is multifaceted: it powers natural-language models for recipe generation from historical data, computer vision models for analyzing characterization data, and decision-making algorithms for iterative optimization [15] [5]. The ARROWS³ algorithm, for instance, uses active learning grounded in thermodynamics to improve synthesis routes by avoiding intermediates with low driving forces to form the target material [5].

Emerging Architectures: LLMs and Embodied AI

Recent advances are introducing more sophisticated "brains" for autonomous labs. Large Language Model (LLM)-based agents like Coscientist and ChemCrow have demonstrated the ability to autonomously design, plan, and execute complex chemical experiments by leveraging tool-using capabilities [15]. Simultaneously, research into embodied intelligence suggests that AI models which learn by interacting with the physical world—integrating vision, proprioception, and language—can develop more robust and generalizable understanding, akin to how a child learns [17]. This approach could lead to AI that better handles the unpredictable nature of real-world laboratory experiments.

Experimental Protocols

Objective: To autonomously synthesize novel, predicted-in-advance inorganic powder materials and optimize their synthesis recipes using an integrated AI and robotics platform.

Materials:

- Precursors: High-purity solid powder precursors (e.g., metal oxides, carbonates, phosphates).

- Crucibles: Alumina crucibles.

- Robotic Platforms: Integrated stations for powder dispensing, mixing, heat treatment, and XRD characterization.

Methodology:

Target Identification:

- Input: Use large-scale ab initio phase-stability data from sources like the Materials Project to identify novel, theoretically stable, and air-stable target materials.

Initial Recipe Generation:

- Utilize natural-language processing models trained on text-mined synthesis literature to propose initial solid-state synthesis recipes based on analogy to known, similar materials.

- A second ML model, trained on literature heating data, proposes an initial synthesis temperature.

Robotic Synthesis Execution:

- A robotic arm transports an empty alumina crucible to a powder dispensing station.

- Automated dispensers weigh and mix precursor powders according to the generated recipe.

- The mixture is transferred into the crucible.

- A second robotic arm loads the crucible into one of four box furnaces for heat treatment according to the specified temperature program.

- After heating, the sample is cooled automatically.

Automated Product Characterization and Analysis:

- A robot transfers the cooled sample to a grinding station to create a fine powder for analysis.

- The powder is characterized by X-ray diffraction (XRD).

- The XRD pattern is analyzed in two consecutive steps:

  - A probabilistic deep learning model (e.g., a convolutional neural network) provides an initial phase identification and weight fraction estimation.

  - An automated Rietveld refinement confirms the phases and refines the quantitative phase analysis.

Active Learning and Iteration:

- If the target yield is >50%, the experiment is deemed successful.

- If the yield is low or the target is not formed, an active learning algorithm (e.g., ARROWS³) takes over.

- The algorithm integrates the observed reaction pathway (e.g., identified intermediates) with computed thermodynamic data (e.g., driving forces from the Materials Project) to propose a new, improved set of precursors or reaction conditions.

- Robotic synthesis, automated characterization, and active learning (steps 3-5) are repeated until the target is successfully synthesized or a predetermined number of iterations is exhausted.

Key Considerations:

- Failure Analysis: Common failure modes include slow reaction kinetics (often linked to low driving forces <50 meV per atom), precursor volatility, and amorphization.

- Database Building: The system continuously builds a database of observed pairwise reactions, which removes the need to re-test known pathways and shrinks the effective search space.
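The decision logic of this protocol (success threshold, iteration budget, and reuse of observed pairwise reactions) can be sketched as below. This is a deliberately simplified illustration and not the ARROWS³ algorithm: the function names, the route representation, and the placeholder precursor labels and driving forces are all hypothetical. Only the 50% yield criterion and the 50 meV per atom threshold come from the text above.

```python
SUCCESS_WEIGHT_FRACTION = 0.50   # target must exceed this fraction of the product
LOW_DRIVING_FORCE = 50.0         # meV per atom, threshold quoted above

def next_action(target_fraction, attempts_made, max_attempts):
    """Decide what the loop does after one characterized experiment."""
    if target_fraction > SUCCESS_WEIGHT_FRACTION:
        return "success"
    if attempts_made >= max_attempts:
        return "stop"
    return "propose_new_recipe"

def prune_routes(candidate_routes, pairwise_db):
    """Keep routes with no step already observed to leave a low driving force.

    candidate_routes: list of routes, each a list of (reactant, reactant) pairs.
    pairwise_db: dict mapping frozenset({a, b}) to the driving force (meV per atom)
        remaining toward the target after that pair has reacted.
    """
    kept = []
    for route in candidate_routes:
        observed = [pairwise_db[frozenset(p)] for p in route if frozenset(p) in pairwise_db]
        if all(force >= LOW_DRIVING_FORCE for force in observed):
            kept.append(route)
    return kept

# Placeholder precursor labels and driving forces, for illustration only.
pairwise_db = {frozenset({"A", "B"}): 20.0, frozenset({"A", "C"}): 120.0}
routes = [[("A", "B"), ("B", "C")], [("A", "C"), ("C", "D")]]
remaining = prune_routes(routes, pairwise_db)   # the route through A + B is dropped
```

Because every observed pair is stored once and consulted for all later candidates, each failed experiment can eliminate several untested routes at the same time.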

Objective: To use a large language model (LLM) agent to autonomously design, plan, and execute a chemical synthesis.

Materials:

- LLM Agent: A central LLM (e.g., GPT-4) equipped with tool-using capabilities.

- Software Tools: Access to web search, document retrieval, code execution, and robotic instrument control APIs.

- Robotic Liquid-Handling System: An automated synthesizer (e.g., Chemspeed ISynth).

- Analytical Instruments: Integrated systems like UPLC-MS and benchtop NMR.

Methodology:

Task Interpretation:

- The user provides a high-level goal (e.g., "synthesize an insect repellent" or "optimize a palladium-catalyzed cross-coupling reaction").

- The LLM agent parses the instruction and breaks it down into sub-tasks.

Research and Planning:

- The agent uses its web search and document retrieval tools to gather relevant literature on the target molecule or reaction.

- It analyzes the retrieved information to propose a viable synthetic route, including precursor selection and reaction conditions.

Code Generation for Automation:

- The agent generates the necessary code to control the robotic liquid-handling system and other laboratory instruments to execute the planned synthesis.

Execution and Monitoring:

- The generated code is executed, and the robotic system performs the synthesis steps (dispensing, mixing, heating, etc.).

- The agent can trigger analytical instruments (e.g., UPLC-MS) to monitor reaction progress.

Analysis and Iteration:

- The agent interprets the analytical data (e.g., MS and NMR spectra) using heuristic rules or additional models.

- Based on the outcome, it can decide the next steps, such as scaling up, functional testing, or re-optimizing the reaction.

Key Considerations:

- Hallucination Mitigation: LLMs may generate plausible but incorrect information. Robust fact-checking against known databases and human oversight are critical for safety.

- Tool Reliability: The performance is dependent on the reliability and breadth of the tools available to the LLM agent.

Data Presentation

Table 1: Performance Metrics of Selected Autonomous Laboratories

| System / Lab Name | Primary Focus | Key AI/Robotic Technologies | Experimental Output | Key Outcome / Success Rate | Reference |

|---|---|---|---|---|---|

| A-Lab | Solid-state synthesis of inorganic powders | NLP for recipe generation, Robotic arms for powder handling, ML for XRD analysis, ARROWS³ for active learning | 58 targets attempted over 17 days | 41/58 (71%) novel compounds synthesized | [5] |

| Coscientist | Organic synthesis & optimization | LLM (GPT-4) with tool use, Automated liquid handling, Code generation | Optimization of Pd-catalyzed cross-couplings | Successful planning & execution of complex organic synthesis tasks | [15] |

| Modular Platform (Dai et al.) | Exploratory synthetic chemistry | Mobile robots, Heuristic reaction planner, Integrated UPLC-MS/NMR | Multi-day campaigns for reaction discovery | Accelerated discovery in supramolecular chemistry & photocatalysis | [15] |

| PU Learning Model (Chung et al.) | Predicting synthesizability of ternary oxides | Positive-Unlabeled (PU) Learning, Human-curated dataset | Evaluation of 4,312 hypothetical compositions | 134 compositions predicted as synthesizable | [18] |

Table 2: The Scientist's Toolkit: Key Research Reagent Solutions for Autonomous Solid-State Synthesis

| Item / Category | Function & Description | Example Use in Protocol |

|---|---|---|

| Precursor Powders | High-purity, fine-grained powders of metal oxides, carbonates, etc., that serve as the starting materials for solid-state reactions. | Robotic systems automatically weigh and mix these according to the AI-generated recipe. [5] |

| Ab Initio Databases | Computational databases (e.g., Materials Project) providing thermodynamic data used for target selection and active learning. | Used to identify stable target materials and compute driving forces for reaction optimization in ARROWS³. [5] |

| Text-Mined Synthesis Datasets | Large datasets of historical synthesis procedures extracted from scientific literature using Natural Language Processing (NLP). | Trains the NLP models that generate the initial, literature-inspired synthesis recipes. [15] [5] |

| Active Learning Algorithm (e.g., ARROWS³) | The optimization engine that uses experimental results and thermodynamic data to propose improved synthesis routes. | Takes over after a failed synthesis, proposing new precursor sets to avoid low-driving-force intermediates. [5] |

| Machine Learning Models for XRD | Models trained to identify crystalline phases and estimate their weight fractions from raw XRD diffraction patterns. | Provides rapid, automated analysis of synthesis products, feeding results directly back to the AI planner. [15] [5] |

Workflow and System Visualization

Autonomous Laboratory Closed-Loop Workflow

Active Learning Cycle for Synthesis Optimization

Implementing Active Learning: From Bayesian Optimization to Real-World Synthesis

The acceleration of materials discovery, particularly in solid-state synthesis, is a cornerstone of modern technological advancement. Within this domain, Gaussian Process Regression (GPR) and Random Forests (RF) have emerged as two pivotal machine learning algorithms that enable researchers to navigate complex experimental spaces efficiently. These algorithms are particularly powerful when integrated into active learning (AL) frameworks, which strategically select the most informative experiments to perform, thereby minimizing costly trial-and-error approaches. Active learning addresses a fundamental challenge in materials science: the high resource cost of experiments and simulations, which creates a bottleneck in the discovery pipeline [3]. By iteratively selecting data points that maximize information gain, AL enables more efficient exploration of synthesis possibilities. GPR and RF serve as the computational engines within these frameworks, providing the predictive capabilities and uncertainty quantification necessary for intelligent experiment selection. Their application spans various materials domains, from lithium-ion battery electrodes to solid-state electrolytes and inorganic powders, demonstrating versatility in addressing diverse synthesis prediction challenges [19] [20] [5].

Algorithm Fundamentals and Comparative Analysis

Gaussian Process Regression (GPR)

Gaussian Process Regression is a non-parametric, Bayesian approach to regression that provides not only predictions but also well-calibrated uncertainty estimates for those predictions. This dual capability makes it particularly valuable for synthesis prediction tasks where understanding prediction confidence is crucial for decision-making. Fundamentally, a Gaussian process defines a distribution over functions, where any finite set of function values has a joint Gaussian distribution. This is completely specified by its mean function ( m(\mathbf{x}) ) and covariance function ( k(\mathbf{x}, \mathbf{x}') ), often referred to as the kernel [21].

The kernel function encodes assumptions about the function's properties, such as smoothness and periodicity. For synthesis prediction, the Matérn kernel is often preferred over the squared exponential kernel as it accommodates moderately rough functions that commonly appear in materials science data. The predictive distribution of GPR for a new input ( \mathbf{x}_* ) is Gaussian with closed-form expressions for the mean ( \mu(\mathbf{x}_*) ) and variance ( \sigma^2(\mathbf{x}_*) ). The variance provides a natural measure of uncertainty that active learning algorithms can exploit to select experiments where the model is least confident [21] [22].
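For reference, the closed-form expressions take the following standard textbook form. The notation beyond ( m ) and ( k ) is introduced here as an assumption: a zero prior mean ( m(\mathbf{x}) = 0 ), training inputs ( X ) with targets ( \mathbf{y} ), the kernel matrix ( K = k(X, X) ), the vector ( \mathbf{k}_* = k(X, \mathbf{x}_*) ), and independent Gaussian observation noise of variance ( \sigma_n^2 ).

```latex
\mu(\mathbf{x}_*) = \mathbf{k}_*^{\top} \left( K + \sigma_n^2 I \right)^{-1} \mathbf{y},
\qquad
\sigma^2(\mathbf{x}_*) = k(\mathbf{x}_*, \mathbf{x}_*) - \mathbf{k}_*^{\top} \left( K + \sigma_n^2 I \right)^{-1} \mathbf{k}_*
```

The variance depends only on where the training inputs lie, not on the measured values, which is why it shrinks near existing experiments and grows in unexplored regions.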

A key advantage of GPR in synthesis prediction is its calibrated uncertainty quantification, which allows researchers to distinguish between reliable and unreliable predictions. This is particularly valuable when exploring new regions of the synthesis space where training data is sparse. Additionally, GPR's Bayesian foundation provides a principled framework for incorporating prior knowledge, which can be crucial when historical data is limited [21].

Random Forests (RF)

Random Forests are an ensemble learning method that operates by constructing multiple decision trees during training and outputting the mean prediction (for regression) of the individual trees. For synthesis prediction tasks, RF builds multiple decorrelated trees through bootstrap aggregating (bagging) and random feature selection. Each tree is grown on a bootstrap sample of the training data, and at each split, a random subset of features is considered as candidates [19].

The RF algorithm provides implicit uncertainty estimates through the variance of predictions across individual trees in the forest. While not probabilistic in the Bayesian sense like GPR, this variance has proven effective for guiding active learning in many materials science applications. RF is particularly robust to noisy features and can naturally handle mixed data types (continuous and categorical), which often appear in synthesis recipes where precursors and processing conditions may be represented differently [19].

An important capability of RF for synthesis optimization is its native support for feature importance analysis. By tracking how much each feature reduces impurity across all trees, RF can identify which synthesis parameters (e.g., temperature, doping concentration, precursor properties) most significantly impact the target property. This provides valuable scientific insights beyond mere prediction [19].
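Both ideas (tree-to-tree spread as an uncertainty proxy, and impurity-based feature importance) can be illustrated with scikit-learn. The sketch below uses synthetic data in which only the first two of three descriptors influence the target. The data, the variable names, and the hyperparameters are assumptions made for illustration and do not come from the cited study.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

rng = np.random.default_rng(0)

# Synthetic stand-in for synthesis data: three descriptors, the third is irrelevant.
X = rng.uniform(0.0, 1.0, size=(200, 3))
y = 3.0 * X[:, 0] + np.sin(4.0 * X[:, 1]) + 0.05 * rng.standard_normal(200)

forest = RandomForestRegressor(n_estimators=200, random_state=0).fit(X, y)

# Uncertainty proxy: spread of the individual tree predictions at new points.
X_new = rng.uniform(0.0, 1.0, size=(5, 3))
per_tree = np.stack([tree.predict(X_new) for tree in forest.estimators_])  # (n_trees, n_points)
mean_pred = per_tree.mean(axis=0)   # matches forest.predict(X_new)
tree_std = per_tree.std(axis=0)     # larger values flag less certain predictions

# Impurity-based importances, normalized to sum to one.
importances = forest.feature_importances_
```

The tree spread is a heuristic rather than a calibrated probability, so it is best used for ranking candidates within one model rather than as an absolute error bar.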

Quantitative Performance Comparison

Table 1: Comparative performance of GPR and Random Forests for synthesis prediction tasks

| Performance Metric | Gaussian Process Regression | Random Forests |

|---|---|---|

| Uncertainty Quantification | Native probabilistic uncertainty with confidence intervals [21] | Implicit via tree prediction variance [19] |

| Handling High Dimensions | Struggles with very high-dimensional data (>100 features) | Performs well even with hundreds of features [19] |

| Data Efficiency | Highly data-efficient; works well with small datasets [21] | Requires more data to build stable ensembles [19] |

| Computational Scaling | O(n³) for training; challenging with >10,000 points [21] | Roughly O(n log n) training cost per tree; handles large datasets [19] |

| Implementation in Active Learning | Superior in uncertainty-based sampling schemes [21] | Effective in diversity and uncertainty hybrid schemes [19] |

| Interpretability | Black box; limited interpretability | Feature importance scores provide interpretability [19] |

Table 2: Experimental results from synthesis prediction case studies

| Application Domain | Algorithm Used | Key Performance Metrics | Reference |

|---|---|---|---|

| Co-doped LiFePO₄/C cathode | Random Forest & GPR | RF demonstrated superior predictive power for specific discharge capacity [19] | [19] |

| Pharmaceutical dissolution | Gaussian Process Regression | Higher fidelity predictions than polynomial models with same data [21] | [21] |

| Solid-state synthesis of novel inorganic powders | Active learning (ARROWS³) | 41 novel compounds synthesized from 58 targets in 17 days [5] | [5] |

| Solid-state synthesizability prediction | Positive-unlabeled learning | 134 of 4312 hypothetical compositions predicted synthesizable [18] | [18] |

Experimental Protocols for Synthesis Prediction

Protocol 1: Developing a GPR Model for Synthesis Optimization

Purpose: To create a Gaussian Process Regression model for predicting synthesis outcomes and guiding experimental optimization through active learning.

Materials and Data Requirements:

- Input Features: Intrinsic and extrinsic characteristics of synthesis parameters (e.g., atomic number, valence, ionic radii, electronegativity, processing conditions) [19]

- Output/Target Variable: Measurable synthesis outcome (e.g., specific discharge capacity, phase purity, yield) [19]

- Software: Python with scikit-learn, GPy, or GPflow libraries [21]

Procedure:

- Feature Selection: Analyze Pearson correlation coefficients between potential input features and target variable to identify the most relevant predictors [19].

- Data Preprocessing: Normalize all features to zero mean and unit variance. For compositional data, consider domain-specific representations (e.g., orbital field matrix, Magpie features).

- Kernel Selection: Begin with a Matérn kernel (ν=3/2 or ν=5/2) which is less smooth than the radial basis function (RBF) kernel but often more appropriate for materials data [21].

- Model Training: Optimize kernel hyperparameters by maximizing the log marginal likelihood using gradient-based optimization (e.g., L-BFGS-B).

- Uncertainty Calibration: Validate uncertainty estimates using calibration curves on held-out test data.

- Active Learning Integration: Use the posterior predictive distribution to compute acquisition functions (e.g., expected improvement, upper confidence bound) for selecting next experiments [21] [22].
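A compact NumPy sketch of the last steps is given below: a GP posterior with a Matérn 5/2 kernel, followed by the two acquisition functions named above. It is a simplified illustration under stated assumptions: inputs are one-dimensional, the kernel hyperparameters (`length_scale`, `variance`, `noise`) are fixed by hand instead of being optimized through the marginal likelihood, and the three training points are made up.

```python
import math
import numpy as np

def matern52(a, b, length_scale=0.3, variance=1.0):
    """Matérn 5/2 kernel between 1-D input arrays a and b."""
    s = math.sqrt(5.0) * np.abs(a[:, None] - b[None, :]) / length_scale
    return variance * (1.0 + s + s**2 / 3.0) * np.exp(-s)

def gp_posterior(x_train, y_train, x_query, noise=1e-4):
    """Zero-mean GP posterior mean and standard deviation at x_query."""
    K = matern52(x_train, x_train) + noise * np.eye(len(x_train))
    K_s = matern52(x_train, x_query)
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))
    v = np.linalg.solve(L, K_s)
    var = np.diag(matern52(x_query, x_query)) - np.sum(v**2, axis=0)
    return K_s.T @ alpha, np.sqrt(np.maximum(var, 0.0))

def upper_confidence_bound(mean, std, kappa=2.0):
    """UCB for maximization: larger kappa favors exploration."""
    return mean + kappa * std

def expected_improvement(mean, std, best_so_far):
    """Expected improvement over the best observed value (maximization)."""
    std = np.maximum(std, 1e-12)
    z = (mean - best_so_far) / std
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    return (mean - best_so_far) * cdf + std * pdf

# Made-up observations of a normalized synthesis outcome versus one scaled parameter.
x_train = np.array([0.1, 0.4, 0.9])
y_train = np.array([0.2, 0.8, 0.3])
x_query = np.linspace(0.0, 1.0, 101)

mean, std = gp_posterior(x_train, y_train, x_query)
next_ucb = x_query[np.argmax(upper_confidence_bound(mean, std))]
next_ei = x_query[np.argmax(expected_improvement(mean, std, y_train.max()))]
```

In practice a library such as scikit-learn, GPy, or GPflow would handle hyperparameter optimization and multi-dimensional inputs; the hand-written version is only meant to make the acquisition step explicit.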

Troubleshooting Tips:

- For convergence issues, try adding a white noise kernel to account for measurement error.

- If training is too slow, consider sparse variational GPR approximations for datasets exceeding 10,000 points.

- For poor performance, experiment with composite kernels (e.g., linear + periodic) to capture complex feature interactions.

Protocol 2: Random Forest for Dopant Synergy Analysis

Purpose: To develop a Random Forest model for predicting synergistic effects of co-dopants in solid-state materials.

Materials and Data Requirements:

- Dataset: Historical synthesis data with single-element and co-doping results [19]

- Feature Set: Atomic properties of dopants, processing conditions, characterization results [19]

- Software: Python with scikit-learn or R with randomForest package

Procedure:

- Data Compilation: Create a dataset encompassing information on doped structures, including both singular and co-doped elements. Include various intrinsic and extrinsic characteristics [19].

- Feature Engineering: Compute pairwise interaction terms between dopant properties to explicitly capture potential synergistic effects.

- Model Training: Train RF with 100-500 trees, using out-of-bag error to monitor convergence. Set `max_features` to `sqrt(n_features)` for regression tasks.

- Validation: Use k-fold cross-validation (k=5-10) to assess model performance and prevent overfitting.

- Synergy Analysis: Compare actual specific discharge capacities of co-doped materials with expected values derived from superimposition of ML predictions to quantify synergistic effects [19].

- Feature Importance: Compute permutation importance or mean decrease in impurity to identify which atomic features most strongly influence synergistic behavior [19].
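The synergy comparison can be made concrete with a small worked example. The source does not spell out how the "superimposition" of predictions is computed, so the additive form below (baseline plus each dopant's individual predicted gain) is an assumption, as are the function names and the capacity values.

```python
def expected_codoped_capacity(baseline, pred_single_a, pred_single_b):
    """Additive expectation: baseline plus each dopant's individual predicted gain."""
    return baseline + (pred_single_a - baseline) + (pred_single_b - baseline)

def synergy(measured_codoped, baseline, pred_single_a, pred_single_b):
    """Positive values mean the co-doped sample beats the additive expectation."""
    expected = expected_codoped_capacity(baseline, pred_single_a, pred_single_b)
    return measured_codoped - expected

# Hypothetical specific discharge capacities in mAh/g, for illustration only.
s = synergy(measured_codoped=160.0, baseline=140.0,
            pred_single_a=148.0, pred_single_b=145.0)
# Expected additive value: 140 + 8 + 5 = 153, so s = 7.0
```

A positive score flags a dopant pair that performs better than its individual effects would suggest, and a negative score flags an antagonistic pair.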

Troubleshooting Tips:

- If trees are too correlated, reduce `max_features` or increase `min_samples_split`.

- For imbalanced datasets (rare high-synergy compositions), use balanced class weights or synthetic minority oversampling.

- Visualize individual tree predictions to identify potential outliers or data quality issues.

Experimental Workflow Visualization

Active Learning Workflow for Synthesis Prediction

Essential Research Reagents and Computational Tools

Table 3: Key research reagents and computational tools for ML-driven synthesis prediction

| Category | Item | Specifications/Functions | Application Examples |

|---|---|---|---|

| Data Sources | Materials Project Database | Ab initio calculation data for ~150,000 materials [18] [5] | Stability screening, precursor selection [5] |

| Data Sources | Inorganic Crystal Structure Database (ICSD) | Curated crystal structures of ~200,000 inorganic compounds [18] | Training data for synthesizability prediction [18] |

| Software Tools | scikit-learn | Python ML library with GPR and RF implementations [19] [21] | Rapid prototyping of synthesis models [19] |

| Software Tools | Gaussian Process Toolkits | GPy, GPflow for advanced GPR models [21] | Custom kernel design for materials data [21] |

| Experimental Validation | X-ray Diffraction (XRD) | Phase identification and quantification [5] | Target yield assessment in solid-state synthesis [5] |

| Experimental Validation | Electrochemical Characterization | Specific discharge capacity measurement [19] | Battery material performance validation [19] |

| Automation Systems | Autonomous Laboratory (A-Lab) | Robotic synthesis and characterization [5] | High-throughput experimental validation [5] |

Algorithm Implementation in Active Learning Cycles

The integration of GPR and RF into active learning cycles represents the most impactful application of these algorithms for synthesis prediction. The A-Lab, an autonomous laboratory for solid-state synthesis, demonstrates closed-loop active learning at scale. When initial literature-inspired recipes fail, the system hands recipe optimization to its active learning algorithm, ARROWS³ [5]. The algorithm leverages both computed reaction energies from ab initio databases and observed synthesis outcomes to predict optimal reaction pathways [5].

A key advantage of GPR in this context is its ability to quantify prediction uncertainty, which enables the implementation of upper confidence bound (UCB) acquisition functions. These functions balance exploration (testing uncertain regions) and exploitation (refining promising candidates) in the synthesis space [22]. For solid-state reactions, the A-Lab implements specialized active learning that prioritizes intermediates with large driving forces to form the target material, avoiding kinetic traps [5].

Random Forests contribute to active learning through diversity-based sampling strategies that ensure broad exploration of the compositional space. The feature importance capabilities of RF additionally help identify which synthesis parameters warrant more extensive exploration. In co-doping studies for battery materials, RF feature analysis revealed which atomic properties (electronegativity, valence, ionic radii) most significantly influenced synergistic effects [19].

The effectiveness of these approaches is demonstrated by the A-Lab's success in realizing 41 novel compounds from 58 targets over 17 days of continuous operation [5]. Similarly, RF models applied to doped LiFePO₄/C systematically identified synergistic co-dopant combinations that significantly enhanced specific discharge capacity [19]. These implementations showcase how GPR and RF, when properly integrated into active learning frameworks, can dramatically accelerate the discovery and optimization of solid-state materials.

The discovery and optimization of quinary high-entropy alloys (HEAs), which consist of five principal elements, present a significant challenge due to the vast compositional space and complex property relationships. Traditional trial-and-error approaches are impractical given the near-infinite possible combinations. This application note details a structured methodology employing active learning (AL) to efficiently navigate this complexity, enabling the targeted discovery of quinary alloys with desired properties for solid-state synthesis. Framed within a broader thesis on autonomous materials research, this protocol demonstrates how AL closes the loop between computational prediction and experimental validation, dramatically accelerating the materials development cycle.

Active learning refers to an iterative process where an algorithm selects the most informative experiments to perform, thereby building a predictive model with maximum efficiency and minimal data. In materials science, this approach is transformative, particularly when integrated with autonomous laboratories capable of executing synthesis and characterization with minimal human intervention [5] [3]. This case study leverages these advanced concepts to establish a robust, data-driven workflow for quinary alloy optimization.

Active Learning Framework for Quinary Alloys

The core of the methodology is an adaptive cycle that integrates computational design, experimental synthesis, and data analysis. The figure below illustrates this iterative workflow for optimizing quinary alloy compositions.

Workflow Overview: The process initiates with a defined target, such as achieving a single-phase microstructure or specific hardness. An initial dataset, potentially sourced from historical literature or ab initio calculations, is used to train a machine learning (ML) model. The active learning core involves the algorithm selecting the most promising composition for subsequent experimental validation. This selection is based on criteria designed to maximize information gain, such as high model uncertainty or high predicted performance. The chosen composition is then synthesized and characterized, with the results fed back into the dataset to refine the ML model for the next cycle [5] [3]. This loop continues until the target performance is achieved or the experimental budget is exhausted.
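
The loop described above can be sketched in a few lines. This is a schematic under stated assumptions, not the workflow of the cited studies: `run_experiment` is a hypothetical stand-in for synthesis and characterization, the surrogate is a deliberately crude nearest-neighbor model whose "uncertainty" is the distance to the closest measured point, and `target`, `budget`, and `kappa` are illustrative parameters.

```python
def run_experiment(x):
    """Hypothetical stand-in for synthesis + characterization (toy response)."""
    return 1.0 - (x - 0.62) ** 2

def predict(x, data):
    """Toy surrogate: value of the nearest measured point, distance as uncertainty."""
    nearest_x, nearest_y = min(data, key=lambda d: abs(d[0] - x))
    return nearest_y, abs(nearest_x - x)

def active_learning(candidates, initial, target=0.999, budget=15, kappa=1.0):
    """Iterate: fit on all data, pick the best-scoring candidate, measure, repeat."""
    data = [(x, run_experiment(x)) for x in initial]
    for _ in range(budget):
        if max(y for _, y in data) >= target:
            break  # target performance reached
        measured = {x for x, _ in data}
        pool = [x for x in candidates if x not in measured]
        if not pool:
            break  # nothing left to test

        def score(x):
            mean, uncertainty = predict(x, data)
            return mean + kappa * uncertainty  # predicted value plus exploration bonus

        x_next = max(pool, key=score)
        data.append((x_next, run_experiment(x_next)))  # feed the result back
    return data

candidates = [i / 50 for i in range(51)]
history = active_learning(candidates, initial=[0.0, 1.0])
best_x, best_y = max(history, key=lambda d: d[1])
```

The two stopping conditions mirror the text: the loop ends when the target is met or when the experimental budget is spent.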

Experimental Protocol: Active Learning-Driven Synthesis

This section provides a detailed, step-by-step protocol for implementing the active learning cycle, from computational setup to material validation.

Phase 1: Computational Design and Precursor Selection

Objective: To define the quinary alloy search space and identify an initial set of candidate compositions for experimental testing.

Step 1.1 - Define Constrained Composition Space

- Action: Establish the five base elements (e.g., Cu, Fe, Ni, Mn, Al) [23]. Set compositional constraints for each element (e.g., 5-35 at%) to define a realistic and manufacturable search space.

- Rationale: The vast quinary space must be constrained by practical considerations, such as the prevention of brittle phase formation or excessive cost.
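
As an illustration of Step 1.1, the sketch below enumerates a discretized search space for the example element set with each element bounded to 5-35 at%. The 5 at% grid step is an assumption chosen for readability; a real study would choose the resolution to suit its synthesis precision.

```python
from itertools import product

ELEMENTS = ("Cu", "Fe", "Ni", "Mn", "Al")

def enumerate_compositions(lo=5, hi=35, step=5, total=100):
    """List all compositions (at%) on a grid that satisfy the bounds and sum to 100."""
    levels = range(lo, hi + 1, step)
    compositions = []
    # Choose the first four elements freely; the fifth is fixed by the sum constraint.
    for head in product(levels, repeat=len(ELEMENTS) - 1):
        last = total - sum(head)
        if lo <= last <= hi and (last - lo) % step == 0:
            compositions.append(dict(zip(ELEMENTS, head + (last,))))
    return compositions

grid = enumerate_compositions()
equiatomic = {element: 20 for element in ELEMENTS}
```

Because the fractions must sum to 100 at%, only four of the five values are independent, which is why the loop iterates over four elements and derives the fifth.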

Step 1.2 - Generate Initial Training Data

- Action: Populate an initial dataset using high-throughput ab initio calculations (e.g., using the Materials Project database [5]) or by curating existing experimental data from literature. Key properties to compute/predict include formation energy, phase stability (e.g., FCC, BCC), and elastic constants.

- Rationale: Machine learning models require an initial dataset to learn the relationship between composition and properties. This bootstraps the active learning process.

Step 1.3 - Apply Manufacturability Filters

- Action: Screen candidate compositions using predefined manufacturability criteria [23]:

- Melting Point Compatibility (ΔTmc): Ensure the melting points of constituent elements are compatible to avoid issues during solidification.

- Atomic Solubility Index (S̄): Estimate the likelihood of forming a solid solution versus intermetallic compounds.

- Rationale: This step ensures that computationally predicted alloys are experimentally viable, bridging the gap between simulation and synthesis.

Phase 2: Autonomous Synthesis and Characterization

Objective: To physically realize the compositions proposed by the active learning algorithm and measure their key properties.

Step 2.1 - Sample Preparation and Mixing

- Action:

- Weigh high-purity (>99.9%) elemental metal powders according to the composition selected for the current cycle (e.g., equiatomic CuFeNiMnAl [23]) in an inert argon atmosphere glovebox to prevent oxidation.

- Transfer the powder mixture to a mixing apparatus (e.g., a ball mill) and mix for a predetermined time to ensure homogeneity.

- Rationale: Uniform mixing at the powder stage is critical for achieving a homogeneous alloy upon consolidation.

Step 2.2 - Consolidated Sample Fabrication

- Action: Use Directed Energy Deposition (DED) additive manufacturing for synthesis [23].

- Parameters: Laser power: 500-1200 W, Scan speed: 5-15 mm/s, Layer thickness: 30-50 µm.

- The powder mixture is fed into the melt pool created by the laser on a substrate, building the sample layer-by-layer.

- Rationale: DED is well-suited for rapid prototyping of novel alloys and allows for the creation of bulk samples directly from powder.

Step 2.3 - Microstructural and Mechanical Characterization

- Action:

- Phase Identification: Prepare a flat, polished cross-section of the synthesized sample. Perform X-ray Diffraction (XRD) to identify the present crystalline phases (e.g., dominant FCC structure) [23] [5].

- Mechanical Property Mapping: Perform Vickers microhardness testing on the polished cross-section using a standard load (e.g., 500 gf). Report the average of at least 5 indents [23].

- Rationale: XRD validates the phase prediction from the model, while hardness provides a quantitative measure of mechanical performance for the feedback loop.
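
For the hardness step, the standard Vickers relation is HV = 1.8544 F / d², with the load F in kgf and the mean indent diagonal d in mm. The sketch below applies it to five indents and reports the average. The diagonal lengths are hypothetical values chosen for illustration, not measurements from the cited study.

```python
from statistics import mean, stdev

def vickers_hv(load_gf, d1_um, d2_um):
    """Vickers hardness from load (gf) and the two indent diagonals (micrometers)."""
    load_kgf = load_gf / 1000.0
    d_mm = (d1_um + d2_um) / 2.0 / 1000.0  # mean diagonal in mm
    return 1.8544 * load_kgf / d_mm**2

# Hypothetical diagonal pairs (um) for five indents at 500 gf.
indents_um = [(40.5, 40.9), (41.0, 40.6), (40.2, 40.8), (41.3, 40.9), (40.7, 40.7)]
values = [vickers_hv(500, d1, d2) for d1, d2 in indents_um]

hv_mean = mean(values)   # value to log for the feedback loop
hv_spread = stdev(values)  # sample standard deviation across indents
```

Logging the spread alongside the mean gives the model a rough measure of measurement noise for each sample.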

Phase 3: Data Integration and Model Retraining

Objective: To update the active learning model with new experimental results and plan the next optimal experiment.

Step 3.1 - Data Logging

- Action: Record the synthesized composition, its measured phase constitution, and hardness value into the central database.

- Rationale: Creates a clean, structured dataset for model refinement.

Step 3.2 - Active Learning Query and Model Update

- Action:

- Retrain the machine learning property predictor (e.g., a Gaussian process model) with the updated dataset.

- The active learning algorithm (e.g., a Bayesian optimizer) selects the next composition to test by maximizing an acquisition function. Common functions include:

- Expected Improvement (EI): Favors compositions likely to outperform the current best.

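- Upper Confidence Bound (UCB): Adds a weighted multiple of the predictive uncertainty to the predicted value, balancing exploitation of promising compositions against exploration of uncertain ones.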

- The algorithm may also leverage knowledge of solid-state reaction pathways, avoiding intermediates with low driving forces to form the target phase [5].

- Rationale: This step is the core of active learning, ensuring each experiment is chosen to most efficiently guide the search toward the global optimum.
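
For a Gaussian predictive distribution, Expected Improvement has a closed form: EI = (μ − f* − ξ) Φ(z) + σ φ(z), with z = (μ − f* − ξ) / σ, where f* is the best value measured so far. The sketch below evaluates it for a maximization problem. The two candidate predictions and the margin `xi` are hypothetical, chosen only to show how a more uncertain candidate can outrank one with a higher predicted mean.

```python
import math

def expected_improvement(mean, std, best, xi=0.0):
    """Closed-form EI for maximization under a Gaussian prediction."""
    if std <= 0.0:
        return max(mean - best - xi, 0.0)  # no uncertainty: improvement is deterministic
    z = (mean - best - xi) / std
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))          # standard normal CDF
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)   # standard normal PDF
    return (mean - best - xi) * cdf + std * pdf

best_hardness = 550.0  # hypothetical best measured hardness (HV) so far
candidates = {
    "A": (540.0, 5.0),   # (predicted mean, predicted std): confident, slightly below best
    "B": (520.0, 40.0),  # lower mean but much more uncertain
}
ei = {name: expected_improvement(m, s, best_hardness) for name, (m, s) in candidates.items()}
next_candidate = max(ei, key=ei.get)
```

Here candidate B is selected: its wide predictive distribution leaves a larger chance of exceeding the current best than A's narrow one.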

Key Experimental Results and Data

The following tables summarize quantitative data from a representative study that successfully applied this protocol to discover the novel quinary HEA CuFeNiMnAl [23].

Table 1: Synthesis Parameters and Resulting Properties for a Quinary HEA Candidate

| Alloy System | Fabrication Method | Key Process Parameters | Dominant Phase | Hardness (HV) | Density (g/cm³) |
|---|---|---|---|---|---|
| CuFeNiMnAl [23] | Directed Energy Deposition (DED) | Laser power: 500-1200 W, Scan speed: 5-15 mm/s | FCC | ~560 | ~6.8 |

Table 2: Active Learning Performance Metrics in Materials Discovery

| Metric | Reported Value / Capability | Context & Significance |
|---|---|---|
| Success Rate | 71% (41 of 58 novel compounds synthesized) [5] | Demonstrates the high efficacy of the AL-driven autonomous lab approach. |
| AL-Driven Optimization | Active learning improved yields for 9 targets, 6 of which had zero initial yield [5]. | Highlights AL's power to find viable synthesis routes where initial human-proposed recipes fail. |
| Informed Precursor Selection | Recipes based on high-similarity precursors were more likely to succeed [5]. | Validates the use of ML-based similarity metrics for rational experiment design. |

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Reagents and Materials for Quinary HEA Synthesis via DED

| Item Name | Specification / Purity | Function in Protocol |
|---|---|---|
| Elemental Metal Powders | Cu, Fe, Ni, Mn, Al; >99.9% purity, spherical morphology (45-150 µm) | Serve as the principal components for forming the quinary high-entropy alloy. |
| Argon Gas | High-purity (≥99.999%) | Creates an inert atmosphere during powder handling and DED processing to prevent oxidation. |
| Substrate Material | Mild steel or 304 stainless steel plate | Provides a base for the Directed Energy Deposition process to build the alloy sample layer-by-layer. |
| Polishing Supplies | SiC grinding paper (180-1200 grit), colloidal silica suspension (0.05 µm) | For preparing metallographic samples with a scratch-free surface for XRD and hardness testing. |
| CALPHAD Database | Commercial (e.g., TCHEA) or custom database | Provides thermodynamic data for calculating phase diagrams and informing manufacturability filters [24]. |

Visualization of High-Dimensional Alloy Space

A critical challenge in quinary alloy design is visualizing the four-dimensional composition-property relationship. Dimensionality reduction techniques like UMAP (Uniform Manifold Approximation and Projection) are essential tools for this task. The following diagram illustrates how a high-dimensional composition space is projected into an interpretable 2D map for guiding the active learning process.

Diagram Explanation: The high-dimensional space of a quinary alloy (a 4D simplex) cannot be directly visualized. UMAP acts as a non-linear projection tool that maps this space onto a 2D plane while preserving significant topological structure [25]. The resulting 2D map reveals clusters of compositions with similar properties (e.g., single-phase FCC regions, high-hardness regions). The active learning algorithm can use this map to identify "information gaps"—sparsely sampled areas of high uncertainty—and prioritize them for the next round of experimentation, ensuring a comprehensive exploration of the design space.

The convergence of artificial intelligence (AI), robotics, and materials science has given rise to autonomous laboratories, which leverage active learning to accelerate the discovery and development of novel materials. These self-driving laboratories (SDLs) can plan, execute, and interpret experiments with minimal human intervention, dramatically reducing the time and resource costs associated with traditional research and development [26]. A core component of these systems is the closed-loop workflow, where experimental data is continuously fed back to a decision-making algorithm that proposes the most informative subsequent experiments.

This protocol focuses on the operational principles of the A-Lab, an autonomous laboratory for the solid-state synthesis of inorganic powders, and details the implementation of its active learning-driven closed-loop workflows [5]. The methodologies described herein are designed for researchers aiming to understand, replicate, or adapt these systems for accelerated materials research within the specific context of solid-state synthesis.

Experimental Protocols

The A-Lab Workflow for Solid-State Synthesis

The following protocol outlines the primary closed-loop workflow of the A-Lab, which integrates computational screening, robotic execution, and AI-driven decision-making [5] [27].

Primary Objective: To autonomously synthesize target inorganic compounds from powder precursors and maximize the yield through iterative, active-learning-guided experimentation.

Materials and Equipment

- Target Materials List: A set of air-stable inorganic target materials identified from computational databases (e.g., the Materials Project).

- Precursor Powders: High-purity powdered starting materials.

- Robotic Integration: The A-Lab platform, comprising three integrated stations:

- Sample Preparation Station: For automated powder dispensing, weighing, and mixing.

- Heating Station: Equipped with multiple box furnaces.

- Characterization Station: Featuring an X-ray diffractometer (XRD) and an automated sample grinder.

- Computational Infrastructure: Access to the Materials Project database and machine learning models for recipe generation and analysis.

- Software Framework: A workflow management system such as AlabOS to orchestrate experiments and manage hardware [27].

Procedure

Target Identification and Validation:

- Identify candidate materials using large-scale ab initio phase-stability data (e.g., from the Materials Project and Google DeepMind).

- Filter targets to include only those predicted to be air-stable (i.e., non-reactive with O₂, CO₂, and H₂O).

Initial Recipe Generation:

- For each target compound, generate up to five initial solid-state synthesis recipes using a natural-language processing model trained on historical literature [5].

- This model assesses "similarity" to known compounds to propose effective precursor combinations.