Achieving Inter-Laboratory Reproducibility in Microbiome Sequencing: Strategies, Challenges, and Clinical Translation


Abstract

Inter-laboratory reproducibility remains a significant hurdle in microbiome research, impacting the reliability of findings and their translation into clinical applications. This article synthesizes current evidence and best practices to address this challenge, targeting researchers, scientists, and drug development professionals. We first explore the foundational causes of variability, from contamination in low-biomass samples to methodological inconsistencies. We then detail standardized experimental protocols and analytical methodologies that enhance consistency across labs. A dedicated section provides a troubleshooting framework for identifying and correcting batch effects and contaminants. Finally, we examine validation strategies, including interlaboratory studies and ring trials, that benchmark performance and confirm result robustness. The conclusion integrates key takeaways and outlines a path forward for developing reliable, clinically applicable microbiome-based diagnostics and therapies.

The Reproducibility Crisis in Microbiome Science: Understanding the Core Challenges

Defining Reproducibility vs. Replicability in a Microbiome Context

In the rapidly advancing field of microbiome research, the terms "reproducibility" and "replicability" are often used interchangeably, creating confusion and impeding cross-laboratory validation of findings. While both concepts are essential for establishing robust scientific knowledge, they represent distinct aspects of verification in the scientific process. The National Academies of Sciences, Engineering, and Medicine defines reproducibility as obtaining consistent results using the same input data, computational steps, methods, and code, while replicability refers to obtaining consistent results across studies aimed at answering the same scientific question, each of which has obtained its own data [1].

This distinction carries particular significance in microbiome research, where technical variability in DNA extraction, sequencing platforms, bioinformatic analyses, and environmental conditions can dramatically influence outcomes. As noted in a 2018 perspective, microbiologists have long struggled to make their research reproducible, with difficulties compounded in interdisciplinary fields like microbiome research [2] [3]. This guide examines the critical differences between these concepts, provides experimental evidence of their challenges, and offers practical frameworks to enhance methodological rigor in microbiome studies.

Conceptual Framework: Distinguishing Between Verification Processes

Definitional Framework

The taxonomy of verification in microbiome science can be organized through a structured framework that accounts for both methodological and conceptual repetition. The table below outlines the key distinctions:

Table 1: Framework for Reproducibility and Related Concepts in Microbiome Research

| Concept | Definition | Methods | Experimental System | Key Question |
|---|---|---|---|---|
| Reproducibility | Ability to regenerate a result with the same dataset and analysis workflow [2] | Same methods | Same experimental system | Can we recreate the same results from the same data? |
| Replicability | Ability to produce a consistent result with an independent experiment asking the same scientific question [2] | Same methods | Different experimental system | Does the finding hold in a new experimental iteration? |
| Robustness | Ability to obtain consistent results using different methods on the same experimental system [2] | Different methods | Same experimental system | Is the result method-dependent? |
| Generalizability | Extent to which results apply in other contexts or populations that differ from the original one [1] | Different methods | Different experimental system | Does the finding extend to different systems? |

This framework is particularly valuable for microbiome researchers designing validation strategies for their findings. A finding that is reproducible but not replicable may indicate technical artifacts in the experimental process, while a finding that is replicable but not generalizable may have limited applicability beyond specific laboratory conditions.

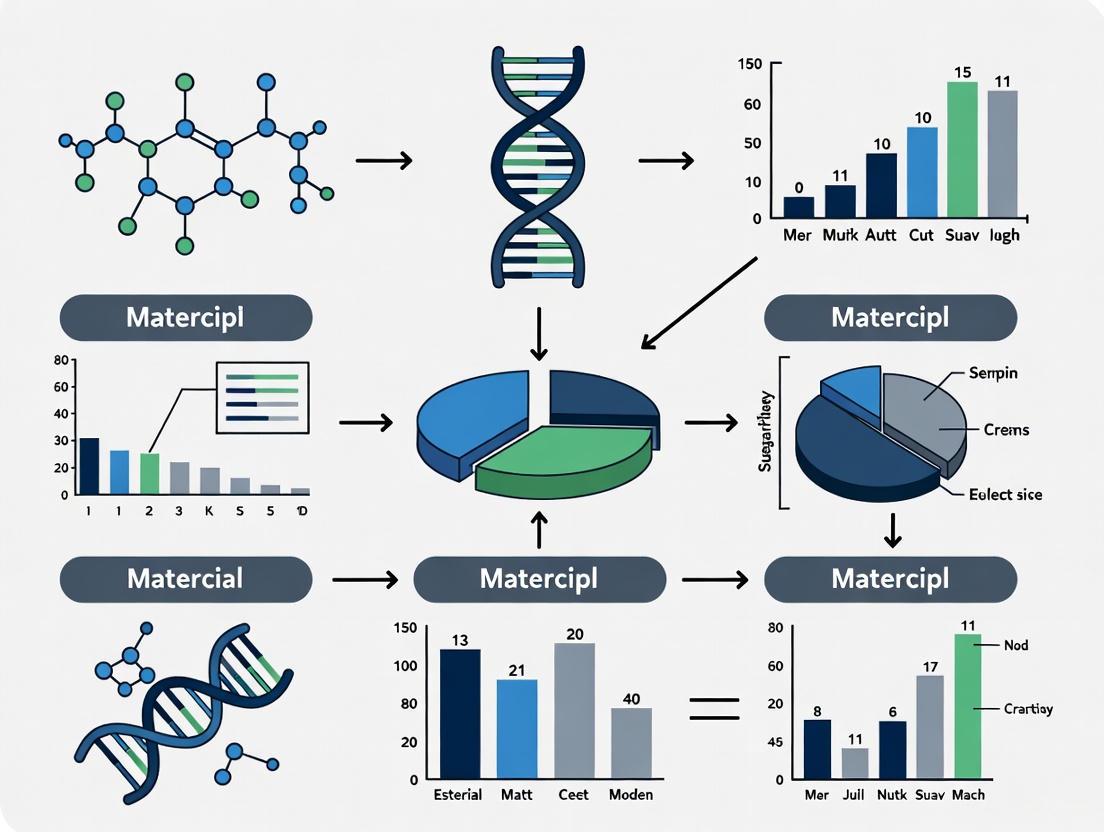

Visualizing the Verification Framework

The following diagram illustrates the conceptual relationships and progression between these key verification concepts in microbiome research:

Experimental Evidence: A Multi-Laboratory Case Study

Study Design and Standardized Protocols

A 2025 multi-laboratory ring trial exemplifies the pursuit of reproducibility in microbiome research [4] [5]. Five independent laboratories collaborated to test whether synthetic microbial community (SynCom) assembly experiments would yield reproducible results across different locations. The study employed:

- Standardized EcoFAB 2.0 devices: Fabricated ecosystems providing sterile, controlled habitats for plant growth

- Synthetic bacterial communities: Two defined communities (SynCom16 and SynCom17) with and without the dominant colonizer Paraburkholderia sp. OAS925

- Model grass: Brachypodium distachyon as a consistent plant host

- Detailed protocols: Comprehensive methodological documentation with annotated videos distributed to all participating laboratories [5]

Critical to the study's design was the central distribution of almost all supplies—including EcoFABs, seeds, SynCom inoculum, and filters—from the organizing laboratory to minimize material-based variability. Additionally, a single laboratory performed all sequencing and metabolomic analyses to reduce analytical variation.

Experimental Workflow for Multi-Laboratory Reproducibility

The methodology followed by all participating laboratories is visualized below:

Key Findings and Quantitative Results

The multi-laboratory study demonstrated remarkable consistency in several key outcomes, despite variations in growth chamber conditions across laboratories:

Table 2: Reproducibility Assessment Across Five Laboratories

| Parameter Measured | Result Type | Consistency Across Labs | Key Finding |
|---|---|---|---|
| Sterility Maintenance | Process Control | 99% success (2/210 tests showed contamination) | Demonstrated protocol effectiveness for maintaining sterile conditions [5] |
| Plant Biomass | Phenotypic Response | Significant decrease with SynCom17 across all labs | Consistent plant phenotype response to specific microbial composition [5] |
| Root Microbiome Composition | Microbial Community Assembly | High reproducibility in SynCom17 (98 ± 0.03% Paraburkholderia) | Dominant colonization effect consistently observed across all laboratories [5] |
| Root Development | Phenotypic Response | Consistent decrease in SynCom17 from 14 DAI onwards | Reproducible plant developmental response to microbial community [5] |

The study revealed that SynCom17-inoculated plants were dominated by Paraburkholderia sp. OAS925 across all laboratories (98 ± 0.03% average relative abundance), while SynCom16 communities showed higher variability between laboratories [5]. This suggests that specific microbial interactions can be reproducible across laboratories when standardized protocols are employed.

Key Research Reagent Solutions

Table 3: Essential Materials for Reproducible Microbiome Research

| Reagent/Resource | Function | Application in Microbiome Research |
|---|---|---|
| Mock Microbial Communities | Benchmarking and validation of workflows | Control for technical variability in DNA extraction and sequencing; identify process flaws [6] |
| Standardized DNA Extraction Kits | Nucleic acid isolation with minimal bias | Ensure consistent lysis across diverse microbial taxa (Gram-positive/negative, eukaryotes) [6] |
| EcoFAB 2.0 Devices | Fabricated ecosystem for controlled plant growth | Provide sterile, standardized habitats for plant-microbiome interactions [4] [5] |
| Synthetic Microbial Communities (SynComs) | Defined microbial communities for mechanistic studies | Bridge natural communities and axenic cultures; limit complexity while retaining functional diversity [5] |
| Standardized Sequencing Protocols | Consistent library preparation and sequencing | Minimize batch effects and technical biases in data generation [6] |
| Bioinformatic Workflows | Computational analysis of sequencing data | Ensure consistent data processing and interpretation across studies [7] |

Practical Implementation Guidance

Successful implementation of these resources requires careful planning. Mock microbial communities should include diverse species (Gram-positive/negative bacteria, eukaryotes) with varying GC content to properly benchmark wet-lab processes [6]. Synthetic communities should be cryopreserved with standardized resuscitation protocols to ensure consistent starting inocula across experiments and laboratories [5]. DNA extraction methods must be validated for their efficiency across different microbial cell wall types to avoid underrepresentation of specific taxa [6].

Quantitative Assessment of Reproducibility

Metrics for Measuring Reproducibility

A 2025 scoping review identified 50 different metrics used to quantify reproducibility across scientific disciplines [8]. These metrics can be categorized into several types:

- Formulas and statistical models: Quantitative measures of agreement between original and reproduced results

- Frameworks: Structured approaches for assessing multiple dimensions of reproducibility

- Graphical representations: Visual tools for comparing results across studies

- Algorithms: Computational approaches for quantifying reproducibility

- Studies and questionnaires: Systematic assessments of reproducibility across multiple experiments

The appropriate metric depends on the specific research question and project goals, with no single "best" metric applicable across all contexts [8].

Application in Microbiome Context

In microbiome research, commonly used metrics include:

- Microbial community similarity measures (e.g., Bray-Curtis, Jaccard, UniFrac distances)

- Taxonomic abundance correlation across technical replicates

- Alpha diversity consistency (within-sample diversity measures)

- Beta diversity preservation (between-sample diversity patterns)

- Differential abundance reproducibility for specific taxa of interest

The choice of metric should align with the specific research question, whether focused on overall community structure, specific taxonomic groups, or functional potentials.
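As a minimal sketch of the first metric family, the snippet below computes Bray-Curtis dissimilarity and Jaccard distance between two taxonomic profiles of the same sample. The profiles `lab_a` and `lab_b` and their counts are invented for illustration; UniFrac is left out because it additionally requires a phylogenetic tree.

```python
def to_relative(counts):
    """Convert raw read counts to relative abundances that sum to 1."""
    total = sum(counts)
    if total == 0:
        raise ValueError("profile has no reads")
    return [c / total for c in counts]


def bray_curtis(a, b):
    """Bray-Curtis dissimilarity: 0 means identical profiles, 1 means no overlap."""
    total = sum(a) + sum(b)
    if total == 0:
        raise ValueError("both profiles are empty")
    return sum(abs(x - y) for x, y in zip(a, b)) / total


def jaccard_distance(a, b):
    """Jaccard distance on presence/absence: 1 - (shared taxa / taxa seen in either)."""
    present_a = {i for i, x in enumerate(a) if x > 0}
    present_b = {i for i, x in enumerate(b) if x > 0}
    union = present_a | present_b
    if not union:
        raise ValueError("both profiles are empty")
    return 1 - len(present_a & present_b) / len(union)


# Hypothetical read counts for the same four taxa measured in two laboratories.
lab_a = to_relative([500, 300, 200, 0])
lab_b = to_relative([450, 350, 150, 50])

print(f"Bray-Curtis: {bray_curtis(lab_a, lab_b):.3f}")
print(f"Jaccard:     {jaccard_distance(lab_a, lab_b):.3f}")
```

Bray-Curtis responds to shifts in abundance, while Jaccard only registers taxa gained or lost, so the two can rank the same pair of laboratories differently.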

The distinction between reproducibility and replicability provides a valuable framework for methodical validation of microbiome research. While reproducibility (same data, same methods) represents a minimum necessary condition for verifying analytical approaches, replicability (new data, same methods) provides stronger evidence for biological validity. The successful multi-laboratory study demonstrates that standardized protocols, centralized reagent distribution, and detailed methodological documentation can achieve high levels of reproducibility in microbiome research [4] [5].

However, challenges remain. Variations in growth conditions, DNA extraction efficiencies, and bioinformatic tools continue to introduce variability [7] [6]. Researchers should prioritize transparent reporting, use of mock communities, and methodology standardization while avoiding overly rigid protocols that might constrain scientific exploration. As the field advances, these practices will enhance the reliability and translational potential of microbiome research, ultimately strengthening its contributions to human health, agriculture, and environmental science.

Inter-laboratory variability presents a fundamental challenge in microbiome sequencing research, significantly impacting the reproducibility and comparability of findings across different studies. Advances in next-generation sequencing have transformed our ability to characterize complex microbial communities, yet the myriad of methodological options available complicates replication of results and limits comparability between independent studies using differing techniques [9]. This variability stems from a complex array of factors throughout the experimental workflow, ranging from sample collection and DNA extraction to bioinformatic analysis. The compositional nature of metagenomic sequencing measurements further compounds these challenges, as biases introduced at any step can propagate through the entire analytical process [9]. Understanding and addressing these sources of variation is particularly crucial for drug development professionals and researchers seeking to translate microbiome science into clinical applications, where reliable and reproducible diagnostic tools are essential [10] [11].

The total variability in microbiome sequencing results can be partitioned into distinct components arising at different stages of the experimental workflow. The Mosaic Standards Challenge (MSC), an international interlaboratory study comparing experimental protocols across 44 laboratories, demonstrated that methodological choices have significant effects on results, including both measurement bias and impacts on measurement robustness [9]. These factors can be broadly categorized into pre-analytical, analytical, and post-analytical sources of variation.

Pre-Analytical Variability

Pre-analytical variability encompasses all factors from sample collection through preparation for sequencing. This phase represents a significant source of inter-laboratory disagreement that cannot be corrected through computational means later in the workflow.

- Sample Collection and Storage: Differences in collection methods (e.g., sterile collection tools, timing relative to food intake or medication), stabilization buffers, storage conditions (temperature, duration), and transport methods can significantly alter microbial composition [11]. The biological variation inherent in the microbiome itself, including fluctuations due to diet, medication, circadian rhythms, and within-subject physiological changes, further complicates standardization [12] [11].

- DNA Extraction and Purification: The MSC identified that protocol choices during DNA extraction, such as the use of homogenizers, different commercial kits, and variations in cell lysis methods, significantly affect measurement robustness and the observed taxonomic profiles [9]. Inconsistent implementation of these steps introduces substantial variability in DNA yield, quality, and microbial representation.

Analytical Variability

Analytical variability arises from methodological choices during the sequencing process itself, including library preparation and the sequencing platform used.

- 16S rRNA Gene Region Selection and Primer Bias: For 16S rRNA sequencing, the choice of variable region (V1-V9) significantly impacts primer specificity, amplification efficiency, and taxonomic resolution [13]. A comprehensive evaluation of 57 commonly used 16S rRNA primer sets revealed significant limitations in widely used "universal" primers, which often fail to capture full microbial diversity due to unexpected variability in conserved regions and substantial intergenomic variation [13]. This primer bias can result in underrepresentation or complete omission of certain bacterial taxa.

- Sequencing Platform and Conditions: Differences between sequencing platforms (e.g., Illumina, PacBio, Oxford Nanopore), sequencing depth, and run-specific conditions contribute to analytical variation. Laboratory-specific implementations of otherwise standardized protocols introduce additional variability that affects the final results [9].

Post-Analytical Variability

Post-analytical variability encompasses all data processing, bioinformatic analysis, and interpretation steps following sequencing.

- Bioinformatic Pipeline Choices: The selection of reference databases (e.g., SILVA, Greengenes, NCBI), algorithms for taxonomic assignment, and parameters for quality filtering introduce substantial variability [13]. Discrepancies between database curation methods, taxonomic hierarchies, and nomenclature can lead to inconsistent species identification across studies [13].

- Data Normalization and Statistical Methods: The use of different data transformation techniques, normalization approaches, and statistical models for differential abundance testing can yield varying interpretations from the same underlying data. The compositional nature of metagenomic sequencing (MGS) data necessitates careful statistical treatment to avoid spurious results [9].
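One widely used way to respect compositionality is the centered log-ratio (CLR) transform. The sketch below is illustrative and is not presented as the method used in [9]; the `pseudocount` parameter is an assumed, simplistic way to handle zero counts, and the read counts are invented.

```python
import math


def clr(counts, pseudocount=0.0):
    """Centered log-ratio: log of each value relative to the sample's geometric mean."""
    shifted = [c + pseudocount for c in counts]
    if any(v <= 0 for v in shifted):
        raise ValueError("CLR needs strictly positive values; set a pseudocount for zeros")
    logs = [math.log(v) for v in shifted]
    mean_log = sum(logs) / len(logs)
    return [value - mean_log for value in logs]


# Hypothetical counts for four taxa; `deep` is the same community sequenced 10x deeper.
shallow = [120, 30, 50, 800]
deep = [1200, 300, 500, 8000]

print([round(v, 3) for v in clr(shallow)])
print([round(v, 3) for v in clr(deep)])
```

Without a pseudocount the two printed vectors agree up to floating-point rounding, because CLR values depend only on ratios between taxa and not on sequencing depth. Once zeros are replaced by a pseudocount this invariance is only approximate, which is one reason zero handling deserves explicit reporting.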

Table 1: Impact of Methodological Choices on Microbiome Sequencing Results

| Variability Source | Experimental Evidence | Impact on Results |
|---|---|---|
| DNA Extraction Method | Interlaboratory comparison using shared reference samples [9] | Significant effects on measurement robustness; homogenizer use reduced variability |
| 16S rRNA Primer Selection | In silico analysis of 57 primer sets against SILVA database [13] | Coverage variation from <70% to ≥90% across key gut genera; primer bias affecting diversity estimates |
| Sequencing Platform | MSC study comparing 16S vs. WGS across labs [9] | Systematic differences in taxonomic profiles between 16S and WGS approaches |
| Bioinformatic Database | Comparison of SILVA vs. NCBI taxonomy assignment [13] | Discrepancies in species identification due to different curation methods |
| Sample Storage Conditions | Microbiome study methodological review [11] | Altered microbial profiles based on storage temperature, duration, and stabilization buffers |

Table 2: Performance Comparison of 16S rRNA Primer Sets for Gut Microbiome

| Primer Set | Target Region | Coverage Across 4 Major Phyla | Genera with ≥90% Coverage | Notes |
|---|---|---|---|---|
| V3_P3 | V3 | ≥70% | ≥4 of 20 representative genera | Balanced coverage and specificity [13] |
| V3_P7 | V3 | ≥70% | ≥4 of 20 representative genera | Balanced coverage and specificity [13] |
| V4_P10 | V4 | ≥70% | ≥4 of 20 representative genera | Balanced coverage and specificity [13] |
| Commonly used "Universal" | Multiple | Highly variable | Often <4 genera | Fails to capture diversity; designed from limited datasets [13] |

Experimental Protocols for Assessing Variability

Understanding the experimental approaches used to quantify inter-laboratory variability provides critical context for evaluating the evidence supporting the above comparisons.

The Mosaic Standards Challenge Protocol

The MSC employed a systematic approach to assess variability across the entire microbiome sequencing workflow:

- Reference Material Production: Created homogeneous, stabilized fecal materials from 5 human donors with distinct microbiome compositions, plus 2 DNA mock communities (Mix A with equal genomic ratios of 13 species; Mix B with varying abundances across 3 orders of magnitude) [9].

- Participant Recruitment and Testing: 44 laboratories participated (30 submitting 16S data, 14 submitting WGS data), each using their standard laboratory protocols without prescribed methods [9].

- Metadata Collection: Developed a comprehensive reporting sheet with ~100 metadata parameters capturing methodological details for each step of the measurement process [9].

- Data Analysis: Applied common analysis pipelines to raw sequencing data alongside methodological metadata. Analyzed methodological effects on the Firmicutes:Bacteroidetes ratio, a single ratio to which common statistical methods can be applied directly while still respecting the compositional nature of MGS measurements [9].
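The appeal of a ratio-based readout can be seen in a short sketch: the log ratio of two taxa does not change when total read depth changes. The phylum counts below are invented, and the function name `log2_fb_ratio` is hypothetical rather than taken from the MSC analysis code.

```python
import math


def log2_fb_ratio(counts_by_phylum):
    """log2 of Firmicutes reads over Bacteroidetes reads for one sample."""
    firmicutes = counts_by_phylum["Firmicutes"]
    bacteroidetes = counts_by_phylum["Bacteroidetes"]
    if firmicutes <= 0 or bacteroidetes <= 0:
        raise ValueError("both phyla must have non-zero counts")
    return math.log2(firmicutes / bacteroidetes)


# Hypothetical phylum-level counts from one reference sample at two sequencing depths.
shallow_run = {"Firmicutes": 4000, "Bacteroidetes": 2000, "Proteobacteria": 500}
deep_run = {phylum: count * 10 for phylum, count in shallow_run.items()}

print(log2_fb_ratio(shallow_run), log2_fb_ratio(deep_run))
```

Both runs give the same value, so differences in this ratio between laboratories reflect something other than sequencing depth, such as extraction or amplification bias.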

In Silico Primer Validation Protocol

The evaluation of 16S rRNA primer performance employed computational methods to assess potential sources of primer bias:

- Primer Compilation: Systematically compiled 57 unique primer pairs from literature review and commercial sources, focusing on those commonly used in human gut microbiome studies [13].

- In Silico PCR: Used TestPrime 1.0 to assess primer performance against the SILVA SSU Ref NR database (138.1 release), applying perfect alignment within primer degeneracy criteria [13].

- Coverage Calculation: Defined primer coverage as the percentage of eligible sequences successfully amplified, with selection criteria requiring ≥70% coverage across four dominant gut phyla (Actinobacteriota, Bacteroidota, Firmicutes, Proteobacteria) and ≥90% coverage for at least 4 of 20 representative genera [13].

- Intergenomic Variation Analysis: Computed Shannon entropy values from multiple sequence alignments of 100 sequences per genus, classifying regions with entropy >0.5 as variable and examining primer binding in the context of this variation [13].
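The coverage and entropy calculations above can be sketched in a few lines. This is a simplified illustration, not TestPrime: it checks a single forward primer (no reverse primer or reverse complement), requires a perfect match within IUPAC degeneracy, ignores alignment gaps, and assumes base-2 logarithms for Shannon entropy, which the text does not specify. All sequences are toy data.

```python
import math
from collections import Counter

# IUPAC nucleotide codes mapped to the bases each one permits.
IUPAC = {
    "A": "A", "C": "C", "G": "G", "T": "T",
    "R": "AG", "Y": "CT", "S": "CG", "W": "AT", "K": "GT", "M": "AC",
    "B": "CGT", "D": "AGT", "H": "ACT", "V": "ACG", "N": "ACGT",
}


def binds(primer, sequence):
    """True if the primer matches some window of the sequence within its degeneracy."""
    k = len(primer)
    return any(
        all(base in IUPAC[code] for code, base in zip(primer, sequence[i:i + k]))
        for i in range(len(sequence) - k + 1)
    )


def coverage_percent(primer, sequences):
    """Percentage of eligible sequences that the primer matches."""
    return 100.0 * sum(binds(primer, s) for s in sequences) / len(sequences)


def column_entropy(column):
    """Shannon entropy (bits) of the bases in one alignment column."""
    n = len(column)
    return -sum((c / n) * math.log2(c / n) for c in Counter(column).values())


def variable_positions(alignment, threshold=0.5):
    """Indices of alignment columns whose entropy exceeds the threshold."""
    return [i for i, col in enumerate(zip(*alignment)) if column_entropy(col) > threshold]


# Toy reference sequences and a toy degenerate primer (R = A or G).
seqs = ["AACGTTGCA", "AACATTGCA", "AATTTTGCA", "GGGGGGGGG"]
print(coverage_percent("ACRTT", seqs))

# Toy alignment of four equal-length sequences from one genus.
alignment = ["ACGT", "ACGA", "ACTA", "ACTT"]
print(variable_positions(alignment))
```

A primer whose binding site overlaps any of the flagged variable positions is a candidate for the within-genus mismatches described above.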

Visualizing Variability in Microbiome Sequencing

The following diagram illustrates how methodological choices at each stage of the microbiome sequencing workflow contribute to inter-laboratory variability:

Essential Research Reagent Solutions

Table 3: Key Research Reagents and Materials for Standardized Microbiome Sequencing

| Reagent/Material | Function | Considerations for Reducing Variability |
|---|---|---|
| Stabilization Buffers | Preserve microbial composition during sample storage and transport | Use consistent, validated buffers; document lot numbers and preparation methods [9] |
| DNA Extraction Kits | Extract and purify microbial DNA from samples | Select kits with demonstrated efficiency across diverse taxa; homogenization improves robustness [9] |
| 16S rRNA Primers | Amplify target regions for sequencing | Use validated primer sets (e.g., V3_P3, V3_P7, V4_P10) with balanced coverage; avoid problematic "universal" primers [13] |
| Mock Communities | Control materials with known composition | Include in each batch to quantify technical variability and bias [9] |
| PCR Enzymes/Master Mixes | Amplify target sequences | Use high-fidelity enzymes with minimal bias; document lot numbers and cycling conditions [13] |
| Sequence Databases | Taxonomic classification of sequences | Select appropriate database (SILVA, Greengenes, NCBI) and document version; understand curation differences [13] |

Addressing inter-laboratory variability in microbiome sequencing requires a systematic approach across the entire research workflow. The evidence demonstrates that methodological choices at every stage—from sample collection through data analysis—significantly impact results and contribute to variability between laboratories [9] [13]. Effective strategies for enhancing reproducibility include: adopting standardized protocols with detailed metadata collection; utilizing reference materials like mock communities and homogeneous samples; implementing multi-primer strategies for 16S rRNA sequencing to overcome primer bias; and applying computational reproducibility practices including containerization and version control [9] [14] [13]. For drug development professionals and researchers, recognizing and mitigating these sources of variability is essential for developing reliable, clinically applicable microbiome-based diagnostics and therapeutics [10] [11].

The Critical Impact of Low Microbial Biomass and Contamination

The pursuit of inter-laboratory reproducibility in microbiome sequencing research represents a significant challenge in modern microbial science. Reproducibility is particularly compromised when studying low microbial biomass environments—samples containing minimal microbial DNA—where the signal from true resident microbes can be dwarfed by contaminating DNA from reagents, laboratory environments, and cross-sample contamination [15] [16]. These environments include human tissues (e.g., placenta, blood, tumors), certain environmental samples (e.g., deep subsurface, atmosphere, treated drinking water), and engineered systems [15] [16]. The critical impact of contamination in these contexts has fueled major controversies, from debates about the existence of a placental microbiome to retractions of studies concerning the tumor microbiome [16]. This guide examines the core challenges, compares experimental approaches for contamination control, and provides a standardized toolkit to enhance reproducibility for researchers, scientists, and drug development professionals.

Understanding the Core Challenges

The Contamination Landscape in Low-Biomass Studies

In low microbial biomass research, the term "contamination" encompasses several distinct but related problems, each with unique implications for data integrity:

- External Contamination: DNA introduced from sources outside the study, including laboratory reagents, DNA extraction kits, sampling equipment, and personnel [15] [17]. This form of contamination is particularly problematic because reagent-derived microbial DNA can constitute a substantial proportion of sequence data in low-biomass samples [17].

- Cross-Contamination (Well-to-Well Leakage): The transfer of DNA between samples processed concurrently, often in adjacent wells on processing plates [15] [18]. Also termed the "splashome," this phenomenon violates the core assumption of sample independence and can be difficult to distinguish from biological signal [16].

- Host DNA Misclassification: In host-associated low-biomass samples (e.g., tissues), the majority of sequenced DNA often originates from the host. When this host DNA is not adequately accounted for, it can be misclassified as microbial, generating noise or even artifactual signals if confounded with experimental conditions [16].

- Batch Effects and Processing Bias: Technical variability introduced by differences in protocols, reagent lots, personnel, or laboratory conditions that can distort microbial community profiles [16]. These effects are exacerbated in low-biomass research due to the heightened impact of any technical variation.

Quantifying the Reproducibility Problem

Recent multi-laboratory assessments have quantified the substantial variability in metagenomic measurements, particularly for low-biomass samples. The following table summarizes key findings from large-scale inter-laboratory studies:

Table 1: Inter-laboratory Variability in Metagenomic Sequencing Assessments

| Study Focus | Number of Participating Laboratories | Key Findings on Variability | Impact on Low-Biomass Detection |
|---|---|---|---|
| Metagenomic next-generation sequencing (mNGS) for microbe detection [19] | 90 | High inter-laboratory variability in microbial identification and quantification; significantly lower detection rates for microbes at concentrations ≤10³ cells/mL. | 42.2% of laboratories reported unexpected microbes (false positives); only 56.7-83.3% could correctly identify etiological diagnoses in simulated cases. |
| Plant-microbiome studies with synthetic communities [5] [4] | 5 | Consistent inoculum-dependent changes observed across laboratories when using standardized habitats (EcoFAB 2.0) and protocols. | Demonstrated that standardized protocols and materials enable reproducible microbiome assembly, highlighting a solution pathway. |
| Strain-resolved analysis for contamination detection [18] | N/A (Analysis of clinical datasets) | Identified widespread well-to-well contamination in extraction plates; contamination more significant in lower biomass samples. | Revealed that nearby wells on extraction plates are more prone to cross-contamination, with specific patterns of strain sharing. |

These data show that metagenomic sequencing currently suffers from significant reproducibility problems, especially near detection limits. The variability is not merely quantitative: it also affects fundamental taxonomic identification and pathogen detection.

Standardized Experimental Protocols for Enhanced Reproducibility

Multi-Laboratory Validated Workflow for Plant-Microbiome Research

A landmark international ring trial involving five laboratories successfully established a highly reproducible workflow for plant-microbiome research using fabricated ecosystems (EcoFAB 2.0) and synthetic microbial communities (SynComs) [5] [4]. The detailed protocol is available at protocols.io and involves these critical phases:

- EcoFAB 2.0 Device Assembly: Utilization of sterile, standardized growth chambers to ensure identical physical and chemical environments across laboratories.

- Seed Preparation: Brachypodium distachyon seeds are dehusked, surface-sterilized, stratified at 4°C for 3 days, and germinated on agar plates for 3 days.

- Seedling Transfer: Germinated seedlings are transferred to EcoFAB 2.0 devices for 4 days of additional growth before inoculation.

- Sterility Testing and Inoculation: Devices are tested for sterility before inoculation with defined SynComs (e.g., 16- or 17-member bacterial communities). Critical note: SynComs are prepared using optical density (OD₆₀₀) to colony-forming unit (CFU) conversions to ensure equal cell numbers across laboratories (final inoculum of 1×10⁵ bacterial cells per plant) [5].

- Growth Monitoring and Sampling: Plants are grown for 22 days post-inoculation with regular water refills and root imaging. At harvest, samples are collected for plant phenotyping, 16S rRNA amplicon sequencing, and metabolomics by LC-MS/MS.
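The OD₆₀₀-to-CFU step in the inoculation phase amounts to simple arithmetic, sketched below. Only the target of 1×10⁵ cells per plant comes from the protocol; the strain names, OD readings, and conversion factors are hypothetical, and splitting the target equally among community members is an assumption made for illustration.

```python
TARGET_CELLS_PER_PLANT = 1e5  # final inoculum stated in the protocol [5]


def culture_volume_ul(od600, cfu_per_ml_per_od, target_cells):
    """Microlitres of culture that contain `target_cells`, given a strain-specific factor."""
    if od600 <= 0 or cfu_per_ml_per_od <= 0:
        raise ValueError("OD600 and conversion factor must be positive")
    cells_per_ul = od600 * cfu_per_ml_per_od / 1000.0  # CFU/mL -> CFU/uL
    return target_cells / cells_per_ul


# Hypothetical strains: (measured OD600, CFU per mL at OD600 = 1.0).
strains = {
    "strain_A": (0.50, 8e8),
    "strain_B": (0.25, 2e9),
}

# Assumption: every member contributes an equal share of the total inoculum.
per_strain_target = TARGET_CELLS_PER_PLANT / len(strains)
volumes = {
    name: culture_volume_ul(od, factor, per_strain_target)
    for name, (od, factor) in strains.items()
}

for name, volume in volumes.items():
    print(f"{name}: {volume:.3f} uL of undiluted culture")
```

Volumes this small would in practice be reached through an intermediate dilution; the point of the sketch is that each laboratory needs the same strain-specific conversion factors to arrive at the same cell numbers.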

This workflow achieved remarkable reproducibility across all five laboratories, with consistent observation of Paraburkholderia sp. OAS925 dominance in root colonization when present in the SynCom, and corresponding impacts on plant phenotype and exometabolite profiles [5] [4].

DNA Extraction and Library Construction Standards for Human Fecal Metagenomics

The Japan Microbiome Consortium established validated protocols for human fecal microbiome measurements through systematic comparison and inter-laboratory validation [20]. The study compared 11 commercial library construction kits and multiple DNA extraction methods using defined mock communities. Key recommendations include:

- DNA Extraction: Validation of extraction efficiency across sample types (lumenal contents, mucosa) with special attention to Gram-positive bacteria, which are often underrepresented due to their tough cell walls.

- Library Construction: Selection of kits that minimize GC bias and PCR duplicate formation. PCR-free protocols generally showed lower variability, though several PCR-based protocols also performed well when starting with adequate DNA input.

- Performance Metrics: Establishment of target values for analytical performance, including using the geometric mean of absolute fold-differences (gmAFD) to evaluate trueness against mock community "ground truth."
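The text names gmAFD without giving its formula. The sketch below assumes the definition suggested by the name: the geometric mean, across mock-community members, of the absolute fold-difference between observed and expected abundance, computed as 2 raised to the mean of |log2(observed/expected)|. The taxa and abundances are invented, and the published definition in [20] should be checked before relying on this.

```python
import math


def gm_afd(observed, expected):
    """Geometric mean of absolute fold-differences (assumed definition); 1.0 is perfect."""
    abs_log_folds = []
    for taxon, expected_value in expected.items():
        observed_value = observed.get(taxon, 0.0)
        if observed_value <= 0 or expected_value <= 0:
            raise ValueError(f"{taxon}: abundance must be positive to take a log ratio")
        abs_log_folds.append(abs(math.log2(observed_value / expected_value)))
    return 2 ** (sum(abs_log_folds) / len(abs_log_folds))


# Hypothetical mock community: known composition versus one laboratory's measurement.
expected = {"taxon_a": 0.25, "taxon_b": 0.25, "taxon_c": 0.50}
observed = {"taxon_a": 0.50, "taxon_b": 0.125, "taxon_c": 0.50}

print(round(gm_afd(observed, expected), 3))
```

Because the absolute value is taken on the log scale, a twofold overestimate and a twofold underestimate are penalized equally instead of cancelling out.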

Quantitative Framework for Absolute Abundance Measurements

Relative abundance data inherently obscures true population dynamics, as an increase in one taxon's relative abundance could result from its actual growth or the decline of other taxa [21]. A quantitative sequencing framework combining digital PCR (dPCR) with 16S rRNA gene amplicon sequencing enables absolute abundance measurements [21]. The protocol involves:

- DNA Extraction with Efficiency Monitoring: Spiking defined microbial communities into germ-free mouse samples to validate extraction efficiency across different sample matrices (mucosa, cecum contents, stool).

- Absolute Quantification of 16S rRNA Genes: Using dPCR to precisely count 16S rRNA gene copies in extracted DNA, providing an absolute anchor point for sequencing data.

- Lower Limit of Quantification Establishment: Determining the minimum microbial load required for accurate quantification (e.g., 4.2×10⁵ 16S rRNA gene copies per gram for stool) [21].

This approach revealed that a ketogenic diet decreased total microbial loads in mice—a finding impossible to discern from relative abundance data alone [21].
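The arithmetic behind this framework can be sketched as follows: each taxon's relative abundance is multiplied by the total 16S rRNA gene copy number measured by dPCR. This is a simplified illustration rather than the published pipeline. The function name, taxon labels, and load values are hypothetical; only the stool quantification limit is taken from the text.

```python
LLOQ_COPIES_PER_GRAM = 4.2e5  # stool lower limit of quantification quoted in the text [21]

def absolute_abundances(relative, total_copies_per_gram):
    """Scale relative abundances by the dPCR-measured total 16S copy number.

    Returns (per-taxon copies per gram, flag saying whether the total load
    is at or above the lower limit of quantification).
    """
    if abs(sum(relative.values()) - 1.0) > 1e-6:
        raise ValueError("relative abundances must sum to 1")
    quantifiable = total_copies_per_gram >= LLOQ_COPIES_PER_GRAM
    absolute = {taxon: fraction * total_copies_per_gram
                for taxon, fraction in relative.items()}
    return absolute, quantifiable

# Hypothetical example: taxon_a rises in relative terms but falls in absolute terms
# because the total microbial load drops.
control, _ = absolute_abundances({"taxon_a": 0.5, "taxon_b": 0.5}, 1e10)
treated, ok = absolute_abundances({"taxon_a": 0.75, "taxon_b": 0.25}, 4e9)

print(control["taxon_a"], treated["taxon_a"], ok)
```

In this made-up example the relative abundance of `taxon_a` increases from 50% to 75% while its absolute abundance decreases, which is the kind of discrepancy that relative data alone cannot reveal.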

Visualizing Experimental Workflows and Contamination Pathways

The following diagram illustrates the primary contamination sources throughout a typical microbiome study workflow and their potential impacts on data interpretation:

Diagram: Contamination Pathways in Low-Biomass Microbiome Studies.

Strain-Resolved Contamination Detection Workflow

Strain-resolved analysis provides high-resolution detection of contamination in metagenomics data. The following workflow illustrates how to identify cross-sample contamination using strain-sharing patterns:

Diagram: Strain-Resolved Contamination Detection Workflow.

The Scientist's Toolkit: Essential Research Reagent Solutions

Implementing robust low-biomass microbiome research requires specific reagents, controls, and analytical tools. The following table details essential components of a contamination-aware research toolkit:

Table 2: Essential Research Reagent Solutions for Low-Biomass Microbiome Studies

| Tool Category | Specific Examples | Function & Importance |
|---|---|---|
| Standardized Synthetic Communities | 17-member bacterial SynCom for Brachypodium distachyon [5], Defined mock communities with varying GC content [20] | Provide "ground truth" communities with known composition for validating extraction efficiency, library prep bias, and bioinformatic pipelines. |
| DNA Decontamination Reagents | DNA removal solutions (e.g., sodium hypochlorite, DNA-ExitusPlus), UV-C light sterilization systems [15] | Eliminate contaminating DNA from surfaces, equipment, and reagents before sample processing. |
| Process Controls | DNA extraction blanks, no-template PCR controls, sampling controls (empty collection vessels, swabs) [15] [16] | Identify contamination introduced at specific workflow steps; essential for distinguishing environmental signal from contamination. |
| Quantification Standards | Digital PCR systems, spike-in DNA from unrelated organisms (e.g., Salmonella bongori), flow cytometry standards [21] [17] | Enable conversion of relative abundance data to absolute abundances, revealing true microbial population dynamics. |
| Standardized DNA Extraction Kits | Kits validated for Gram-positive/Gram-negative evenness [20] | Ensure consistent lysis efficiency across diverse bacterial cell wall types, preventing biased community representation. |
| Library Preparation Kits | Kits with minimal GC bias (validated by mock community testing) [20] | Reduce technical artifacts in sequencing data, improving accuracy of taxonomic and functional assignments. |

Achieving reproducibility in low-biomass microbiome research requires acknowledging the profound impact of contamination and implementing systematic solutions. The experimental data and protocols presented here demonstrate that while the challenges are significant, they are addressable through standardized workflows, comprehensive controls, and absolute quantification approaches. The scientific community must adopt more rigorous standards, including those outlined in the RIDE checklist [17] and recent Nature Microbiology guidelines [15], to ensure that low-biomass microbiome research produces reliable, reproducible findings that can confidently inform drug development and clinical applications.

The field of microbiome research has been revolutionized by advanced DNA sequencing technologies, enabling unprecedented insights into complex microbial communities. However, this rapid progress has been shadowed by a significant and persistent challenge: the limited reproducibility and comparability of results between different research laboratories [22]. Metagenomic sequencing (MGS) measurements are the product of complex workflows with multiple distinct steps, each involving numerous methodological choices that collectively introduce measurement bias and noise [22]. Understanding how these technical variables influence taxonomic profiling is not merely an academic exercise but a fundamental prerequisite for generating reliable, comparable data that can effectively inform clinical and therapeutic development [23].

This case study examines the substantial impact of methodological decisions on observed taxonomic profiles in microbiome research. We synthesize evidence from recent inter-laboratory comparisons and method evaluation studies to dissect how choices in DNA extraction, library preparation, sequencing technology, and bioinformatic analysis systematically skew scientific interpretations. The findings underscore an urgent need for standardized protocols and reporting frameworks, particularly as microbiome science increasingly influences our understanding of health and disease [24].

The Reproducibility Crisis in Microbiome Research

Documented Variability from Inter-Laboratory Studies

Recent large-scale collaborative efforts have quantitatively demonstrated the profound extent of methodological impacts. The Mosaic Standards Challenge (MSC), an international interlaboratory study, revealed that methodological choices introduce significant effects, including both bias in measurements and impacts on measurement robustness [22]. In this study, 44 laboratories analyzed identical reference samples using their standard protocols, resulting in 30 16S rRNA gene amplicon datasets and 14 whole-genome shotgun (WGS) datasets. Despite using identical starting material, the results showed striking variability attributable solely to technical differences between laboratories [22].

Similarly, a multicenter evaluation of gut microbiome profiling involving seven participating laboratories found that inter-laboratory variance exceeded inter-individual variance in beta-diversity analyses, highlighting how technical noise can obscure true biological signals [25]. This study compared partial-length metabarcoding, full-length metabarcoding, and metagenomic profiling approaches using DNA extracted from the same fecal samples. The taxonomic profiles generated by different partners showed substantial discrepancies: half of the genera reported by one laboratory were detected by no other participant [25].
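To make the comparison of inter-laboratory and inter-individual variation concrete, the sketch below computes mean Bray-Curtis dissimilarity for two kinds of sample pairs: the same donor profiled by different laboratories, and different donors profiled by the same laboratory. It is a toy illustration, not the analysis used in the cited study. The laboratory names, donor names, and abundance values are hypothetical.

```python
from itertools import combinations

def bray_curtis(u, v):
    """Bray-Curtis dissimilarity between two abundance dictionaries."""
    taxa = set(u) | set(v)
    numerator = sum(abs(u.get(t, 0.0) - v.get(t, 0.0)) for t in taxa)
    denominator = sum(u.get(t, 0.0) + v.get(t, 0.0) for t in taxa)
    return numerator / denominator

def mean_pairwise(profiles, vary):
    """Mean dissimilarity over pairs that differ only in one key position.

    Keys are (lab, donor) tuples. vary=0 compares labs within a donor;
    vary=1 compares donors within a lab.
    """
    fixed = 1 - vary
    distances = [
        bray_curtis(profiles[a], profiles[b])
        for a, b in combinations(profiles, 2)
        if a[fixed] == b[fixed] and a[vary] != b[vary]
    ]
    return sum(distances) / len(distances)

# Hypothetical relative-abundance profiles keyed by (lab, donor).
profiles = {
    ("lab1", "donorA"): {"Bacteroides": 0.6, "Faecalibacterium": 0.4},
    ("lab1", "donorB"): {"Bacteroides": 0.5, "Faecalibacterium": 0.5},
    ("lab2", "donorA"): {"Bacteroides": 0.2, "Faecalibacterium": 0.8},
    ("lab2", "donorB"): {"Bacteroides": 0.1, "Faecalibacterium": 0.9},
}

between_lab = mean_pairwise(profiles, vary=0)
between_donor = mean_pairwise(profiles, vary=1)
print(f"between labs: {between_lab:.2f}, between donors: {between_donor:.2f}")
```

When the between-laboratory value exceeds the between-donor value, as in this constructed example, technical differences dominate the biological signal.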

Impact on Data Interpretation and Cross-Study Comparisons

The consequences of this methodological variability extend beyond academic concerns to practical implications for data interpretation. When different methodologies produce different taxonomic profiles from identical samples, cross-study comparisons become problematic, and meta-analyses may yield conflicting conclusions [26]. This is particularly concerning in the context of clinical applications, where microbiome signatures are being developed as potential diagnostic or prognostic biomarkers [23]. The risk of technical artifacts being misinterpreted as biological findings necessitates a more critical approach to methodological reporting and standardization.

Critical Methodological Variables and Their Impacts

DNA Extraction Protocols

The initial step of DNA isolation represents a primary source of technical variability, with different kits demonstrating significant differences in performance characteristics.

Table 1: Comparison of DNA Extraction Kit Performance

| Kit Manufacturer | DNA Yield | DNA Quality | Host DNA Ratio | Reproducibility | Hands-On Time |
|---|---|---|---|---|---|
| Zymo Research (Z) | High | High (HMW) | Low | High consistency | Extensive |
| Macherey-Nagel (MN) | Highest | Suitable for LRS | Low | Reliable | Moderate |
| Invitrogen (I) | Moderate | Suitable for LRS | Low | Highest variance | Moderate |
| Qiagen (Q) | Lowest | Most degraded | Significantly higher | Below-average | Moderate |

A comprehensive cross-comparison of gut metagenomic profiling strategies evaluated four commercial DNA isolation kits, finding substantial differences in both the quantity and quality of extracted nucleic acids [27]. The Zymo Research Quick-DNA HMW MagBead Kit yielded high-quality DNA suitable for long-read sequencing but required more extensive hands-on time. The Qiagen kit consistently produced the lowest DNA yield and most degraded DNA, while also resulting in a significantly higher ratio of host DNA contamination [27]. These differences directly affect downstream sequencing results. In particular, kits differ in how efficiently they lyse Gram-positive bacteria, whose cell walls are more rigid, which can lead to underrepresentation of these taxa [27].

Sequencing Technologies and Primer Selection

The choice between 16S rRNA gene amplicon sequencing and shotgun metagenomic sequencing, as well as the specific technical parameters within each approach, represents another critical variable influencing taxonomic profiles.

Table 2: Comparison of Sequencing Approaches

| Sequencing Approach | Taxonomic Resolution | Functional Profiling | Cost Considerations | Key Limitations |
|---|---|---|---|---|
| 16S rRNA Amplicon (Partial-length) | Genus level, sometimes family | Inferred (PICRUSt, Tax4Fun2) | Lower cost | PCR amplification biases, limited resolution |
| 16S rRNA Amplicon (Full-length) | Species level possible | Inferred (PICRUSt, Tax4Fun2) | Moderate cost | PCR amplification biases, emerging technology |
| Shotgun Metagenomic | Species/strain level | Direct assessment of functional potential | Higher cost | Computational demands, host DNA contamination |

Primer selection for 16S rRNA gene sequencing significantly influences results, with different variable regions capturing different facets of microbial diversity [28] [24]. One study found that primer choice can determine which unique taxa are detected, with certain combinations missing specific bacterial groups entirely [28]. For urinary microbiota studies, V1V2 primers have been shown to be better suited than V4 primers, which may underestimate species richness [24].

Comparative evaluation of sequencing technologies revealed that Oxford Nanopore Technologies (ONT) 16S rRNA sequencing captured a broader range of taxa compared to Illumina, though metagenome sequencing on both platforms showed high correlation [28]. This suggests that while 16S rRNA sequencing approaches vary significantly between platforms, shotgun metagenomic approaches are more consistent.

Bioinformatic Analysis Pipelines

The computational processing of sequencing data introduces another layer of variability that can dramatically alter taxonomic profiles. A multicenter study found that when raw sequences from different laboratories were reprocessed using a single bioinformatic pipeline, the resulting bacterial profiles were more similar to each other and closer to profiles obtained by metagenomic profiling [25]. This highlights the major impact of the bioinformatics pipeline, primarily the database used for taxonomic annotation [25].

Different bioinformatic tools exhibit varying performance characteristics. For example, sourmash has been shown to achieve excellent accuracy and precision on both short-read and long-read sequencing data, while Kraken2 has proven applicable to 16S rRNA databases despite being originally developed for WGS reads [27]. New tools such as minitax aim to provide more consistent results across platforms and methodologies [27].

Diagram: Methodological Workflow and Variability Sources. This workflow illustrates how methodological variability at each step of the microbiome analysis pipeline impacts final taxonomic profiles.

Contamination Challenges in Low-Biomass Samples

The problem of methodological skewing is particularly acute in low-biomass samples, where contaminating DNA can constitute a substantial proportion of the final sequence data [15]. Environments such as certain human tissues (respiratory tract, fetal tissues, blood), treated drinking water, and hyper-arid soils present unique challenges because the limited microbial biomass approaches the detection limits of standard DNA-based sequencing approaches [15].

Contaminants can be introduced from multiple sources throughout the experimental workflow, including human operators, sampling equipment, reagents/kits, and laboratory environments [15]. Well-to-well leakage during PCR amplification has been identified as a particularly persistent problem that can lead to cross-contamination between samples [15].

To address these challenges, recent consensus guidelines recommend:

- Decontamination of sources of contaminant cells or DNA using 80% ethanol followed by nucleic acid degrading solutions [15]

- Using personal protective equipment (PPE) or other barriers to limit contact between samples and contamination sources [15]

- Collecting and processing controls from potential contamination sources, including empty collection vessels, swabs exposed to air, and aliquots of preservation solutions [15]

- Implementing rigorous negative controls throughout the workflow to identify and account for contaminating sequences [15]
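One simple way to use such negative controls is a prevalence comparison: a taxon that appears in blanks at least as often as in real samples is a candidate contaminant. The sketch below implements this heuristic only. It is a deliberately simplified illustration, not the method recommended by the cited guidelines, and the function names, taxa, and read counts are hypothetical.

```python
def prevalence(taxon, samples, min_reads=1):
    """Fraction of samples in which the taxon has at least min_reads reads."""
    hits = sum(1 for counts in samples if counts.get(taxon, 0) >= min_reads)
    return hits / len(samples)

def flag_contaminants(samples, negative_controls):
    """Flag taxa seen in negative controls at least as often as in samples.

    Assumption: prevalence alone is used; read depth and DNA concentration,
    which dedicated statistical tools also consider, are ignored here.
    """
    taxa = set().union(*samples, *negative_controls)
    flagged = []
    for taxon in sorted(taxa):
        in_controls = prevalence(taxon, negative_controls)
        in_samples = prevalence(taxon, samples)
        if in_controls > 0 and in_controls >= in_samples:
            flagged.append(taxon)
    return flagged

# Hypothetical read counts per taxon.
samples = [
    {"Lactobacillus": 120, "Ralstonia": 3},
    {"Lactobacillus": 95},
    {"Lactobacillus": 80, "Ralstonia": 0},
]
negative_controls = [
    {"Ralstonia": 40},
    {"Ralstonia": 22, "Lactobacillus": 0},
]

print(flag_contaminants(samples, negative_controls))
```

Flagged taxa are candidates for review rather than automatic removal, since a genuine community member can also appear in blanks through well-to-well leakage.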

Pathways to Improved Reproducibility

Standardization and Protocol Harmonization

Evidence suggests that standardization of protocols can significantly improve inter-laboratory reproducibility. A groundbreaking international ring trial involving five laboratories demonstrated that highly consistent plant phenotype, root exudate composition, and bacterial community structure could be achieved across different laboratories when using standardized protocols, synthetic bacterial communities, and sterile fabricated ecosystems (EcoFAB 2.0 devices) [5] [4]. This study provided participants with detailed protocols, annotated videos, and centralized critical components to minimize variation [5].

The success of this coordinated approach highlights the potential for protocol harmonization to enhance reproducibility without stifling methodological innovation. The key elements included:

- Centralized distribution of critical reagents and materials [5]

- Detailed, video-annotated protocols with specific part numbers for labware [5]

- Standardized data collection templates and image examples [5]

- Centralized sequencing and analysis to minimize analytical variation [5]

Enhanced Metadata Reporting and Data Sharing

Inconsistent metadata reporting represents a significant obstacle to comparing and integrating findings across microbiome studies. The lack of comprehensive metadata associated with raw data hinders the ability to perform robust data stratifications and consider confounding factors [26]. Recent reviews have emphasized the vital role of metadata in interpreting and comparing datasets, highlighting the need for standardized metadata protocols to fully leverage metagenomic data's potential [26].

Machine learning approaches for microbiome classification are particularly dependent on high-quality metadata for model training and validation [26]. Improving metadata collection and sharing will facilitate the application of these advanced computational approaches to microbiome data.

Reference Materials and Method Benchmarking

The use of reference materials, such as mock microbial communities with known composition, provides a powerful strategy for benchmarking methodological performance and identifying technical biases [22] [25]. In the Mosaic Standards Challenge, inclusion of DNA-based mixtures with ground-truth taxa abundances enabled differentiation between measurement variability and measurement bias with respect to ground truth values [22].
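The distinction between bias and variability can be illustrated with a short calculation. For each taxon, the mean log2 ratio of measured to true abundance across laboratories estimates bias, and the standard deviation of those ratios estimates between-laboratory variability. This is a minimal sketch under those assumptions, not the statistical model used in the Mosaic Standards Challenge. All names and values are hypothetical.

```python
import math
from statistics import mean, stdev

def bias_and_variability(lab_measurements, truth):
    """Return {taxon: (bias, variability)} in log2 units.

    bias        = mean log2(measured / true) across laboratories
    variability = sample standard deviation of those log2 ratios
    """
    summary = {}
    for taxon, true_value in truth.items():
        log_ratios = [math.log2(lab[taxon] / true_value)
                      for lab in lab_measurements]
        summary[taxon] = (mean(log_ratios), stdev(log_ratios))
    return summary

# Hypothetical ground truth and three laboratories' measurements.
truth = {"taxon_a": 0.5, "taxon_b": 0.5}
labs = [
    {"taxon_a": 0.25, "taxon_b": 0.75},
    {"taxon_a": 0.25, "taxon_b": 0.75},
    {"taxon_a": 0.125, "taxon_b": 0.875},
]

summary = bias_and_variability(labs, truth)
for taxon, (bias, spread) in summary.items():
    print(f"{taxon}: bias = {bias:+.2f} log2, variability = {spread:.2f} log2")
```

A taxon with large bias but small variability is measured consistently wrong by everyone, which points to a shared methodological cause, whereas large variability points to differences between laboratories.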

Similarly, a multicenter evaluation used the ZymoBIOMICS Microbial Community DNA Standard as an internal positive control to assess the performance of different metabarcoding and metagenomic approaches [25]. These reference materials allow laboratories to validate their methods and identify potential sources of bias before applying them to precious biological samples.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagents and Materials for Reproducible Microbiome Research

| Reagent/Material | Function/Purpose | Examples/Considerations |
|---|---|---|
| DNA Extraction Kits | Isolation of microbial DNA from samples | Zymo Research Quick-DNA HMW MagBead Kit effective for high-quality DNA [27] |
| DNA Standards & Mock Communities | Method validation and benchmarking | ZymoBIOMICS Microbial Community DNA Standard used for internal positive controls [25] |
| Stabilization/Preservation Buffers | Maintain microbial integrity during storage | OMNIgene·GUT, AssayAssure; effectiveness varies by bacterial taxa [24] |
| Library Preparation Kits | Prepare sequencing libraries from DNA | Illumina DNA Prep method effective for microbial diversity analysis [27] |
| Synthetic Microbial Communities (SynComs) | Controlled systems for reproducibility testing | 17-member model community for grass rhizosphere studies [5] |
| Sterile Fabricated Ecosystems | Standardized habitats for microbiome studies | EcoFAB 2.0 devices enable reproducible plant-microbiome research [5] [4] |

Diagram: Solutions for Methodological Variability. This diagram outlines the relationship between identified problems and potential solutions for improving reproducibility in microbiome studies.

Methodological choices in microbiome research systematically skew taxonomic profiles through multiple mechanisms, including DNA extraction efficiency biases, primer selection specificity, sequencing platform characteristics, and bioinformatic processing variations. The evidence from inter-laboratory comparisons reveals that technical variability can sometimes exceed biological variability, complicating data interpretation and cross-study comparisons.

Addressing these challenges requires a multi-faceted approach centered on method standardization, comprehensive metadata reporting, and rigorous contamination controls. The research community is increasingly recognizing these imperatives, with emerging best practices emphasizing the use of reference materials, standardized protocols, and controlled synthetic communities. As microbiome science continues to evolve and influence therapeutic development, upholding these standards of methodological rigor will be essential for generating reliable, reproducible findings that can effectively inform clinical applications.

For researchers and drug development professionals, the path forward involves both adopting these best practices and maintaining critical awareness of methodological limitations when interpreting microbiome data. By acknowledging and addressing the technical variables that skew taxonomic profiles, the field can advance toward more robust, reproducible microbiome science with greater translational potential.

The Consequences for Drug Development and Clinical Translation

Inter-laboratory reproducibility is a fundamental pillar of scientific progress, yet it remains a significant barrier in microbiome research. The complex, ecosystem-like nature of microbial communities, combined with technical variations in sequencing and analysis, often prevents findings from one laboratory from being reliably replicated in another. This reproducibility crisis has profound consequences, directly impacting the translation of basic microbiome research into safe and effective clinical therapies [29] [5]. In drug development, a failure to replicate preclinical findings can derail clinical programs, wasting years of research and millions of dollars. This guide objectively compares the performance of different microbiome sequencing and analytical approaches, providing researchers with the data needed to design more robust and reproducible studies that can successfully navigate the path from bench to bedside.

Comparative Analysis of Microbiome Sequencing Applications

The choice of sequencing technology and data analysis strategy is critical for generating reliable and interpretable data. The table below compares the core methodologies used in the field.

Table 1: Comparison of Key Microbiome Sequencing and Analysis Methods

| Method Category | Specific Technology/Approach | Typical Application | Key Performance Considerations |
|---|---|---|---|
| Sequencing Technology | 16S rRNA Gene Sequencing [30] | Profiling microbial community composition and diversity [30]. | Cost-effective for taxonomy; limited functional insight. |
| Sequencing Technology | Shotgun Metagenomic Sequencing [30] | Comprehensive analysis of all genetic material for functional potential [30]. | Higher cost; reveals genes and pathways; more complex data. |
| Data Integration | Sparse PLS (sPLS) [31] | Identifying the most relevant microbial and metabolic features across datasets. | Addresses multicollinearity; effective for feature selection. |
| Data Integration | Centered Log-Ratio (CLR) Transformation [31] | Normalizing microbiome data for statistical analysis. | Handles compositionality to avoid spurious results. |
| Experimental System | Synthetic Communities (SynComs) in EcoFABs [5] | Controlled, reproducible study of host-microbe interactions. | Limits complexity while retaining key microbe-microbe interactions. |
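The centered log-ratio transformation listed in the table can be sketched in a few lines: each count is log-transformed and the mean of the logs (the log of the geometric mean) is subtracted. The pseudocount used here to handle zero counts is an assumption chosen for illustration, and the example counts are hypothetical.

```python
import math

def clr(counts, pseudocount=0.5):
    """Centered log-ratio transform of one sample's taxon counts.

    Assumption: zeros are handled by adding a fixed pseudocount; other
    zero-replacement strategies exist and may be preferable in practice.
    """
    logs = [math.log(count + pseudocount) for count in counts]
    mean_log = sum(logs) / len(logs)  # log of the geometric mean
    return [value - mean_log for value in logs]

# Hypothetical read counts for three taxa in one sample.
values = clr([10, 20, 70])
print([round(v, 3) for v in values])
```

Because only ratios between taxa enter the result, multiplying all counts in a sample by the same sequencing-depth factor leaves the transformed values unchanged when no pseudocount is needed, which is the property that makes the transform suited to compositional data.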

Standardized Protocols for Reproducible Microbiome Research

Multi-Laboratory Ring Trial for Reproducibility

A landmark five-laboratory international ring trial demonstrated a successful framework for achieving reproducibility in plant-microbiome studies [5]. The study employed a standardized synthetic community (SynCom) of 17 bacterial isolates and the EcoFAB 2.0 device, a sterile, fabricated ecosystem for growing the model grass Brachypodium distachyon [5].

Key Experimental Protocol:

- SynCom Preparation: Bacterial strains were provided from a central biobank (DSMZ). Inoculums were prepared using OD600 to CFU conversions to ensure equal cell numbers (final inoculum of 1 × 10^5 bacterial cells per plant) and shipped as 100x concentrated stocks on dry ice to all participating labs [5].

- Plant Growth and Inoculation: All labs followed a unified protocol for seed sterilization, stratification, and germination. Seedlings were transferred to sterile EcoFAB 2.0 devices and inoculated at the same growth stage [5].

- Data and Sample Collection: Labs measured plant biomass, performed root scans, and collected samples for 16S rRNA amplicon sequencing and metabolomics. All sequencing and metabolomic analyses were performed by a single, central laboratory to minimize analytical variation [5].
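The OD600-to-CFU step in the protocol above amounts to a simple dilution calculation, sketched below. The sketch assumes that the total inoculum is split equally across the 17 strains and that each strain has its own calibration factor relating OD600 to CFU per mL. The calibration factors, OD readings, and strain names are hypothetical placeholders, and the 100x stock concentration step is not modeled.

```python
TARGET_CELLS_PER_PLANT = 1e5  # total inoculum quoted in the protocol [5]
N_STRAINS = 17                # SynCom size quoted in the protocol [5]

def culture_volume_ul(od600, cfu_per_ml_per_od, target_cells):
    """Volume of culture (in microliters) that contains target_cells.

    cfu_per_ml_per_od is a strain-specific calibration factor that would
    have to be measured by plating; the values below are made up.
    """
    if od600 <= 0 or cfu_per_ml_per_od <= 0:
        raise ValueError("OD600 and calibration factor must be positive")
    cells_per_ml = od600 * cfu_per_ml_per_od
    return target_cells / cells_per_ml * 1000.0

# Assumption: equal cell numbers per strain.
cells_per_strain = TARGET_CELLS_PER_PLANT / N_STRAINS

# Hypothetical (OD600 reading, CFU per mL per OD unit) for two strains.
strains = {"strain_1": (0.5, 1e9), "strain_2": (0.8, 4e8)}
for name, (od, factor) in strains.items():
    volume = culture_volume_ul(od, factor, cells_per_strain)
    print(f"{name}: {volume:.4f} uL of culture per plant")
```

Volumes this small would in practice be reached through serial dilution rather than direct pipetting.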

Outcomes: The study achieved consistent results across all laboratories. A single bacterial strain, Paraburkholderia sp. OAS925, consistently dominated the root microbiome when included in the inoculum, and its inclusion led to reproducible changes in plant phenotype and root exudate composition [5]. This highlights how standardized tools and protocols can successfully control for inter-laboratory variability.

From Association to Causation: An Iterative Translational Workflow

For human health, a structured, iterative approach is required to move from initial clinical observations to mechanistic understanding and, finally, to clinical intervention [29]. The workflow can be summarized in the following diagram:

Detailed Methodologies:

From Clinical Patterns to Data-Driven Hypotheses: Research begins with clinical observations, such as variability in patient response to a drug. These insights are paired with biological sampling in large, deeply phenotyped cohorts. Machine learning and statistical modeling are then used on these integrated multi-omics datasets (e.g., metagenomics and metabolomics) to identify robust microbial signatures associated with clinical phenotypes [29] [31].

From Hypotheses to Mechanisms: Experimental Validation: Once associations are identified, causal relationships must be tested. This involves using experimental models like gnotobiotic animals (germ-free mice colonized with human microbiota) or in vitro gut culture systems [29]. Proof-of-concept often starts with fecal microbiota transplant (FMT) from patient subgroups into germ-free mice. If a clinical phenotype (e.g., altered glucose tolerance) is transferred, it suggests a mechanistic role for the microbiome. These findings can be further dissected using reductionist models like monocolonisation or microbiota-organoid systems to pinpoint specific microbes and metabolites [29].

The Scientist's Toolkit: Essential Reagents for Reproducible Microbiome Research

Achieving reproducibility requires access to well-characterized and standardized research materials. The following table details key reagents and their functions.

Table 2: Key Research Reagent Solutions for Microbiome Studies

| Reagent / Material | Function in Research | Example / Standardization Source |
|---|---|---|
| Synthetic Microbial Communities (SynComs) | Limits complexity while retaining functional diversity for mechanistic studies of community assembly and host interactions [5]. | A model 17-member community for grass rhizosphere, available via public biobank DSMZ [5]. |
| Fabricated Ecosystems (EcoFABs) | Provides a sterile, standardized laboratory habitat for studying microbiomes in a controlled, replicable environment [5]. | EcoFAB 2.0 device, which enables highly reproducible plant growth [5]. |
| Standardized Cryopreservation Protocols | Ensures consistent viability and function of microbial inoculums upon resuscitation, critical for experiment-to-experiment reproducibility [5]. | Detailed protocols for SynCom cryopreservation in 20% glycerol [5]. |
| Live Biotherapeutic Products (LBPs) | Defined microbial consortia developed as next-generation therapies, subject to strict regulatory and manufacturing standards [30]. | Requires standardization across sequencing platforms and methodologies for batch-to-batch consistency [30]. |

Consequences and Outcomes in Drug Development

Impact on Therapeutic Areas

The application of reproducible microbiome science is actively shaping several therapeutic areas, as summarized below.

Table 3: Clinical Applications and Translational Challenges of Microbiome-Based Therapies

| Therapeutic Area | Microbiome Application | Translational Consequence & Status |
|---|---|---|
| Infectious Disease | Fecal Microbiota Transplantation (FMT) for C. difficile infection [30]. | High efficacy (~90% cure rates); successfully translated into FDA-approved products (Rebyota, VOWST) [30]. |
| Oncology | Modulating gut microbiome to enhance checkpoint inhibitor immunotherapy [29] [30]. | Promising associations; requires controlled trials to define specific microbial consortia for reliable patient response [29]. |
| Metabolic Disease | Personalizing nutrition and interventions for obesity and type 2 diabetes based on individual microbiome profiles [30]. | Early stage; success depends on robust biomarkers and reproducible profiling to inform personalized interventions [30]. |
| Neurology & Psychiatry | Targeting the gut-brain axis for conditions like Parkinson's and autism spectrum disorder [30]. | Highly exploratory; mechanistic links (e.g., via microbial metabolites) require further validation in standardized models [30]. |

The Path to the Clinic: Overcoming Translational Failure

Despite promising preclinical findings, many microbiome-based interventions fail to replicate in human studies. For example, while FMT from lean donors transfers the lean phenotype to mice, clinical trials in individuals with obesity and metabolic syndrome have shown only transient improvements in insulin sensitivity and no effect on body weight [29]. The consequences of such translational failures are significant, leading to costly and unsuccessful clinical trials.

The primary reasons for this bench-to-bedside gap include:

- Physiological Differences: Fundamental differences in gut anatomy, diet, microbiota composition, and immune system development between animal models (e.g., mice) and humans alter the cross-species translatability of interventions [29].

- Inter-individual Variability: The high degree of variability in human microbiome composition between individuals complicates the design of universally effective therapies and requires careful patient stratification [29] [30].

To overcome these challenges, the field is moving towards more targeted, mechanistically informed approaches, such as defined microbial consortia and engineered probiotics, and adapting clinical trial designs to account for baseline microbial composition and host diet [29]. The following diagram illustrates the integrated, iterative process required for successful translation, from initial discovery to clinical application, emphasizing the constant feedback needed between clinical insight and experimental models.

Standardized Protocols and Tools for Robust Microbiome Analysis

Inter-laboratory reproducibility remains a significant challenge in microbiome research, hindering progress in understanding host-microbe interactions and developing reliable therapeutic applications. Studies have documented considerable variability in microbiome data due to methodological differences in DNA extraction, sequencing protocols, and bioinformatic analysis [6] [9] [32]. For instance, international interlaboratory comparisons have revealed that methodological choices can significantly impact metagenomic sequencing results, with extraction protocols causing substantial variation in observed microbial abundances [9]. This reproducibility crisis has prompted the development of standardized experimental systems that can generate consistent, comparable results across different research settings. Without such standardization, the ability to validate findings, build upon existing research, and translate microbiome insights into clinical or agricultural applications remains severely limited.

Core Technologies: EcoFAB and Synthetic Communities

Fabricated Ecosystem Devices (EcoFAB)

EcoFAB devices are standardized, sterile laboratory habitats that enable highly controlled investigation of plant-microbe interactions. These fabricated ecosystems provide several key advantages: (1) precise environmental control including nutrient delivery, light, and temperature; (2) easy measurement of plant and microbial metrics; and (3) complete specification of all initial biotic and abiotic factors [4] [5]. The EcoFAB 2.0 represents an improved version that supports reproducible plant growth and microbiome assembly, with studies demonstrating consistent results across multiple laboratories [5]. These devices function as miniature ecosystems that bridge the gap between simplified laboratory conditions and complex natural environments, allowing for systematic investigation of microbial community dynamics.

Synthetic Microbial Communities (SynComs)

Synthetic microbial communities are precisely defined mixtures of microbial strains that represent core functions or taxonomic groups found in natural environments. A model 16-member soil SynCom has been developed for studying rhizosphere interactions, comprising bacterial isolates from switchgrass agricultural fields that span the typical diversity found in grass rhizospheres [33]. This community includes representatives from Actinomycetota, Bacillota, Pseudomonadota, and Bacteroidota phyla, with each strain selected from a different genus to facilitate identification through 16S rRNA gene sequencing [33] [5]. Key advantages of SynComs include reduced complexity compared to natural communities while retaining functional diversity, enabling researchers to establish causal relationships and investigate specific microbial interactions in a controlled manner.

Experimental Evidence for Standardization Efficacy

Multi-Laboratory Validation of Reproducibility

A comprehensive five-laboratory international study demonstrated that the combination of EcoFAB devices and defined SynComs produces highly reproducible results across different research settings [4] [5]. All participating laboratories observed consistent inoculum-dependent changes in plant phenotype, root exudate composition, and final bacterial community structure. Specifically, when Paraburkholderia sp. OAS925 was included in the SynCom, it consistently dominated root colonization across all laboratories, comprising 98 ± 0.03% average relative abundance in the root microbiome [5]. This remarkable consistency emerged despite variations in growth chamber conditions (including light quality and temperature) across different laboratory settings, underscoring the robustness of the standardized system.

Table 1: Key Findings from Multi-Laboratory EcoFAB-SynCom Validation Study

| Parameter Measured | Finding | Consistency Across Labs |
|---|---|---|
| Sterility Maintenance | <1% contamination rate (2 out of 210 tests) | High |
| Plant Biomass | Significant decrease with SynCom17 inoculation | Consistent trend |
| Root Development | Decreased with SynCom17 after 14 days | Consistent trend |
| Microbiome Assembly | Paraburkholderia dominance in SynCom17 (98 ± 0.03%) | High |
| Root Exudate Composition | Inoculum-dependent changes | Consistent |

Comparative Performance Against Traditional Methods

When compared to traditional microbiome research approaches, the EcoFAB-SynCom system demonstrates superior reproducibility and control. Automated assembly of SynComs using picoliter liquid printing technology produces significantly more consistent communities than manual assembly, with machine-assembled communities showing reduced Bray-Curtis dissimilarity between replicates (one-way ANOVA with Benjamini-Hochberg FDR correction; reported significance thresholds of P < 0.05 and P < 0.001) [33]. Additionally, optimized growth conditions in low-nutrient media (0.1× R2A) significantly increase community α-diversity compared to richer media, as measured by Shannon diversity index and Pielou's evenness (one-way ANOVA with Benjamini-Hochberg FDR correction; reported significance thresholds of P < 0.05 and P < 0.005) [33]. This level of precision and optimization is rarely achievable with conventional microbiome research methods, which often suffer from uncontrolled variability.
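The three metrics named above can be computed directly from count tables. The following is a minimal sketch using only the Python standard library; the replicate counts are hypothetical and are not data from [33], and the function names are illustrative rather than taken from any published pipeline.

```python
import math
from itertools import combinations


def bray_curtis(a, b):
    """Bray-Curtis dissimilarity between two equal-length abundance vectors."""
    shared_total = sum(a) + sum(b)
    if shared_total == 0:
        return 0.0
    return sum(abs(x - y) for x, y in zip(a, b)) / shared_total


def mean_pairwise_bray_curtis(replicates):
    """Average dissimilarity over every pair of replicates."""
    pairs = list(combinations(replicates, 2))
    return sum(bray_curtis(a, b) for a, b in pairs) / len(pairs)


def shannon(counts):
    """Shannon diversity index H' using the natural logarithm."""
    total = sum(counts)
    return -sum((c / total) * math.log(c / total) for c in counts if c > 0)


def pielou_evenness(counts):
    """Pielou's evenness J' = H' / ln(S), where S is the observed richness."""
    richness = sum(1 for c in counts if c > 0)
    return shannon(counts) / math.log(richness) if richness > 1 else 0.0


# Hypothetical read counts for a four-member community (not data from [33]).
manual_replicates = [[40, 30, 20, 10], [55, 20, 15, 10], [30, 35, 25, 10]]
printed_replicates = [[40, 30, 20, 10], [41, 29, 20, 10], [39, 31, 20, 10]]

print("manual :", mean_pairwise_bray_curtis(manual_replicates))
print("printed:", mean_pairwise_bray_curtis(printed_replicates))
print("H', J' :", shannon(printed_replicates[0]), pielou_evenness(printed_replicates[0]))
```

A lower mean pairwise Bray-Curtis value indicates tighter agreement between replicates, which is the sense in which automated assembly is described as more consistent.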

Table 2: Protocol Optimization for Enhanced Reproducibility in SynCom Research

| Protocol Aspect | Traditional Approach | Optimized EcoFAB-SynCom Approach | Impact on Reproducibility |
|---|---|---|---|
| Community Assembly | Manual pipetting | Automated picoliter printing | Significantly reduced variability (Bray-Curtis distance) |
| Growth Medium | Standard nutrient concentration | Low-nutrient (0.1× R2A) | Enhanced α-diversity |
| Community Storage | Variable methods | Cryopreservation with glycerol | Enables replication and dissemination |
| Sterility Control | Laboratory-specific protocols | Standardized EcoFAB 2.0 with documented sterility testing | <1% contamination rate |

Experimental Protocols and Methodologies

Standardized EcoFAB Workflow

The detailed experimental protocol for implementing EcoFAB-SynCom systems has been rigorously validated and made publicly available [5] [34]. The general procedure follows these key steps: (1) EcoFAB 2.0 device assembly; (2) plant seed dehusking, surface sterilization, and stratification at 4°C for 3 days; (3) germination on agar plates for 3 days; (4) transfer of seedlings to the EcoFAB 2.0 device for an additional 4 days of growth; (5) sterility testing and SynCom inoculation into the EcoFAB device; (6) water refill and root imaging at multiple timepoints; and (7) sampling and plant harvest at 22 days after inoculation. Critical to success is the use of specified part numbers for labware and materials to minimize variation stemming from equipment differences, along with centralized preparation and distribution of key components such as the SynCom inoculum and plant seeds [5].
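To coordinate several laboratories on the same calendar, the durations in the steps above can be turned into a simple schedule. This is a minimal sketch that assumes the steps run back to back and that sterility testing and inoculation happen on the day the 4-day pre-growth ends; neither assumption is stated in the protocol summary, and the function name is hypothetical.

```python
from datetime import date, timedelta

# Durations in days, taken from the protocol steps listed above.
STRATIFICATION_DAYS = 3
GERMINATION_DAYS = 3
PRE_INOCULATION_GROWTH_DAYS = 4
HARVEST_DAYS_AFTER_INOCULATION = 22


def ecofab_schedule(stratification_start):
    """Return the date of each milestone, assuming steps run back to back."""
    germination = stratification_start + timedelta(days=STRATIFICATION_DAYS)
    transfer = germination + timedelta(days=GERMINATION_DAYS)
    inoculation = transfer + timedelta(days=PRE_INOCULATION_GROWTH_DAYS)
    harvest = inoculation + timedelta(days=HARVEST_DAYS_AFTER_INOCULATION)
    return {
        "stratification_start": stratification_start,
        "germination_start": germination,
        "transfer_to_ecofab": transfer,
        "inoculation": inoculation,
        "harvest": harvest,
    }


# Example start date chosen arbitrarily for illustration.
for milestone, day in ecofab_schedule(date(2024, 1, 1)).items():
    print(f"{milestone:22s} {day.isoformat()}")
```

Under these assumptions the full run spans 32 days from the start of stratification to harvest.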

SynCom Preparation and Inoculation

For synthetic community preparation, the optimized protocol involves several critical steps: (1) Individual strain cultivation in appropriate media; (2) Normalization of cell densities using OD600 to CFU conversions; (3) Automated assembly using picoliter liquid handling systems for precision; (4) Mixing in optimized starting ratios to enhance diversity; (5) Cryopreservation in 20% glycerol for storage and distribution; and (6) Resuscitation from frozen stocks for inoculation [33] [5]. The inoculation is typically performed at a standardized density of 1 × 10^5 bacterial cells per plant, with communities shipped as 100× concentrated stocks in 20% glycerol on dry ice to participating laboratories to ensure consistency [5]. This meticulous approach to community construction and application ensures that experiments begin with well-defined, reproducible microbial populations.
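The normalization step (OD600 to CFU) and the dilution of a 100× stock reduce to short arithmetic. The sketch below is illustrative only: the strain names, OD600 readings, and conversion factors are hypothetical, and an equal-cell mix is assumed for simplicity even though the protocol describes optimized starting ratios.

```python
def cells_per_ml(od600, cfu_per_ml_per_od):
    """Estimate cell density from an OD600 reading and a strain-specific factor."""
    return od600 * cfu_per_ml_per_od


def volumes_for_equal_mix(cultures, cells_per_strain):
    """Microliters of each culture needed to contribute the same number of cells.

    cultures maps strain name -> (OD600, CFU per mL per OD unit).
    """
    return {
        name: cells_per_strain / cells_per_ml(od, factor) * 1000.0
        for name, (od, factor) in cultures.items()
    }


def stock_volume_needed(final_volume_ml, concentration_factor=100):
    """Volume of concentrated stock to dilute into the final working volume."""
    return final_volume_ml / concentration_factor


# Hypothetical readings and conversion factors; measure these per strain.
cultures = {
    "strain_A": (0.5, 8e8),
    "strain_B": (1.0, 2e8),
}

print(volumes_for_equal_mix(cultures, cells_per_strain=1e7))
print(stock_volume_needed(final_volume_ml=10.0))
```

Because the OD600-to-CFU relationship differs between strains, each conversion factor would need to be calibrated empirically rather than assumed.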

Table 3: Essential Research Reagent Solutions for EcoFAB-SynCom Experiments

| Resource Type | Specific Examples | Function/Application |
|---|---|---|
| Standardized Devices | EcoFAB 2.0 | Sterile habitat for reproducible plant-microbe studies |
| Model Organisms | Brachypodium distachyon (model grass) | Standardized plant host for microbiome studies |
| Synthetic Communities | 16-17 member soil bacterial SynCom | Defined microbial community for reproducible inoculation |
| Growth Media | 0.1× R2A low-nutrient media | Enhanced microbial diversity in communities |
| Preservation Solution | 20% glycerol | Cryopreservation and storage of SynComs |
| Reference Materials | DNA mock communities | Benchmarking and validation of sequencing protocols |
| Analysis Tools | Standardized bioinformatics pipelines | Consistent data processing across laboratories |

The implementation of standardized habitats (EcoFAB) and model communities (SynCom) represents a transformative approach for addressing the reproducibility crisis in microbiome research. By controlling both biotic and abiotic factors from the outset, these systems generate consistently reproducible results across independent laboratories, as demonstrated in multiple international validation studies [33] [4] [5]. The availability of detailed protocols, standardized reagents, and benchmarking datasets provides researchers with the necessary tools to conduct rigorous, comparable studies that build collective knowledge rather than generating isolated findings. As the field moves toward increased standardization, these approaches will be crucial for unlocking the potential of microbiome research to address pressing challenges in human health, sustainable agriculture, and environmental management.

Best Practices for Sample Collection, Storage, and DNA Extraction to Minimize Bias

Inter-laboratory reproducibility remains a significant challenge in microbiome sequencing research [6]. The observed microbial community can be dramatically skewed by technical choices at every stage of the workflow, from sample collection to DNA sequencing [35] [9]. These protocol-dependent biases critically limit the comparability of findings between studies and hinder the development of robust clinical microbiome applications [36]. This guide objectively compares methodological alternatives for key steps in microbiome processing, presenting experimental data to inform standardized practices that enhance reproducibility and data reliability across laboratories.

Sample Collection & Storage: A Critical First Step

The initial handling of samples immediately after collection introduces substantial bias, particularly through the promotion of bacterial "blooms" and the loss of susceptible taxa [6]. The chosen method must balance logistical constraints with the need to preserve the original microbial composition.

Experimental Comparison of Storage Conditions

Research comparing storage approaches reveals significant differences in their ability to maintain microbial integrity. One systematic investigation tested several commercially available stabilization solutions against immediate freezing, using human fecal samples to simulate real-world conditions [37].

Table 1: Impact of Sample Storage Conditions on Microbial Composition

| Storage Condition | Compositional Bias vs. Fresh-Frozen | Key Observed Biases |
|---|---|---|
| Immediate Freezing (-80°C) | Baseline | Considered the gold standard; minimal changes when feasible [35] [38]. |
| OMNIgene·GUT (RT) | Limited bias | Limited overgrowth of Enterobacteriaceae; higher Bacteroidota, lower Actinobacteriota and Firmicutes vs. frozen [37]. |
| Zymo Research Buffer (RT) | Limited bias | Limited overgrowth of Enterobacteriaceae; similar profile to OMNIgene·GUT [37]. |
| Unpreserved (Room Temperature) | High bias | Significant blooms of Enterobacteriaceae and other aerobic bacteria; decreased alpha diversity over time [37] [39]. |

The data indicate that while immediate freezing is the optimal standard, stabilization buffers provide a practical compromise for large-scale or logistically challenging studies where maintaining a cold chain is difficult [37]. Unpreserved samples stored at room temperature are prone to significant compositional shifts and are not recommended.

DNA Extraction: The Largest Source of Technical Bias

DNA extraction is arguably the most critical source of technical variation in microbiome studies, with different protocols yielding significantly different microbial profiles [35] [9]. The efficiency of cell lysis varies dramatically based on bacterial cell wall structure (e.g., Gram-positive vs. Gram-negative) and morphology [36] [6].

Experimental Insights from Interlaboratory Studies

A major international interlaboratory study, the Mosaic Standards Challenge (MSC), involved 44 labs analyzing the same stool and mock community samples using their standard protocols [9]. The study found that, in some cases, methodological choices during DNA extraction had a greater impact on the resulting Firmicutes-to-Bacteroidetes ratio than the biological differences between sample donors. The use of a homogenizer during extraction was identified as a key factor in improving measurement robustness and reducing variability between technical replicates [9].
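One way to see how a protocol effect can exceed a donor effect is to compare the spread of the Firmicutes-to-Bacteroidetes ratio along each axis. The sketch below uses hypothetical relative abundances, not MSC data, and a simple range on the log2 scale rather than the statistical analysis used in [9].

```python
import math


def log2_fb_ratio(profile):
    """log2 of the Firmicutes-to-Bacteroidetes ratio for one profile."""
    return math.log2(profile["Firmicutes"] / profile["Bacteroidetes"])


def mean_range(groups):
    """Average (max - min) spread across groups of values."""
    return sum(max(g) - min(g) for g in groups) / len(groups)


# Hypothetical relative abundances, indexed as profiles[donor][protocol].
profiles = {
    "donor_1": {
        "bead_beating": {"Firmicutes": 0.60, "Bacteroidetes": 0.30},
        "enzymatic": {"Firmicutes": 0.30, "Bacteroidetes": 0.60},
    },
    "donor_2": {
        "bead_beating": {"Firmicutes": 0.66, "Bacteroidetes": 0.22},
        "enzymatic": {"Firmicutes": 0.36, "Bacteroidetes": 0.48},
    },
}

ratios = {
    donor: {protocol: log2_fb_ratio(p) for protocol, p in by_protocol.items()}
    for donor, by_protocol in profiles.items()
}
protocols = sorted(next(iter(ratios.values())))

# Spread between protocols for the same donor (technical effect).
protocol_spread = mean_range([list(r.values()) for r in ratios.values()])
# Spread between donors under the same protocol (biological effect).
donor_spread = mean_range([[ratios[d][p] for d in ratios] for p in protocols])

print(f"protocol spread (log2): {protocol_spread:.3f}")
print(f"donor spread (log2):    {donor_spread:.3f}")
```

In this toy data set the protocol spread is larger than the donor spread, which mirrors the pattern the study reports for some cases.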

Furthermore, a separate study demonstrated that taxon-specific extraction bias is predictable from bacterial cell morphology [36]. This finding opens the door to computational correction of extraction bias in future microbiome analyses, using mock community controls to measure and adjust for protocol-dependent distortions [36].
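The idea of measuring bias on a mock community and then adjusting samples can be illustrated with a simple multiplicative model. This is an assumption made for the sketch and is not the morphology-based method of [36]: each taxon is given an efficiency equal to its observed over expected proportion in the mock, and sample proportions are divided by that efficiency and renormalized. Taxon labels and values are hypothetical.

```python
def extraction_efficiencies(mock_observed, mock_expected):
    """Per-taxon efficiency = observed / expected proportion in the mock.

    Taxa that were not detected in the mock are skipped, because their
    efficiency cannot be estimated from a zero observation.
    """
    return {
        taxon: mock_observed[taxon] / expected
        for taxon, expected in mock_expected.items()
        if mock_observed.get(taxon, 0.0) > 0.0
    }


def correct_profile(observed, efficiencies):
    """Divide by efficiency and renormalize so proportions sum to 1.

    Taxa without an estimated efficiency are left uncorrected (factor 1.0).
    """
    adjusted = {t: a / efficiencies.get(t, 1.0) for t, a in observed.items()}
    total = sum(adjusted.values())
    return {t: v / total for t, v in adjusted.items()}


# Hypothetical two-taxon mock: equal input, unequal recovery.
mock_expected = {"gram_negative_rod": 0.5, "gram_positive_coccus": 0.5}
mock_observed = {"gram_negative_rod": 0.8, "gram_positive_coccus": 0.2}

efficiencies = extraction_efficiencies(mock_observed, mock_expected)
sample = {"gram_negative_rod": 0.5, "gram_positive_coccus": 0.5}
print(correct_profile(sample, efficiencies))
```

A correction of this kind only covers taxa represented in the mock community, so its usefulness depends on how well the mock matches the samples.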

Optimizing the Lysis Step

The method of cell disruption is a major contributor to variation. Mechanical lysis (bead-beating) is consistently shown to be superior to enzymatic or chemical lysis alone, as it is more effective at breaking tough cell walls of Gram-positive bacteria [37] [35].

Table 2: Impact of DNA Extraction and Library Preparation Choices

| Protocol Step | Method Compared | Impact on Microbiome Analysis | Recommended Best Practice |
|---|---|---|---|
| Cell Lysis | Mechanical vs. Chemical | Mechanical lysis is critical; a major source of variation in microbiota composition [37]. | Use a validated, mechanical bead-beating protocol [37] [35]. |
| Extraction Kit | Different Commercial Kits | Significantly different microbial compositions and DNA yields; one of the largest sources of bias [40] [9]. | Use the same kit and lot for all samples in a study; validate with mock communities [35]. |
| PCR Cycle Number | High (30+) vs. Low (25) | Higher cycles increase contaminants and chimera formation [37]. | Use ~25 cycles as optimal to reduce artifacts [37]. |
| Input DNA | Varying Amounts | Low input can increase cross-contamination; high input can increase chimera formation [36]. | Use optimal input (e.g., ~125 pg) to balance yield and contamination risk [37]. |

The Scientist's Toolkit: Essential Research Reagents

The following reagents and controls are essential for conducting rigorous and reproducible microbiome research.

Table 3: Essential Research Reagents and Controls

| Reagent / Control | Function & Purpose |
|---|---|
| Mock Microbial Communities | Defined mixes of microbial strains or their DNA used to benchmark and correct for bias in the entire wet-lab and bioinformatics workflow [36] [6] [9]. |
| Stabilization Buffers | Chemical solutions that preserve microbial community composition at room temperature for transport and storage [37] [41]. |
| DNA Extraction Kits with Bead-Beating | Dedicated kits that include a mechanical lysis step for more uniform and efficient disruption of diverse cell types [37] [35]. |
| Negative Controls | Blanks (e.g., water, buffer) carried through the entire workflow to identify reagent and environmental contaminants [36] [35]. |
| High-Fidelity DNA Polymerase | PCR enzymes with low error rates to minimize introduction of sequence errors during amplification [35] [40]. |
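As an illustration of how negative controls can be used once sequenced, the sketch below flags taxa whose mean relative abundance in blanks is at least as high as in real samples. This ratio heuristic and its threshold are assumptions made for the example, not a method from the cited studies, and dedicated statistical tools would normally be preferred. All profiles are hypothetical.

```python
def mean_abundance(profiles, taxon):
    """Mean relative abundance of a taxon across profiles (absent = 0)."""
    return sum(p.get(taxon, 0.0) for p in profiles) / len(profiles)


def flag_contaminants(samples, negative_controls, min_ratio=1.0):
    """Flag taxa at least min_ratio times as abundant in blanks as in samples."""
    taxa_in_controls = {taxon for p in negative_controls for taxon in p}
    flagged = []
    for taxon in sorted(taxa_in_controls):
        in_controls = mean_abundance(negative_controls, taxon)
        in_samples = mean_abundance(samples, taxon)
        if in_controls > 0 and in_controls >= min_ratio * in_samples:
            flagged.append(taxon)
    return flagged


# Hypothetical relative-abundance profiles for two samples and two blanks.
samples = [
    {"Bacteroides": 0.60, "Faecalibacterium": 0.38, "Ralstonia": 0.02},
    {"Bacteroides": 0.50, "Faecalibacterium": 0.49, "Ralstonia": 0.01},
]
negative_controls = [
    {"Ralstonia": 0.90, "Bacteroides": 0.10},
    {"Ralstonia": 1.00},
]

print(flag_contaminants(samples, negative_controls))
```

Here a taxon that dominates the blanks but is rare in samples is flagged, while one that appears in a blank at low level but is abundant in samples is treated as likely cross-talk from real material and kept.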

An Optimized End-to-End Workflow

Integrating the best practices from sample collection to sequencing yields a robust workflow designed to minimize bias. The following diagram maps the critical steps and decisions, highlighting the paths that lead to the most reproducible outcomes.

Achieving reproducibility in microbiome research requires meticulous attention to protocol standardization across all stages of the workflow. The experimental data presented here confirm that sample storage method and DNA extraction protocol are two of the most critical factors influencing the observed microbial composition and inter-laboratory variability. To enhance the reliability and comparability of microbiome data, researchers should adopt the following practices. First, use stabilization buffers or immediate freezing to prevent microbial blooms after collection. Second, employ DNA extraction methods that include rigorous mechanical lysis, and validate these protocols using mock communities. Finally, consistently include appropriate controls and report all methodological metadata to account for batch effects and enable meaningful cross-study comparisons. By systematically addressing these key sources of bias, the field can move closer to robust and clinically actionable insights.