Accelerating Discovery: Strategies to Reduce Data Collection Time in Drug Characterization Workflows

Abstract

This article provides a comprehensive guide for researchers and drug development professionals seeking to accelerate the data collection phase in characterization workflows. It explores the foundational principles of modern, data-efficient strategies such as Model-Informed Drug Development (MIDD). It then covers practical applications of AI and machine learning for predictive modeling and automation, addresses common bottlenecks with targeted troubleshooting, and outlines robust validation frameworks that support regulatory compliance. Taken together, these strategies form a blueprint for shortening development timelines and bringing effective therapies to patients faster.

The High Cost of Slow Data: Rethinking Characterization for Speed

Identifying Bottlenecks in Traditional Characterization Workflows

Characterization has emerged as a critical bottleneck in modern research and development, particularly as synthesis and automation capabilities outpace our ability to analyze and interpret results. In automated labs, synthesis has scaled significantly through pipetting, microfluidics, and combinatorial techniques, but characterization remains tied to the material class, the synthesis method, and measurement constraints, none of which scale as efficiently [1].

The fundamental challenge lies in characterization's inherent differences from synthesis: measurement times for techniques like X-ray or microscopy have physical limitations, the value of each measurement varies drastically by experiment, and combining outputs from multiple instruments to extract joint meaning remains largely unexplored [1].

Troubleshooting Guides & FAQs

FAQ 1: Why does characterization become the bottleneck even with automated equipment?

Answer: Characterization bottlenecks persist due to several interconnected factors:

- Physical Measurement Limits: Techniques like X-ray scanning or microscopy have inherent time requirements. As one expert notes, "An X-ray scan might take 30 minutes — maybe you can run it 10× faster, but not 100×" [1].

- Context-Dependent Operations: Microscopies (SPM, STEM, etc.) require context-sensitive operation and don't scale easily through parallelization [1].

- Data Integration Challenges: Combining outputs from multiple characterization tools to extract meaningful insights remains technically challenging, with differences in manufacturer protocols creating integration barriers [1].

FAQ 2: How can we reduce data collection time without compromising data quality?

Answer: Implement a multi-pronged approach focusing on strategic sampling and workflow integration:

- Optimize Sampling Strategies: Since characterization is often slower than synthesis, intelligent sampling matters significantly. Focus characterization efforts on the most informative samples rather than comprehensive analysis of all available material [1].

- Accelerate Individual Tools: Implement rapid structure-property mapping and fast compositional screening specifically designed for combinatorial libraries [1].

- Build Multi-Tool Workflows: Develop integrated characterization workflows where samples move systematically between complementary instruments, though this requires addressing vendor integration challenges and throughput asynchronicity [1].
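
One common way to put intelligent sampling into practice is uncertainty-based selection: measure next the samples on which several surrogate models disagree most. The sketch below is a minimal illustration under that assumption; the function name, sample IDs, and predicted values are hypothetical, and it presumes predictions from an ensemble of models are already available for each unmeasured sample.

```python
from statistics import pvariance


def select_most_informative(predictions: dict[str, list[float]], k: int) -> list[str]:
    """Return the k sample IDs whose ensemble predictions disagree most.

    `predictions` maps a sample ID to the property values predicted for it by
    each ensemble member. Higher variance means more to learn by measuring it.
    """
    ranked = sorted(predictions, key=lambda sid: pvariance(predictions[sid]), reverse=True)
    return ranked[:k]


# Hypothetical predictions from a three-model ensemble for four unmeasured samples
candidates = {
    "S1": [0.50, 0.52, 0.51],  # models agree: little to gain from measuring
    "S2": [0.20, 0.80, 0.45],  # strong disagreement: measure first
    "S3": [0.30, 0.35, 0.40],
    "S4": [0.90, 0.60, 0.75],
}
print(select_most_informative(candidates, k=2))  # ['S2', 'S4']
```

Variance across models is only one possible informativeness score; expected improvement or distance from already-measured samples are alternatives with the same structure.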

FAQ 3: What practical steps can laboratories take today to alleviate characterization bottlenecks?

Answer: Laboratories can implement these immediate improvements:

- Prioritize Characterization Planning: During experimental design, identify which characterization data is truly essential versus nice-to-have, applying risk-based approaches to focus resources [2].

- Implement Smart Automation: Combine rule-based automation for predictable tasks with AI augmentation where it fits, for example medical coding, where AI suggests terms that a human coder then reviews [2].

- Standardize Data Capture: Ensure consistent metadata collection and traceability. As emphasized by industry experts, "If AI is to mean anything, we need to capture more than results. Every condition and state must be recorded" [3].
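
One way to enforce consistent metadata capture is to refuse to store a result unless its run conditions are complete. This is a minimal sketch; the `MeasurementRecord` class and the required field names are hypothetical placeholders for whatever a given lab's protocols actually require.

```python
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone

# Hypothetical minimum set of run conditions; adapt to your own SOPs.
REQUIRED_CONDITIONS = ("instrument_id", "method_version", "temperature_c", "operator")


@dataclass
class MeasurementRecord:
    sample_id: str
    result: float
    conditions: dict
    recorded_at: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())

    def __post_init__(self) -> None:
        # Reject incomplete records at capture time instead of discovering gaps later
        missing = [key for key in REQUIRED_CONDITIONS if self.conditions.get(key) is None]
        if missing:
            raise ValueError(f"Sample {self.sample_id}: missing conditions {missing}")


record = MeasurementRecord(
    sample_id="S-001",
    result=12.7,
    conditions={"instrument_id": "XRD-2", "method_version": "1.3",
                "temperature_c": 25.0, "operator": "jd"},
)
print(asdict(record)["conditions"]["instrument_id"])  # XRD-2
```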

Quantitative Analysis of Characterization Workflows

The table below summarizes key metrics and improvement strategies for common characterization bottlenecks:

Table 1: Characterization Bottleneck Analysis and Mitigation Strategies

| Bottleneck Category | Impact Measurement | Current Solutions | Expected Efficiency Gain |
|---|---|---|---|
| Manual Sample Handling | Operator time 2-4 hours daily for repetitive tasks | Automated liquid handlers (e.g., Veya platform), ergonomic pipettes | 30-50% reduction in hands-on time; improved reproducibility [3] |
| Multi-Instrument Data Correlation | 40-60% time spent on data integration versus analysis | Multi-tool characterization workflows; standardized data protocols | 25-35% faster insight generation; improved data reliability [1] |
| Low-Value Characterization | 20-30% of characterization runs provide limited new information | Risk-based approaches; focused sampling strategies | 2-3x more relevant data per unit time [2] |
| Data Quality Issues | 15-25% rework rate due to metadata or quality problems | Systems like Labguru and Mosaic for sample management; automated quality control [3] | 40-60% reduction in repeated experiments [3] |

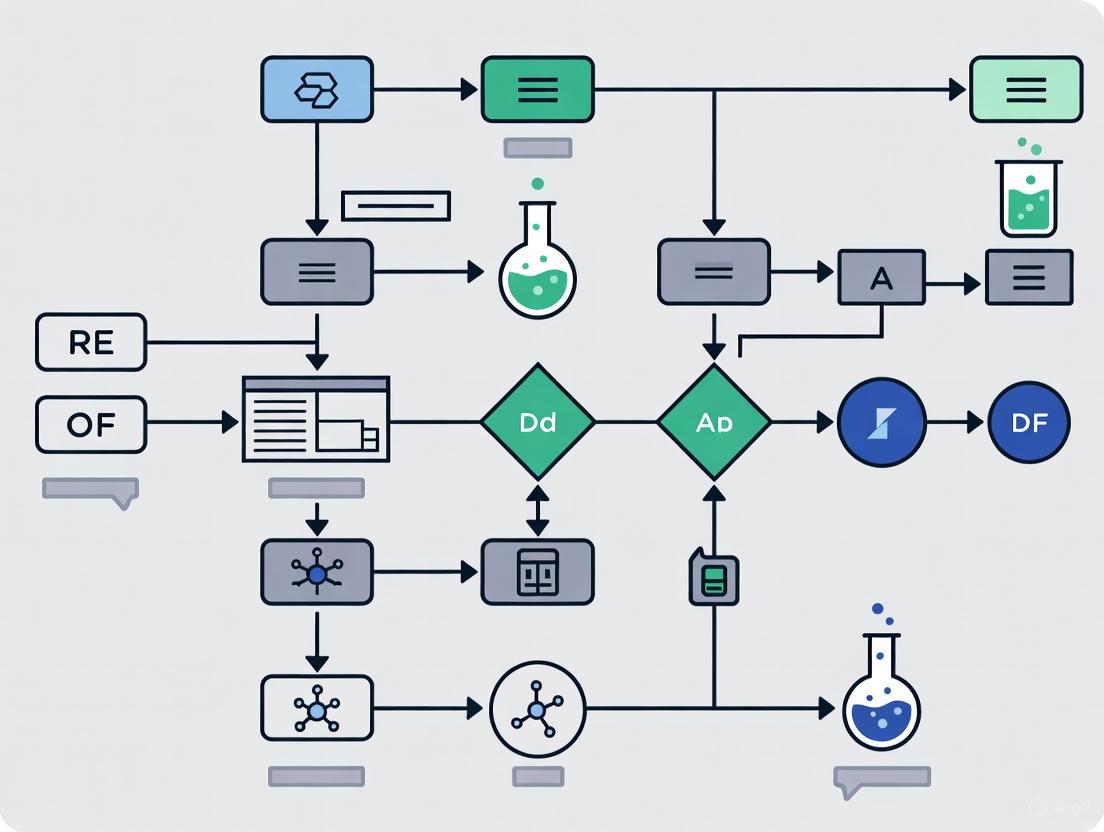

Workflow Visualization: Current State vs. Optimized Characterization

Diagram: Traditional vs. Optimized Characterization Workflow. The traditional workflow (top) shows sequential, disconnected steps creating bottlenecks, while the optimized workflow (bottom) demonstrates integrated, automated processes that accelerate insight generation.

Essential Research Reagent Solutions

Table 2: Key Research Reagents and Materials for Characterization Workflows

| Reagent/Material | Primary Function | Application Context | Impact on Workflow Efficiency |
|---|---|---|---|
| Automated Liquid Handlers | Precision liquid handling with minimal operator intervention | High-throughput screening; reagent dispensing | Reduces manual pipetting time by 70-80%; improves reproducibility [3] |
| 3D Cell Culture Platforms | Standardized human-relevant tissue models | Drug safety and efficacy testing | Provides more predictive data; reduces animal model dependency by 40-60% [3] |
| Integrated Protein Expression Systems | Rapid protein production from DNA to purified protein | Structural biology; drug target validation | Compresses weeks-long processes to under 48 hours; handles challenging proteins [3] |
| Multi-Modal Data Integration Platforms | Unified analysis of imaging, multi-omic and clinical data | Biomarker discovery; mechanism of action studies | Reduces data siloing; accelerates correlation of molecular features with disease [3] |
| Cartridge-Based Screening Systems | Parallel construct and condition screening | Protein optimization; expression testing | Enables 192 parallel conditions; standardizes previously variable processes [3] |

Strategic Implementation Framework

Successful characterization workflow optimization requires addressing three critical directions identified by experts:

Tool Acceleration: Focus on specific techniques like rapid structure-property mapping and fast compositional screening that offer the highest return on investment [1].

Intelligent Sampling: Implement strategic sampling protocols that maximize information yield while minimizing characterization time, recognizing that "characterization is often slower than synthesis" [1].

Multi-Tool Integration: Develop standardized protocols for combining outputs from complementary characterization tools, though this requires addressing vendor integration challenges and establishing common standards [1].

The transition from traditional, manual characterization workflows to optimized, integrated approaches is one of the most significant opportunities for reducing data collection timelines in research. By implementing smart automation, strategic sampling, and integrated data platforms, laboratories can turn characterization from a bottleneck into a competitive advantage.

Core Principles of Model-Informed Drug Development (MIDD) for Efficiency

Model-Informed Drug Development (MIDD) is a quantitative framework that uses modeling and simulation to inform decision-making throughout the drug development process. MIDD plays a pivotal role in drug discovery and development by providing quantitative predictions and data-driven insights that accelerate hypothesis testing, make the assessment of drug candidates more efficient, reduce costly late-stage failures, and speed market access for patients [4]. Evidence from drug development and regulatory approval shows that a well-implemented MIDD approach can significantly shorten development timelines, reduce discovery and trial costs, and improve quantitative risk estimates [4]. Strategic integration of MIDD is recognized as crucial for reversing the declining productivity of pharmaceutical research, often referred to as "Eroom's Law" [5].

Core Principles & Quantitative Impact

Foundational Principles

MIDD is defined as a "quantitative framework for prediction and extrapolation, centered on knowledge and inference generated from integrated models of compound, mechanism and disease level data and aimed at improving the quality, efficiency and cost effectiveness of decision making" [6]. Several core principles form the foundation of MIDD:

Fit-for-Purpose Implementation: MIDD tools must be well aligned with the "Question of Interest" (QOI), the "Context of Use" (COU), "Model Evaluation", and "the Influence and Risk of Model" when presenting the totality of MIDD evidence [4]. A model or method is not fit-for-purpose when it fails to define the COU, relies on poor-quality data, or lacks proper model verification, calibration, and validation [4].

Strategic Integration: MIDD should be strategically integrated throughout the five main stages of drug development: discovery, preclinical research, clinical research, regulatory review, and post-market monitoring [4].

Evidence-Based Decision Making: MIDD "informs" decisions rather than serving as their sole basis, providing quantitative support for key development choices while considering the totality of evidence [6].

Regulatory Harmonization: The International Council for Harmonisation (ICH) has expanded its guidance to cover MIDD through the M15 general guideline, which aims to standardize MIDD practices across countries and regions [4] [7].

Demonstrated Efficiency Gains

The business case for MIDD adoption has been established within the pharmaceutical industry, with documented significant efficiency improvements and cost savings [6].

Table 1: Quantitative Impact of MIDD on Drug Development Efficiency

| Metric | Impact | Source |
|---|---|---|
| Development Timeline Savings | ~10 months per program | [5] |
| Cost Savings | ~$5 million per program | [5] |
| Clinical Trial Budget Reduction | $100 million annually (Pfizer) | [6] |
| Cost Savings from Decision-Making Impact | $0.5 billion (Merck & Co/MSD) | [6] |
| Proof of Mechanism Success | 2.5x increase (AstraZeneca) | [8] |

MIDD Troubleshooting Guide: Common Challenges & Solutions

Frequently Asked Questions

Q: Our team is new to MIDD. Which modeling approach should we start with for our small molecule oncology program?

A: Begin with physiologically-based pharmacokinetic (PBPK) modeling for first-in-human dosing predictions and drug-drug interactions. For later stage development, implement population PK (PopPK) and exposure-response modeling to understand variability and dose-response relationships [8]. The "fit-for-purpose" principle dictates that the tool must match your specific question of interest and stage of development [4].

Q: How can we justify using MIDD to replace certain clinical studies, particularly for special populations?

A: Regulatory agencies increasingly accept robust MIDD approaches to support waivers for certain clinical studies. For special populations, PBPK modeling has become a standard approach to predict pharmacokinetics in unstudied populations such as pediatric, pregnant, and lactating populations, and those with renal or hepatic impairment [8]. Document your model validation thoroughly and reference relevant FDA and ICH guidance, including the ICH M15 guideline [7].

Q: We have very limited patient data for our First-in-Human trial. How can MIDD help accelerate development?

A: Apply model-based dose prediction strategies, including toxicokinetic PK, allometric scaling, QSP and semi-mechanistic PK/PD modeling [4]. These approaches help determine the starting dose and subsequent dose escalation in human trials even with limited data. The key is using all available nonclinical data effectively through quantitative approaches [4].
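
As an illustration of the allometric scaling step only, the sketch below scales clearance from a single preclinical species with the commonly used fixed exponent of 0.75, then back-calculates the dose that would reach a target exposure. The rat clearance, body weights, target AUC, and complete bioavailability (F = 1) are all hypothetical assumptions, not values from any program.

```python
def scale_clearance(cl_animal_l_per_h: float, bw_animal_kg: float,
                    bw_human_kg: float = 70.0, exponent: float = 0.75) -> float:
    """Single-species allometric scaling: CL_h = CL_a * (BW_h / BW_a) ** exponent.

    The fixed 0.75 exponent is an assumption; multi-species fits estimate it.
    """
    return cl_animal_l_per_h * (bw_human_kg / bw_animal_kg) ** exponent


# Hypothetical rat data: CL = 0.5 L/h in a 0.25 kg animal
cl_human = scale_clearance(0.5, 0.25)

# AUC = F * Dose / CL, so Dose = AUC * CL / F; F = 1 is assumed here
target_auc = 10.0  # mg*h/L, hypothetical exposure target
dose_mg = target_auc * cl_human / 1.0
print(f"predicted human CL {cl_human:.1f} L/h; dose for target AUC {dose_mg:.0f} mg")
```

In practice, predictions from several scaling methods are usually compared, and the selected starting dose also accounts for safety margins.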

Q: Why are certain MIDD approaches needed for one drug product but not another?

A: The choice of MIDD approaches depends on multiple factors including the drug's modality, mechanism of action, therapeutic area, and specific development questions. For example, quantitative systems pharmacology (QSP) is particularly valuable for new modalities and combination therapies, while PBPK is standard for small molecules where drug-drug interactions are a concern [8].

Q: How can we gain organizational acceptance for MIDD approaches when facing resistance?

A: Demonstrate value through pilot projects with clear success metrics. Share case studies showing impact, such as how MIDD has been shown to increase the success rates of new drug approvals by offering a structured, data-driven framework for evaluating safety and efficacy [4]. Build cross-functional teams including pharmacometricians, pharmacologists, statisticians, clinicians, and regulatory colleagues [4].

Technical Implementation Challenges

Challenge: Model fails to define Context of Use (COU) adequately

Solution: Clearly document the COU during model planning stages. The COU should specify the specific role and purpose of the model, the decisions it will inform, and the boundaries of its application [4].

Challenge: Insufficient model evaluation or validation

Solution: Implement rigorous model evaluation procedures, including verification, calibration, and validation. Follow good practice recommendations for documentation to enhance credibility for regulatory submissions [6].

Challenge: Difficulty with multidisciplinary alignment on model assumptions

Solution: Facilitate collaborative team meetings early in model development to align on key assumptions. Use a "fit-for-purpose" framework to ensure model complexity matches the decision needs [4].

Experimental Protocols & Methodologies

Key MIDD Workflow

The following diagram illustrates the strategic MIDD workflow from problem identification through to decision support and regulatory application:

Common MIDD Methodologies

Population PK (PopPK) Modeling Protocol:

- Data Collection: Collect sparse PK samples from clinical trials (typically 2-8 samples per subject) [8]

- Base Model Development: Develop structural model using nonlinear mixed-effects modeling

- Covariate Analysis: Identify influential patient factors (age, weight, organ function, etc.)

- Model Validation: Use visual predictive checks and bootstrap methods

- Simulation: Generate exposure distributions for target populations
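
The final simulation step of this protocol can be sketched as follows. This is a minimal illustration, not a fitted model: it assumes a typical clearance of 5 L/h, allometric weight scaling with exponent 0.75, log-normal between-subject variability on clearance, and complete bioavailability, so that AUC = Dose / CL. All parameter values and the weight range are hypothetical; estimating them from sparse data would be done in a nonlinear mixed-effects tool and is not shown.

```python
import math
import random
from statistics import median, quantiles


def simulate_auc(dose_mg: float, weights_kg: list[float], cl_typical_l_per_h: float = 5.0,
                 omega_cl: float = 0.3, seed: int = 1) -> list[float]:
    """Simulate AUC (mg*h/L) per virtual subject.

    CL_i = CL_typical * (WT_i / 70) ** 0.75 * exp(eta_i), eta_i ~ N(0, omega_cl ** 2)
    """
    rng = random.Random(seed)
    aucs = []
    for wt in weights_kg:
        eta = rng.gauss(0.0, omega_cl)  # between-subject random effect on CL
        cl_i = cl_typical_l_per_h * (wt / 70.0) ** 0.75 * math.exp(eta)
        aucs.append(dose_mg / cl_i)
    return aucs


rng = random.Random(42)
weights = [rng.uniform(50, 110) for _ in range(5000)]  # hypothetical target population
aucs = simulate_auc(100.0, weights)
p5, *_, p95 = quantiles(aucs, n=20)  # 5th and 95th percentiles
print(f"median AUC {median(aucs):.1f} mg*h/L, 90% interval {p5:.1f}-{p95:.1f}")
```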

PBPK Model Development Protocol:

- System Parameters: Define physiological parameters for relevant populations

- Drug Parameters: Incorporate drug-specific properties (lipophilicity, permeability, etc.)

- Model Verification: Verify against in vitro and in vivo data

- Application: Predict drug-drug interactions, special population PK, or first-in-human dosing [8]
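
PBPK models are assembled from organ-level building blocks that combine system parameters with drug parameters. One widely used block is the well-stirred liver model for hepatic clearance, CL_H = Q_H · fu · CL_int / (Q_H + fu · CL_int). The sketch below is a minimal illustration of that single block, not a full PBPK model; the ~90 L/h hepatic blood flow and the drug values are illustrative assumptions, and blood-to-plasma corrections are ignored.

```python
def well_stirred_hepatic_clearance(q_h_l_per_h: float, fu_blood: float,
                                   cl_int_l_per_h: float) -> float:
    """Hepatic blood clearance from the well-stirred liver model.

    q_h_l_per_h: hepatic blood flow (system parameter)
    fu_blood: unbound fraction in blood (drug parameter)
    cl_int_l_per_h: intrinsic clearance, e.g. scaled from in vitro data (drug parameter)
    """
    unbound_intrinsic = fu_blood * cl_int_l_per_h
    return q_h_l_per_h * unbound_intrinsic / (q_h_l_per_h + unbound_intrinsic)


Q_H = 90.0  # approximate adult hepatic blood flow in L/h (illustrative)
for cl_int in (10.0, 100.0, 1000.0):
    cl_h = well_stirred_hepatic_clearance(Q_H, fu_blood=0.1, cl_int_l_per_h=cl_int)
    print(f"CLint {cl_int:>6.0f} L/h -> CL_H {cl_h:5.1f} L/h, extraction {cl_h / Q_H:.2f}")
```

As intrinsic clearance grows, predicted hepatic clearance approaches hepatic blood flow, which is why the same drug parameters can give very different results in populations with altered physiology.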

Model-Based Meta-Analysis (MBMA) Protocol:

- Data Curation: Systematically collect clinical trial data from literature and databases

- Model Structure: Develop model relating treatment effects to covariates

- Validation: Compare predictions to actual clinical outcomes

- Application: Support trial design optimization and comparator analysis [8]
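
Full MBMA models relate treatment effects to dose, time, and covariates across trials. As a deliberately simplified illustration of the pooling idea only, the sketch below combines per-trial effect estimates by fixed-effect inverse-variance weighting; it is not an MBMA model, and the trial values are hypothetical.

```python
import math


def pool_fixed_effect(effects: list[float], std_errors: list[float]) -> tuple[float, float]:
    """Inverse-variance weighted pooled effect and its standard error."""
    weights = [1.0 / se**2 for se in std_errors]
    pooled = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    pooled_se = math.sqrt(1.0 / sum(weights))
    return pooled, pooled_se


# Hypothetical placebo-adjusted effects (e.g. change in a biomarker) from three trials
effects = [-1.2, -0.8, -1.0]
std_errors = [0.30, 0.20, 0.25]
est, se = pool_fixed_effect(effects, std_errors)
print(f"pooled effect {est:.2f} (95% CI {est - 1.96 * se:.2f} to {est + 1.96 * se:.2f})")
```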

Research Reagent Solutions: Essential MIDD Tools

Table 2: Key Methodologies and Tools in Model-Informed Drug Development

| Tool/Methodology | Primary Function | Typical Application |
|---|---|---|
| Quantitative Systems Pharmacology (QSP) | Integrates systems biology with pharmacology to generate mechanism-based predictions | New modalities, dose selection, combination therapy, target selection [4] [8] |
| Physiologically Based Pharmacokinetic (PBPK) Modeling | Mechanistic modeling simulating drug movement through organs and tissues | Drug-drug interactions, special populations, formulation development [4] [8] |
| Population PK (PopPK) | Analyzes variability in drug concentrations between individuals | Dose regimen optimization, covariate effect characterization [8] |
| Exposure-Response (ER) Analysis | Characterizes relationship between drug exposure and effectiveness or adverse effects | Dose selection, benefit-risk assessment [4] |
| Model-Based Meta-Analysis (MBMA) | Indirect comparison of treatments using highly curated clinical trial data | Comparator analysis, trial design optimization, external control arms [8] |
| Artificial Intelligence/Machine Learning | Analyzes large-scale biological, chemical, and clinical datasets | Drug discovery, ADME property prediction, dosing optimization [4] |
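
One common structural choice for exposure-response analysis is the Emax model, E = E0 + Emax · C / (EC50 + C). The sketch below is a minimal illustration, assuming a Hill coefficient of 1 and hypothetical parameter values, of how a fitted Emax model can feed dose selection by finding the exposure that gives a chosen fraction of the maximal drug effect.

```python
def emax_effect(conc: float, e0: float, emax: float, ec50: float) -> float:
    """Predicted effect at exposure `conc` under a simple (Hill = 1) Emax model."""
    return e0 + emax * conc / (ec50 + conc)


def exposure_for_fraction(fraction: float, ec50: float) -> float:
    """Exposure giving `fraction` of Emax.

    Solving fraction = C / (EC50 + C) for C gives C = fraction * EC50 / (1 - fraction).
    """
    if not 0.0 < fraction < 1.0:
        raise ValueError("fraction must be strictly between 0 and 1")
    return fraction * ec50 / (1.0 - fraction)


# Hypothetical parameters: baseline 2, maximal drug effect 10, EC50 = 4 mg/L
c90 = exposure_for_fraction(0.9, ec50=4.0)
print(f"C90 = {c90:.1f} mg/L, predicted effect {emax_effect(c90, 2.0, 10.0, 4.0):.1f}")
```

The C90 exposure can then be translated into a dose with a PK model such as the PopPK simulation described earlier.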

Strategic Implementation for Maximum Efficiency

MIDD Application Across Development Stages

The strategic application of MIDD across all development phases is essential for maximizing efficiency gains [4]:

- Discovery Stage: Use QSP and quantitative structure-activity relationship (QSAR) modeling for target identification and lead compound optimization [4]

- Preclinical Stage: Apply PBPK modeling and semi-mechanistic PK/PD to improve prediction accuracy and support first-in-human studies [4]

- Clinical Development: Implement PopPK, ER analysis, and clinical trial simulation to optimize trial design and dosage selection [4]

- Regulatory Submission: Use model-integrated evidence to support label claims and dosing recommendations [7]

- Post-Market Stage: Apply models to support label updates and life-cycle management [4]

Relationship Between MIDD Approaches

The following diagram shows how different MIDD methodologies interact and support various aspects of drug development:

Future Directions & Emerging Applications

MIDD continues to evolve with several emerging applications that promise further efficiency gains:

- Democratization of MIDD: Making modeling technology accessible to non-modelers through improved user interfaces and AI integration [5]

- Reduction of Animal Testing: Using PBPK and QSP modeling as alternatives to animal testing through approaches like Certara's Non-Animal Navigator solution [5]

- AI Integration: Applying artificial intelligence to automate model definition, creation, and validation, particularly for unstructured data analysis [5]

- Regulatory Advancement: Continued development of guidelines and frameworks, such as the ICH M15 guideline, to promote standardized assessment of MIDD evidence [7]

The implementation of MIDD approaches represents a fundamental shift in drug development methodology, moving from empirical testing to quantitative, predictive science. By strategically applying these tools throughout the development lifecycle, researchers can significantly reduce data collection time in characterization workflows while improving the quality and efficiency of drug development.

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q: My model fails to define the Context of Use (COU) and has poor data quality. Why is it not "Fit-for-Purpose"? A: A model is not Fit-for-Purpose when it fails to define the COU, lacks adequate data quality or quantity, or has insufficient model verification, calibration, and validation. Oversimplifying the model or unjustifiably adding complexity can also render it unsuitable for its intended question of interest (QOI) [4].

Q: Why might a machine learning model trained on one clinical scenario fail in a different setting? A: A machine learning model may not be Fit-for-Purpose if it is trained on a specific clinical scenario and then used to predict outcomes in a different clinical setting. This underscores the importance of aligning the model's development with its intended context of use and ensuring the training data is representative [4].

Q: How can I determine if my assay results are reliable for screening? A: The robustness of an assay is determined not just by the size of the assay window but also by the standard deviation of the data. The Z'-factor incorporates both these factors. Assays with a Z'-factor greater than 0.5 are generally considered suitable for screening. A large assay window with significant noise can have a lower Z'-factor than an assay with a small window but little noise [9].
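
A worked comparison with hypothetical control statistics illustrates the last point:

```latex
Z' = 1 - \frac{3(\sigma_{high} + \sigma_{low})}{|\mu_{high} - \mu_{low}|}

\text{Assay A: } |\mu_{high} - \mu_{low}| = 10,\; \sigma_{high} = \sigma_{low} = 1
\;\Rightarrow\; Z' = 1 - \frac{3(1 + 1)}{10} = 0.4

\text{Assay B: } |\mu_{high} - \mu_{low}| = 3,\; \sigma_{high} = \sigma_{low} = 0.1
\;\Rightarrow\; Z' = 1 - \frac{3(0.1 + 0.1)}{3} = 0.8
```

Assay B clears the 0.5 threshold even though its window is less than a third the size of Assay A's.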

Q: What is the most common reason for a complete lack of assay window in a TR-FRET assay? A: The most common reason is an improperly configured instrument. It is critical to use the exact emission filters recommended for your specific instrument model, as the filter choice can determine the success or failure of the assay [9].

Common Issues and Expert Recommendations

| Problem Scenario | Expert Recommendation |
|---|---|
| No assay window in TR-FRET | Verify instrument setup and ensure the use of precisely recommended emission filters [9]. |
| Differences in EC50/IC50 between labs | Investigate differences in prepared stock solutions, which are a primary cause of such discrepancies [9]. |
| Lack of cellular activity in cell-based assay | The compound may not cross the cell membrane, may be actively pumped out, or may be targeting an inactive, upstream, or downstream kinase [9]. |
| Model not Fit-for-Purpose | Ensure the model clearly defines the Context of Use (COU), uses high-quality data, and undergoes proper verification and validation [4]. |

Fit-for-Purpose Modeling Tools and Applications

Model-Informed Drug Development (MIDD) employs a suite of quantitative tools that should be selected based on the specific Question of Interest (QOI) at each stage of development [4]. The table below summarizes key MIDD methodologies and their primary utilities.

Key MIDD Methodologies and Utilities

| Modeling Tool | Description | Primary Utility in Drug Development |
|---|---|---|
| Quantitative Structure-Activity Relationship (QSAR) | Computational modeling to predict a compound's biological activity from its chemical structure [4]. | Early-stage lead compound optimization and target identification [4]. |
| Physiologically Based Pharmacokinetic (PBPK) | Mechanistic modeling to understand the interplay between physiology and drug product quality [4]. | Predicting drug-drug interactions and extrapolating to special populations [4]. |
| Population PK (PPK) & Exposure-Response (ER) | Models that explain variability in drug exposure among individuals and analyze the relationship between exposure and effect [4]. | Optimizing dosage regimens and informing clinical trial design [4]. |
| Quantitative Systems Pharmacology (QSP) | Integrative, mechanism-based modeling combining systems biology and pharmacology [4]. | Generating hypotheses on drug behavior and treatment effects across biological pathways [4]. |
| AI/ML in MIDD | Using machine learning to analyze large-scale datasets for prediction and decision-making [4]. | Enhancing drug discovery, predicting ADME properties, and optimizing dosing strategies [4]. |

Experimental Protocols for Key MIDD Workflows

Protocol 1: Assay Development and Validation for Preclinical Screening

Objective: To establish a robust and reliable assay for screening compound activity, ensuring data quality is sufficient for decision-making.

- Instrument Setup: Confirm the instrument (e.g., microplate reader) is configured according to manufacturer guides. For TR-FRET assays, this is critical—verify the exact excitation and emission filters are installed [9].

- Control Preparation: Prepare control samples representing the minimum and maximum assay signal (e.g., 0% and 100% phosphorylation controls for a kinase assay) [9].

- Data Collection: Run the assay with controls and test compounds. Collect signal data (Relative Fluorescence Units - RFU) for both donor and acceptor channels where applicable [9].

- Ratiometric Analysis: For TR-FRET data, calculate an emission ratio by dividing the acceptor signal RFU by the donor signal RFU. This controls for pipetting variances and reagent lot-to-lot variability [9].

- Assay Quality Assessment: Calculate the Z'-factor using the mean (μ) and standard deviation (σ) of the high and low controls:

  - Formula: $Z' = 1 - \frac{3(\sigma_{high} + \sigma_{low})}{|\mu_{high} - \mu_{low}|}$

  - Interpretation: A Z'-factor > 0.5 indicates an assay robust enough for screening [9].
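
The ratiometric and Z'-factor steps above can be scripted directly from plate-reader values. This is a minimal sketch assuming replicate acceptor and donor RFU readings for the maximum-signal and minimum-signal controls are already available as lists; the numbers are hypothetical, no particular instrument export format is assumed, and sample standard deviations are used.

```python
from statistics import mean, stdev


def emission_ratios(acceptor_rfu: list[float], donor_rfu: list[float]) -> list[float]:
    """Acceptor/donor emission ratio for each well."""
    if len(acceptor_rfu) != len(donor_rfu):
        raise ValueError("acceptor and donor readings must be paired per well")
    return [a / d for a, d in zip(acceptor_rfu, donor_rfu)]


def z_prime(high: list[float], low: list[float]) -> float:
    """Z' = 1 - 3 * (sd_high + sd_low) / |mean_high - mean_low|."""
    return 1.0 - 3.0 * (stdev(high) + stdev(low)) / abs(mean(high) - mean(low))


# Hypothetical control wells (RFU), four replicates each
high = emission_ratios([5200, 5000, 5400, 5100], [20000, 19500, 20500, 20000])
low = emission_ratios([1100, 1050, 1150, 1000], [20000, 20200, 19800, 20100])
zp = z_prime(high, low)
print(f"Z' = {zp:.2f}: {'suitable' if zp > 0.5 else 'not suitable'} for screening")
```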

Protocol 2: Implementing a Fit-for-Purpose MIDD Strategy

Objective: To strategically select and apply a modeling tool to answer a specific QOI, thereby reducing development time and resources.

- Define the Question of Interest (QOI): Precisely articulate the scientific or clinical question needing resolution (e.g., "What is the predicted human efficacious dose?") [4].

- Establish the Context of Use (COU): Clearly document the specific application of the model, including the decisions it will inform and the stakeholders involved [4].

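- Select the Modeling Tool: Align the QOI and COU with the appropriate quantitative methodology. For example, PBPK suits drug-drug interaction and special-population questions, PopPK and ER analysis suit dosage-regimen optimization, and QSP suits mechanism-based questions about drug behavior across biological pathways [4].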

- Model Evaluation and Validation: Perform verification, calibration, and validation based on the predefined COU to ensure the model is reliable for its purpose [4].

- Incorporate into Overall Strategy: Integrate the model's insights with other scientific evidence and regulatory guidance to support development decisions and regulatory interactions [4].
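
The fit-for-purpose criteria can also be tracked programmatically so that gaps are visible before a model is used for a decision. This is a minimal sketch; the `ModelPlan` class, its fields, and the check names are hypothetical and not drawn from any guideline. It encodes the conditions named earlier: a defined QOI and COU, adequate data, and completed verification, calibration, and validation.

```python
from dataclasses import dataclass, field


@dataclass
class ModelPlan:
    """Hypothetical record of a fit-for-purpose MIDD plan."""
    question_of_interest: str
    context_of_use: str
    tool: str
    data_adequate: bool = False
    completed_checks: set[str] = field(default_factory=set)

    # Class-level constant (no annotation, so not a dataclass field)
    REQUIRED_CHECKS = frozenset({"verification", "calibration", "validation"})

    def gaps(self) -> list[str]:
        """List the reasons the model is not (yet) fit for purpose."""
        problems = []
        if not self.question_of_interest.strip():
            problems.append("question of interest not defined")
        if not self.context_of_use.strip():
            problems.append("context of use not defined")
        if not self.data_adequate:
            problems.append("data quality/quantity not confirmed")
        for check in sorted(self.REQUIRED_CHECKS - self.completed_checks):
            problems.append(f"{check} not completed")
        return problems


plan = ModelPlan(
    question_of_interest="What is the predicted human efficacious dose?",
    context_of_use="",
    tool="PBPK",
    data_adequate=True,
    completed_checks={"verification"},
)
for gap in plan.gaps():
    print("-", gap)
```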

Workflow and Relationship Visualizations

DOT Scripts for Diagram Generation
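
The fit-for-purpose workflow from Protocol 2 can be expressed as a Graphviz DOT graph. This is a minimal sketch that only builds the DOT text; the step labels paraphrase Protocol 2, the node names are arbitrary, and rendering (for example with Graphviz's `dot -Tpng`) is assumed to happen separately.

```python
STEPS = [
    "Define Question of Interest (QOI)",
    "Establish Context of Use (COU)",
    "Select modeling tool",
    "Evaluate and validate model",
    "Integrate into development strategy",
]


def workflow_to_dot(steps: list[str], name: str = "midd_workflow") -> str:
    """Return a left-to-right DOT digraph linking the steps in order."""
    lines = [f"digraph {name} {{", "  rankdir=LR;", "  node [shape=box];"]
    for i, label in enumerate(steps):
        escaped = label.replace('"', '\\"')  # keep labels valid DOT strings
        lines.append(f'  s{i} [label="{escaped}"];')
    for i in range(len(steps) - 1):
        lines.append(f"  s{i} -> s{i + 1};")
    lines.append("}")
    return "\n".join(lines)


print(workflow_to_dot(STEPS))
```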

The Scientist's Toolkit: Research Reagent Solutions

Essential Materials for Featured Experiments

| Item | Function |
|---|---|
| TR-FRET Assay Kits | Provide validated reagents for studying molecular interactions (e.g., kinase activity) using Time-Resolved Fluorescence Resonance Energy Transfer, which reduces background noise [9]. |
| LanthaScreen Eu/Tb Donors | Lanthanide-based fluorescent donors used in TR-FRET assays. Their long fluorescence lifetime allows for time-gated detection, enhancing signal-to-noise ratio [9]. |
| Microplate Reader with TR-FRET Capability | An instrument capable of exciting samples and measuring fluorescence emission at specific wavelengths and with time-gated detection, essential for TR-FRET assays [9]. |
| Development Reagent | In assays like Z'-LYTE, this is an enzyme mixture that selectively cleaves non-phosphorylated peptide substrates, generating the assay's fluorescent signal [9]. |
| PBPK/QSP Software Platforms | Computational tools that enable the construction and simulation of mechanistic models to predict human pharmacokinetics and pharmacodynamics before clinical trials [4]. |

The Role of AI and Machine Learning in Foundational Data Exploration

Frequently Asked Questions (FAQs)

Q1: What are the primary benefits of using AI for data exploration in research? AI significantly accelerates the initial data exploration phase, which is often the most time-consuming part of research. Key benefits include [10]:

- Speed and Efficiency: AI can analyze large volumes of data far more quickly than humans, enabling real-time insights and high-speed data processing [10].

- Cost Reduction: Automating repetitive tasks like data cleaning can lead to substantial operational cost savings. One report indicates 54% of businesses implementing AI achieved cost savings [10].

- Enhanced Innovation: A global survey found that 64% of organizations report that AI is enabling greater innovation, allowing researchers to focus on higher-level analysis and hypothesis generation [11].

Q2: How can AI help reduce data collection time in characterization workflows? AI reduces data collection time through automation and intelligent forecasting [10] [12]:

- Automated Data Collection: AI tools can automate data gathering from various sources, including CRMs, web tracking, and public data repositories, without manual coding or web scraping [10].

- Predictive Analytics: By analyzing historical data, AI can forecast necessary data points and optimize collection protocols, preventing the gathering of redundant information. In drug development, this is critical as trials have become vastly more complex, collecting 283% more data points in 2020 than in 2010 [12].

Q3: What are the most common technical challenges when integrating AI into existing research workflows? Researchers often face the following hurdles [11] [10] [13]:

- Data Quality and Bias: The principle "garbage in, garbage out" applies strongly to AI. If the source data is poorly formatted, contains errors, or has inherent biases, the AI's outputs will be unreliable [10].

- Workflow Integration: Most organizations are still in the early stages of scaling AI. Successfully capturing value requires fundamentally redesigning existing workflows to embed AI, not just applying it in isolated pockets [11].

- Unstructured Data Management: Generative AI often works with unstructured data (text, images), which many organizations are not equipped to manage effectively. Getting this data into a usable shape requires significant human curation and effort [13].

Q4: Are AI tools a threat to the roles of data analysts and scientists? No. Rather than replacing experts, AI transforms their roles. With an estimated 402 million terabytes of data generated daily, the need for skilled professionals to interpret, validate, and extract value from data is greater than ever. AI handles time-consuming, repetitive tasks (such as data cleaning, which can consume 70-90% of an analyst's time), freeing experts to solve more complex problems and drive innovation [10].

Troubleshooting Guides

Problem 1: Poor Quality or Biased AI Outputs

- Symptoms: Model predictions are inaccurate, nonsensical, or reflect societal biases present in the training data.

- Possible Causes & Solutions:

- Cause: Underlying training data contains errors, missing values, or outliers.

- Solution: Implement rigorous, AI-powered data cleaning procedures to identify outliers, handle empty values, and normalize data before analysis [10].

- Cause: Data lacks diversity or contains historical biases.

Problem 2: Difficulty Demonstrating Quantitative Value from AI Initiatives

- Symptoms: Inability to connect AI projects to measurable business or research outcomes, such as reduced cycle times or cost savings.

- Possible Causes & Solutions:

- Cause: No established metrics or controlled experiments to measure impact.

- Solution: Move beyond pilot phases and establish Key Performance Indicators (KPIs) for AI solutions. Use controlled experiments; for example, have one group use AI for a task while a control group does not, then compare outcomes like productivity or error rates [13].

- Cause: AI is used in isolation without redesigning the broader workflow.

- Solution: Focus on enterprise-level scaling and intentional workflow redesign. AI high performers are nearly three times more likely to have redesigned individual workflows, which is a key factor for achieving meaningful business impact [11].

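The comparison step of such a controlled experiment is sketched below using only the Python standard library. The task-completion times are made-up placeholders. Welch's t statistic is one reasonable choice of test; the source does not prescribe it.

```python
import math
import statistics


def welch_t(a, b):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom for two independent samples."""
    ma, mb = statistics.mean(a), statistics.mean(b)
    va, vb = statistics.variance(a), statistics.variance(b)  # sample variances (n - 1)
    na, nb = len(a), len(b)
    se2 = va / na + vb / nb
    t = (ma - mb) / math.sqrt(se2)
    df = se2 ** 2 / ((va / na) ** 2 / (na - 1) + (vb / nb) ** 2 / (nb - 1))
    return t, df


def compare_groups(ai_group, control_group):
    """Summarize a KPI where lower is better (e.g., hours per analysis) for AI vs. control."""
    t, df = welch_t(ai_group, control_group)
    reduction = 1 - statistics.mean(ai_group) / statistics.mean(control_group)
    return {"relative_reduction": reduction, "t": t, "df": df}


# Hypothetical hours needed by each analyst to complete the same task.
ai_hours = [2.1, 2.4, 1.9, 2.2, 2.0, 2.3]
control_hours = [3.0, 3.4, 2.8, 3.1, 3.3, 2.9]
print(compare_groups(ai_hours, control_hours))
```

A strongly negative t means AI-assisted times are lower. To turn t and df into a p-value you need the t distribution. For example, `scipy.stats.ttest_ind(a, b, equal_var=False)` performs the same Welch test directly.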

Problem 3: Data Security and Privacy Concerns

- Symptoms: Risk of exposing sensitive or proprietary research data when using third-party AI tools.

- Possible Causes & Solutions:

- Cause: Using cloud-based GenAI tools that may use input data for model training.

Quantitative Data on AI Benefits and Challenges

Table 1: Reported Impact of AI Adoption in Organizations [11]

| Impact Category | Percentage of Respondents |
|---|---|
| Enablement of Innovation | 64% |
| Improvement in Customer Satisfaction | ~48% |
| Improvement in Competitive Differentiation | ~48% |
| Enterprise-level EBIT Impact | 39% |
| Organizations Scaling AI (AI High Performers) | ~6% |

Table 2: Common AI Data Analysis Techniques and Applications [15]

| Technique | Category | Primary Research Application |
|---|---|---|
| Data Cleaning & Preparation | Foundational | Identifies outliers and handles missing data; targets the data-preparation work that can consume 70-90% of analyst time [10]. |
| Machine Learning Algorithms | Advanced | Extracts patterns or makes predictions on large datasets for classification or forecasting. |
| Natural Language Processing (NLP) | Advanced | Derives insights from unstructured text data (e.g., scientific literature, patient reports). |
| Predictive Analytics | Advanced | Forecasts future outcomes based on historical data patterns (e.g., inventory forecasting, patient recruitment). |
| Cluster Analysis | Advanced | Identifies natural groupings or segments within data for patient stratification or biomarker discovery. |

Experimental Protocol: Implementing an AI-Driven Data Exploration Workflow

Objective: To establish a standardized, AI-enhanced protocol for the initial exploration of a new dataset, aiming to reduce the time from data collection to actionable insights.

Materials & Reagents:

- Research Reagent Solutions:

- AI-Powered Data Cleaning Tool: Software that uses ML to automatically detect and correct data anomalies, missing values, and inconsistencies [10].

- Generative BI Tool: A conversational AI interface that allows researchers to ask questions of their data in plain language and receive summaries and insights without writing code [10].

- Python Environment with Libraries (e.g., Scikit-learn, Pandas): Provides access to a wide range of statistical and machine learning algorithms for custom analysis [15].

- Vector Database: A specialized database for handling embeddings of unstructured data (text, images), enabling efficient similarity search for GenAI applications [13].

Methodology:

Data Ingestion and Automated Profiling:

- Load the raw dataset from source systems.

- Execute an AI-powered profiling tool to generate a summary of data structure, data types, and a preliminary report on data quality issues (e.g., missing value percentage, potential outliers).
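The profiling step can be approximated without any AI tooling. The sketch below assumes the dataset is already loaded into pandas. It reports each column's type, the percentage of missing values, and a count of potential outliers under the common 1.5 × IQR rule. The column names and values are hypothetical.

```python
import numpy as np
import pandas as pd


def profile(df: pd.DataFrame) -> pd.DataFrame:
    """Per-column summary: dtype, % missing, and count of 1.5*IQR outliers (numeric columns only)."""
    rows = []
    for col in df.columns:
        s = df[col]
        n_outliers = 0
        if pd.api.types.is_numeric_dtype(s):
            q1, q3 = s.quantile(0.25), s.quantile(0.75)  # quantile() skips NaN
            iqr = q3 - q1
            mask = (s < q1 - 1.5 * iqr) | (s > q3 + 1.5 * iqr)  # NaN compares False
            n_outliers = int(mask.sum())
        rows.append({
            "column": col,
            "dtype": str(s.dtype),
            "missing_pct": round(100 * s.isna().mean(), 1),
            "potential_outliers": n_outliers,
        })
    return pd.DataFrame(rows).set_index("column")


# Hypothetical characterization data with one gap and one suspicious value.
data = pd.DataFrame({
    "sample_id": ["S1", "S2", "S3", "S4", "S5"],
    "purity_pct": [98.1, 97.9, np.nan, 98.3, 42.0],
    "peak_area": [1020, 998, 1011, 1005, 1017],
})
print(profile(data))
```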

AI-Assisted Data Cleaning and Validation:

- Use the data cleaning tool to automatically handle identified issues based on predefined rules (e.g., mean imputation for missing numerical data).

- Manually validate a sample of the corrections to ensure algorithm accuracy.
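A rule-based version of this cleaning step is sketched below with pandas. It applies mean imputation (the example rule named above) to the numeric columns and records every change in a log. It then draws a reproducible random sample of those changes for manual review. The 20% review fraction and the seed are illustrative assumptions.

```python
import numpy as np
import pandas as pd


def impute_with_audit(df: pd.DataFrame, numeric_cols, review_frac=0.2, seed=0):
    """Mean-impute numeric columns; return cleaned data, a change log, and a review sample."""
    cleaned = df.copy()
    changes = []
    for col in numeric_cols:
        fill = df[col].mean()  # pandas skips NaN, so this is the mean of observed values
        for idx in df[df[col].isna()].index:
            changes.append({"row": idx, "column": col, "imputed_value": fill})
        cleaned[col] = df[col].fillna(fill)
    log = pd.DataFrame(changes, columns=["row", "column", "imputed_value"])
    # Review a fraction of the changes, but at least one whenever anything was imputed.
    n_review = max(1, round(review_frac * len(log))) if len(log) else 0
    review = log.sample(n=n_review, random_state=seed)
    return cleaned, log, review


# Hypothetical assay table with gaps.
data = pd.DataFrame({"purity_pct": [98.1, np.nan, 98.3, np.nan],
                     "yield_mg": [12.0, 11.5, np.nan, 12.5]})
cleaned, log, review = impute_with_audit(data, ["purity_pct", "yield_mg"])
print(cleaned)
print(review)  # rows to check by hand against the instrument records
```

Mean imputation shrinks variance and can distort correlations. That is why both the change log and the manual spot check are worth keeping.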

Exploratory Data Analysis (EDA) via Generative BI:

- Input the cleaned dataset into the Generative BI tool.

- Use natural language prompts to query the data. Example prompts:

- "Show the distribution of [key variable]."

- "Identify the top 5 correlations between [variable set]."

- "Are there any noticeable clusters or segments in the data based on [dimensions]?"

Hypothesis Generation and Testing:

- Based on the initial insights, form specific hypotheses.

- Use the Python environment to run more sophisticated statistical tests (e.g., hypothesis testing, regression analysis) to validate these hypotheses [15].
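As one concrete example of such a test, the standard-library sketch below fits an ordinary least-squares slope. It then estimates the slope's p-value with a permutation test, which avoids distributional assumptions on small datasets. The variables (annealing temperature versus measured crystallinity) and their values are hypothetical. In practice, scipy.stats or statsmodels provide the parametric equivalents.

```python
import random
import statistics


def ols_slope(x, y):
    """Ordinary least-squares slope of y on x."""
    mx, my = statistics.mean(x), statistics.mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    return sxy / sxx


def permutation_pvalue(x, y, n_perm=5000, seed=1):
    """Two-sided p-value for H0: slope == 0, estimated by shuffling y relative to x."""
    rng = random.Random(seed)
    observed = abs(ols_slope(x, y))
    y_perm = list(y)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(y_perm)
        if abs(ols_slope(x, y_perm)) >= observed:
            hits += 1
    return (hits + 1) / (n_perm + 1)  # +1 correction keeps the estimate above zero


temperature_c = [300, 320, 340, 360, 380, 400, 420, 440]
crystallinity_pct = [41, 44, 47, 52, 53, 58, 61, 63]
print(ols_slope(temperature_c, crystallinity_pct))  # → 0.1625
print(permutation_pvalue(temperature_c, crystallinity_pct))
```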

Visualization and Reporting:

- Use the AI tool's built-in capabilities to generate interactive charts and graphs for the final report.

- Document all steps, including prompts used and decisions made, for reproducibility.

Workflow Visualization

AI-Enhanced Data Exploration Workflow

From Theory to Therapy: Implementing AI and Automated Workflows

Leveraging AI-Powered Analytics and Intelligent Dashboards for Real-Time Insights

Technical Support Center

This technical support center provides troubleshooting guides and FAQs to help researchers resolve common issues with AI-powered analytics and intelligent dashboards, specifically within the context of reducing data collection time in characterization workflows.

Troubleshooting Guides

Dashboard and Analytics Performance Issues

Problem: My analytical dashboard is running very slowly or timing out when processing characterization data.

Diagnosis and Solution: This is a common problem that can originate from the client side, server side, or data layer. Follow these steps to identify and resolve the bottleneck [16].

Identify the Problem Source:

- Open your browser's Developer Tools (F12) and go to the Network tab.

- Reload the dashboard and observe the data requests (type fetch). If these requests take a long time to complete, the issue is server-side. If requests are fast but the dashboard is still slow to render, the issue is client-side [16].

Resolve Client-Side Issues:

- Reduce Visible Data: Limit the amount of data rendered in widgets by using features like Master Filtering, Drill-Down, or Top N filters. You can also set the LimitVisibleDataMode property to DesignerAndViewer [16].

- Check Data Cache: Ensure your dashboard is leveraging an in-memory data cache or a data extract file to avoid repeatedly querying the database for the same information [16].

- Measure Load Time: Add timing code to your dashboard's client-side JavaScript event handlers to measure rendering performance [16].

Resolve Server-Side Issues:

- Optimize Color Schemes: Using a Local color scheme instead of a Global one for dashboard items can reduce server load, as it requests colors for only the current item [16].

- Use On-Demand Loading: For dashboards with tabs, place data-heavy items on separate tabs and set the ItemDataLoadingMode property to OnDemand so data is loaded only when the tab is active [16].

- Apply Filters: Use data source-level filters or item-level filters to reduce the amount of data the server needs to fetch and process [16].

- Switch Data Processing Mode: If your database is not optimized for complex queries, you can switch the DataProcessingMode to Client. This loads raw data into memory for client-side aggregation [16].

- Measure Server Load: Use a custom dashboard storage class to measure server-side loading time [16].

Address Data Loading Issues:

- SQL Queries: Use a tool like SQL Server Profiler to identify slow-running queries. Review if queries are running in client or server mode and optimize them accordingly [16].

- OLAP Data Sources: Use the SQL Server Profiler to check MDX query performance. Compare query times in your dashboard to a tool like Microsoft Excel to determine if the issue is with the cube structure or the dashboard itself [16].

- Object Data Sources: Check how often the DataLoading event is raised. Data should be cached on the first load and refreshed only after a timeout period (e.g., 5 minutes) [16].
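The caching rule for object data sources is a time-to-live (TTL) cache. A minimal, framework-independent Python sketch follows. The loader function and the 300-second default are illustrative assumptions and are not part of any dashboard product's API.

```python
import time
from typing import Callable, Optional


class TTLCache:
    """Cache the result of an expensive loader and refresh it only after ttl_seconds."""

    def __init__(self, loader: Callable[[], object], ttl_seconds: float = 300.0,
                 clock: Callable[[], float] = time.monotonic):
        self._loader = loader
        self._ttl = ttl_seconds
        self._clock = clock
        self._value = None
        self._loaded_at: Optional[float] = None
        self.load_count = 0  # how many times the underlying source was queried

    def get(self):
        now = self._clock()
        if self._loaded_at is None or now - self._loaded_at >= self._ttl:
            self._value = self._loader()
            self._loaded_at = now
            self.load_count += 1
        return self._value


# A fake clock demonstrates expiry without waiting five real minutes.
fake_now = [0.0]
cache = TTLCache(lambda: ["row1", "row2"], ttl_seconds=300, clock=lambda: fake_now[0])
cache.get()            # first call queries the source
fake_now[0] = 120.0
cache.get()            # within 5 minutes: served from cache
fake_now[0] = 301.0
cache.get()            # expired: source queried again
print(cache.load_count)  # → 2
```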

Data and Access Issues

Problem: I cannot see data in my analysis, or the data is incorrect.

Diagnosis and Solution:

No Data Visible:

Unexpected or Zero Values:

- Data Refresh: In the analysis criteria tab, click the Refresh button in the Subject Areas pane to ensure you are viewing the most recent metadata and data [17].

- Currency Conversion: If financial amounts show as zero, check that currency exchange rates are correctly set up. A failed conversion will result in a zero value [17].

- View Display Error: If you see an error like "Exceed configured maximum number of allowed input records," add filters to your analysis to reduce the data volume, such as a narrower date range [17].

Problem: I cannot find or access a specific analysis or dashboard.

Diagnosis and Solution:

- Search the Catalog: Use your BI tool's catalog search function with the full or partial name of the analysis or dashboard [17].

- Permissions: The most common cause is lacking the required permissions or application role. Contact the analysis owner or your system administrator to review your access [17].

- Restore from Backup: If you suspect the item was deleted by mistake, it may need to be restored from a backup of the content from another environment [17].

Frequently Asked Questions (FAQs)

FAQ 1: What is the difference between traditional machine learning and generative AI for analytics, and when should I use each?

The choice depends on your specific analytical goal [18].

- Traditional Machine Learning is best for prediction and pattern recognition on structured, domain-specific data. It is ideal for tasks like classifying crystal quality from image data, predicting experimental outcomes, or detecting anomalies in sensor readings from lab equipment. Use it when working with highly specialized scientific data or when data privacy is a primary concern, as models can be run on-premises without sending data to external APIs [18].

- Generative AI is best for generating new content and understanding natural language. It can simplify the user experience by allowing you to query data using natural language (e.g., "show me all samples from last week with low diffraction quality") or by generating synthetic data to augment small experimental datasets. It is the better first choice for tasks involving everyday language or common images [18].

Table: Machine Learning vs. Generative AI for Research

| Feature | Traditional Machine Learning | Generative AI |
|---|---|---|
| Primary Strength | Prediction, classification, pattern recognition | Content generation, natural language understanding |
| Best for Data Types | Structured, numerical, tabular data | Text, images, language |
| Ideal Research Use Case | Predictive maintenance on lab equipment, sample classification | Natural language querying of datasets, generating lab reports |
| Data Privacy | Suitable for private, on-premises deployment | Requires caution with sensitive data in public APIs |

FAQ 2: Why is real-time data so important for AI in characterization workflows?

Real-time data processing is crucial for reducing data collection time because it enables immediate insights and closed-loop automation, moving beyond the limitations of traditional batch processing [19].

- Instantaneous Feedback: AI models can analyze data as it is generated from instruments (e.g., chromatographs, diffractometers), allowing for immediate quality assessment. This can flag failed experiments early, terminating them to save time and resources [19].

- Closed-Loop Automation: Real-time AI can make autonomous decisions to optimize an ongoing experiment. For example, it can dynamically adjust instrument parameters or trigger the next step in a workflow without human intervention, significantly accelerating throughput [19].

- Adaptive Models: Models that are updated with real-time data can adapt to new patterns or drifts in experimental conditions, maintaining high predictive accuracy for longer periods without manual retraining [19].
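The early-termination idea can be sketched as a loop that scores each incoming frame and stops the run once a rolling quality metric falls below a threshold. The quality score, window size, and threshold are hypothetical placeholders. A real score would be whatever metric the instrument provides, for example the fraction of indexable spots in a diffraction image.

```python
from collections import deque
from typing import Iterable


def monitor(frames: Iterable[float], window: int = 3, min_quality: float = 0.5):
    """Consume per-frame quality scores as they arrive; stop early if the rolling mean drops too low.

    Returns (frames_consumed, terminated_early).
    """
    recent = deque(maxlen=window)
    consumed = 0
    for score in frames:
        consumed += 1
        recent.append(score)
        if len(recent) == window and sum(recent) / window < min_quality:
            return consumed, True  # a real system would signal the instrument to stop here
    return consumed, False


def simulated_stream():
    # Hypothetical run whose quality degrades over time (e.g., radiation damage).
    yield from [0.9, 0.85, 0.8, 0.6, 0.4, 0.3, 0.2, 0.2, 0.1]


print(monitor(simulated_stream()))  # → (6, True)
```

Because `monitor` pulls from an iterator, the remaining frames are never read once the run is stopped. This is where the time saving comes from.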

FAQ 3: What are the essential steps in a robust data workflow for reliable AI analytics?

A well-defined data workflow is the foundation for any successful AI-driven analytics project. It ensures data quality, reliability, and actionable insights [20].

Research Data Workflow for AI Analytics

The workflow involves eight key stages [20]:

- Goal Planning and Data Identification: Define clear objectives (e.g., "reduce crystal characterization time by 20%") and identify required data sources (e.g., diffraction images, sample metadata).

- Data Extraction: Gather data from various sources like instrumentation APIs, Laboratory Information Management Systems (LIMS), and electronic lab notebooks.

- Data Cleaning and Transformation: Address errors, standardize nomenclatures (e.g., uniform sample names), and transform raw data into analysis-ready formats.

- Data Loading: Store the processed data in a suitable repository, such as a cloud data warehouse, optimized for fast querying.

- Data Validation: Implement automated checks to ensure data quality, flagging anomalies like missing values or unexpected ranges.

- Data Analysis and Modeling: Develop and run AI/ML models to extract insights, such as predicting sample quality.

- Data Governance: Establish security, access controls, and data usage policies to ensure compliance and ethical use.

- Data Maintenance: Perform regular updates, system optimizations, and archival to maintain workflow efficiency and data integrity.
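The nomenclature standardization in the Data Cleaning and Transformation stage is easy to automate with a normalization function. The naming convention below (an uppercase prefix, a hyphen, and a zero-padded four-digit number) is a hypothetical house style; adapt the pattern to your LIMS.

```python
import re

# Hypothetical convention: "<PREFIX>-<4-digit number>", e.g. "XTAL-0042".
_PATTERN = re.compile(r"^\s*([A-Za-z]+)[\s_\-]*0*(\d+)\s*$")


def standardize_sample_name(raw: str) -> str:
    """Map variants like 'xtal_42', 'XTAL 0042', ' Xtal-42 ' to 'XTAL-0042'."""
    match = _PATTERN.match(raw)
    if match is None:
        raise ValueError(f"Unrecognized sample name: {raw!r}")
    prefix, number = match.groups()
    return f"{prefix.upper()}-{int(number):04d}"


for name in ["xtal_42", "XTAL 0042", " Xtal-42 ", "XTAL-0042"]:
    print(standardize_sample_name(name))  # each prints XTAL-0042
```

Raising on unrecognized names, rather than guessing, sends ambiguous entries to a person instead of silently merging distinct samples.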

FAQ 4: What tools can help overcome common data workflow challenges?

Several tool categories are essential for a modern research data stack [20]:

Table: Essential Tools for AI-Powered Research Data Workflows

| Tool Category | Purpose | Example Tools |
|---|---|---|
| ETL (Extract, Transform, Load) | Automates data ingestion from sources into a target database or warehouse. | Apache Kafka, Apache Nifi, Fivetran |
| Data Orchestration | Coordinates and automates complex sequences of data processing tasks across different systems. | Apache Airflow, Luigi, Prefect |
| Data Observability | Monitors data health and quality across the entire pipeline, detecting anomalies and lineage. | Monte Carlo |

The Scientist's Toolkit: Research Reagent Solutions

For researchers implementing automated characterization workflows (such as the MXPress workflows at ESRF), the following "reagents" or core components are essential [21].

Table: Essential Components for Automated Characterization Workflows

| Item | Function in the Workflow |
|---|---|
| Diffraction Plan | A digital protocol that defines all parameters for an automated experiment, including sample ID, experiment type (e.g., MXPressE), and data collection strategy [21]. |
| Automated Sample Changer | A robotic system that mounts, centers, and unmounts multiple crystal samples without user intervention, enabling high-throughput screening [21]. |
| Mesh and Line Scans | X-ray raster scans used to map a crystal's diffraction quality and automatically center its best-diffracting volume to the beam [21]. |
| eEDNA/BEST Strategy | AI-driven software that analyzes initial diffraction images to predict the optimal data collection strategy (rotation range, exposure time) for the best possible data [21]. |
| Automated Processing Pipeline | Integrated software that processes collected diffraction data in real-time, handling tasks like indexing, integration, and merging, with results streamed to a database (e.g., ISPyB) [21]. |

Troubleshooting Guides

Deep Learning Model Debugging Guide

Problem: Model performance is worse than expected or results are not reproducible.

Diagnosis and Solution Workflow:

| Step | Action | Key Considerations | Common Bugs to Check |
|---|---|---|---|
| 1. Start Simple | Choose a simple architecture and simplify the problem [22]. | Use a small training set (e.g., ~10,000 examples) to increase iteration speed and establish a performance baseline [22]. | Incorrect input to the loss function (e.g., using softmax outputs for a loss that expects logits) [22]. |
| 2. Implement & Debug | Get the model to run, then overfit a single batch [22]. | Use a lightweight implementation (<200 lines for the first version) and off-the-shelf components [22]. | Incorrect tensor shapes or silent broadcasting errors [22]. |
| 3. Evaluate Model Fit | Apply bias-variance decomposition to prioritize next steps [22]. | High bias suggests underfitting (need more model complexity), high variance suggests overfitting (need regularization) [22]. | Forgetting to set up train/evaluation mode correctly, affecting layers like BatchNorm [22]. |
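Step 3 can be made concrete with a simple error decomposition. Training and validation error are compared against a target error, for example human-level or instrument-limited performance. The rule of addressing the larger gap first is a common heuristic rather than a fixed procedure, and the error values are hypothetical.

```python
def diagnose(target_error: float, train_error: float, val_error: float) -> dict:
    """Split validation error into avoidable bias and variance (all errors as fractions)."""
    bias = train_error - target_error   # underfitting: gap to the achievable error
    variance = val_error - train_error  # overfitting: gap between train and validation
    if bias >= variance:
        advice = "reduce bias: larger model, longer training, less regularization"
    else:
        advice = "reduce variance: more data, regularization, data augmentation"
    return {"bias": round(bias, 4), "variance": round(variance, 4), "next_step": advice}


# Hypothetical crystal-quality classifier: 2% target, 3% train, 12% validation error.
print(diagnose(0.02, 0.03, 0.12))
```

Here the 9-point train/validation gap dominates the 1-point bias, so regularization or more data would be the next lever to try.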

Federated Learning (FL) Implementation Guide

Problem: The global model performs poorly or exhibits bias due to decentralized, heterogeneous data.

Diagnosis and Solution Workflow:

| Challenge | Description | Mitigation Strategies |
|---|---|---|
| Data Heterogeneity (Non-IID Data) | Client devices hold data with different statistical distributions, harming global model convergence [23]. | Use algorithm-based calibration techniques (e.g., modified aggregation strategies) or explore Personalized FL (PFL) to tailor models to local data [23]. |
| Class Imbalance & Long-Tailed Data | Data across clients is unevenly distributed, causing the model to be biased toward majority classes [23]. | Apply information enhancement (e.g., data augmentation on clients) or model component optimization (e.g., loss re-weighting) [23]. |
| Privacy & Security Risks | Model updates shared with the server can leak sensitive information about local training data [24]. | Combine FL with other Privacy-Enhancing Technologies (PETs) like differential privacy or secure multi-party computation [24]. |
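The mitigation strategies above modify a baseline called federated averaging (FedAvg). In FedAvg, the server averages client parameters weighted by each client's local sample count. The plain-Python sketch below uses made-up client updates. It shows why a client holding most of the data pulls the global model toward its own distribution.

```python
from typing import List, Sequence


def fedavg(client_weights: Sequence[Sequence[float]], client_sizes: Sequence[int]) -> List[float]:
    """Federated averaging: sample-size-weighted mean of client parameter vectors."""
    total = sum(client_sizes)
    n_params = len(client_weights[0])
    global_w = [0.0] * n_params
    for w, n in zip(client_weights, client_sizes):
        for i in range(n_params):
            global_w[i] += (n / total) * w[i]
    return global_w


# Hypothetical 2-parameter models from three sites with very different data volumes.
updates = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
sizes = [800, 100, 100]
print(fedavg(updates, sizes))  # approximately [0.85, 0.15]: dominated by the first site
```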

Natural Language Processing (NLP) for Clinical Data

Problem: Inefficient extraction of insights from unstructured clinical text (e.g., patient notes, trial reports).

Diagnosis and Solution Workflow:

| Challenge | Impact on Research Speed | Potential NLP Solution |
|---|---|---|
| Fragmented Data Silos | Slow data sharing and integration from incompatible systems (e.g., separate clinical databases) [25]. | Implement a centralized, cloud-native NLP platform to unify and process text data from disparate sources in real-time [25] [26]. |
| Manual Data Curation | Scientists spend significant time manually retrieving and processing information, delaying analysis [25]. | Deploy automated NLP pipelines for named entity recognition (NER) and relationship extraction to identify key concepts and trends [25]. |
| Regulatory Compliance | Manual validation of clinical text data for regulatory submissions is time-consuming and error-prone [26]. | Utilize automated compliance workflows that track data lineage and generate audit trails, ensuring data integrity [26]. |
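Production NER for clinical text relies on trained models. The idea of automated entity extraction can still be illustrated with a gazetteer (dictionary-lookup) tagger. The term lists and entity labels below are illustrative assumptions. A lookup approach like this misses misspellings, abbreviations, and negation ("no neutropenia").

```python
import re
from typing import Dict, List, Tuple

# Hypothetical gazetteer; real pipelines use curated vocabularies and trained models.
GAZETTEER: Dict[str, List[str]] = {
    "DRUG": ["trastuzumab", "paclitaxel"],
    "ADVERSE_EVENT": ["neutropenia", "peripheral neuropathy", "nausea"],
}


def tag_entities(text: str) -> List[Tuple[str, str, int]]:
    """Return (entity_text, label, start_offset) for every gazetteer hit, in text order."""
    hits = []
    for label, terms in GAZETTEER.items():
        for term in terms:
            pattern = r"\b" + re.escape(term) + r"\b"  # whole-word, case-insensitive match
            for m in re.finditer(pattern, text, flags=re.IGNORECASE):
                hits.append((m.group(0), label, m.start()))
    return sorted(hits, key=lambda h: h[2])


note = "Patient on paclitaxel developed Grade 3 neutropenia and mild nausea."
print(tag_entities(note))
```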

Frequently Asked Questions (FAQs)

Q1: My deep learning model's performance is much worse than a paper I'm trying to reproduce. Where should I start debugging? A1: Begin by "starting simple." Reproduce your model on a small, manageable synthetic dataset or a reduced version of your problem. This helps verify your implementation is correct and drastically speeds up debugging cycles. Ensure you are using sensible default hyperparameters and have normalized your inputs [22].

Q2: My federated learning model is converging slowly and seems biased toward certain clients. What could be the cause? A2: This is a classic symptom of data heterogeneity (Non-IID data) and potential class imbalance across clients [23]. Standard aggregation algorithms like FedAvg can be biased toward clients with more data or specific distributions. Investigate advanced aggregation strategies or personalized federated learning approaches designed for non-IID settings [23].

Q3: How can I ensure my federated learning system is truly privacy-preserving? A3: Federated Learning provides a privacy benefit by keeping raw data decentralized, but it is not a complete solution. The model updates (gradients or weights) shared with the server can potentially be reverse-engineered to infer training data [24]. A robust approach involves using FL in combination with other Privacy-Enhancing Technologies (PETs) like differential privacy, which adds noise to updates, or secure aggregation [24].
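Differential privacy in FL usually starts with two operations, applied to each client update or to their aggregate. First the update's L2 norm is clipped, then Gaussian noise scaled to that clipping bound is added. The sketch below shows only these mechanics, and the clip norm and noise multiplier are placeholder values. A formal (ε, δ) guarantee additionally requires a privacy accountant, which is not shown.

```python
import math
import random
from typing import List, Sequence


def privatize_update(update: Sequence[float], clip_norm: float, noise_multiplier: float,
                     rng: random.Random) -> List[float]:
    """Clip an update to L2 norm <= clip_norm, then add N(0, (noise_multiplier * clip_norm)^2) noise."""
    norm = math.sqrt(sum(u * u for u in update))
    scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    clipped = [u * scale for u in update]
    sigma = noise_multiplier * clip_norm
    return [c + rng.gauss(0.0, sigma) for c in clipped]


rng = random.Random(42)
raw_update = [3.0, 4.0]  # L2 norm 5.0, clipped to norm 1.0, i.e. [0.6, 0.8]
print(privatize_update(raw_update, clip_norm=1.0, noise_multiplier=0.0, rng=rng))  # noise off
print(privatize_update(raw_update, clip_norm=1.0, noise_multiplier=1.1, rng=rng))  # noisy
```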

Q4: Our drug discovery team struggles with slow data analysis from high-throughput screens. How can machine learning help? A4: A major bottleneck is often manual, time-consuming peak identification in analytical data, which can take days or weeks [27]. You can develop a streamlined, automated data analysis workflow using commercial software tools. One proven method involves creating a biotransformation library for your molecule and using it with automated data processing software, which has been shown to reduce analysis time from a week to just a few hours [27].

Q5: What is the most common invisible bug in deep learning code? A5: According to practical guides, incorrect tensor shapes are a very common and often silent bug. The model may run without crashing but perform poorly due to silent broadcasting or reshaping operations that are logically incorrect [22]. Stepping through your model creation and inference in a debugger to check tensor shapes is a critical debugging step.
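The silent-broadcasting bug takes only two lines to reproduce. Subtracting a column vector from a flat vector produces a matrix instead of an element-wise difference, and the mean over it still returns a plausible-looking number. NumPy is used here for brevity; PyTorch tensors follow the same broadcasting rules.

```python
import numpy as np

predictions = np.array([0.9, 0.2, 0.7])    # shape (3,)
targets = np.array([[1.0], [0.0], [1.0]])  # shape (3, 1), e.g. as returned by a data loader

wrong = predictions - targets              # broadcasts to shape (3, 3); no error is raised
right = predictions - targets.reshape(-1)  # shape (3,): the intended element-wise difference

print(wrong.shape, right.shape)            # → (3, 3) (3,)
print(np.mean(wrong ** 2), np.mean(right ** 2))  # the "wrong" loss is silently different

# A cheap guard: assert shapes before computing the loss.
assert predictions.shape == targets.reshape(-1).shape
```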

Experimental Protocols & Data

Quantitative Findings on Data Workflow Optimizations

The table below summarizes experimental data and findings from relevant studies on optimizing data workflows with ML.

| Application / Study | Key Intervention | Quantitative Outcome | Impact on Data Collection/Processing Time |
|---|---|---|---|
| ADC Biotransformation Analysis [27] | Streamlined, automated MS data analysis workflow. | Time for analyte identification reduced from ~1 week to a few hours. | Dramatic reduction (over 90% time saving). |
| High-Throughput Screening [25] | Automated ETL pipeline with metadata annotation. | Time for single analyses reduced by about 25 times. | Dramatic reduction (96% time saving). |
| Clinical Trial Data Entry [28] | Implementation of real-time validation in an EDC system. | Data-entry errors reduced from 0.3% to 0.01%. | Reduces time spent on downstream data cleaning and query resolution. |

This protocol describes a more automated workflow for characterizing Antibody-Drug Conjugates (ADCs) using mass spectrometry, significantly accelerating analytical characterization.

Library Generation: Create a linker-payload biotransformation library.

- Tool: Use commercial metabolite prediction software (e.g., Metabolite Pilot/Molecule Profiler).

- Input: Upload the linker-payload structure (.mol file). Specify the conjugation site.

- Parameters: Set potential cleavages (e.g., max 2 bonds broken), and common biotransformations (e.g., hydrolysis, oxidation). The software generates a list of possible mass shifts and modifications.

Data Processing and Peak Identification:

- Tool: Import the generated delta mass library into intact protein data analysis software (e.g., Biologics Explorer, Protein Metrics Byos).

- Input: Provide raw mass spectrometry data files, and the antibody's HC/LC amino acid sequences.

- Deconvolution: Perform automated deconvolution of the MS data within the software.

- Peak Matching: The software automatically annotates peaks by matching the observed masses against the theoretical masses of the antibody with applied modifications and the biotransformation library.
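The library-plus-matching logic that these tools automate can be illustrated in a few lines. This sketch does not reproduce how the named software works internally. It assumes a hypothetical intact conjugate mass and a tiny library of mass shifts: monoisotopic +15.9949 Da for oxidation, +18.0106 Da for hydrolysis, and a hypothetical payload-loss shift. Deconvoluted masses are matched within a ppm tolerance.

```python
from typing import Dict, List, Tuple

BASE_MASS_DA = 148_000.0  # hypothetical deconvoluted mass of the intact conjugate

# Delta-mass library: modification -> mass shift in Da.
LIBRARY: Dict[str, float] = {
    "unmodified": 0.0,
    "oxidation (+O)": 15.9949,
    "hydrolysis (+H2O)": 18.0106,
    "payload loss (hypothetical)": -1_250.0,
}


def match_peaks(observed: List[float], tol_ppm: float = 20.0) -> List[Tuple[float, List[str]]]:
    """Annotate each observed mass with every library entry within tol_ppm of its theoretical mass."""
    results = []
    for mass in observed:
        hits = []
        for name, shift in LIBRARY.items():
            theoretical = BASE_MASS_DA + shift
            ppm_error = abs(mass - theoretical) / theoretical * 1e6
            if ppm_error <= tol_ppm:
                hits.append(name)
        results.append((mass, hits))
    return results


for mass, names in match_peaks([148_000.4, 148_016.3, 146_750.9, 147_500.0]):
    print(mass, names or ["unassigned"])
```

At 20 ppm the window around ~148 kDa is roughly ±3 Da, which is wider than the 2.0 Da gap between oxidation and hydrolysis. The peak at 148,016.3 Da therefore receives two candidate assignments, which is exactly the case the manual review step below is meant for.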

Review and Quantification:

- Manually review peaks with multiple assignments to select the most plausible biotransformation based on chemical reasoning.

- Export peak intensities to calculate the fractional abundance of each species over time.
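The quantification step reduces to normalizing peak intensities within each time point. Below is a small sketch with hypothetical intensities from a stability time course.

```python
from typing import Dict


def fractional_abundance(intensities: Dict[str, float]) -> Dict[str, float]:
    """Each species' share of the total summed intensity at one time point."""
    total = sum(intensities.values())
    return {species: value / total for species, value in intensities.items()}


# Hypothetical exported peak intensities per time point (arbitrary units).
time_course = {
    "0 h": {"intact": 950.0, "oxidation": 50.0, "payload loss": 0.0},
    "24 h": {"intact": 700.0, "oxidation": 100.0, "payload loss": 200.0},
}
for t, peaks in time_course.items():
    print(t, {k: round(v, 3) for k, v in fractional_abundance(peaks).items()})
```

This treats relative MS intensity as proportional to abundance. That approximation assumes the species ionize with similar efficiency.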

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Technology | Function in ML-Driven Research | Example Use Case |
|---|---|---|
| Cloud-Native Statistical Computing Environment (SCE) [26] | Provides a scalable, flexible platform for data storage, analysis, and collaboration; supports languages like SAS, R, Python. | Running large-scale ML model training on integrated clinical trial data. |
| Electronic Data Capture (EDC) Systems [28] | Enables real-time data entry and validation at the source, reducing downstream errors and cleaning time. | Collecting clean, structured clinical trial data for training NLP models on patient outcomes. |
| Containerized Workflows (e.g., Docker, Kubernetes) [25] | Ensures computational methods are portable and reproducible across different computing environments. | Deploying and scaling a standardized FL training environment across multiple research institutions. |
| Streaming ETL Frameworks (e.g., Apache Kafka, Spark Streaming) [25] | Enables real-time data ingestion and processing, crucial for dynamic model retraining. | Continuously integrating and processing new high-throughput screening data for active learning models. |
| Privacy-Enhancing Technologies (PETs) [24] | Techniques like differential privacy and secure multi-party computation used alongside FL to mitigate data leakage from model updates. | Collaboratively training a model on sensitive patient data from multiple hospitals without sharing raw data. |

Workflow Visualization

Federated Learning with Central Server

Deep Learning Troubleshooting Path

Automated MS Data Analysis Flow

Troubleshooting Guides

1. Data Ingestion Failure: Pipeline Intermittently Drops Records

- Problem: An automated data ingestion pipeline from laboratory instruments (e.g., XRD, HPLC) sporadically fails to import records, leading to incomplete datasets for analysis.

- Diagnosis:

- Check Source System Load: High CPU or memory usage on the source instrument's data server can cause timeouts during the extraction phase. Monitor source system performance metrics at the time of failure. [29]

- Review Connectivity Logs: Inspect the ingestion tool's logs for connection reset errors or authentication failures. Unstable network links between the lab network and the central data repository are a common culprit. [30]

- Validate Data Format: Check if the source system occasionally outputs data in a non-standard format (e.g., an extra header line, a special character) that breaks the parser. Automated schema validation checks should catch this. [31] [29]

- Solution:

- Implement a retry mechanism with exponential backoff in your ingestion script to handle temporary network glitches. [30]

- Introduce a dead-letter queue or a staging area to isolate problematic records for later inspection, allowing the rest of the pipeline to continue functioning. [29]

- Use an autonomous data quality tool like DataBuck to monitor the data stream in real-time and flag anomalies in record count or data format. [31]
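The first two solutions can be combined in a small ingestion helper. Transient errors are retried with exponentially growing, jittered delays. Records that still fail, or that fail to parse, go to a dead-letter list instead of stopping the batch. The fetch and parse functions, the exception types treated as transient, and the delay settings are illustrative assumptions.

```python
import random
import time
from typing import Callable, List, Tuple


def with_retry(fn: Callable[[], str], max_attempts: int = 5, base_delay: float = 0.5,
               sleep: Callable[[float], None] = time.sleep) -> str:
    """Call fn, retrying on ConnectionError/TimeoutError with exponential backoff plus jitter."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except (ConnectionError, TimeoutError):
            if attempt == max_attempts - 1:
                raise
            delay = base_delay * (2 ** attempt)          # 0.5, 1, 2, 4, ... seconds
            sleep(delay + random.uniform(0, delay / 2))  # jitter avoids synchronized retries
    raise RuntimeError("max_attempts must be at least 1")


def ingest(record_ids: List[str], fetch: Callable[[str], str], parse: Callable[[str], dict],
           sleep: Callable[[float], None] = time.sleep) -> Tuple[List[dict], List[Tuple[str, str]]]:
    """Return (parsed records, dead-letter entries); one bad record never stops the batch."""
    good, dead_letter = [], []
    for rid in record_ids:
        try:
            raw = with_retry(lambda: fetch(rid), sleep=sleep)
            good.append(parse(raw))
        except Exception as exc:  # isolate the record for later inspection and keep going
            dead_letter.append((rid, repr(exc)))
    return good, dead_letter


# Simulated instrument server: "r2" fails twice before succeeding; "r3" is malformed.
calls = {"r2": 0}

def fake_fetch(rid):
    if rid == "r2":
        calls["r2"] += 1
        if calls["r2"] < 3:
            raise ConnectionError("connection reset")
    return "BAD" if rid == "r3" else f"{rid},12.5"

def parse_line(raw):
    rid, value = raw.split(",")  # raises ValueError on malformed input
    return {"id": rid, "value": float(value)}

good, dlq = ingest(["r1", "r2", "r3"], fake_fetch, parse_line, sleep=lambda s: None)
print([g["id"] for g in good], dlq)  # r1 and r2 are ingested; r3 lands in the dead-letter list
```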

2. Poor Signal-to-Noise Ratio After Automated Data Cleaning

- Problem: After an automated cleaning and preprocessing step, the dataset used for materials characterization (e.g., XRD peak analysis) shows an overly aggressive removal of data points, distorting the signal and impacting downstream analysis.

- Diagnosis:

- Audit Cleaning Parameters: The thresholds for handling "missing values" or filtering "outliers" may be too strict for the specific experimental context. For instance, a Z-score threshold of 3 might be inappropriate for a naturally high-variance biological assay. [32]

- Review Data Dictionary: The automated process may be misapplying a rule because of a missing or incorrect entry in the data dictionary regarding the expected value range or data type. [32]

- Compare Raw vs. Cleaned Data: Always keep a copy of the raw data. Create a validation script to statistically and visually compare the raw and cleaned datasets to quantify the impact of the cleaning process. [29]

- Solution:

- Calibrate cleaning algorithms using a known, validated subset of data before full deployment. Avoid one-size-fits-all parameters. [32]

- Implement a dynamic thresholding system that can adapt based on the statistical properties of each specific experimental run. [33]

- Document all preprocessing steps and parameters meticulously to ensure reproducibility and facilitate debugging. [32]
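One way to implement a per-run dynamic threshold is to replace the mean and standard deviation with the run's median and median absolute deviation (MAD), since the MAD is itself robust to the outliers being sought. Reporting how much each run loses to cleaning completes the raw-versus-cleaned comparison. The modified z-score cutoff of 3.5 is a common rule of thumb. The 20% warning level and the data are illustrative assumptions, not values from the cited work.

```python
import statistics
from typing import Dict, List


def robust_filter(values: List[float], cutoff: float = 3.5) -> List[float]:
    """Keep points whose modified z-score, 0.6745 * |x - median| / MAD, is within cutoff."""
    med = statistics.median(values)
    mad = statistics.median(abs(v - med) for v in values)
    if mad == 0:
        return list(values)  # no spread to judge against; leave the run untouched
    return [v for v in values if 0.6745 * abs(v - med) / mad <= cutoff]


def compare_raw_cleaned(raw: List[float], cleaned: List[float], warn_frac: float = 0.2) -> Dict:
    """Quantify what cleaning did, so over-aggressive runs are caught before analysis."""
    removed = 1 - len(cleaned) / len(raw)
    return {
        "fraction_removed": round(removed, 3),
        "mean_shift": round(statistics.mean(cleaned) - statistics.mean(raw), 3),
        "warn": removed > warn_frac,
    }


# Hypothetical intensities from two runs with different noise levels.
quiet_run = [100, 101, 99, 100, 102, 98, 100, 160]
noisy_run = [100, 130, 70, 115, 85, 125, 75, 160]
for run in (quiet_run, noisy_run):
    print(compare_raw_cleaned(run, robust_filter(run)))
```

The value 160 is removed from the quiet run but kept in the noisy one, because the threshold scales with each run's own spread. A single fixed cutoff would treat both runs the same way.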

3. Workflow Automation Stalls at Data Processing Stage

- Problem: An automated workflow that integrates ingestion, cleaning, and processing consistently hangs or fails during the computationally intensive processing phase (e.g., during feature extraction for machine learning).

- Diagnosis:

- Resource Contention: The processing node may be running out of memory (OOM error) or exceeding allocated CPU, especially when handling large volumes of data from high-resolution instruments. [29]

- Unhandled Edge Case: The processing script or model may encounter an unexpected value or data structure it doesn't know how to handle, causing an infinite loop or a crash. [34]

- Dependency Conflict: A software library used in the processing step might have been updated, creating a version conflict with other parts of the workflow. [32]

- Solution:

- Monitor Performance: Track metrics like data throughput, latency, and error rates to identify bottlenecks. Design the system for scalability, using cloud resources that can scale horizontally for large jobs. [29]

- Build Robust Error Handling: Wrap processing functions in try-except blocks to catch and log errors, allowing the workflow to fail gracefully and notify administrators without manual intervention. [34]

- Containerize the Workflow: Use Docker containers to package the processing code with all its dependencies, ensuring a consistent and isolated runtime environment. [32]
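A minimal version of the error-handling pattern wraps each processing stage. Failures are then logged with a traceback and returned as a status instead of crashing the orchestrator. The `notify` function is a hypothetical hook standing in for an email, chat, or ticketing integration.

```python
import logging
from typing import Any, Callable, Dict

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger("workflow")


def notify(message: str) -> None:
    """Hypothetical alert hook; replace with your team's notification channel."""
    logger.warning("ALERT: %s", message)


def run_stage(name: str, fn: Callable[[Any], Any], payload: Any) -> Dict[str, Any]:
    """Run one stage; on failure, log the traceback, alert, and return a status instead of raising."""
    try:
        return {"stage": name, "ok": True, "result": fn(payload)}
    except Exception as exc:
        logger.exception("Stage %s failed", name)  # records the full traceback
        notify(f"{name} failed: {exc!r}")
        return {"stage": name, "ok": False, "error": repr(exc)}


def extract_features(spectrum):
    """Hypothetical feature-extraction step with an unhandled edge case: an empty spectrum."""
    if not spectrum:
        raise ValueError("empty spectrum")
    return {"max": max(spectrum), "n_points": len(spectrum)}


print(run_stage("features", extract_features, [1.0, 3.5, 2.0]))
print(run_stage("features", extract_features, []))
```

An out-of-memory kill issued by the operating system or container runtime terminates the process before any `except` block runs. Resource limits therefore still need the external monitoring described above.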

Frequently Asked Questions (FAQs)

Q1: What are the main types of data ingestion, and which one is best for reducing data collection time in characterization experiments?

There are two primary types, and the choice directly impacts data latency: [30] [29]

- Batch Ingestion: Collects and processes data in large chunks at scheduled intervals (e.g., nightly). This is simpler to implement but introduces significant delay, which is not ideal for in-situ or real-time analysis. [30]

- Real-Time (Streaming) Ingestion: Moves data continuously as it is generated (in milliseconds). This is best for minimizing the time between data collection and availability for analysis, which is critical for adaptive experimentation and live monitoring. [30]

A hybrid approach is often most practical, using streaming for immediate, time-sensitive insights and batch for consolidating large datasets for historical analysis. [30]

Q2: How can we ensure data quality in an automated workflow without constant manual checks?

Automation is key to maintaining quality at scale. Best practices include: [31] [32] [29]

- Automate Validation Checks: Build data quality checks (e.g., for missing values, data types, value ranges) directly into the ingestion pipeline to reject or flag anomalous data as it arrives.

- Leverage AI and Machine Learning: Use tools like DataBuck that employ AI to automatically learn data patterns and detect anomalies or drifts in quality without pre-defined rules. [31]

- Maintain Data Lineage: Keep comprehensive documentation and metadata about the data's origin, transformations, and destination. This makes it easier to trace and fix the root cause of quality issues. [29]
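Validation checks built into ingestion can be as simple as a declarative rule table evaluated per record. The field names and ranges below are hypothetical. Tools like DataBuck aim to learn such rules automatically, whereas this sketch hard-codes them.

```python
from typing import Any, Dict, List, Tuple

# Hypothetical rules: field -> (expected type, min, max). None means unbounded.
RULES: Dict[str, Tuple[type, Any, Any]] = {
    "sample_id": (str, None, None),
    "two_theta_deg": (float, 5.0, 90.0),
    "intensity_counts": (int, 0, None),
}


def validate(record: Dict[str, Any]) -> List[str]:
    """Return human-readable problems; an empty list means the record passes."""
    problems = []
    for field, (ftype, lo, hi) in RULES.items():
        value = record.get(field)
        if value is None:
            problems.append(f"{field}: missing")
        elif not isinstance(value, ftype):
            problems.append(f"{field}: expected {ftype.__name__}, got {type(value).__name__}")
        elif (lo is not None and value < lo) or (hi is not None and value > hi):
            problems.append(f"{field}: {value} outside [{lo}, {hi}]")
    return problems


incoming = [
    {"sample_id": "XTAL-0042", "two_theta_deg": 28.4, "intensity_counts": 1530},
    {"sample_id": "XTAL-0043", "two_theta_deg": 120.0, "intensity_counts": 880},
    {"sample_id": "XTAL-0044", "two_theta_deg": 31.0},
]
accepted = [r for r in incoming if not validate(r)]
flagged = [(r["sample_id"], validate(r)) for r in incoming if validate(r)]
print(len(accepted), flagged)
```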

Q3: Our automated data cleaning is removing critical experimental outliers. How can we prevent this?

This is a common challenge when algorithms are too rigid. The solution involves a more nuanced approach: [32]

- Context-Aware Cleaning: Instead of applying purely statistical outlier detection, incorporate domain knowledge into the cleaning rules. For example, in XRD analysis, certain peaks may be valid despite being statistical outliers.

- Two-Stage Process: Implement a workflow where potential outliers are flagged and moved to a "quarantine" area for expert review instead of being automatically deleted. [29]

- Iterative Refinement: Continuously validate your cleaning process against known outcomes to refine the algorithms and thresholds, making them more sensitive to the specifics of your research domain. [32]
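The context-aware and two-stage ideas fit in one function. Points inside domain-defined windows (here, hypothetical expected XRD peak positions) are never treated as outliers. Statistical outliers elsewhere are moved to a quarantine list for expert review rather than deleted. The peak windows, the median-based background estimate, and the factor of 5 are illustrative assumptions.

```python
import statistics
from typing import List, Tuple

Point = Tuple[float, float]  # (2-theta in degrees, intensity in counts)

# Hypothetical expected reflections (center, half-width) for the phases under study.
EXPECTED_PEAKS = [(43.6, 0.4), (44.7, 0.4), (50.8, 0.4)]


def in_expected_peak(two_theta: float) -> bool:
    return any(abs(two_theta - c) <= hw for c, hw in EXPECTED_PEAKS)


def split_for_review(points: List[Point], factor: float = 5.0) -> Tuple[List[Point], List[Point]]:
    """Return (kept, quarantined). Nothing is deleted: quarantined points await expert review."""
    background = statistics.median(i for _, i in points)
    kept, quarantined = [], []
    for tt, inten in points:
        suspicious = inten > factor * background and not in_expected_peak(tt)
        (quarantined if suspicious else kept).append((tt, inten))
    return kept, quarantined


scan = [(40.0, 100), (41.0, 95), (42.0, 105), (43.6, 2400), (44.7, 5100),
        (46.0, 98), (47.2, 3900), (49.0, 102), (50.8, 900), (52.0, 110)]
kept, quarantined = split_for_review(scan)
print(quarantined)  # → [(47.2, 3900)]
```

The strong signal at 47.2° might be an artifact, or it might be an unanticipated phase. Quarantining it instead of deleting it leaves that judgment to an expert.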

Q4: What are the critical security considerations for an automated data workflow in a regulated research environment?

Protecting sensitive experimental data is paramount. Essential measures include: [29]

- Encryption: Ensure data is encrypted both while it is moving between systems (in transit) and when it is stored (at rest).

- Access Controls: Define and enforce strict role-based access policies to ensure only authorized personnel can view or modify data and workflows.

- Audit Trails: Maintain detailed logs of all data access and pipeline activities to support compliance with regulations like GDPR or HIPAA. [29]
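One common way to make an audit trail tamper-evident is to hash-chain its entries. The sketch below uses only the Python standard library and is illustrative only, not a compliant system: it has no access control, secure storage or trusted timestamps. The `AuditLog` class and its field names are hypothetical.

```python
import hashlib
import json
import time

GENESIS = "0" * 64


def _digest(entry: dict) -> str:
    # Canonical JSON (sorted keys) so identical content always yields the same hash.
    return hashlib.sha256(json.dumps(entry, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only, hash-chained log: each entry stores the hash of the previous one."""

    def __init__(self):
        self.entries = []

    def record(self, user: str, action: str, target: str) -> dict:
        entry = {
            "user": user,
            "action": action,
            "target": target,
            "timestamp": time.time(),
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry["hash"] = _digest(entry)  # hash covers every field above
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain. Detects edited entries and entries removed or reordered
        before the last one; truncating the tail needs an external anchor to detect."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


log = AuditLog()
log.record("analyst_1", "read", "xrd/run_042/spectra")
log.record("pipeline", "write", "warehouse/phase_fractions")
ok_before = log.verify()
log.entries[0]["user"] = "someone_else"  # simulate tampering with a stored entry
ok_after = log.verify()
```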

Experimental Protocol: Intelligent Data Selection for Minimized Characterization Time

This protocol outlines a methodology for integrating workflow automation with intelligent data selection to reduce measurement time in X-ray diffraction (XRD) characterization, as conceptualized from recent research. [33]

1. Objective

To decrease total data collection time in energy-dispersive XRD experiments for phase analysis of high-strength steels by automating the ingestion of spectral data and using selection strategies to dynamically adapt measurement parameters.

2. Materials and Reagents

- Sample: Dog-bone-shaped tensile sample of low-alloy 42CrSi Quench and Partitioning (QP) steel, heat-treated to contain metastable retained austenite. [33]

- Equipment: X-ray diffractometer equipped with an energy-dispersive detector; in-situ tensile loading stage (e.g., Kammrath & Weiss stress rig). [33]

- Software: Custom Python or commercial workflow automation software (e.g., Integrate.io, Xurrent) for data pipeline orchestration. [33] [34] [29]

3. Methodology

Step 1: Automated Data Ingestion Setup

- Configure the XRD instrument software to stream spectral data in real time to a designated network location or message broker (e.g., Apache Kafka). [30]

- Set up an automated data ingestion pipeline using a tool like Integrate.io to continuously poll for and extract new spectral files as they are generated. [29]
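When no managed tool is available, the polling step described above can be approximated in a few lines of Python. The sketch makes three assumptions. First, the instrument writes one file per measurement point into a watched directory. Second, the `.xy` extension is hypothetical. Third, `handle` stands for whatever downstream parsing or loading function you use.

```python
import tempfile
import time
from pathlib import Path


def poll_new_files(watch_dir, seen: set, pattern: str = "*.xy"):
    """Return files matching `pattern` not processed before, oldest first, and mark them seen."""
    candidates = sorted(Path(watch_dir).glob(pattern))  # sorted by name for stable ties
    new_files = [p for p in candidates if p.name not in seen]
    new_files.sort(key=lambda p: p.stat().st_mtime)  # stable sort keeps name order on ties
    seen.update(p.name for p in new_files)
    return new_files


def watch(watch_dir, handle, interval_s: float = 5.0, max_polls=None):
    """Poll `watch_dir` every `interval_s` seconds and pass each new file to `handle`."""
    seen = set()
    polls = 0
    while max_polls is None or polls < max_polls:
        for path in poll_new_files(watch_dir, seen):
            handle(path)
        polls += 1
        time.sleep(interval_s)


# Usage with a throwaway directory and placeholder file contents.
with tempfile.TemporaryDirectory() as d:
    (Path(d) / "point_001.xy").write_text("20.0 115\n")
    (Path(d) / "point_002.xy").write_text("20.0 98\n")
    seen = set()
    first = sorted(p.name for p in poll_new_files(d, seen))
    second = [p.name for p in poll_new_files(d, seen)]
    (Path(d) / "point_003.xy").write_text("20.0 102\n")
    third = [p.name for p in poll_new_files(d, seen)]
```

In practice, the instrument side should write each file under a temporary name and rename it when complete. Otherwise the poller may pick up a half-written spectrum.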

Step 2: Data Cleaning and Preprocessing Automation

Step 3: Implement Intelligent Data Selection & Processing Logic

- Integrate one of two decision-making strategies into the workflow to process the initial data and provide feedback: [33]

- Regions-of-Interest (ROI) Strategy: The workflow identifies and focuses subsequent measurement counts only on the energy ranges (ROIs) known to contain relevant diffraction peaks (e.g., for ferrite and austenite phases). [33]

- Minimum Volume Strategy: The workflow analyzes the incoming data to determine the minimum amount of additional counting time needed to accurately characterize peak properties (position, height, area). [33]

- This logic is codified as a conditional step in the workflow automation tool (e.g., using an "if-then" rule in Xurrent). [34]


Step 4: Closed-Loop Experimentation and Termination

- The workflow's decision is fed back to the XRD instrument controller via an API, instructing it to either adjust the counting time, move to the next ROI, or terminate the measurement at the current point. [33]

- The final, refined dataset is automatically loaded into a data warehouse or analysis platform for further modeling and reporting. [31] [29]
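Steps 3 and 4 can be sketched together as a closed loop for one measurement point. The stopping rule here is chosen for illustration and is not the published method of [33]. It treats peak and background counts as Poisson and estimates the extra counting time needed for each ROI's net peak area to reach a target relative uncertainty. It then extends the count, moves to the next ROI, or terminates the point. `SimulatedDetector`, the ROI names and all count rates are hypothetical stand-ins for the instrument API and real spectra.

```python
import math
from dataclasses import dataclass


@dataclass
class Roi:
    name: str
    gross: float = 0.0       # counts in the peak window
    background: float = 0.0  # counts in an equal-width background window
    elapsed_s: float = 0.0


def extra_time_needed(roi: Roi, target_rel_unc: float) -> float:
    """Extra counting time (s) for the net peak area to reach `target_rel_unc`.

    Assumes Poisson counting and a background window of equal width counted for the
    same time, so Var(net) ~= gross + background and the count rates stay constant.
    """
    net_rate = (roi.gross - roi.background) / roi.elapsed_s
    if net_rate <= 0:
        return math.inf  # no detectable peak yet
    total_rate = (roi.gross + roi.background) / roi.elapsed_s
    required_s = total_rate / (net_rate ** 2 * target_rel_unc ** 2)
    return max(0.0, required_s - roi.elapsed_s)


class SimulatedDetector:
    """Hypothetical stand-in for the instrument API: returns expected counts for fixed rates."""

    def __init__(self, rates):
        self.rates = rates  # ROI name -> (gross counts/s, background counts/s)

    def count(self, roi_name: str, seconds: float):
        gross_rate, background_rate = self.rates[roi_name]
        return gross_rate * seconds, background_rate * seconds


def run_point(rois, detector, target_rel_unc=0.02, pilot_s=5.0, max_s_per_roi=600.0):
    """Closed loop for one measurement point: extend, move to the next ROI, or terminate."""
    actions = []
    for roi in rois:
        roi.gross, roi.background = detector.count(roi.name, pilot_s)  # short pilot count
        roi.elapsed_s = pilot_s
        while True:
            extra = extra_time_needed(roi, target_rel_unc)
            if extra <= 1e-9:  # target precision reached (tolerance absorbs float rounding)
                actions.append((roi.name, "next_roi"))
                break
            if roi.elapsed_s + extra > max_s_per_roi:  # no peak, or over the time budget
                actions.append((roi.name, "next_roi_unresolved"))
                break
            actions.append((roi.name, "extend"))
            gross, background = detector.count(roi.name, extra)
            roi.gross += gross
            roi.background += background
            roi.elapsed_s += extra
    actions.append(("point", "terminate"))
    return actions, sum(roi.elapsed_s for roi in rois)


detector = SimulatedDetector({"austenite_peak": (120.0, 20.0), "ferrite_peak": (520.0, 20.0)})
actions, total_s = run_point([Roi("austenite_peak"), Roi("ferrite_peak")], detector)
```

Under this rule, the required time per ROI is (gross rate + background rate) / (net rate^2 × target^2). With the rates above, the weak austenite peak therefore needs about 35 s and the strong ferrite peak about 5.4 s to reach 2% relative uncertainty. Counting only as long as each ROI actually needs is where the time saving comes from.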

4. Anticipated Results

This automated and adaptive workflow is expected to significantly reduce the total measurement time per point compared to a traditional sequential acquisition that measures the entire energy spectrum for a fixed, long duration, all without detrimental effects on data quality. [33]

Figure: Workflow Automation Diagram (Automated Characterization Workflow)

Research Reagent Solutions

The following table details key software and conceptual "reagents" essential for building the automated workflows described.

| Research Reagent / Solution | Function in the Automated Workflow |
|---|---|
| Workflow Automation Platform (e.g., Xurrent, Integrate.io) [34] [29] | The core orchestration engine that automates the multi-step process, connecting data ingestion, cleaning, and processing tasks based on predefined business rules (IF/THEN logic). [34] |
| Data Ingestion Tool (e.g., Apache Kafka, Integrate.io Connectors) [30] [29] | Acts as the "acquisition reagent," responsible for automatically collecting and transporting raw data from diverse sources (instruments, sensors) to a centralized storage system. [30] |
| AI-Powered Data Quality Monitor (e.g., DataBuck) [31] | Functions as a "quality control assay," using AI and machine learning to automatically validate, clean, and monitor the quality of ingested data in real time, flagging anomalies. [31] |
| Cloud Data Warehouse (e.g., Snowflake, BigQuery) [30] [29] | Serves as the "centralized storage buffer," providing a scalable repository for the cleaned and processed data, ready for downstream analysis and reporting. |
| Intelligent Data Selection Logic [33] | The core "analytical protocol" encoded into the workflow. It processes initial data to make adaptive decisions (e.g., ROI focus, minimum volume) that directly reduce experimental measurement time. [33] |

The traditional drug discovery paradigm is characterized by lengthy development cycles, prohibitive costs, and a high preclinical trial failure rate. The process from lead compound identification to regulatory approval typically spans over 12 years with cumulative expenditures exceeding $2.5 billion, and clinical trial success probabilities decline precipitously to an overall rate of merely 8.1% [35]. Artificial Intelligence (AI) has emerged as a transformative force to address these persistent inefficiencies. A core promise of AI is its capacity to drastically reduce data collection times in characterization workflows, compressing discovery timelines that traditionally required years into months [36]. This technical support center is designed to help researchers and scientists navigate the practical implementation of AI tools to achieve these accelerations, specifically in the critical phases of target identification and lead optimization.

AI Troubleshooting Guide: Common Challenges and Solutions

Integrating AI into established wet-lab workflows presents a unique set of challenges. This guide addresses the most frequent issues encountered by researchers.

FAQ: Our AI model for predicting bioactivity performs well on training data but poorly on new, external compounds. What could be the cause?

- Answer: This is a classic sign of overfitting or data quality issues.

- Solution A (Data Quality): Ensure your training data is curated and standardized. AI models are sensitive to "batch effects" from variations in lab protocols, which can mislead the algorithm [36]. Implement automated data cleaning pipelines that use natural language processing (NLP) and pattern recognition to detect and correct inconsistent entries or outliers [37] [38].

- Solution B (Model Generalizability): Employ a more diverse training set that covers a broader chemical space. Use cross-validation during development, and evaluate the final model on a held-out test set that is never used for training or hyperparameter tuning. Consider using foundation models pre-trained on vast, public chemical databases that can be fine-tuned for your specific task, which can improve generalization [36].

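A random split can give an optimistic picture of performance on external compounds, because close analogs of each test compound remain in the training set. Below is a minimal sketch of series-aware cross-validation. The series labels are hypothetical; in practice they could come from, for example, Bemis-Murcko scaffolds. For production use, scikit-learn's `GroupKFold` implements the same idea.

```python
def group_kfold(series_labels, k=3):
    """Yield (train_idx, test_idx) so that every chemical series lands in exactly one test fold.

    series_labels[i] is the (hypothetical) series or scaffold ID of compound i. Series are
    assigned to folds round-robin in sorted order, which ignores series sizes.
    """
    series = sorted(set(series_labels))
    if k > len(series):
        raise ValueError("k cannot exceed the number of distinct series")
    fold_of = {s: i % k for i, s in enumerate(series)}
    for fold in range(k):
        test_idx = [i for i, s in enumerate(series_labels) if fold_of[s] == fold]
        train_idx = [i for i, s in enumerate(series_labels) if fold_of[s] != fold]
        yield train_idx, test_idx


labels = ["A", "A", "B", "B", "B", "C", "D", "D", "E"]
splits = list(group_kfold(labels, k=3))
```

No series ever appears on both sides of a split. The resulting scores are therefore a fairer proxy for how the model will behave on chemistry it has not seen.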

FAQ: How can we trust an AI-generated "hit" when the model's decision-making process is a "black box"?

- Answer: Model interpretability is crucial for building trust with scientists and for regulatory compliance.

- Solution A (Transparent Workflows): Utilize platforms and tools that offer completely open workflows, allowing you to verify every input and output. This transparency ensures that insights are explainable and reproducible, which is essential for building confidence in the results [3].

- Solution B (Explainable AI Techniques): Integrate methods such as SHAP (SHapley Additive exPlanations) or LIME (Local Interpretable Model-agnostic Explanations) to highlight which molecular features or substructures the model deemed important for its prediction. This provides a rationale for prioritizing a compound for synthesis and testing [39].

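SHAP builds on Shapley values from cooperative game theory. For a model with only a few inputs, these values can be computed exactly, which makes the idea easy to inspect. The sketch below does so by brute force. The toy model and its descriptor names are hypothetical. Replacing absent features with a fixed baseline is one of several possible conventions.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, x, baseline):
    """Exact Shapley attributions for one prediction.

    Features outside a coalition are replaced by their baseline value. Every coalition is
    enumerated, so the cost grows as 2^n; SHAP-style tools approximate this for real models.
    """
    n = len(x)

    def value(coalition):
        return predict([x[i] if i in coalition else baseline[i] for i in range(n)])

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


# Hypothetical bioactivity model over three descriptors: [logP, aromatic_rings, h_bond_donors].
def toy_model(z):
    return 0.5 + 2.0 * z[0] - 1.0 * z[1] + 0.3 * z[0] * z[2]


x = [1.0, 2.0, 1.0]
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(toy_model, x, baseline)
```

The attributions sum to the difference between the prediction for `x` and the prediction for the baseline. The interaction term `0.3 * z[0] * z[2]` is split equally between features 0 and 2, giving values of about 2.15, -2.0 and 0.15.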

FAQ: Our AI and automation systems are generating data, but it remains siloed and we cannot get a unified view for analysis.

- Answer: This is a common data integration problem that blocks the derivation of insight.

- Solution A (Unified Data Platforms): Invest in a unified digital R&D platform that connects data from instruments, assays, and computational tools into a single analytical framework. This breaks down silos and allows AI to be applied to meaningful, well-structured information [3].

- Solution B (Federated Learning): For sensitive or distributed data, consider a federated learning approach. Instead of moving all data to a central repository, bring the AI to the data. Models are trained across multiple institutions without the underlying data ever leaving its secure source, thus respecting privacy while unlocking collective intelligence [36].

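Many federated learning systems are built around federated averaging (FedAvg). Each site trains on its own data and sends only model parameters to a coordinator, which averages them weighted by site size. The sketch below uses a linear model and plain Python for clarity. The site data are hypothetical. Real deployments add secure aggregation, authentication and privacy protections not shown here.

```python
def local_update(weights, data, lr=0.1, epochs=1):
    """Full-batch gradient descent on mean squared error for a linear model y ~ w . x.

    Runs at the data owner's site; only the updated weights leave the site.
    """
    w = list(weights)
    n = len(data)
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for x, y in data:
            err = sum(wi * xi for wi, xi in zip(w, x)) - y
            for j, xj in enumerate(x):
                grad[j] += 2.0 * err * xj / n
        w = [wi - lr * gj for wi, gj in zip(w, grad)]
    return w


def fed_avg(site_weights, site_sizes):
    """Average parameter vectors, weighting each site by its number of records."""
    total = sum(site_sizes)
    dim = len(site_weights[0])
    return [sum(w[j] * n for w, n in zip(site_weights, site_sizes)) / total for j in range(dim)]


def train_federated(sites, dim, rounds=100, lr=0.1):
    global_w = [0.0] * dim
    for _ in range(rounds):
        updates = [local_update(global_w, data, lr) for data in sites]  # local training
        global_w = fed_avg(updates, [len(data) for data in sites])      # server aggregation
    return global_w


# Hypothetical private datasets at two institutions, generated from y = 2*x0 - 1*x1.
site_a = [([1.0, 0.0], 2.0), ([0.0, 1.0], -1.0)]
site_b = [([1.0, 1.0], 1.0), ([2.0, 0.0], 4.0), ([0.0, 2.0], -2.0)]
w = train_federated([site_a, site_b], dim=2)
```

Each site takes one full-batch gradient step per round, so the size-weighted average equals one gradient step on the pooled data. The global weights therefore approach the values that generated the toy data (2.0 and -1.0), even though no raw records leave either site.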

FAQ: Our AI-designed molecules are theoretically promising but are difficult or impossible to synthesize in the lab.

- Answer: This is a failure in accounting for synthetic feasibility.

- Solution: Integrate synthetic accessibility scores directly into your AI's reward function. During the de novo molecular generation process, use reinforcement learning (RL) algorithms where the agent is rewarded not only for high potency and good ADMET properties but also for proposing structures that are readily synthesizable. This ensures that the output of the digital workflow is a practical input for the medicinal chemistry lab [39].

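The reward shaping described above can be sketched as follows. The sketch assumes a synthetic accessibility (SA) score on the 1 (easy) to 10 (hard) scale used by the Ertl and Schuffenhauer SA score. It also assumes potency and ADMET predictions already scaled to [0, 1]. The weights and the hard gate at 6.0 are hypothetical tuning choices, not recommendations.

```python
def sa_to_unit(sa_score: float) -> float:
    """Map an SA score (1 = easy, 10 = hard) onto [0, 1], where 1 means easiest to make."""
    clipped = min(max(sa_score, 1.0), 10.0)
    return (10.0 - clipped) / 9.0


def reward(potency: float, admet: float, sa_score: float,
           weights=(0.5, 0.3, 0.2), sa_gate: float = 6.0) -> float:
    """Scalar reward for an RL molecule generator.

    potency and admet are assumed to be model predictions already scaled to [0, 1].
    Molecules above the SA gate earn nothing, so the agent cannot trade
    synthesizability away for predicted potency.
    """
    if sa_score > sa_gate:
        return 0.0
    w_potency, w_admet, w_sa = weights
    return w_potency * potency + w_admet * admet + w_sa * sa_to_unit(sa_score)


easy = reward(potency=0.8, admet=0.6, sa_score=2.5)
hard = reward(potency=0.95, admet=0.9, sa_score=7.2)
```

The gate and the weighted term play different roles. The gate removes any incentive to propose structures that are very hard to make. The weighted term still prefers easier syntheses among acceptable candidates.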

Experimental Protocols: Implementing AI in Your Workflow

Below are detailed methodologies for key experiments that leverage AI to accelerate characterization.

Protocol for AI-Driven Target Identification

This protocol uses multi-omics data to identify and prioritize novel therapeutic targets for a specific disease.

- Objective: To systematically identify and validate a novel disease-associated target using AI analysis of integrated multimodal data.

- Materials & Data Inputs:

- Genomic data (e.g., from large-scale biobanks such as UK Biobank, or from in-house sequencing).

- Transcriptomic data (e.g., RNA-seq from diseased vs. healthy tissues).

- Proteomic data.

- Clinical records and published literature (text data).

- Methodology:

- Data Ingestion and Harmonization: Collect and clean data from all sources. Use AI-powered tools to automatically standardize formats, correct inconsistencies, and annotate metadata [37] [38]. This step is critical for data quality.

- Multi-Omics Integration: Load the harmonized data into an AI platform (e.g., Sonrai Discovery, BenevolentAI platform) capable of multi-modal data integration. The platform will layer these datasets to uncover links between molecular features and disease mechanisms [3].

- Knowledge Graph Mining: Use Natural Language Processing (NLP) to read and extract relationships from millions of research papers, patents, and clinical notes. Build or query a knowledge graph to uncover hidden connections between genes, proteins, and diseases [35] [36]. A prominent example is BenevolentAI's identification of baricitinib as a COVID-19 treatment through literature mining [36].

- Target Prioritization: The AI algorithm will analyze the integrated data and knowledge graph to output a ranked list of potential targets based on criteria like genetic association with the disease, druggability, and novelty.

- Validation: Perform in vitro knockdown or knockout experiments (e.g., using CRISPR) in relevant cell models to confirm that modulation of the top-predicted target produces the desired phenotypic effect.
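The prioritization step can be illustrated with a simple weighted score. The criteria mirror those listed above. The weights, the association cut-off and the gene names are all hypothetical, as is the assumption that each criterion is already normalized to [0, 1]. A real platform would derive these inputs from the integrated data and knowledge graph.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    gene: str
    genetic_association: float  # assumed normalized to [0, 1]
    druggability: float         # assumed normalized to [0, 1]
    novelty: float              # assumed normalized to [0, 1]; higher = less studied


WEIGHTS = {"genetic_association": 0.5, "druggability": 0.3, "novelty": 0.2}


def score(candidate: Candidate) -> float:
    return sum(weight * getattr(candidate, name) for name, weight in WEIGHTS.items())


def prioritize(candidates, min_association: float = 0.2):
    """Drop weakly associated genes, then rank by weighted score (ties broken by name)."""
    eligible = [c for c in candidates if c.genetic_association >= min_association]
    return sorted(eligible, key=lambda c: (-score(c), c.gene))


candidates = [
    Candidate("GENE_A", 0.9, 0.4, 0.3),
    Candidate("GENE_B", 0.6, 0.9, 0.5),
    Candidate("GENE_C", 0.1, 1.0, 1.0),
    Candidate("GENE_D", 0.7, 0.7, 0.2),
]
ranked = [c.gene for c in prioritize(candidates)]
```

Without the association cut-off, a weighted sum would let a highly druggable but weakly supported gene (GENE_C) compete on druggability and novelty alone. The cut-off removes it before ranking.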

Protocol for AI-Enabled Lead Optimization

This protocol outlines the iterative "design-make-test-analyze" cycle accelerated by AI for optimizing a lead compound.

- Objective: To optimize a lead compound for potency, selectivity, and ADMET properties using a closed-loop AI-driven workflow.

- Materials & Data Inputs:

- Initial lead compound(s) and their bioactivity data.

- High-throughput screening (HTS) data or historical assay data.

- Structural information of the target (if available, e.g., from crystallography or AlphaFold2).

- Methodology:

- Molecular Representation: Represent the lead compound and proposed analogs as graphs (atoms as nodes, bonds as edges) or SMILES strings for the AI model.

- Generative Molecular Design: Use a generative AI model (e.g., a reinforcement learning (RL) agent or a generative adversarial network (GAN)) to propose new molecular structures. The model is trained or rewarded to retain core activity while optimizing specific properties such as binding affinity, solubility, or reduced off-target interactions [40] [39].

- In Silico Property Prediction: Before synthesis, screen the AI-generated molecules using predictive ML models for key ADMET properties (e.g., hepatotoxicity, metabolic stability, permeability) [35] [36] [41]. This computationally flags molecules with poor predicted profiles.