A Comparative Guide to Material Property Prediction Algorithms: From Fundamentals to Biomedical Applications

Abstract

This article provides a comprehensive comparison of machine learning algorithms for predicting material properties, tailored for researchers and professionals in drug development and materials science. It explores the foundational principles of material representation, from compositional and structural descriptors to emerging universal frameworks. The review methodically compares diverse algorithmic approaches, including graph neural networks, random forests, and novel deep learning architectures, addressing critical challenges like dataset redundancy, model generalizability, and integration of physical constraints. By synthesizing performance validation metrics and offering forward-looking perspectives, this guide aims to equip scientists with the knowledge to select, optimize, and validate predictive models for accelerated material discovery and biomedical innovation.

Foundations of Material Property Prediction: Core Concepts and Data Representations

In the data-driven landscape of modern materials science, material descriptors—quantifiable representations of a material's composition, structure, and electronic properties—serve as the foundational bridge between raw data and predictive insight. These descriptors are the critical input variables that machine learning (ML) and deep learning (DL) algorithms use to establish structure-property relationships, enabling the rapid prediction of material behavior without recourse to costly and time-consuming experiments or simulations. The careful selection and engineering of these descriptors directly determine the accuracy, transferability, and physical interpretability of predictive models. This guide provides a comparative analysis of how different classes of descriptors perform in predicting key material properties, detailing the experimental protocols behind their evaluation and offering a toolkit for researchers navigating this complex field.

The importance of descriptors is underscored by the fundamental challenge in materials informatics: mapping a material's intricate atomic-scale reality to its macroscopic properties. While early models relied on simple compositional features, recent advances have embraced more sophisticated descriptors derived from geometric and electronic structure. Notably, the electronic charge density has emerged as a powerful, universal descriptor because it uniquely determines all ground-state electronic properties of a material, as established by the Hohenberg-Kohn theorem [1]. This progression from simple to complex descriptors reflects the community's ongoing effort to balance computational efficiency with predictive accuracy and physical fidelity.

Comparative Analysis of Material Descriptor Performance

The performance of a property prediction model is highly dependent on the type of descriptor used. The table below summarizes the predictive accuracy of various descriptor classes for different material properties, as reported in recent literature.

Table 1: Performance Comparison of Material Descriptor Types for Property Prediction

| Descriptor Category | Specific Descriptor Type | Target Property | Best Model | Performance (Metric / Value) | Key Advantage |
|---|---|---|---|---|---|
| Electronic Structure | Electronic Density of States (DOS) [2] | Chemisorption Energy | Principal Component Analysis (PCA) | Accurate, interpretable models [2] | Clarifies surface effect on adsorption |
| Electronic Structure | Electronic Charge Density [1] | Multiple Properties (8) | MSA-3DCNN (Multi-task) | Average R² = 0.78 [1] | Universal descriptor; high transferability |
| Compositional & Empirical | Hybrid Features (Vectorized Properties & Electronegativity) [3] | Band Gap (2D Materials) | Extreme Gradient Boosting | R² = 0.95, MAE = 0.16 eV [3] | Low computational cost |
| Compositional & Empirical | Hybrid Features (Vectorized Properties & Electronegativity) [3] | Work Function (2D Materials) | Extreme Gradient Boosting | R² = 0.98, MAE = 0.10 eV [3] | Low computational cost |
| Atomic Structure | Pair Distribution Function (PDF) & Element Embeddings [4] | Electronic Density of States (eDOS) | Element Embeddings Model (EEM) | Competitively low MAE [4] | Flexible architecture; captures local environment |
| Compositional | Matminer Features [5] | Formation Energy, Band Gap | Various ML Models | Performance overestimated without redundancy control [5] | Highlights dataset redundancy risk |

Key Insights from Comparative Data

- Electronic Structure Descriptors Offer High Fidelity: Descriptors rooted in electronic structure, such as the density of states (DOS) and full electronic charge density, consistently enable high-accuracy predictions for diverse properties, from chemisorption energies to bulk moduli [2] [1]. Their principal advantage lies in their direct physical relationship with the quantum mechanical state of the material.

- Engineered Compositional Descriptors Can Be Highly Efficient: For specific properties, cleverly engineered compositional descriptors that mix vectorized atomic properties (e.g., covalent radius, polarizability) with empirical functions (e.g., electronegativity) can achieve exceptional accuracy (R² > 0.95) at a fraction of the computational cost of computing full electronic structures [3].

- The Critical Issue of Dataset Redundancy: Reported high accuracies (e.g., R² > 0.95) for composition-based models can be misleading if the dataset contains many highly similar materials. Without proper redundancy control, performance is overestimated, and the model's true extrapolation capability remains poor [5].
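To make the idea of an engineered compositional descriptor concrete, the sketch below computes a fraction-weighted mean and a range of Pauling electronegativity for a simple formula. It is only an illustration: the function names, the tiny lookup table, and the choice of two statistics are assumptions made here and are not the hybrid feature set of [3].

```python
import re
from collections import Counter

# Pauling electronegativities for a few elements (illustrative subset only).
ELECTRONEGATIVITY = {"Sr": 0.95, "Ba": 0.89, "Ti": 1.54, "O": 3.44}

def parse_formula(formula):
    """Return {element: atomic fraction} for a simple formula without parentheses."""
    counts = Counter()
    for symbol, amount in re.findall(r"([A-Z][a-z]?)(\d*\.?\d*)", formula):
        counts[symbol] += float(amount) if amount else 1.0
    total = sum(counts.values())
    return {element: n / total for element, n in counts.items()}

def composition_features(formula, table=ELECTRONEGATIVITY):
    """Fraction-weighted mean and max-min range of one elemental property."""
    fractions = parse_formula(formula)
    values = [table[element] for element in fractions]
    mean = sum(fractions[element] * table[element] for element in fractions)
    return {"mean": mean, "range": max(values) - min(values)}

features = composition_features("SrTiO3")
print(features)
```

Features of this kind need no structure calculation, which is where the low cost comes from; the same pattern extends to other tabulated atomic properties such as covalent radius or polarizability.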

Experimental Protocols for Descriptor Evaluation

The rigorous benchmarking of descriptors requires standardized workflows and independent tests to ensure their reported performance is meaningful and reproducible.

Workflow for Universal Charge Density Descriptor

A landmark approach used electronic charge density as a single, universal descriptor for predicting eight different ground-state properties [1]. The protocol was as follows:

- Data Curation: A large dataset of electronic charge density data was curated from the Materials Project. These data are stored as 3D matrices in CHGCAR files.

- Data Standardization: A major challenge was the variable dimension of the 3D charge density data across different materials. This was solved by converting the 3D matrices into a series of 2D image snapshots along the z-direction, with a customized interpolation scheme to handle dimensional variations.

- Model Training: A Multi-Scale Attention-Based 3D Convolutional Neural Network (MSA-3DCNN) was employed to extract features from the image-formatted charge density data. The model was trained in both single-task (predicting one property) and multi-task (predicting all eight properties simultaneously) settings.

- Validation: Model performance was evaluated using the R² metric on hold-out test sets. The multi-task model achieved a significantly higher average R² (0.78) than the single-task models (0.66), demonstrating enhanced learning and transferability [1].
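The standardization step can be sketched as follows. This is a minimal illustration, assuming plain separable linear interpolation with NumPy; the customized interpolation scheme of [1] is not described in enough detail here to reproduce, and the function names and the target shape are hypothetical. Periodic boundary conditions are ignored for simplicity.

```python
import numpy as np

def resample_axis(volume, new_size, axis):
    """Linearly resample `volume` to `new_size` points along one axis."""
    old_pos = np.linspace(0.0, 1.0, volume.shape[axis])
    new_pos = np.linspace(0.0, 1.0, new_size)
    return np.apply_along_axis(
        lambda line: np.interp(new_pos, old_pos, line), axis, volume
    )

def standardize_grid(volume, shape=(32, 32, 32)):
    """Bring a 3D charge-density grid of any size to a fixed shape."""
    out = np.asarray(volume, dtype=float)
    for axis, size in enumerate(shape):
        out = resample_axis(out, size, axis)
    return out

def z_slices(volume):
    """Split a 3D grid into a list of 2D images along the z axis."""
    return [volume[:, :, k] for k in range(volume.shape[2])]

# Two materials with different native grid sizes end up with the same shape.
rng = np.random.default_rng(0)
grid_a = standardize_grid(rng.random((10, 12, 14)))
grid_b = standardize_grid(rng.random((20, 20, 48)))
print(grid_a.shape, grid_b.shape, len(z_slices(grid_a)))
```

A fixed output shape is what lets a single convolutional network consume grids from cells of different sizes; the cost is that the physical spacing between grid points is no longer the same across materials.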

Protocol for Evaluating Dataset Redundancy

To address the critical issue of overestimated performance, the MD-HIT algorithm was developed to create redundancy-controlled benchmarks [5]. The methodology is:

- Problem Identification: Materials databases like the Materials Project contain many redundant (highly similar) materials due to historical "tinkering" in material design (e.g., slight doping of a base perovskite like SrTiO₃).

- Redundancy Control: The MD-HIT algorithm, inspired by CD-HIT in bioinformatics, processes a dataset to ensure no two samples have a similarity (in composition or crystal structure) greater than a user-defined threshold.

- Model Benchmarking: Machine learning models are trained and tested on both the original dataset and the redundancy-controlled dataset derived from it.

- Performance Analysis: The predictive performance (e.g., MAE, R²) on the redundancy-controlled test set is compared to the performance on a randomly split test set. A significant drop in performance on the controlled set indicates that the model's capability was overestimated and that it struggles to extrapolate to truly novel materials [5].
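The redundancy-control step can be illustrated with a greedy filter in the spirit of CD-HIT. This is a sketch under stated assumptions, not the MD-HIT implementation: the similarity measure (one minus half the L1 distance between fractional compositions), the function names, and the example compositions are all chosen here for illustration.

```python
def composition_similarity(a, b):
    """Similarity in [0, 1] from the L1 distance between fraction dicts."""
    elements = set(a) | set(b)
    l1 = sum(abs(a.get(el, 0.0) - b.get(el, 0.0)) for el in elements)
    return 1.0 - 0.5 * l1

def greedy_redundancy_filter(samples, threshold):
    """Keep a sample only if no already-kept sample exceeds the threshold.

    `samples` maps a label to a fractional-composition dict.
    """
    kept = []
    for label, composition in samples.items():
        if all(
            composition_similarity(composition, samples[k]) <= threshold
            for k in kept
        ):
            kept.append(label)
    return kept

samples = {
    "SrTiO3": {"Sr": 0.20, "Ti": 0.20, "O": 0.60},
    "Sr0.95La0.05TiO3": {"Sr": 0.19, "La": 0.01, "Ti": 0.20, "O": 0.60},
    "BaTiO3": {"Ba": 0.20, "Ti": 0.20, "O": 0.60},
    "NaCl": {"Na": 0.50, "Cl": 0.50},
}
kept = greedy_redundancy_filter(samples, threshold=0.95)
print(kept)  # the lightly doped SrTiO3 variant is dropped
```

Training and testing on `kept` rather than on the full set is what prevents near-duplicates of a training material from appearing in the test split.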

The following diagram illustrates the logical decision process for selecting an appropriate material descriptor based on the research objective and constraints.

The Scientist's Toolkit: Research Reagent Solutions

In the context of computational materials science, "research reagents" refer to the fundamental software, databases, and algorithms that form the backbone of descriptor-based prediction workflows. The table below details key resources.

Table 2: Essential Computational Tools for Descriptor-Based Materials Research

| Tool Name | Type | Primary Function | Relevance to Material Descriptors |
|---|---|---|---|
| JARVIS-Leaderboard [6] | Benchmarking Platform | Community-driven benchmarking for AI, electronic structure, and force-field methods. | Provides objective performance comparisons of different models/descriptors across hundreds of tasks. |
| Materials Project (MP) [1] [4] | Computational Database | Repository of computed properties for over 100,000 inorganic materials. | Primary source for obtaining crystal structures and pre-computed descriptor data (e.g., charge density, DOS). |
| MD-HIT [5] | Algorithm | Controls redundancy in material datasets by ensuring a similarity threshold. | Critical for creating robust train/test splits to obtain a true estimate of a model's predictive power. |
| C2DB [3] | Computational Database | Database of computed properties for 2D materials. | Curated source for structures and properties of 2D materials, used for training specialized models. |
| ChemDataExtractor [7] | Natural Language Processing Tool | Automatically extracts chemical information and properties from scientific literature. | Used to build specialized datasets by mining experimental property data linked to material structures. |

The strategic selection of material descriptors is not merely a preliminary step but a decisive factor in the success of computational materials design. As evidenced by the comparative data, electronic structure-based descriptors like charge density offer a universal and physically rigorous path to high accuracy across multiple properties. In contrast, intelligently engineered compositional descriptors provide a potent, low-cost alternative for targeted applications. However, the field must contend with the challenge of dataset redundancy, using tools like MD-HIT to ensure benchmarks reflect true extrapolation capability. Future progress will likely hinge on hybrid approaches that integrate the physical interpretability of electronic descriptors with the scalability of deep learning, all while adhering to rigorous benchmarking standards that foster reproducible and reliable materials discovery.

The accurate prediction of material properties is a cornerstone of modern materials science and drug development, enabling the rapid discovery and design of novel compounds. Central to this endeavor are two divergent computational philosophies: structure-based and structure-agnostic approaches. Structure-based methods rely on detailed three-dimensional atomic coordinates, often from databases or computational models, to predict properties using relationships derived from the spatial arrangement of atoms [8]. In contrast, structure-agnostic methods predict material properties using only the chemical composition or other readily available descriptors, bypassing the need for often costly and time-consuming atomic structure determination [9].

The choice between these paradigms involves significant trade-offs in data requirements, computational cost, accuracy, and practical applicability. This guide provides an objective comparison for researchers and scientists, framing the discussion within the broader thesis of comparing material property prediction algorithms. We summarize quantitative performance data, detail experimental protocols, and visualize key workflows to inform method selection for specific research scenarios.

Core Characteristics and Comparative Analysis

The following table summarizes the fundamental differences in data requirements, computational overhead, and primary applications of structure-agnostic and structure-based approaches.

Table 1: Core characteristics of structure-agnostic and structure-based approaches.

| Feature | Structure-Agnostic Approaches | Structure-Based Approaches |
|---|---|---|
| Primary Input | Elemental composition (stoichiometric formula) [9]; Experimentally accessible data like XRD patterns [10] | 3D atomic coordinates (crystal structure) [8] |
| Data Dependency | Lower barrier; uses composition or XRD, which are more readily available [10] | High barrier; depends on availability of relaxed crystal structures, which can be scarce [9] |
| Computational Cost | Generally lower; avoids expensive quantum-mechanical calculations [9] | High; often relies on Density Functional Theory (DFT) or molecular simulations, which are computationally expensive [8] |
| Typical Models | Roost [9], CrabNet [10], Composition-based transformers | Graph Neural Networks (GNNs) [9] [8], CGCNN [9], Crystal Graph Networks |
| Key Advantage | High-throughput screening of vast chemical spaces without structural information [9]; Direct application in experimental settings [10] | High accuracy for properties dependent on atomic arrangement (e.g., band gap, elastic moduli) [8]; Provides direct physical insight |
| Main Limitation | May lack accuracy for structure-sensitive properties; limited physical interpretability [9] | Computationally prohibitive for large-scale screening; impractical when structures are unknown [9] [10] |

Performance and Quantitative Comparison

Empirical studies directly comparing models from both paradigms reveal clear performance trade-offs across different material properties and data regimes. The following table summarizes key quantitative findings from recent research.

Table 2: Summary of experimental performance data from key studies.

| Study (Model) | Approach | Key Performance Metric | Result | Notable Finding |
|---|---|---|---|---|
| Pretraining Roost [9] | Structure-Agnostic | Mean Absolute Error (MAE) on formation energy (Perovskites dataset) | MAE: ~0.04 eV/atom (with pretraining) | Pretraining strategies (SSL, FL, MML) significantly improve data efficiency, especially on small datasets. |
| XxaCT-NN [10] | Structure-Agnostic (Multimodal) | Accuracy on various property prediction tasks | Outperformed unimodal baselines; achieved state-of-the-art results | Multimodal learning (Composition + XRD) scales favorably with dataset size, offering a path to foundation models without crystal structures. |
| GNN/CGCNN [9] [8] | Structure-Based | Accuracy on formation energy and band gap prediction (Materials Project) | High accuracy, often used as a benchmark | Accuracy comes at the cost of requiring relaxed crystal structures, which are expensive to generate. |

Experimental Protocols and Methodologies

Protocol for Structure-Agnostic Property Prediction

The following workflow outlines a typical methodology for structure-agnostic prediction using the Roost model, enhanced with pretraining strategies as described in the research [9].

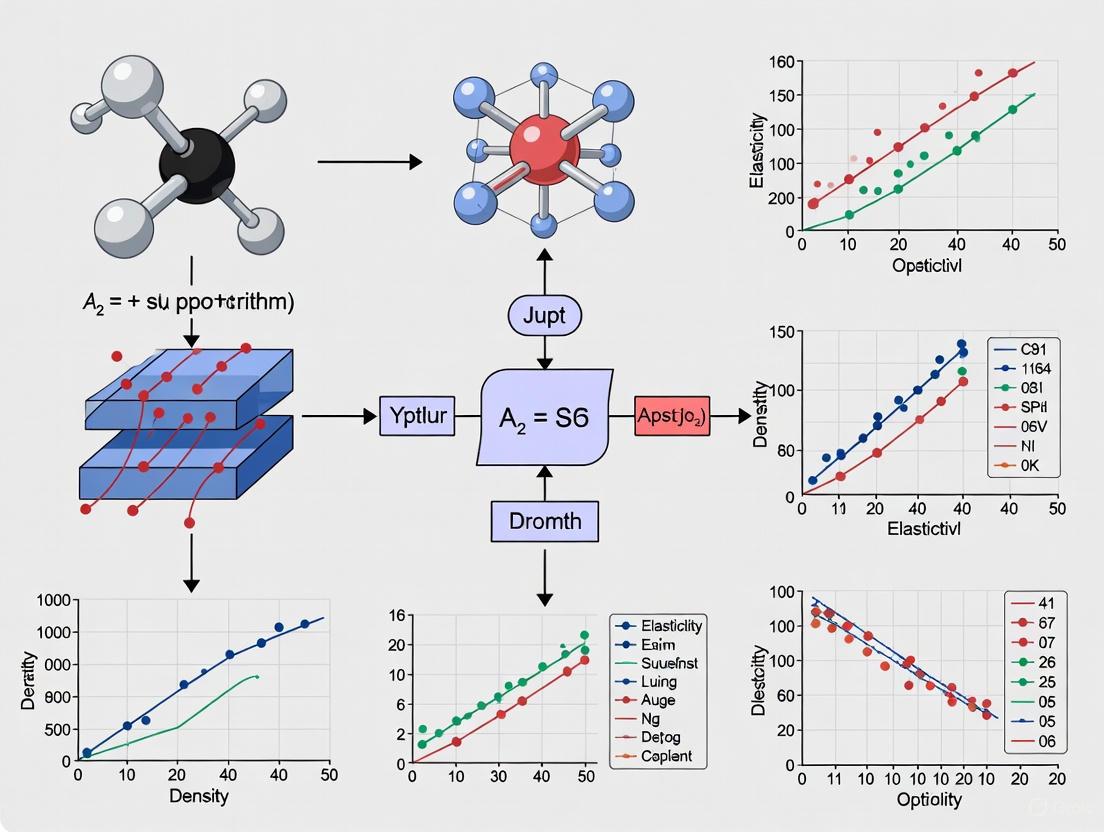

Figure 1: Workflow for structure-agnostic material property prediction. The core model is often enhanced through self-supervised, fingerprint, or multimodal learning pretraining on large unlabeled datasets [9].

Detailed Methodology

- Step 1: Input Representation and Graph Construction. The input is the chemical formula (e.g., SrTiO₃). A dense, fully connected weighted graph is constructed in which each node represents a unique element and the weights correspond to the fractional composition of each element. Initial node features are generated using pre-trained Matscholar embeddings, which are then multiplied by a learnable weight matrix [9].

- Step 2: Message Passing and Representation Learning. The model employs a message-passing framework to update node representations. For each node, it calculates attention coefficients between itself and all neighboring nodes. These coefficients are normalized using a weighted softmax function that incorporates the elemental fractions. Node features are updated through this process, often using skip connections to preserve information [9].

- Step 3: Readout and Prediction. The final step uses a weighted attention pooling mechanism to combine the updated node features into a single, fixed-length material representation. This representation is passed through a multilayer perceptron (MLP) to make the final property prediction [9].

- Step 4: Pretraining (Optional but Recommended). To boost performance, particularly on small datasets, the encoder can be pretrained using:

  - Self-Supervised Learning (SSL): Employing a framework like Barlow Twins, the model is trained to produce similar representations for different augmentations (e.g., random atom masking) of the same material, leveraging unlabeled data [9].

  - Fingerprint Learning (FL): The model is pretrained to predict handcrafted material fingerprints (e.g., Magpie), allowing it to retain the benefits of these expert-designed descriptors within a learnable framework [9].

  - Multimodal Learning (MML): The structure-agnostic encoder is trained to predict the embeddings generated by a pre-trained structure-based model, thereby learning to infer structural information from composition alone [9].
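The weighted softmax and attention pooling of Steps 2 and 3 can be sketched in a few lines. This is a minimal illustration, assuming the common form in which each exponential term is scaled by the elemental fraction; the function names are hypothetical, and the attention scores are fixed placeholder numbers here, whereas in a trained model they would come from learned networks.

```python
import math

def weighted_softmax(scores, weights):
    """Softmax in which each term is scaled by its elemental fraction."""
    shift = max(scores)  # subtract the max for numerical stability
    terms = [w * math.exp(s - shift) for s, w in zip(scores, weights)]
    total = sum(terms)
    return [t / total for t in terms]

def attention_pool(node_features, scores, weights):
    """Combine per-element feature vectors into one fixed-length vector."""
    coeffs = weighted_softmax(scores, weights)
    dim = len(node_features[0])
    return [
        sum(c * feat[d] for c, feat in zip(coeffs, node_features))
        for d in range(dim)
    ]

# SrTiO3: nodes are Sr, Ti, O with fractions 0.2, 0.2, 0.6.
fractions = [0.2, 0.2, 0.6]
features = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # placeholder embeddings
uniform = attention_pool(features, [0.0, 0.0, 0.0], fractions)
print(uniform)
```

With equal scores the pooled vector reduces to the fraction-weighted mean of the node features, so the stoichiometry always enters the representation even before the attention scores are learned.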

Protocol for Structure-Based Property Prediction

Structure-based methods typically use Graph Neural Networks (GNNs) to model materials. The following workflow generalizes the process for models like CGCNN (Crystal Graph Convolutional Neural Network) [8].

Figure 2: Workflow for structure-based material property prediction using graph neural networks. The atomic structure is directly encoded into a crystal graph [8].

Detailed Methodology

- Step 1: Crystal Graph Construction. The input is a crystal structure with atomic coordinates and lattice parameters. A crystal graph is constructed where atoms are treated as nodes. Edges are created between atoms based on their proximity (e.g., within a cutoff distance), capturing the bonding interactions and local coordination environments within the crystal [8].

- Step 2: Node and Edge Feature Initialization. Each atom node is assigned initial features that can include atomic number, atomic mass, valence, etc. Edges can be annotated with features such as interatomic distance and bond type, providing the model with geometric and chemical information [8].

- Step 3: Graph Convolution and Learning. The core of the model is a GNN that performs graph convolution operations. These operations allow nodes to aggregate information from their neighboring nodes, updating their own representations to capture both local chemical environments and long-range interactions in the crystal. Multiple layers enable the model to learn hierarchical material features [8].

- Step 4: Global Pooling and Prediction. After several convolutional layers, a global pooling step (e.g., mean pooling, attention pooling) combines all node representations into a single graph-level embedding that represents the entire crystal structure. This embedding is then passed to a fully connected network for the final property prediction [8].
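Steps 1 and 4 can be illustrated without any learned components. The sketch below builds cutoff-based edges under periodic boundary conditions and applies mean pooling; it is an assumption-laden illustration rather than the CGCNN code. The function names, the 4.0 Å two-atom cubic cell, and the 3.6 Å cutoff are hypothetical, and only the 27 nearest periodic images are searched.

```python
import itertools
import math

def build_crystal_graph(lattice, frac_coords, cutoff):
    """Return directed edges (i, j, distance) for atom pairs within `cutoff`.

    `lattice` holds three row vectors in angstrom and `frac_coords` holds
    fractional positions. Searching only the 27 nearest images assumes the
    cutoff is not larger than the cell.
    """
    def to_cartesian(frac):
        return [sum(frac[k] * lattice[k][d] for k in range(3)) for d in range(3)]

    edges = []
    for i, fi in enumerate(frac_coords):
        ri = to_cartesian(fi)
        for j, fj in enumerate(frac_coords):
            for shift in itertools.product((-1, 0, 1), repeat=3):
                if i == j and shift == (0, 0, 0):
                    continue  # skip the atom itself
                rj = to_cartesian([fj[k] + shift[k] for k in range(3)])
                dist = math.dist(ri, rj)
                if dist <= cutoff:
                    edges.append((i, j, dist))
    return edges

def mean_pool(node_vectors):
    """Average node vectors into a single graph-level vector."""
    n = len(node_vectors)
    return [sum(v[d] for v in node_vectors) / n for d in range(len(node_vectors[0]))]

# Two-atom cubic cell (CsCl-type geometry) with a hypothetical a = 4.0 angstrom.
lattice = [[4.0, 0.0, 0.0], [0.0, 4.0, 0.0], [0.0, 0.0, 4.0]]
positions = [[0.0, 0.0, 0.0], [0.5, 0.5, 0.5]]
edges = build_crystal_graph(lattice, positions, cutoff=3.6)
print(len(edges), round(edges[0][2], 4))
```

Including periodic images is the detail that distinguishes a crystal graph from a molecular graph: each atom in this cell has eight neighbours of the other species even though the cell lists only two atoms.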

Successful implementation of the discussed approaches relies on key software tools, datasets, and algorithms. The following table details these essential "research reagents."

Table 3: Key resources for material property prediction research.

| Resource Name | Type | Function/Purpose | Relevance |
|---|---|---|---|
| Roost [9] | Algorithm/Software | A structure-agnostic model that uses message-passing on stoichiometric formulas to predict material properties. | Core model for composition-based prediction. |
| CrabNet [10] | Algorithm/Software | A structure-agnostic model based on a transformer architecture that uses composition as input. | Core model for composition-based prediction. |
| CGCNN [9] [8] | Algorithm/Software | A structure-based model that constructs crystal graphs from atomic structures for property prediction. | Benchmark model for structure-based prediction. |
| Materials Project [9] [8] | Database | A vast repository of computed crystal structures and properties for known and predicted materials. | Primary source of data for training and testing structure-based models. |
| Alexandria Dataset [10] | Database | A large-scale dataset (5+ million samples) integrating composition, structure, and XRD data. | Used for pretraining large-scale, multimodal, structure-agnostic models. |
| Matbench [9] | Benchmarking Suite | A curated collection of datasets and tasks for standardized evaluation of ML models in materials science. | For fair and reproducible benchmarking of model performance. |
| Matscholar Embeddings [9] | Data/Algorithm | Pre-trained word embeddings for materials science, capturing semantic relationships between elements and terms. | Used to initialize element features in structure-agnostic models like Roost. |
| Barlow Twins Framework [9] | Algorithm | A self-supervised learning method that learns useful representations by maximizing the similarity of two augmented views of the same data. | Used for pretraining encoders without labeled data. |

Structure-agnostic and structure-based approaches for material property prediction offer complementary strengths, making them suited for different phases of the research pipeline. Structure-based methods remain the gold standard for accuracy when detailed atomic structures are available and computational cost is not prohibitive. Conversely, structure-agnostic methods provide unparalleled efficiency and practicality for high-throughput screening and situations where structural data is absent.

The emerging trend of multimodal learning, which integrates composition with experimentally accessible data like XRD patterns, is a promising direction that mitigates the limitations of both paradigms [10]. Furthermore, techniques like self-supervised pretraining are dramatically improving the data efficiency of structure-agnostic models, narrowing the performance gap with their structure-based counterparts [9]. The choice between these approaches ultimately depends on the specific research question, available data, and computational resources, but the ongoing integration of their best elements points toward a more powerful and unified future for materials informatics.

In the field of materials informatics, the quest for a universal descriptor that can accurately predict a wide range of material properties has long been a primary research objective. According to the foundational Hohenberg-Kohn theorem of density functional theory (DFT), the ground-state electron charge density ρ(r) of a material uniquely determines all its other ground-state properties [11] [12]. This theoretical principle establishes electronic charge density as a fundamentally complete descriptor, containing all necessary information about a material's quantum mechanical state without requiring additional parameters or approximations. Unlike empirically derived descriptors that may only correlate with specific properties, electronic charge density enjoys a rigorous theoretical foundation that directly links it to the entire spectrum of material behaviors, from electronic and thermal to mechanical and optical characteristics.
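In standard DFT notation (the symbols below are textbook conventions, not taken from the references cited here), the theorem says that for a non-degenerate ground state the density fixes the external potential up to a constant, and therefore the Hamiltonian, the ground-state wavefunction, and every ground-state expectation value. The second line gives the associated variational principle, with $F[\rho]$ the universal functional.

```latex
\rho_0(\mathbf{r}) \;\longmapsto\; v_{\mathrm{ext}}(\mathbf{r}) + \mathrm{const}
\;\longmapsto\; \hat{H} \;\longmapsto\; \Psi_0
\;\longmapsto\; \langle \Psi_0 | \hat{O} | \Psi_0 \rangle

E[\rho] = F[\rho] + \int v_{\mathrm{ext}}(\mathbf{r})\,\rho(\mathbf{r})\,\mathrm{d}\mathbf{r},
\qquad E_0 = \min_{\rho} E[\rho]
```

The theorem guarantees that the mapping from density to properties exists; it does not give its form, which is the gap a learned model is asked to fill.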

The significance of this theorem for machine learning in materials science is profound. It suggests that if a machine learning model can accurately learn the mapping from atomic structure to electron charge density, or directly use charge density as an input descriptor, it could in principle predict any ground-state property of interest [1]. This approach bypasses the need for property-specific feature engineering, instead relying on a single, physically rigorous representation of the material. Recent research has begun to capitalize on this theoretical insight, exploring how electronic charge density can serve as a universal descriptor within machine learning frameworks to achieve unprecedented transferability across diverse property prediction tasks [1] [12].

Comparative Analysis of Charge-Density-Based Machine Learning Approaches

Algorithmic Landscape and Performance Metrics

The application of electronic charge density in machine learning has evolved along several methodological pathways, each with distinct advantages and limitations. Researchers have developed various architectures to handle the complex, three-dimensional nature of charge density data while maintaining the physical symmetries inherent to atomic systems.

Table 1: Comparison of Machine Learning Approaches Utilizing Electronic Charge Density

| Model/Approach | Input Representation | Key Innovations | Applicable System Sizes | Reported Performance |
|---|---|---|---|---|
| Δ-SAED [13] | Atomic structure → Difference charge density | Uses difference from atomic superposition; improves transferability | Small to medium molecules & crystals | >90% structures show accuracy gain |
| Universal MSA-3DCNN [1] | 3D charge density images | Multi-scale attention; interpolation for dimension uniformity | Diverse materials (dataset: Materials Project) | R²: Single-task: 0.66 avg; Multi-task: 0.78 avg |
| ChargE3Net [12] | Atomic species & positions | Higher-order equivariant features (SO(3) irreps) | Up to 10,000+ atoms | 26.7% reduction in SCF iterations on MP data |
| Grid-Based GNNs [12] | Discretized 3D grid points | Basis-set agnostic; natural compatibility with DFT codes | Limited by grid resolution | Lower accuracy vs. equivariant methods |
| Local Basis Expansion [12] | Atomic orbital basis coefficients | Computational efficiency | Restricted to trained basis sets | Limited generalizability across materials |

Performance Benchmarks Across Material Properties

Quantitative evaluation of charge-density-based models reveals their capability to predict diverse material properties with varying degrees of accuracy. The universal descriptor approach demonstrates particular strength in multi-task learning environments, where exposure to multiple property targets during training enhances overall model performance.

Table 2: Property Prediction Performance of Universal Charge Density Models

| Target Property | Model | Dataset | Performance Metric | Result |
|---|---|---|---|---|
| Multiple Properties | Universal MSA-3DCNN (Single-task) | Materials Project (8 properties) | Average R² across properties | 0.66 |
| Multiple Properties | Universal MSA-3DCNN (Multi-task) | Materials Project (8 properties) | Average R² across properties | 0.78 |
| DFT Initialization | ChargE3Net | Materials Project (100K+ materials) | Reduction in SCF iterations | 26.7% |
| DFT Initialization | ChargE3Net | GNoME materials | Reduction in SCF iterations | 28.6% |
| Non-SCF Properties | ChargE3Net | Diverse materials | Accuracy vs DFT | Near-DFT performance |

Experimental Protocols and Methodologies

Data Acquisition and Preprocessing Standards

The development of robust machine learning models for charge density prediction requires meticulous data collection and standardization procedures. High-quality datasets derived from density functional theory calculations serve as the foundation for training and evaluation. The ECD-cubic database, for instance, contains 17,418 cubic inorganic materials with calculated charge density data [11], while the larger ECD dataset provides 140,646 charge densities calculated using the Perdew-Burke-Ernzerhof (PBE) functional, of which a subset of 7,147 geometries also has higher-precision data calculated with the Heyd-Scuseria-Ernzerhof (HSE) functional [14]. These datasets are curated from established sources like the Materials Project database, which provides atomic species, positions, and structural information for thousands of inorganic compounds.

Data preprocessing presents significant challenges due to the variable dimensions of charge density data across different materials. As Wang et al. note, "the dimensions are directly connected to the lattice parameters in Cartesian coordinates," making the data material-dependent and impossible to pre-align without potentially losing computational accuracy [1]. To address this, researchers have developed innovative standardization approaches, including converting 3D matrix data into image representations and implementing carefully designed interpolation schemes to create uniform dimensions across different materials while preserving critical information content [1].

Model Architectures and Training Protocols

The ChargE3Net framework exemplifies the advanced architectural approaches being developed for charge density prediction. This model employs higher-order equivariant neural networks that respect the physical symmetries of atomic systems. The architecture utilizes irreducible representations (irreps) of SO(3) with rotation orders up to L=4, enabling the network to capture complex angular variations in electron density [12]. The model operates by introducing probe points at locations where charge densities are to be predicted, then using equivariant tensor products with Clebsch-Gordan coefficients to combine representations while maintaining rotational equivariance [12].

Training protocols for these models typically employ a combination of mean absolute error (MAE) or mean squared error (MSE) losses between predicted and DFT-calculated charge densities. For the universal property prediction model described by Wang et al., both single-task and multi-task learning approaches are implemented, with the multi-task approach demonstrating significantly enhanced performance (average R² of 0.78 vs 0.66 for single-task) [1]. Transfer learning techniques have also proven valuable, particularly when fine-tuning models pretrained on large-scale PBE data with smaller sets of high-precision HSE functional data [14].

Visualization of Methodologies and Workflows

Universal Charge Density Prediction Framework

ChargE3Net Model Architecture

Research Reagents and Computational Tools

The experimental implementation of charge-density-based machine learning requires specific computational tools and datasets. The table below details essential "research reagents" for working in this domain.

Table 3: Essential Research Reagents for Charge-Density-Based Machine Learning

| Resource Name | Type | Primary Function | Access/Reference |
|---|---|---|---|
| ECD Dataset [14] | Benchmark Dataset | Provides 140,646 PBE and 7,147 HSE charge densities for model training & evaluation | Open-sourced for community development |
| ECD-cubic Database [11] | Specialized Dataset | Contains 17,418 cubic inorganic materials with calculated ρ(r) for ML studies | Available for data-driven materials research |
| VASP Software | Simulation Tool | Performs DFT calculations to generate reference charge density data | Commercial/Academic license |
| Materials Project [11] [12] | Materials Database | Source of atomic structures and calculated properties for training data | Publicly accessible database |
| ChargE3Net Model [12] | Software Framework | Higher-order equivariant neural network for charge density prediction | Implementation details in reference |
| Matbench [15] | Benchmarking Suite | Standardized test suite for evaluating materials property prediction methods | Open-source benchmarking platform |

The experimental evidence compiled in this comparison guide indicates that electronic charge density serves as a powerful universal descriptor for materials property prediction across multiple benchmarks. The multi-task learning approach shows an 18% relative improvement in average R² (from 0.66 to 0.78) compared to single-task models [1], highlighting the transferability advantages of the universal descriptor paradigm. Furthermore, models like ChargE3Net achieve substantial computational efficiency gains, reducing self-consistent field iterations in DFT calculations by 26.7-28.6% [12], which translates to meaningful acceleration of materials screening workflows.
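The 18% figure quoted above is a relative gain in average R², not an absolute one. As a quick check from the two reported averages (the subscripts are labels introduced here for illustration):

```latex
\[
\frac{R^2_{\text{multi}} - R^2_{\text{single}}}{R^2_{\text{single}}}
  = \frac{0.78 - 0.66}{0.66} \approx 0.18
\]
```

In absolute terms the same change is 0.12 in average R².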

The comparative analysis reveals that higher-order equivariant architectures consistently outperform methods limited to scalar or vector representations, particularly for systems with complex angular variations in electron density [12]. While grid-based approaches offer natural compatibility with DFT codes, their computational demands present scalability challenges compared to more parameter-efficient graph neural network implementations. Future research directions should focus on enhancing model interpretability, expanding to dynamic and excited-state properties, and improving scalability for high-throughput materials discovery platforms. The growing availability of standardized charge density datasets and benchmarking suites will continue to drive innovation in this promising domain at the intersection of density functional theory and machine learning.

Understanding Dataset Redundancy and Its Impact on Model Performance

Dataset redundancy, a prevalent characteristic of large materials science databases, significantly influences the performance and generalizability of machine learning (ML) models for property prediction. This guide objectively compares the core methodologies and findings of two principal research approaches addressing this issue: the pruning and active learning framework and the similarity-based redundancy control algorithm (MD-HIT).

Experimental Data and Performance Comparison

The following tables synthesize quantitative data from key experiments, comparing model performance under different redundancy-handling conditions.

Table 1: In-Distribution (ID) Performance with Pruned Data for Formation Energy Prediction [16]

| Model | Dataset | Full Model RMSE (meV/atom) | Reduced Model (20% data) RMSE (meV/atom) | Relative RMSE Increase | % of Data Deemed Informative |

|---|---|---|---|---|---|

| Random Forests (RF) | JARVIS-2018 | ~56 | ~59 | <6% | 13% |

| XGBoost (XGB) | JARVIS-2018 | ~56 | ~62 | ~10% | 20-30% |

| ALIGNN | JARVIS-2018 | ~56 | ~60 (est.) | ~7% (est.) | 55% |
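The "Relative RMSE Increase" column can be reproduced from the two RMSE columns. The short sketch below does this with the approximate values from Table 1, treated as plain numbers; the function name is introduced here for illustration only.

```python
# RMSE values (meV/atom) copied from Table 1: (full model, reduced model at 20% data).
# The "~" approximations in the table are treated as exact numbers here.
table1_rmse = {
    "RF": (56.0, 59.0),
    "XGB": (56.0, 62.0),
    "ALIGNN": (56.0, 60.0),  # marked "est." in the table
}

def relative_increase(full_rmse, reduced_rmse):
    """Fractional RMSE increase of the reduced-data model over the full model."""
    return (reduced_rmse - full_rmse) / full_rmse

for model, (full, reduced) in table1_rmse.items():
    print(f"{model}: {100 * relative_increase(full, reduced):.1f}% increase")
```

The results (about 5.4%, 10.7%, and 7.1%) are consistent with the "<6%", "~10%", and "~7%" entries in the table.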

Table 2: Comparative Performance of Redundancy-Reduction Methods on Object Detection (AIRS Dataset) [17]

| Filtering Method | Basis of Method | mAP at 20% Data | mAP at 85% Data | Key Characteristic |

|---|---|---|---|---|

| RSS (Random Sub-sampling) | Baseline | 0.72 (est.) | 0.84 | Baseline performance |

| WTL_unc | Prediction Uncertainty | ~0.72 | - | Performed on par or worse than RSS |

| WTL_CS | Uncertainty + Diversity | 0.80 | - | Re-balanced dataset, better performance |

| WTL_pt | Pre-trained Model Similarity | - | 0.84 | Achieved max performance with 85% of data |

Table 3: Test Set Performance With and Without Redundancy Control (MD-HIT) [5]

| Prediction Task | Input Type | Model | Performance (Random Split) | Performance (MD-HIT Split) | Note |

|---|---|---|---|---|---|

| Formation Energy | Composition | - | Overestimated, high R² | Lower, more realistic R² | Better reflects true capability |

| Band Gap | Structure | - | Overestimated, high R² | Lower, more realistic R² | Better reflects true capability |

Detailed Experimental Protocols

The pruning and active learning framework [16] evaluates redundancy by measuring performance degradation as data is systematically removed.

- Data Splitting: The original dataset (S0) is first split into a training pool (90%) and a hold-out In-Distribution (ID) test set (10%). An Out-of-Distribution (OOD) test set is created using new materials from a more recent version of the database (S1).

- Pruning Algorithm:

  - The training pool is randomly split into two subsets, A and B.

  - A model is trained on subset A and used to predict the properties of samples in subset B.

  - Samples in B with the lowest prediction errors (e.g., Mean Absolute Error) are deemed redundant and pruned.

  - The remaining samples from A and B are merged and the process repeats iteratively, progressively reducing the dataset size.

- Model Training & Evaluation: Machine learning models (e.g., Random Forests, XGBoost, ALIGNN) are trained on these progressively smaller datasets. Their performance is evaluated on the ID test set, the unused pool data, and the OOD test set.

- Redundancy Quantification: A threshold (e.g., 10% relative increase in RMSE) defines the maximum acceptable performance degradation. The smallest dataset size that stays within this threshold determines the informative portion of the data; the rest is considered redundant.
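The pruning loop described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the implementation from [16]: a small k-nearest-neighbour regressor stands in for the real models (Random Forests, XGBoost, ALIGNN), and the names `knn_predict`, `prune_once`, `prune`, and the `drop_fraction` parameter are hypothetical.

```python
import random

def knn_predict(train, x, k=3):
    """Stand-in model: mean target of the k nearest training samples."""
    nearest = sorted(train, key=lambda s: sum((a - b) ** 2 for a, b in zip(s[0], x)))[:k]
    return sum(y for _, y in nearest) / len(nearest)

def prune_once(pool, n_drop, seed):
    """One round: train on A, score B, drop the n_drop best-predicted samples of B."""
    shuffled = pool[:]
    random.Random(seed).shuffle(shuffled)
    half = len(shuffled) // 2
    subset_a, subset_b = shuffled[:half], shuffled[half:]
    # Sort B from largest to smallest absolute error under the A-trained model.
    by_error = sorted(
        subset_b,
        key=lambda s: abs(knn_predict(subset_a, s[0]) - s[1]),
        reverse=True,
    )
    n_drop = min(n_drop, len(by_error))
    # Low-error samples sit at the end of the list and are treated as redundant.
    return subset_a + by_error[: len(by_error) - n_drop]

def prune(pool, target_size, drop_fraction=0.2):
    """Repeat pruning rounds until only target_size samples remain."""
    round_idx = 0
    while len(pool) > target_size:
        n_drop = max(1, int(drop_fraction * len(pool)))
        n_drop = min(n_drop, len(pool) - target_size)
        pool = prune_once(pool, n_drop, seed=round_idx)
        round_idx += 1
    return pool

# Toy 1D regression pool: y = x^2 on 100 evenly spaced points (illustrative only).
pool = [((i / 99,), (i / 99) ** 2) for i in range(100)]
informative = prune(pool, target_size=20)
print(len(informative))  # 20
```

In a full study, models would be retrained at each intermediate pool size and scored on the ID and OOD test sets, and the smallest pool whose RMSE stays within the chosen threshold would define the informative fraction.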

The similarity-based redundancy control algorithm (MD-HIT) [5] directly controls sample similarity before splitting data to prevent over-optimistic performance evaluation.

- Similarity Thresholding: A similarity threshold is defined. For composition-based tasks, this could be based on chemical formula similarity; for structure-based tasks, it could be based on crystal structure similarity.

- Cluster Creation: The entire dataset is processed, and materials are grouped into clusters where all members are sufficiently similar to each other based on the chosen metric and threshold.

- Representative Selection: From each cluster, a single representative sample is selected for the final non-redundant dataset.

- Data Splitting: The non-redundant dataset is then split into training and test sets. This ensures no two highly similar samples are in both training and test sets, providing a more rigorous and realistic evaluation of model generalizability.
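The thresholding and representative-selection steps above can be illustrated with a greedy pass over the dataset. This is a sketch of the general idea, not the MD-HIT code: the L1 distance between element-fraction vectors, the threshold value, and the function names are all assumptions made for the example.

```python
def composition_distance(a, b):
    """L1 distance between two compositions given as {element: atomic fraction}."""
    elements = set(a) | set(b)
    return sum(abs(a.get(e, 0.0) - b.get(e, 0.0)) for e in elements)

def select_representatives(compositions, threshold):
    """Keep a sample only if it is farther than threshold from every kept sample."""
    representatives = []
    for name, comp in compositions:
        if all(composition_distance(comp, rep) > threshold for _, rep in representatives):
            representatives.append((name, comp))
    return representatives

# Small illustrative dataset of atomic fractions.
dataset = [
    ("SrTiO3", {"Sr": 0.20, "Ti": 0.20, "O": 0.60}),
    ("Sr0.9Ba0.1TiO3", {"Sr": 0.18, "Ba": 0.02, "Ti": 0.20, "O": 0.60}),
    ("BaTiO3", {"Ba": 0.20, "Ti": 0.20, "O": 0.60}),
    ("NaCl", {"Na": 0.50, "Cl": 0.50}),
]

kept = select_representatives(dataset, threshold=0.1)
print([name for name, _ in kept])  # ['SrTiO3', 'BaTiO3', 'NaCl']
```

The lightly doped composition is absorbed by its SrTiO3 neighbour, so it cannot end up in the test set while a near-duplicate sits in the training set. The train/test split is then drawn from `kept` only.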

Workflow and Relationship Diagrams

Dataset Pruning and Evaluation Workflow

Redundancy Control via MD-HIT

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Computational Tools and Datasets for Material Property Prediction Research [16] [5]

| Item Name | Type | Function/Brief Explanation |

|---|---|---|

| JARVIS-DFT [16] | Materials Database | A large-scale DFT database used for training and benchmarking ML models on properties like formation energy and band gap. |

| Materials Project (MP) [16] [5] | Materials Database | A widely used resource providing computed information on known and predicted materials, often a source of dataset redundancy. |

| Open Quantum Materials Database (OQMD) [16] [5] | Materials Database | Another extensive DFT database contributing to large-scale materials data for ML studies. |

| ALIGNN [16] | Machine Learning Model | A state-of-the-art graph neural network that uses atomic and line graph information for accurate material property prediction. |

| XGBoost [16] | Machine Learning Model | A powerful gradient-boosting framework effective for tabular data, often used as a high-performance baseline model. |

| MD-HIT [5] | Algorithm/Software | A proposed redundancy reduction algorithm that creates non-redundant benchmark datasets by controlling sample similarity. |

| Uncertainty-based Active Learning [16] | Algorithmic Framework | A method for constructing small but informative training sets by iteratively selecting data points where the model is most uncertain. |

The accelerated discovery of new materials is a key driver of technological progress, powering innovations in areas ranging from more efficient solar cells and longer-lived batteries to smaller transistor gates [18] [19]. Computational materials science has emerged as a crucial discipline in this endeavor, with high-throughput calculations and machine learning (ML) offering powerful tools to navigate the vast combinatorial space of possible inorganic materials, estimated to include over 10 billion possible quaternary combinations alone [19] [20]. Central to these efforts are large-scale databases and benchmarking platforms that provide standardized, reliable data for training and evaluating computational models.

This guide focuses on three pivotal resources in this ecosystem: The Materials Project (MP) and the Open Quantum Materials Database (OQMD) as primary sources of computed materials properties, and Matbench as a standardized framework for evaluating the performance of machine learning models that predict these properties. Understanding the role, interrelationships, and proper application of these resources is fundamental for researchers conducting and validating materials informatics research.

Database and Benchmark Platform Profiles

The Materials Project (MP)

The Materials Project is a core initiative that provides a centralized repository for computed materials data, primarily derived from Density Functional Theory (DFT) calculations. It employs consistent computational techniques across its datasets, making it an ideal source of clean and reliable data for machine learning applications [21]. The platform offers data on a vast array of properties, including electronic, thermal, thermodynamic, and mechanical characteristics, and provides tools for accessing and analyzing this data. Its role as a primary data source for many ML benchmarks, including Matbench tasks, makes it a foundational pillar in the computational materials science community [21].

Open Quantum Materials Database (OQMD)

The Open Quantum Materials Database is another critical high-throughput database storing DFT-computed properties for a large number of inorganic crystals. Alongside MP and AFLOW, OQMD is one of the major sources that has enabled researchers to train so-called universal machine learning models covering many of the most application-relevant elements in the periodic table [19] [20]. These databases have been instrumental in shifting the field from training custom models on specific material systems to developing broad-coverage models that open the prospect for genuine ML-guided materials discovery.

Matbench

Matbench is a dedicated benchmarking effort designed to fill a role similar to ImageNet in computer vision: providing standardized tasks to objectively compare the performance of different machine learning algorithms [21]. It consists of a curated collection of 13 datasets spanning diverse materials properties, with dataset sizes ranging from approximately 312 to 132,000 samples [21] [6]. Matbench includes both experimental and calculated data, with and without structural information, allowing comprehensive evaluation of model capabilities. A core component is its public leaderboard, which enables researchers to submit model predictions and compare performance against established baselines and state-of-the-art approaches, thereby fostering transparency and progress in the field [21].

Table 1: Overview of Core Materials Informatics Resources

| Resource Name | Primary Type | Key Function | Notable Features |

|---|---|---|---|

| Materials Project (MP) | Data Repository | Centralized repository for computed materials data | Consistent DFT calculations; Diverse property data; Tools for data access & analysis [21] |

| Open Quantum Materials Database (OQMD) | Data Repository | High-throughput database of DFT-computed properties | Enables training of universal ML models; Major source for expansive chemical space coverage [19] [20] |

| Matbench | Benchmarking Platform | Standardized evaluation of ML model performance | 13 diverse tasks; Public leaderboard; Focus on model comparison & progress tracking [21] |

| JARVIS-Leaderboard | Benchmarking Platform | Comprehensive benchmarking across multiple methodologies | Covers AI, ES, FF, QC, EXP; Multiple data types (structures, images, spectra, text) [6] |

| Matbench Discovery | Specialized Benchmark | Simulates real-world materials discovery campaigns | Tests stability prediction from unrelaxed structures; Prospective benchmarking [18] [19] |

The Benchmarking Ecosystem and Experimental Protocols

While MP and OQMD provide essential training data, benchmarking platforms like Matbench are critical for objectively assessing model performance. The ecosystem has recently expanded to include more specialized benchmarks, such as Matbench Discovery, which addresses specific challenges in materials discovery not fully covered by general-purpose benchmarks.

The Matbench Discovery Framework

Matbench Discovery is an evaluation framework specifically designed to simulate a real-world discovery campaign where ML models act as pre-filters to DFT in a high-throughput search for stable inorganic crystals [18] [19]. It was created to address four fundamental challenges in benchmarking ML for materials discovery:

- Prospective Benchmarking: Moving beyond idealized, retrospective data splits that may not reflect real-world application challenges. It uses test data generated from the intended discovery workflow, creating a realistic covariate shift between training and test distributions [18].

- Relevant Targets: Shifting the prediction target from formation energy—a common but incomplete metric—to the distance to the convex hull phase diagram, which is a more direct indicator of thermodynamic stability [18] [19].

- Informative Metrics: Highlighting that global regression metrics like Mean Absolute Error (MAE) can be misleading. Instead, it emphasizes classification performance (e.g., F1 score) for stability prediction to avoid high false-positive rates that waste laboratory resources [18] [19].

- Scalability: Ensuring benchmarks are large and chemically diverse enough to test model performance in data regimes relevant for true deployment, where the test set may be larger than the training set [18].
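To make the "distance to the convex hull" target concrete, the sketch below computes it for a two-component system using a lower convex hull in composition-energy space. All energies are hypothetical, and the function names are introduced here for illustration; real multi-component workflows rely on a phase-diagram library rather than this binary special case.

```python
def lower_hull(points):
    """Lower convex hull of (x, energy) points via a monotone-chain scan."""
    hull = []
    for p in sorted(points):
        # Pop the last vertex while it lies on or above the line hull[-2] -> p.
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return hull

def hull_energy(hull, x):
    """Linearly interpolate the hull energy at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return y1 + (y2 - y1) * (x - x1) / (x2 - x1)
    raise ValueError("composition outside the hull range")

def energy_above_hull(hull, x, energy):
    """Distance (eV/atom) of a phase from the hull; 0 means on the hull."""
    return energy - hull_energy(hull, x)

# Hypothetical A-B system: (fraction of B, formation energy in eV/atom).
phases = [(0.0, 0.0), (0.25, -0.10), (0.5, -0.40), (0.75, -0.30), (1.0, 0.0)]
hull = lower_hull(phases)
print(hull)
print(energy_above_hull(hull, 0.25, -0.10))
```

Here the phase at x = 0.25 has a negative formation energy yet sits about 0.1 eV/atom above the hull, because a mixture of A and the x = 0.5 phase is lower in energy. This is why formation energy alone is an incomplete stability indicator.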

Key Experimental Workflows

The experimental protocol in Matbench Discovery enforces a non-circular discovery process. Models may train on any available data (including relaxed structures from MP or OQMD), but at test time they must predict the convex hull distance of the relaxed structure using only the unrelaxed structure as input [19] [20]. This prevents a circular dependency in which the model requires the output of expensive DFT calculations (relaxed structures) to make predictions that are supposed to reduce the need for those very calculations.

The following diagram illustrates the contrasting workflows of traditional high-throughput screening and the ML-accelerated approach benchmarked by Matbench Discovery:

Diagram 1: High-Throughput Materials Discovery Workflows. Contrasts the traditional DFT-only screening approach (top) with the ML-accelerated workflow (bottom) that uses machine learning models as pre-filters to reduce the computational burden of Density Functional Theory calculations.

Performance Comparison and Key Findings

Rigorous benchmarking within frameworks like Matbench Discovery has yielded crucial insights into the relative performance of different ML methodologies for materials stability prediction.

Methodology Performance Ranking

Initial releases of Matbench Discovery benchmarked a wide range of approaches, including random forests, graph neural networks (GNNs), one-shot predictors, iterative Bayesian optimizers, and universal interatomic potentials (UIPs) [19]. The results, ranked by test set F1 score for thermodynamic stability prediction, are summarized in the table below.

Table 2: Machine Learning Model Performance on Crystal Stability Prediction (Matbench Discovery)

| Model/Methodology | Key Finding / Performance Note | Primary Methodology Category |

|---|---|---|

| EquiformerV2 + DeNS | Top performer, top tier F1 score (0.57-0.82 range) | Universal Interatomic Potential (UIP) |

| Orb | High performer, top tier F1 score (0.57-0.82 range) | Universal Interatomic Potential (UIP) |

| SevenNet | High performer, top tier F1 score (0.57-0.82 range) | Universal Interatomic Potential (UIP) |

| MACE | Ranked 4th in initial v2 benchmark, leading UIP | Universal Interatomic Potential (UIP) |

| CHGNet | Ranked 5th in initial v2 benchmark | Universal Interatomic Potential (UIP) |

| M3GNet | Ranked 6th in initial v2 benchmark | Universal Interatomic Potential (UIP) |

| ALIGNN | GNN performance below UIPs | Graph Neural Network (GNN) |

| MEGNet | GNN performance below UIPs | Graph Neural Network (GNN) |

| CGCNN | GNN performance below UIPs | Graph Neural Network (GNN) |

| Wrenformer | Performance below UIPs and leading GNNs | Other ML |

| BOWSR | Performance below UIPs and leading GNNs | Other ML |

| Voronoi Fingerprint RF | Lowest performing model | Other ML / Classical ML |

Critical Insights from Benchmarking

- Universal Interatomic Potentials are the Leading Methodology: UIPs consistently dominated the rankings, achieving the highest F1 scores for stability classification (ranging from 0.57 to 0.82) [19]. This establishes UIPs as the most effective current approach for ML-guided materials discovery.

- Significant Performance Gap: The top three models are all UIPs, and they substantially outperform other methodologies, including graph neural networks and classical machine learning models [19] [20].

- High Discovery Acceleration: The leading UIPs achieve discovery acceleration factors (DAF) of up to 5-6x on the first 10,000 most stable predictions compared to random selection [19]. This demonstrates a concrete practical benefit, significantly optimizing computational budget allocation for expanding materials databases.

- Misalignment Between Regression and Classification Metrics: A critical finding is the disconnect between commonly used regression metrics (MAE, RMSE) and task-relevant classification metrics. Models with accurate formation energy predictions can still produce high false-positive rates if their errors occur near the stability decision boundary (0 eV/atom above the convex hull) [18] [19]. This underscores why benchmarks must evaluate models based on their final application task.
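The disconnect between regression and classification metrics can be shown with a small numerical example. The values below are hypothetical, the stability rule (at or below 0 eV/atom above the hull) follows the decision boundary named above, and the discovery acceleration factor is taken here as precision divided by the prevalence of stable materials, which is an assumption about the benchmark's definition.

```python
def stability_metrics(true_e, pred_e, threshold=0.0):
    """MAE plus stability-classification metrics from hull distances (eV/atom)."""
    tp = fp = fn = 0
    for t, p in zip(true_e, pred_e):
        t_stable, p_stable = t <= threshold, p <= threshold
        tp += int(t_stable and p_stable)
        fp += int(p_stable and not t_stable)
        fn += int(t_stable and not p_stable)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    prevalence = sum(t <= threshold for t in true_e) / len(true_e)
    daf = precision / prevalence if prevalence else float("nan")
    mae = sum(abs(t - p) for t, p in zip(true_e, pred_e)) / len(true_e)
    return {"mae": mae, "f1": f1, "precision": precision, "daf": daf}

# Hypothetical hull distances: two stable materials, four unstable ones.
true_e = [-0.02, -0.01, 0.01, 0.02, 0.03, 0.50]
# Model A: small errors, but every near-boundary sample lands on the wrong side.
pred_a = [0.01, 0.02, -0.01, -0.02, -0.01, 0.50]
# Model B: larger errors, but every sample stays on the correct side.
pred_b = [-0.20, -0.15, 0.15, 0.20, 0.20, 0.30]

metrics_a = stability_metrics(true_e, pred_a)
metrics_b = stability_metrics(true_e, pred_b)
print(metrics_a)
print(metrics_b)
```

Model A has the lower MAE (about 0.03 eV/atom versus about 0.17 eV/atom) yet an F1 of 0, while model B classifies every material correctly and reaches a DAF of 3 on this toy set.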

The effective use of these databases and benchmarks is supported by a suite of software tools and community standards. The following table details key resources that form the essential toolkit for researchers in this field.

Table 3: Essential Computational Tools for Materials Informatics

| Tool Name | Category | Primary Function & Utility |

|---|---|---|

| Matminer | Featurization | A Python toolbox for converting materials primitives (e.g., crystal structures) into feature vectors using routines from peer-reviewed literature [21]. |

| Automatminer | Automated ML | An "AutoML" engine that automatically determines feature sets, performs feature reduction, and searches ML model/hyperparameter spaces to create optimal prediction pipelines [21]. |

| JARVIS-Leaderboard | Benchmarking | A comprehensive platform comparing methods across AI, Electronic Structure, Force-fields, Quantum Computation, and Experiments using diverse data types [6]. |

| Matbench Python Package | Benchmarking | Provides programmatic access to Matbench datasets and tools for standardized model evaluation and submission to the public leaderboard [21]. |

| High-Throughput DFT | Simulation | The computational workhorse (e.g., VASP, Quantum ESPRESSO) generating reference data for MP, OQMD. Consumes major supercomputing resources (e.g., 45% of Archer2 core hours) [18] [19]. |

The relationships between these tools, the core databases, and the ultimate goal of materials discovery can be visualized as an integrated ecosystem:

Diagram 2: The Integrated Materials Informatics Ecosystem. Depicts the workflow from data generation through to discovery, highlighting the roles of databases, analysis tools, and benchmarking platforms.

The Materials Project, OQMD, and Matbench represent critical, complementary pillars in the modern computational materials science infrastructure. MP and OQMD serve as foundational data repositories that provide the consistent, large-scale training data necessary for developing sophisticated ML models. Matbench, and its specialized extension Matbench Discovery, provide the essential benchmarking framework required to objectively evaluate these models, guide methodological progress, and identify the most promising approaches for real-world applications.

The collective insights from these resources clearly indicate that universal interatomic potentials currently represent the state-of-the-art for ML-guided materials discovery, effectively balancing accuracy and computational efficiency to serve as powerful pre-filters in high-throughput screening workflows. Furthermore, the community's move toward prospective benchmarking and task-relevant evaluation metrics ensures that benchmark results translate into genuine acceleration of materials discovery, ultimately contributing to the faster development of new technologies critical for addressing sustainability and energy challenges.

Algorithmic Approaches in Practice: From Traditional ML to Advanced Deep Learning

The accurate prediction of material properties is a cornerstone of modern scientific research, accelerating the discovery and development of new compounds, alloys, and pharmaceuticals. Within this domain, traditional machine learning (ML) models have established themselves as powerful tools, offering a favorable balance between predictive performance and computational efficiency. This guide provides a comprehensive comparison of three prominent traditional ML algorithms—Random Forest, XGBoost, and K-Nearest Neighbors (KNN)—focusing on their application in predicting material properties. We objectively evaluate their performance against one another using recent experimental data, detail the methodologies from key studies, and provide visualizations of their workflows to assist researchers, scientists, and drug development professionals in selecting the most appropriate algorithm for their specific research context.

Model Performance Comparison

The predictive performance of Random Forest, XGBoost, and K-Nearest Neighbors varies significantly across different tasks and datasets. The following tables summarize quantitative results from recent studies, providing a basis for objective comparison.

Table 1: Classification Performance on Attitude Towards AI Dataset (F1-Score %) [22]

| Algorithm | F1-Score (%) |

|---|---|

| Support Vector Machine (SVM) | 95.52 |

| CatBoost | 93.66 |

| Random Forest | 92.56 |

| XGBoost | 92.36 |

| K-Nearest Neighbors (KNN) | Not Reported |

| Multilayer Perceptron (MLP) | 81.87 |

| Decision Tree | 82.72 |

Note: This study classified university students' attitudes towards AI. KNN's performance was not among the top reported models. The results highlight the strong performance of ensemble methods like Random Forest and XGBoost in classification tasks. [22]

Table 2: Regression Performance on COVID-19 Mortality Prediction (R², MAE, RMSE) [23]

| Algorithm | R² | MAE | RMSE |

|---|---|---|---|

| Random Forest | 0.983 | 0.61 | 2.79 |

| XGBoost | Very High (exact value not specified) | Not Reported | Not Reported |

| Decision Tree | Lower than ensemble methods | Not Reported | Not Reported |

| K-Nearest Neighbors (KNN) | Lower than ensemble methods | Not Reported | Not Reported |

Note: This study predicted daily new COVID-19 deaths using sociodemographic, healthcare, and policy-related variables. Random Forest demonstrated superior predictive performance, with XGBoost also performing very well. KNN and Decision Tree exhibited weaker accuracy. [23]

Table 3: Performance Under Varying Class Imbalance Levels (Best F1-Score) [24]

| Algorithm & Upsampling Technique | Performance Summary |

|---|---|

| Tuned XGBoost with SMOTE | Consistently achieved the highest F1 score across all imbalance levels (from 15% to 1% churn rate). |

| Random Forest | Performed poorly under conditions of severe class imbalance. |

| SMOTE (with XGBoost) | Emerged as the most effective upsampling method. |

Note: This research on customer churn prediction highlights XGBoost's robustness when combined with proper data preprocessing techniques like SMOTE, especially in challenging scenarios with highly imbalanced data, a common occurrence in scientific datasets. [24]

Table 4: General Model Characteristics and Sensitivity [25]

| Algorithm | Sensitivity to Feature Scaling | Key Characteristics (from cited literature) |

|---|---|---|

| Random Forest | Robust (Not sensitive) | Ensemble method; mitigates overfitting; provides good generalization. [25] [23] |

| XGBoost | Robust (Not sensitive) | Sequential ensemble method; corrects errors from previous trees; minimizes overfitting; computationally efficient. [25] [23] |

| K-Nearest Neighbors (KNN) | Highly Sensitive | Requires feature scaling for reliable performance; performance depends on similarity-based computation in feature space. [25] [23] |

| Support Vector Machine (SVM) | Highly Sensitive | Included for context. [25] |

Experimental Protocols and Methodologies

To ensure the reproducibility of the results presented in this guide, this section details the key experimental protocols and methodologies from the cited studies.

The feature-scaling study [25] is a large-scale evaluation that provides a foundational protocol for assessing ML algorithms, with a specific focus on the impact of data preprocessing.

- Objective: To systematically assess the impact of 12 different feature scaling techniques on the performance of 14 machine learning algorithms.

- Datasets: 16 diverse datasets covering both classification and regression tasks.

- Algorithms Evaluated: Included Random Forest, XGBoost, and K-Nearest Neighbors, among others.

- Evaluation Metrics: Predictive performance was measured using accuracy, MAE, MSE, and R². Computational efficiency was assessed via training time, inference time, and memory usage.

- Key Findings: Ensemble methods like Random Forest and XGBoost were found to be robust regardless of feature scaling, while KNN was identified as highly sensitive to the choice of scaler. This underscores the critical need for careful preprocessing selection when using distance-based algorithms.
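The key finding above can be illustrated on a toy example: rescaling features changes which neighbour is nearest, but it does not change the ordering of samples along any single feature, which is all a tree split depends on. The samples, units, and helper names below are hypothetical.

```python
def squared_distance(p, q):
    return sum((a - b) ** 2 for a, b in zip(p, q))

def nearest_index(points, query):
    """Index of the point closest to query in Euclidean distance."""
    return min(range(len(points)), key=lambda i: squared_distance(points[i], query))

def min_max_scale(rows):
    """Rescale every column to the [0, 1] range."""
    columns = list(zip(*rows))
    lows = [min(c) for c in columns]
    highs = [max(c) for c in columns]
    return [
        tuple((v - lo) / (hi - lo) for v, lo, hi in zip(row, lows, highs))
        for row in rows
    ]

# Hypothetical samples: (density in g/cm^3, melting point in K).
samples = [(2.1, 1500.0), (8.0, 1210.0), (5.0, 300.0)]
query = (2.0, 1200.0)

raw_nn = nearest_index(samples, query)
scaled = min_max_scale(samples + [query])
scaled_nn = nearest_index(scaled[:-1], scaled[-1])
print(raw_nn, scaled_nn)  # 1 0

# Tree-style splits only depend on the ordering along each feature,
# and that ordering is unchanged by min-max scaling.
rows = samples + [query]
order_raw = sorted(range(len(rows)), key=lambda i: rows[i][1])
order_scaled = sorted(range(len(scaled)), key=lambda i: scaled[i][1])
print(order_raw == order_scaled)  # True
```

Without scaling, the melting point dominates the distance and the query is matched to a sample with a very different density. After scaling, both features contribute comparably and the nearest neighbour changes.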

The attitude-classification study [22] outlines a robust methodology for comparing classification performance across multiple algorithms.

- Objective: To classify university students' attitudes towards artificial intelligence into three categories ("Insufficient", "Sufficient", and "Strongly Sufficient").

- Data: A dataset of 1,379 students, with 29 variables determining attitudes.

- Algorithms: MLP, Decision Tree, KNN, XGBoost, Random Forest, CatBoost, and SVM.

- Validation Method: 5-fold cross-validation.

- Performance Metrics: Accuracy, precision, recall, and F1-score were calculated for each algorithm. The study also analyzed confusion matrices to understand misclassification patterns, particularly for the "Strongly Sufficient" class.
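The 5-fold validation scheme listed above can be sketched as an index splitter. This is a plain, unstratified version written for illustration; whether the cited study stratified its folds is not stated in this guide, and the function name is hypothetical.

```python
import random

def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_indices, test_indices) pairs for k-fold cross-validation."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Deal shuffled indices round-robin so fold sizes differ by at most one.
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        test = folds[i]
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, test

# 1,379 samples, matching the dataset size reported above.
splits = list(k_fold_indices(1379, k=5))
for train, test in splits:
    print(len(train), len(test))
```

Each sample appears in exactly one test fold, so averaging the per-fold metrics gives one performance estimate that uses every sample for testing once.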

The class-imbalance study [24] uses a protocol specifically designed for scenarios involving class imbalance, a common challenge in real-world data.

- Objective: To examine the efficacy of Random Forest and XGBoost classifiers when used with upsampling techniques (SMOTE, ADASYN, GNUS) across varying class imbalance levels.

- Datasets: Telecommunications churn data with imbalance levels ranging from moderate to extreme (15% to 1% churn rate).

- Evaluation Metrics: A comprehensive set of metrics was used, including F1 score, ROC AUC, PR AUC, Matthews Correlation Coefficient (MCC), and Cohen's Kappa.

- Optimization: Hyperparameter tuning was performed using Grid Search.

- Validation: Rigorous statistical analyses (Friedman test and Nemenyi post hoc comparisons) were employed to confirm the significance of the results.

- Key Findings: Tuned XGBoost paired with SMOTE consistently achieved the highest F1 score and robust performance across all imbalance levels.
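The core idea behind SMOTE, as used in the protocol above, is to create synthetic minority samples by interpolating between a minority point and one of its nearest minority neighbours. The sketch below shows only that interpolation step under simplifying assumptions; it is not the reference implementation, and `smote_sketch` and its parameters are names introduced here.

```python
import random

def squared_distance(p, q):
    return sum((a - b) ** 2 for a, b in zip(p, q))

def smote_sketch(minority, n_new, k=2, seed=0):
    """Create n_new synthetic points by interpolating between minority neighbours."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        i = rng.randrange(len(minority))
        base = minority[i]
        others = [p for j, p in enumerate(minority) if j != i]
        neighbours = sorted(others, key=lambda p: squared_distance(p, base))[:k]
        partner = rng.choice(neighbours)
        gap = rng.random()  # position along the segment from base to partner
        synthetic.append(tuple(b + gap * (q - b) for b, q in zip(base, partner)))
    return synthetic

# Hypothetical minority-class samples in a 2D feature space.
minority = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
new_points = smote_sketch(minority, n_new=6)
print(new_points)
```

Because every synthetic point lies on a segment between two real minority samples, upsampling should be applied to the training folds only, so that no interpolated copies of test samples leak into training.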

Workflow and Logical Diagrams

The following diagrams illustrate the general workflows for the discussed machine learning models, based on the methodologies from the cited studies.

Random Forest Workflow for Regression

XGBoost Sequential Building Workflow

K-Nearest Neighbors (KNN) Classification Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

This section details key computational "reagents" and tools essential for working with the machine learning models discussed, drawing from the methodologies in the cited research.

Table 5: Essential Tools for Machine Learning in Material Property Prediction

| Item Name | Function & Application | Relevance to Material Science |

|---|---|---|

| Electronic Charge Density [1] | A physically grounded descriptor used as input for predicting material properties. It provides a direct correlation with a material's electronic structure and properties. | Enables accurate prediction of diverse material properties (with R² up to 0.94) within a unified framework, demonstrating excellent transferability. [1] |

| Synthetic Minority Oversampling Technique (SMOTE) [24] | An upsampling technique that generates synthetic samples for the minority class to address class imbalance in datasets. | Crucial for predicting rare material properties or events (e.g., specific catalytic activity, defect formation) where positive cases are scarce in the data. [24] |

| Grid Search [24] | A hyperparameter optimization technique that exhaustively searches a specified parameter grid to find the model configuration that yields the best performance. | Essential for maximizing the predictive accuracy of models like Random Forest and XGBoost by systematically tuning their parameters for a given materials dataset. [24] |

| Feature Scaling (e.g., Standardization, Min-Max) [25] | A preprocessing step that normalizes or standardizes the range of input features. | Critical for distance-based algorithms like KNN. Ensemble methods like Random Forest and XGBoost are robust and less sensitive to this step. [25] |

| Cross-Validation (e.g., 5-Fold) [22] | A resampling procedure used to evaluate a model's ability to generalize to an independent dataset, mitigating overfitting. | Provides a reliable estimate of model performance on unseen material data, which is vital for validating the robustness of a predictive framework. [22] |

The discovery and development of new crystalline materials are fundamental to technological advances in fields ranging from clean energy to information processing. Traditional methods relying on empirical rules or computationally intensive first-principles calculations, such as those based on Density Functional Theory (DFT), have long served as the cornerstone of materials research [26]. However, the emergence of deep learning techniques, particularly Graph Neural Networks (GNNs), is now profoundly transforming this research paradigm [26].

GNNs are exceptionally well-suited for modeling crystalline materials because of a natural fit between crystal structures and graph theory. These models view crystals as complex graph structures composed of atoms (nodes) and bonds (edges), enabling them to leverage graph networks to capture intricate patterns of atomic arrangements and their interactions [26]. This guide provides an objective comparison of major GNN architectures for crystalline material property prediction, focusing on the foundational Crystal Graph Convolutional Neural Network (CGCNN), subsequent enhancements to it, and other significant models like MEGNet.

Core GNN Architectures for Crystalline Materials

The Foundational Framework: CGCNN

The Crystal Graph Convolutional Neural Network (CGCNN) introduced a groundbreaking method for converting the crystal structure of a unit cell into a graph representation [26]. In this representation:

- Nodes represent atoms within the unit cell.

- Edges represent connections between atoms within a specified cutoff radius [27].

- Each node is initially assigned a feature vector based on the atom's properties, often using a one-hot encoding of its element [28].

The network then applies convolutional operations that systematically learn local environments by passing and aggregating node features between neighbors, ultimately producing a compressed feature vector for the entire crystal graph that is used for property prediction [27]. This graph-based approach to representing crystal structures has been widely adopted as a foundation for numerous subsequent advancements [26].
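The graph construction described above can be sketched in a few lines. This is an illustrative sketch, not the CGCNN reference code: the function name `build_crystal_graph`, the toy cell, and the choice to search only the 27 surrounding periodic images are all assumptions made for brevity.

```python
import itertools
import math


def build_crystal_graph(lattice, frac_coords, cutoff):
    """List directed edges (i, j, distance) for atom pairs within `cutoff`.

    lattice: three lattice vectors as rows, in angstroms.
    frac_coords: fractional coordinates of the atoms in one unit cell.
    """
    def to_cartesian(frac):
        return [sum(frac[k] * lattice[k][axis] for k in range(3))
                for axis in range(3)]

    # Assumption: only the 27 surrounding cells are searched, which is enough
    # only when the cutoff is smaller than every perpendicular cell width.
    shifts = list(itertools.product((-1, 0, 1), repeat=3))
    edges = []
    for i, frac_i in enumerate(frac_coords):
        r_i = to_cartesian(frac_i)
        for j, frac_j in enumerate(frac_coords):
            for shift in shifts:
                if i == j and shift == (0, 0, 0):
                    continue  # an atom is not its own neighbour
                image = [frac_j[k] + shift[k] for k in range(3)]
                distance = math.dist(r_i, to_cartesian(image))
                if distance <= cutoff:
                    edges.append((i, j, distance))
    return edges


# Toy CsCl-type cell: cubic, a = 4.0 angstroms, two atoms.
lattice = [[4.0, 0.0, 0.0], [0.0, 4.0, 0.0], [0.0, 0.0, 4.0]]
edges = build_crystal_graph(lattice, [[0.0, 0.0, 0.0], [0.5, 0.5, 0.5]], cutoff=3.6)
print(len(edges))  # 16: each atom has 8 neighbours of the other species
```

Because periodic images are included, the same pair of atoms can be connected by several edges, so the result is a multigraph rather than a simple graph.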

Evolution Beyond Foundational CGCNN

Feature-Enriched CGCNN

To address the standard CGCNN's limitations in predicting complex magnetic properties, researchers have developed feature-enriched models. These enhancements integrate physically meaningful atomic attributes to improve representation quality:

- Spin-Augmented Node Features: Integration of atomic spin magnetic moments from DFT calculations as node attributes enables accurate prediction of magnetization in both ferromagnetic (FM) and ferrimagnetic (FiM) compounds, which exhibit complex magnetic behaviors with non-equivalent magnetic sublattices and antiparallel spin couplings [27].

- Enhanced Structural Encoding: Using exact fractional coordinates and lattice parameters taken directly from the material's structure helps preserve anisotropic spin-lattice couplings and long-range geometric relationships critical to magnetism [27].

- Normalized Atomic Descriptors: Replacement of simple one-hot encoding with normalized features including atomic mass, atomic number, atomic radius, and electron affinity through min-max scaling allows the model to learn richer chemical representations [27].

This approach demonstrates strong transfer learning capabilities across diverse material families, including transition-metal compounds, rare-earth compounds, Heusler alloys, and MXenes, performing robustly even with limited datasets [27].
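As a rough illustration of the normalized descriptors and spin-augmented node features listed above, the sketch below min-max scales two atomic properties and appends a per-atom spin moment. The function names are hypothetical, only atomic number and atomic mass are included (the source also lists atomic radius and electron affinity), and the spin moment is a placeholder value rather than a DFT result. Whether the cited model also rescales the spin moment is not stated in the source, so it is left unscaled here.

```python
# Atomic number and standard atomic mass for four 3d elements.
ELEMENT_PROPERTIES = {
    "Mn": {"atomic_number": 25, "atomic_mass": 54.938},
    "Fe": {"atomic_number": 26, "atomic_mass": 55.845},
    "Co": {"atomic_number": 27, "atomic_mass": 58.933},
    "Ni": {"atomic_number": 28, "atomic_mass": 58.693},
}


def min_max_features(properties):
    """Scale every property to [0, 1] across the listed elements."""
    names = sorted(next(iter(properties.values())))
    lows = {n: min(p[n] for p in properties.values()) for n in names}
    highs = {n: max(p[n] for p in properties.values()) for n in names}
    scaled = {}
    for element, props in properties.items():
        scaled[element] = [
            (props[n] - lows[n]) / (highs[n] - lows[n]) if highs[n] > lows[n] else 0.0
            for n in names
        ]
    return names, scaled


def node_vector(element, spin_moment, scaled):
    """Append a per-atom spin moment (Bohr magnetons) to the scaled descriptors."""
    return scaled[element] + [spin_moment]


names, scaled = min_max_features(ELEMENT_PROPERTIES)
# The spin moment 2.2 is a placeholder, not a value from the cited study.
print(names, node_vector("Fe", 2.2, scaled))
```

Unlike a one-hot vector, the scaled descriptors place chemically similar elements close together in feature space, which is the motivation given for the replacement.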

MatGNet: Enhanced Encoding and Angular Features

The MatGNet model introduces several key innovations that advance beyond the basic CGCNN framework [26]:

- Mat2vec Atomic Embedding: Replaces the one-hot encoding method (limited to nine element attributes) with pre-trained mat2vec embeddings, which capture richer chemical context and similarities between elements based on extensive scientific text corpora [26].

- Angular Feature Incorporation: Introduces line graphs to embed angular information between atomic bonds, capturing three-body correlations that significantly enhance the model's representation of crystal geometry [26].

- Advanced Network Architecture: Implements an improved gated graph convolutional network with a self-attention mechanism for efficient message passing across both atomic and line graphs [26].

Experimental results on the JARVIS-DFT dataset demonstrate that MatGNet achieves state-of-the-art accuracy on multiple property prediction tasks, outperforming previous models including standard CGCNN [26].
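To make the line-graph idea concrete, here is a small sketch in which every bond becomes a node of the line graph and two bonds are linked when they share an atom, with the bond angle as the link feature. This is not MatGNet's implementation: the function name is hypothetical, and periodic images are ignored, so it applies to a finite cluster of atoms only.

```python
import itertools
import math


def bond_angle_line_graph(positions, bonds):
    """Link every pair of bonds that share an atom, labelled with the bond angle.

    Returns tuples (bond_index_a, bond_index_b, angle_in_degrees).
    """
    line_edges = []
    for (a, bond_a), (b, bond_b) in itertools.combinations(enumerate(bonds), 2):
        shared = set(bond_a) & set(bond_b)
        if len(shared) != 1:
            continue  # bonds that do not meet at exactly one atom
        center = shared.pop()
        end_a = bond_a[0] if bond_a[1] == center else bond_a[1]
        end_b = bond_b[0] if bond_b[1] == center else bond_b[1]
        vec_a = [p - c for p, c in zip(positions[end_a], positions[center])]
        vec_b = [p - c for p, c in zip(positions[end_b], positions[center])]
        cosine = sum(x * y for x, y in zip(vec_a, vec_b)) / (
            math.hypot(*vec_a) * math.hypot(*vec_b))
        cosine = max(-1.0, min(1.0, cosine))  # guard against rounding error
        line_edges.append((a, b, math.degrees(math.acos(cosine))))
    return line_edges


# Three atoms forming a right angle at atom 0.
positions = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
print(bond_angle_line_graph(positions, [(0, 1), (0, 2)]))
```

Each line-graph edge involves three atoms (the shared atom and the two bond ends), which is why this construction exposes three-body information that distances alone do not carry.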

Alternative Architectural Approaches

MEGNet (MatErials Graph Network)

The MatErials Graph Network (MEGNet) framework is a significant implementation of graph-based representation for materials property prediction [29]. The MatDeepLearn (MDL) toolkit supports MEGNet alongside other GNN architectures, providing researchers with a versatile framework for developing property prediction models [29].

GNoME: Scaling for Materials Discovery

The Graph Networks for Materials Exploration (GNoME) framework demonstrates the impact of scale on model performance [28]. Through large-scale active learning, GNoME has achieved unprecedented levels of generalization, substantially improving the efficiency of materials discovery:

- Architecture: GNoME models are GNNs following a message-passing formulation, where aggregate projections are shallow multilayer perceptrons (MLPs) with swish nonlinearities [28].

- Scale Advantages: A key innovation involves normalizing messages from edges to nodes by the average adjacency of atoms across the entire dataset, which becomes increasingly important with model scaling [28].

- Performance Scaling: GNoME models exhibit neural scaling laws, with test loss improving as a power law with increased data, achieving a prediction error of 11 meV atom⁻¹ on relaxed structures [28].

- Discovery Impact: This framework has led to the discovery of 2.2 million crystal structures stable with respect to previous datasets, expanding known stable materials by nearly an order of magnitude [28].
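The message normalization mentioned under Scale Advantages can be sketched as follows. This is a simplified illustration rather than GNoME's code: the dictionary layout of a graph, the function names, and the plain-list vectors are assumptions, and the learned MLPs that would produce the messages are omitted. The `swish` function shows the nonlinearity named in the Architecture bullet.

```python
import math


def swish(x):
    """Swish nonlinearity: x * sigmoid(x)."""
    return x / (1.0 + math.exp(-x))


def average_adjacency(graphs):
    """Mean number of incoming edges per atom over the whole dataset."""
    total_edges = sum(len(g["edges"]) for g in graphs)
    total_atoms = sum(g["num_atoms"] for g in graphs)
    return total_edges / total_atoms


def aggregate_messages(num_atoms, edges, messages, avg_adjacency):
    """Sum edge messages into their destination atoms, then divide by the
    dataset-wide average adjacency rather than each atom's own degree."""
    dim = len(messages[0]) if messages else 0
    pooled = [[0.0] * dim for _ in range(num_atoms)]
    for (_, dst), message in zip(edges, messages):
        for k, value in enumerate(message):
            pooled[dst][k] += value
    return [[v / avg_adjacency for v in row] for row in pooled]


# Two toy graphs with directed (source, destination) edges.
dataset = [
    {"num_atoms": 2, "edges": [(0, 1), (1, 0)]},
    {"num_atoms": 2, "edges": [(0, 1), (1, 0), (0, 1), (1, 0)]},
]
scale = average_adjacency(dataset)  # 6 edges / 4 atoms
print(aggregate_messages(2, dataset[0]["edges"], [[3.0], [1.5]], scale))
```

Dividing by a single dataset-wide constant keeps aggregated messages on a comparable scale across graphs while still letting highly coordinated atoms receive a larger total signal than sparsely coordinated ones.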

Quantitative Performance Comparison

Table 1: Performance Comparison of GNN Models on Material Property Prediction Tasks

| Model | Key Innovations | Reported Accuracy/Dataset | Strengths | Limitations |

|---|---|---|---|---|

| CGCNN [26] | Crystal-to-graph conversion; convolutional operations on atomic neighbors | No figure reported here; serves as the baseline for later models and is widely adopted | Intuitive representation of crystal structures; established benchmark | Limited atomic feature set; minimal physical descriptor integration |

| Feature-Enriched CGCNN [27] | Atomic spin moments; normalized atomic descriptors; exact structural parameters | Accurate magnetization prediction for FM/FiM compounds; strong transfer learning | Captures complex magnetic behavior; reduces need for full DFT calculations | Requires initial DFT calculations for atomic spin moments |

| MatGNet [26] | Mat2vec embeddings; line graphs for angular features; gated convolution with attention | State-of-the-art on JARVIS-DFT dataset; outperforms CGCNN | Comprehensive structural representation; superior prediction accuracy | Computationally intensive; slower training due to angular features |

| GNoME [28] | Scaled architecture; active learning; normalized message passing | 11 meV atom⁻¹ energy prediction; >80% hit rate for stable structures | Unprecedented discovery capability; emergent out-of-distribution generalization | Requires massive computational resources for training and evaluation |

Table 2: Experimental Results for Specific Property Predictions

| Model | Property Predicted | Dataset | Performance Metric | Result |

|---|---|---|---|---|

| Feature-Enriched CGCNN [27] | Magnetization (FM/FiM compounds) | Materials Project (Transition metals) | Accuracy vs. DFT | Accurate prediction across diverse magnetic materials |

| MatGNet [26] | Multiple properties (12 tasks) | JARVIS-DFT (dft3d2021) | Mean Absolute Error (MAE) | Outperformed Matformer, PST, and previous GNNs |

| GNoME [28] | Formation energy/Stability | Multi-source (MP, OQMD) + Active Learning | Formation Energy MAE | 11 meV atom⁻¹ |

| GNoME [28] | Structure Stability | Active Learning Discovery | Hit Rate (Structures) | >80% |

| GNoME [28] | Composition Stability | Active Learning Discovery | Hit Rate (Compositions) | ~33% per 100 trials |

Experimental Protocols and Methodologies

Data Preparation and Curation

The performance of GNN models heavily depends on high-quality datasets. Commonly used databases include:

- Materials Project [27]: Provides computational data on thousands of known and predicted crystals, frequently used for training magnetic property predictors [27].

- JARVIS-DFT [26]: Contains extensive VASP-computed properties for 3D materials, used for comprehensive benchmarking of models like MatGNet [26].

- ICSD (Inorganic Crystal Structure Database) [28]: Contains experimental crystal structures, used in large-scale discovery efforts like GNoME [28].

For specialized applications, researchers often curate targeted datasets. For instance, the feature-enriched CGCNN for magnetization prediction used a curated Materials Project dataset of magnetic compounds based on the eight transition metals from Ti to Cu, encompassing both FM and FiM systems [27].

Graph Construction Protocols

The process of converting crystal structures to graphs involves several key steps:

- Node Feature Definition: Standard CGCNN uses one-hot encoding of elements [26], while enhanced versions incorporate atomic spin moments [27] or Mat2vec embeddings [26].

- Edge Definition: Connections are typically formed between atoms within a specified cutoff radius, with edge features often incorporating interatomic distances expanded using radial basis functions (RBF) [26].

- Advanced Feature Integration: Sophisticated models add angular information through line graphs [26] or incorporate Voronoi tessellation to better capture three-body correlations [26].
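The radial basis function expansion mentioned under Edge Definition turns a single interatomic distance into a smooth feature vector. Below is a minimal sketch using Gaussian basis functions. The number of centres, their even spacing on [0, cutoff], and a width equal to the centre spacing are assumptions chosen for illustration; the cited models may use different settings.

```python
import math


def gaussian_rbf_expand(distance, cutoff, num_centers=8):
    """Expand one distance into Gaussian features with evenly spaced centres."""
    step = cutoff / (num_centers - 1)
    centers = [k * step for k in range(num_centers)]
    # Assumption: the Gaussian width equals the spacing between centres.
    return [math.exp(-((distance - c) / step) ** 2) for c in centers]


# A 3.0 angstrom bond, expanded on centres 0, 1, ..., 7 angstroms.
features = gaussian_rbf_expand(3.0, cutoff=7.0, num_centers=8)
print([round(f, 3) for f in features])
```

The feature whose centre coincides with the distance equals 1, and the others decay smoothly on either side, giving the network a localized encoding of distance instead of a single raw scalar.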

Training Methodologies

- Active Learning Frameworks: GNoME demonstrates the power of iterative active learning, where models filter candidate structures evaluated by DFT, with results fed back into training in a "data flywheel" approach [28].

- Transfer Learning: Feature-enriched CGCNN shows effectiveness in transfer learning across different material families, enabling accurate predictions even with minimal representative data during training [27].

- Ablation Studies: MatGNet employed ablation studies to validate the individual contributions of Mat2vec encoding and angular features, demonstrating that each component significantly improves prediction accuracy [26].
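The active learning loop described in the first item above can be illustrated with a deliberately small toy. Everything here is a stand-in: `oracle_energy` is a hypothetical replacement for a DFT calculation, the surrogate is a nearest-neighbour lookup instead of a GNN, and candidates are single numbers instead of crystal structures. The point is only the shape of the loop: rank candidates with the model, evaluate the most promising ones with the oracle, and feed the results back into the training set.

```python
def oracle_energy(x):
    """Stand-in for an expensive DFT evaluation (hypothetical toy function)."""
    return (x - 0.7) ** 2


def predict(train, x):
    """Toy surrogate: return the energy of the nearest labelled candidate."""
    nearest = min(train, key=lambda pair: abs(pair[0] - x))
    return nearest[1]


def active_learning(candidates, seed, rounds=3, batch=2):
    """Repeatedly pick the candidates predicted to be lowest in energy,
    label them with the oracle, and add them to the training set."""
    train = [(x, oracle_energy(x)) for x in seed]
    pool = [x for x in candidates if x not in seed]
    for _ in range(rounds):
        pool.sort(key=lambda x: predict(train, x))  # surrogate filters the pool
        chosen, pool = pool[:batch], pool[batch:]
        train += [(x, oracle_energy(x)) for x in chosen]  # feed results back
    return train


candidates = [i / 10 for i in range(11)]
train = active_learning(candidates, seed=[0.0, 1.0])
print(len(train), min(energy for _, energy in train))
```

Each round costs only `batch` oracle calls, and the enlarged training set makes the next round's ranking better informed, which is the feedback effect the "data flywheel" phrase refers to.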

Diagram 1: Architectural evolution from basic CGCNN to specialized variants, showing key innovations at each stage.

The Researcher's Toolkit

Table 3: Essential Computational Tools and Datasets for GNN Materials Research

| Tool/Dataset | Type | Primary Function | Application in Research |

|---|---|---|---|

| Materials Project [27] | Database | Repository of computed material properties | Training data for magnetic property prediction [27] |

| JARVIS-DFT [26] | Database | Extensive VASP-computed properties | Benchmarking model performance across diverse properties [26] |

| MatDeepLearn (MDL) [29] | Software Framework | Python environment for graph-based material models | Implements CGCNN, MPNN, MEGNet for property prediction [29] |

| Mat2vec Embeddings [26] | Word Embeddings | Captures chemical context from scientific text | Enhanced node feature representation in MatGNet [26] |

| VASP [28] | Simulation Software | First-principles calculations based on DFT | Ground-truth data generation and model verification [28] |

| AIRSS [28] | Software Tool | Ab initio random structure searching | Structure initialization for composition-based discovery [28] |

The landscape of GNNs for crystalline materials has evolved substantially from the foundational CGCNN framework toward increasingly sophisticated architectures. The evidence indicates that feature enrichment through physically meaningful descriptors significantly enhances performance for specialized prediction tasks like magnetization, while architectural innovations in models like MatGNet that incorporate angular features and advanced embeddings provide state-of-the-art accuracy across diverse properties. Simultaneously, the scaling approach demonstrated by GNoME highlights that data volume and active learning can dramatically expand materials discovery capabilities.

For researchers selecting appropriate models, this comparison suggests:

- Feature-enriched CGCNN variants offer specialized capability for magnetic materials and scenarios with limited data.

- MatGNet represents a strong choice for maximizing prediction accuracy across diverse properties when computational resources permit.

- GNoME-style scaling provides a pathway for discovery-oriented research when the goal is identifying novel stable crystals.

Future development will likely focus on balancing computational efficiency with model expressiveness, improving angular feature incorporation without prohibitive costs, and enhancing transfer learning capabilities across material families and property spaces.

Predicting material properties is a cornerstone of modern materials science, crucial for accelerating the discovery of new compounds for applications in energy, electronics, and drug development. Traditional methods, often reliant on computationally expensive density functional theory (DFT) calculations, face significant challenges in scalability and efficiency [8]. In recent years, graph neural networks (GNNs) have emerged as a powerful alternative, offering a natural framework for representing materials. These models treat crystal structures as graphs, where atoms serve as nodes and chemical bonds as edges, thereby explicitly incorporating the inherent topological information of atomic arrangements.

Building on this foundation, Spatial-Temporal Graph Neural Networks (STGNNs) represent a significant evolution. Originally developed for domains like traffic forecasting [30] and wind farm power prediction [31], STGNNs are uniquely designed to model not only spatial dependencies (the topological structure of the graph) but also temporal or sequential dynamics. In the context of materials, this "temporal" dimension can be interpreted as the propagation of interactions through the material's structure or the hierarchical relationship between different structural features. Dual-stream architectures, a sophisticated class of STGNNs, separately process spatial and temporal information before fusing them, leading to a more nuanced and powerful representation of materials that captures complex structure-property relationships. This guide provides a comparative analysis of these advanced architectures against other leading machine-learning approaches for material property prediction.

Performance Comparison of Material Property Prediction Algorithms

The table below summarizes the performance and characteristics of various state-of-the-art algorithms, highlighting the position of dual-stream STGNNs within the broader research landscape.

Table 1: Comparative Analysis of Material Property Prediction Algorithms