A Comparative Analysis of Material Optimization Strategies in Drug Discovery and Development

Abstract

This article provides a systematic comparison of material optimization strategies reshaping modern drug development. Aimed at researchers and pharmaceutical professionals, it explores foundational principles, advanced computational methodologies like AI and Design of Experiments (DoE), and practical troubleshooting techniques for formulation and synthesis. The analysis extends to validation frameworks and comparative studies of optimization models, offering a comprehensive roadmap for enhancing efficiency, reducing costs, and accelerating the development of robust pharmaceutical products.

The Principles of Material Optimization: Building a Framework for Efficient Drug Development

Material efficiency in the pharmaceutical industry is a holistic principle that aims to maximize the output and quality of drug products while minimizing the input of raw materials, energy, and waste generation across the entire development pipeline. This concept spans from the initial synthesis of the Active Pharmaceutical Ingredient (API) to the final drug product formulation [1] [2]. In an era of increasing cost pressures and sustainability concerns, optimizing material use is not merely an economic imperative but a crucial component of sustainable and ethical pharmaceutical manufacturing. The industry is responding by adopting more efficient technologies like continuous flow processing and systematic development approaches such as Quality by Design (QbD) and Design of Experiments (DoE) to refine these processes [1] [3] [4].

This guide provides a comparative analysis of material optimization strategies, offering a detailed examination of the experimental protocols and efficiency metrics that define modern pharmaceutical production. The focus is on actionable methodologies and data-driven comparisons to aid researchers, scientists, and drug development professionals in their pursuit of more efficient and robust manufacturing processes.

Material Efficiency in API Synthesis

The synthesis of the API is often the most complex and resource-intensive part of the pharmaceutical manufacturing process. Material efficiency at this stage is critical for cost management, environmental responsibility, and overall process robustness.

Core Optimization Strategies

- Minimizing Synthesis Steps: A primary goal is to devise synthetic routes with the fewest possible steps, as each additional step typically decreases overall yield and increases material consumption, waste, and cost [2].

- Maximizing Yield and Atom Economy: Optimization focuses on maximizing the yield of each reaction and employing reactions with high atom economy, ensuring that a greater proportion of starting materials is incorporated into the final API [2].

- Strategic Reagent and Solvent Selection: Processes are optimized to avoid highly hazardous, expensive, or difficult-to-source reagents. Similarly, solvent selection and recycling are prioritized to reduce waste and environmental impact [1] [2].

- Process Intensification through Flow Chemistry: A transformative strategy is the shift from traditional batch processing to continuous flow chemistry. Flow reactors offer superior heat and mass transfer, improved safety for hazardous reactions, and reduced inventories of reactive chemicals, leading to more efficient and scalable processes [1].
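The effect of step count and atom economy can be made concrete with a little arithmetic. The sketch below is illustrative only: the per-step yields and molecular weights are hypothetical values, and the function names are not taken from any cited source.

```python
from math import prod


def overall_yield(step_yields):
    """Overall yield of a linear route: the product of the step yields (as fractions)."""
    return prod(step_yields)


def atom_economy(product_mw, reactant_mws):
    """Atom economy (%): MW of the desired product over the summed MW of all reactants."""
    return 100.0 * product_mw / sum(reactant_mws)


# Hypothetical routes that each run at 90% yield per step
six_step = overall_yield([0.90] * 6)
three_step = overall_yield([0.90] * 3)
print(f"6 steps: {six_step:.1%}  |  3 steps: {three_step:.1%}")

# Hypothetical molecular weights in g/mol
print(f"Atom economy: {atom_economy(180.0, [120.0, 100.0]):.1f}%")
```

Even at a constant 90% per step, halving the number of steps raises the overall yield noticeably, which is why route length is usually the first optimization target.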

Quantitative Comparison of API Synthesis Strategies

The table below compares the key characteristics of different API synthesis approaches, highlighting the efficiency advantages of modern strategies.

Table 1: Comparative Analysis of API Synthesis Strategies

| Strategy | Key Efficiency Metrics | Typical Yield Improvement | Waste Reduction Potential | Scalability |
|---|---|---|---|---|
| Traditional Batch Synthesis | High material inventory, Moderate yield | Baseline | Baseline | Well-established, but can face heat/mass transfer challenges |
| Catalytic Asymmetric Synthesis | High enantioselectivity, Reduced steps | Can increase overall yield by ~50% [5] | Up to 80% waste reduction via eliminated protecting groups [5] | Excellent for chiral API production |
| Continuous Flow Synthesis | Reduced reactor footprint, Superior process control | Improved due to enhanced control | Significant reduction in solvent use and by-products [1] | Highly scalable and reproducible [1] |
| Hybrid (Batch & Flow) Synthesis | Flexibility, balances unit operation suitability | Variable, process-dependent | Moderate to High | Flexible, allows for staged implementation of continuous processing [6] |

Experimental Protocol: Catalytic Asymmetric Hydrogenation

The following protocol, inspired by the optimization of Sitagliptin synthesis, illustrates a material-efficient API synthesis step [5].

- Objective: To enantioselectively hydrogenate an unprotected enamine intermediate to produce the chiral API, Sitagliptin, using a transition metal catalyst.

- Materials:

- Dehydro-Sitagliptin precursor

- Catalyst: Rhodium salt complexed with a ferrocenyl-based ligand (e.g., Rhodium((R,R)-FerroTANE))

- Solvent: Methanol or Ethanol

- Hydrogen gas (H₂)

- Procedure:

- Charge a pressure reactor with the dehydro precursor and the chiral rhodium catalyst (typical loading: 0.05-0.15 mol%).

- Add degassed solvent and purge the system with inert gas (e.g., N₂).

- Pressurize the reactor with H₂ to a predetermined pressure (e.g., 5-10 bar).

- Stir the reaction mixture at a controlled temperature (e.g., 50°C) until hydrogen uptake ceases or HPLC analysis shows >99% conversion.

- Depressurize the reactor and filter the mixture to remove the catalyst.

- Concentrate the filtrate under reduced pressure and purify the crude product via crystallization to obtain the pure API.

- Key Efficiency Metrics:

- Yield: >95%

- Enantiomeric Excess (e.e.): >99.5%

- Catalyst Recycling: >95% of the rhodium metal can be recovered and recycled [5].
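Two of the quantities in this protocol follow from simple formulas: the enantiomeric excess and the amount of catalyst implied by a mol% loading. The sketch below assumes that chiral HPLC peak areas are proportional to the amount of each enantiomer; the peak areas and batch size are hypothetical.

```python
def enantiomeric_excess(major_area, minor_area):
    """e.e. (%) from the peak areas of the major and minor enantiomers."""
    return 100.0 * (major_area - minor_area) / (major_area + minor_area)


def catalyst_mmol(substrate_mmol, loading_mol_percent):
    """Catalyst amount (mmol) for a given substrate amount and mol% loading."""
    return substrate_mmol * loading_mol_percent / 100.0


# Hypothetical chiral HPLC peak areas (arbitrary units)
print(f"e.e. = {enantiomeric_excess(99.8, 0.2):.1f}%")

# Hypothetical 1 mol (1000 mmol) batch at 0.10 mol% loading
print(f"catalyst = {catalyst_mmol(1000.0, 0.10):.2f} mmol")
```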

Material Efficiency in Formulation Development

Once the API is synthesized, it must be formulated into a stable, bioavailable, and patient-friendly drug product. Material efficiency here involves optimizing the composition and process to ensure consistent performance with minimal material waste.

The Role of Design of Experiments (DoE)

Empirical, one-factor-at-a-time (OFAT) approaches to formulation are inefficient and often fail to capture complex interactions between components. Design of Experiments (DoE) is a systematic, statistical method that allows for the simultaneous evaluation of multiple formulation and process variables to identify critical parameters and their optimal ranges [3] [4]. This approach maximizes information gain while minimizing the number of experimental trials, saving time, API, and excipients.

Quantitative Comparison of Formulation Optimization Approaches

The table below contrasts traditional and modern formulation development methods.

Table 2: Comparison of Formulation Optimization Methodologies

| Methodology | Key Features | Material & Time Efficiency | Identification of Interactions | Robustness of Final Design |
|---|---|---|---|---|
| One-Factor-at-a-Time (OFAT) | Simple, intuitive; alters one variable while holding others constant | Low; requires many runs, high material consumption | No; cannot detect factor interactions | Low; optimal point is often poorly defined |
| Screening Designs (e.g., Plackett-Burman) | Identifies the most influential factors from a large set with few runs | High (for initial screening) | Limited; main effects only | Not Applicable (used for screening only) |
| Response Surface Methodologies (e.g., Central Composite) | Models nonlinear responses and precisely locates optimum | Moderate to High | Yes; models complex interactions | High; design space is thoroughly mapped |
| Full Factorial Design | Evaluates all possible combinations of factors at given levels | Moderate; comprehensive but can become large | Yes; all two-factor interactions can be modeled | High |

Experimental Protocol: DoE for a Delayed-Release Tablet

This protocol outlines the use of a full factorial DoE to optimize a direct compression formulation for a delayed-release tablet, as demonstrated in a study on bisphosphonate drugs [3].

- Objective: To optimize the concentrations of diluents, glidant, and lubricant to achieve a formulation with acceptable hardness, friability, and disintegration time.

- Experimental Design: A 2³ full factorial design (three factors at two levels each), resulting in 8 experimental runs, plus potential center points.

- Factors and Levels:

- Factor A: Diluent ratio (Ceolus KG-802 : ProSolv SMCC HD 90) - Levels: 50:50, 75:25

- Factor B: Glidant (Colloidal Silicon Dioxide) concentration - Levels: 0.5%, 1.0%

- Factor C: Lubricant (Stearic Acid) concentration - Levels: 1.0%, 2.0%

- Procedure:

- Blending: For each of the 8 formulations, mix the API (e.g., 35 mg), specified proportions of diluents, and glidant in a twin-shell blender for 15 minutes. Add the lubricant and blend for an additional 3 minutes.

- Compression: Compress the powder blends into tablets using a rotary tablet press, maintaining a fixed target weight and compression force.

- Evaluation: Test the tablets for critical quality attributes (CQAs): hardness, friability, and disintegration time.

- Data Analysis:

- Input the CQA data into statistical software (e.g., JMP, Design-Expert).

- Perform multiple regression analysis to build models for each response.

- Use contour plots and optimization functions to identify a design space where all CQAs meet the desired criteria with high robustness.
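The 2³ design and a first-pass analysis can be sketched in a few lines before moving to dedicated statistical software. The factor names mirror the protocol above, but the hardness values are hypothetical, and the main-effect calculation is a simplified stand-in for the regression such software would perform.

```python
from itertools import product

# Factors and levels from the protocol; keys are illustrative labels
FACTORS = {
    "A_diluent_ratio": ("50:50", "75:25"),
    "B_glidant_pct": (0.5, 1.0),
    "C_lubricant_pct": (1.0, 2.0),
}


def full_factorial(n_factors):
    """All combinations of coded levels (-1, +1) for n two-level factors."""
    return list(product((-1, 1), repeat=n_factors))


def main_effects(design, responses):
    """Main effect per factor: mean response at +1 minus mean response at -1."""
    half = len(design) / 2
    effects = []
    for j in range(len(design[0])):
        high = sum(y for run, y in zip(design, responses) if run[j] == 1)
        low = sum(y for run, y in zip(design, responses) if run[j] == -1)
        effects.append((high - low) / half)
    return effects


design = full_factorial(len(FACTORS))

# Hypothetical tablet hardness (kp), one value per run in design order
hardness = [8.0, 7.0, 9.0, 8.0, 10.0, 9.0, 11.0, 10.0]

for name, effect in zip(FACTORS, main_effects(design, hardness)):
    print(f"{name}: {effect:+.2f} kp")
```

Coding the levels as -1 and +1 keeps the effects comparable across factors with different units, which is the usual convention in factorial analysis.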

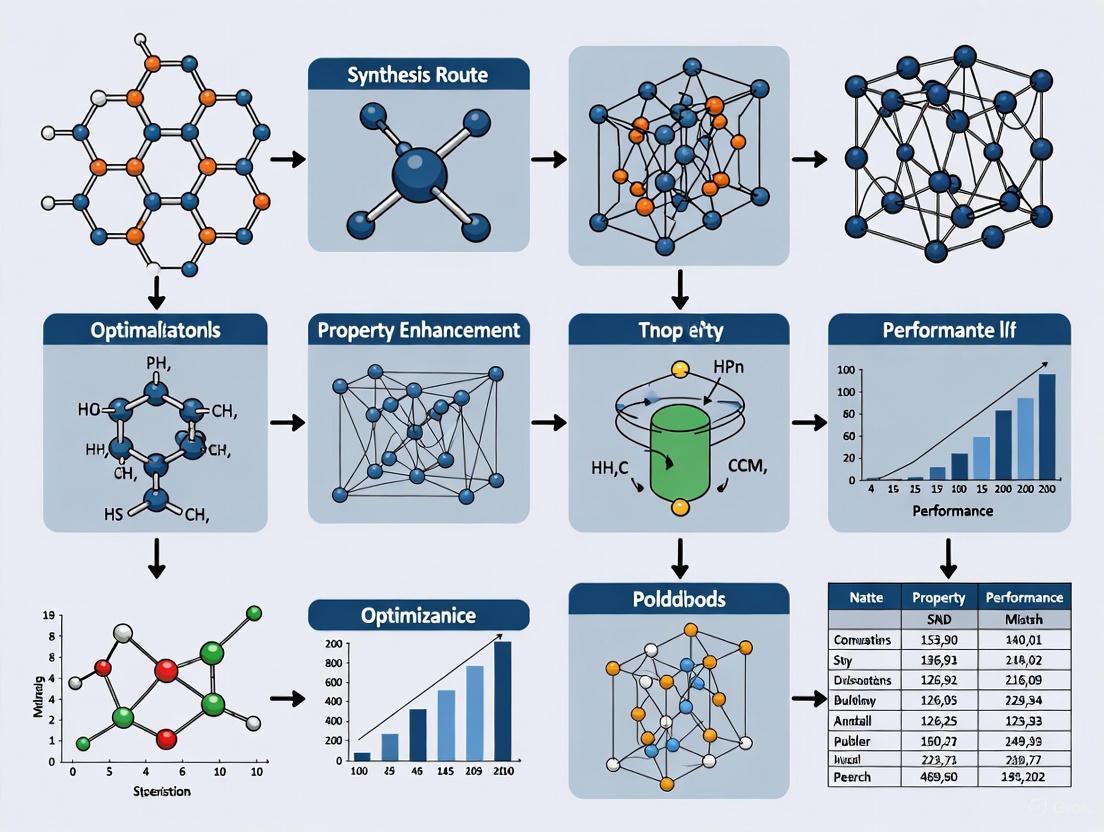

Integrated Workflow and Essential Research Tools

The path to material efficiency requires a connected strategy from molecule to medicine. The following workflow diagram visualizes this integrated, decision-based process, and the subsequent toolkit provides details on essential materials.

Diagram: Material Optimization Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

The following table catalogues essential materials and their functions in developing and optimizing efficient pharmaceutical processes.

Table 3: Essential Reagents and Materials for Optimization Research

| Item | Function in Research & Development |
|---|---|
| Key Starting Materials (KSMs) | Commercially available raw compounds that form the foundation of API synthesis; selection based on cost, stability, and synthetic accessibility [7]. |
| Advanced Intermediates | Custom-synthesized compounds (e.g., chiral alcohols, aromatic halides) that serve as crucial "checkpoints" in multi-step API synthesis, enabling structural and stereochemical control [7]. |
| Metathesis Catalysts (e.g., catMETium IMesPCy) | Ruthenium-carbene complexes used in olefin metathesis reactions (ring-closing, cross-metathesis) to efficiently construct complex carbon frameworks for APIs, reducing step count [5]. |
| Asymmetric Hydrogenation Catalysts | Chiral ligands (e.g., FerroTANE, MeOBIPHEP) complexed with metals like Rh; enable high-yield, enantioselective synthesis of chiral APIs, avoiding wasteful racemic separations [5]. |
| Spray Dried Dispersions (SDDs) | Enabling formulation technology using polymers to create amorphous solid dispersions, overcoming API solubility limitations and improving bioavailability [4]. |
| Functional Excipients (Diluents, Disintegrants) | Inactive components (e.g., Microcrystalline Cellulose, Sodium Starch Glycolate) optimized via DoE to ensure drug product stability, manufacturability, and performance [3]. |

The pursuit of material efficiency is a continuous and multi-faceted effort that is fundamental to the future of pharmaceutical development. As this guide has detailed, achieving excellence requires a comparative and data-driven mindset, leveraging advanced synthetic methodologies like catalysis and flow chemistry alongside systematic development frameworks like DoE. The integration of these strategies—from the initial API synthesis to the final drug product—creates a cohesive and powerful approach to optimization. For researchers and scientists, mastering these tools and concepts is paramount to developing robust, sustainable, and economically viable pharmaceutical processes that successfully navigate the path from the laboratory to the patient.

Material optimization is a critical, multi-faceted challenge in engineering and manufacturing, requiring a delicate balance between competing objectives. Researchers and developers strive to minimize cost and environmental impact while maximizing production speed and final product quality. The emergence of sophisticated computational techniques and advanced materials has transformed this field from a domain of trial-and-error into a discipline driven by predictive modeling and data-centric strategies. This guide provides a comparative analysis of contemporary material optimization strategies, evaluating their performance through experimental data and structured protocols. It is structured to aid professionals in selecting the appropriate methodology for their specific application, whether it be in additive manufacturing, construction, or the development of sustainable supply chains.

Comparative Analysis of Optimization Algorithms

The core of modern material optimization lies in selecting the appropriate algorithmic strategy. Different algorithms excel in different scenarios, depending on whether the goal is single-objective maximization, multi-objective trade-off, or hitting a specific target value. The following table compares the performance of key optimization algorithms as demonstrated in recent studies.

Table 1: Performance Comparison of Optimization Algorithms in Material and Process Design

| Optimization Algorithm | Application Context | Key Performance Findings | Experimental Outcome Metrics |
|---|---|---|---|
| Genetic Algorithm (GA) | Tuning LSBoost model for predicting mechanical properties of 3D-printed nanocomposites [8] | Consistently outperformed BO and SA for most properties [8]. | For yield strength: RMSE of 1.9526 MPa, R² of 0.9713 [8]. |
| Genetic Algorithm (GA) | Time-Cost Trade-off in Linear Repetitive Construction Projects [9] | Achieved a 3.25% reduction in direct costs and a 20% reduction in indirect costs [9]. | Total construction cost reduced by 7% [9]. |
| Bayesian Optimization (BO) | Tuning LSBoost model for predicting mechanical properties of 3D-printed nanocomposites [8] | Excelled in specific predictions, such as modulus of elasticity [8]. | For modulus of elasticity: R² of 0.9776 with test RMSE of 130.13 MPa [8]. |
| Particle Swarm Optimization (PSO) | Time-Cost Trade-off in Linear Repetitive Construction Projects [9] | Demonstrated slightly superior cost performance compared to GA [9]. | 4% reduction in direct costs and a 20% decrease in total project duration [9]. |
| Target-Oriented Bayesian Optimization (t-EGO) | Discovering materials with target-specific properties (e.g., shape memory alloy transformation temperature) [10] | Required fewer experimental iterations than standard BO to reach a target value [10]. | Achieved a transformation temperature within 2.66 °C of the target (440 °C) in only 3 experimental iterations [10]. |
| Multi-Objective Bayesian Optimization (MOBO) | Multi-objective optimization in material extrusion (e.g., print accuracy and homogeneity) [11] | Efficiently identifies the Pareto front, illustrating trade-offs between competing objectives [11]. | Finds a set of optimal solutions without a single objective dominating others [11]. |
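The Pareto front named in the MOBO row is the set of candidates that no other candidate beats on every objective at once. The sketch below shows only that non-dominated filter, not the Bayesian optimization loop itself. It assumes both objectives are expressed as quantities to minimize, and the data points are hypothetical.

```python
def dominates(p, q):
    """True if p is at least as good as q everywhere and strictly better somewhere."""
    return all(a <= b for a, b in zip(p, q)) and any(a < b for a, b in zip(p, q))


def pareto_front(points):
    """Return the points that are not dominated by any other point (minimization)."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


# Hypothetical (dimensional error, inhomogeneity) pairs; lower is better for both
candidates = [(0.10, 0.90), (0.20, 0.40), (0.30, 0.50), (0.40, 0.20), (0.50, 0.60)]

for point in pareto_front(candidates):
    print(point)
```

Objectives that should be maximized, such as print accuracy, can be negated or converted to an error term before applying the same filter.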

Detailed Experimental Protocols

To ensure reproducibility and provide a clear understanding of the methodological rigor behind the data, this section details the experimental protocols from the cited studies.

This protocol outlines the process for optimizing a machine learning model used to predict the properties of 3D-printed materials.

- Sample Fabrication: Tensile specimens are fabricated via Fused Deposition Modeling (FDM) using a Taguchi L27 orthogonal array design. Key process parameters varied include extrusion rate, nanoparticle concentration, layer thickness, infill density, and infill geometry [8].

- Mechanical Testing: The fabricated specimens undergo uniaxial tension testing to determine experimental values for modulus of elasticity (E), yield strength (Sy), and toughness (Ku) [8].

- Model Training & Tuning: A Least-Squares Boosting (LSBoost) model is constructed to map process parameters to mechanical properties. The hyperparameters of this model are then tuned using three distinct optimization algorithms—Bayesian Optimization (BO), Simulated Annealing (SA), and Genetic Algorithm (GA)—independently. The tuning process minimizes a composite objective function combining Root Mean Square Error (RMSE) and (1 − R²) loss metrics [8].

- Performance Validation: The performance of each optimized model is evaluated and compared using the RMSE and R² of its predictions against the hold-out experimental test data [8].
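The tuning objective described above combines RMSE with (1 − R²). A minimal sketch of those metrics follows; the equal weighting of the two terms is an assumption made here for illustration, since the text does not state how they are combined, and the strength values are hypothetical.

```python
from math import sqrt


def rmse(y_true, y_pred):
    """Root mean square error between measured and predicted values."""
    return sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def composite_loss(y_true, y_pred, weight=0.5):
    """Weighted sum of RMSE and (1 - R^2); the weight is an illustrative assumption."""
    return weight * rmse(y_true, y_pred) + (1.0 - weight) * (1.0 - r_squared(y_true, y_pred))


# Hypothetical yield strengths (MPa): measured vs. model predictions
measured = [30.0, 32.0, 34.0, 36.0]
predicted = [31.0, 32.0, 33.0, 36.0]

print(f"RMSE = {rmse(measured, predicted):.4f} MPa")
print(f"R^2  = {r_squared(measured, predicted):.4f}")
print(f"loss = {composite_loss(measured, predicted):.4f}")
```

Because RMSE carries units while (1 − R²) does not, a practical implementation would likely normalize RMSE before summing.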

This protocol describes a metaheuristic-based framework for optimizing schedules and costs in linear repetitive projects like highways or pipelines.

- Task Decomposition: The repetitive construction project is broken down into its fundamental tasks and sub-tasks [9].

- Method Definition: For each sub-task, multiple construction methods are identified, each with its associated duration and direct cost [9].

- LOB Scheduling: The Line of Balance (LOB) technique is employed to schedule the project, ensuring work crew continuity and a logical sequence for the repetitive units [9].

- Metaheuristic Optimization: Two algorithms, Genetic Algorithm (GA) and Particle Swarm Optimization (PSO), are implemented. Their fitness functions are designed to incorporate project scheduling constraints and to minimize total project cost (direct + indirect costs) and duration. The algorithms explore different combinations of construction methods across sub-tasks to find the optimal trade-off [9].

- Solution Evaluation: The solutions proposed by GA and PSO are compared based on key performance indicators, including total project duration, direct costs, indirect costs, and total project cost [9].
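The fitness evaluation at the heart of this protocol can be sketched on a tiny instance. To stay short, the example makes two simplifying assumptions that differ from the study: sub-tasks run strictly in sequence instead of being scheduled with LOB, and exhaustive enumeration stands in for GA and PSO, which is only feasible because the instance is so small. All durations, costs, and names are hypothetical.

```python
from itertools import product

# Hypothetical options per sub-task: (duration in days, direct cost)
METHODS = [
    [(10, 1000), (7, 1400)],  # sub-task 1
    [(8, 800), (6, 1050)],    # sub-task 2
    [(5, 500), (4, 700)],     # sub-task 3
]
INDIRECT_COST_PER_DAY = 150  # hypothetical overhead rate


def evaluate(choice):
    """Return (total cost, duration, direct cost) for one method per sub-task."""
    duration = sum(METHODS[i][m][0] for i, m in enumerate(choice))
    direct = sum(METHODS[i][m][1] for i, m in enumerate(choice))
    return direct + INDIRECT_COST_PER_DAY * duration, duration, direct


def best_plan():
    """Enumerate every combination of methods and keep the cheapest one."""
    choices = product(*(range(len(options)) for options in METHODS))
    return min(choices, key=lambda c: evaluate(c)[0])


plan = best_plan()
total, duration, direct = evaluate(plan)
print(f"methods={plan} total={total} duration={duration} d direct={direct}")
```

A metaheuristic would call the same kind of `evaluate` function as its fitness measure while sampling only a fraction of the combinations.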

This protocol is designed for finding a material with a specific property value, rather than simply a maximum or minimum.

- Target Definition: A specific target value for a material property is defined (e.g., a phase transformation temperature of 440°C for a shape memory alloy) [10].

- Initial Data Collection: A small initial dataset of material compositions and their corresponding properties is gathered, often from literature or preliminary experiments [10].

- Modeling with Gaussian Process: A Gaussian Process (GP) model is trained on the available data to create a probabilistic map between material composition and the property of interest. This model provides both a predicted mean (μ) and an uncertainty (s) for any point in the composition space [10].

- Candidate Selection via t-EI: The "target-specific Expected Improvement" (t-EI) acquisition function is used to select the next candidate material to test. Unlike standard EI, t-EI calculates the expected improvement of getting closer to the target value, factoring in both the predicted mean and the uncertainty [10].

- Closed-Loop Experimentation: The selected candidate is synthesized and tested experimentally. The result is added to the dataset, and the process loops back to the Gaussian Process modeling step. The loop continues until a material satisfying the target criterion is discovered [10].
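The Gaussian Process step supplies the predicted mean (μ) and uncertainty (s) that the acquisition function consumes. The sketch below computes both with NumPy for a single composition variable. The squared-exponential kernel, its hyperparameters, and the data are all illustrative assumptions and not the settings of the cited study.

```python
import numpy as np


def rbf_kernel(a, b, length_scale, variance):
    """Squared-exponential covariance between two sets of 1-D inputs."""
    a = np.asarray(a, dtype=float).reshape(-1, 1)
    b = np.asarray(b, dtype=float).reshape(1, -1)
    return variance * np.exp(-0.5 * (a - b) ** 2 / length_scale ** 2)


def gp_posterior(x_train, y_train, x_query, length_scale=1.0, variance=1.0, noise=1e-6):
    """Posterior mean (mu) and standard deviation (s) of a GP fitted to mean-centered data."""
    y_train = np.asarray(y_train, dtype=float)
    y_mean = y_train.mean()
    k_tt = rbf_kernel(x_train, x_train, length_scale, variance)
    k_tt = k_tt + noise * np.eye(len(y_train))  # small jitter for numerical stability
    k_tq = rbf_kernel(x_train, x_query, length_scale, variance)
    chol = np.linalg.cholesky(k_tt)
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y_train - y_mean))
    mu = y_mean + k_tq.T @ alpha
    v = np.linalg.solve(chol, k_tq)
    var = variance - np.sum(v ** 2, axis=0)
    return mu, np.sqrt(np.clip(var, 0.0, None))


# Hypothetical data: composition fraction vs. transformation temperature (°C)
x_known = [0.0, 0.5, 1.0]
y_known = [400.0, 450.0, 430.0]
x_new = np.linspace(0.0, 1.0, 5)

mu, s = gp_posterior(x_known, y_known, x_new, length_scale=0.3, variance=400.0)
for x, m, sd in zip(x_new, mu, s):
    print(f"x={x:.2f}  mu={m:.1f}  s={sd:.2f}")
```

The uncertainty shrinks to nearly zero at measured compositions and grows between them, which is what lets the acquisition function balance exploitation against exploration.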

Workflow and Relationship Visualizations

The following diagrams illustrate the core logical workflows for the optimization strategies discussed, providing a visual summary of the experimental protocols.

Multi-Objective Bayesian Optimization (MOBO) Workflow

Target-Oriented Bayesian Optimization (t-EGO) Workflow

The Scientist's Toolkit: Key Solutions for Material Optimization

Successful implementation of the described protocols relies on a suite of computational and experimental tools. The following table catalogues essential "research reagent solutions" in the context of material optimization.

Table 2: Essential Research Reagent Solutions for Material Optimization

| Item / Solution | Function in Optimization | Application Example |
|---|---|---|
| Bayesian Optimization (BO) | A machine learning framework that builds a probabilistic model of an objective function to efficiently find its optimum with minimal evaluations [10] [11]. | Used to optimize multiple parameters in material extrusion to maximize print accuracy and homogeneity [11]. |
| Genetic Algorithm (GA) | A metaheuristic optimization algorithm inspired by natural selection, effective at exploring complex search spaces for near-optimal solutions [8] [9]. | Tuning hyperparameters of an LSBoost model predicting mechanical properties of 3D-printed nanocomposites [8]. |
| Particle Swarm Optimization (PSO) | A population-based optimization technique that simulates social behavior to converge on optimal solutions [9]. | Solving the time-cost trade-off problem in linear repetitive construction projects [9]. |
| Finite Element Model (FEM) | A computational simulation tool used to predict how materials and structures respond to physical forces, heat, and other effects [12]. | Comparing the structural performance of aluminum vs. carbon fiber railway car bodies under standard loads [12]. |
| High-Throughput Computing (HTC) | A paradigm that uses parallel processing to perform large-scale simulations, rapidly screening vast material libraries [13]. | Accelerating the discovery of novel materials by computing properties for thousands of compounds via first-principles calculations [13]. |
| Gaussian Process (GP) | A non-parametric model used in BO that provides a prediction along with an estimate of its own uncertainty, crucial for guiding experimental selection [10]. | Modeling the relationship between shape memory alloy composition and its transformation temperature [10]. |
| Building Information Modeling (BIM) | A digital representation of physical and functional characteristics of a facility, enabling integrated analysis of cost, energy, and carbon [14]. | Implementing value engineering to reduce project costs and embodied carbon emissions through automated decision support [14]. |

The comparative analysis presented in this guide reveals that no single optimization strategy is universally superior. The choice of algorithm is deeply contingent on the core objectives of the project. Genetic Algorithms demonstrate robust performance in traditional engineering optimization problems, such as cost minimization and model tuning. Bayesian Optimization frameworks, particularly their advanced variants like Multi-Objective BO and target-oriented BO, offer a powerful, data-efficient approach for navigating complex, multi-faceted design spaces and for zeroing in on precise property targets. The integration of these computational strategies with high-fidelity simulation tools and sustainable procurement principles, as seen in the development of low-carbon supply chains for electric vehicle batteries [15], represents the forefront of material optimization. This synergy enables researchers and professionals to systematically balance the critical axes of cost, speed, quality, and environmental impact, paving the way for more efficient and sustainable material development.

The Role of Computational Tools and Rational Design in Modern Optimization

The field of modern optimization has undergone a paradigm shift, moving from traditional trial-and-error approaches to sophisticated computational strategies that enable the rational design of materials, drugs, and engineered components. This transformation is driven by the integration of powerful algorithms, machine learning, and high-performance computing, which together allow researchers to navigate complex design spaces far more efficiently. Computational design has emerged as a distinct era in engineering, where designs are represented as programs that capture entire design spaces, and computers systematically explore optimal parameters [16]. This approach stands in stark contrast to earlier paradigms, offering iteration speeds far faster than the sequential CAD model rebuilds used in previous generations of engineering software.

The fundamental goal of optimization—to find the best solution from a set of available alternatives by systematically choosing input values, computing function outputs, and recording the best values found—remains unchanged [17]. However, the methodologies and tools available have evolved dramatically, enabling solutions to problems of increasing complexity across diverse scientific domains. From de novo protein design [18] to topology optimization for advanced manufacturing [19] and Bayesian optimization for materials discovery [10], computational tools are now indispensable across research and industrial applications.

Comparative Analysis of Optimization Software and Tools

General-Purpose Optimization Software

A diverse ecosystem of optimization software libraries supports scientific research and industrial applications. These tools vary in their specialized capabilities, licensing models, and programming language support, enabling researchers to select tools appropriate for their specific problem domains and technical constraints.

Table 1: Comparison of General-Purpose Optimization Software

| Name | Programming Language | Latest Version | License Model | Specialized Capabilities |
|---|---|---|---|---|
| ALGLIB | C++, C#, Python, FreePascal | 3.19.0 (June 2022) | Dual (Commercial, GPL) | Linear, quadratic, nonlinear programming |
| AMPL | C, C++, C#, Python, Java, Matlab, R | October 2018 | Dual (Commercial, academic) | Algebraic modeling language for linear, mixed-integer, nonlinear optimization |
| Artelys Knitro | C, C++, C#, Python, Java, Julia, Matlab, R | 11.1 (November 2018) | Commercial, Academic, Trial | Nonlinear optimization, MINLP, MPEC, nonlinear least squares |
| CPLEX | C, C++, Java, C#, Python, R | 20.1 (December 2020) | Commercial, academic, trial | Mathematical programming, constraint programming |
| GEKKO | Python | 0.2.8 (August 2020) | Dual (Commercial, academic) | Machine learning, optimization of mixed-integer, differential algebraic equations |
| GNU Linear Programming Kit | C | 4.52 (July 2013) | GPL | Linear programming, mixed integer programming |
| MIDACO | C++, C#, Python, Matlab, Octave, Fortran, R, Java, Excel, VBA, Julia | 6.0 (March 2018) | Dual (Commercial, academic) | Single/multi-objective optimization, MINLP, parallelization |
| SciPy | Python | 1.13.1 (November 2023) | BSD | General purpose numerical/scientific computing |

For non-commercial research, open-source tools like SciPy and the GNU Linear Programming Kit provide robust capabilities without licensing costs, though they may lack specialized features found in commercial alternatives. Commercial tools like Artelys Knitro and CPLEX often offer enhanced performance, specialized algorithms, and technical support, making them valuable for industrial applications [17].

Domain-Specific Optimization Methodologies

Beyond general-purpose optimization software, specialized methodologies have emerged to address the unique challenges of specific scientific domains, particularly in protein design, drug discovery, and materials science.

Table 2: Domain-Specific Optimization Tools and Their Applications

| Domain | Tools/Methods | Key Applications | Performance Characteristics |
|---|---|---|---|
| Protein Design | ROSETTA, K* algorithm, DEZYMER, ORBIT [18] | Design of therapeutic proteins, metalloproteins, enzymes with novel functionalities | Success in designing proteins that fold, catalyze, and signal |
| Drug Discovery | Molecular docking, de novo design, virtual screening [20] | Prediction of ligand-receptor binding modes, identification of novel ligands | AutoDock (29.5% usage), GOLD (17.5%), Glide (13.2%) based on publication analysis |
| Materials Science | Bayesian optimization, reinforcement learning, topology optimization [21] [19] [10] | Discovery of shape memory alloys, metal-organic frameworks, transition metal complexes | Target-oriented BO finds materials with specific properties in fewer experimental iterations |
| Engineering Design | Topology optimization, implicit modeling (SDFs) [19] [16] | Structural optimization for 3D printing, lightweight components | SiMPL method reduces iterations by up to 80% compared to traditional algorithms |

The selection of appropriate optimization strategies is highly dependent on the problem domain. For instance, bio-inspired algorithms excel at navigating complex, non-linear spaces with minimal computational complexity and reduced iterations [22], while Bayesian optimization approaches are particularly valuable when experimental data is limited and costly to obtain [10].

Experimental Protocols and Methodologies

Target-Oriented Bayesian Optimization for Materials Design

Traditional Bayesian optimization focuses on finding maxima or minima of unknown functions, but many materials applications require achieving specific target property values rather than extremes. Target-oriented Bayesian optimization (t-EGO) addresses this need by employing a novel acquisition function (t-EI) that samples candidates based on their potential to approach the target value from either above or below, incorporating prediction uncertainties in the process [10].

Experimental Protocol:

- Problem Formulation: Define the target property value t for the desired material.

- Initial Data Collection: Compile initial experimental measurements or computational data points (y₁, y₂, ..., yₙ).

- Model Construction: Fit a Gaussian process model directly to the unprocessed property data y.

- Candidate Selection: Apply the t-EI acquisition function to identify the most promising candidate materials:

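- Calculate Dis_min = min(|y₁ - t|, |y₂ - t|, ..., |yₙ - t|) = |y_t.min - t|, where y_t.min is the measured value closest to the target t

- Compute expected improvement: t-EI = E[max(0, |y_t.min - t| - |Y - t|)], where Y is the Gaussian process prediction for the candidate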

- Select the candidate with maximum t-EI value

- Experimental Evaluation: Synthesize and characterize the selected candidate material.

- Iterative Refinement: Update the model with the new experimental results and repeat candidate selection and experimental evaluation until the target property is achieved within an acceptable tolerance.
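The t-EI expression in the candidate selection step can be evaluated numerically once a model supplies a mean and standard deviation for each candidate. The sketch below assumes the prediction Y is normally distributed and integrates the expectation with a simple midpoint rule. The function name, candidate labels, and all numbers are hypothetical, and the published method may evaluate the expectation differently.

```python
from math import exp, pi, sqrt


def target_expected_improvement(mu, s, target, y_observed, n_steps=20000):
    """Midpoint-rule estimate of E[max(0, |y_t.min - t| - |Y - t|)] for Y ~ N(mu, s^2), s > 0."""
    dis_min = min(abs(y - target) for y in y_observed)
    if dis_min == 0.0:
        return 0.0  # a measured sample already hits the target exactly
    # The integrand is non-zero only where |Y - t| < dis_min
    lower = target - dis_min
    width = 2.0 * dis_min / n_steps
    total = 0.0
    for i in range(n_steps):
        y = lower + (i + 0.5) * width
        density = exp(-0.5 * ((y - mu) / s) ** 2) / (s * sqrt(2.0 * pi))
        total += (dis_min - abs(y - target)) * density
    return total * width


# Hypothetical measurements and target (°C)
target = 440.0
measured = [425.0, 452.0]

# Hypothetical model predictions per candidate: (mean, standard deviation)
predictions = {"A": (441.0, 2.0), "B": (430.0, 15.0), "C": (470.0, 5.0)}

scores = {name: target_expected_improvement(m, sd, target, measured)
          for name, (m, sd) in predictions.items()}
best = max(scores, key=scores.get)
print(f"next candidate: {best}")
```

Because the improvement is measured by distance to the target from either side, a confident prediction near the target scores higher than an uncertain one centered further away.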

This methodology has demonstrated significant efficiency improvements, reportedly requiring approximately 1 to 2 times fewer experimental iterations than EGO or multi-objective acquisition function strategies to reach the same target [10]. In one application, researchers discovered a thermally responsive shape memory alloy, Ti₀.₂₀Ni₀.₃₆Cu₀.₁₂Hf₀.₂₄Zr₀.₀₈, with a transformation temperature only 2.66°C from the target in just 3 experimental iterations [10].

Target-Oriented Bayesian Optimization Workflow

Molecular Docking for Drug Discovery

Molecular docking represents a fundamental computational methodology in rational drug design, aiming to predict the optimal conformation of a ligand within a protein binding site and estimate the binding affinity [20].

Experimental Protocol:

- Structure Preparation:

- Obtain 3D structures of the target receptor and ligand molecules from databases (PDB for proteins, ZINC for small molecules)

- Add hydrogen atoms, assign partial charges, and define protonation states

- Energy minimization to relieve steric clashes

- Binding Site Definition:

- Identify the active binding site based on experimental data or computational prediction

- Define a grid box encompassing the binding site for sampling

Docking Simulation:

- Employ sampling algorithms (genetic algorithm, Monte Carlo) to generate multiple ligand conformations

- Use scoring functions (empirical, force-field based, knowledge-based) to evaluate binding poses

- Select top-ranked poses based on scoring functions

Post-Processing:

- Rescoring of poses with more sophisticated functions (MM/PBSA, MM/GBSA)

- Molecular dynamics simulations to account for flexibility and solvation effects

- Binding affinity calculations and visual inspection of interactions
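The grid-box step of the protocol can be illustrated with a short Python sketch. It assumes the binding-site atoms are already available as Cartesian coordinates in angstroms and returns a box center and edge lengths. The function name `grid_box` and the default padding are hypothetical choices, not settings taken from any particular docking program.

```python
def grid_box(site_coords, padding=4.0):
    """Axis-aligned box enclosing binding-site atoms plus a margin.

    site_coords : iterable of (x, y, z) tuples in angstroms
    padding     : margin added on every side (assumed value)
    Returns (center, size), each an (x, y, z) tuple.
    """
    coords = list(site_coords)
    if not coords:
        raise ValueError("at least one coordinate is required")
    mins = [min(c[i] for c in coords) for i in range(3)]
    maxs = [max(c[i] for c in coords) for i in range(3)]
    # Center is the midpoint of the bounding box along each axis.
    center = tuple((lo + hi) / 2.0 for lo, hi in zip(mins, maxs))
    # Edge length is the atom extent plus the margin on both sides.
    size = tuple((hi - lo) + 2.0 * padding for lo, hi in zip(mins, maxs))
    return center, size
```

The padding controls how much room the sampling algorithm has around the site: too small a box can exclude valid poses, while too large a box increases the search cost.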

The main challenge in molecular docking remains the accurate prediction of binding energies, as scoring functions often fail to account for all interfering forces between ligand and receptor, ligand solvation, entropic changes, and receptor flexibility [20]. Despite these limitations, molecular docking has become an indispensable tool in early-stage drug discovery, with applications ranging from the study of antitubulin agents with anti-cancer activity to the investigation of estrogen receptor binding domains [20].

Topology Optimization with SiMPL Algorithm

Topology optimization is a computationally driven technique that determines the most effective material distribution within a design domain to achieve optimal performance based on specified criteria [19]. The recently developed SiMPL (Sigmoidal Mirror descent with a Projected Latent variable) algorithm addresses key limitations of traditional approaches.

Experimental Protocol:

- Design Domain Definition:

  - Define the allowable design space and boundary conditions

  - Apply loading conditions and constraints based on functional requirements

  - Discretize the domain using finite element mesh

- Material Model Setup:

  - Initialize material distribution (often uniform)

  - Define material properties (Young's modulus, Poisson's ratio)

  - Set volume fraction constraint

- SiMPL Optimization Loop:

  - Transform the design space [0,1] to a latent space (-∞, +∞) using sigmoidal transformation

  - Perform finite element analysis to compute structural response

  - Calculate sensitivity information (derivatives of objective function)

  - Update design variables in the latent space

  - Transform back to physical space [0,1] for material distribution

  - Check convergence criteria; if not met, repeat the analysis

The SiMPL method's key innovation lies in eliminating "impossible" solutions (values less than 0 or more than 1) that traditionally slow down optimization processes. Benchmark tests demonstrate that SiMPL requires up to 80% fewer iterations to arrive at an optimal design compared to traditional algorithms, potentially reducing computation time from days to hours [19].
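The idea of updating in a latent space so that densities can never leave the interval (0, 1) can be sketched as follows. This is a simplified illustration and not the published SiMPL implementation. It assumes a gradient vector is supplied by some finite element analysis (here just a list of numbers), takes one gradient step on the latent variables, and then finds a constant shift by bisection so that the mean density matches the volume fraction. The name `simpl_like_step` and all parameter values are hypothetical.

```python
import math


def sigmoid(x):
    """Numerically stable logistic function mapping the reals to (0, 1)."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def logit(p):
    """Inverse of the sigmoid; p must lie strictly between 0 and 1."""
    return math.log(p / (1.0 - p))


def simpl_like_step(rho, grad, step, vol_frac, tol=1e-10):
    """One latent-space update followed by a volume-preserving shift.

    rho      : current densities, each strictly in (0, 1)
    grad     : objective sensitivities (assumed to come from an FEA solve)
    step     : step size for the latent update
    vol_frac : required mean density, strictly in (0, 1)
    """
    # Gradient step on the unconstrained latent variables.
    psi = [logit(r) - step * g for r, g in zip(rho, grad)]

    def volume(shift):
        return sum(sigmoid(p + shift) for p in psi) / len(psi)

    # Bracket the shift, then bisect; volume() increases with the shift.
    lo, hi = -1.0, 1.0
    while volume(lo) > vol_frac:
        lo *= 2.0
    while volume(hi) < vol_frac:
        hi *= 2.0
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if volume(mid) < vol_frac:
            lo = mid
        else:
            hi = mid
    shift = 0.5 * (lo + hi)
    # Mapping back through the sigmoid keeps every density inside (0, 1).
    return [sigmoid(p + shift) for p in psi]
```

Because every density is produced by the sigmoid, no clipping or correction step is needed to keep the design within bounds, which is the property the paragraph above attributes to the method.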

Figure: Topology Optimization with SiMPL Algorithm

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful implementation of computational optimization strategies requires access to specialized software tools, databases, and computational resources. The following table outlines key resources across different optimization domains.

Table 3: Essential Research Reagents and Computational Tools

| Category | Specific Tools/Resources | Function/Purpose | Access Method |

|---|---|---|---|

| Protein Design | ROSETTA, DEZYMER, ORBIT [18] | De novo protein design, enzyme engineering, therapeutic protein development | Academic licensing, open-source versions |

| Molecular Docking | AutoDock, GOLD, Glide [20] | Prediction of ligand-receptor interactions, virtual screening | Commercial licensing, academic packages |

| Materials Databases | Cambridge Structural Database (CSD), CoRE MOF [21] | Source of experimental crystal structures for organic compounds, metal-organic compounds, and MOFs | Subscription-based access |

| Data Extraction | ChemDataExtractor [21] | Natural language processing for extracting materials data from literature | Open-source Python package |

| Bayesian Optimization | t-EGO, EGO, MOAF [10] | Efficient materials discovery with limited experimental data | Custom implementation, research code |

| Topology Optimization | SiMPL algorithm, commercial FEA software [19] | Structural optimization for additive manufacturing | Research implementation, commercial packages |

| Implicit Modeling | nTopology, implicit modeling with SDFs [16] | Geometry representation for computational design | Commercial software licensing |

| Quantum Chemistry | DFT packages, molecular dynamics software [21] | Prediction of material properties, reaction mechanisms | Academic licensing, open-source packages |

The selection of appropriate tools depends on multiple factors, including the specific research domain, available computational resources, licensing constraints, and the balance between ease of use and methodological sophistication. Open-source tools often provide greater transparency and customization options, while commercial software typically offers enhanced support, documentation, and user interfaces.

Performance Comparison and Benchmarking

Computational Efficiency Across Methods

The performance of optimization algorithms varies significantly based on problem complexity, dimensionality, and specific application requirements. Recent benchmarking studies provide insights into the relative strengths of different approaches.

Table 4: Performance Comparison of Optimization Techniques

| Method Category | Typical Convergence Speed | Scalability to High Dimensions | Implementation Complexity | Best-Suited Applications |

|---|---|---|---|---|

| Bio-inspired Algorithms (GA, PSO, ACO) [22] | Moderate to fast | Moderate | Low to moderate | Engineering design, scheduling, parameter optimization |

| Bayesian Optimization (t-EGO, EGO) [10] | Fast (fewer experiments) | High with appropriate kernels | Moderate | Materials discovery, drug design (expensive evaluations) |

| Molecular Docking [20] | Varies by sampling algorithm | Limited by receptor flexibility | Moderate | Virtual screening, binding pose prediction |

| Topology Optimization (SiMPL) [19] | Fast (80% fewer iterations) | High with efficient meshing | High | Structural design, additive manufacturing |

| Reinforcement Learning [23] | Slow training, fast deployment | High with function approximation | High | Smart materials, adaptive systems |

Bio-inspired algorithms like Genetic Algorithms (GA) and Particle Swarm Optimization (PSO) are valued for their robustness in finding global optima in complex, multivariable search spaces without requiring gradient information [22]. However, they can be computationally intensive due to the need to evaluate many candidate solutions across generations. In contrast, Bayesian optimization methods like t-EGO excel in scenarios where experimental evaluations are costly and the number of possible experiments must be minimized [10].

Accuracy and Reliability Metrics

Beyond computational efficiency, the accuracy and reliability of optimization methods are critical for research and application success.

For molecular docking, the primary challenge remains accurate prediction of binding energies. While docking software can produce "accurate" binding modes, scoring functions often fail to reliably estimate binding affinities [20]. Post-processing with more sophisticated methods like MM/PBSA and MM/GBSA that include implicit solvent representations can improve accuracy, but at increased computational cost.

In materials design, target-oriented Bayesian optimization has demonstrated remarkable precision, achieving materials with properties within 0.58% of the target value [10]. This level of accuracy is particularly impressive given the minimal number of experimental iterations required.

Topology optimization methods have shown significant improvements in reliability through approaches like implicit modeling using Signed Distance Functions (SDFs), which avoid the fragile computations that frequently cause failures in traditional boundary representation (B-rep) systems [16]. The inherent mathematical formulation of SDFs makes them more reliable for automated design exploration.
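A short Python sketch shows why signed distance functions make shape operations simple. A shape is represented by a function that returns a negative value inside, zero on the surface, and a positive value outside, so boolean operations reduce to taking a minimum or maximum. The function names and the two-sphere example are hypothetical and are not taken from [16].

```python
import math


def sphere_sdf(p, center, radius):
    """Signed distance from point p to a sphere (negative inside)."""
    return math.dist(p, center) - radius


def union(d1, d2):
    """A point is inside the union if it is inside either shape."""
    return min(d1, d2)


def intersection(d1, d2):
    """A point is inside the intersection only if inside both shapes."""
    return max(d1, d2)


def offset(d, thickness):
    """Grow (thickness > 0) or shrink (thickness < 0) a shape uniformly."""
    return d - thickness


def two_spheres(p):
    """Example scene: union of two overlapping unit spheres."""
    return union(sphere_sdf(p, (0.0, 0.0, 0.0), 1.0),
                 sphere_sdf(p, (1.5, 0.0, 0.0), 1.0))
```

The minimum and maximum always give the correct inside or outside sign, although the value is only a bound on the true distance near the seams of combined shapes. No surface intersection curves need to be computed, which is the kind of step that tends to fail in boundary representations.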

Future Directions and Emerging Trends

The field of computational optimization continues to evolve rapidly, with several emerging trends likely to shape future research and applications. Reinforcement learning is gaining traction for optimizing smart materials in multi-dimensional self-assembly processes, enabling materials to autonomously respond to environmental stimuli and optimize their configurations in real-time [23]. The integration of AI and machine learning with traditional optimization approaches is creating new opportunities for generating better-performing designs, providing more realistic performance predictions, and ensuring manufacturability [16].

As computational power continues to grow and become more accessible through cloud computing and GPUs, the pace of innovation in optimization methodologies is expected to accelerate. Technologies like quantum computing may further revolutionize the field, potentially solving classes of optimization problems that are currently intractable with classical computers. The ongoing development of more sophisticated benchmarking frameworks and standardized evaluation metrics will enable more rigorous comparisons between methods and foster continued advancement across this diverse and critically important field.

Researchers comparing material optimization strategies face a triad of challenges spanning computational, logistical, and regulatory domains. Scalability concerns arise from the computational intensity of exploring high-dimensional design spaces, where the size of the search space grows exponentially with each additional parameter [23]. Data management challenges emerge from the need to process, validate, and share increasingly large and diverse materials data across research teams and institutions. Simultaneously, regulatory compliance requirements introduce additional layers of complexity, particularly in domains like biomedical engineering and energy storage where material safety and efficacy must be rigorously demonstrated.

The interdependence of these challenges creates a research landscape where advances in one domain often necessitate corresponding improvements in others. This comparison guide examines how contemporary material optimization strategies address these interconnected challenges through various computational frameworks and data handling approaches, providing researchers with a structured analysis of their relative strengths and experimental performance.

Comparative Framework: Optimization Strategies at Scale

Table 1: Comparative Overview of Material Optimization Strategies

| Optimization Strategy | Scalability Approach | Data Management Features | Compliance Considerations | Experimental Validation |

|---|---|---|---|---|

| Target-Oriented Bayesian Optimization [10] | Efficient experimental iteration reduction; Small dataset performance | Handles limited training data; Manages prediction uncertainty | Traceable decision pathway; Audit-friendly candidate selection | Shape memory alloy discovery: 3 iterations to target (2.66°C difference) |

| Multi-Objective AI Optimization [24] | Metaheuristic algorithms (GWO, AO); Parallel objective evaluation | Data-driven weighting (CRITIC, Entropy); Multi-criteria decision making | Full documentation of objective trade-offs; Weight sensitivity analysis | Battery material prediction: R²=0.9969 (ionization energy), 0.9134 (density) |

| Reinforcement Learning [23] | Multi-agent frameworks; Hierarchical task decomposition | Adaptive learning from interaction; Transfer learning capabilities | Policy transparency challenges; Black-box decision concerns | Smart material self-assembly: Improved adaptability & precision in multi-dimensional environments |

| Topology Optimization [19] | SiMPL algorithm: 80% fewer iterations; Latent space transformation | Manages design parameter constraints; Prevents impossible solutions | Engineering standards compliance; Structural safety validation | Benchmark tests: 4-5x efficiency improvement over conventional methods |

Table 2: Performance Metrics Across Optimization Categories

| Strategy | Computational Efficiency | High-Dimensional Handling | Implementation Complexity | Real-World Validation |

|---|---|---|---|---|

| Bayesian Optimization [10] | High (fewer experiments) | Moderate (depends on surrogate model) | Low-Moderate | Strong (experimentally verified) |

| AI-Driven Multi-Objective [24] | Variable (algorithm-dependent) | Strong (explicit multi-parameter handling) | High | Moderate (computational focus) |

| Reinforcement Learning [23] | Low initially (training intensive) | Excellent (specialized for high dimensions) | Very High | Emerging (primarily simulation) |

| Topology Optimization [19] | High (reduced iterations) | Moderate (parameter space dependent) | Moderate | Strong (engineering applications) |

Experimental Protocols and Methodologies

Target-Oriented Bayesian Optimization for Specific Material Properties

The target-oriented Bayesian optimization method (t-EGO) employs a novel acquisition function, t-EI, designed specifically for identifying materials with target-specific properties rather than simply maximizing or minimizing properties [10]. The experimental protocol involves:

- Initial Sampling: Begin with a small set of experimentally characterized materials to establish baseline data.

- Gaussian Process Modeling: Construct a probabilistic model mapping material descriptors to target properties using limited training data.

- Target-Specific Acquisition: Apply t-EI acquisition function to evaluate expected improvement toward a specific target value, incorporating both predicted value and associated uncertainty.

- Iterative Experimentation: Select the most promising candidate for experimental validation based on t-EI ranking, then update the model with new results.

- Convergence Testing: Continue iterations until a material meeting the target specification is identified or resources are exhausted.

Experimental validation demonstrated this method could identify a shape memory alloy Ti₀.₂₀Ni₀.₃₆Cu₀.₁₂Hf₀.₂₄Zr₀.₀₈ with a transformation temperature difference of only 2.66°C from the target temperature in just 3 experimental iterations [10]. Statistical analysis from hundreds of repeated trials showed t-EGO required approximately 1 to 2 times fewer experimental iterations than conventional EGO or Multi-Objective Acquisition Functions (MOAF) strategies to reach the same target.

Multi-Objective Optimization for Battery Material Development

The systematic data-driven approach for battery material optimization combines machine learning, multi-objective optimization, and multi-criteria decision-making [24]. The experimental methodology includes:

- Descriptor Selection: Identify critical atomic-level descriptors influencing target properties (density and ionization energy for battery applications).

- Machine Learning Modeling: Train Support Vector Regression (SVR) models using metaheuristic optimization algorithms (Aquila Optimizer and Gray Wolf Optimizer) for hyperparameter tuning.

- Multi-Objective Optimization: Implement SMS-EMOA and MOEA/D algorithms to minimize density while maximizing ionization energy, identifying Pareto-optimal solutions.

- Multi-Criteria Decision Making: Apply objective weighting methods (CRITIC, Entropy, Gini index) combined with ranking techniques (TOPSIS, SPOTIS, MABAC, VIKOR) to identify optimal material compositions.

- Sensitivity Analysis: Perform extensive trade-off analysis between material properties to ensure robust recommendations.

This approach achieved high prediction accuracy with R² values of 0.9969 for ionization energy and 0.9134 for density using GWO-optimized SVR models [24]. The MOEA/D-TOPSIS hybrid method efficiently identified the best material candidates consistently across validation tests.
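The Pareto filtering and TOPSIS ranking steps can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the pipeline from [24]: candidates are given as (density, ionization energy) pairs, the weights are supplied by the caller rather than derived with CRITIC or entropy weighting, and the function names are hypothetical.

```python
import math


def pareto_front(points, maximize):
    """Indices of non-dominated points.

    points   : list of equal-length tuples of objective values
    maximize : list of bools, True where a larger value is better
    """
    def dominates(p, q):
        no_worse = all((a >= b) if m else (a <= b)
                       for a, b, m in zip(p, q, maximize))
        better = any((a > b) if m else (a < b)
                     for a, b, m in zip(p, q, maximize))
        return no_worse and better

    return [i for i, p in enumerate(points)
            if not any(dominates(q, p)
                       for j, q in enumerate(points) if j != i)]


def topsis(points, weights, maximize):
    """TOPSIS closeness score in [0, 1] for each alternative."""
    n_crit = len(weights)
    # Vector-normalize each criterion column, then apply the weights.
    norms = [math.sqrt(sum(p[k] ** 2 for p in points)) or 1.0
             for k in range(n_crit)]
    v = [[weights[k] * p[k] / norms[k] for k in range(n_crit)]
         for p in points]
    best = [max(r[k] for r in v) if maximize[k] else min(r[k] for r in v)
            for k in range(n_crit)]
    worst = [min(r[k] for r in v) if maximize[k] else max(r[k] for r in v)
             for k in range(n_crit)]
    scores = []
    for r in v:
        d_best = math.dist(r, best)    # distance to the ideal solution
        d_worst = math.dist(r, worst)  # distance to the anti-ideal
        total = d_best + d_worst
        scores.append(d_worst / total if total > 0 else 0.5)
    return scores
```

In a typical use, `pareto_front` would first discard dominated candidates with `maximize=[False, True]` (minimize density, maximize ionization energy), and `topsis` would then rank the survivors. The ranking can change with the weights, which is why the protocol pairs this step with a sensitivity analysis.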

Reinforcement Learning for Multi-Dimensional Self-Assembly

The reinforcement learning framework for smart material optimization addresses high-dimensional self-assembly processes through the following experimental protocol [23]:

- Environment Modeling: Create simulated environments representing multi-dimensional self-assembly spaces with relevant material parameters and environmental stimuli.

- Agent Design: Implement multi-agent reinforcement learning systems with modular architectures for enhanced adaptability and scalability.

- Policy Optimization: Utilize Deep Q-Networks (DQNs) and Proximal Policy Optimization (PPO) to learn optimal assembly policies through iterative environment interactions.

- Hierarchical Decomposition: Apply hierarchical reinforcement learning to break down high-dimensional optimization tasks into manageable sub-tasks for faster convergence.

- Transfer Learning: Leverage knowledge from simpler tasks to accelerate learning in complex material design problems through meta-learning approaches.

Experimental results demonstrated significant improvements in material performance and assembly precision under varied environmental conditions, showcasing the method's potential for broad application in smart material engineering [23]. The approach proved particularly effective in navigating complex, high-dimensional design spaces where traditional optimization methods struggle.
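The value-update rule that underlies Deep Q-Networks can be shown on a toy problem. The sketch below deliberately swaps in tabular Q-learning for the DQN and PPO methods named in the protocol, and it replaces sampled environment interaction with deterministic sweeps over every state and action so that the result is reproducible. The chain environment, in which an agent steps left or right until it reaches a target configuration, is a hypothetical stand-in for a self-assembly task and is not taken from [23].

```python
def train_q_table(n_states=5, gamma=0.9, alpha=0.5, sweeps=200):
    """Tabular Q-learning on a toy chain; the last state is the target."""
    actions = (-1, +1)                      # move left / move right
    q = [[0.0, 0.0] for _ in range(n_states)]
    goal = n_states - 1
    for _ in range(sweeps):
        for s in range(goal):               # the goal state is terminal
            for a_idx, a in enumerate(actions):
                nxt = min(max(s + a, 0), goal)
                reward = 1.0 if nxt == goal else 0.0
                # No future value is added once the goal is reached.
                future = 0.0 if nxt == goal else gamma * max(q[nxt])
                # Standard Q-learning update toward the bootstrapped target.
                q[s][a_idx] += alpha * (reward + future - q[s][a_idx])
    return q


def greedy_policy(q):
    """Best action index (0 = left, 1 = right) per non-terminal state."""
    return [max((0, 1), key=row.__getitem__) for row in q[:-1]]
```

After training, the greedy policy moves toward the target from every state. Deep variants replace the table with a neural network so that the same update can be applied when the state space is too large to enumerate.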

Accelerated Topology Optimization for Structural Design

The SiMPL (Sigmoidal Mirror descent with a Projected Latent variable) algorithm for topology optimization addresses scalability challenges through a novel mathematical approach [19]:

- Design Parameterization: Divide the design domain into discrete elements (pixels or voxels) with continuous material density variables between 0 (void) and 1 (solid).

- Latent Space Transformation: Transform the design space between 0 and 1 into a latent space between negative infinity and positive infinity using sigmoidal mapping.

- Optimization Iteration: Perform gradient-based optimization in the latent space, where constraints are inherently satisfied without correction steps.

- Physical Property Evaluation: Compute structural performance metrics (stiffness, stress, compliance) using finite element analysis for each design iteration.

- Design Convergence: Continue iterations until optimality criteria are satisfied, then transform the final latent variables back to physical densities.

Benchmark tests demonstrated that SiMPL requires up to 80% fewer iterations to arrive at an optimal design compared to traditional algorithms, translating to 4-5x improvement in computational efficiency [19]. This performance improvement makes topology optimization accessible for a broader range of industries and enables designs at much finer resolution than previously feasible.

Visualization of Methodologies

Figure: Target-Oriented Bayesian Optimization Workflow

Figure: Multi-Objective Material Optimization

Table 3: Research Reagent Solutions for Material Optimization

| Tool/Category | Specific Examples | Function in Research | Implementation Considerations |

|---|---|---|---|

| Optimization Algorithms | Gray Wolf Optimizer (GWO), Aquila Optimizer (AO) [24] | Metaheuristic optimization of machine learning model parameters | Balance between exploration and exploitation; Parameter tuning requirements |

| Bayesian Optimization Frameworks | t-EGO with t-EI acquisition function [10] | Efficient experimental design for target-specific material properties | Requires careful uncertainty calibration; Well suited to small datasets |

| Reinforcement Learning Systems | Deep Q-Networks (DQN), Proximal Policy Optimization (PPO) [23] | Adaptive control in multi-dimensional self-assembly processes | High computational resources needed; Benefits from transfer learning |

| Multi-Objective Decision Support | TOPSIS, VIKOR, MABAC [24] | Ranking Pareto-optimal solutions with multiple criteria | Sensitivity to weighting schemes; Requires clear objective prioritization |

| Data Management Infrastructure | Automated metadata harvesting, Data lineage tools [25] | Tracking material provenance and experimental conditions | Integration with existing lab systems; Metadata standardization needs |

| High-Performance Computing | Parallel processing, GPU acceleration [19] [23] | Handling computationally intensive simulations and models | Resource allocation strategies; Scalability across computing clusters |

Regulatory and Data Governance Considerations

Material optimization research increasingly intersects with regulatory frameworks, particularly in biomedical and energy applications. Effective data governance provides the foundation for regulatory compliance, ensuring data quality, integrity, and appropriate usage throughout the research lifecycle [26]. Key considerations include:

Data Provenance and Lineage: Comprehensive tracking of material data from origin through transformations is essential for demonstrating research validity and reproducibility [25]. This aligns with regulatory requirements for electronic records in scientific research.

Privacy-Enhancing Technologies: For research involving biological materials or patient data, technologies such as federated learning and synthetic data generation can help balance analytical utility with privacy protection [27].

Automated Compliance Monitoring: AI-augmented governance tools can automatically detect sensitive data and ensure appropriate handling throughout material research workflows [25] [27].

Research organizations should implement data governance frameworks that naturally support regulatory requirements rather than treating compliance as a separate concern [26]. This approach creates a foundation where meeting regulatory standards becomes a byproduct of robust research data management practices.

The comparative analysis presented in this guide demonstrates that no single optimization strategy universally dominates across all dimensions of scalability, data management, and compliance. Target-oriented Bayesian optimization excels in experimental efficiency when seeking specific material properties [10]. Multi-objective AI approaches provide comprehensive handling of complex trade-offs in material design [24]. Reinforcement learning offers unparalleled adaptability in high-dimensional, dynamic environments [23]. Topology optimization algorithms deliver significant computational advantages for structural design problems [19].

Researchers should select optimization strategies based on their specific challenge profile: the dimensionality of the design space, the availability of training data, the precision requirements for target properties, and the regulatory context of the application. As material optimization continues to evolve, the integration of these strategies—such as incorporating Bayesian elements within reinforcement learning frameworks—promises to further enhance our ability to navigate the complex triad of scalability, data management, and regulatory compliance challenges.

Advanced Methodologies in Action: From AI-Driven Design to Experimental Frameworks

Harnessing Artificial Intelligence and Machine Learning for De Novo Molecular Design

De novo molecular design represents a paradigm shift in drug discovery, enabling the creation of novel drug-like molecules from scratch rather than relying on the modification of existing compounds [28]. This approach has been revitalized by artificial intelligence (AI) and machine learning (ML), which can now explore the vast chemical space—estimated to contain 10³³ to 10⁶⁰ potential organic compounds—with unprecedented efficiency [29] [28]. The integration of AI into the drug discovery pipeline addresses critical challenges in pharmaceutical development, including escalating costs (exceeding $2.6 billion per approved drug) and extended timelines (10-15 years from discovery to market) [30]. This comparative analysis examines the performance, experimental methodologies, and practical implementation of leading AI-driven de novo design strategies, providing researchers with a framework for selecting and optimizing these tools within material optimization research.

Comparative Analysis of Major AI Approaches and Architectures

Key Generative Model Architectures

Table 1: Performance Comparison of Major AI Architectures for De Novo Design

| Model Architecture | Molecular Representation | Key Strengths | Reported Limitations | Notable Applications/Examples |

|---|---|---|---|---|

| Chemical Language Models (CLMs) [31] | SMILES strings | Strong foundational knowledge of chemistry; excellent for ligand-based design. | Can generate invalid SMILES; requires transfer learning for specific tasks. | Fine-tuned RNNs; DRAGONFLY's LSTM component. |

| Graph Neural Networks (GNNs) [30] | 2D/3D molecular graphs | Naturally represents molecular structure; captures spatial relationships. | Computational complexity; pose prediction challenges. | Graph Transformer Neural Networks (GTNN); Attentive FP. |

| Generative Adversarial Networks (GANs) [29] | Multiple (SMILES, graphs) | Capable of generating highly novel structures. | Training instability; mode collapse. | Objective-Reinforced GAN (ORGAN). |

| Variational Autoencoders (VAEs) [29] | Multiple (SMILES, graphs) | Continuous latent space for optimization. | Can produce blurry or averaged outputs. | Standard benchmark in MOSES. |

| Diffusion Models [32] | 3D molecular structures | State-of-the-art 3D pose quality; reduced steric clashes. | Computationally intensive sampling process. | PoLiGenX for ligand generation with favorable poses. |

| Agentic AI Systems [30] | Variable | Autonomous navigation of discovery pipelines; multi-step reasoning. | Emerging technology; requires careful validation. | Development of autonomous chemistry labs. |

Advanced Models: Specialized Frameworks and Comparative Performance

Beyond these core architectures, specialized frameworks have been developed to address specific challenges in de novo design. The DRAGONFLY (Drug-target interActome-based GeneratiON oF noveL biologicallY active molecules) framework exemplifies this trend by combining a Graph Transformer Neural Network (GTNN) with a Chemical Language Model (LSTM) to leverage both structural and sequence-based molecular information [31]. This hybrid approach utilizes a drug-target interactome—a graph containing approximately 360,000 ligands and 2,989 targets—enabling it to incorporate information from both ligands and their macromolecular targets across multiple nodes, thus avoiding the need for application-specific transfer learning [31].

Table 2: Benchmarking Results of Generative Models (Based on GuacaMol, MOSES, and FCD)

| Model / Framework | Validity (%) | Uniqueness (%) | Novelty (Scaffold) | Synthesizability (SA Score or RAScore) | Fréchet ChemNet Distance (FCD) ↓ |

|---|---|---|---|---|---|

| DRAGONFLY [31] | High (exact % not reported) | High (exact % not reported) | Superior to fine-tuned RNNs | Assessed via RAScore [31] | Not explicitly reported |

| Fine-tuned RNN (Baseline) [31] | Reported as lower than DRAGONFLY | Reported as lower than DRAGONFLY | Lower than DRAGONFLY | Lower than DRAGONFLY | Not explicitly reported |

| BIMODAL (Bidirectional RNN) [29] | >90% (Similar to standard RNN) | >90% (Similar to standard RNN) | High scaffold diversity | Not explicitly reported | Comparable to standard RNN (1024 hidden units) |

| Character-level RNN (CharRNN) [29] | Reported in benchmarks | Reported in benchmarks | Reported in benchmarks | Reported in benchmarks | Used as a baseline in studies |

| Variational Autoencoder (VAE) [29] | Reported in benchmarks | Reported in benchmarks | Reported in benchmarks | Reported in benchmarks | Used as a baseline in studies |

| Adversarial Autoencoder (AAE) [29] | Reported in benchmarks | Reported in benchmarks | Reported in benchmarks | Reported in benchmarks | Used as a baseline in studies |

In direct performance comparisons, DRAGONFLY demonstrated superior performance over fine-tuned recurrent neural networks (RNNs) across the majority of templates and properties examined, including synthesizability, novelty, and predicted bioactivity [31]. Furthermore, the framework achieved high Pearson correlation coefficients (r ≥ 0.95) between desired and actual molecular properties, including molecular weight, lipophilicity (MolLogP), and polar surface area, indicating precise control over the generated molecular structures [31].
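For reference, the Pearson correlation coefficient used to compare desired and generated property values can be computed as in the sketch below. The function is a plain implementation of the standard formula, and any input values passed to it here would be hypothetical rather than data from [31].

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for constant input")
    return sxy / math.sqrt(sxx * syy)
```

A value near 1 means the generated molecules track the requested property values almost linearly, which is how the reported threshold of r ≥ 0.95 should be read.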

Experimental Protocols and Methodologies

Standardized Benchmarking Frameworks

Robust evaluation is critical for comparing generative models. Standardized benchmarking platforms assess models across multiple criteria to ensure generated molecules are not only novel but also valid, diverse, and drug-like [29].

Key Benchmarking Platforms:

- GuacaMol: Establishes a suite of tasks that measure a model's ability to generate molecules with desired properties and to explore chemical space [29].

- MOSES (Molecular Sets): Provides a standardized benchmarking platform with metrics for validity, uniqueness, novelty, and diversity to ensure fair comparison of generative models [29] [28].

- Fréchet ChemNet Distance (FCD): Measures the distance between the distribution of generated molecules and real-world bioactive molecules, incorporating both chemical and biological information from the bioactivity-trained network ChemNet [29]. FCD has been shown to be more effective at detecting model biases and failures than metrics based solely on chemical fingerprints [29].
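The validity, uniqueness, and novelty metrics listed above can be sketched as follows. The sketch follows the commonly used definitions (validity over all generated strings, uniqueness over valid ones, novelty over unique valid ones) and takes the canonicalization routine as a parameter. In practice this would usually be a cheminformatics toolkit call, for example parsing with RDKit's `Chem.MolFromSmiles` and writing back with `Chem.MolToSmiles`. The `toy_canonicalize` function below is a hypothetical placeholder that only checks parentheses and is not real chemistry.

```python
def generation_metrics(generated, training_set, canonicalize):
    """Validity, uniqueness, and novelty of generated SMILES strings.

    generated    : list of generated SMILES strings
    training_set : set of training SMILES, assumed already canonical
    canonicalize : callable returning a canonical string, or None if invalid
    """
    canon = [canonicalize(s) for s in generated]
    valid = [c for c in canon if c is not None]
    unique = set(valid)
    novel = unique - set(training_set)
    return {
        "validity": len(valid) / len(generated) if generated else 0.0,
        "uniqueness": len(unique) / len(valid) if valid else 0.0,
        "novelty": len(novel) / len(unique) if unique else 0.0,
    }


def toy_canonicalize(smiles):
    """Placeholder check (NOT real chemistry): balanced parentheses only."""
    depth = 0
    for ch in smiles:
        if ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
            if depth < 0:
                return None
    text = smiles.strip()
    return text if depth == 0 and text else None
```

Keeping the canonicalizer pluggable makes the metric code independent of any one toolkit, but comparisons between models are only fair when the same canonicalization and training set are used throughout.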

Prospective Validation and Experimental Workflows

Beyond computational benchmarks, prospective validation involving chemical synthesis and biological testing is the ultimate measure of a model's utility. The successful application of the DRAGONFLY framework to generate new ligands for the human peroxisome proliferator-activated receptor gamma (PPARγ) exemplifies this process [31].

Experimental Protocol for Prospective Validation (as in DRAGONFLY Study [31]):

- Model Configuration: The interactome-based model is configured for structure-based design, using an interactome containing ~208,000 ligands and 726 targets with known 3D structures.

- Ligand Generation: The model (GTNN + LSTM) processes the 3D graph of the target binding site and generates novel molecules as SMILES strings, conditioned on the desired bioactivity and physicochemical properties.

- In Silico Evaluation:

- Property Prediction: Generated molecules are evaluated for key physicochemical properties (e.g., Molecular Weight, LogP, H-bond donors/acceptors).

- Synthesizability Assessment: The Retrosynthetic Accessibility Score (RAScore) is used to evaluate the feasibility of chemical synthesis [31].

- Bioactivity Prediction: Quantitative Structure-Activity Relationship (QSAR) models, often using Kernel Ridge Regression (KRR) with molecular descriptors (ECFP4, CATS, USRCAT), predict pIC50 values against the intended target [31].

- Novelty Assessment: A rule-based algorithm quantifies scaffold and structural novelty compared to known bioactive molecules.

- Compound Selection & Synthesis: Top-ranking designs based on the above criteria are selected for chemical synthesis.

- Experimental Characterization:

- Biophysical & Biochemical Assays: Synthesized compounds are characterized using techniques like Surface Plasmon Resonance (SPR) and functional enzymatic/cellular assays to determine binding affinity (KD), half-maximal inhibitory concentration (IC50), and half-maximal effective concentration (EC50).

- Selectivity Profiling: Activity is tested against related targets (e.g., other nuclear receptors) to establish selectivity.

- Structural Validation: If possible, the binding mode is confirmed through methods like X-ray crystallography of the ligand-receptor complex [31].
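Two small pieces of the in silico evaluation can be made concrete: converting an IC50 value to the pIC50 scale used by the QSAR models, and shortlisting designs that pass both a predicted-potency and a synthesizability filter. The sketch below is illustrative only. The thresholds, the dictionary keys, and the function names are hypothetical and are not the selection criteria used in [31].

```python
import math


def pic50_from_nm(ic50_nm):
    """pIC50 = -log10(IC50 in mol/L); the input is given in nanomolar."""
    if ic50_nm <= 0:
        raise ValueError("IC50 must be positive")
    return 9.0 - math.log10(ic50_nm)


def shortlist(designs, min_pic50=6.0, min_rascore=0.5):
    """Keep designs passing both assumed thresholds, most potent first.

    designs : list of dicts with 'name', 'pic50', and 'rascore' keys
    """
    kept = [d for d in designs
            if d["pic50"] >= min_pic50 and d["rascore"] >= min_rascore]
    return sorted(kept, key=lambda d: d["pic50"], reverse=True)
```

On this scale an IC50 of 1 µM corresponds to a pIC50 of 6 and an IC50 of 1 nM to a pIC50 of 9, so higher values mean more potent compounds.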

The following workflow diagram illustrates the key stages of this experimental process for prospective de novo design validation.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful implementation of AI-driven de novo design relies on a suite of computational tools, datasets, and software libraries that constitute the modern computational chemist's toolkit.

Table 3: Essential Research Reagents and Solutions for AI-Driven De Novo Design

| Tool / Resource Name | Type | Primary Function | Relevance to Experimental Protocol |
|---|---|---|---|
| ChEMBL [31] [29] | Database | Large-scale, curated database of bioactive molecules with drug-like properties. | Provides the foundational bioactivity data for training and validating models (e.g., building interactomes). |
| ZINC [29] | Database | Publicly available database of commercially available compounds for virtual screening. | Used as a source of "real-world" molecular distributions for benchmarking. |
| RDKit | Cheminformatics Library | Open-source toolkit for cheminformatics and machine learning. | Used for manipulating molecules, calculating descriptors, and integrating with ML pipelines. |
| Gnina [32] | Software | Molecular docking software that uses convolutional neural networks (CNNs) for scoring protein-ligand poses. | Critical for structure-based design and evaluating generated molecules in silico. |
| ChemProp [32] | Software | Message-passing neural network for molecular property prediction. | Used to rapidly predict key ADMET and physicochemical properties of generated molecules. |
| ECFP4 / CATS / USRCAT [31] | Molecular Descriptors | Different types of fingerprints and descriptors (structural, pharmacophore, shape-based). | Used as input for QSAR models to predict the bioactivity of de novo designs. |
| RAScore [31] | Metric | Retrosynthetic accessibility score to evaluate the synthesizability of a molecule. | A key filter applied to generated molecules before selection for synthesis. |
| Fréchet ChemNet Distance (FCD) [29] | Benchmarking Metric | Measures the quality and biological relevance of a set of generated molecules. | Used for the final, holistic evaluation of a generative model's output against known bioactive space. |

The comparative analysis of AI and ML strategies for de novo molecular design reveals a rapidly maturing field capable of generating novel, synthesizable, and biologically active molecules. Frameworks like DRAGONFLY demonstrate that hybrid models, which integrate multiple data types and learning paradigms, can outperform traditional single-architecture models [31]. The critical evaluation of these tools requires robust benchmarking suites like GuacaMol and MOSES [29], complemented by prospective validation with chemical synthesis and biological testing, as exemplified by the generation of confirmed PPARγ agonists [31].

While AI-driven de novo design has produced clinical candidates, challenges remain in ensuring generalizability, improving out-of-distribution performance, and fully integrating these tools into the Design-Make-Test-Analyze (DMTA) cycle [30] [28]. The future points toward more autonomous, agentic AI systems and a closer, synergistic partnership between computational prediction and experimental validation, accelerating the delivery of innovative therapeutics.

Implementing Design of Experiments (DoE) for Systematic Formulation Development

In the competitive and highly regulated pharmaceutical industry, systematic formulation development is not merely a best practice but a critical component of ensuring drug safety, efficacy, and manufacturability. Historically, formulation scientists relied on One Factor At a Time (OFAT) approaches, which are inefficient, time-consuming, and incapable of detecting interactions between formulation factors [33]. The adoption of Design of Experiments (DoE) within a Quality by Design (QbD) framework represents a paradigm shift, enabling a scientific, systematic, and risk-based approach to product development [33] [34]. DoE allows all potential factors to be evaluated simultaneously and systematically, transforming formulation development from an art into a data-driven science [35].

This guide provides a comparative analysis of DoE methodologies, offering formulation scientists and drug development professionals a clear understanding of how to select and apply appropriate experimental designs. By objectively comparing different DoE strategies and their applications in real-world tablet development, we aim to equip researchers with the knowledge to build quality into their products from the earliest development stages, ultimately leading to more robust and effective pharmaceutical formulations.

Comparative Analysis of DoE Approaches and Applications

Design of Experiments encompasses a range of methodological approaches, each with distinct strengths and optimal use cases. The selection of a specific design depends on the development stage, the number of factors to be investigated, and the desired model complexity. Below, we compare the fundamental DoE approaches relevant to pharmaceutical formulation.

Table 1: Comparison of Common Experimental Designs in Formulation Development

| Design Type | Key Characteristics | Optimal Use Case | Advantages | Limitations |
|---|---|---|---|---|
| Full Factorial [36] | Experiments at all possible combinations of all factor levels. | Preliminary studies with a limited number (2-4) of critical factors. | Captures all main effects and interaction effects; comprehensive. | Number of runs grows exponentially with the number of factors (e.g., 3 factors at 5 levels each = 5³ = 125 runs [34]). |
| Mixture Design [34] | Components are proportions of a blend; constrained to sum to 100%. | Formulation optimization where excipient ratios are critical. | Efficiently models the formulation space; ideal for excipient compatibility and optimization. | Standard designs require adaptation for process variable incorporation. |
| Central Composite Design (CCD) [36] | A 2-level factorial design augmented with center and axial points. | Building a second-order (quadratic) response surface model for optimization. | Can fit complex non-linear responses; more efficient than a 3-level factorial. | Axial points of a circumscribed CCD can fall outside the practical factor range; an inscribed CCD avoids such impractical conditions [36]. |
| Optimal Experimental Design (OED) [36] | Computer-generated design optimized for a specific model and statistical criterion. | Resource-intensive experiments where model parameters must be estimated with high precision. | Maximizes information gain per experiment; can be twice as efficient as a full factorial design [36]. | Requires prior model and parameter knowledge; computationally intensive. |

The comparative efficiency of these designs is a major consideration. For instance, investigating three factors at five levels each using a full factorial approach would require 5³ = 125 experiments, which is often impractical [34]. Mixture designs and fractional factorial designs dramatically reduce this experimental burden while still providing powerful insights into factor effects and interactions. Research comparing DoE techniques for modeling microbial growth found that inscribed central composite and full factorial designs were the most suitable among classical DoE techniques, while D-optimal designs (a type of OED) performed best overall, delivering lower model prediction uncertainty and being less dependent on experimental variability [36].
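The run counts behind this comparison are easy to reproduce. The sketch below counts full factorial runs and enumerates the coded points of a central composite design; the function names and the default of one center point are assumptions made for illustration. With `alpha=1.0` the axial points sit on the faces of the factorial cube (a face-centered CCD), whereas `alpha > 1` places them outside the factorial range.

```python
from itertools import product


def full_factorial_runs(levels_per_factor):
    """Number of runs in a full factorial: the product of the level counts."""
    runs = 1
    for levels in levels_per_factor:
        runs *= levels
    return runs


def ccd_points(k, alpha=1.0, n_center=1):
    """Coded points of a central composite design for k factors.

    Returns 2**k factorial points, 2*k axial points at +/-alpha,
    and n_center replicated center points.
    """
    factorial = [tuple(float(v) for v in p) for p in product((-1, 1), repeat=k)]
    axial = []
    for i in range(k):
        for a in (-alpha, alpha):
            point = [0.0] * k
            point[i] = a
            axial.append(tuple(point))
    center = [tuple([0.0] * k) for _ in range(n_center)]
    return factorial + axial + center


five_level = full_factorial_runs([5, 5, 5])   # three factors at five levels
three_level = full_factorial_runs([3, 3, 3])  # three factors at three levels
ccd = ccd_points(3)                           # 8 factorial + 6 axial + 1 center
```

For three factors, the CCD needs 15 runs with a single center point, compared with 27 for a three-level full factorial and 125 for the five-level case discussed above.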

Experimental Protocols: Implementing DoE for Tablet Formulation

This section provides a detailed, step-by-step methodology for applying DoE to develop an immediate-release tablet formulation, using a real-world case study based on the development of piroxicam amorphous solid dispersions (ASD) [34].

Phase 1: Formulation Preliminary Study

Objective: To select the final excipients for the formulation system from a list of chemically compatible candidates.

Methodology:

- Define Initial Formulation System: Based on excipient compatibility studies, define the initial set of excipients. For a simple tablet, this typically includes the API %, a choice of diluents (e.g., three types), disintegrants (e.g., two types), and lubricants (e.g., two types) [35].

- Select DoE Design: A full factorial design is often appropriate for this screening phase. The example system with 1 factor at 2 levels (API %), 1 factor at 3 levels (diluents), and 2 factors at 2 levels (disintegrants, lubricants) results in a manageable 24-experiment design (2 × 3 × 2 × 2 = 24) [35].

- Execute and Analyze: Manufacture and test the 24 formulations. Key responses (Critical Quality Attributes or CQAs) such as tensile strength, disintegration time, and friability are measured. Statistical analysis (Analysis of Variance or ANOVA) identifies which excipient types have a statistically significant (p-value < 0.05) effect on the CQAs [34].

- Define Final Formulation System: Based on the results, select the specific excipients that yield the best performance for the final formulation system (e.g., one specific diluent, one disintegrant, one lubricant) [35].
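The 24-run screening design described in Phase 1 can be enumerated directly. The following is a minimal sketch: the level labels (`diluent_A`, `lubricant_B`, and so on) are placeholders rather than the excipients used in [35], and the fixed random seed is an assumption made so the randomized run order is reproducible.

```python
import random
from itertools import product

# Placeholder factor levels; real studies would use named excipients and API loads.
FACTORS = {
    "api_level": ["low", "high"],
    "diluent": ["diluent_A", "diluent_B", "diluent_C"],
    "disintegrant": ["disintegrant_A", "disintegrant_B"],
    "lubricant": ["lubricant_A", "lubricant_B"],
}


def build_screening_design(factors, seed=0):
    """Enumerate every combination of factor levels and randomize the run order."""
    names = list(factors)
    runs = [dict(zip(names, combo)) for combo in product(*factors.values())]
    random.Random(seed).shuffle(runs)  # randomization guards against time-related bias
    return runs


design = build_screening_design(FACTORS)
for run_number, run in enumerate(design, start=1):
    print(run_number, run)
```

Each dictionary is one formulation to manufacture and test; the measured CQAs would then be appended to these rows before running ANOVA.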

Phase 2: Formulation Optimization Study

Objective: To define the optimal levels (percentages) of each excipient in the final formulation system.

Methodology:

- Define Final Formulation Factors: The factors are now the proportions of the selected excipients. A typical final formulation system could include the API %, the selected diluent %, the selected disintegrant %, and the selected lubricant % [35].

- Select DoE Design: A mixture design is the most efficient choice because the factors are components of a mixture that must sum to 100% [34]. For three components, a simplex lattice or simplex centroid design is standard. The piroxicam ASD study used a constrained mixture design with 18 randomized experiments for three excipients (Avicel PH102, Pearlitol SD 200, Ac-Di-Sol) summing to 68.25% of the formulation [34].

- Execute Experiments: Tablets are manufactured according to the randomized experimental design. Using an instrumented tablet press (e.g., STYL'One Nano) controlled by specialized software (e.g., Alix software) ensures process parameter consistency [34].

- Model Fitting and Data Analysis:

  - Visualize Data: Plot each response against all factors to identify main trends (e.g., an increase in Avicel PH102 increases tensile strength and solid fraction) [34].

  - Fit Model: Use regression to fit a statistical model (e.g., including main terms and interaction terms) to the experimental data. The "Actual by Predicted" plot is used to visualize how well the model fits the experimental data [34].

  - Analyze Variance: Perform ANOVA. The adjusted R² value indicates how much of the variation in the data the model explains after accounting for the number of model terms, the F-ratio represents the signal-to-noise ratio, and p-values (< 0.05) confirm the statistical significance of each model term [34].

  - Construct Prediction Profiler: Use the fitted model to create a dynamic prediction profiler. This tool shows how the predicted responses change as individual factor settings are adjusted, allowing for the identification of a design space [34].

- Define Optimal Formulation: Set desirability goals for each response (e.g., tensile strength > 2.10 MPa, friability < 0.3%) within the prediction profiler. The software then calculates the optimal factor settings (excipient levels) that simultaneously satisfy all goals [34]. In the cited example, the optimal settings were Avicel PH102 36.9%, Pearlitol SD 200 28.6%, and Ac-Di-Sol 2.69% [34].
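Desirability-based optimization of this kind is commonly implemented with one-sided desirability functions combined by a geometric mean. The sketch below assumes linear ramps and is not the algorithm of any particular software package; the acceptance targets (2.10 MPa and 0.3%) come from the example above, while the lower and upper bounds (1.00 MPa and 1.0%) and the predicted responses are hypothetical.

```python
import math


def desirability_larger_is_better(y, low, target):
    """0 at or below `low`, 1 at or above `target`, linear in between."""
    if y <= low:
        return 0.0
    if y >= target:
        return 1.0
    return (y - low) / (target - low)


def desirability_smaller_is_better(y, target, high):
    """1 at or below `target`, 0 at or above `high`, linear in between."""
    if y <= target:
        return 1.0
    if y >= high:
        return 0.0
    return (high - y) / (high - target)


def overall_desirability(values):
    """Geometric mean of individual desirabilities; any zero gives zero overall."""
    if any(v == 0.0 for v in values):
        return 0.0
    return math.exp(sum(math.log(v) for v in values) / len(values))


# Hypothetical model predictions for one candidate formulation.
predicted_tensile_mpa = 1.80
predicted_friability_pct = 0.25

d_tensile = desirability_larger_is_better(predicted_tensile_mpa, low=1.00, target=2.10)
d_friability = desirability_smaller_is_better(predicted_friability_pct, target=0.3, high=1.0)
score = overall_desirability([d_tensile, d_friability])
```

An optimizer would evaluate `score` across the design space and report the excipient levels that maximize it. Because the geometric mean is zero whenever any single response is unacceptable, no response can be traded away entirely.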

Diagram 1: DoE Workflow for Tablet Formulation. This workflow outlines the three-phase systematic approach to formulation development, from screening to optimization.
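For the mixture design step in Phase 2, the candidate blends of a standard simplex lattice can be generated as follows. This is a sketch of the unconstrained {q, m} simplex lattice, not the constrained 18-run design used in the piroxicam ASD study [34]; the function names are assumptions. Scaling to 68.25% mirrors the fraction of the formulation occupied by the three excipients in that study.

```python
from fractions import Fraction
from itertools import product


def simplex_lattice(q, m):
    """All blends of q components whose proportions are multiples of 1/m and sum to 1."""
    points = []
    for combo in product(range(m + 1), repeat=q):
        if sum(combo) == m:
            points.append(tuple(Fraction(c, m) for c in combo))
    return points


def scale_to_total(points, total_percent):
    """Convert proportions into percentages of the whole formulation."""
    return [tuple(float(p) * total_percent for p in point) for point in points]


lattice = simplex_lattice(q=3, m=2)           # 3 vertices + 3 binary midpoints
blends_pct = scale_to_total(lattice, 68.25)   # excipient levels in % of the tablet
```

An unconstrained lattice includes pure-component blends, for example a tablet with no disintegrant at all. Real studies therefore impose lower and upper bounds on each component, which is why constrained designs are typically generated by software.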

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful formulation development relies on the precise selection and control of materials and equipment. The following table details key reagents, materials, and instruments used in a typical tablet DoE study and their critical functions.

Table 2: Key Research Reagents, Materials, and Equipment for Tablet DoE

| Item | Category | Function in Formulation Development |
|---|---|---|
| Avicel PH102 (Microcrystalline Cellulose) | Excipient (Diluent/Binder) | A ductile material that plastically deforms at low compression pressure, forming bonds between particles to increase tablet tensile strength [34]. |
| Pearlitol SD 200 (Mannitol) | Excipient (Diluent) | A moderately hard-ductile diluent; its different compaction mechanism compared to Avicel affects the solid fraction and mechanical properties of the blend [34]. |
| Ac-Di-Sol (Croscarmellose Sodium) | Excipient (Disintegrant) | Promotes tablet disintegration by swelling upon contact with water, facilitating drug dissolution [35] [34]. |
| Magnesium Stearate | Excipient (Lubricant) | Reduces friction during tablet ejection from the die, preventing sticking and ensuring manufacturing consistency [35]. |
| Instrumented Tablet Press (e.g., STYL'One Nano) | Equipment | An R&D-scale press that enables high-throughput manufacturing of small powder batches with precise control and monitoring of compression parameters [34]. |
| Compression Control Software (e.g., Alix Software) | Software | Used to control the instrumented tablet press, ensuring consistent application of force, pressure, and speed across all experimental runs in the DoE [34]. |

Data Presentation and Statistical Analysis Workflow

The true power of DoE is realized through rigorous statistical analysis of the collected data, transforming experimental results into predictive, actionable knowledge. The workflow for this analysis is methodical.

Diagram 2: Data Analysis Workflow. This chart illustrates the standard statistical analysis process following data collection from a DoE.
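The summary statistics used in this analysis follow from standard sums of squares. The sketch below fits a straight line by ordinary least squares and reports R², adjusted R², and the model F-ratio; the data are invented for illustration, and the decomposition used for the F-ratio assumes a least-squares model with an intercept. Computing p-values would additionally require the F distribution (available in statistics packages), which is omitted here.

```python
def fit_line(x, y):
    """Ordinary least squares for y = intercept + slope * x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def fit_statistics(y_obs, y_pred, n_terms):
    """R^2, adjusted R^2 and F-ratio for a model with n_terms predictors plus intercept."""
    n = len(y_obs)
    mean_y = sum(y_obs) / n
    sse = sum((o - p) ** 2 for o, p in zip(y_obs, y_pred))  # residual sum of squares
    sst = sum((o - mean_y) ** 2 for o in y_obs)             # total sum of squares
    df_resid = n - n_terms - 1
    r2 = 1 - sse / sst
    adj_r2 = 1 - (1 - r2) * (n - 1) / df_resid
    f_ratio = ((sst - sse) / n_terms) / (sse / df_resid)
    return {"r2": r2, "adj_r2": adj_r2, "f_ratio": f_ratio}


# Invented data: a coded factor level (x) and a measured response (y).
x = [0, 1, 2, 3, 4]
y = [1.0, 3.0, 2.0, 5.0, 4.0]

slope, intercept = fit_line(x, y)
predictions = [intercept + slope * xi for xi in x]
stats = fit_statistics(y, predictions, n_terms=1)
```

Adjusted R² penalizes each additional model term, so it only rises when a new term explains more variation than would be expected by chance. This makes it a more suitable criterion than plain R² when comparing models with different numbers of terms.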